Designing a Cyber-Physical System for Ambient Assisted Living: A Use-Case Analysis for Social Robot Navigation in Caregiving Centers

Abstract

1. Introduction

2. Overview of Cyber-Physical Systems in Caregiving Environments

Cyber-Physical Systems and Healthcare Initiatives

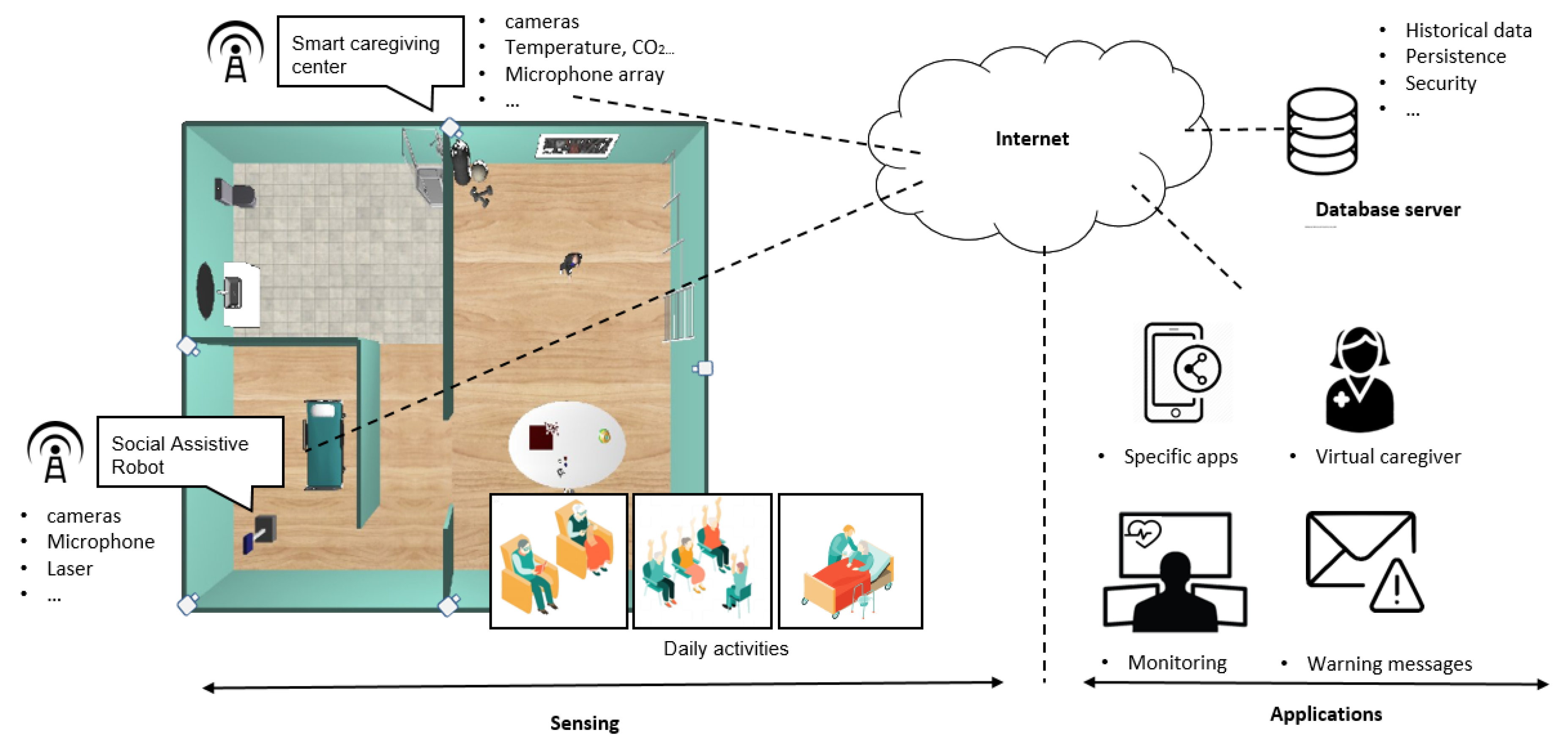

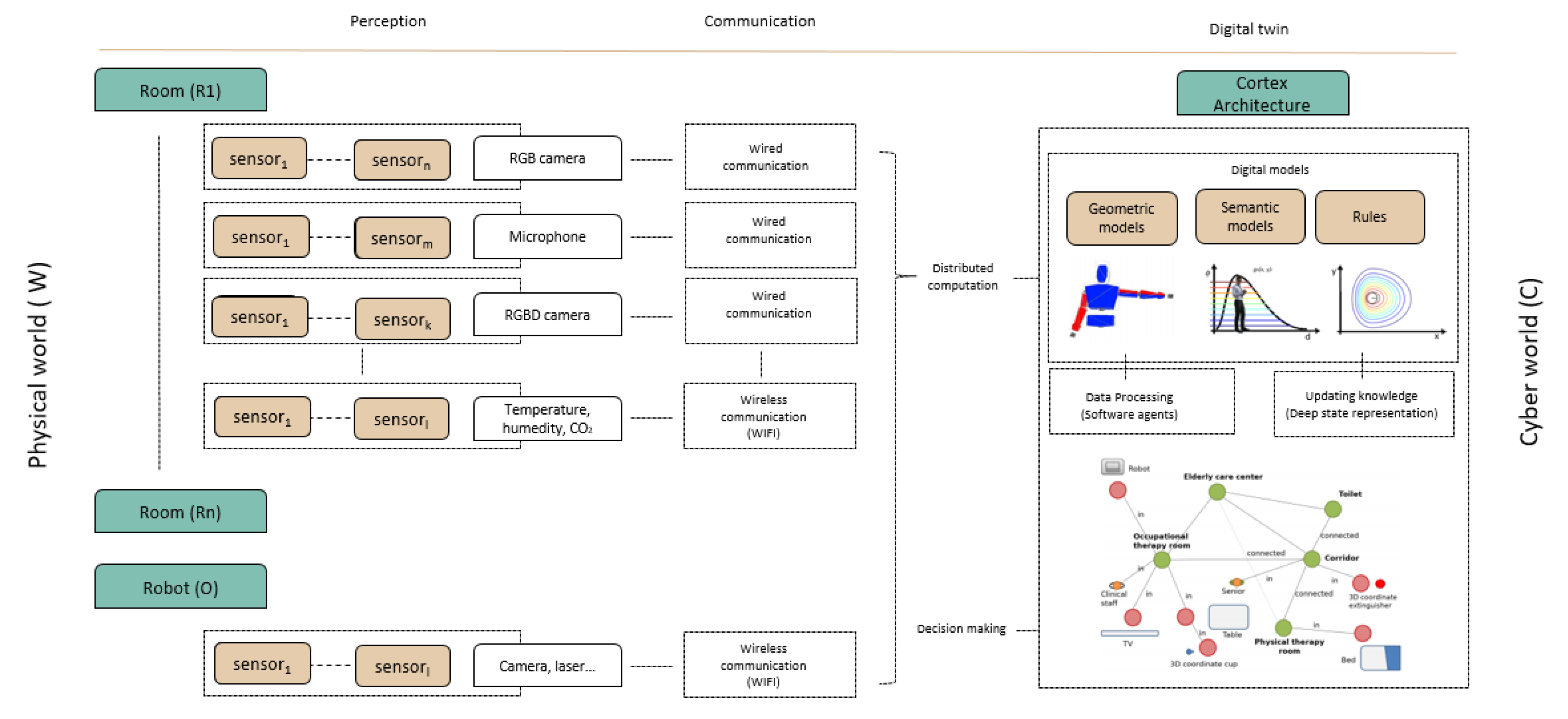

3. Cyber-Physical System for Caregiving Centers

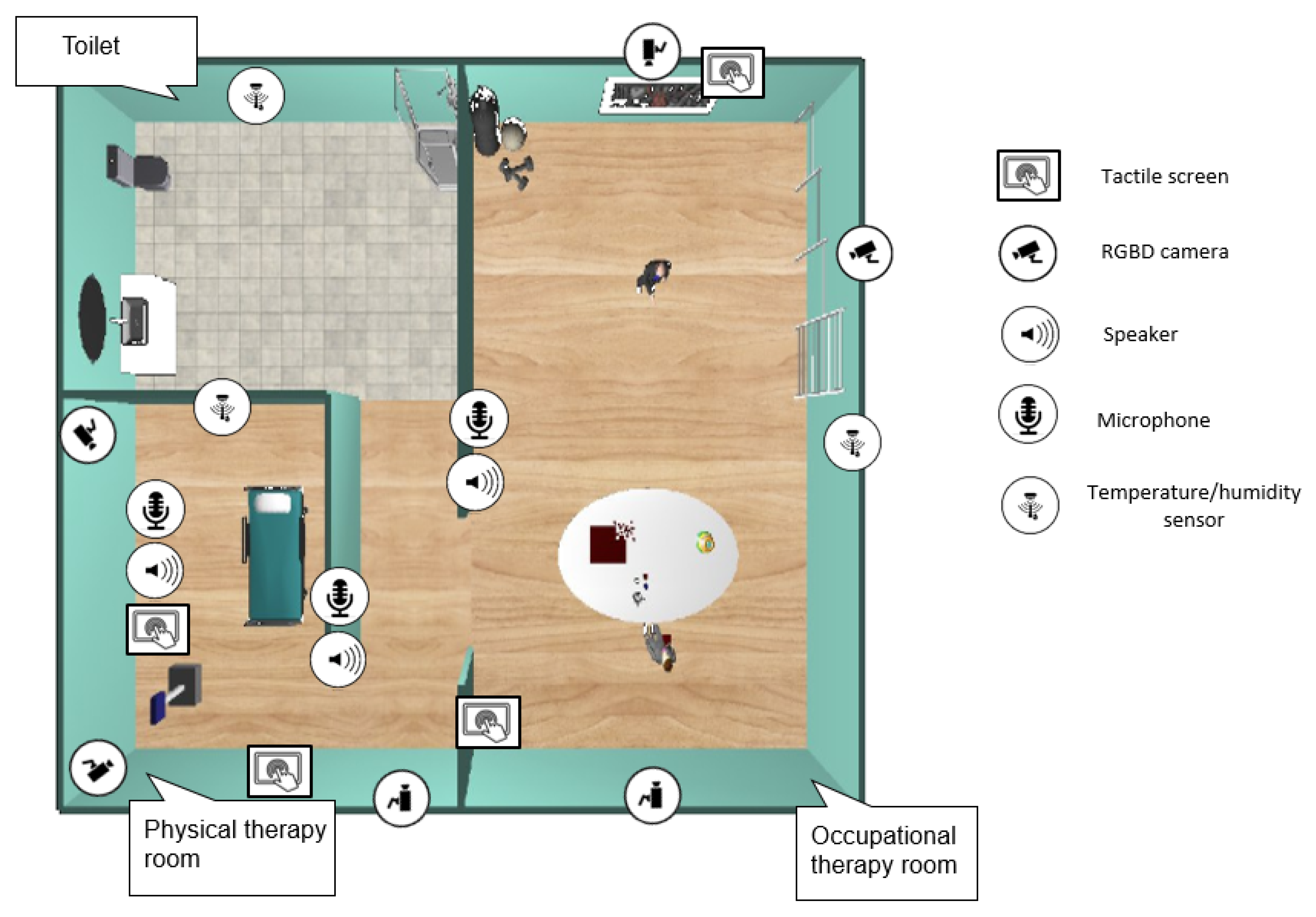

3.1. Designing the Physical World

3.1.1. Ambient Assisted Living

3.1.2. Socially Assistive Robot

3.2. Data Storage Subsystem

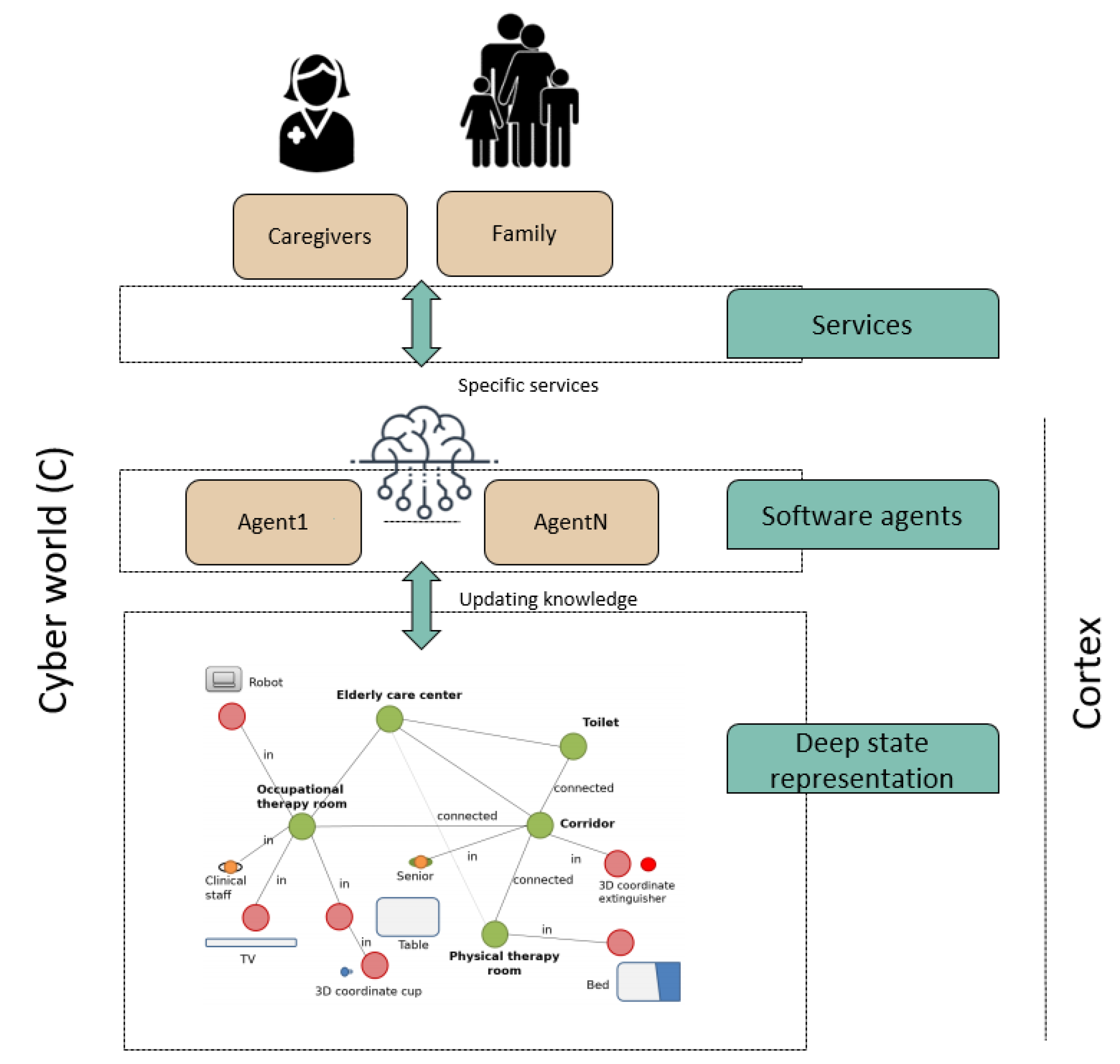

3.3. Designing the Cyber-World

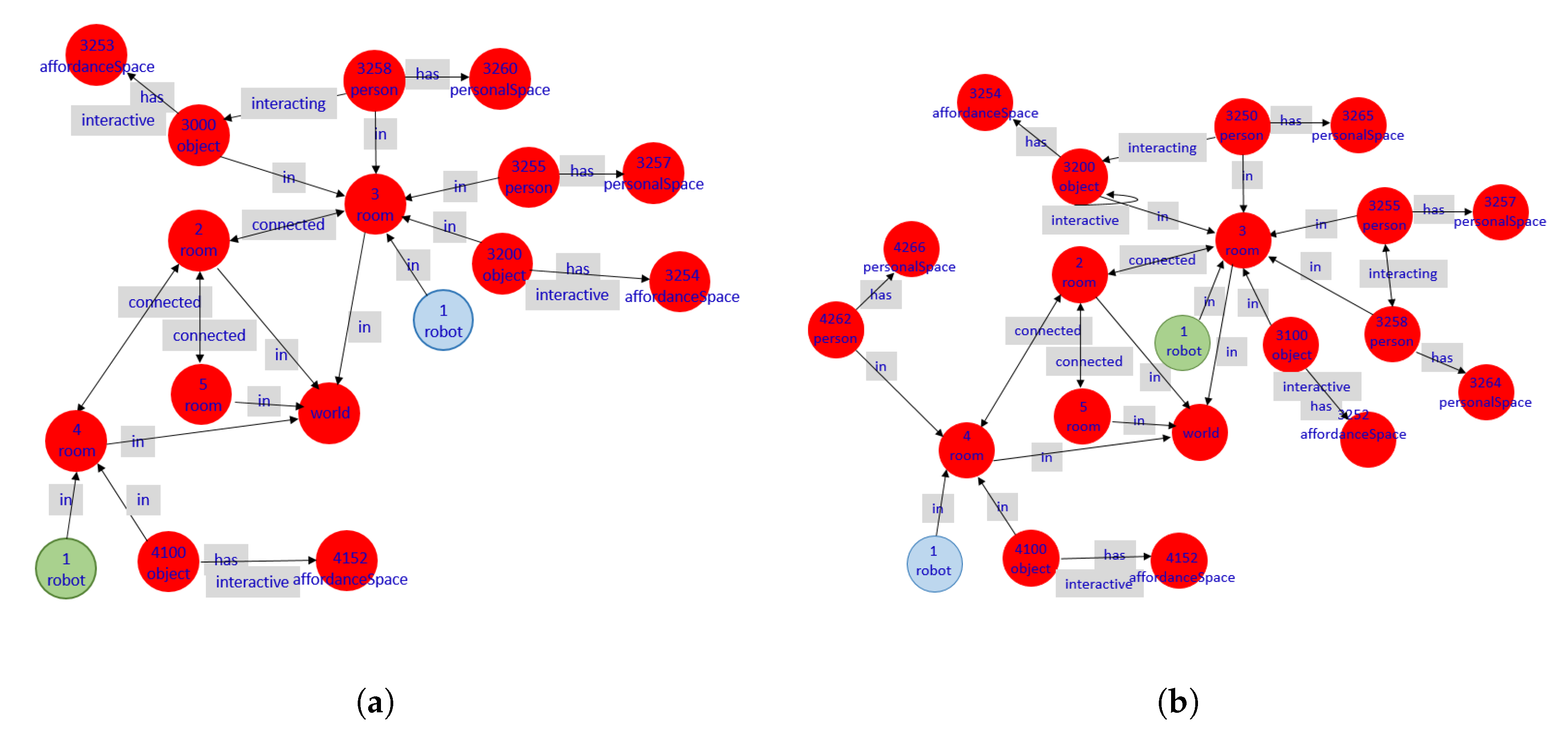

Digital Twin Model

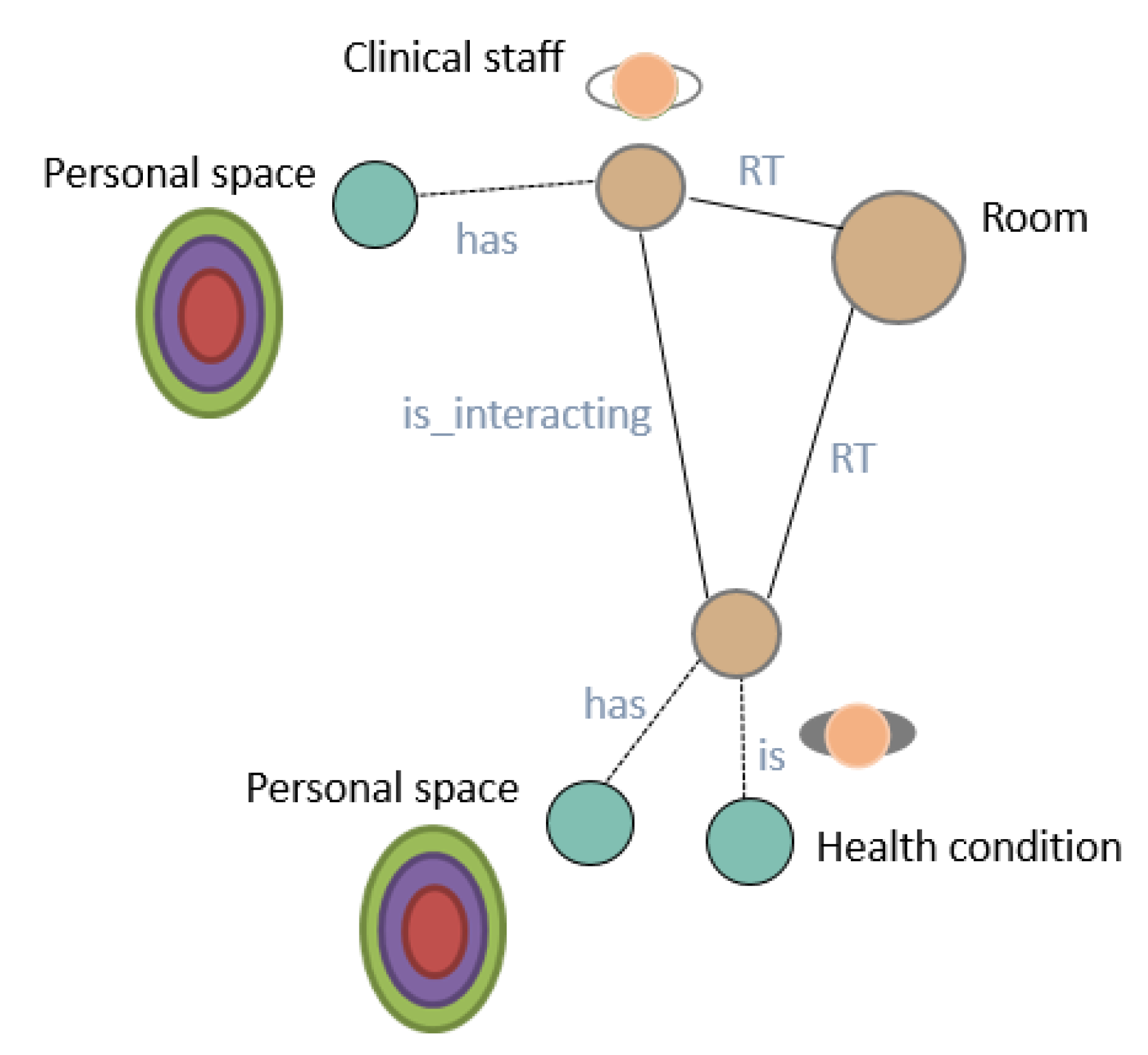

- Deep State Representation. Figure 6 shows a simple example of the DSR for a room with a person inside. The DSR is a directed graph in which symbolic information is expressed as logic attributes related by predicates; attributes are stored in nodes and predicates in edges. The clinical staff and senior nodes are geometrical entities, both linked to the room by rigid transformations. Moreover, the senior has a particular health condition (an agent updates this information in the graph), the senior and the clinical staff are interacting with each other (an agent also annotates this situation in the graph), and each one has a specific model of their personal space (prior knowledge based on proxemics) that is used for decision making during social robot navigation.

  Formally, the nodes N of the graph store information that can be symbolic, geometric, or a mix of both. Metric information attached to a node, such as temperature or humidity, is directly related to the physical world. The edges E represent relationships between symbols. Two nodes may have several kinds of relationships, but only one of them can be geometric, and it is expressed with a fixed label.
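The graph structure described above can be sketched in a few lines of Python. This is only an illustration, not the DSR implementation used in CORTEX: the class names, the attribute keys, the pose values, and in particular the `"RT"` label chosen for the geometric edge are assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A graph node: a symbol plus optional metric attributes."""
    name: str
    kind: str
    attrs: dict = field(default_factory=dict)


class DSRGraph:
    """Directed graph whose edges carry labels (hypothetical sketch)."""

    GEOMETRIC = "RT"  # assumed label for the rigid-transformation edge

    def __init__(self):
        self.nodes = {}
        self.edges = {}  # (src, dst) -> {label: payload}

    def add_node(self, node):
        self.nodes[node.name] = node

    def add_edge(self, src, dst, label, payload=None):
        if src not in self.nodes or dst not in self.nodes:
            raise KeyError("both endpoints must exist in the graph")
        # Edges are keyed by label, so a node pair can hold several
        # relationships but only one edge with the geometric label.
        self.edges.setdefault((src, dst), {})[label] = payload

    def labels(self, src, dst):
        return sorted(self.edges.get((src, dst), {}))


# Illustrative content: a room, a senior, and a member of the clinical staff.
dsr = DSRGraph()
dsr.add_node(Node("room", "room", {"temperature_c": 22.5}))
dsr.add_node(Node("senior", "person", {"health": "stable"}))
dsr.add_node(Node("staff", "person"))
dsr.add_edge("room", "senior", DSRGraph.GEOMETRIC, (1.0, 2.0, 0.0))
dsr.add_edge("room", "staff", DSRGraph.GEOMETRIC, (1.5, 2.5, 3.14))
dsr.add_edge("staff", "senior", "interacting")
# Updating the pose overwrites the single geometric edge instead of adding one.
dsr.add_edge("room", "senior", DSRGraph.GEOMETRIC, (1.2, 2.0, 0.1))
```

Keying edges by label is one simple way to keep a single geometric relationship per node pair while still allowing any number of symbolic ones.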

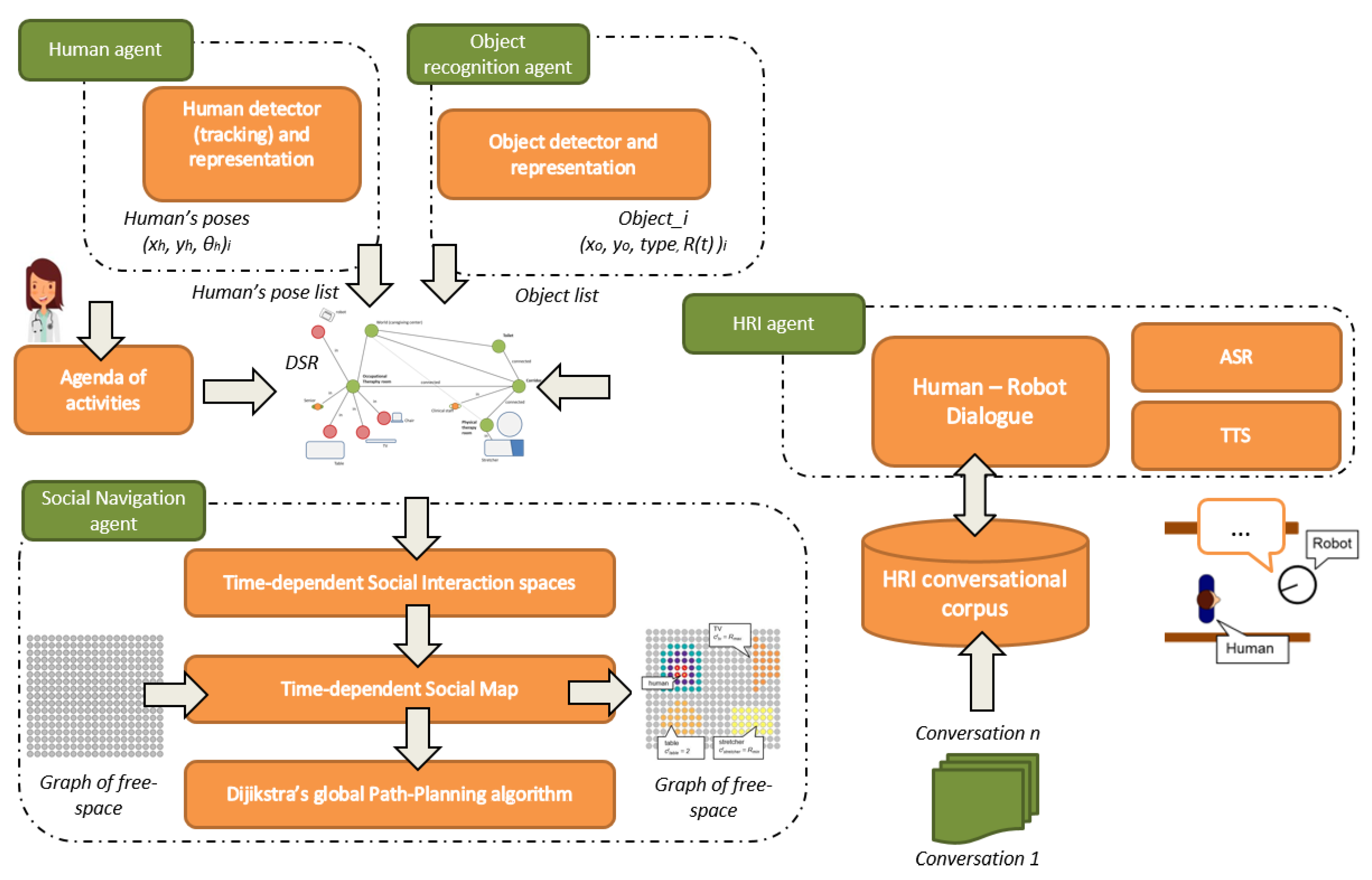

- CORTEX is a cognitive architecture for robots, described as a group of agents that cooperate through the DSR to achieve a particular goal. The agents in CORTEX are conceptual entities implemented with one or more software components. They provide classic robotics functionalities, such as navigation, manipulation, person perception, object perception, conversation, reasoning, symbolic learning, or planning [18].

  In the proposed CPS-AAL, the network of sensors distributed in the environment enriches the DSR and thereby extends the initial capabilities of the CORTEX agents. The agents also implement the actions that the CPS-AAL must carry out for elderly care: proposing serious games, notifying the end of a session, or interacting with the user. A brief description of the principal agents is provided next:

- Object recognition: The object recognition agent recognizes objects in the environment and estimates their position. Each identified object is stored in the DSR as a node, and its position and orientation are updated in the corresponding link.

- Human recognition: This agent detects and tracks people. It adds the detected humans to the DSR, generates their social interaction spaces, and keeps them updated over time. The navigation agent uses this information to account for humans on the robot's route and to adjust its motion so that it conforms more closely to social norms.

- Human-robot interaction: Agent in charge of human-robot interaction (HRI). This agent provides tools for collaboration and communication between humans and robots. The agent implements capabilities such as holding small conversations, detecting voice commands, or requesting information about unknown objects.

- Planner (Executive): This agent is responsible for high-level planning, supervising the changes made in the DSR by the agents, and the correct execution of the plan. It integrates the AGGL planner [23] based on PDDL. The stages of the plan are completed through the collaboration of different agents. The DSR is updated and reflects the actions of each stage. This information allows the agent to use the current state of the DSR, the domain, the target, and the previous stage to update the running plan accordingly.

- Navigation: This agent navigates in compliance with social rules. To this end, it handles social path-planning and SLAM, and it updates and maintains the location of the robot in the DSR.
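The cooperation pattern described above, in which agents never call each other directly but read and write a shared representation, can be illustrated with a minimal sketch. It is not the CORTEX API: `SharedState`, the agent classes, their `step` methods, and the pose tuples are all hypothetical names and values introduced for the example.

```python
class SharedState:
    """Stand-in for the DSR: named entries that every agent can read and write."""

    def __init__(self):
        self.entries = {}


class HumanRecognitionAgent:
    def step(self, state, detections):
        # Publish each detected person and their pose into the shared state.
        for name, pose in detections.items():
            state.entries[name] = {"kind": "person", "pose": pose}


class NavigationAgent:
    def step(self, state, robot_pose):
        # Maintain the robot pose and report which people the planner must consider.
        state.entries["robot"] = {"kind": "robot", "pose": robot_pose}
        return sorted(n for n, e in state.entries.items() if e["kind"] == "person")


state = SharedState()
HumanRecognitionAgent().step(state, {"senior": (2.0, 1.0, 0.0)})
people = NavigationAgent().step(state, (0.0, 0.0, 0.0))
```

Because the navigation agent only depends on what is stored in the shared state, a person detected by a ceiling camera and a person detected by the robot's own sensors would be handled in the same way.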

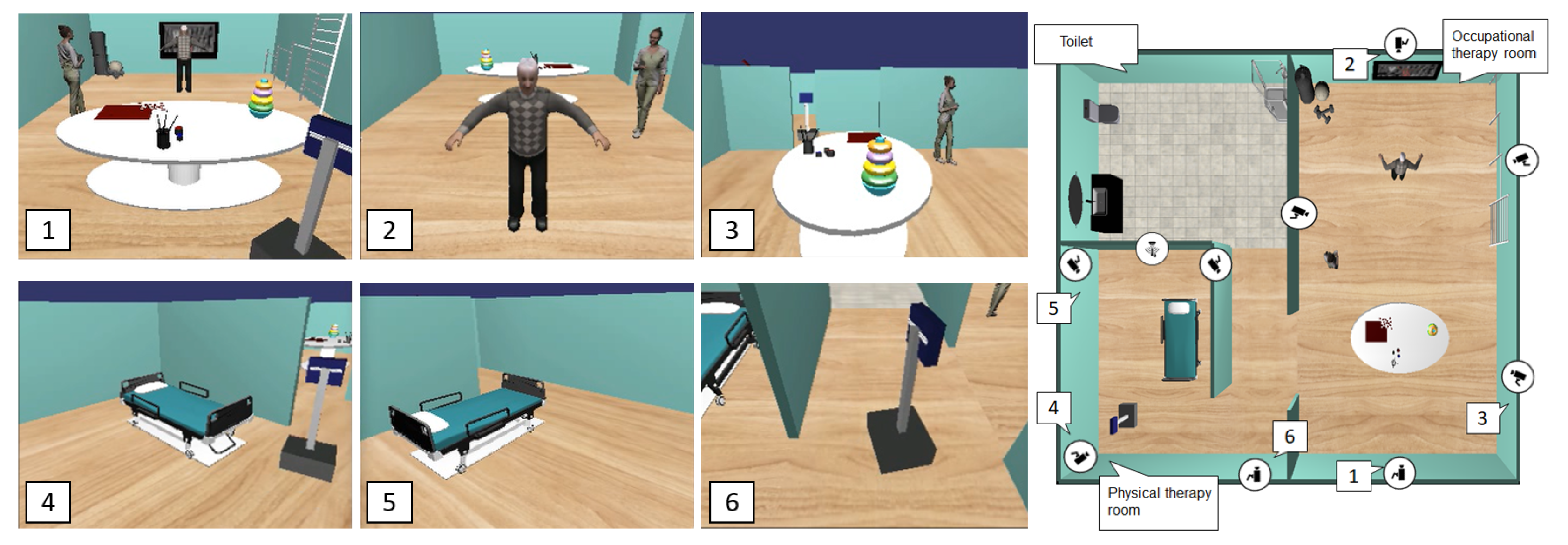

4. Use Case: Social Robot Navigation in Caregiving Center

4.1. Problem Statement

4.2. Use-Case Definition

4.3. Social Robot Navigation Framework Based on CPS-AAL

4.3.1. Social Mapping Based on Interaction Spaces

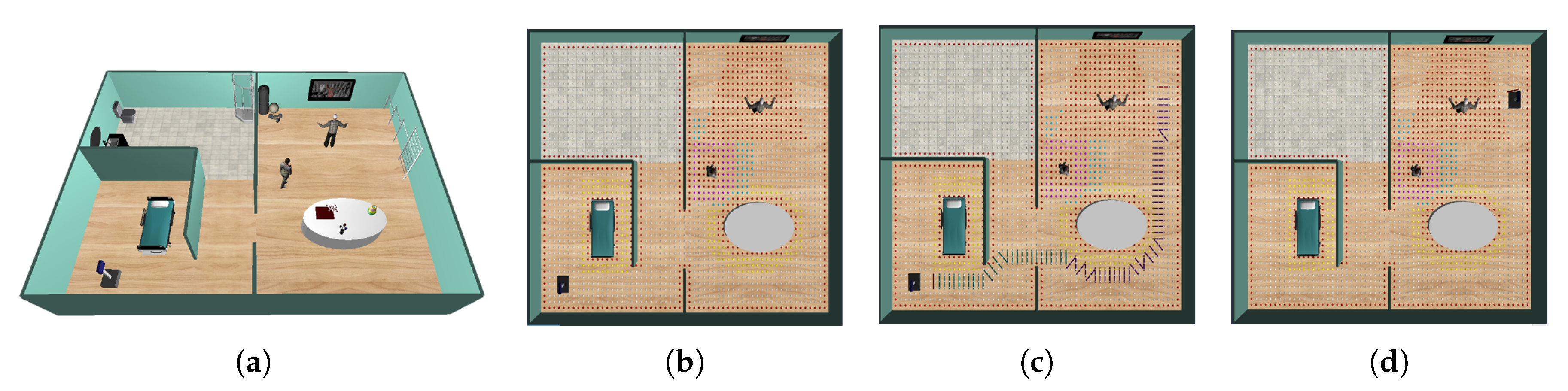

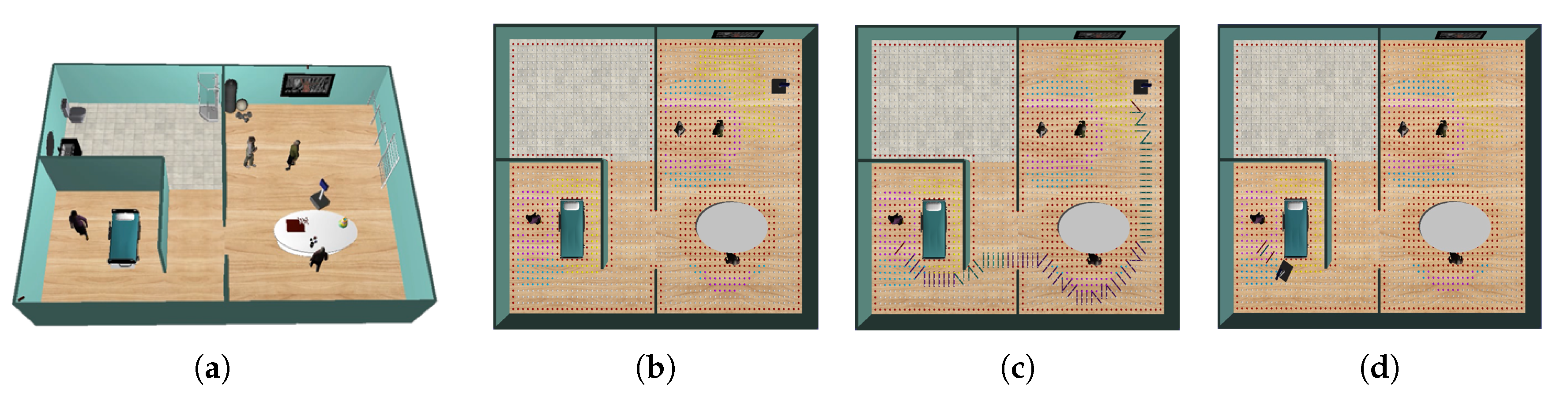

- Social mapping: people in the environment. Consider the set of n humans detected by the software agent, each described by its pose in the environment. To model the personal space of each individual, an asymmetric 2-D Gaussian function is used [30]. Its three coefficients account for the rotation of the function and are defined in terms of two variances: the variance to the left and right of the person, and the variance along the person's heading direction, which takes one value toward the front and another toward the rear. See [30] for details.

- Social mapping: space affordances and activity spaces. Consider the set of M objects with which humans interact in the environment. The position and type of these objects are known to the CPS-AAL. Each object stores, as attributes, its pose and its interaction space, that is, the space required to interact with it. Different objects have different interaction spaces. For example, the table used for therapies has a smaller interaction space than the TV, because watching TV can be done from a greater distance.
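The personal-space model in the first item can be sketched as follows. The code uses the widely used rotated asymmetric Gaussian, with three rotation coefficients and separate variances for the front, the sides, and the rear; whether it matches the formulation in [30] term by term is an assumption. The function name, the parameter names, and the default variances are illustrative, not values from the paper.

```python
import math


def personal_space(x, y, hx, hy, theta, sigma_h=2.0, sigma_s=1.3, sigma_r=1.0):
    """Asymmetric 2-D Gaussian centred on a human at (hx, hy) facing theta.

    sigma_h, sigma_s and sigma_r (front, side, rear) are illustrative defaults.
    """
    dx, dy = x - hx, y - hy
    # Angle of the query point relative to the heading, wrapped to [-pi, pi].
    alpha = math.atan2(dy, dx) - theta
    alpha = math.atan2(math.sin(alpha), math.cos(alpha))
    # Points in front of the person use the heading variance, points behind the rear one.
    sigma = sigma_h if abs(alpha) <= math.pi / 2 else sigma_r
    # Coefficients of the Gaussian rotated by the person's orientation.
    k1 = math.cos(theta) ** 2 / (2 * sigma ** 2) + math.sin(theta) ** 2 / (2 * sigma_s ** 2)
    k2 = math.sin(2 * theta) / (4 * sigma ** 2) - math.sin(2 * theta) / (4 * sigma_s ** 2)
    k3 = math.sin(theta) ** 2 / (2 * sigma ** 2) + math.cos(theta) ** 2 / (2 * sigma_s ** 2)
    return math.exp(-(k1 * dx ** 2 + 2 * k2 * dx * dy + k3 * dy ** 2))


# A person at the origin facing along +x, sampled 1 m away in three directions.
front = personal_space(1.0, 0.0, 0.0, 0.0, 0.0)
side = personal_space(0.0, 1.0, 0.0, 0.0, 0.0)
rear = personal_space(-1.0, 0.0, 0.0, 0.0, 0.0)
```

With these defaults the function decays most slowly in front of the person and fastest behind, so a contour of constant value extends further ahead than to the rear.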

4.3.2. Socially Acceptable Path-Planning Approach

- Graph-based grid mapping. Space is represented by a graph of n nodes regularly distributed in the environment. Each node has two parameters: availability and cost. The availability of a node is a Boolean variable whose value is 1 if the space is free and 0 otherwise. The cost indicates the traversal cost of a node, i.e., what it takes for the robot to visit that node (high values indicate that the robot should avoid the node). Initially, all nodes have the same cost of 1 (see [32] for details).

- Social graph-based grid mapping. The space graph includes the social interaction spaces of individuals, groups of people, and objects. The availability and cost parameters of each node in these regions are modified accordingly (see [32] for details).
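The two steps above can be sketched as a grid of nodes with an availability flag and a cost, followed by a shortest-path search. The sketch assumes 4-connected neighbours and uses Dijkstra's algorithm, charging each step the cost of the node entered; the function names, the grid size, and the cost values are hypothetical, and the planner in [32] may differ in all of these respects.

```python
import heapq


def build_grid(width, height):
    """Regular grid of nodes; every node starts free with a cost of 1."""
    return {(x, y): {"free": True, "cost": 1.0}
            for x in range(width) for y in range(height)}


def add_social_region(grid, cells, cost=None, block=False):
    """Raise the cost of, or forbid, the nodes covered by an interaction space."""
    for cell in cells:
        if cell in grid:
            if block:
                grid[cell]["free"] = False
            if cost is not None:
                grid[cell]["cost"] = cost


def dijkstra(grid, start, goal):
    """Cheapest 4-connected path from start to goal, or None if unreachable."""
    dist = {start: 0.0}
    prev = {}
    queue = [(0.0, start)]
    while queue:
        d, node = heapq.heappop(queue)
        if node == goal:
            break
        if d > dist[node]:
            continue  # stale queue entry
        x, y = node
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            if nxt not in grid or not grid[nxt]["free"]:
                continue
            nd = d + grid[nxt]["cost"]
            if nd < dist.get(nxt, float("inf")):
                dist[nxt] = nd
                prev[nxt] = node
                heapq.heappush(queue, (nd, nxt))
    if goal not in dist:
        return None
    path = [goal]
    while path[-1] != start:
        path.append(prev[path[-1]])
    return path[::-1]


grid = build_grid(5, 3)
plain_path = dijkstra(grid, (0, 1), (4, 1))
# A person stands at (2, 1): that node is forbidden and one side is made costly.
add_social_region(grid, [(2, 1)], block=True)
add_social_region(grid, [(2, 0)], cost=5.0)
social_path = dijkstra(grid, (0, 1), (4, 1))
```

In this toy example the plain search crosses the grid in a straight line, while the social search detours around the blocked node on the cheaper side, which mirrors the longer but less intrusive paths reported for the social planner.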

4.4. Experimental Results and Discussion

- Average distance to the closest human during navigation: the average distance from the robot pose to the closest human, taken over the N points of the path planned by the agent.

- Distance traveled: length of the path planned by the navigation framework, in meters.

- Navigation time (τ): time elapsed from the moment the robot starts navigating until it arrives at the target.

- Cumulative Heading Changes (CHC): a measure of the cumulative heading changes of the robot during navigation [38]. Angles are normalized to a fixed interval.

- Personal space intrusions (Ψ): In this paper, four different areas are defined according to the distance between the robot and the closest human: intimate, personal, social, and public. This metric measures the percentage of time spent in each area along the robot's path, computed with an indicator function over the distance range that defines each area.
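The metrics listed above can be computed from a sampled path along the following lines. Several simplifications are assumed: the path is a list of 2-D points sampled at a uniform rate (so the share of samples stands in for the share of time), CHC is taken as the plain sum of absolute wrapped heading differences, and the zone limits are the distances commonly quoted from proxemics (0.45 m, 1.2 m, and 3.6 m) rather than the ranges used in the paper, which are not recoverable here. All function names are hypothetical.

```python
import math


def path_length(path):
    """Length of a polyline path, in the units of its coordinates."""
    return sum(math.dist(a, b) for a, b in zip(path, path[1:]))


def mean_closest_human_distance(path, humans):
    """Average, over the path points, of the distance to the nearest human."""
    return sum(min(math.dist(p, h) for h in humans) for p in path) / len(path)


def cumulative_heading_changes(headings):
    """Sum of absolute heading changes, each wrapped to [-pi, pi]."""
    total = 0.0
    for a, b in zip(headings, headings[1:]):
        total += abs(math.atan2(math.sin(b - a), math.cos(b - a)))
    return total


# Upper limits in metres: assumed values, not taken from the paper.
ZONES = (("intimate", 0.45), ("personal", 1.2), ("social", 3.6), ("public", math.inf))


def space_intrusions(path, humans):
    """Percentage of path samples that fall in each proxemic zone."""
    counts = {name: 0 for name, _ in ZONES}
    for p in path:
        d = min(math.dist(p, h) for h in humans)
        for name, upper in ZONES:
            if d <= upper:
                counts[name] += 1
                break
    return {name: 100.0 * c / len(path) for name, c in counts.items()}


path = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
humans = [(3.0, 1.0)]
length = path_length(path)
mean_d = mean_closest_human_distance(path, humans)
chc = cumulative_heading_changes([0.0, math.pi / 2, 0.0])
psi = space_intrusions(path, humans)
```

For this short straight path that ends 1 m from a single person, three of the four samples fall in the social zone and the last one in the personal zone.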

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AAL | Ambient Assisted Living |
| CPS | Cyber-Physical System |
| DSR | Deep State Representation |
| IoT | Internet of Things |
| SAR | Socially Assistive Robot |

References

1. Corporate Authors. World Population Prospects 2019. Highlights Technical Report, United Nations. 2019. Available online: https://population.un.org/wpp/Publications/Files/WPP2019_Highlights.pdf (accessed on 15 June 2020).

2. Corporate Authors. Ageing Europe. Looking at the Lives of Older People in the EU. Technical Report, Eurostat. 2019. Available online: https://ec.europa.eu/eurostat/statistics-explained/index.php?title=Ageing_Europe_-_looking_at_the_lives_of_older_people_in_the_EU (accessed on 15 June 2020).

3. Haque, S.; Aziz, S.; Rahman, M. Review of Cyber-Physical System in Healthcare. Int. J. Distrib. Sens. Netw. 2014, 2014, 20.

4. Broekens, J.; Heerink, M.; Rosendal, H. Assistive social robots in elderly care: A review. Gerontechnology 2009, 8, 94–103.

5. Blackman, S.; Matlo, C.; Bobrovitskiy, C.; Waldoch, A.; Fang, M.L.; Jackson, P.; Mihailidis, A.; Nygård, L.; Astell, A.; Sixsmith, A. Ambient assisted living technologies for aging well: A scoping review. Int. J. Intell. Syst. 2016, 25, 55–69.

6. Serpanos, D. The Cyber-Physical Systems revolution. Computer 2018, 51, 70–73.

7. Jamaludin, J.; Rohani, J. Cyber-Physical System (CPS): State of the Art. In Proceedings of the 2018 International Conference on Computing, Electronic and Electrical Engineering (ICE Cube), Quetta, Pakistan, 12–13 November 2018; pp. 1–5.

8. Bhrugubanda, M. A review on applications of Cyber Physical Systems information. Int. J. Innov. Sci. Eng. Technol. 2015, 728–730.

9. Lee, E.; Seshia, S. Introduction to Embedded Systems: A Cyber-Physical Systems Approach; MIT Press: Cambridge, MA, USA, 2017.

10. Lee, J.; Bagheri, B.; Kao, H.A. A Cyber-Physical Systems architecture for Industry 4.0-based manufacturing systems. SME Manuf. Lett. 2014, 3.

11. Nie, J.; Sun, R.; Li, X. A precision agriculture architecture with Cyber-Physical Systems design technology. Appl. Mech. Mater. 2014, 543–547, 1567–1570.

12. Zhang, Y.; Qiu, M.; Tsai, C.W.; Hassan, M.; Alamri, A. Health-CPS: Healthcare Cyber-Physical System Assisted by Cloud and Big Data. IEEE Syst. J. 2015, 11, 1–8.

13. Leng, J.; Zhang, H.; Yan, D.; Liu, Q.; Chen, X.; Zhang, D. Digital twin-driven manufacturing cyber-physical system for parallel controlling of smart workshop. J. Ambient Intell. Humaniz. Comput. 2019, 10, 1155–1166.

14. Alam, K.M.; El Saddik, A. C2PS: A digital twin architecture reference model for the Cloud-based Cyber-Physical Systems. IEEE Access 2017, PP, 1.

15. Rahman, A.; Hossain, M.S. A cloud-based virtual caregiver for elderly people in a cyber physical IoT system. Cluster Comput. 2019, 22.

16. Dimitrov, V.; Jagtap, V.; Wills, M.; Skorinko, J.; Padir, T. A cyber physical system testbed for assistive robotics technologies in the home. In Proceedings of the International Conference on Advanced Robotics, Istanbul, Turkey, 27–31 July 2015; pp. 323–328.

17. Manso, L.; Bachiller, P.; Bustos, P.; Núñez, P.; Cintas, R.; Calderita, L. RoboComp: A tool-based robotics framework. In Proceedings of the International Conference on Simulation, Modeling, and Programming for Autonomous Robots, Darmstadt, Germany, 15–18 November 2010; Volume 6472, pp. 251–262.

18. Calderita, L.V. Deep State Representation: An Unified Internal Representation for the Robotics Cognitive Architecture CORTEX. Ph.D. Thesis, Universidad de Extremadura, Extremadura, Spain, 2016.

19. Bonaccorsi, M.; Fiorini, L.; Cavallo, F.; Saffiotti, A.; Dario, P. A Cloud robotics solution to improve social assistive robots for active and healthy aging. Int. J. Soc. Rob. 2016, 8.

20. Romero-Garcés, A.; Calderita, L.V.; Martínez-Gómez, J.; Bandera, J.P.; Marfil, R.; Manso, L.J.; Bustos, P.; Bandera, A. The cognitive architecture of a robotic salesman. In Proceedings of the Conferencia de la Asociación Española para la Inteligencia Artificial CAEPIA’15, Albacete, Spain, 9–12 November 2015; pp. 16–24.

21. Romero-Garcés, A.; Calderita, L.V.; Martínez-Gómez, J.; Bandera, J.P.; Marfil, R.; Manso, L.J.; Bandera, A.; Bustos, P. Testing a fully autonomous robotic salesman in real scenarios. In Proceedings of the 2015 IEEE International Conference on Autonomous Robot Systems and Competitions, Vila Real, Portugal, 8–10 April 2015; pp. 124–130.

22. Bustos, P.; Manso, L.J.; Bandera, A.J.; Bandera, J.P.; Garcia-Varea, I.; Martinez-Gomez, J. The CORTEX cognitive robotics architecture: Use cases. Cogn. Syst. Res. 2019, 55, 107–123.

23. Manso, L.; Calderita, L.; Bustos, P.; Bandera, A. Use and advances in the active grammar-based modeling architecture. J. Phys. Agents 2016, 8, 33–38.

24. Vega, A.; Manso, L.J.; Cintas, R.; Núñez, P. Planning human-robot interaction for social navigation in crowded environments. In Proceedings of the Workshop of Physical Agents, Madrid, Spain, 22–23 November 2018; pp. 195–208.

25. Kruse, T.; Pandey, A.K.; Alami, R.; Kirsch, A. Human-aware robot navigation: A survey. Rob. Autom. Syst. 2013, 61, 1726–1743.

26. Rios-Martinez, J.; Spalanzani, A.; Laugier, C. From Proxemics Theory to socially-aware navigation: A survey. Int. J. Social Rob. 2014, 7, 137–153.

27. Charalampous, K.; Kostavelis, I.; Gasteratos, A. Recent trends in social aware robot navigation: A survey. Rob. Autom. Syst. 2017, 93.

28. Papadakis, P.; Spalanzani, A.; Laugier, C. Social mapping of human-populated environments by implicit function learning. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems, Tokyo, Japan, 3–7 November 2013.

29. Charalampous, K.; Kostavelis, I.; Gasteratos, A. Robot navigation in large-scale social maps: An action recognition approach. Expert Syst. Appl. 2016, 66.

30. Vega, A.; Manso, L.J.; Macharet, D.G.; Bustos, P.; Núñez, P. Socially aware robot navigation system in human-populated and interactive environments based on an adaptive spatial density function and space affordances. Pattern Recognit. Lett. 2019, 118, 72–84.

31. Munaro, M.; Basso, F.; Menegatti, E. OpenPTrack: Open source multi-camera calibration and people tracking for RGB-D camera networks. Rob. Autom. Syst. 2015, 75.

32. Vega Magro, A.; Cintas, R.; Manso, L.; Bustos, P.; Núñez, P. Socially-accepted path planning for robot navigation based on social interaction spaces. In Proceedings of the Robot 2019: Fourth Iberian Robotics Conference, Advances in Intelligent Systems and Computing, Porto, Portugal, 20–22 November 2019; pp. 644–655.

33. Vega-Magro, A.; Calderita, L.V.; Bustos, P.; Núñez, P. Human-aware robot navigation based on time-dependent social interaction spaces: A use case for assistive robotics. In Proceedings of the 2020 IEEE International Conference on Autonomous Robot Systems and Competitions (ICARSC), Azores, Portugal, 15–17 April 2020; pp. 140–145.

34. Rios-Martinez, J. Socially-Aware Robot Navigation: Combining Risk Assessment and Social Conventions. Ph.D. Thesis, University of Grenoble, Grenoble, France, 2013.

35. Silva, A.D.G.; Macharet, D.G. Are you with me? Determining the association of individuals and the collective social space. In Proceedings of the 2019 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Macau, China, 3–8 November 2019; pp. 313–318.

36. Kostavelis, I.; Kargakos, A.; Giakoumis, D.; Tzovaras, D. Robot’s workspace enhancement with dynamic human presence for socially-aware navigation. In Proceedings of the International Conference on Computer Vision Systems, Shenzhen, China, 10–13 July 2017; pp. 279–288.

37. Okal, B.; Arras, K.O. Formalizing normative robot behavior. In Proceedings of the International Conference on Social Robotics (ICSR’16), Kansas City, MO, USA, 1–3 November 2016; pp. 62–71.

38. Okal, B.; Arras, K.O. Learning socially normative robot navigation behaviors with bayesian inverse reinforcement learning. In Proceedings of the 2016 IEEE International Conference on Robotics and Automation (ICRA), Stockholm, Sweden, 16–21 May 2016; pp. 2889–2895.

| Type | Data | Purpose | Format | Robotic application: Assistive | Robotic application: Social |
|---|---|---|---|---|---|
| Environmental | Temperature | Measure room temperature | Time series | x | |
| | Humidity | Measure room humidity | Time series | x | |
| | CO | Measure room CO ppm | Time series | x | |
| | People presence | Motion detection | Categorical | x | x |
| Personal | RGB/RGBD cameras | Monitoring and tracking, daily activity detection, … | Multimedia | x | x |
| | Microphone | Voice detection, HRI | Audio | x | x |
| | Speakers | Alerts and instructions, HRI | Audio | x | x |
| | Tactile TV/monitor | Visual information, HRI | Multimedia | x | x |
| | Sonar/laser | Robot navigation | Time series | x | |

| Actor | Action |
|---|---|
| Caregiver | The caregiver keeps the therapy schedule updated on the center’s calendar |
| Senior | The user performs his scheduled activity in the occupational therapy room |
| Physical World | |
| RGBD cameras | RGBD cameras collect the data of the caregiving center useful for navigation |
| Microphones/speakers | The microphones/speakers of the robot and the environment are used in the phase of interaction with the users |
| Communication | This data is sent via Ethernet |
| Digital Twin Model | |
| Object detection agent | The agent estimates the position of the objects and, if there have been changes, updates the DSR |
| Person detection agent | The agent estimates the position of the users and updates the DSR |
| Caregiving center management agent | When the time of the end of the activity is reached, the module triggers an alert service to the robot |
| SAR | Once the reminder is received, the robot launches its plan: to reach the occupational therapy room |
| Social navigation agent | The agent plans a socially acceptable path and navigates it to its goal |
| HRI agent | The agent interacts with users to warn them of the end of the activity |
| Senior | The user leaves the room |
| Physical World | Physical devices corroborate that users leave the room |

| Actor | Action |
|---|---|
| Caregiver | The caregiver keeps the therapy schedule updated on the center’s calendar |
| Senior | The user waits in the physical therapy room |
| Physical World | |
| RGBD cameras | RGBD cameras collect the data of the caregiving center useful for navigation |
| Microphones/speakers | The microphones/speakers of the robot and the environment are used in the phase of interaction with the users |
| Communication | This data is sent via Ethernet |
| Digital Twin Model | |
| Object detection agent | The agent estimates the position of the objects and, if there have been changes, updates the DSR |
| Person detection agent | The agent estimates the position of the users and updates the DSR |
| Caregiving center management agent | When the time of the end of the activity is reached, the module triggers an alert service to the robot |
| SAR | Once the reminder is received, the robot launches its plan: to reach the physical therapy room |
| Social navigation agent | The agent plans a socially acceptable path and navigates it to its goal |
| HRI agent | The agent interacts with users to warn them of the start of the activity |
| Physical therapy agent | The agent interacts with users and launches the therapy |
| Senior | The user performs the physical activity, interacting with the touch screen and by voice message |
| Physical World | The physical devices corroborate that the users correctly perform the activity proposed by the robot |

| Parameter | Social Path-Planning | Classical Dijkstra’s Path-Planning |
|---|---|---|
| (m) | 15.01 | 12.21 |
| τ (s) | 46.64 | 36.21 |
| CHC | 7.42 (1.27) | 8.23 |
| (m) | 2.88 | 1.23 |
| (m) | 2.14 | 1.13 |
| Ψ (Intimate) (%) | 0.0 | 0.0 |
| Ψ (Personal) (%) | 0.0 | 11 |
| Ψ (Social) (%) | 0.0 | 8 |
| Ψ (Public) (%) | 100.0 | 81.0 |

| Parameter | Social Path-Planning | Classical Dijkstra’s Path-Planning |
|---|---|---|
| (m) | 16.27 | 12.54 |
| τ (s) | 72.68 | 61.22 |
| CHC | 1.52 (0.6) | 2.32 |
| (m) | 4.13 | 3.35 |
| (m) | 2.70 | 0.85 |
| (m) | 1.125 | 3.45 |
| (m) | 1.318 | 1.318 |
| Ψ (Intimate) (%) | 0.0 | 2.23 |
| Ψ (Personal) (%) | 1.31 | 6.36 |
| Ψ (Social) (%) | 8.23 | 10.01 |
| Ψ (Public) (%) | 90.46 | 81.04 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Calderita, L.V.; Vega, A.; Barroso-Ramírez, S.; Bustos, P.; Núñez, P. Designing a Cyber-Physical System for Ambient Assisted Living: A Use-Case Analysis for Social Robot Navigation in Caregiving Centers. Sensors 2020, 20, 4005. https://doi.org/10.3390/s20144005
