Extrinsic Calibration between Camera and LiDAR Sensors by Matching Multiple 3D Planes

Abstract

1. Introduction

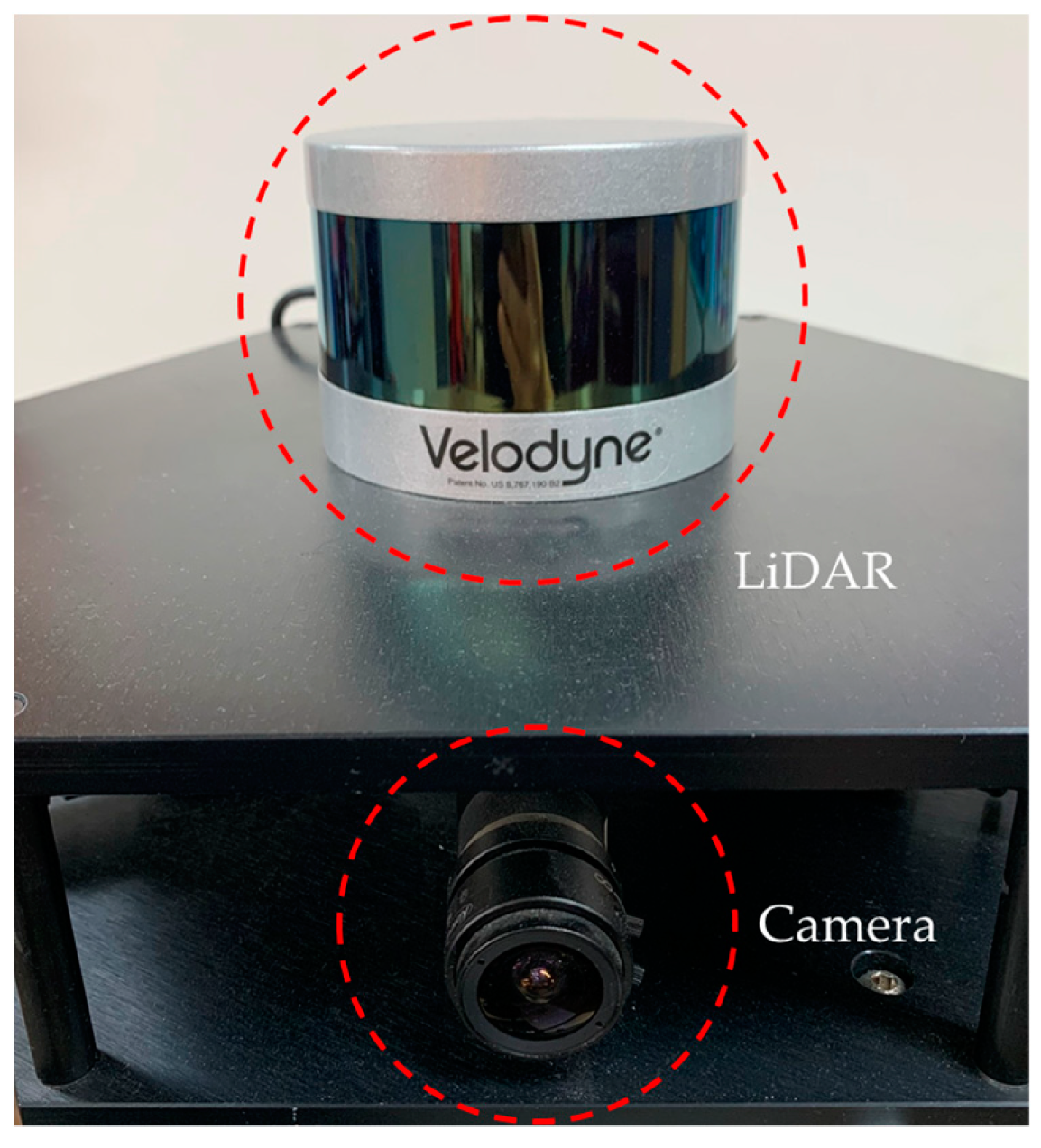

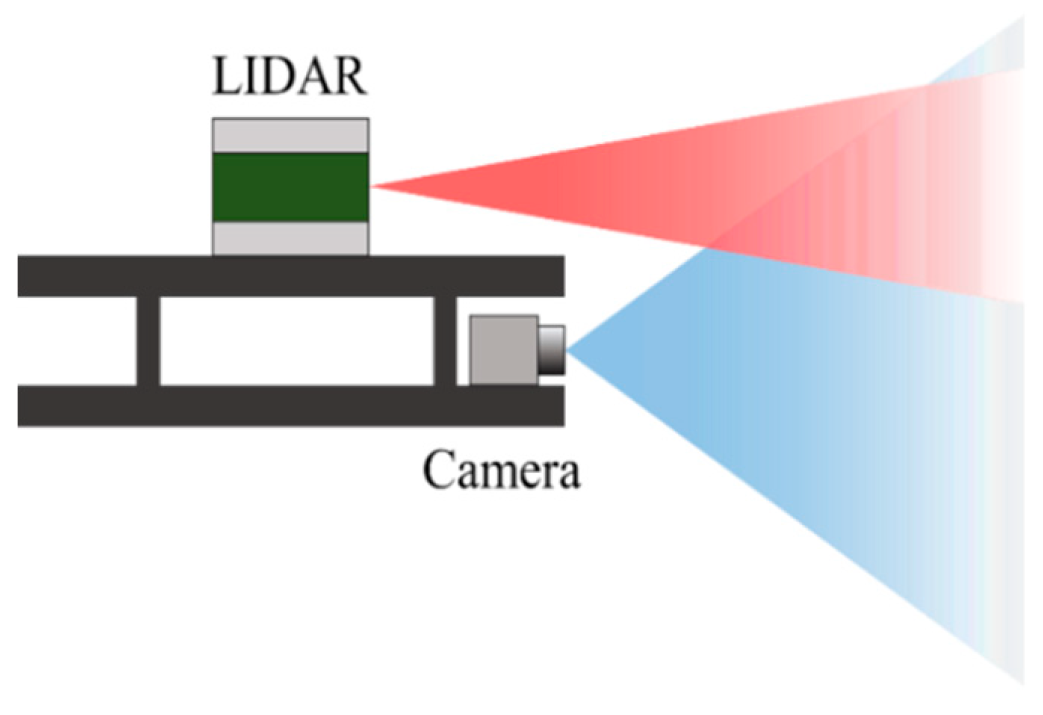

2. An Omnidirectional Camera–LiDAR System

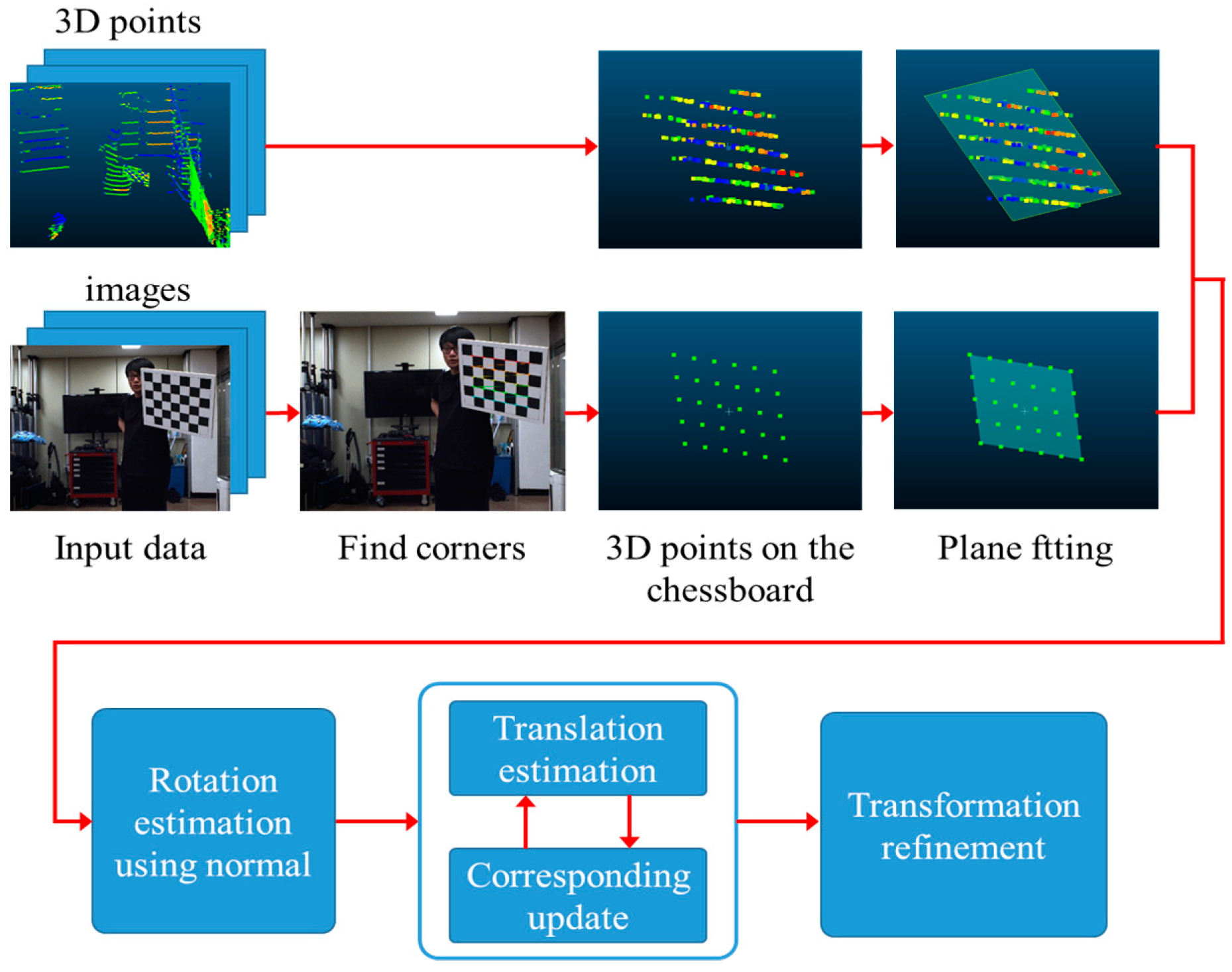

3. Extrinsic Calibration of the Camera–LiDAR System

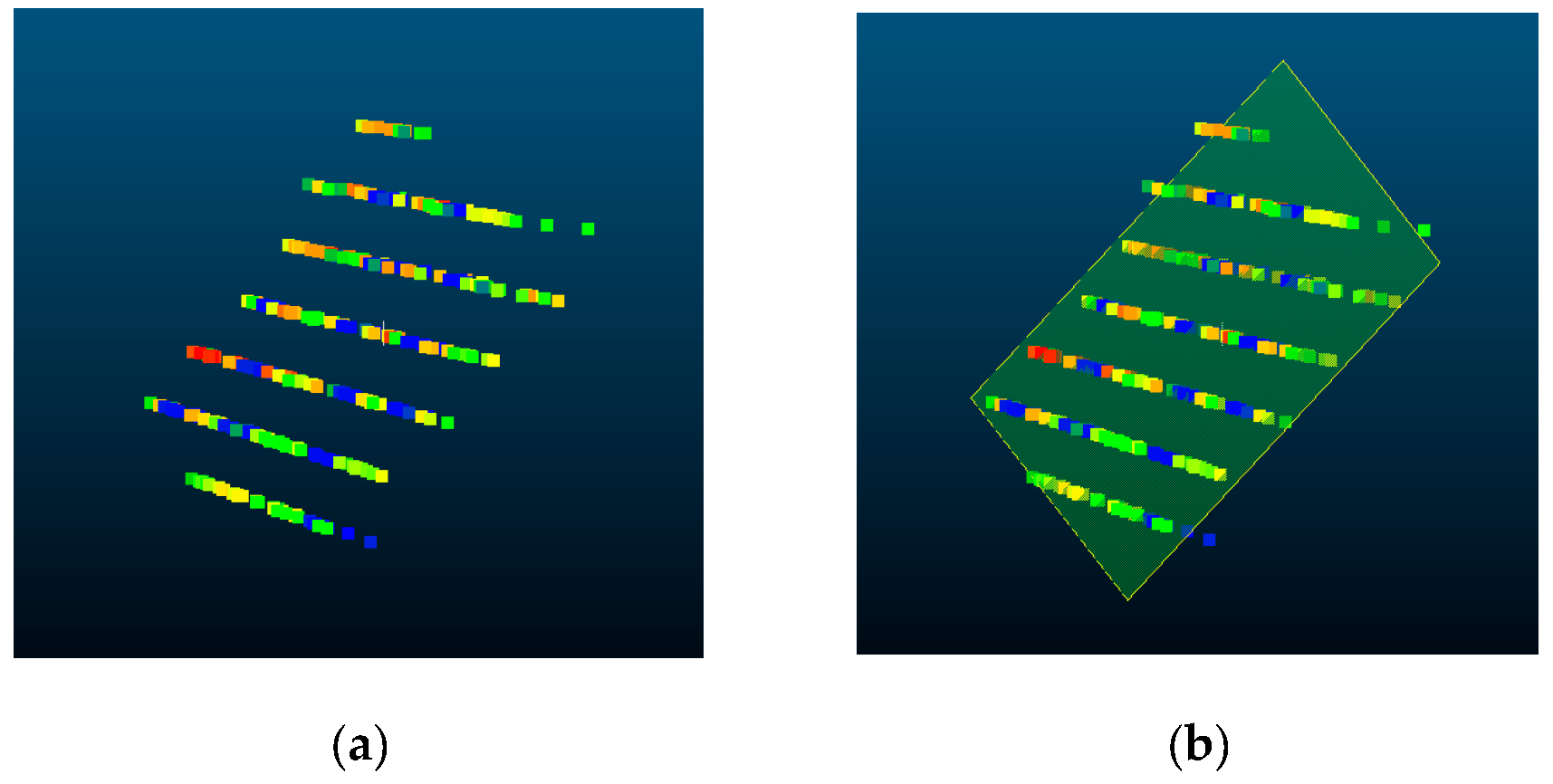

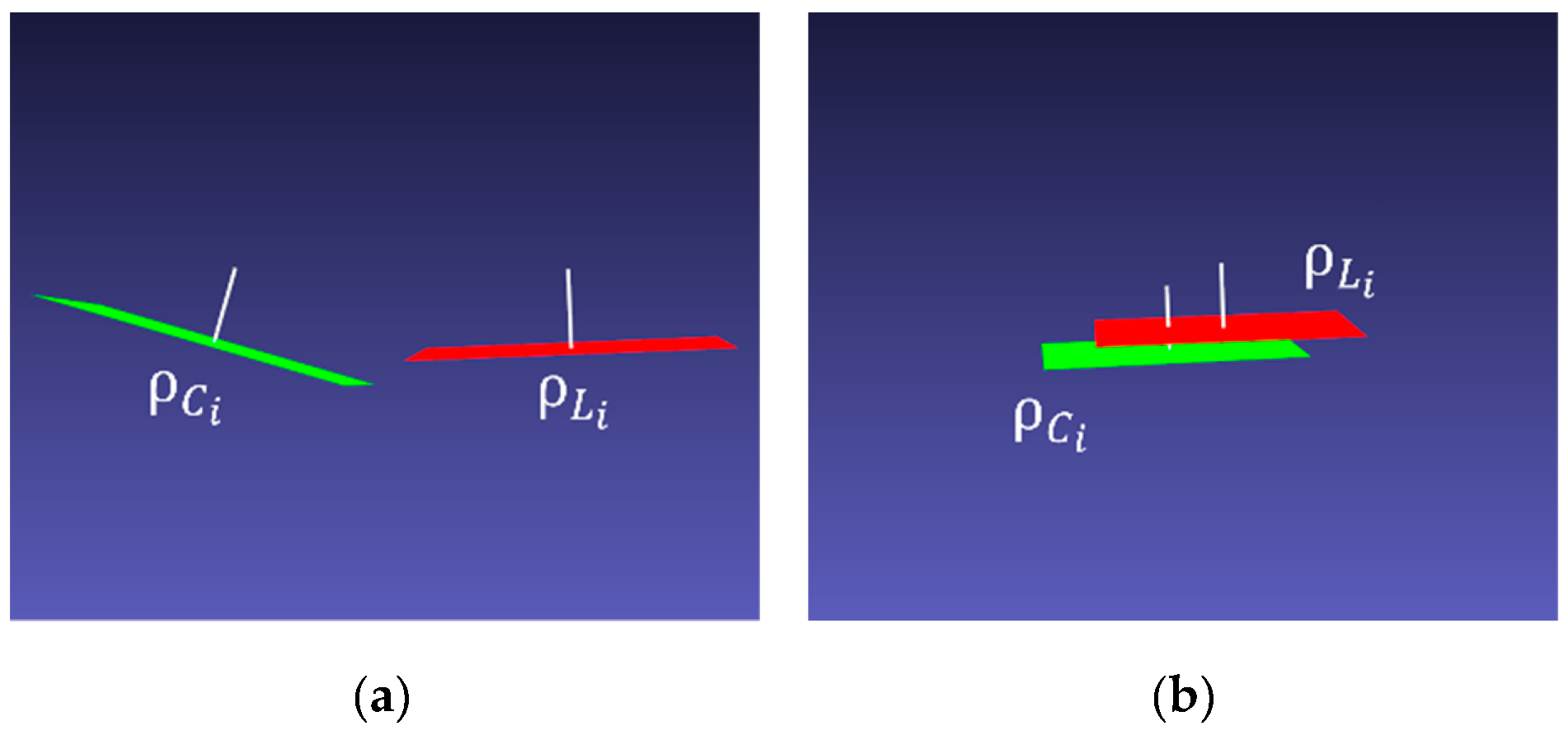

3.1. 3D Chessboard Fitting Using LiDAR Data

- Randomly select three points from the 3D points on the chessboard.

- Calculate the plane equation from the three selected points.

- Find the inliers using the distance between the fitted plane and all the other 3D points.

- Repeat the above steps, keeping the plane with the highest inlier ratio as the best fit.
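The four steps above form the standard RANSAC loop (Fischler and Bolles). The following is a minimal NumPy sketch, assuming the chessboard points have already been segmented into an (N, 3) float array. The function name `fit_plane_ransac`, the distance threshold, and the fixed iteration count are illustrative assumptions, not values taken from the paper.

```python
import numpy as np


def fit_plane_ransac(points, threshold=0.02, iterations=500, seed=0):
    """Fit a plane n·x + d = 0 to an (N, 3) array of 3D points with RANSAC.

    threshold: maximum point-to-plane distance (same units as the points) for an inlier.
    Returns (unit normal n, offset d, boolean inlier mask) of the best hypothesis.
    """
    points = np.asarray(points, dtype=float)
    rng = np.random.default_rng(seed)
    best_mask, best_plane = None, None
    # Repeat for a fixed number of iterations (assumed; adaptive stopping rules also exist).
    for _ in range(iterations):
        # Step 1: randomly select three distinct points.
        p0, p1, p2 = points[rng.choice(len(points), size=3, replace=False)]
        # Step 2: plane through the three points (normal from a cross product).
        normal = np.cross(p1 - p0, p2 - p0)
        norm = np.linalg.norm(normal)
        if norm < 1e-12:  # nearly collinear sample: no unique plane, skip it
            continue
        normal /= norm
        d = -normal.dot(p0)
        # Step 3: inliers are points whose absolute distance to the plane is small.
        mask = np.abs(points @ normal + d) < threshold
        # Step 4: keep the hypothesis with the highest inlier count (i.e. ratio).
        if best_mask is None or mask.sum() > best_mask.sum():
            best_mask, best_plane = mask, (normal, d)
    if best_plane is None:
        raise ValueError("no non-degenerate three-point sample was found")
    return best_plane[0], best_plane[1], best_mask
```

In practice the final plane is often re-estimated by least squares over the inliers; that step is omitted here to stay close to the four listed steps.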

3.2. 3D Chessboard Fitting Using Camera Images

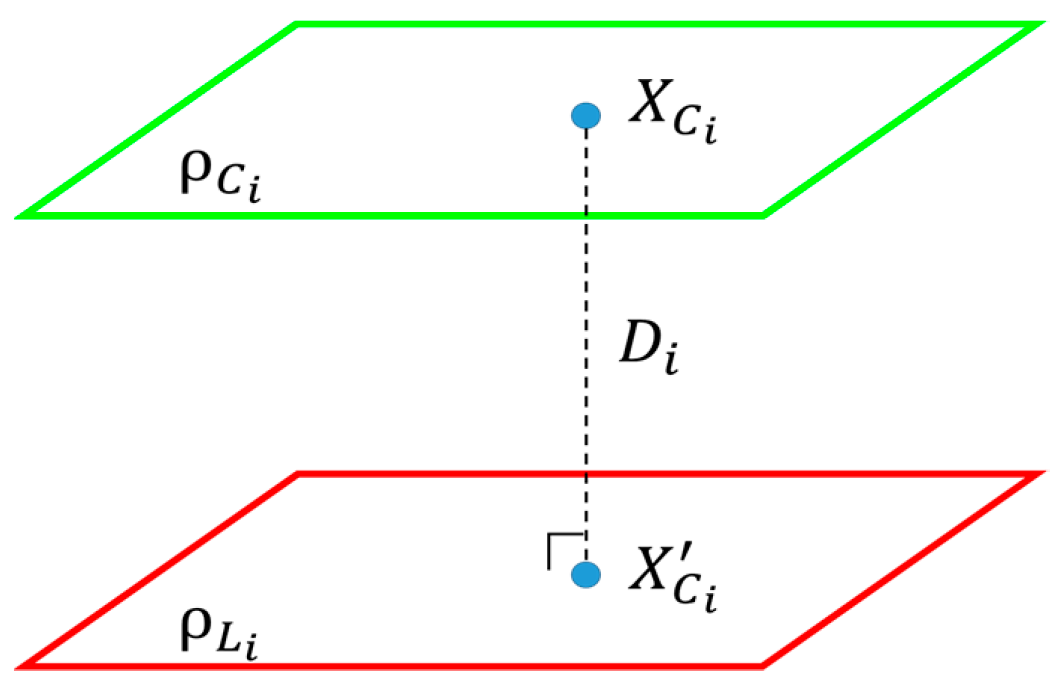
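On the camera side, one way to obtain the chessboard plane, assumed here for illustration, is to estimate the board pose from the detected corners (for example with OpenCV's `cv2.solvePnP`, followed by `cv2.Rodrigues` to convert the rotation vector into a matrix) and read the plane off that pose. The sketch below covers only that last step. The helper name `board_plane_in_camera` and its sign convention are hypothetical.

```python
import numpy as np


def board_plane_in_camera(R, t):
    """Chessboard plane (n, d), with n·X + d = 0, in camera coordinates.

    Assumes the pattern lies on z_b = 0 in the board frame and that (R, t) maps
    board coordinates to camera coordinates: X_c = R @ X_b + t.
    """
    R = np.asarray(R, dtype=float)
    t = np.asarray(t, dtype=float).reshape(3)
    n = R[:, 2].copy()  # board z-axis (the plane normal) expressed in the camera frame
    d = -n.dot(t)       # the board origin, located at t, lies on the plane
    # Hypothetical sign convention: force d <= 0 so the normal points from the
    # camera towards the board; LiDAR planes would need the same convention.
    if d > 0:
        n, d = -n, -d
    return n, d
```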

3.3. Calculating Initial Transformation between Camera and LiDAR Sensors
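A common closed-form way to obtain an initial transformation from matched planes, shown as an assumption rather than as the authors' exact formulation, follows from how a plane transforms. If $X_c = R X_l + t$ and a LiDAR plane satisfies $n_l^\top X_l + d_l = 0$, then in the camera frame $n_c = R n_l$ and $d_c = d_l - n_c^\top t$. The rotation can therefore be found by aligning the normals with an SVD (the Kabsch, or orthogonal Procrustes, solution), and the translation by linear least squares on the plane offsets. This requires at least three planes with linearly independent normals, oriented consistently in both sensors. The function name `planes_to_transform` is hypothetical.

```python
import numpy as np


def planes_to_transform(n_lidar, d_lidar, n_cam, d_cam):
    """Closed-form (R, t) with X_c = R @ X_l + t from K >= 3 matched planes.

    n_lidar, n_cam: (K, 3) unit normals; d_lidar, d_cam: (K,) offsets, each plane
    written as n·X + d = 0 in its own sensor frame.
    """
    n_l = np.asarray(n_lidar, dtype=float)
    n_c = np.asarray(n_cam, dtype=float)
    d_l = np.asarray(d_lidar, dtype=float)
    d_c = np.asarray(d_cam, dtype=float)
    # Rotation: Kabsch alignment of LiDAR normals onto camera normals (n_c = R n_l).
    H = n_l.T @ n_c  # 3x3 matrix, sum over planes of n_l n_c^T
    U, _, Vt = np.linalg.svd(H)
    # Correct a possible reflection so that det(R) = +1.
    D = np.diag([1.0, 1.0, np.sign(np.linalg.det(Vt.T @ U.T))])
    R = Vt.T @ D @ U.T
    # Translation: each plane contributes one linear equation n_c · t = d_l - d_c.
    t, *_ = np.linalg.lstsq(n_c, d_l - d_c, rcond=None)
    return R, t
```

With noisy real data this estimate would typically serve only as a starting point for a subsequent refinement.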

3.4. Transformation Refinement
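One plausible refinement, assumed here and not confirmed by the text, is to minimize the distances between the LiDAR chessboard points, mapped into the camera frame, and the corresponding camera-derived planes. The function below only evaluates those residuals. A nonlinear least-squares solver such as `scipy.optimize.least_squares`, with the rotation parameterized for example through `scipy.spatial.transform.Rotation.from_rotvec`, could then minimize them, starting from the initial transformation. The name `point_to_plane_residuals` is hypothetical.

```python
import numpy as np


def point_to_plane_residuals(R, t, lidar_points, cam_planes):
    """Signed distances of transformed LiDAR board points to their camera planes.

    lidar_points: list of (M_k, 3) arrays, inlier points of board k in the LiDAR frame.
    cam_planes:   list of (n_k, d_k) with n_k·X_c + d_k = 0 in the camera frame (unit n_k).
    Returns one concatenated residual vector whose squared norm a refinement
    step would minimize over (R, t).
    """
    R = np.asarray(R, dtype=float)
    t = np.asarray(t, dtype=float)
    residuals = []
    for pts, (n, d) in zip(lidar_points, cam_planes):
        # Map LiDAR points into the camera frame: X_c = R X_l + t (row-vector form).
        pts_c = np.asarray(pts, dtype=float) @ R.T + t
        # Signed point-to-plane distance for each transformed point.
        residuals.append(pts_c @ np.asarray(n, dtype=float) + d)
    return np.concatenate(residuals)
```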

4. Experimental Results

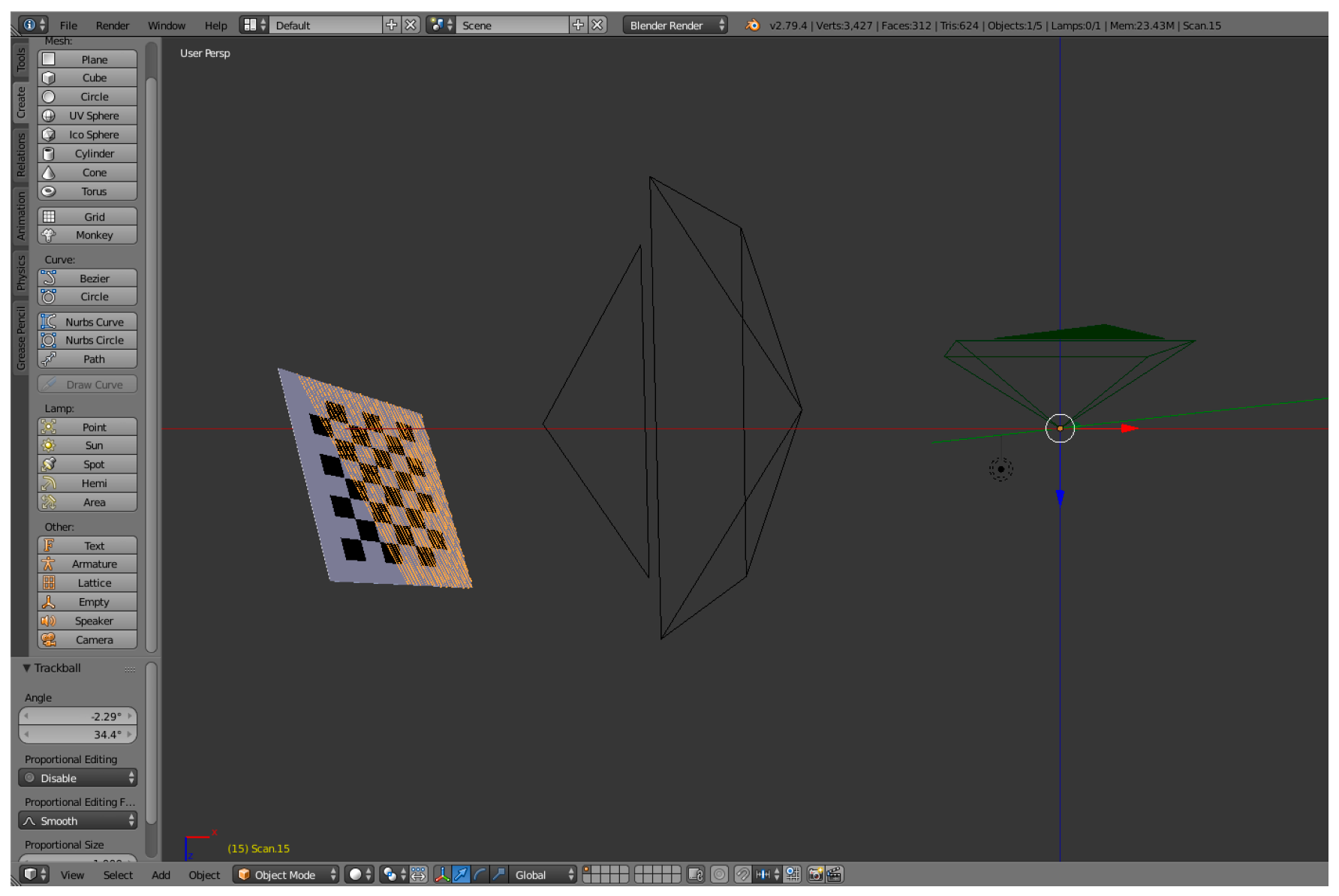

4.1. Error Analysis Using Simulation Data

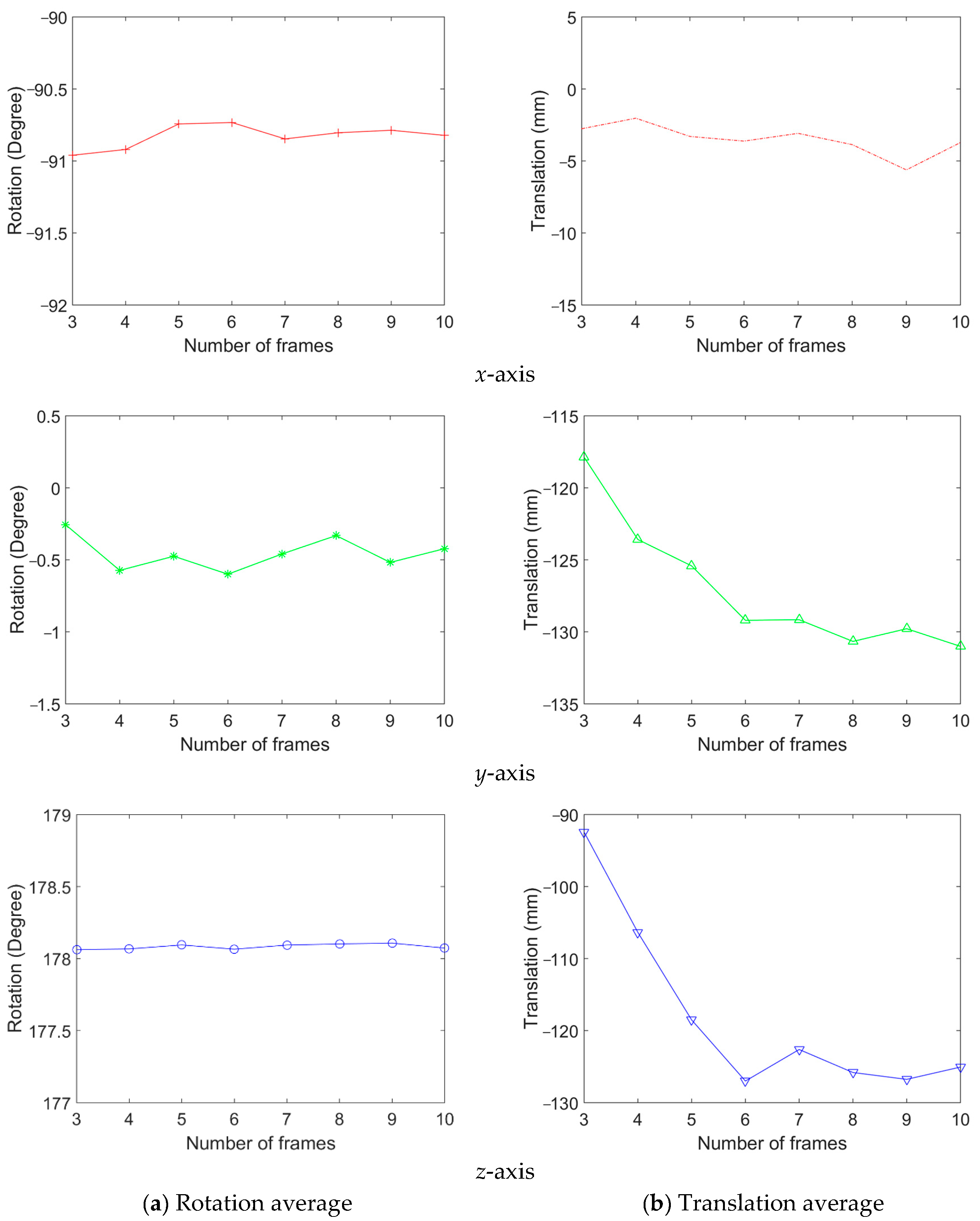

4.2. Consistency Analysis Using Real Data

- To test with different fields of view, two lenses are used: a 3.5 mm lens and an 8 mm lens;

- To test with different numbers of image frames, a total of 61 and 75 frames are obtained with the 3.5 mm and 8 mm lenses, respectively.

- The translation between the coordinate systems;

- The rotation angles between the coordinate systems about the three coordinate axes;

- The measured difference between the results obtained with the 3.5 mm and 8 mm lenses (for the consistency check).
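As a worked example of the consistency check, the rotation entries in the difference row of the lens-comparison table equal the 3.5 mm result minus the 8.0 mm result on each axis. The snippet reproduces that subtraction from the tabulated mean angles. The subtraction order is inferred from those rotation entries, and the variable names are illustrative.

```python
import numpy as np

# Mean rotation angles (degrees) about the X, Y, Z axes, from the lens-comparison table.
rot_35 = np.array([-89.300, -0.885, 178.496])  # 3.5 mm lens
rot_80 = np.array([-90.822, -0.598, 178.073])  # 8.0 mm lens

# Per-axis difference between the two lens results (3.5 mm minus 8.0 mm).
diff = rot_35 - rot_80
# Axis with the largest absolute disagreement between the two lenses.
max_axis = "XYZ"[int(np.argmax(np.abs(diff)))]
print(np.round(diff, 3), max_axis)
```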

5. Conclusions

Author Contributions

Funding

Conflicts of Interest


| Number of Frames | Initial Rotation, ×10⁻⁵ (SD) | Initial Translation, mm (SD) | Refined Rotation, ×10⁻⁵ (SD) | Refined Translation, mm (SD) |
|---|---|---|---|---|
| 3 | 0.87 (1.77) | 133.86 (130.05) | 0.87 (1.86) | 22.82 (43.13) |
| 5 | 0.43 (0.77) | 38.69 (57.78) | 0.26 (0.48) | 5.76 (5.56) |
| 10 | 0.16 (0.15) | 8.88 (13.60) | 0.08 (0.13) | 2.58 (1.12) |
| 15 | 0.13 (0.14) | 4.90 (4.82) | 0.10 (0.13) | 2.36 (0.87) |
| 20 | 0.17 (0.13) | 3.05 (1.79) | 0.05 (0.05) | 2.34 (0.71) |
| 25 | 0.10 (0.08) | 2.92 (1.16) | 0.08 (0.09) | 1.85 (0.59) |
| 30 | 0.13 (0.09) | 2.11 (0.74) | 0.08 (0.08) | 1.88 (0.61) |

| Focal Length | Rotation X, deg (SD) | Rotation Y, deg (SD) | Rotation Z, deg (SD) | Translation X, mm (SD) | Translation Y, mm (SD) | Translation Z, mm (SD) |
|---|---|---|---|---|---|---|
| 3.5 mm | −89.300 (0.487) | −0.885 (0.517) | 178.496 (0.335) | −14.22 (11.60) | −125.26 (5.34) | −112.13 (19.00) |
| 8.0 mm | −90.822 (0.506) | −0.598 (0.460) | 178.073 (0.272) | −3.72 (9.56) | −131.00 (5.34) | −125.05 (16.36) |
| Measured difference | 1.522 | −0.287 | 0.423 | 10.49 | −5.74 | −12.91 |

| Focal Length | Distance (m) | Rotation X, deg (SD) | Rotation Y, deg (SD) | Rotation Z, deg (SD) | Translation X, mm (SD) | Translation Y, mm (SD) | Translation Z, mm (SD) |
|---|---|---|---|---|---|---|---|
| 3.5 mm | 2 | −88.896 (0.569) | 1.131 (1.434) | 177.934 (0.351) | −17.46 (14.43) | −129.92 (5.20) | −107.90 (26.21) |
| 3.5 mm | 3 | −88.960 (0.746) | 0.337 (2.022) | 178.359 (0.538) | −6.05 (19.77) | −123.94 (8.39) | −89.90 (50.69) |
| 3.5 mm | 4 | −89.6116 (1.170) | 0.106 (2.294) | 178.693 (0.788) | −35.89 (56.83) | −125.08 (17.08) | −92.04 (76.45) |
| 3.5 mm | 5 | − | − | − | − | − | − |
| 8.0 mm | 2 | −88.624 (0.485) | −0.236 (0.653) | 177.981 (0.349) | −3.80 (15.65) | −147.55 (3.29) | −83.52 (15.66) |
| 8.0 mm | 3 | −87.766 (0.570) | 0.249 (0.899) | 177.984 (0.461) | 3.56 (22.07) | −145.37 (5.78) | −123.69 (28.05) |
| 8.0 mm | 4 | −88.033 (1.009) | −0.100 (1.744) | 178.533 (0.483) | −36.87 (38.57) | −133.52 (8.18) | −133.52 (66.78) |
| 8.0 mm | 5 | −85.222 (4.115) | 3.345 (7.675) | 178.222 (1.214) | −58.58 (93.93) | −114.38 (24.91) | −310.56 (319.27) |
| Measured difference | 2 | −0.271 | 1.368 | −0.046 | −13.65696 | 17.62756 | 24.37621 |
| Measured difference | 3 | −1.193 | 0.087 | 0.375 | −9.61441 | 21.42924 | −32.97788 |
| Measured difference | 4 | −1.578 | 0.207 | 0.159 | 0.98018 | 8.43786 | −37.84989 |
| Measured difference | 5 | − | − | − | − | − | − |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Kim, E.-s.; Park, S.-Y. Extrinsic Calibration between Camera and LiDAR Sensors by Matching Multiple 3D Planes. Sensors 2020, 20, 52. https://doi.org/10.3390/s20010052
