Brain and Body Emotional Responses: Multimodal Approximation for Valence Classification

Abstract

1. Introduction

2. Materials and Methods

2.1. Experimental Procedure and Data Analysis

2.1.1. EEG

2.1.2. ECG

2.1.3. Skin Temperature

2.1.4. Multimodal Approximation

3. Results

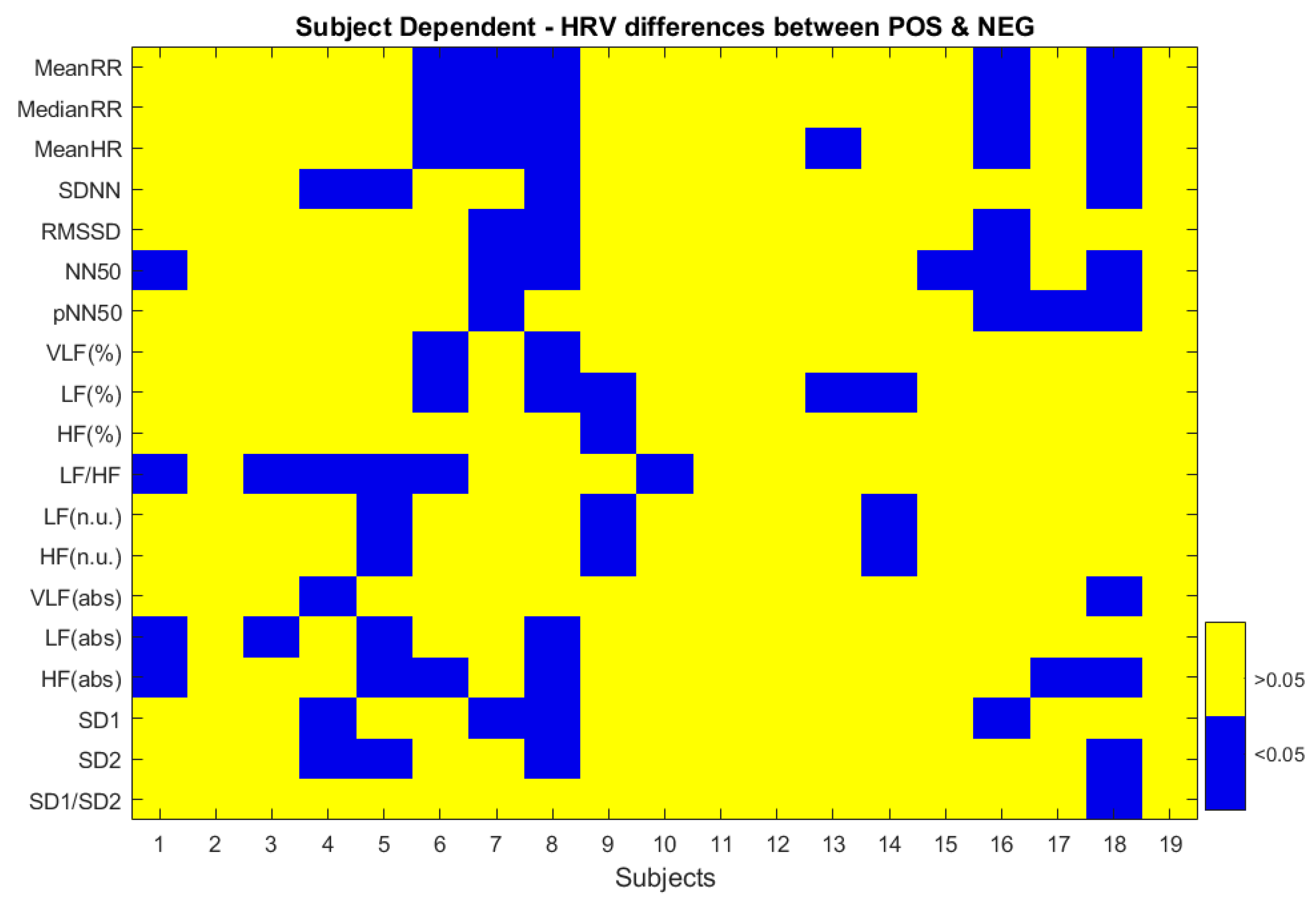

3.1. ECG

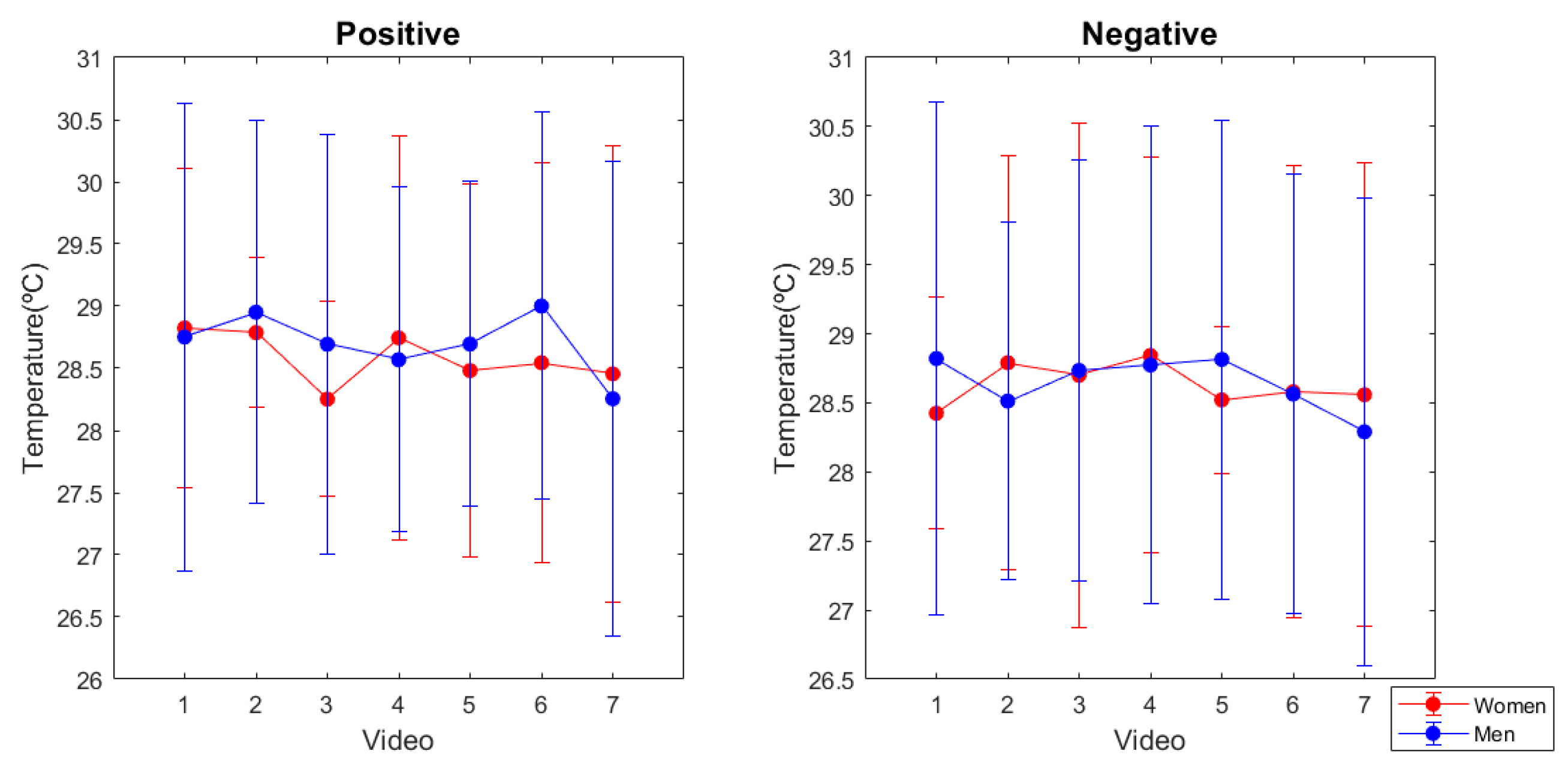

3.2. Skin Temperature

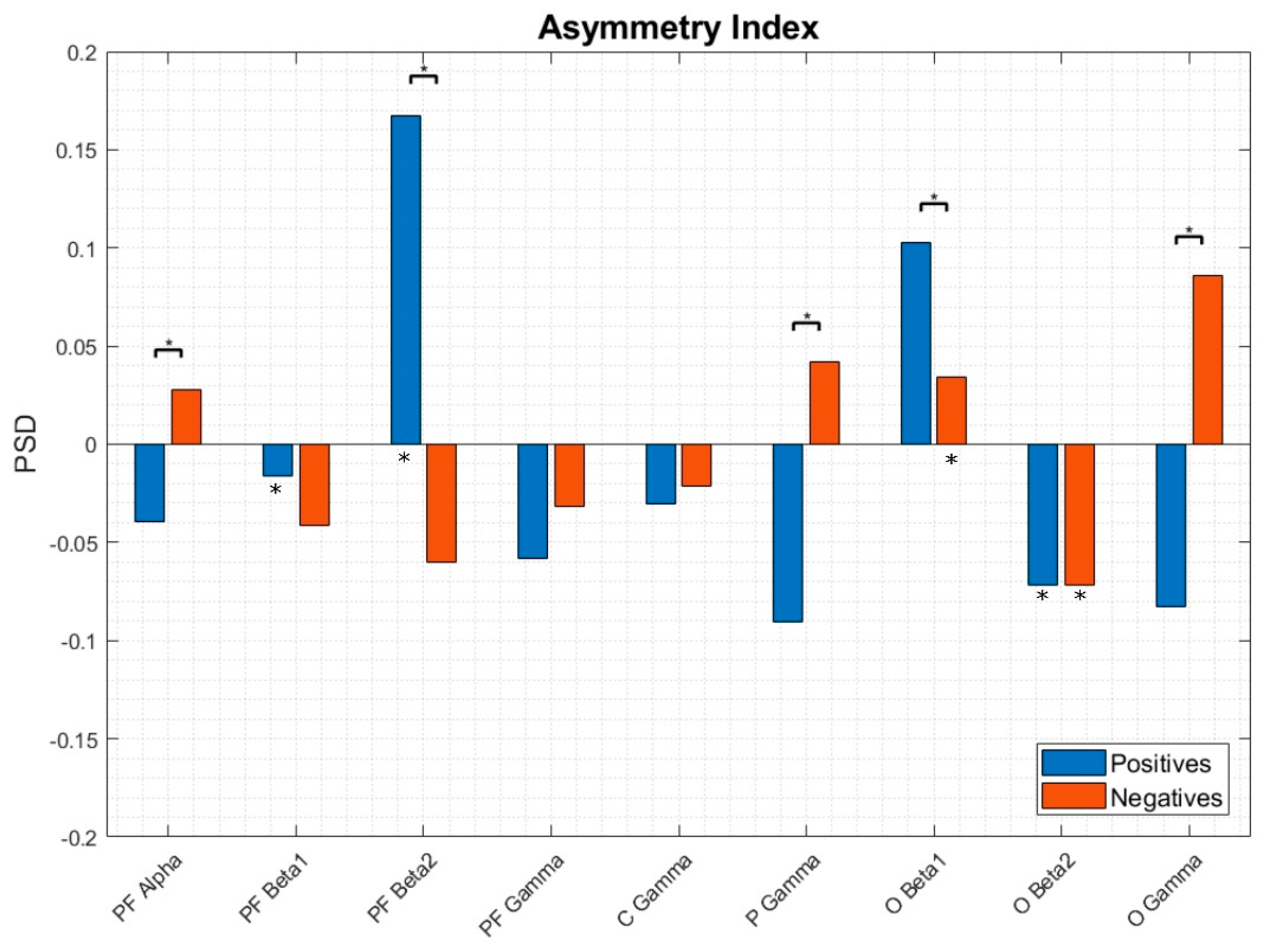

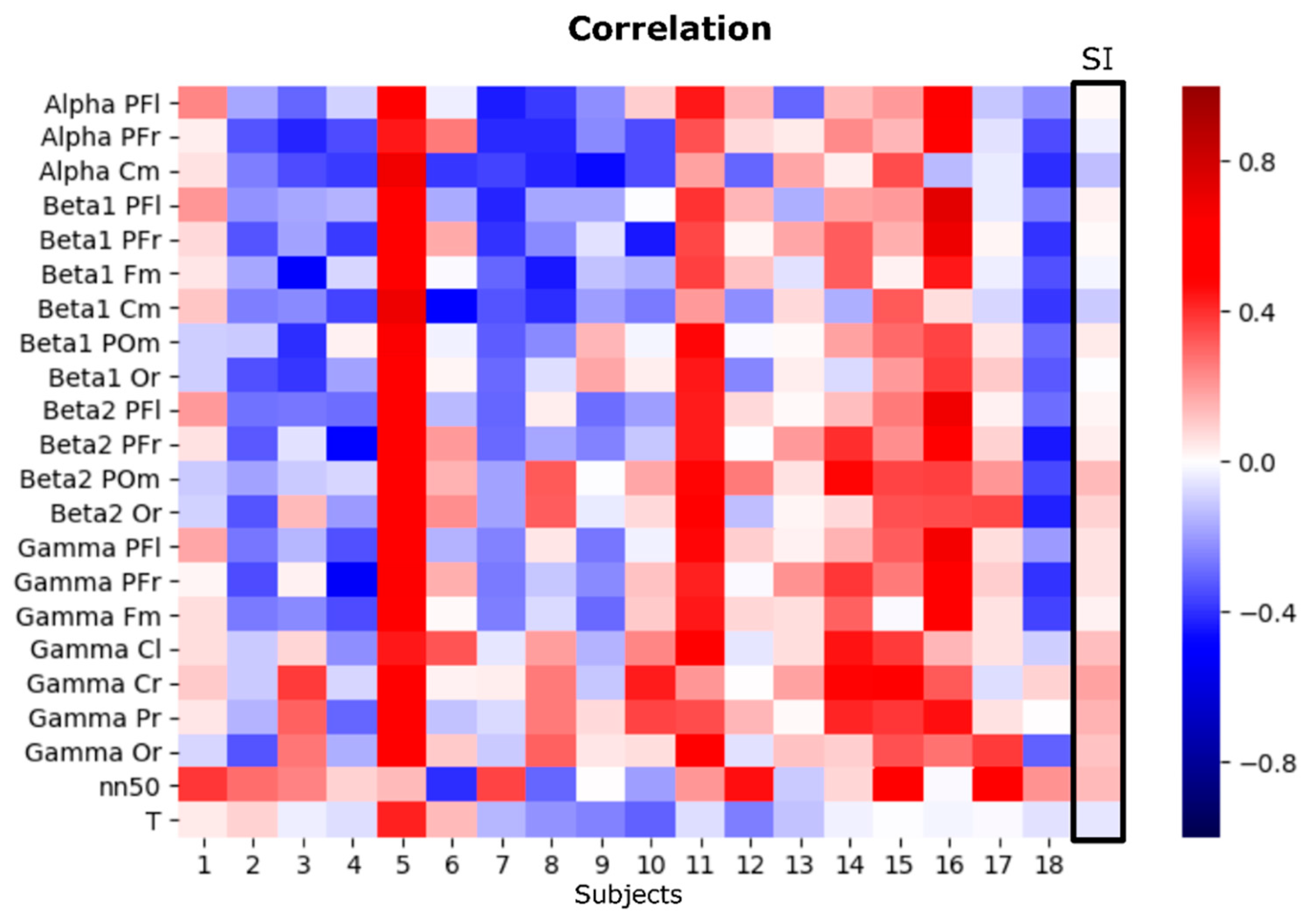

3.3. EEG Asymmetries

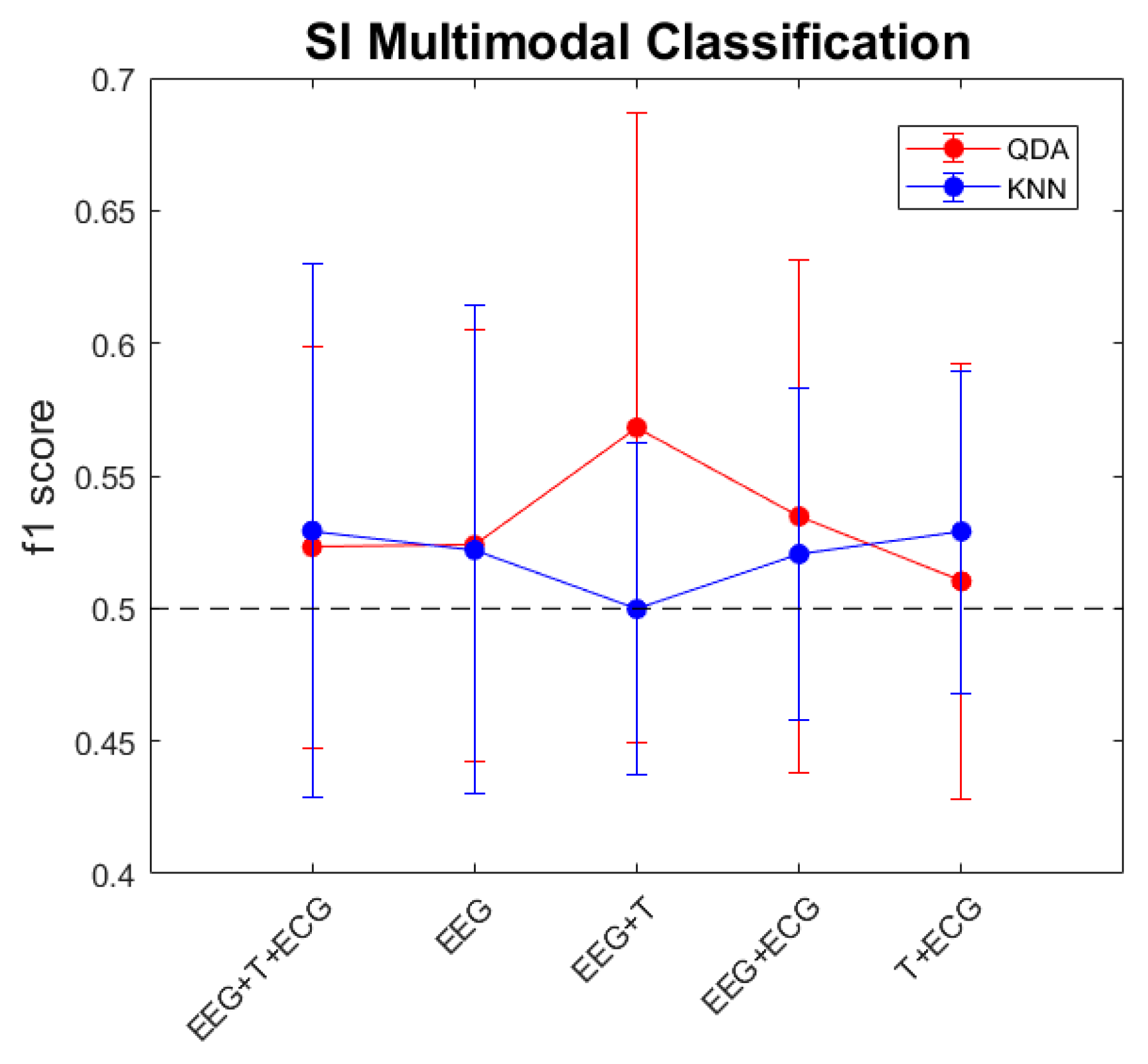

3.4. Multimodal Approximation

4. Discussion

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

1. Kleinginna, P.R.; Kleinginna, A.M. A categorized list of motivation definitions, with a suggestion for a consensual definition. Motiv. Emot. 1981, 5, 263–291.
2. Darwin, C.; Prodger, P. The Expression of the Emotions in Man and Animals; Oxford University Press: Oxford, UK, 1988.
3. Russell, J. A circumplex model of affect. J. Personal. Soc. Psychol. 1980, 39, 1161.
4. Wundt, W. Lectures on Human and Animal Psychology; Swan Sonnenschein & Co.: London, UK, 1894.
5. Davidson, R.J.; Ekman, P.; Saron, C.D.; Senulis, J.A.; Friesen, W.V. Approach-withdrawal and cerebral asymmetry: Emotional expression and brain physiology: I. J. Personal. Soc. Psychol. 1990, 58, 330–341.
6. Picard, R.W. Affective Computing; The MIT Press: Cambridge, MA, USA, 1997.
7. Calvo, R.A.; D'Mello, S. Affect Detection: An Interdisciplinary Review of Models, Methods, and Their Applications. IEEE Trans. Affect. Comput. 2010, 1, 18–37.
8. Ruiz, S.; Buyukturkoglu, K.; Rana, M.; Birbaumer, N.; Sitaram, R. Real-time fMRI brain computer interfaces: Self-regulation of single brain regions to networks. Biol. Psychol. 2014, 95, 4–20.
9. Mauss, I.B.; Robinson, M.D. Measures of emotion: A review. Cogn. Emot. 2009, 23, 209–237.
10. Tomarken, A.J.; Davidson, R.J.; Wheeler, R.E.; Doss, R.C. Individual Differences in Anterior Brain Asymmetry and Fundamental Dimensions of Emotion. J. Personal. Soc. Psychol. 1992, 62, 676–687.
11. Ioannou, S.; Gallese, V.; Merla, A. Thermal infrared imaging in psychophysiology: Potentialities and limits. Psychophysiology 2014, 51, 951–963.
12. Hagemann, D.; Waldstein, S.R.; Thayer, J.F. Central and autonomic nervous system integration in emotion. Brain Cogn. 2003, 52, 79–87.
13. Lang, P.; Greenwald, M.; Bradley, M.; Hamm, A. Looking at pictures: Affective, facial, visceral, and behavioral reactions. Psychophysiology 1993, 30, 261–273.
14. Kreibig, S.D. Autonomic nervous system activity in emotion: A review. Biol. Psychol. 2010, 84, 394–421.
15. Levenson, R.W. Blood, Sweat, and Fears: The Autonomic Architecture of Emotion. Ann. N. Y. Acad. Sci. 2003, 1000, 348–366.
16. James, W. What is an Emotion? Mind 1884, 9, 188–205.
17. Schachter, S.; Singer, J.E. Cognitive, social, and physiological determinants of emotional state. Psychol. Rev. 1962, 69, 379–399.
18. Ekman, P. Universals and cultural differences in facial expressions of emotion. Neb. Symp. Motiv. 1972, 19, 207–284.
19. Levenson, R. Emotion and the autonomic nervous system: A prospectus for research on autonomic specificity. In Social Psychophysiology and Emotion: Theory and Clinical Applications; Wagner, H.L., Ed.; John Wiley & Sons Ltd: Hoboken, NJ, USA, 1988.
20. Goshvarpour, A.; Abbasi, A.; Goshvarpour, A. An accurate emotion recognition system using ECG and GSR signals and matching pursuit method. Biomed. J. 2017, 40, 355–368.
21. Valderas, T.; Bolea, J.; Laguna, P. Human Emotion Recognition Using Heart Rate Variability Analysis with Spectral Bands Based on Respiration. In Proceedings of the 2015 37th IEEE Engineering in Medicine and Biology Society, Milan, Italy, 25–29 August 2015; pp. 6134–6137.
22. McFarland, R.A. Relationship of Skin Temperature Changes to the Emotions Accompanying Music. Biofeedback Self-Regul. 1985, 10, 255–267.
23. Rimm-Kaufman, S.E.; Kagan, J. The Psychological Significance of Changes in Skin Temperature. Motiv. Emot. 1996, 20, 63–78.
24. Wu, G.; Liu, G.; Hao, M. The analysis of emotion recognition from GSR based on PSO. In Proceedings of the 2010 International Symposium on Intelligence Information Processing and Trusted Computing, Huanggang, China, 28–29 October 2010; pp. 360–363.
25. Torres, C.A.; Orozco, A.; Alvarez, M.A. Feature Selection for Multimodal Emotion Recognition in the Arousal-Valence Space. In Proceedings of the 35th Annual International Conference of the IEEE EMBS, Osaka, Japan, 3–7 July 2013; pp. 4330–4333.
26. Koelstra, S.; Patras, I. Fusion of facial expressions and EEG for implicit affective tagging. Image Vis. Comput. 2013, 31, 164–174.
27. Li, L.; Chen, J.H. Emotion recognition using physiological signals. In Proceedings of the International Conference on Artificial Reality and Telexistence, Hangzhou, China, 29 November–1 December 2006; pp. 437–446.
28. Sorinas, J.; Grima, M.D.; Ferrandez, J.M. Identifying Suitable Brain Regions and Trial Size Segmentation for Positive/Negative Emotion Recognition. Int. J. Neural Syst. 2018, 29.
29. Sorinas, J.; Fernandez-Troyano, J.C.; Calvo, M.V.; Ferrandez, J.M.; Fernandez, E. A new model for the implementation of positive and negative emotion recognition. arXiv 2019, arXiv:1905.00230.
30. Chatrian, G.E.; Lettich, E.; Nelson, P.L. Ten percent electrode system for topographic studies of spontaneous and evoked EEG activities. Am. J. EEG Technol. 1985, 25, 83–92.
31. Ferree, T.C.; Luu, P.; Russell, G.S.; Tucker, D.M. Scalp electrode impedance, infection risk, and EEG data quality. Clin. Neurophysiol. 2001, 112, 536–544.
32. Delorme, A.; Makeig, S. EEGLAB: An Open Source Toolbox for Analysis of Single-trial EEG Dynamics Including Independent Component Analysis. J. Neurosci. Methods 2004, 134, 9–21.
33. Jung, T.P.; Humphries, C.; Lee, T.W.; Makeig, S.; McKeown, M.J.; Iragui, V.; Sejnowski, T.J. Extended ICA removes artifacts from electroencephalographic recordings. Adv. Neural Inf. Process. Syst. 1998, 10, 894–900.
34. Davidson, R.J. EEG Measures of Cerebral Asymmetry: Conceptual and Methodological Issues. Int. J. Neurosci. 1988, 39, 71–89.
35. Hollander, M.; Wolfe, D.A. Nonparametric Statistical Methods; John Wiley & Sons Inc: Hoboken, NJ, USA, 1999.
36. Kaufmann, T.; Sütterlin, S.; Schulz, S.M.; Vögele, C. ARTiiFACT: A tool for heart rate artifact processing and heart rate variability analysis. Behav. Res. Methods 2011, 43, 1161–1170.
37. Khandoker, A.H.; Karmakar, C.; Brennan, M.; Voss, A.; Palaniswami, M. Quantitative Poincaré Plot. In Poincaré Plot Methods for Heart Rate Variability Analysis; Springer: Boston, MA, USA, 2013.
38. Hoshi, R.A.; Pastre, C.M.; Vanderlei, L.C.M.; Godoi, M.F. Poincaré plot indexes of heart rate variability: Relationships with other nonlinear variables. Auton. Neurosci. Basic Clin. 2013, 177, 271–274.
39. Woo, M.A.; Stevenson, W.G.; Moser, D.K.; Trelease, R.B.; Harper, R.M. Patterns of beat-to-beat heart rate variability in advanced heart failure. Am. Heart J. 1992, 123, 704–710.
40. Tulppo, M.P.; Makikallio, T.H.; Takala, T.E.; Seppanen, T.; Huikuri, H.V. Quantitative beat-to-beat analysis of heart rate dynamics during exercise. Am. J. Physiol. 1996, 271, 244–252.
41. Task Force of the European Society of Cardiology and the North American Society of Pacing and Electrophysiology. Heart rate variability: Standards of measurement, physiological interpretation, and clinical use. Eur. Heart J. 1996, 17, 354–381.
42. Malliani, A.; Pagani, M.; Lombardi, F.; Cerutti, S. Cardiovascular neural regulation explored in the frequency domain. Circulation 1991, 84, 482–492.
43. del Paso, G.A.R.; Langewitz, W.; Mulder, L.M.; van Roon, A.; Duschek, S. The utility of low frequency heart rate variability as an index of sympathetic cardiac tone: A review with emphasis on a reanalysis of previous studies. Psychophysiology 2013, 50, 477–487.
44. Eckberg, D. Sympathovagal balance: A critical appraisal. Circulation 1997, 96, 3224–3232.
45. Mourot, L.; Bouhaddi, M.; Perrey, S.; Rouillon, J.-D.; Regnard, J. Quantitative Poincare plot analysis of heart rate variability: Effect of endurance training. Eur. J. Appl. Physiol. 2004, 91, 79–87.
46. Storbeck, J.; Clore, G.L. On the interdependence of cognition and emotion. Cogn. Emot. 2007, 21, 1212–1237.
47. Kober, H.; Barrett, F.; Joseph, J.; Bliss-Moreau, E.; Lindquist, K.; Wager, T.D. Functional grouping and cortical-subcortical interactions in emotion: A meta-analysis of neuroimaging studies. Neuroimage 2008, 42, 998–1031.
48. Schmidt, L.A.; Trainor, L.J. Frontal brain electrical activity (EEG) distinguishes valence and intensity of musical emotions. Cogn. Emot. 2015, 15, 487–500.
49. Murphy, F.C.; Nimmo-Smith, I.; Lawrence, A.D. Functional neuroanatomy of emotions: A meta-analysis. Cogn. Affect. Behav. Neurosci. 2003, 3, 207–233.
50. Coan, J.A.; Allen, J.J.B. Frontal EEG asymmetry as a moderator and mediator of emotion. Biol. Psychol. 2004, 67, 7–49.
51. Vecchiato, G.; Toppi, J.; Astolfi, L.; De Vico Fallani, F.; Cincotti, F.; Mattia, D.; Bez, F.; Babiloni, F. Spectral EEG frontal asymmetries correlate with the experienced pleasantness of TV commercial advertisements. Med. Biol. Eng. Comput. 2011, 49, 579–583.
52. Nie, D.; Wang, X.; Shi, L.; Lu, B.L. EEG-based emotion recognition during watching movies. In Proceedings of the 5th International IEEE EMBS Conference on Neural Engineering, Cancun, Mexico, 27 April–1 May 2011.
53. Muller, M.; Keil, A.; Gruber, T.; Elbert, T. Processing of affective pictures modulates right-hemispheric gamma band EEG activity. Clin. Neurophysiol. 1999, 110, 1913–1920.
54. Porges, S. The polyvagal theory: New insights into adaptive reactions of the autonomic nervous system. Clevel. Clin. J. Med. 2009, 76, 86–90.
55. Guo, H.; Huang, Y. Heart Rate Variability Signal Features for Emotion Recognition by using Principal Component Analysis and Support Vectors Machine. In Proceedings of the 2016 IEEE 16th International Conference on Bioinformatics and Bioengineering, Taichung, Taiwan, 31 October–2 November 2016; pp. 2–5.
56. McCraty, R.; Atkinson, M.; Tiller, W.A.; Rein, G.; Watkins, A.D. The effects of emotions on short-term power spectrum analysis of heart rate variability. Am. J. Cardiol. 1995, 76, 1089–1093.
57. Murugappan, M.; Murugappan, S.; Zheng, B. Frequency Band Analysis of Electrocardiogram (ECG) Signals for Human Emotional State Classification Using Discrete Wavelet Transform (DWT). J. Phys. Ther. Sci. 2013, 25, 753–759.
58. Umetani, K.; Singer, D.H.; McCraty, R.; Atkinson, M. Twenty-Four Hour Time Domain Heart Rate Variability and Heart Rate: Relations to Age and Gender Over Nine Decades. J. Am. Coll. Cardiol. 1998, 31, 593–601.
59. Moodithaya, S.; Avadhany, S.T. Gender Differences in Age-Related Changes in Cardiac Autonomic Nervous Function. J. Aging Res. 2012.
60. Nater, U.M.; Abbruzzese, E.; Krebs, M.; Ehlert, U. Sex differences in emotional and psychophysiological responses to musical stimuli. Int. J. Psychophysiol. 2006, 62, 300–308.
61. Hardy, J.; Du Bois, E. Differences between men and women in their response to heat and cold. Physiology 1940, 26, 389–398.
62. Marchand, I.; Johnson, D.; Montgomery, D.; Brisson, G.; Perrault, H. Gender differences in temperature and vascular characteristics during exercise recovery. Can. J. Appl. Physiol. 2001, 26, 425–441.
63. Verma, G.K.; Tiwary, U.S. Multimodal fusion framework: A multiresolution approach for emotion classification and recognition from physiological signals. NeuroImage 2014, 102, 162–172.
64. Chen, J.; Hu, B.; Xu, L.; Moore, P.; Su, Y. Feature-level fusion of multimodal physiological signals for emotion recognition. In Proceedings of the 2015 IEEE International Conference on Bioinformatics and Biomedicine (BIBM), Washington, DC, USA, 9–12 November 2015; pp. 395–399.
65. Jatupaiboon, N.; Pan-Ngum, S.; Israsena, P. Subject-dependent and subject-independent emotion classification using unimodal and multimodal physiological signals. J. Med. Imaging Health Inform. 2015, 5, 1020–1027.
66. Torres-Valencia, C.; Álvarez-López, M.; Orozco-Gutiérrez, Á. SVM-based feature selection methods for emotion recognition from multimodal data. J. Multimodal User Interfaces 2017, 11, 9–23.
67. Bailenson, J.N.; Pontikakis, E.D.; Mauss, I.B.; Gross, J.J.; Jabon, M.E.; Hutcherson, C.A.; Nass, C.; John, O. Real-time classification of evoked emotions using facial feature tracking and physiological responses. Int. J. Hum. Comput. Stud. 2008, 66, 303–317.
68. Hua, J.; Xiong, Z.; Lowey, J.; Suh, E.; Dougherty, E.R. Optimal number of features as a function of sample size for various classification rules. Bioinformatics 2005, 21, 1509–1515.
69. Harmon-Jones, E. Clarifying the emotive functions of asymmetrical frontal cortical activity. Psychophysiology 2003, 40, 838–848.

| Domain | Feature | Description |
|---|---|---|
| Time domain | Mean RR | Mean inter-beat interval. |
| Time domain | SDNN | Standard deviation of the NN (normal-to-normal) intervals. |
| Time domain | RMSSD | Square root of the MSSD (mean squared difference of successive NN intervals). |
| Time domain | NN50 | Number of pairs of adjacent NN intervals differing by more than 50 ms. |
| Time domain | pNN50 | Proportion obtained by dividing NN50 by the total number of NN intervals. |
| Time domain | Note | RMSSD, NN50, and pNN50 are thought to represent parasympathetically mediated HRV [41]. |
| Frequency domain | VLF | Very-low-frequency component (0.003–0.04 Hz). |
| Frequency domain | LF | Low-frequency component (0.04–0.15 Hz). It is debated whether the LF component reflects SNS activity, is a product of both SNS and PNS activity [41,42], or is instead mainly determined by the PNS [43]. |
| Frequency domain | HF | High-frequency component (0.15–0.4 Hz). It occurs at the frequency of adult respiration and primarily reflects cardiac parasympathetic influence due to respiratory sinus arrhythmia. |
| Frequency domain | LF/HF ratio | Interpreted as an index of sympathovagal balance [44]. |
| Poincaré plot | SD1 | Standard deviation of the instantaneous (short-term) beat-to-beat RR interval variability. Because vagal regulation of the sinus node is known to be faster than sympathetically mediated effects, SD1 is considered a parasympathetic index [45]. |
| Poincaré plot | SD2 | Standard deviation of the continuous long-term RR interval variability. There is evidence that both parasympathetic and sympathetic tone influence this index [38]. |
| Poincaré plot | SD1/SD2 ratio | Ratio between the short-term and long-term interval variation. |
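The time-domain and Poincaré plot features defined in the table above can be illustrated with a short Python sketch. This is a minimal example under stated assumptions, not the processing pipeline used in the study: the function name `hrv_features`, the input format (a plain list of artifact-free NN intervals in milliseconds), and the use of the sample standard deviation are all assumptions made for illustration. SD1 and SD2 are computed here as the spread of the Poincaré cloud perpendicular to and along the identity line, which is one common formulation.

```python
import math
from statistics import mean, stdev


def hrv_features(nn_ms):
    """Compute basic HRV features from successive NN intervals (in ms).

    Hypothetical helper for illustration; assumes the series has already
    been cleaned of ectopic beats and artifacts.
    """
    if len(nn_ms) < 3:
        raise ValueError("need at least three NN intervals")

    # Differences between successive NN intervals.
    diffs = [b - a for a, b in zip(nn_ms, nn_ms[1:])]

    # Time-domain features, following the definitions in the table.
    mean_rr = mean(nn_ms)
    sdnn = stdev(nn_ms)
    rmssd = math.sqrt(mean([d * d for d in diffs]))
    nn50 = sum(1 for d in diffs if abs(d) > 50)
    # The table divides NN50 by the total number of NN intervals;
    # some tools divide by the number of successive differences instead.
    pnn50 = nn50 / len(nn_ms)

    # Poincaré plot: each point is (NN[n], NN[n+1]). Rotating the cloud by
    # 45 degrees gives the coordinates across and along the identity line.
    root2 = math.sqrt(2.0)
    across = [(b - a) / root2 for a, b in zip(nn_ms, nn_ms[1:])]
    along = [(b + a) / root2 for a, b in zip(nn_ms, nn_ms[1:])]
    sd1 = stdev(across)  # short-term variability
    sd2 = stdev(along)   # long-term variability
    ratio = sd1 / sd2 if sd2 > 0 else float("nan")

    return {
        "mean_rr": mean_rr,
        "sdnn": sdnn,
        "rmssd": rmssd,
        "nn50": nn50,
        "pnn50": pnn50,
        "sd1": sd1,
        "sd2": sd2,
        "sd1_sd2": ratio,
    }


if __name__ == "__main__":
    example = [800, 810, 790, 850, 795, 805]  # made-up NN intervals in ms
    for name, value in hrv_features(example).items():
        print(f"{name}: {value:.3f}")
```

The frequency-domain features (VLF, LF, HF, LF/HF) are left out of this sketch because they require resampling the NN series and estimating a power spectrum, which depends on choices the table does not specify.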

| Classifier | All Population | Women | Men |
|---|---|---|---|
| QDA | 0.524 (s.d. 0.082) | 0.463 (s.d. 0.082) | 0.483 (s.d. 0.076) |
| KNN | 0.522 (s.d. 0.092) | 0.499 (s.d. 0.041) | 0.474 (s.d. 0.065) |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Sorinas, J.; Ferrández, J.M.; Fernandez, E. Brain and Body Emotional Responses: Multimodal Approximation for Valence Classification. Sensors 2020, 20, 313. https://doi.org/10.3390/s20010313