Double-Diamond Model-Based Orientation Guidance in Wearable Human–Machine Navigation Systems for Blind and Visually Impaired People

Abstract

1. Introduction

2. Related Works

2.1. Smart Travel Aids for BVI People

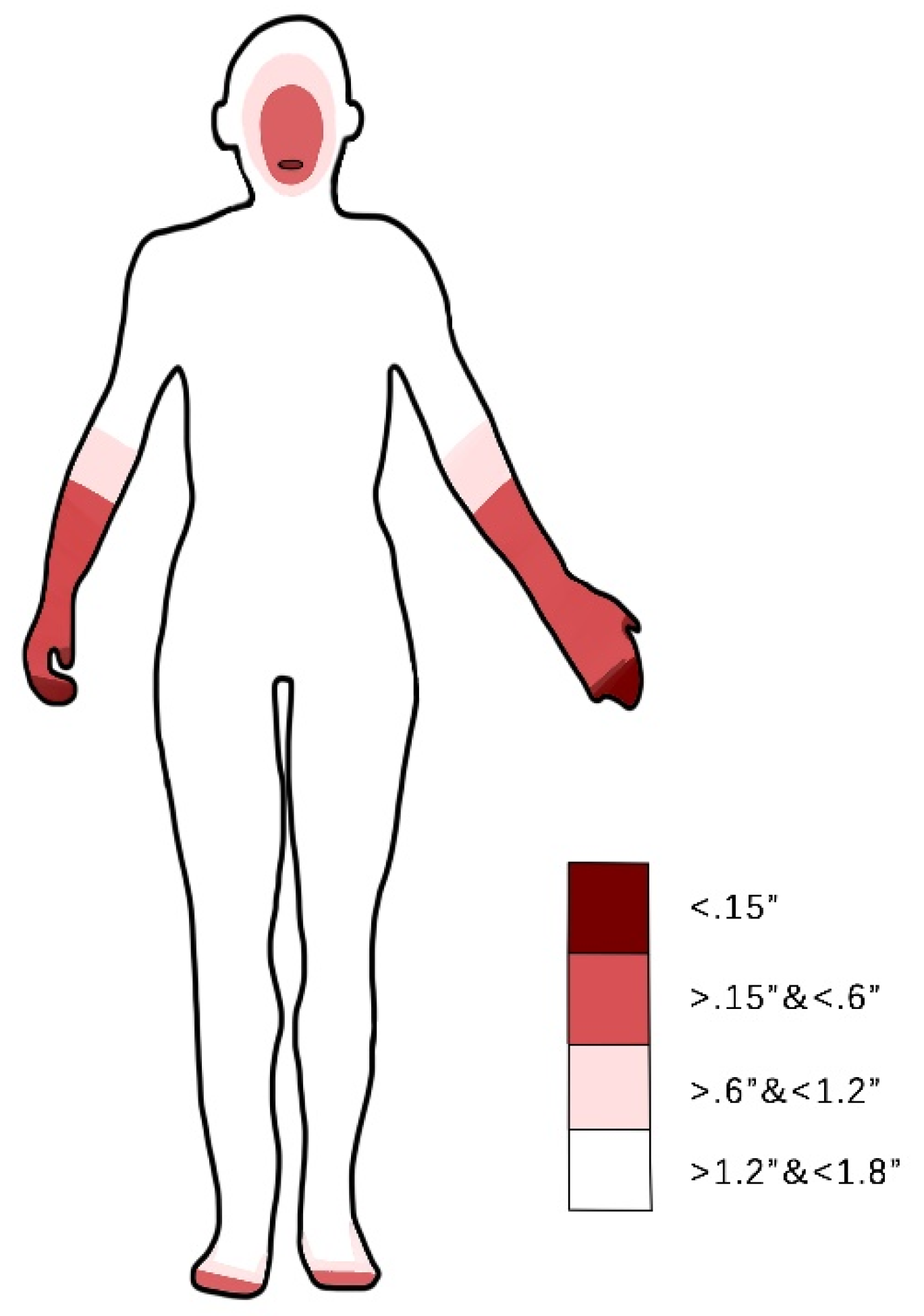

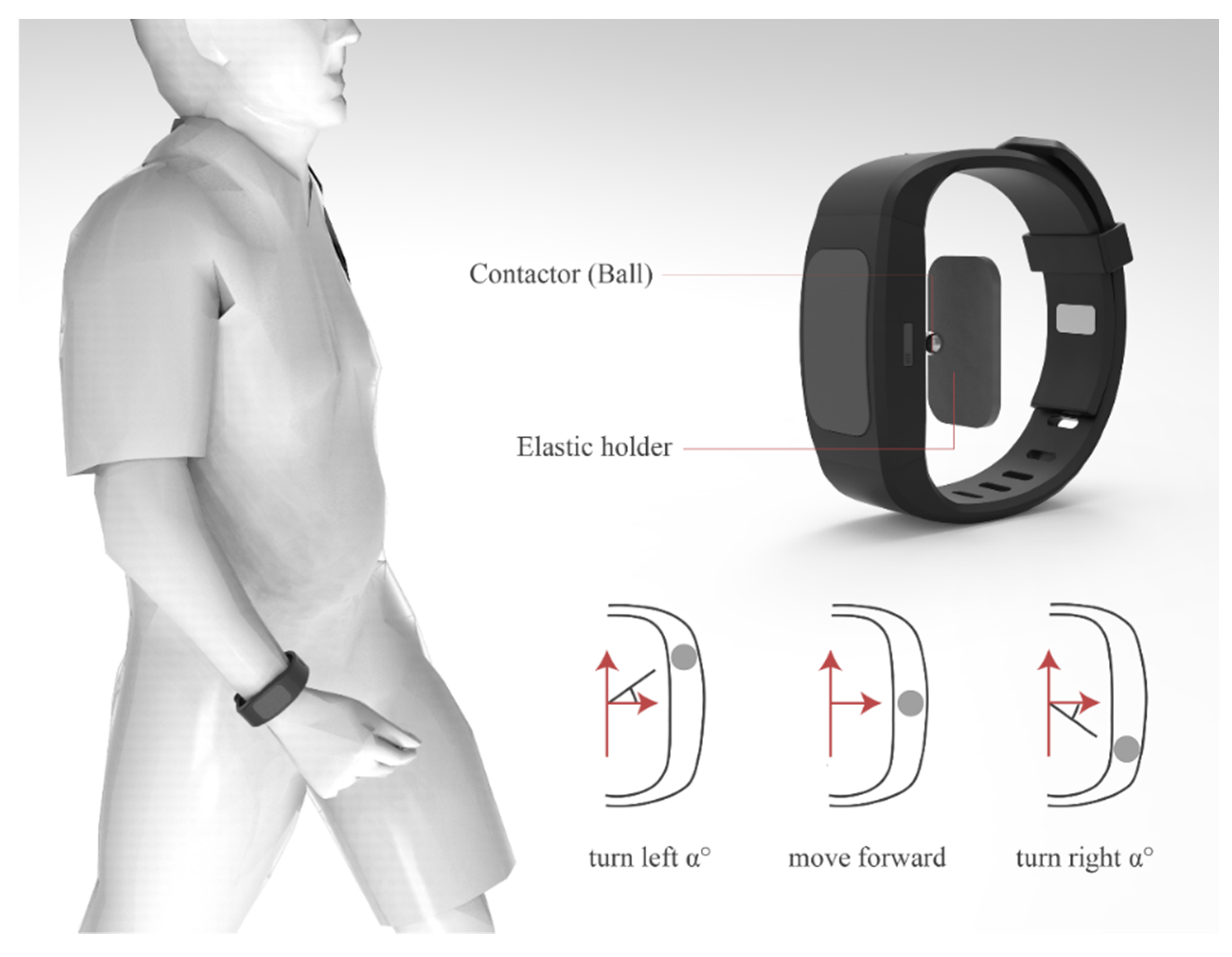

2.2. Human–Machine Interaction and Multimodal Feedback for Orientation Guidance

2.3. Design Thinking in Human-Centric Innovations

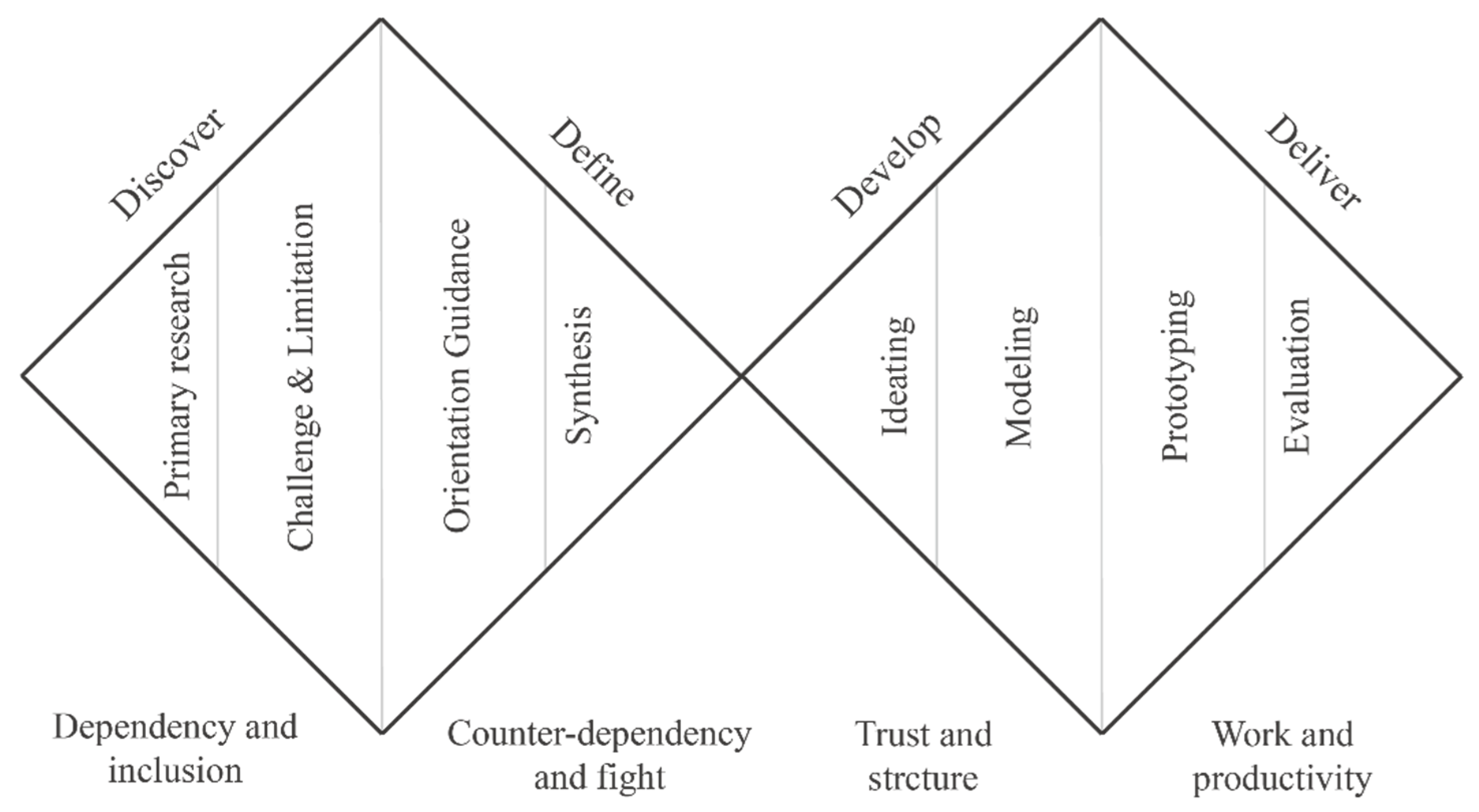

3. Design of BVI Orientation Guidance Using a Double-Diamond Design Model

3.1. Discover Stage

3.1.1. Primary Research Goal

3.1.2. Challenges and Limitations of Existing Work

3.2. Define Stage

3.2.1. Orientation Guidance in a BVI Human–Machine System

3.2.2. Synthesis Design Opportunities

3.3. Develop Stage

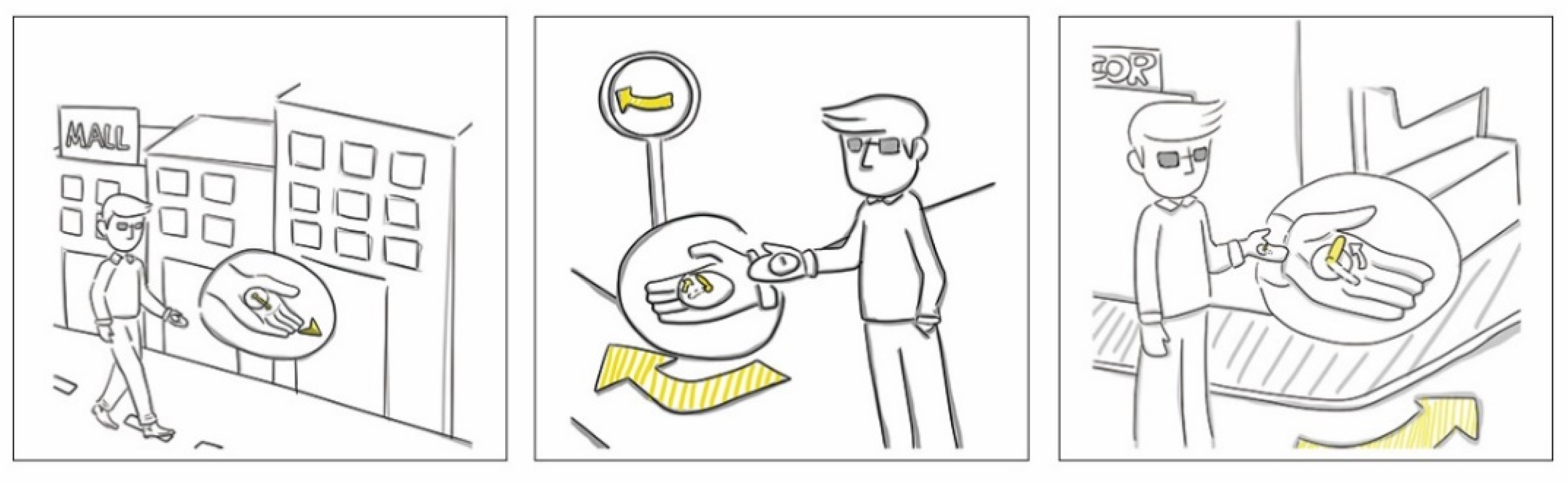

3.4. Deliver Stage

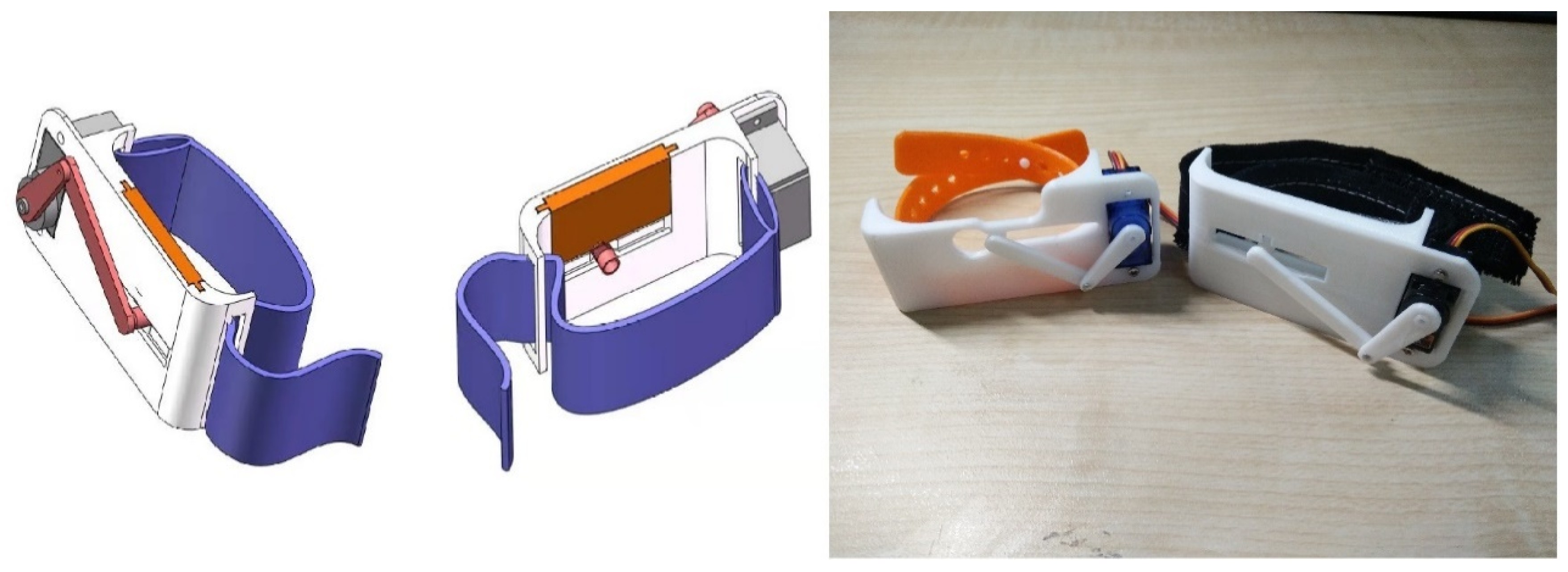

3.4.1. Prototyping

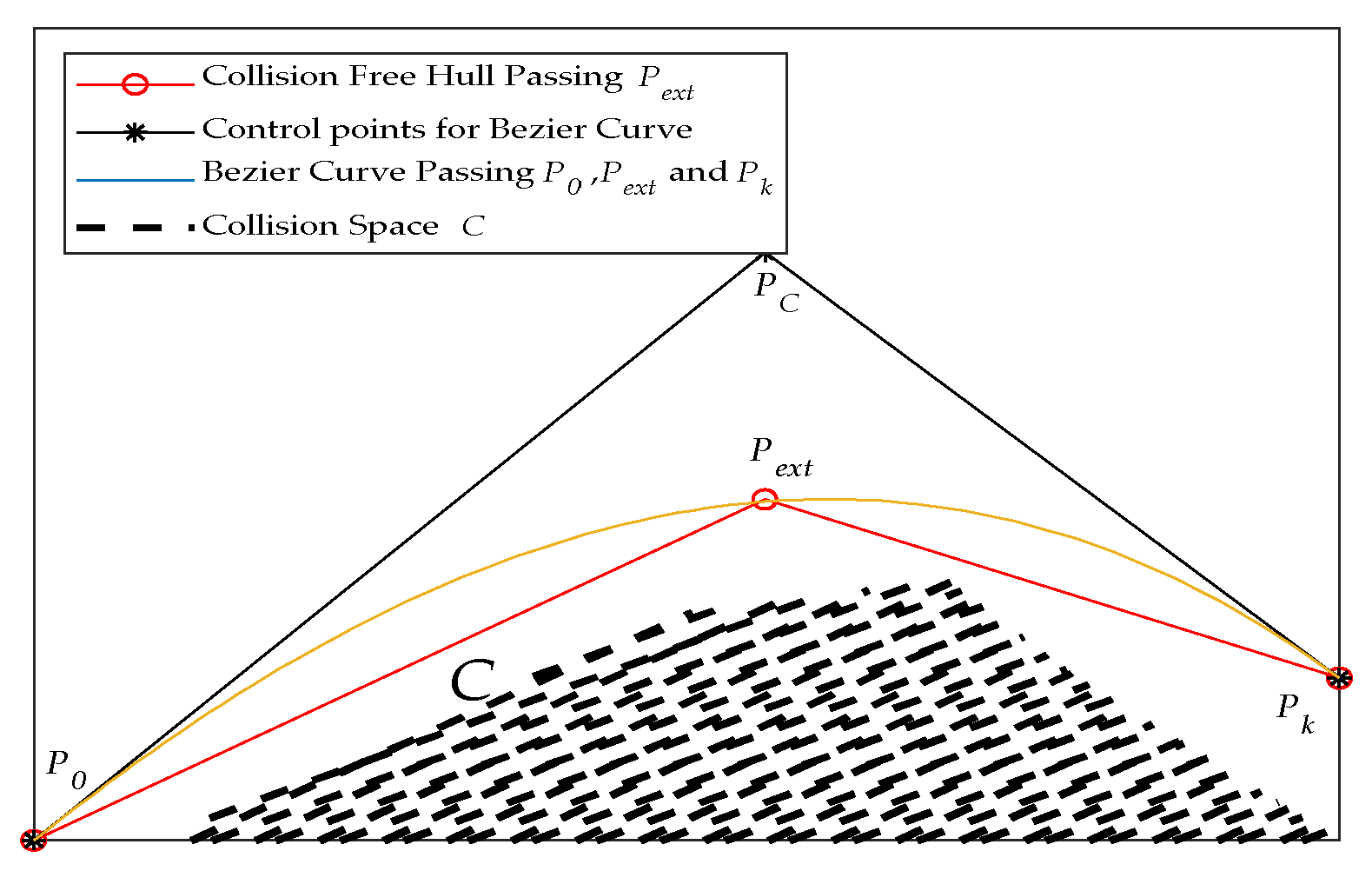

3.4.2. Bezier-Curve-Based Planning

4. Experiment and Evaluation

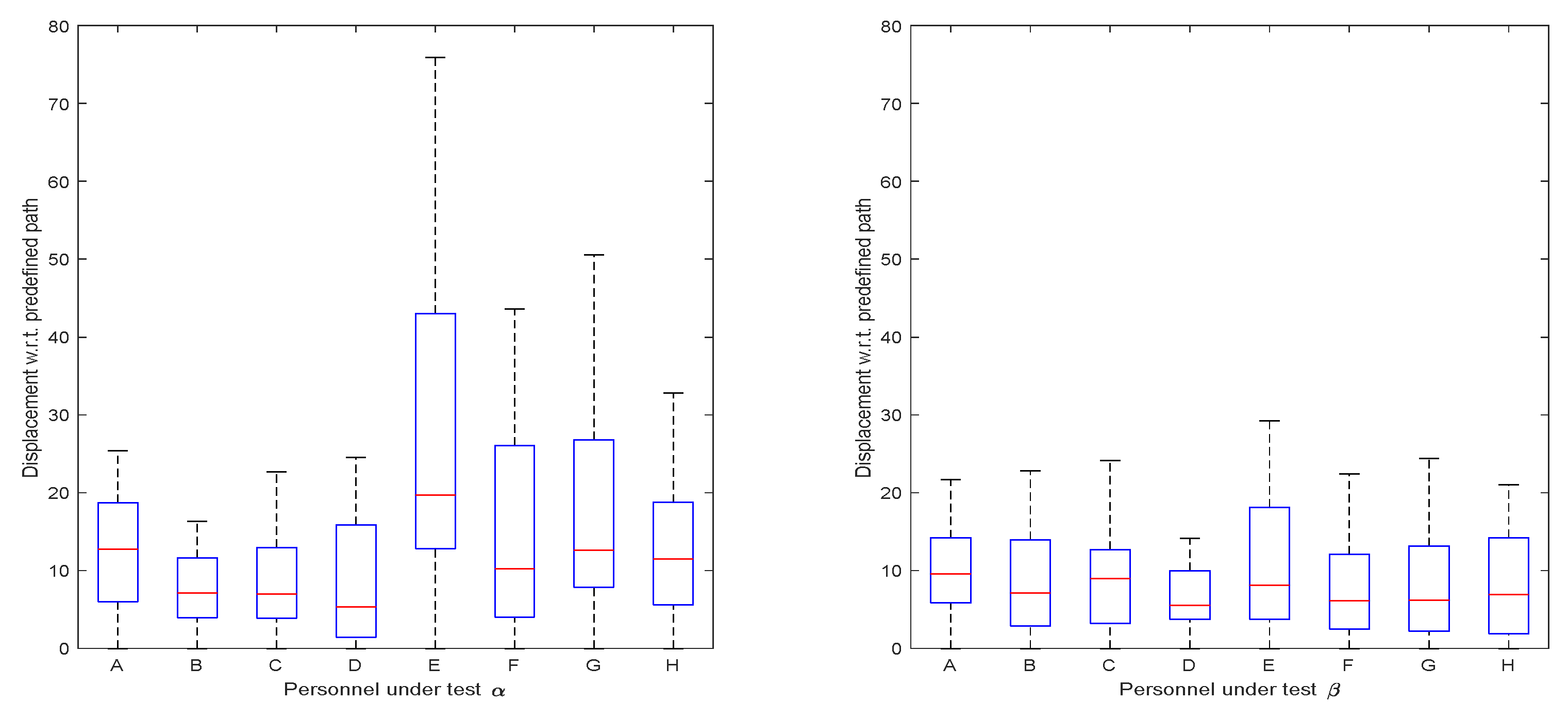

4.1. Orientation Guidance Tests in Virtual Test-Fields

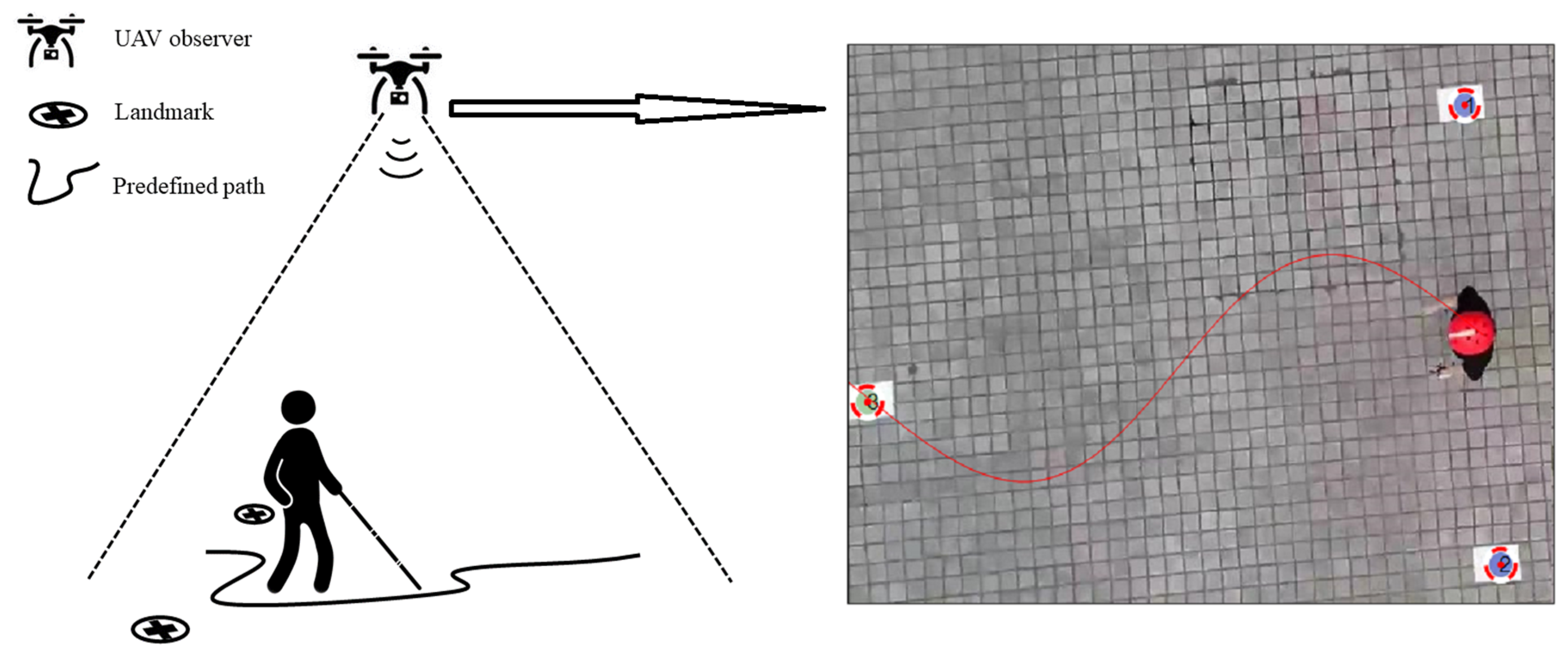

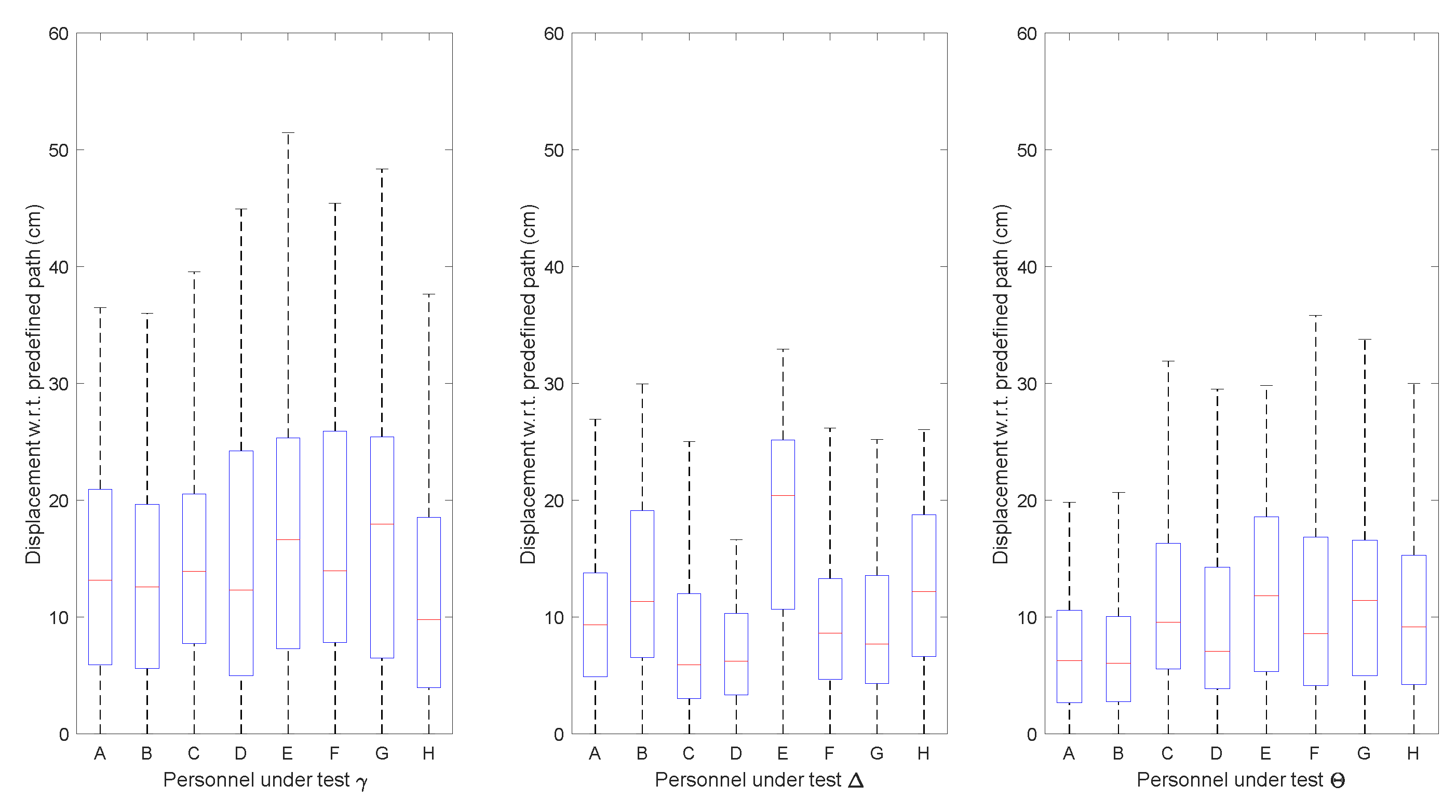

4.2. Orientation Guidance Tests in Real Test-Fields

5. Discussion

5.1. The Design Thinking in BVI Navigation Systems

5.2. Motion Behavior Style Differences between Subjects in Virtual and Real Test-Fields

5.3. Adaptive Tactile Stimulation and Reactions

5.4. Orientation Finding and Spatial Perception Rehabilitation

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Zhang, X.; Zhang, H.; Zhang, L.; Zhu, Y.; Hu, F. Double-Diamond Model-Based Orientation Guidance in Wearable Human–Machine Navigation Systems for Blind and Visually Impaired People. Sensors 2019, 19, 4670. https://doi.org/10.3390/s19214670