INSPEX: Optimize Range Sensors for Environment Perception as a Portable System

Abstract

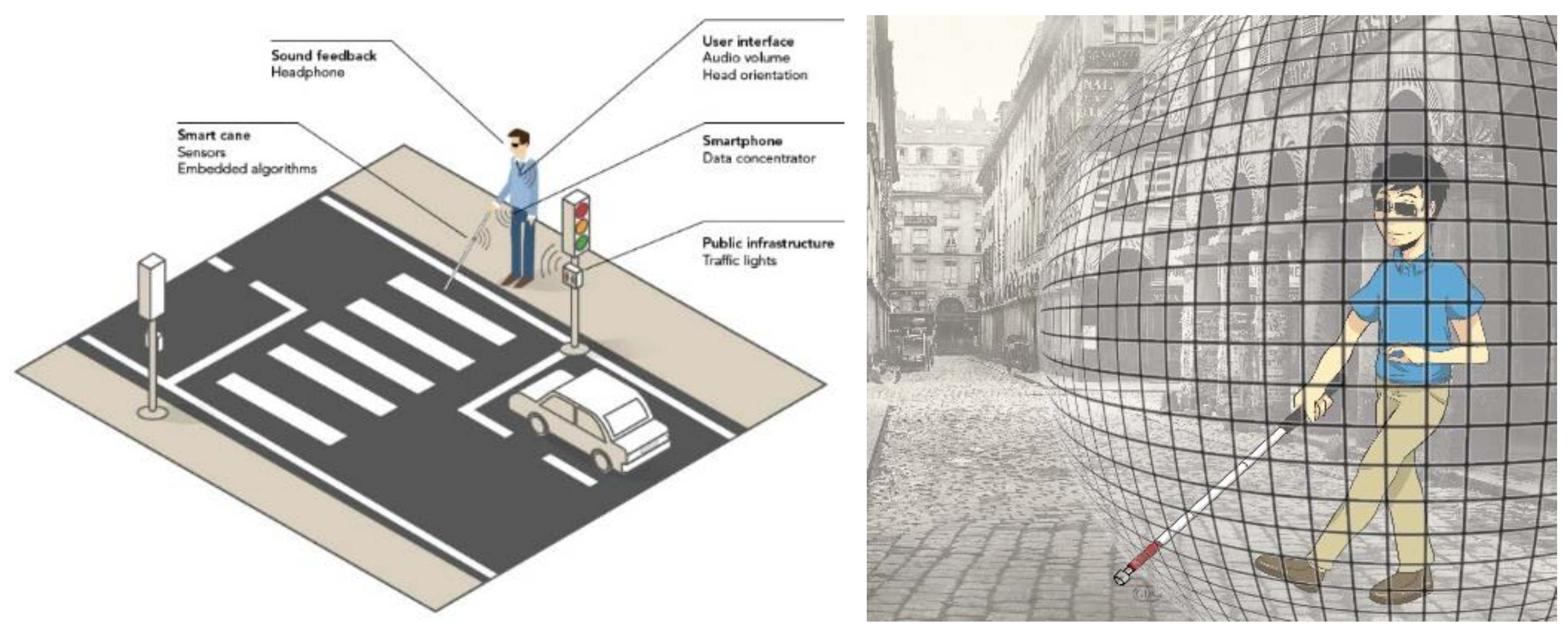

1. Introduction

2. Related Work

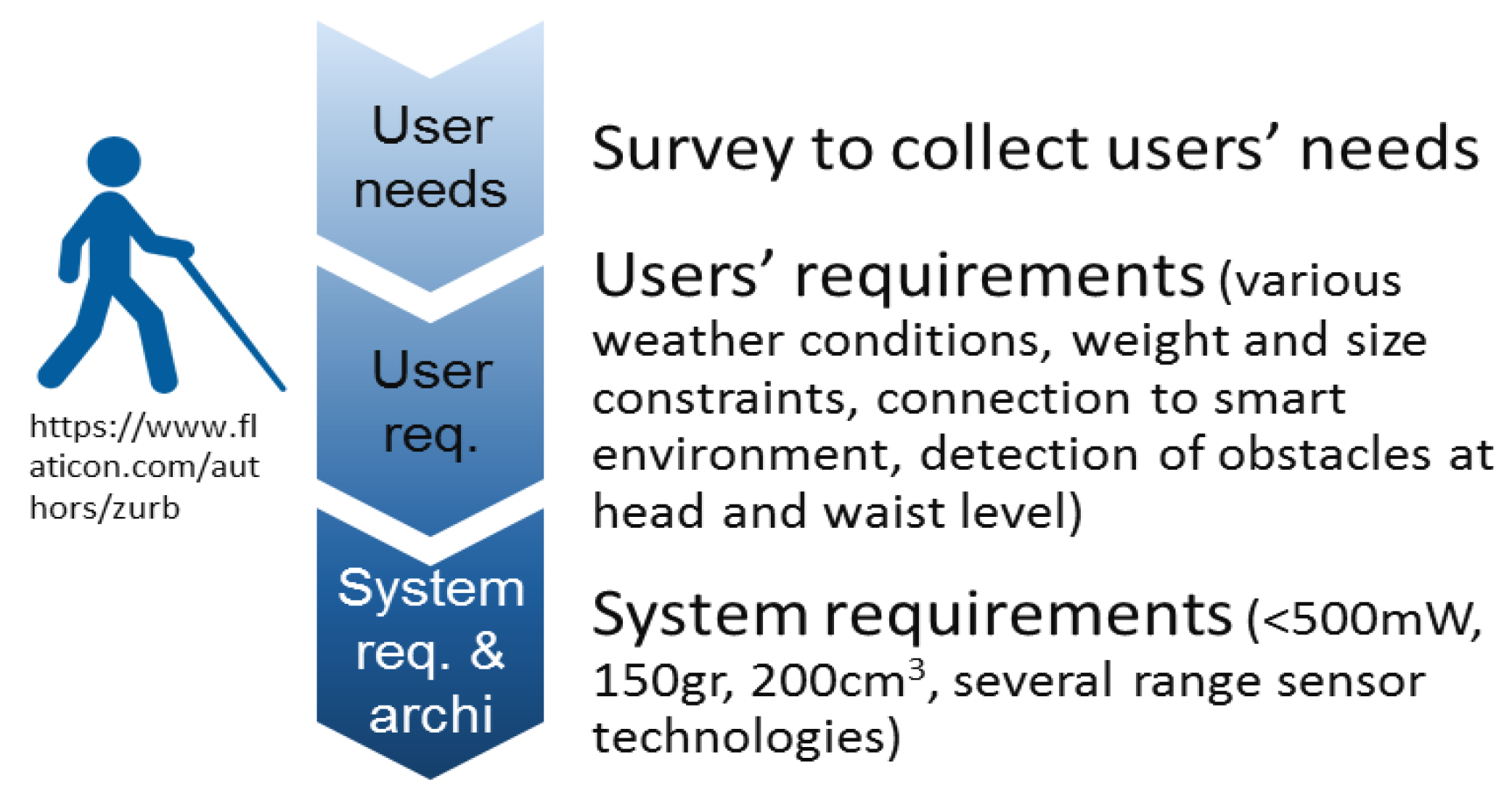

3. INSPEX Methodology

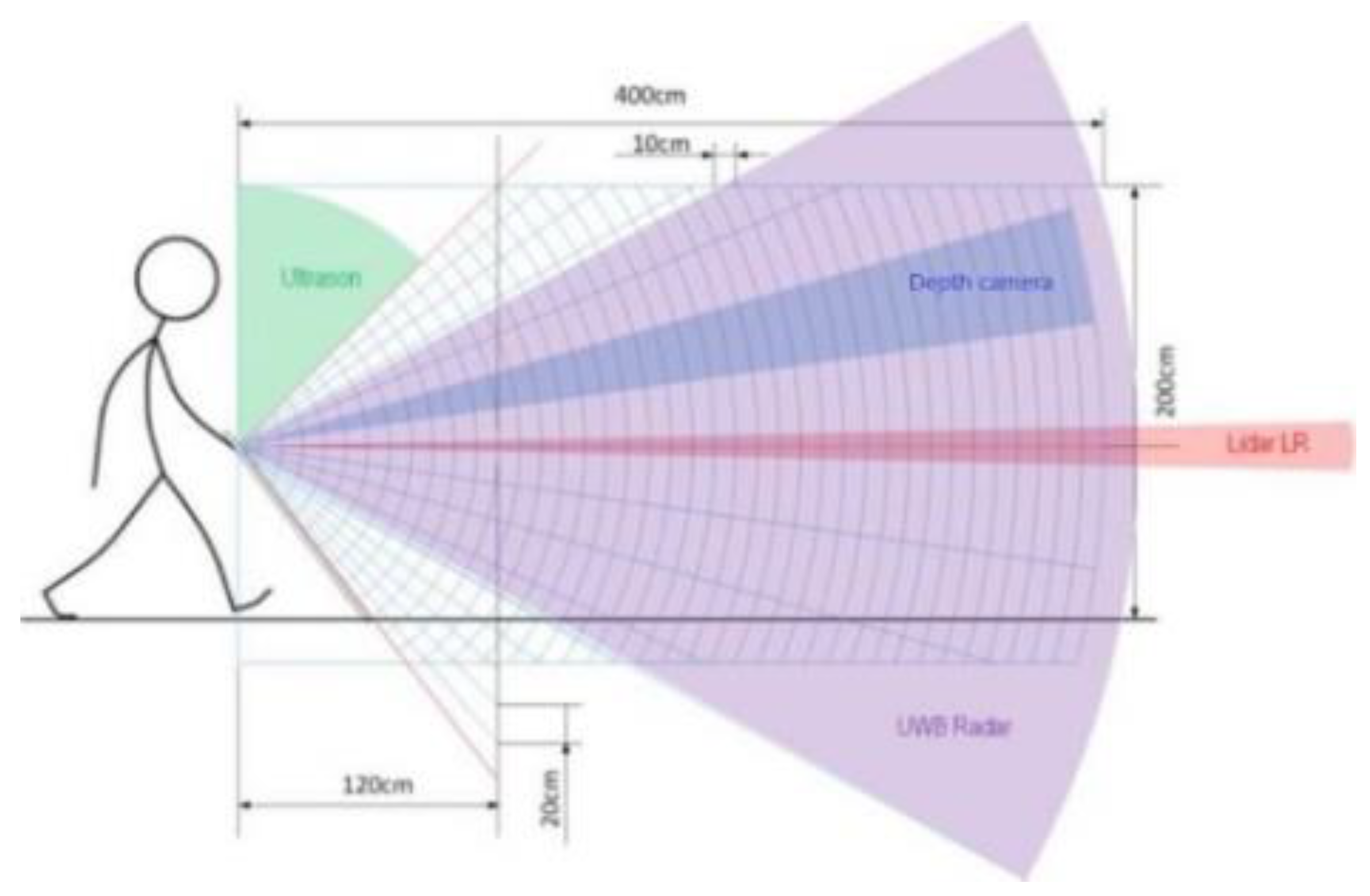

3.1. Overview

3.2. INSPEX and Its Legal Compliance to the GDPR

4. Optimization of Sensors and Their Results

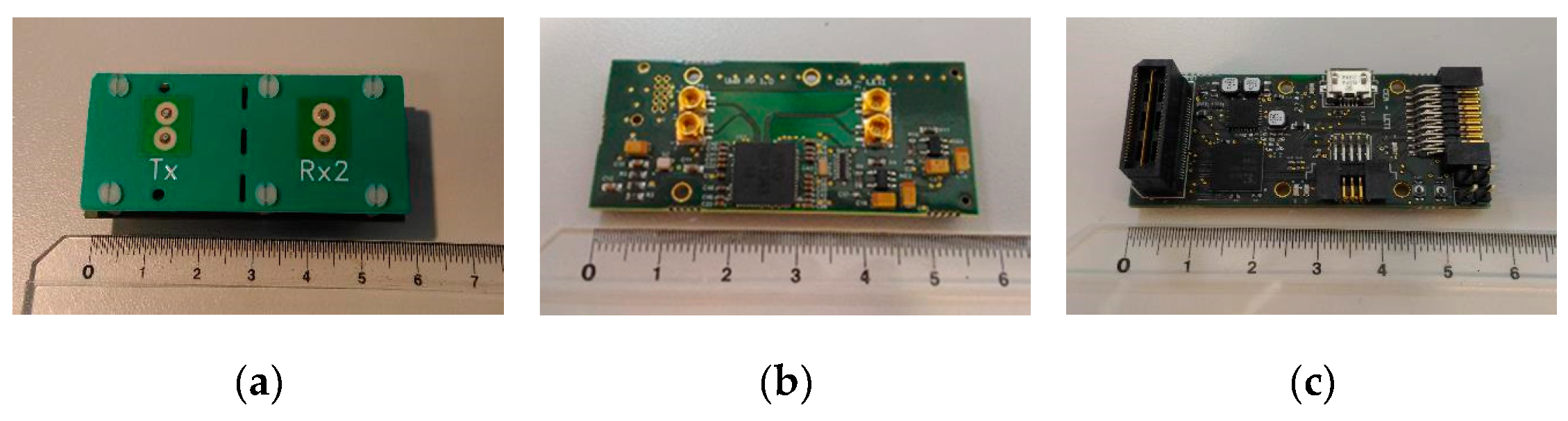

4.1. Ultrasound Module

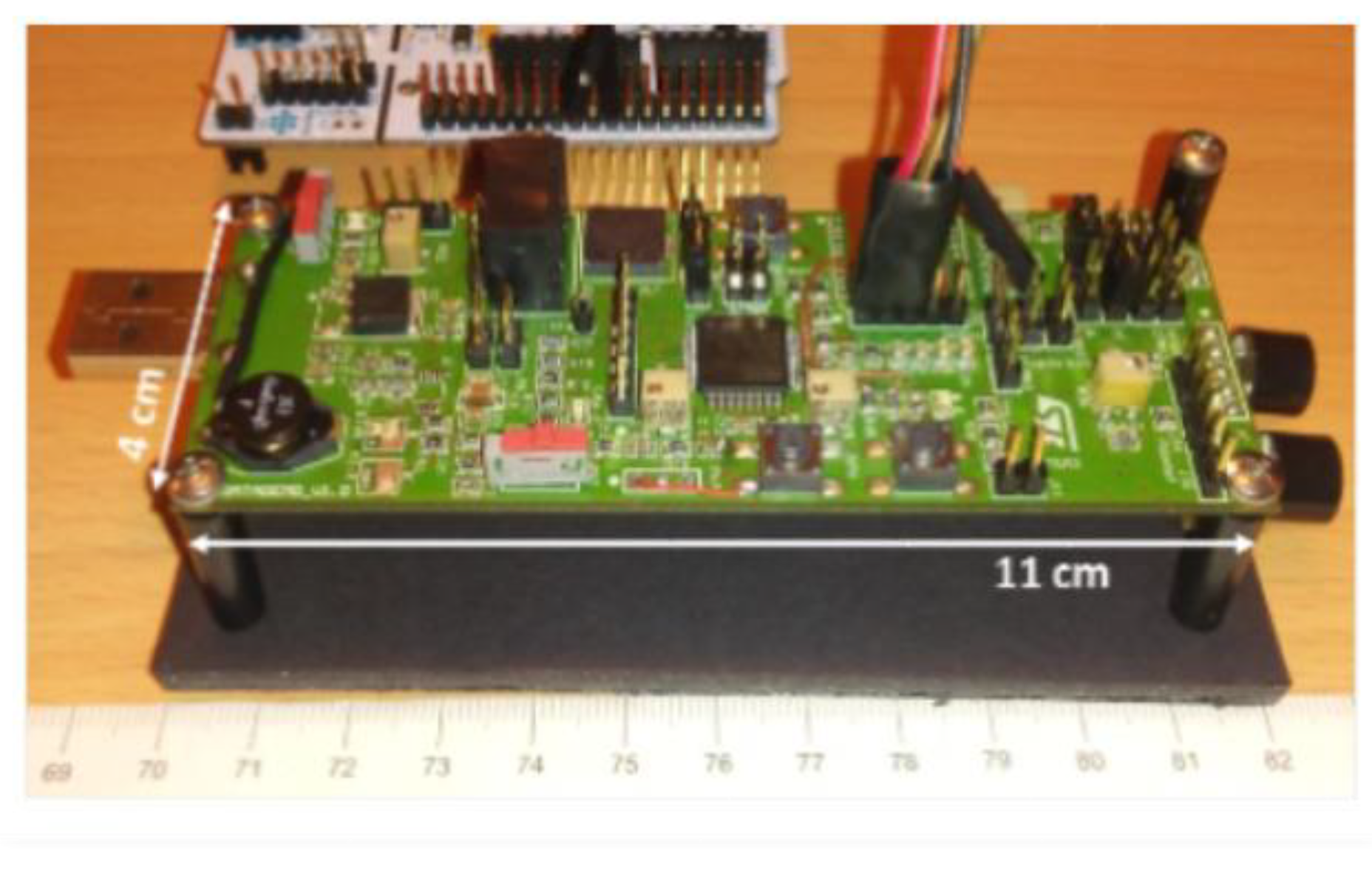

4.1.1. Ultrasound Prototype

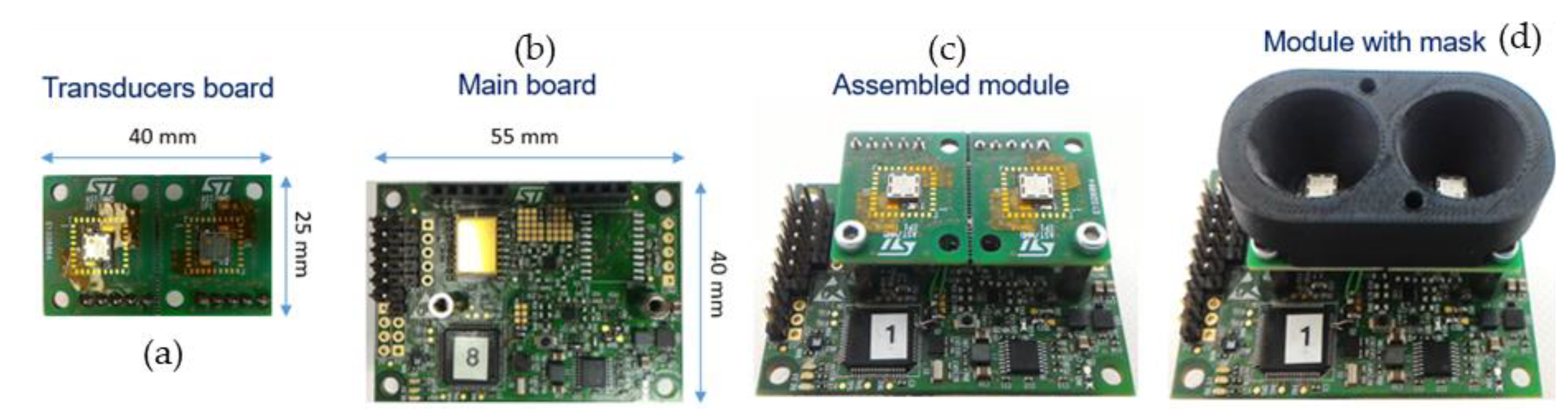

4.1.2. Optimized Module

- Size reduction: the initial board area was halved through careful layout and component selection. The transducers were replaced with smaller ones (MA40H1S from Murata);

- Power consumption: the whole bill of materials was revised, in particular the power management section and the microcontroller. Moreover, the firmware was optimized to switch on the different subcircuits only when needed;

- Obstacle detection: the software algorithm was changed from threshold-based to cross-correlation-based and optimized to run in real time on the microcontroller.
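The move from threshold-based to cross-correlation-based detection can be illustrated with a minimal sketch. It is not the INSPEX firmware: the 40 kHz carrier, the 400 kHz sample rate, the burst length, the speed of sound, and all function names are assumptions chosen for illustration only. The received signal is correlated with a template of the emitted burst, and the lag with the highest correlation is taken as the round-trip time of flight.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # assumed: air at about 20 degrees C


def make_template(cycles, sample_rate_hz, carrier_hz=40_000.0):
    """Hypothetical emitted burst: a few cycles of a sine carrier."""
    n_burst = int(cycles * sample_rate_hz / carrier_hz)
    return [math.sin(2 * math.pi * carrier_hz * i / sample_rate_hz)
            for i in range(n_burst)]


def simulate_echo(template, delay_samples, n_samples, attenuation=0.2):
    """Synthetic received signal: one attenuated, delayed copy of the burst."""
    received = [0.0] * n_samples
    for k, value in enumerate(template):
        received[delay_samples + k] = attenuation * value
    return received


def cross_correlation_lag(received, template):
    """Return the lag (in samples) that maximizes the cross-correlation."""
    best_lag, best_score = 0, float("-inf")
    for lag in range(len(received) - len(template) + 1):
        score = sum(received[lag + k] * template[k]
                    for k in range(len(template)))
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def distance_from_lag(lag, sample_rate_hz):
    """Convert a round-trip delay in samples to a one-way distance in metres."""
    time_of_flight_s = lag / sample_rate_hz
    return SPEED_OF_SOUND_M_S * time_of_flight_s / 2.0


SAMPLE_RATE_HZ = 400_000.0                      # assumed ADC rate
template = make_template(cycles=8, sample_rate_hz=SAMPLE_RATE_HZ)
echo = simulate_echo(template, delay_samples=2332, n_samples=4000)
lag = cross_correlation_lag(echo, template)
distance_m = distance_from_lag(lag, SAMPLE_RATE_HZ)
print(f"lag = {lag} samples, distance = {distance_m:.3f} m")
```

Unlike a fixed amplitude threshold, the correlation peak depends on the shape of the echo rather than on its absolute level, so a weak echo such as the attenuated one simulated here can still be located. A microcontroller implementation would typically restrict the lag search window or use fixed-point arithmetic to meet real-time constraints.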

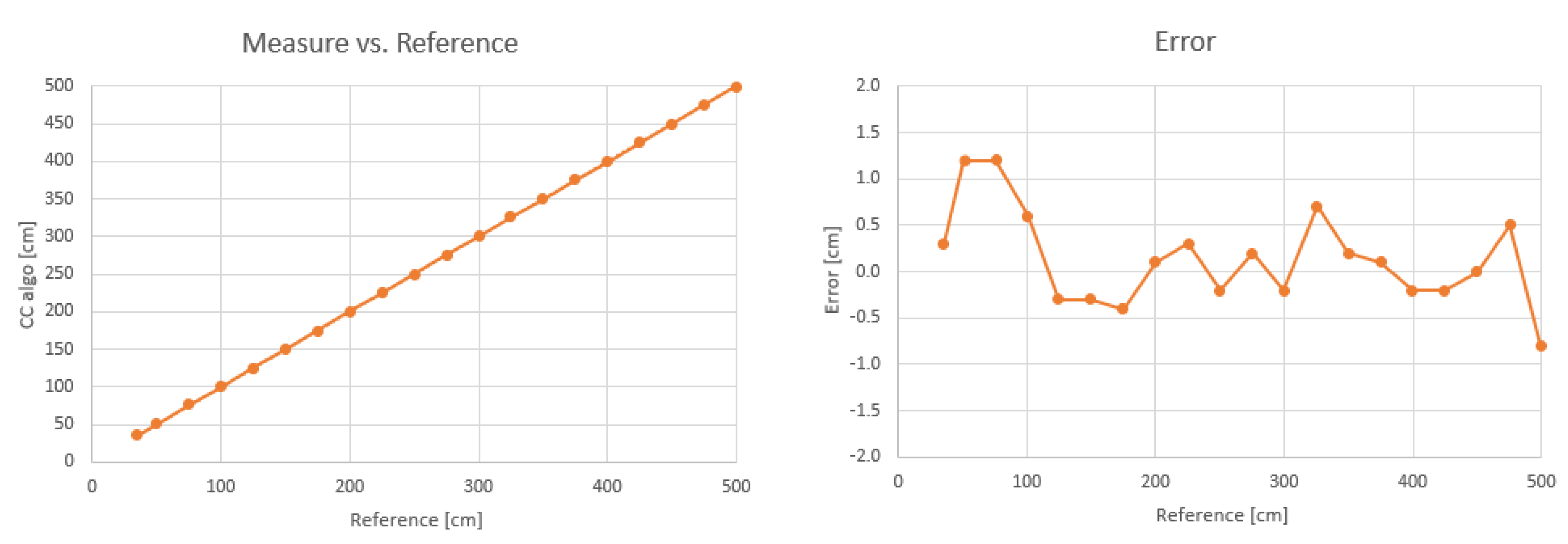

4.1.3. Results for the Optimized Ultrasound Module

4.2. Long-Range LiDAR

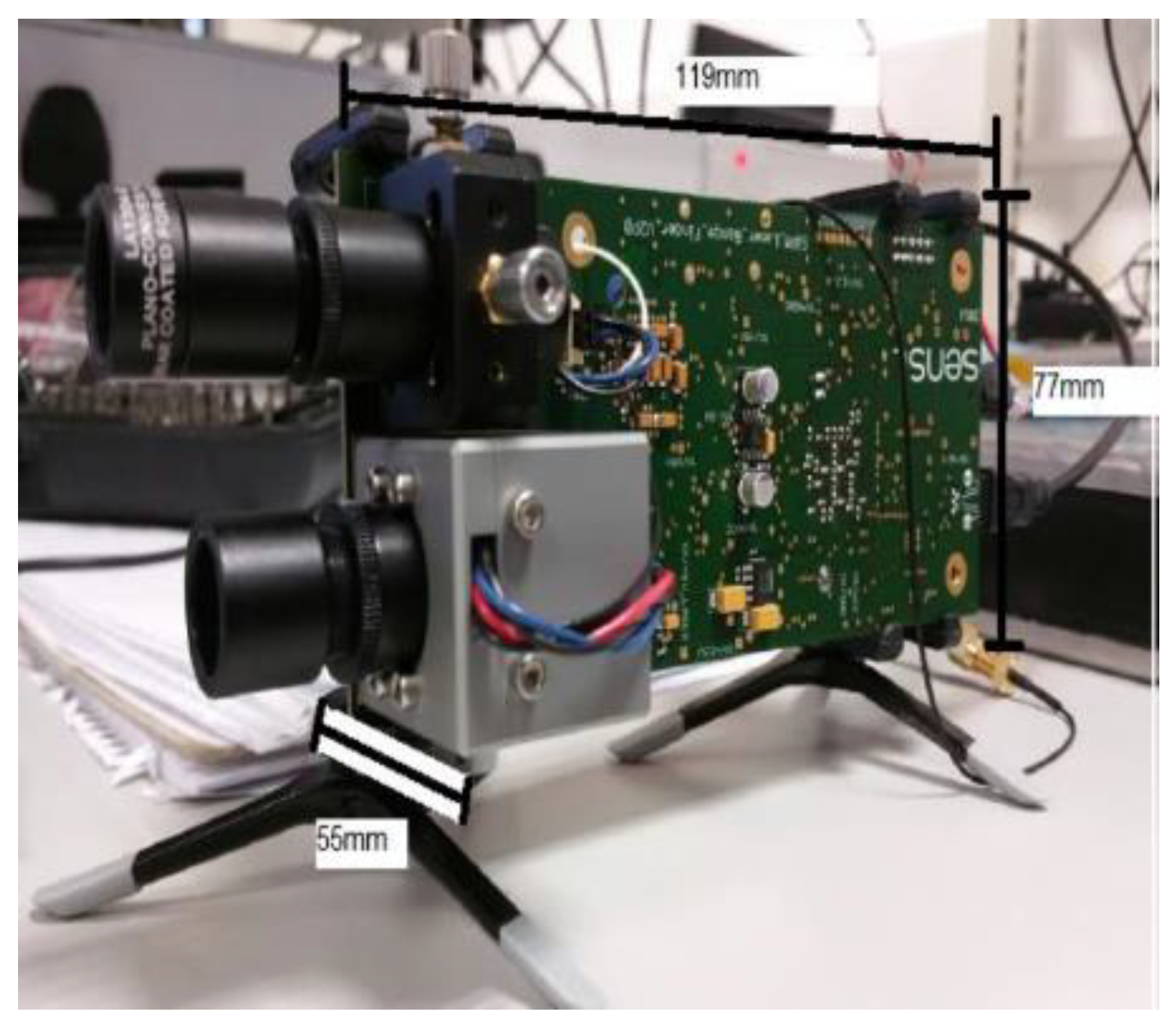

4.2.1. Long-Range LiDAR Prototype

4.2.2. Optimized Module

4.2.3. Results for the Optimized Long-Range LiDAR Module

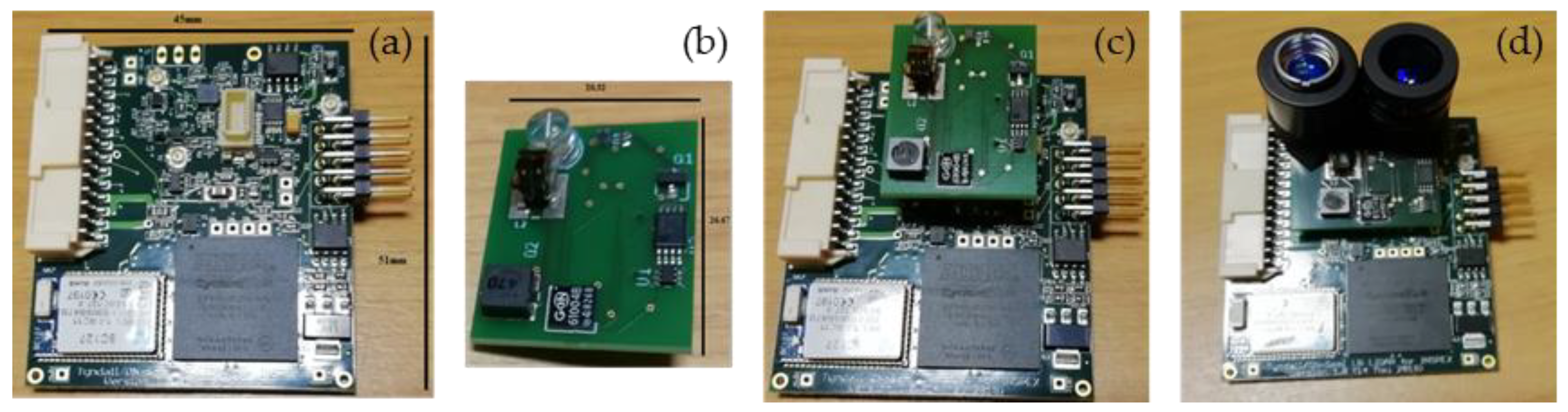

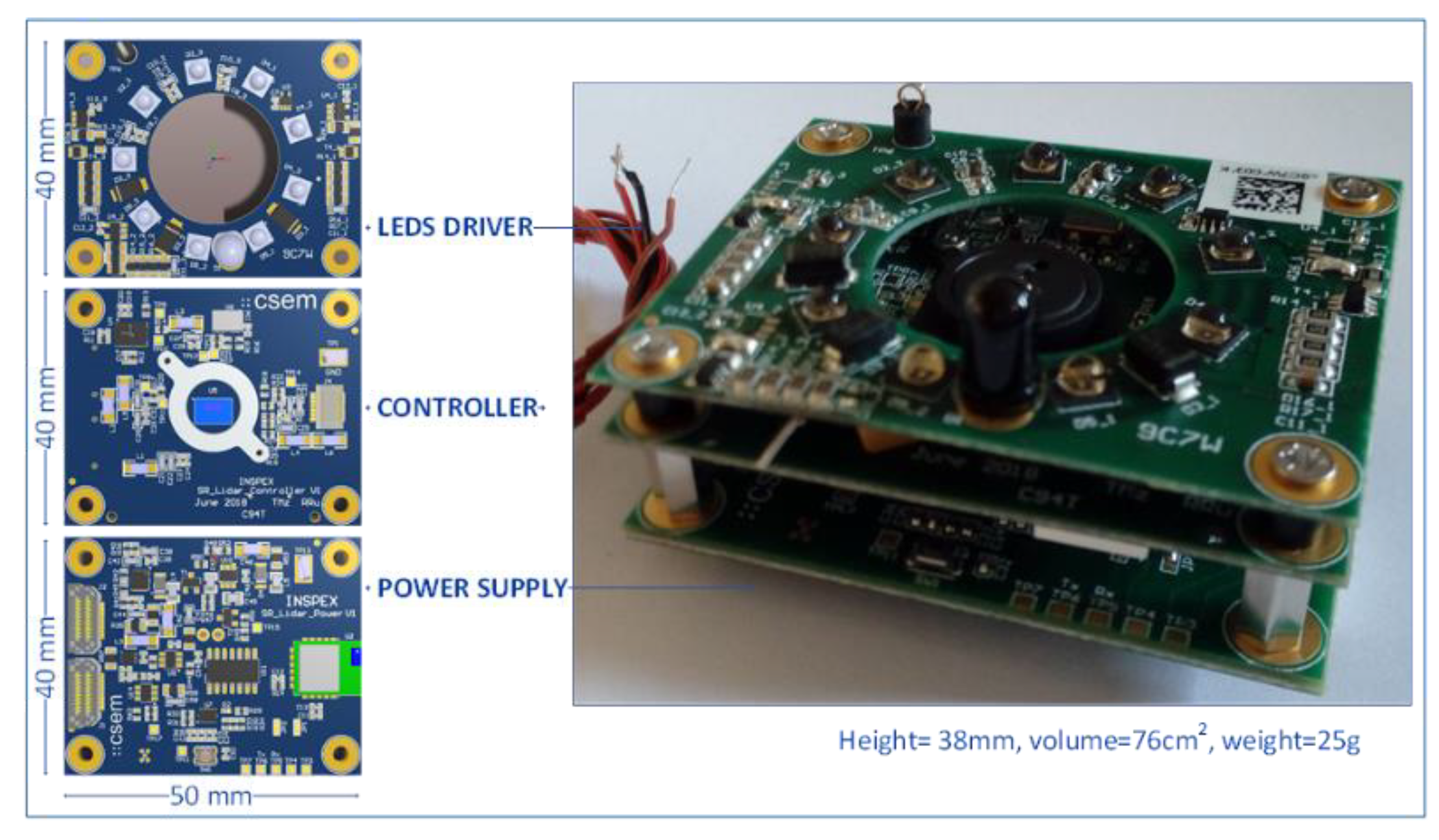

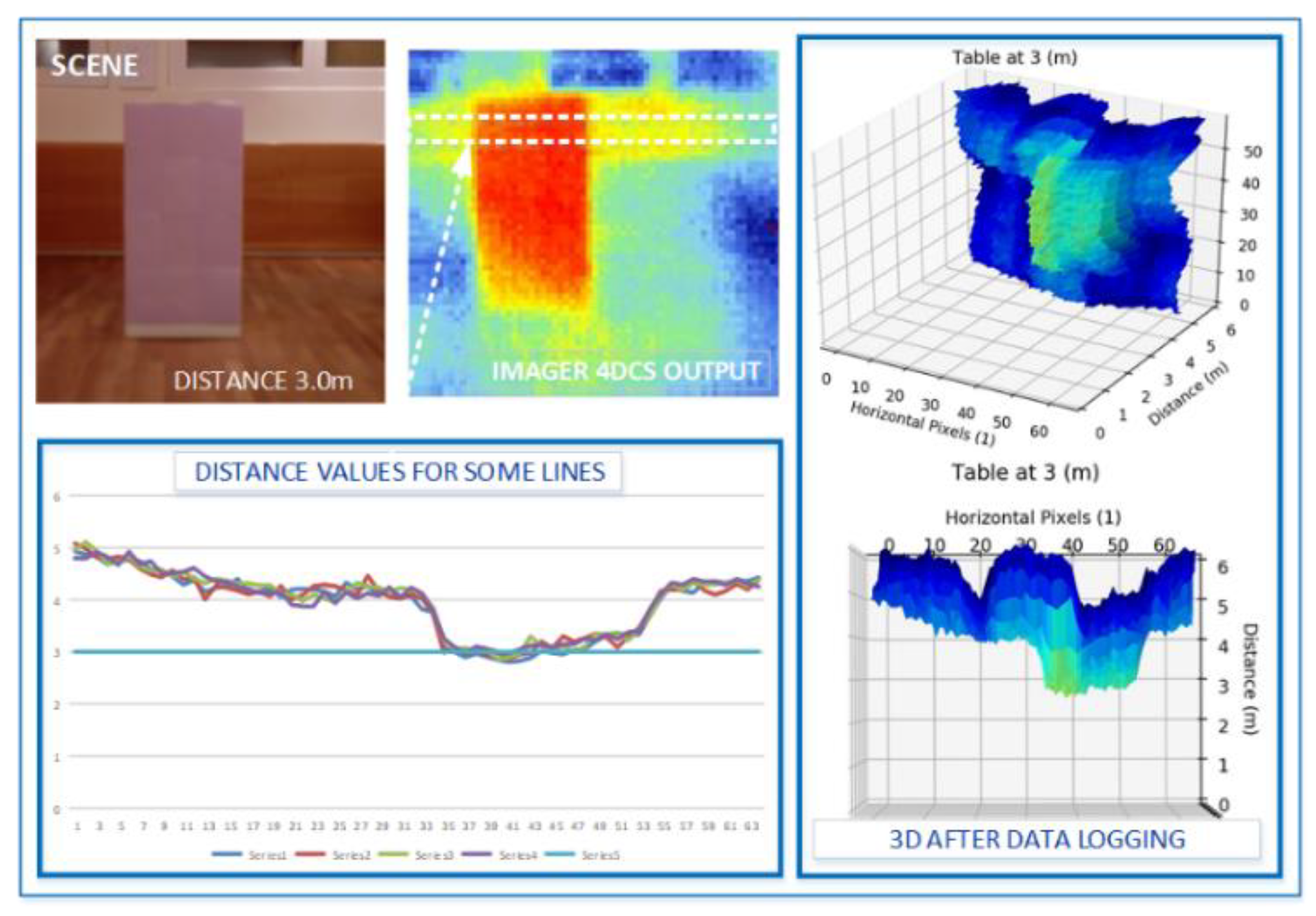

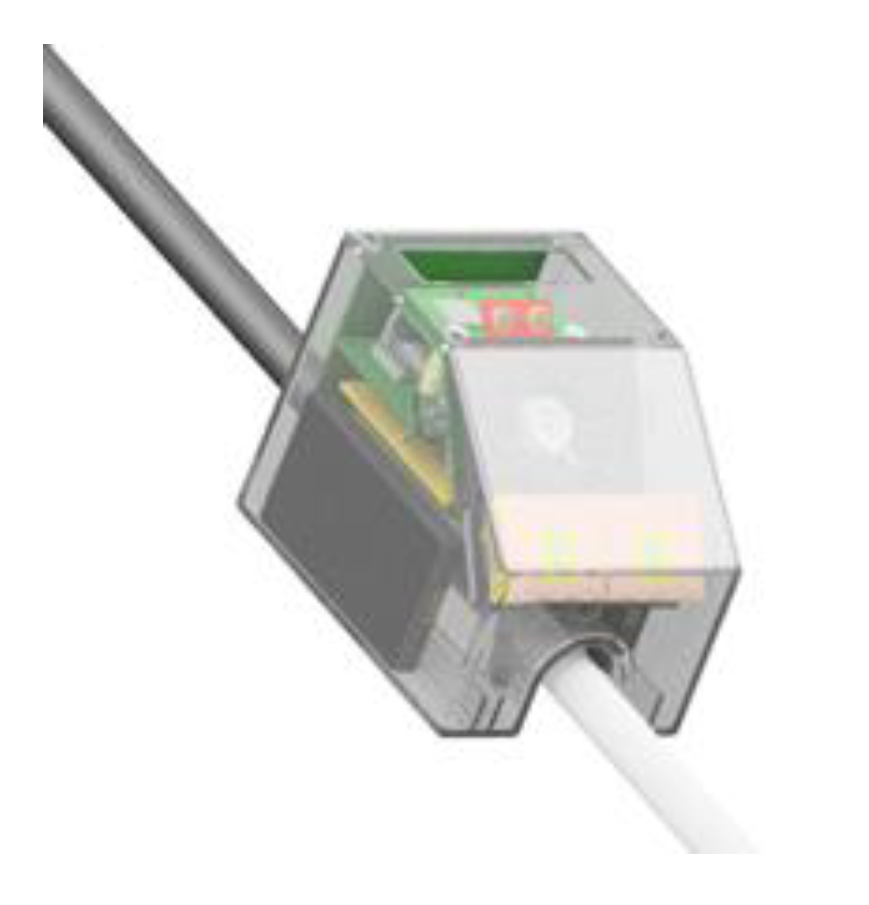

4.3. Depth Camera

4.3.1. Depth Camera Prototype

4.3.2. Optimized Module

4.3.3. Results for the Optimized Depth Camera Module

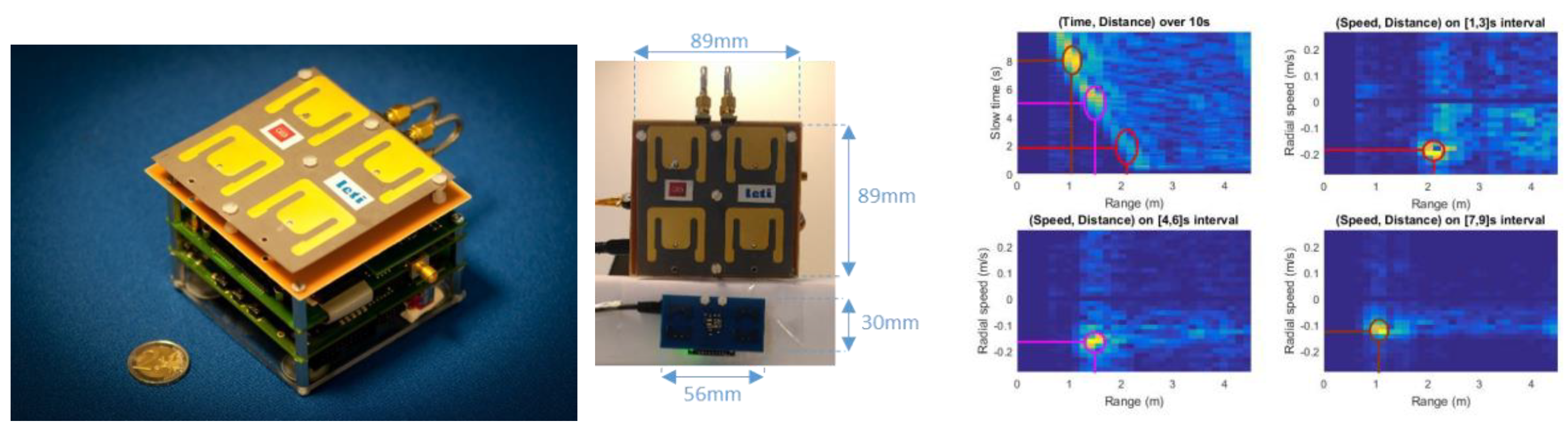

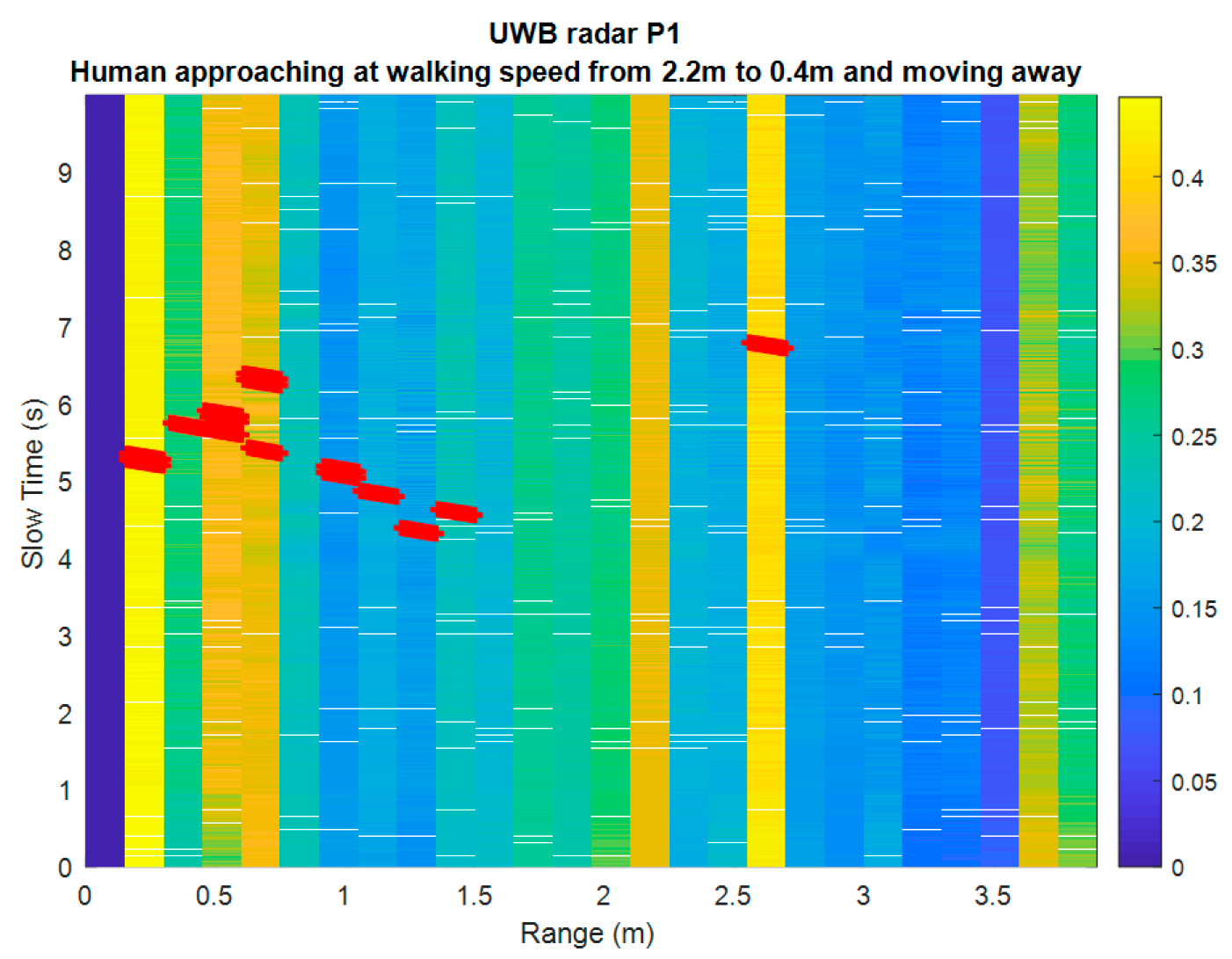

4.4. Ultra-Wideband Radar

4.4.1. Ultra-Wideband Radar Prototype

4.4.2. Optimized Module

- 8 GHz operation (instead of 4 GHz for the prototype);

- Antenna: Azimuth beam 56° (3 dB), 118° (10 dB), Elevation beam 56° (3 dB), 118° (10 dB), linear, vertical polarization;

- Up to 10 m range, 15 cm resolution, 200 Hz raw data refresh rate (acquisition rate);

- Non-ambiguous relative speed estimation over [−1.875, 1.875] m/s;

- Raw data baseband (I, Q) signal over the 64 distance bins (fast “channel” time axis);

- SPI interface to the general processing platform embedded in the overall device;

- Low-level signal processing performed on the INSPEX main computing platform.
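Several figures in the list above can be cross-checked with standard radar relations. The sketch below assumes that the 200 Hz refresh rate acts as the slow-time sampling rate for Doppler estimation, so that the non-ambiguous speed is bounded by λ·f/4, and that the covered distance is simply the number of bins times the bin size. The function names are hypothetical and the calculation is a consistency check, not a description of the INSPEX signal processing.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def wavelength_m(carrier_hz):
    """Free-space wavelength for a given carrier frequency."""
    return SPEED_OF_LIGHT_M_S / carrier_hz


def max_unambiguous_speed_m_s(carrier_hz, refresh_rate_hz):
    """Largest relative speed measurable without Doppler aliasing.

    Assumes the refresh rate is the slow-time sampling rate:
    |v| <= wavelength * refresh_rate / 4.
    """
    return wavelength_m(carrier_hz) * refresh_rate_hz / 4.0


def covered_range_m(n_bins, bin_size_m):
    """Distance spanned by the fast-time axis."""
    return n_bins * bin_size_m


# Values taken from the list above.
v_max_optimized = max_unambiguous_speed_m_s(8e9, 200.0)
# Same relation at the prototype carrier frequency (refresh rate assumed equal).
v_max_prototype = max_unambiguous_speed_m_s(4e9, 200.0)
span_m = covered_range_m(64, 0.15)

print(f"8 GHz: |v| <= {v_max_optimized:.3f} m/s")
print(f"4 GHz: |v| <= {v_max_prototype:.3f} m/s")
print(f"64 bins x 15 cm = {span_m:.1f} m")
```

Under these assumptions, the 8 GHz result agrees with the stated [−1.875, 1.875] m/s interval to within rounding of the speed of light, and 64 bins of 15 cm span roughly the stated 10 m range.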

4.4.3. Results

- The effective isotropic radiated power (EIRP) is reduced by 8 dB (~6 dB for the integrated circuit itself and ~2 dB for the antenna, which has a larger elevation beam);

- The path loss is increased by 6 dB for the same distance since the frequency is doubled;

- The receiver antenna gain is reduced by 2 dB, as is the transmitter antenna gain;

- The receiver noise is degraded by about 9 dB, as measured on the ultra-wideband integrated circuit.
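The contributions listed above can be tallied in a short sketch. Adding them assumes that the four effects are independent and combine additively in decibels; the source lists them individually and does not state a total, so the sum is only an illustration. The dictionary keys and the helper name are hypothetical. The second part checks the stated 6 dB path-loss increase against the free-space relation, in which path loss grows as 20·log10 of the frequency ratio.

```python
import math

# Link-budget penalties of the optimized module relative to the prototype,
# in dB, as listed above (key names are illustrative).
PENALTIES_DB = {
    "eirp": 8.0,             # ~6 dB integrated circuit + ~2 dB antenna
    "path_loss": 6.0,        # frequency doubled from 4 GHz to 8 GHz
    "rx_antenna_gain": 2.0,
    "rx_noise": 9.0,
}


def path_loss_increase_db(frequency_ratio):
    """Free-space path-loss change for a frequency ratio at fixed distance."""
    return 20.0 * math.log10(frequency_ratio)


# Assumption: the penalties are independent and add up in dB.
total_penalty_db = sum(PENALTIES_DB.values())
doubling_db = path_loss_increase_db(8e9 / 4e9)

print(f"total penalty (assumed additive): {total_penalty_db:.0f} dB")
print(f"path-loss increase for doubled frequency: {doubling_db:.2f} dB")
```

The free-space relation gives about 6.02 dB for a doubled frequency, which matches the 6 dB quoted in the list.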

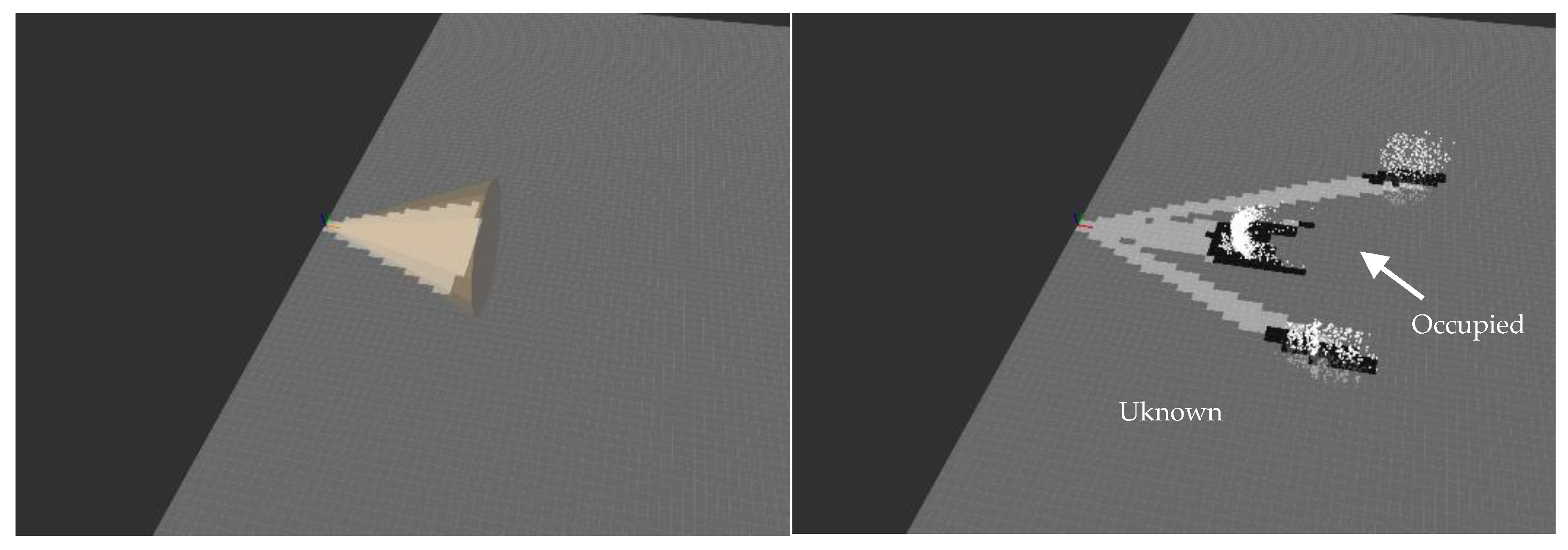

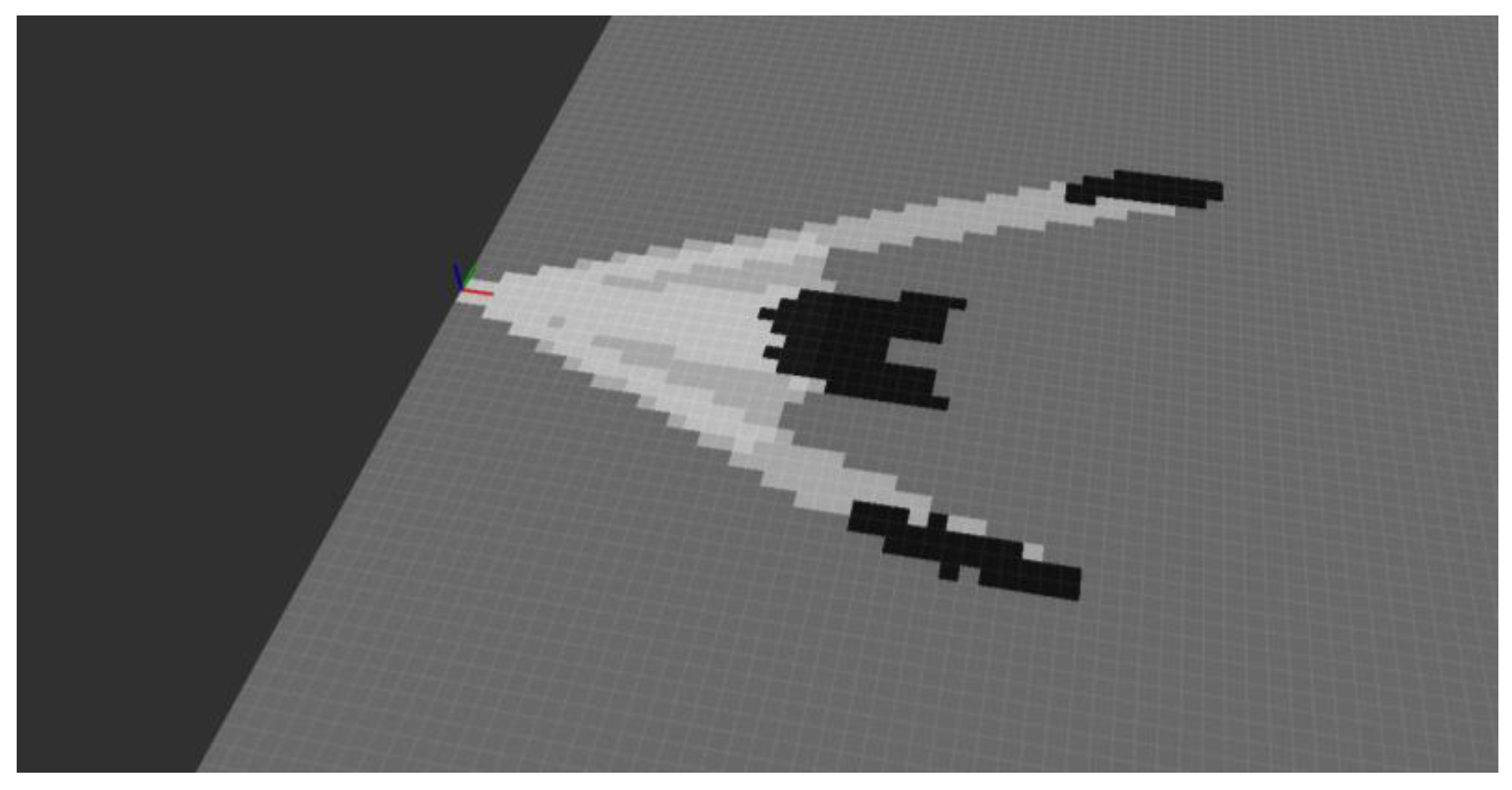

5. Data Fusion to Build a Model of the Environment

6. Discussion and Future Work Directions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Sensor Characteristics | Ultrasound | Long-Range LiDAR | Depth Camera | Ultra-Wideband Radar |
|---|---|---|---|---|
| Measurement range | >1 m | 10 m | 4 m | 4 m |
| Consumption | <100 mW | <200 mW | <100 mW | <600 mW |
| Size | <50 cm³ | <150 cm³ | <100 cm³ | <50 cm³ |
| Weight | <30 g | <50 g | <50 g | <50 g |
| Field-of-view | in [20°, 40°] | <5° | >30° | >50° |

| Characteristics | Prototype | Optimized (Designed) | Requirements |
|---|---|---|---|
| Weight (g) | 44 | 25 | <30 |
| Dimension (cm³) | 11 × 4 × 3 | 5.5 × 4 × 2 = 44 | <50 |
| Power (mW) | 400 | 70 | <100 |
| Range (m) | <1 | >5 | >1 |

| Shape | Material | Dimension | Intensity (Arbitrary Unit) |
|---|---|---|---|
| Flat surface | wall | - | 2450 |
| Cylinder | aluminum | diam. 58 mm | 535 |
| Cylinder | steel (painted) | diam. 35 mm | 390 |
| Cylinder | plastic | diam. 18 mm | 275 |
| Cylinder | plastic | diam. 8 mm | 205 |
| Cylinder | wood (painted) | diam. 6 mm | 125 |
| Sphere | expanded polystyrene | diam. 244 mm | 225 |
| Sphere | expanded polystyrene | diam. 150 mm | 125 |
| Sphere | expanded polystyrene | diam. 100 mm | 120 |

| Characteristics | Prototype | Optimized (Design) | Optimized (Measured) | Requirements |
|---|---|---|---|---|
| Weight (g) | 465 | 70 | 50 | 50 |
| Dimension (cm³) | 11.9 × 7.7 × 5.5 = 465.8 | 6 × 7.7 × 3 = 138.6 | 4.5 × 5.1 × 3 = 68.85 | <150 |
| Power (mW) | 1320 | 500 | 400 | 100 |
| Range (m) | 25 | 10 | 10 | 10 |

| Characteristics | Prototype | Optimized | Requirements |
|---|---|---|---|
| Weight (g) | >100 | 50 | 50 |
| Dimension (cm³) | 9 × 7 × 5 = 315 | 5 × 4 × 3.8 = 76 | <100 |
| Power (mW) | >1000 | 140 | 100 |
| Range (m) | >10 | 4.5 | 4 |

| Characteristics | Prototype | Optimized | Requirements |
|---|---|---|---|
| Weight (g) | 200 | 25 | <50 |
| Dimension (cm³) | 9 × 9 × 3.5 | 5.6 × 2 × 3 = 33.6 | <50 |
| Power (mW) | 825 | 825 | <600 |
| Range (m) | 3–5 | 2 | 4 |

| Sensor Characteristics (Required/Achieved) | Ultrasound | Long-Range LiDAR | Depth Camera | Ultra-Wideband Radar |
|---|---|---|---|---|
| Measurement range (m) | >1/>2 | 10/10 | 4/4.5 | 4/2 |
| Consumption (mW) | <100/100 | <200/400 | <100/140 | <600/825 |
| Size (cm³) | <50/44 | <150/138.6 | <100/76 | <50/33.5 |
| Weight (g) | <30/25 | <50/50 | <50/50 | <50/25 |
| Field-of-view | in [20°, 40°]/25° | <5°/- | >30°/V33.5° × H40° | >50°/V56° × H56° |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Foucault, J.; Lesecq, S.; Dudnik, G.; Correvon, M.; O’Keeffe, R.; Di Palma, V.; Passoni, M.; Quaglia, F.; Ouvry, L.; Buckley, S.; et al. INSPEX: Optimize Range Sensors for Environment Perception as a Portable System. Sensors 2019, 19, 4350. https://doi.org/10.3390/s19194350
