Toward Dynamically Adaptive Simulation: Multimodal Classification of User Expertise Using Wearable Devices

Abstract

1. Introduction

2. Related Work

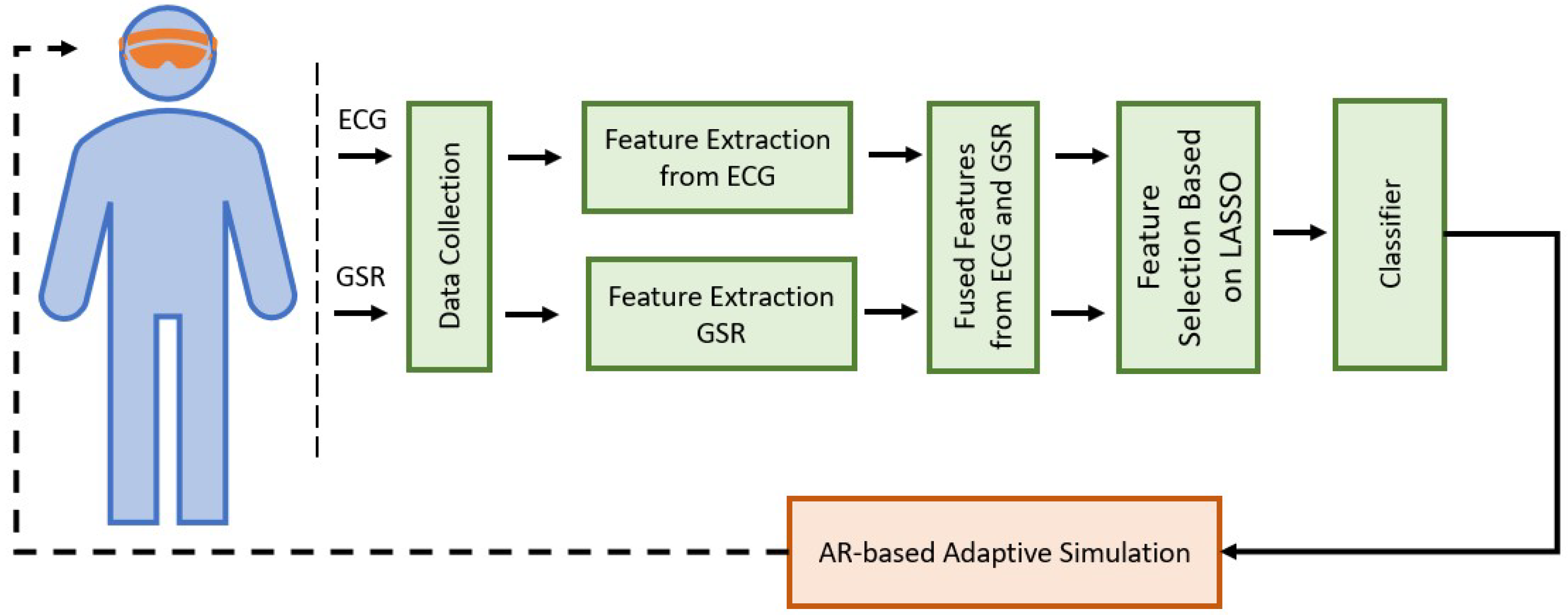

3. Materials and Methods

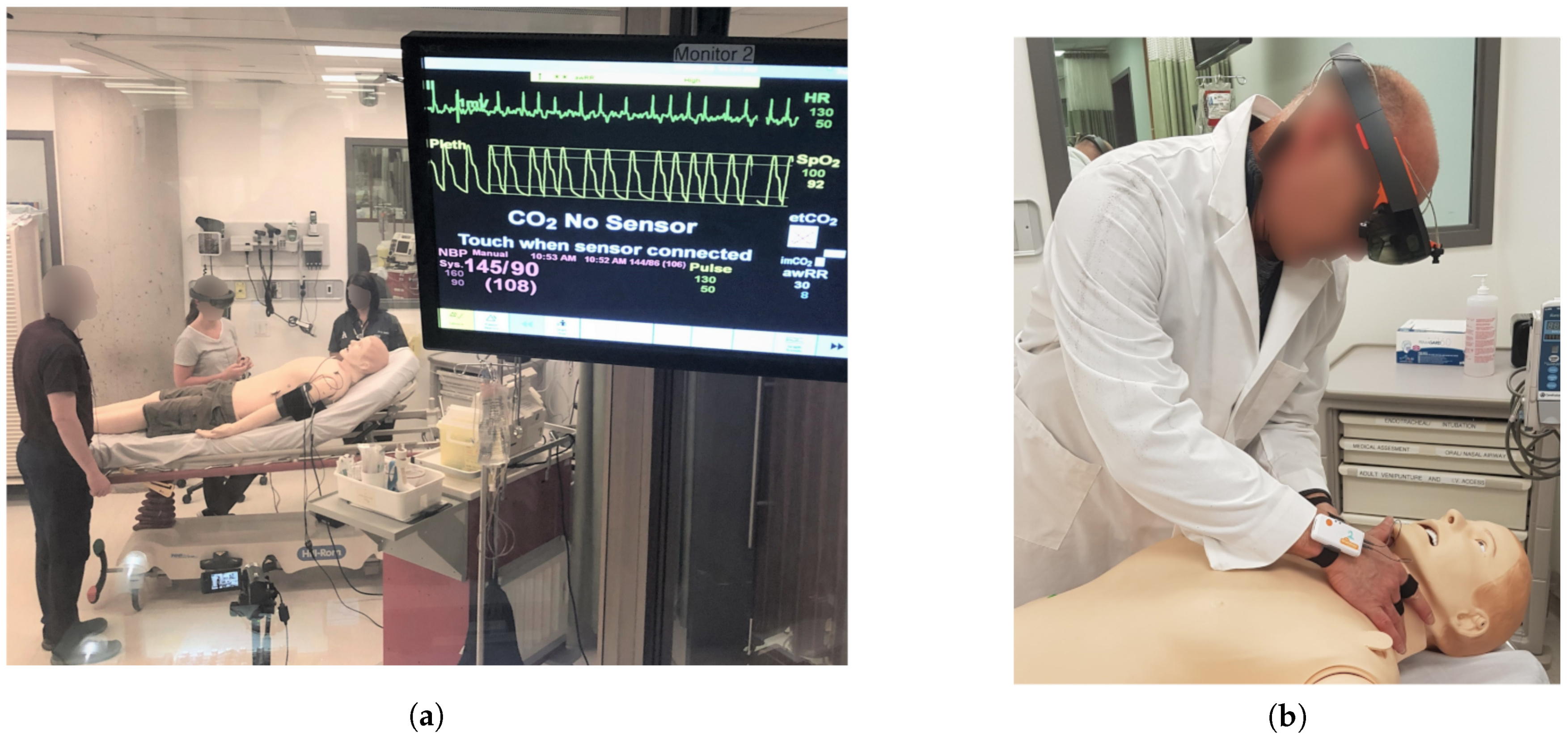

3.1. Simulation Design

3.1.1. Protocol and Subjects

3.1.2. Sensors and Data

3.2. Analysis and Classification

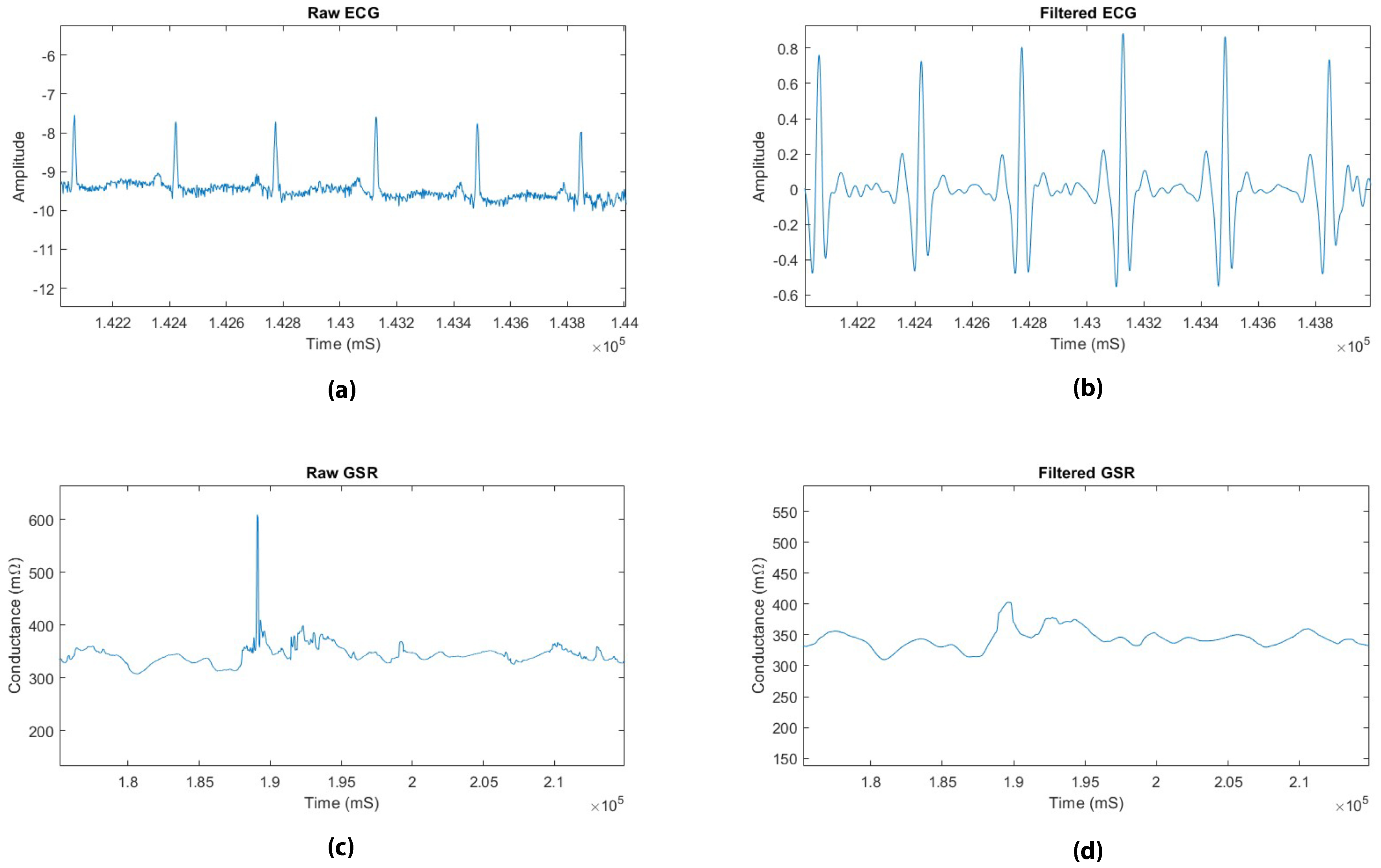

3.2.1. Pre-Processing

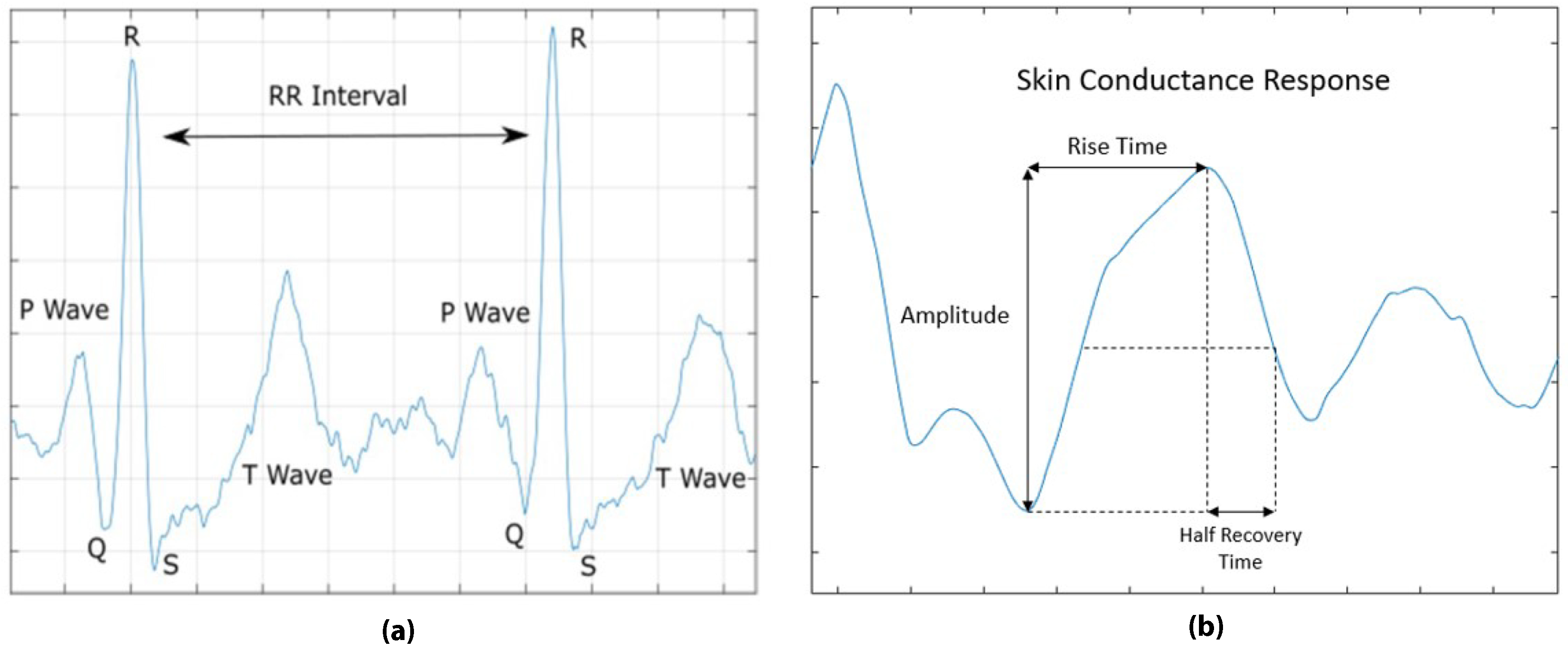

3.2.2. Feature Extraction

3.2.3. Feature Space Exploration

3.2.4. Feature Selection

3.2.5. Classification

3.2.6. Evaluation

4. Results and Discussion

4.1. t-SNE Based Projection

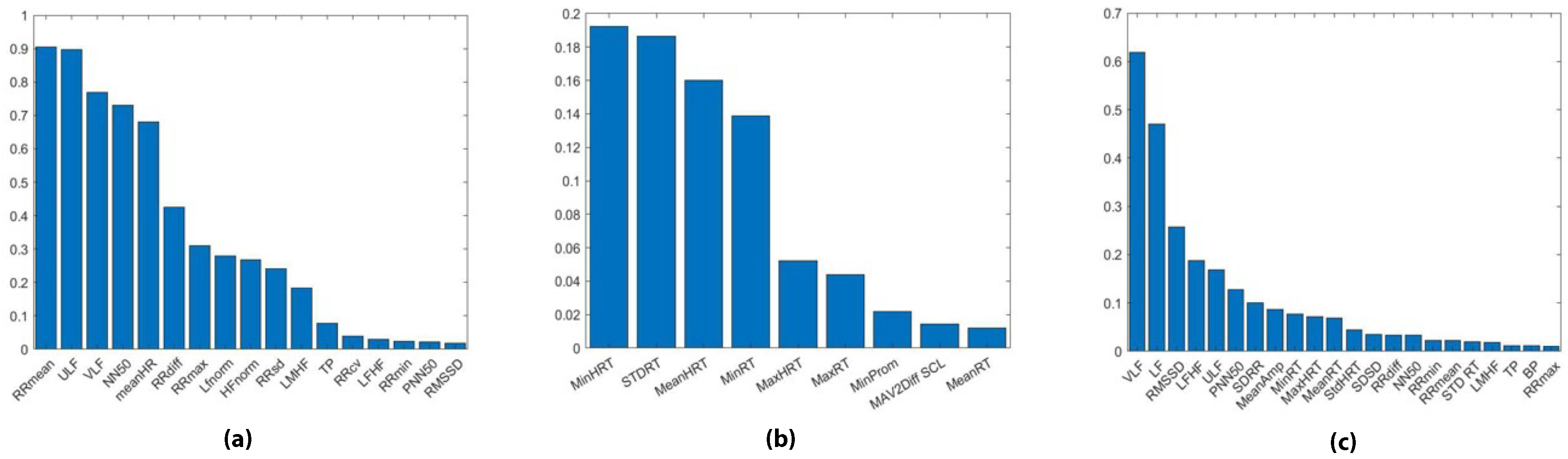

4.2. LASSO Feature Ranking

4.3. Classification

5. Conclusions and Future Work

Author Contributions

Funding

Conflicts of Interest


| Feature | Description |
|---|---|
| RRmin | Minimum value of RR interval |
| RRmax | Maximum value of RR interval |
| RRdiff | Difference between RRmax and RRmin |
| RRmean | Mean value of RR interval |
| RRSD | Standard deviation of RR interval |
| RRCV | Coefficient of variation of RR intervals |
| SDSD | Standard deviation of successive differences of RR intervals |
| NN50 | Number of successive RR interval differences greater than 50 ms |
| PNN50 | Percentage of successive RR interval differences greater than 50 ms |
| ULF | Ultra low frequency band (<0.003 Hz) |
| VLF | Very low frequency band (0.003–0.04 Hz) |
| LF | Low frequency band (0.04–0.15 Hz) |
| HF | High frequency band (0.15–0.4 Hz) |
| TP | Total power (0–0.4 Hz) |
| LFnorm | Normalized low frequency |
| HFnorm | Normalized high frequency |
| LF/HF | Ratio of low to high frequency power |
| LMHF | Sympathovagal balance ratio, (LF+MF)/HF, using a mid frequency (MF) range of 0.08–0.15 Hz |
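
The time-domain rows of the table above can be illustrated with a minimal sketch. It assumes the RR intervals have already been extracted and are given in milliseconds; the function name `rr_time_features` and the sample values are hypothetical and are not taken from the paper. NN50 and PNN50 follow the conventional heart rate variability definition, counting successive differences whose magnitude exceeds 50 ms.

```python
from statistics import mean, stdev


def rr_time_features(rr_ms):
    """Compute time-domain features from RR intervals given in milliseconds.

    Hypothetical helper for illustration; not the authors' implementation.
    """
    if len(rr_ms) < 3:
        raise ValueError("need at least three RR intervals")

    # Successive differences between neighbouring RR intervals.
    diffs = [b - a for a, b in zip(rr_ms, rr_ms[1:])]
    # Conventional NN50: count of successive differences above 50 ms in magnitude.
    nn50 = sum(1 for d in diffs if abs(d) > 50)

    rr_mean = mean(rr_ms)
    rr_sd = stdev(rr_ms)  # sample standard deviation
    return {
        "RRmin": min(rr_ms),
        "RRmax": max(rr_ms),
        "RRdiff": max(rr_ms) - min(rr_ms),
        "RRmean": rr_mean,
        "RRSD": rr_sd,
        "RRCV": rr_sd / rr_mean,
        "SDSD": stdev(diffs),
        "NN50": nn50,
        "PNN50": 100.0 * nn50 / len(diffs),
    }


# Made-up RR series, used only to exercise the function.
features = rr_time_features([800, 810, 870, 790, 800])
print(features["NN50"], features["PNN50"])
```

The frequency-domain rows (ULF through LMHF) need a spectral estimate of the RR series and are not covered by this sketch.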

| Feature | Description |
|---|---|
| RT | Rise time from SCR onset to peak response |
| HRT | Half recovery time of the SCR peak |
| Amp | Amplitude of the skin conductance response at its peak |
| Area | Area of the skin conductance response |
| Prom | Prominence of skin conductance response relative to the skin conductance level |
| SCL | Skin conductance level, the average electrodermal response |
| MAV1Diff SCL | First derivative of the mean absolute value of the skin conductance level |
| MAV2Diff SCL | Second derivative of the mean absolute value of the skin conductance level |
| BP | Band power of the GSR signal |
| PSD | Power spectral density estimate of the GSR signal |
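
The level-based rows of the table above (SCL, MAV1Diff SCL, MAV2Diff SCL) can be sketched as follows. This is only one plausible reading: it assumes an evenly sampled GSR window and interprets the two MAV features as the mean absolute value of the first and second discrete differences, which the table does not spell out. The function name `scl_features` and the sample values are hypothetical.

```python
from statistics import mean


def scl_features(gsr):
    """Level-based GSR features for one evenly sampled window.

    Assumption: MAV1Diff / MAV2Diff are taken as the mean absolute value of
    the first / second discrete differences of the signal.
    """
    if len(gsr) < 3:
        raise ValueError("need at least three samples")

    d1 = [b - a for a, b in zip(gsr, gsr[1:])]  # first differences
    d2 = [b - a for a, b in zip(d1, d1[1:])]    # second differences
    return {
        "SCL": mean(gsr),
        "MAV1Diff": mean([abs(d) for d in d1]),
        "MAV2Diff": mean([abs(d) for d in d2]),
    }


# Made-up conductance samples, used only to exercise the function.
gsr_feats = scl_features([2.0, 2.5, 2.25, 3.0])
print(gsr_feats)
```

The response-based rows (RT, HRT, Amp, Area, Prom) depend on detecting individual SCR peaks first and are left out here.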

| Modality | SVM Acc. | SVM F1 Score | DT Acc. | DT F1 Score | RF Acc. | RF F1 Score | KNN Acc. | KNN F1 Score |
|---|---|---|---|---|---|---|---|---|
| ECG | 0.7278 | 0.7398 | 0.6332 | 0.6454 | 0.7236 | 0.7270 | 0.5332 | 0.5234 |
| GSR | 0.7746 | 0.7712 | 0.7362 | 0.7123 | 0.7852 | 0.7665 | 0.7935 | 0.7889 |
| ECG+GSR | 0.7984 | 0.7815 | 0.7804 | 0.7931 | 0.6666 | 0.6804 | 0.8296 | 0.7996 |
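
For readers unfamiliar with the two metrics reported in the table above, the sketch below shows how accuracy and F1 score are computed from predicted and true labels. It assumes binary labels with one class treated as positive; the function name `accuracy_and_f1` and the label vectors are hypothetical and are not data from the study.

```python
def accuracy_and_f1(y_true, y_pred, positive=1):
    """Return (accuracy, F1) for binary labels; illustrative helper only."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("label lists must be non-empty and of equal length")

    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)

    accuracy = sum(1 for t, p in pairs if t == p) / len(pairs)
    # F1 = 2PR / (P + R), which simplifies to 2TP / (2TP + FP + FN).
    denom = 2 * tp + fp + fn
    f1 = 2 * tp / denom if denom else 0.0
    return accuracy, f1


# Made-up labels, used only to exercise the function.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 0, 1, 0, 1, 0, 1, 0]
acc, f1 = accuracy_and_f1(y_true, y_pred)
print(acc, f1)
```

Accuracy counts every correct prediction equally, while F1 balances precision and recall for the positive class, so the two can diverge when the classes are imbalanced.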

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Ross, K.; Sarkar, P.; Rodenburg, D.; Ruberto, A.; Hungler, P.; Szulewski, A.; Howes, D.; Etemad, A. Toward Dynamically Adaptive Simulation: Multimodal Classification of User Expertise Using Wearable Devices. Sensors 2019, 19, 4270. https://doi.org/10.3390/s19194270