Machine-Learning-Based Detection of Craving for Gaming Using Multimodal Physiological Signals: Validation of Test-Retest Reliability for Practical Use

Abstract

1. Introduction

2. Methods

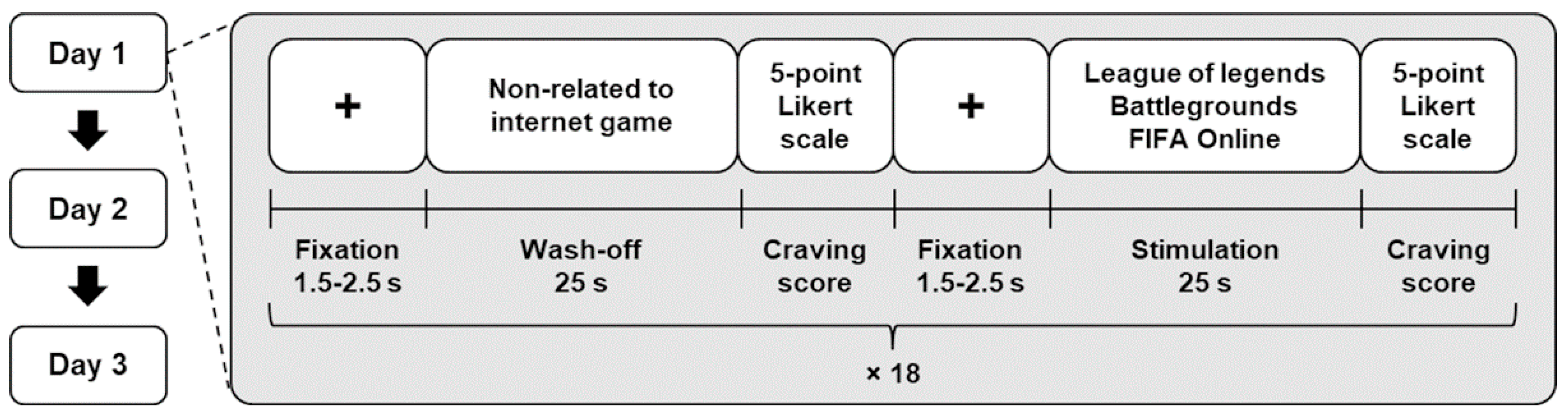

2.1. Experiment Paradigm

2.2. Participants

2.3. Acquisition of Multimodal Biosignals

2.4. Feature Extraction

2.5. Classification of Craving States and Feature Selection

2.6. Statistical Analysis

3. Results

3.1. Self-Reported Craving Score

3.2. Test-Retest Reliability of Features

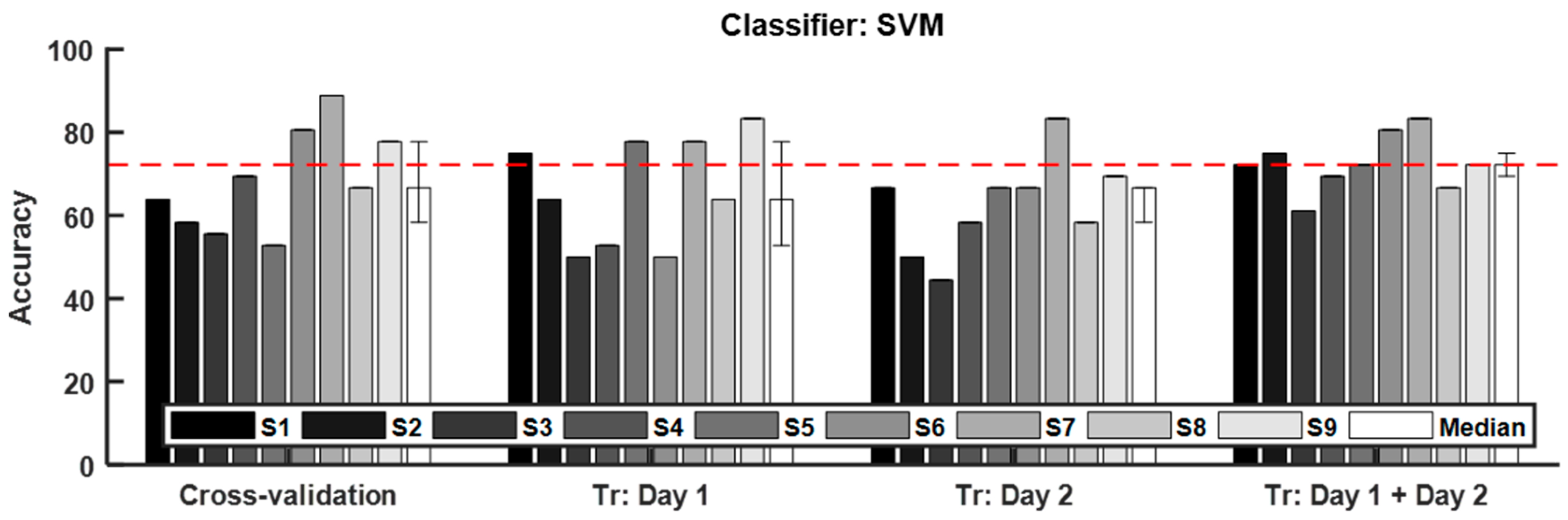

3.3. Classification of Craving State

4. Discussion

5. Conclusions

Author Contributions

Funding

Conflicts of Interest


Self-reported craving score per day (columns Day 1 to Day 3):

| Subj. | Age | Young Scale | Day 1 Wo | Day 1 S | Day 1 Sig. | Day 2 Wo | Day 2 S | Day 2 Sig. | Day 3 Wo | Day 3 S | Day 3 Sig. |
|---|---|---|---|---|---|---|---|---|---|---|---|
| S1 | 19 | 64 | 1 (1) | 4 (1) | ** | 1 (1) | 4.5 (1) | ** | 1 (1) | 4.5 (2) | ** |
| S2 | 20 | 62 | 1 (1) | 4 (2) | ** | 1 (1) | 4 (1) | ** | 1 (0) | 3 (2) | ** |
| S3 | 21 | 55 | 2.5 (1) | 3 (1) | * | 2 (0) | 3 (1) | * | 2 (0) | 3 (1) | ** |
| S4 | 21 | 81 | 2 (1) | 4 (2) | ** | 1 (1) | 4 (1) | ** | 2 (1) | 4.5 (1) | ** |
| S5 | 22 | 66 | 1 (1) | 3 (1) | ** | 1.5 (1) | 4 (1) | ** | 1 (0) | 3 (1) | ** |
| S6 | 23 | 71 | 2 (1) | 3.5 (1) | ** | 2 (0) | 3 (0) | ** | 2 (1) | 3 (0) | ** |
| S7 | 20 | 56 | 2 (1) | 4 (0) | ** | 2 (0) | 4 (0) | ** | 2 (1) | 4 (0) | ** |
| S8 | 21 | 49 | 1 (0) | 4 (3) | ** | 2 (1) | 4 (1) | ** | 2 (1) | 4 (1) | ** |
| S9 | 19 | 53 | 1.5 (1) | 3.5 (1) | ** | 2 (0) | 3 (1) | ** | 2 (1) | 4 (2) | ** |

| Feature Number | Wash-Off Trial ICC | Wash-Off Trial p-Value | Stimulation Trial ICC | Stimulation Trial p-Value |
|---|---|---|---|---|
| 1 | 0.80 | 0.00 | 0.65 | 0.00 |
| 2 | 0.50 | 0.01 | 0.56 | 0.00 |
| 3 | 0.68 | 0.00 | 0.32 | 0.07 |
| 4 | 0.53 | 0.01 | 0.21 | 0.18 |
| 5 | 0.39 | 0.05 | 0.01 | 0.45 |
| 6 | 0.17 | 0.20 | 0.11 | 0.31 |
| 7 | 0.54 | 0.01 | 0.47 | 0.02 |
| 8 | 0.70 | 0.00 | 0.70 | 0.00 |
| 9 | 0.50 | 0.02 | 0.58 | 0.01 |
| 10 | 0.70 | 0.00 | 0.72 | 0.00 |
| 11 | 0.70 | 0.00 | 0.72 | 0.00 |
| 12 | 0.62 | 0.00 | 0.56 | 0.01 |
| 13 | 0.46 | 0.01 | 0.61 | 0.00 |
| 14 | 0.51 | 0.02 | 0.14 | 0.26 |
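
The ICC values in the table above summarize how consistently each feature behaves across recording days. As a rough illustration of how such a coefficient can be computed, the sketch below implements one common variant: the single-measurement, absolute-agreement ICC derived from a two-way ANOVA. This excerpt does not state which ICC form was used, so the choice of variant is an assumption, and the function name `icc_absolute_agreement` and the input layout (one row per subject, one column per session) are illustrative only.

```python
from statistics import mean


def icc_absolute_agreement(scores):
    """Single-measurement, absolute-agreement ICC from a two-way ANOVA.

    scores: list of rows, one row per subject and one column per session
    (for example Day 1, Day 2, Day 3). This ICC variant is an assumption;
    the source excerpt does not specify the form that was used.
    """
    n = len(scores)       # number of subjects
    k = len(scores[0])    # number of sessions

    grand = mean(v for row in scores for v in row)
    row_means = [mean(row) for row in scores]
    col_means = [mean(row[j] for row in scores) for j in range(k)]

    # Sums of squares for subjects (rows), sessions (columns), and residual.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((v - grand) ** 2 for row in scores for v in row)
    ss_error = ss_total - ss_rows - ss_cols

    # Corresponding mean squares.
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )
```

Calling `icc_absolute_agreement([[1, 1, 1], [2, 2, 2], [3, 3, 3]])` returns 1.0, since every subject scores identically in all sessions; day-to-day drift or noise within subjects pulls the value toward zero.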

Median (interquartile range) of classification accuracy (%):

| Classifier | Cross-Validation | Tr: Day 1 | Tr: Day 2 | Tr: Day 1 + Day 2 |
|---|---|---|---|---|
| SVM | 66.67 (20.83) | 63.89 (25.69) | 66.67 (11.11) | 72.22 (7.64) |
| LDA | 75.00 (17.36) | 63.89 (31.25) | 61.11 (23.61) | 72.22 (15.97) |
| kNN | 69.44 (20.14) | 58.33 (19.44) | 69.44 (20.83) | 69.44 (13.89) |
| CDNN | 75.00 (17.36) | 63.89 (31.25) | 61.11 (23.61) | 72.22 (15.97) |
| Random forest | 66.67 (14.58) | 55.56 (17.36) | 58.33 (11.81) | 72.22 (12.50) |
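
Each cell in the accuracy table is a median with an interquartile range in parentheses. A minimal sketch of that summary is given below, assuming one accuracy value per participant and the "inclusive" quartile method of Python's `statistics.quantiles`; the quartile convention, the helper name `median_iqr`, and the sample values are assumptions for illustration and are not taken from the paper.

```python
from statistics import median, quantiles


def median_iqr(values):
    """Return (median, interquartile range) of a list of accuracies.

    The 'inclusive' quartile method is an assumed convention; other
    conventions can give slightly different IQR values on small samples.
    """
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    return median(values), q3 - q1


# Hypothetical per-participant accuracies (%), not data from the study.
example_accuracies = [55.0, 60.0, 65.0, 70.0, 75.0]
med, iqr = median_iqr(example_accuracies)
print(f"{med:.2f} ({iqr:.2f})")
```

Reporting the median with the interquartile range, rather than the mean with a standard deviation, keeps a single unusually high or low participant from dominating the summary when the sample is small.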

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Kim, H.; Kim, L.; Im, C.-H. Machine-Learning-Based Detection of Craving for Gaming Using Multimodal Physiological Signals: Validation of Test-Retest Reliability for Practical Use. Sensors 2019, 19, 3475. https://doi.org/10.3390/s19163475