A Micro-Level Compensation-Based Cost Model for Resource Allocation in a Fog Environment

Abstract

1. Introduction

- A micro-level cost model that takes into account user participation in fog computing environments.

- A novel resource-allocation algorithm based on stable matching (FSMRA) that benefits both users and providers in the fog environment.

2. Related Work

- IoT Amazon [16]: For IoT, Amazon calculates the cost based on the rules triggered and the actions executed. Pricing also depends on the number of messages, message size, and connectivity. Amazon charges $0.25 for 5 KB of data and $1.20 for a maximum of one billion messages, with each message not exceeding 128 KB. Connectivity costs $0.132 per one million minutes.

- IBM Watson [17]: The pricing model for IBM Watson depends on the number of devices, the number of messages, message size, and the percentage of data analytics.

- AWS Greengrass [18]: The pricing model of AWS Greengrass is based on the number of devices and cores. Each device costs $0.22 per month.

- Microsoft Azure IoT Hub [19]: The pricing of Azure IoT Hub depends on the number of messages per day (from 400,000 to 6 million messages per day, each of size 4 KB, for $63).

- Microsoft Azure Event Hub [20]: The pricing of Azure Event Hub depends on the triggered events ($0.036 per million events), connectivity, and throughput.

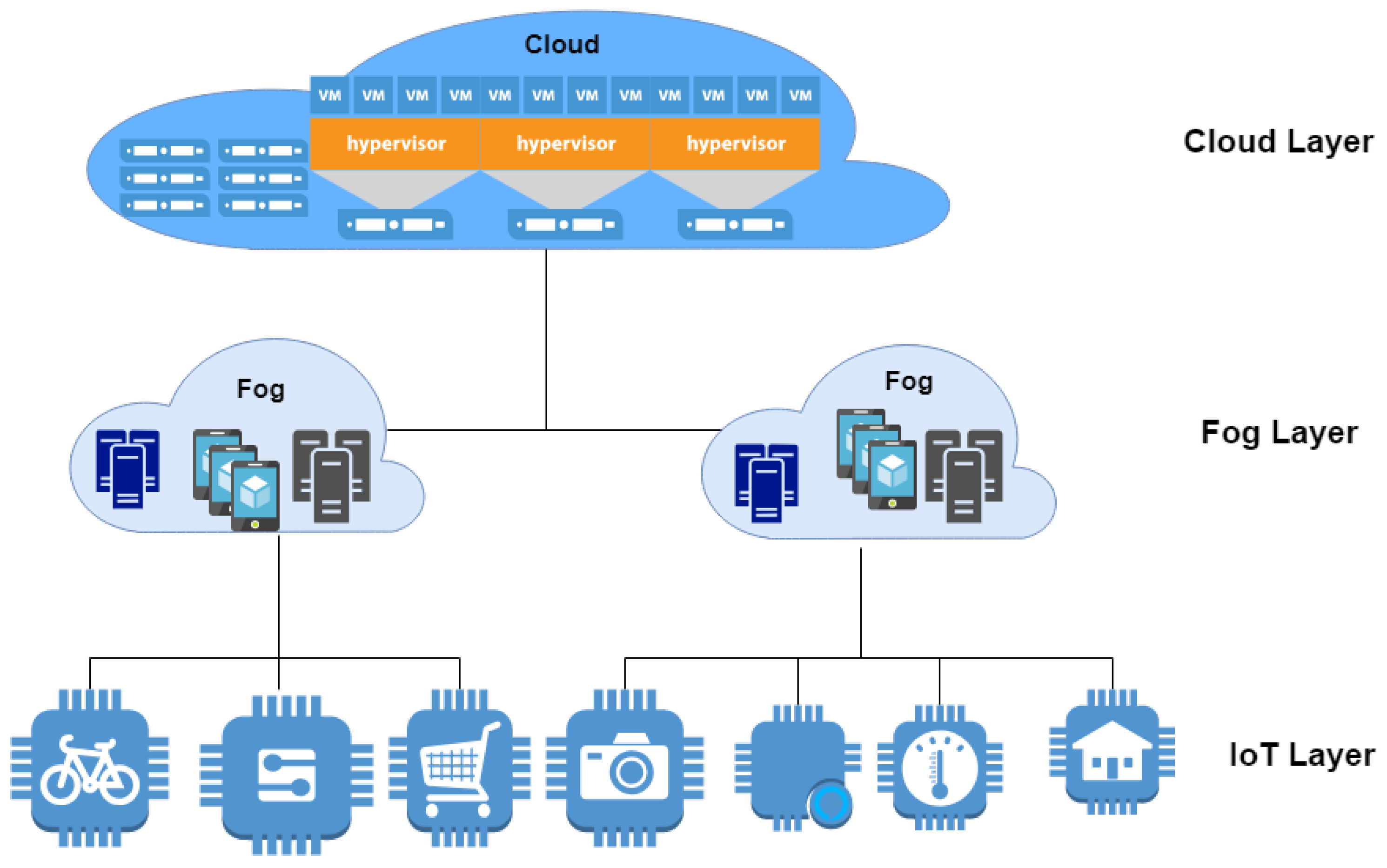

3. Problem Statement and Proposed Solution

- When a user request is received, how can we decide whether the available fog devices can meet the user's requirements, and accept or reject the request?

- After accepting a user request, how can we allocate the resources or fog devices that match the request's requirements while optimizing the cost of the application without affecting the benefits of providers?

4. Fog Computing Cost Model

- Communication cost: The communication cost depends on the total number of received messages, the size of each message and the message unit cost, as shown in Equation (2). Its inputs are the total number of messages received by device i (M), the size of the jth message in bytes, the minimum message size x in bytes defined by the provider, and the cost of each message.

- Processing cost: The processing cost can be calculated in two ways, depending on the user's requirements. The user can either request that the application be processed, or specifically request the containers and virtual machines required to process it.

  In the first case, the processing cost of the application depends on the total number of actions triggered and performed, as formulated in Equation (3). Its inputs are the total number of actions triggered (NAT), the quality of service factor (QoSF), the trigger processing cost (TPC), the total number of actions performed (NAP), the number of dependent tasks (NDT) and the action processing cost (APC); the result is the total processing cost for device i.

  The QoS factor depends on the number of actions received, triggered, and successfully executed in terms of meeting the user requirements at a particular location and time. Fog devices behave differently at different times and places because connectivity and available energy vary. Hence, the QoS factor of a fog device is calculated from its previous history at a particular time and location, as the ratio of the number of successfully completed requests to the total number of requests triggered. Equation (4) computes this factor from NAT, the total number of requests received (NRR) and NAP.

  If the user requests containers and virtual machines instead of action-based processing, the processing cost of the resource is calculated by Equation (5) from the number of vCPUs and the CPU usage in hours. The number of vCPUs can vary from 0.25 to 72.

- Cloud-network cost: These costs are imposed if coordination with the cloud is necessary. Network costs are calculated by Equation (6) from the cloud integration pricing (CIC) and the cloud integration time (CIT). Cloud integration time is the time a fog device takes to send data to the cloud and to receive the data back after the required computation. It depends on the bandwidth of the network connection to the cloud and also includes the delay in the process.

- Migration cost: This cost is paid by the fog contributor (the party contributing fog services) if migration takes place because of the fog contributor. However, the participating user pays the migration cost as a penalty if migration takes place because of the user. Migration cost is calculated using Equation (7) from the total execution time of the migration tasks and the cost per processing unit.

- Storage cost: This cost depends on the volume of data to be stored, the time for which that data is stored, and the encryption cost, as shown in Equation (8). Its inputs are the total storage size (TSS), the encryption cost per byte, the storage time in minutes and the storage cost per MB.

- Power cost: This cost depends on the total number of sensors connected during application processing. The battery cost is calculated using Equation (9) from the total number of fog devices (ND), the total number of sensors connected to each fog device (NSC), the idle time (IT), that is, the delay between sensing and sending in seconds, and the battery cost.

- Software cost: This is the commercial product cost, calculated by Equation (10) from the commercial product cost per license, the number of months subscribed n, and the number of commercial products z.

- Sensor cost: This is calculated by multiplying the number of requests served by the sensor cost per request, as shown in Equation (11). Its inputs are the total number of requests served by each sensor (TRS), the total number of sensors and the sensor cost per request.

- Operational cost: This depends on the sensor, fog device, and network operational costs, as formulated in Equation (12). Its inputs are the sensor operational cost per request, the fog device operational cost per request, and the network operational cost.
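The component formulas above are summarized only by their inputs. The following Python sketch shows one plausible reading of several of them, assuming each component is a simple product or sum of the listed quantities. The helper names (`communication_cost`, `qos_factor`, and so on) are hypothetical, and the exact forms of Equations (2) to (11) in the paper may differ. In particular, rounding each message up to whole blocks of x bytes is an assumption about how the minimum message size x is used. The QoS factor is taken as performed actions over triggered actions, following the ratio described in the text. The role of NRR in Equation (4) is not modelled.

```python
import math

# Hypothetical helpers: each is ONE plausible reading of the prose
# descriptions of Equations (2)-(11), not the paper's exact formulas.


def communication_cost(message_sizes, x, cost_per_message):
    """Assumption: each message is billed in blocks of x bytes, rounded up."""
    billable_units = sum(math.ceil(size / x) for size in message_sizes)
    return billable_units * cost_per_message


def qos_factor(actions_performed, actions_triggered):
    """Share of triggered actions that completed successfully."""
    if actions_triggered <= 0:
        raise ValueError("QoS factor needs at least one triggered action")
    return actions_performed / actions_triggered


def cloud_network_cost(cic, cit):
    """Assumption: cloud integration price per unit time times integration time."""
    return cic * cit


def power_cost(sensors_per_device, idle_time_s, battery_cost):
    """Assumption: sum over fog devices of NSC_i * IT * battery cost."""
    return sum(nsc * idle_time_s * battery_cost for nsc in sensors_per_device)


def software_cost(cost_per_license, months):
    """One license cost per commercial product (z = len(cost_per_license))."""
    return sum(cost * months for cost in cost_per_license)


def sensor_cost(requests_served, cost_per_request):
    """Requests served by each sensor (TRS) times the per-request sensor cost."""
    return sum(trs * cost_per_request for trs in requests_served)


if __name__ == "__main__":
    components = {
        # sizes 4, 7, 10 bytes with x = 5 -> 1 + 2 + 2 = 5 billable units
        "communication": communication_cost([4, 7, 10], x=5, cost_per_message=0.5),
        "cloud_network": cloud_network_cost(cic=0.25, cit=8),
        "power": power_cost([3, 2], idle_time_s=2, battery_cost=0.25),
        "software": software_cost([1.5, 0.5], months=2),
        "sensor": sensor_cost([10, 6], cost_per_request=0.25),
    }
    for name, value in components.items():
        print(f"{name}: {value}")
    # Assumption: the overall cost is the plain sum of the components.
    print("total:", sum(components.values()))
    print("QoSF:", qos_factor(actions_performed=90, actions_triggered=100))
```

With these illustrative inputs, the components sum to a total of 15.0. Treating the overall cost as an additive total is itself an assumption; the text only lists which components exist.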

4.1. Adjustment Factors

- Peak-time cost: Each device k is assumed to have its own demand, which arises at different times; the total demand (ToD) for device k at time t drives this adjustment. The peak-time cost is charged during periods of high user demand, or when the fog devices have already met their promised availability but the system still requests the provider to supply extra time. The peak-time formula also involves the demand unit (DU), which represents the rate of extra charges due to high demand. The demand-unit formula involves TRA, the total number of available resources with device configuration k, and uses a logarithmic function to keep provider and user participation consistent in all cases.

- Scaling cost: If a user request requires scaling, the user pays a scaling cost for the on-demand resources. Resources can be scaled in two ways: vertically or horizontally. In vertical scaling, resources are added to the same device by extending the virtual machine or container size. The vertical scaling cost is calculated from the number of device processors that need to be scaled, the number of actions performed and the cost per action performed; the total cost with scaling is then calculated using Equation (17). In horizontal scaling, the cost is calculated from the number of devices that need to be scaled and the cost of on-demand resources; again, the total cost with scaling is calculated using Equation (17).
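The scaling rules can be sketched in the same hedged way. The Python below makes three assumptions. The vertical-scaling cost is the product of the processors to scale, the actions performed and the cost per action. The horizontal-scaling cost is the number of devices to scale times the on-demand cost. Equation (17) adds the scaling cost to the overall cost. All function names are hypothetical. The peak-time cost is left out because its logarithmic demand-unit formula is not spelled out above.

```python
# Hypothetical sketch of the scaling adjustment; the product forms and the
# additive total are assumptions, not the paper's exact equations.


def vertical_scaling_cost(processors_to_scale, actions_performed, cost_per_action):
    """Grow an existing VM/container: processors x actions x cost per action."""
    return processors_to_scale * actions_performed * cost_per_action


def horizontal_scaling_cost(devices_to_scale, on_demand_cost):
    """Add devices: number of extra devices x on-demand cost per device."""
    return devices_to_scale * on_demand_cost


def total_cost_with_scaling(overall_cost, scaling_cost):
    """Assumed reading of Equation (17): overall cost plus scaling cost."""
    return overall_cost + scaling_cost


if __name__ == "__main__":
    base = 15.0  # an overall cost computed beforehand (illustrative value)
    vertical = vertical_scaling_cost(processors_to_scale=2, actions_performed=50, cost_per_action=0.25)
    horizontal = horizontal_scaling_cost(devices_to_scale=3, on_demand_cost=1.5)
    print("vertical:", total_cost_with_scaling(base, vertical))      # 15.0 + 25.0
    print("horizontal:", total_cost_with_scaling(base, horizontal))  # 15.0 + 4.5
```

Under these assumptions, a user could compare the two totals to see which scaling mode is cheaper for a given request.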

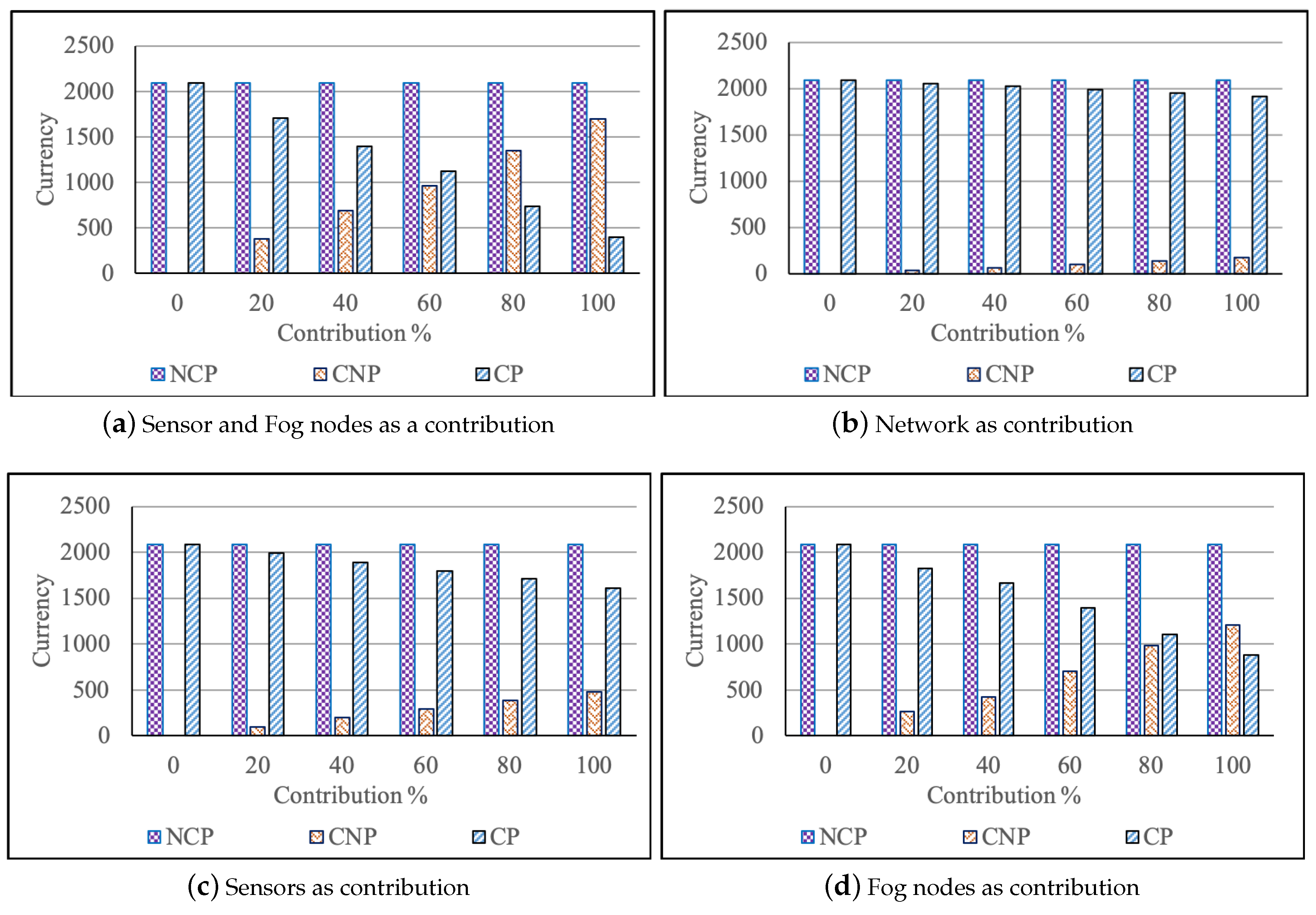

4.2. Different Example Scenarios

4.3. Case Study Example

5. Proposed Resource Allocation for Fog Computing

5.1. Fog Stable Matching Resource Allocation (FSMRA) Algorithm

5.2. Resource Allocation in Fog

Algorithm 1: Resource-allocation algorithm

6. Evaluation

6.1. Cost Model Evaluation Results

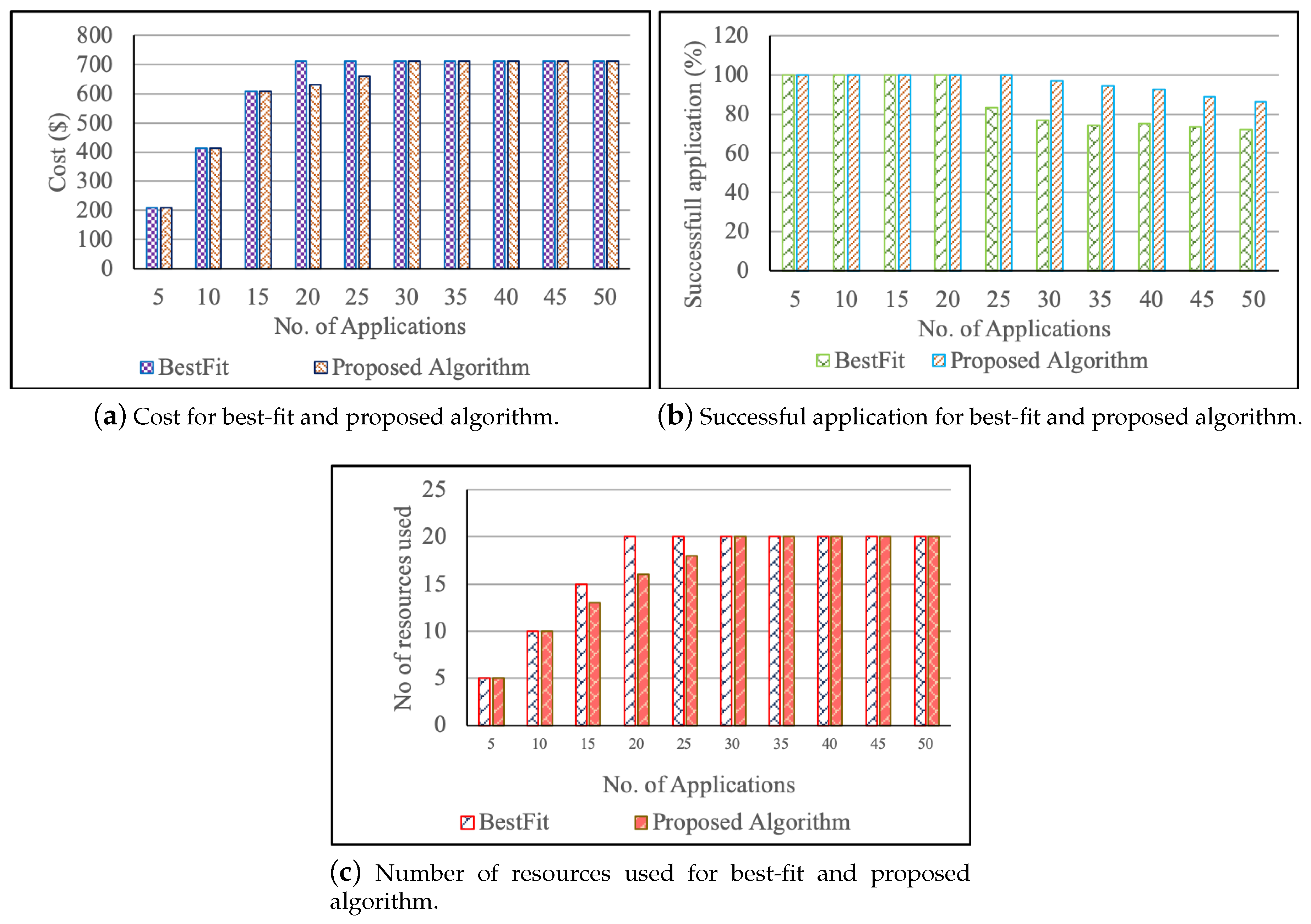

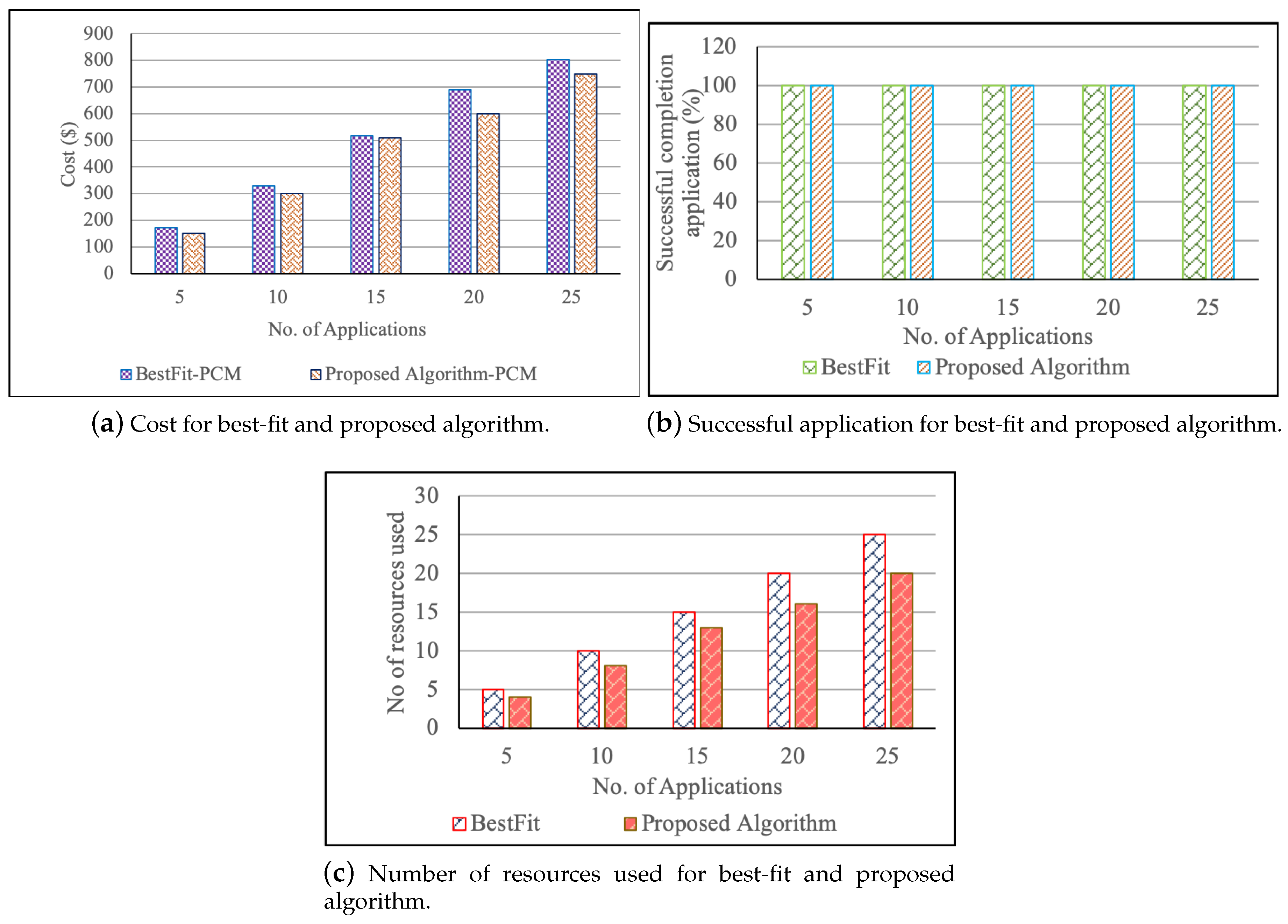

6.2. Experimental Evaluation of Proposed Algorithm

6.2.1. Experimental Setup

6.2.2. Results and Findings

7. Conclusions and Future Work

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Symbol | Description | Symbol | Description |
|---|---|---|---|
| ND | Total number of devices | TSS | Total storage size |
| | Storage time cost | NDT | Number of dependent tasks |
| NAT | Total number of actions triggered | M | Total number of messages received |
| NRR | Total number of requests received | NSC | Total number of sensors connected |
| NAP | Total number of actions performed | IT | Idle time |
| | Messaging cost | | Battery cost |
| | Encryption cost | | Commercial product cost |
| QoSF | Quality of service factor | TRS | Total requests served |
| TPC | Trigger processing cost | | Sensor cost per request |
| APC | Action processing cost | DU | Demand unit |
| CIC | Cloud integration cost | ToD | Total demand |
| CIT | Cloud integration time | TRA | Total resource availability |
| | Network cost | | Sensor operational cost |
| Cost | Overall cost | | Network operational cost |
| MPC | Migration processing cost | | Total cost with scaling |
| | Fog device operational cost | NM | Total number of migrated tasks |
| | Number of devices that need to be scaled | | Storage cost |
| | Total network cost | | Power cost |
| | Software cost | | Sensor cost |
| | Operational cost | | Fog device cost |
| | Sensor cost | | User receiving |
| | Provider receiving | | Cost per action triggered |
| | Migration cost | | Number of actions performed |
| | Processing cost | | Cost of scaling |
| | Storage time | | On-demand cost |

References

1. Intel. Intel® Xeon® D-2100 Processor Product Brief. Available online: https://www.intel.com.au/content/dam/www/public/us/en/documents/product-briefs/xeon-d-2100-product-brief.pdf (accessed on 12 February 2018).

2. Eddy, N. Gartner: 21 Billion IoT devices to invade by 2020. InformationWeek, 10 November 2015.

3. Naha, R.K.; Garg, S.; Georgakopoulos, D.; Jayaraman, P.P.; Gao, L.; Xiang, Y.; Ranjan, R. Fog Computing: survey of trends, architectures, requirements, and research directions. IEEE Access 2018, 6, 47980–48009.

4. Ujjwal, K.; Garg, S.; Hilton, J.; Aryal, J.; Forbes-Smith, N. Cloud Computing in natural hazard modeling systems: Current research trends and future directions. Int. J. Disaster Risk Reduct. 2019, 38, 101188.

5. Kleinman, Z. Cancer researchers need phones to process data. BBC News, 1 May 2018.

6. Mahmud, R.; Kotagiri, R.; Buyya, R. Fog computing: A taxonomy, survey and future directions. In Internet of Everything; Springer: Berlin/Heidelberg, Germany, 2018; pp. 103–130.

7. Zhang, H.; Xiao, Y.; Bu, S.; Niyato, D.; Yu, F.R.; Han, Z. Computing resource allocation in three-tier IoT fog networks: A joint optimization approach combining Stackelberg game and matching. IEEE Internet Things J. 2017, 4, 1204–1215.

8. Du, J.; Zhao, L.; Feng, J.; Chu, X. Computation offloading and resource allocation in mixed fog/cloud computing systems with min-max fairness guarantee. IEEE Trans. Commun. 2018, 66, 1594–1608.

9. Chiang, M.; Zhang, T. Fog and IoT: An overview of research opportunities. IEEE Internet Things J. 2016, 3, 854–864.

10. Gale, D.; Shapley, L.S. College admissions and the stability of marriage. Am. Math. Mon. 1962, 69, 9–15.

11. Ni, L.; Zhang, J.; Jiang, C.; Yan, C.; Yu, K. Resource allocation strategy in fog computing based on priced timed petri nets. IEEE Internet Things J. 2017, 4, 1216–1228.

12. Bellavista, P.; Zanni, A. Feasibility of fog computing deployment based on docker containerization over raspberrypi. In Proceedings of the 18th International Conference on Distributed Computing and Networking, Hyderabad, India, 5–7 January 2017; p. 16.

13. Do, C.T.; Tran, N.H.; Pham, C.; Alam, M.G.R.; Son, J.H.; Hong, C.S. A proximal algorithm for joint resource allocation and minimizing carbon footprint in geo-distributed fog computing. In Proceedings of the 2015 International Conference on Information Networking (ICOIN), Siem Reap, Cambodia, 12–14 January 2015; pp. 324–329.

14. Liang, K.; Zhao, L.; Zhao, X.; Wang, Y.; Ou, S. Joint resource allocation and coordinated computation offloading for fog radio access networks. China Commun. 2016, 13, 131–139.

15. Xu, X.; Fu, S.; Cai, Q.; Tian, W.; Liu, W.; Dou, W.; Sun, X.; Liu, A.X. Dynamic resource allocation for load balancing in fog environment. Wirel. Commun. Mob. Comput. 2018, 2018, 6421607.

16. AWS IoT Core Pricing—Amazon Web Services. Available online: https://aws.amazon.com/iot-core/pricing/ (accessed on 16 July 2018).

17. IBM Watson Internet of Things (IoT). Available online: https://www.ibm.com/internet-of-things/spotlight/watson-iot-platform/pricing (accessed on 19 July 2018).

18. AWS IoT Greengrass. Available online: https://aws.amazon.com/greengrass/pricing/ (accessed on 17 July 2018).

19. Azure IoT Hub Pricing. Available online: https://azure.microsoft.com/en-us/pricing/details/iot-hub/ (accessed on 20 July 2018).

20. Event Hubs Pricing. Available online: https://azure.microsoft.com/en-us/pricing/details/event-hubs (accessed on 21 July 2018).

21. Rogers, O.; Cliff, D. A financial brokerage model for cloud computing. J. Cloud Comput. Adv. Syst. Appl. 2012, 1, 2.

22. Erdil, D.C. Autonomic cloud resource sharing for intercloud federations. Future Gener. Comput. Syst. 2013, 29, 1700–1708.

23. Kliks, A.; Holland, O.; Basaure, A.; Matinmikko, M. Spectrum and license flexibility for 5G networks. IEEE Commun. Mag. 2015, 53, 42–49.

24. Mei, L.; Li, W.; Nie, K. Pricing decision analysis for information services of the Internet of things based on Stackelberg game. In LISS 2012; Springer: Berlin/Heidelberg, Germany, 2013; pp. 1097–1104.

25. Park, K.W.; Han, J.; Chung, J.; Park, K.H. THEMIS: A Mutually verifiable billing system for the cloud computing environment. IEEE Trans. Serv. Comput. 2013, 6, 300–313.

26. Sharma, B.; Thulasiram, R.K.; Thulasiraman, P.; Garg, S.K.; Buyya, R. Pricing cloud compute commodities: A novel financial economic model. In Proceedings of the 2012 12th IEEE/ACM International Symposium on Cluster, Cloud and Grid Computing (CCGrid 2012), Ottawa, ON, Canada, 13–16 May 2012; IEEE Computer Society: Washington, DC, USA, 2012; pp. 451–457.

27. Lee, J.S.; Hoh, B. Sell your experiences: A market mechanism based incentive for participatory sensing. In Proceedings of the 2010 IEEE International Conference on Pervasive Computing and Communications (PerCom), Mannheim, Germany, 29 March–2 April 2010; pp. 60–68.

28. Mihailescu, M.; Teo, Y.M. Dynamic resource pricing on federated clouds. In Proceedings of the 2010 10th IEEE/ACM International Conference on Cluster, Cloud and Grid Computing (CCGrid), Melbourne, Australia, 17–20 May 2010; pp. 513–517.

29. Zhu, C.; Li, X.; Leung, V.C.; Yang, L.T.; Ngai, E.C.H.; Shu, L. Towards pricing for sensor-cloud. IEEE Trans. Cloud Comput. 2017.

30. OpenFog Consortium. OpenFog Reference Architecture for Fog Computing. Available online: https://www.openfogconsortium.org/wp-content/uploads/OpenFog_Reference_Architecture_2_09_17-FINAL.pdf (accessed on 16 July 2018).

31. Fogonomics: Pricing and Incentivizing Fog Computing. Available online: https://www.openfogconsortium.org/fogonomics-pricing-and-incentivizing-fog-computing/ (accessed on 14 July 2018).

32. Yousefpour, A.; Fung, C.; Nguyen, T.; Kadiyala, K.; Jalali, F.; Niakanlahiji, A.; Kong, J.; Jue, J.P. All one needs to know about fog computing and related edge computing paradigms: A complete survey. J. Syst. Archit. 2019.

33. Naha, R.K.; Garg, S.; Chan, A. Fog Computing Architecture: Survey and Challenges. arXiv 2018, arXiv:1811.09047.

34. Intharawijitr, K.; Iida, K.; Koga, H. Analysis of fog model considering computing and communication latency in 5G cellular networks. In Proceedings of the 2016 IEEE International Conference on Pervasive Computing and Communication Workshops (PerCom Workshops), Sydney, NSW, Australia, 14–18 March 2016; pp. 1–4.

35. Calheiros, R.N.; Ranjan, R.; Beloglazov, A.; De Rose, C.A.; Buyya, R. CloudSim: A toolkit for modeling and simulation of cloud computing environments and evaluation of resource provisioning algorithms. Softw. Pract. Exp. 2011, 41, 23–50.

36. Abedin, S.F.; Alam, M.G.R.; Kazmi, S.A.; Tran, N.H.; Niyato, D.; Hong, C.S. Resource allocation for ultra-reliable and enhanced mobile broadband IoT applications in fog network. IEEE Trans. Commun. 2019, 67, 489–502.

| Case | User’s Contribution | User Request | Cost |
|---|---|---|---|
| 1 | Fog devices, Sensors | N | |
| 2 | Fog devices, Sensors | N | |
| 3 | Network | N | |
| 4 | Network | Y | |
| 5 | Sensors | N | |
| 6 | Sensors | Y | |
| 7 | Fog devices | N | |
| 8 | Fog devices | Y | |
| 9 | None | N | |

| Resource ID | Processor (GHz) | Network (Kbps) | RAM (GB) | Cost |
|---|---|---|---|---|
| AR1 | 2.3 | 300 | 2 | 0.008 |
| AR2 | 1.6 | 300 | 2 | 0.006 |
| AR3 | 1.5 | 1000 | 1 | 0.004 |
| AR4 | 1.2 | 150 | 1 | 0.002 |
| AR5 | 1.0 | 300 | 1 | 0.001 |
| AR6 | 0.9 | 1000 | 2 | 0.007 |

| User Request No | PR1 | PR2 | PR3 | PR4 | PR5 | PR6 |
|---|---|---|---|---|---|---|
| UR1 | AR6 | AR2 | AR1 | AR3 | AR4 | AR5 |
| UR2 | AR1 | AR2 | AR3 | AR4 | AR5 | AR6 |
| UR3 | AR2 | AR1 | AR3 | AR4 | AR5 | AR6 |
| UR4 | AR3 | AR2 | AR1 | AR4 | AR5 | AR6 |
| UR5 | AR4 | AR3 | AR2 | AR1 | AR5 | AR6 |
| UR6 | AR5 | AR4 | AR3 | AR2 | AR1 | AR6 |

| User Request No | Proposed Algorithm: System | Proposed Algorithm: Status | Best-Fit: System | Best-Fit: Status |
|---|---|---|---|---|
| UR1 | AR6 | S | AR1 | S |
| UR2 | AR1 | S | AR2 | NS |
| UR3 | AR2 | S | AR3 | NS |
| UR4 | AR3 | S | AR4 | NS |
| UR5 | AR4 | S | AR5 | NS |
| UR6 | AR5 | S | AR6 | NS |
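FSMRA is based on stable matching [10], but the steps of Algorithm 1 are not listed here. As a point of reference, the Python sketch below runs the classical request-proposing deferred-acceptance procedure of Gale and Shapley [10] on the request preference lists (PR1 to PR6) above. It is not the authors' FSMRA. The resource-side preferences are not given, so the sketch assumes, hypothetically, that every resource ranks requests in the order UR1 to UR6. Every request's first choice is a different resource, so the outcome does not depend on that assumption. It coincides with the "Proposed Algorithm" column of the results table.

```python
from collections import deque


def deferred_acceptance(request_prefs, resource_prefs):
    """Request-proposing Gale-Shapley matching.

    request_prefs: request -> list of resources, most preferred first.
    resource_prefs: resource -> list of requests, most preferred first.
    Returns a dict mapping each matched request to its resource.
    """
    # rank[r][u] is the position of request u in resource r's list (lower = better)
    rank = {r: {u: i for i, u in enumerate(prefs)} for r, prefs in resource_prefs.items()}
    next_choice = {u: 0 for u in request_prefs}
    holder = {}  # resource -> request it tentatively holds
    free = deque(request_prefs)
    while free:
        u = free.popleft()
        if next_choice[u] >= len(request_prefs[u]):
            continue  # list exhausted: request stays unmatched (rejected)
        r = request_prefs[u][next_choice[u]]
        next_choice[u] += 1
        current = holder.get(r)
        if current is None:
            holder[r] = u  # resource was free: tentatively accept
        elif rank[r][u] < rank[r][current]:
            holder[r] = u  # resource prefers the new request
            free.append(current)
        else:
            free.append(u)  # rejected; u will try its next choice
    return {u: r for r, u in holder.items()}


# Request preference lists copied from the preference table above.
REQUEST_PREFS = {
    "UR1": ["AR6", "AR2", "AR1", "AR3", "AR4", "AR5"],
    "UR2": ["AR1", "AR2", "AR3", "AR4", "AR5", "AR6"],
    "UR3": ["AR2", "AR1", "AR3", "AR4", "AR5", "AR6"],
    "UR4": ["AR3", "AR2", "AR1", "AR4", "AR5", "AR6"],
    "UR5": ["AR4", "AR3", "AR2", "AR1", "AR5", "AR6"],
    "UR6": ["AR5", "AR4", "AR3", "AR2", "AR1", "AR6"],
}
# Hypothetical: every resource ranks requests UR1 > UR2 > ... > UR6.
RESOURCE_PREFS = {f"AR{k}": list(REQUEST_PREFS) for k in range(1, 7)}

if __name__ == "__main__":
    matching = deferred_acceptance(REQUEST_PREFS, RESOURCE_PREFS)
    for request in sorted(matching):
        print(request, "->", matching[request])
```

Sometimes two requests share a first choice. In that case, the resource keeps the request it ranks higher and the other request moves down its own list. This mechanism is what keeps the final matching stable.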

| Parameter | Description | Value | Type |
|---|---|---|---|
| N | Number of source nodes | 500 | Temperature and proximity |
| M | Number of fog nodes | 75–100 | Medium, small and tiny |
| R | Ratio of fog nodes | 5–10%, 30–35%, remaining % | Medium, small and tiny |
| lh | Hop delay | 10 [ms/hop] | |
| C | Maximum capacity of one fog node | 5, 10, 15 [actions executed] | Tiny, small, medium |
| | Producing work rate | 10–100 [actions executed/s] | Temperature and proximity |
| QoSF | QoS factor | 90–100% | Fog nodes |
| NW | Network speed | 300–1000 Kbps | Fog nodes |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Battula, S.K.; Garg, S.; Naha, R.K.; Thulasiraman, P.; Thulasiram, R. A Micro-Level Compensation-Based Cost Model for Resource Allocation in a Fog Environment. Sensors 2019, 19, 2954. https://doi.org/10.3390/s19132954
