Towards the Recognition of the Emotions of People with Visual Disabilities through Brain–Computer Interfaces

Abstract

1. Introduction

- Why is it important to study the emotions of a person with a visual disability?

- Can artificial intelligence, through affective computing, obtain information that represents the emotions of a person with a visual disability?

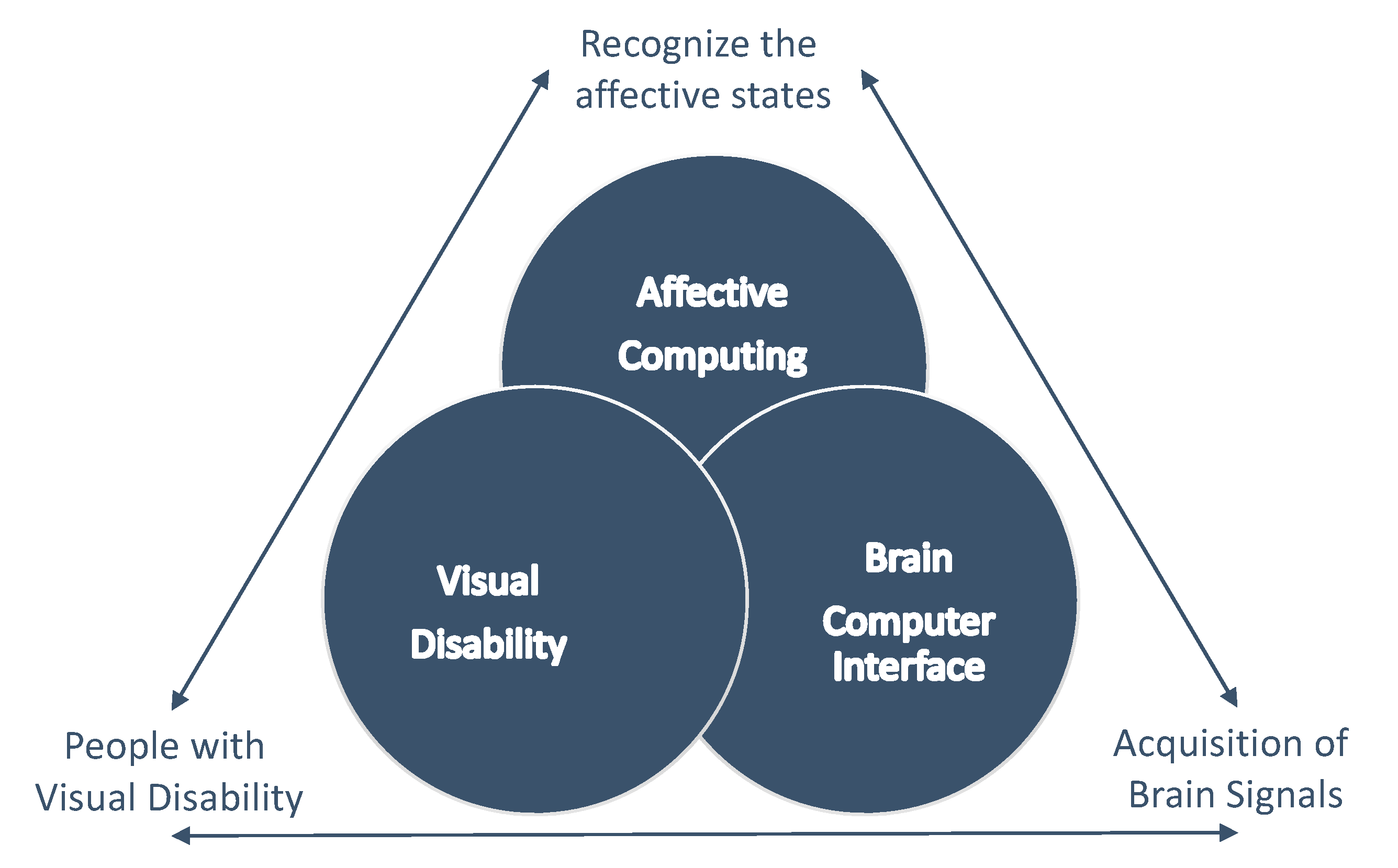

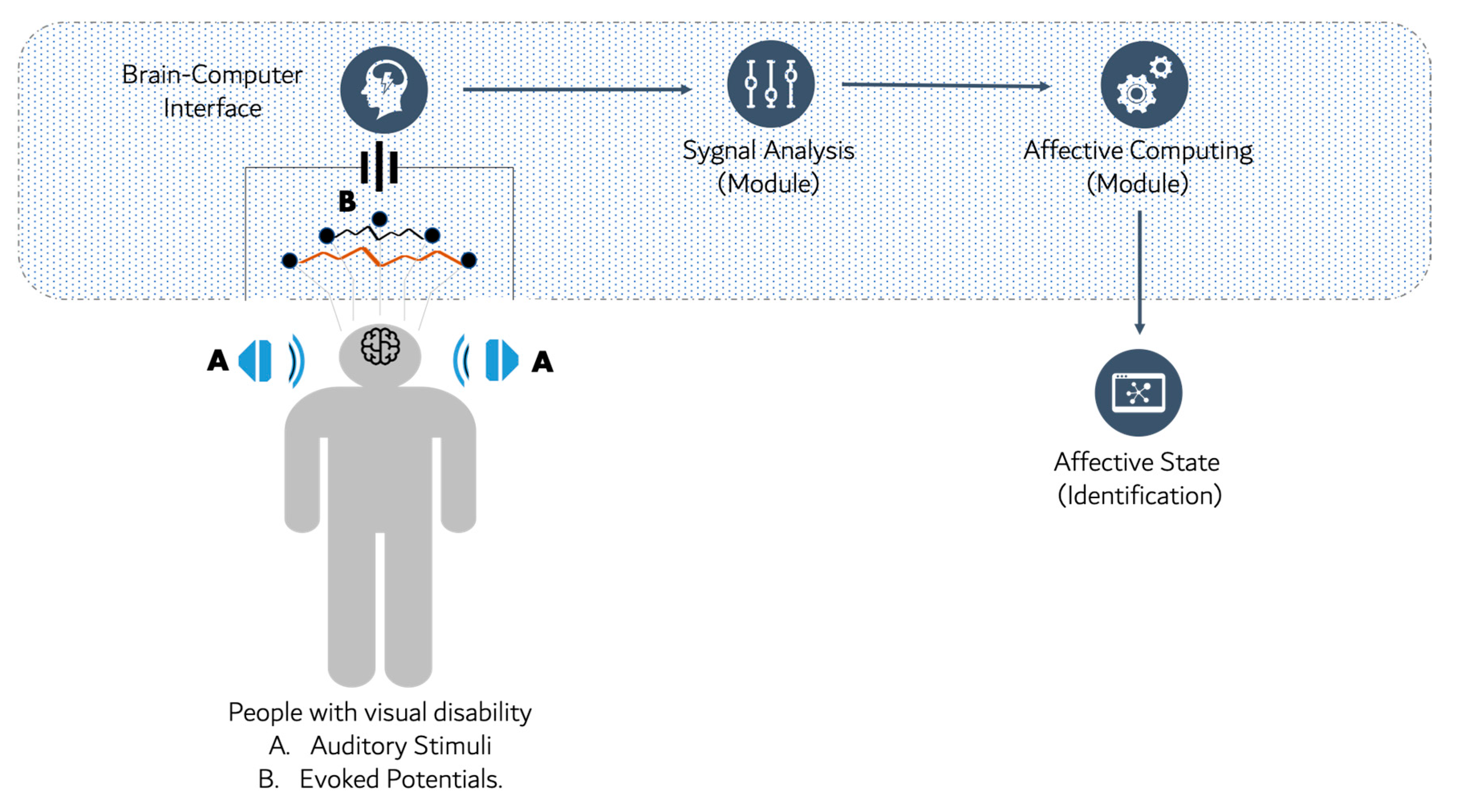

2. Perspective

2.1. Brain–Computer Interface

2.2. Affective Computing

2.3. Visual Disability

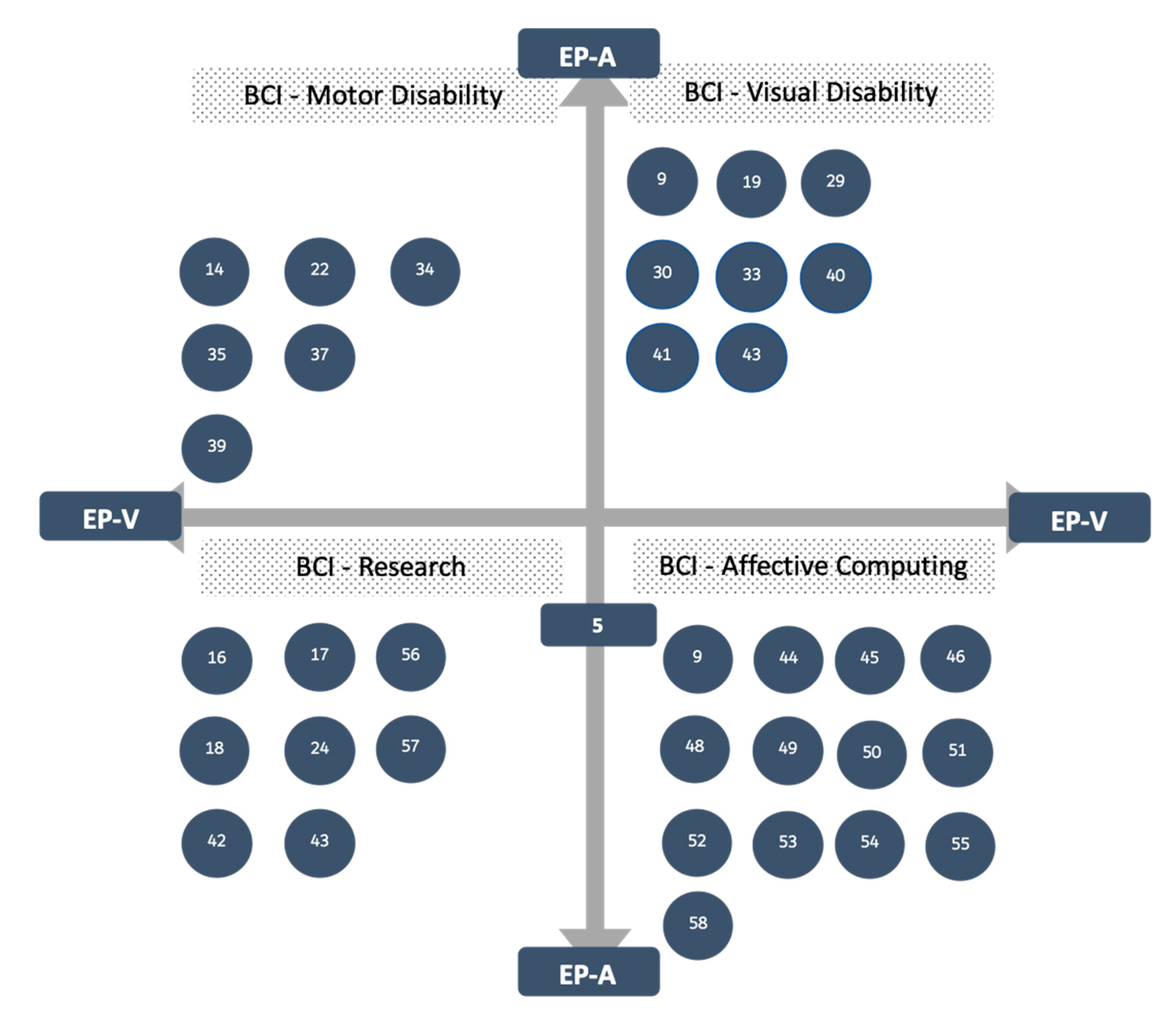

3. Related Work

3.1. BCI for People with a Visual Disability

3.2. BCI for People with Disabilities

3.3. BCI for Detection of Emotions

4. Results

5. Discussion

6. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Gao, S.; Wang, Y.; Gao, X.; Hong, B. Visual and auditory brain-computer interfaces. IEEE Trans. Biomed. Eng. 2014, 61, 1436–1447.

- Bashashati, A.; Fatourechi, M.; Ward, R.K.; Birch, G.E. A survey of signal processing algorithms in brain-computer interfaces based on electrical brain signals. J. Neural Eng. 2007, 4.

- Domingo, M.C. An overview of the Internet of Things for people with disabilities. J. Netw. Comput. Appl. 2012, 35, 584–596.

- Millán, J.D.R.; Rupp, R.; Müller-Putz, G.R.; Murray-Smith, R.; Giugliemma, C.; Tangermann, M.; Vidaurre, C.; Cincotti, F.; Kübler, A.; Leeb, R.; et al. Combining brain-computer interfaces and assistive technologies: State-of-the-art and challenges. Front. Neurosci. 2010, 4, 1–15.

- Deng, J.; Yao, J.; Dewald, J.P.A. Classification of the intention to generate a shoulder versus elbow torque by means of a time-frequency synthesized spatial patterns BCI algorithm. J. Neural Eng. 2005, 2, 131–138.

- Riccio, A.; Mattia, D.; Simione, L.; Olivetti, M.; Cincotti, F. Eye-gaze independent EEG-based brain-computer interfaces for communication. J. Neural Eng. 2012, 9.

- Brereton, P.; Kitchenham, B.A.; Budgen, D.; Turner, M.; Khalil, M. Lessons from applying the systematic literature review process within the software engineering domain. J. Syst. Softw. 2007, 80, 571–583.

- Kitchenham, B.; Charters, S. Procedures for Performing Systematic Literature Reviews in Software Engineering; Technical Report; Durham University: Durham, UK, 2007.

- Hamdi, H.; Richard, P.; Suteau, A.; Allain, P. Emotion assessment for affective computing based on physiological responses. In Proceedings of the 2012 IEEE International Conference on Fuzzy Systems, Brisbane, QLD, Australia, 10–15 June 2012.

- Vidal, J.J. Toward Direct Brain-Computer Communication. Annu. Rev. Biophys. Bioeng. 1973, 2, 157–180.

- Jeanmonod, D.J.; Suzuki, K. Control of a Proportional Hydraulic System. Intech. Open 2018, 2, 64.

- Blankertz, B.; Curio, G.; Vaughan, T.M.; Schalk, G.; Wolpaw, J.R.; Neuper, C.; Pfurtscheller, G.; Hinterberger, T.; Birbaumer, N. The BCI Competition 2003: Progress and Perspectives in Detection and Discrimination of EEG Single Trials. IEEE Trans. Biomed. Eng. 2004, 51, 1044–1051.

- Khan, R. Future Internet: The Internet of Things Architecture, Possible Applications and Key Challenges. In Proceedings of the 2012 10th International Conference on Frontiers of Information Technology, Islamabad, Pakistan, 17–19 December 2012; pp. 257–260.

- Pattnaik, P.K.; Sarraf, J. Brain Computer Interface issues on hand movement. J. King Saud Univ. Comput. Inf. Sci. 2018, 30, 18–24.

- Lebedev, M.A.; Nicolelis, M.A.L. Brain-machine interfaces: past, present and future. Trends Neurosci. 2006, 29, 536–546.

- Minguillon, J.; Lopez-Gordo, M.A.; Pelayo, F. Trends in EEG-BCI for daily-life: Requirements for artifact removal. Biomed. Signal Process. Control 2017, 31, 407–418.

- Jafarifarmand, A.; Badamchizadeh, M.A. Artifacts removal in EEG signal using a new neural network enhanced adaptive filter. Neurocomputing 2013, 103, 222–231.

- Arvaneh, M.; Guan, C.; Ang, K.K.; Quek, C. Optimizing the channel selection and classification accuracy in EEG-based BCI. IEEE Trans. Biomed. Eng. 2011, 58, 1865–1873.

- Kübler, A.; Furdea, A.; Halder, S.; Hammer, E.M.; Nijboer, F.; Kotchoubey, B. A brain-computer interface controlled auditory event-related potential (p300) spelling system for locked-in patients. Ann. N. Y. Acad. Sci. 2009, 1157, 90–100.

- Shukla, R.; Trivedi, J.K.; Singh, R.; Singh, Y.; Chakravorty, P. P300 event related potential in normal healthy controls of different age groups. Indian J. Psychiatry 2000, 42, 397–401.

- Núñez-Peña, M.I.; Corral, M.J.; Escera, C. Potenciales evocados cerebrales en el contexto de la investigación psicológica: Una actualización. Anu. Psicol. 2004, 35, 3–21.

- Utsumi, K.; Takano, K.; Okahara, Y.; Komori, T.; Onodera, O.; Kansaku, K. Operation of a P300-based brain-computer interface in patients with Duchenne muscular dystrophy. Sci. Rep. 2018, 8, 1753.

- Polich, J. Clinical application of the P300 event-related brain potential. Phys. Med. Rehabil. Clin. N. Am. 2004, 15, 133–161.

- Chi, Y.M.; Wang, Y.T.; Wang, Y.; Maier, C.; Jung, T.P.; Cauwenberghs, G. Dry and noncontact EEG sensors for mobile brain-computer interfaces. IEEE Trans. Neural Syst. Rehabil. Eng. 2012, 20, 228–235.

- Terven, J.R.; Salas, J.; Raducanu, B. New Opportunities for computer vision-based assistive technology systems for the visually impaired. Computer 2014, 47, 52–58.

- Tsai, T.-W.; Lo, H.Y.; Chen, K.-S. An affective computing approach to develop the game-based adaptive learning material for the elementary students. In Proceedings of the 2012 Joint International Conference on Human-Centered Computer Environments (HCCE ’12), New York, NY, USA, 8–13 March 2012.

- Picard, R.W. Affective Computing; MIT Press: Cambridge, MA, USA, 1997.

- Lobera, J.; Mondragón, V.; Contreras, B. Guía Didáctica para la Inclusión en Educación inicial y Básica; Technical Report; Secretaría de Educación Pública: Mexico City, Mexico, 2010.

- Sarwar, S.Z.; Aslam, M.S.; Manarvi, I.; Ishaque, A.; Azeem, M. Noninvasive imaging system for visually impaired people. In Proceedings of the 2010 3rd International Conference on Computer Science and Information Technology (ICCSIT 2010), Chengdu, China, 9–11 July 2010.

- Guo, J.; Gao, S.; Hong, B. An Auditory Brain–Computer Interface. IEEE Trans. Neural Syst. Rehabil. Eng. 2010, 18, 230–235.

- Hinterberger, T.; Hill, J.; Birbaumer, N. Auditory brain-computer communication device. In Proceedings of the IEEE International Workshop on Biomedical Circuits and Systems, Singapore, 1–3 December 2004.

- Nijboer, F.; Furdea, A.; Gunst, I.; Mellinger, J.; McFarland, D.J.; Birbaumer, N.; Kübler, A. An auditory brain-computer interface (BCI). J. Neurosci. Methods 2008, 167, 43–50.

- Klobassa, D.S.; Vaughan, T.M.; Brunner, P.; Schwartz, N.E.; Wolpaw, J.R.; Neuper, C.; Sellers, E.W. Toward a high-throughput auditory P300-based brain-computer interface. Clin. Neurophysiol. 2009, 120, 1252–1261.

- Sellers, E.W.; Ryan, D.B.; Hauser, C.K. Noninvasive brain-computer interface enables communication after brainstem stroke. Sci. Transl. Med. 2014, 6.

- Okahara, Y.; Takano, K.; Komori, T.; Nagao, M.; Iwadate, Y.; Kansaku, K. Operation of a P300-based brain-computer interface by patients with spinocerebellar ataxia. Clin. Neurophysiol. Pract. 2017, 2, 147–153.

- Millán, J.d.R.; Renkens, F.; Mouriño, J.; Gerstner, W. Brain-actuated interaction. Artif. Intell. 2004, 159, 241–259.

- Sirvent Blasco, J.L.; Iáñez, E.; Úbeda, A.; Azorín, J.M. Visual evoked potential-based brain-machine interface applications to assist disabled people. Expert Syst. Appl. 2012, 39, 7908–7918.

- Hill, N.J.; Lal, T.N.; Bierig, K.; Birbaumer, N.; Schölkopf, B. Attentional modulation of auditory event-related potentials in a brain-computer interface. In Proceedings of the IEEE International Workshop on Biomedical Circuits and Systems, Singapore, 1–3 December 2004.

- Hill, N.J.; Schölkopf, B. An online brain-computer interface based on shifting attention to concurrent streams of auditory stimuli. J. Neural Eng. 2012, 9, 1–23.

- Suwa, S.; Yin, Y.; Cui, G.; Tanaka, T.; Cao, J. A design method of an auditory P300 with P100 brain computer interface system. In Proceedings of the 2012 IEEE 11th International Conference on Signal Processing, Beijing, China, 21–25 October 2012.

- Yin, E.; Zeyl, T.; Saab, R.; Hu, D.; Zhou, Z.; Chau, T. An Auditory-Tactile Visual Saccade-Independent P300 Brain–Computer Interface. Int. J. Neural Syst. 2015, 26, 1650001.

- Collinger, J.L.; Wodlinger, B.; Downey, J.E.; Wang, W.; Tyler-Kabara, E.C.; Weber, D.J.; McMorland, A.J.C.; Velliste, M.; Boninger, M.L.; Schwartz, A.B. High-performance neuroprosthetic control by an individual with tetraplegia. Lancet 2013, 381, 557–564.

- Wang, Y.T.; Wang, Y.; Jung, T.P. A cell-phone based brain-computer interface for communication in daily life. J. Neural Eng. 2011, 8, 025018.

- Daly, I.; Williams, D.; Malik, A.; Weaver, J.; Kirke, A.; Hwang, F.; Miranda, E.; Nasuto, S.J. Personalised, Multi-modal, Affective State Detection for Hybrid Brain-Computer Music Interfacing. IEEE Trans. Affect. Comput. 2018, 3045, 1–14.

- Williams, D.; Kirke, A.; Miranda, E.; Daly, I.; Hwang, F.; Weaver, J.; Nasuto, S. Affective calibration of musical feature sets in an emotionally intelligent music composition system. ACM Trans. Appl. Percept. 2017, 14, 1–13.

- Murugappan, M.; Nagarajan, R.; Yaacob, S. Combining spatial filtering and wavelet transform for classifying human emotions using EEG Signals. J. Med. Biol. Eng. 2011, 31, 45–51.

- Miranda, E.R.; Durrant, S.; Anders, T. Towards brain-computer music interfaces: Progress and challenges. In Proceedings of the 2008 First International Symposium on Applied Sciences on Biomedical and Communication Technologies (ISABEL 2008), Aalborg, Denmark, 25–28 October 2008.

- Khosrowabadi, R.; Quek, H.C.; Wahab, A.; Ang, K.K. EEG-based emotion recognition using self-organizing map for boundary detection. In Proceedings of the 2010 20th International Conference on Pattern Recognition, Istanbul, Turkey, 23–26 August 2010.

- Mühl, C.; Brouwer, A.M.; van Wouwe, N.; van den Broek, E.; Nijboer, F.; Heylen, D.K.J. Modality-specific affective responses and their implications for affective BCI. In Proceedings of the Fifth International Brain-Computer Interface Conference 2011, Graz, Austria, 22–24 September 2011.

- Petrantonakis, P.C.; Hadjileontiadis, L.J. Emotion Recognition From EEG Using Higher Order Crossings. IEEE Trans. Inf. Technol. Biomed. 2010, 14, 186–197.

- Nie, D.; Wang, X.W.; Shi, L.C.; Lu, B.L. EEG-based emotion recognition during watching movies. In Proceedings of the 2011 5th International IEEE/EMBS Conference on Neural Engineering, Cancun, Mexico, 27 April–1 May 2011.

- Hsu, J.L.; Zhen, Y.L.; Lin, T.C.; Chiu, Y.S. Personalized music emotion recognition using electroencephalography (EEG). In Proceedings of the 2014 IEEE International Symposium on Multimedia, Taichung, Taiwan, 10–12 December 2014.

- Byun, S.W.; Lee, S.P.; Han, H.S. Feature selection and comparison for the emotion recognition according to music listening. In Proceedings of the 2017 International Conference on Robotics and Automation Sciences (ICRAS), Hong Kong, China, 26–29 August 2017.

- Sourina, O.; Liu, Y. EEG-enabled affective applications. In Proceedings of the 2013 Humaine Association Conference on Affective Computing and Intelligent Interaction, Geneva, Switzerland, 2–5 September 2013.

- Diesner, J.; Evans, C.S. Little Bad Concerns: Using Sentiment Analysis to Assess Structural Balance in Communication Networks. In Proceedings of the 2015 IEEE/ACM International Conference on Advances in Social Networks Analysis and Mining (ASONAM), Paris, France, 25–28 August 2015.

- Tseng, K.C.; Lin, B.S.; Wong, A.M.K.; Lin, B.S. Design of a mobile brain computer interface-based smart multimedia controller. Sensors 2015, 15, 5518–5530.

- Xu, H.; Zhang, D.; Ouyang, M.; Hong, B. Employing an active mental task to enhance the performance of auditory attention-based brain-computer interfaces. Clin. Neurophysiol. 2013, 124, 83–90.

- Koelstra, S.; Mühl, C.; Soleymani, M.; Lee, J.S.; Yazdani, A.; Ebrahimi, T.; Pun, T.; Nijholt, A.; Patras, I. DEAP: A database for emotion analysis using physiological signals. IEEE Trans. Affect. Comput. 2012, 3, 18–31.

- Pantic, M.; Rothkrantz, L.J.M. Toward an affect-sensitive multimodal human-computer interaction. Proc. IEEE 2003, 91, 1370.

- Healey, J.; Picard, R. SmartCar: Detecting driver stress. In Proceedings of the 15th International Conference on Pattern Recognition (ICPR-2000), Barcelona, Spain, 3–7 September 2000.

- Hamdi, H.; Richard, P.; Suteau, A.; Saleh, M. A Multi-Modal Virtual Environment To Train for Job Interview. In Proceedings of the 1st International Conference on Pervasive and Embedded Computing and Communication Systems (PECCS 2011), Algarve, Portugal, 5–7 March 2011.

- Kousarrizi, M.R.N.; Ghanbari, A.A.; Teshnehlab, M.; Aliyari, M.; Gharaviri, A. Feature extraction and classification of EEG signals using wavelet transform, SVM and artificial neural networks for brain computer interfaces. In Proceedings of the 2009 International Joint Conference on Bioinformatics, Systems Biology and Intelligent Computing, Shanghai, China, 3–5 August 2009.

- Padfield, N.; Zabalza, J.; Zhao, H.; Masero, V.; Ren, J. EEG-Based Brain-Computer Interfaces Using Motor-Imagery: Techniques and Challenges. Sensors 2019, 19, 1423.

- Allison, B.Z.; Neuper, C. Could Anyone Use a BCI? In Brain-Computer Interfaces; Tan, D., Nijholt, A., Eds.; Springer: London, UK, 2010.

- De Negueruela, C.; Broschart, M.; Menon, C.; Del R. Millán, J. Brain-computer interfaces for space applications. Pers. Ubiquitous Comput. 2011, 15, 527–537.

- Wolpaw, J.R.; Birbaumer, N.; McFarland, D.J.; Pfurtscheller, G.; Vaughan, T.M. Brain-computer interfaces for communication and control. Clin. Neurophysiol. 2002, 113, 767–791.

- Suefusa, K.; Tanaka, T. A comparison study of visually stimulated brain-computer and eye-tracking interfaces. J. Neural Eng. 2017, 14.

- Makeig, S.; Kothe, C.; Mullen, T.; Bigdely-Shamlo, N.; Zhang, Z.; Kreutz-Delgado, K. Evolving Signal Processing for Brain–Computer Interfaces. Proc. IEEE 2012, 100, 1567–1584.

- Bhowmick, A.; Hazarika, S.M. An insight into assistive technology for the visually impaired and blind people: state-of-the-art and future trends. J. Multimodal User Interfaces 2017, 11, 149–172.

- Yuan, H.; He, B. Brain–Computer Interfaces Using Sensorimotor Rhythms: Current State and Future Perspectives. IEEE Trans. Biomed. Eng. 2014, 61, 1425–1435.

| Identifier | Year | Components | Description | Stimulus | Analysis | Accuracy | Extraction/Classification |
|---|---|---|---|---|---|---|---|
| [9] Hamdi et al. | 2012 | BCI, EEG, AC | Recognition of emotions through a BCI and a heart rate sensor | Visual | Online | Positive | Analysis of variance (ANOVA) |
| [14] Pattnaik et al. | 2018 | BCI, EEG | BCI for the classification of the movements of the left hand and the right hand | Visual | Online | Positive | Discrete wavelet transform (DWT) |
| [16] Minguillon et al. | 2017 | BCI, EEG | Identification of EEG noise produced by endogenous and exogenous causes | -- | Offline | -- | -- |
| [17] Jafarifarmand et al. | 2013 | BCI, EEG | Extraction of noise-free features from previously recorded EEG | -- | Offline | Positive | Functional-link neural network (FLN), adaptive radial basis function networks (RBFN) |
| [18] Arvaneh et al. | 2011 | BCI, EEG | Algorithm for EEG channel selection | Auditory/Visual | Offline | +10% | Sparse common spatial pattern (SCSP) |
| [19] Kübler et al. | 2009 | BCI, EEG, EP | BCI-controlled auditory event-related potential | Auditory | Online | -- | Stepwise linear discriminant analysis method (SWLDA), Fisher’s linear discriminant (FLD) |
| [22] Utsumi et al. | 2018 | BCI, EEG | BCI for patients with DMD (Duchenne muscular dystrophy) based on the P300 | Visual | Offline | 71.6%–80.6% | Fisher’s linear discriminant analysis |
| [24] Chi et al. | 2012 | BCI, EEG | Analysis of dry and non-contact electrodes for a BCI | Auditory/Visual | Online | Positive | Canonical correlation analysis (CCA) |
| [29] Sarwar et al. | 2010 | BCI, EEG | Non-invasive BCI to convert images into signals for the optic nerve | Visual | Online | Positive | -- |
| [30] Guo et al. | 2010 | BCI, EEG | An auditory brain–computer interface using the mental response | Auditory | Offline | 85% | Fisher discriminant analysis (FLD), support vector machine (SVM) |
| [33] Klobassa et al. | 2009 | BCI, EEG, EP | BCI based on P300 | Auditory | Offline | 50%–75% | Stepwise linear discriminant analysis method (SWLDA) |
| [34] Sellers et al. | 2014 | BCI, EEG | Non-invasive BCI for communicating messages from people with motor disabilities | Visual | Online | Positive | Stepwise linear discriminant analysis method (SWLDA) |
| [35] Okahara et al. | 2017 | BCI, EEG | BCI based on P300 for patients with spinocerebellar ataxia (SCA) | Visual | Offline | 82.9%–83.2% | Fisher’s linear discriminant analysis |
| [37] Blasco et al. | 2012 | BCI, EEG, AC | BCI based on EEG, for people with disabilities | Visual | Online | Positive | Stepwise linear discriminant analysis (SWLDA) |
| [39] Hill et al. | 2012 | BCI, EEG | BCI for completely paralyzed people, based on auditory stimuli | Auditory | Online | 76%–96% | Contrast between stimuli |
| [40] Suwa et al. | 2012 | BCI, EEG, EP | BCI that uses the P300 and P100 responses | Auditory | Online | 78% | Support vector machine (SVM) |
| [41] Yin et al. | 2015 | BCI, EEG, EP | Bimodal brain–computer interface | Auditory/Tactile | Online | +45.43%–+51.05% | Bayesian linear discriminant analysis (BLDA) |
| [42] Collinger et al. | 2013 | BCI, EEG | Invasive brain–computer interface for neurological control | Visual | Online | Positive | -- |
| [43] Wang et al. | 2011 | BCI, EEG | Portable and wireless brain–computer interface | Visual | Online | 95.9% | Fast Fourier transform (FFT) |
| [44] Daly et al. | 2018 | BCI, EEG, AC | Analysis of brain signals for the detection of a person’s affective state | Auditory | Online | Positive | Support vector machine (SVM) |
| [45] Williams et al. | 2017 | BCI, EEG, AC | System for the generation of music dependent on the affective state of a person | Auditory | Online | Positive | -- |
| [46] Murugappan et al. | 2011 | BCI, EEG, AC | Evaluation of the emotions of a person, using an EEG and auditory stimuli | Auditory/Visual | Offline | 79.17%–83.04% | Surface Laplacian filtering, wavelet transform (WT), linear classifiers |
| [48] Khosrowabadi et al. | 2010 | BCI, EEG, AC | System for the detection of emotions based on EEG | Auditory/Visual | Offline | 84.5% | K-nearest neighbor classifier (KNN) |
| [49] Mühl et al. | 2011 | BCI, EEG, AC | Affective BCI using a person’s affective responses | Auditory/Visual | Online | -- | Gaussian naive Bayes classifier |
| [50] Petrantonakis et al. | 2010 | BCI, EEG, AC | Recognition of emotions through the study of EEG | Visual | Offline | 62.3%–83.33% | K-nearest neighbor (KNN), quadratic discriminant analysis, support vector machine (SVM) |
| [51] Nie et al. | 2011 | BCI, EEG, AC | Classification of positive or negative emotions from EEG | Visual | Offline | 87.53% | Support vector machine (SVM) |
| [52] Hsu et al. | 2015 | BCI, EEG, AC | Non-invasive BCI for the recognition of the emotions produced by music | Visual | Online | Positive | Artificial neural network model (ANN) |
| [53] Byun et al. | 2017 | BCI, EEG, AC | Classification of a person’s emotions using an EEG | Auditory | Offline | Positive | Band-pass filter |
| [54,55] Sourina & Liu | 2013 | BCI, EEG, AC | Real-time emotion recognition algorithm for sensitive interfaces | Visual | Online | Positive | Support vector machine (SVM) |
| [58] Tseng et al. | 2015 | BCI, EEG | Intelligent multimedia controller based on BCI | Auditory | Online | Positive | Fast Fourier transform (FFT) |
| [56] Xu et al. | 2013 | BCI, EEG | Performance of an auditory BCI based on related evoked potentials | Auditory | Online | +4%–+6% | Support vector machine (SVM) |
| [57] Koelstra et al. | 2012 | BCI, EEG | A database for the analysis of emotions | Visual | Offline | -- | High-pass filter, analysis of variance (ANOVA) |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

López-Hernández, J.L.; González-Carrasco, I.; López-Cuadrado, J.L.; Ruiz-Mezcua, B. Towards the Recognition of the Emotions of People with Visual Disabilities through Brain–Computer Interfaces. Sensors 2019, 19, 2620. https://doi.org/10.3390/s19112620