1. Introduction

The framework of this proposal is positioning in indoor Intelligent Spaces. These kinds of Local Positioning Spaces (LPSs) are complex environments in which several sensors collect information, multiple agents share resources, and positioning and navigation of mobile units are the main tasks [1,2]. Under such complex conditions, sensors with complementary capabilities are necessary to fulfill all requirements satisfactorily.

At the end of this section we include a table (Table 1) with a comprehensive summary of the indoor positioning outlook presented in this introduction, according to accuracy and cost features, together with a description of the applications and some comments on their main strengths and weaknesses.

Depending on the application goals, a rough but useful classification divides indoor positioning systems into non-precise systems (from some tens of cm to 1 m level) and precise ones (1 to 10 cm). The former are typically human-centered applications in which m-level or room-level accuracy may be enough to fulfill the requirements (for example, localization of people or objects in office buildings). They are usually user-oriented applications based on portable technologies such as mobile phones [3], Inertial Measurement Units (IMUs), etc. [4], or indoor local networks (WiFi, Zigbee, Bluetooth) [5]. They exploit the infrastructure already available in the environment, which reduces costs and sensor design effort at the expense of lower precision than an ad-hoc sensorial positioning system, as the networks are originally conceived for communication purposes. They usually operate using Received Signal Strength (RSS) signals [6] to directly obtain distance estimations from signal level measurements, or with fingerprinting-based approaches relying on previously acquired radiomaps [5].

On the other hand, in industrial environments, positioning systems for autonomous agents must be precise and robust. A cm-level positioning accuracy is needed when, for instance, an Automated Guided Vehicle (AGV) must perform different tasks such as carrying heavy loads inside a manufacturing plant [7,8]. In these spaces, the localization system (sensors and processing units) is very often deployed in the environment and needs careful specific design. Precise positioning of AGVs (and autonomous agents in general) is more visible today in industrial environments (for example, a manufacturing plant) than in civil ones (airports, hospitals, large malls, etc.) because the former are more controlled spaces. In the latter, human safety reasons, together with the fact that they are much more unpredictable spaces, make their introduction much slower. Unlike outdoor localization, where Global Navigation Satellite Systems (GNSS) have imposed themselves, there is no dominant technology. Ultrasound (US) [8], cameras [9], radio frequency (RF) [10,11], which also comprises Ultra Wide Band (UWB) technologies [12,13], and infrared (IR) have been mainly used so far. Alternatives with IR have the disadvantage of being directional, but they are very interesting when high precision is required in a channel without interference. All of them have strengths and weaknesses depending on the environmental conditions, the type of application and the performance required. They face several problems common to any LPS (occlusions, multipath, multiple users, etc.). Regarding the measuring principle, they mostly work with Time Of Flight (TOF) [12,14] measurements, which can in turn be Time Of Arrival (TOA) or Time Difference of Arrival (TDOA), Angle Of Arrival (AOA) [11], or RSS [15], as addressed in the next paragraphs and Table 1.

In this context, the interest of this proposal focuses on precise localization systems with two types of sensors for positioning a mobile agent: IR sensors and cameras. IR sensors are, as mentioned, an interesting option if precise localization and an interference-free channel are needed, and they also provide secure communication capabilities. On the other hand, cameras are widely used in many applications (such as detection, identification, etc.), including indoor positioning applications [16]. In the type of environments mentioned above, low cost systems may be needed so that the proposed solution can scale to larger spaces. IR and camera solutions can meet this requirement, although IR needs accurate ad-hoc design to deal with the very strong tradeoff between coverage (devices' field of view), precision (signal-to-noise ratio (SNR) achieved), real time response (integration time or filtering restrictions) and cost [17]. The coexistence of IR sensors and cameras in a complex intelligent space can be very convenient. From the cooperative point of view, cameras may carry out detection and identification of people, mobile robots or objects, environment modeling and also positioning. An IR system can perform localization and act as a communication channel too [18]. Furthermore, if the sensors do not just cooperate but data fusion is carried out, the localization system improves in two important aspects. First, the precision of the fused results is higher than that of the IR and camera ones, i.e., the variances of the position estimate obtained from fusion are lower than the variances of both sensors working independently [19]. Second, the fused position estimate is highly robust because, in case any of the sensors delivers low quality measurements (high dispersion due to bad measurement conditions or sensor failure), the fused variance stays below both sensor variances, and closer to the lower one the larger the other becomes. This second aspect is a key advantage for feasible and robust positioning during navigation.

Camera-based indoor localization systems work either with natural landmarks or with artificial ones, the former being more widely used recently. The natural-landmark approach requires ad-hoc offline processes to collect information about the environment and store it in large databases [3,20,21,22]. The artificial landmark approach implies a more invasive strategy but, on the other hand, does not require a priori environmental knowledge [23,24]. With respect to the camera location, it is usually placed onboard the mobile agent [3,20,21,22,23], while deploying the cameras in the environment infrastructure (which better matches the conception of an intelligent space) is a less common practice [16,25]. Most works report sub-meter precision, as in [20,21], or in [3], where an online homography (the same strategy as the one in our proposal) is used. Higher precisions are also achievable, as in [22], where a margin between 5 cm and 13 cm is reached with natural landmarks. A comprehensive review of different approaches, their features and their performance can be found in [26]. In many works different sensors are used in a cooperative (not fusion) way, as in [20] with a camera and a Laser Imaging Detection and Ranging (LIDAR) sensor, or in [27] with a camera and odometry, both for robot navigation applications, or in [24], where ArUco markers [28] (widely known encoded markers, also used in this work) and an IMU cooperate in a drone navigation and landing application. There is no dominant approach in the fusion of cameras with other sensors in positioning and navigation applications. In [6], a human localization system based on the fusion of RF and pyroelectric IR sensors with signal strength (RSS) measurements is proposed. In [29], a fusion approach with inertial measurement units (IMUs) and a Kalman filter for navigation purposes is shown. In [30], a fusion application is shown with an approach similar to the one we present in this paper, combining the observations with covariance-matrix weights and running Monte Carlo simulations to test the models, followed by a comparison with real measurements. An interesting approach is presented in [31], where motion sensors and Bluetooth Low Energy (BLE) beacons are fused by means of a weighted sum.

Focusing on IR solutions, there are no dominant approaches in indoor localization systems either. They can be addressed in two ways: with collimated sources (mainly laser) performing a spatial sweep [32], or with static devices with open emission and opening angles as wide as possible both in emission and reception [17,33]. In the first case, a high SNR is collected at the detectors, but there is also greater optomechanical complexity, requiring a precise scanning system [34,35], a structured environment (IR reflectors in known positions, with very precise alignment) and notably higher cost, in addition to demanding greater maintenance effort. With the second approach, which is the one used in the IR subsystem presented here, receivers collect lower SNR (hence compromising precision) but the system covers a wider angle at lower cost. Dealing with this severe tradeoff is a big design challenge. All these features (coverage, number of receivers needed, accuracy and cost) are key aspects for the scalability of a locating system to large spaces.

In the emerging context of Visible Light Communication (VLC), which tackles localization and communications making use of the same optical channel, the positioning systems exploit the IR device infrastructure, as seen in [33] with TDOA measurements, or in [15], where the authors report precisions at the sub-cm level with RSS, although both works provide only simulation results. Ref. [11] is another power-based approach plus AOA detection with three photodiodes, achieving precisions of 2 cm in a realistic setup. The precisions achieved in these works are valid in a measuring range up to approximately 2 m. Regarding IR and other sensors working together, many solutions are approached from the point of view of sensor cooperation or joint operation rather than, strictly speaking, sensor fusion. In [36], passive IR reflectors are deployed on the ceiling while a camera on board a robot analyzes the scene under on/off controlled IR illumination, so that the joint performance is based on the comparison between the responses in both states. A precision between 1 and 5 cm is achieved in a robot navigation application. In [37], a collaborative approach using a camera and an IR distance scanner is used for joint estimation of the robot pose, where the camera provides accurate orientation information from visual features while the IR sensor enhances the speed of the overall solution. While [36,37] are the most similar approaches to our proposal, given the use of IR and camera-based localization in a robotics context, both rely on significantly different approaches (detection of actively illuminated landmarks and Simultaneous Localization and Mapping, SLAM) and architecture (self-localization systems on board a mobile unit). Their main challenges and achieved performance are therefore hard to compare with the proposal described in this paper, as will be seen next. Another VLC application for positioning with three Light-Emitting Diodes (LEDs) and a fast camera, with fast processing of the codes emitted by the LEDs, achieving precisions better than 10 cm in a 40 m² area, can be found in [38]. A similar application, although making use of a mobile phone camera, is proposed in [39], showing decimeter-level precisions. Many positioning systems are based on RSS [15] or AOA [11,34] measurements, but precise optical telemetry is usually based on phase measurements [40].

Summarizing such a complex scenario, with such high heterogeneity in the solutions proposed, we can state that cm-level precision (below 10 cm) is quite difficult to achieve, not only in the prepared experimental setups typically reported in the literature but also, and especially, under realistic conditions in real environments. Home applications can cope with m-level and room-level accuracies, while industrial ones may need (robust) performance down to 1 cm and therefore accurate ad-hoc solutions. Additionally, low cost solutions are very interesting in industry as, in many cases, large spaces must be covered (needing a high number of resources and devices for this purpose). The system we present here aims to meet these requirements.

In this context, our proposal is a localization system developed with a phase-shift IR localization subsystem, composed of five receivers acting as anchors and an emitter acting as target, fused with a camera localization subsystem by means of a maximum likelihood (ML) approach. IR and camera models are developed and used for variance propagation to the final position estimation. An Additive White Gaussian Noise (AWGN) hypothesis is assumed for the IR and camera observations in these models. For this, IR measurements must be mostly multipath (MP) free, which can be reasonably assumed in sufficiently large scenarios with low reflectivity of walls and ceiling, or achieved by implementing MP mitigation techniques [41] or oriented sensors (as in [42] in an IR communications framework). The IR positioning system, with cm-level precision, was successfully developed and presented in the past, and a model that relates the variances of the position estimate to the target position was derived. The novelty lies in the development of another model to deduce the camera observation variances and propagate them to the camera position estimate by means of a homography, so that it can be used in the fused final position estimate. The novelty also lies in the fused sensor system itself as a measuring unit, which performs robustly, delivering precisions at the cm level, and at the same time matches the stated models. To our knowledge there are no precise positioning systems with data fusion of a phase-shift IR system, developed ad hoc with simple wide-angle devices (IR LED emitter and photodiodes), and a single low cost camera detecting passive landmarks, performing with cm-level precision in ranges of 5 to 7 m.

In Section 2 the method description is presented. The IR sensor, camera and fusion estimation models are included in Section 3, Section 4 and Section 5 respectively, and evaluated with Monte Carlo simulations. Results on a real setup and the comparison with simulations to validate the theoretical predictions from the models are presented in Section 6. A summary of key concepts derived from the discussion of results is included in the final conclusions in Section 7.

2. Method Description

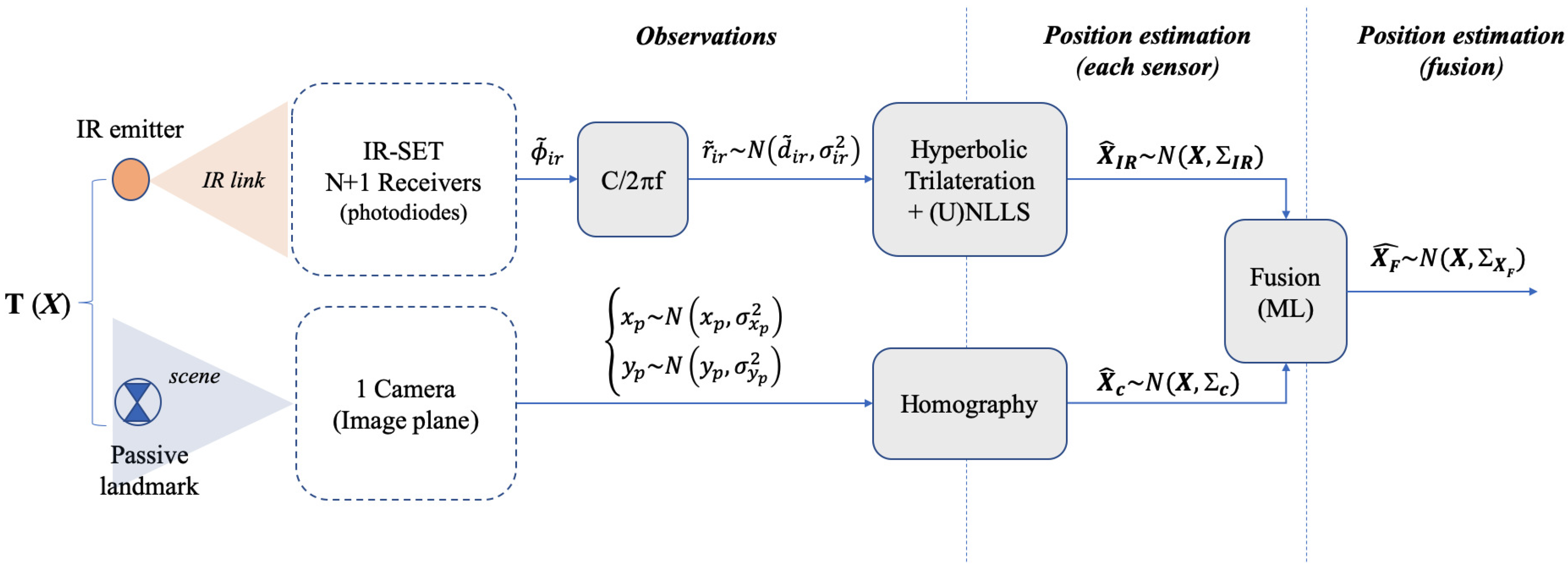

A block level description of the proposed strategy is depicted in Figure 1. We consider a basic locating cell (BLC) where a target (a mobile robot) is to be positioned. This BLC is covered by an infrared positioning set (IR set hereafter), composed of several IR detectors, and by one single camera sharing the locating area. In this arrangement, the position

of the target

, defined as

, is to be obtained. This target is an IR emitter for the IR sensor and a passive landmark for the camera. It lies in a plane with fixed (and known) height

, hence positioning is a 2D problem where the coordinates

of

in such plane are sought. After the IR and camera processing blocks, two position estimates,

and

, are obtained from the IR and camera sensors respectively.

is attained by hyperbolic trilateration from differences of distances [45] and

is obtained by projecting the camera image plane onto the scene plane by means of a homography transformation. We assume both estimates are affected by bi-dimensional zero-mean Gaussian uncertainties, represented by their respective covariance matrices

,

. A fusion stage with a maximum likelihood (ML) approach is carried out yielding a final estimate

with expected lower uncertainty values than the original IR and camera ones. The information about the final precision is contained in the resulting covariance matrix

, which is addressed in Section 5.

A detailed explanation of the IR positioning system (developed in the past) can be found in [17,45]. Some relevant aspects are nevertheless recalled here for better understanding of the proposal. In Figure 1, the IR BLC is composed of N + 1 receivers acting as anchors (

), one of them as common reference (

), being

and

the coordinate vectors

and

, with z-coordinate fixed and known, equal to

. The coordinates of

are achieved by hyperbolic trilateration (HT) from the differences of distance measurements

, from

to each one of the anchors

(

) and from

to

. These

are obtained from differential-phase of arrival (DPOA) measurements,

, between

and

[

17]. Nevertheless, the quantities

will be named as observations hereafter as only a constant factor is needed to convert

into

. Every observation

is assumed to have additive white Gaussian noise (AWGN) with variance

. The

x,

y variances of the position IR estimate (which are terms of

) result from the propagation of

through the HT algorithm. This process, addressed in the next section, involves a set of positioning equations solved by non-linear least squares (NLLS) with a Newton-Gauss recursive algorithm [46]. Note that considering the error as unbiased AWGN means that no systematic or other biasing error contributions remain (they are cancelled after error correction and calibration, or considered negligible compared with the random contributions). This includes multipath (MP) errors, which means working in an MP-free environment (large open spaces with low wall reflectivity) or, otherwise, relying on MP cancellation capabilities [41,47] or orientable detectors [42].

On the other hand, the camera captures the scene, and the center of a landmark placed on the target is detected. After image processing (landmark identification and center detection algorithm) and further projection from the camera image plane to the scene, this yields the landmark coordinates in the BLC locating plane (in the scene). As already mentioned, the projection is carried out by means of a homography, so that a bijective correspondence between the image plane and the scene plane makes it possible to express the real world coordinates as a function of pixel coordinates in the image plane (and vice versa). The homography is applied to the rectified image after camera calibration.

Once the estimates

and

are known, after an ML fusion procedure we obtain

as

where the weights

and

depend on the IR and camera covariance terms, which in turn also depend on the

x,

y coordinates of

. Detailed models for the

and

estimates and their respective covariance matrices

,

have been derived. These models serve two goals. On the one hand, they are the key theoretical basis to obtain the final fused estimation, as the covariance matrices acting as weights are obtained from them. On the other hand, they are a very useful tool for the designer of the positioning system, as low-level parameters can be easily tuned to evaluate their effect on precision, from the sensor observation level to the final fused position estimate.

It is also convenient to describe here the set of errors used to quantify the uncertainty of the position estimate, whether delivered by simulation or by real measurements, and whether referred to IR, camera or fusion. Considering an ellipse of N position estimations, the error metrics used are:

Errors in x, y axes: for every position in the test grid, the uncertainty in the x and y axes is assessed by the standard deviation in x or y respectively. This information is contained in the covariance matrix. This applies to Monte Carlo simulations or real measurements.

Maximum and minimum elliptical errors: the eigenvalues of the covariance matrix are the squares of the lengths of the semi-axes of the 1-sigma confidence ellipsoid, and can be easily computed by a singular-value decomposition (SVD) of this covariance matrix. Hence, given a generic covariance matrix $\boldsymbol{\Sigma}$ of a set of observations in $\mathbb{R}^2$ with coordinates x, y, after this SVD the two eigenvalues $\lambda_1$, $\lambda_2$ are obtained in a matrix $\boldsymbol{\Lambda}$. These matrices have the form:

$$\boldsymbol{\Sigma} = \begin{pmatrix} \sigma_x^2 & \sigma_{xy} \\ \sigma_{xy} & \sigma_y^2 \end{pmatrix}, \qquad \boldsymbol{\Lambda} = \begin{pmatrix} \lambda_1 & 0 \\ 0 & \lambda_2 \end{pmatrix}$$

The deviations with respect to these axes provide more spatial information about the uncertainty in each position. We will refer to these deviations as elliptical deviations, or elliptical errors. The complete spatial information of the confidence ellipsoid would also include the rotation angle of its axes with respect to, for instance, the x axis. This is also easily computed, if needed, through the aforementioned SVD. In our case, the axis lengths are enough to assess the dimensions of the uncertainty ellipsoid and its level of circularity.
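The two error metrics above can be illustrated with a minimal sketch, assuming the position estimates at one grid point are available as an (N, 2) NumPy array. The function name `elliptical_errors` and the sample points are hypothetical and only serve the illustration. For a symmetric positive semi-definite matrix such as a covariance matrix, the singular values returned by the SVD coincide with the eigenvalues.

```python
import numpy as np

def elliptical_errors(points):
    """Axis and elliptical errors of an (N, 2) cloud of position estimates.

    Returns (sigma_x, sigma_y, sigma_max, sigma_min, angle), where angle is
    the orientation (rad) of the major axis with respect to the x axis.
    """
    points = np.asarray(points, dtype=float)
    cov = np.cov(points, rowvar=False)        # 2x2 sample covariance matrix
    sigma_x, sigma_y = np.sqrt(np.diag(cov))  # errors in the x and y axes
    # SVD of a covariance matrix: singular values = eigenvalues (descending)
    u, s, _ = np.linalg.svd(cov)
    sigma_max, sigma_min = np.sqrt(s)         # 1-sigma ellipse semi-axes
    angle = np.arctan2(u[1, 0], u[0, 0])      # direction of the major axis
    return sigma_x, sigma_y, sigma_max, sigma_min, angle

# Hypothetical cloud, elongated along x and uncorrelated in x, y
cloud = np.array([[-2.0, 0.0], [2.0, 0.0], [0.0, -1.0], [0.0, 1.0]])
sx, sy, smax, smin, ang = elliptical_errors(cloud)
```

When the cloud is uncorrelated in x and y, as in this example, the elliptical errors coincide with the axis errors; they differ as soon as the ellipse is rotated.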

The criterion for considering one of them depends on the specific uncertainty description requirements as will be addressed along the paper as needed. The results delivered by Monte Carlo simulations, running the models aforementioned, will be compared with real measurements in a real setup.
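The ML fusion step introduced earlier in this section can be sketched as follows. This is a minimal illustration under stated assumptions, not the implementation used in this work: both estimates are taken as unbiased, Gaussian and mutually independent, in which case the ML estimate is the covariance-weighted combination below. The names `ml_fuse`, `x_ir`, `x_cam`, `cov_ir` and `cov_cam`, as well as the numerical values, are hypothetical.

```python
import numpy as np

def ml_fuse(x_ir, cov_ir, x_cam, cov_cam):
    """Fuse two independent 2D Gaussian position estimates.

    Returns the fused estimate and its covariance matrix.
    """
    x_ir = np.asarray(x_ir, dtype=float)
    x_cam = np.asarray(x_cam, dtype=float)
    info_ir = np.linalg.inv(cov_ir)    # information (inverse covariance)
    info_cam = np.linalg.inv(cov_cam)
    cov_fused = np.linalg.inv(info_ir + info_cam)
    w_ir = cov_fused @ info_ir         # weight of the IR estimate
    w_cam = cov_fused @ info_cam       # weight of the camera estimate
    x_fused = w_ir @ x_ir + w_cam @ x_cam
    return x_fused, cov_fused

# Hypothetical example (units: cm and cm^2)
cov_ir = np.diag([4.0, 4.0])
cov_cam = np.diag([1.0, 16.0])
x_f, cov_f = ml_fuse([0.0, 0.0], cov_ir, [1.0, 1.0], cov_cam)
```

In this example the fused variances (0.8 and 3.2 cm²) are lower than those of either sensor in both axes, and in each axis the fused estimate lies closer to the sensor with the smaller variance.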

3. Infrared Estimation Model

We developed an IR sensor in the past with cm-level precision in MP-free environments or with MP mitigation capabilities [45]. At least three receivers (anchors) must be seen by the IR emitter (target) so that trilateration is possible. More receivers are usually used to increase precision, as real measurements are always corrupted by additive noise. A

m IR BLC composed of five receivers was proposed in [17] so that three, four or five can be used in different configurations as needed (one of them being the common reference). For scalability purposes, this 5-anchor BLC must be linked with other BLCs and high level strategies must be defined too. Nevertheless, this question fell outside the scope of that work and is not considered here either.

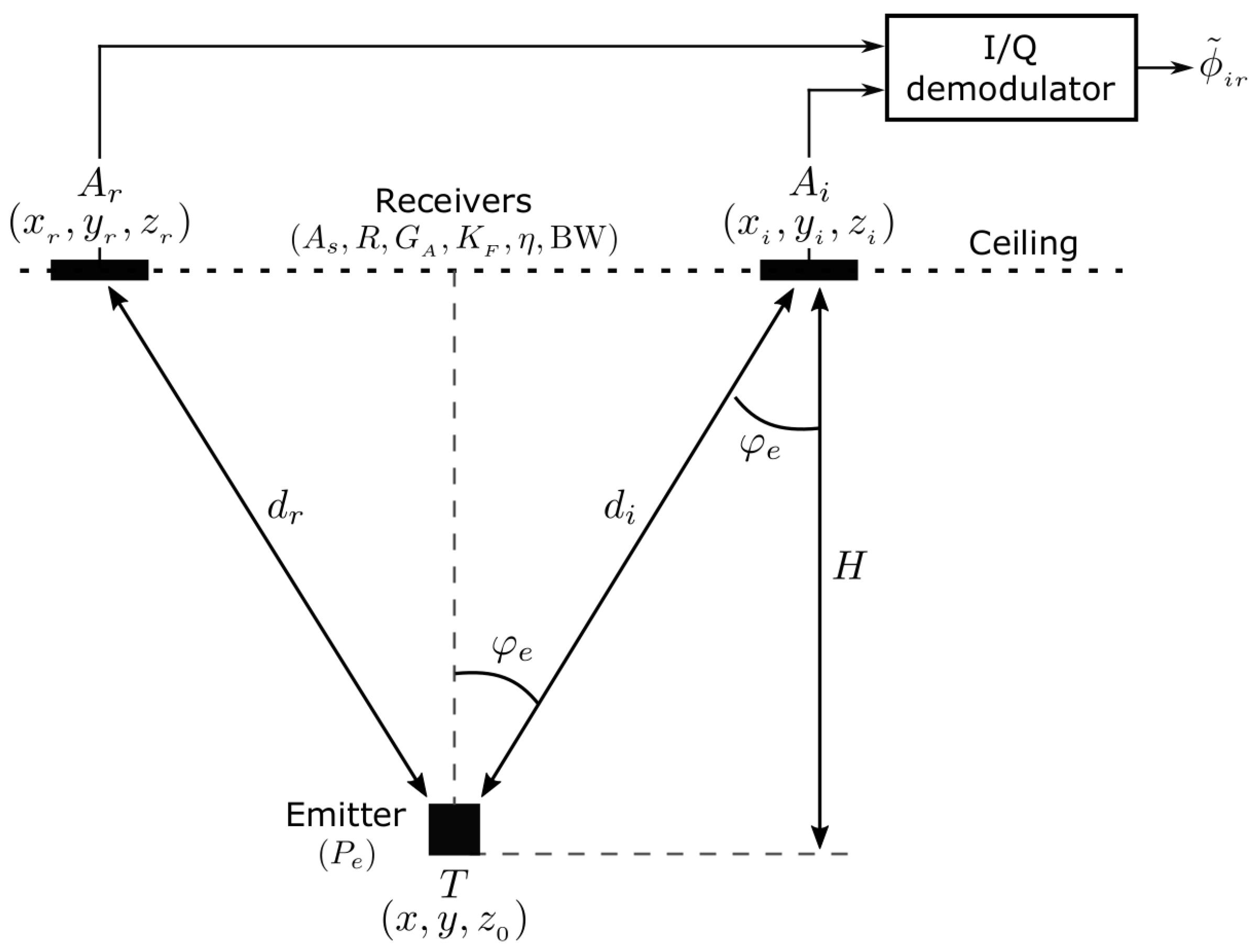

In Figure 2 the IR link elements are depicted, summarizing the basic parameters involved in a DPOA measuring unit. Therefore, just the emitter placed at

and one of the receivers located at

together with the reference

are depicted, followed by an I/Q demodulator. As introduced in Section 2, and explained in detail in [17], the observation

is the

(phase difference between target

and receivers

and

respectively), directly obtained at the output of each I/Q demodulator. Regarding the IR model parameters (which encompasses radiometric, devices and electronics ones), the link

-

is the same as any of the other

-

, as all receivers, including the reference one, are implemented with the same devices.

It is assumed that

, where

is the DPOA true value. The corresponding observed differential range

is directly obtained as

and we also assume

, where

is the distance-difference true value (i.e.,

, and

represents the 2-norm operator). In order to derive an IR model for the measurements, we consider

defined as

, being

,

the true value of the distance between

and

, and

the variance of

(related to one single anchor

). This also applies to

(with

). The variance term

can be modeled as a function of

and the IR measuring-system parameters, as will be shown further. This way, considering

and

as uncorrelated variables, we can compute

as:

Note that while are real observations from the measuring system (the I/Q demodulator directly delivers a phase-difference ), and are virtual single-anchor observations, defined to derive the model.

As demonstrated in [

48], the variance

can be expressed as the inverse of the signal to noise ratio at every anchor (

), and can be modelled as follows

where the factor

is proved to be

[

48],

is the Euclidean distance (true value) between

and

, and

is a constant encompassing all parameters of the IR system (including devices, electronics, noise and geometry), as follows:

All parameters appearing in (5) are known:

is the IR emitted power per solid angle unit,

and

R are the photodiode sensitive area and responsivity respectively,

is the i-v converter gain,

is the filter gain (after i-v stage),

is the I/Q demodulator gain (ideally unity gain) and

H is the receiver’s height (measured from the emitter z-coordinate). The terms in the denominator,

and

, are the noise power spectral density and noise bandwidth respectively. Consequently, the quantity in the denominator is the total noise power which, once the receiver parameters are fixed, is constant [

17]. The distance

in (

4) is

also valid for

making

. The relation in (

4) is a very useful tool as it allows for expressing the variance terms as a function of the coordinates of distance

, hence as a function of the coordinates

of the sought target

(given that the coordinates of

are constant) and fixed parameters grouped together in a single constant. Therefore, (

4) and (

5) establish the link between the random contributions in the observations and the coordinates

of the target. The covariance matrix of the observations

is

where every term

in the diagonal represents the variance

term defined in (

3) of the observation

. The covariance matrix is not diagonal, as the

distance term is present in all the

terms. As said, the covariance matrix modelled this way links the position of the target in the navigation space with the uncertainty in the observations. Every diagonal term is computed as in (

3) using the relation in (

4), where

and

directly depend on the target coordinates through (

6). The non-diagonal terms are directly deduced as a function of

from expressions (

4) to (

6) with

.
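The structure of this observation covariance matrix can be illustrated with a short sketch. It assumes, as stated above, that the single-anchor errors are mutually uncorrelated and that every observation is the difference between one anchor term and the common reference term. Each diagonal term is then the sum of the anchor and reference variances, and every off-diagonal term equals the reference variance. The function name and the numerical values are hypothetical; the variances themselves would come from the model in (4) and (5), which is not reproduced here.

```python
import numpy as np

def observation_covariance(var_anchor, var_ref):
    """Covariance of N difference observations sharing one reference.

    var_anchor: length-N sequence with the single-anchor variances
    var_ref:    variance of the common reference term
    """
    var_anchor = np.asarray(var_anchor, dtype=float)
    n = var_anchor.size
    # Diagonal: var_i + var_ref. Off-diagonal: var_ref (shared reference).
    return np.diag(var_anchor) + var_ref * np.ones((n, n))

# Hypothetical variances for three anchors and the reference
cov_obs = observation_covariance([1.0, 2.0, 3.0], 0.5)
```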

The position

of the target is achieved by hyperbolic trilateration. From

N observations

we can write

where

is an AWGN contribution with variance

. This is:

An estimate for

can be typically obtained by nonlinear (unweighted) least squares (NLLS) as follows:

where

is an N × 1 column vector formed by the N residuals

(noise terms in (

9)), i.e.,:

which are the Gaussian uncertainty terms in the measurement of

. Equation (10) can also be solved iteratively with a Newton-Gauss algorithm, yielding a recursive solution:

The N × 2 matrix

in (

12) is the Jacobian of

with respect to variables

x and

y (

z is constant, as mentioned), with terms

where

and

are computed as in (6). The covariance matrix of the NLLS estimator in (12) is then [49,50].

computed at each

coordinates. The IR estimate obtained in (12) and the covariance matrix in (14) will be used in the final fusion estimate, in the form of (1). Note that the covariances in (7) are not obtained from experimental measurements but from the expressions derived from the IR model. Besides providing the weights in the fusion estimate, this covariance matrix can also be used for off-line simulations in order to evaluate the results under different configurations prior to real operation.
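The hyperbolic trilateration and the variance propagation described in this section can be sketched as follows. This is a minimal illustration under stated assumptions rather than the implementation of this work: the observations are taken directly as distance differences (anchor minus reference), the estimator is the unweighted Newton-Gauss iteration, and the covariance is the standard first-order expression for an unweighted least-squares estimator with observation covariance `cov_obs`. The anchor layout, heights, noise level and function names are hypothetical.

```python
import numpy as np

def range_differences(p, anchors, ref, z):
    """Model: distance to each anchor minus distance to the reference."""
    t = np.array([p[0], p[1], z])
    return np.linalg.norm(anchors - t, axis=1) - np.linalg.norm(ref - t)

def jacobian(p, anchors, ref, z):
    """N x 2 Jacobian of the model with respect to x and y (z is fixed)."""
    t = np.array([p[0], p[1], z])
    d_i = np.linalg.norm(anchors - t, axis=1)
    d_r = np.linalg.norm(ref - t)
    return (t[:2] - anchors[:, :2]) / d_i[:, None] - (t[:2] - ref[:2]) / d_r

def gauss_newton(obs, anchors, ref, z, p0, iters=50, tol=1e-12):
    """Unweighted NLLS position estimate by Newton-Gauss iterations."""
    p = np.asarray(p0, dtype=float)
    for _ in range(iters):
        residuals = obs - range_differences(p, anchors, ref, z)
        J = jacobian(p, anchors, ref, z)
        step, *_ = np.linalg.lstsq(J, residuals, rcond=None)
        p = p + step
        if np.linalg.norm(step) < tol:
            break
    return p

def nlls_covariance(p, anchors, ref, z, cov_obs):
    """First-order covariance of the unweighted estimate at position p."""
    J = jacobian(p, anchors, ref, z)
    A = np.linalg.solve(J.T @ J, J.T)  # (J^T J)^-1 J^T
    return A @ cov_obs @ A.T

# Hypothetical cell: four corner anchors and a central reference, all 3 m
# above the target plane (z = 0); noise-free observations for the check.
anchors = np.array([[0.0, 0.0, 3.0], [3.0, 0.0, 3.0],
                    [3.0, 3.0, 3.0], [0.0, 3.0, 3.0]])
ref = np.array([1.5, 1.5, 3.0])
true_p = np.array([1.0, 2.0])
obs = range_differences(true_p, anchors, ref, z=0.0)
est = gauss_newton(obs, anchors, ref, z=0.0, p0=[1.2, 1.8])
cov_obs = 1e-4 * (np.eye(4) + np.ones((4, 4)))  # shared-reference structure
cov = nlls_covariance(est, anchors, ref, 0.0, cov_obs)
```

Evaluating `nlls_covariance` over a grid of positions gives the kind of position-dependent uncertainty map that the Monte Carlo simulations are later compared against.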

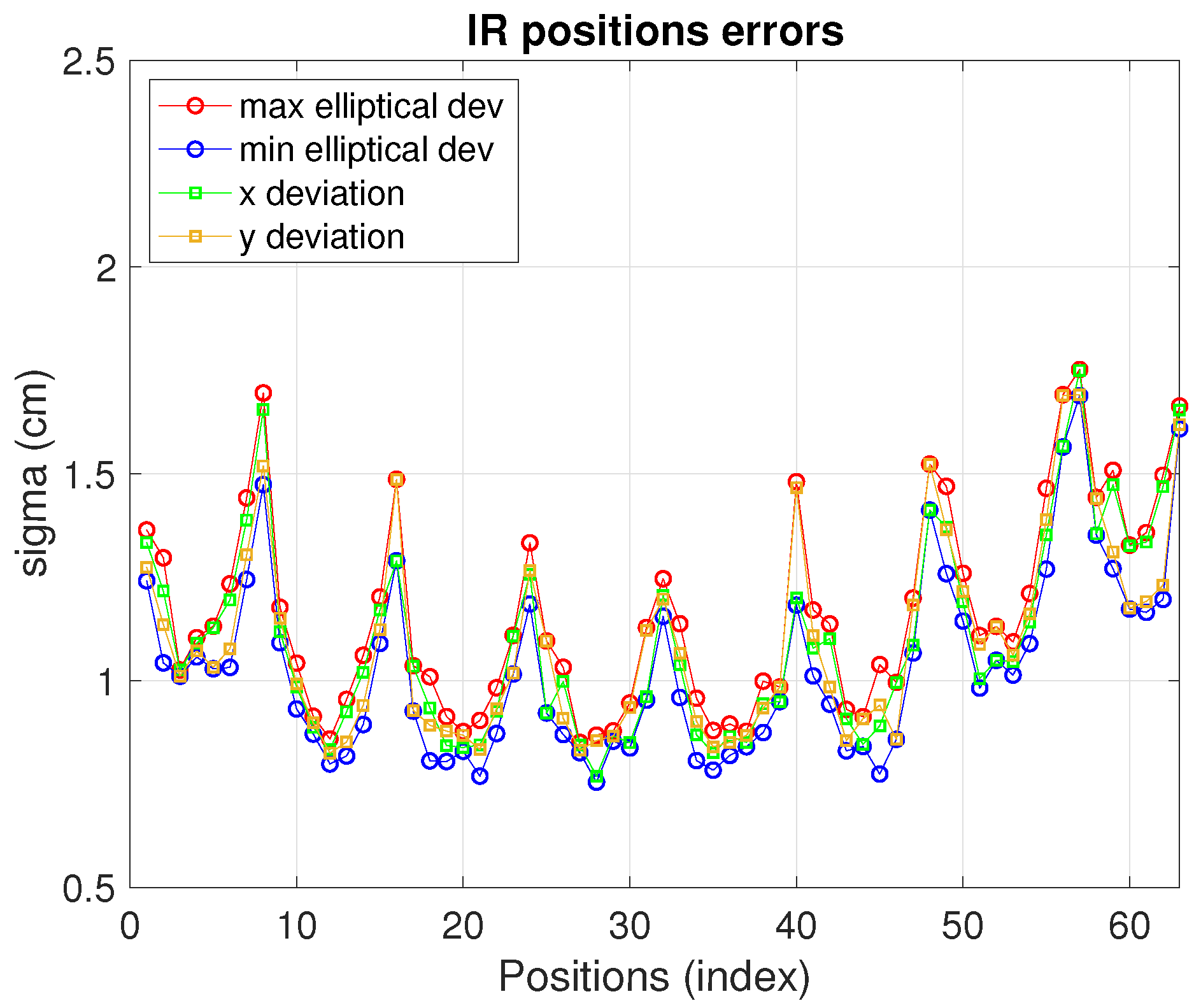

In Figure 3 the simulation results of the model explained for realistic conditions are displayed. In Figure 3a the whole considered test grid is depicted, with a

m IR set with 5 anchors placed at

m height (four in the corners and one in the center as common reference) covering a synthetic

m BLC. The additional area out of the IR set can be useful for transitions between BLCs or just for widening the area covered by one IR set, although with less precision (in the extra area out of the IR set the dispersion of the estimations increases). A set of 63 positions with

m separation between consecutive ones has been tested, with 100 realizations at each position. One of the locations is zoomed so that the observations cloud can be seen. The elliptical errors are evaluated at every position upon the observation ellipse (as the one zoomed). The IR link features in this simulation are: emitting power (

) 75 mW/sr, detector area (

) 100 mm

, responsivity (

R)

, i-v gain factor (

)

, filter gain (

) and I/Q demodulator gain (

) are both unity factor, noise power spectral density (

)

W/Hz and noise equivalent bandwidth (

)

Hz. These are the values for the parameters appearing in (

5) with the geometrical parameter

H set to

m. In Figure 3b the values of these errors along the whole test cell are displayed (indexed starting at the bottom-left corner of the grid and growing in columns up to the upper-right corner). As shown, under the aforementioned conditions, less dispersion is observed in the central area. The uncertainty in

axis are in a margin between 1 cm and

cm and between

cm and

cm values for

and

respectively. The

confidence ellipse is defined by axis with

values between

cm and

cm and between

cm and 2 cm (a

confidence ellipse would be defined by two times these

values). The closeness of the deviations in the ellipse axis compared to

is due to the central geometry derived from choosing the reference anchor in the center and using the five anchors in the BLC. More ellipticity would be observed when choosing another reference anchor or using fewer anchors in the BLC.

The IR set defines an area enclosed by the perimeter defined by the four vertices at the external anchors. This is useful for modular scaling of a larger positioning environment. Nevertheless, as can be seen in the figure, a larger area (BLC) can be covered by the same anchor deployment. This may be quite convenient when scaling the LPS, as said, for locating the mobile robot when navigating between consecutive BLCs. It allows for different high level design strategies, making it possible, for instance, to separate the anchors as much as possible if cost reduction is needed (at the cost of lower precision). This also applies to the camera coverage, as will be seen. However, the design strategy of a larger space linking several BLCs lies outside the scope of this paper. Finally, any other configuration in which any of the parameters is changed (photodiode sensitive area, emitted power, etc.) can be easily evaluated. The real tests reported in the results section are carried out with values similar to the ones synthetically generated in Figure 3.

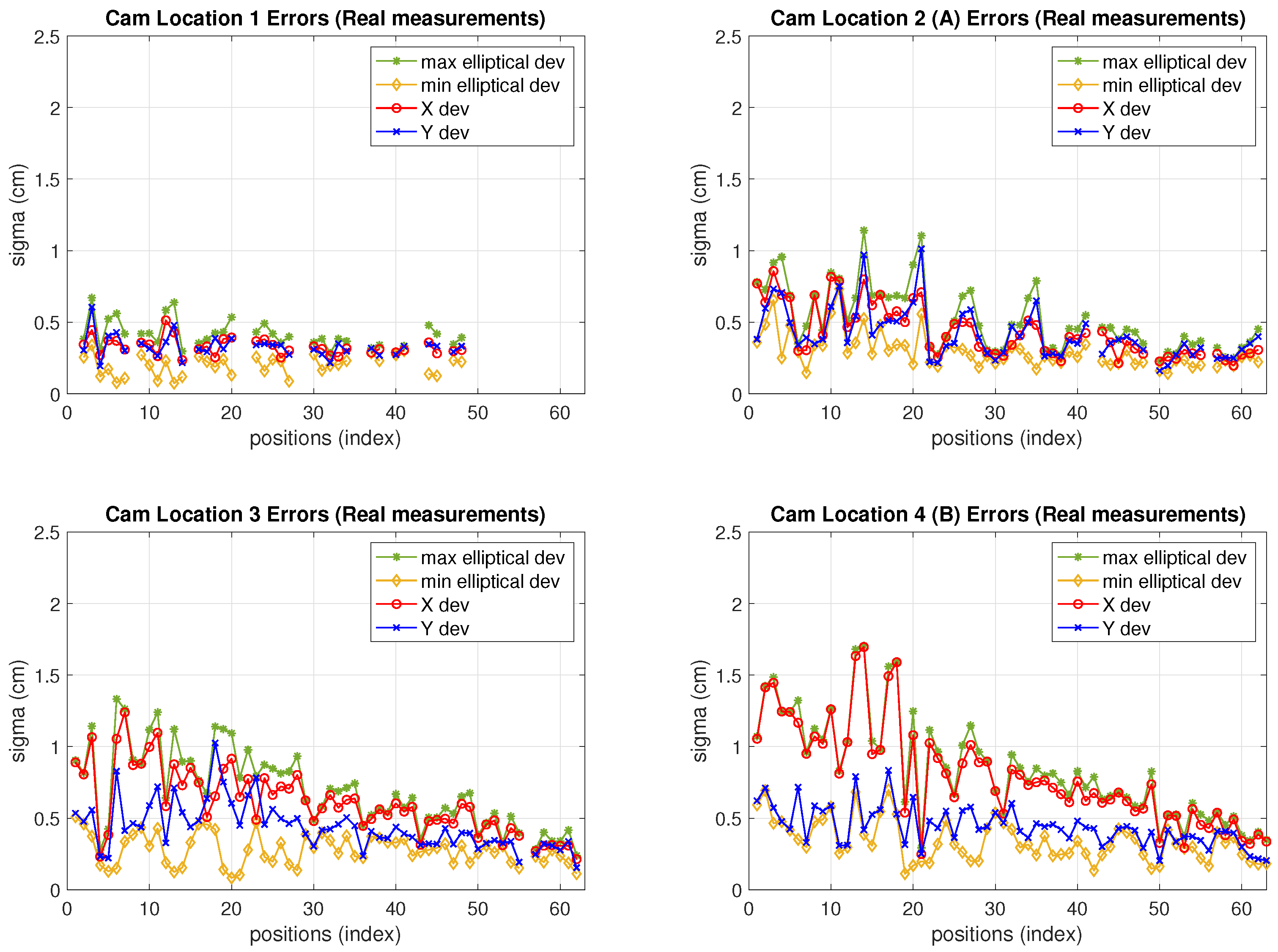

4. Camera Estimation Model

The camera is placed in the BLC at fixed coordinates and not necessarily at the same height as the IR anchors. It may lie outside the polygon formed by the IR receivers and, although in this work both sensors are tested with the same (target) test-position grid, it might cover, if needed, a different target-positioning area than the IR sensors (the fusion would, nevertheless, be carried out in the intersection of both areas). Let us distinguish the four procedures that take place for proper camera performance in the LPS.

Calibration: it is carried out, customarily, by means of a calibration pattern, in order to obtain the intrinsic and extrinsic camera parameters. Once these parameters are known, the coordinates of the detected target from the scene can be obtained in the image. Calibration has a big impact on errors in the final positioning. In any case, this is a standard stage in any camera-based landmark recognition application.

Homography parameters computation: a homography is established between the image and scene planes (as already mentioned, the scene is also a plane of known height). It is an invertible transformation from image to scene. The coefficients of the matrix $\mathbf{H}$ for such transformation are obtained by means of specific encoded markers (named H-markers hereafter). This process (with low computational and time cost) provides a bijective relation between camera and navigation planes. This way, positioning can be easily carried out with one single camera. As explained in Section 2, the uncertainty in the image capture is propagated to uncertainty in the position in the scene through $\mathbf{H}$. For setup characterization purposes, we have carried out this process off line but, in real operation, it can normally be implemented on line.

Target detection (Projection): a landmark placed on the target is detected by image processing so that the position of the target is obtained in the image plane in pixel coordinates. For simplicity, we will refer hereafter to

projection when considering the projection path from image to scene (with matrix

) and to

back projection in the opposite sense (scene to image, with

), as represented in

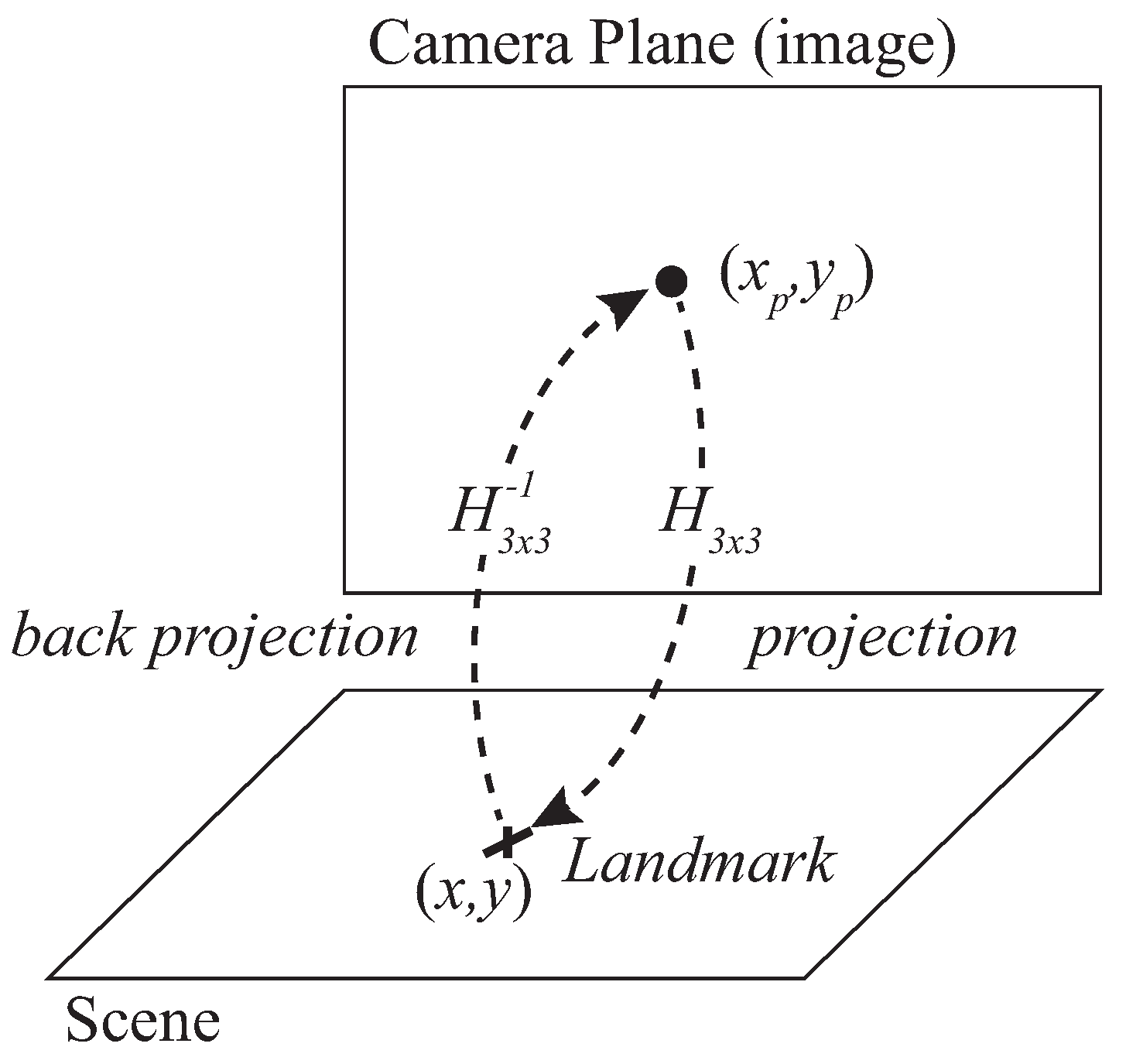

Figure 4.

Back projection: the back projection stage projects a scene position onto the camera image by means of the inverse homography matrix. Back projection is used to simulate the camera estimation model.
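The projection and back projection steps listed above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the function names and the example matrix `H_EXAMPLE` are hypothetical, and the code only assumes that the image-to-scene map is a standard 3 × 3 planar homography applied in homogeneous coordinates.

```python
# Minimal sketch of planar-homography projection (image -> scene) and
# back projection (scene -> image). H_EXAMPLE is a made-up matrix used
# only for illustration; a real one comes from the H-marker procedure.

def project(H, u, v):
    """Map pixel coordinates (u, v) to scene coordinates (x, y) through H."""
    w = H[2][0] * u + H[2][1] * v + H[2][2]  # homogeneous scale factor
    x = (H[0][0] * u + H[0][1] * v + H[0][2]) / w
    y = (H[1][0] * u + H[1][1] * v + H[1][2]) / w
    return x, y


def inverse_3x3(M):
    """Invert a 3x3 matrix with the adjugate (cofactor) formula."""
    (a, b, c), (d, e, f), (g, h, i) = M
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    if abs(det) < 1e-15:
        raise ValueError("matrix is singular, no inverse homography exists")
    return [
        [(e * i - f * h) / det, (c * h - b * i) / det, (b * f - c * e) / det],
        [(f * g - d * i) / det, (a * i - c * g) / det, (c * d - a * f) / det],
        [(d * h - e * g) / det, (b * g - a * h) / det, (a * e - b * d) / det],
    ]


def back_project(H, x, y):
    """Map scene coordinates (x, y) to pixel coordinates using the inverse of H."""
    return project(inverse_3x3(H), x, y)


# Hypothetical homography, normalized so that the last coefficient is 1.
H_EXAMPLE = [
    [0.01, 0.001, -3.0],
    [0.0005, 0.012, -2.0],
    [1e-5, 2e-5, 1.0],
]

if __name__ == "__main__":
    scene = project(H_EXAMPLE, 320.0, 240.0)
    pixel = back_project(H_EXAMPLE, *scene)
    print("scene position:", scene)
    print("pixel recovered by back projection:", pixel)
```

Projecting a pixel and back projecting the result should return the original pixel up to rounding, which is a quick sanity check on any estimated homography.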

In

Figure 4 the true position of the landmark is represented by the coordinate vector

(same as for IR sensor) and the captured position in the camera plane is

where

and

are the

coordinates in such camera plane in pixelic units (index

p stands for

pixelic hereafter). A homography can be defined between the scene and camera planes, so that the relation between the coordinates of the landmark in the scene,

, and

in the image can be written as (

15):

In (

15)

is the homography transformation matrix, the terms

of which are specific to each pair of scene and camera planes (hence to the camera location) and must be obtained accordingly. The homography matrix is a 3 × 3 matrix but with 8 DoF (degrees of freedom) because it is generally normalized with

or

since the planar homography relates the transformation between two planes with a scale factor

s [

51]. This way, the coordinates in the scene are obtained as follows:

Let us define the 2-variables function

defined by (

16) so that

. Given pixelic variances

and

and considering the camera observations with behavior

and

, the Jacobian of

for further variance propagation is:

where the terms

,

and

D are computed as:

Given

, pixelic coordinates of the captured landmark, yielding a corresponding position estimation

, the covariance matrix of the camera estimation of position is:

Obtained from the covariance matrix of the camera observations

and the Jacobian described in (

17). Note that, in addition to the projection homography (camera to scene) described in (

15), it is also necessary to work with the back projection (scene to camera), defined by

, for evaluation of the method through simulation. To do so, synthetic true positions of the target in the grid are generated and back projected to the camera plane by means of

. Next, in the camera image, synthetic realizations with a bidimensional Gaussian distribution are generated, centered on the true positions previously back projected to the image plane. This cloud of image points is then projected to the scene by means of

. This Monte Carlo simulation allows evaluation of errors in the scene given certain known values of pixelic errors and knowledge of

matrix. It must be noted that the

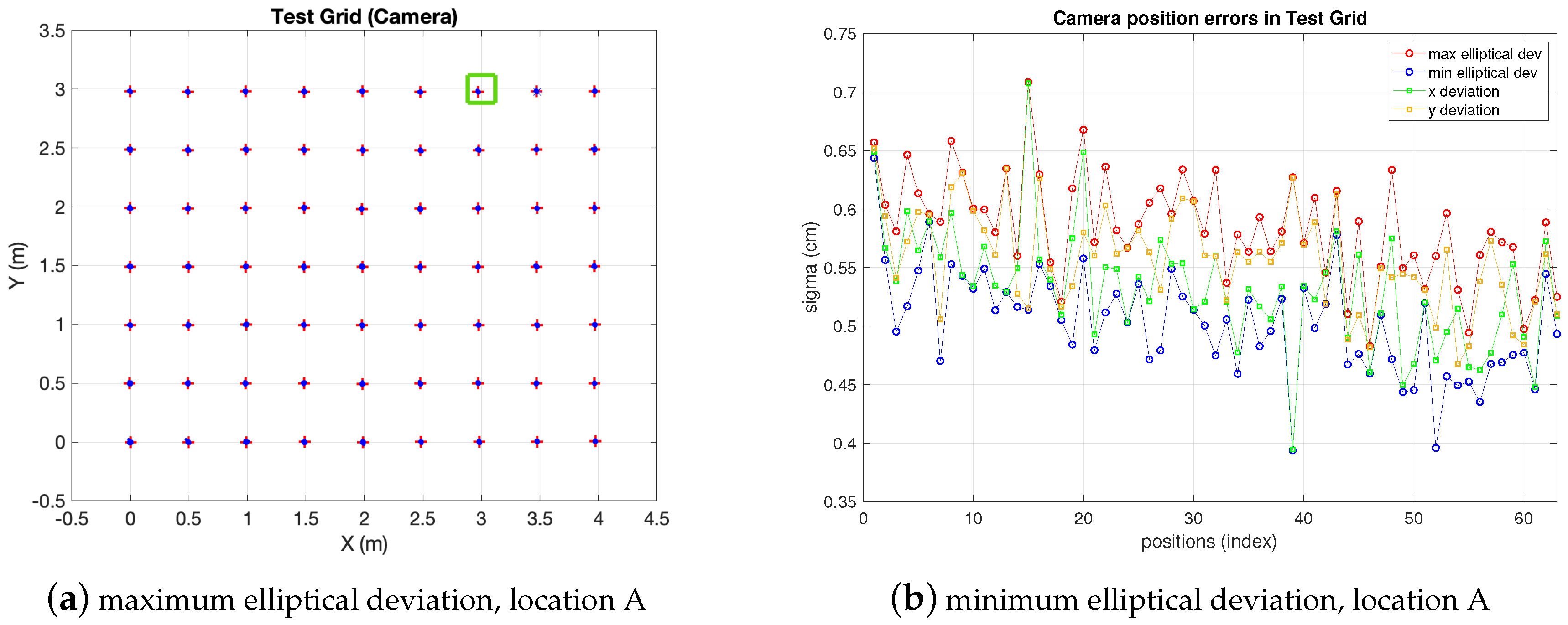

matrix depends on the camera location and, therefore, it must be obtained specifically for such a location. In

Figure 5 the same test-grid as the IR one of previous section (a

m rectangular cell with 63 positions separated

m from each other) is evaluated by running a simulation as explained in the previous paragraph. The camera, represented by a green square in the figure, is set at an arbitrary position at a height of 3 m above the horizontal plane. When operating with a single camera, it may be interesting to cover a wide angle for inexpensive scalability to large areas, at the cost of more distortion (conversely, a camera placed at the center experiences less distortion, but also provides less coverage).
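The first-order variance propagation described in this section (the Jacobian of the image-to-scene map applied to the pixel covariance) can be sketched as follows. This is a hedged illustration rather than the paper's exact formulation: the names `n1`, `n2`, `d` and both function names are hypothetical, and the pixel noise in the two image axes is assumed to be uncorrelated.

```python
# Minimal sketch of first-order covariance propagation through a planar
# homography. Assumption: pixel errors in u and v are uncorrelated, so the
# pixel covariance is diag(sigma_u^2, sigma_v^2).

def project(H, u, v):
    """Map pixel coordinates (u, v) to scene coordinates (x, y) through H."""
    d = H[2][0] * u + H[2][1] * v + H[2][2]
    return ((H[0][0] * u + H[0][1] * v + H[0][2]) / d,
            (H[1][0] * u + H[1][1] * v + H[1][2]) / d)


def homography_jacobian(H, u, v):
    """Return the 2x2 Jacobian of (x, y) with respect to (u, v) at a pixel."""
    n1 = H[0][0] * u + H[0][1] * v + H[0][2]  # numerator of x
    n2 = H[1][0] * u + H[1][1] * v + H[1][2]  # numerator of y
    d = H[2][0] * u + H[2][1] * v + H[2][2]   # common denominator
    d2 = d * d
    return [
        [(H[0][0] * d - H[2][0] * n1) / d2, (H[0][1] * d - H[2][1] * n1) / d2],
        [(H[1][0] * d - H[2][0] * n2) / d2, (H[1][1] * d - H[2][1] * n2) / d2],
    ]


def propagate_covariance(H, u, v, sigma_u, sigma_v):
    """Return the 2x2 scene covariance J * diag(su^2, sv^2) * J^T."""
    J = homography_jacobian(H, u, v)
    su2, sv2 = sigma_u ** 2, sigma_v ** 2
    sxx = J[0][0] ** 2 * su2 + J[0][1] ** 2 * sv2
    syy = J[1][0] ** 2 * su2 + J[1][1] ** 2 * sv2
    sxy = J[0][0] * J[1][0] * su2 + J[0][1] * J[1][1] * sv2
    return [[sxx, sxy], [sxy, syy]]


if __name__ == "__main__":
    # Hypothetical pure-scaling homography: 1 pixel corresponds to 1 cm.
    H_scale = [[0.01, 0.0, 0.0], [0.0, 0.01, 0.0], [0.0, 0.0, 1.0]]
    print(propagate_covariance(H_scale, 100.0, 200.0, 5.0, 5.0))
```

Because the Jacobian depends on the pixel position, the same pixel uncertainty yields different scene uncertainties across the grid, in line with the position-dependent deviations discussed for the simulation.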

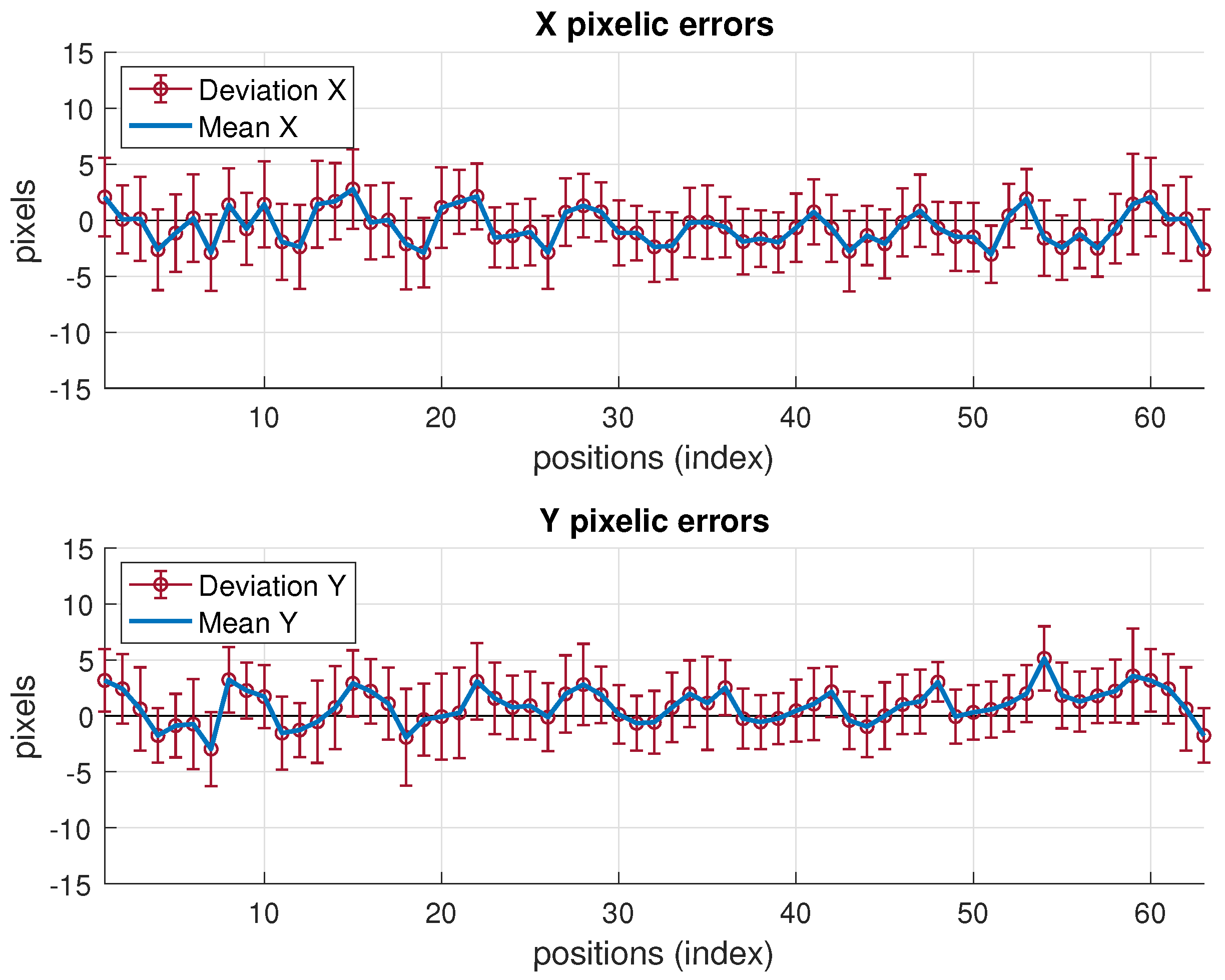

Supposing realistic uncertainty values

and

equal to 5 pixels (they could also be different from each other) and the following real

coefficients (

;

;

;

;

;

;

;

;

), the precision of the position in the scene can be seen in

Figure 5: the deviations in the

axes are between

cm and

cm and between

cm and

cm, respectively, with the 68% confidence ellipse within margins of

cm and

cm and between

cm and

cm (ellipse axis respectively).

Note the decrease in the deviations as the test positions get closer to the camera. Other tests may be run in the same manner with the camera at any other location (yielding a different homography matrix).
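The Monte Carlo evaluation described in this section (back project a true scene position, add Gaussian pixel noise, project the cloud back to the scene) can be sketched as below. It is only an illustrative outline under stated assumptions: the function names, the sample count, the seed and the example homography are hypothetical, and pixel noise is assumed independent in both image axes.

```python
import random
import statistics

# Minimal Monte Carlo sketch of the camera error simulation.
# Assumptions: independent Gaussian pixel noise, hypothetical homography.


def project(M, a, b):
    """Apply the 3x3 homography M to the point (a, b)."""
    w = M[2][0] * a + M[2][1] * b + M[2][2]
    return ((M[0][0] * a + M[0][1] * b + M[0][2]) / w,
            (M[1][0] * a + M[1][1] * b + M[1][2]) / w)


def inverse_3x3(M):
    """Invert a 3x3 matrix with the adjugate (cofactor) formula."""
    (a, b, c), (d, e, f), (g, h, i) = M
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    if abs(det) < 1e-15:
        raise ValueError("matrix is singular")
    return [
        [(e * i - f * h) / det, (c * h - b * i) / det, (b * f - c * e) / det],
        [(f * g - d * i) / det, (a * i - c * g) / det, (c * d - a * f) / det],
        [(d * h - e * g) / det, (b * g - a * h) / det, (a * e - b * d) / det],
    ]


def simulate_scene_std(H, x_true, y_true, sigma_u, sigma_v, n=5000, seed=0):
    """Return the sample standard deviations (std_x, std_y) in the scene."""
    rng = random.Random(seed)
    # Step 1: back project the true scene position to the image plane.
    u0, v0 = project(inverse_3x3(H), x_true, y_true)
    xs, ys = [], []
    for _ in range(n):
        # Step 2: draw a noisy pixel observation around the true pixel.
        u = rng.gauss(u0, sigma_u)
        v = rng.gauss(v0, sigma_v)
        # Step 3: project the noisy observation to the scene.
        x, y = project(H, u, v)
        xs.append(x)
        ys.append(y)
    return statistics.stdev(xs), statistics.stdev(ys)


if __name__ == "__main__":
    # Hypothetical homography; a real one depends on the camera location.
    H = [[0.01, 0.001, -3.0], [0.0005, 0.012, -2.0], [1e-5, 2e-5, 1.0]]
    print(simulate_scene_std(H, 1.0, 1.5, sigma_u=5.0, sigma_v=5.0))
```

Running the function over every position of a test grid gives a map of scene deviations that can be compared against the first-order (Jacobian-based) prediction.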

5. Fusion of Camera and IR Sensors

The fusion estimation

is reached by a maximum likelihood (ML) approach [

19], i.e., maximizing, with respect to

, the joint probability of having

and

estimates given a true position

. That is, given that both estimates are independent of each other:

The IR and camera estimates are

and

respectively, where

is the true position of

T and

,

are the IR and camera covariance matrices defined by (

12) to (

14) and (

19) to (

20), respectively. Consequently,

is described by:

and

:

Introducing (

22) and (

23) in (

21), it follows that

is found as:

Yielding the fusion estimate, computed as follows:
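For two independent Gaussian estimates whose covariances are treated as constants, the maximizer of the joint likelihood has a well-known closed form: the inverse-covariance-weighted mean. The symbols below ($\hat{\mathbf{p}}_{IR}$, $\hat{\mathbf{p}}_{C}$, $\hat{\mathbf{p}}_{F}$, $\boldsymbol{\Sigma}_{IR}$, $\boldsymbol{\Sigma}_{C}$, $\boldsymbol{\Sigma}_{F}$) are assumed notation for illustration and may differ from the one used in the numbered equations.

```latex
\hat{\mathbf{p}}_{F}
  = \left(\boldsymbol{\Sigma}_{IR}^{-1} + \boldsymbol{\Sigma}_{C}^{-1}\right)^{-1}
    \left(\boldsymbol{\Sigma}_{IR}^{-1}\,\hat{\mathbf{p}}_{IR}
        + \boldsymbol{\Sigma}_{C}^{-1}\,\hat{\mathbf{p}}_{C}\right),
\qquad
\boldsymbol{\Sigma}_{F}
  = \left(\boldsymbol{\Sigma}_{IR}^{-1} + \boldsymbol{\Sigma}_{C}^{-1}\right)^{-1}
```

This form follows from setting to zero the gradient of the sum of the two quadratic (Mahalanobis) terms in the log-likelihood with respect to the true position.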

Regarding covariance

-dependence, note the approach followed herein in reaching (

25): the joint probability is maximized in (

21) with respect to

, considering covariances as constant. Covariances depend on the position

as seen in the derivation of the IR and camera models in previous sections. However, to simplify the optimization process, a good tradeoff is to solve the ML problem as stated in (

21) to (

25), where

is the optimization variable, and to compute the IR and camera covariance matrices in (

25), defined as in (

7) to (

14) and (

17) to (

20) respectively, using the position estimate delivered either by the IR sensor or by the camera. The criterion for choosing between them can be defined as needed depending on the application. By default, if no other requirement is set, the one with minimum variance (estimated at

with each respective model) is chosen. In static conditions this would be a good solution; in dynamic (navigation) conditions, we can compute the fusion-position

by using the covariance matrixes from

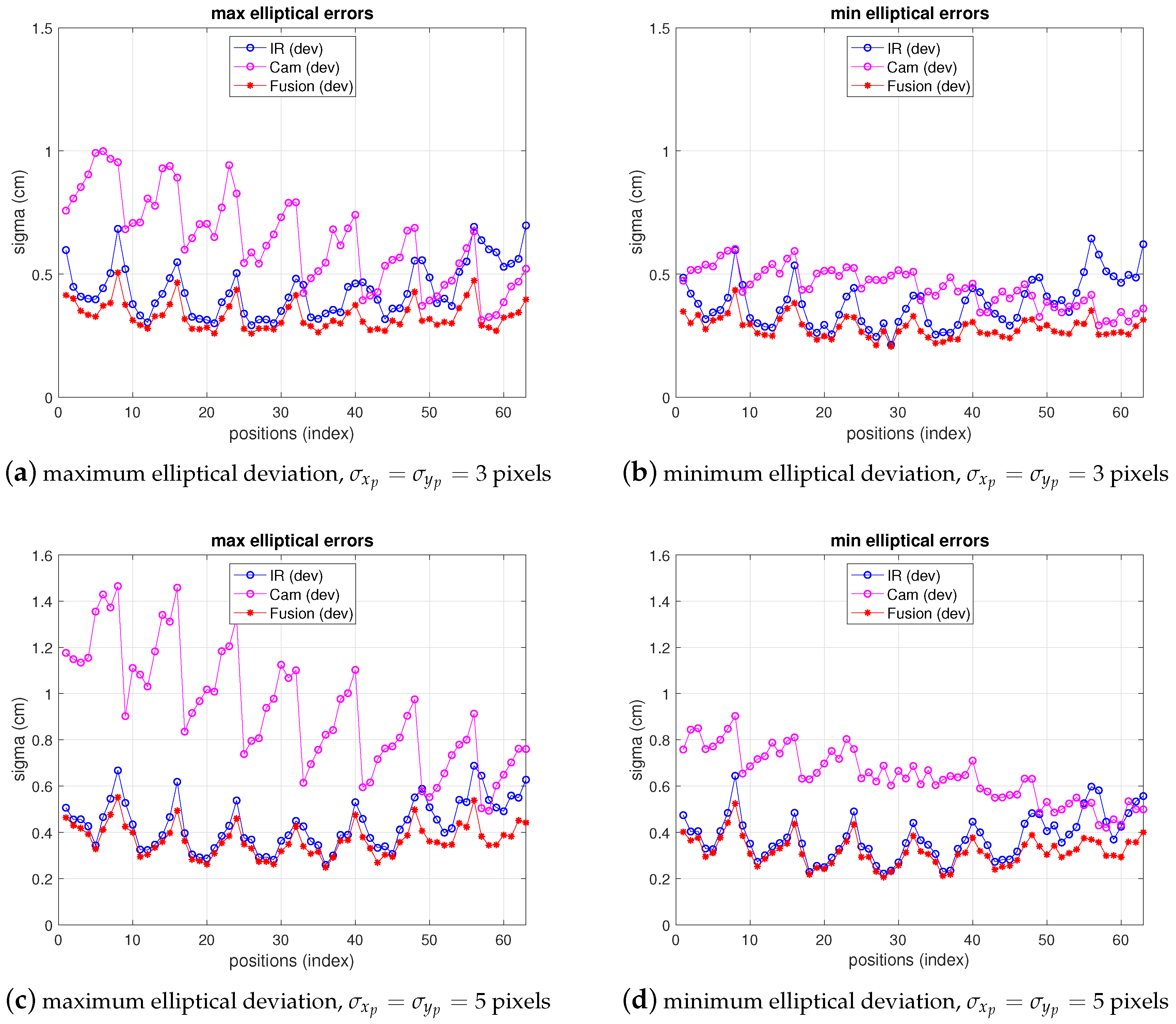

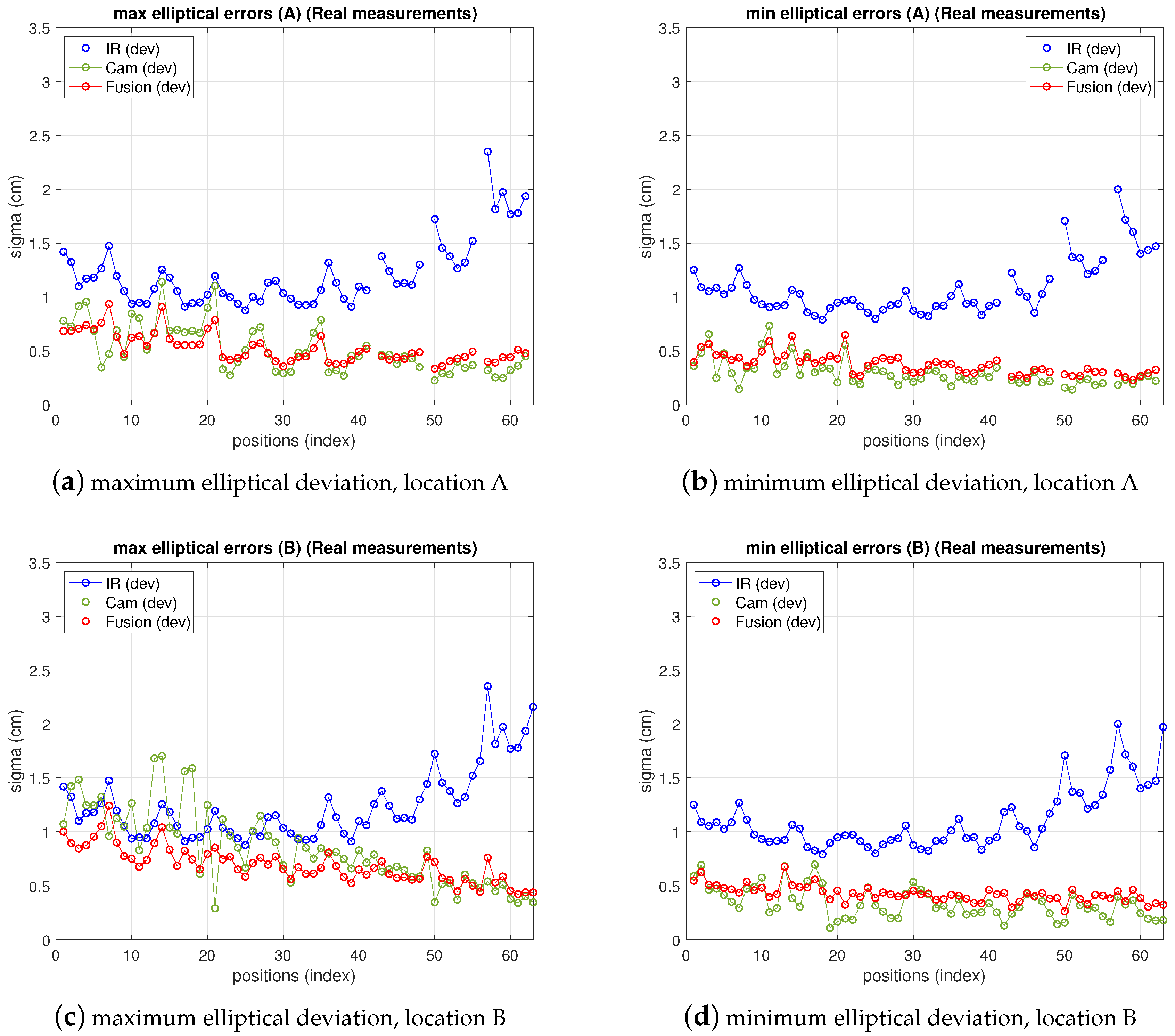

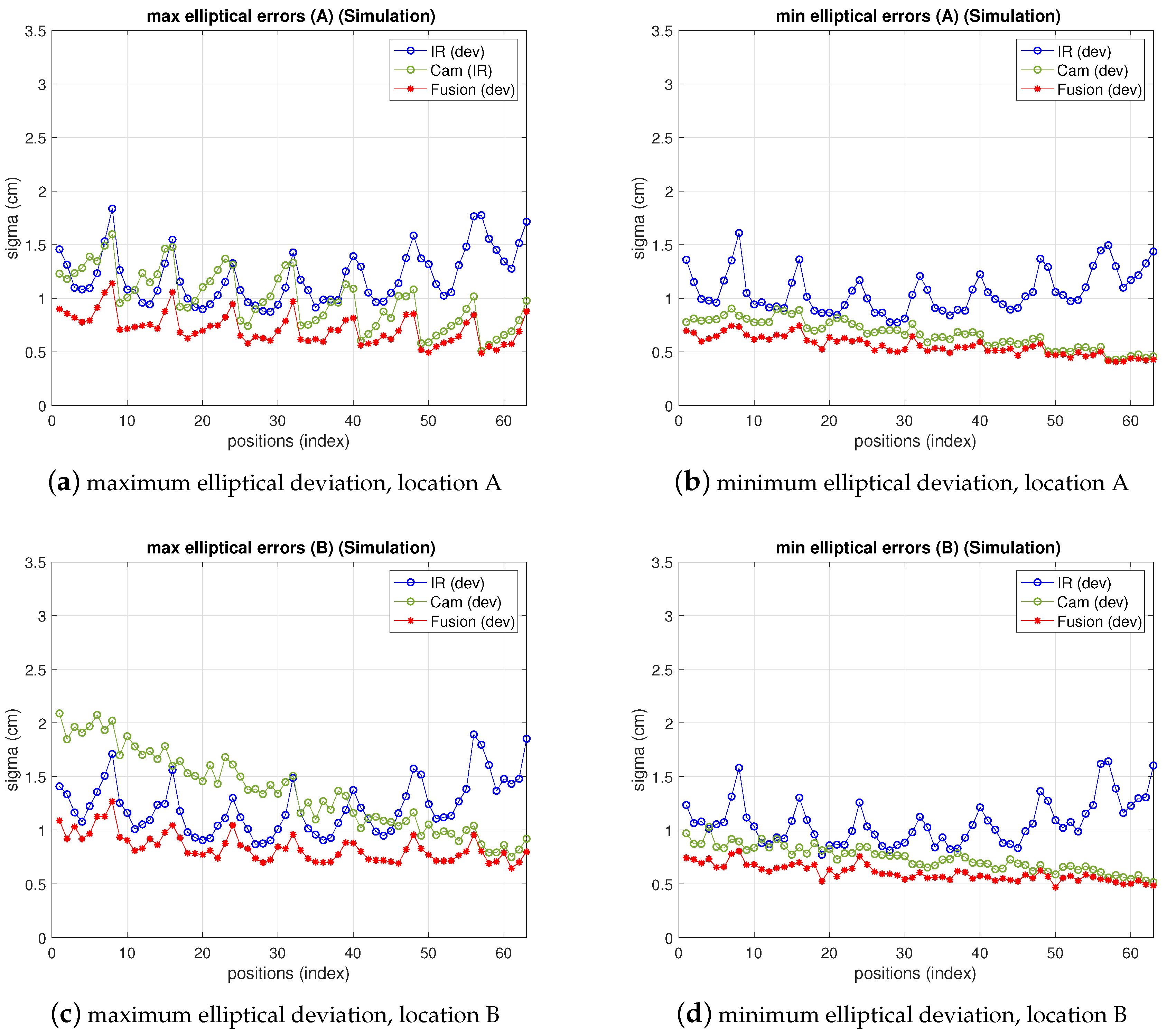

. If the position update velocity is high enough this is also a good fast real-time solution. The results of fusion simulations are depicted in

Figure 6. In this figure, the tests reproduce the conditions of the respective IR and camera simulations shown in the previous two sections. The standard deviations in the

axis, together with the maximum and minimum variances (

confidence ellipse axes) are displayed for the camera, IR and fusion results. As expected, the deviations delivered by the fusion estimate are lower than those of either single sensor. The more one of the deviations (IR or camera) increases, the closer the fusion variance gets to the lower one.
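The fusion step discussed in this section can be sketched for two 2D position estimates as follows. This is a minimal sketch, assuming independent Gaussian estimates with covariances held constant during the maximization, in which case the ML solution is the inverse-covariance-weighted mean. The function and variable names are hypothetical, and the numbers in the usage example are made up.

```python
# Minimal sketch of ML fusion of two independent 2D Gaussian estimates.
# Assumption: both covariances are treated as constants, so the fused
# estimate is the inverse-covariance-weighted mean of the two inputs.

def inv2(M):
    """Invert a 2x2 matrix."""
    (a, b), (c, d) = M
    det = a * d - b * c
    if det == 0:
        raise ValueError("covariance matrix is singular")
    return [[d / det, -b / det], [-c / det, a / det]]


def add2(A, B):
    """Add two 2x2 matrices."""
    return [[A[r][c] + B[r][c] for c in range(2)] for r in range(2)]


def matvec2(M, v):
    """Multiply a 2x2 matrix by a 2-vector."""
    return [M[0][0] * v[0] + M[0][1] * v[1],
            M[1][0] * v[0] + M[1][1] * v[1]]


def fuse(p_ir, cov_ir, p_cam, cov_cam):
    """Return (fused position, fused covariance) for the two estimates."""
    w_ir = inv2(cov_ir)    # information (inverse covariance) of the IR estimate
    w_cam = inv2(cov_cam)  # information of the camera estimate
    cov_f = inv2(add2(w_ir, w_cam))
    a = matvec2(w_ir, p_ir)
    b = matvec2(w_cam, p_cam)
    p_f = matvec2(cov_f, [a[0] + b[0], a[1] + b[1]])
    return p_f, cov_f


if __name__ == "__main__":
    # Made-up estimates in metres and covariances in square metres.
    p_f, cov_f = fuse([1.02, 2.01], [[4e-4, 0.0], [0.0, 9e-4]],
                      [0.99, 1.98], [[1e-3, 2e-4], [2e-4, 5e-4]])
    print("fused position:", p_f)
    print("fused covariance:", cov_f)
```

When one input covariance grows very large, the fused estimate and covariance tend to those of the other sensor, which mirrors the robustness behavior described for the simulations.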

7. Conclusions

We have successfully developed an indoor positioning system with fusion of IR sensors and cameras (5 IR sensors and one camera). The achievable precision is in the cm level (maximum of cm, cm on average, within 95% confidence ellipse) in localization areas of m, with IR sensors in a m cell and a camera in possible locations up to a distance of 7 m from the target. It has been tested with measurements in a real setup, validating both the system performance and also the proposed models for variance propagation from IR and camera observations to the resulting fused position. Model and measurement fusion results agree within a margin of cm ( cm for camera, 1 cm for infrared), allowing for the models to be used as a valuable positioning systems simulation tool. Regarding positioning precision itself, while camera and IR ones (95% confidence margin), may rise up to cm and cm respectively, fusion improves precision reducing the deviations to cm. On average, IR and camera present values of cm and cm; fusion lowers it to cm. This means approximately a 48% precision improvement on average (in the maximum deviations it rises to about 72%). An average improvement of almost 50% may be quite convenient in some applications. Moreover, as important as precision performance, the sensor fusion strategy provides the system with high robustness as, in case one sensor fails or its performance worsens, the fused position precision gets closer to the other one. The system also works under changing illumination. The camera performs satisfactorily with very low illumination levels and also with floor brightness (high artificial illumination conditions). Furthermore, IR and camera provide complementary behavior with respect to light conditions, as in dark conditions the camera might not see the scene but the IR system would perform correctly, while under high illumination levels the IR receivers could saturate but the camera would still capture the landmarks. 
The last two features add robustness and reliability to the system. The proposal shows promising prospects in terms of scalability to large real spaces, because the system offers wide coverage with a small number of low-cost devices (fewer than five IR anchors is also valid) and high precision, with the camera compensating for the decrease of IR precision beyond 5 m. Locating areas are expected to be notably enlarged without increasing the number of devices, although further tests are necessary for proper assessment. This could be attained by placing the camera at longer distances and by improving the IR precision (increasing the sensitive area, frequency and emitted power is feasible). From the low-level point of view, this will imply a challenging design effort to balance the distance between devices so as to keep cost as low as possible while still having acceptable coverage and precision (increasing the distance will reduce the SNR). Careful selection of new devices with higher working frequency (which also enhances precision) and wider field of view, without compromising the response time, is a key aspect of this low-level tradeoff. With respect to response time requirements (under real-time navigation conditions), more restrictive signal filtering (IR) and a resolution increase (camera) would increase SNR (precision) but would make the system slower. Considering all these aspects, the final system should also be complemented with odometry (which can likewise be integrated with a fusion approach). Finally, we have proposed a BLC with five receivers, but the minimum localization unit needs only three, although precision worsens. If the precision requirements are relaxed, the number of receivers could be lower (the fusion precision would not necessarily worsen, unless the camera precision worsens too).

From the theoretical point of view, the IR model for variance propagation is completely analytical and matches real results very closely at every target position in unbiased scenarios, i.e., mainly multipath-free (or MP-canceled) ones. The camera model is semi-empirical, the variance of observations being deduced from the measurements. A completely analytical model for the camera is not easy to define, as some stages (mainly the detection algorithm) are not accessible enough to link variance with position. Nevertheless, unlike the IR system, the determination of the pixelic variances, in order to obtain the observation covariance matrix of the model, is very easily achieved with one single picture burst of the whole scene (with IR, this would need a large number of measurements, taken successively at every position in the grid). Moreover, although this task has been carried out offline, under real navigation conditions it could easily be carried out online. In summary, a good tradeoff solution has been found for determining the variance of the camera observations, which represents the real behavior in the whole grid to within 1 cm. The camera bias is very position-dependent and not easy to model, but it has also been successfully integrated in the procedure. Regarding IR, as said, the AWGN assumption requires a multipath-free scenario, which requires cancelation techniques. Otherwise, multipath errors can grow up to the m level in unfriendly (though common) scenarios.

The next challenge is improving the camera model by going further in the analytical link between variance and target position. In addition, it will be important to tackle the problem from an estimator-based approach, addressing the approximations assumed here with respect to the position-dependent variances in the ML solution, and investigating the camera bias and IR multipath contributions in order to integrate them in the models. Finally, exploring sensor cooperation capabilities together with data fusion will be interesting in real applications.