3.1. Grid Graph

The depth image is converted into an undirected grid graph, with one node for each pixel in the foreground. The pixel at row $i$, column $j$ is connected to its four-neighbourhood in the image: the pixels at $(i-1, j)$, $(i+1, j)$, $(i, j-1)$ and $(i, j+1)$. Each connection is represented by an edge in the grid graph. For an undirected edge $e_{uv}$ between nodes $u$ and $v$, the weight is:

$$w_{uv} = \exp\left(-\beta\,(g_u - g_v)^2\right) \qquad (1)$$

where $g_u$ denotes the value at pixel $u$. This is the standard weighting function for RW [14]. In this case, $g_u$ is the depth at pixel $u$. The parameter $\beta$ determines the significance of the difference in depth values. The $g$ values are first normalized across the image, as suggested in [14].
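The grid construction and the weighting in Equation (1) can be sketched with NumPy as below. This is a minimal illustration under our own assumptions, not the authors' code. The function name `grid_graph_edges` is hypothetical. Depths are min-max normalised over the foreground as one possible reading of "normalized across the image". The edge list is built from right and down neighbours only, so each undirected four-neighbourhood edge appears exactly once.

```python
import numpy as np

def grid_graph_edges(depth, mask, beta):
    """Four-neighbourhood grid graph over foreground pixels with RW weights.

    depth: 2-D array of depths; mask: boolean foreground mask.
    Returns (index, edges, weights): index maps each pixel to its node id
    (-1 for background), edges is an (E, 2) array of node-id pairs, and
    weights[e] = exp(-beta * (g_u - g_v)**2) as in Equation (1).
    """
    g = depth.astype(float)
    # Assumed normalisation: min-max scaling using the foreground depths.
    fg = g[mask]
    span = fg.max() - fg.min()
    g = (g - fg.min()) / (span if span > 0 else 1.0)

    index = -np.ones(depth.shape, dtype=int)
    index[mask] = np.arange(int(mask.sum()))

    edges, weights = [], []
    # Right (0, 1) and down (1, 0) offsets list every undirected edge exactly once.
    for di, dj in ((0, 1), (1, 0)):
        h, w = mask.shape[0] - di, mask.shape[1] - dj
        both = mask[:h, :w] & mask[di:, dj:]  # both endpoints in the foreground
        ii, jj = np.nonzero(both)
        edges.append(np.stack([index[ii, jj], index[ii + di, jj + dj]], axis=1))
        weights.append(np.exp(-beta * (g[ii, jj] - g[ii + di, jj + dj]) ** 2))
    return index, np.concatenate(edges), np.concatenate(weights)
```

Edges between pixels of equal depth get weight one. A depth jump gives a weight close to zero, and this near-zero weight is the "barrier" the next section refers to.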

3.2. Arm Probability Matrix

We first aim to segment the two arm regions, as we assume these are the regions that are potentially occluding other parts of the body.

As described in

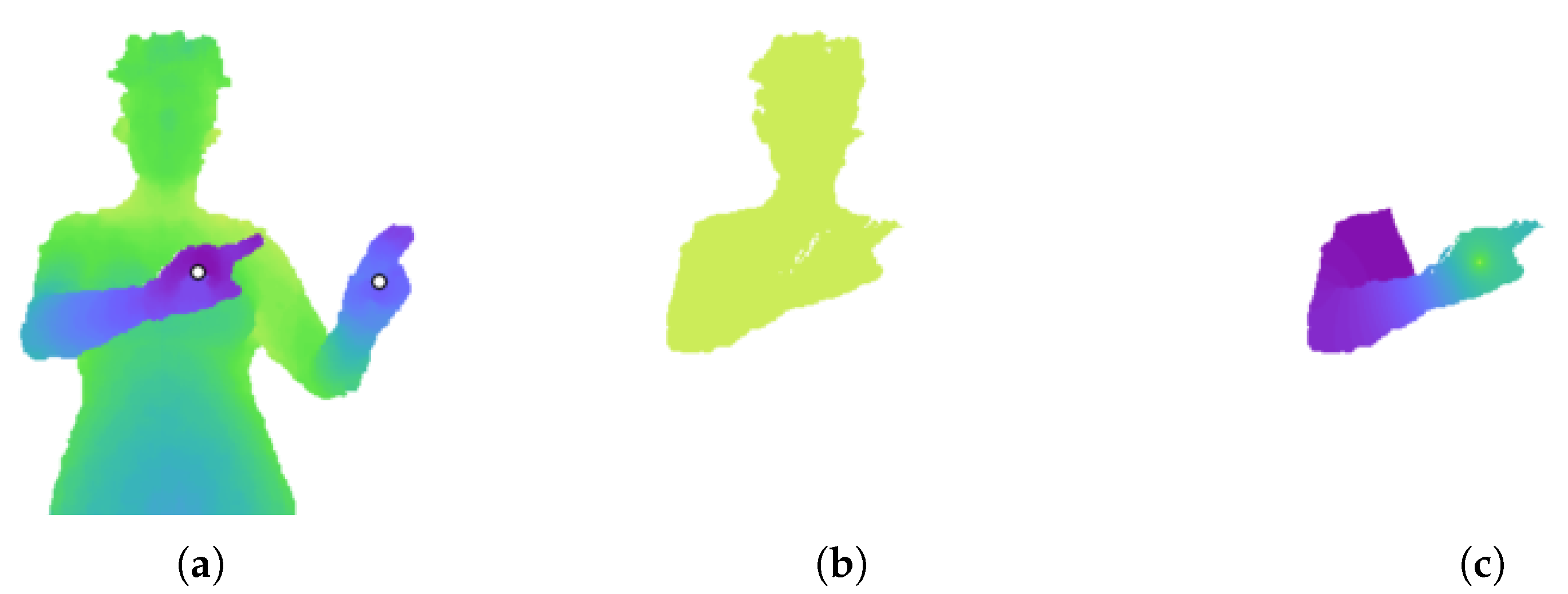

Section 2.2.2, the RW algorithm produces a probability matrix for each seed label. The algorithm is first run to obtain a probability matrix for each arm. The two hand pixels are used as seeds. Running RW using these two seed pixels, labelled 1 and 2, results in two probability matrices. However, if an arm is in front of the torso, the probability values can be skewed to be close to either zero or one. This can be seen in

Figure 1. The depth difference of the right arm acts as a barrier to a random walker. As a result, even pixels on the head are given a probability of nearly one for belonging to the right hand.
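As a reference point, the RW probabilities can be computed with the standard combinatorial Dirichlet formulation. In this formulation, the unseeded nodes solve a linear system in the weighted graph Laplacian, and seeded nodes are fixed to 1 for their own label and 0 otherwise. The sketch below is a minimal dense version with a hypothetical function name. `np.linalg.solve` is only practical for small graphs, so a sparse solver would be needed for real depth images. The system is singular if some connected component contains no seed.

```python
import numpy as np

def random_walker(n_nodes, edges, weights, seeds):
    """Random walker probabilities via the combinatorial Dirichlet problem.

    seeds: dict mapping node id -> label index (0..K-1).
    Returns an (n_nodes, K) array: row u holds the probability that a walker
    starting at u first reaches a seed of each label.
    """
    L = np.zeros((n_nodes, n_nodes))
    u, v = edges[:, 0], edges[:, 1]
    # Weighted graph Laplacian L = D - W (dense, so small graphs only).
    np.add.at(L, (u, v), -weights)
    np.add.at(L, (v, u), -weights)
    L[np.diag_indices(n_nodes)] = -L.sum(axis=1)

    labels = sorted(set(seeds.values()))
    marked = np.array(sorted(seeds))
    free = np.setdiff1d(np.arange(n_nodes), marked)
    # Boundary conditions: 1 for the seed's own label, 0 for the others.
    M = np.zeros((len(marked), len(labels)))
    for r, node in enumerate(marked):
        M[r, labels.index(seeds[node])] = 1.0

    probs = np.zeros((n_nodes, len(labels)))
    probs[marked] = M
    # Solve L_U X = -B M for the unseeded nodes.
    L_U = L[np.ix_(free, free)]
    B = L[np.ix_(free, marked)]
    probs[free] = np.linalg.solve(L_U, -B @ M)
    return probs
```

With the two hand pixels as seeds, e.g. `seeds = {right_hand_node: 0, left_hand_node: 1}` (hypothetical names), column 0 scattered back through the pixel index gives the probability matrix for the right hand. Column 1 gives the matrix for the left hand.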

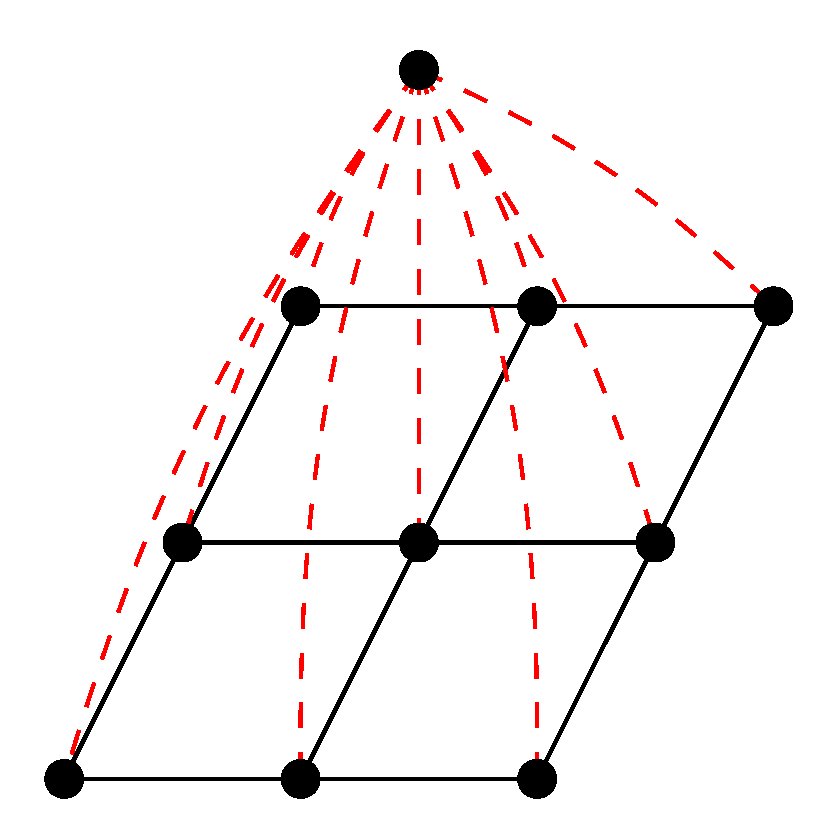

To increase the variance in probability values, an extra ‘dummy’ node is added to the graph. This node is connected to every node in the grid graph by an edge with a small weight $w$, as shown in Figure 2. The value of $w$ was determined through experiment. This weight governs the probability that a random walker will cross the edge and land on the dummy node. The RW algorithm is run on this new graph structure using three seed nodes: the two hand nodes and the dummy node, labelled 1 to 3. The output probability values for the hand nodes now have more variance, as is evident in Figure 1. The new matrix $P$ for hand $X$ is used to segment arm $X$ (left or right).
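Adding the dummy node changes only the graph, not the solver. The self-contained sketch below appends a node with id `n_nodes` and joins it to every grid node with weight `w_dummy`. It then seeds that node with a third label alongside the two hands. The function name is hypothetical. Because the experimentally chosen value of $w$ is not reproduced here, `w_dummy` is left as a parameter.

```python
import numpy as np

def rw_with_dummy(n_nodes, edges, weights, hand_a, hand_b, w_dummy):
    """Random walker with an extra 'dummy' seed node joined to every node.

    Returns an (n_nodes, 3) array of probabilities for the labels
    (hand_a, hand_b, dummy); the dummy node itself is dropped from the output.
    """
    d = n_nodes  # id of the dummy node
    extra = np.stack([np.arange(n_nodes), np.full(n_nodes, d)], axis=1)
    all_edges = np.concatenate([edges, extra])
    all_w = np.concatenate([weights, np.full(n_nodes, w_dummy)])

    n = n_nodes + 1
    L = np.zeros((n, n))
    # Dense weighted Laplacian of the augmented graph.
    np.add.at(L, (all_edges[:, 0], all_edges[:, 1]), -all_w)
    np.add.at(L, (all_edges[:, 1], all_edges[:, 0]), -all_w)
    L[np.diag_indices(n)] = -L.sum(axis=1)

    marked = np.array([hand_a, hand_b, d])
    free = np.setdiff1d(np.arange(n), marked)
    M = np.eye(3)  # seed k has probability 1 for label k
    probs = np.zeros((n, 3))
    probs[marked] = M
    probs[free] = np.linalg.solve(L[np.ix_(free, free)],
                                  -L[np.ix_(free, marked)] @ M)
    return probs[:n_nodes]
```

Because every node now leaks a little probability to the dummy label, hand probabilities decay with distance even where no depth barrier exists. This decay is the extra variance the text describes.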

3.3. Arm Segmentation

If the arm is occluding the body, there will be a significant difference in depth between pixels on the arm and neighbouring pixels on the body. This will also be evident in the probability matrix $P$ found in Section 3.2.

The probability values of $P$ are clustered using the mean shift algorithm [16] with a Gaussian kernel. For efficiency, only a small sample of probability values is clustered. After sorting the probabilities, $n$ uniformly spaced values are taken from the sorted list and clustered with mean shift, resulting in $k$ clusters. Mean shift determines the number of clusters automatically, while the value of $n$ can be tuned by the user for their specific dataset.

A new image $S$ is the result of segmenting the probability matrix with mean shift: each pixel in the foreground is assigned to the cluster with the closest centroid value.

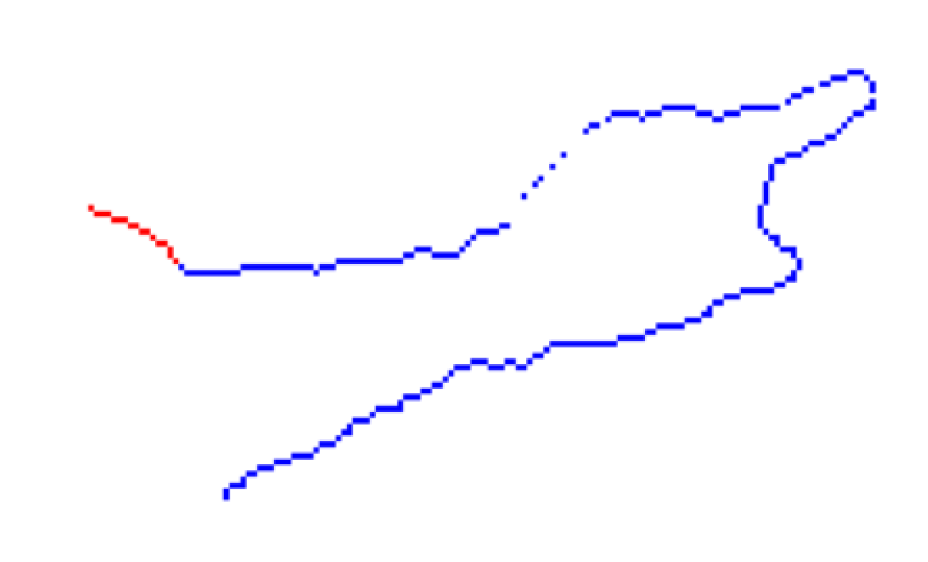

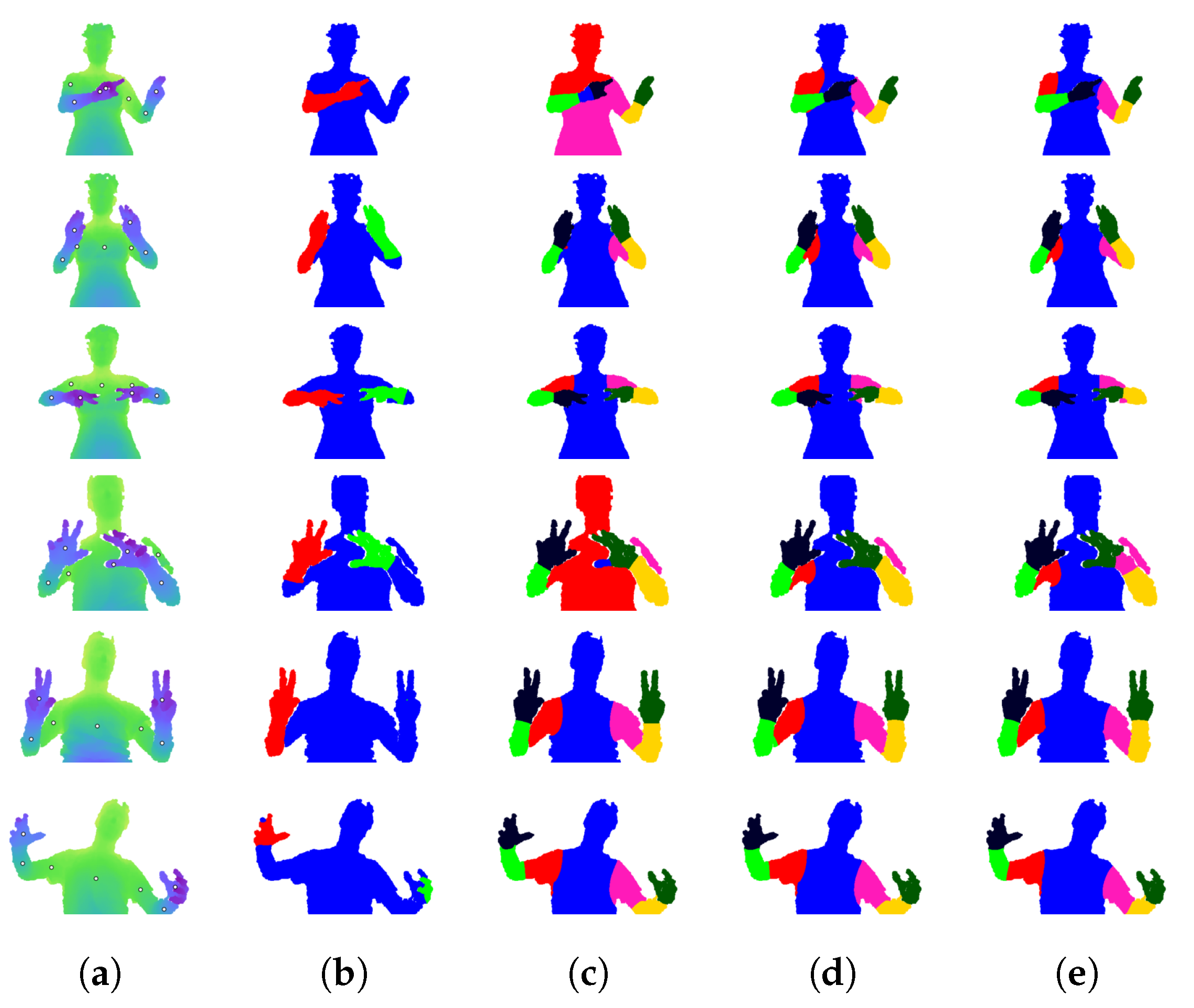

Figure 3 shows an example of this segmented image.
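The sampling, clustering and assignment steps can be sketched in NumPy as follows. This is a minimal sketch under assumptions of ours. It uses a fixed Gaussian bandwidth, which the section does not specify. Converged points within a tenth of the bandwidth of each other are merged into one cluster. The helper names are hypothetical. Centroids are returned in ascending order, and background pixels get the label -1.

```python
import numpy as np

def mean_shift_1d(samples, bandwidth, tol=1e-6, max_iter=500):
    """Gaussian-kernel mean shift on 1-D data; returns the sorted cluster modes."""
    x = np.asarray(samples, dtype=float)
    modes = x.copy()
    for _ in range(max_iter):
        # Gaussian weights between every current point and every sample.
        k = np.exp(-0.5 * ((modes[:, None] - x[None, :]) / bandwidth) ** 2)
        shifted = (k @ x) / k.sum(axis=1)
        done = np.max(np.abs(shifted - modes)) < tol
        modes = shifted
        if done:
            break
    # Merge points that converged to (numerically) the same mode.
    centroids = []
    for m in np.sort(modes):
        if not centroids or m - centroids[-1] > bandwidth / 10:
            centroids.append(m)
    return np.array(centroids)

def segment_probabilities(P, mask, n, bandwidth):
    """Cluster n evenly spaced sorted samples of P, then label each foreground pixel."""
    values = np.sort(P[mask])
    idx = np.linspace(0, len(values) - 1, n).round().astype(int)
    centroids = mean_shift_1d(values[idx], bandwidth)
    labels = -np.ones(P.shape, dtype=int)  # -1 marks background
    # Each foreground pixel takes the cluster whose centroid is closest.
    labels[mask] = np.argmin(np.abs(P[mask][:, None] - centroids[None, :]), axis=1)
    return labels, centroids
```

Clustering only the $n$ samples keeps the quadratic mean-shift step cheap. The final nearest-centroid assignment is linear in the number of pixels.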

An iterative process is used to find the arm segment that minimizes a cost function, as explained below. Beginning with the segment corresponding to the highest cluster (i.e., the segment containing the hand pixel), segments are added in order of descending probability. At each iteration $i$, the candidate arm segment $B_i$ is the union of the segments for clusters one to $i$.

The image gradient captures information about the local change in pixel values [17]. It returns a two-dimensional vector at each pixel location in an image. $G = |\nabla P|$ is the magnitude of the image gradient of the probability matrix $P$ (with all background pixels of $P$ set to null). An example is shown in Figure 3. The highest values in $G$ occur at sharp differences in pixel probability, corresponding to the depth difference caused by the arm occluding the body. The cost function for the arm segment uses this gradient magnitude.
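The gradient magnitude with a null background can be approximated with `np.gradient`. This function uses central differences in the interior and one-sided differences at the image border. Representing "null" as NaN is our assumption, and the function name is hypothetical. A side effect is that any foreground pixel whose finite difference touches the background also becomes NaN, so later averages must skip NaN values.

```python
import numpy as np

def gradient_magnitude(P, mask):
    """Magnitude of the image gradient of P, with background set to NaN ("null")."""
    Pn = np.where(mask, P.astype(float), np.nan)
    # np.gradient returns the derivative along rows (axis 0) and columns (axis 1);
    # any difference that involves a NaN background pixel is itself NaN.
    gy, gx = np.gradient(Pn)
    return np.hypot(gx, gy)
```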

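The binary arm segment $B_i$ is eroded with the structuring element for the four-neighbourhood. A new binary image $\tilde{B}_i$ is the union of the eroded $B_i$ and the erosion of its complement image $B_i^c$, i.e., all image pixels not in $B_i$, including background pixels:

$$\tilde{B}_i = (B_i \ominus E_4) \cup (B_i^c \ominus E_4)$$

where $\ominus$ denotes erosion and $E_4$ is the four-neighbourhood structuring element. The cost of $B_i$ is the mean of the gradient values inside $\tilde{B}_i$:

$$C(B_i) = \frac{1}{|\tilde{B}_i|} \sum_{p \in \tilde{B}_i} G(p) \qquad (4)$$

where $G(p)$ is the gradient magnitude $|\nabla P|$ at pixel $p$.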

In essence, if $B_i$ is too small, the strong gradient line at the arm boundary falls inside the eroded complement, so $\tilde{B}_i$ cuts across it; if $B_i$ is too large, $B_i$ itself cuts across a strong gradient line. This is evident in Figure 3. The segments are eroded so that the gradient values along their own perimeters are excluded from the cost.
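A sketch of $\tilde{B}_i$ and the cost in Equation (4) follows. The four-neighbourhood erosion is written directly in NumPy rather than taken from an image-processing library. Two choices are our assumptions. Pixels outside the image count as belonging to neither set, so the image border is eroded away. NaN gradient values from the background are skipped via `np.nanmean`. The helper names are hypothetical.

```python
import numpy as np

def erode4(B):
    """Binary erosion with the 4-neighbourhood (cross) structuring element.

    Pixels outside the image are treated as False, so the image border erodes.
    """
    p = np.pad(B, 1, constant_values=False)
    return p[1:-1, 1:-1] & p[:-2, 1:-1] & p[2:, 1:-1] & p[1:-1, :-2] & p[1:-1, 2:]

def arm_cost(B, G):
    """Mean gradient magnitude inside B~ = erode(B) | erode(~B), ignoring NaN."""
    B_tilde = erode4(B) | erode4(~B)
    return np.nanmean(G[B_tilde])
```

For instance, suppose a vertical gradient line runs down an image. A segment whose edge lies exactly on that line scores a lower cost than a narrower segment. Only in the first case does erosion strip the line from both $B_i$ and its complement.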

Algorithm 1 shows the pseudocode for the iterative process that segments the arm. It selects the arm segment that minimizes the cost defined in Equation (4). After the two arm segments have been found, some pixels may belong to both segments. Each of these pixels is assigned to the side with the higher RW probability.
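The overlap rule can be expressed with a few boolean operations in NumPy. In this hypothetical helper, ties (equal probabilities) go to the right arm. That tie-breaking is an arbitrary choice of ours, since the text does not specify it.

```python
import numpy as np

def resolve_overlap(right, left, P_right, P_left):
    """Give pixels claimed by both arm segments to the arm with higher RW probability."""
    both = right & left
    to_right = both & (P_right >= P_left)  # ties go to the right arm (assumption)
    right = (right & ~both) | to_right
    left = (left & ~both) | (both & ~to_right)
    return right, left
```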

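Algorithm 1 Arm segmentation.

- 1: Sort values of probability matrix $P$
- 2: Select $n$ evenly-spaced samples from sorted list
- 3: $S \leftarrow$ image segmented by applying mean shift to probability samples
- 4: $G \leftarrow$ magnitude of the image gradient of $P$
- 5: function ArmCost($B$, $G$)
- 6: $B_e \leftarrow$ erosion of binary image $B$
- 7: $B_c \leftarrow$ erosion of complement image of $B$
- 8: $\tilde{B} \leftarrow B_e \cup B_c$
- 9: return mean of $G$ over $\tilde{B}$
- 10: end function
- 11: procedure SegmentArm($S$, $G$)
- 12: $c_{\min} \leftarrow \infty$
- 13: for $i$ in $k$ clusters do
- 14: $B_i \leftarrow$ union of the segments in $S$ for clusters 1 to $i$
- 15: $c \leftarrow$ ArmCost($B_i$, $G$)
- 16: if $c < c_{\min}$ then
- 17: $c_{\min} \leftarrow c$
- 18: $B^{*} \leftarrow B_i$
- 19: end if
- 20: end for
- 21: return $B^{*}$
- 22: end procedure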
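Putting Algorithm 1 together, a minimal NumPy sketch of SegmentArm follows. It takes the per-pixel cluster labels from the mean-shift step (-1 for background), the cluster centroid values and the gradient magnitude $G$. It then grows $B_i$ one cluster at a time in descending centroid order. The helper names are hypothetical. The erosion treats pixels outside the image as outside both sets, and an empty $\tilde{B}$ is given infinite cost. Both are conventions of ours.

```python
import numpy as np

def _erode4(B):
    # Binary erosion with the 4-neighbourhood; outside the image counts as False.
    p = np.pad(B, 1, constant_values=False)
    return p[1:-1, 1:-1] & p[:-2, 1:-1] & p[2:, 1:-1] & p[1:-1, :-2] & p[1:-1, 2:]

def arm_cost(B, G):
    # ArmCost: mean of G over erode(B) | erode(complement of B), skipping NaN.
    vals = G[_erode4(B) | _erode4(~B)]
    vals = vals[~np.isnan(vals)]
    return vals.mean() if vals.size else np.inf

def segment_arm(labels, centroids, G):
    """SegmentArm: grow the arm from the highest-probability cluster downwards.

    labels: per-pixel cluster index (-1 for background); centroids: the value
    of each cluster. Returns the candidate B_i with the lowest cost.
    """
    order = np.argsort(centroids)[::-1]  # highest cluster (hand side) first
    best_cost, best = np.inf, None
    B = np.zeros(labels.shape, dtype=bool)
    for c in order:
        B |= labels == c  # B_i = union of the first i clusters
        cost = arm_cost(B, G)
        if cost < best_cost:
            best_cost, best = cost, B.copy()
    return best
```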

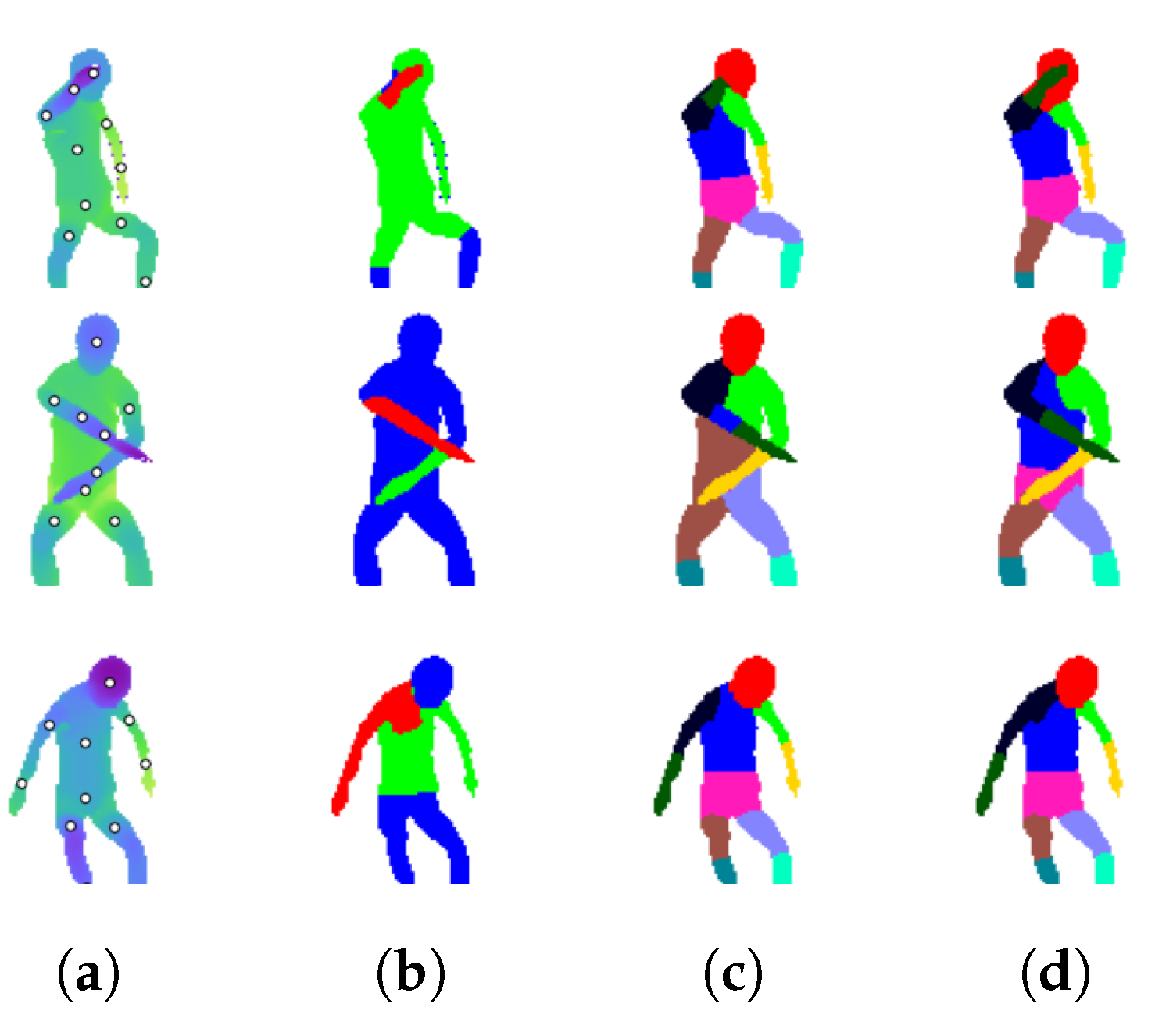

3.4. Validation of Arm Segments

Once the arms have been segmented, they are evaluated for evidence that they are occluding the body. An occluding arm segment is referred to as valid. In the case that an arm is not occluding the body, there can be an insignificant depth difference between the body and the arm.

$A$ is the arm segment determined in Section 3.3, and $R = F \setminus A$ is the rest of the foreground $F$, including the other arm. The inner set $I$ is the set of pixels in $A$ that are in the four-neighbourhood of $R$; it is found by dilating $R$ and taking the intersection with $A$. Similarly, the outer set $O$ is the set of pixels in $R$ that are in the four-neighbourhood of $A$.

Figure 4 shows the inner and outer pixels for the right arm segment.

If the arm is occluding the body, there should be a significant difference between the depths of pixels in $I$ and $O$, and the outer depths should be greater than the inner depths. There should also be a significant probability difference. For $X \in \{I, O\}$, let $D_X$ be the set of depths of the pixels in $X$ and $P_X$ the set of their probabilities. An arm segment is valid only if it is whole (i.e., it has only one connected component) and it meets both of the following two conditions:

In the case shown in Figure 3, the right arm is valid and the left is not.
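The inner and outer pixel sets and the "whole" test can be sketched as follows. Dilation uses the four-neighbourhood as in the text. Using 4-connectivity for the connected-component check is our assumption, and the helper names are hypothetical. The two validity conditions themselves are not reproduced here. The sketch therefore stops at the masks from which the depth and probability sets of the inner and outer pixels would be read, e.g. `depth[inner]` and `depth[outer]`.

```python
import numpy as np
from collections import deque

def dilate4(X):
    """Binary dilation with the 4-neighbourhood (cross) structuring element."""
    p = np.pad(X, 1, constant_values=False)
    return p[1:-1, 1:-1] | p[:-2, 1:-1] | p[2:, 1:-1] | p[1:-1, :-2] | p[1:-1, 2:]

def boundary_sets(arm, foreground):
    """Inner pixels (in the arm, next to the rest) and outer pixels (in the rest, next to the arm)."""
    rest = foreground & ~arm
    inner = dilate4(rest) & arm
    outer = dilate4(arm) & rest
    return inner, outer

def is_whole(arm):
    """True if the arm segment is a single 4-connected component (breadth-first search)."""
    pixels = [(int(i), int(j)) for i, j in zip(*np.nonzero(arm))]
    if not pixels:
        return False
    seen = {pixels[0]}
    queue = deque([pixels[0]])
    while queue:
        i, j = queue.popleft()
        for ni, nj in ((i - 1, j), (i + 1, j), (i, j - 1), (i, j + 1)):
            inside = 0 <= ni < arm.shape[0] and 0 <= nj < arm.shape[1]
            if inside and arm[ni, nj] and (ni, nj) not in seen:
                seen.add((ni, nj))
                queue.append((ni, nj))
    return len(seen) == len(pixels)
```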

3.5. Layered Grid Graph

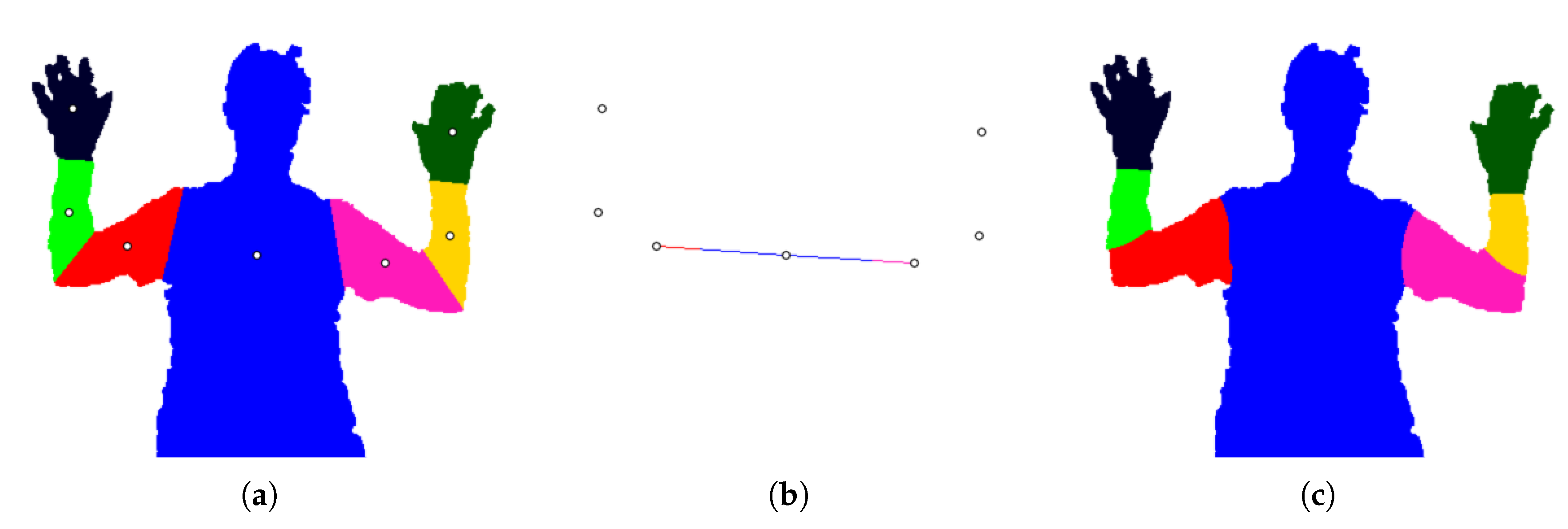

After the arms have been segmented, a new grid graph $G$ is created with one to three ‘layers’: a base layer $G_B$, a right arm layer $G_R$ and a left arm layer $G_L$. Each layer is a distinct set of nodes. A layer is created for an arm only if the segment was found to be valid.

The purpose of this layered graph is to emulate a body with the arms outstretched, rather than occluding the mid-region. The base layer is a grid graph with one node for every foreground pixel, including the pixels that are in the right or left arm segments. A pixel inside the binary arm segment $A_X$, for $X \in \{R, L\}$, is represented by a node in $G_B$ and a node in $G_X$.

When all three layers are constructed, the graph $G$ has $N$ nodes:

$$N = N_F + N_R + N_L$$

where $N_F$ is the number of foreground pixels, $N_R$ is the number of pixels in the right arm segment $A_R$ and $N_L$ is the number of pixels in the left arm segment $A_L$.
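One simple way to number the nodes of the layered graph is to assign ids to the base layer first and then to each valid arm layer in turn. The total is then the number of foreground pixels plus the pixel count of each valid arm segment. The helper below is hypothetical, and the layer ordering is our choice.

```python
import numpy as np

def layer_indices(foreground, arm_segments):
    """Assign node ids for a layered grid graph.

    foreground: boolean mask of F; arm_segments: list of boolean masks for
    the valid arm segments (zero, one or two of them). Returns one id image
    per layer (-1 where the layer has no node) and the total node count.
    """
    layers = [foreground] + list(arm_segments)
    ids, offset = [], 0
    for mask in layers:
        idx = -np.ones(mask.shape, dtype=int)
        idx[mask] = offset + np.arange(int(mask.sum()))  # consecutive ids per layer
        ids.append(idx)
        offset += int(mask.sum())
    return ids, offset
```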

In the regions of the arms, nodes in $G_B$ are given interpolated depth values, which are used to compute edge weights. This makes a segmentation algorithm unaffected by the sharp depth difference caused by occluding arms. To interpolate the depth values, every background (i.e., non-human) pixel is given the same depth, which is the median of all foreground depths. Then, the arm regions of the base layer are filled with inward interpolation. The edges of $G$ are weighted using Equation (1).
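The text does not define "inward interpolation" precisely, so the sketch below uses one simple stand-in, an onion-peel scheme. After background pixels take the median foreground depth, arm pixels are filled ring by ring from the border of the arm region inwards. Each pixel takes the mean of its already-known four-neighbours. The function name and the scheme are our assumptions, not the authors' method.

```python
import numpy as np

def interpolate_base_depths(depth, foreground, arms):
    """Fill the arm regions of the base layer by peeling inwards from their border.

    Background pixels first take the median foreground depth; each arm pixel
    then takes the mean of its known 4-neighbours, one ring at a time.
    """
    d = depth.astype(float).copy()
    d[~foreground] = np.median(depth[foreground])
    unknown = arms.copy()
    d[unknown] = np.nan
    while unknown.any():
        p = np.pad(d, 1, constant_values=np.nan)
        neigh = np.stack([p[:-2, 1:-1], p[2:, 1:-1], p[1:-1, :-2], p[1:-1, 2:]])
        known = ~np.isnan(neigh)
        count = known.sum(axis=0)
        ring = unknown & (count > 0)  # unknown pixels touching known ones
        if not ring.any():
            break  # remaining pixels cannot be reached from any known depth
        total = np.where(known, neigh, 0.0).sum(axis=0)
        d[ring] = total[ring] / count[ring]
        unknown &= ~ring
    return d
```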

3.6. Connecting Graph Layers

When the graph layers $G_B$, $G_R$ and $G_L$ are first constructed, there are no edges between layers. A set of nodes is selected to connect $G_B$ to $G_R$, and another set to connect $G_B$ to $G_L$.

For $X \in \{R, L\}$, $V_X$ is the set of gradient values on the inner pixels $I_X$ of arm segment $A_X$ (from Equation 6). Similar to the clustering in Section 3.3, the values of $V_X$ are clustered with mean shift. Along the inner pixels of the arm segment, the gradient is lowest where the arm connects to the body. $C_X$ is the set of pixels in $I_X$ corresponding to the lowest-value cluster found by mean shift. These connecting pixels are shown in Figure 5.

Each pixel in $C_X$ has two nodes associated with it: node $u$ in the base graph $G_B$ and node $v$ in the arm graph $G_X$. An edge $e_{uv}$ with unit weight is inserted between them. This is repeated for each pixel in $C_X$.
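The following sketch selects the connecting pixels and builds the unit-weight inter-layer edges. It repeats a plain 1-D Gaussian mean shift so that the block is self-contained. The bandwidth, the fixed iteration count and the rule for deciding which points belong to the lowest mode are our assumptions, as are the helper names. Inner pixels whose gradient is NaN (next to the background) are skipped.

```python
import numpy as np

def _mean_shift_modes(x, bandwidth, iters=200):
    # Plain 1-D Gaussian mean shift; returns the converged position of each point.
    m = x.astype(float).copy()
    for _ in range(iters):
        k = np.exp(-0.5 * ((m[:, None] - x[None, :]) / bandwidth) ** 2)
        m = (k @ x) / k.sum(axis=1)
    return m

def connecting_edges(inner, G, base_ids, arm_ids, bandwidth):
    """Unit-weight edges between base and arm nodes at the low-gradient inner pixels."""
    ii, jj = np.nonzero(inner)
    g = G[ii, jj]
    ok = ~np.isnan(g)  # skip gradient values touched by null background pixels
    ii, jj, g = ii[ok], jj[ok], g[ok]
    modes = _mean_shift_modes(g, bandwidth)
    # Points whose mode coincides with the smallest mode form the lowest cluster.
    low = modes - modes.min() < bandwidth / 10
    u = base_ids[ii[low], jj[low]]
    v = arm_ids[ii[low], jj[low]]
    return np.stack([u, v], axis=1), np.ones(int(low.sum()))
```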

3.8. Seed Nodes and Labels

In order to run an interactive segmentation algorithm on the graph, a subset of nodes must be specified as seeds. The provided body part positions are used as seed nodes, with labels one to $m$ for $m$ body parts.

More seed nodes can be added by drawing lines between adjacent body parts. Bresenham’s algorithm [18] is used to find the pixels that constitute a line $L$ between two image positions $A$ and $B$. This line is then split into $L_A$ and $L_B$, i.e., pixels in $L_A$ and $L_B$ are given labels $A$ and $B$, respectively. The user specifies a value $r$, which is the ratio of the length of $L_A$ to the total length of $L$. This allows the user to alter the size of the final part segment.
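Bresenham's algorithm and the split by $r$ can be sketched in plain Python. The line routine is the standard integer formulation covering all octants. Measuring "length" as a pixel count and rounding $r$ times that count are our assumptions, and the function names are hypothetical.

```python
def bresenham(a, b):
    """Integer Bresenham line from pixel a=(row, col) to b, including both ends."""
    (r0, c0), (r1, c1) = a, b
    dr, dc = abs(r1 - r0), -abs(c1 - c0)
    sr = 1 if r0 < r1 else -1
    sc = 1 if c0 < c1 else -1
    err = dr + dc  # combined error term for both axes
    pixels = []
    while True:
        pixels.append((r0, c0))
        if (r0, c0) == (r1, c1):
            return pixels
        e2 = 2 * err
        if e2 >= dc:  # step along rows
            err += dc
            r0 += sr
        if e2 <= dr:  # step along columns
            err += dr
            c0 += sc

def split_line_seeds(a, b, label_a, label_b, r):
    """Label the first fraction r of the line's pixels label_a and the rest label_b."""
    line = bresenham(a, b)
    k = round(r * len(line))
    return {p: (label_a if i < k else label_b) for i, p in enumerate(line)}
```

For example, with $r = 0.4$ on a five-pixel line, the two pixels nearest $A$ become seeds for part $A$ and the remaining three become seeds for part $B$.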

Each seed pixel is assigned to a node in the layered graph. The layers for each body part position have been found by the process described in Section 3.7. Each seed pixel with label $i$ is assigned to the layer of part $i$. Thus, seed pixels on the image are converted to seed nodes in the layered graph.
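Given one node-id image per layer, with -1 marking pixels that have no node, converting seed pixels to seed nodes is a lookup. In this hypothetical helper, `part_layer` maps each part label to its layer index, which the text obtains via Section 3.7. Silently skipping seed pixels that have no node in their layer is our assumption.

```python
def seeds_to_nodes(seed_pixels, part_layer, layer_ids):
    """Map labelled seed pixels to node ids in the layered graph.

    seed_pixels: dict (row, col) -> part label; part_layer: dict part label ->
    layer index (0 = base); layer_ids: per-layer id grids, -1 where no node exists.
    """
    seeds = {}
    for (r, c), label in seed_pixels.items():
        node = int(layer_ids[part_layer[label]][r][c])
        if node >= 0:  # skip pixels with no node in that layer (assumption)
            seeds[node] = label
    return seeds
```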