1. Introduction

With the advent of the IoT (Internet of Things) era, sensor data is rapidly becoming affordable and increasingly influential in the energy field [1]. In particular, energy consumption data has become an integral part of solving a variety of energy problems. For instance, energy consumption data is being used for load prediction [2], population segmentation [3], real-time dashboards [4,5], individual feedback [6], occupancy detection [7], and NILM (Non-Intrusive Load Monitoring) [8]. For the data to be effective in such applications, high data quality is required, where quality can be defined as the resolution in the time and space dimensions [1]. Data resolution directly affects the amount of information that is captured in the data, and therefore determines the level of analysis that can be performed [9]. Collecting high-resolution data, however, implies a higher cost in terms of sensors and data management systems. This results in a tradeoff between data quality and cost, and the optimal resolution is known to depend on the task and the final goal [10]. To study the impact of high-quality data on well-known energy problems, we consider the task of energy saving, which has been heavily investigated in recent decades. Most existing studies have focused only on the amount of power consumption, but we are more concerned with the data quality and its impact. Additionally, some studies have incorporated occupancy data collected from other types of sensors [11,12], but we consider only electrical power consumption data in order to keep the study focused on data quality. (In fact, we tried installing a few different types of occupancy detection sensors, but encountered accuracy and/or privacy issues in the campus environment. To focus on the main topic of this work, occupancy data was excluded from the study.)

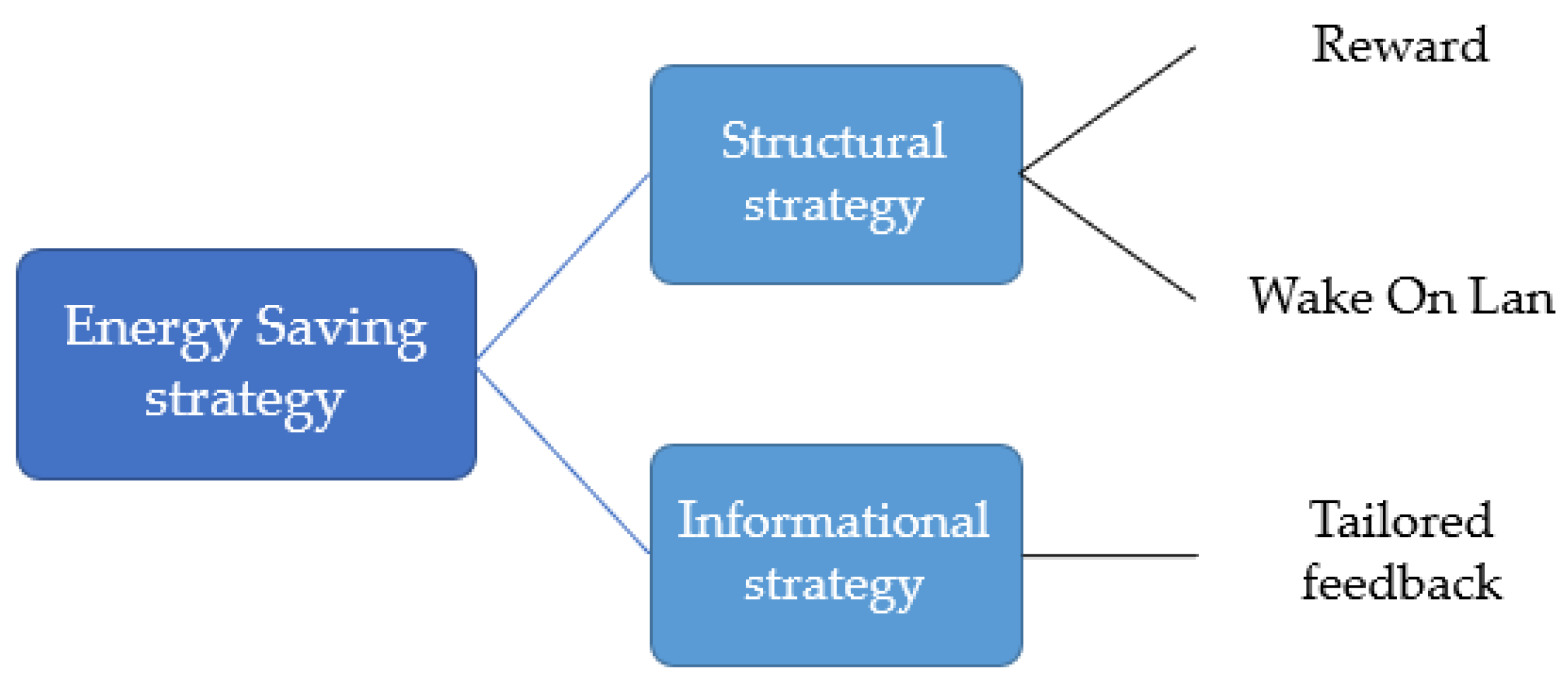

Energy saving strategies for encouraging pro-environmental behavior can be divided into two broad categories: structural strategies and informational strategies [13]. According to [13], structural strategies are aimed at making changes in the circumstances under which behavioral choices are made (e.g., improvement of home insulation, rewards, etc.). Typically, a structural change requires a financial investment. Informational strategies are aimed at changing perceptions, motivations, knowledge, and norms, without changing the external context in which choices are made (e.g., periodic emails with recommended behavior changes, consulting, etc.). In general, combining both strategies is most effective, because there is often more than one barrier inhibiting users from acting pro-environmentally [13,14]. In this study, we utilize data-driven approaches and combine structural and informational strategies. As structural strategies, we provide a reward and use a low-cost system that encourages users to turn off their computers (Wake-on-LAN; details in Section 4). For informational strategies, data-driven analysis is employed to provide highly tailored feedback, which is known to be effective [15,16,17,18]. The overall interventions for this study are summarized in Figure 1.

Our experiment was performed in a university building. The importance of energy saving in the residential sector has been well recognized, and there have been numerous experiments and studies using interventions such as feedback, reward, and consulting [19,20]. The commercial sector, including university buildings, is also important, and the sector is continuing to grow. For instance, the world’s commercial sector, including education, government, private and public organizations, is expected to be the fastest-growing demand sector with an average energy consumption growth of 1.6% per year between 2012 and 2040 [21], and the service sector accounts for 30% of the total electricity usage in the EU [22]. Within the commercial sector, energy consumption in educational buildings was the third highest in the US, according to [23]. A variety of studies have been performed on energy saving in the commercial sector (for instance, see [24,25,26]), and a review of data science techniques applied to building energy management can be found in [27].

There are several challenges for energy behavior research on campus. Students, faculties, and staff on campus are typically not interested in reducing energy usage. Almost no one is aware of the energy bill, and the majority usually behave as if the energy used on campus is free. In particular, students do not realize that the charge is included in their tuition fees [

28,

29]. Due to the lack of information and interest, it is difficult to motivate people to change their behavior. Even if they can be motivated, it is usually difficult to expect a large saving, because the group does not know what particular energy saving behavior is required and allowed within their local context. Even though it is important to reduce standby power when there is no activity, many devices are shared and used, and energy is wasted because conservation maintenance is often not performed properly [

30,

31]. Another challenge lies in uncontrollable factors such as exams, papers due, office allocation shuffling, and graduation in a campus environment. User behavior that influences power consumption is affected not only by the interventions but also by the uncontrollable factors (

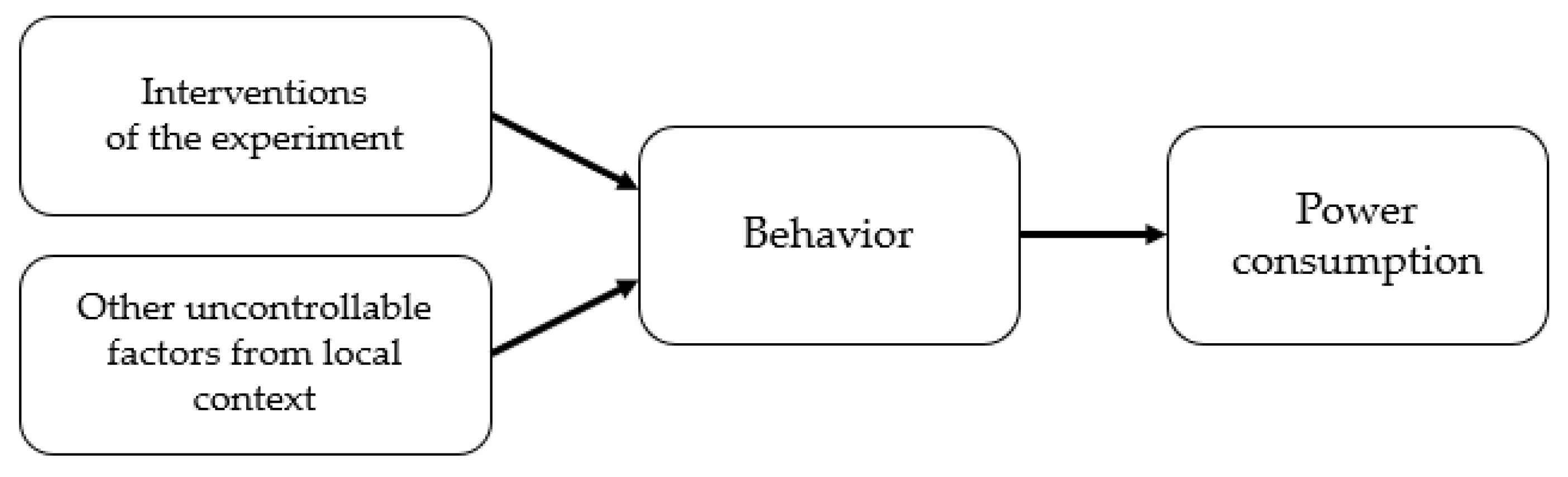

Figure 2). A large-scale experiment is typically needed to cope with such uncontrollable factors. Establishing a large-sized experimental group, however, is usually not feasible in the campus context. Consequently, it becomes difficult to confirm whether a reduction in energy consumption was achieved due to the interventions in the experiment. To resolve this problem, we investigate how high-resolution data can be utilized to mitigate this issue. In particular, we establish methods for utilizing high-quality data to detect energy saving behaviors with high confidence.

The rest of this paper is organized as follows. Section 2 presents the experiment setup. Section 3 explains the space-time resolution of data and how it can be used for detection of energy saving behavior. Section 4 describes the experiment, and Section 5 explains the energy saving results, including power consumption analysis and energy saving behavior analysis. Section 6 discusses several related matters, and Section 7 contains the conclusions.

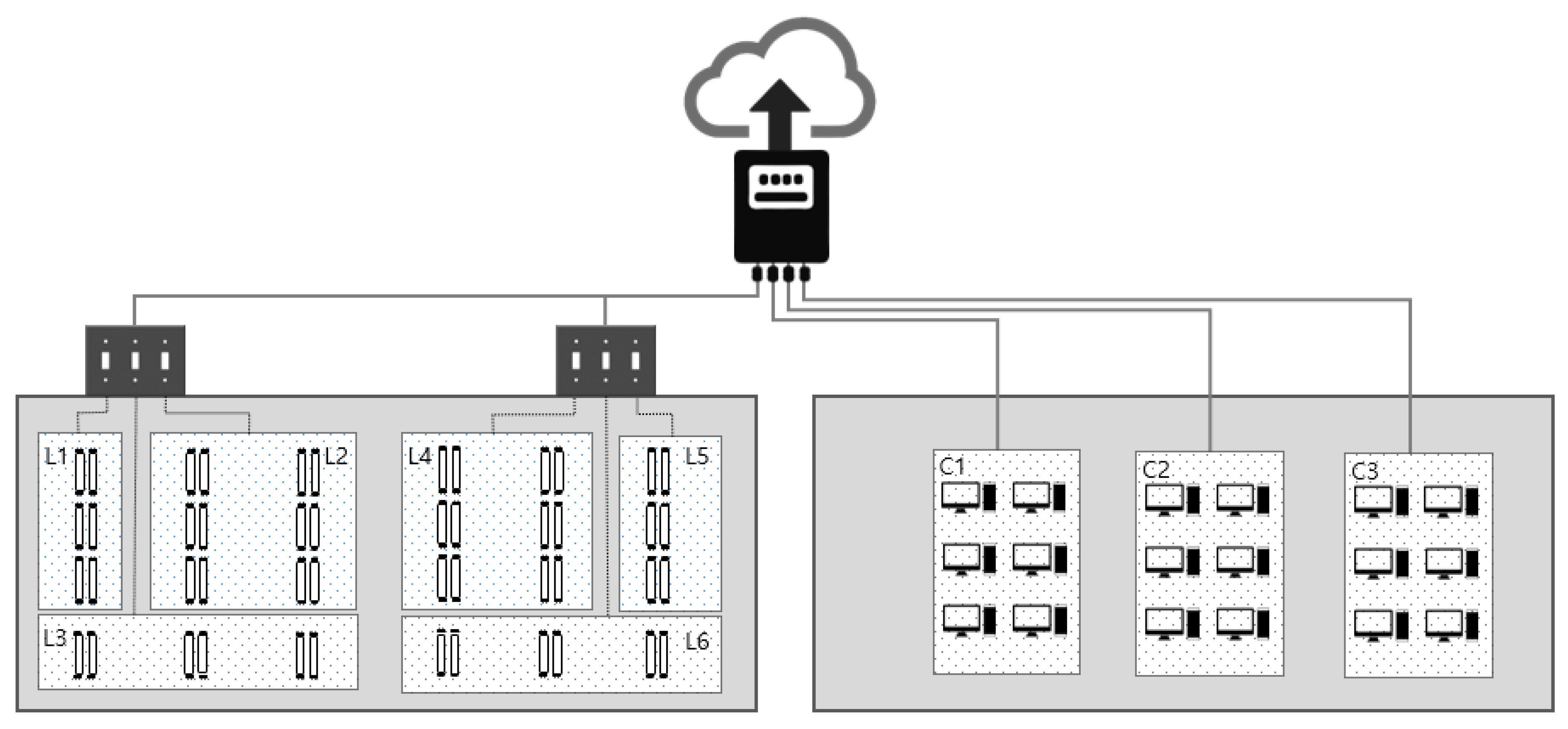

3. Space-Time Resolution and Behavior Detection

To facilitate the data-driven design of energy saving interventions, an investigation of waste behavior using E1-Pre data was performed prior to the intervention experiment E1. Because the deployed IoT system collected 1 Hz data over multiple sensors, we were able to identify frequently occurring wasting behaviors without any human interaction such as surveys or interviews. In this section, the findings are explained with careful attention to the space-time resolution required for each specific finding.

In Figure 4, the power consumption plots are shown for six different combinations of time and space resolutions. If the power usage is measured only once per day for the aggregate usage of all lights, computers, and others in Table 1, the power consumption plot looks like (I). From this plot, we can tell the overall power usage level for each day, but that is probably all the information that can be extracted. In comparison, if the power usage is measured every 15 min (96 samples/day) and, furthermore, the lights and computers are measured separately, the resulting plots are much richer in information, as shown in (IV-1) and (IV-2). As an example, consider (IV-1), which is the aggregate for the usage of the lights only. The first red section, marked as [B] in (IV-1), indicates that all the lights were turned on together in the same 15 min interval in the morning. Considering that the graduate students do not have a fixed time schedule, and that it typically takes a while for the second student to show up in the office, this is undesired behavior. There is no need to turn on all the lights in an office that is used by 15~20 students when full. The second red section, [C] in (IV-1), indicates that the lights were never turned off during the night, another undesired behavior. The thin green section [D] in (IV-1) indicates that the lights were properly turned off the following night. The green section [E] in (IV-1) indicates that the light switches were sequentially turned on over several hours as more students arrived at the office.
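The patterns [B] and [C] described above can be illustrated with a minimal sketch. It is not the analysis code used in the study; the function names, the 50 W "off" threshold, the 90% "near full load" fraction, and the 1200 W full lighting load are all assumptions chosen for illustration, and the data is synthetic. The sketch averages a 1 Hz series into 15 min bins and then checks for an all-at-once switch-on and for lights left on overnight.

```python
def downsample(samples, factor):
    """Average consecutive groups of `factor` samples (900 turns 1 Hz into 15 min bins)."""
    bins = []
    for i in range(0, len(samples), factor):
        chunk = samples[i:i + factor]
        bins.append(sum(chunk) / len(chunk))
    return bins


def first_switch_on(bins, off_w):
    """Index of the first bin that rises above the assumed 'off' threshold, or None."""
    for i in range(1, len(bins)):
        if bins[i - 1] <= off_w < bins[i]:
            return i
    return None


def all_lights_on_at_once(bins, full_load_w, off_w=50.0, frac=0.9):
    """Pattern [B]: the load is near full in the bin right after the first switch-on."""
    i = first_switch_on(bins, off_w)
    if i is None or i + 1 >= len(bins):
        return False
    # The switch-on bin itself is a partial average, so inspect the following bin.
    return bins[i + 1] >= frac * full_load_w


def left_on_overnight(bins, bins_per_hour=4, night_end_hour=6, off_w=50.0):
    """Pattern [C]: the load never drops to 'off' between 00:00 and the assumed 06:00."""
    night = bins[:night_end_hour * bins_per_hour]
    return min(night) > off_w


def profile(steps, seconds=86400):
    """Build a synthetic 1 Hz day from (start_second, watts) steps; 0 W before the first."""
    series = [0.0] * seconds
    for start, watts in steps:
        series[start:] = [watts] * (seconds - start)
    return series


# Synthetic days (hypothetical values): all lights on at 09:07, off at 23:00 ...
day_all_at_once = profile([(32820, 1200.0), (82800, 0.0)])
# ... switches turned on one by one over several hours ...
day_sequential = profile([(32820, 300.0), (37800, 600.0), (43200, 900.0),
                          (50400, 1200.0), (82800, 0.0)])
# ... and lights that stay on for the whole day, including the night.
day_never_off = profile([(0, 1200.0)])

bins_a = downsample(day_all_at_once, 900)
bins_b = downsample(day_sequential, 900)
bins_c = downsample(day_never_off, 900)

print(all_lights_on_at_once(bins_a, 1200.0), all_lights_on_at_once(bins_b, 1200.0))
print(left_on_overnight(bins_a), left_on_overnight(bins_c))
print(downsample(day_all_at_once, 86400))  # one value per day: the patterns are no longer visible
```

With one sample per day, each synthetic day collapses to a single average, which is why behaviors of this kind cannot be separated at the resolution of plot (I).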

Clearly, certain types of information cannot be obtained without increased time and space resolution. In Table 3, waste and conservation behaviors that can be detected with different levels of space and time resolution are summarized. For the time resolution, the same three levels as in Figure 4 are used. For the computers, only three sensors were used to measure the power usage of 15~20 computers and many more monitors, grouped by their physical locations in the office. While this is a very high space resolution compared to most previous energy saving studies, it is worth noting that a sensor measurement per computer or per person would have enabled a personalized waste investigation. When person-level data collection was discussed, however, the participants objected very strongly because of the possible compromise of privacy. In the study, we actually had four sensors (one for lights and three for computers), and therefore a space resolution of four groups could have been utilized, but we focus on two groups (light and computer groups) because the additional information was only marginally helpful in this study.

During the pre-trial investigation, it became clear that the data quality in terms of time and space resolution was critical for identifying wasting and conservation behaviors. High-resolution space-time data was essential for identifying the key behaviors and their relative importance for the site’s energy saving. In the following section, the actual energy saving experiments and what we learned from them are explained.

4. Experiments

To understand the effectiveness of data-driven analysis and the maximum potential of energy saving without affecting normal activities in a campus environment, the experiment was designed to utilize the pre-trial data as much as possible and to aim for maximum energy saving in one week. To make sure that the students were motivated and diligently made waste reduction efforts, a reward of a nice group dinner was promised, as long as the students saved a reasonably large amount of energy. The ‘large amount’ was not clearly specified, because we wanted the students to make no more and no less than their best effort without sacrificing their normal activities; however, when pressed to specify an exact number, we indicated that a 20% saving would be considered more than successful.

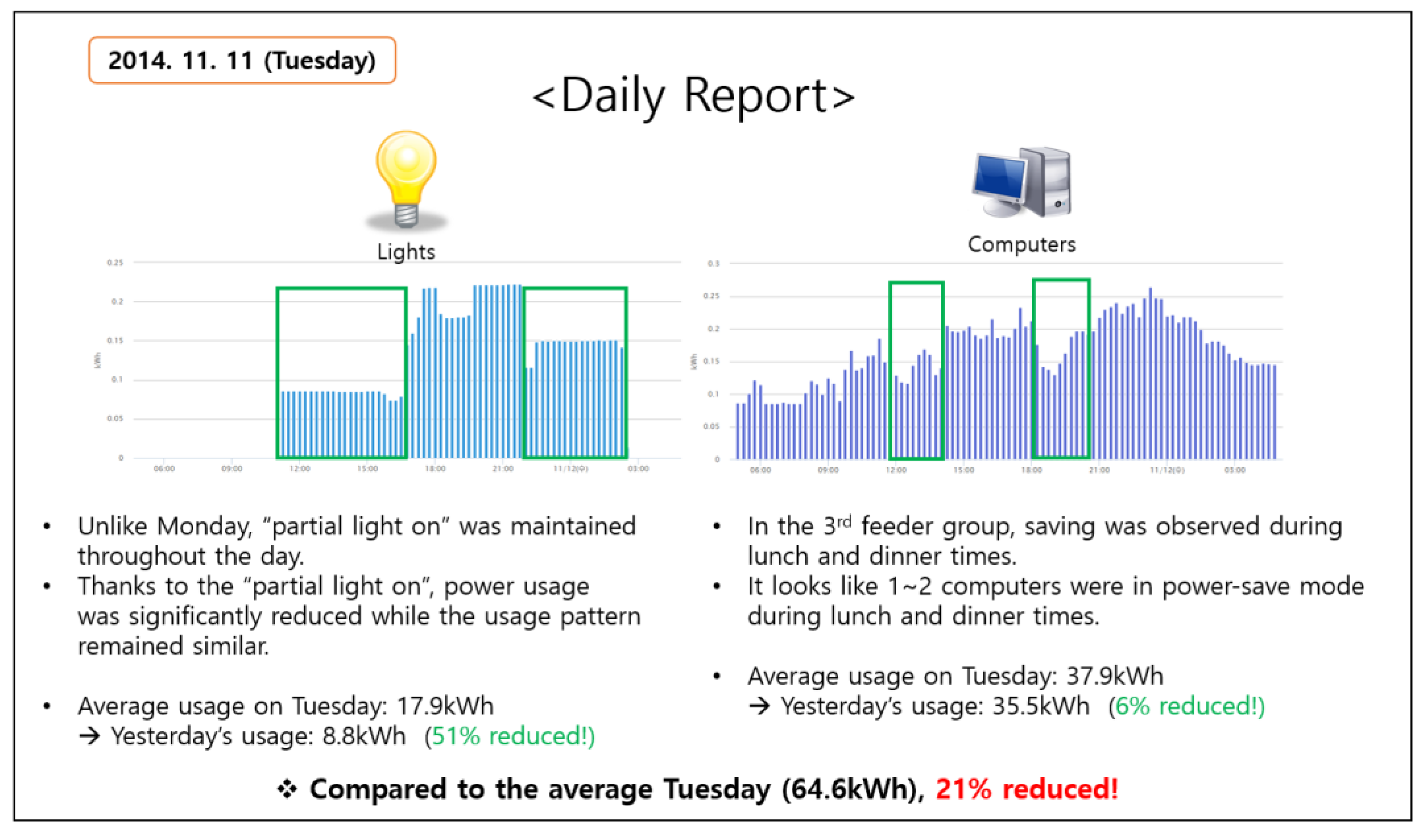

The experiment was designed to last for one week, with a dedicated energy delegate for close communication. The energy delegate visited the office every morning to talk with the students, and the delegate also delivered a daily report that contained detailed information and instructions for energy saving (Figure 5). The report was generated by analyzing the previous day’s energy data. It contained a list of tips generated by inspecting the list of wasting behaviors explained in Section 3. For instance, if all the lights had been turned on simultaneously by the first student at the office, this information was included in the report, and the energy delegate pinpointed the behavior and asked for additional attention on the matter. The report also contained energy consumption information. Each day, students were informed whether they had successfully reduced power consumption on the previous day, and what percentage of reduction was achieved if they were successful. All the information was summarized in the daily report, which contained plots and text. Overall, this experiment was an intensive energy saving exercise that was designed to last for only one week.
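The percentage figure in such a daily report can be sketched as a simple relative reduction against a baseline. The baseline definition is not specified in this section, so the sketch below assumes a single baseline daily energy value (for example, a pre-trial average); the function name, message wording, and numbers are hypothetical.

```python
def daily_feedback(baseline_kwh, yesterday_kwh):
    """Return a one-line feedback message for the previous day's consumption."""
    if baseline_kwh <= 0:
        raise ValueError("baseline_kwh must be positive")
    # Relative reduction with respect to the assumed baseline, in percent.
    reduction_pct = 100.0 * (baseline_kwh - yesterday_kwh) / baseline_kwh
    if reduction_pct > 0:
        return f"Saved {reduction_pct:.1f}% compared with the baseline."
    return "No reduction compared with the baseline yesterday."


print(daily_feedback(40.0, 30.0))  # Saved 25.0% compared with the baseline.
print(daily_feedback(40.0, 42.0))  # No reduction compared with the baseline yesterday.
```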

4.1. Data-Driven Intervention Design

Findings from the pre-trial investigations utilizing high-quality data were fully reflected in the intervention design. Regarding the light usage behaviors, the list of interventions was ‘encourage partial light-on when possible’, ‘ask for full attention on preventing overnight light-on’, and ‘encourage lights and computers to be turned off during lunch time’. To assist the students, color stickers were deployed to show visually the mappings between the switches and the corresponding light sections. This action was the direct result of pre-trial interviews, where students said it was cumbersome to try the switches until only the desired light section was turned on.

Regarding the computer usage behavior, the most obvious problem was the students keeping computers on all the time. Proper settings of screensaver and sleep modes were strongly recommended, and the energy delegate provided support for configuration of computers whenever requested. As will be discussed later, it turned out that many of the students did not follow the recommendation, despite evident energy savings. Short interviews conducted just after E1 revealed that students kept the computers on mainly to keep remote login enabled. In fact, they hardly used remote login, but they were concerned about rare but possible needs to connect to the university network environment for free access to academic literature, or about occasional needs to open or transfer files on the office computers. In effect, putting computers to sleep meant disabling remote login. This issue was identified only after experiment E1 and was addressed using WOL (Wake-on-LAN) in experiment E2. WOL is an Ethernet standard that allows a computer to be turned on or awakened by a network message called a magic packet [32]. With WOL, students can keep the computers asleep without sacrificing the capability to awaken the computers and remotely connect. To make use of it, a low-cost WOL system (around 50 US dollars) had to be deployed in the office network. Other than keeping computers and screens off when they are not being used, we did not pursue any computer-related interventions.
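To make the magic packet concrete, the following is a minimal sketch of how one can be built and sent. It is a generic illustration, not the WOL system deployed in the study. A magic packet consists of six 0xFF bytes followed by sixteen repetitions of the target's 6-byte MAC address; sending it as a UDP broadcast on port 9 is a common convention and is assumed here, as are the function names and the example MAC address.

```python
import socket


def build_magic_packet(mac):
    """Return the 102-byte magic packet for a MAC such as '00:11:22:33:44:55'."""
    hex_digits = mac.replace(":", "").replace("-", "")
    if len(hex_digits) != 12:
        raise ValueError("MAC address must contain exactly 12 hex digits")
    mac_bytes = bytes.fromhex(hex_digits)  # raises ValueError on non-hex input
    # Synchronization stream (6 x 0xFF) followed by the MAC repeated 16 times.
    return b"\xff" * 6 + mac_bytes * 16


def send_magic_packet(mac, broadcast_ip="255.255.255.255", port=9):
    """Broadcast the magic packet over UDP (address and port are assumed defaults)."""
    packet = build_magic_packet(mac)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_BROADCAST, 1)
        sock.sendto(packet, (broadcast_ip, port))


packet = build_magic_packet("00:11:22:33:44:55")  # hypothetical MAC address
print(len(packet))  # 102
```

Calling `send_magic_packet("00:11:22:33:44:55")` would wake the machine with that address only if WOL is enabled in its firmware and network adapter settings, and if the broadcast reaches its network segment.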

To be sufficiently convinced that these interventions were likely to be effective for the experiment group, the quality of data had to be at least a medium level of time resolution (96 samples per day) and two groups of space resolution (light and computer) in Table 3. In reality, however, we freely investigated the high level of time resolution and four groups of space resolution. Some of the intervention designs and feedback generation, especially for computers, were possible only by inspecting the highest levels of time and space resolution and additionally by interviewing the students.

4.2. Experiment E1

The first official experiment was launched on 10 November 2014 and lasted for one week (see Table 2). The experiment proceeded smoothly in the beginning, but within a few days, it was noticed that the energy usage of the light feeders was implausibly low. Upon further inspection, strip LEDs connected to an independent power source were discovered. It turned out that one of the students had been overly motivated and had installed the strip LED lights. The experiment was immediately declared invalid, and the energy delegate stopped energy saving activities.

Even though the experiment had to be abandoned, a post-analysis was performed to investigate energy saving behaviors related to computer usage. While there was a meaningful reduction according to the data analysis, some of the high-power computers were kept on during the night. The follow-up interviews revealed that many students still kept the computers on because of the remote-access concerns. Hence, the need for WOL was identified, and a WOL system was installed before starting experiment E2. The use of WOL turned out to be very important, as will be explained in the next subsection.

4.3. Experiment E2

To make sure that we had a fresh and independent experiment, we waited for two months before starting the experiment E2. This time, the students were asked not to do anything abnormal or anything affecting their normal activities. Instead, they were asked to focus on the energy saving behaviors that we were suggesting. Additionally, we made sure that WOL was ready and easily usable. The energy delegate personally showed how easy it was to use the system, and provided personal support when requested. After the education, the experiment was executed for one week. As in experiment E1, daily reports were provided and the energy delegate diligently communicated with the students to encourage them. This time, the experiment was completed without any incident, and the resulting energy saving turned out to be more than 20%, as will be explained in

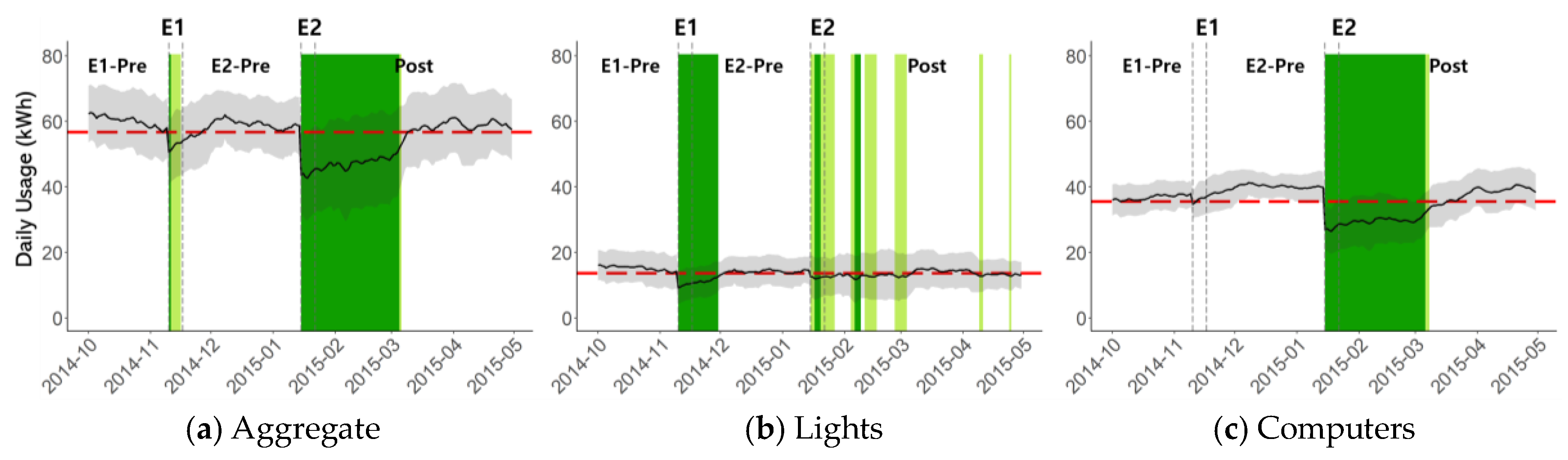

Section 5.1.

6. Discussion

The energy saving potential in the university building was larger than expected. An overall reduction of 25.4% was achieved in E2 without sacrificing normal activities, and a significant portion of the saving was sustained for 44 days until the office and desk shuffling occurred. Overall, the large saving was possible mainly because of tailored interventions that were the direct outcomes of waste investigation using high-quality data.

The LED incident during E1 was completely unexpected. Despite the incident, a few key insights were identified from the experiment. As for the lights, pre-experiment investigation using high-resolution data was very useful. The findings behind the tailored interventions were observable only with high-resolution data, and findings such as partial light-on and 24 h light-on were accurate and comprehensive enough to result in successful counseling of the students. When advised, the students quickly agreed and followed the instructions. As for the computers, pre-experiment investigation of high-resolution data was very helpful for identifying and analyzing the problems, including the severity of the always-power-on problem. Merely delivering the feedback and guidelines, however, was not sufficient to achieve the desired behavior change during E1. A further investigation beyond data analysis was necessary, and the main issue of ‘power-on for remote-access readiness’ was identifiable only after in-depth discussions between the energy delegate and the office students.

While the data-driven pre-trial analysis was very helpful, the resulting interventions and advice were nothing really new. We neither discovered any new wasting behavior nor did we find a new way to reduce energy consumption. In this sense, it might be most accurate to say that the data-driven analysis was able to identify which known wasting behavior was occurring and how frequently. Such information was very helpful when the energy delegate tried to guide the students. When some of the students were in doubt, typically showing them the high-resolution data plots was sufficient to reach a quick agreement. Pinpointing the list of saving items was important, as already known in the field [17], and the data-driven analysis was a pivotal element in our experiment.

Besides the benefit of tailored intervention design, the data-driven analysis was shown to be effective for confidently detecting energy saving behaviors. Power consumption in public spaces can be affected by many different factors [33], and even setting up a control group might not be sufficient to tell if energy saving truly happened. Therefore, utilizing data patterns and data-driven measures that are very unlikely to occur without serious energy saving effort can be a reliable way of confirming behavior changes. When no behavior change can be detected, it is reasonable to question whether the measured reduction in power consumption is indeed due to energy saving efforts. When both power consumption analysis and behavior detection results are positive, one can more reliably conclude that energy saving was successful.

Furthermore, the detected energy saving behaviors can be used for generating highly effective feedback. Energy consumers usually do not understand what behavioral changes are needed in the local context [34]. The problem is aggravated when the consumers are also not interested in energy saving. If high-resolution data can be collected and used for detecting energy saving behaviors and for pinpointing the desired feedback [17], it can help improve the effectiveness of feedback and the accuracy of influence assessments. Furthermore, such energy saving behavior detection can be used to evaluate whether one group of users is making more effort than another group, even when a direct comparison of quantitative consumption amounts is deemed inappropriate. Therefore, behavior detection using high-resolution data may be a viable solution for performing a large-scale experiment that includes many offices with heterogeneous characteristics.

Whereas the scope of this paper is limited to lights and computers, HVAC data was also available. The amount of energy saving on HVAC was comparable to the savings of lights and computers together, but the result is excluded from this paper because there was a central heating system during daytime and students kept the local HVAC completely off during the E2 period. There was no way to tell whether the non-use of local HVAC was caused by the intervention, and the weather became warm after E2, making it difficult to conclude whether there was a behavior change.

A 25.4% energy saving potential was identified in the experiment, but the saving was achieved at the cost of a dedicated human delegate and a short-term incentive. To overcome this limitation, we conducted an expanded experiment after the study was completed, aimed at sustainability with minimal use of resources. Hence, the energy delegate was removed, and only wall-mounted dashboard screens in the office were allowed. The dashboards were the main channel for intervention, and a cost-effective incentive was introduced only at the last stage of the experiment. We experimented in many different ways for 34 weeks concurrently over three offices and acquired a rich set of interesting data. In the end, however, we decided to abandon the results of the entire second experiment, because there were too many factors that were specific to each office’s characteristics and activities, and because the length of the experiment allowed even more random factors to enter the data. Despite the high-resolution data and its tremendous benefits, we were not able to derive sufficiently rigorous quantitative conclusions in the campus environment. In fact, we believe our experiment environment was one of the most challenging, and that the results in this work were possible only because of the advantage of high-resolution data and the relevant data-driven approaches. For less challenging building environments with fewer random factors, it would be much less difficult to perform a similar experiment and quantitatively evaluate the energy saving amount. In general, we believe the findings in this work will be useful for any environment, but some investigation and customization might be necessary to identify which insights to utilize, and how, for the given environment.