Abstract

Vision-based mobile robot navigation is a vibrant area of research in which numerous algorithms have been developed. The vast majority either belong to scene-oriented simultaneous localization and mapping (SLAM) or fall into the category of robot-oriented lane detection and trajectory tracking. These methods suffer from high computational cost and require stringent labelling and calibration efforts. To address these challenges, this paper proposes a lightweight robot navigation framework based purely on uncalibrated spherical images. To simplify orientation estimation and path prediction and to improve computational efficiency, the navigation problem is decomposed into a series of classification tasks. To mitigate the adverse effects of insufficient negative samples in the “navigation via classification” task, we introduce a spherical camera for scene capture, which provides 360° fisheye panoramas as training samples and allows sufficient positive and negative heading directions to be generated. The classification is implemented as an end-to-end Convolutional Neural Network (CNN), trained on our proposed Spherical-Navi image dataset, whose category labels can be collected efficiently. This CNN is capable of predicting potential path directions with high confidence levels based on a single, uncalibrated spherical image. Experimental results demonstrate that the proposed framework outperforms competing ones in realistic applications.

1. Introduction

Vision-based methods have attracted a huge amount of research interest for decades in autonomous navigation on various platforms, such as quadrotors, self-driving cars, and ground robots. Various camera sensors and algorithms have been incorporated in these platforms to improve the machine’s sensing ability in challenging indoor and outdoor environments. For most applications, it is imperative to precisely localize the navigation path and detect potential obstacles. Among these requirements, accurate position and orientation estimation is arguably the core task for mobile robot navigation.

One major category of navigation methods, simultaneous localization and mapping (SLAM), builds virtual 3D maps of the surroundings while tracking the location and orientation of the platform. During the last two decades, SLAM and its derivative methods have dominated the navigation research field. Various systems have been proposed, such as MonoSLAM [1], PTAM [2], FAB-MAP [3], DTAM [4], and KinectFusion [5]. Besides the use of monocular cameras, Caruso et al. [6] recently developed a SLAM system directly based on omnidirectional cameras and greatly expanded their applications. All the aforementioned SLAM systems share a common impediment on mobile platforms with limited computational capabilities, such as tablet PCs, quadrotors, and mobile robots as in our case (Figure 1).

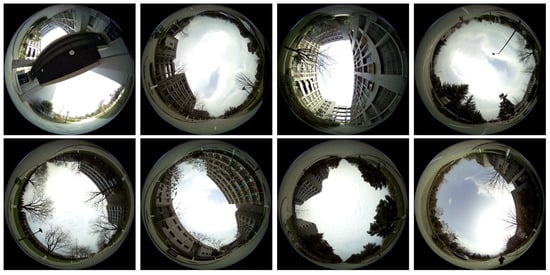

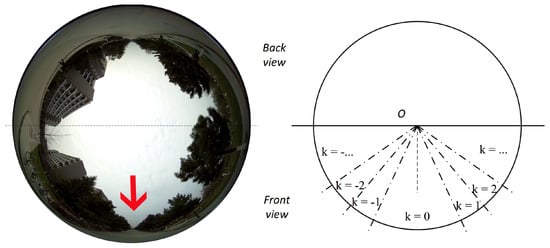

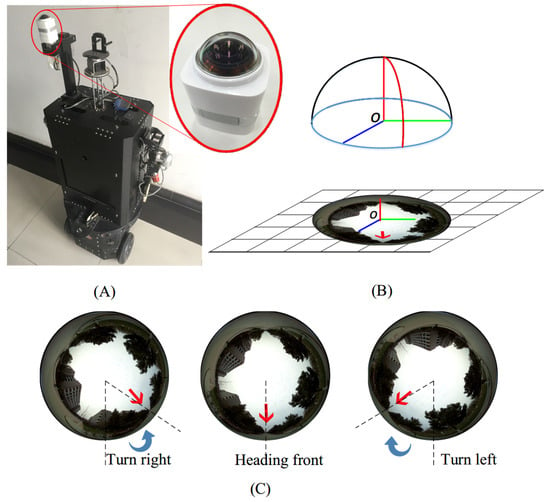

Figure 1.

(A) A spherical camera mounted on a ground robot platform. (B) The spherical camera coordinate system and its imaging model. Natural scenes are warped into the circular fisheye image. (C) Samples of captured spherical images. Red arrows denote the detected optimal path. Our objective is to generate navigation signals (denoted by blue arrows, i.e., steering direction and angles) based directly on these fisheye panoramas.

Another category consists of human vision inspired approaches, i.e., the robot-oriented heading-field road detection and trajectory planning methods, which address the navigation problem directly through visual path detection via local road segmentation [7], trajectory prediction [8], and similar techniques. For example, Lu et al. [9] built a robust road detection system based on a hierarchical vision sensor. Chang et al. [10] presented a system using two biologically-inspired scene understanding models. In spite of their simplicity, the navigation performance of human vision inspired methods depends heavily on the quality of the low-level local features used in their segmentation steps. In addition, for panorama images with a heavy fisheye effect, traditional human vision inspired methods require prior calibration and warping preprocessing, further complicating these solutions.

In this paper, we focus on mobile robot navigation in open fields, with potential applications such as wheeled ground robots and low-flying quadrotors. This is a challenging problem: for one thing, such platforms lack computational capabilities required by SLAM-type algorithms and their typical tasks do not require sophisticated virtual 3D reconstruction. For another, those robots could be deployed outdoors with unpaved trails, where traditional path planning algorithms are likely to fail due to the increased difficulty in detecting unpaved surfaces.

As early as the 1990s, Pomerleau [11] formulated the road following task as a classification problem. Decades later, Hadsell et al. [12] developed a similar system for ground robot navigation in unknown environments. Recently, Giusti et al. [13] framed camera orientation estimation as a three-class classification (Left, Front and Right) and captured a set of forest trail images with 3 head-mounted cameras, each pointing in one direction. Given one input frame, their model can decide the next optimal move (left/right turn or keep forward). One major drawback of this system is its coarse steering decisions: three fixed cameras and three choices are not precise enough for applications with higher orientation accuracy requirements.

We propose to replace multiple monocular cameras in [13] with a single spherical camera [14]. Thanks to the imaging characteristic of spherical cameras, each image captures the 360° panorama of the scene, eliminating the limitation on available steering choices.

One of the major challenges with spherical images is the heavy barrel distortion due to the ultra wide-angle fisheye lens, which complicates the implementation of conventional human vision inspired methods such as lane detection and trajectory tracking. Additional preprocessing steps such as prior calibration and dewarping are often required. In this paper, we circumvent these preprocessing steps by formulating navigation as a classification problem of finding the optimal potential path orientation directly from the raw, uncalibrated spherical images.

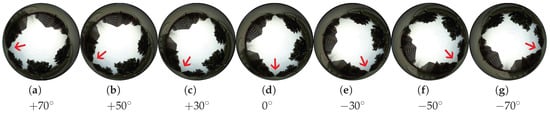

Another challenge in the “navigation via classification” task is the shortage of negative training examples. Negative training samples typically represent wrong heading commands, which could lead to disasters such as collision. Inspired by [11], we reformulate navigation as classifying spherical images into a series of rotation categories. Unlike [11], negative training samples (wrong heading direction) could be conveniently generated by simple rotations of positive training samples (optimal heading direction), thanks to the fisheye panorama.
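The rotation idea above can be illustrated with a minimal sketch. It assumes that a yaw change of the platform corresponds to an in-plane rotation of the circular fisheye image about its center, and that the heading classes are evenly spaced angles; the function names, the seven example angles and the rotation sign convention are hypothetical choices made for illustration, not details taken from this paper.

```python
import math


def rotate_about_center(image, angle_deg):
    """Rotate a square single-channel image (list of rows) about its center.

    Nearest-neighbor resampling; output pixels whose source falls outside
    the image are set to 0. The sign convention of the angle is an assumption.
    """
    n = len(image)
    c = (n - 1) / 2.0
    theta = math.radians(angle_deg)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    out = [[0] * n for _ in range(n)]
    for y in range(n):
        for x in range(n):
            # Inverse mapping: locate the source pixel of each output pixel.
            dx, dy = x - c, y - c
            sx = cos_t * dx + sin_t * dy + c
            sy = -sin_t * dx + cos_t * dy + c
            ix, iy = int(round(sx)), int(round(sy))
            if 0 <= ix < n and 0 <= iy < n:
                out[y][x] = image[iy][ix]
    return out


def make_rotation_samples(image, angles_deg):
    """Return (rotated_image, class_index) pairs, one per heading class."""
    return [(rotate_about_center(image, a), k) for k, a in enumerate(angles_deg)]


# Hypothetical heading classes: seven evenly spaced angles (an assumption).
ASSUMED_ANGLES_DEG = [-90, -60, -30, 0, 30, 60, 90]

if __name__ == "__main__":
    tiny = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
    for rotated, label in make_rotation_samples(tiny, ASSUMED_ANGLES_DEG):
        print(label, rotated)
```

In this sketch, a single image captured along the optimal heading yields one sample per class, so the copies rotated away from the optimal heading serve as the wrong-heading examples without any additional data collection.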

The contributions of this paper are as follows:

- A native “navigation via classification” framework based purely on fisheye panoramas is proposed in this paper, without the need for any additional calibration or dewarping preprocessing steps. Uncalibrated spherical images can be fed directly into the proposed framework for training and navigation, eliminating strenuous efforts such as pixel-level labeling of training images, or high resolution 3D point cloud generation for training.

- An end-to-end convolutional neural network (CNN) based framework is proposed, achieving high classification accuracy on our realistic dataset. The proposed CNN framework is significantly more computationally efficient (in the testing phase) than SLAM-type algorithms and readily deployable on more mobile platforms, especially battery powered ones with limited computational capabilities.

- A novel fisheye panorama dataset, the Spherical-Navi image dataset, is collected, with a unique labeling strategy enabling automatic generation of an arbitrary number of negative samples (wrong heading direction).

The rest of this paper is organized as follows: Section 2 reviews related literature on deep learning based navigation and spherical image based navigation. Section 3 presents our proposed “navigation via classification” framework based directly on fisheye panoramas. A novel fisheye panorama dataset (the Spherical-Navi image dataset) is introduced in Section 4, and the proposed “navigation via classification” framework is evaluated in Section 5. Finally, Section 6 concludes this paper.

2. Background and Related Work

Numerous research efforts have been devoted to robot navigation for decades, and SLAM-type algorithms were the preferred method until the recent trend of applying deep learning techniques to low-level and mid-level computer vision tasks. Various classification methods (even with advanced and multisensory data [15,16,17]) and radar based localization methods [18,19,20] were not competitive enough against SLAM-type algorithms, due to increased sensor complexity and mediocre recognition accuracy. The “navigation via classification” framework became both feasible and attractive to researchers only after deep learning based methods dramatically improved classification accuracy.

The advent of General-Purpose computing on Graphics Processing Units (GPGPU) reduces the typical CNN training time to feasible levels (the total training time of the proposed network is approximately 20 h). The low computational cost of a deployed CNN makes real-time processing easily attainable (the proposed network prototype achieves 100 fps without any sophisticated optimization).

2.1. Deep Learning in Navigation

Deep learning has shown overwhelming advantages over conventional methods in many research areas, including object detection [21,22] and tracking [23,24], image segmentation [25,26], and hyper-spectral image classification [27,28]. With the success of AlexNet [29] on the ImageNet classification challenge [30], convolutional neural networks (CNNs) have become an off-the-shelf solution for classification problems.

A number of improvements have been proposed over the years to further improve the classification performance of CNNs, such as the pioneering work [31], which shows the regularization efficiency of “Dropout”, especially for networks with extremely large numbers of parameters. Another example is Lin et al. [32], which enhances model discriminability for local patches within the receptive field by incorporating micro neural networks within complex structures.

Navigation based on classifying the surrounding scene images with neural networks has been explored since as early as the 1990s. The Autonomous Land Vehicle In a Neural Network (ALVINN) [11] project is arguably one of the most influential, with realistic visual perception tasks and a performance target of real-time processing. However, the tiny scale and oversimplified structure of early neural networks, the primitive imaging sensors, and the very limited computing power of the time restricted the practical usability of [11].

Subsequently, many improvements to ALVINN have been proposed. Hadsell et al. [12] developed a more stable system for navigation in unknown environments by incorporating a self-supervised learning framework capable of long-range sensing. This system is capable of accurately classifying complex terrains at distances up to the horizon (from 5 to over 100 m away from the platform, far beyond the maximum stereo range of 12 m), thus significantly improving path-planning.

Recently, Giusti et al. demonstrated a quadrotor platform autonomously following forest trails in [13]. They formulated the optimization of heading orientation as a three-class classification problem (Left, Front and Right) and captured a series of forest trail images with 3 inboard cameras, facing Left, Front and Right, respectively. Given one image frame, the deployed CNN model determines the optimal heading orientation among the three available choices: left turn, straight forward or right turn. The major drawback of this design is that only three choices are available (due to the three cameras), which is a compromise between steering accuracy and quadrotor load capacity.

2.2. Spherical Cameras in Navigation

There are a few published prior attempts at navigation based on spherical cameras; however, their performance is adversely affected by either rectification errors in pre-processing or the lack of an accurate reference frame.

First, considering the heavy barrel distortion due to the ultra wide-angle lens (e.g., omnidirectional cameras, fish-eye cameras, and spherical cameras), conventional navigation applications usually require pre-processing efforts such as calibration and rectification (i.e., removing fisheye effects). For example, Li [33] proposed a calibration method for full-view spherical camera images. We argue that these pre-processing steps incur unnecessary computational complexity and accumulate errors; thus, we favor the alternative approach, i.e., navigation based directly on spherical images.

A related but subtly different research field, spherical rotation estimation, has been investigated as early as a decade ago. For example, Makadia et al. [34,35] estimated 3D spherical rotations via the transformations induced in the spectral domain, and directly via the sphere images without correspondence, respectively. A recent paper by Bazin et al. [36] estimated spherical rotations based on vanishing points in omnidirectional images. Caruso et al. [6] proposed an image alignment method based on a unified omnidirectional model, achieving fast and accurate incremental stereo matching based directly on curvilinear, wide-angled images.

For the former “calibration and rectification” based methods, the error-accumulating pre-processing step would be eliminated if raw spherical images were used directly for navigation. For the latter group of methods, the major difference between these “spherical rotation estimation” attempts and navigation tasks is the requirement of a reference image frame: in rotation estimation problems, an estimated rotation angle is evaluated with respect to the reference image frame; however, reference image frames are almost never readily available in robot navigation applications. To overcome these limitations, a highly accurate, raw spherical image based “navigation via classification” framework is proposed in this paper.

5. Experimental Results

5.1. Sky Pixels Elimination

The proposed robot platform collects data under various illumination conditions, due to different times of day and weather. Before the spherical images are fed into the training networks, the central sky pixels (within a predefined radius) are masked out. Empirically, we found that these sky pixel values are highly susceptible to illumination changes and that our network gains overall classification accuracy if these sky pixels are masked out. Subsequently, spherical images are normalized in the YUV color space to achieve zero mean and unit variance.
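The two preprocessing steps described above can be sketched as follows for a single image channel. This is a minimal illustration under stated assumptions: the mask radius, the fill value, and the choice to normalize each channel of each image independently (with masked pixels included in the statistics) are all hypothetical, since the text does not specify them.

```python
import math


def mask_center(channel, radius, fill=0.0):
    """Replace pixels within `radius` of the image center by `fill`.

    `channel` is a list of rows. The radius and fill value are assumptions.
    """
    n_rows, n_cols = len(channel), len(channel[0])
    cy, cx = (n_rows - 1) / 2.0, (n_cols - 1) / 2.0
    r2 = radius * radius
    return [
        [
            fill if (x - cx) ** 2 + (y - cy) ** 2 <= r2 else channel[y][x]
            for x in range(n_cols)
        ]
        for y in range(n_rows)
    ]


def normalize_channel(channel):
    """Shift and scale one channel (e.g., Y, U or V) to zero mean, unit variance."""
    values = [v for row in channel for v in row]
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    std = math.sqrt(var) or 1.0  # guard against a constant channel
    return [[(v - mean) / std for v in row] for row in channel]


if __name__ == "__main__":
    y_channel = [[1.0] * 5 for _ in range(5)]
    masked = mask_center(y_channel, radius=1)
    for row in normalize_channel(masked):
        print([round(v, 2) for v in row])
```

Applying `mask_center` before `normalize_channel` mirrors the order given in the text; the same two calls would be repeated for each of the three YUV channels.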

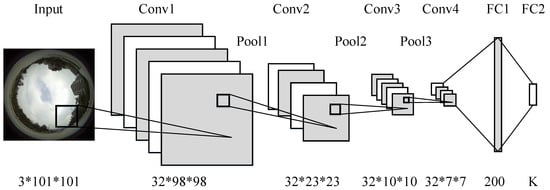

5.2. Network Setup and Training

Three algorithms are compared on the proposed Spherical-Navi dataset in Table 1. All of them share identical convolutional layers with the filter size 4. Their following pooling layers are of “Max-pooling” type, which select the local maximum values from the winning neurons.

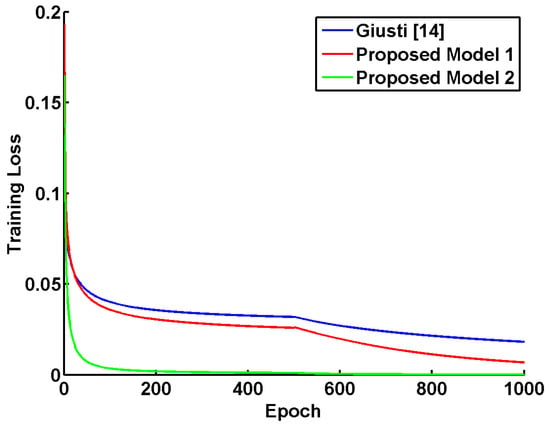

We follow the training configurations in Giusti et al. [13], with weights initialized as in [40] and biases initialized by zeros. During the training procedure, a higher initial learning rate () is selected for the proposed “Model 1” than that () in the proposed “Model 2”. When the training loss stops decreasing, the learning rate is adjusted to one-tenth of the previous one. For better generalization to the testing phase, a mini-batch of size 10 is incorporated and all training samples are shuffled before each epoch. The training losses are illustrated against epoch in Figure 6, where our “Model 2” with batch normalization achieves significantly faster convergence to “better” local minima with smaller training loss value.

Figure 6.

Training losses of competing models with the Adagrad optimizer. The learning rate is decreased by a factor of ten every 500 epochs. Our proposed “Model 2” with “Batch Normalization” achieves the fastest convergence and the lowest training loss.
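The learning-rate rule and the per-epoch shuffling described in the training paragraph can be sketched as below. This follows the rule stated in the text (divide the rate by ten when the training loss stops decreasing); note that the caption of Figure 6 instead describes a reduction every 500 epochs, and this sketch does not attempt to reconcile the two. The class name, the `patience` parameter and the initial rate of 1.0 used in the example are hypothetical placeholders, not values from this paper.

```python
import random


def shuffled_minibatches(num_samples, batch_size=10, rng=None):
    """Return index mini-batches after shuffling all samples (once per epoch)."""
    rng = rng or random.Random(0)
    order = list(range(num_samples))
    rng.shuffle(order)
    return [order[i:i + batch_size] for i in range(0, num_samples, batch_size)]


class PlateauSchedule:
    """Divide the learning rate by ten when the loss stops decreasing."""

    def __init__(self, initial_lr, factor=0.1, patience=1):
        self.lr = initial_lr      # initial value is a placeholder assumption
        self.factor = factor
        self.patience = patience  # epochs without improvement before a cut
        self.best = float("inf")
        self.stale = 0

    def step(self, epoch_loss):
        """Update with the latest epoch loss and return the current rate."""
        if epoch_loss < self.best:
            self.best = epoch_loss
            self.stale = 0
        else:
            self.stale += 1
            if self.stale >= self.patience:
                self.lr *= self.factor
                self.stale = 0
        return self.lr


if __name__ == "__main__":
    schedule = PlateauSchedule(initial_lr=1.0)
    for loss in [1.0, 0.8, 0.8, 0.7]:
        print(loss, schedule.step(loss))
    print(shuffled_minibatches(25)[:1])
```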

The proposed “Model 1” and “Model 2” algorithms are developed with the Torch7 deep learning package [46] and the respective network parameters are summarized in Table 1. With the proposed models and Spherical-Navi Dataset, all training procedures finish within 3 days using a PC with one Intel Core-i7 3.4 GHz CPU, or less than 20 h with a PC equipped with one Nvidia Titan X GPU. During the testing procedure, it takes the Nvidia Jetson TK1 installed onboard the robot platform only 10 milliseconds to process each spherical image.

5.3. Quantitative Results and Discussion

Table 2 summarizes the overall classification accuracies among competing algorithms with different numbers of navigation choices (i.e., K as in Equation (1)). The LIBSVM [47] software with default settings (RBF kernel, , ) is chosen to implement the popular Support Vector Machine (SVM) classifier as a competing baseline. All deep learning based algorithms achieve evident performance gains over the SVM baseline in the various K settings. Generally, with more navigation choices (larger K), the classification accuracies drop for all competing algorithms, due to the increased complexity of the multiclass problem. Another factor that might contribute to imperfect classification is the camera mounting calibration: there could be some small rotating movements of the spherical camera during the capture process due to vibration.

Table 2.

Classification Accuracies on the Spherical-Navi dataset.

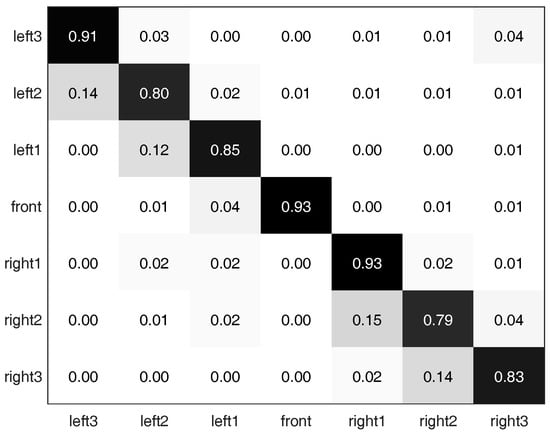

Additionally, Figure 7 provides the multi-class classification confusion matrix [48,49] with 7 navigation choices (K = 7, last row in Table 2). With more navigation choices, spherical images from adjacent heading directions appear even more visually similar. The misclassification of adjacent choices leads to relatively larger sub-diagonal and super-diagonal values than other off-diagonal elements. We also note that while the robot platform is moving along a long stretch of straight path with non-distinctive scenes, the Left3 view (leftmost view, perpendicular to the drive path) appears to be a horizontal/vertical flip of the Right3 view (rightmost view, perpendicular to the drive path). This visual similarity could contribute to the slightly higher value of the upper-right element of the confusion matrix in Figure 7.

Figure 7.

Classification confusion matrix filled with normalized accuracies in the case of 7 navigation choices. Diagonal elements denote correct classifications, while off-diagonal elements denote mistakes.
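For readers who wish to reproduce this kind of analysis, a minimal sketch of a row-normalized confusion matrix is given below, together with the share of errors that fall on the sub-diagonal and super-diagonal (adjacent heading classes). The convention that rows index the true class and columns the predicted class is an assumption, as are all function names; the labels in the example are toy values, not results from the Spherical-Navi dataset.

```python
def confusion_counts(true_labels, predicted_labels, num_classes):
    """Count matrix with rows = true class, columns = predicted class."""
    counts = [[0] * num_classes for _ in range(num_classes)]
    for t, p in zip(true_labels, predicted_labels):
        counts[t][p] += 1
    return counts


def normalize_rows(counts):
    """Divide each row by its total so that diagonals are per-class accuracies."""
    normalized = []
    for row in counts:
        total = sum(row)
        normalized.append([c / total if total else 0.0 for c in row])
    return normalized


def adjacent_error_share(counts):
    """Fraction of all misclassifications that land on a neighboring class."""
    errors = 0
    adjacent = 0
    for t, row in enumerate(counts):
        for p, c in enumerate(row):
            if t != p:
                errors += c
                if abs(t - p) == 1:
                    adjacent += c
    return adjacent / errors if errors else 0.0


if __name__ == "__main__":
    truth = [0, 0, 1, 1, 2, 2]   # toy labels for illustration only
    preds = [0, 1, 1, 1, 2, 0]
    counts = confusion_counts(truth, preds, num_classes=3)
    print(normalize_rows(counts))
    print(adjacent_error_share(counts))
```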

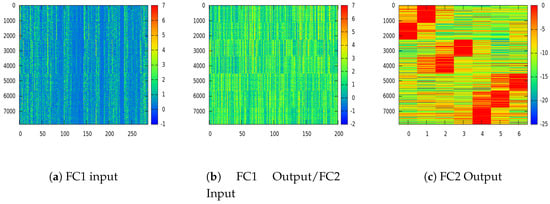

Deep learning based methods can generally be regarded as highly discriminative feature extractors, and Figure 8 illustrates the progressive, layer-by-layer enhancement of discriminability. A total of 8000 training sample images, aggregated class-wise, are fed into the proposed “Model 2” network with 7 navigation choices (K = 7). The input of the FC1 layer (i.e., the output from the last CONV layer), the output of the FC1 layer (i.e., the input of the FC2 layer) and the output of the FC2 layer are visualized in Figure 8a–c, respectively. As the dimension of the features decreases from 288 in “FC1 input” to 7 in “FC2 output” (please refer to the last column in Table 1 for the layer structure of the proposed “Model 2” network), features are condensed into a more compact and discriminative format, which is visually verifiable by inspecting the distinctive patterns in Figure 8c.

Figure 8.

Extracted features from different layers in the proposed “Model 2” network with ordered 8000 sample training images and 7 navigation choices (X-axis: feature dimension; Y-axis: index of samples, best viewed in color). The network nonlinear mappings of fully connected layer 1 (FC1) ((a)→(b)) and FC2 ((b)→(c)) enhance the discriminability one after another.

5.4. Robot Navigation Evaluation

The robot navigation performance of the proposed “Model 2” network (with 7 navigation choices) is evaluated in this section via multiple navigation tasks.

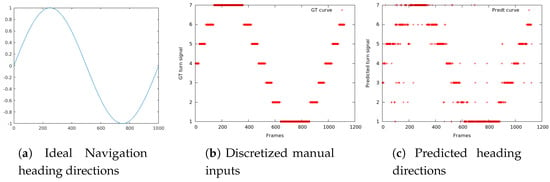

In the first simulated evaluation, a special test drive path (covering all possible heading directions) is manually selected, visualized in Figure 9a and discretized in Figure 9b to match the number of available navigation choices. The robot platform (as shown in Figure 1A) is subsequently deployed with the exact navigation manual input in Figure 9b and a series of evaluation spherical images are collected correspondingly. These spherical images are then fed into the trained network as testing images and the predicted heading directions are visualized in Figure 9c.

Figure 9.

Robot Navigation Simulation. X-axis: frame index; Y-axis: sine of heading direction angle. The ideal, manually selected navigation path in (a) covers the entire possible heading direction angle , from to , then and back to . Therefore, covers . (b) Limited by the 7 navigation choices, the robot navigation inputs are discretized. (c) visualizes the network predictions based purely on the collected spherical images.

The overall average prediction accuracy in Figure 9c is 87.3%, as compared to the ground truth in Figure 9b. Most of the misclassification errors arise from confusion between adjacent heading direction classes, which is understandable given the spatial similarity of typical scenes.
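The discretization step (Figure 9a to Figure 9b) and the frame-wise accuracy against the discretized ground truth can be sketched as follows. The seven evenly spaced angles used here are an assumption inferred from the description of the leftmost and rightmost views as perpendicular to the drive path; they are not quoted from this paper, and the function names are hypothetical.

```python
# Assumed heading choices in degrees (negative = left, positive = right).
ASSUMED_CHOICES_DEG = [-90, -60, -30, 0, 30, 60, 90]


def nearest_choice(angle_deg, choices_deg=ASSUMED_CHOICES_DEG):
    """Return the index of the available choice closest to a continuous angle."""
    return min(range(len(choices_deg)), key=lambda k: abs(choices_deg[k] - angle_deg))


def discretize_path(angles_deg, choices_deg=ASSUMED_CHOICES_DEG):
    """Map a continuous heading sequence onto the available choices."""
    return [nearest_choice(a, choices_deg) for a in angles_deg]


def frame_accuracy(truth, predicted):
    """Fraction of frames whose predicted class equals the ground-truth class."""
    matches = sum(1 for t, p in zip(truth, predicted) if t == p)
    return matches / len(truth)


if __name__ == "__main__":
    ideal = [0, 10, 25, 50, 80, 50, 25, 10, 0, -20, -70]
    ground_truth = discretize_path(ideal)
    print(ground_truth)
    print(frame_accuracy(ground_truth, ground_truth))
```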

In the following real-world navigation evaluation, the robot platform (as shown in Figure 1A) is deployed in the Jing-Wu Garden (as shown in Figure 10) inside the campus of Northwestern Polytechnical University, Xi’an, Shaanxi, China. The tested paths cover both paved walking trails and unpaved surfaces (mostly lawn).

Figure 10.

Jing-Wu Garden, inside the campus of Northwestern Polytechnical University, Xi’an, Shaanxi, China, where the navigation of the robot platform was tested.

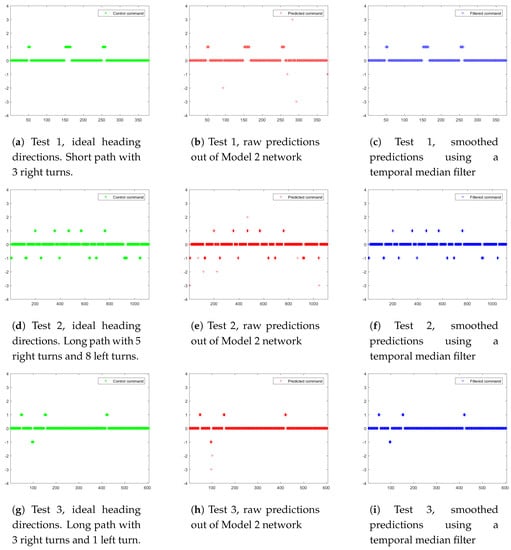

3 separate tests (Test 1 is shown in Figure 11a–c and Figure 12a–c. Test 2 is shown in Figure 11d–f and Figure 12d–f. Test 3 is shown in Figure 11g–i and Figure 12g–i) are conducted under various road conditions in 3 phases:

Figure 11.

Robot navigation heading directions in 3 separate tests. X-axis: frame index; Y-axis: heading direction choices (0, , , , positive values for right turns and negative values for left turns). (a,d,g) are manually labeled ideal heading directions; (b,e,h) are corresponding raw predictions from the proposed “Model 2” network; (c,f,i) are smoothed predictions using a temporal median filter of size 3.

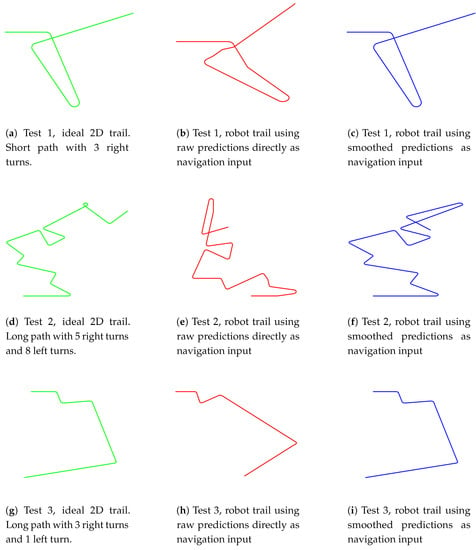

Figure 12.

Robot navigation 2D trails in 3 separate tests. (a,d,g) are manually selected ideal drive trails; (b,e,h) are trails with raw network predictions as navigation inputs; (c,f,i) are trails with smoothed network predictions as navigation inputs.

- Training data collection (illustrated in Figure 11a,d,g and Figure 12a,d,g): the robot platform is manually controlled to drive along 3 pre-defined paths multiple times, and the collected spherical images with synthesized optimal heading directions (detailed in Section 4.4) are used for “Model 2” network training.

- Navigation with smoothed network predictions (illustrated in Figure 11c,f,i and Figure 12c,f,i): the robot platform is deployed at the starting point of each trail and autonomously navigates along the path with smoothed network predictions as inputs. The smoothing is carried out with a temporal median filter of size 3.

The ideal heading directions and ideal two-dimensional trails are shown in Figure 11a,d,g and Figure 12a,d,g, respectively. A total of 7 heading direction choices are available at each frame, with 0 for straight forward, positive values for right turns and negative values for left turns. While navigating through corners, a series of consecutive small turning maneuvers (multiple and heading directions in Figure 11 and Figure 12) are preferred over sharp turns, allowing more training samples to be collected during these maneuvering frames.

Figure 11b,e,h show the raw predictions of the “Model 2” network. Overall, the vast majority of predictions are accurate for the straight forward (heading direction 0) sequences, while small portions of turning maneuvers are overestimated (with predicted heading directions and ). This could arise from the ambiguity between consecutive small turns and a single sharp turn, which achieve an identical drive trail. In addition, there are only very subtle appearance differences during the limited number of frames in which turning maneuvers are made, which could result in confusion. In conjunction with the sporadic appearance of pedestrians, this confusion could lead to spurious heading directions with excessive values ( and ). To remedy the situation, the temporal coherence of heading directions needs to be addressed. Empirically, a naive temporal median filter with window size 3 is effective enough to remove most spurious results, as shown in Figure 11c,f,i.
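A temporal median filter of window size 3 over a sequence of predicted class indices can be sketched as below. The sketch uses a centered window with truncated edges, which is an assumption: the text does not state whether the filter is centered or causal, and an onboard deployment would need a causal variant that only looks at past frames. The function name is hypothetical.

```python
from statistics import median_low


def temporal_median_filter(labels, window=3):
    """Smooth a sequence of class indices with a centered median filter.

    Edge windows are truncated. `median_low` guarantees that the output is
    always one of the observed labels, even for even-length edge windows.
    """
    half = window // 2
    smoothed = []
    for i in range(len(labels)):
        lo = max(0, i - half)
        hi = min(len(labels), i + half + 1)
        smoothed.append(median_low(labels[lo:hi]))
    return smoothed


if __name__ == "__main__":
    # A single-frame spike (3) is removed; a sustained turn (1, 1, 1) is kept.
    raw = [0, 0, 3, 0, 0, 1, 1, 1]
    print(temporal_median_filter(raw))
```

With a window of 3, any spurious prediction that lasts a single frame is replaced by its neighbors, while a change that persists for two or more frames passes through unchanged.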

Figure 12 shows the 2D trails corresponding to Figure 11. A few overestimated turning maneuvers in Figure 11b,e,h lead to wildly different trails (Figure 12b,e,h) from the ideal ones (Figure 12a,d,g). However, the median-filter smoothing remedy is highly successful in Figure 12c,i, with only Figure 12f showing an obvious difference from Figure 12d towards the end of Test 2. A demonstration video is available online (Video demo: https://www.youtube.com/watch?v=4ZjnVOa8cKA).
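The sensitivity of a 2D trail to a single wrong turn can be illustrated with a simple dead-reckoning sketch. It assumes that each per-frame heading choice is a relative turn applied before the platform advances by a fixed step; this motion model, the step length and the function name are hypothetical simplifications and are not the procedure used to produce Figure 12.

```python
import math


def integrate_trail(turns_deg, step=1.0):
    """Accumulate relative turns into a 2D trail starting at the origin.

    Heading 0 points along +y; positive turns are to the right (+x).
    """
    x, y, heading = 0.0, 0.0, 0.0
    trail = [(x, y)]
    for turn in turns_deg:
        heading += math.radians(turn)
        x += step * math.sin(heading)
        y += step * math.cos(heading)
        trail.append((x, y))
    return trail


def endpoint_gap(turns_a, turns_b, step=1.0):
    """Distance between the final positions of two turn sequences."""
    ax, ay = integrate_trail(turns_a, step)[-1]
    bx, by = integrate_trail(turns_b, step)[-1]
    return math.hypot(ax - bx, ay - by)


if __name__ == "__main__":
    ideal = [0, 0, 0, 0]
    spurious = [0, 90, 0, 0]  # one overestimated turn in a straight run
    print(integrate_trail(ideal)[-1])
    print(integrate_trail(spurious)[-1])
    print(endpoint_gap(ideal, spurious))
```

Because turns accumulate, one spurious command changes the direction of every later step, which is why isolated prediction errors can produce trails that diverge substantially from the ideal ones.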

6. Conclusions

In this paper, a Convolutional Neural Network-based robot navigation framework is proposed to address the drawbacks in conventional algorithms, such as intense computational complexity in the testing phase and difficulty in collecting high quality labels in the training phase. The robot navigation task is formulated as a series of classification problems based on uncalibrated spherical images. The unique design of training data preparation eliminates time-consuming calibration and rectilinear correction processes, and enables automatic generation of an arbitrary number of negative training samples for better performance.

One potential direction for improvement is the incorporation of temporal information via Recurrent Neural Networks (RNNs) or Long Short-Term Memory networks (LSTMs). In addition, there are multiple related problems for future research, such as indoor navigation and off-road collision avoidance. The source code of the proposed methods and the Spherical-Navi dataset are available for download on our project web page (Project page: https://hijeffery.github.io/PanoNavi/).

Acknowledgments

This work is supported by the National Natural Science Foundation of China (No. 61672429, No. 61272288, No. 61231016), The National High Technology Research and Development Program of China (863 Program) (No. 2015AA016402), ShenZhen Science and Technology Foundation (JCYJ20160229172932237), Northwestern Polytechnical University (NPU) New AoXiang Star (No. G2015KY0301), Fundamental Research Funds for the Central Universities (No. 3102015AX007), NPU New People and Direction (No. 13GH014604).

Author Contributions

L.R. and T.Y. conceived and designed the experiments and analysed the data; L.R. wrote the manuscript; Y.Z., and Q.Z. analysed the data and updated the manuscript.

Conflicts of Interest

The authors declare no conflict of interest. The founding sponsors had no role in the design of the study; in the collection, analyses, or interpretation of data; in the writing of the manuscript, and in the decision to publish the results.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Meaning |
|---|---|
| CNN | Convolutional Neural Networks |
| SLAM | Simultaneous Localization And Mapping |

References

- Davison, A.J.; Reid, I.D.; Molton, N.D.; Stasse, O. MonoSLAM: Real-time single camera SLAM. IEEE Trans. Pattern Anal. Mach. Intell. 2007, 29, 1052–1067. [Google Scholar] [CrossRef] [PubMed]

- Klein, G.; Murray, D. Parallel tracking and mapping for small AR workspaces. In Proceedings of the 2007 6th IEEE and ACM International Symposium on Mixed and Augmented Reality, Nara, Japan, 13–16 November 2007; pp. 225–234. [Google Scholar]

- Cummins, M.; Newman, P. FAB-MAP: Probabilistic localization and mapping in the space of appearance. Int. J. Robot. Res. 2008, 27, 647–665. [Google Scholar] [CrossRef]

- Newcombe, R.A.; Lovegrove, S.J.; Davison, A.J. DTAM: Dense tracking and mapping in real-time. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Barcelona, Spain, 6–13 November 2011; pp. 2320–2327. [Google Scholar]

- Newcombe, R.A.; Izadi, S.; Hilliges, O.; Molyneaux, D.; Kim, D.; Davison, A.J.; Kohi, P.; Shotton, J.; Hodges, S.; Fitzgibbon, A. KinectFusion: Real-time dense surface mapping and tracking. In Proceedings of the 10th IEEE International Symposium on Mixed and Augmented Reality (ISMAR), Basel, Switzerland, 26–29 October 2011; pp. 127–136. [Google Scholar]

- Caruso, D.; Engel, J.; Cremers, D. Large-Scale Direct SLAM for Omnidirectional Cameras. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Hamburg, Germany, 28 September–2 October 2015. [Google Scholar]

- Hillel, A.B.; Lerner, R.; Levi, D.; Raz, G. Recent progress in road and lane detection: A survey. Mach. Vis. Appl. 2014, 25, 727–745. [Google Scholar] [CrossRef]

- Liang, X.; Wang, H.; Chen, W.; Guo, D.; Liu, T. Adaptive Image-Based Trajectory Tracking Control of Wheeled Mobile Robots With an Uncalibrated Fixed Camera. IEEE Trans. Control Syst. Technol. 2015, 23, 2266–2282. [Google Scholar] [CrossRef]

- Lu, K.; Li, J.; An, X.; He, H. Vision sensor-based road detection for field robot navigation. Sensors 2015, 15, 29594–29617. [Google Scholar] [CrossRef] [PubMed]

- Chang, C.K.; Siagian, C.; Itti, L. Mobile robot vision navigation & localization using gist and saliency. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Taipei, Taiwan, 18–22 October 2010; pp. 4147–4154. [Google Scholar]

- Pomerleau, D.A. Efficient training of artificial neural networks for autonomous navigation. Neural Comput. 1991, 3, 88–97. [Google Scholar] [CrossRef]

- Hadsell, R.; Sermanet, P.; Ben, J.; Erkan, A.; Scoffier, M.; Kavukcuoglu, K.; Muller, U.; LeCun, Y. Learning long-range vision for autonomous off-road driving. J. Field Robot. 2009, 26, 120–144. [Google Scholar] [CrossRef]

- Giusti, A.; Guzzi, J.; Ciresan, D.; He, F.L.; Rodriguez, J.P.; Fontana, F.; Faessler, M.; Forster, C.; Schmidhuber, J.; Di Caro, G.; et al. A Machine Learning Approach to Visual Perception of Forest Trails for Mobile Robots. IEEE Robot. Autom. Lett. 2016, 1, 661–667. [Google Scholar] [CrossRef]

- Ran, L.; Zhang, Y.; Yang, T.; Zhang, P. Autonomous Wheeled Robot Navigation with Uncalibrated Spherical Images. In Chinese Conference on Intelligent Visual Surveillance; Springer: Singapore, 2016; pp. 47–55. [Google Scholar]

- Zhang, Q.; Hua, G. Multi-View Visual Recognition of Imperfect Testing Data. In Proceedings of the 23rd Annual ACM Conference on Multimedia Conference, Brisbane, Australia, 26–30 October 2015; pp. 561–570. [Google Scholar]

- Zhang, Q.; Hua, G.; Liu, W.; Liu, Z.; Zhang, Z. Auxiliary Training Information Assisted Visual Recognition. IPSJ Trans. Comput. Vis. Appl. 2015, 7, 138–150. [Google Scholar] [CrossRef]

- Zhang, Q.; Hua, G.; Liu, W.; Liu, Z.; Zhang, Z. Can Visual Recognition Benefit from Auxiliary Information in Training? In Computer Vision—ACCV 2014; Springer: Singapore, 1–5 November 2014; Volume 9003, pp. 65–80. [Google Scholar]

- Abeida, H.; Zhang, Q.; Li, J.; Merabtine, N. Iterative sparse asymptotic minimum variance based approaches for array processing. IEEE Trans. Signal Process. 2013, 61, 933–944.

- Zhang, Q.; Abeida, H.; Xue, M.; Rowe, W.; Li, J. Fast implementation of sparse iterative covariance-based estimation for source localization. J. Acoust. Soc. Am. 2012, 131, 1249–1259.

- Zhang, Q.; Abeida, H.; Xue, M.; Rowe, W.; Li, J. Fast implementation of sparse iterative covariance-based estimation for array processing. In Proceedings of the 2011 Conference Record of the Forty Fifth Asilomar Conference on Signals, Systems and Computers (ASILOMAR), Pacific Grove, CA, USA, 6–9 November 2011; pp. 2031–2035.

- Sermanet, P.; Eigen, D.; Zhang, X.; Mathieu, M.; Fergus, R.; LeCun, Y. Overfeat: Integrated recognition, localization and detection using convolutional networks. arXiv 2014.

- Girshick, R.; Donahue, J.; Darrell, T.; Malik, J. Region-based convolutional networks for accurate object detection and segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2016, 38, 142–158.

- Wang, L.; Ouyang, W.; Wang, X.; Lu, H. STCT: Sequentially Training Convolutional Networks for Visual Tracking. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 26 June–1 July 2016.

- Nam, H.; Han, B. Learning Multi-Domain Convolutional Neural Networks for Visual Tracking. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 26 June–1 July 2016.

- Ran, L.; Zhang, Y.; Hua, G. CANNET: Context aware nonlocal convolutional networks for semantic image segmentation. In Proceedings of the IEEE International Conference on Image Processing (ICIP), Quebec City, QC, Canada, 27–30 September 2015; pp. 4669–4673.

- Chen, L.C.; Barron, J.T.; Papandreou, G.; Murphy, K.; Yuille, A.L. Semantic Image Segmentation with Task-Specific Edge Detection Using CNNs and a Discriminatively Trained Domain Transform. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 26 June–1 July 2016.

- Hu, W.; Huang, Y.Y.; Wei, L.; Zhang, F.; Li, H.C. Deep Convolutional Neural Networks for Hyperspectral Image Classification. J. Sens. 2015, 2015.

- Ran, L.; Zhang, Y.; Wei, W.; Yang, T. Bands Sensitive Convolutional Network for Hyperspectral Image Classification. In Proceedings of the International Conference on Internet Multimedia Computing and Service, Xi’an, China, 19–21 August 2016; pp. 268–272.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet classification with deep convolutional neural networks. In Proceedings of the 25th International Conference on Neural Information Processing Systems, Lake Tahoe, NV, USA, 3–8 December 2012; pp. 1097–1105.

- Russakovsky, O.; Deng, J.; Su, H.; Krause, J.; Satheesh, S.; Ma, S.; Huang, Z.; Karpathy, A.; Khosla, A.; Bernstein, M.; et al. ImageNet large scale visual recognition challenge. Int. J. Comput. Vis. 2015, 115, 211–252.

- Srivastava, N.; Hinton, G.; Krizhevsky, A.; Sutskever, I.; Salakhutdinov, R. Dropout: A simple way to prevent neural networks from overfitting. J. Mach. Learn. Res. 2014, 15, 1929–1958.

- Lin, M.; Chen, Q.; Yan, S. Network In Network. In Proceedings of the International Conference on Learning Representations (ICLR), Banff, AB, Canada, 14–16 April 2014.

- Li, S. Full-view spherical image camera. In Proceedings of the 18th International Conference on Pattern Recognition (ICPR’06), Hong Kong, China, 20–24 August 2006; Volume 4, pp. 386–390.

- Makadia, A.; Sorgi, L.; Daniilidis, K. Rotation estimation from spherical images. In Proceedings of the 2004 17th International Conference on Pattern Recognition, Cambridge, UK, 26 August 2004; Volume 3, pp. 590–593.

- Makadia, A.; Daniilidis, K. Rotation recovery from spherical images without correspondences. IEEE Trans. Pattern Anal. Mach. Intell. 2006, 28, 1170–1175.

- Bazin, J.C.; Demonceaux, C.; Vasseur, P.; Kweon, I. Rotation estimation and vanishing point extraction by omnidirectional vision in urban environment. Int. J. Robot. Res. 2012, 31, 63–81.

- Szegedy, C.; Liu, W.; Jia, Y.; Sermanet, P.; Reed, S.; Anguelov, D.; Erhan, D.; Vanhoucke, V.; Rabinovich, A. Going deeper with convolutions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 1–9.

- Zintgraf, L.M.; Cohen, T.S.; Adel, T.; Welling, M. Visualizing Deep Neural Network Decisions: Prediction Difference Analysis. arXiv 2017.

- Nair, V.; Hinton, G.E. Rectified linear units improve restricted Boltzmann machines. In Proceedings of the International Conference on Machine Learning (ICML), Haifa, Israel, 21–24 June 2010; pp. 807–814.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Delving deep into rectifiers: Surpassing human-level performance on ImageNet classification. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Santiago, Chile, 7–13 December 2015; pp. 1026–1034.

- Ioffe, S.; Szegedy, C. Batch Normalization: Accelerating Deep Network Training by Reducing Internal Covariate Shift. In Proceedings of the International Conference on Machine Learning (ICML), Lille, France, 6–11 July 2015; pp. 448–456.

- Duchi, J.; Hazan, E.; Singer, Y. Adaptive subgradient methods for online learning and stochastic optimization. J. Mach. Learn. Res. 2011, 12, 2121–2159.

- Bottou, L. Large-scale machine learning with stochastic gradient descent. In Proceedings of COMPSTAT’2010; Springer: Paris, France, 2010; pp. 177–186.

- Ma, Z.; Liao, R.; Tao, X.; Xu, L.; Jia, J.; Wu, E. Handling motion blur in multi-frame super-resolution. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 5224–5232.

- Torralba, A.; Efros, A.A. Unbiased look at dataset bias. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Colorado Springs, CO, USA, 20–25 June 2011; pp. 1521–1528.

- Collobert, R.; Kavukcuoglu, K.; Farabet, C. Torch7: A Matlab-like environment for machine learning. In Proceedings of the BigLearn, NIPS Workshop, Granada, Spain, 12–17 December 2011.

- Chang, C.C.; Lin, C.J. LIBSVM: A library for support vector machines. ACM Trans. Intell. Syst. Technol. 2011, 2, 27.

- Stehman, S.V. Selecting and interpreting measures of thematic classification accuracy. Remote Sens. Environ. 1997, 62, 77–89.

- Fawcett, T. An introduction to ROC analysis. Pattern Recognit. Lett. 2006, 27, 861–874.

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).