Activity Recognition and Semantic Description for Indoor Mobile Localization

Abstract

1. Introduction

2. Related Works

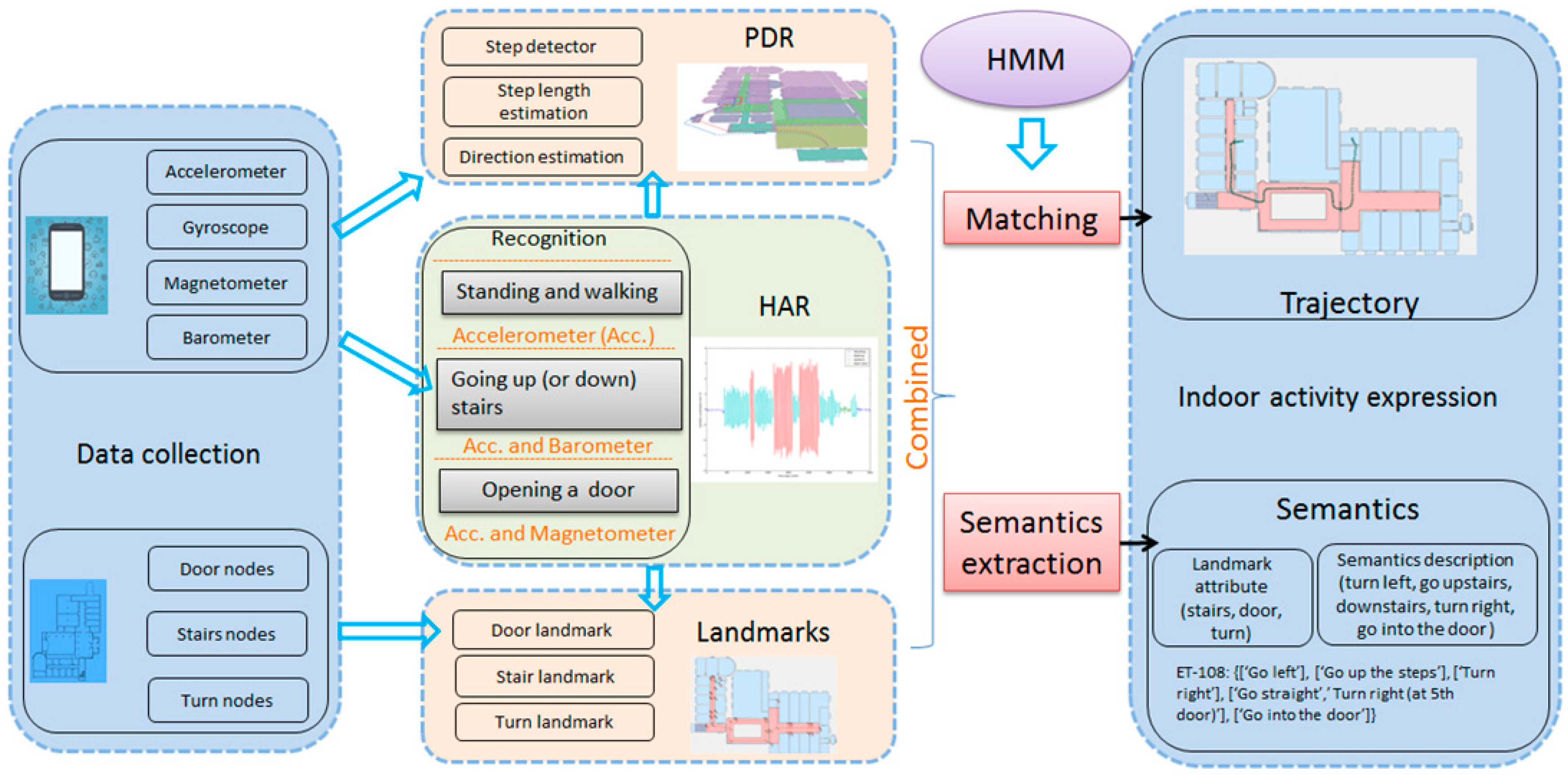

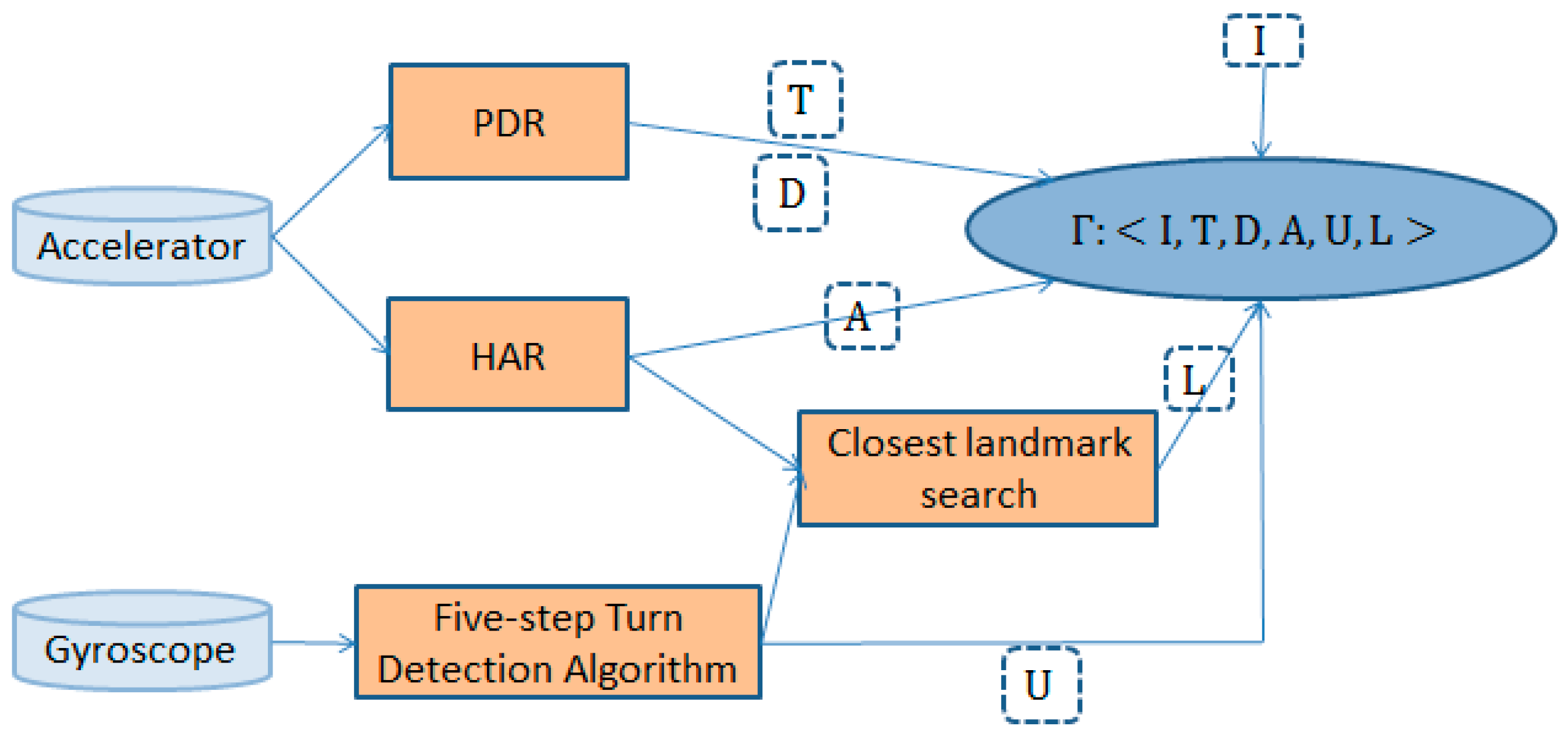

3. Methods

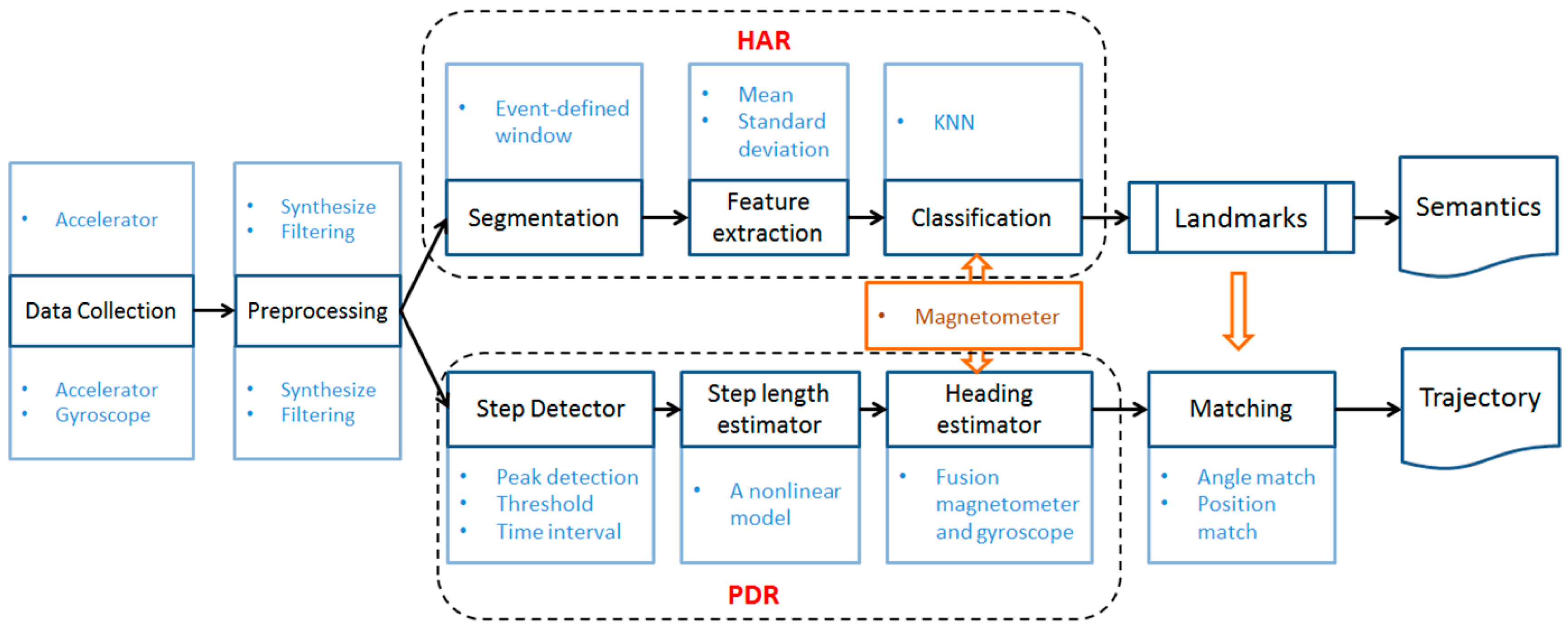

3.1. Location Estimation and Activity Recognition

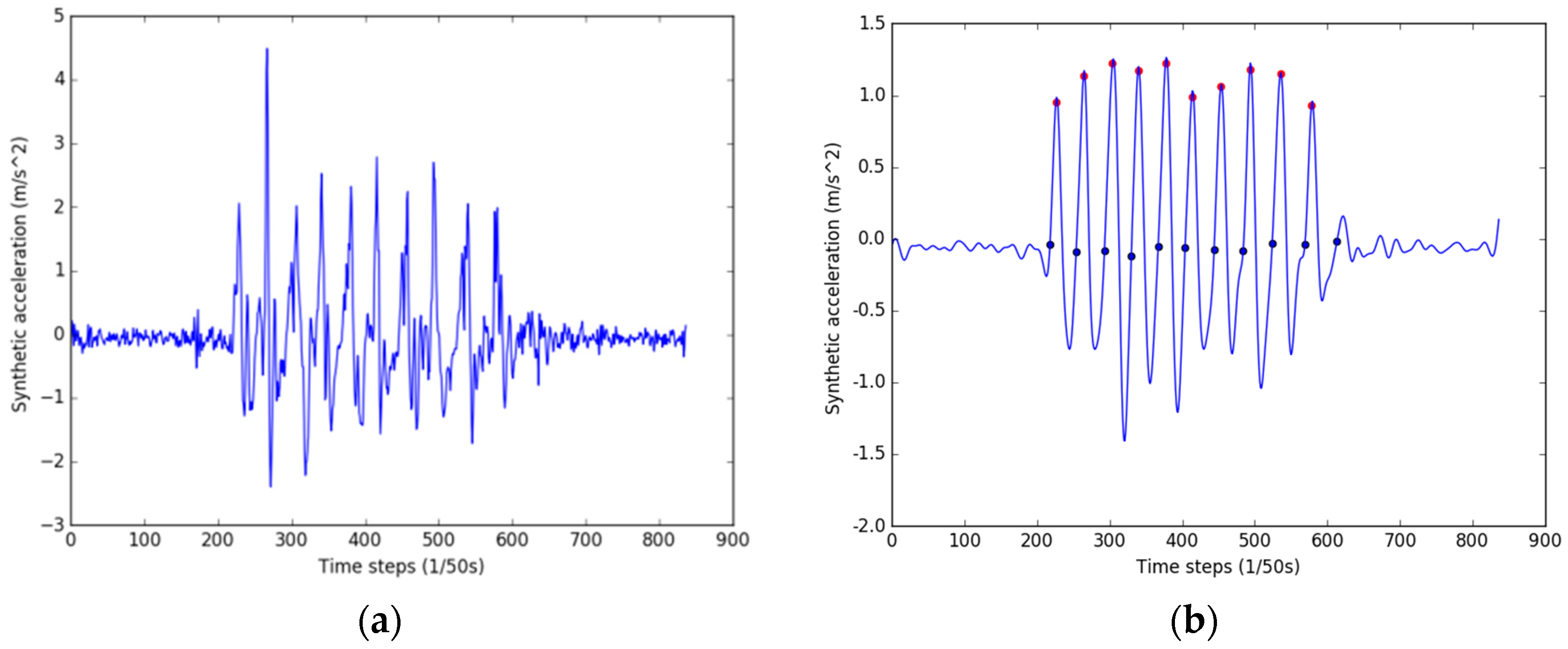

3.1.1. Landmark-Based PDR

- The sample is a local maximum of the signal and is larger than a given threshold.

- The time between two consecutive detected peaks is greater than the minimum step period .

- According to human walking posture, the start of a step is the zero-crossing point before the peak.
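The three conditions above can be sketched in Python as follows. This is a minimal illustration, not the authors' implementation: it assumes the input is a gravity-removed acceleration signal that oscillates around zero, and the names `detect_steps`, `peak_threshold` and `min_step_period` are hypothetical.

```python
from typing import List, Sequence, Tuple


def detect_steps(
    signal: Sequence[float],
    times: Sequence[float],
    peak_threshold: float,
    min_step_period: float,
) -> List[Tuple[int, int]]:
    """Return one (start_index, peak_index) pair per detected step."""
    steps: List[Tuple[int, int]] = []
    last_peak_time = None
    for i in range(1, len(signal) - 1):
        # Condition 1: a local maximum that exceeds the threshold.
        is_local_max = signal[i] > signal[i - 1] and signal[i] >= signal[i + 1]
        if not is_local_max or signal[i] <= peak_threshold:
            continue
        # Condition 2: enough time has passed since the previous accepted peak.
        if last_peak_time is not None and times[i] - last_peak_time <= min_step_period:
            continue
        # Condition 3: walk back to the last non-positive sample before the
        # peak, taken here as the zero crossing that starts the step.
        start = i
        while start > 0 and signal[start] > 0:
            start -= 1
        steps.append((start, i))
        last_peak_time = times[i]
    return steps
```

Each returned pair gives the sample where the step starts and the sample of its peak, so step duration and step count both follow directly from the result.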

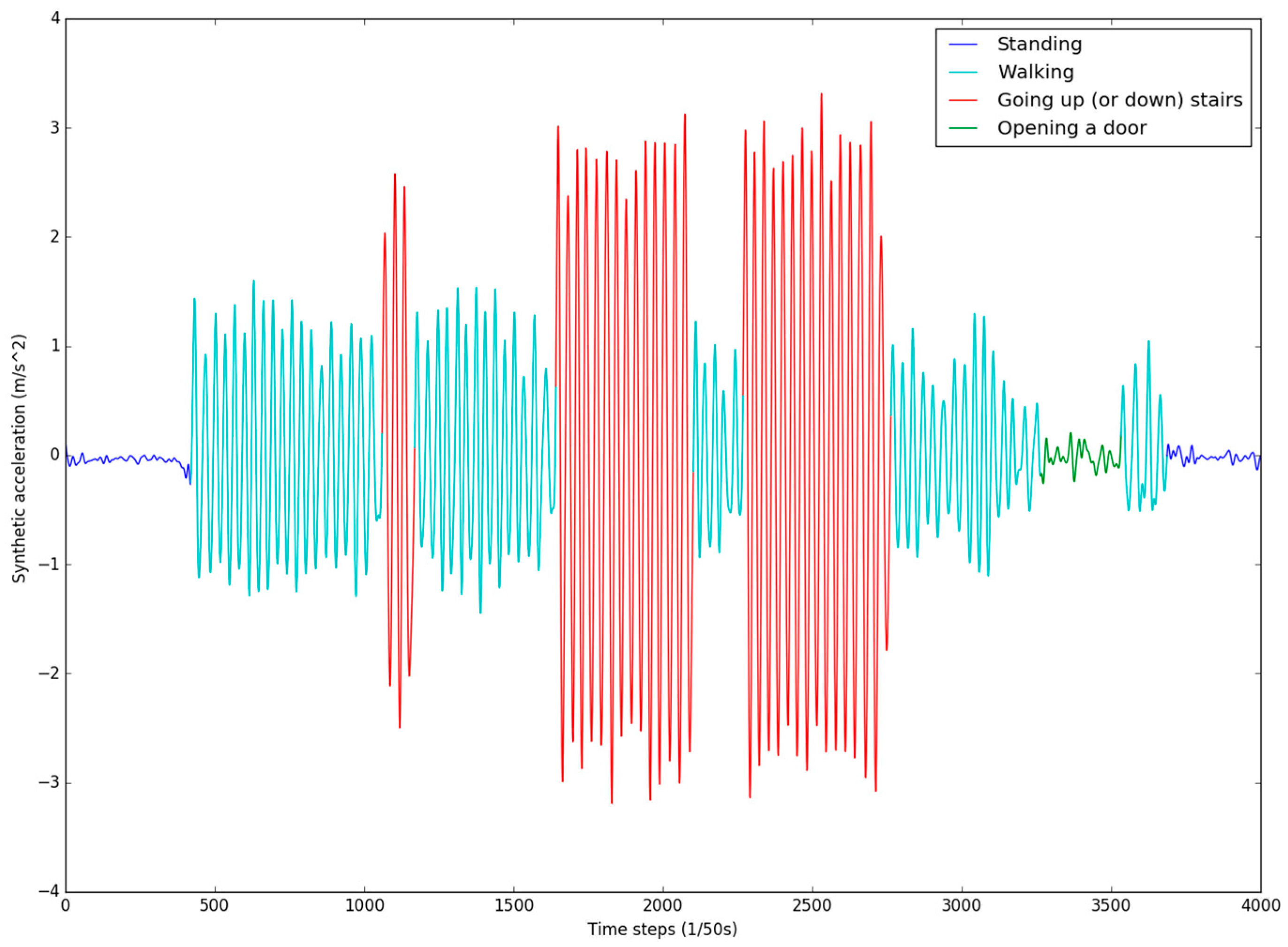

3.1.2. Multiple Sensor-Assisted HAR

| Algorithm 1. KNN. | |
|---|---|
| Input: | Samples that need to be categorized; the known (sample, label) pairs |
| Output: | Prediction classification |
| 1: | for every sample in the dataset to be predicted do |
| 2: | calculate the distance between each known sample and the current sample |
| 3: | sort the distances in increasing order |
| 4: | select the k known samples with the smallest distances to the current sample |
| 5: | find the majority class of the k samples |
| 6: | return the majority class as the prediction classification |
| 7: | end for |
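A minimal Python sketch of the KNN procedure in Algorithm 1 follows. The Euclidean distance metric and the function name `knn_predict` are assumptions made for illustration; the feature vectors and labels in any real use would come from the HAR sensor windows.

```python
import math
from collections import Counter
from typing import Hashable, List, Sequence


def knn_predict(
    samples: Sequence[Sequence[float]],
    train_x: Sequence[Sequence[float]],
    train_y: Sequence[Hashable],
    k: int,
) -> List[Hashable]:
    """Classify each sample by majority vote among its k nearest known samples."""
    predictions = []
    for sample in samples:
        # Distance from the current sample to every known sample.
        distances = [(math.dist(sample, x), y) for x, y in zip(train_x, train_y)]
        # Sort in increasing order and keep the k nearest labels.
        distances.sort(key=lambda pair: pair[0])
        nearest_labels = [label for _, label in distances[:k]]
        # The majority class among the k neighbours is the prediction.
        predictions.append(Counter(nearest_labels).most_common(1)[0][0])
    return predictions
```

Sorting every distance keeps the sketch close to the listed steps; for large training sets a partial selection of the k smallest distances would avoid the full sort.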

3.1.3. The Hidden Markov Model

- (1) N represents the hidden states in the model, between which transitions can occur. The hidden states in the HMM are landmark nodes in the indoor environment, such as a door, stairs or a turning point.

- (2) M indicates the observations of each hidden state, which are the user’s direction selection (east, south, west or north) and the activity result from HAR.

- (3) A and B denote the transition probability and the emission probability, respectively. The pedestrian moves indoors from one node to another, and once the direction of the current state is determined, the set of reachable nodes shrinks. To reduce the algorithm’s complexity, A and B are combined into a single transition probability set whose four elements represent the transition probabilities of the different directions.

- (4) π is the initial state distribution. The magnetometer and barometer provide direction and altitude information when the user starts recording, which helps to reduce the number of candidate nodes in the initial environment. If the starting point is unknown, every node is given the same initial probability.
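As a rough illustration of item (4), the initial distribution could be built as below. The function name `initial_distribution` and the idea of passing the magnetometer and barometer result as a pre-filtered `candidates` set are assumptions of this sketch, not details taken from the paper.

```python
from typing import Dict, Iterable, Optional, Sequence


def initial_distribution(
    nodes: Sequence[str],
    candidates: Optional[Iterable[str]] = None,
) -> Dict[str, float]:
    """Spread the initial probability uniformly over the candidate start nodes.

    `candidates` stands for the nodes that remain plausible after the initial
    direction and floor have been checked; None means the start is unknown.
    """
    allowed = set(nodes) if candidates is None else set(candidates) & set(nodes)
    if not allowed:
        # No usable hint: fall back to the same probability for every node.
        allowed = set(nodes)
    p = 1.0 / len(allowed)
    return {node: (p if node in allowed else 0.0) for node in nodes}
```

With no hint every node receives the same probability; with a hint the probability mass is concentrated on the remaining candidates.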

| Algorithm 2. Improved Viterbi algorithm. | |
|---|---|
| Input: | The proposed HMM tuple; HAR classification results; PDR distance information; initial direction from the magnetometer; initial pressure from the barometer; the distance threshold. |
| Output: | Prediction trajectory. |
| 1: | , /* Determine the initial orientation and floor */ |
| 2: | for from 1 to do |
| 3: | for each path pass through to do |
| 4: | if ((Distance(), )) - )<) and (>0) then /* Determine whether the distance between two landmark nodes coincides with the distance information estimated by PDR */ |
| 5: | Obtain the subset data |
| 6: | end if |
| 7: | end for |
| 8: | end for |
| 9: | for in do |
| 10: | Obtain the landmark data set |
| 11: | if match with HAR data then |
| 12: | Add this trajectory to the final trajectory data set |
| 13: | end for |
| 14: | return Max() /* Return the trajectory of the maximum probability */ |
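The two stages of Algorithm 2 (pruning candidate paths with the PDR distance, then keeping only paths whose landmarks agree with the HAR result) can be sketched as follows. This is a simplified illustration under stated assumptions, not the authors' algorithm: the graph encoding, the `Edge` record and the function names `candidate_paths` and `best_trajectory` are all hypothetical.

```python
from typing import Dict, List, Optional, Sequence, Tuple

# Hypothetical edge record: (neighbour, direction, length in metres, transition probability).
Edge = Tuple[str, str, float, float]
Path = Tuple[List[str], float]


def candidate_paths(
    edges: Dict[str, List[Edge]],
    start_nodes: Sequence[str],
    directions: Sequence[str],
    pdr_distances: Sequence[float],
    dist_threshold: float,
) -> List[Path]:
    """Extend paths one observation at a time, pruning by direction and distance."""
    paths: List[Path] = [([node], 1.0) for node in start_nodes]
    for direction, walked in zip(directions, pdr_distances):
        extended: List[Path] = []
        for nodes, prob in paths:
            for neighbour, edge_dir, length, trans_prob in edges.get(nodes[-1], []):
                # Keep the edge only if the landmark-to-landmark distance
                # agrees with the PDR estimate and the transition is possible.
                if (
                    edge_dir == direction
                    and trans_prob > 0
                    and abs(length - walked) < dist_threshold
                ):
                    extended.append((nodes + [neighbour], prob * trans_prob))
        paths = extended
    return paths


def best_trajectory(
    paths: Sequence[Path],
    node_types: Dict[str, str],
    har_landmarks: Sequence[str],
) -> Optional[List[str]]:
    """Return the most probable path whose landmark types match the HAR result."""
    matching = [
        (nodes, prob)
        for nodes, prob in paths
        if [node_types[n] for n in nodes[1:]] == list(har_landmarks)
    ]
    if not matching:
        return None
    return max(matching, key=lambda item: item[1])[0]
```

Pruning at every observation keeps the number of live paths small, which is the point of combining the distance check with the direction observation before the HAR comparison is made.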

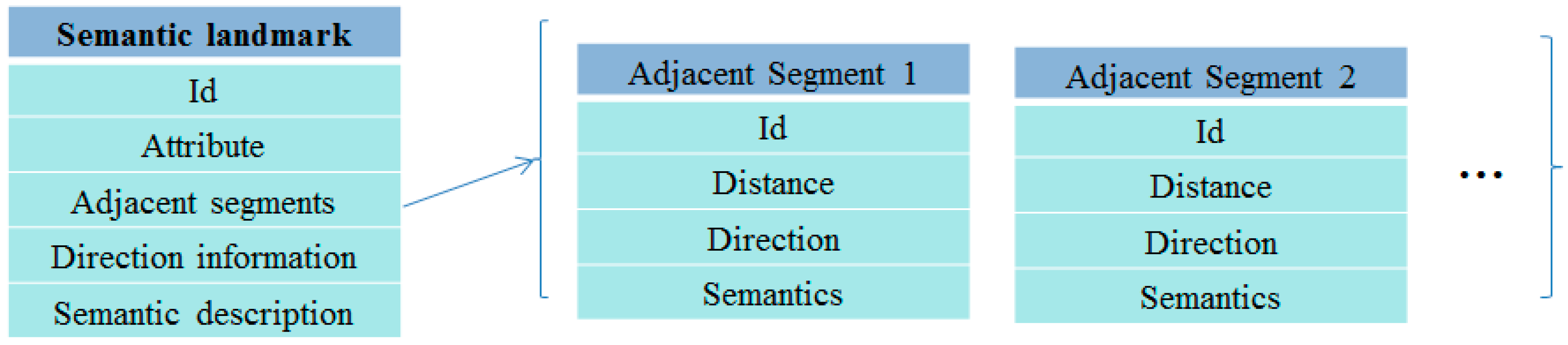

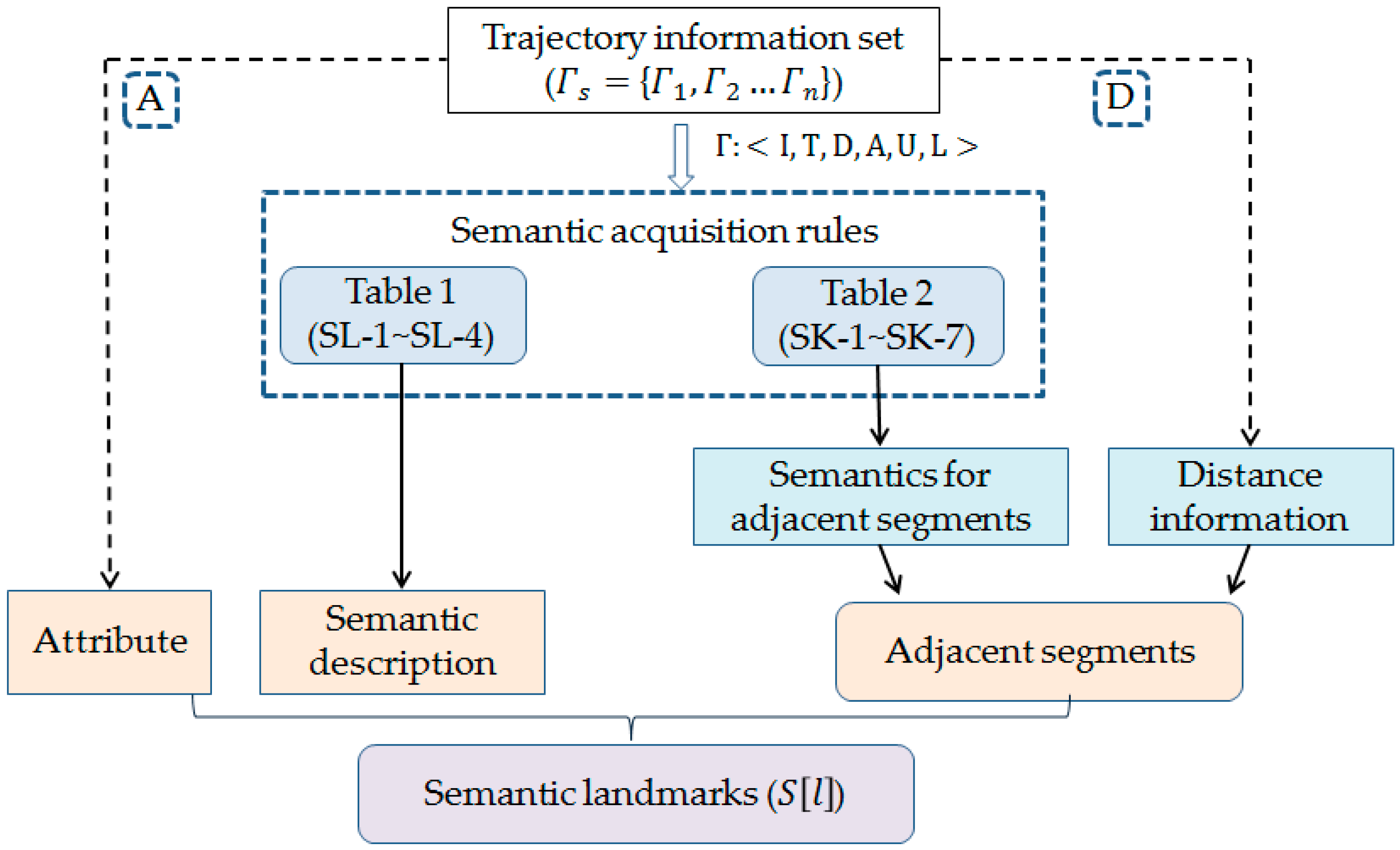

3.2. Semantic Landmark Model

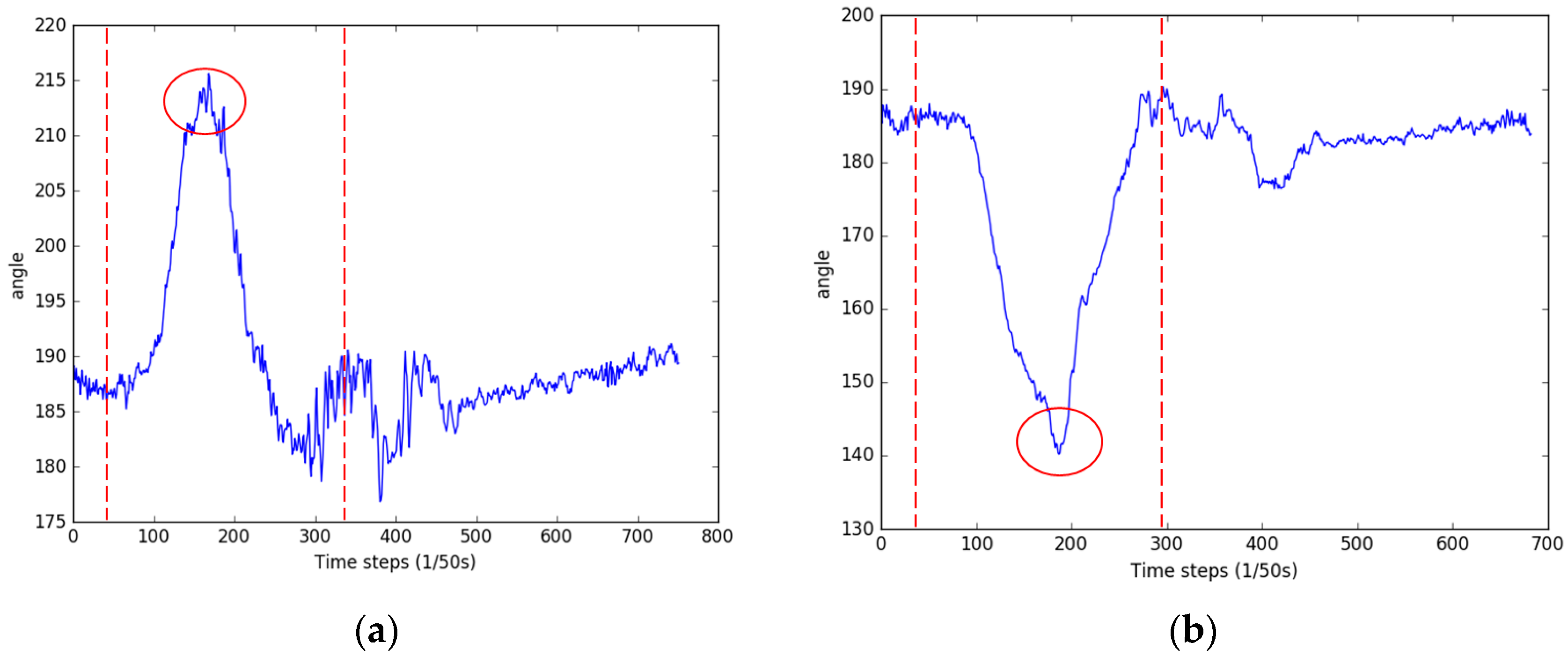

3.2.1. Trajectory Information Collection

| Algorithm 3. Five-step turn detection algorithm. | |
|---|---|
| Input: | Angle value sequence |
| Output: | Direction change list. |
| 1: | Findpeaks() /* Find the local maximum sequence */ |
| 2: | for in do |
| 3: | if ( or ) and (>2) then |
| 4: | = |
| 5: | if () then |
| 6: | |
| 7: | else if () then |
| 8: | |
| 9: | else if () then |
| 10: | |
| 11: | else if () then |
| 12: | |
| 13: | else if () then |
| 14: | |
| 15: | end for |
| 16: | return |
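The branch conditions of Algorithm 3 did not survive in this copy, so the following sketch only illustrates the general idea of mapping a heading change onto the five direction labels used in this paper (‘Go left’, ‘Go right’, ‘Turn left’, ‘Turn right’, ‘Turn around’). The angle thresholds, the clockwise-positive sign convention and the function names are assumptions chosen for illustration and are not the paper's values.

```python
from typing import Optional


def heading_change(before_deg: float, after_deg: float) -> float:
    """Signed heading change wrapped into [-180, 180); positive means clockwise."""
    return (after_deg - before_deg + 180.0) % 360.0 - 180.0


def classify_turn(
    delta_deg: float,
    min_change: float = 20.0,    # assumed: smaller changes are ignored
    turn_angle: float = 60.0,    # assumed: boundary between 'Go' and 'Turn'
    around_angle: float = 150.0, # assumed: boundary for 'Turn around'
) -> Optional[str]:
    """Map a signed heading change to one of the five direction labels."""
    size = abs(delta_deg)
    if size < min_change:
        return None
    if size >= around_angle:
        return "Turn around"
    side = "right" if delta_deg > 0 else "left"
    verb = "Turn" if size >= turn_angle else "Go"
    return f"{verb} {side}"
```

Wrapping the difference before classifying avoids a false ‘Turn around’ when the heading passes through north, for example from 350° to 80°.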

- If a going up (or down) stairs activity is detected, the nearest stairs landmark is added to L.

- If a direction change activity (see Algorithm 3) is detected, the nearest turn landmark is added to L.

- If a door-opening activity is detected, the nearest door landmark is added to L.
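The three rules above amount to a lookup from activity to landmark type followed by a nearest-neighbour search. A minimal sketch is given below; the 2D coordinates, the dictionary layout of `landmarks` and the function names are assumptions made for illustration.

```python
import math
from typing import Dict, List, Optional, Tuple

# Assumed mapping from a detected activity to the landmark type it implies.
ACTIVITY_TO_LANDMARK_TYPE = {
    "Going up (or down) stairs": "stairs",
    "Direction change": "turn",
    "Opening a door": "door",
}

Landmarks = Dict[str, Tuple[str, Tuple[float, float]]]  # id -> (type, (x, y))


def nearest_landmark(
    position: Tuple[float, float], landmarks: Landmarks, landmark_type: str
) -> Optional[str]:
    """Return the id of the closest landmark of the requested type, if any."""
    candidates = [
        (math.dist(position, xy), landmark_id)
        for landmark_id, (kind, xy) in landmarks.items()
        if kind == landmark_type
    ]
    return min(candidates)[1] if candidates else None


def update_landmark_list(
    L: List[str], activity: str, position: Tuple[float, float], landmarks: Landmarks
) -> List[str]:
    """Append the nearest matching landmark to L when the activity implies one."""
    landmark_type = ACTIVITY_TO_LANDMARK_TYPE.get(activity)
    if landmark_type is not None:
        landmark_id = nearest_landmark(position, landmarks, landmark_type)
        if landmark_id is not None:
            L.append(landmark_id)
    return L
```

Activities that imply no landmark, such as plain walking, leave L unchanged.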

3.2.2. Semantics Extraction

- If the current landmark’s adjacent segments contain multiple turn or door landmarks with the same semantics, sort them by distance and then attach order information to each one (for example, ‘at 1st door’ or ‘at 2nd door’).
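The ordering rule above can be sketched as follows. The label format mirrors entries such as ‘Turn left (at 1st door)’ that appear in the adjacent-segment data of this paper; the function names and the tuple layout of `segments` are assumptions of this sketch.

```python
from collections import Counter
from typing import List, Sequence, Tuple


def ordinal(n: int) -> str:
    """Return 1st, 2nd, 3rd, 4th, ... for a positive integer."""
    if 10 <= n % 100 <= 20:
        suffix = "th"
    else:
        suffix = {1: "st", 2: "nd", 3: "rd"}.get(n % 10, "th")
    return f"{n}{suffix}"


def add_order_information(
    segments: Sequence[Tuple[str, float]], landmark_word: str = "door"
) -> List[Tuple[str, float]]:
    """Sort (semantics, distance) pairs by distance and number repeated semantics."""
    totals = Counter(semantics for semantics, _ in segments)
    seen: Counter = Counter()
    labelled = []
    for semantics, distance in sorted(segments, key=lambda item: item[1]):
        if totals[semantics] > 1:
            # Only semantics shared by several landmarks need disambiguating.
            seen[semantics] += 1
            label = f"{semantics} (at {ordinal(seen[semantics])} {landmark_word})"
        else:
            label = semantics
        labelled.append((label, distance))
    return labelled
```

Semantics that occur only once are left as they are, since the order information is needed only to tell apart landmarks that would otherwise share a description.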

4. Experiment

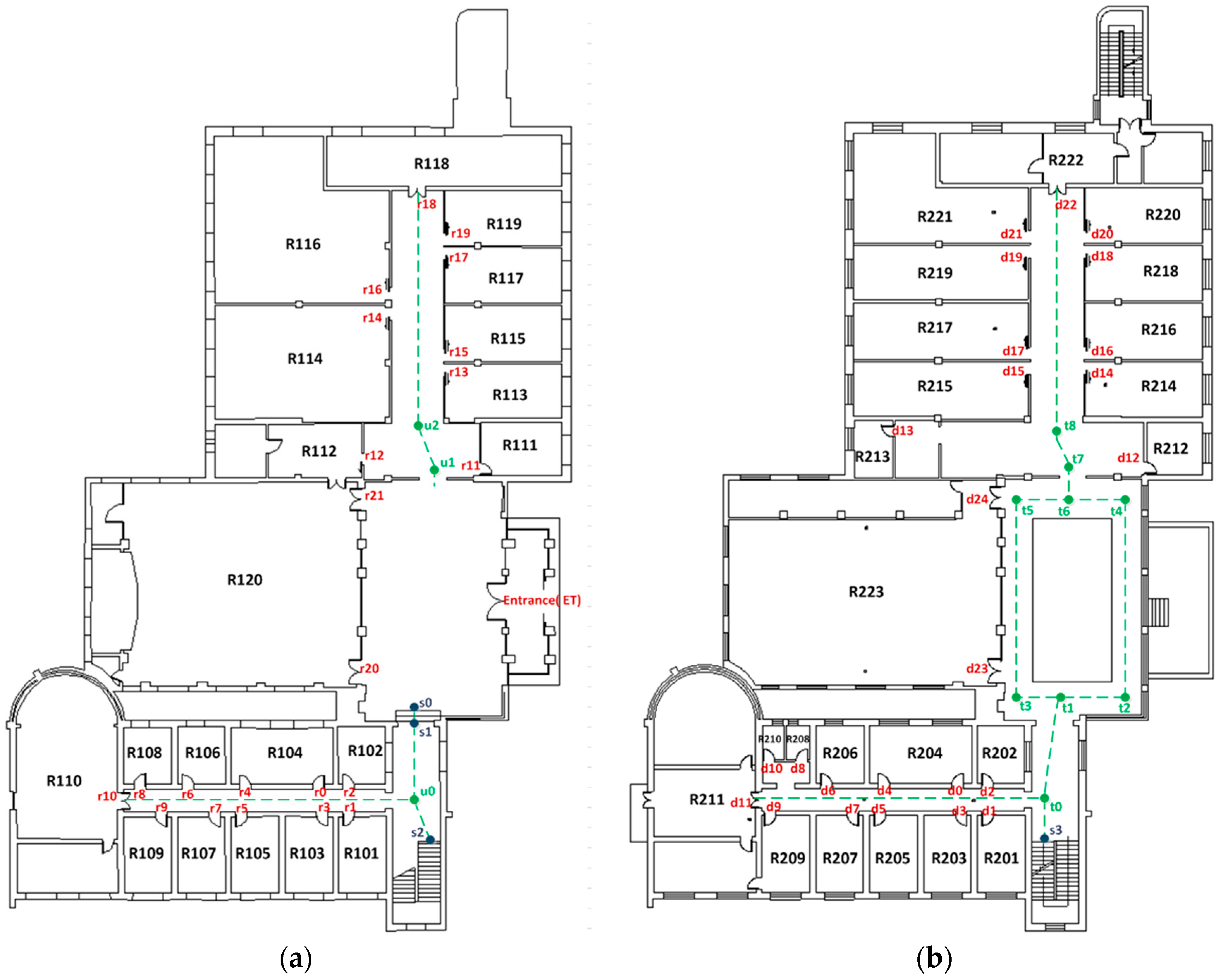

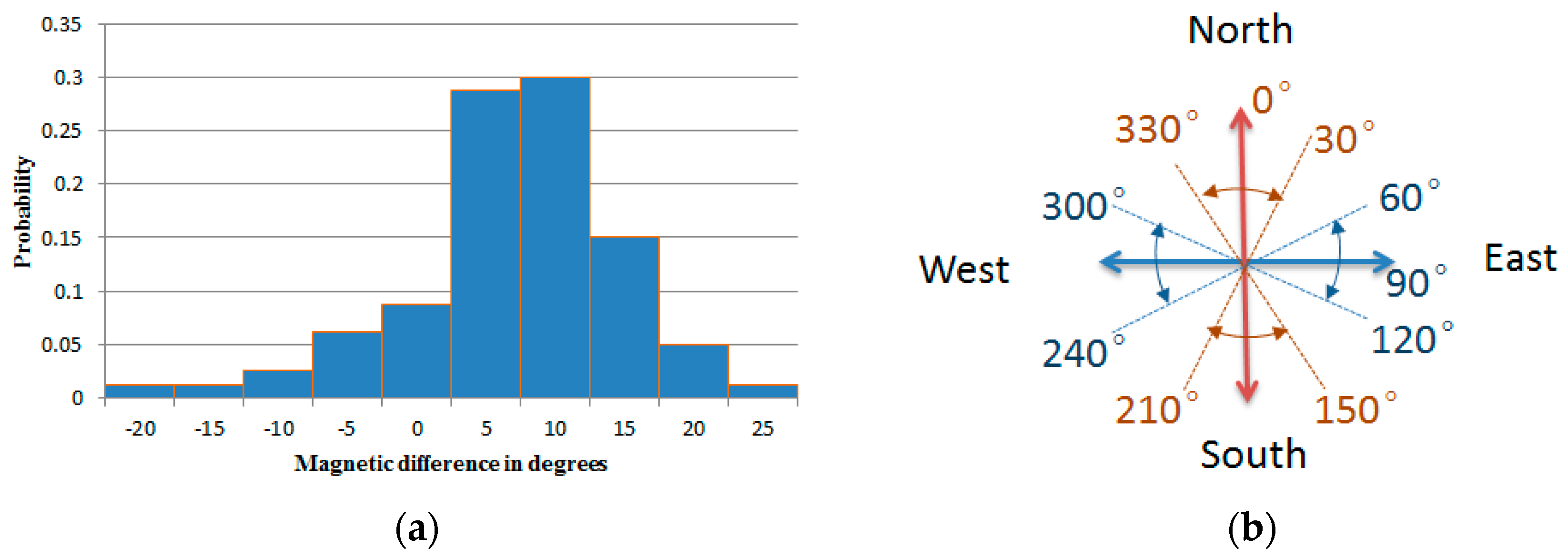

4.1. Pre-Knowledge

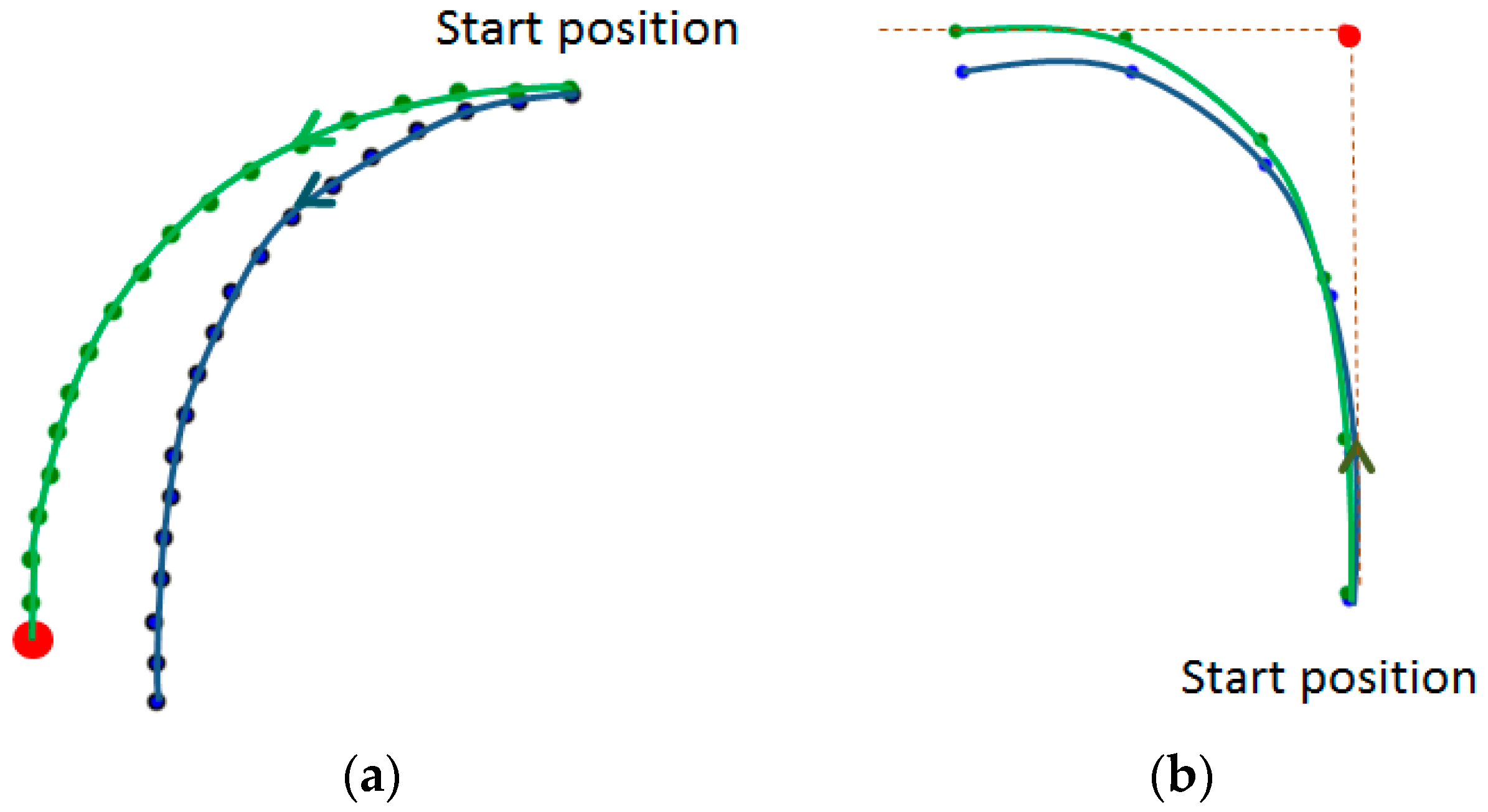

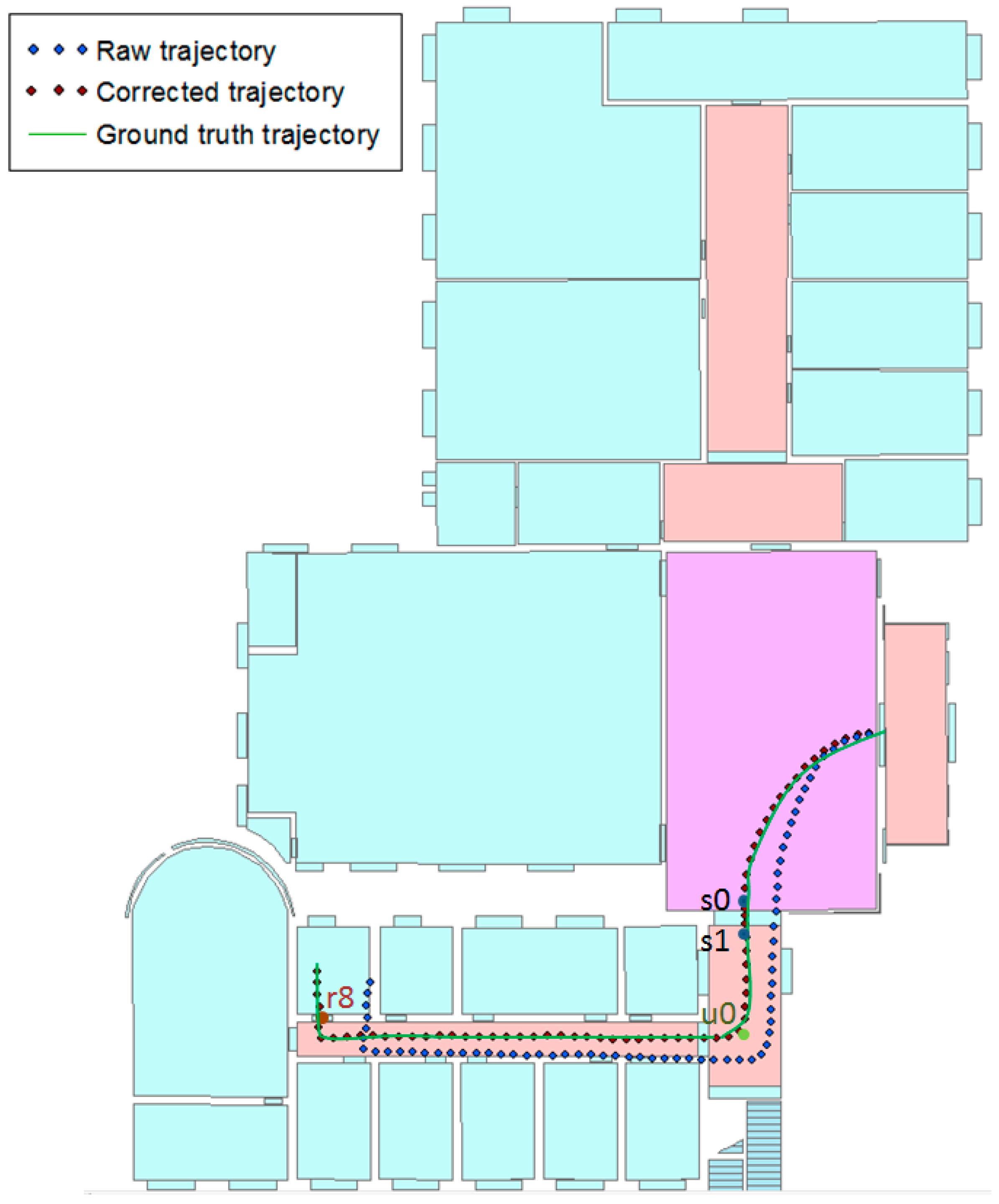

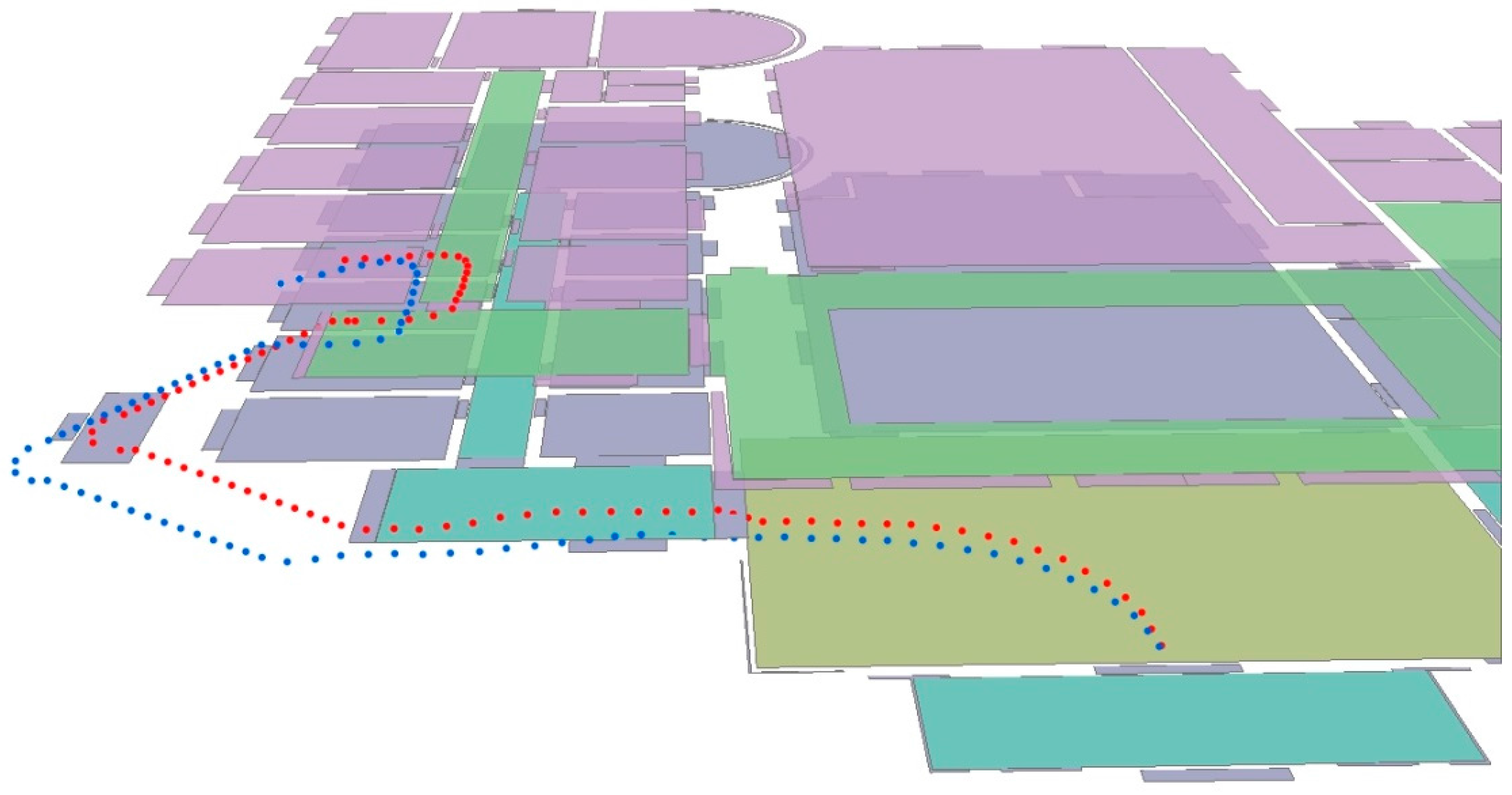

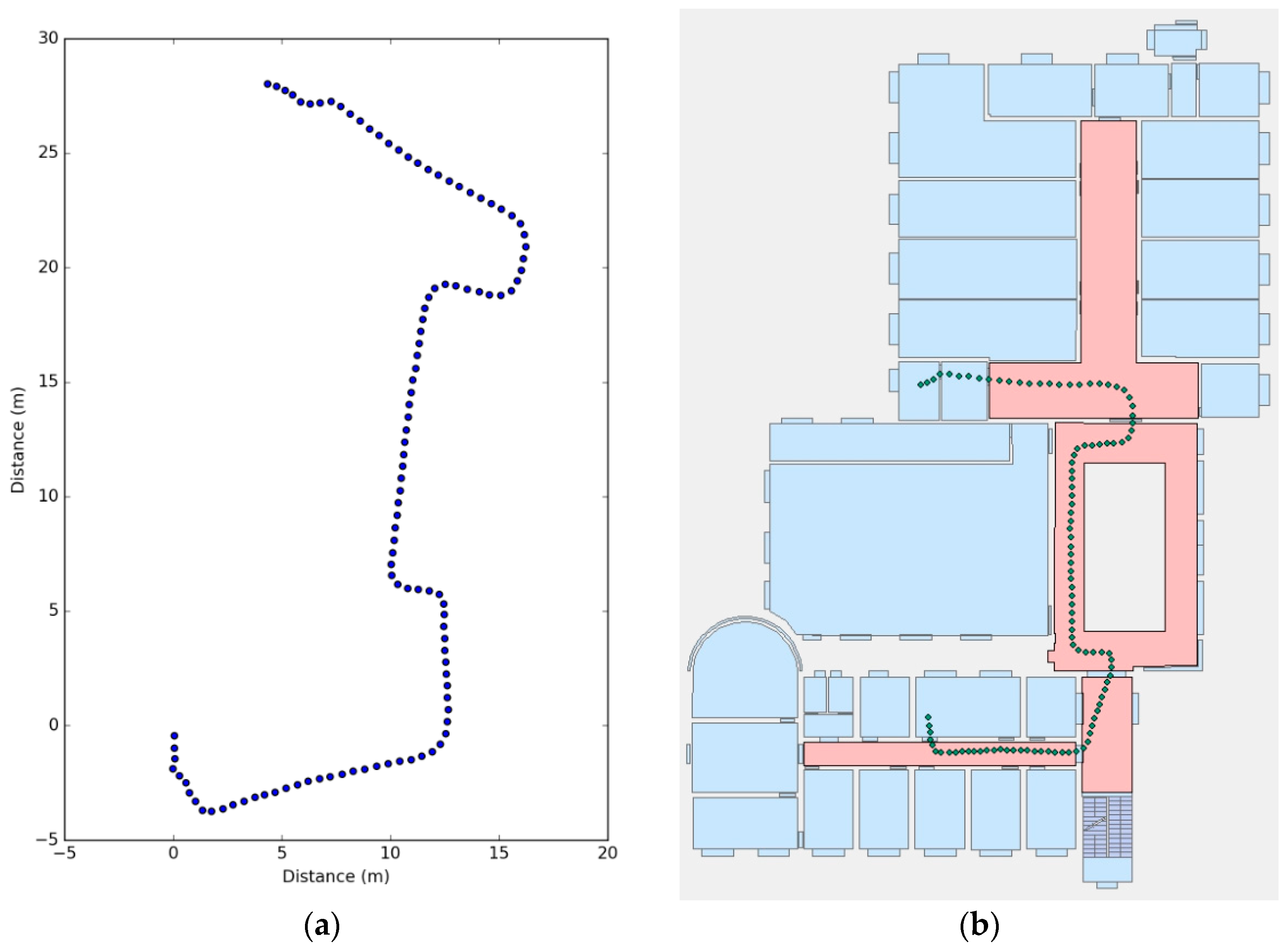

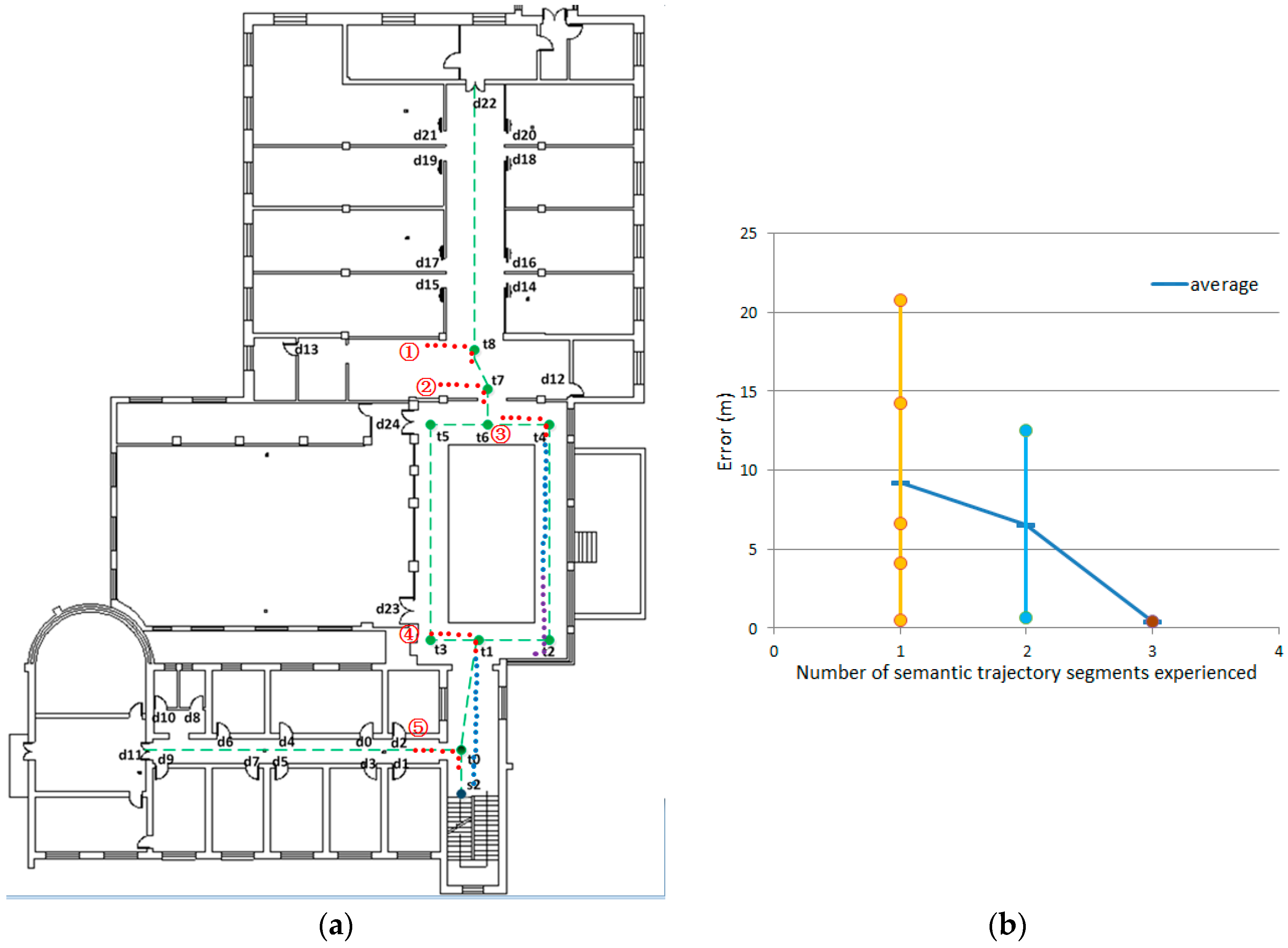

4.2. Trajectory Generation and Correction

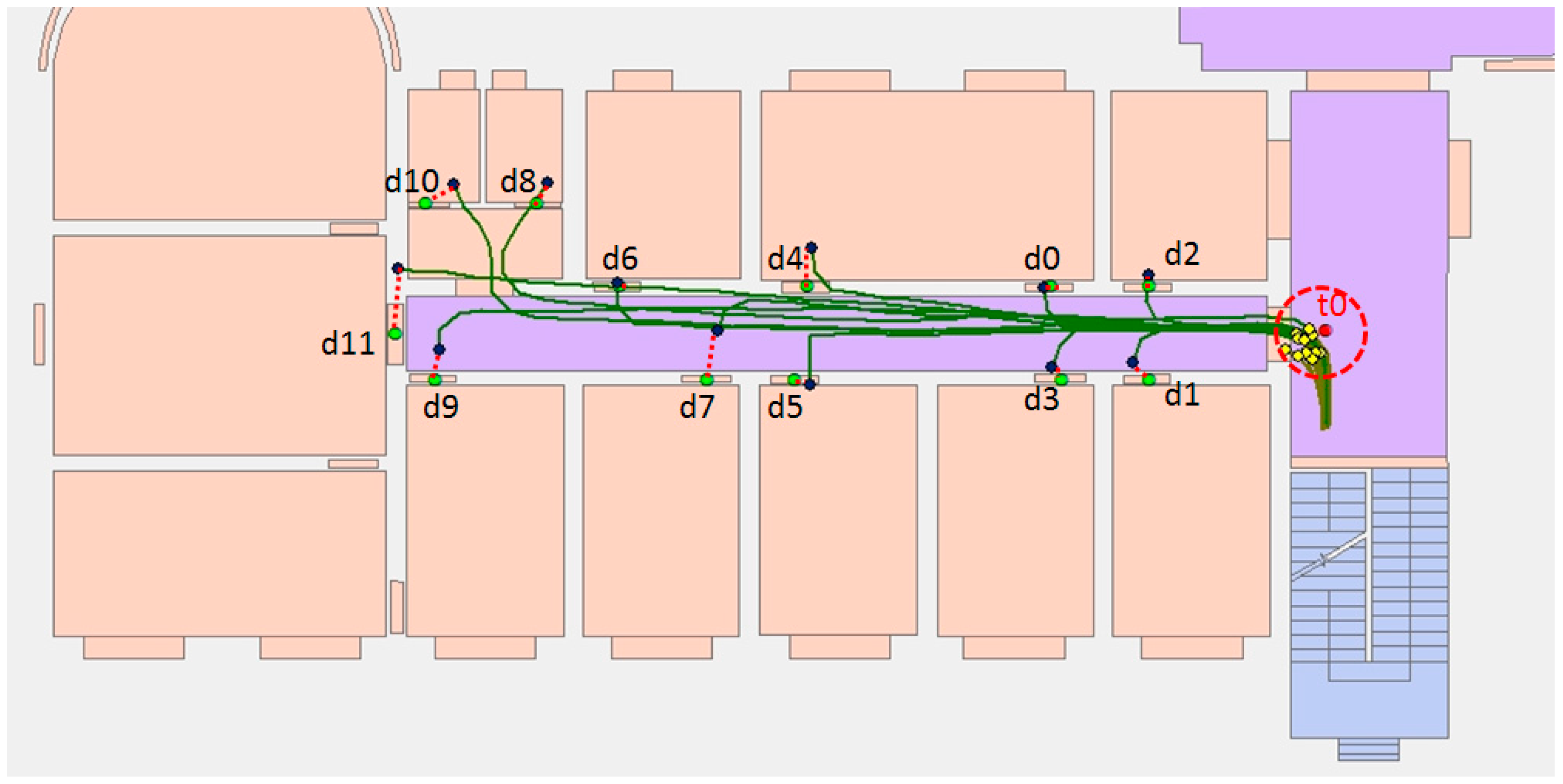

4.3. Semantics Extraction

5. Discussion

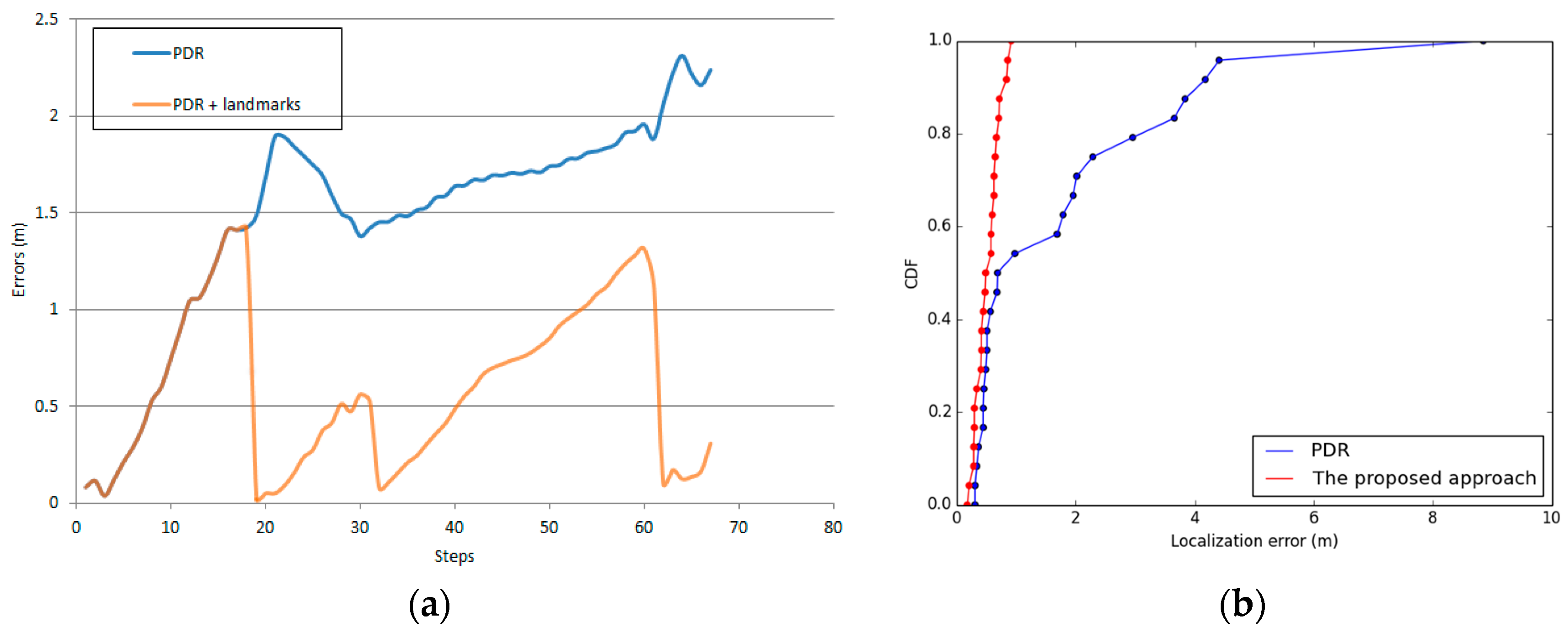

5.1. Error Analysis

5.1.1. HAR Classification Error

5.1.2. Localization Error

5.1.3. Landmark Matching Errors

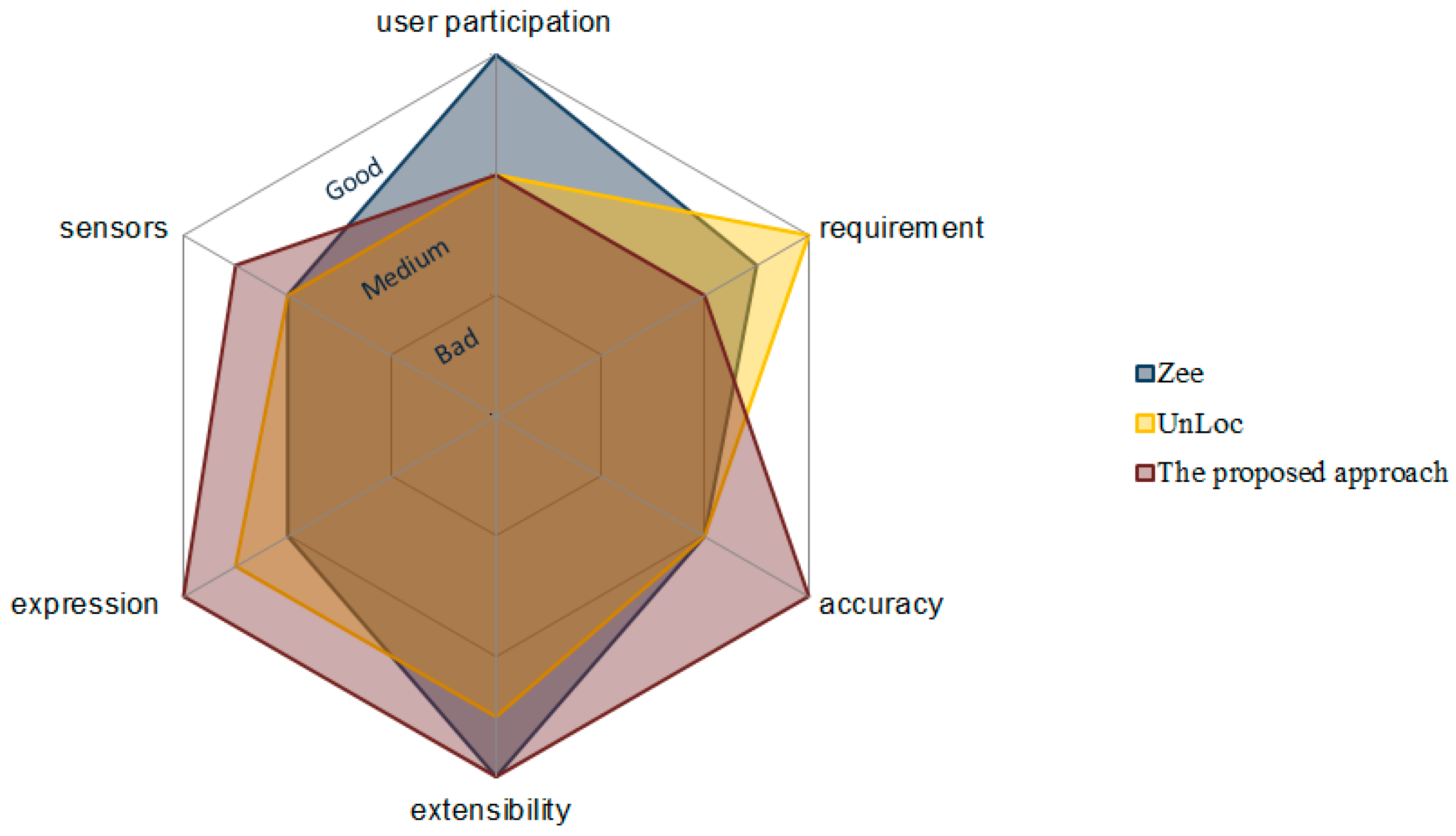

5.2. Comprehensive Comparison

5.3. Computational Complexity

6. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

| Id | Conditions (C) | Semantics (S) |
|---|---|---|
| SL-1 | = ‘Walking’, Detected () and Find () | ‘Go left’, ‘Go right’, ‘Turn left’, ‘Turn right’, ‘Turn around’ |
| SL-2 | = ‘Opening a door’, Find () and and | ‘Opening a door’ |
| SL-3 | = ‘Opening a door’, Find () and | ‘Go into the door’ |
| SL-4 | = ‘Opening a door’, Find () and | ‘Go out of the door’ |

| Id | Conditions (C) | Semantics (S) |
|---|---|---|
| SK-1 | = ‘Standing’, Detected () | ‘Turn left’, ‘Turn right’, ‘Turn around’ |
| SK-2 | = ‘Walking’, Detected () and Unfound () | ‘Go left’, ‘Go right’, ‘Turn left’, ‘Turn right’, ‘Turn around’ |
| SK-3 | = ‘Walking’, Undetected (), > | ‘Go straight’ |
| SK-4 | = ‘Going up (or down) stairs’, Find () and < , < 5 s | ‘Go up the steps’ |
| SK-5 | = ‘Going up (or down) stairs’, Find () and > , < 5 s | ‘Go down the steps’ |
| SK-6 | = ‘Going up (or down) stairs’, Find () and < , > 5 s | ‘Go upstairs’ |
| SK-7 | = ‘Going up (or down) stairs’, Find () and > , > 5 s | ‘Go downstairs’ |

| Floor | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | Average (hPa) |
|---|---|---|---|---|---|---|---|---|---|
| f1 | 1020.91 | 1020.92 | 1020.92 | 1020.90 | 1020.88 | 1020.86 | 1020.85 | 1020.87 | B(f1) = 1020.89 |
| f2 | 1020.32 | 1020.34 | 1020.34 | 1020.33 | 1020.29 | 1020.30 | 1020.33 | 1020.32 | B(f2) = 1020.32 |
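The per-floor averages in the table above can be reproduced with a few lines of Python, and a new reading can then be assigned to the floor whose reference pressure is closest. The nearest-reference rule and the function names are assumptions of this sketch; the table itself only gives the eight readings and their averages B(f1) and B(f2).

```python
from statistics import mean
from typing import Dict, List

# Barometer readings in hPa, copied from the table above.
READINGS_HPA: Dict[str, List[float]] = {
    "f1": [1020.91, 1020.92, 1020.92, 1020.90, 1020.88, 1020.86, 1020.85, 1020.87],
    "f2": [1020.32, 1020.34, 1020.34, 1020.33, 1020.29, 1020.30, 1020.33, 1020.32],
}


def floor_references(readings: Dict[str, List[float]]) -> Dict[str, float]:
    """Average the readings of each floor to obtain its reference pressure."""
    return {floor: mean(values) for floor, values in readings.items()}


def nearest_floor(pressure_hpa: float, references: Dict[str, float]) -> str:
    """Assumed rule: pick the floor whose reference pressure is closest."""
    return min(references, key=lambda floor: abs(references[floor] - pressure_hpa))


references = floor_references(READINGS_HPA)
print({floor: round(value, 2) for floor, value in references.items()})
```

The two references differ by roughly 0.57 hPa, which is large compared with the spread of readings within one floor (at most 0.07 hPa in this table).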

| Observation Sequence | Trajectories | Distance and Activities Information | Trajectories after |
|---|---|---|---|
| {‘S’, ‘E’, ‘N’} | , | { = 1.86, = 1.69, = 10.9} { = (s, w, o), = (w), = (w)} | |
| {‘S’, ‘E’, ‘N’, ‘W’} | | = 6.73, = (w) | |
| {‘S’, ‘E’, ‘N’, ‘W’, ‘N’} | | = 2.1, = (w) | |
| {‘S’, ‘E’, ‘N’, ‘W’, ‘N’, ‘E’} | | = 13.73, = (w) | |
| {‘S’, ‘E’, ‘N’, ‘W’, ‘N’, ‘E’, ‘N’} | | = 4.1, = (w) | |
| {‘S’, ‘E’, ‘N’, ‘W’, ‘N’, ‘E’, ‘N’, ‘W’} | , | = 4.1, = (w) | , |
| | | = 10.94, = 2.8 = (w, o, w) | , |

| Landmark | Name | Expression |
|---|---|---|
| Entrance | Id | ET |
| | Attribute | Virtual landmarks |
| | Adjacent segments | {‘’:{‘semantics’: ‘Go left’, ‘distance’: 9.12, ‘direction’: ‘West-South’}} |
| | Direction information | ‘West’ |
| | Semantic description | |
| Stairs s0 | Id | s0 |
| | Attribute | Stairs |
| | Adjacent segments | {‘’:{‘semantics’: ‘Climb the steps’, ‘distance’: 0.99, ‘direction’: ‘South’}} |
| | Direction information | ‘South’ |
| | Semantic description | |
| Stairs s1 | Id | s1 |
| | Attribute | Stairs |
| | Adjacent segments | {‘’:{‘semantics’: , ‘distance’: 5.05, ‘direction’: ‘South’}} |
| | Direction information | ‘South’ |
| | Semantic description | |
| Turn u0 | Id | u0 |
| | Attribute | Turn |
| | Adjacent segments | {‘’:{‘semantics’: [‘Go straight’, ‘Turn right’], ‘distance’: 19.13, ‘direction’: ‘West-North’}} |
| | Direction information | ‘South-West’ |
| | Semantic description | ‘Turn right’ |
| Door r8 | Id | r8 |
| | Attribute | Door |
| | Adjacent segments | {‘’:{‘semantics’: , ‘distance’: 2.03, ‘direction’: ‘North’}} |
| | Direction information | ‘North’ |
| | Semantic description | ‘Go into the door’ |

| Name | Expression |
|---|---|
| Id | u0 |
| Attribute | Turn |
| Adjacent segments | {‘’: {‘semantics’: ‘Turn left (at 1st door)’, ‘distance’: 5.19, ‘direction’: ‘West-South’}, ‘’: {‘semantics’: ‘Turn right (at 1st door)’, ‘distance’: 5.21, ‘direction’: ‘West-North’}, ‘’: {‘semantics’: ‘Turn left (at 2nd door)’, ‘distance’: 6.87, ‘direction’: ‘West-South’}, ‘’: {‘semantics’: ‘Turn right (at 2nd door)’, ‘distance’: 7.19, ‘direction’: ‘West-North’}, ‘’: {‘semantics’: ‘Turn right (at 3rd door)’, ‘distance’: 12.13, ‘direction’: ‘West-North’}, ‘’: {‘semantics’: ‘Turn left (at 3rd door)’, ‘distance’: 12.27, ‘direction’: ‘West-South’}, ‘’: {‘semantics’: ‘Turn left (at 4th door)’, ‘distance’: 14.11, ‘direction’: ‘West-South’}, ‘’: {‘semantics’: ‘Turn right (at 4th door)’, ‘distance’: 15.96, ‘direction’: ‘West-North’}, ‘’: {‘semantics’: ‘Turn left (at 5th door)’, ‘distance’: 17.75, ‘direction’: ‘West-South’}, ‘’: {‘semantics’: ‘Turn right (at 5th door)’, ‘distance’: 19.13, ‘direction’: ‘West-North’}, ‘’: {‘semantics’: ‘Go straight’, ‘distance’: 20.28, ‘direction’: ‘West’}, ‘’: {‘semantics’: ‘Turn left’, ‘distance’: 5.09, ‘direction’: ‘North’}, ‘’: {‘semantics’: ‘Turn right’, ‘distance’: 2.84, ‘direction’: ‘South’}} |
| Direction information | ‘South-West’, ‘North-West’, ‘East-South’, ‘East-North’ |
| Landmark semantic | ‘Turn right’, ‘Turn left’ |

| Classifier | Accuracy (Sliding Windows) | Accuracy (Event-Defined Windows) |
|---|---|---|
| DT | 98.62% | 98.69% |
| SVM | 96.55% | 97.73% |
| KNN | 98.83% | 98.95% |

| Actual Class | Predicted: Standing | Predicted: Walking | Predicted: Going up (or down) Stairs | Predicted: Opening a Door | Accuracy |
|---|---|---|---|---|---|
| Standing | 284 | 0 | 0 | 10 | 96.60% |
| Walking | 0 | 1981 | 3 | 0 | 99.85% |
| Going up (or down) stairs | 0 | 4 | 787 | 0 | 99.49% |
| Opening a door | 3 | 0 | 0 | 56 | 94.92% |
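The per-class accuracies in the last column of the confusion matrix above are the diagonal counts divided by the row totals. The short check below reproduces them from the counts in the table; the variable and function names are illustrative only.

```python
from typing import List

LABELS = ["Standing", "Walking", "Going up (or down) stairs", "Opening a door"]

# Rows are actual classes, columns are predicted classes (same order as LABELS).
CONFUSION = [
    [284, 0, 0, 10],
    [0, 1981, 3, 0],
    [0, 4, 787, 0],
    [3, 0, 0, 56],
]


def per_class_accuracy(matrix: List[List[int]]) -> List[float]:
    """Percentage of each actual class that was predicted correctly."""
    return [round(100.0 * row[i] / sum(row), 2) for i, row in enumerate(matrix)]


for label, accuracy in zip(LABELS, per_class_accuracy(CONFUSION)):
    print(f"{label}: {accuracy:.2f}%")
```

The only confusions are between standing and opening a door, and between walking and going up (or down) stairs, which is why those rows are the ones below 100%.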

| Landmark | Total | Wrong Match | Error Rate |
|---|---|---|---|
| Doors | 24 | 1 | 4.17% |
| Stairs | 96 | 0 | 0% |
| Turns | 63 | 1 | 1.59% |

| Name | Zee | UnLoc | The Proposed Approach |
|---|---|---|---|
| Requirement | Floorplan | A door location | Floorplan, landmarks |
| Sensors | Acc., Gyro., Mag., (Wi-Fi) | Acc., Gyro., Mag., (Wi-Fi) | Acc., Gyro., Mag., Baro. |
| User participation | None | Some | Some |
| Accuracy | 1–2 m | 1–2 m | <1 m |
| Expression | Trajectory | Trajectory | Trajectory, semantic description |
| Extensibility | Wi-Fi RSS distribution | Landmark distribution | Semantic landmark model |

| Trajectory | Trajectory Segment | Semantic | Time Complexity | Numbers 1 |
|---|---|---|---|---|
| Trajectory information | Segment 1 (red points) | ‘Turn right’ (‘East-South’) | O(N) | 5 |
| | Segment 2 (blue points) | ‘Go straight’ (‘South’) | O(7) | 2 |
| | Segment 3 (purple points) | ‘Turn right’ (‘South-West’) | O(3) | 1 |

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Guo, S.; Xiong, H.; Zheng, X.; Zhou, Y. Activity Recognition and Semantic Description for Indoor Mobile Localization. Sensors 2017, 17, 649. https://doi.org/10.3390/s17030649
