1. Introduction

Autonomous driving is the highest level of automation for a vehicle, which means the vehicle can drive itself from a starting point to a destination with no human intervention. The problem can be divided into two separate tasks. The first task is focused on keeping the vehicle moving along a correct path. The second task is the capability to perceive and react to unpredictable dynamic obstacles, like other vehicles, pedestrians, and traffic signalization. This article is focused on the first task and proposes an accurate approach based on low-cost sensors and data fusion, capable of performing autonomous driving.

In order to solve the first task, an autonomous vehicle must be equipped with a set of sensors that allows it to accurately determine its position relative to the road limits. The lateral deviation is the most critical, since it must be on the order of a few centimeters. The most affordable sensor for direct measurement of position, the Global Navigation Satellite System (GNSS) receiver, does not reach this level of accuracy. According to the report [1] of the USA government, which is responsible for the GPS system, the accuracy of the civil GPS system is better than 7.8 m 95% of the time.

There are some special techniques to improve the accuracy of a GNSS system, like Differential GPS (DGPS) [

2], Wide Area Augmentation System (WAAS) [

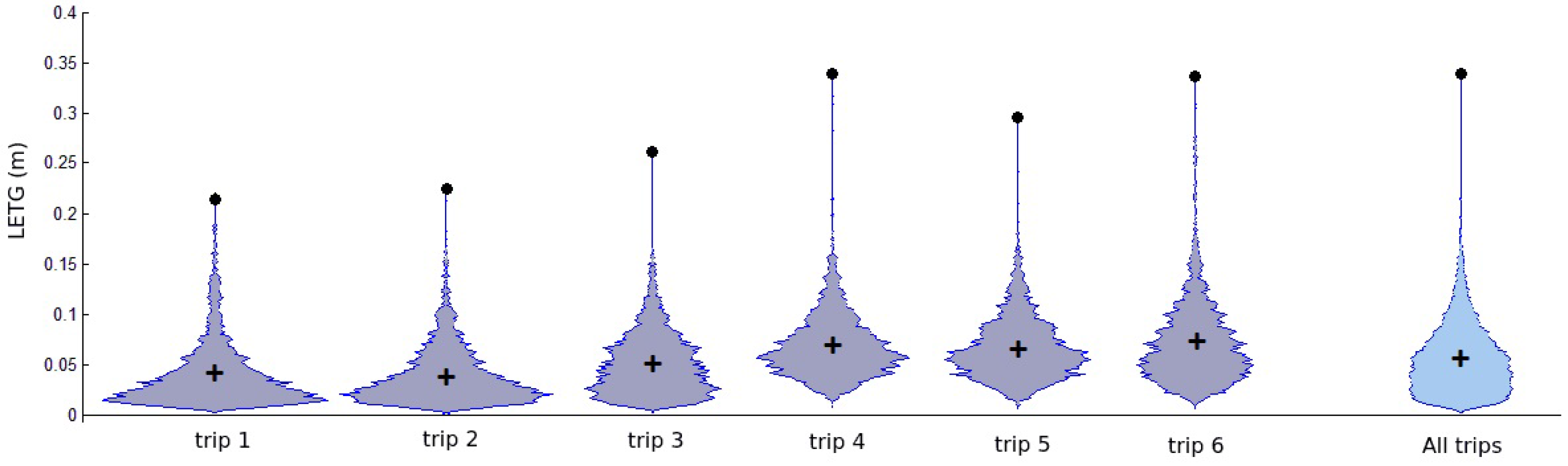

3], Real Time Kinematic (RTK) [

4]. All those techniques use some kind of correction signal, provided by a base station system, that permits the receiver to calculate its global position with an error of up to few centimeters. For example, the WAAS system was developed by the Federal Aviation Administration (FAA) in order to improve the accuracy, integrity, and availability of the positioning measurement to allow an aircraft to operate autonomously (autopilot) in all phases of flight, including precision approach for landing. In the case of autonomous driving based only on GNSS, even the most sophisticated systems fail. The signal condition in urban environment is strongly degraded due to poor sky view, building obstructions or multi-path reflections. Moreover the correction signal, if available, is not effective in such environment because it cannot compensate a particular degradation condition of an small area. Moreover, a simple GNSS receiver can only be used by an autonomous vehicle to measure its approximated initial position after the autonomous vehicle starts up.

To achieve the stringent level of accuracy, integrity, and availability required for autonomous driving applications, outstanding projects [5,6,7,8,9,10,11,12,13] have widely used two kinds of exteroceptive sensors: 3D LASER scanners (LIDAR) [14,15] and cameras. Some of these projects [6,7] also use prior information, for example a map, for comparison with the current sensor readings, which produces a more accurate, robust, and reliable localization. Optionally, these exteroceptive sensors can also be used to detect obstacles, other vehicles, persons, and/or traffic signalization, like traffic lights and signs.

LASER scanners, or laser range finders, are widely employed to detect the road shape or road infrastructure like guard-rails. LASER-based sensors are able to directly measure distances and require less computer processing than vision-based techniques. This kind of sensor emits its own light and does not depend on the environment lighting conditions. Another advantage of the LIDAR is its wide field of detection. Installed on the roof of the vehicle, it covers the whole area around the vehicle so that no other sensor is necessary. For example, the Velodyne HDL-64E LASER range finder has 64 scanning beams that generate a point cloud with hundreds of thousands of points, updated at approximately 10 Hz [16]. LIDARs are being used in several autonomous vehicle prototypes, like the one being tested by Uber [17], and some companies are investing in the development of sensor elements specifically for this type of device [18]. The drawback of this kind of sensor is that it is expensive and needs to be installed on the exterior of a vehicle, often in specific positions, therefore requiring changes in the external shape of the vehicle.

Besides measuring the distance to each detected point, some LIDAR models can give information about the reflectivity of the surface. Some authors [19,20] tested this possibility to recover lane markings painted on the ground, exploiting the different reflectivity between the asphalt and the ink used to paint the lane markings. In [20] a LIDAR sensor was used to measure the reflectivity intensity to detect road markings (lane markings and zebra crossings). This information was used to build a map.

Cameras are very suitable sensors for wide perception of the environment, and are increasingly being used as a lower cost alternative to the LIDAR. They are generally small [21] and easy to integrate on a vehicle without changing its shape [22]. Besides that, with the exception of some devices built to acquire specific spectral ranges, like long or short wavelength infrared, cameras are quite inexpensive equipment. For example, the camera used in our prototype vehicle costs US$ 25.00 in commercial shops. Another interesting point is that images convey a huge amount of information. Cameras are, in fact, able to acquire the texture and color of all framed objects and can also be used to estimate the road surface [23,24]. If used in pairs, they can work as a stereo vision system, capable of determining the distance to objects [25].

All those features make cameras very versatile perception devices that can be employed on different tasks. On the other hand, the extraction of this information requires a complex and heavy computational process. Edge extraction, color segmentation, morphological analysis, feature detection and description, and object detection are common tasks performed by computer vision algorithms that require a large amount of processing. Therefore, the choice of processing engines has to take into account extra processing tasks for computer vision.

Most autonomous vehicles based on vision use only a frontal camera to detect landmarks. In [26] the frontal cameras are used to detect the preceding vehicle, in order to build the possible lane position. If the vehicle is not present, a lane marking detection algorithm is used. In [27] prior knowledge of the road type is used to trigger two independent algorithms, one to provide the position relative to the road geometry, and the other to estimate the shape of an off-road path.

Vision-based approaches are also widely used for the detection of road and/or lane markings [28,29,30]. Lane marking detection for lateral localization and curvature estimation has been one of the first research fields of computer vision applied to the problem of autonomous driving, since the early 1990s. Many publications and surveys [31,32,33,34] describe the diversity of techniques that were developed to solve this problem.

Despite the limitations, some of the early projects [35] already demonstrated in practice the capability of such systems to autonomously follow a road for hundreds of kilometers. Actually, if the road has a well painted lane along all of its length, without roundabouts or bifurcations, even a simple system is capable of keeping the vehicle following the road until the vehicle runs out of fuel. More recently, visual lane marking detection regained attention due to its commercial application in advanced driver assistance systems (ADAS) [36,37,38,39] for lane departure warning and lane keeping systems.

As already mentioned, if a pair of cameras is used, the distance to a detected point can be recovered. So stereo vision can produce the same kind of information as a LIDAR, i.e., point clouds. Despite the computational power required to process the stereo algorithm, the cost of this kind of sensor is much smaller than that of one based on LASER scanners. On the other hand, stereo vision is a passive sensor that requires external illumination. Compared with a LIDAR, stereo vision has a smaller field of view and produces less dense information. Even so, some autonomous vehicle projects use stereo vision to calculate the 3D position of road lane markings. In [40] the position of lane markings in the world is computed using 3D information provided by a stereo camera system using V-Disparity. In [41] 3D features, extracted using stereo vision, are used to create a 3D map in which it is possible to self-localize using both the real-time computed landmarks and the ones stored in the map.

Other autonomous vehicle projects use some kind of prior information, like a map, and compare it with the current sensor readings to increase the robustness and reliability of the autonomous driving algorithm. Autonomous vehicles operating in previously mapped environments [6,7,42,43] have demonstrated the potential of this strategy by performing successful autonomous trips in complex real environments. In general a map contains, at least, a set of landmarks used to locate the vehicle and a reference trajectory that is guaranteed to be free of road infrastructure obstacles. If the localization technique achieves sufficient accuracy in any part of the map, the problem of autonomous driving can be reduced to a path following problem.

The localization process relies on the detection and position estimation of these landmarks (see [40,41,44]). Landmarks should not be selected too far from the vehicle since the error in estimating their position increases with the distance. In addition, a landmark should be easy to detect in order to minimize false positive/negative detections. For example, standard road artifacts like lane markings, stop markings, zebra crossings, or objects like traffic signs and poles are all suitable options. Several approaches use the lane markings as landmarks. Lane markings are easy to detect both by LIDAR and by computer vision. In [45,46] the lane markings are detected by a camera and a computer vision algorithm. In [20], a LIDAR sensor detects the lane markings and the Monte Carlo Localization (MCL) method is used to localize the vehicle in the resulting map with an accuracy of 0.31 m.

In [47] digital maps and coherency images were used to estimate the vehicle localization. The maps are constructed from aerial images, where all the information about the lane markings and road surface is manually placed. This map is used to generate artificial images from a given point of view. A particle filter is used to estimate the vehicle position, with the likelihood of each particle being calculated from the coherency value between the current camera view and the artificial image associated with each particle. This system reported a lane-level accuracy of 0.35 m, but has the disadvantage of a manual map construction.

In [44] upright SURF features are used to extract the points which are used for both mapping and localization. This approach is described as topometric, because it is a fusion between topological and metric approaches. The localization is made on a topological map, but the map is geo-localized in order to achieve a metric localization. The method reported an average localization error of 2.70 m.

In [48], an image-based localization scheme was presented, based on Virtual Generalizing Random Access Memory (VG-RAM) [45]. A neural map is built from 3D landmarks, detected by a stereo vision system, and used for localization. The average lateral error reported was 1.12 m, which is not sufficiently small for autonomous driving.

Conversely, in [7], a combination of both front and rear camera systems is used to detect different types of landmarks. The frontal vision system detects road elements painted on the ground (lane markings, stop lines…) to obtain a so-called lane feature based localization (LFL). The rear vision system extracts a set of point features to be compared to previously acquired data, to obtain a point feature based localization (PFL). This twofold approach provides a more precise and reliable localization and allows the localization system to adapt well to the different road conditions of rural, highway, or urban environments. The maps are built offline using images, odometer, and GNSS data collected during a preliminary mapping trip.

Contribution and Organization of the Text

The main contribution of this paper is the presentation of a light, two-level data fusion architecture using data from a lane marking sensor, a dead reckoning sensor, and a map, to be applied in autonomous driving applications. The lane marking sensor and the map structure are part of the proposed architecture. Mapping is executed using the same set of sensors used in autonomous driving. Another important contribution is the detailed presentation of how low-cost, standard commercial devices, like a USB camera, an ordinary GNSS receiver, a MEMS-based gyroscope, and a regular notebook computer can be used to achieve accurate vehicle localization and autonomous driving. For instance, we present the design of a lane marking sensor based on a standard USB webcam, and the study of bias compensation and random noise analysis of a MEMS-based gyroscope. Experimental tests show that the accuracy of our proposed system is sufficiently high for autonomous driving operation, even on narrow roads and under challenging lighting conditions.

The paper is organized as follows. Section 2 presents the system overview, Section 3 the visual sensor for lane marking detection, Section 4 describes the proprioceptive sensor architecture developed for dead reckoning, Section 5 presents the data fusion strategy, Section 6 presents the results of accuracy and autonomous driving tests, and, finally, Section 7 ends the paper with some final remarks.

2. System Overview

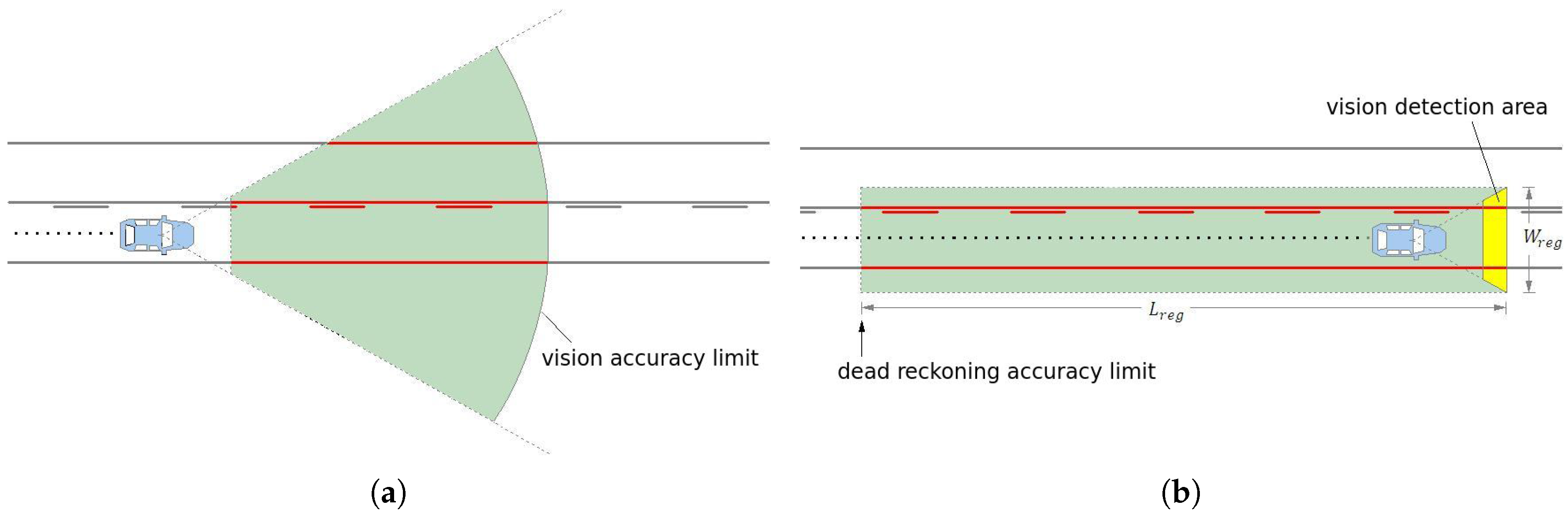

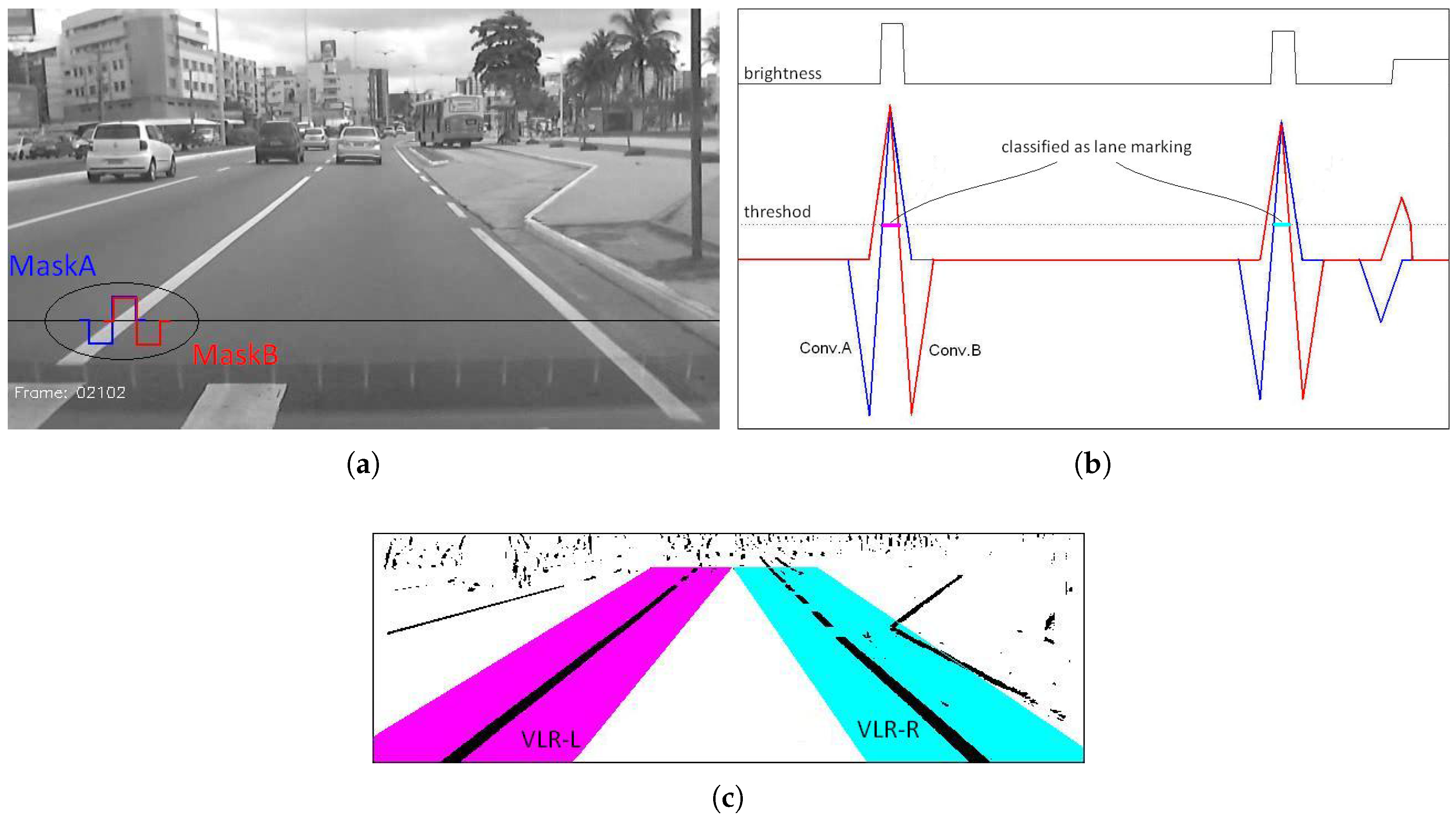

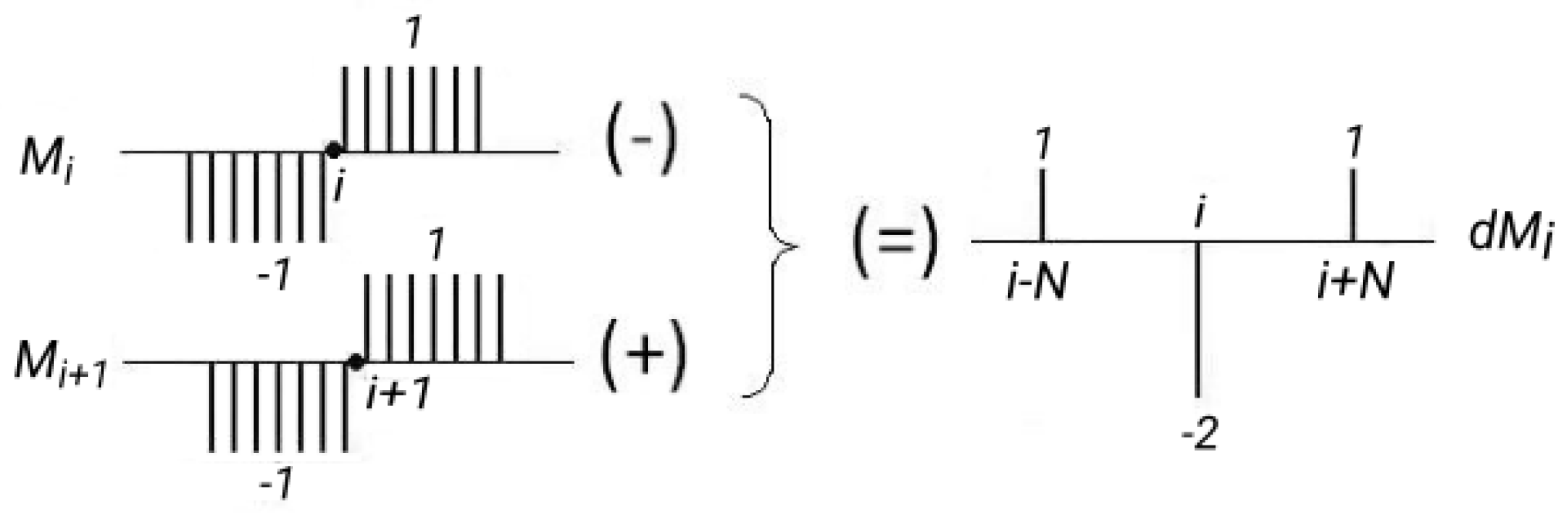

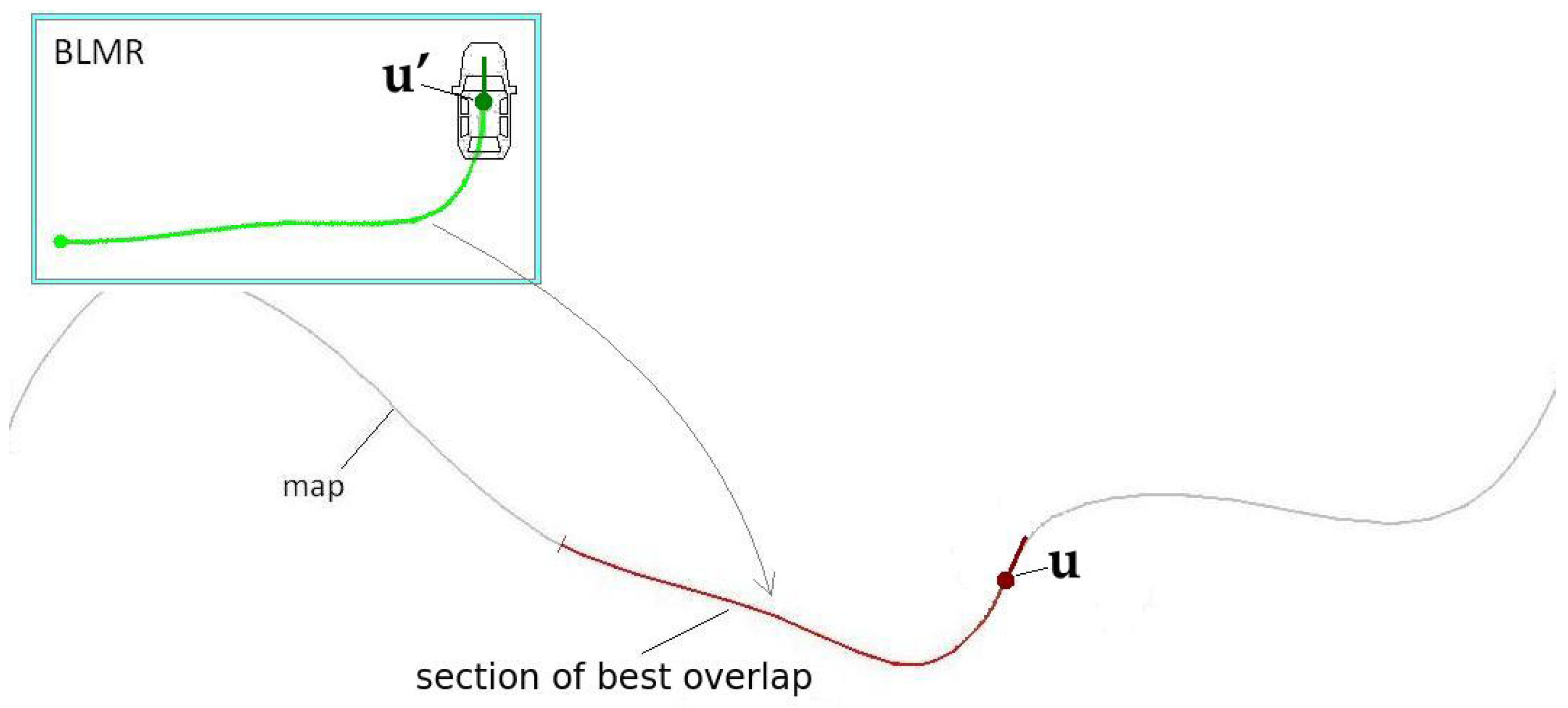

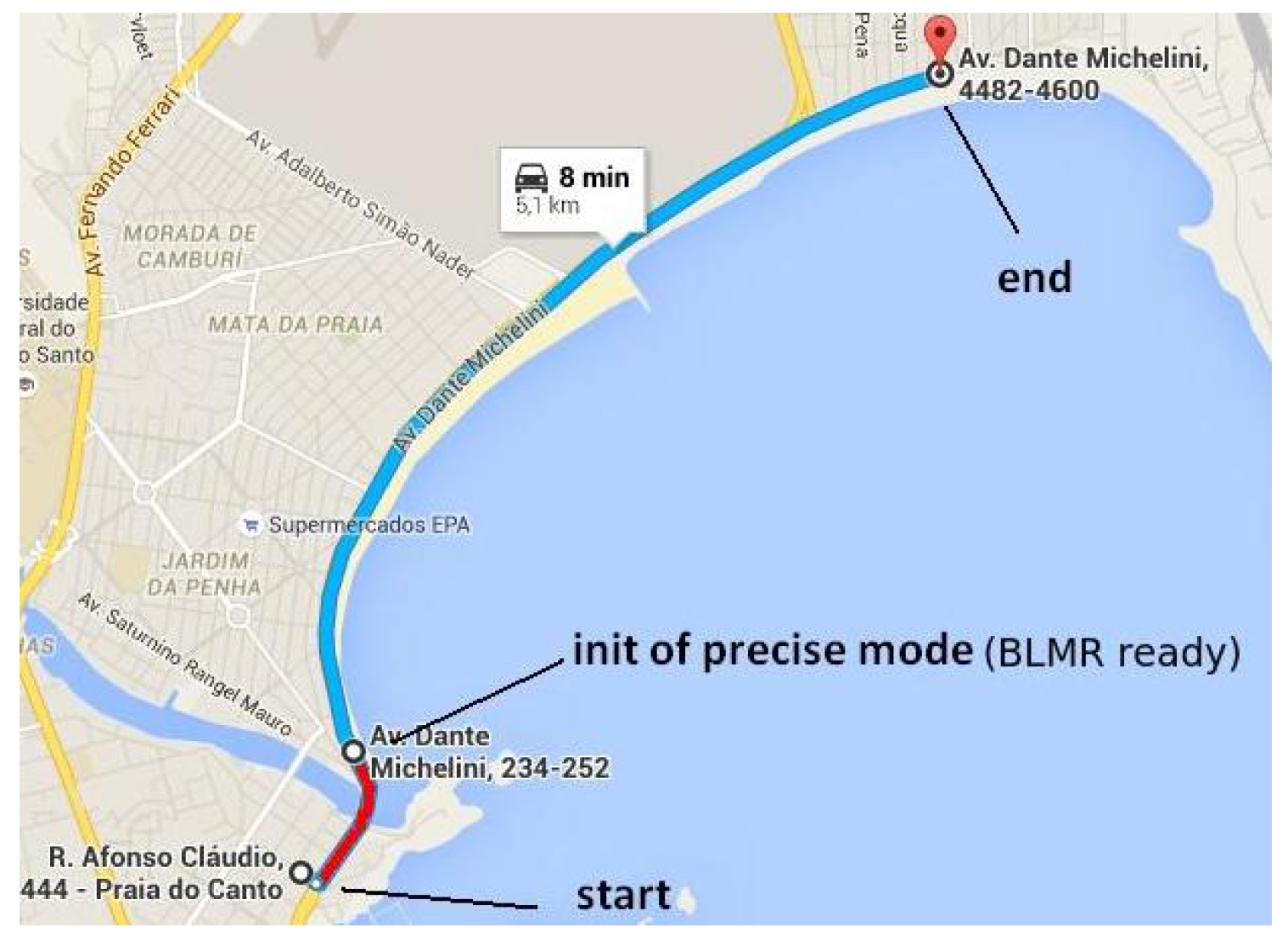

Typical computer vision systems for autonomous vehicles or advanced driver assistance systems (ADAS) use a camera to detect lane markings in the medium range (up to 30 m or 40 m) in front of the vehicle, as shown in Figure 1a. The further away the visual information is from the vehicle, the lower its confidence, especially because of the variation of the pitch angle, the vertical curvature of the ground, or occlusions caused by other vehicles. The approach presented in this paper avoids this problem by using a visual short-range lane marking detector and a dead reckoning system, which together build an accurate and extended perception of the lane markings behind the vehicle’s current position. This means that as the vehicle moves, the detected lane markings are displaced backwards so that a back lane mark registry (BLMR) is constructed. The principle, illustrated in Figure 1b, is similar to that used by a document scanner to construct a 2D image from a 1D light sensor array (1D CCD).

Of course, the information behind the vehicle (BLMR) is not useful to directly drive the vehicle, but when combined with prior information about the environment, like a map, it leads to a very reliable and precise autonomous guidance system. In our approach, the BLMR is used to search the map for the current pose of the vehicle (localization). Once the localization is precisely known, and the description of the road is available in the map, autonomous driving can be performed.

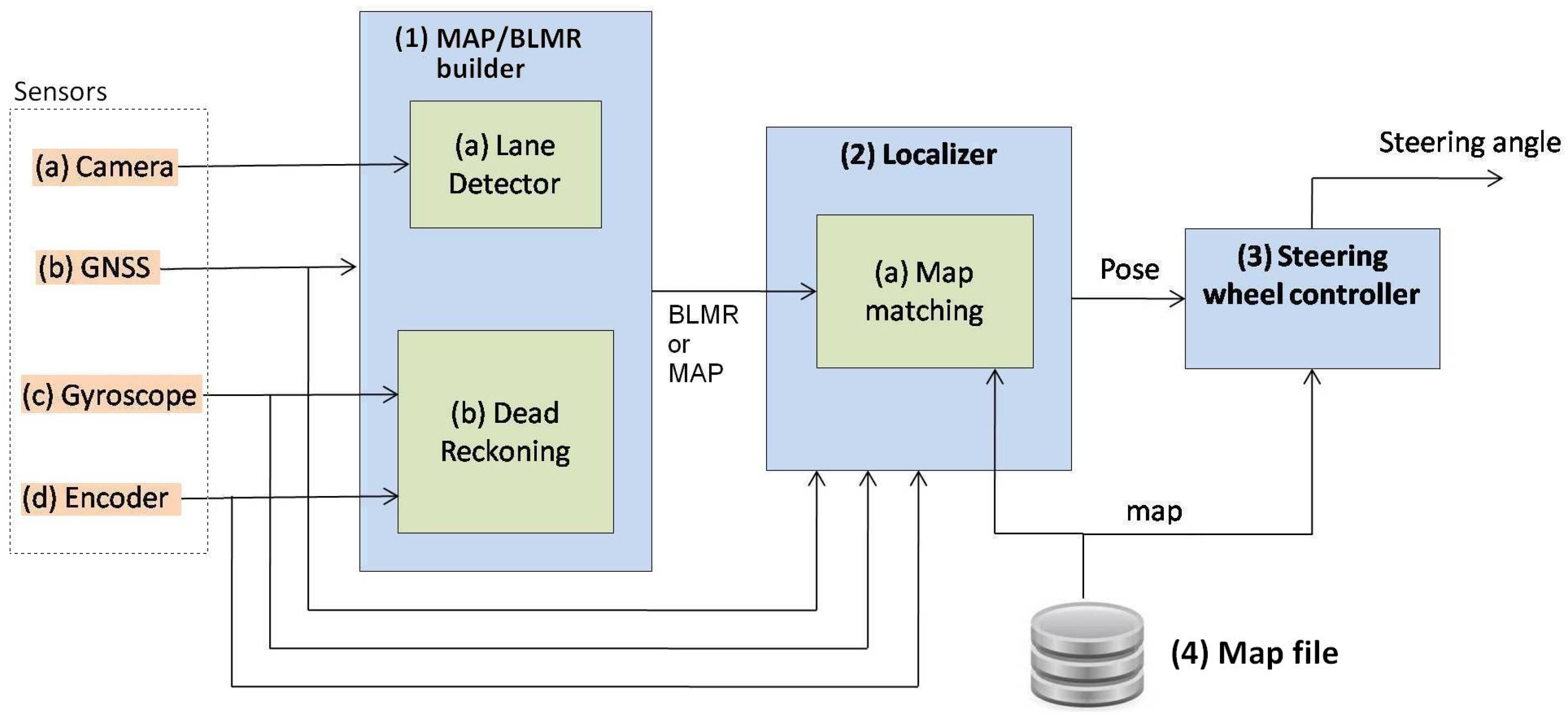

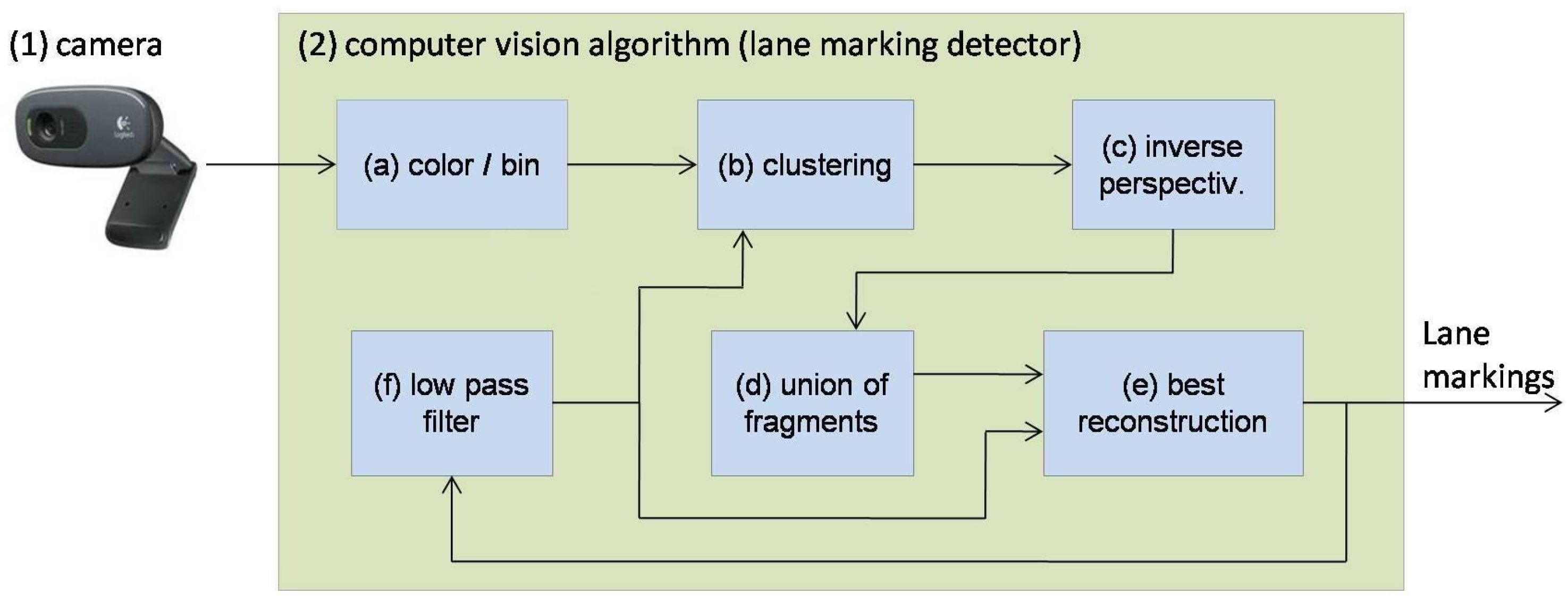

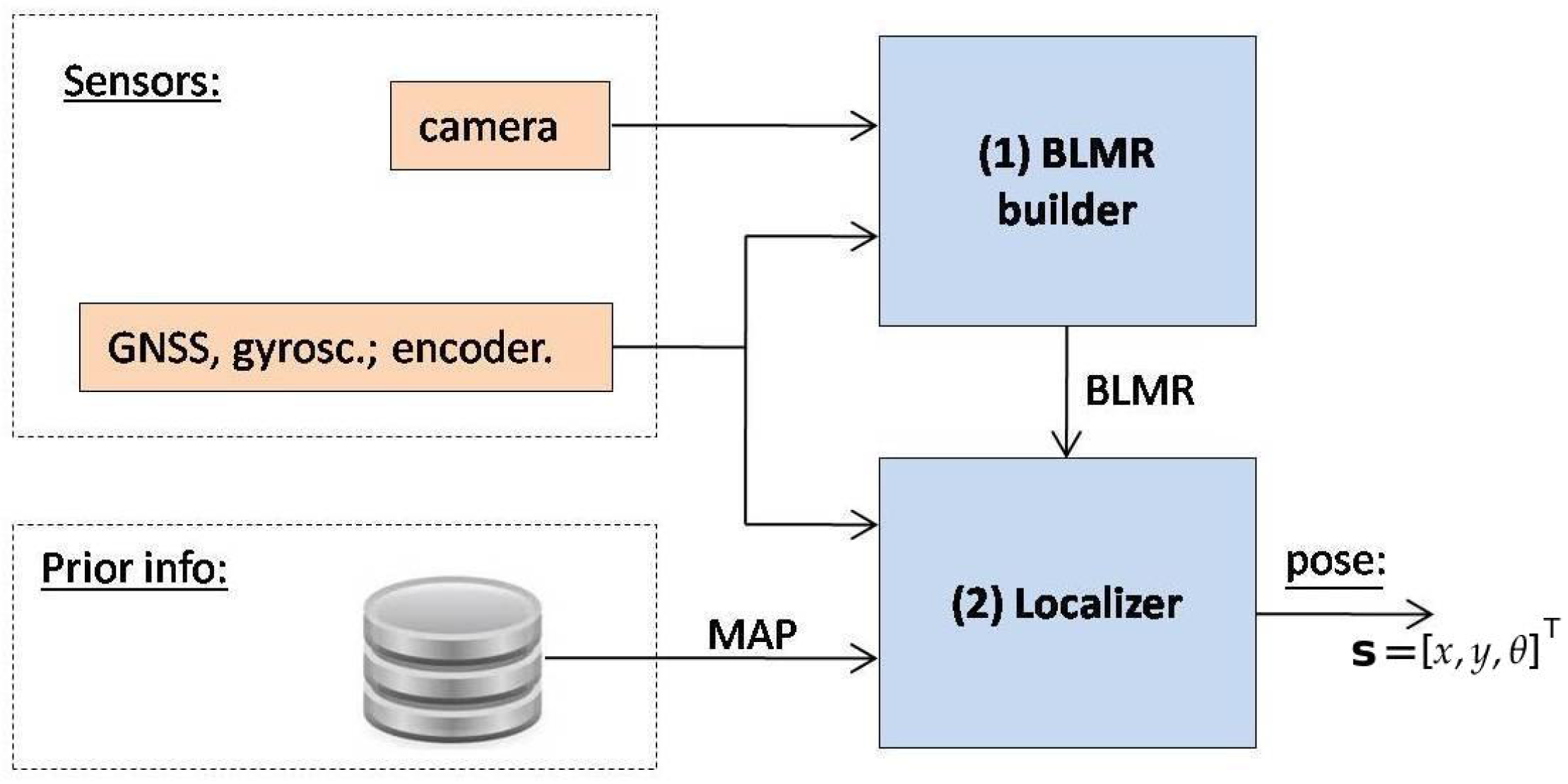

2.1. Software Architecture

Figure 2 shows the sensors and software architecture developed both for map building and autonomous driving. When the system is operating to build a map, only Module 1 is used and its output is a map. When operating in precise localization mode or autonomous driving, all the modules are used. In this case the output of Module 1 is the BLMR. The details are explained in Section 5.1.

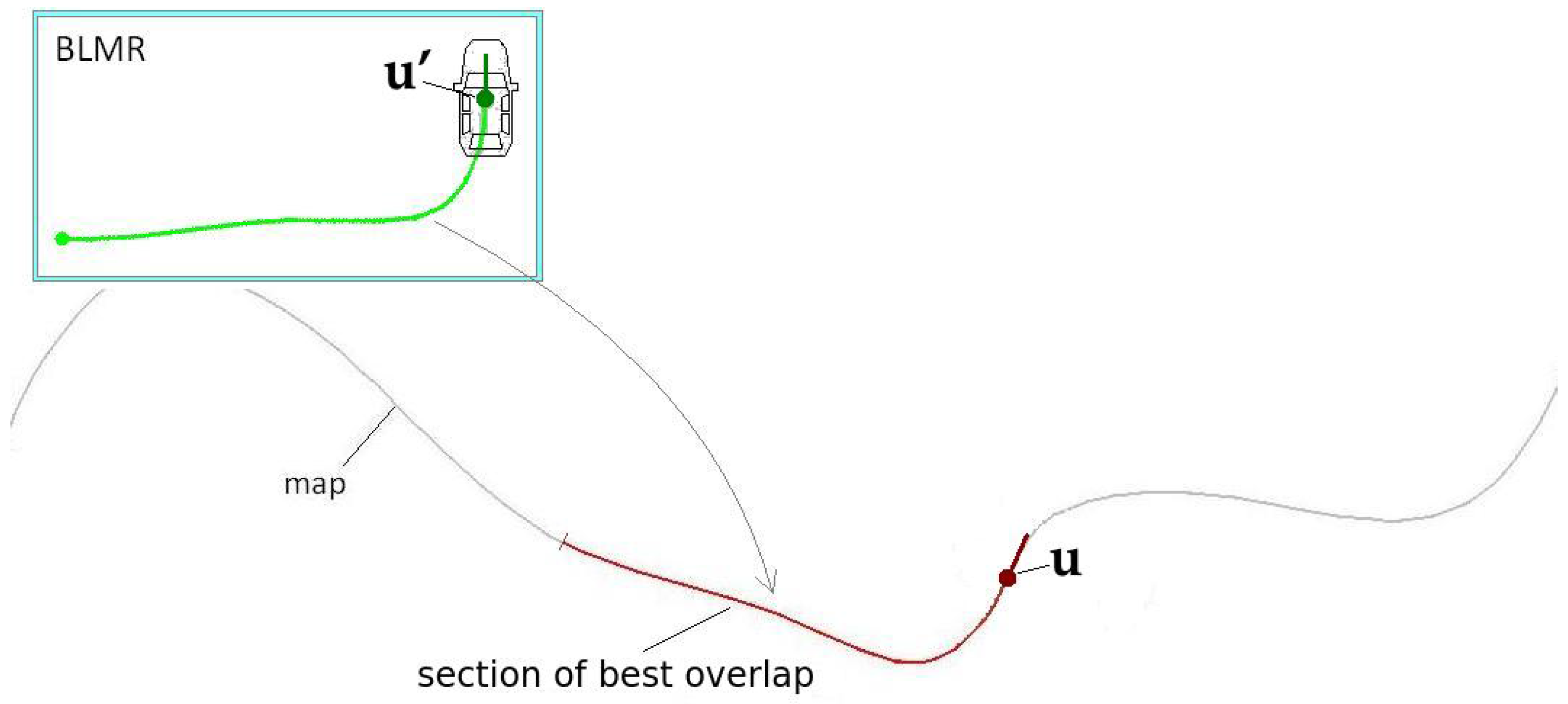

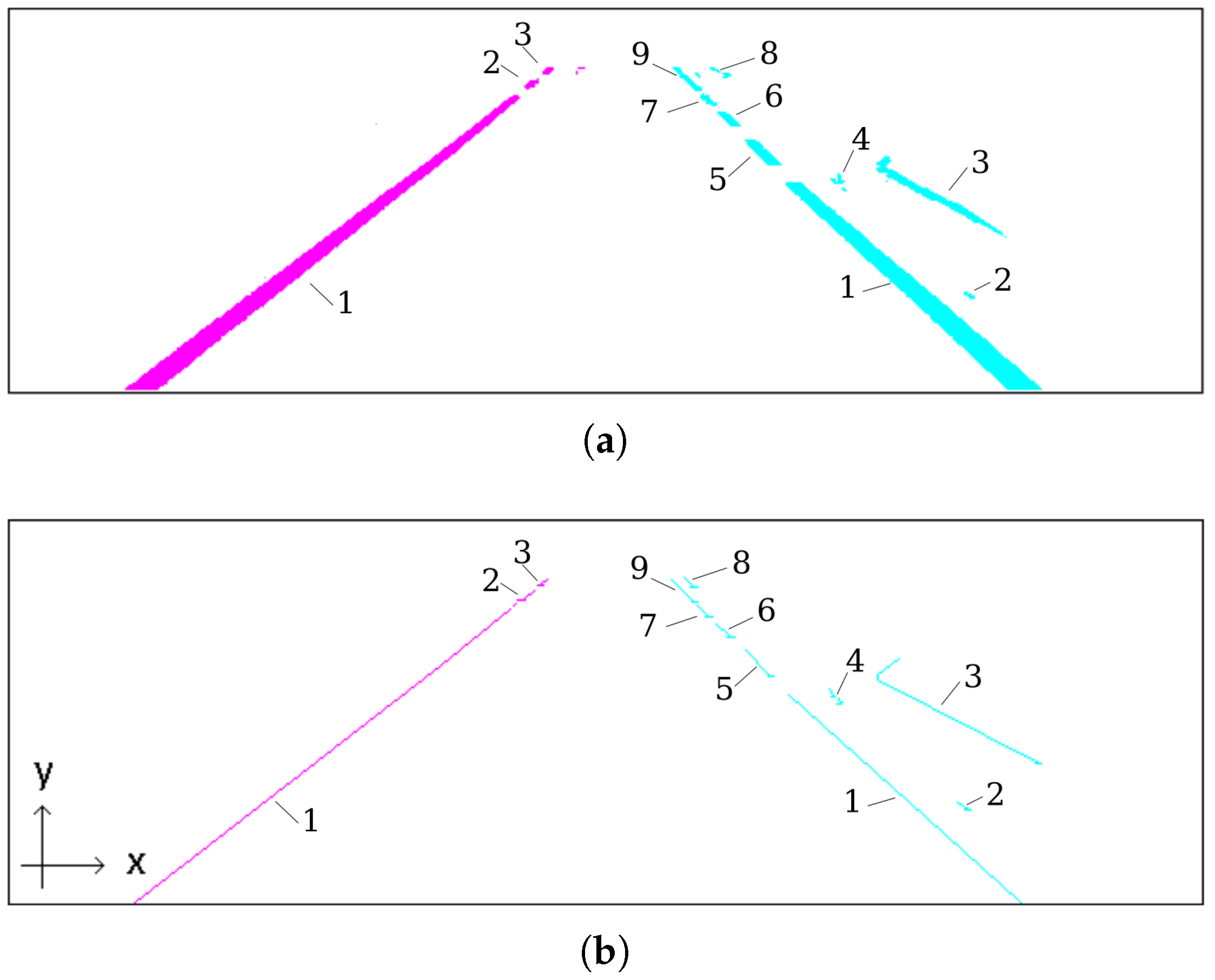

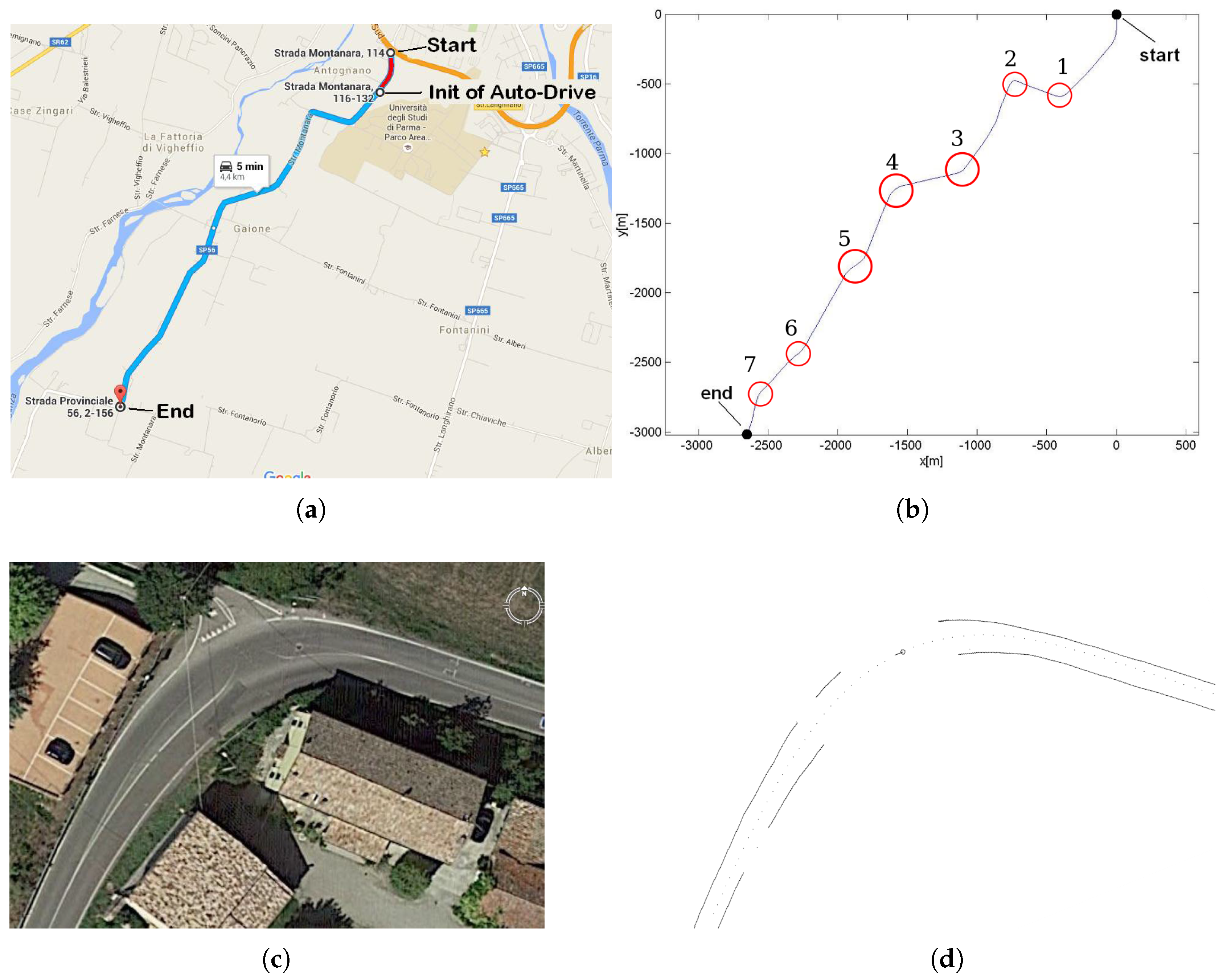

Module 1 fuses information from four sensors to construct the BLMR or a map, depending on the mode of operation. In reality, the BLMR and a map are the same type of structure, except that the BLMR has a limited length and works in its own reference frame. Sub-module (1-a) is the vision algorithm that detects lane markings, and sub-module (1-b) calculates the dead reckoning. The lane markings detected in the image frame are transformed to the vehicle reference frame by an inverse perspective transformation, corresponding to a bird’s eye view (also called sky-view). The dead reckoning sub-module computes the 2D path of the vehicle (flat world model) based on information from the gyroscope, from which the yaw (heading) angle is calculated, and the encoder, which measures the linear displacement. Over this 2D path, the detected lane markings are inserted, creating a 2D map of the lane marks (or BLMR). One example of this map is shown in Figure 3a, built after the prototype vehicle traveled through the road section of Figure 3b.
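The way Module 1 inserts lane markings over the dead-reckoned path can be illustrated with a minimal sketch. It is not the implementation used in the prototype: the class name, the `(lateral, forward)` convention for markings in the vehicle frame, and the pruning rule based on travelled distance are assumptions made only for illustration. The markings are assumed to be already transformed to the vehicle frame, and the yaw angle is assumed to be measured from the y-axis.

```python
import math
from collections import deque


class BackLaneMarkRegistry:
    """Bounded 2D registry of lane-marking points along a dead-reckoned path."""

    def __init__(self, max_length_m):
        self.max_length_m = max_length_m
        self.x = self.y = self.yaw = 0.0   # pose in the registry's own frame
        self.travelled_m = 0.0
        self.entries = deque()             # (travelled_m, [(x, y), ...])

    def update(self, d_l, d_yaw, markings_vehicle):
        """Advance the pose, then insert markings given as (lateral, forward) in metres."""
        self.yaw += d_yaw
        s, c = math.sin(self.yaw), math.cos(self.yaw)
        self.x += d_l * s                  # yaw is measured from the y-axis (assumption)
        self.y += d_l * c
        self.travelled_m += d_l
        # Rotate each marking by the heading and translate it by the position.
        points = [(self.x + lat * c + fwd * s, self.y - lat * s + fwd * c)
                  for lat, fwd in markings_vehicle]
        self.entries.append((self.travelled_m, points))
        # Forget entries that fall behind the limited registry length.
        while self.travelled_m - self.entries[0][0] > self.max_length_m:
            self.entries.popleft()


blmr = BackLaneMarkRegistry(max_length_m=10.0)
for _ in range(15):
    blmr.update(d_l=1.0, d_yaw=0.0, markings_vehicle=[(-1.5, 0.0), (1.5, 0.0)])
print(len(blmr.entries), blmr.entries[-1][1])
```

In mapping mode the same structure would simply be kept without pruning, which is consistent with the statement that the BLMR and the map are the same type of structure.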

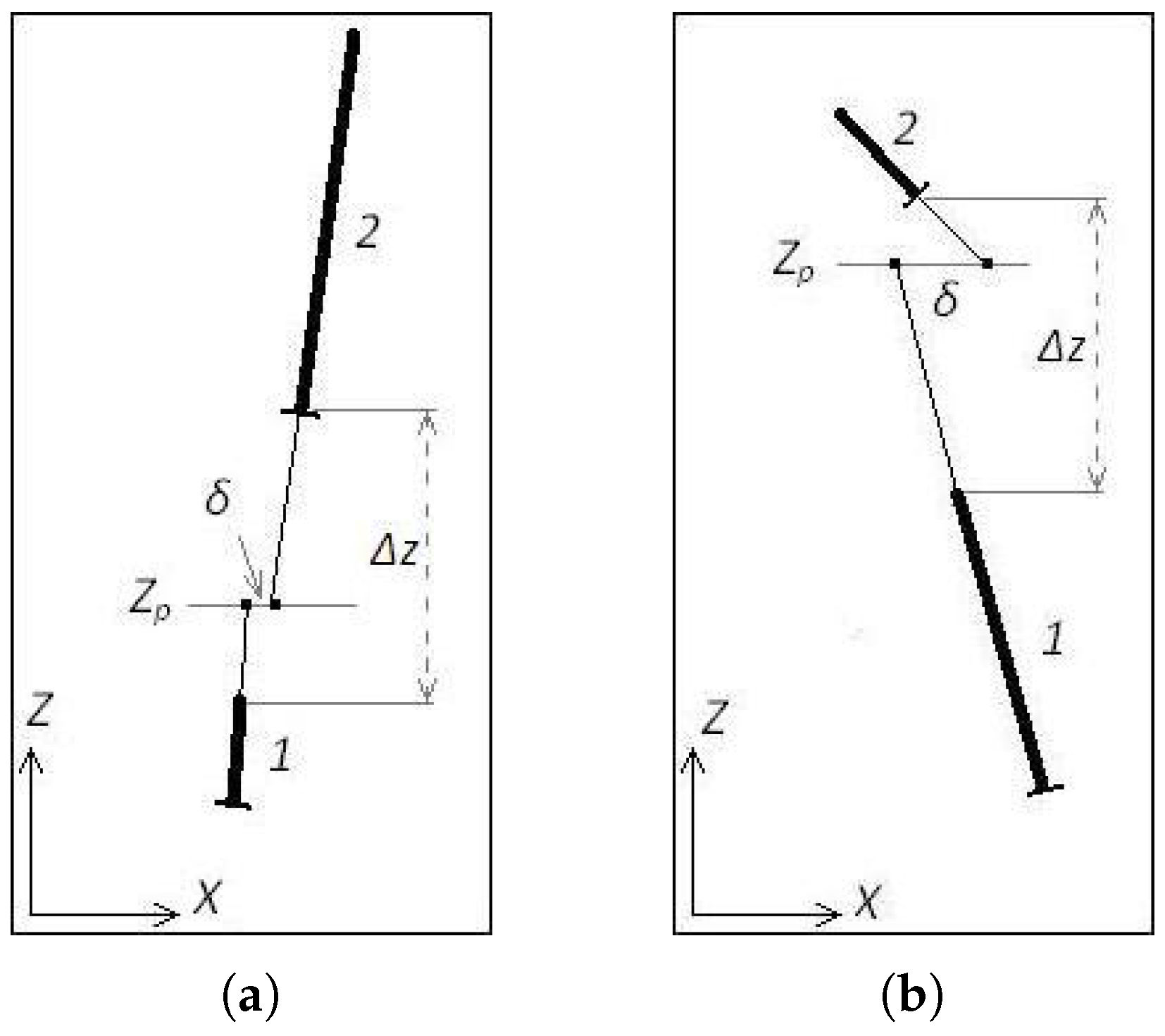

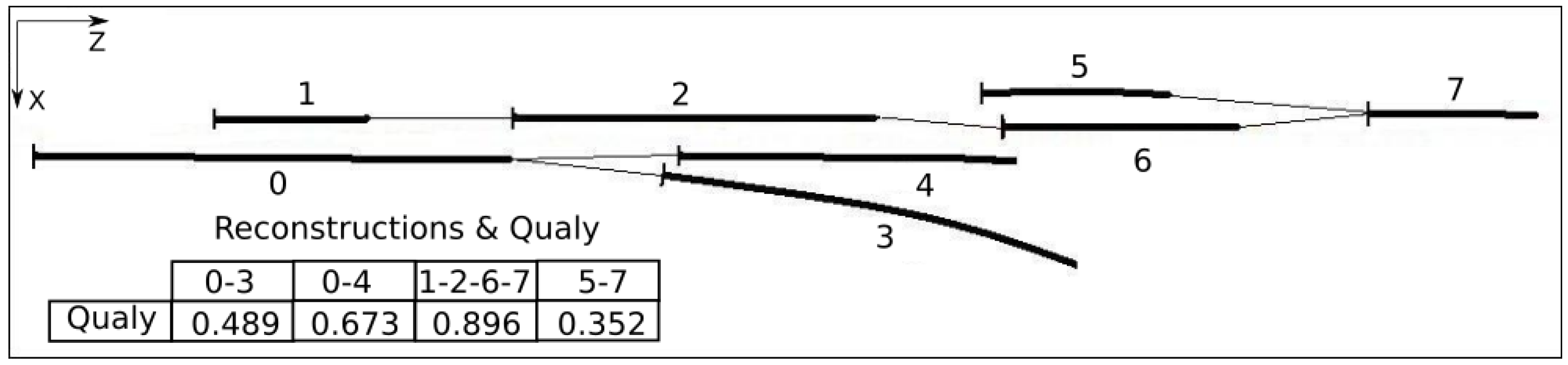

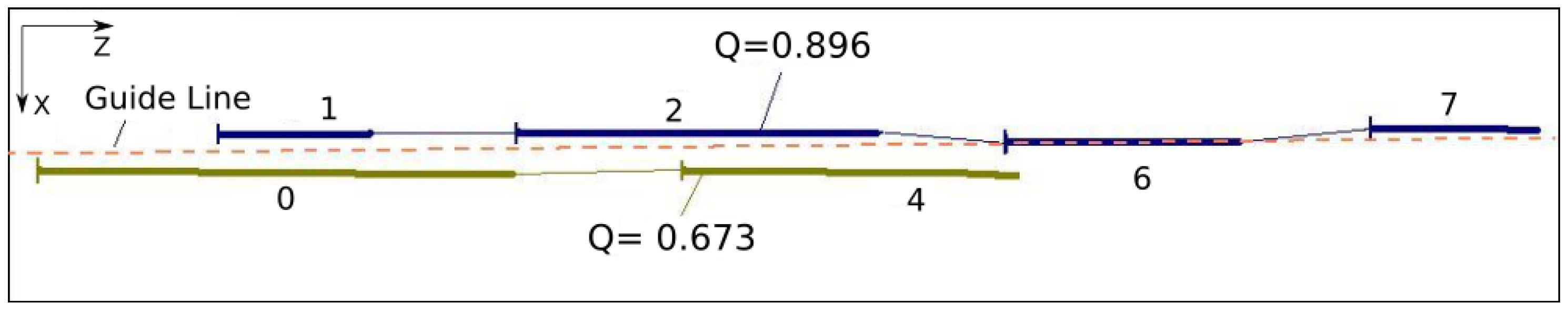

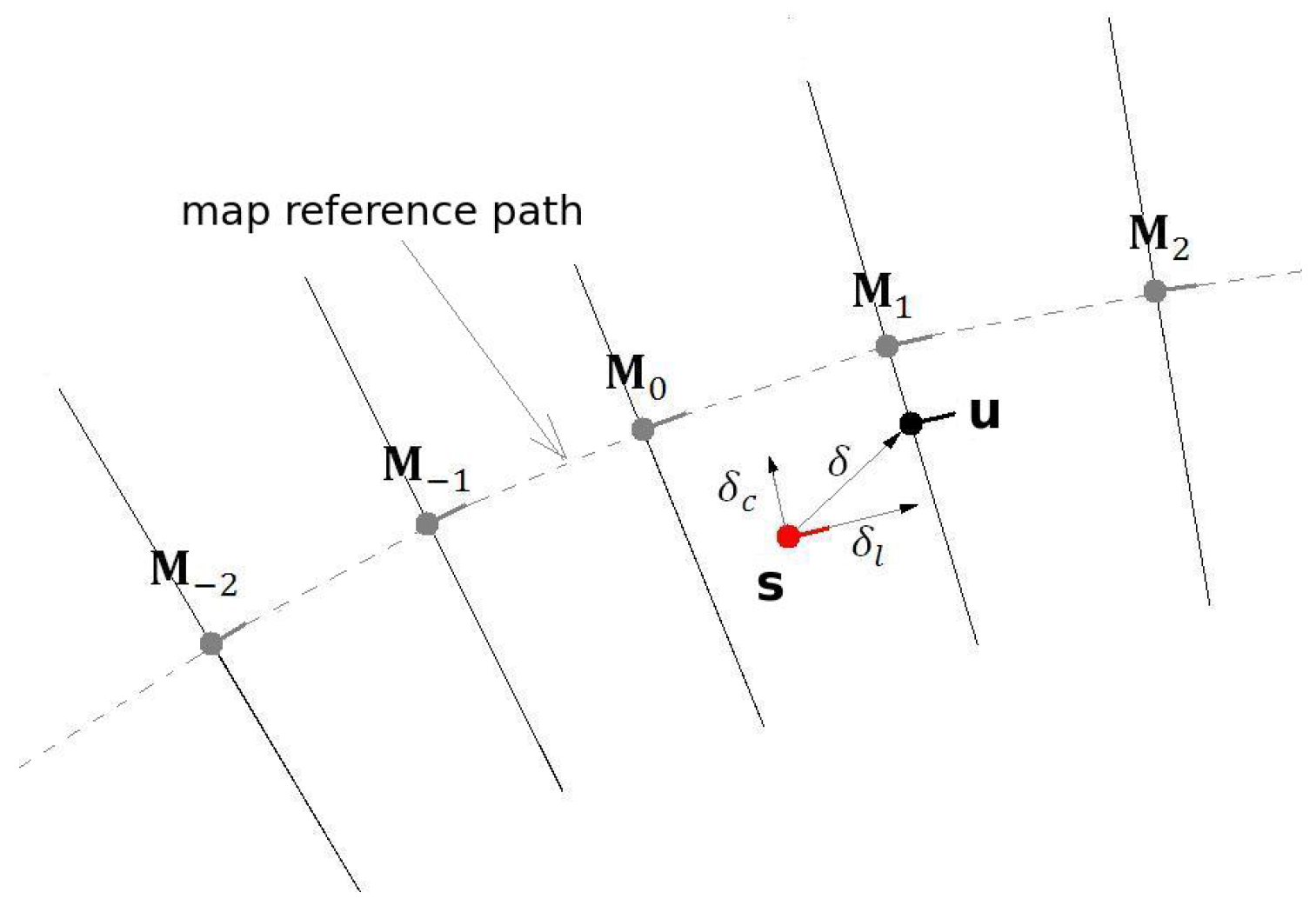

Module 2 is responsible for the localization of the vehicle in the map. The information from GNSS is used only to calculate the approximate initial localization. Once the vehicle knows its approximate localization, and the BLMR data is available, Module 2 applies a technique of map-matching and filtering to calculate the localization with higher accuracy. The principle of precise localization, illustrated in Figure 4, is to search in the map for a section with similar geometric characteristics. As this process is subject to error, additional filtering is applied. The filter also predicts the variation of localization, based on the information provided by the gyroscope and the odometer. Details of the operation of this module are presented in Section 5.2.

Module 3 uses the information of the current localization and road geometry, from the map, to control the steering wheel. Commands are sent to a servo motor that moves the steering wheel to the desired angle. The control rule uses the position of the vehicle relative to a target point, placed in the middle of the road, at a given distance in front of the vehicle. Once this information is known, Module 3 calculates the curvature needed for the vehicle to reach this point. The target point is retrieved from the map and its distance to the car is proportional to the vehicle’s current speed. Details of the control strategy are described in Section 6.2.
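One common way to compute the curvature needed to reach a target point is the pure pursuit rule, in which the vehicle follows a circular arc tangent to its current heading that passes through the target. The sketch below uses that rule as a stand-in; the text does not state that Module 3 uses exactly this formula, and the function names and the gain value are hypothetical.

```python
def lookahead_distance_m(speed_mps, gain_s):
    """Target distance proportional to the current speed (gain_s is an assumed tuning value)."""
    return gain_s * speed_mps


def curvature_to_target(x_lateral_m, y_forward_m):
    """Curvature (1/m) of the arc tangent to the heading that passes through the target.

    The target is expressed in the vehicle frame: x to the right, y straight ahead.
    """
    d2 = x_lateral_m ** 2 + y_forward_m ** 2
    if d2 == 0.0:
        raise ValueError("target point coincides with the vehicle position")
    return 2.0 * x_lateral_m / d2


# Target straight ahead: no steering needed.
print(curvature_to_target(0.0, 10.0))
# Target 10 m ahead and 10 m to the right: arc of radius 10 m.
print(curvature_to_target(10.0, 10.0))
```

A target retrieved from the map would first be transformed to the vehicle frame using the current localization before this rule is applied.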

Module 4 is a map stored in a file, generated by Module 1, when the vehicle is operating in mapping mode. In mapping mode, the vehicle is driven by a person along the desired path, and at the end, the map is automatically saved in a file. When the vehicle is operating in precise localization or autonomous driving mode, the map file must be loaded into memory to be used by Module 2. Details of the map structure are shown in Section 5.1.

2.2. Hardware Architecture

The components that determine the cost of the equipment installed in a vehicle prepared for autonomous driving are the sensors, actuators and the computer system. Among those items, the sensors can be by far the most expensive. In order to achieve a low cost, we avoided the expensive LASER sensor. Instead, we opted for a single camera and a computer vision algorithm for the perception of the environment. Moreover, instead of using a commercial INS/IMU unit, we developed our own, with the minimum functionality, based on low cost inertial sensors and microcontrollers.

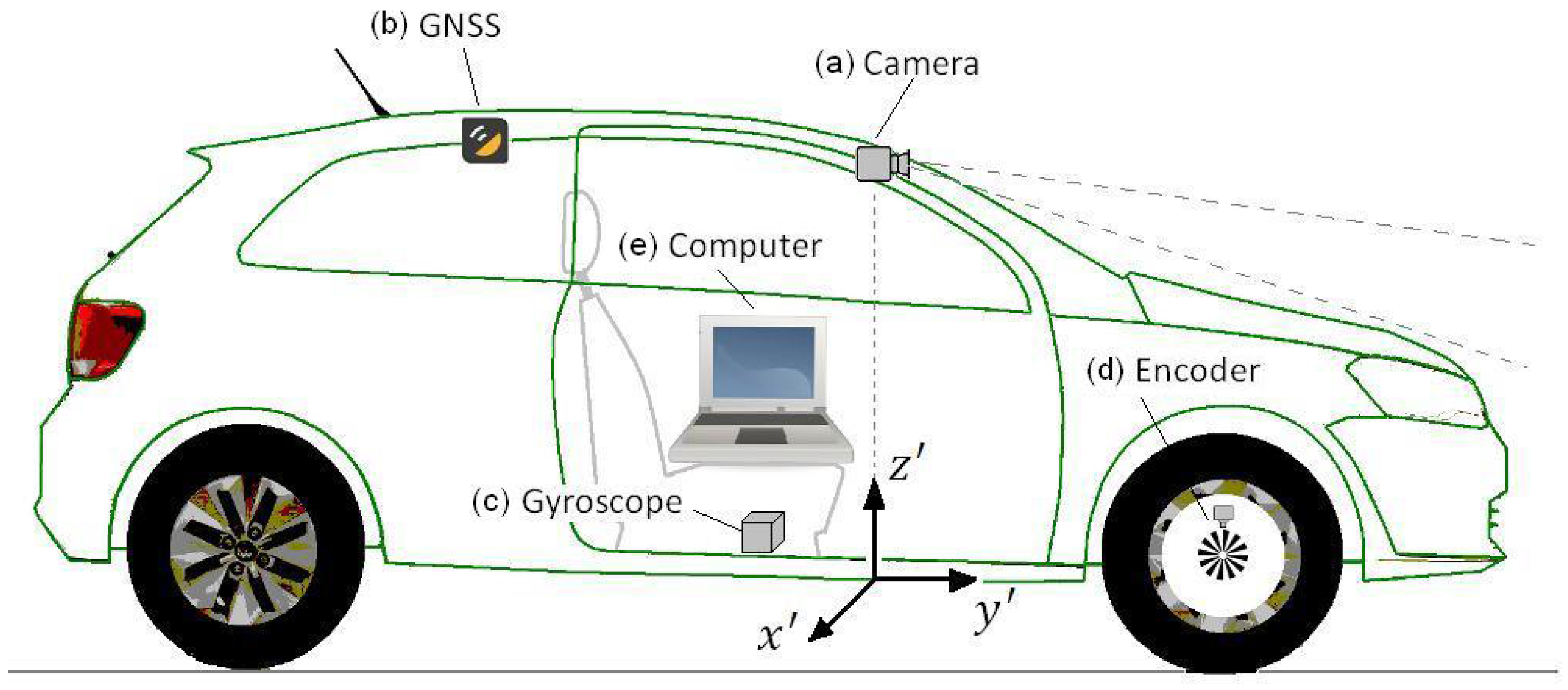

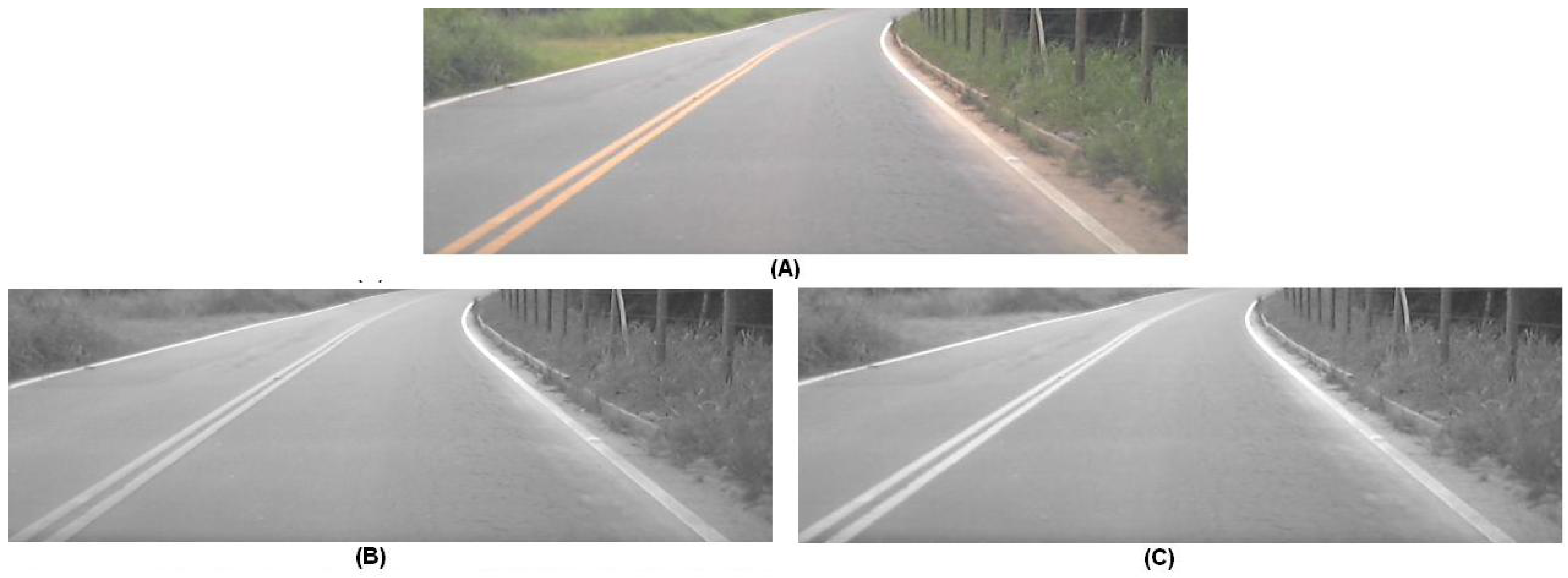

Figure 5 presents the prototype vehicle, a VW GOL 1.6, and its hardware setup. This vehicle was used in the first part of the experiments with the objective of determining the localization accuracy. The hardware installed consists of: (a) camera, (b) GNSS receiver, (c) gyroscope, (d) encoder, and (e) computer.

The camera and the vision algorithm form what we named the lane marking sensor (LMS). The camera we used is a standard USB webcam (Logitech, model C270) mounted in front of the rear-view mirror for capturing front images. This camera costs about US$ 20.00 in regular computer shops. The vision algorithm processes the image from the camera and calculates the quantity, quality, and position of up to four lane markings, within a range of 7.2 m. The algorithm was conceived to be lightweight so that it can run on low-cost computer systems. Details of the LMS are presented in Section 3.

Note that the choice of detecting lane markings close to the vehicle has some advantages. For close lane markings the visual resolution is higher, and the computation of the lane marking position is less affected by vehicle movements, like pitch and roll, that usually dynamically affect the vision system calibration. The assumption of a flat road, used to estimate the lane marking position, is sufficiently safe when using close lane markings only. Besides that, lane markings close to the vehicle are generally not affected by occlusions. But a short-range LMS has a serious disadvantage: on its own, it is able to perform autonomous driving only at very limited speeds. Considering a range of 7.2 m and a reaction time of 1.5 s, this establishes a maximum speed of 17 km/h. This limitation in speed is overcome with a data fusion strategy, explained in Section 5.
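The speed limit quoted above follows from dividing the sensing range by the reaction time. A minimal sketch of this arithmetic (the function name is ours, for illustration only):

```python
def max_speed_kmh(sensor_range_m, reaction_time_s):
    """Highest speed at which the sensed range still covers the reaction time."""
    return sensor_range_m / reaction_time_s * 3.6  # m/s to km/h


# 7.2 m of range and 1.5 s of reaction time give 4.8 m/s, i.e. about 17 km/h.
print(round(max_speed_kmh(7.2, 1.5), 2))
```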

The GNSS receiver is a standard low-cost model (UsGlobalSat, model Bu-353-S4) with a USB interface and a measuring frequency of 1 Hz. This kind of receiver, which costs less than US$ 17.00 in commercial shops, was not designed for precision applications. In very favorable conditions, the GPS localization error is smaller than 7.8 m 95% of the time [1]. This error, which can be much higher in dense urban environments, prevents the use of this kind of receiver in direct autonomous driving. Nevertheless, the error is sufficiently low for the receiver to be used by the localization algorithm to get the initial estimate of the localization.

The gyroscope sensor is the Invensense model MPU6050. It is rigidly mounted in the bottom of the vehicle to measure its angular speed around the vertical axis. It is constructed from two printed circuit boards. One board contains the gyroscope itself, to measure the angular rate, and the other board contains the microcontroller MSP430G2553, to process its raw signal.

The microcontroller MSP430G2553 is responsible for interfacing the INS (inertial navigation system) sensor with the computer, performing some basic calculations, and controlling the temperature of the sensor. The cost of our mounted sensor is US$ 5.00 for the INS sensor, plus US$ 9.00 for the microcontroller board, giving a total of US$ 14.00. More details of the gyroscope are presented in Section 4.1.

The measurement of the longitudinal displacement (y direction) is done with the original vehicle encoder, used by the speed gauge, so this sensor added no cost. The computer responsible for running the vision, localization, and steering command algorithms (see Figure 2) is a laptop with an Intel Celeron 1.86 GHz processor and 1 GB of RAM.

To guarantee that the data received from all sensors is perfectly synchronized, we would have to use special sensors, cameras, and/or acquisition interfaces that would increase the overall cost of the system. Instead, we chose not to use any special synchronization scheme, and we worked under the assumption that the delay of our sensors is very low with respect to the dynamic characteristics of the controlled system (the vehicle). For example, the vision sensor is the slowest one. We observed a maximum delay of about 30 ms, including frame grabbing, transmission, and decompression. If the vehicle is moving at 70 km/h (about 19.4 m/s), the resulting longitudinal error is only 0.58 m, which is a perfectly acceptable value for autonomous driving (note that this is not lateral error). As shown by the results at the end of this article, the overall error of our approach is sufficiently small for autonomous driving.

4. Sensors for Dead Reckoning

Dead reckoning is a technique that allows the estimation of the current position from knowledge of the previous position and integration of speed considering the course [53]. Due to integration, this process is subject to cumulative error, which limits the range of its application. The cost and accuracy of a dead reckoning system depend strongly on the sensors used, especially the gyroscope. Respecting the low-cost philosophy of our approach, we developed a dead reckoning system that uses information from two sensors: the original odometer of the vehicle, used to measure the linear displacement, and a low-cost MEMS-based gyroscope that measures the yaw rate, from which the angular displacement is obtained.

The MEMS (Microelectromechanical Systems) technology [54] combines electrical and mechanical systems at a micrometer scale. It allows moving micro-structures, like accelerometers and gyroscopes, to be built on a silicon substrate. We chose the sensor MPU6050 [55], manufactured by InvenSense. It contains a 3-axis gyroscope and a 3-axis accelerometer, and was designed primarily for the automotive/commercial performance categories. Precise dead reckoning computation requires the highest level of performance (tactical-grade performance [56]), achieved only by costly sensors. Attempting to extract the maximum performance from our low-cost gyroscope, we took some actions, like temperature control and low frequency noise analysis, as explained in the following subsections.

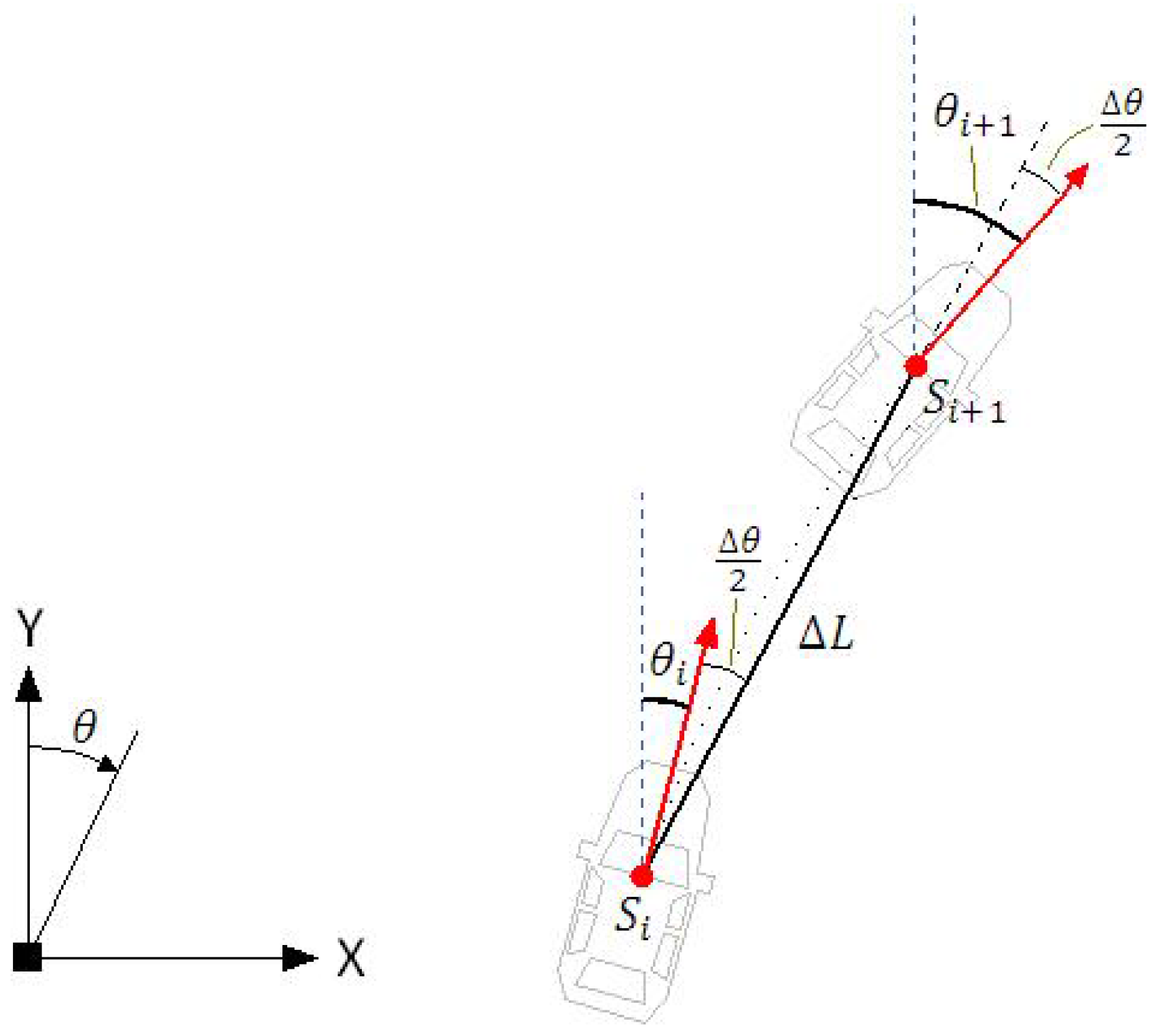

The dead reckoning computation is performed by the state transition Equations (12)–(14). Given the current vehicle’s pose, the future pose after a linear and angular displacement is computed by these equations, in which the yaw angle is measured with respect to the y-axis, as illustrated in Figure 16. The equations are executed at a frequency of 25 Hz, which means that at 90 km/h (25 m/s) the linear increments will be 1.0 m. Small linear increments are desirable because they are associated with small angular increments. This allows a good description of curves by small straight sections and leads to the simplified Equations (12)–(14).
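A dead reckoning update of this kind can be sketched as follows. This is an illustration under stated assumptions, not a transcription of Equations (12)–(14): the yaw angle is measured from the y-axis as described in the text, but the choice of applying the angular increment before the linear one, and all names, are ours.

```python
import math


def dead_reckoning_step(x, y, yaw, d_l, d_yaw):
    """Return the next pose after a small angular and linear displacement.

    yaw is measured from the y-axis, so a zero yaw means motion along +y.
    Each step is treated as a short straight segment (assumed ordering:
    heading is updated first, then the position).
    """
    yaw_next = yaw + d_yaw
    x_next = x + d_l * math.sin(yaw_next)
    y_next = y + d_l * math.cos(yaw_next)
    return x_next, y_next, yaw_next


# Step length at 90 km/h with a 25 Hz update rate.
step_m = (90.0 / 3.6) / 25.0

pose = (0.0, 0.0, 0.0)
for _ in range(10):
    pose = dead_reckoning_step(*pose, d_l=step_m, d_yaw=0.0)
print(step_m, pose)
```

With a zero yaw rate the vehicle advances along the y-axis only, which matches the convention of Figure 16 as described in the text.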

4.1. Measurement of Orientation

The measurement of the angular displacement in Equation (14) is made by integrating the instantaneous angular speed, provided by the gyroscope, according to Equation (15). The bias corresponds to the value present in the device’s output when its angular velocity is zero. This value should be zero, but it is not for real devices.

If the bias value is not perfectly compensated, the path reconstruction by dead reckoning will suffer a bend, as illustrated in Figure 17. This figure was generated based on experimental data collected by our test vehicle. The reference path, with no bias, was generated by the GNSS registry, while the others were generated by dead reckoning.

Different values of bias were artificially inserted in the raw gyroscope data collected by our test vehicle, assuming the vehicle is moving at a constant speed of 36 km/h. The higher the value of bias, the greater the bend caused in the track. On the other hand, the higher the speed of the vehicle, the smaller the bend over the same distance, because of the shorter travel time.
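The effect of an uncompensated bias can be reproduced with a small simulation. The sketch below is an assumption-laden illustration rather than the procedure used to generate Figure 17: it integrates a bias-compensated rate signal with a simple rectangular rule and then dead-reckons a nominally straight path with a residual bias. Function names, the 25 Hz time step, and the 500 m distance are ours.

```python
import math


def integrate_yaw_deg(rates_dps, bias_dps, dt_s):
    """Rectangular integration of the bias-compensated yaw rate (degrees)."""
    yaw = 0.0
    for rate in rates_dps:
        yaw += (rate - bias_dps) * dt_s
    return yaw


def lateral_drift_m(residual_bias_deg_per_min, speed_kmh, distance_m, dt_s=0.04):
    """Lateral deviation of a dead-reckoned straight path caused by a residual bias."""
    v = speed_kmh / 3.6
    bias_rad_s = math.radians(residual_bias_deg_per_min) / 60.0
    x = yaw = travelled = 0.0
    while travelled < distance_m:
        yaw += bias_rad_s * dt_s          # the bias is integrated as if it were rotation
        step = v * dt_s
        x += step * math.sin(yaw)         # yaw measured from the y-axis
        travelled += step
    return x


# A stationary gyroscope whose output equals its bias integrates to zero yaw.
print(integrate_yaw_deg([1.0] * 100, bias_dps=1.0, dt_s=0.04))
# Same residual bias and distance, two speeds: the faster trip bends less.
print(lateral_drift_m(1.0, 36.0, 500.0), lateral_drift_m(1.0, 72.0, 500.0))
```

For small angles the deviation grows roughly with the bias times the square of the distance divided by twice the speed, which is why doubling the speed roughly halves the bend in this sketch.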

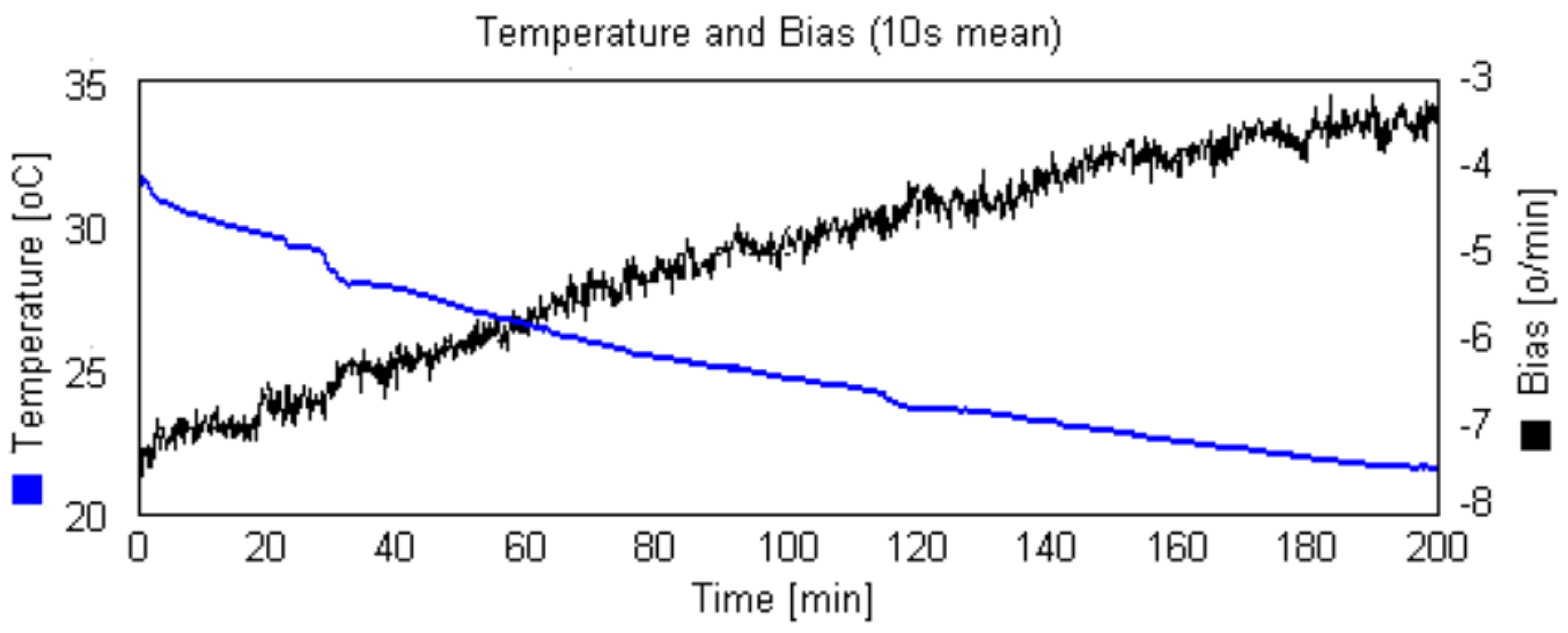

4.1.1. Gyroscope Bias Compensation

As discussed in the previous section, the precision of 2D path reconstruction by dead reckoning depends strongly on precise bias compensation of the gyroscope. The pure signal generated by a real MEMS gyroscope is additionally disturbed by random noise and by a constant component that is a function of the temperature.

The compensation of the thermal bias component can be done by finding a compensation expression (bias × temperature). Figure 18 shows the experimental relation obtained for one of our acquired sensors. Note the strong dependence between bias and temperature. For a variation of 10 °C, the bias ranged over 8.8 °/min.

In our approach, we decided to operate the gyroscope at a controlled constant temperature. In this case, the thermal bias component becomes a constant and the only remaining source of disturbance is random noise. The constant temperature has the advantage of removing the effect of the temperature on all other gyroscope parameters, like the scale factor.

4.1.2. Analysis of Random Noise in Constant Temperature

To remove the effect of the bias caused by temperature variation, we operate the gyroscope at constant temperature. The sensor MPU6050 was fitted inside a thermal box, shown in Figure 19, and a heating resistor maintains the temperature at 38 °C. We chose this temperature because it is guaranteed to be higher than the room temperature, but not by too much. The temperature control is performed by the same microcontroller that interfaces the dead reckoning data to the computer, so no additional hardware is necessary.

To analyze the random noise of the sensor MPU6050 that we purchased, we collected 35 h of data, at constant temperature, following the guidance of Section 12.11 of the IEEE Standard Specification Format Guide and Test Procedure for Coriolis Vibratory Gyros [57]. The result is illustrated in Figure 20.

MEMS gyroscopes are subject to different random noise modes, the predominant ones being Angle Random Walk (white noise), Bias Instability (pink noise), and Rate Random Walk (brown noise) [57,58]. The Allan variance method is often used to quantify the noise components of a stochastic process with different properties [58,59].
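For readers unfamiliar with the method, the basic (non-overlapping) Allan variance estimator can be sketched in a few lines. This is a generic textbook form, not the analysis code used for Figure 20; the function name and the synthetic white-noise check are ours.

```python
import random


def allan_variance(rate, dt_s, m):
    """Non-overlapping Allan variance of a rate signal for cluster size m.

    Returns (tau, avar), where tau = m * dt_s is the averaging time and avar is
    half the mean squared difference between consecutive cluster averages.
    """
    n_clusters = len(rate) // m
    if m < 1 or n_clusters < 2:
        raise ValueError("need at least two clusters of size m >= 1")
    means = [sum(rate[k * m:(k + 1) * m]) / m for k in range(n_clusters)]
    diffs_sq = [(b - a) ** 2 for a, b in zip(means, means[1:])]
    return m * dt_s, 0.5 * sum(diffs_sq) / len(diffs_sq)


# Synthetic check: for unit-variance white noise the Allan variance falls as 1/m.
random.seed(0)
white = [random.gauss(0.0, 1.0) for _ in range(100_000)]
for m in (10, 50, 250):
    tau, avar = allan_variance(white, dt_s=0.01, m=m)
    print(tau, avar)
```

Evaluating the estimator over a range of cluster sizes and plotting the result on log-log axes is the usual way to separate the noise modes, since each one dominates a different range of averaging times.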

The yaw angle, computed by the integration of the gyroscope rate signal, is affected by each noise component in a different manner. The white noise is not a problem because its mean value is null and its high frequency content is removed by the integration.

The most critical noise component is the brown noise because of its low frequency characteristics (slow variation). The black line in the graph of Figure 20a, obtained by low pass filtering, illustrates this component. Figure 20b shows that for relatively short periods of time, in this case 18 min, the brown noise component presents a small variation, such as 0.42 °/min. Figure 21 shows the histogram of this variation for intervals of 18 and 60 min. It was computed from 1000 randomly taken sections of the signal of Figure 20a (black line). When the localization system of the vehicle starts up, it first measures the current value of the bias by averaging the gyroscope signal for 15 s. So, the histogram of Figure 21 makes it possible to calculate the probability of this initial value drifting by a certain amount over the next period of time (18 and 60 min).

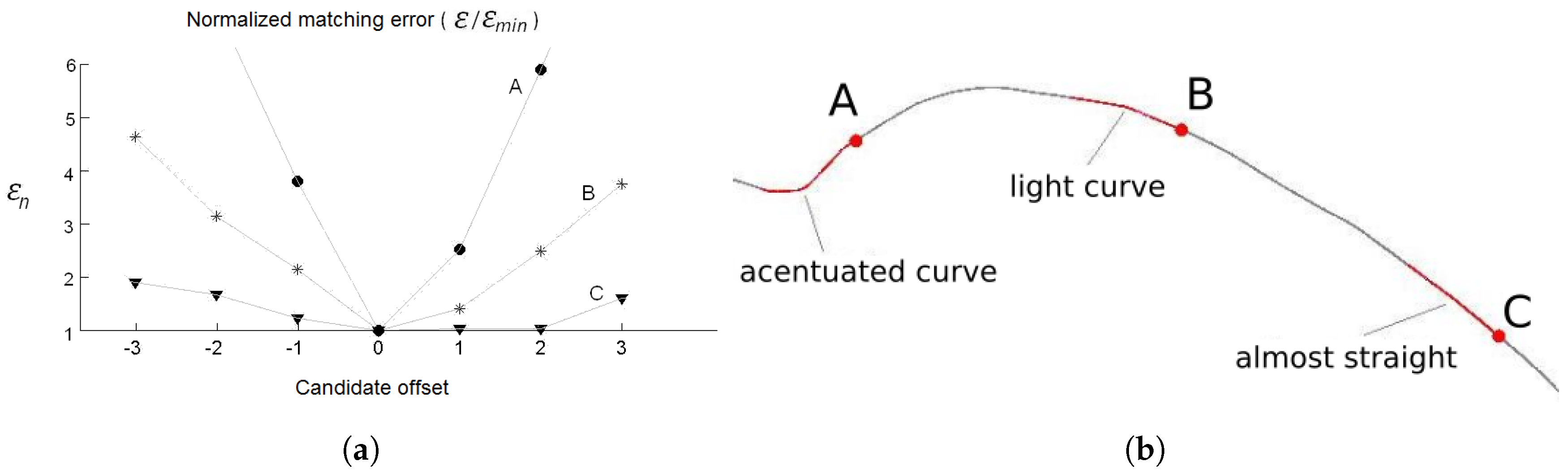

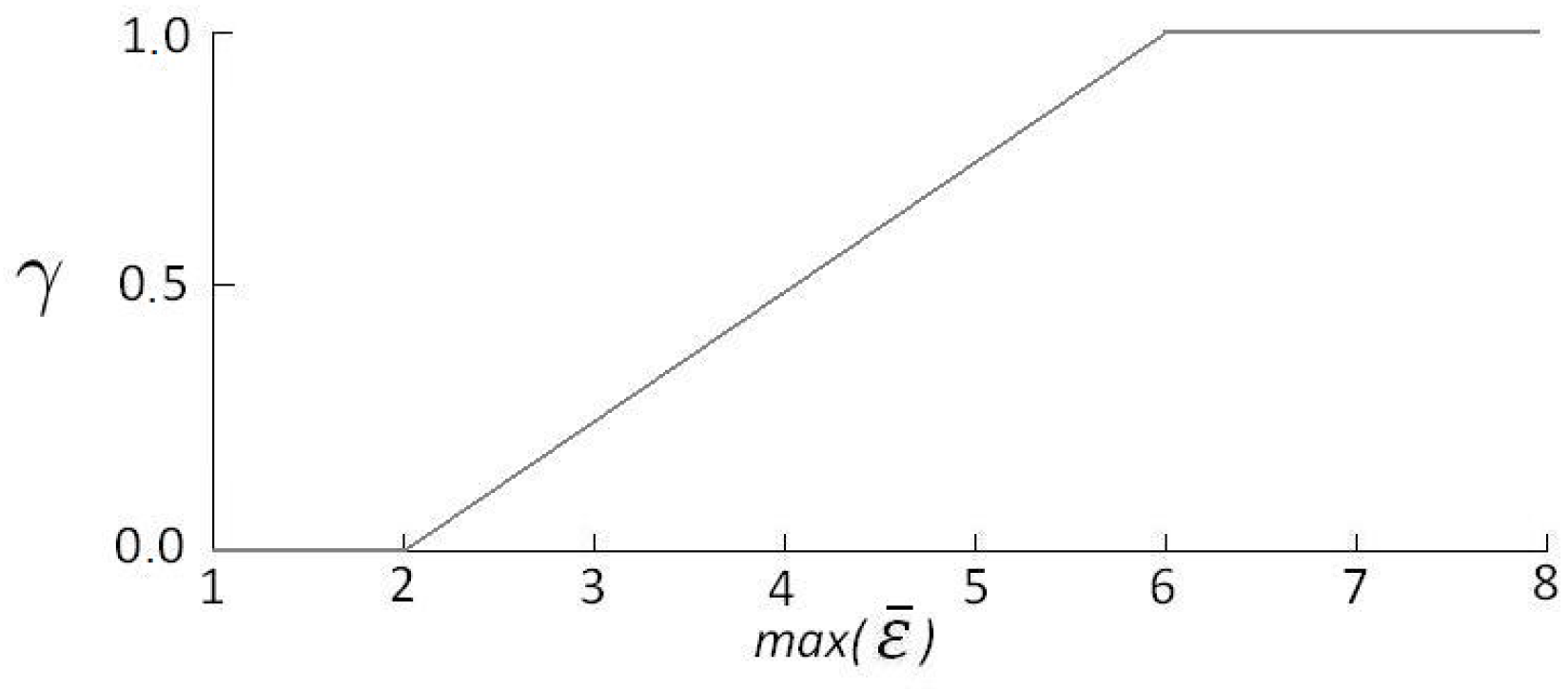

As introduced in Section 2.1, the principle of localization is the comparison of the shape and content of lane markings of small road segments. Figure 17a illustrates that bias drifts in the range of 1 deg/min cause very small distortion in the shape, and consequently, we assume that they do not prevent the localization algorithm from working properly. The histogram of Figure 21b, together with the previous consideration, is a strong indication that it is possible to operate the autonomous vehicle properly for up to 60 min or even more. After this time limit the vehicle must stop to measure the bias value again. Another possibility is to update the bias dynamically, using information from the GNSS receiver, since it is not subject to cumulative error. This is one of the issues addressed as future work.

Gaining a deeper understanding of how the distortion caused by the bias error affects the accuracy of the localization is not a simple task. It depends on the particularities of each road section, like the shape and distribution of the lane marking information over the BLMR. This is also planned as future work.

4.2. Measurement of Linear Displacement

The linear displacement is obtained directly from the pulse count, scaled by a constant. The pulse signal is taken from the original encoder of the vehicle, used by the speed gauge, and connected to the same microcontroller board used by the gyroscope. The constant is determined by the tire diameter and the number of pulses generated at each complete revolution of the wheel.
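The relation between pulse count and displacement can be sketched as follows, assuming the constant is simply the tire circumference divided by the number of pulses per wheel revolution. The function names and the numeric values (a 0.6 m tire and 8 pulses per revolution) are hypothetical and are not taken from the prototype vehicle.

```python
import math


def metres_per_pulse(tire_diameter_m, pulses_per_rev):
    """Distance travelled per encoder pulse: tire circumference / pulses per revolution."""
    return math.pi * tire_diameter_m / pulses_per_rev


def linear_displacement_m(pulse_count, tire_diameter_m, pulses_per_rev):
    """Linear displacement corresponding to a number of counted pulses."""
    return pulse_count * metres_per_pulse(tire_diameter_m, pulses_per_rev)


# Hypothetical values: one full wheel revolution covers one tire circumference.
print(linear_displacement_m(8, tire_diameter_m=0.6, pulses_per_rev=8))
```

In practice the effective tire diameter changes with load and pressure, so such a constant would normally be calibrated over a known distance rather than computed from nominal dimensions.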

7. Conclusions and Future Work

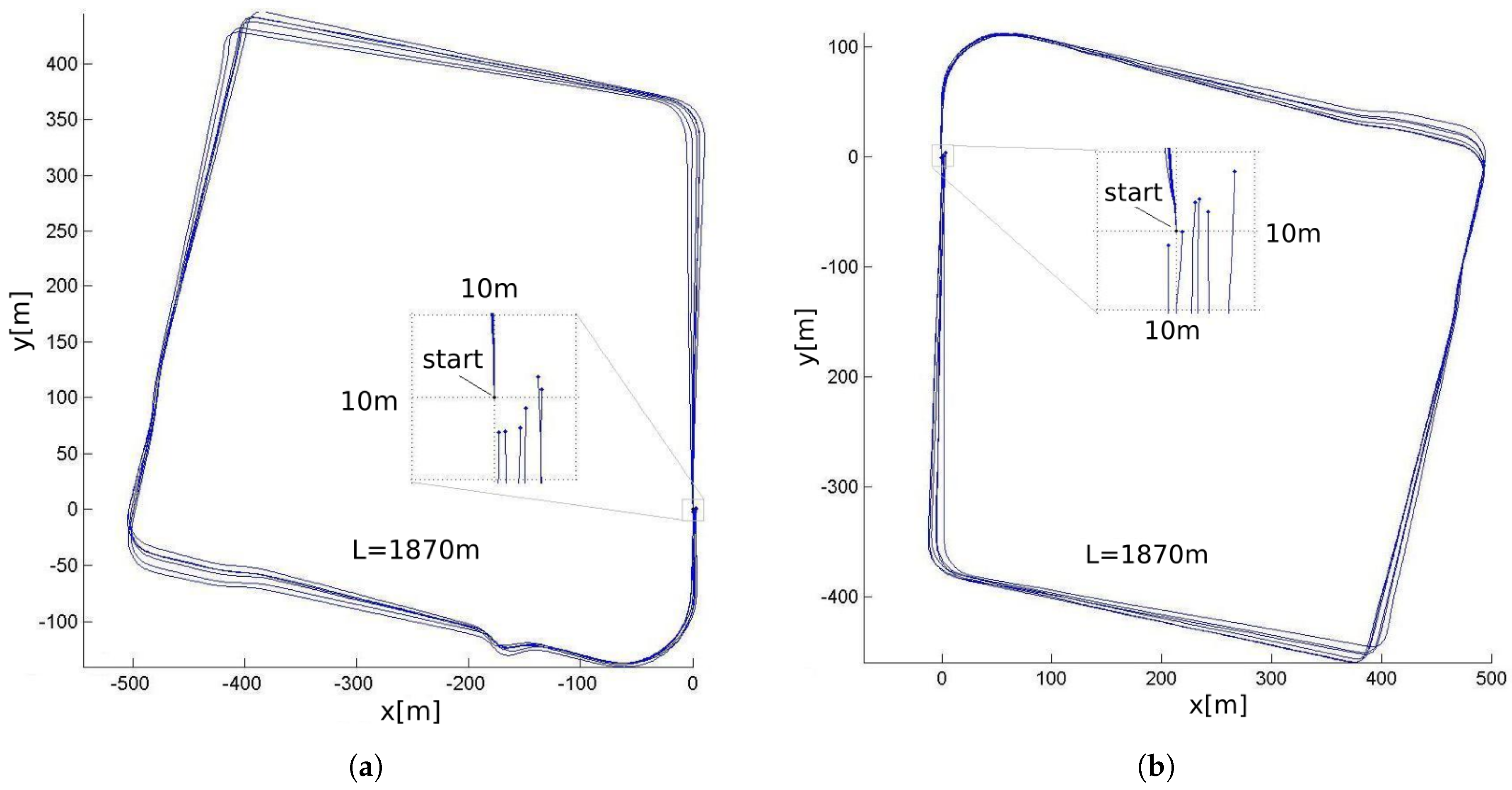

This paper presents a low-cost sensor approach for accurate vehicle localization and autonomous driving applications. The algorithm is based on a light two-level fusion of data from the lane marking sensor, the dead reckoning sensor, and a map. The map is created by the same sensor set, in a manually driven trip. To reach a low-cost solution, each sensor module was constructed from standard commercial devices, like a USB camera, an ordinary GNSS receiver, and a MEMS-based gyroscope. Special care was taken to overcome the limited performance of standard low-cost devices, like the use of near visual information for the camera, and precise temperature control and bias compensation for the gyroscope. The fusion algorithm and map data structure have been designed with special care to be computationally lightweight, so that they can run on low-cost embedded architectures, which contributes to keeping the overall system cost low. This is an important issue when thinking about large scale commercial applications. Despite the low-cost hardware, the experimental results demonstrated that the system was capable of reaching a high degree of accuracy.

Considering our future work, our main issues can be described as three topics: (i) implementation of the system on a low cost computer; (ii) dynamic correction of the gyroscope bias; (iii) improvement of the filtering.

The good computational performance encouraged us to investigate the viability of implementing the system on a small and cheap single board computer (SBC) or even in a SoC (system on a chip), like Raspberry PI or BeagleBone Black. The substitution of the notebook by a low cost computer system will contribute to reduce the total cost of the localization and autonomous driving system.

Another useful topic for future work is the development of strategies to update the gyroscope bias while the vehicle is in operation. A possible solution is to use the moments when the vehicle stops, for example at a crossing with a red traffic light, to make measurements of the bias. Another possible solution is to use information from the GNSS to correct the bias while the car is moving.

We also believe that the use of a more elaborate filtering scheme, like a Kalman filter, that holds the instantaneous value of the uncertainty for each localization component (lateral, longitudinal, heading) could lead to a more precise and robust estimation of localization. The value of instantaneous uncertainty could be used, for example, to generate a warning signal when the localization accuracy becomes too low for autonomous driving.

Finally, future work also includes the control of acceleration and braking of the vehicle and the detection of obstacles, which are essential for a fully autonomous vehicle.