Sensing Technologies for Autism Spectrum Disorder Screening and Intervention

Abstract

1. Introduction

2. Methodology

- What are the categories of sensing technologies that were intended for autism screening and intervention?

- From the perspective of clinical utility, why are these categories important?

- Were there experiments that showed the effectiveness of the sensors (in terms of accuracy, resolution, etc.) and their corresponding software applications?

- Are the sensors commercially available, or are they still proofs of concept from research laboratories?

- What are the advantages and limitations of each sensor?

3. A Taxonomy of Sensors for ASD Screening and Intervention

3.1. Eye Trackers

3.1.1. Desktop-Based Eye Trackers

3.1.2. Head-Mounted Eye Trackers

3.1.3. Eye Tracking Glasses

3.2. Movement Trackers

3.3. Electrodermal Activity Monitors

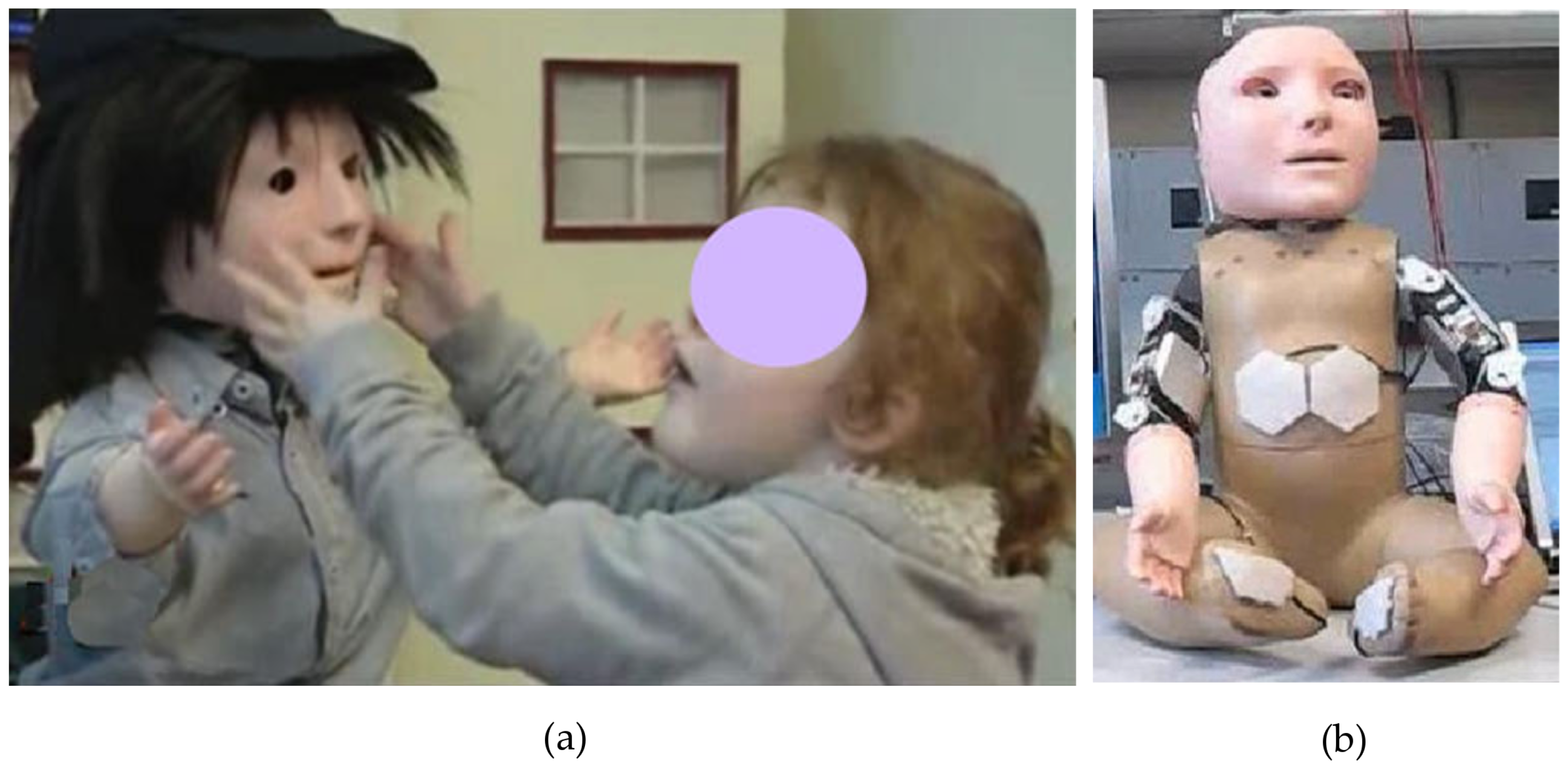

3.4. Touch Sensing

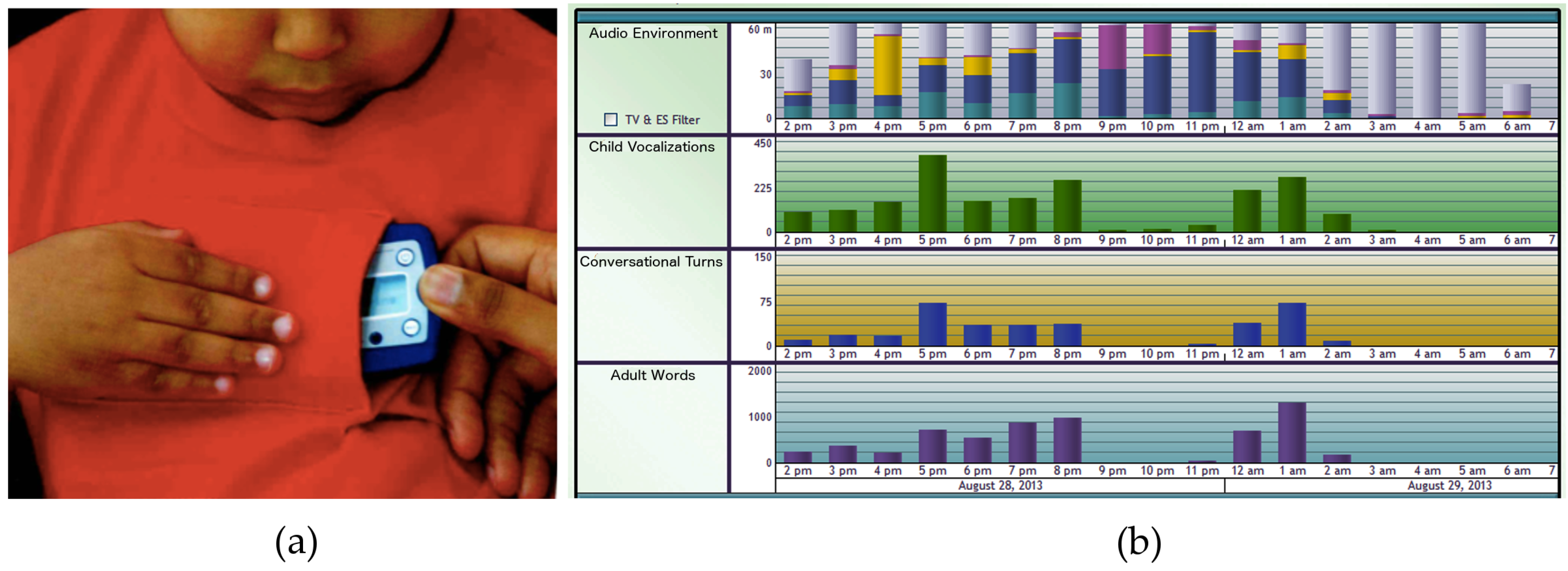

3.5. Prosody and Speech Detection

3.6. Sleep Quality Assessment Detection

3.6.1. Polysomnography

3.6.2. Actigraphy

3.6.3. Video Monitoring

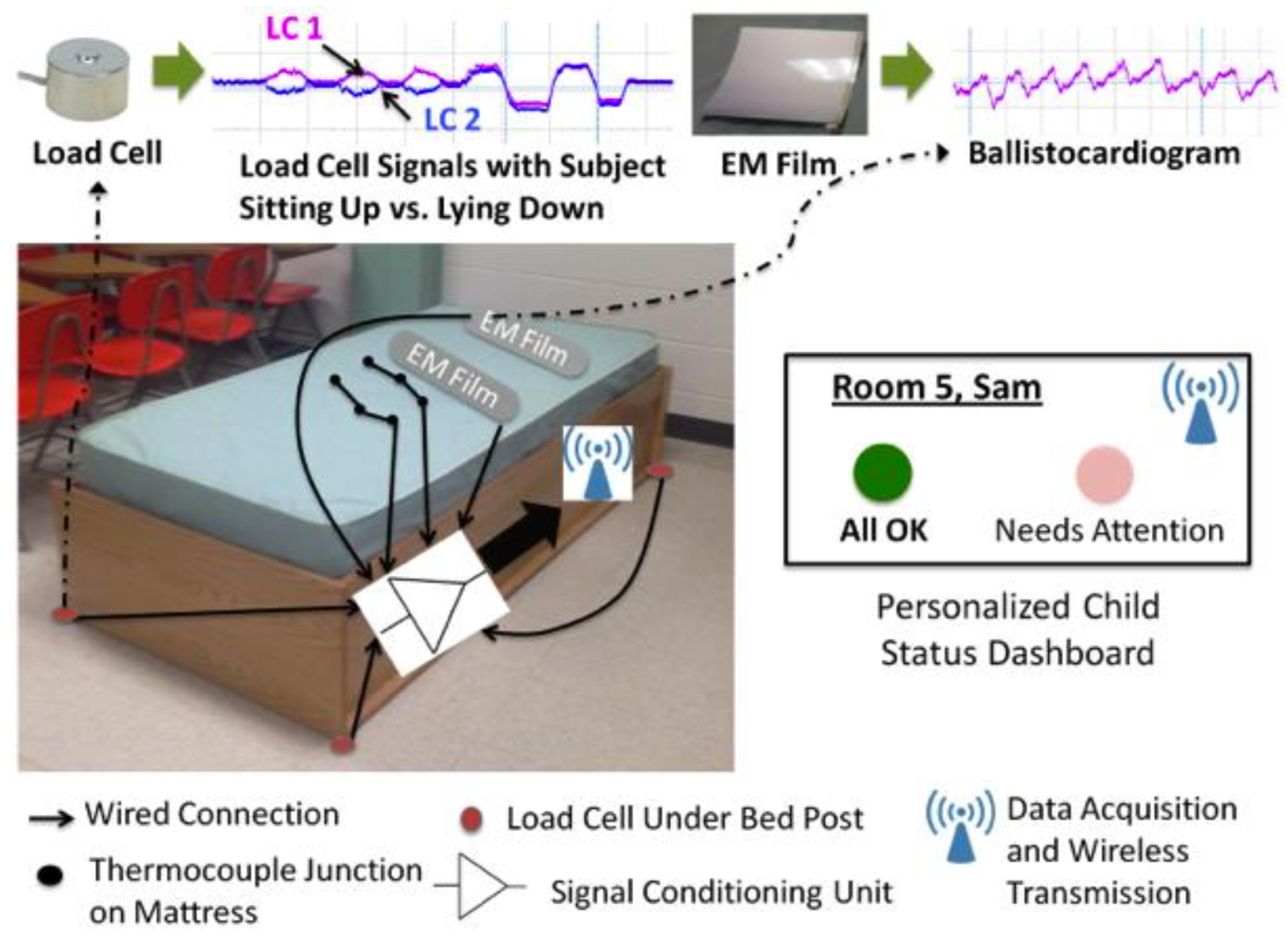

3.6.4. Ballistocardiography

4. Discussion and Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

Appendix A. Summary of Sensing Technologies for ASD Screening and Intervention

| Sensor Category | Purpose | Type | Measured Quantity | Benefits | Limitations |
|---|---|---|---|---|---|
| Eye trackers | To detect atypical eye gaze patterns for early screening | Desktop-based eye trackers | Timestamp, x and y coordinates of gaze fixations, distance from the display or stimulus | | |
| | | Head-mounted eye trackers | Timestamp, x and y coordinates of gaze fixations, distance from the display or stimulus | | |
| | | Eye tracking glasses | Timestamp, x and y coordinates of gaze fixations, distance from the display or stimulus | | |
| Movement trackers | To detect stereotypical movements for timely intervention | Wrist wear, worn on the chest, desktop | Acceleration, velocity or displacement in x, y, and z coordinates | | |
| Electrodermal activity monitors | To estimate the subject’s internal state through physiological data for timely intervention | Wrist wear | Electrodermal activity, blood volume pulse, heart rate, skin temperature | | |
| Tactile sensors | To simulate touch and hugs, and to induce controlled pain (for subjects with self-harming tendencies) | Worn on the wrist, chest, or leg | Contact pressure, then provides tactile feedback | | |
| | To provide emotional feedback while playing games and to evaluate the accuracy of the subjects’ responses | Vibrotactile gamepad | Contact pressure, then provides vibrotactile feedback | | |
| | | Touch sensors on social robots | Contact pressure, then classifies the contact behavior to provide appropriate feedback | | |
| Vocal prosody and speech detectors | To detect atypical vocal patterns for early diagnosis | Voice recording and pattern recognition | Detects prohibition, approval, soothing, attentional bids and neutral utterances | | |
| | | Voice recording and LENA (Language ENvironment Analysis) device | Counts the number of words spoken by adults to and around the child, adult-child conversational interactions and child vocalizations | | |
| Sleep quality assessment devices | To get an early indication of ASD since poor sleep quality may serve as a possible indicator | Polysomnography | Neurophysiological and cardiorespiratory parameters to determine eye-movements, muscle activity and oxygen | | |
| | | Actigraphy | Movement data through accelerometer readings | | |
| | | Video-monitoring devices | Video data | | |
| | | Ballistocardiography | Heart rate, respiratory rate, activity detection, bedwetting incidents | | |
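As an illustration of the "Measured Quantity" column, the readings listed for eye trackers and movement trackers could be held in simple record types. The following is a minimal sketch only: the class names, field names, units, and the two helper computations are assumptions made for this example and are not taken from any device or study covered in the review.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class GazeSample:
    """One eye-tracker reading (hypothetical layout; units are assumed)."""
    timestamp_s: float   # timestamp, assumed to be in seconds
    x: float             # x coordinate of the gaze fixation, assumed pixels
    y: float             # y coordinate of the gaze fixation, assumed pixels
    distance_mm: float   # distance from the display or stimulus, assumed mm


@dataclass(frozen=True)
class MotionSample:
    """One movement-tracker reading: acceleration along x, y, and z."""
    timestamp_s: float
    ax: float
    ay: float
    az: float

    def magnitude(self) -> float:
        # Euclidean norm of the three-axis acceleration vector.
        return math.sqrt(self.ax ** 2 + self.ay ** 2 + self.az ** 2)


def mean_gaze_point(samples: List[GazeSample]) -> Tuple[float, float]:
    """Average the x and y coordinates over a non-empty list of samples."""
    n = len(samples)
    return (sum(s.x for s in samples) / n, sum(s.y for s in samples) / n)
```

Keeping the records immutable and close to the table's wording makes it easier to compare logs from different sensor types; a real device would define its own fields, units, and sampling rate.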


© 2016 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC-BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Cabibihan, J.-J.; Javed, H.; Aldosari, M.; Frazier, T.W.; Elbashir, H. Sensing Technologies for Autism Spectrum Disorder Screening and Intervention. Sensors 2017, 17, 46. https://doi.org/10.3390/s17010046
