3.2. Bayesian Inference

To continuously update the belief about the potential source location, observed data are incorporated using Bayesian inference, which plays a central role in guiding the movement decisions of the searching agent. As in [15], the posterior probability density function (PDF) of the state vector $\theta$ given a set of concentration measurements $z_{1:k}$ is estimated as

$$p(\theta \mid z_{1:k}) = \frac{p(z_{1:k} \mid \theta)\, p(\theta)}{p(z_{1:k})} \tag{2}$$

In this formulation, the state space

includes the source parameters such as the 2D source location and plume characteristics, typically

, and optionally including parameters like

Q,

, and

. In the grid-based approach used in this work, the belief is represented as a discrete probability distribution over a finite grid of source hypotheses, where each cell $j$ corresponds to a candidate source location and stores a probability $p_j$ such that $\sum_{j=1}^{N} p_j = 1$, where $N$ represents the total number of cells. At each time step, the prior belief is updated by incorporating the new set of observations. For each grid cell (i.e., each hypothesis $\theta_j$), the likelihood of the observations given that hypothesis is computed using a Gaussian model:

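$$p(z_{1:k} \mid \theta_j) = \prod_{i=1}^{k} \frac{1}{\sqrt{2\pi\sigma_i^2}} \exp\!\left(-\frac{\left(z_i - C(\mathbf{r}_i \mid \theta_j)\right)^2}{2\sigma_i^2}\right) \tag{3}$$

Here, $k$ is the number of measurements obtained up to time $t$, $\mathbf{r}_i$ is the position of the $i$-th observation $z_i$, $C(\mathbf{r}_i \mid \theta_j)$ is the predicted concentration at $\mathbf{r}_i$ using the Gaussian plume model with source hypothesis $\theta_j$, and $\sigma_i^2$ denotes the variance of the measurement errors, which is proportional to the modeled concentration. After computing the likelihoods for all grid cells, the belief $p_j$ stored in each cell $j$ is updated via Bayes’ rule by re-normalizing over all $N$ cells:

$$p_j \leftarrow \frac{p_j\, p(z_{1:k} \mid \theta_j)}{\sum_{l=1}^{N} p_l\, p(z_{1:k} \mid \theta_l)} \tag{4}$$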

This grid-based inference approach provides a spatially explicit and interpretable representation of uncertainty.
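The grid-based update can be illustrated with a minimal sketch. It assumes a one-dimensional row of five candidate cells and a toy decay function, `toy_plume`, standing in for the Gaussian plume model; the function names, the variance factor `alpha`, and the floor `var_floor` are illustrative assumptions, not values from this study.

```python
import math

def toy_plume(source, position):
    """Hypothetical stand-in for the plume model: decays with distance."""
    return math.exp(-abs(position - source))

def gaussian_likelihood(measured, predicted, alpha=0.05, var_floor=1e-6):
    """Joint Gaussian likelihood of all measurements under one hypothesis.

    The error variance is alpha * predicted concentration, floored at
    var_floor to avoid division by zero (both constants are assumptions).
    """
    log_lik = 0.0
    for z, c in zip(measured, predicted):
        var = max(alpha * c, var_floor)
        log_lik += -0.5 * math.log(2.0 * math.pi * var) - (z - c) ** 2 / (2.0 * var)
    return math.exp(log_lik)

def update_belief(prior, likelihoods):
    """Bayes' rule on a grid: multiply prior by likelihood, then renormalize."""
    unnormalized = [p * l for p, l in zip(prior, likelihoods)]
    total = sum(unnormalized)
    if total == 0.0:
        return list(prior)  # no hypothesis explains the data; keep the prior
    return [u / total for u in unnormalized]

cells = [0, 1, 2, 3, 4]                 # candidate source locations
prior = [1.0 / len(cells)] * len(cells)  # uniform prior belief
obs_positions = [3.0]
measured = [1.0]                         # noise-free reading on top of cell 3

likelihoods = [
    gaussian_likelihood(measured, [toy_plume(c, r) for r in obs_positions])
    for c in cells
]
posterior = update_belief(prior, likelihoods)
```

With a single high reading taken at position 3, almost all probability mass moves to cell 3, while the belief still sums to one.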

3.3. Cognitive Movement Decision

The decision process guides the agent toward the most informative positions, defined as those that minimize the expected uncertainty about the source location, quantified with the so-called information utilities [16]. At each admissible future position $u$, the expected gain in information is computed as the difference between the entropy of the current belief map and the expected entropy obtained from a future belief map updated with a hypothetical observation at that position. The current uncertainty is computed from the belief map at time step $t$ using Shannon’s entropy:

$$H_t = -\sum_{j=1}^{N} p_j \log p_j \tag{5}$$

To compute the expected entropy, a discrete set of $M$ potential observations $\{\hat{z}_1, \dots, \hat{z}_M\}$ is taken. Each observation $\hat{z}_m$ is associated with a probability of occurring, denoted as $P(\hat{z}_m)$, which is estimated using the plume dispersion model and the current belief. For each potential observation $\hat{z}_m$, a future belief map is computed using Bayes’ rule (Equation (2)), followed by its entropy $H_{t+1}(u, \hat{z}_m)$ computed with Equation (5). The total expected entropy is obtained by weighting each future entropy with its corresponding observation probability:

$$\mathbb{E}\left[H_{t+1}(u)\right] = \sum_{m=1}^{M} P(\hat{z}_m)\, H_{t+1}(u, \hat{z}_m) \tag{6}$$

The expected uncertainty reduction at each possible movement location is then determined from the difference between the entropy of the current belief map, $H_t$, and the expected (future) entropy value:

$$\Delta H(u) = H_t - \mathbb{E}\left[H_{t+1}(u)\right] \tag{7}$$

The agent selects the position $u$ that yields the highest expected uncertainty reduction, which is then used as the next movement goal.
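The decision rule described above can be sketched as follows. This is a simplified illustration, assuming a four-cell belief and binary potential observations (detection or no detection); the table `likelihood[m][j]`, the candidate labels `"stay"` and `"crossflow"`, and all probabilities are hypothetical values chosen for the example.

```python
import math

def entropy(belief):
    """Shannon entropy of a discrete belief (natural logarithm)."""
    return -sum(p * math.log(p) for p in belief if p > 0.0)

def expected_entropy_reduction(belief, likelihood):
    """Expected information gain at one candidate position.

    likelihood[m][j] = P(observation m | source in cell j); for each cell j
    the values over m must sum to one.
    """
    current = entropy(belief)
    expected = 0.0
    for row in likelihood:
        # Probability of this observation under the current belief.
        p_obs = sum(b * l for b, l in zip(belief, row))
        if p_obs == 0.0:
            continue
        # Future belief via Bayes' rule, then its entropy, weighted by p_obs.
        future = [b * l / p_obs for b, l in zip(belief, row)]
        expected += p_obs * entropy(future)
    return current - expected

belief = [0.25, 0.25, 0.25, 0.25]
candidates = {
    # Observation equally likely for every hypothesis: nothing is learned.
    "stay": [[0.5, 0.5, 0.5, 0.5], [0.5, 0.5, 0.5, 0.5]],
    # Detection strongly favors cells 0 and 1; no detection favors 2 and 3.
    "crossflow": [[0.9, 0.9, 0.1, 0.1], [0.1, 0.1, 0.9, 0.9]],
}

gains = {u: expected_entropy_reduction(belief, lik) for u, lik in candidates.items()}
best = max(gains, key=gains.get)
```

A position whose observations are equally likely under every hypothesis yields zero expected gain, so the agent prefers the position whose observations discriminate between hypotheses.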

3.4. Spatial Analysis of Entropy Reduction

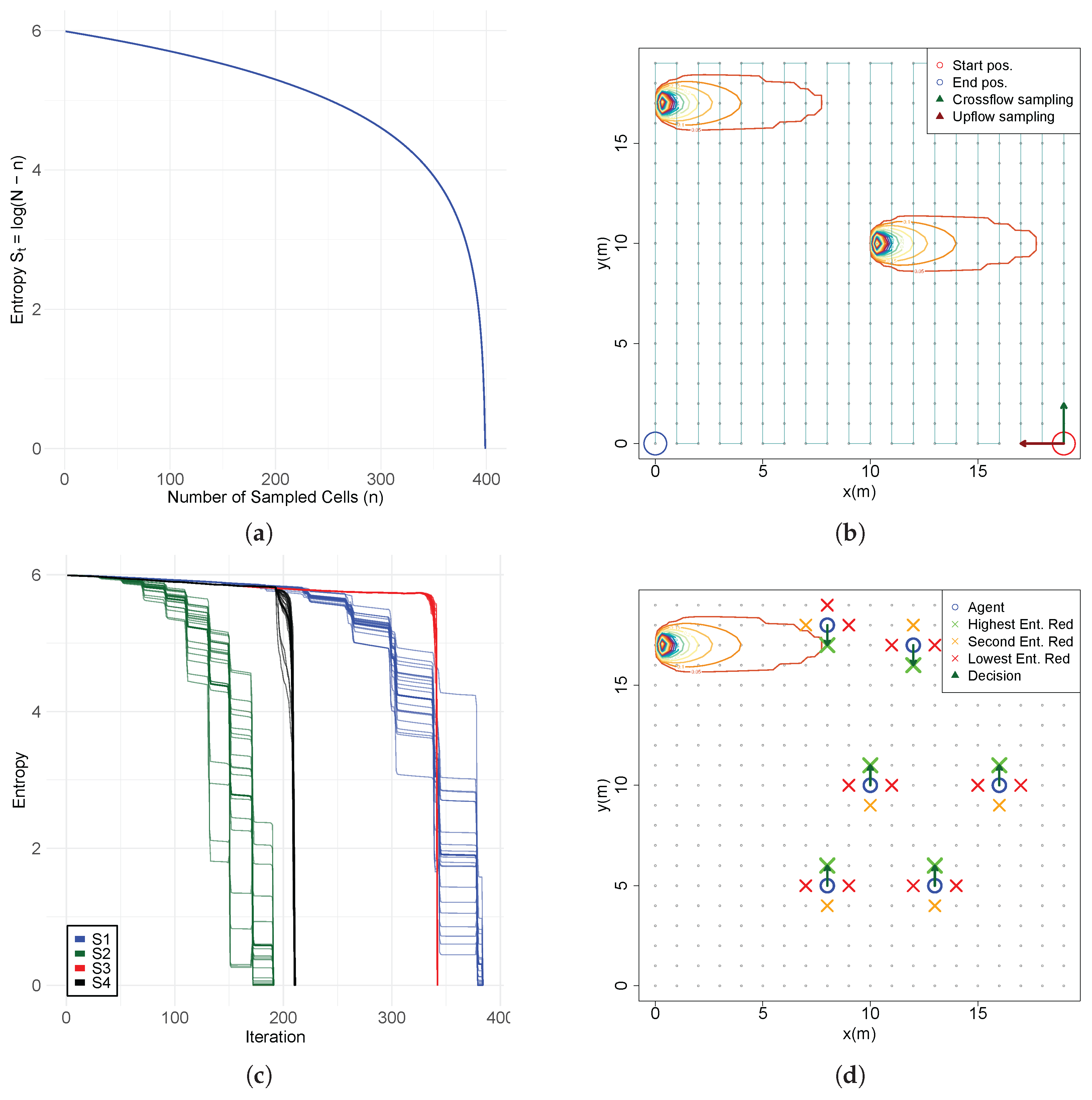

Consider a simplified case where the agent has no model of the environment, no sensor to measure chemical concentrations, and the source is situated in one of the $N$ cells. The belief starts uniform, with each cell having a probability $1/N$ of containing the source, resulting in a maximum entropy value of $\log N$.

The agent samples each cell sequentially, setting the probability to zero when the source is not found and renormalizing the remaining probabilities so that they sum to one. The belief distribution becomes increasingly concentrated in the unsampled cells but remains uniform, although over fewer cells, with the entropy decreasing with a logarithmic decay (Figure 1a) following the equation:

$$H(n) = \log(N - n)$$

where $N$ is the total number of cells and $n$ represents the number of cells already sampled. This means that entropy decreases slowly during the first iterations and more sharply as the number of remaining cells reduces. When the source is found, the probability is concentrated in a single grid cell with the value of 1, resulting in an entropy value of $H = 0$. While the computational demands are minimal, this approach is highly inefficient, requiring direct exclusion of nearly all incorrect cells to converge on the true source location.
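The elimination search can be reproduced in a few lines. Because the belief stays uniform over the $N - n$ unsampled cells, its entropy after $n$ unsuccessful samples equals $\log(N - n)$. The sketch below assumes a hypothetical grid of 16 cells with the source in the last cell, so that every other cell must be excluded first.

```python
import math

def entropy(belief):
    """Shannon entropy of a discrete belief (natural logarithm)."""
    return -sum(p * math.log(p) for p in belief if p > 0.0)

def eliminate(belief, cell):
    """Set one cell to zero (source not found there) and renormalize."""
    remaining = [0.0 if j == cell else p for j, p in enumerate(belief)]
    total = sum(remaining)
    return [p / total for p in remaining]

N = 16                       # illustrative number of cells
belief = [1.0 / N] * N       # uniform prior, entropy log(N)
history = [entropy(belief)]  # history[n] = entropy after n sampled cells

# Sample cells 0 .. N-2 in order; the source sits in the last cell.
for n in range(1, N):
    belief = eliminate(belief, n - 1)
    history.append(entropy(belief))
```

The recorded values follow $\log(N - n)$: the first exclusions barely change the entropy, while the last few produce the steepest drops, ending at zero once a single cell remains.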

In contrast, a more informed approach such as Infotaxis leverages chemical concentration measurements in combination with a physical dispersion model, such as the Gaussian plume model, to update the belief map. Here, the entropy reduction evolves differently: instead of explicitly eliminating cells, the agent observes values that are related to the source location through a probability distribution. The likelihood of each observation adjusts the probabilities across many cells simultaneously, concentrating the belief around areas consistent with the observed data and the plume dynamics. This leads to faster and more informative entropy reduction, even when the true source cell is not directly observed.

However, in this situation, a closed-form expression of the entropy reduction cannot be obtained. Chemical observations are a function of the source parameters and spatial configuration, containing significant noise originating from multiple sources of uncertainty such as sensor performance and environmental conditions. The Bayesian inference process relies non-linearly on the prior belief and the likelihood function, which is determined by a probability distribution and the plume dispersion model. Also, the surprise of each observation, which depends on the spatial phenomena and the mismatch between the predicted and real measurements, significantly influences the evolution of entropy during the search, decreasing faster in more certain environments and slower when the disturbances are meaningful.

Assume that an informed agent, capable of estimating the belief distribution of the source location from chemical observations, systematically samples each cell in a grid (1 m resolution). These measurements are sequentially used to update the source belief. The agent begins at the bottom-right corner (red circle) and traverses the space in either a crossflow direction (green arrow) or an upflow direction (red arrow), following a serpentine sampling pattern until it reaches the source (Figure 1b). The environment contains a Gaussian chemical plume, with the source located either in the top-left corner (0, 17) or at the center (10, 10) and the flow aligned with the $x$ axis. Scenarios S1 and S2 implement crossflow movement, corresponding to the corner source (S1) and central source (S2), respectively. Scenarios S3 and S4 follow upflow trajectories, with sources at the same respective locations. The analysis of Figure 1c reveals that crossflow trajectories (S1, S2) generally result in a faster entropy reduction compared to upflow movements (S3, S4). Notably, S1 shows a more rapid early decline than S3. However, S3 ultimately achieves lower entropy levels sooner, due to higher chemical concentrations encountered near the source. For centrally located sources, S2 consistently yields faster and more substantial uncertainty reduction than S4. These entropy dynamics are significantly affected by sensor noise, modeled as normally distributed, which introduces variability in the belief updates. This highlights the complexity of predicting entropy evolution, driven by the interplay among sensor inaccuracies, belief update processes, and the spatial structure of the chemical distribution.

Now consider a scenario in which the agent possesses a highly uncertain belief about the source location and employs cognitive decision-making by evaluating the expected entropy reduction at cardinal directions to choose its next movement position. With the source located in the top-left corner (0, 17), an analysis of expected information gain across the search space (Figure 1d) reveals that crossflow decisions consistently yield the highest expected entropy reduction, whereas upflow and downflow movements are comparatively less informative. These results corroborate the findings obtained from sequential sampling scenarios, reinforcing the conclusion that crossflow movement in the direction of the plume provides the most valuable information gain. This behavior aligns with patterns commonly observed in cognitive observation-based source localization (OSL) strategies, where agents intensively explore the environment perpendicular to the flow to increase information gain and improve the likelihood of plume interception.

This work aims to study entropy reduction in an environment containing a chemical plume, independently of any specific search strategy for source localization. In typical cognitive OSL implementations, agents evaluate expected information gain only at a limited number of admissible positions near their current location, as in the previous example. This localized decision-making approach constrains the understanding of where high-gain regions truly lie within the environment. To address this limitation, the present study assumes complete freedom in the sampling process, computing entropy reduction (Equation (7)) at every point in the environment, with particular focus on the active plume region and its surrounding areas.

The study will start by analyzing how measurement positions impact the shape of the posterior probability belief and its associated uncertainty. In a second stage, gains of information are evaluated across the entire search space, followed by a focused analysis within the active region of the plume. An important subject of interest lies in understanding how variations in the prior belief influence the spatial distribution of entropy reductions. A third stage consists of examining the borders of the active region, which exhibit distinct and structured patterns of information gains.

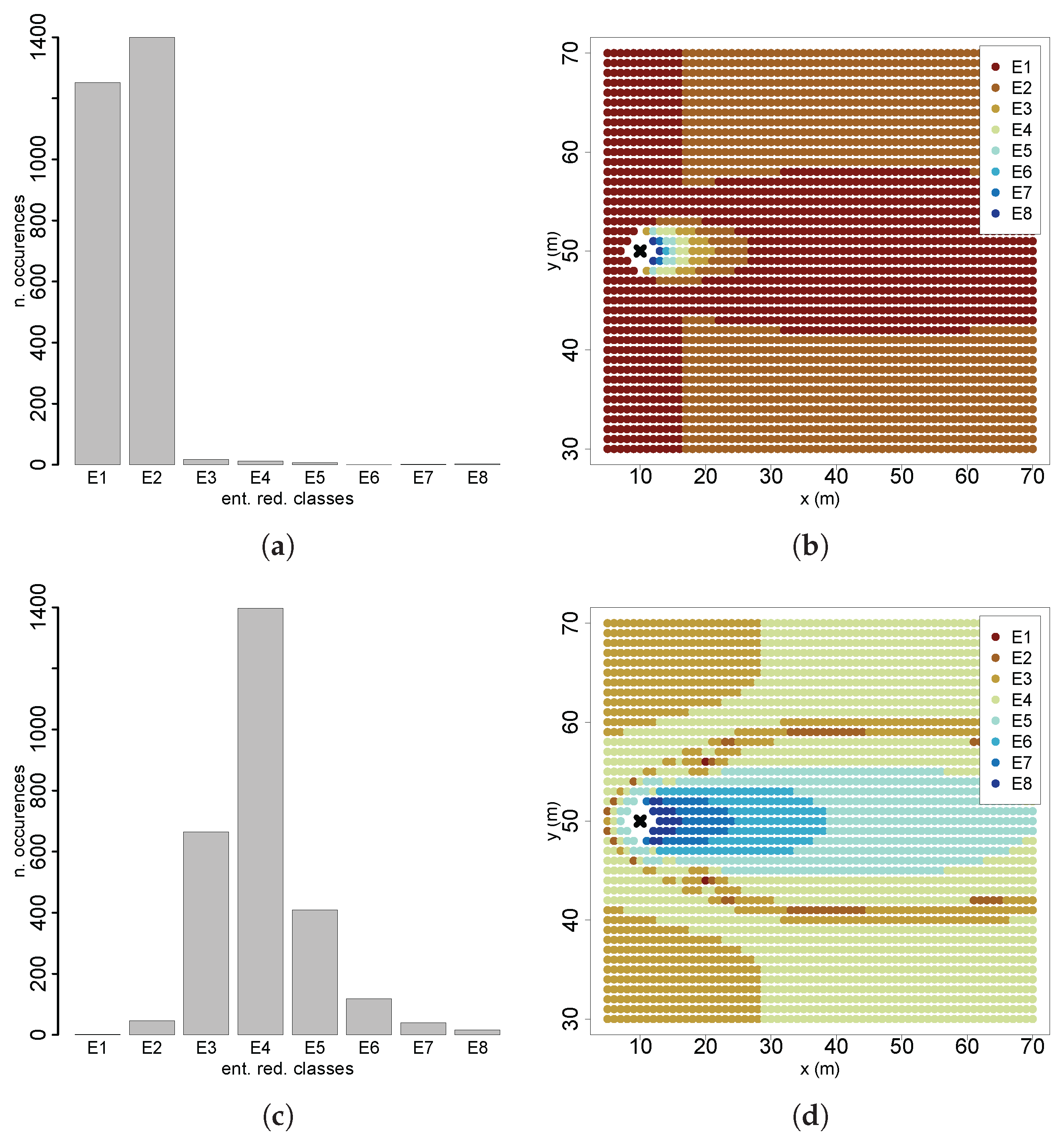

Applying these computations to all candidate future positions, the study produces a comprehensive spatial map of information gains. However, a limitation of classical entropy is its inability to consider the spatial structure, since it only accounts for the probability values and not how they are arranged in space. As a result, spatially distinct distributions with identical probabilities yield the same entropy value. Furthermore, as the study will show, entropy reductions are not uniformly distributed across the search space due to the influence of the plume structure and odor dynamics, and they are spatially correlated with nearby cells containing similar entropy variations. Hence, a final stage will consist of applying the spatial entropy framework proposed by Altieri et al. [17] to the entropy reduction map, which incorporates spatial relationships into entropy computation. The goal is to investigate the diversity of information gains and quantify their dependence on spatial distributions. This enables a more nuanced analysis of how entropy reduction varies spatially, allowing us to better understand how it shapes movement decisions in cognitive searches.
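The limitation of classical entropy noted above can be checked with a small example. The sketch below builds two hypothetical 4 × 4 maps with the same two categories in equal proportion, one clustered and one arranged as a checkerboard. The helper `same_neighbor_pairs` is only an illustrative adjacency count introduced here; it is not the spatial entropy measure of Altieri et al. [17].

```python
import math
from collections import Counter

def category_entropy(grid):
    """Shannon entropy of the category proportions; ignores cell positions."""
    counts = Counter(cell for row in grid for cell in row)
    total = sum(counts.values())
    return -sum((c / total) * math.log(c / total) for c in counts.values())

def same_neighbor_pairs(grid):
    """Count horizontally or vertically adjacent pairs sharing a category."""
    rows, cols = len(grid), len(grid[0])
    pairs = 0
    for r in range(rows):
        for c in range(cols):
            if c + 1 < cols and grid[r][c] == grid[r][c + 1]:
                pairs += 1
            if r + 1 < rows and grid[r][c] == grid[r + 1][c]:
                pairs += 1
    return pairs

# Same category proportions, very different spatial arrangement.
clustered = [["A", "A", "B", "B"] for _ in range(4)]
checkerboard = [["A" if (r + c) % 2 == 0 else "B" for c in range(4)]
                for r in range(4)]

h_clustered = category_entropy(clustered)
h_checkerboard = category_entropy(checkerboard)
```

Both maps have the same Shannon entropy, although the clustered map contains 20 like-neighbor pairs and the checkerboard none, which is the kind of spatial information a spatial entropy framework is meant to capture.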

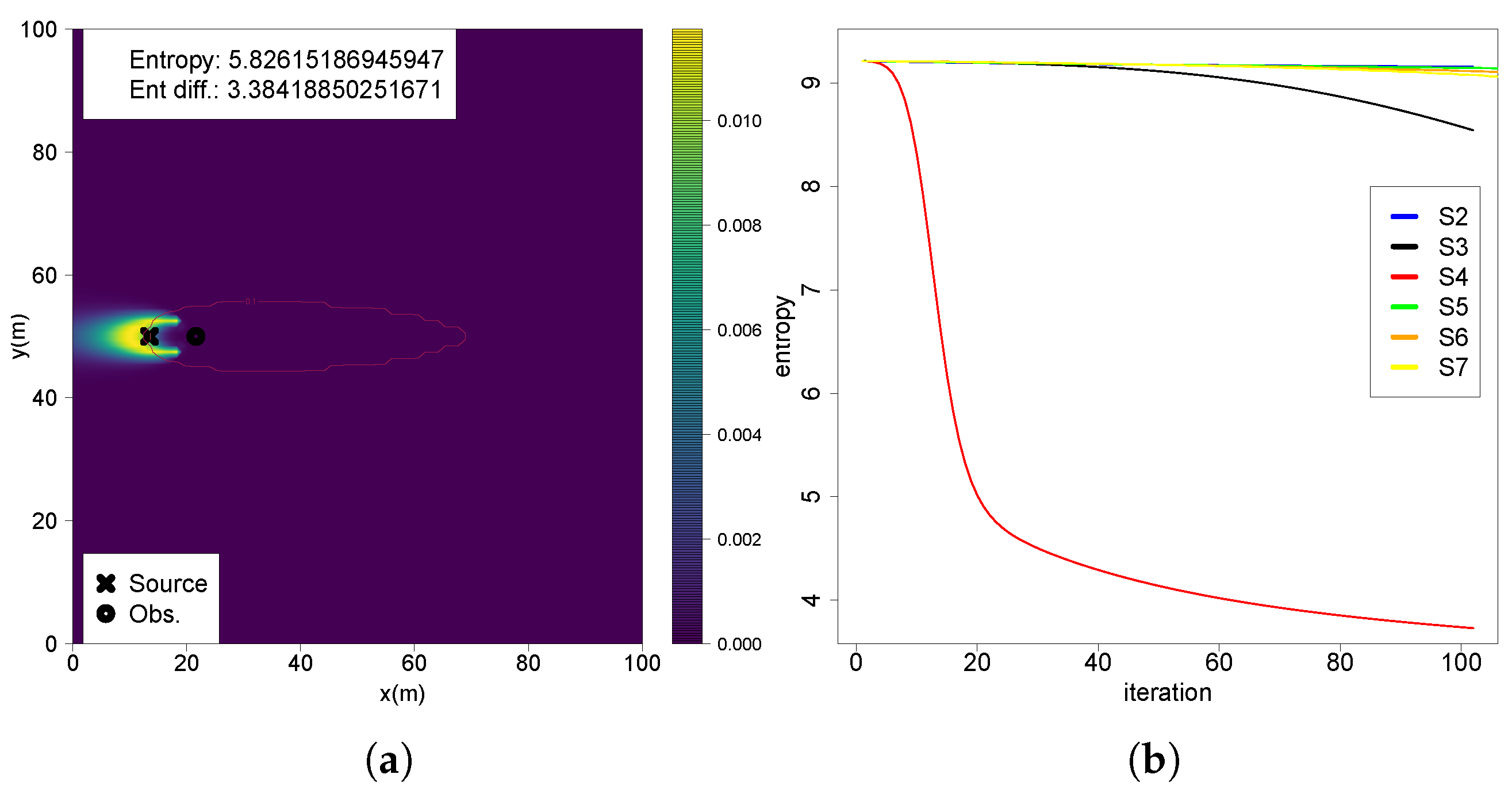

3.5. Evaluation Process

The belief is discretized using a grid resolution of m, while the entropy difference map is configured with a lower resolution of m. A circular region with a 2 m diameter centered on the source position is employed to avoid entropy reduction calculations on top of the source. The detection threshold is defined as 0.1 μg/m³. For a comprehensive analysis, real entropy reductions are computed in this study instead of the expected values; i.e., a single measurement is taken from the real plume model at each admissible action, assuming a probability of detection equal to 1.

Since spatial entropy measurement requires categorical data, the continuous entropy differences are classified into eight equal-width intervals between the minimum and maximum values (labeled “E1” to “E8”). From the literature, smaller co-occurrence distances are deemed most relevant; therefore, partial mutual information and partial residual entropy are computed for five distance intervals, namely , , , and , , where denotes the maximum diagonal distance of the square searching region. These distances support analysis on both local scales, as in cognitive decision-making, where the agent assesses nearby information gains, and global scales, which encompass the entire searching area. A significant number of testing scenarios are evaluated, with the prior belief updated as follows:

- (S1) Initial uniform belief with equal probability across all cells (). No observations are taken;

- (S2) A single observation taken far from the source, at , , below the detection threshold (Figure 2a);

- (S3) A single observation taken far from the source, at , , above the detection threshold with a concentration value of (Figure 2b);

- (S4) A single observation taken near the source, at , , above the detection threshold with a concentration value of (Figure 2c);

- (S5) A sequence of observations across the active region and far from the source , with three (S5.1), five (S5.2, Figure 2d) and ten (S5.3) observations;

- (S6) A sequence of observations with two crossings over the active region and far from the source, and ;

- (S7) A diagonal sequence of five observations across the active region, starting at , with an angle relative to the flow (Figure 2e);

- (S8) Measurements obtained across plume borders at a downflow distance (Figure 2f).
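The classification of continuous entropy differences into eight equal-width classes (E1 to E8), used as categorical input for the spatial entropy analysis, can be sketched as follows. The function name `classify_equal_width` and the sample values are illustrative assumptions; the study's own binning code is not given here.

```python
def classify_equal_width(values, n_classes=8):
    """Label each value E1..En by equal-width intervals between min and max."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # Degenerate case: all values identical, so a single class suffices.
        return ["E1"] * len(values)
    width = (hi - lo) / n_classes
    labels = []
    for v in values:
        # The maximum falls on the upper edge, so clamp it into the last class.
        idx = min(int((v - lo) / width), n_classes - 1)
        labels.append(f"E{idx + 1}")
    return labels

# Hypothetical entropy differences spanning the range [0, 8].
entropy_differences = [0.0, 1.0, 3.5, 8.0]
labels = classify_equal_width(entropy_differences)
```

Each interval is closed on the left and open on the right, except the last one, which also includes the maximum value.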

Figure 2. Measurement positions of the tested scenarios: (a) S2; (b) S3; (c) S4; (d) S5.2; (e) S7; (f) S8.

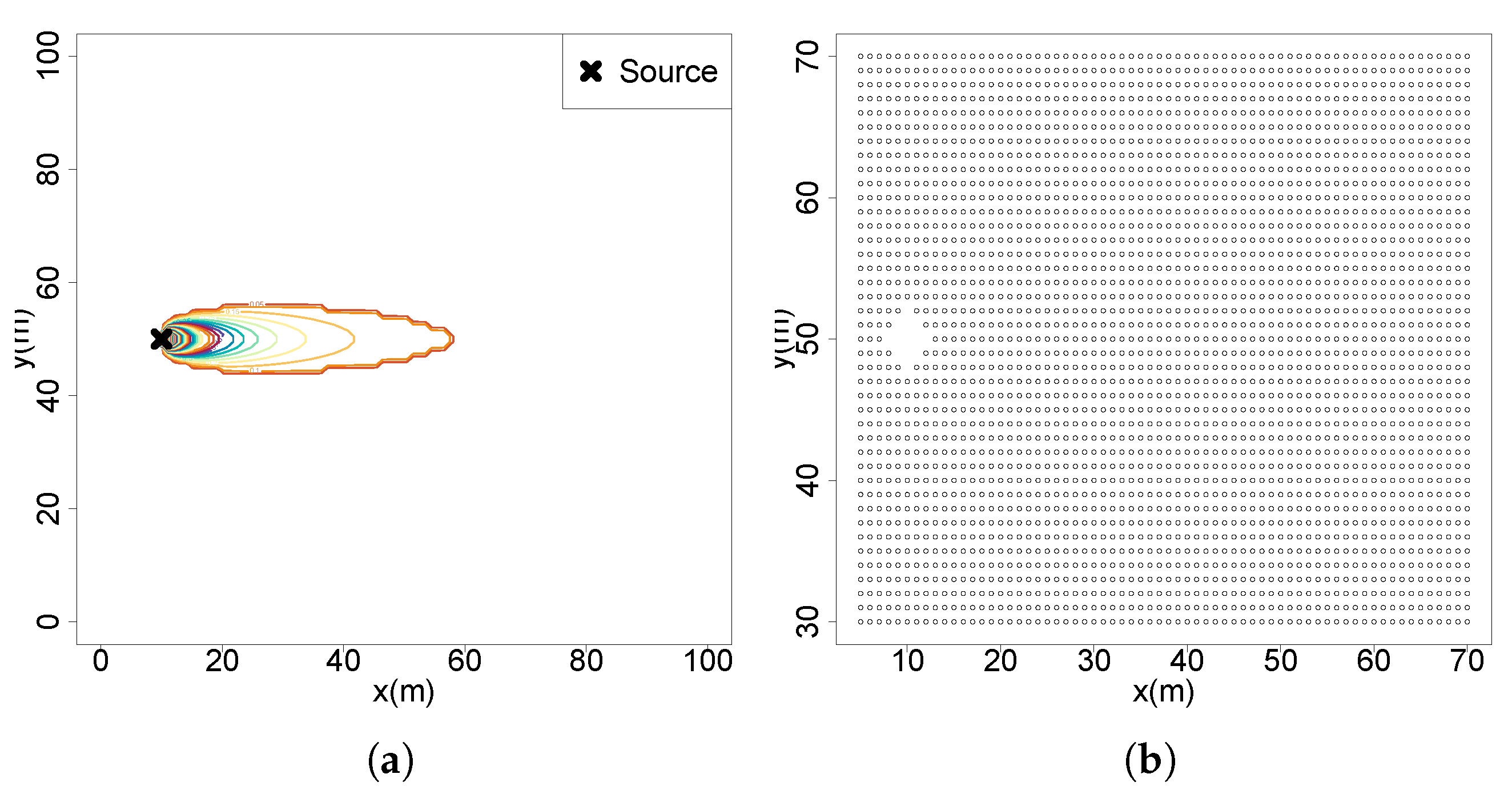

This study is performed in a simulated searching area of 100 m × 100 m, discretized into a 2D grid of

m, making a total of $N$ = 100,000 positions. The GPM is used to generate a plume with fixed parameters that is approximately

of the searching area with the flow moving in the direction of the x axis with a speed of

m/s, emission rate

Q of 30 g/s and dispersion parameters

and

equal to 0.5. The testing environment and the positions of future gains of information are shown in Figure 3a and Figure 3b, respectively. The simulations are implemented in the R programming language (version 4.5.1) and run on a computer equipped with a Ryzen 7 5700X processor, 32 GB of DDR4 RAM, and an Nvidia GTX 1660 graphics card.