Handwritten Digit Recognition with Flood Simulation and Topological Feature Extraction

Abstract

1. Introduction

2. Problem Formulation

3. Proposed Method—Directional Flood Feature Extraction Algorithm

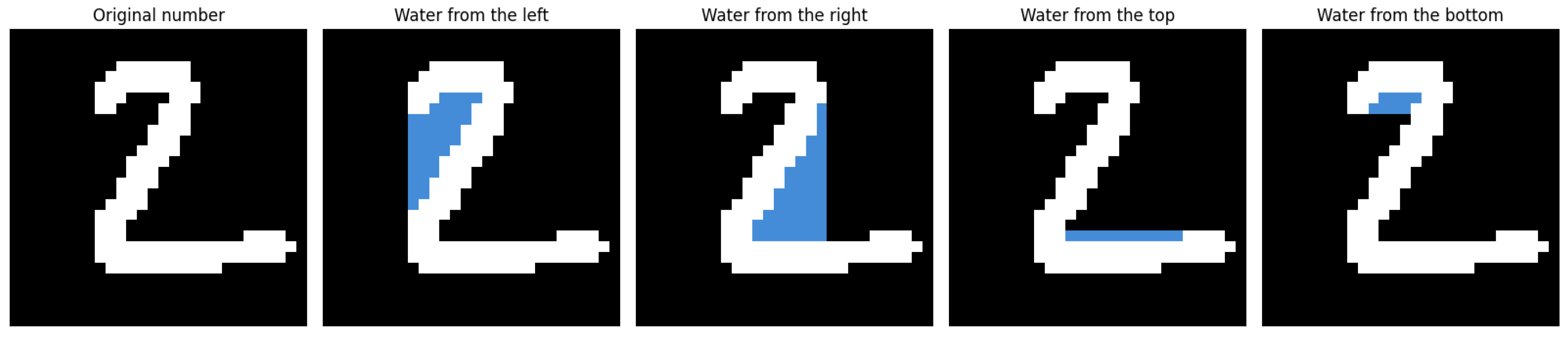

3.1. Directional Flood Simulation for Feature Extraction

3.1.1. Method Overview

3.1.2. Directional Flood Feature Extraction

3.1.3. Advantages of the Proposed Approach

- The flooding process mimics natural fluid behavior, effectively capturing topological structures such as loops (as in the digit ‘8’) and open gaps (as in ‘9’).

- Multi-directional flooding reduces sensitivity to rotation and minor distortions.

- The BFS-based implementation is computationally efficient with time complexity linear in the number of image pixels.

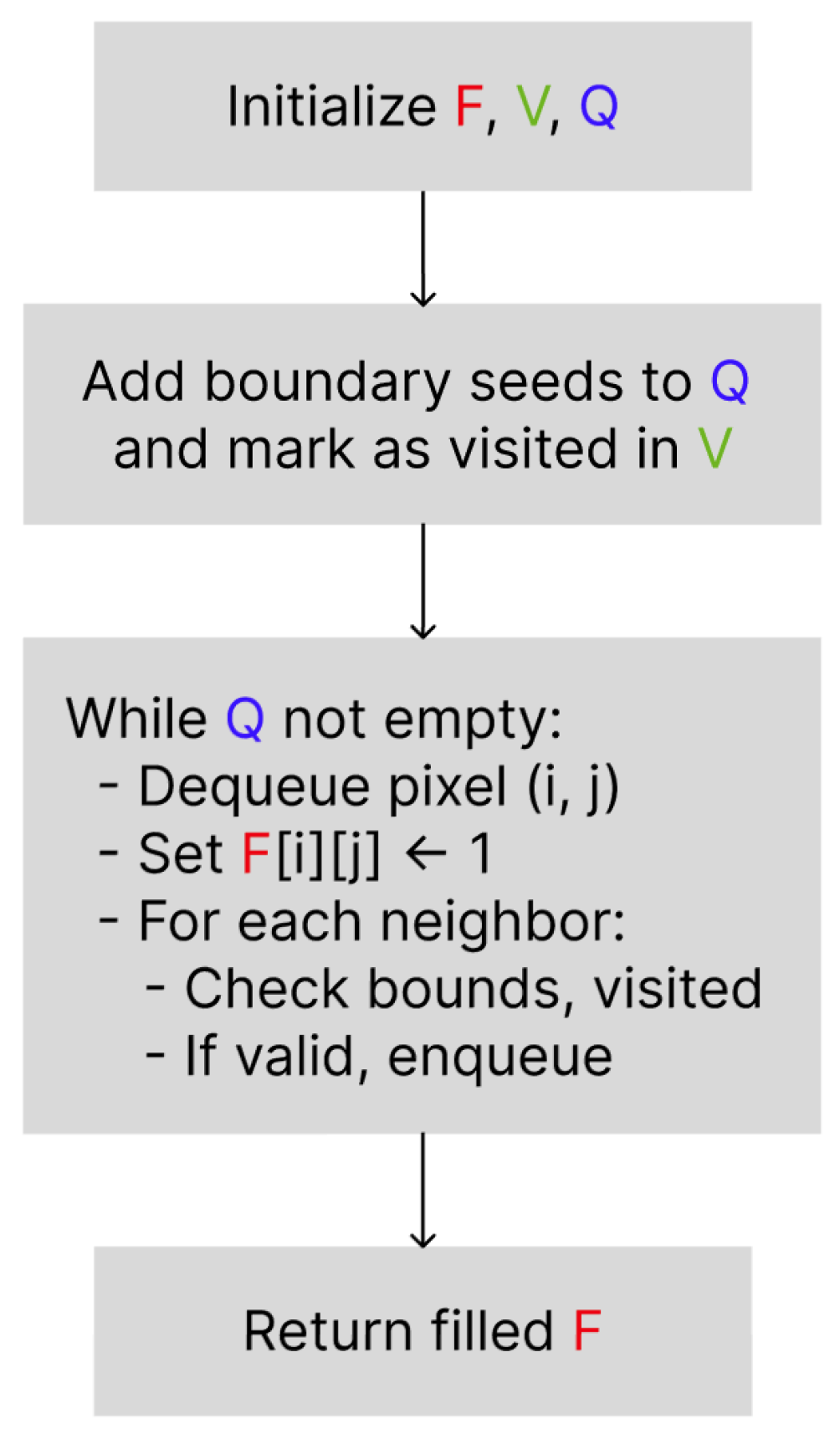

3.2. Directional Flood Feature Extraction Algorithm

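- Initialization:

  - $F \leftarrow M$ // copy of the original binary matrix $M$;

  - $Q \leftarrow$ queue of initial seed pixels;

  - $V \leftarrow$ visitation matrix (initialized to false).

- Seed Selection: All selected seed pixels are marked as visited in $V$.

- Flood Propagation:

  - (a) While $Q \neq \emptyset$:

    - i. Dequeue $(i, j)$ from $Q$;

    - ii. Mark $F_{i,j}$ as flooded // fill pixel

    - iii. Determine the neighboring positions $(i + \Delta i, j + \Delta j)$ permitted by the flood direction, where the offset $(\Delta i, \Delta j) = (0, 1)$ (right) is used for left-side flooding, $(0, -1)$ (left) for right-side flooding, etc.

    - iv. For each neighbor $(i', j')$, if all of the following apply:

      - $(i', j')$ is within bounds,

      - $M_{i', j'} = 0$ (the pixel is background, not part of the digit),

      - $V_{i', j'} = \text{false}$,

      - and (if no backtracking) the move is away from the origin side,

      then:

      - Enqueue $(i', j')$,

      - Mark $V_{i', j'} \leftarrow \text{true}$.

- Output: Return the filled matrix $F$.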
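The steps above can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the authors' implementation: the seeds are taken to be every background pixel on the origin border, the allowed moves are one forward step plus the two perpendicular steps (the backward step is added only when backtracking is enabled), flooded pixels are marked with the value `2`, and the names `directional_flood`, `FORWARD` and `WATER` are hypothetical.

```python
from collections import deque

# Hypothetical direction table: the "forward" step points away from the origin side.
FORWARD = {"left": (0, 1), "right": (0, -1), "top": (1, 0), "bottom": (-1, 0)}

WATER = 2  # assumed marker for flooded pixels (1 = digit stroke, 0 = dry background)


def directional_flood(m, side, backtracking=False):
    """Flood the background of binary matrix m (list of 0/1 rows) from one border.

    Assumptions: seeds are all background pixels on the chosen border; moves are
    forward or perpendicular; the backward move is allowed only if backtracking=True.
    """
    h, w = len(m), len(m[0])
    f = [row[:] for row in m]               # F <- copy of M
    v = [[False] * w for _ in range(h)]     # V <- all false
    di, dj = FORWARD[side]
    offsets = [(di, dj), (dj, di), (-dj, -di)]  # forward + two perpendicular moves
    if backtracking:
        offsets.append((-di, -dj))              # step back toward the origin side

    # Seed selection: background pixels on the origin border, marked visited.
    if side in ("left", "right"):
        col = 0 if side == "left" else w - 1
        border = [(i, col) for i in range(h)]
    else:
        row = 0 if side == "top" else h - 1
        border = [(row, j) for j in range(w)]
    q = deque()
    for i, j in border:
        if m[i][j] == 0:
            v[i][j] = True
            q.append((i, j))

    # Flood propagation (BFS): each pixel is enqueued at most once.
    while q:
        i, j = q.popleft()
        f[i][j] = WATER
        for oi, oj in offsets:
            ni, nj = i + oi, j + oj
            if 0 <= ni < h and 0 <= nj < w and m[ni][nj] == 0 and not v[ni][nj]:
                v[ni][nj] = True
                q.append((ni, nj))
    return f


# A cup-shaped stroke whose pocket opens to the right.
cup = [[0] * 5, [0, 1, 1, 1, 0], [0, 1, 0, 0, 0], [0, 1, 1, 1, 0], [0] * 5]
print(directional_flood(cup, "left")[2])                     # [2, 1, 0, 0, 2]
print(directional_flood(cup, "left", backtracking=True)[2])  # [2, 1, 2, 2, 2]
```

Without backtracking, water entering from the left cannot turn back into the right-facing pocket, so the pocket stays dry; this asymmetry between sides is what makes per-direction flood profiles informative. Because each pixel is enqueued at most once and has at most four neighbors, the loop runs in time linear in the number of pixels.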

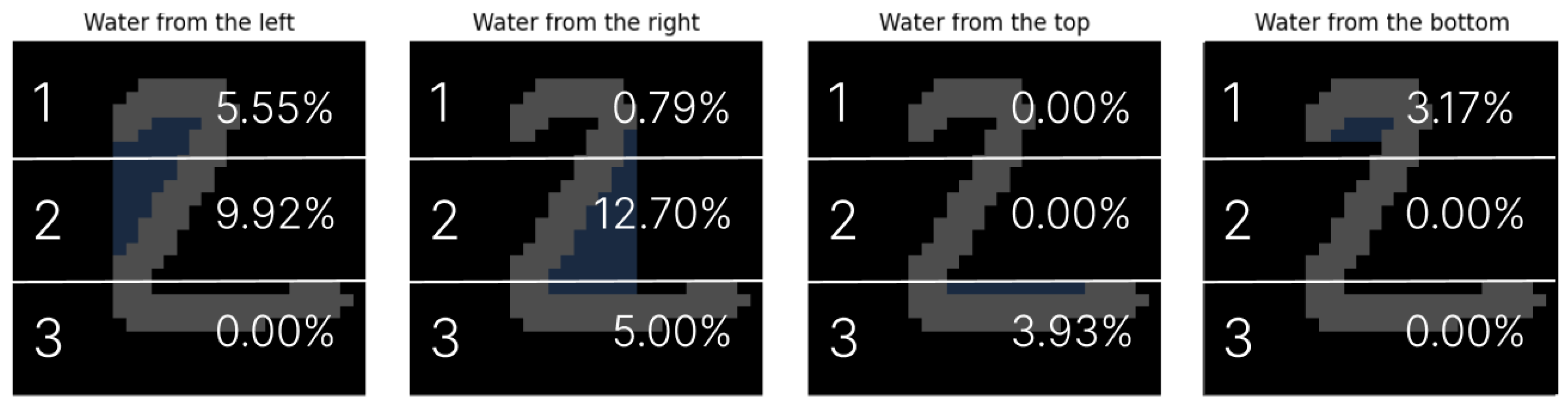

3.3. Segmentation

3.4. Extension of the Method for Digits with Enclosed Regions

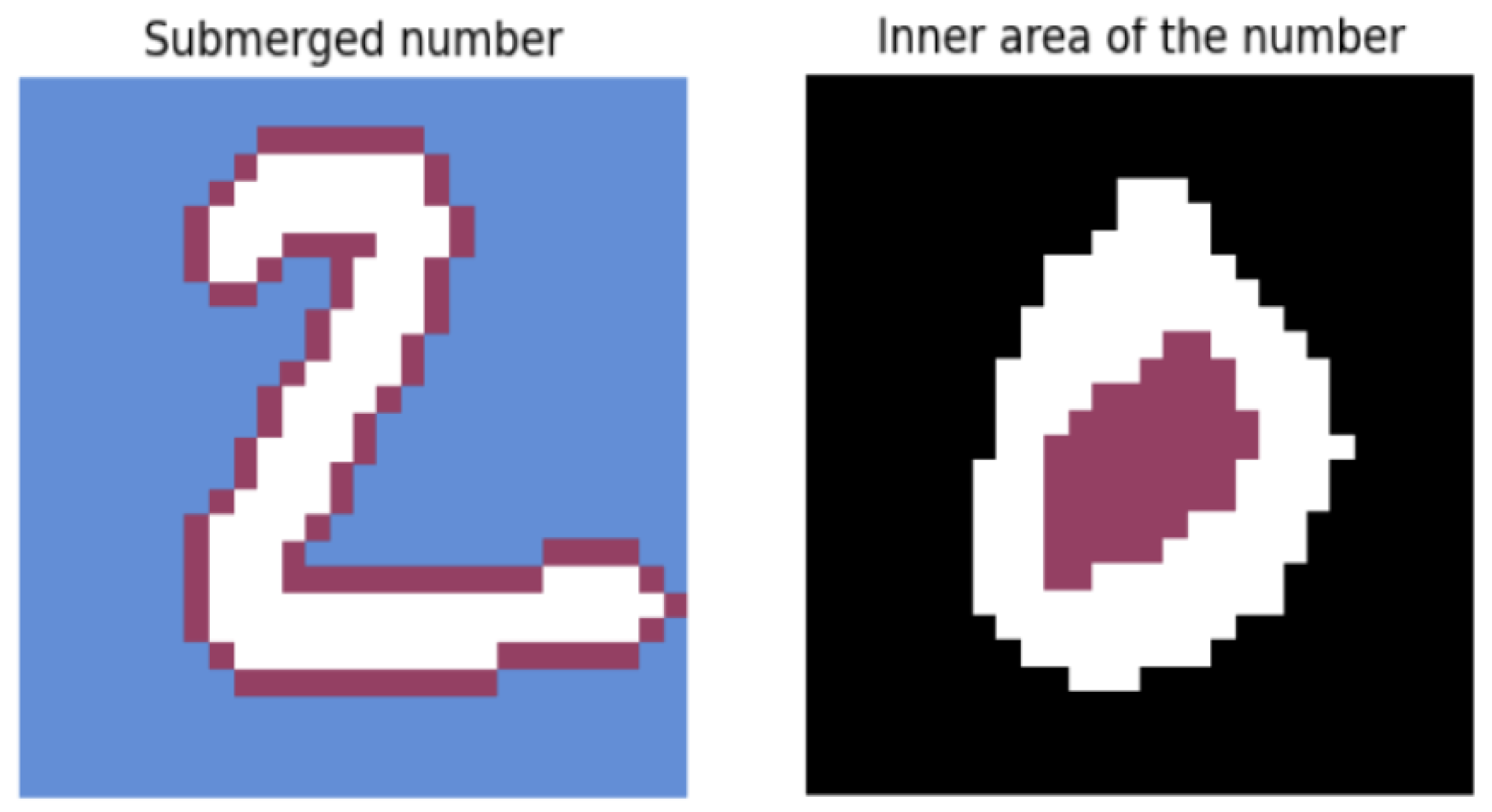

- Inner Area Simulation: To detect the internal regions of the digit, an additional iteration of the BFS algorithm was performed with backward propagation enabled. This simulates water being poured inside the enclosed components of the digit. A graphical representation of this concept is provided in Figure 4. The resulting flooded region is divided into N horizontal segments, and the percentage of filled pixels is calculated for each segment.

- Digit Perimeter Estimation: The second parameter quantifies the degree to which the digit is surrounded by water. This is achieved by simulating the digit’s immersion in a water basin and computing its perimeter. The process is formalized as follows. Let $M \in \{0,1\}^{H \times W}$ be the binary matrix representing the digit, and let $E \in \{0,1\}^{H \times W}$ represent the enclosed regions identified in the previous step. The combined matrix $C$ is defined as

$$C_{i,j} = \max\left(M_{i,j},\, E_{i,j}\right),$$

and the normalized perimeter $P$ is computed as

$$P = \frac{1}{H \cdot W} \sum_{i=1}^{H} \sum_{j=1}^{W} C_{i,j} \sum_{(k,l) \in \mathcal{N}(i,j)} \mathbb{1}\left[C_{k,l} = 0\right],$$

where the following apply:

- $\mathcal{N}(i,j)$ denotes the set of 4-connected neighbors of $(i,j)$ (excluding out-of-bounds positions),

- $\mathbb{1}[\cdot]$ is the indicator function, which is equal to 1 if the condition is satisfied and 0 otherwise,

- the result is normalized by the total number of pixels in the matrix, $H \cdot W$.

A graphical representation of this concept is provided in Figure 4.

- Segmented Pixel Density: As the third feature, the original binary digit matrix is divided into N horizontal segments. In each segment, the number of digit pixels is counted and normalized by the total number of pixels in that segment, yielding a measure of pixel density.
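The segment-based features and the normalized perimeter $P$ can be sketched as follows, assuming the matrices are lists of 0/1 rows and that `e` already marks the enclosed pixels found by the inner-area simulation. The helper names `segment_fractions` and `normalized_perimeter` are hypothetical, and giving the first `H mod N` segments one extra row is an assumption, since the text does not say how uneven splits are handled.

```python
def segment_fractions(mat, n, value=1):
    """Split the rows of mat into n horizontal segments and return, for each
    segment, the fraction of its cells equal to `value`."""
    h = len(mat)
    base, extra = divmod(h, n)  # assumed split: the first `extra` segments get one extra row
    fractions, start = [], 0
    for k in range(n):
        size = base + (1 if k < extra else 0)
        cells = [x for row in mat[start:start + size] for x in row]
        start += size
        fractions.append(sum(x == value for x in cells) / len(cells) if cells else 0.0)
    return fractions


def normalized_perimeter(m, e):
    """P: number of (pixel in C, in-bounds 4-neighbour outside C) pairs divided
    by H * W, where C = max(M, E) combines the digit and its enclosed regions."""
    h, w = len(m), len(m[0])
    c = [[max(m[i][j], e[i][j]) for j in range(w)] for i in range(h)]
    edges = 0
    for i in range(h):
        for j in range(w):
            if c[i][j] == 1:
                for di, dj in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ni, nj = i + di, j + dj
                    # Out-of-bounds neighbours are skipped, as in the definition of N(i, j).
                    if 0 <= ni < h and 0 <= nj < w and c[ni][nj] == 0:
                        edges += 1
    return edges / (h * w)


ring = [[0] * 5, [0, 1, 1, 1, 0], [0, 1, 0, 1, 0], [0, 1, 1, 1, 0], [0] * 5]
hole = [[1 if (i, j) == (2, 2) else 0 for j in range(5)] for i in range(5)]
print(segment_fractions(ring, 5))        # [0.0, 0.6, 0.4, 0.6, 0.0]
print(normalized_perimeter(ring, hole))  # 0.48 (12 water-facing edges / 25 pixels)
```

The same helper serves two features in this sketch: applied to `e` it gives the per-segment share of enclosed (filled) pixels, and applied to `m` it gives the segmented pixel density. Filling the hole before measuring the perimeter means an enclosed loop contributes only its outer boundary, which is why the ring above yields 12 edges rather than 16.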

3.5. Classification Phase

3.5.1. Hierarchical Partitioning via Binary Trees

3.5.2. Ensemble Forest Construction

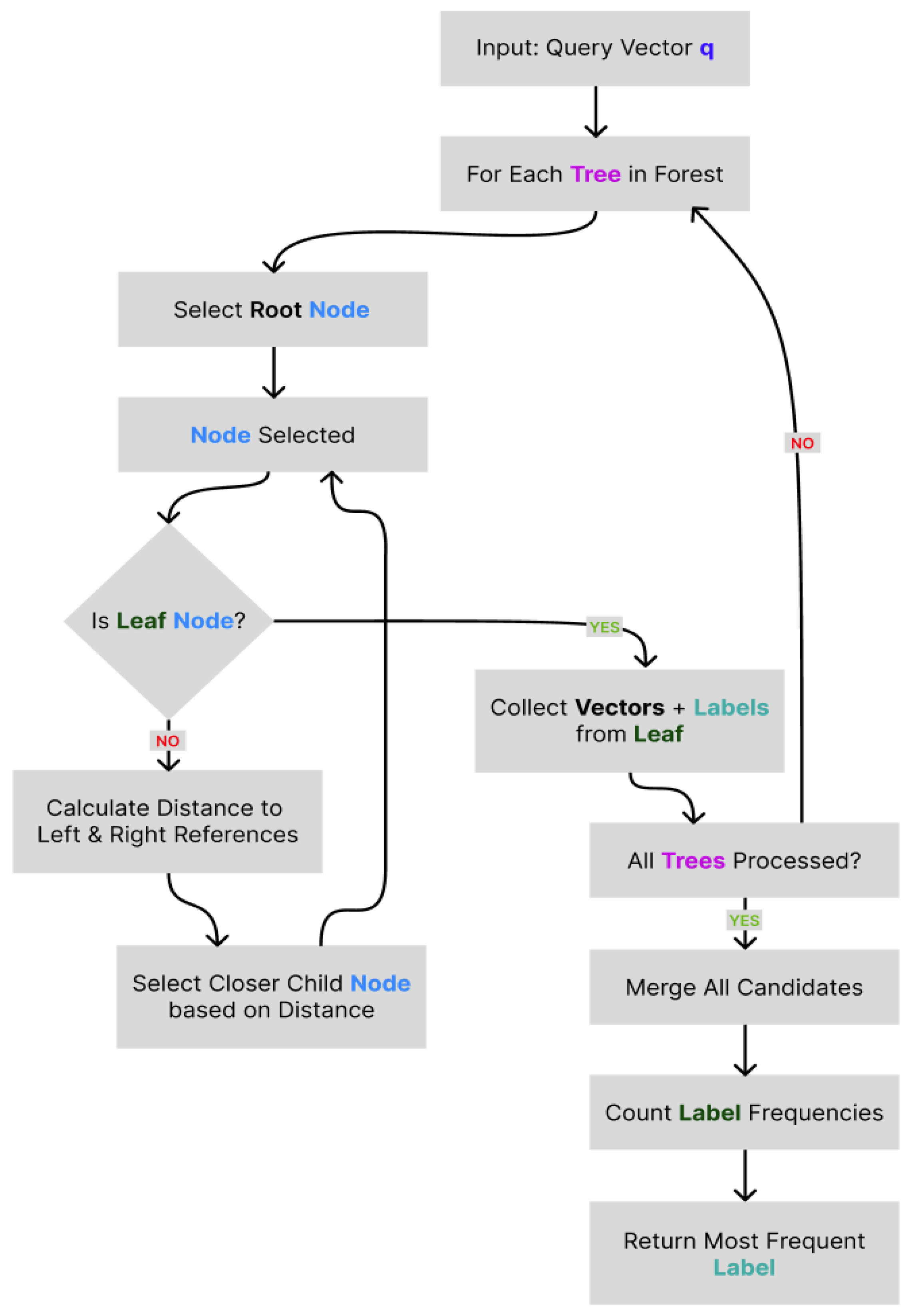

3.5.3. Querying Process

3.5.4. Label Assignment via Majority Voting

4. Results

4.1. Methodology

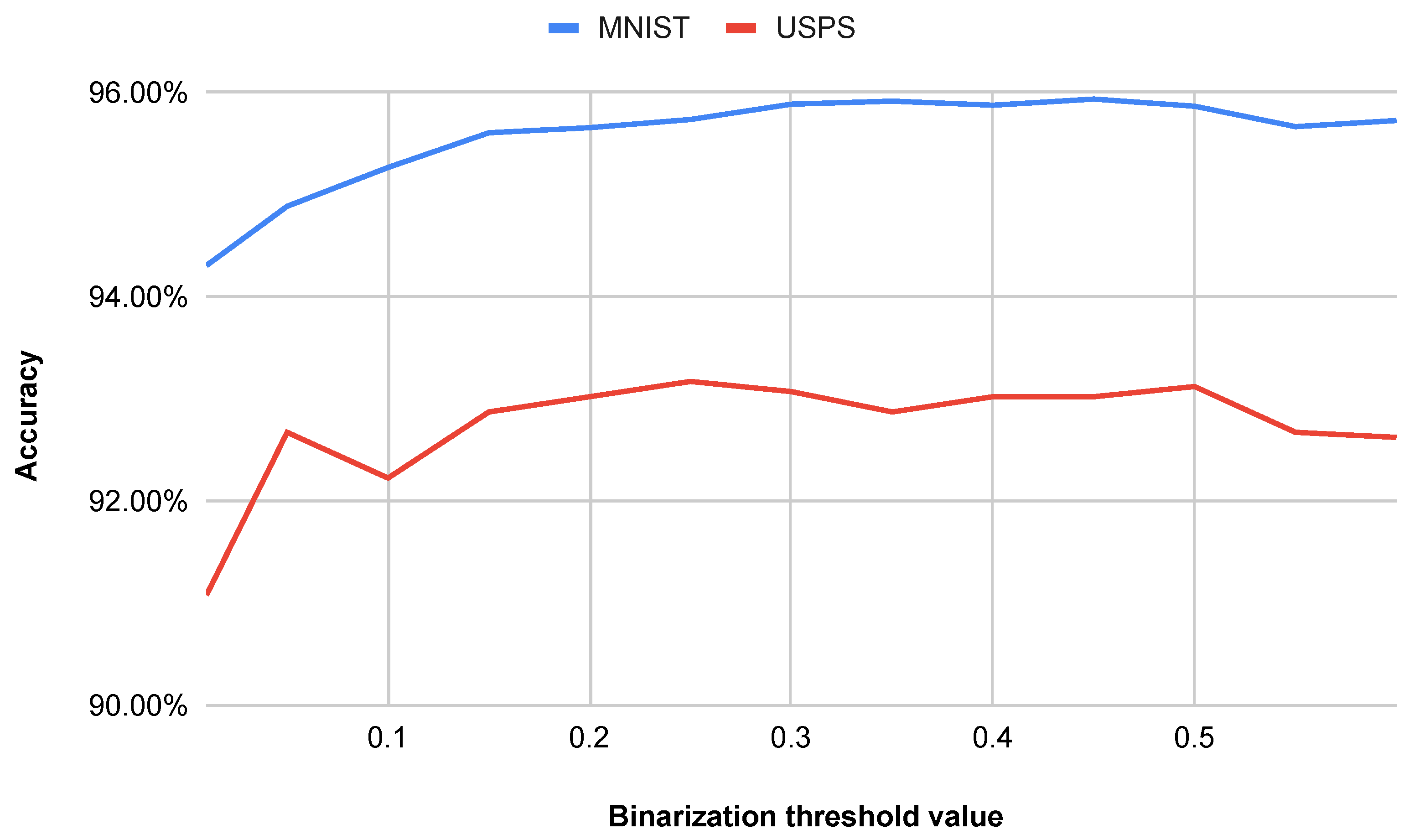

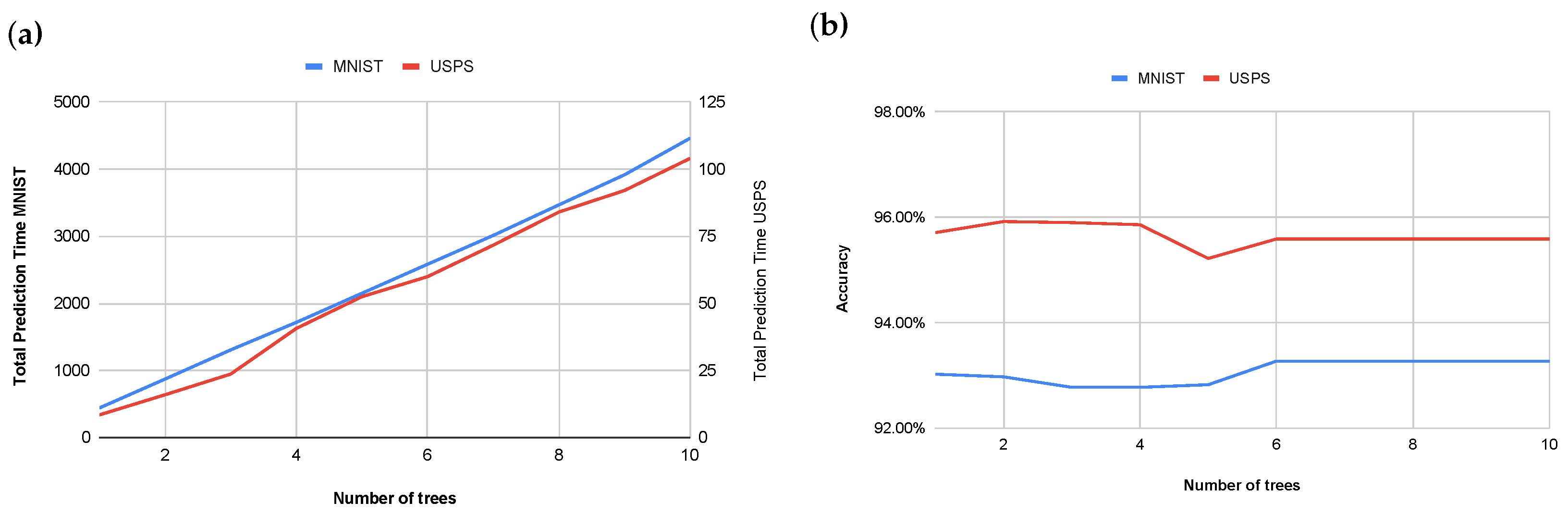

4.2. Performance Comparison Across Initial Parameters

4.3. Benchmarking Against k-NN

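- Dimensionality reduction with minimal accuracy loss (relative to the best k-NN accuracy in each table, 97.3% on MNIST and 94.5% on USPS):

- For N = 5, our method reduces the feature vector length from 784 (k-NN) to 31 (a 96.0% reduction), while the accuracy decreases by only 2.4 percentage points on MNIST and 2.6 on USPS.

- For N = 7, the vector length is reduced to 43 (a 94.5% reduction) with an accuracy drop of 1.4 percentage points on MNIST and 1.5 on USPS.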
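The reduction and accuracy-drop figures can be recomputed from the comparison tables. This sketch assumes that "best k-NN accuracy" means the highest k-NN accuracy reported for each dataset (97.3% on MNIST, 94.5% on USPS); the constant and function names are illustrative.

```python
FULL_LENGTH = 784  # k-NN vector length reported in both tables

# (N, vector length, MNIST accuracy %, USPS accuracy %) for our method, from the tables.
OURS = [(5, 31, 94.9, 91.9), (7, 43, 95.9, 93.0), (9, 55, 96.4, 93.3)]
BEST_KNN = {"MNIST": 97.3, "USPS": 94.5}  # assumed: best k-NN accuracy per dataset


def summarize():
    """Return (N, % length reduction, MNIST drop in pp, USPS drop in pp) per setting."""
    rows = []
    for n, length, acc_mnist, acc_usps in OURS:
        reduction = 100 * (1 - length / FULL_LENGTH)
        rows.append((n, round(reduction, 1),
                     round(BEST_KNN["MNIST"] - acc_mnist, 1),
                     round(BEST_KNN["USPS"] - acc_usps, 1)))
    return rows


for n, red, d_mnist, d_usps in summarize():
    print(f"N = {n}: length reduced by {red}%, accuracy drop "
          f"{d_mnist} pp (MNIST), {d_usps} pp (USPS)")
```

Rounding to one decimal is applied only for reporting; the percentage-point differences are taken directly from the tabulated accuracies.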

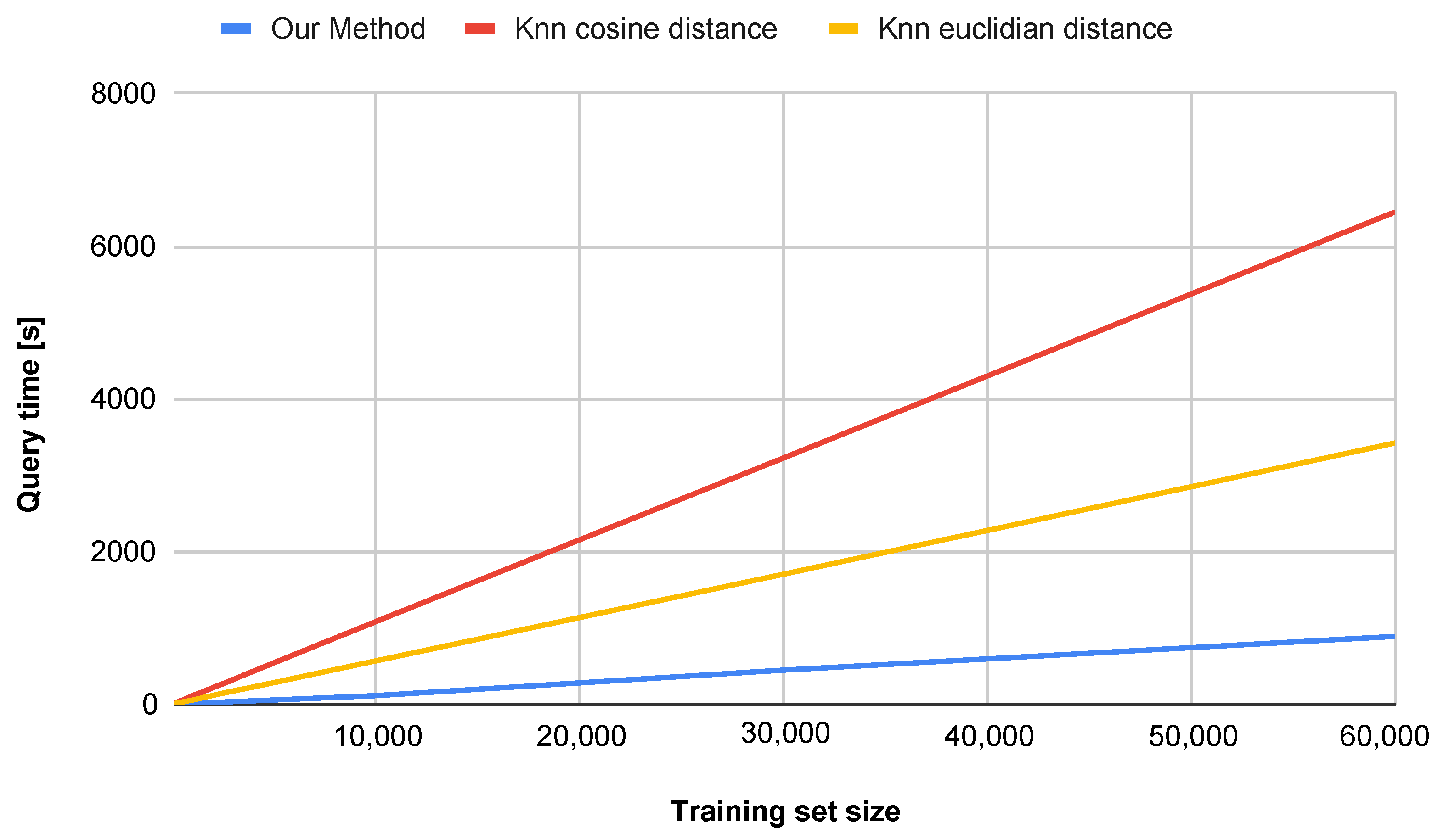

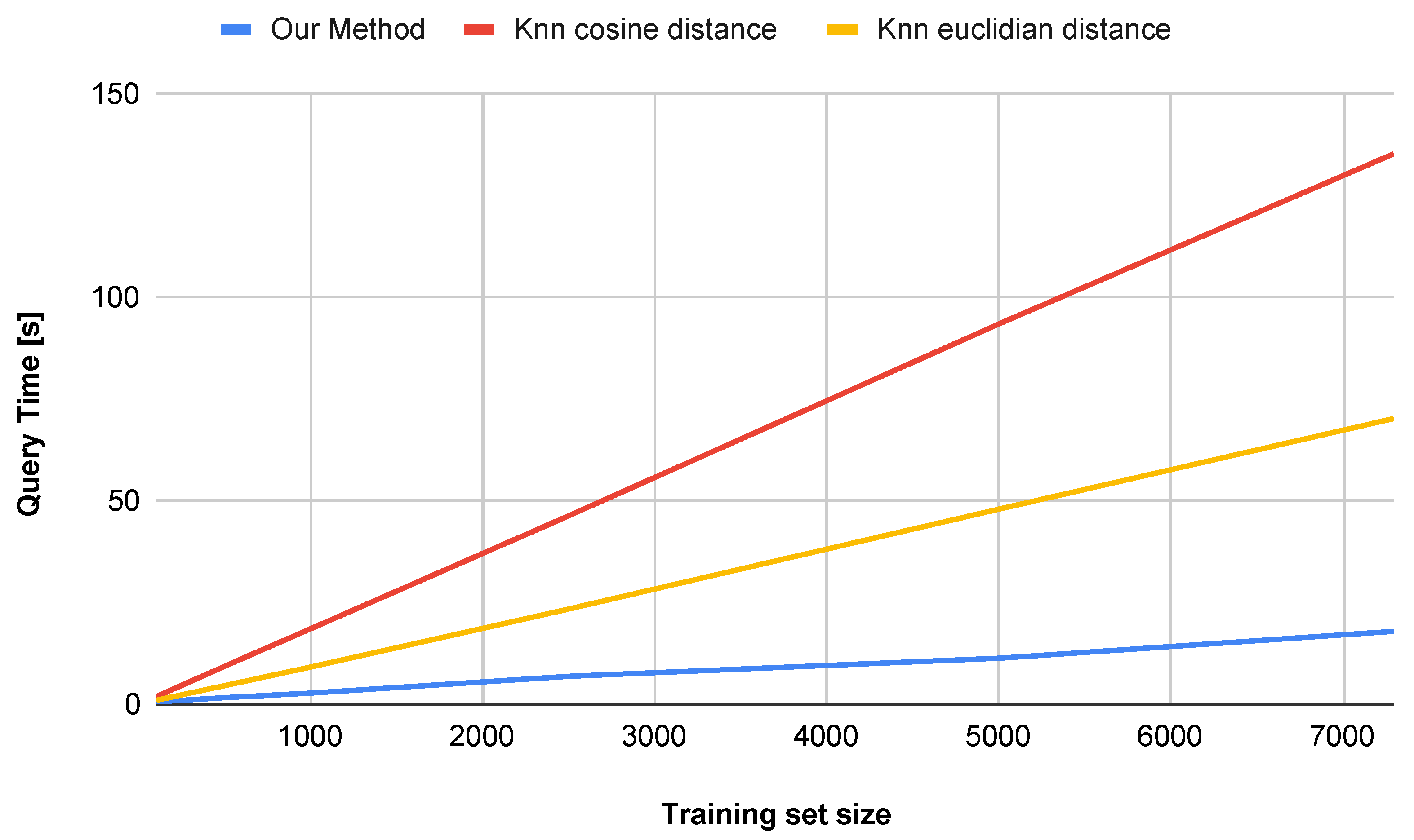

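- Substantial computational efficiency (query time):

- Compared to k-NN (Euclidean), our approach is roughly 3.5 to 4.3 times faster on the MNIST dataset (800.26–989.17 s vs. 3422.08–3447.05 s) and 3.8 to 4.7 times faster on the USPS dataset (14.92–18.09 s vs. 69.64–70.34 s).

- Compared to k-NN (Cosine), our approach is roughly 6.4 to 8.1 times faster on the MNIST dataset (800.26–989.17 s vs. 6284.65–6448.41 s) and 7.3 to 9.1 times faster on the USPS dataset (14.92–18.09 s vs. 132.74–135.32 s).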
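The speedup factors follow from the tabulated query times. Because the pairing of configurations (which N against which K) is not stated, this sketch reports the smallest and largest ratio over all configurations of each method; `QUERY_TIMES` and `speedup_range` are illustrative names.

```python
# Query times in seconds, copied from the comparison tables
# ("ours": N = 5, 7, 9; k-NN rows: K = 3, 5, 7).
QUERY_TIMES = {
    "MNIST": {"ours": [800.26, 888.53, 989.17],
              "euclidean": [3422.08, 3442.98, 3447.05],
              "cosine": [6448.41, 6314.58, 6284.65]},
    "USPS": {"ours": [14.92, 15.99, 18.09],
             "euclidean": [70.22, 70.34, 69.64],
             "cosine": [135.32, 132.74, 135.19]},
}


def speedup_range(dataset, baseline):
    """Smallest and largest ratio baseline_time / our_time over all configurations."""
    ours = QUERY_TIMES[dataset]["ours"]
    base = QUERY_TIMES[dataset][baseline]
    return min(base) / max(ours), max(base) / min(ours)


for dataset in QUERY_TIMES:
    for baseline in ("euclidean", "cosine"):
        lo, hi = speedup_range(dataset, baseline)
        print(f"{dataset}, k-NN {baseline}: {lo:.1f}x to {hi:.1f}x faster")
```

Only query times are compared; the training times of our method (under 8 s on MNIST and under 1 s on USPS) are small relative to the query times and are not included in the ratios.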

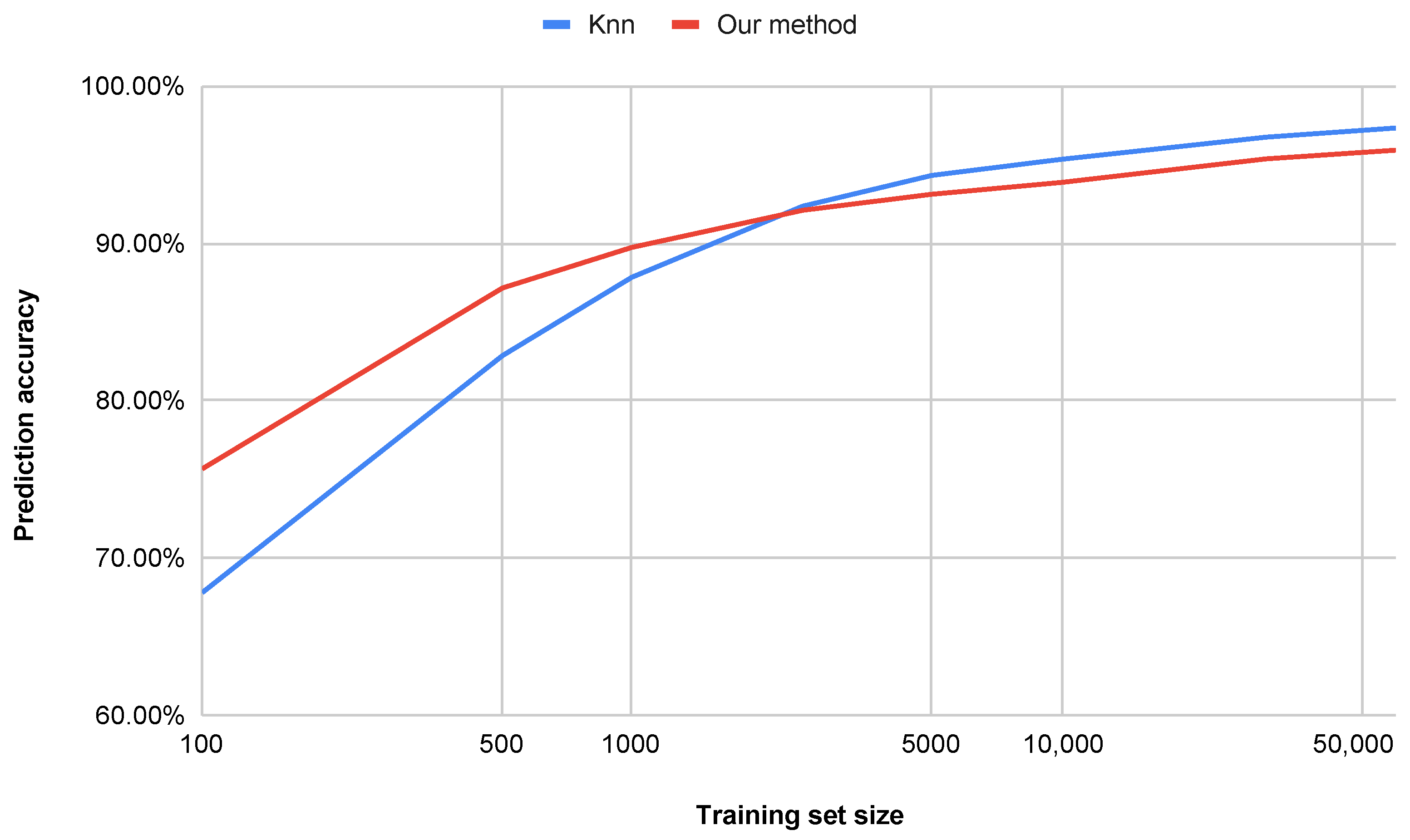

- Accuracy vs. Dataset Size (Figure 8):

- The proposed method (water simulation) maintains consistently high accuracy, even with small training sets (e.g., achieving strong performance with only 500 samples), whereas the k-NN method requires more than 1100 samples to reach comparable accuracy.

- With a training set of 100 samples, our approach outperforms k-NN by percentage points, indicating superior generalization capability in low-data regimes.

- Our method demonstrates sub-linear runtime scaling as the dataset size increases, in contrast to the k-NN method (both Euclidean and Cosine similarity variants), which exhibits near-quadratic growth.

- At 10,000 samples, our method achieves a runtime reduction of compared to k-NN with Cosine similarity and compared to k-NN with Euclidean distance.

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


Our Method and k-NN Comparison on MNIST Dataset

| Method | Vec. Length | Train (s) | Query (s) | Acc. (%) |
|---|---|---|---|---|
| Our Method (N = 5) | 31 | 6.32 | 800.26 | 94.9 |
| Our Method (N = 7) | 43 | 6.69 | 888.53 | 95.9 |
| Our Method (N = 9) | 55 | 7.28 | 989.17 | 96.4 |
| k-NN Euclidean (K = 3) | 784 | n/a | 3422.08 | 97.1 |
| k-NN Euclidean (K = 5) | 784 | n/a | 3442.98 | 96.9 |
| k-NN Euclidean (K = 7) | 784 | n/a | 3447.05 | 96.9 |
| k-NN Cosine (K = 3) | 784 | n/a | 6448.41 | 97.3 |
| k-NN Cosine (K = 5) | 784 | n/a | 6314.58 | 97.3 |
| k-NN Cosine (K = 7) | 784 | n/a | 6284.65 | 97.3 |

Our Method and k-NN Comparison on USPS Dataset

| Method | Vec. Length | Train (s) | Query (s) | Acc. (%) |
|---|---|---|---|---|
| Our Method (N = 5) | 31 | 0.72 | 14.92 | 91.9 |
| Our Method (N = 7) | 43 | 0.73 | 15.99 | 93.0 |
| Our Method (N = 9) | 55 | 0.76 | 18.09 | 93.3 |
| k-NN Euclidean (K = 3) | 784 | n/a | 70.22 | 94.5 |
| k-NN Euclidean (K = 5) | 784 | n/a | 70.34 | 94.5 |
| k-NN Euclidean (K = 7) | 784 | n/a | 69.64 | 94.2 |
| k-NN Cosine (K = 3) | 784 | n/a | 135.32 | 94.2 |
| k-NN Cosine (K = 5) | 784 | n/a | 132.74 | 94.2 |
| k-NN Cosine (K = 7) | 784 | n/a | 135.19 | 93.8 |

| Actual \ Predicted | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 |
|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 971 | 0 | 1 | 0 | 2 | 1 | 2 | 3 | 0 | 0 |
| 1 | 0 | 1130 | 2 | 1 | 0 | 0 | 2 | 0 | 0 | 0 |
| 2 | 4 | 1 | 996 | 2 | 7 | 2 | 10 | 9 | 1 | 0 |
| 3 | 0 | 2 | 7 | 958 | 0 | 26 | 0 | 15 | 2 | 0 |
| 4 | 1 | 2 | 0 | 0 | 948 | 0 | 7 | 0 | 0 | 24 |
| 5 | 3 | 8 | 2 | 20 | 0 | 837 | 8 | 5 | 3 | 6 |
| 6 | 5 | 8 | 1 | 0 | 7 | 1 | 934 | 0 | 1 | 1 |
| 7 | 0 | 9 | 14 | 4 | 2 | 2 | 0 | 995 | 0 | 2 |
| 8 | 14 | 4 | 4 | 4 | 9 | 2 | 10 | 3 | 916 | 8 |
| 9 | 6 | 9 | 0 | 4 | 19 | 2 | 0 | 13 | 10 | 946 |

| Actual \ Predicted | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 |
|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 352 | 0 | 3 | 1 | 1 | 0 | 1 | 1 | 0 | 0 |
| 1 | 0 | 258 | 0 | 0 | 2 | 0 | 3 | 0 | 0 | 1 |
| 2 | 5 | 1 | 183 | 2 | 5 | 0 | 1 | 1 | 0 | 0 |
| 3 | 2 | 0 | 5 | 148 | 0 | 10 | 0 | 0 | 1 | 0 |
| 4 | 3 | 6 | 2 | 0 | 180 | 0 | 1 | 0 | 0 | 8 |
| 5 | 5 | 0 | 2 | 5 | 0 | 140 | 1 | 0 | 4 | 3 |
| 6 | 2 | 0 | 1 | 0 | 3 | 0 | 164 | 0 | 0 | 0 |
| 7 | 0 | 1 | 2 | 0 | 5 | 0 | 0 | 136 | 0 | 3 |
| 8 | 7 | 3 | 0 | 0 | 1 | 1 | 3 | 1 | 143 | 7 |
| 9 | 1 | 0 | 1 | 0 | 3 | 0 | 0 | 1 | 2 | 169 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Brociek, R.; Pleszczyński, M.; Błaszczyk, J.; Czaicki, M.; Napoli, C. Handwritten Digit Recognition with Flood Simulation and Topological Feature Extraction. Entropy 2025, 27, 1218. https://doi.org/10.3390/e27121218
