1. Introduction

Since the advent of the theoretical description of classical and quantum phase transitions (QPTs), long-range interactions between degrees of freedom challenged the established concepts and propelled the development of new ideas in the field [

1,

2,

3,

4,

5]. It is remarkable that, only a few years after the introduction of the renormalisation group (RG) theory by K.G. Wilson in 1971 as a tool to study phase transitions and as an explanation for universality classes [

6,

7,

8,

9,

10,

11], it was used to investigate ordering phase transitions with long-range interactions. These studies found that the criticality depends on the decay strength of the interaction [

1,

2,

3]. It then took two decades to develop numerical Monte Carlo (MC) tools capable of simulating basic magnetic long-range models with thermal phase transitions following the behaviour predicted by the RG theory [

12,

13]. The results of these simulations sparked a renewed interest in finite-size scaling above the upper critical dimension [

12,

14,

15,

16,

17,

18,

19] since “hyperscaling is violated” [

13] for long-range interactions that decay slowly enough. In this regime, the treatment of dangerous irrelevant variables (DIVs) in the scaling forms is required to extract critical exponents from finite systems.

Meanwhile, a similar historic development took place regarding the study of QPTs under the influence of long-range interactions. By virtue of pioneering RG studies [

20,

21], the numerical investigation of long-range interacting magnetic systems has been triggered [

22,

23,

24,

25,

26,

27,

28,

29,

30,

31,

32,

33,

34,

35,

36,

37,

38]. In particular, Monte Carlo-based techniques became a popular tool to gain quantitative insight into these long-range interacting quantum magnets [

22,

25,

26,

29,

30,

31,

32,

33,

34,

35,

36,

37,

38,

39,

40]. On the one hand, this includes high-order series expansion techniques, where classical Monte Carlo integration is applied for the graph embedding scheme, allowing extracting energies and observables in the thermodynamic limit [

25,

29]. On the other hand, there is stochastic series expansion quantum Monte Carlo [

39], which enables calculations on large finite systems. To determine the physical properties of the infinite system, finite-size scaling is performed with the results of these computations. Inspired by the recent developments for classical phase transitions [

15,

16,

17,

18,

19,

41], a theory for finite-size scaling above the upper critical dimension for QPTs was introduced [

32,

34].

When investigating algebraically decaying long-range interactions

with the distance

r and the dimension

d of the system, there are two distinct regimes: one for

(strong long-range interaction) and another one for

(weak long-range interaction) [

5,

42,

43,

44,

45]. In the case of strong long-range interactions, common definitions of internal energy and entropy in the thermodynamic limit are not applicable and standard thermodynamics breaks down [

5,

42,

43,

44,

45]. We will not focus on this regime in this review. For details specific to strong long-range interactions, we refer to other review articles such as Refs. [

5,

42,

43,

44,

45]. For the sake of this work, we restrict the discussion to weak long-range interaction or competing antiferromagnetic strong long-range interactions, for which an extensive ground-state energy can be defined without rescaling of the coupling constant [

5].

The interest in quantum magnets with long-range interactions is further fuelled by the relevance of these models in state-of-the-art quantum–optical platforms [

5,

46,

47,

48,

49,

50,

51,

52,

53,

54,

55,

56,

57,

58,

59,

60,

61,

62,

63,

64,

65,

66,

67,

68,

69,

70,

71,

72,

73,

74,

75,

76,

77,

78,

79,

80,

81,

82,

83,

84,

85,

86,

87]. To realise long-range interacting quantum lattice models with a tunable algebraic decay exponent, one can use trapped ions, which are coupled off-resonantly to motional degrees of freedom [

5,

81,

82,

83,

84,

85,

88]. Another possibility is to couple trapped neutral atoms to photonic modes of a cavity [

5,

86,

87]. Alternatively, one can realise long-range interactions decaying with a fixed algebraic decay exponent of six or three using Rydberg atom quantum simulators [

46,

47,

48,

49,

50,

51,

52,

53,

54,

55] or ultracold dipolar quantum atomic or molecular gases in optical lattices [

56,

57,

58,

59,

60,

61,

62,

63,

64,

65,

66,

67,

68,

69,

70,

71,

72,

73]. Note that, in many of the above-listed cases, it is possible to map the long-range interacting atomic degrees of freedom onto quantum spin models [

5,

52,

89]. Therefore, they can be exploited as analogue quantum simulators for long-range interacting quantum magnets, and the relevance of the theoretical concepts transcends the boundary between the fields.

From the perspective of condensed matter physics, there are multiple materials with relevant long-range interactions [

90,

91,

92,

93,

94,

95,

96,

97,

98,

99,

100,

101,

102,

103,

104,

105,

106]. The compound LiHoF

4 in an external field realises an Ising magnet in a transverse magnetic field [

102,

103,

104,

105]. A recent experiment with the two-dimensional Heisenberg ferromagnet Fe

3GeTe

2 demonstrates that phase transitions and continuous symmetry breaking can be implemented by circumventing the Hohenberg–Mermin–Wagner theorem with long-range interactions [

106]. This material is in the recently discovered material class of 2D magnetic van der Waals systems [

107,

108]. Further, dipolar interactions play a crucial role in the spin ice state in the frustrated magnetic pyrochlore materials Ho

2Ti

2O

7 and Dy

2Ti

2O

7 [

90,

91,

92,

93,

94,

95,

96,

97,

98,

99,

100,

101].

In this review, we are interested in physical systems described by quantum spin models, where the magnetic degrees of freedom are located on the sites of a lattice. We concentrate on the following three paradigmatic types of magnetic interactions between lattice sites: first, Ising interactions, where the magnetic interaction is oriented only in the direction of one quantisation axis; second, XY interactions with a -symmetric magnetic interaction invariant under planar rotations; and third, Heisenberg interactions with a -symmetric magnetic interaction invariant under rotations in 3D spin space. In the microscopic models of interest, a competition between magnetic ordering and trivial product states, external fields, or quasi-long-range order leads to QPTs.

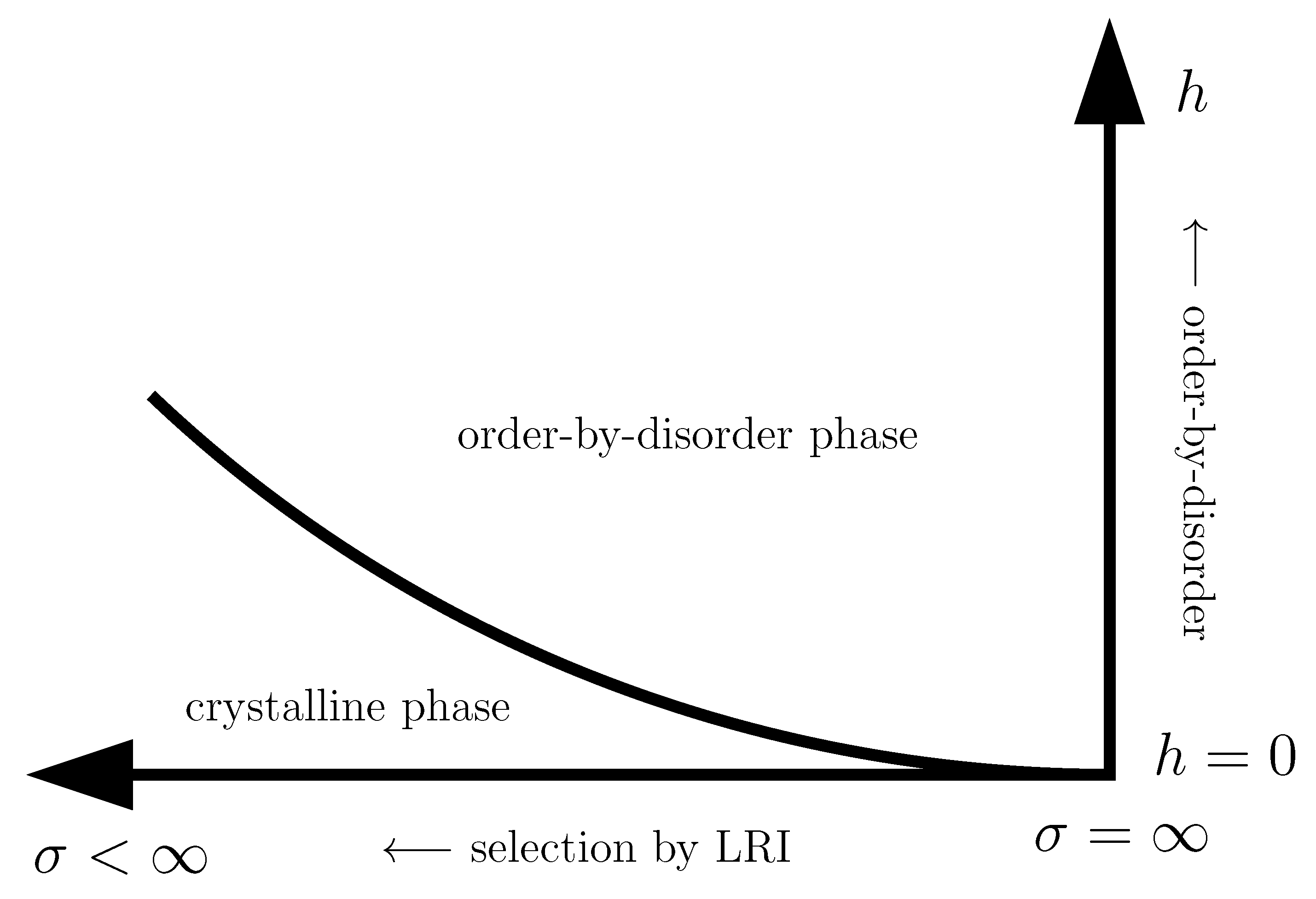

In this context, the primary research pursuit revolves around how the properties of the QPT depend on the long-range interaction. The upper critical dimension of a QPT in magnetic models with non-competing algebraically decaying long-range interactions is known to depend on the decay exponent of the interaction for a small enough exponent, and decreases as the decay exponent decreases [

20,

21]. If the dimension of a system is equal to or exceeds the upper critical dimension, the QPT displays mean-field critical behaviour. At the same time, standard finite-size scaling, as well as standard hyperscaling relations are no longer applicable. Therefore, these systems are primary workhorse models to study finite-size scaling above the upper critical dimension. In this case, the numerical simulation of these systems is crucial in order to gauge novel theoretical developments. Further, QPTs in systems with competing long-range interactions do not tend to depend on the long-range nature of the interaction [

23,

24,

25,

26,

29,

30,

32]. In several cases, long-range interactions then lead to the emergence of ground states and QPTs, which are not present in the corresponding short-range interacting models [

27,

30,

54,

55,

109,

110,

111,

112].

In this review, we are mainly interested in the description and discussion of two Monte Carlo-based numerical techniques, which were successfully used to study the low-energy physics of long-range interacting quantum magnets, in particular with respect to the quantitative investigation of QPTs [

22,

25,

29,

30,

31,

32,

34,

35,

36,

37,

38,

40]. The success of Monte Carlo techniques in this field is due to the occurrence of high-dimensional sums and integrals that commonly arise in the formulation of many-particle statistics. In contrast to many deterministic integration techniques, for which the standard error scales exponentially with the dimension of the underlying integral, the standard error of an integral calculated with Monte Carlo integration does not scale with the dimension of the underlying integral. We further chose to review this topic due to our personal involvement with the application and development of these methods [

25,

29,

30,

31,

32,

34,

35]. On the one hand, we explain in detail how classical Monte Carlo integration can enhance the capabilities of linked-cluster expansions (LCEs) with the pCUT+MC approach (a combination of the perturbative unitary transform approach (pCUT) and MC embedding). On the other hand, we describe how stochastic series expansion (SSE) quantum Monte Carlo (QMC) integration is used to directly sample the thermodynamic properties of suitable long-range quantum magnets on finite systems.

This review is structured as follows. In

Section 2, we review the basic concept of a QPT in a condensed way, focusing on the details relevant for this review. We define the quantum-critical exponents and the relations between them in

Section 2.1. Here, we also have the first encounter with the generalised hyperscaling relation, which is also valid above the upper critical dimension where conventional hyperscaling breaks down. As the SSE QMC method discussed in this review is a finite-system simulation, we discuss the conventional finite-size scaling below the upper critical dimension in

Section 2.2 and the peculiarities of finite-size scaling above the upper critical dimension in

Section 2.3. In

Section 3, we summarise the basic concepts of Markov chain Monte Carlo integration: Monte Carlo sampling, Markovian random walks, stationary distributions, the detailed balance condition, and the Metropolis–Hastings algorithm. We continue by introducing the series-expansion Monte Carlo embedding method pCUT+MC in

Section 4. We start with the basic concepts of a graph expansion in

Section 4.1 and introduce the perturbative method of our choice, the perturbative continuous unitary transformation method, in

Section 4.2. We introduce the theoretical concepts for setting up a linked-cluster expansion as a full graph decomposition in

Section 4.3 and, subsequently, discuss how to practically calculate perturbative contributions in

Section 4.4 and

Section 4.5. We prepare the discussion of the white graph decomposition in

Section 4.6 with an interlude on the relevant graph theory in

Section 4.6.1 and

Section 4.6.2 and the important concept of white graphs in

Section 4.6.3. Further, in

Section 4.7, we discuss the embedding problem for the white graph contributions. Starting from the nearest-neighbour embedding problem in

Section 4.7.1, we generalise it to the long-range case in

Section 4.7.2 and then introduce a classical Monte Carlo algorithm to calculate the resulting high-dimensional sums in

Section 4.7.3. This is followed by some technical aspects on series extrapolations in

Section 4.8 and a summary of the entire workflow in

Section 4.9. In the next section, the topic changes towards the review of the SSE QMC method, which is an approach to simulate thermodynamic properties of suitable quantum many-body systems on finite systems at a finite temperature. First, we discuss the general concepts of the method in

Section 5. We review the algorithm to simulate arbitrary transverse-field Ising models introduced by A. Sandvik [

39] in

Section 5.1. We then review an algorithm used to simulate non-frustrated Heisenberg models in

Section 5.2. After the introduction to the algorithms, we summarise techniques on how to measure common observables in the SSE QMC scheme in

Section 5.3. Since the SSE QMC method is a finite-temperature method, we discuss how to rigorously use this scheme to perform simulations at effective zero temperature in

Section 5.4. We conclude this section with a brief summary of path integral Monte Carlo techniques used for systems with long-range interactions (see

Section 5.5). To maintain the balance between algorithmic aspects and their physical relevance, we summarise several theoretical and numerical results for quantum phase transitions in basics long-range interacting quantum spin models, for which the discussed Monte Carlo-based techniques provided significant results. First, we discuss long-range interacting transverse-field Ising models in

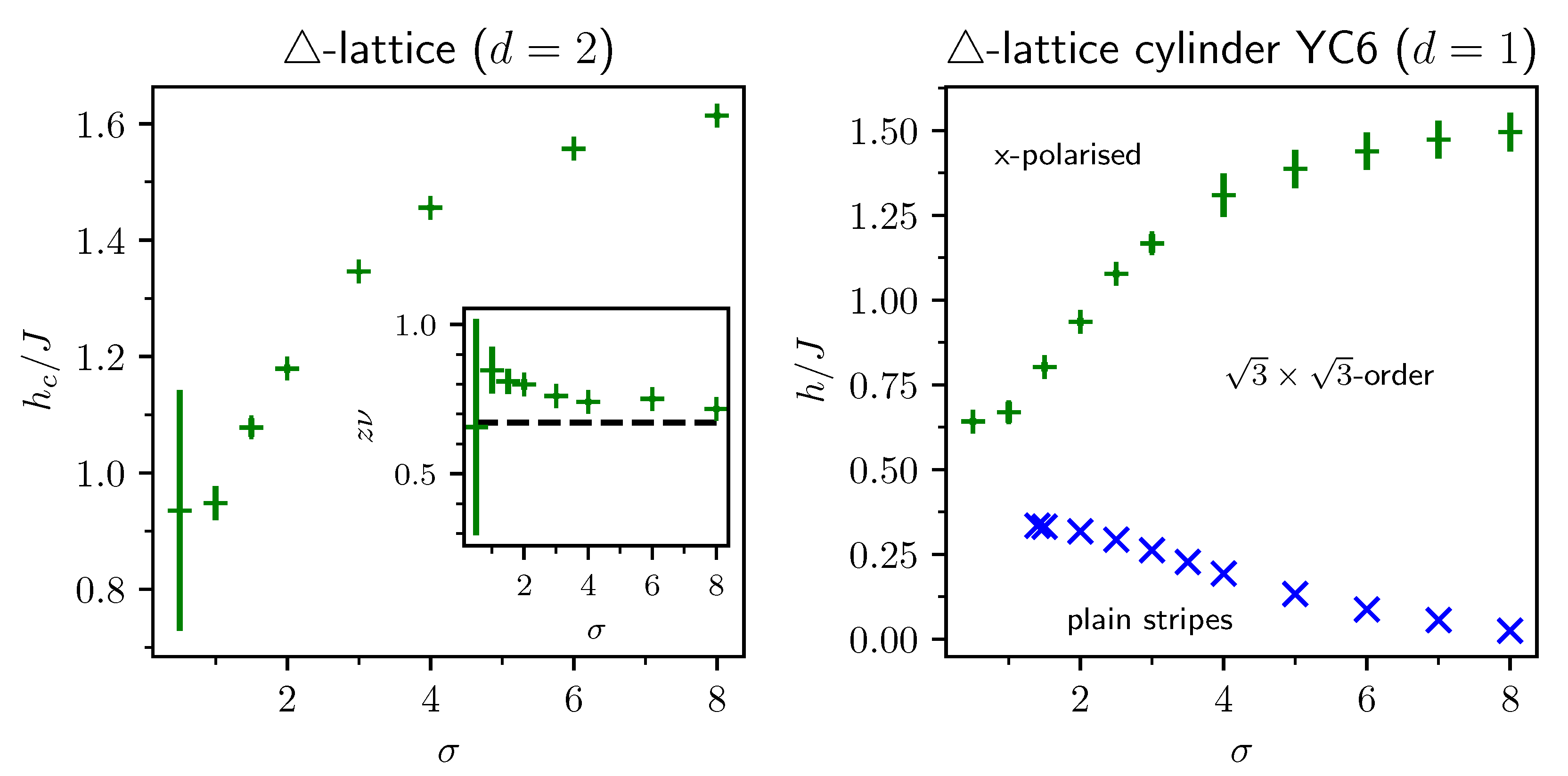

Section 6. For ferromagnetic interactions, this model displays three regimes of universality: a long-range mean-field regime for slowly decaying long-range interactions, an intermediate long-range non-trivial regime, and a regime of short-range universality for strong decaying long-range interactions. We discuss the theoretical origins of this behaviour in

Section 6.1.1 and numerical results for quantum critical exponents in

Section 6.1.2. Since this model is a prime example to study scaling above the upper critical dimension in the long-range mean-field regime, we emphasise these aspects in

Section 6.1.3. Further, we discuss the antiferromagnetic long-range transverse-field Ising model on bipartite lattices in

Section 6.2 and on non-bipartite lattices in

Section 6.3. The next obvious step is to change the symmetry of the magnetic interactions. Therefore, we turn to long-range interacting XY models in

Section 7 and Heisenberg models in

Section 8. We discuss the long-range interacting transverse-field XY chain in

Section 7 starting with the

-symmetric isotropic case in

Section 7.1, followed by the anisotropic case for ferromagnetic (see

Section 7.2) and antiferromagnetic (see

Section 7.3) interactions, which display similar behaviour to the long-range transverse-field Ising model on the chain discussed in

Section 6. We conclude the discussion of the results with unfrustrated long-range Heisenberg models in

Section 8. We focus on the staggered antiferromagnetic long-range Heisenberg square lattice bilayer model in

Section 8.1 followed by long-range Heisenberg ladders in

Section 8.2 and the long-range Heisenberg chain in

Section 8.3. We conclude in

Section 9 with a brief summary and with some comments on the next possible steps in the field.

2. Quantum Phase Transitions

This review is part of the Special Issue with the topic “Violations of Hyperscaling in Phase Transitions and Critical Phenomena”. In this work, we summarise investigations of low-dimensional quantum magnets with long-range interactions targeting, in particular, quantum phase transitions (QPTs) above the upper critical dimension, where the naive hyperscaling relation is no longer applicable. In this section, we recapitulate the relevant aspects of QPTs needed to discuss the results of the Monte Carlo-based numerical approaches. First, we give a general introduction to QPTs. After that, we discuss in detail the definition of critical exponents and the relations among them in

Section 2.1, as well as the scaling below (see

Section 2.2) and above (see

Section 2.3) the upper critical dimension.

Any non-analytic point of the ground-state energy of an infinite quantum system as a function of a tuning parameter

is identified with a QPT [

113]. This tuning parameter can, for instance, be a magnetic field or pressure, but not the temperature. Quantum phase transitions are a concept of zero temperature as there are no thermal fluctuations and all excited states are suppressed infinitely strong such that the system remains in its ground state. There are two scenarios for how a non-analytic point in the ground-state energy can emerge [

113]: First is an actual (sharp) level crossing between the ground-state energy and another energy level. Second, the non-analytic point can be considered as a limiting case of an avoided level crossing. Historically, phase transitions are classified by the lowest order derivative of the free energy that is discontinuous [

113,

114]. Therefore, a first-order phase transition is discontinuous in the order parameter (first derivative) and a second-order phase transition is discontinuous in the response functions (second derivative). Since, in second-order phase transitions, the order parameter is still continuous across the phase transition, we use the term “continuous phase transition” as an equivalent for “second-order phase transition”.

In this review, we are interested in second-order QPTs, which fall into the second scenario. At a second-order QPT, the relevant elementary excitations condense into a novel ground state, while the characteristic length and time scales diverge. Apart from topological QPTs involving a long-range entangled topological phase, such continuous transitions are described by the concept of spontaneous symmetry breaking. On one side of the QPT, the ground state obeys a symmetry of the Hamiltonian, while on the other side, this symmetry is broken in the ground state and a ground-state degeneracy arises.

Following the idea of the quantum-to-classical mapping [

113,

115],

d-dimensional quantum systems can be mapped in the vicinity of a second-order QPT to models of statistical mechanics with a classical (thermal) second-order phase transition in

dimensions. In many cases, the models obtained from a quantum-to-classical mapping are rather artificial [

113]. However, such mappings often allow categorising QPTs in terms of universality classes and associated critical exponents by the non-analytic behaviour of the classical counterparts [

10,

11,

113,

116,

117]. The mapping further illustrates that the renormalisation group (RG) theory is also applicable to describe QPTs [

10,

11,

113,

116,

117].

In the RG theory, each QPT belongs to a non-trivial fixed point of the RG transformation [

10,

11], whereas a trivial fixed point would, for instance, be a fully ordered state with maximal correlation or a fully disordered state with no correlation at all. Critical exponents are connected to the RG flow in the immediate vicinity of these non-trivial fixed points [

10,

11,

113]. The concept of universality classes arises from the fact that different microscopic Hamiltonians can have a quantum critical point that is attracted by the same non-trivial fixed point under successive application of the RG transformation [

10,

11]. Due to this, the QPTs in these models have the same critical exponents.

Another remarkable result of the RG theory is the scaling of observables in the vicinity of phase transitions. Historically, the theory of scaling at phase transitions was heuristically introduced before the RG approach [

118,

119,

120,

121,

122,

123,

124]. The latter provided the theoretical foundation for the scaling hypothesis [

6,

7]. The main statement of the scaling theory is that the non-analytic contributions to the free energy and correlation functions are mathematically described by generalised homogeneous functions (GHFs) [

124]. A function with

n variables

is called a GHF, if there exist

with at least one being non-zero and

such that, for

,

The exponents

are the scaling powers of the variables, and

is the scaling power of the function

f itself. An in-depth summary of the mathematical properties of GHFs can be found in

Appendix B. The most important properties of GHFs are that their derivatives, Legendre transforms, and Fourier transforms are also GHFs. As we will outline in

Section 2.2, the theory of finite-size scaling is formulated in terms of GHFs and relates the non-analytic behaviour at QPTs in the thermodynamic limit with the scaling of observables for different finite system sizes. In this, the variables

are related to physical parameters like the temperature

T, control parameter

, symmetry-breaking field

H, and also irrelevant, more abstract parameters that parameterise the microscopic details of the model like the lattice spacing. Later in this section, we will define irrelevant variables in the context of the RG and GHFs.

Another aspect relevant for this work is that quantum fluctuations are the driving factor with QPTs [

113]. In general, fluctuations are more important in low dimensions [

117]. The universality class of QPTs for a certain symmetry breaking depends on the dimensionality of the system.

An important aspect regarding this review is the so-called upper critical dimension

. The upper critical dimension is defined as a dimensional boundary such that, for systems with dimension

, the critical exponents are those obtained from mean-field considerations. The upper critical dimension is of particular importance for QPTs in systems with non-competing long-range interactions. For sufficiently small decay exponents of an algebraically decaying long-range interaction

, the upper critical dimension starts to decrease as a function of the decay exponent

[

20,

32,

34]. In the limiting case of a completely flat decay (

) of the long-range interaction resulting in an all-to-all coupling, the model is intrinsically of the mean-field type and mean-field considerations become exact. For a certain value of the decay exponent, the upper critical dimension becomes equal to the fixed spatial dimension, and for decay exponents below this value, the dimension of the system is above the upper critical dimension of the transition [

20,

32,

34]. This makes long-range interacting systems an ideal test bed for studying phase transitions above the upper critical dimension in low-dimensional systems. In particular, long-range interactions can make the upper critical dimension accessible in real-world experiments as the upper critical dimension of short-range models is usually not below three.

Although phase transitions above the upper critical dimension display mean-field criticality, they are still a matter worth studying, since naive scaling theory describing the behaviour of finite systems close to a phase transition (see

Section 2.2) is no longer applicable [

16,

19,

125]. Moreover, the naive versions of some relations between critical exponents, as discussed in

Section 2.1, do not hold any longer [

15,

16]. The reason for this issue are the dangerous irrelevant variables (DIVs) in the RG framework [

126,

127,

128]. During the application of the RG transformation, the original Hamiltonian is successively mapped to other Hamiltonians, which can have infinitely many couplings. All these couplings, in principle, enter the GHFs. In practice, all but a finite number of these couplings are set to zero since their scaling powers are negative, which means they flow to zero under renormalisation. These couplings are, therefore, called irrelevant. This approach of setting irrelevant couplings to zero can be used to derive the finite-size scaling behaviour as described in

Section 2.2. However, above the upper critical dimension, this approach breaks down because it is only possible to set irrelevant variables to zero if the GHF does not have a singularity in this limit [

126]. Above the upper critical dimension, such singularities in irrelevant parameters exist, which makes them DIVs [

127]. We explain the effect of DIVs on scaling in

Section 2.3.

2.1. Critical Exponents in the Thermodynamic Limit

As outlined above, a second-order QPT comes with a singularity in the free energy density. In fact, also, other observables experience singular behaviour at the critical point in the form of power-law singularities. For instance, the order parameter

m as a function of the control parameter

behaves as

in the ordered phase. Without loss of generality, the system is taken to be in the ordered phase for

and the notation

means that

is approaching

from below, i.e., it is approaching in the ordered phase. In the disordered phase

, the order parameter by definition vanishes such that

. The observables with their respective power-law singular behaviour, which is characterised by the critical exponents

, and

z, are summarised in

Table 1 together with how they are commonly defined in terms of the free energy density

f, the symmetry-breaking field

H, which couples to the order parameter, and the reduced control parameter

.

One usually defines reduced parameters like

r that vanish at the critical point not only to shorten the notation, but also to express the power-law singularities independent of the microscopic details of the specific model one is looking at. While the value of

depends on these details, the power-law singularities are empirically known to not depend on the microscopic details, but only on more general properties like the dimensionality, the symmetry that is being broken, and, with particular emphasis due to the focus of this review, on the range of the interaction. It is, therefore, common to classify continuous phase transitions in terms of universality classes. These universality classes share the same set of critical exponents. In terms of the RG, this behaviour is understood as distinct critical points of microscopically different models flowing to the same renormalisation group fixed point, which determines the criticality of the system [

6,

7,

10]. Prominent examples for universality classes of magnets are the 2D and 3D Ising (

symmetry), 3D XY (

symmetry), and 3D Heisenberg (

symmetry) universality classes [

113]. It is important to mention that the dimension in the classifications is referring to classical and not quantum systems, and they should not be confused with each other. In fact, the universality class of a short-range interacting non-frustrated quantum Ising model of dimension

d lies in the universality of the classical

-dimensional Ising model.

There are only a few dimensions for which a separate universality class is defined for the different models. For lower dimensions, the fluctuations are too strong in order for a spontaneous symmetry breaking to occur. In the case of the classical Ising model, there is no phase transition for 1D, while for the classical XY and Heisenberg models with continuous symmetries, there is not even a phase transitions for 2D due to the Hohenberg–Mermin–Wagner (HWM) theorem [

130,

131]. This dimensional boundary is referred to as lower critical dimension

. The lower critical dimension is the highest dimension for which no transition occurs, i.e.,

for the Ising model and

for the XY and Heisenberg model. For higher dimensions

, the critical exponents of the mentioned models do not depend on the dimensionality any longer, and they take on the mean-field critical exponents in all dimensions. The underlying reason is that, with increasing dimensions, the local fluctuations become smaller due to the higher connectivity of the system [

132]. This has been also exploited in diagrammatic and series expansions in

[

133,

134,

135]. This dimensional boundary, at which the criticality becomes the mean-field one, is called upper critical dimension

. Usually, the upper critical dimension is too large to realise a system above its upper critical dimension in the real world. However, long-rang interactions can increase the connectivity of a system in a similar sense as the dimensionality. A sufficiently long-range interaction can, therefore, lower the upper critical dimension to a value that is accessible in experiments.

Finally, it is worth mentioning that the critical exponents are not independent of each other, but obey certain relations [

129], namely

The first relation in Equation (

3) is the so-called hyperscaling relation, whose classical analogue (without

z) was introduced by Widom [

10,

136]. The Essam–Fisher relation in Equation (

4) [

137,

138] is reminiscent of a similar inequality, which was proven rigorously by Rushbrooke using thermodynamic stability arguments. Equation (

5) is called the Widom relation. The last relation in Equation (

6) is the Fisher scaling relation, which can be derived using the fluctuation–dissipation theorem [

10,

129,

138]. Those relations were originally obtained from scaling assumptions of observables close to the critical point, which were only later derived rigorously when the RG formalism introduced to critical phenomena [

10,

129]. Due to these relations, it is sufficient to calculate three, albeit not arbitrary, exponents to obtain the full set of critical exponents.

The hyperscaling relation Equation (

3) is the only relation containing the dimension of the system and is, therefore, often said to break down above the upper critical dimension, where one expects the same mean-field critical exponents independent of the dimension

d [

10]. It, therefore, deserves special focus in this review since the long-range models discussed will be above the upper critical dimension in certain parameter regimes. Personally, we would not agree that the hyperscaling relation breaks down above the upper critical dimension, but we would rather call Equation (

3) a special case of a more general hyperscaling relation:

with the pseudo-critical exponent

(“koppa”) [

34]:

Below the upper critical dimension, the general hyperscaling relation, therefore, relaxes to Equation (

3). Above the upper critical dimension, the relation becomes

which is independent of the dimension of the system. For the derivation of this generalised version of the hyperscaling relation for QPTs, see

Section 2.3 or Ref. [

34]. The derivation of the classical counterpart can be found in Ref. [

15] and is reviewed in Ref. [

41].

2.2. Finite-Size Scaling below the Upper Critical Dimension

Even though the singular behaviour of observables at the critical point is only present in the thermodynamic limit, it is possible to study the criticality of an infinite system by investigating their finite counterparts. In finite systems, the power-law singularities of the infinite system are rounded and shifted with respect to the critical point, e.g., the susceptibility with its characteristic divergence at the critical point

is deformed to a broadened peak of finite height. The peak’s position

is shifted with respect to the critical point

. A possible definition of a pseudo-critical point of a finite system is the peak position

. As the characteristic length scale of fluctuations

diverges at the critical point, the finite system approaching the critical point will at some point begin to “feel” its finite extent and the observables start to deviate from the ones in the thermodynamic limit. As

diverges with the exponent

like

at the critical point, the extent of rounding in a finite system is related to the value of

. Similarly, the peak magnitude of finite-size observables at the pseudo-critical point will depend on how strong the singularity in the thermodynamic limit is, which means it depends on the respective critical exponents

,

,

, and

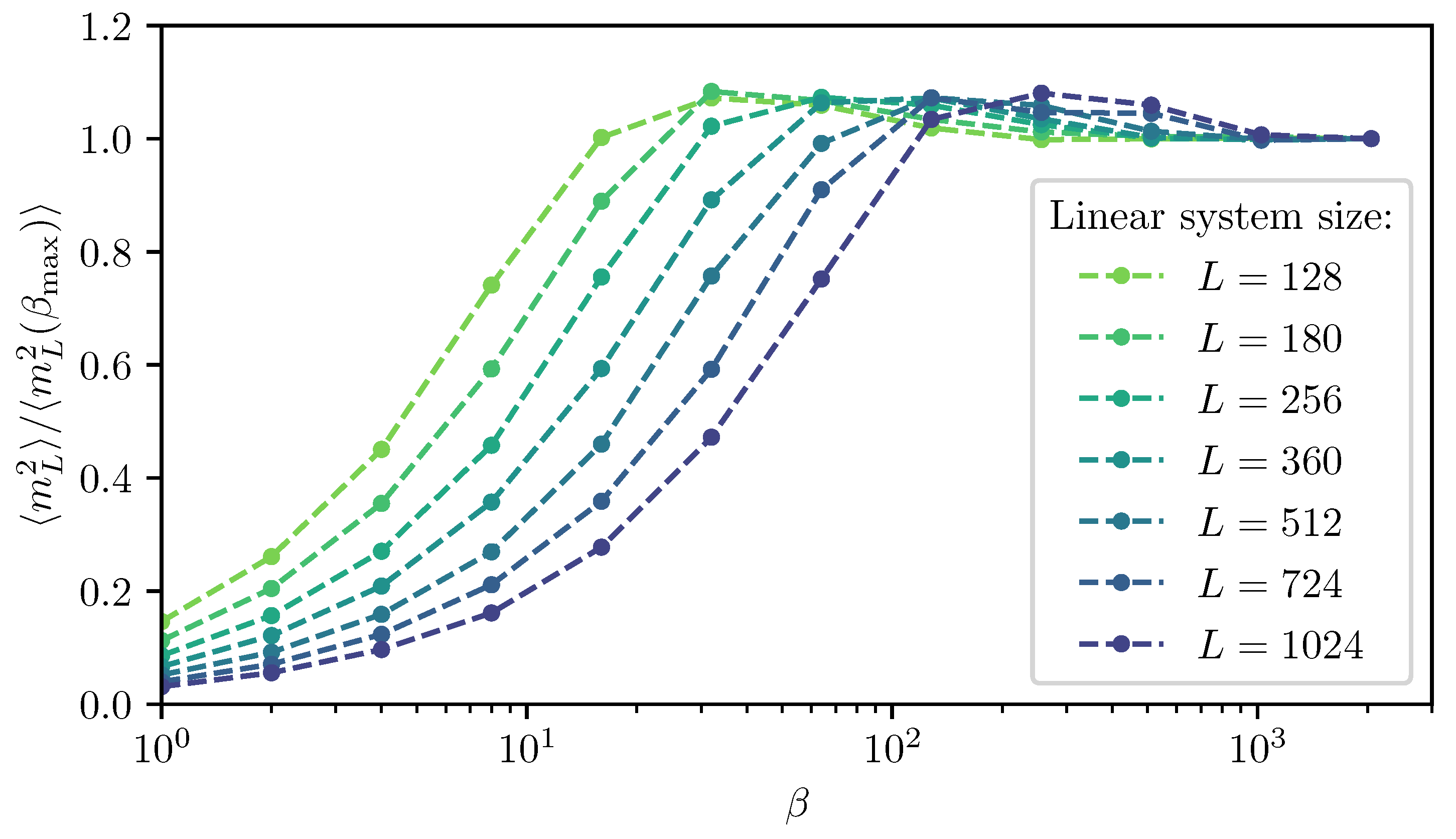

. The shifting, rounding, and varying peak magnitude are shown for the susceptibility of the long-range transverse-field Ising model in

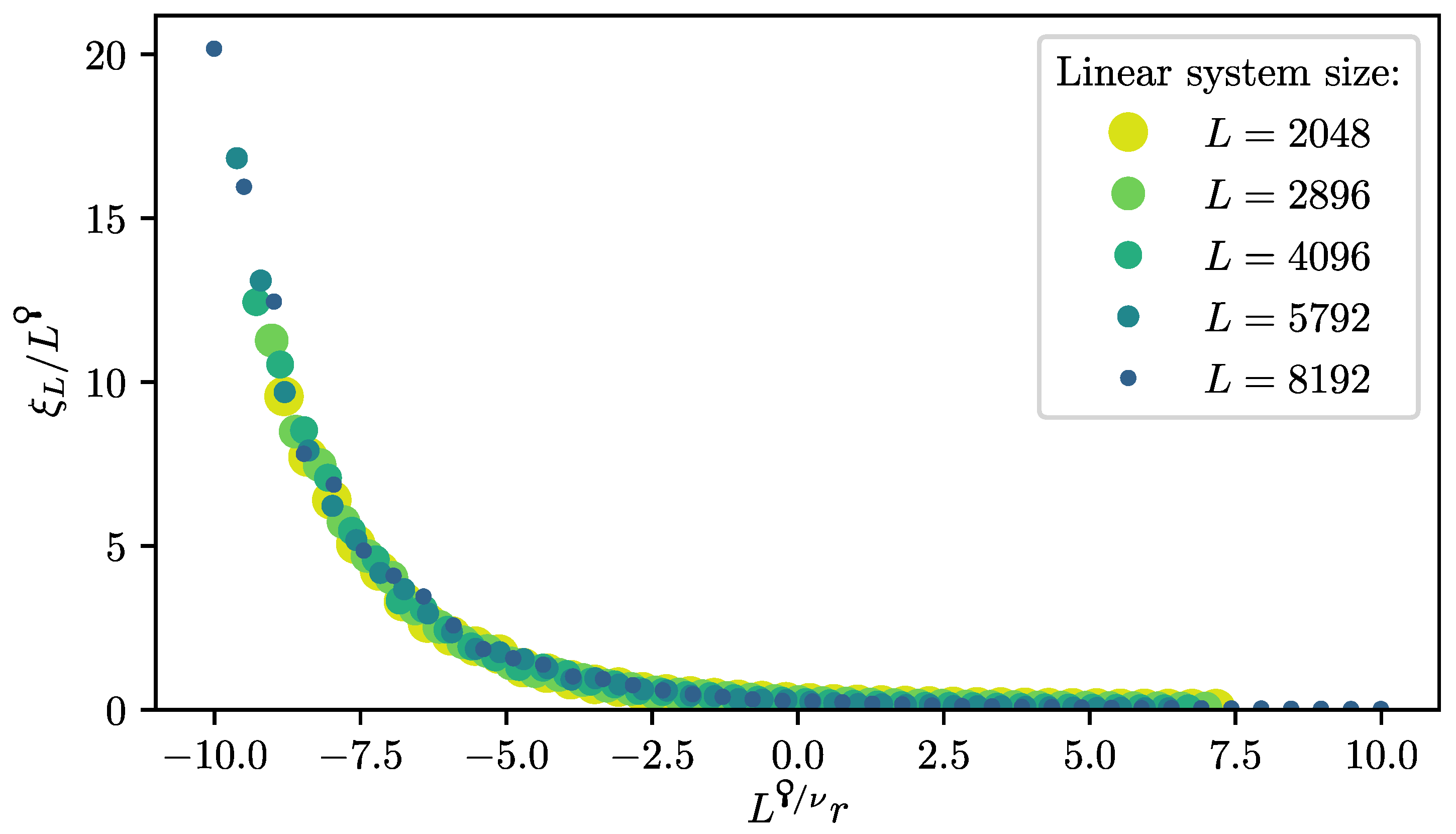

Figure 1. This dependence of observables in finite systems on the criticality of the infinite system is the basis of finite-size scaling.

In a more mathematical sense, the relation between critical exponents and finite-size observables has its origin in the renormalisation group (RG) flow close to the corresponding RG fixed point that determines the criticality [

139]. Close to this fixed point, one can linearise the RG flow so that the free energy density and the characteristic length

become generalised homogeneous functions (GHFs) in their parameters [

124,

128,

129,

140,

141]. For a thorough discussion of the mathematical properties of GHFs, we refer to Ref. [

124] and

Appendix B. This means that the free energy density

f and characteristic length scale

as functions of the couplings

and the inverse system length

obey the relations:

with the respective scaling dimensions

,

, and

governing the linearised RG flow with spatial rescaling factor

around the RG fixed point, at which all couplings vanish by definition. All of those couplings are relevant except for

u, which denotes the leading irrelevant coupling [

10,

113]. Relevant couplings are related to real-world parameters that can be used to tune our system away from the critical point like the temperature

T, a symmetry-breaking field

H, or simply the control parameter

r. The irrelevant couplings

u do not per se vanish at the critical point like the relevant ones do. However, they flow to zero under the RG transformation and are commonly set to zero in the scaling laws:

by assuming analyticity in these parameters. The generalised homogeneity of thermodynamic observables can be derived from the one of the free energy density

f. For example, the generalised homogeneity of the magnetisation:

can be derived by taking the derivative of

f with respect to the symmetry-breaking field

H.

By investigating the singularity of

in

r via Equation (

13), one can show that the scaling power

of the control parameter

r is related to the critical exponent

by

[

113]. For this, one fixes the value

of the first argument to

in the right-hand side of Equation (

13) by setting

such that

Analogously, further relations between the scaling powers and other critical exponents can be derived by looking at the singular behaviour of the respective observables in the corresponding parameters. Overall, one further obtains

From these equations, one can already tell that the critical exponents are not independent of each other. In fact, the scaling relations

,

and

(see Equations (

3)–(

5)) can be derived from Equation (

16) and

. By expressing the RG scaling powers

in terms of critical exponents, the homogeneity law for an observable

with a bulk divergence

is given by

In order to investigate the dependence on the linear system size

L, the last argument in the homogeneity law is fixed to

by inserting

. This readily gives the finite-size scaling form

with

being the universal scaling function of the observables

. The scaling function

itself does not depend on

L any longer, but in order to compare different linear system sizes, one has to rescale its arguments. To extract the critical exponents from finite systems, the observable

is measured for different system sizes

L and parameters

close to the critical point

. The

L-dependence according to Equation (

19) is then fit with the critical exponents

,

,

, and

z, as well as the critical point

, which is hidden in the definition of

r, as free parameters. It is advisable to fix two of the three parameters

to their critical values in order to minimise the amount of free parameters in the fit. For example, with

and only

, one can extract the two critical exponents

and

alongside

. For further details on a fitting procedure, we refer to Ref. [

32]. If one knows the critical point, one can also set

and look at the

L-dependent scaling

directly at the critical point to extract the exponent ratio

. There are many more possible approaches to extract critical exponents from the FSS law in Equation (

19) [

142,

143,

144]. For relatively small system sizes, it might be required to take corrections to scaling into account [

143,

144].

2.3. Finite-Size Scaling above the Upper Critical Dimension

In the derivation of finite-size scaling below the upper critical dimension, it was assumed that the free energy density is an analytic function in the leading irrelevant coupling u and, therefore, one can set it to zero. However, this is not the case above the upper critical dimension any longer, and the free energy density f is singular at . Due to this singular behaviour u is referred to as a dangerous irrelevant variable (DIV).

As a consequence, one has to take the scaling of

u close to the RG fixed point into account. This is achieved by absorbing the scaling of

f in

u for small

u into the scaling of the other variables [

128]:

up to a global power

of

u. This leads to a modification of the scaling powers in the homogeneity law for the free energy density [

128]:

with the modified scaling powers [

34,

128]:

In the classical case [

128],

was commonly set to 1 by choice. This is justified because the scaling powers of a GHF are only determined up to a common non-zero factor [

124]. However, for the quantum case [

34], this was kept general as it has no impact on the FSS.

As the predictions from the Gaussian field theory and mean field differed for the critical exponents

,

, and

, but not for the “correlation” critical exponents

,

z,

, and

[

145], the correlation sector was thought not to be affected by DIVs at first [

128,

142,

145]. Later, the Q-FSS, another approach to FSS above the upper critical dimension, pioneered by Ralph Kenna and his colleagues, was developed for classical [

15,

19,

125], as well as for quantum systems [

34], which explicitly allowed the correlation sector to also be affected by the DIV. In analogy to the free energy density, the homogeneity law of the characteristic length scale is then also modified to

with

in order to reproduce the correct bulk singularity

. A new pseudo-critical exponent

(“koppa”):

is introduced. This exponent describes the scaling of the characteristic length scale with the linear system size. This non-linear scaling of

with

L is one of the key differences to the previous treatments above the upper critical dimension in Ref. [

128].

Analogous to the case below the upper critical dimension, the modified scaling powers

can be related to the critical exponents:

By using the mean-field critical exponents for the

quantum rotor model, one obtains restrictions for the ratios of modified scaling powers:

Furthermore, one can link the bulk scaling powers

, and

to the scaling power

of the inverse linear system size [

34]:

by looking at the scaling of the susceptibility in a finite system [

34,

128]. This relation is crucial for deriving an FSS form above the upper critical dimension as the modified scaling power

, or rather, its relation to the other scaling powers determines the scaling with the linear system size

L. For details on the derivation, we refer to Ref. [

34]. We want to stress again that the scaling powers of GHFs are only determined up to a common non-zero factor [

124]. Therefore, it is evident that one can only determine the ratios of the modified scaling powers, but not their absolute value. The absolute values are subject to choice. Different choices were discussed in Ref. [

34], but these choices rather correspond to taking on different perspectives and have no impact on the FSS nor the physics.

From Equation (

32) together with Equations (

26) and (

27), a generalised hyperscaling relation:

can be derived. This also determines the pseudo-critical exponent:

Finally, we can express the modified scaling powers in the FSS law for an observable

with power-law singularity

:

For

below the upper critical dimension, Equation (

36) relaxes to the standard FSS law Equation (

19). The scaling in temperature has not yet been studied for finite quantum systems above the upper critical dimension. However, in Ref. [

34], it was conjectured that

based on Equation (

32), which is also in agreement with

z being the dynamical critical exponent that determines the space–time anisotropy

, as we will shortly see. This means that the finite-size gap scales as

with the system size [

34]. Of particular interest is the scaling of the characteristic length scale above the upper critical dimension, for which the modified scaling law Equation (

36) also holds with

. Hence, the characteristic length scale in dependence of the control parameter

r scales like

with the scaling function

. Directly at the critical point

, this leads to

. Comparing this with the scaling of the inverse finite-size gap

verifies that

z still determines the space–time anisotropy. Prior to the Q-FSS [

15,

17], the characteristic length scale was thought to be bound by the linear system size

L [

128]. However, this was shown not to be the case by measurements of the characteristic length scale for the classical five-dimensional Ising model [

14] and for long-range transverse-field Ising models [

34].

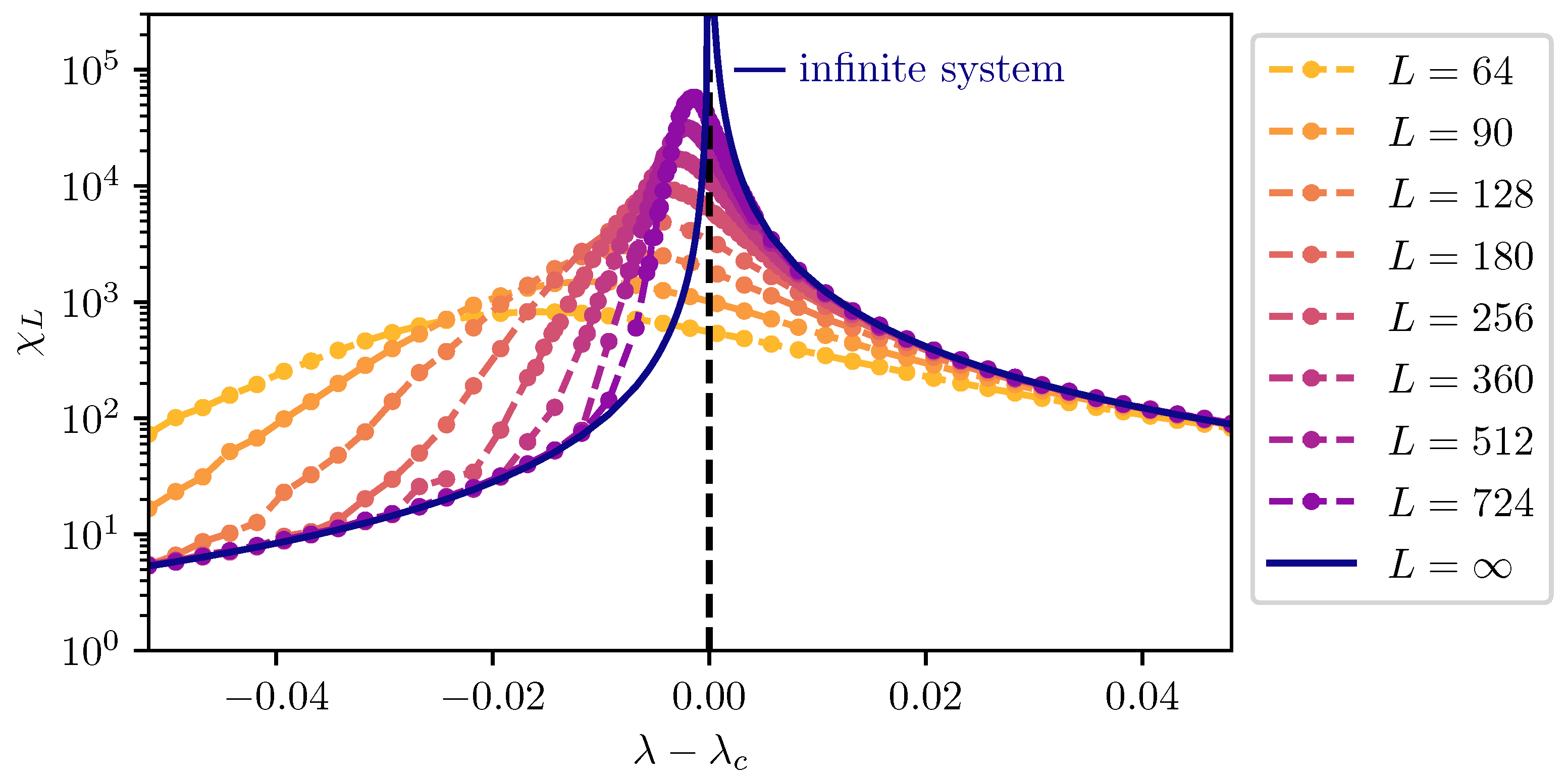

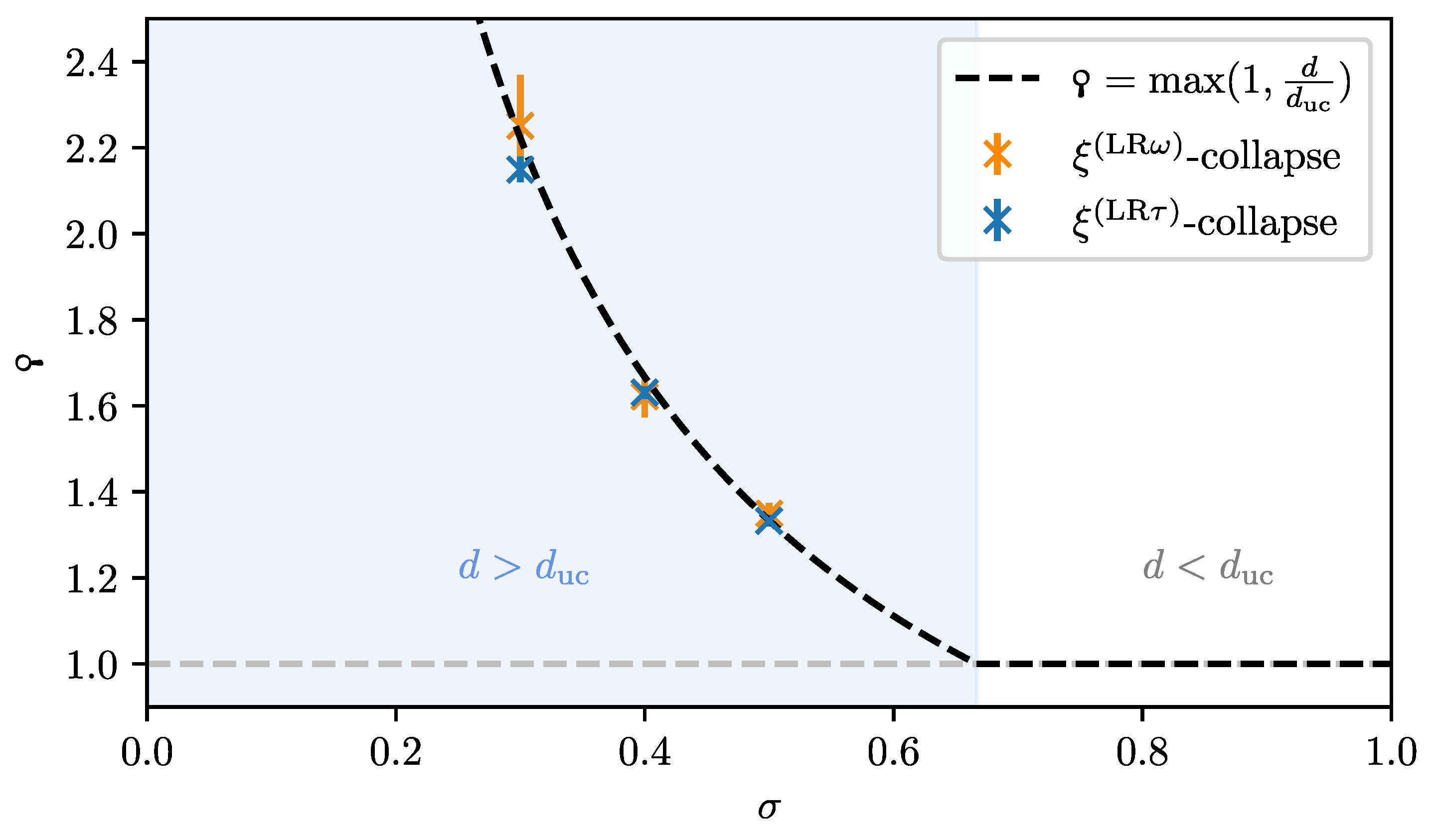

For the latter, the data collapse of the correlation length according to Equation (

37) is shown in

Figure 2 as an example.

3. Monte Carlo Integration

In this section, we provide a brief introduction to Monte Carlo integration (MCI). We focus on the aspects of Markov chain MCI as the basis to formulate the white graph Monte Carlo embedding scheme of the pCUT+MC method in

Section 4 and the stochastic series expansion (SSE) quantum Monte Carlo (QMC) algorithm in

Section 5 in a self-contained fashion. MCI is the foundation for countless numerical applications, which require the integration over high-dimensional integration spaces. As this review has a focus on “Monte Carlo-based techniques for quantum magnets with long-range interactions”, we forward readers with a deeper interest in the fundamental aspects of MCI and Markov chains to Refs. [

146,

147,

148].

MCI summarises numerical techniques to find estimators for integrals of functions

over an integration space

using random numbers. The underlying idea behind MCI is to estimate the integral, or the sum in the case of discrete variables, of the function

f over the configuration space by an expectation value:

with

sampled according to a probability density function

(PDF) and the function

reweighted by the PDF. A famous direct application of this idea is the calculation of the number “pi”, which is discussed in great detail in Ref. [

148].

In this review, MCI is used for the embedding of white graphs on a lattice to evaluate high orders of a perturbative series expansion or to calculate thermodynamic observables using the SSE framework. In both cases, non-normalised relative weights

within a configuration space

arise, which are used for the sampling of the PDF

P:

being oftentimes not directly accessible. In the context of statistical physics,

is often chosen to be the relative Boltzmann weight

of each configuration

. While this relative Boltzmann weight is accessible as long as

is known, the full partition function to normalise the weights is in general not.

In order to efficiently sample the configuration space

according to the relative weights, the methods in this review use a Markovian random walk to generate

. Let

be the random state of a random walk at a discrete step

n. The state

at the next step is randomly determined according to the conditional probabilities

(transition probabilities). These transition probabilities are normalised by

Markovian random walks obey the Markov property, which means the random walk is memory-free and the transition probability for multiple steps factorises into a product over all time steps:

with

the start configuration. We require the Markovian random walk to fulfil the following conditions: First, the random walk should have a certain PDF

defined by the weights

in Equation (

39) as a stationary distribution. By definition,

is a stationary distribution of the Markov chain if it satisfies the global balance condition:

Second, we require the random walk to be irreducible, which means that the transition graph must be connected and every configuration

can be reached from any configuration

in a finite number of steps. This property is necessary for the uniqueness of the stationary distribution [

147]. Lastly, we require the random walk to be aperiodic (see Ref. [

147] for a rigorous definition). Together with the irreducibility condition, this ensures convergence to the stationary distribution [

147].

There are several possibilities to design a Markov chain with a desired stationary distribution [

146,

147,

148,

149,

150,

151]. Commonly, the Markov chain is constructed to be reversible. This means that it satisfies the detailed balance condition:

which is a stronger condition for the stationarity of

P than the global balance condition in Equation (

42). One popular choice for the transition probabilities

that satisfies the detailed balance condition is given by the Metropolis–Hastings algorithm. Most applications of MCI reviewed in this work are based on the Metropolis–Hastings algorithm [

149,

150]. In this approach, the transition probabilities

are decomposed into propositions

and acceptance probabilities

as follows:

The probabilities to propose a move

can be any random walk satisfying the irreducibility and aperiodicity condition. By inserting the decomposition of the transition probabilities Equation (

44) into the detailed balance condition Equation (

43), one obtains for the acceptance probabilities:

where, in the last step, the idea that the unknown normalisation factors (see Equation (

39)) of the PDF cancel was used. The condition in Equation (

45) is fulfilled by the Metropolis–Hastings acceptance probabilities [

150]:

For the special case, for which the proposition probabilities are symmetric

, Equation (

46) reduces to the Metropolis acceptance probabilities:

As an example, we regard a classical thermodynamic system with Boltzmann weights

given by the energies of configurations and the inverse temperature to give an intuitive interpretation of the Metropolis acceptance probabilities in Equation (

47). The proposition to move from a configuration

to a configuration

with a smaller energy

is always accepted independent of the temperature. On the other hand, the proposition to move to a configuration

with a larger energy than

is only accepted with a probability depending on the ratio of the Boltzmann weights. If the temperature is higher, it is more likely to move to states with a larger energy. This reflects the physics of the system in the algorithm, focusing on the low-energy states at low temperatures and going to the maximum entropy state at large temperatures.

5. Stochastic Series Expansion Quantum Monte Carlo

In this section, we discuss the method of stochastic series expansion (SSE) QMC. This class of QMC algorithms is closely related to path integral (PI) QMC and samples configurations according to the Boltzmann distribution of a quantum mechanical Hamiltonian. This sampling is achieved by extending the configuration space in the imaginary-time direction by operator sequences. The objective is to evaluate thermal expectation values for operators at a finite temperature on a finite system.

The canonical partition function of a system with a quantum mechanical Hamiltonian

can be expressed as

with

being the inverse temperature and the sum over an arbitrary orthonormal basis

. The task is to bring Equation (

211) into the form of

with all weights

required to be non-negative.

Of course, for a Hamiltonian that is traced over in its eigenbasis (or a classical system), Equation (

211) is already in the form of Equation (

212), and the system can be directly sampled by a Metropolis–Hastings algorithm (see Equation (

46)). For a general quantum mechanical problem, we do not have access to the eigenstates of a system and require a reformulation of Equation (

211).

The SSE QMC idea resolves this issue in the following way: Given a Hamiltonian

, a computational orthonormal basis

is chosen in which the trace is evaluated. Further, there should exist a decomposition of the Hamiltonian:

into operators

.

and

are chosen such that the following two conditions are met:

No-branching rule:

ensuring that no superpositions of basis states are created by acting with

.

Non-negative real matrix elements in the computational basis:

The second condition is not strictly necessary, but makes sure that no sign problem arises, which would lead to exponentially hard computational complexity. In general, it is not necessarily possible to find a computational basis in which this condition can be fulfilled for all Hamiltonians. However, if the negative matrix elements contribute in such a way that they always occur in pairs and the minus signs cancel, the condition can be relaxed without inducing a sign problem. We will encounter this case for the antiferromagnetic Heisenberg models in

Section 5.2.

For operators

that are diagonal in the computational basis, the conditions (

214) and (

215) never pose a problem as diagonal operators intrinsically obey the no-branching rule and can always be made non-negative by adding a suitable constant to the Hamiltonian. The main difficulty is to find a computational basis in which the off-diagonal matrix elements are non-negative, which is not necessarily possible, as mentioned above. In particular, for fermionic or frustrated systems, negative signs typically occur.

In order to reformulate Equation (

211) in the form of Equation (

212), a high-temperature expansion for the partition function is performed:

In general, the evaluation of

is not feasible. The way the SSE tackles this expression is by expanding the product of sums as

as a sum over all occurring operator sequences

resulting from the exponentiation. The additional dimension created by the operator sequence is usually referred to as imaginary time in analogy to path-integral formulations. Inserting Equation (

219) into Equation (

218), one obtains

Note that each of the summands in Equation (

220) is non-negative by design due to the condition (

215) and can be interpreted as the relative weight of a configuration. By comparing Equation (

220) with Equation (

212), we see that it is of a suitable form for a Markov chain Monte Carlo sampling. We identify the direct product of the set of all basis states

with the set of all sequences as the configuration space:

The weight of a configuration is given by

In the next step, we discuss the structure of each configuration consisting of a computational basis state

and an operator sequence

. Regarding Equation (

220), we stress that the action of the product of operators in the sequence onto the basis state is crucial. Due to the no-branching rule (see Equation (

214)), the action of

onto the basis state can be interpreted as a discrete propagation of the state

in imaginary time according to the operators in the sequence. We define the state at propagation index

to be

Due to the periodic boundary condition of the trace and the orthogonality of the basis states

, only sequences for which

have a non-zero weight (see Equation (

222)).

At this point, we can demonstrate why it does not matter if some matrix elements are negative in some instances, e.g., for some antiferromagnetic spin models on bipartite lattices. If a matrix element of an operator is negative, but it is ensured by the Hamiltonian and the periodicity of the trace that there is always an even number of negative matrix elements in a sequence with non-vanishing weight, then the definition of weights as in Equation (

222) is nevertheless possible.

In the discussion so far, we considered sequences of all possible lengths. In order to formulate algorithms sampling the configuration space efficiently, a scheme with a fixed sequence length

can be introduced, in which all sequences with

are padded with identity operators

to length

and all sequences

are discarded. The physical justification to discard all sequences above a certain fixed length

is that they are exponentially suppressed for sufficiently large

. In short, this is the case because the mean operator number

is proportional to the mean energy

and the variance is related to the specific heat in the following way:

A derivation and discussion of these statements can be found in Refs. [

39,

210,

211,

212,

213]. From Equation (

224), we can infer that the infinite sum over all sequence lengths can be truncated at a finite

. From rearranging Equation (

224) and using the extensivity of the mean energy, the mean sequence length scales as

proportional to the inverse temperature and system size

N. As the mean sequence length has a finite value, the idea is to choose an

great enough to be able to sample all but a negligible amount of operator sequences. Further, we will argue that the introduction of a large enough cutoff results in an exponentially small and negligible error. In the limit of

, the specific heat has to vanish. Therefore, the variance of the mean sequence length is proportional to

. From this, it is concluded that the weights of sequences vanish exponentially for large enough sequence lengths

n [

212].

We, therefore, introduce a large enough cutoff for the sequence length

and consider operator sequences with a fixed length

. The expression for the partition function can then be written as

The new configuration space includes all sequences of length

where the shorter sequences are padded by inserting unity operators. The random insertion of unity operators into a sequence of

non-trivial operators results in

sequences of length

. The modified prefactor in Equation (

225) accounts for this overcounting. Although Equation (

225) is, strictly speaking, an approximation to the partition function, we want to note that, in practice, this does not cause a systematic error as

can be chosen large enough in a dynamical fashion such that, during the finite simulation time, no sequence of length

would occur. Therefore, we will not consider the fixed-length scheme as an approximation below.

To summarise: Following the argumentation discussed in this section, it is possible to bring any partition function into the form of Equation (

225), whereby all the summands are non-negative if a suitable decomposition and computational basis can be found. The next step is to implement a Markov chain MC sampling on the configuration space. As the configuration space largely depends on the model, the sampling is performed in a model-dependent way. In

Section 5.1, we will introduce an algorithm to sample the (LR)TFIM. In

Section 5.2, we will further describe an algorithm to sample unfrustrated (long-range) Heisenberg models. In

Section 5.3, the measurement of a variety of operators is discussed. Further, we will discuss how to sample systems at effectively zero temperature in

Section 5.4 as this review considers the zero-temperature physics of quantum phase transitions. In

Section 5.5, we give a short overview of other MC algorithms for long-range models based on path integrals.

5.1. Algorithm for Arbitrary Transverse-Field Ising Models

In this section, we describe the SSE algorithm to sample arbitrary transverse-field Ising models (TFIMs) of the form

as introduced by A. Sandvik in Ref. [

39]. The Pauli matrices

with

describe

N spins 1/2 located on the lattice sites

. The transverse-field strength at a lattice site

i is

, and the Ising couplings between sites

i and

j have the strength

. For

, the interaction is antiferromagnetic and it is energetically favourable for the spins to anti-align, while for

, the interaction is ferromagnetic and it is energetically favourable for the spins to align.

Choosing the

-eigenbasis

for the SSE formulation avoids the sign problem for arbitrary Ising interactions. The Hamiltonian is decomposed using the following operators:

We call

a trivial operator,

a field operator,

a constant operator, and

an Ising operator. The operator

is associated with the site

i, even though it is proportional to

. With these operators, we can rewrite Equation (

226) up to an irrelevant constant as

Note that Equation (

231) is a sum over field, constant, and Ising operators. The constant operators are not part of the original Hamiltonian, but will be relevant for algorithmic purposes. The trivial operators (Equation (

227)) are not relevant to express the Hamiltonian, but are necessary for the fixed-length sampling scheme. The proposed decomposition fulfils the no-branching property, and there are no negative matrix elements of the operators in the computational basis due to the positive constant

that is added to the Ising operators. The possible matrix elements for Ising operators

acting on a pair of spins

are given by

This implies that only sequences where (anti)ferromagnetic Ising bonds are placed on (anti)aligned spins have a non-vanishing weight.

The partition function (Equation (

225)) in the fixed-length scheme reads

The propagated states

only change in the imaginary-time propagation if a field operator, the only off-diagonal operator in the chosen basis, acts on it. A configuration only has a non-zero weight if all the Ising operators in the sequence are placed on spins that have the correct alignment. Constant operators are included in the Hamiltonian for algorithmic purposes, which will become important in the off-diagonal update described below.

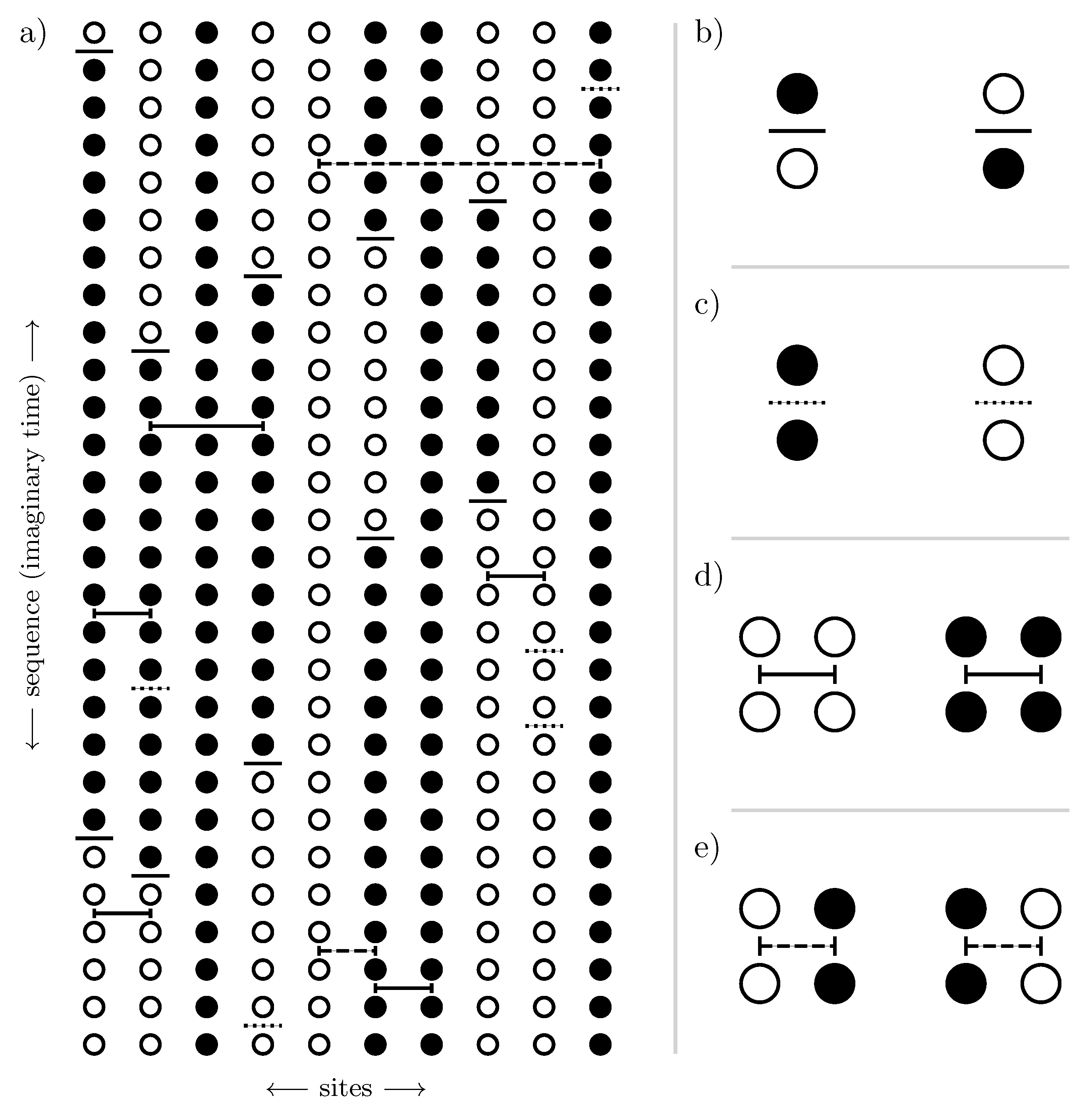

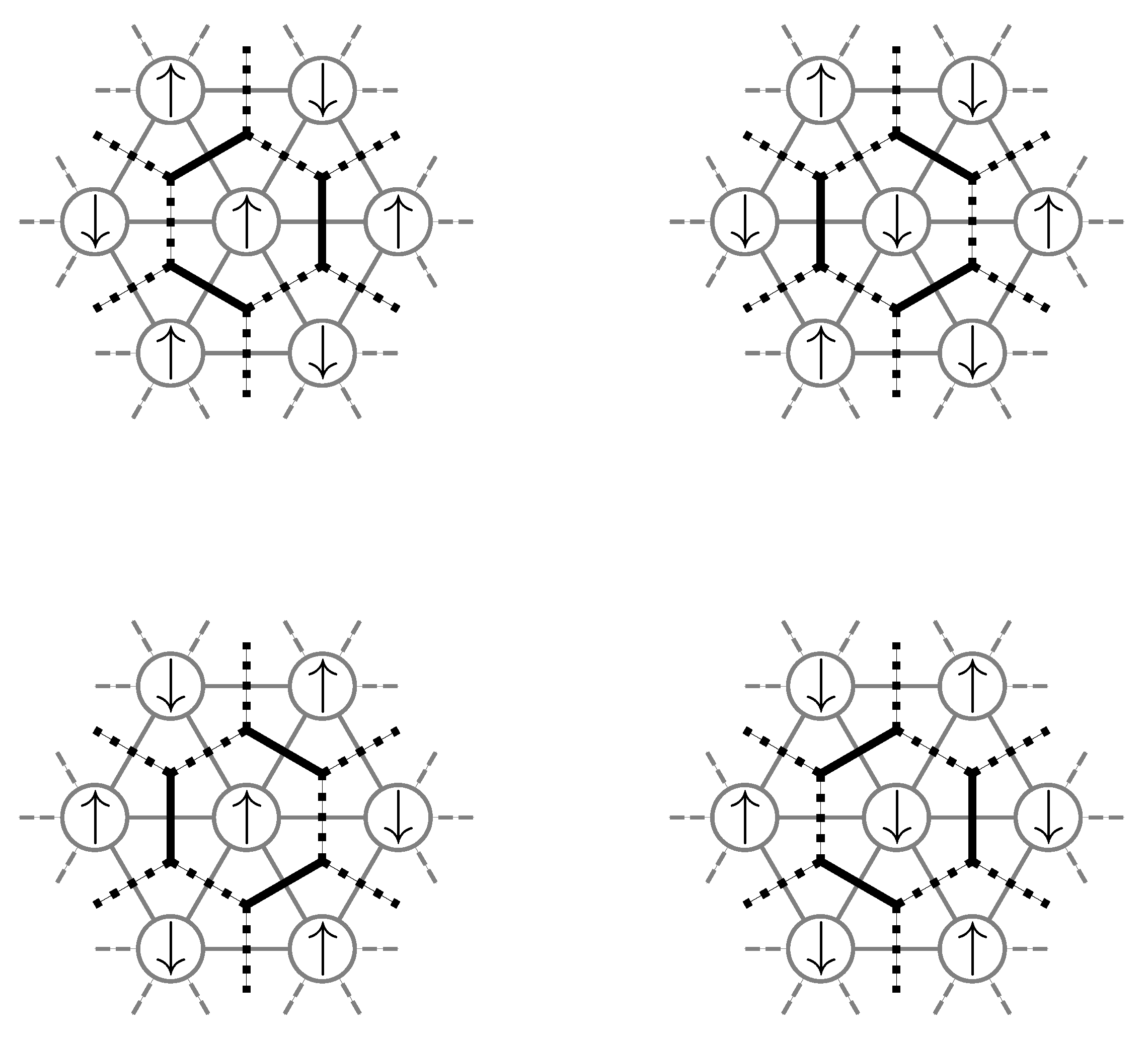

Before going into the description of the Markov chain to sample the configuration space, it is illustrative to visualise a configuration with non-vanishing weight defined by a state

and an operator sequence

. In

Figure 14, an exemplary configuration with non-vanishing weight is illustrated for the one-dimensional TFIM and visualisations of the different operators Equations (

228)–(

230) in such a configuration are shown.

Before going into the details of the algorithm, we introduce the main concept of the update scheme and the crucial obstacles that are encountered in setting up an efficient algorithm [

39]. Each step of the Markov chain sampling of configurations is performed by performing a so-called diagonal update followed by an off-diagonal quantum cluster update [

39]. In the diagonal update, one iterates over the sequence and exchanges trivial operators with constant or Ising operators and vice versa while propagating the states along the sequence. In the off-diagonal update, constant operators are exchanged with field operators while preserving the weight of the configuration. The main obstacle that is circumvented with this update procedure is the following: It is a non-trivial and non-local task to insert off-diagonal operators into the sequence without creating a configuration with vanishing weight. The first problem is that one cannot simply insert a single field operator into the sequence as it breaks the periodicity of the propagated states

, leading to a vanishing weight due to the orthonormality of the computational basis. Therefore, field operators can only occur in an even number for each site to preserve the periodicity in imaginary time. The second issue involves Ising operators placed on a pair of sites

i and

j. If one places a field operator at one of the sites before and behind the Ising operator in the sequence, this preserves the periodicity in imaginary time, but the matrix element of the Ising operator becomes zero as the spins will be misaligned with respect to the sign of the Ising coupling (see Equation (

232)). These issues can be tackled by a non-local off-diagonal update [

39], which we will thoroughly discuss after the diagonal update.

In the diagonal update, the number of non-trivial operators

n is altered by exchanging trivial operators with constant and Ising operators in the operator sequence and vice versa. This does not change the states

, including the state

. Starting from propagation step

and state

, one iterates over the sequence step-by-step and conducts the exchange of trivial operators with non-trivial diagonal operators. If a field operator is encountered at the current propagation step, the state is propagated by flipping the respective spin, and the iteration proceeds. If a diagonal operator is encountered, a local update following the Metropolis–Hastings algorithm as described in

Section 3 is performed and the total transition probability is made of a proposition probability

and an acceptance probability

.

If a trivial operator is encountered at propagation step

k, a non-trivial diagonal operator gets proposed with the probability

taking into account the weight

of the proposed operator with the normalising constant

C:

The weight is essentially given by the matrix elements of the respective operators

with the special case that the Ising operators are a priori handled as if they were allowed. At a later stage, it is checked if the spins are correctly aligned at the considered propagation step

k and state

, and the operator gets rejected if this is not the case. This has the benefit that one does not need to check every single bond for correct alignment for the insertion of a single operator, which scales like

for long-range models. This sampling can be performed in a constant time complexity in the number of elements in the distribution by using the so-called walker method of aliases [

214]. Instructions on how to set up a walker sampler from a distribution of discrete weights and how to draw from this distribution can be found in

Appendix C or in Ref. [

214]. Drawing from the discrete distribution of weights, an operator

gets proposed to be inserted. On the other side, if a non-trivial diagonal operator is encountered, it is always proposed to be replaced by a trivial operator:

The acceptance probabilities are then chosen according to the Metropolis–Hastings algorithm in Equation (

46). For a constant field operator

, this gives the acceptance probability:

Similarly, for an Ising operator

, one has

up to a factor

, which is either 1 or 0 depending on if the spins at the current propagation step

k are correctly aligned or misaligned. An Ising bond with misaligned spins would lead to a vanishing weight of the configuration and is, therefore, not allowed.

Up to this factor, the acceptance probability is the same independent of the type of non-trivial diagonal operator that is proposed to be inserted as the operator weight arising in the weight for the newly proposed configuration cancels with the same factor in the proposition probability. This is because we already chose to propose an operator by considering its respective weight . As the acceptance probabilities do not differ, one can, therefore, also first check if one accepts to insert any non-trivial diagonal operator and, only if this is accepted, draw the precise operator to be inserted. Of course, if the chosen operator is an Ising operator, it still has to be checked if the spins are correctly aligned to prevent a non-vanishing weight.

The cancellation of the operator weights

also makes it easier to perform the reverse process and replace a non-trivial diagonal operator with a trivial one in the sense that the acceptance probability for inserting

:

does not depend on the current non-trivial diagonal operator

.

If a proposition gets rejected, the iteration along the sequence continues and the procedure starts again for the next operator. After each diagonal update sweep (iteration over the whole sequence) during the equilibration, trivial operators are appended to the end of the sequence such that

[

39]. This allows dynamically adjusting the sequence length

for the fixed-length scheme to ensure a sufficiently long sequence.

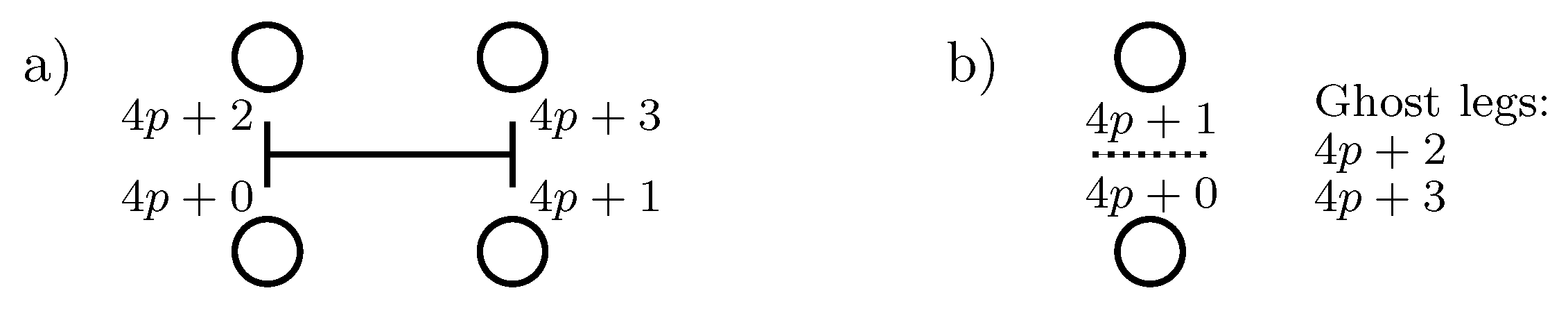

For an efficient implementation of the off-diagonal quantum cluster update, it is crucial to introduce the concept of operator legs. An operator at position

p in the sequence has legs with the numbers

. For an Ising operator, these legs are associated with two legs per site, one upper and one lower leg (see

Figure 15a).

For a constant or field operator, only two of the four legs are real vertex legs since these operators act only on a single site (see

Figure 15b). The remaining two legs are called ghost legs and are considered solely due to algorithmic reasons as it is numerically beneficial to let every operator have the same number of legs. This allows calculating the propagation index

p of an operator from the leg numbers using integer division with

. Trivial operators can be described with four ghost legs or can be ignored entirely in the sequence for the off-diagonal update.

For the chosen representation of the Ising model within the SSE, one can subdivide the configuration into disjoint clusters that extend in space, as well as in imaginary time with Ising operators acting as bridges in real space and with constant and field operators acting as delimiters in imaginary time. These clusters of spins can be flipped by replacing the delimiting constant operators with field operators and vice versa without changing the weight . If the cluster winds around the boundary in imaginary time, the respective spins in state have to be updated as well. In order to flip half of the configuration on average and obtain a good mixing, all clusters are constructed and the probability to flip a specific cluster is chosen to be for each cluster separately.

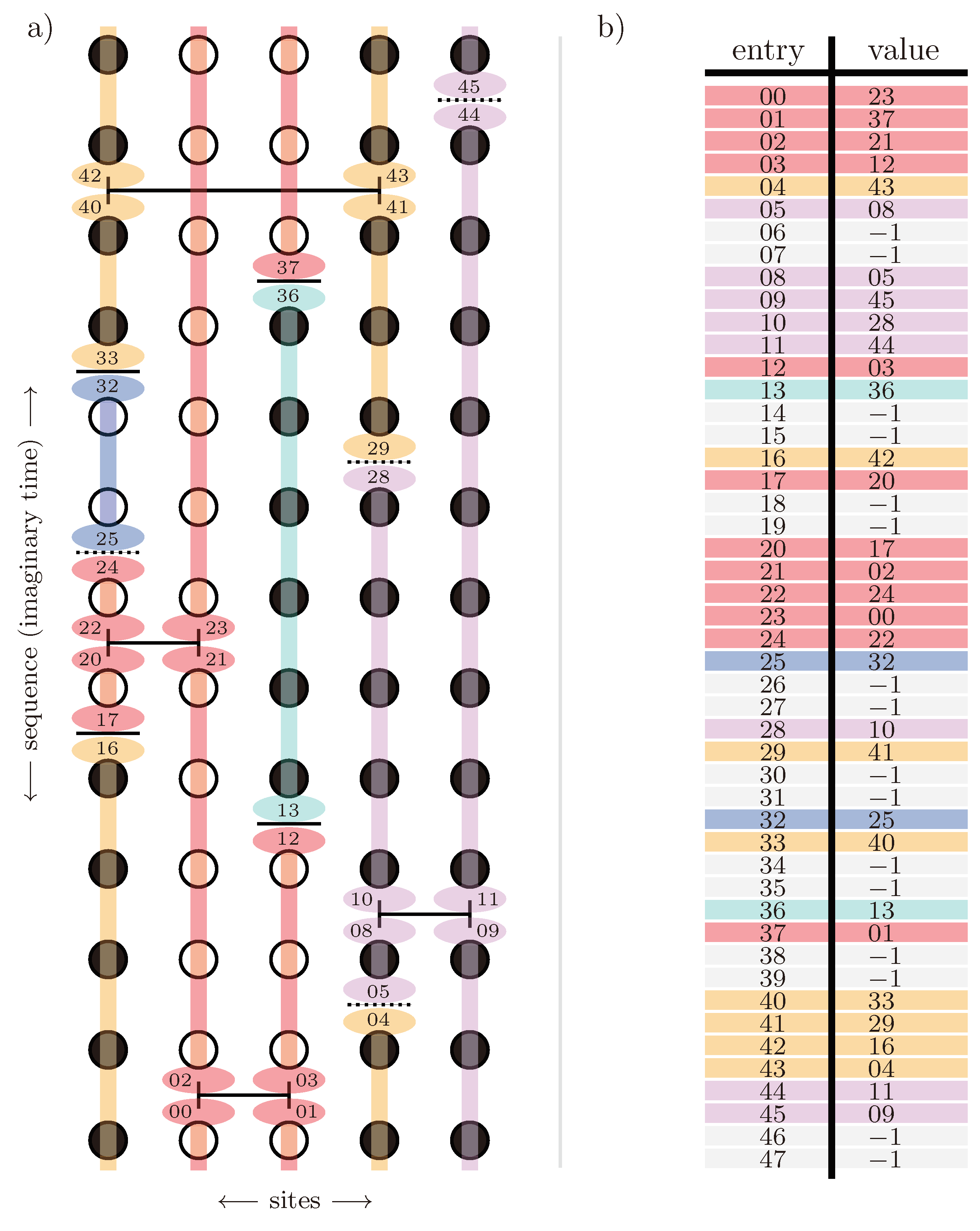

For the construction of the clusters, the propagated spin states along imaginary time are not needed. The entire problem can be dealt with in the language of vertices with legs. The relevant information for the cluster formation is which legs are connected. This information about the connection between legs of different operators is stored in a doubly linked list. The list is set up in the following way: At the index of a vertex leg

i, we store the index

j of the leg it is connected to, i.e.,

and vice versa

. This segmentation is illustrated for an exemplary configuration in

Figure 16 together with the doubly linked leg list for the depicted configuration. The doubly linked list can already be set up during the diagonal update when the sequence is traversed either way. An efficient algorithm to set up the data structure is described in Refs. [

213,

215].

In comparison to general off-diagonal loop updates [

215,

216,

217], the formation of clusters in the presented off-diagonal cluster update for the TFIM is fully deterministic. The whole configuration is divided into disjoint clusters, all of which will be built and flipped with probability

. It is beneficial to already decide whether a cluster is flipped or not before constructing the cluster so the constant and field operators and spin states can already be processed during the construction. A leg that is processed during the construction of a cluster is marked as visited in order to not process the same leg twice. This is also the reason why all the clusters have to be constructed even if they will not be flipped, as otherwise, the same cluster would get constructed starting from another leg later on. Further, we introduce a stack for the legs that were visited during the construction, but whose connections are yet to be processed.

The formation of each cluster starts by choosing a leg that has not yet been visited in the current off-diagonal update. At the beginning of the cluster formation, it is randomly determined if the cluster is flipped or not. If the leg corresponds to an Ising vertex, the cluster branches out to all four legs of the vertex. This means that all four legs of the operator are put on the stack. If the leg corresponds to a constant or field operator, the type of operator is exchanged if the cluster is flipped and only this primal leg is put on the stack. Next, the following logic is repeated until the stack is empty. We pop a leg l from the stack. If the leg has been visited already, we continue with a new leg from the stack. Else, we determine the new leg using the doubly linked list. We mark both legs l and as visited. If one passes by the periodic boundary in imaginary time while going from leg l to and the cluster will be flipped, the corresponding site belonging to the legs in the state has to be flipped. If belongs to an Ising operator, we add all legs of the Ising operator that have not been visited yet to the stack. If belongs to a constant or field operator, we exchange the operator if the cluster is said to be flipped. This procedure is repeated until the stack is empty. After that, one proceeds with the next cluster starting from a leg that has not yet been visited in the current cluster update. If no such leg is left, the cluster update is finished.

In addition to this cluster update, spins that have no operators acting on them in the sequence can be flipped with a probability of (thermal spin flip).

In summary, the off-diagonal update exchanges field and constant operators with each other and changes the state . Combining the diagonal update with the off-diagonal cluster update samples the entire configuration space.

5.2. Algorithm for Unfrustrated Heisenberg Models

In this section, we describe the algorithm to sample arbitrary unfrustrated spin-1/2 Heisenberg models with the SSE framework [

213]. By unfrustrated Heisenberg models, we mean Hamiltonians:

written as a sum over three-component interactions between two sites

and

connected by bond

b, where each bond is either ferromagnetic (F) with

or antiferromagnetic (AF) with

, with the property that there is no loop of lattice sites connected by bonds that contains an odd number of antiferromagnetic bonds, as this would lead to frustration. The spin operators are a compact notation for a three-component vector of spin operators

. The coupling strengths

can have a priori arbitrary amplitudes. It is crucial to look at unfrustrated Heisenberg models in order to define non-negative weights for configurations as the off-diagonal components of the antiferromagnetic operators have a negative matrix element. In an unfrustrated model, these matrix elements always occur an even number of times in the operator sequence of any valid configuration, which makes it possible to construct a non-negative SSE weight [

213].

For the SSE algorithm, the

-eigenbasis

is chosen, but the

- or

-eigenbasis would work the same way. The Hamiltonian is decomposed into

using the following operators:

The diagonal operators

and

in Equations (

246) and (

248) are defined in the same fashion as for the TFIM up to the factor of

due to the usage of spin operators

instead of Pauli matrices

with

. The contribution to the weight of a sequence of these operators is either

if the bond fulfils the (anti)ferromagnetic condition and zero otherwise. Although the expressions for the ferromagnetic and antiferromagnetic bonds look the same, we distinguish between these two bonds to highlight that these objects behave differently within the off-diagonal update.

As

for antiferromagnetic bonds, the off-diagonal operators

do not fulfil the non-negativity of matrix elements (see Equation (

215)) in the computational basis. Therefore, they must always appear in an even number of times in the operator sequence to avoid the sign problem. For the ferromagnetic bonds, this restriction is not necessary.

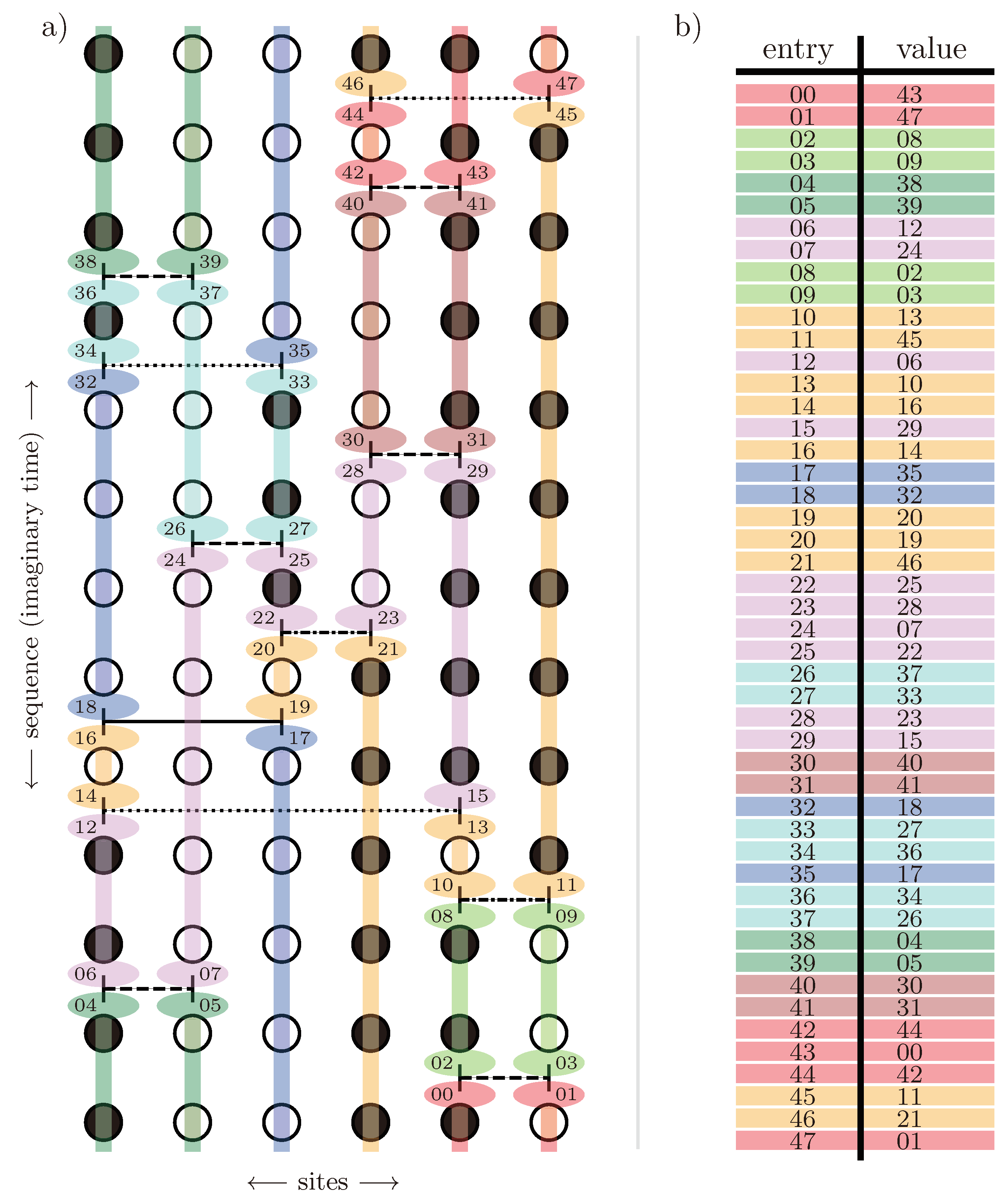

Analogous to the TFIM, the operator sequence additionally contains trivial operators

, which are not part of the Hamiltonian, but are used to pad the sequence to a fixed length

. In contrast to the TFIM, there is no need for further artificial operators like the constant field operators in the TFIM used to limit the cluster in the cluster update. In the case of the Heisenberg model, the non-local off-diagonal update is constructed in the form of loops instead of a cluster with several branches that need to be limited in imaginary time. An exemplary SSE configuration for a Heisenberg chain using the decomposition from above can be seen in

Figure 17.

Similar to the sampling of the TFIM in

Section 5.1, each step of the Markov chain sampling of configurations is achieved by performing a diagonal update followed by a non-local off-diagonal update. The diagonal update exchanges trivial operators with diagonal operators, while the off-diagonal update exchanges diagonal bond operators with the respective off-diagonal bond operators.

Due to the structure of the diagonal operators (see Equations (

246) and (

248)), similar to the TFIM, the diagonal update is performed similarly to the diagonal update of the TFIM described in

Section 5.1. Nonetheless, to make the description of the algorithm for unfrustrated Heisenberg models self-contained, we recapitulate the diagonal update and adapt it to the Heisenberg case.

The diagonal update exchanges trivial operators with diagonal operators in the sequence and vice versa. It will, therefore, not change the states

, including the state

. Starting from

at

, the sequence is again iterated over by

k. If the operator at the current position

k in the sequence is an off-diagonal operator operator, the state

is propagated by applying the off-diagonal operator and the iteration proceeds. If one encounters diagonal or trivial operators in the sequence, the Metropolis–Hastings algorithm (see

Section 3) is performed and the transition probability to an altered configuration is again split into a proposition probability

and an acceptance probability

.

Analogous to the algorithm for the TFIM, if a trivial operator is encountered, a non-trivial diagonal operator gets proposed with the probability:

taking into account the weight

of the proposed operator and the normalising constant

C:

The diagonal update for the Heisenberg model only differs from the one of the TFIM by the detailed probabilities

and the normalising constant

C. The factor of

in the bond weights in comparison to the Ising model comes from using spin operators in contrast to Pauli matrices in the Hamiltonian. This sampling according to the weight

can be performed in a constant-time complexity in the number of elements in the distribution by using the walker method of aliases [

214]. An introduction on how to set up a walker sampler from a distribution of discrete weights and how to draw from this distribution can be found in

Appendix C or in Ref. [

214]. Drawing from the discrete distribution of weights, an operator

gets proposed to be inserted. On the other side, if a non-trivial diagonal operator is encountered, it is always proposed to be replaced by a trivial operator:

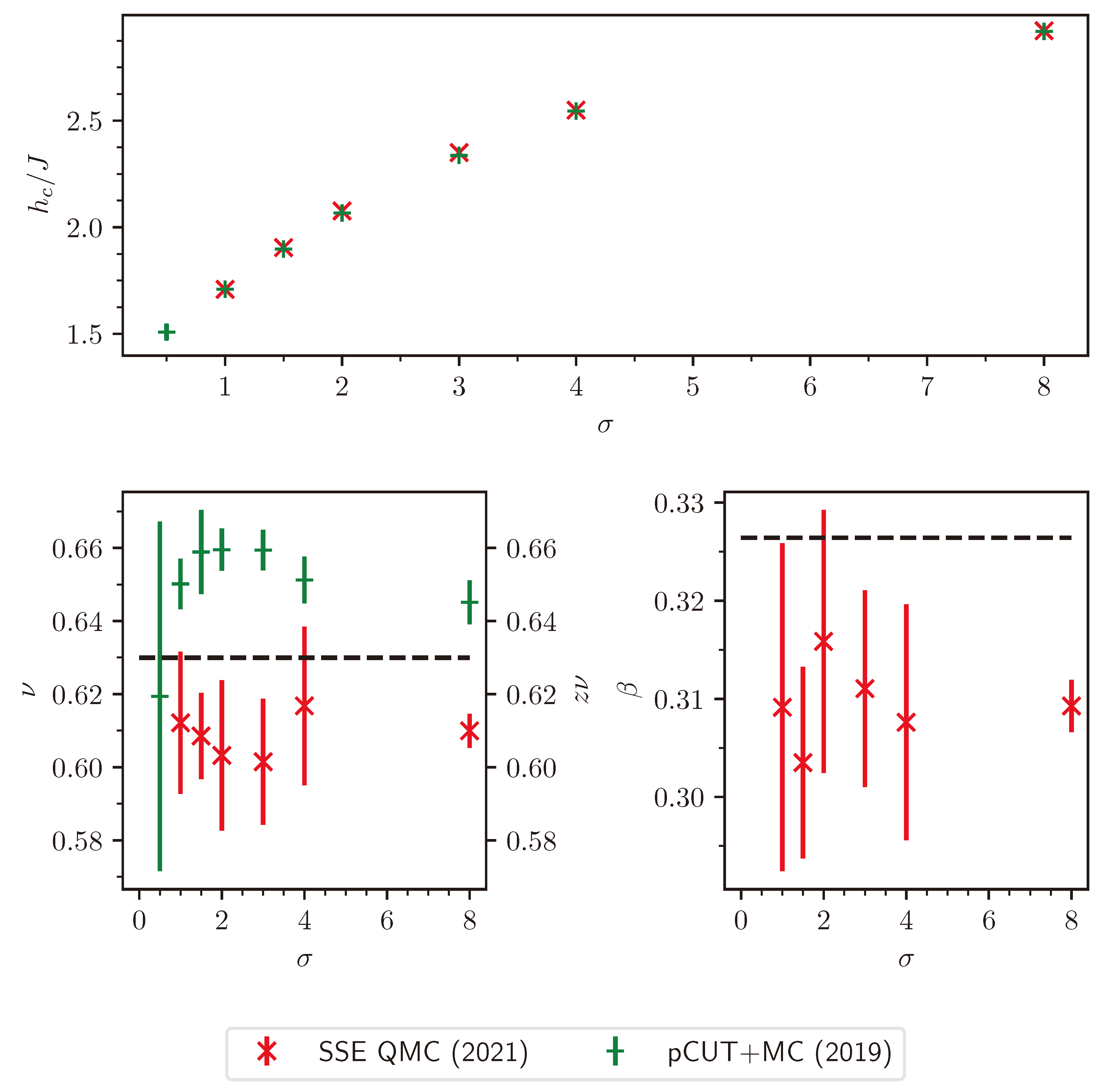

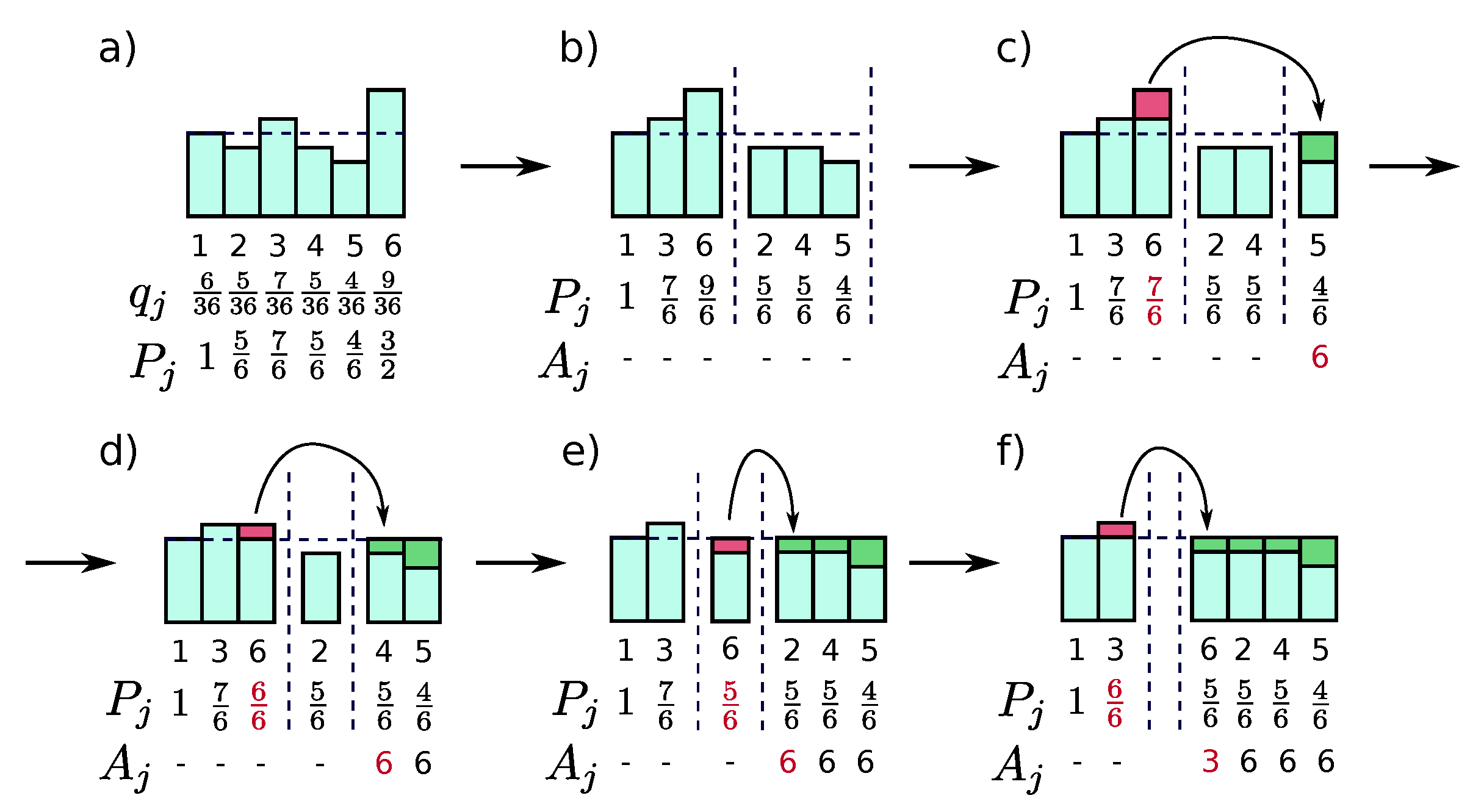

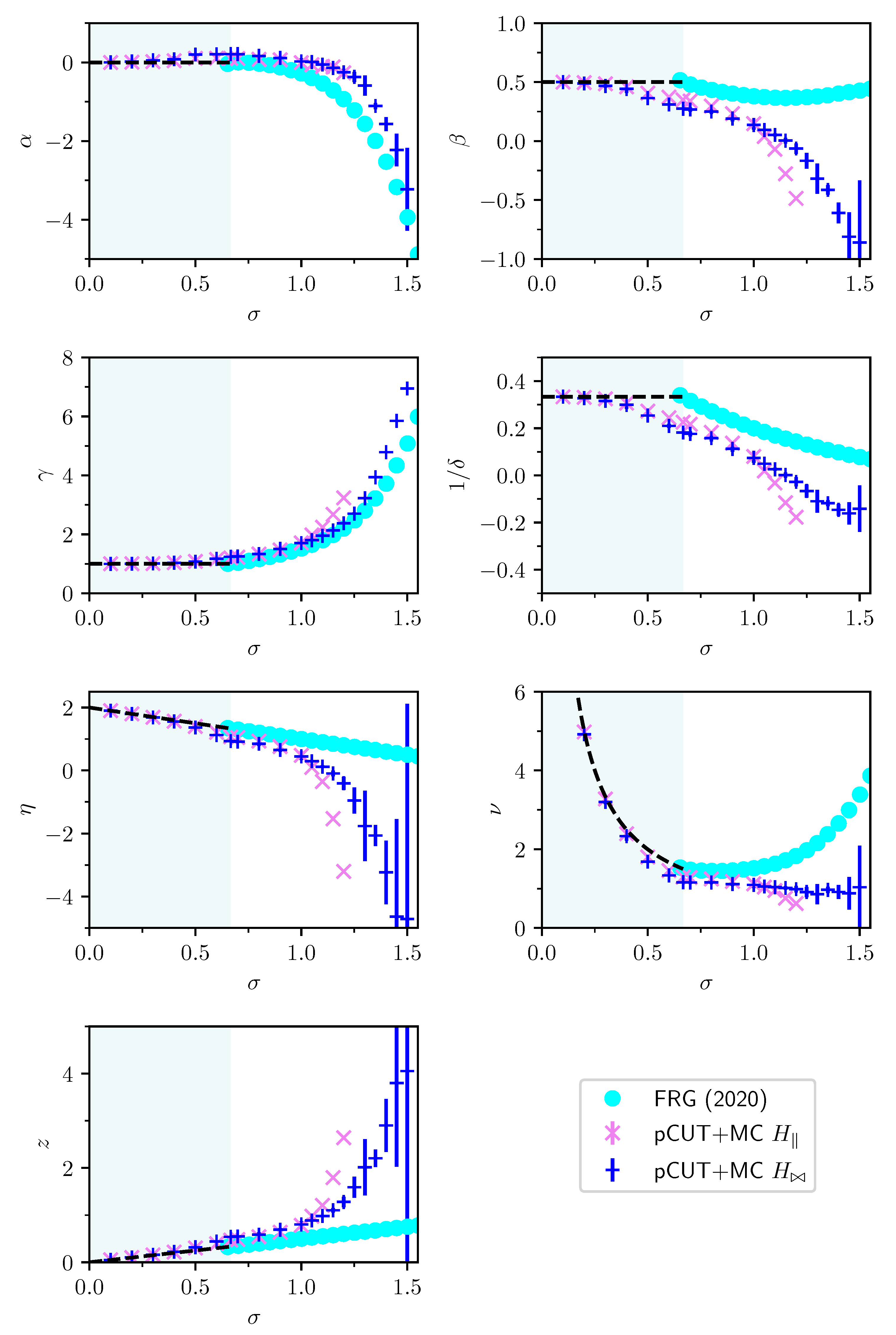

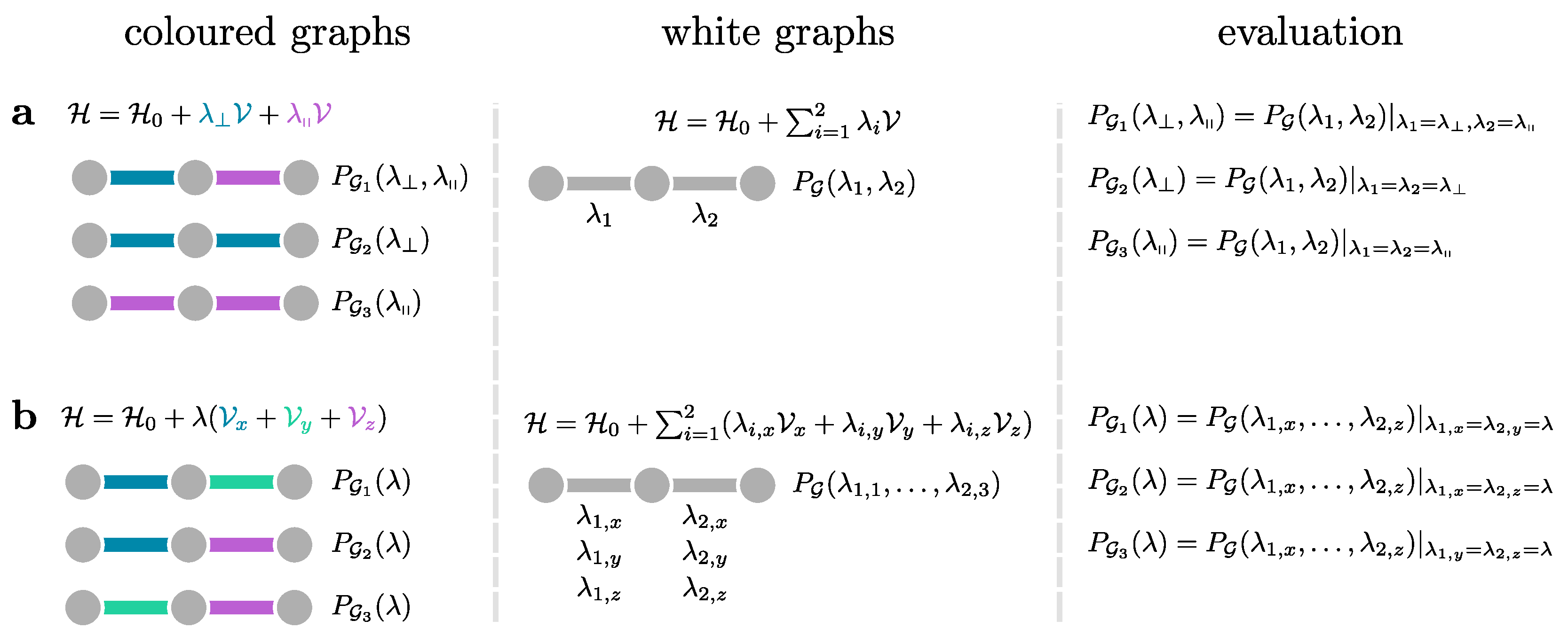

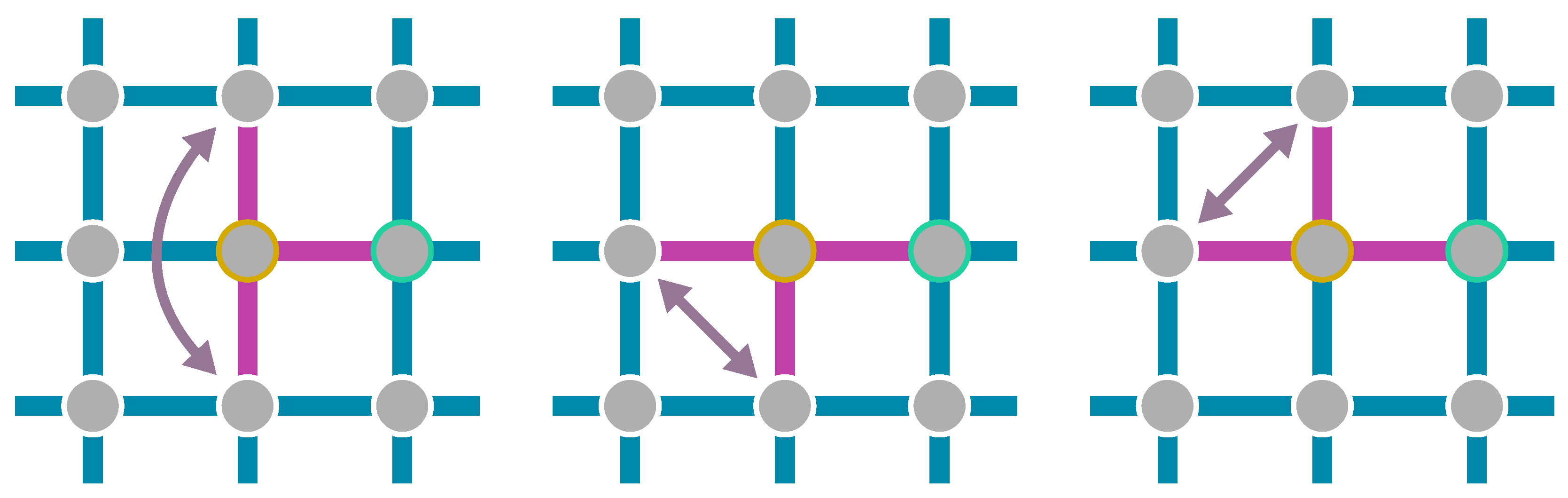

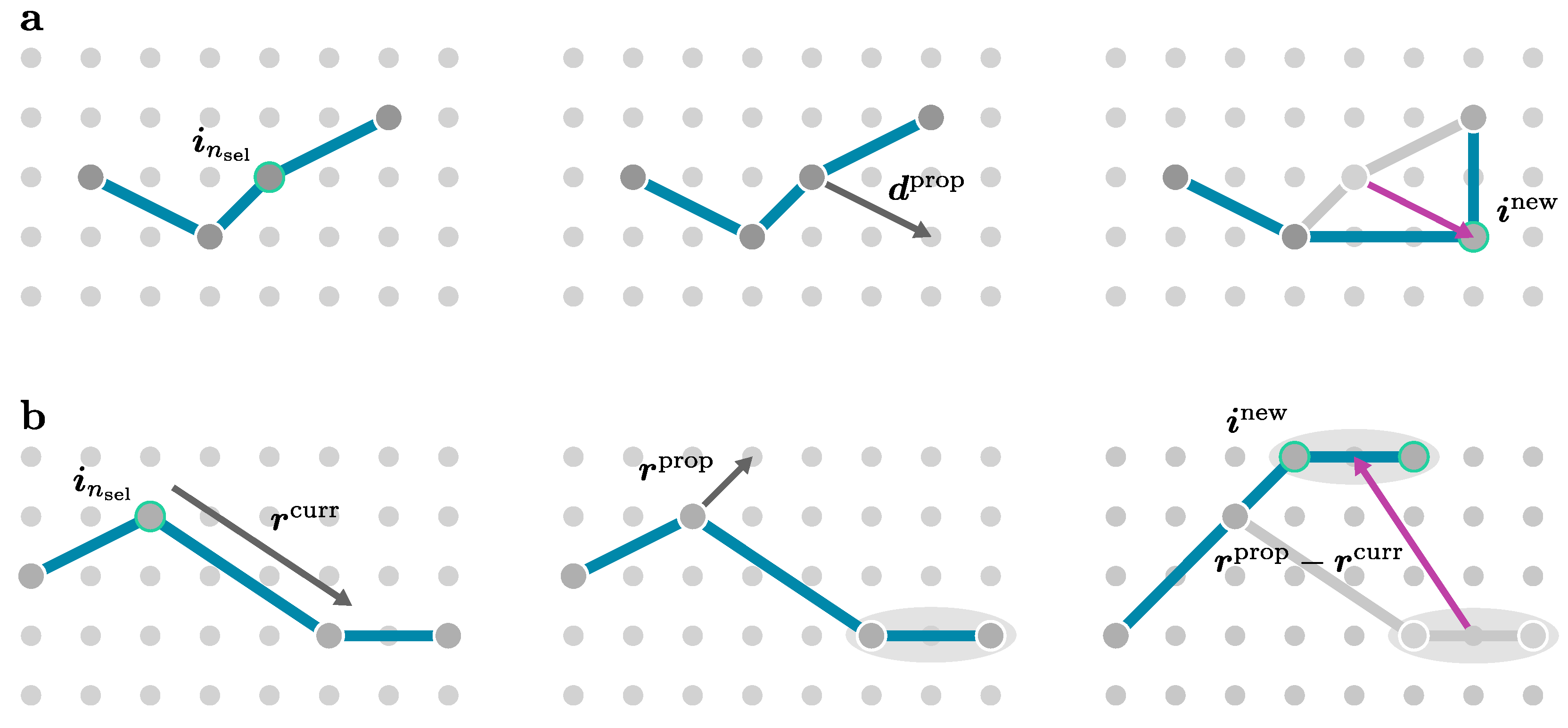

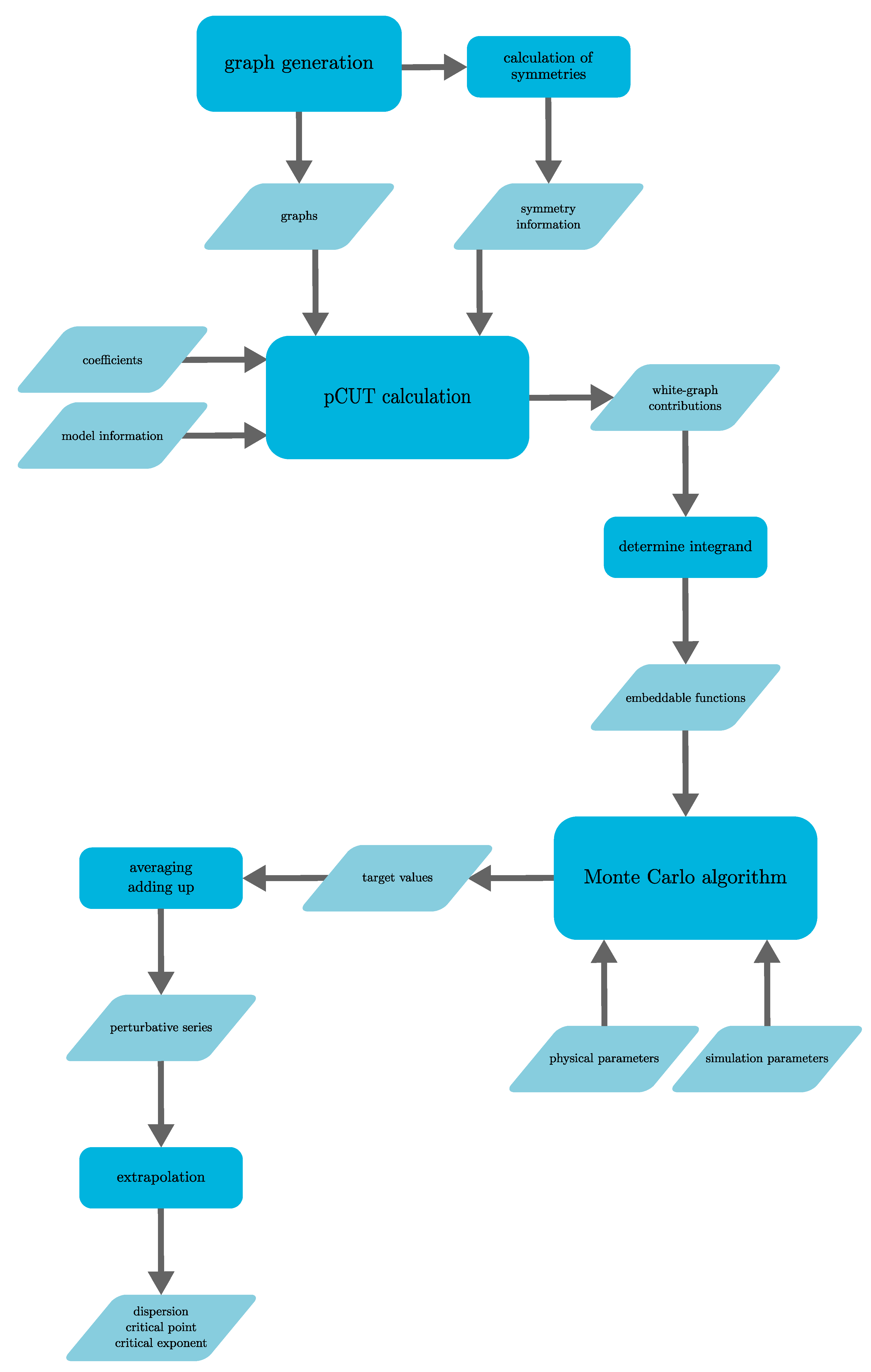

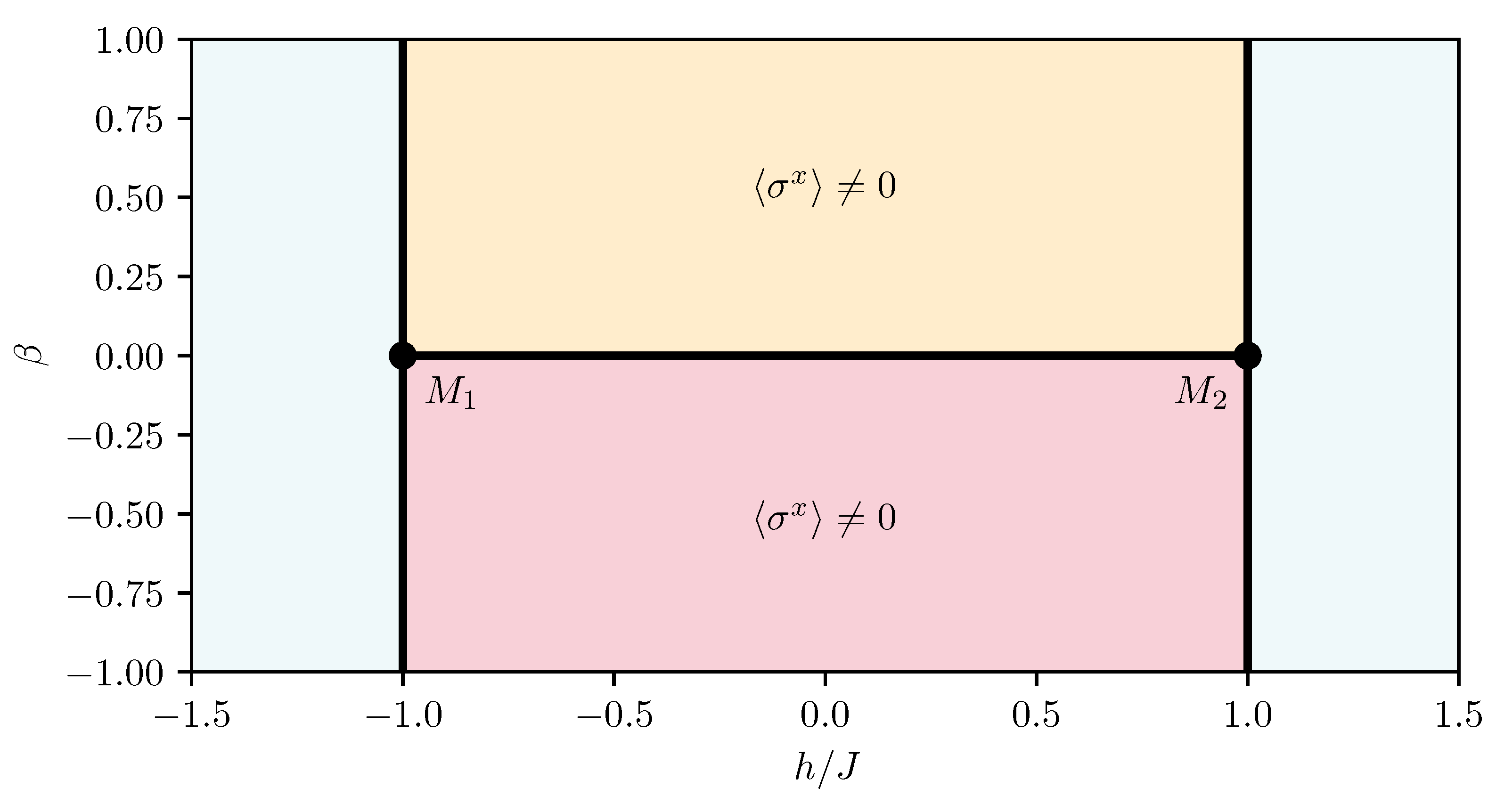

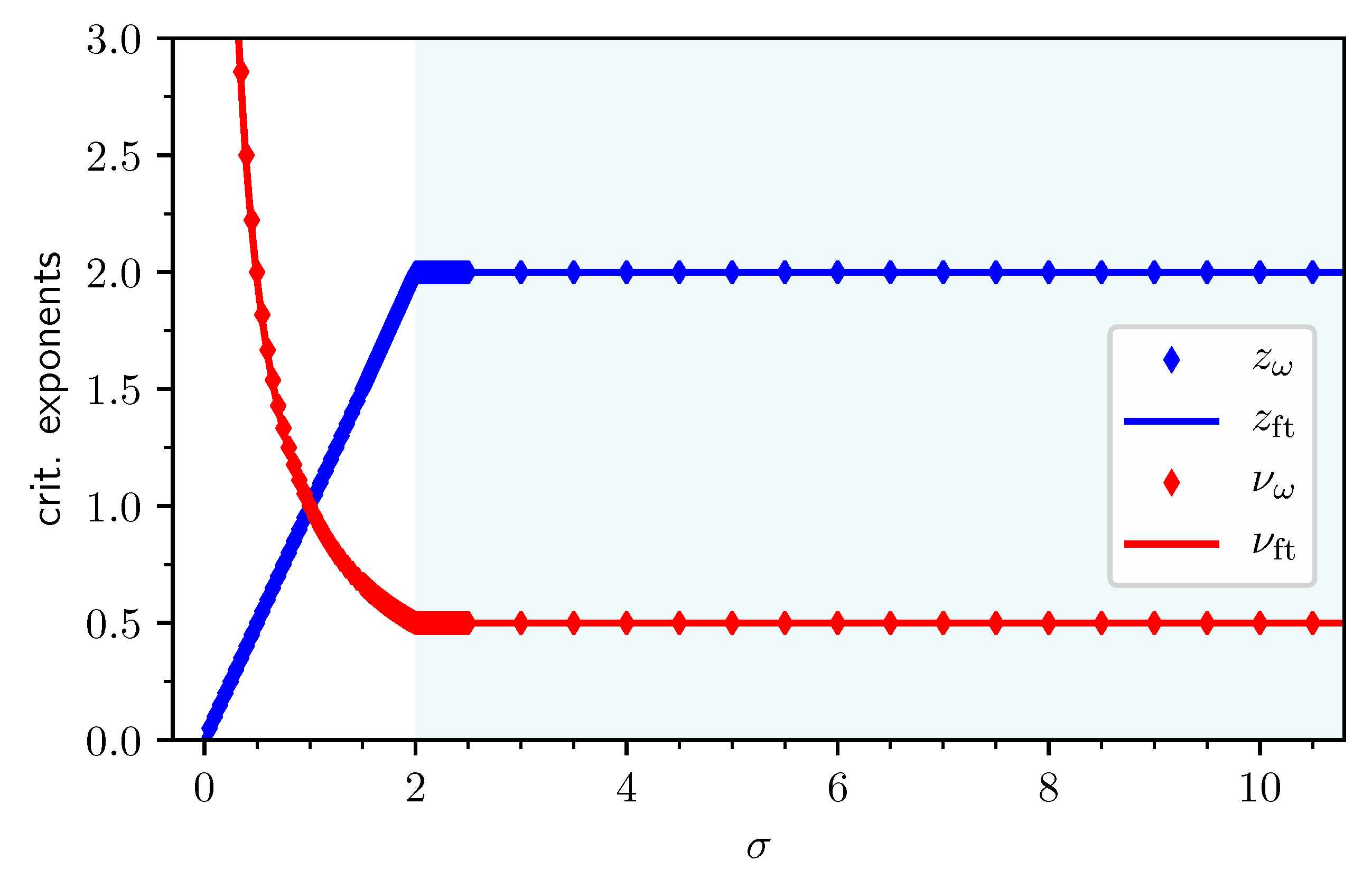

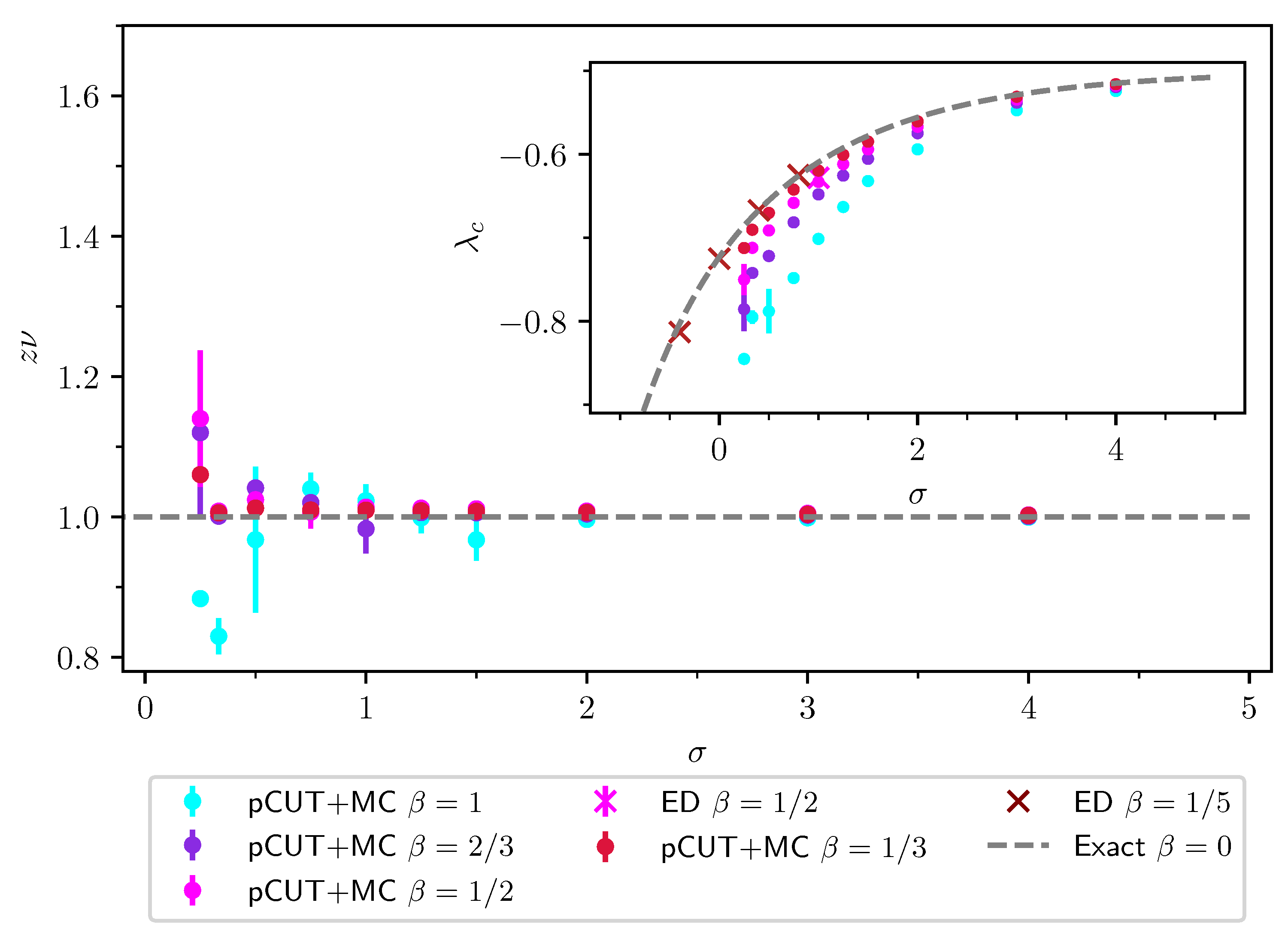

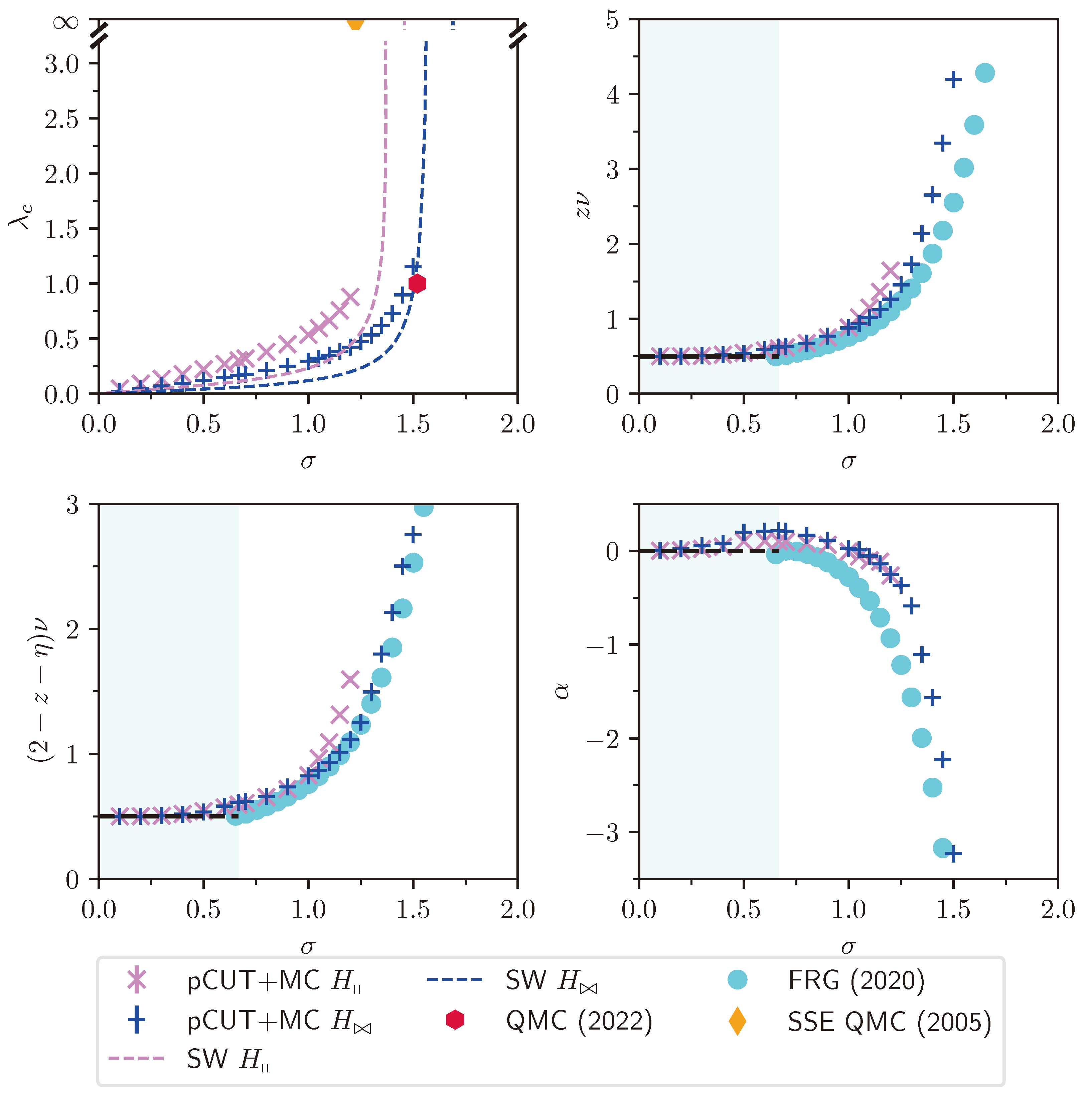

exactly as for the TFIM. The acceptance probabilities are then chosen according to the Metropolis–Hastings algorithm Equation (