Distributed Quantization for Partially Cooperating Sensors Using the Information Bottleneck Method

Abstract

1. Introduction

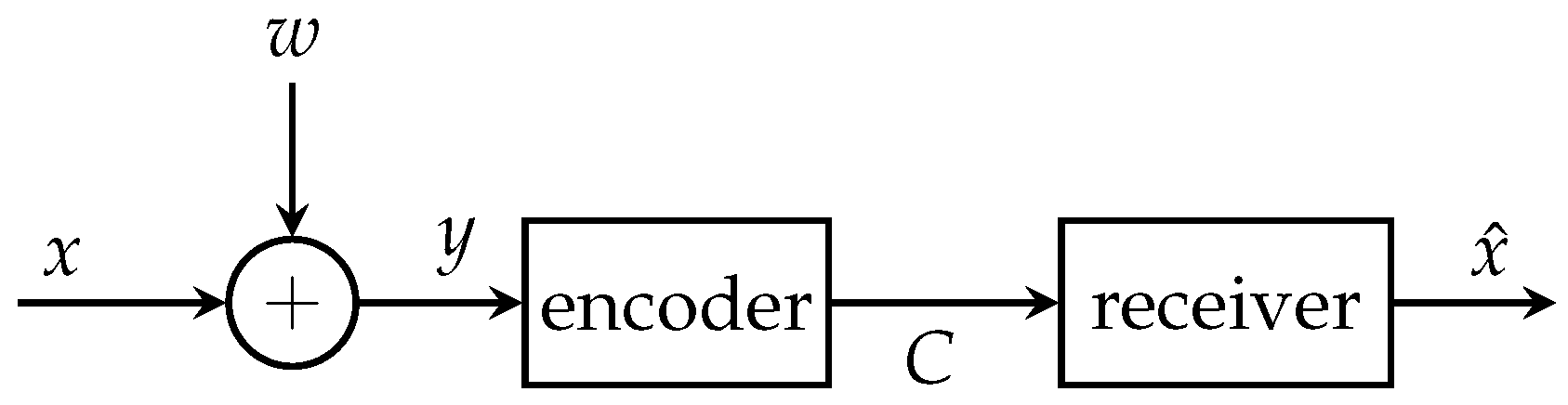

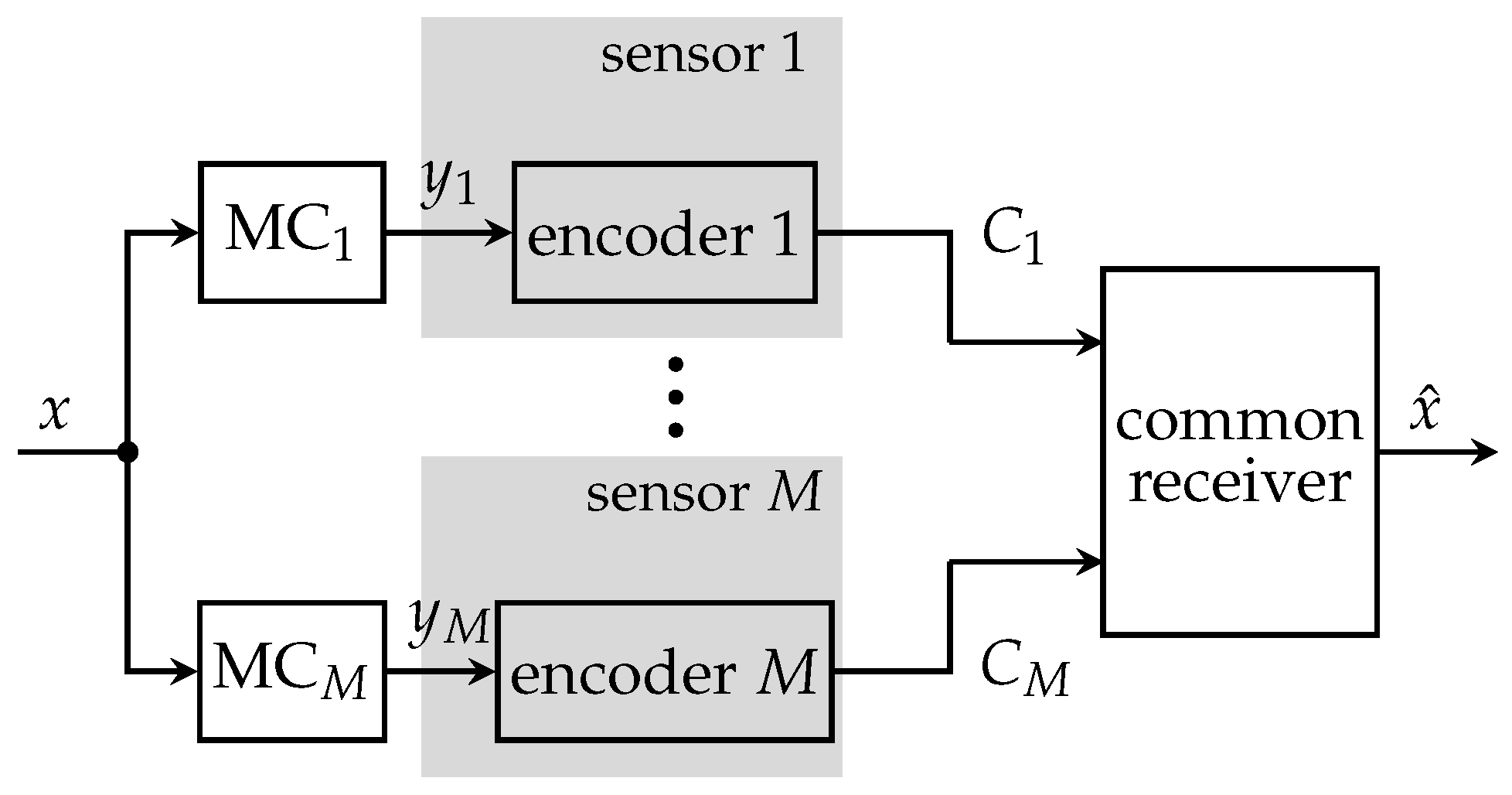

1.1. The CEO Problem

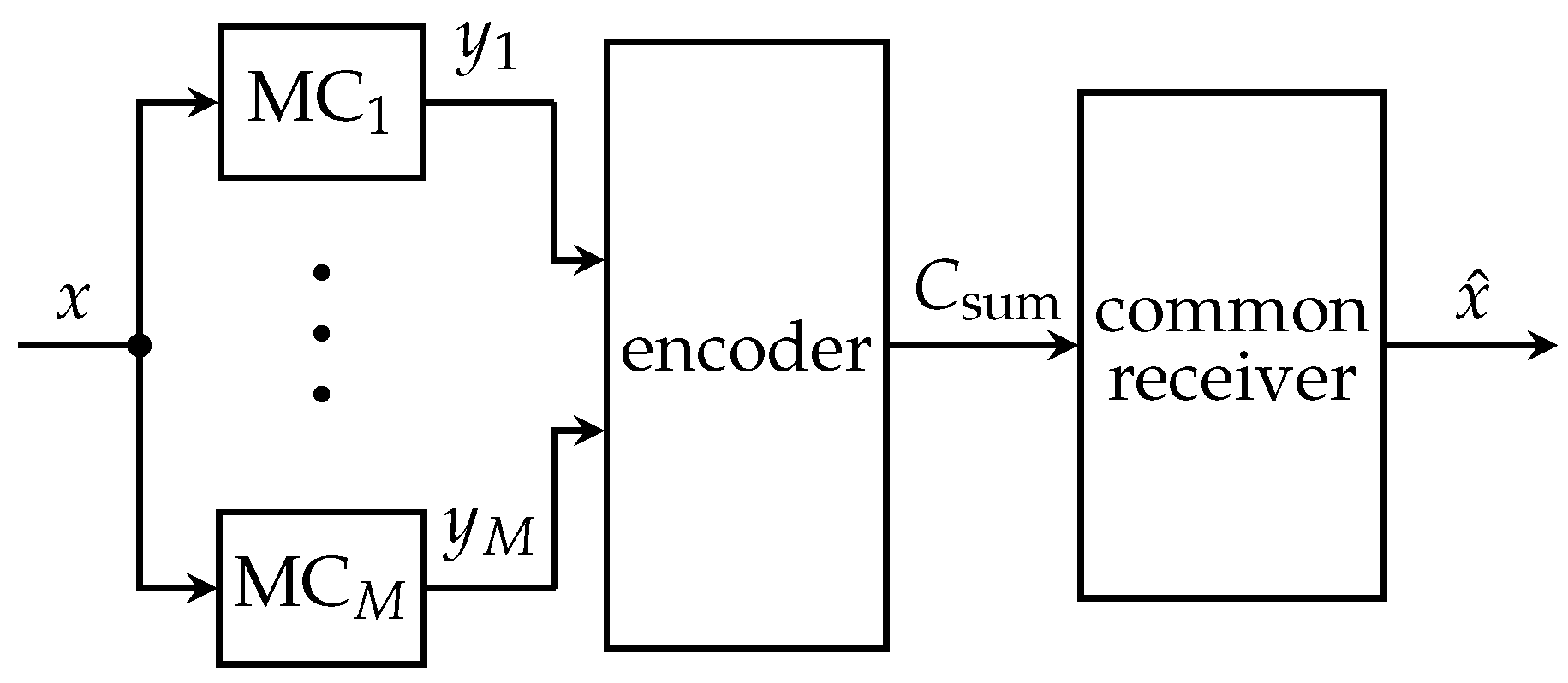

1.2. Partially Cooperating Sensors

1.3. Structure and Notation

2. The Information Bottleneck Principle

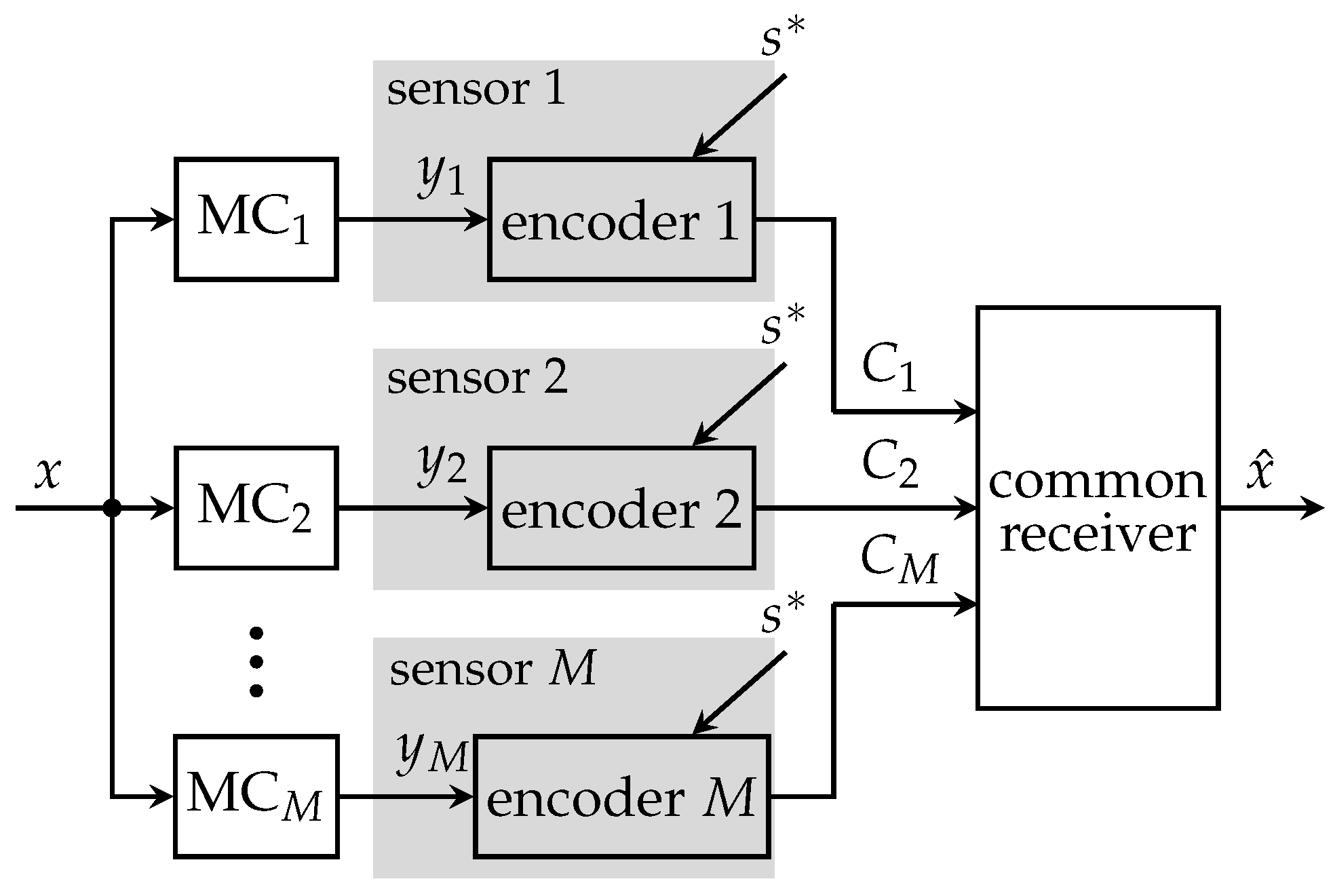

3. Non-Cooperative Distributed Sensing System

4. Fully Cooperative Distributed Sensing—A Centralized Quantization Approach

5. Partially Cooperative Distributed Sensing

5.1. Successive Broadcasting Protocol

5.1.1. Generation of Broadcast Side-Information

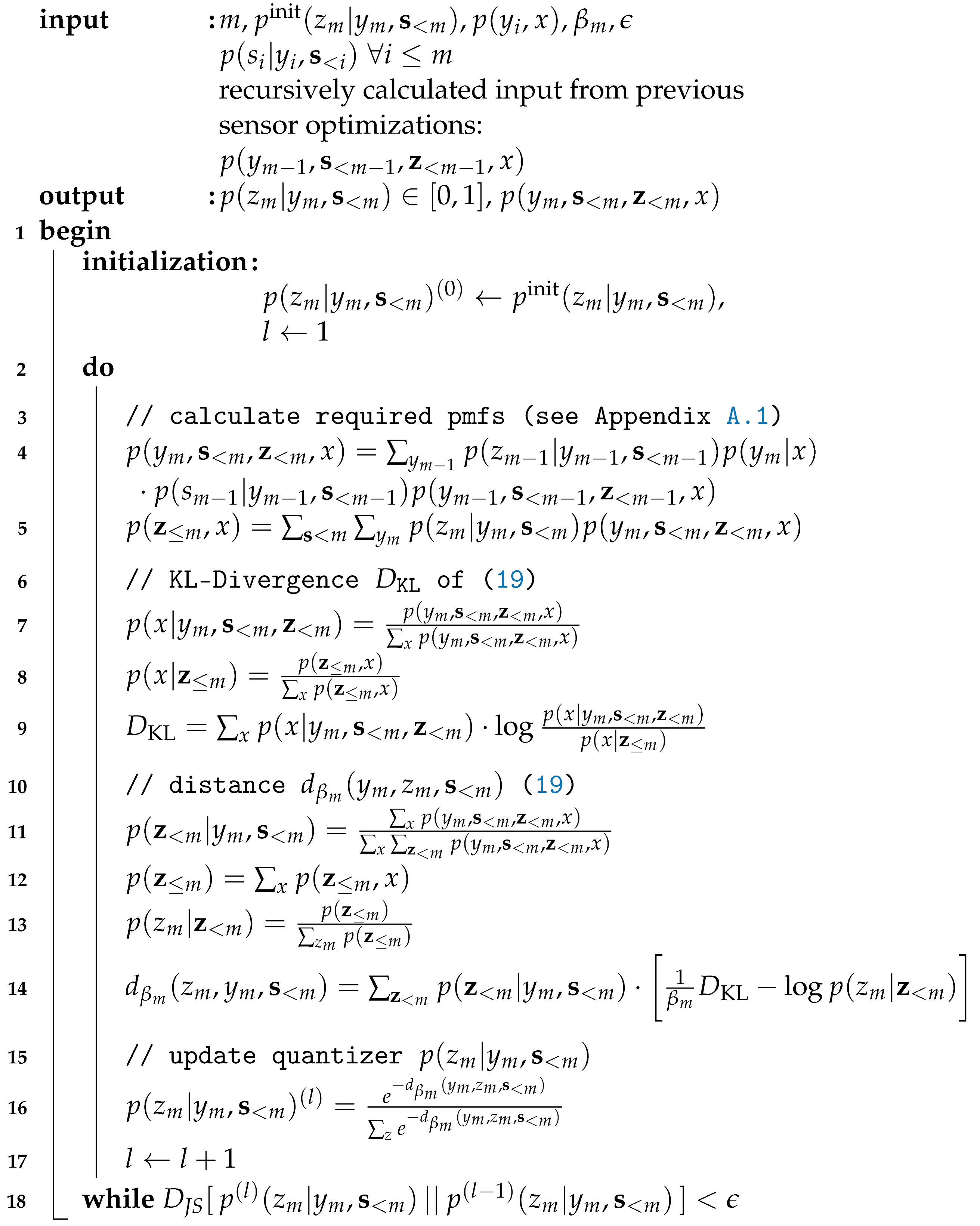

Algorithmic pcCEO Solution for the Successive Broadcasting Protocol

Algorithm 1: Extended Blahut–Arimoto algorithm for broadcast cooperating sensors.

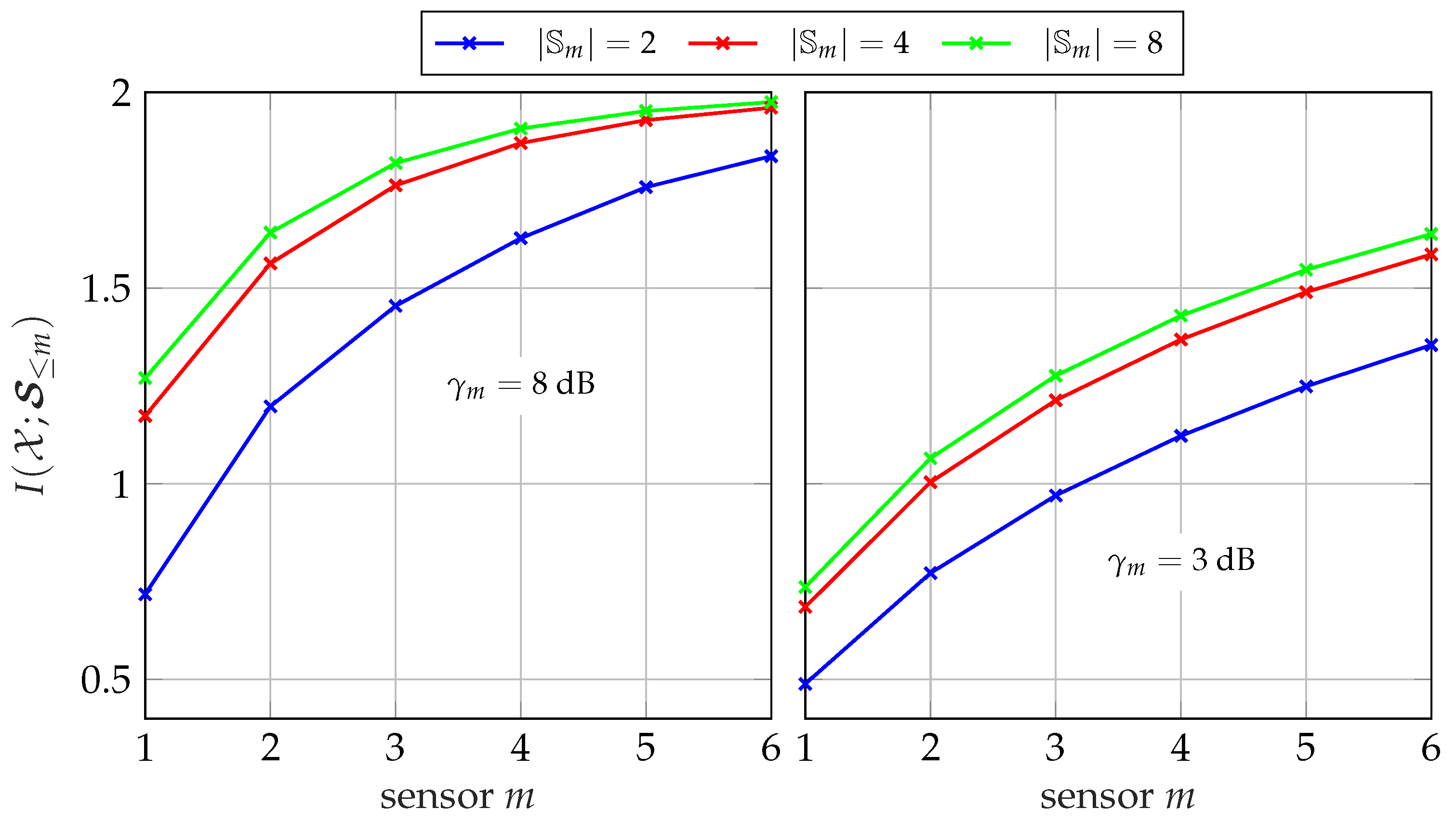

5.1.2. Evolution of Instantaneous Side-Information

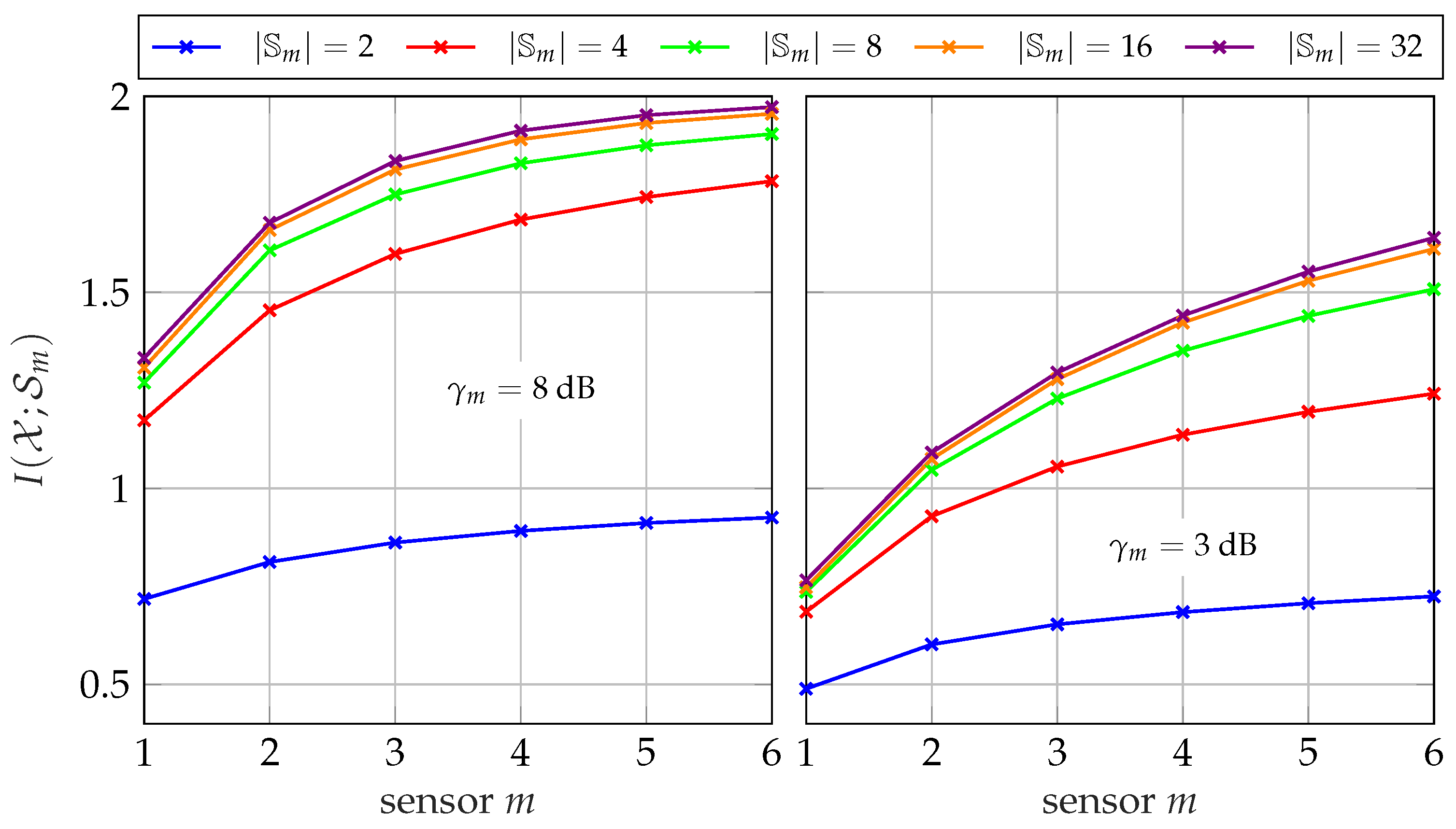

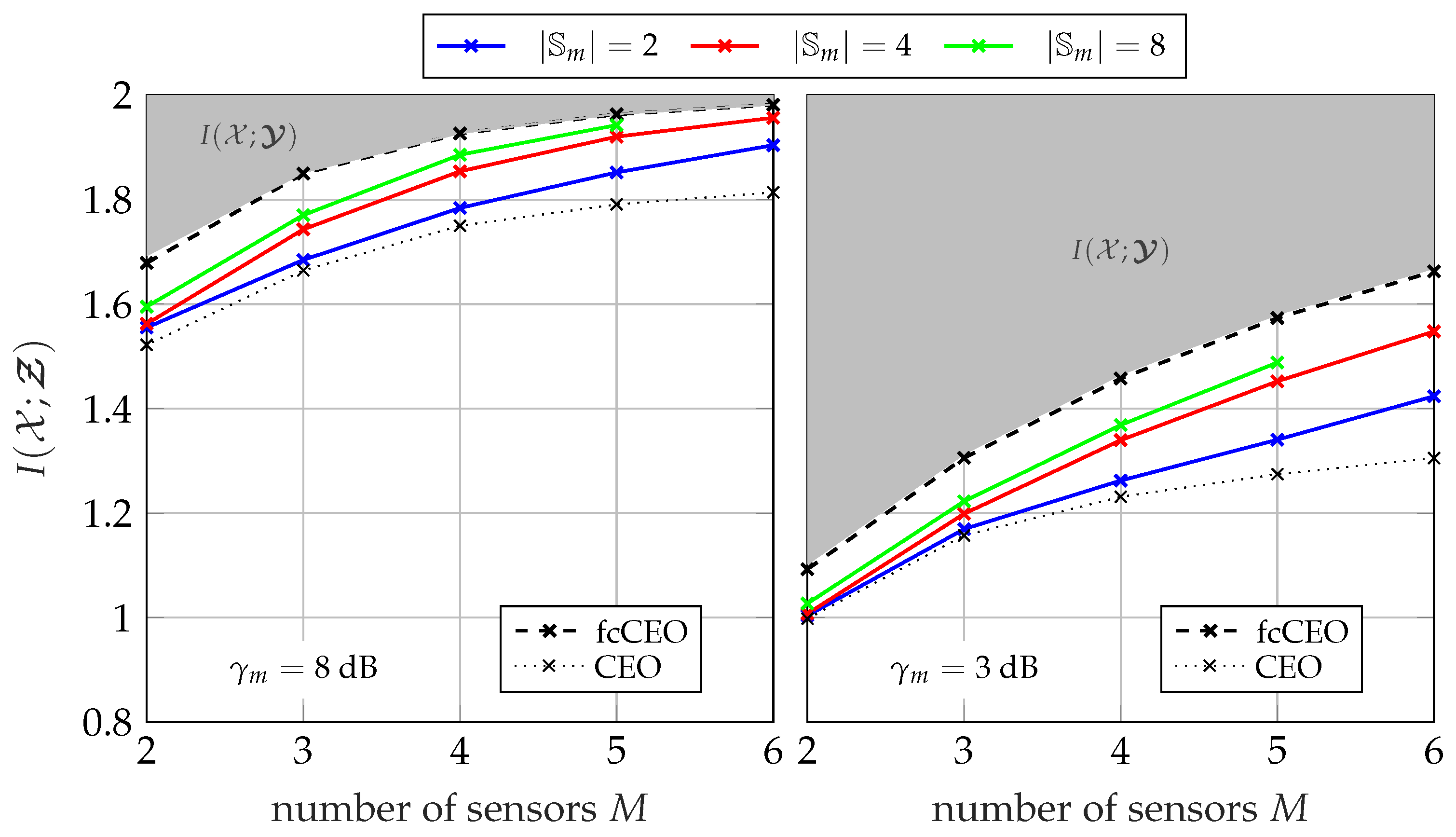

5.1.3. Performance for Different Network Sizes

5.2. Successive Point-to-Point Protocol

5.2.1. Generation of Point-to-Point Side-Information

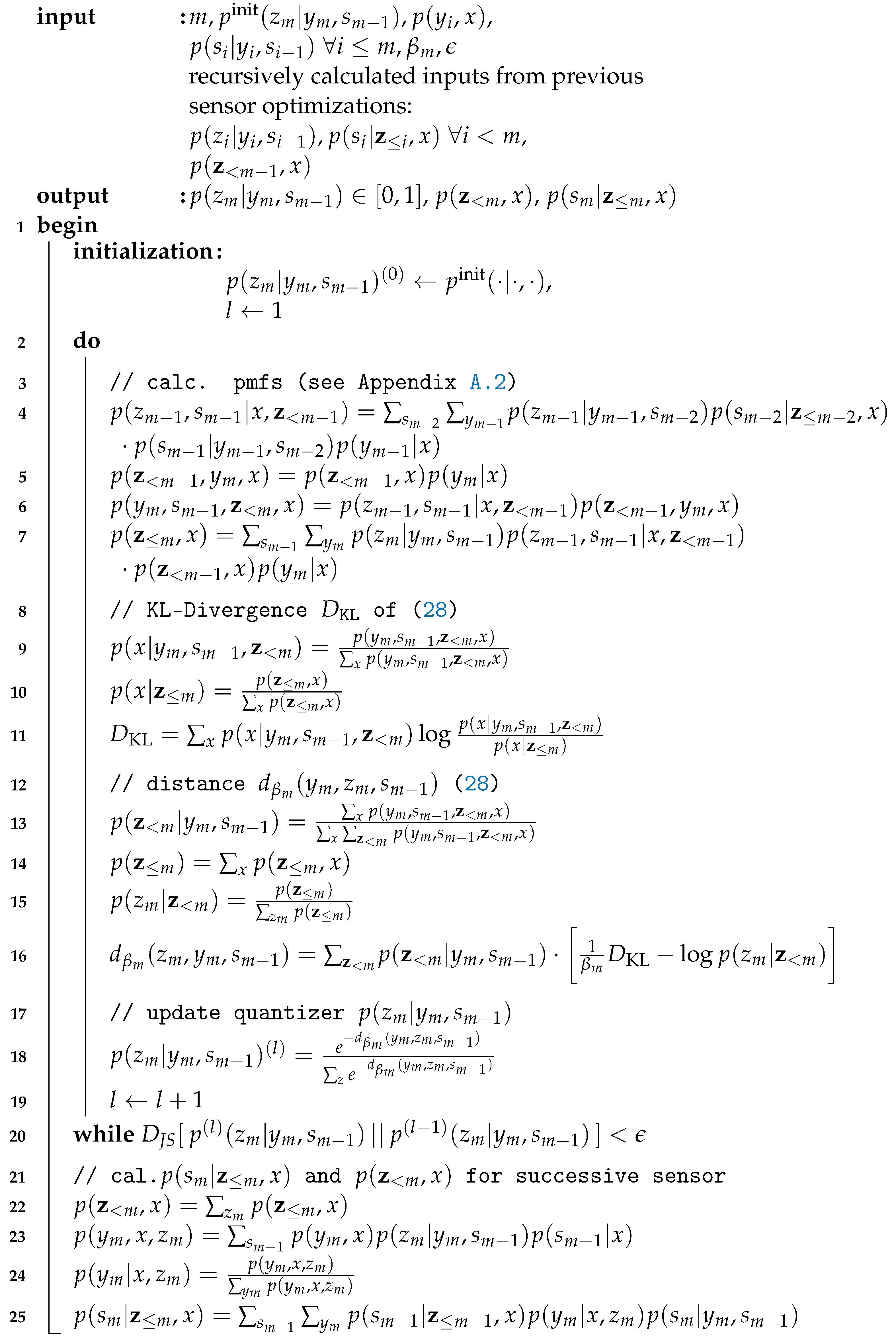

5.2.2. Algorithmic pcCEO Solution Applying the Successive Point-to-Point Protocol

5.2.3. Evolution of Instantaneous Side-Information

Algorithm 2: Extended Blahut–Arimoto algorithm for the successive point-to-point protocol.

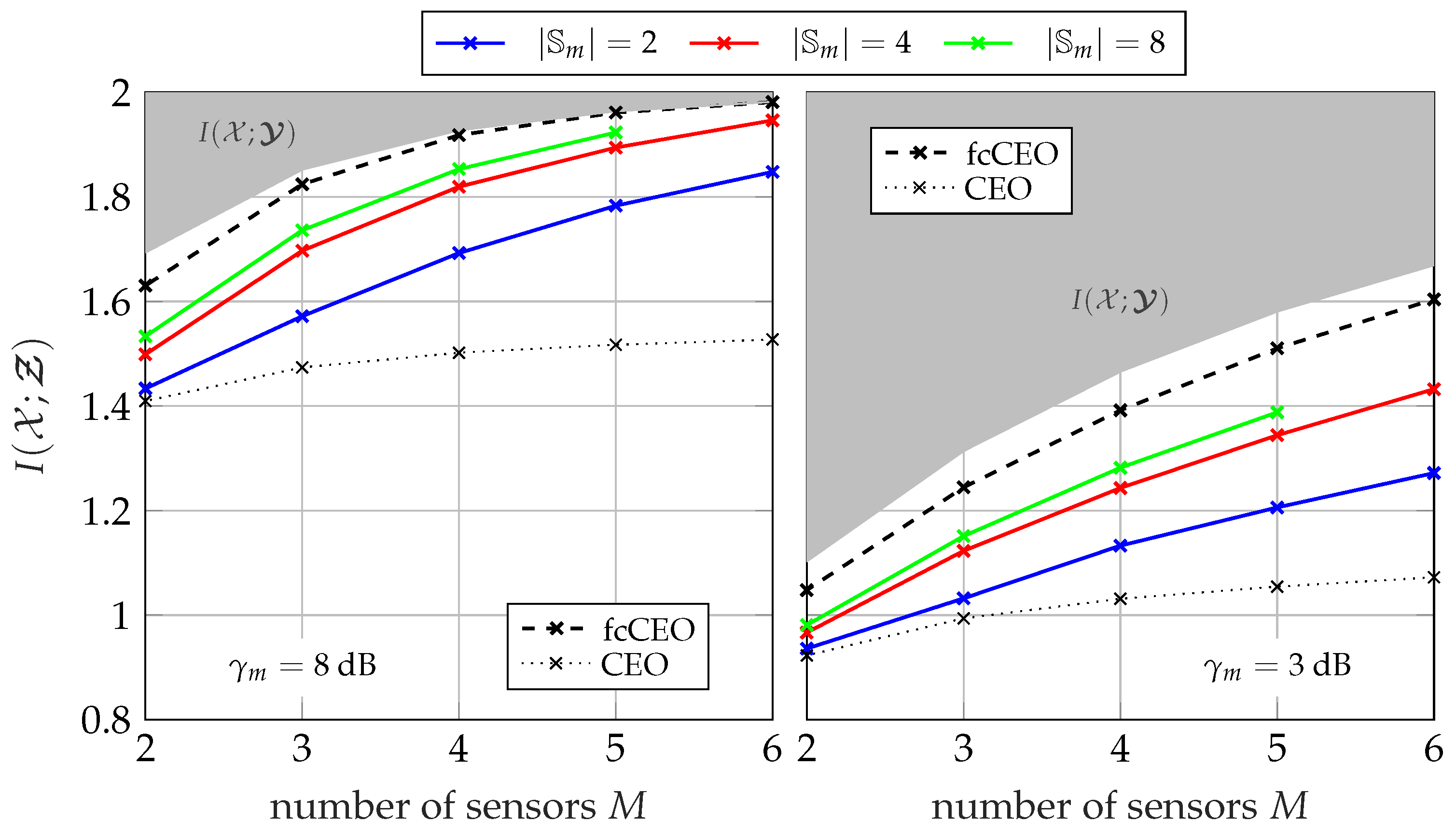

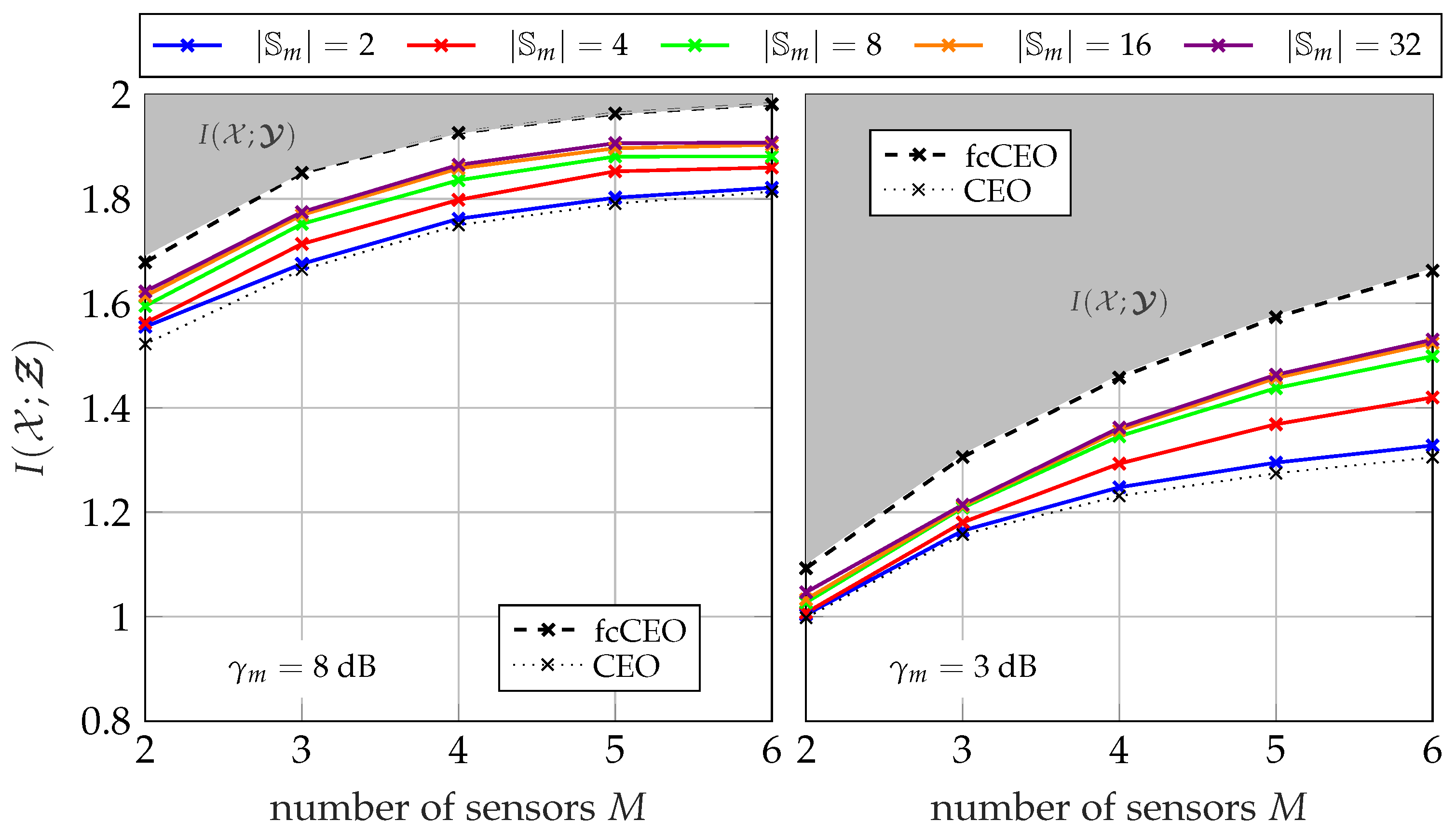

5.2.4. Performance for Different Network Sizes

5.2.5. Performance for Different Sum-Rates

5.2.6. Asymmetric Scenarios

5.3. Two-Phase Transmission Protocol with Artificial Side-Information

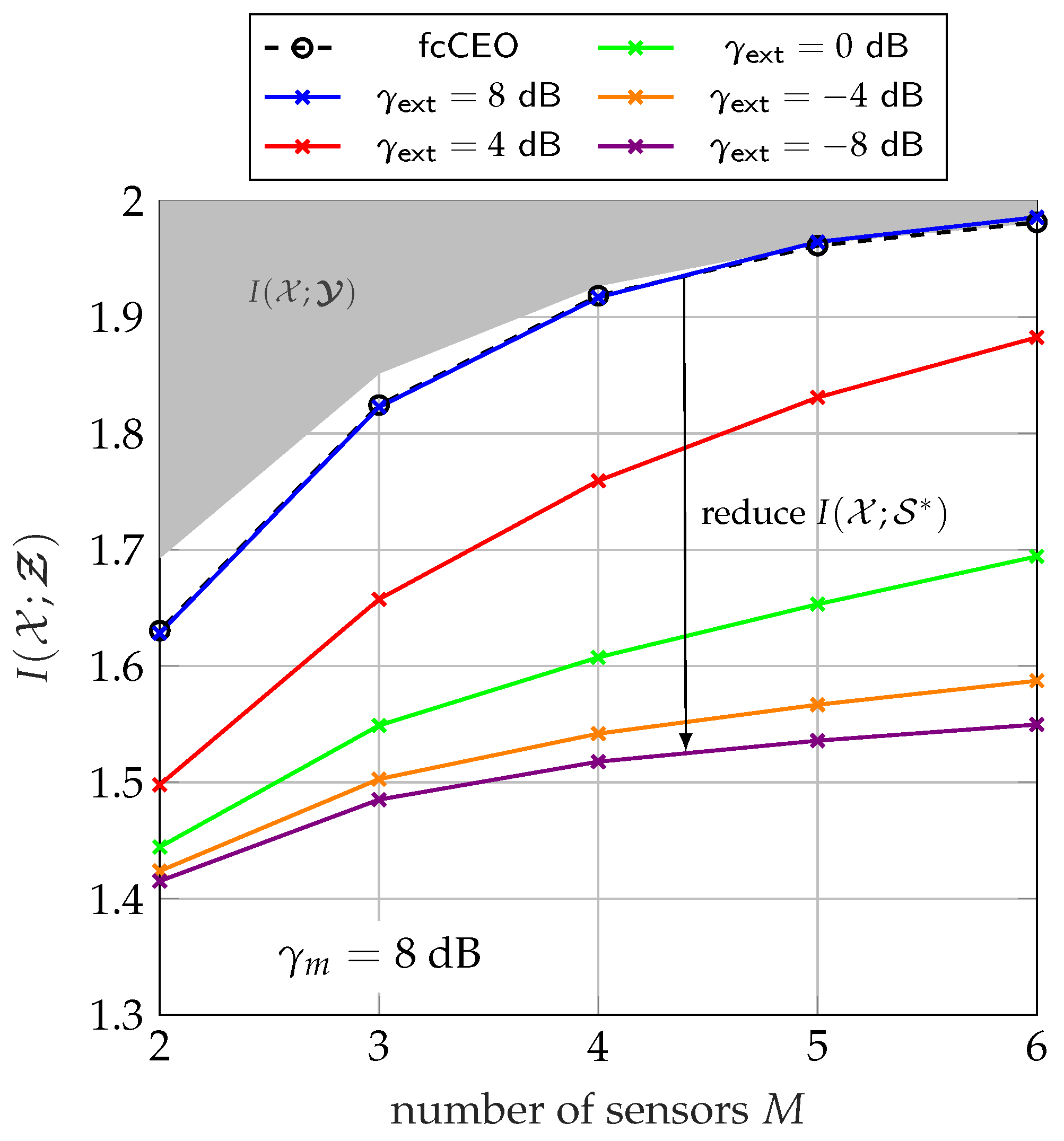

5.3.1. Performance of Two-Phase Transmission

5.3.2. Influence of Extrinsic Information

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A

Appendix A.1. Optimization for Broadcasting Side-Information

Appendix A.1.1. Derivative of I(; Ƶm|Ƶ<m)

Appendix A.1.2. Derivative of I(m, 𝓢<m; Ƶm|Ƶ<m)

Appendix A.1.3. Fusion of Derived Parts

Appendix A.1.4. Calculating Required pmfs

Appendix A.2. Optimization for Point-to-Point Exchange of Side-Information

Appendix A.2.1. Derivative of I(; Ƶm|Ƶ<m)

Appendix A.2.2. Derivative of I(m, m−1; Ƶm|Ƶ<m)

Appendix A.2.3. Fusion of Derived Parts

Appendix A.2.4. Calculating Required pmfs



© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Steiner, S.; Aminu, A.D.; Kuehn, V. Distributed Quantization for Partially Cooperating Sensors Using the Information Bottleneck Method. Entropy 2022, 24, 438. https://doi.org/10.3390/e24040438
