3.1. The Nonparametric PC Algorithm in High Dimensions

To obtain a measure of conditional independence between X and Y given Z that is free of Z, we define

Clearly, if and only if . Consider a plug-in estimate of as

We reject vs at level if , for a suitably chosen threshold . In Appendix A, we present a local bootstrap procedure for choosing in practice, which is also used in our numerical studies. Henceforth, we will often denote and simply by and respectively for notational simplicity, whenever there is no confusion.

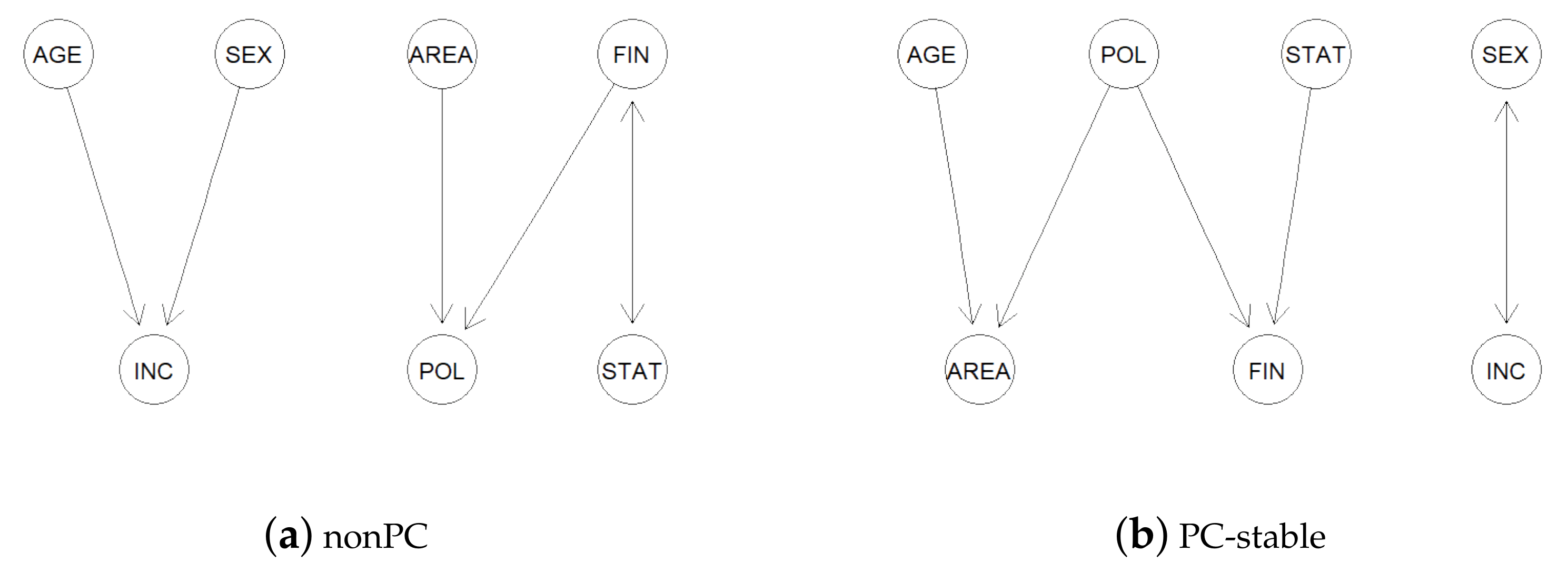

In view of the complete characterization of conditional independence by , we propose testing for conditional independence relations nonparametrically in the sample version of the PC-stable algorithm based on , rather than partial correlations. We coin the resulting algorithm the ‘nonPC’ algorithm, to emphasize that it is a nonparametric generalization of parametric PC-stable algorithms.

The oracle version of the first step of nonPC, the skeleton estimation step, is exactly the same as that of the PC-stable algorithm (Algorithm A1 in Appendix A). The second step, which extends the skeleton estimated in the first step to a CPDAG (Algorithm A2 in Appendix A), consists of purely deterministic rules for edge orientations, and is also exactly the same for nonPC and PC-stable. The only difference lies in the implementation of the tests for conditional independence relationships in the sample versions of the first step. Specifically, we replace all the conditional independence queries in the first step by tests based on . At some pre-specified significance level , we infer that when , where and , . When , and . The critical value in this case is obtained by a bootstrap procedure (see, e.g., Section 4 in [22] with ).
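The skeleton estimation step described above can be sketched in code. The following is a minimal illustration of a PC-stable style adjacency search with a pluggable conditional independence test, not a transcription of Algorithm A1. The names `pc_stable_skeleton`, `ci_test` and `oracle` are hypothetical. In the sample version of nonPC, `ci_test` would compare the plug-in estimate against its bootstrap critical value; here an oracle for the chain X → Y → Z stands in for it.

```python
from itertools import combinations


def pc_stable_skeleton(nodes, ci_test, max_cond_size=None):
    """Adjacency search in the style of PC-stable.

    ci_test(a, b, S) must return True when a and b are judged
    conditionally independent given the tuple of nodes S.
    """
    adj = {v: set(nodes) - {v} for v in nodes}  # start from the complete graph
    sepset = {}
    size = 0
    while max_cond_size is None or size <= max_cond_size:
        # Freeze the adjacency sets for this level, so that edge removals
        # do not depend on the order in which the variables are visited.
        frozen = {v: set(adj[v]) for v in nodes}
        tested_any = False
        for a in nodes:
            for b in sorted(frozen[a]):
                if b not in adj[a]:
                    continue  # edge already removed at this level
                candidates = sorted(frozen[a] - {b})
                if len(candidates) < size:
                    continue
                tested_any = True
                for S in combinations(candidates, size):
                    if ci_test(a, b, S):
                        adj[a].discard(b)
                        adj[b].discard(a)
                        sepset[frozenset((a, b))] = set(S)
                        break
        if not tested_any:
            break  # no pair has enough neighbours left to condition on
        size += 1
    edges = {frozenset((a, b)) for a in nodes for b in adj[a]}
    return edges, sepset


# Hypothetical oracle for the chain X -> Y -> Z:
# the only independence is between X and Z given Y.
def oracle(a, b, S):
    return {a, b} == {"X", "Z"} and "Y" in S


edges, sepset = pc_stable_skeleton(["X", "Y", "Z"], oracle)
```

Swapping `oracle` for a sample-based test changes nothing else in the search, which is the sense in which nonPC and PC-stable share the same oracle version.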

Given that the equivalence between conditional independence and zero partial correlations only holds for multivariate normal random variables, our generalization broadens the scope of applicability of causal structure learning by the PC/PC-stable algorithm to general distributions over DAGs. This nonparametric approach is thus a natural extension of Gaussian and Gaussian copula models. It enables capturing nonlinear and non-monotone conditional dependence relationships among the variables, which partial correlations fail to detect.
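A small numerical illustration of the last point, under simplifying assumptions: the sketch below uses the unconditional sample distance correlation as a stand-in, not the conditional measure defined in this section, and the helper names are hypothetical. On a symmetric grid with y = x², the Pearson correlation is numerically zero, while the distance correlation is clearly positive.

```python
import numpy as np


def _double_center(d):
    """Subtract row and column means and add back the grand mean."""
    return (d - d.mean(axis=0, keepdims=True)
              - d.mean(axis=1, keepdims=True) + d.mean())


def distance_correlation(x, y):
    """Sample (V-statistic) distance correlation of two 1-d samples."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    a = _double_center(np.abs(x[:, None] - x[None, :]))
    b = _double_center(np.abs(y[:, None] - y[None, :]))
    dcov2 = (a * b).mean()
    dvar_x = (a * a).mean()
    dvar_y = (b * b).mean()
    # max(...) guards against tiny negative values from rounding
    return np.sqrt(max(dcov2, 0.0) / np.sqrt(dvar_x * dvar_y))


# Deterministic, non-monotone relationship on a symmetric grid
x = np.linspace(-1.0, 1.0, 201)
y = x ** 2

pearson = np.corrcoef(x, y)[0, 1]   # vanishes by symmetry
dcor = distance_correlation(x, y)   # stays away from zero
```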

Next, we establish theoretical guarantees on the correctness of the nonPC algorithm in learning the true underlying causal structure in sparse high-dimensional settings. Our consistency results only require mild moment and tail conditions on the set of variables, without making any strict distributional assumptions. Denote by the maximum cardinality of the conditioning sets considered in the adjacency search step of the PC-stable algorithm. Clearly, , where is the maximum degree of the DAG . For a fixed pair of nodes , the conditioning sets considered in the adjacency search step are elements of .

We first establish a concentration inequality that gives the rate at which the absolute difference of and its plug-in estimate decays to zero, for any fixed pair of nodes a and and a fixed conditioning set S. Towards that, we impose the following regularity conditions.

- (A1) There exists such that, for , .

- (A2) The kernel function is non-negative and uniformly bounded over its support.

Condition (A1) imposes a sub-exponential tail bound on the squares of the random variables. This is a commonly used condition, for example, in the high-dimensional feature screening literature (see, for example, [23]). Condition (A2) is a mild condition on the kernel function that is satisfied by many commonly used kernels, including the Gaussian kernel. Under conditions (A1) and (A2), the next result shows that the plug-in estimate converges in probability to its population counterpart exponentially fast.

Theorem 1. Under conditions (A1) and (A2), for any , there exist positive constants A, B and such that

The proof of Theorem 1 is long and somewhat technical; it is thus relegated to Appendix B. Theorem 1 serves as the main building block towards establishing the consistency of the nonPC algorithm in sparse high-dimensional settings.

For notational convenience, henceforth, we denote and by and , respectively. In Theorem 2 below, we establish a uniform bound for the errors in inferring conditional independence relationships using the -based test in the skeleton estimation step of the sample version of the nonPC algorithm.

Theorem 2. Under conditions (A1) and (A2), for any , there exist positive constants A, B and such that

Next, we turn to proving the consistency of the nonPC algorithm in the high-dimensional setting where the dimension p can be much larger than the sample size n, but the DAG is considered to be sparse. We impose the following regularity conditions, which are similar to the assumptions imposed in Section 3.1 of [8] in order to prove the consistency of the PC algorithm for Gaussian graphical models. We let the number of variables p grow with the sample size n and consider , and also the DAG and the distribution .

- (A3) The dimension grows at a rate such that the right-hand side of (7) tends to zero as . In particular, this is satisfied when for any .

- (A4) The maximum degree of the DAG , denoted by , grows at the rate of , where .

- (A5) The distribution is faithful to the DAG for all n. In other words, for any and

Moreover, values are uniformly bounded both from above and below. Formally,

where is a positive constant and .

Condition (A3) allows the dimension to grow at an arbitrary polynomial rate of the sample size. Condition (A4) is a sparsity assumption on the underlying true DAG, allowing the maximum degree of the DAG to also grow, but at a slower rate than n. Since , we also have . Finally, Condition (A5) is the strong faithfulness assumption (Definition 1.3 in [24]) on and is similar to condition (A4) in [8]. This essentially requires to be bounded away from zero when the vertices and are not d-separated by . It is worth noting that the faithfulness assumption alone is not enough to prove the consistency of the PC/PC-stable/nonPC algorithms in high-dimensional settings, and the more stringent strong faithfulness condition is required.

Remark 2. For notational convenience, treat and as X, Y and Z, respectively, for any and . From Equation (3), we have Condition (A1) implies . With this and the definition of in Section 2.3, it follows from some simple algebra and the Cauchy–Schwarz inequality that . This provides a justification for the second part of Assumption (A5) that for some positive constant .

The next theorem establishes that the nonPC algorithm consistently estimates the skeleton of a sparse high-dimensional DAG, thereby providing the necessary theoretical guarantees to our proposed methodology. It is worth noting that, in the sample version of the PC-stable and hence the nonPC algorithm, all the inference is done during the skeleton estimation step. The second step that involves appropriately orienting the edges of the estimated skeleton is purely deterministic (see Sections 4.2 and 4.3 in [7]). Therefore, to prove the consistency of the nonPC algorithm in estimating the equivalence class of the underlying true DAG, it is enough to prove the consistency of the estimated skeleton. We include the detailed proof of Theorem 3 in Appendix B.

Theorem 3. Assume that Conditions (A1)–(A5) hold. Let be the true skeleton of the graph , and be the skeleton estimated by the nonPC algorithm. Then, as , .

Remark 3. In the proof of Theorem 3, we consider the threshold to be of constant order. However, the proof continues to work as long as is of the same order as as .

3.2. The Nonparametric FCI Algorithm in High Dimensions

The FCI algorithm is a modification of the PC algorithm that accounts for latent and selection variables. Thus, generalizations of the PC algorithm naturally extend to the FCI as well. Similar to nonPC, we propose testing for conditional independence relations nonparametrically in the sample version of the FCI-stable algorithm (Algorithm A3 in Appendix A) based on , instead of partial correlations. We coin the resulting algorithm the ‘nonFCI’ algorithm, to emphasize that it is a generalization of parametric FCI-stable algorithms. Again, the oracle version of the nonFCI is exactly the same as that of the FCI-stable algorithm. The difference is in the implementation of the tests for conditional independence relationships in their sample versions. This broadens the scope of the FCI algorithm in causal structure learning for observational data in the presence of latent and selection variables when Gaussianity is not a viable assumption. More specifically, it enables capturing nonlinear and non-monotone conditional dependence relationships among the variables that partial correlations would fail to detect.

Equipped with the theoretical guarantees we established for the nonPC in Section 3.1, we establish below in Theorem 4 the consistency of the nonFCI algorithm for general distributions in sparse high-dimensional settings. Let be a DAG with the vertex set partitioned as , where indexes the set of p observed variables, denotes the set of latent variables and stands for the set of selection variables. Let be the unique MAG over . We let p grow with n and consider , and , where Q is the distribution of . We provide below the definition of possible-D-SEP sets (Definition 3.3 in [4]).

Definition 1. Let be a graph with any of the following edge types: , and ↔. A possible-D-SEP in , denoted , is defined as follows: if and only if there is a path π between and in such that, for every subpath of π, is a collider on the subpath in or is a triangle in .
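To make the path condition in Definition 1 concrete, the following brute-force sketch collects every node reachable from a start node along a path on which each consecutive triple is either a collider at its middle node or a triangle. The encoding is an assumption made for illustration: `adj` maps a node to its neighbours, and `mark[(u, v)]` is the edge mark at `v` on the edge between `u` and `v`, taken from the hypothetical labels `"arrow"`, `"tail"` and `"circle"`. The function name `possible_dsep` is likewise hypothetical. It enumerates simple paths, so it is only suitable for small graphs.

```python
def possible_dsep(adj, mark, i):
    """Nodes k joined to i by a path on which every consecutive triple
    <m, l, h> has l as a collider or forms a triangle."""

    def is_collider(m, l, h):
        # both edges have an arrowhead pointing into the middle node l
        return mark[(m, l)] == "arrow" and mark[(h, l)] == "arrow"

    def is_triangle(m, l, h):
        # m-l and l-h are edges of the path, so only m-h needs checking
        return h in adj[m]

    found = set()

    def extend(path):
        l = path[-1]
        for h in adj[l]:
            if h in path:
                continue  # keep the path simple
            if len(path) >= 2:
                m = path[-2]
                if not (is_collider(m, l, h) or is_triangle(m, l, h)):
                    continue
            found.add(h)
            extend(path + [h])

    extend([i])
    return found


# Example graph: a o-> b <-o c, and c o-o d
adj = {"a": {"b"}, "b": {"a", "c"}, "c": {"b", "d"}, "d": {"c"}}
mark = {
    ("a", "b"): "arrow", ("b", "a"): "circle",
    ("c", "b"): "arrow", ("b", "c"): "circle",
    ("c", "d"): "circle", ("d", "c"): "circle",
}

pds_a = possible_dsep(adj, mark, "a")  # b is adjacent; c is reached through the collider b
pds_d = possible_dsep(adj, mark, "d")  # only c: the triple <d, c, b> is neither a collider nor a triangle
```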

To prove the consistency of the nonFCI algorithm in sparse high-dimensional settings, we impose the following regularity conditions, which are similar to the assumptions imposed in Section 4 in [4].

- (C3) The distribution is faithful to the underlying MAG for all n.

- (C4) The maximum size of the possible-D-SEP sets for finding the final skeleton in the FCI-stable algorithm (Algorithm A6 in Appendix A), , grows at the rate of , where .

- (C5) For any and with , assume

where is a positive constant and .

Theorem 4. Suppose conditions (A1)–(A3) and (C3)–(C5) hold. Denote by and the true underlying FCI-PAG and the output of the nonFCI algorithm, respectively. Then, as , .