On the Nature of Functional Differentiation: The Role of Self-Organization with Constraints

Abstract

1. Introduction

2. The Difference between Self-Organization and Self-Organization with Constraints

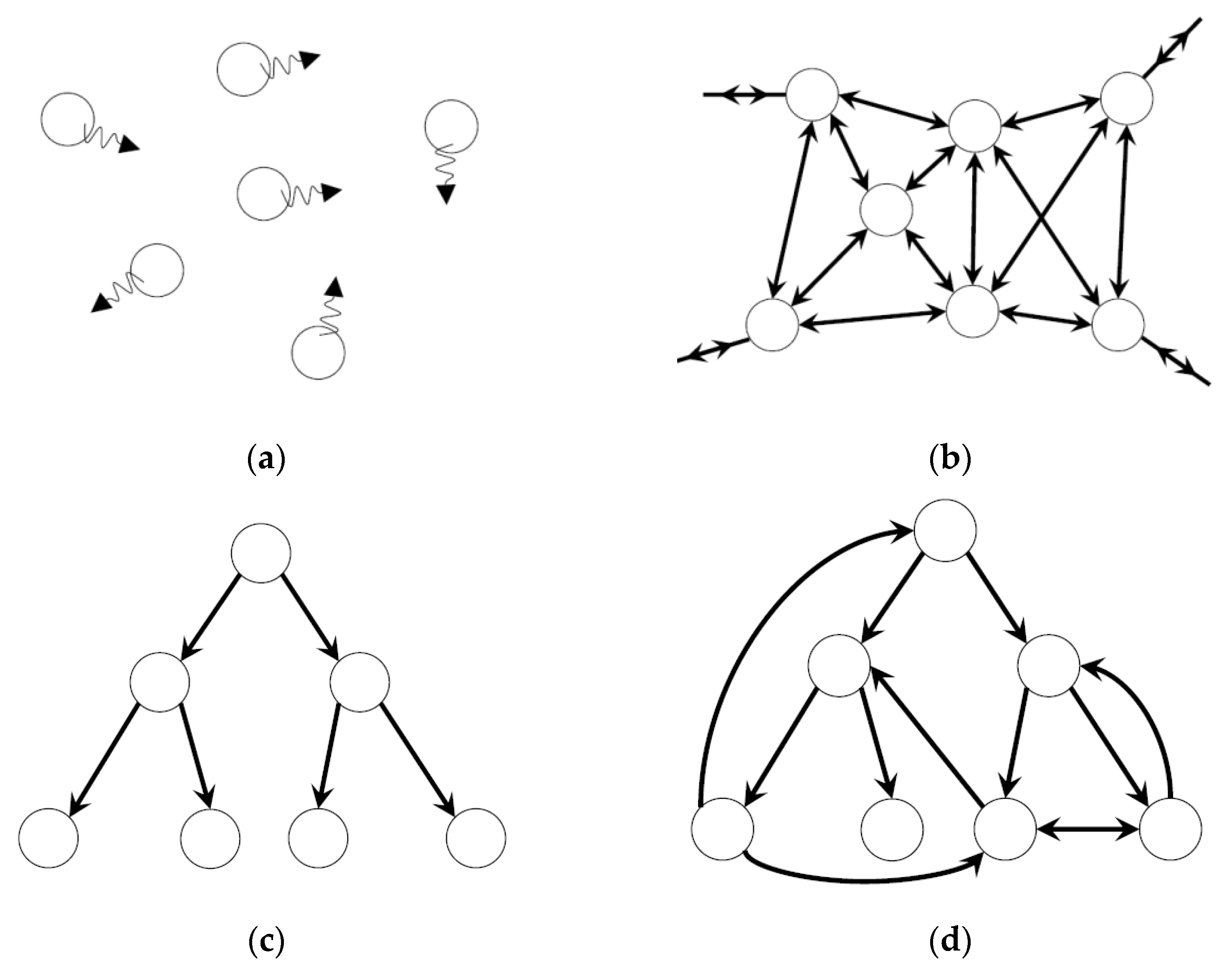

3. Dynamic Heterarchy

4. Heterarchy in the Model of Functional Differentiation

4.1. Heterarchy in EDS

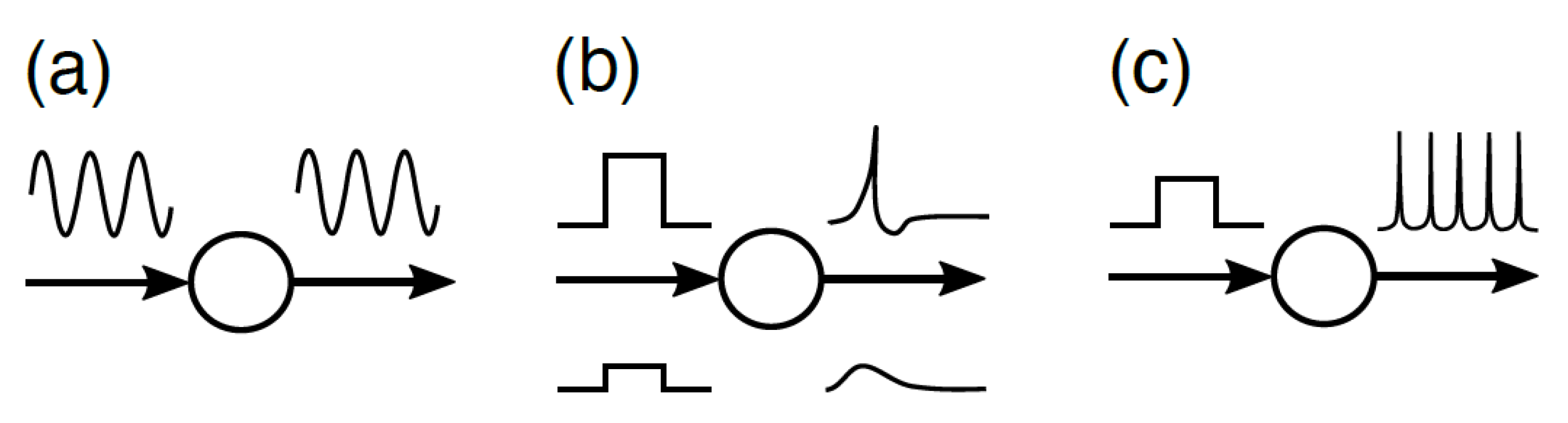

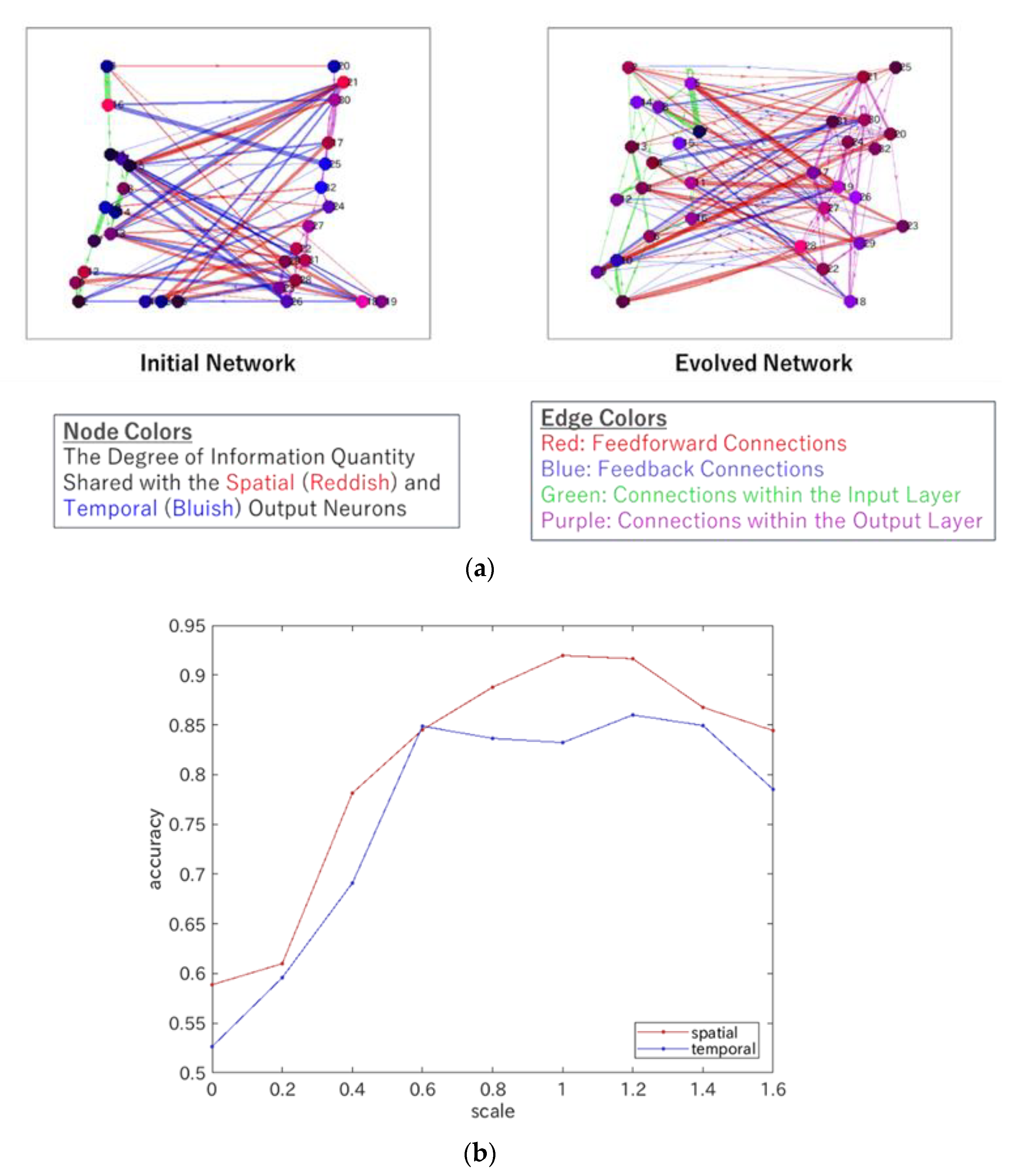

4.2. Heterarchy in ERC

5. Superposition Theorem, Epigenetic Landscape, and Functional Differentiation

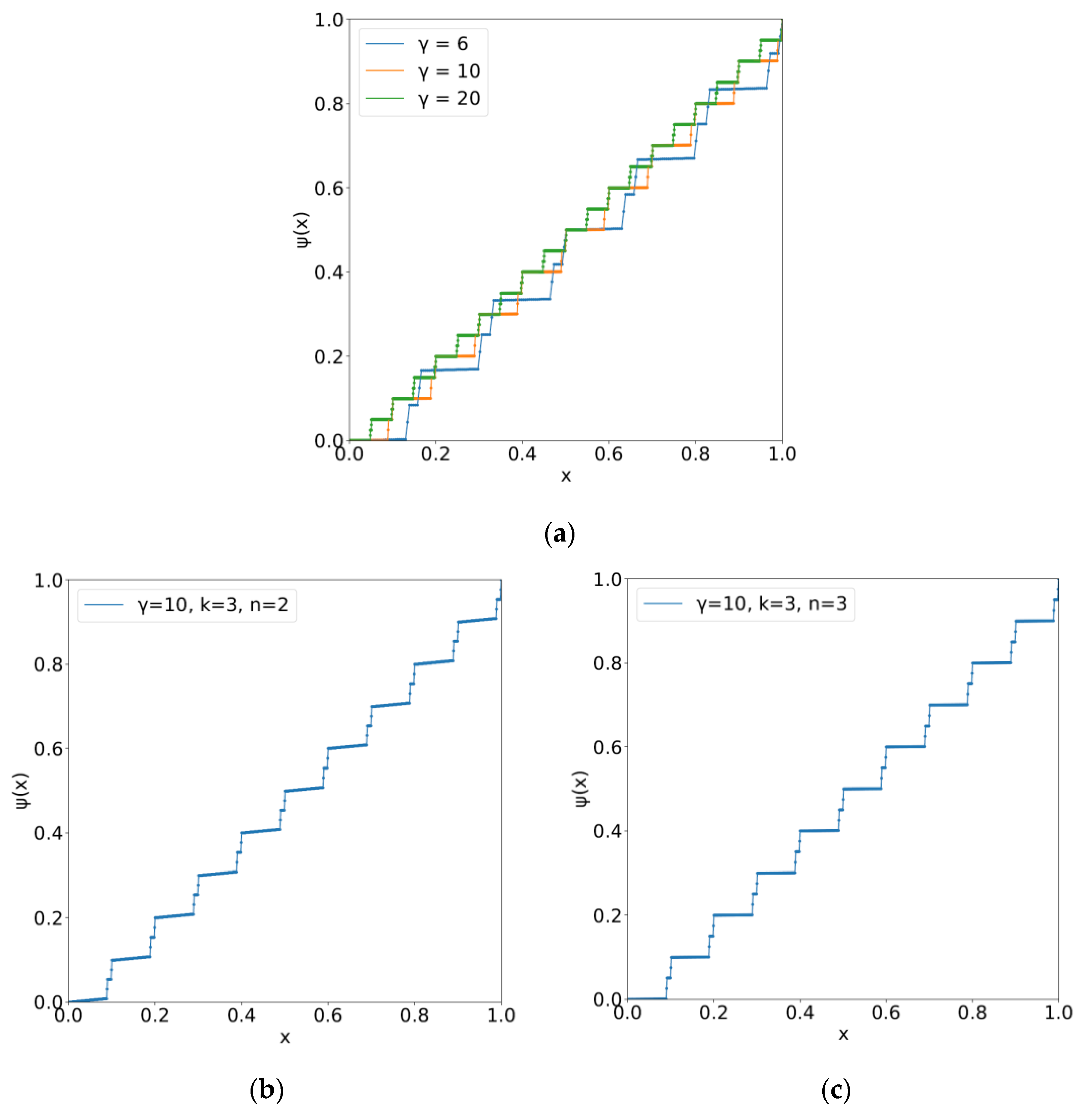

5.1. The Kolmogorov–Arnold–Sprecher Superposition Theorem

5.2. Epigenetic Landscape with Indices of Phenotype Yielding Functional Differentiation

6. Summary and Outlook

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Tsuda, I.; Watanabe, H.; Tsukada, H.; Yamaguti, Y. On the Nature of Functional Differentiation: The Role of Self-Organization with Constraints. Entropy 2022, 24, 240. https://doi.org/10.3390/e24020240