1. Introduction

Control problems of systems with incomplete information and memory limitation appear in many practical situations. These constraints become especially predominant when designing the control of small devices [1,2], and are important for understanding the control mechanisms of biological systems [3,4,5,6,7,8] because their sensors are extremely noisy and their controllers can only have severely limited memories.

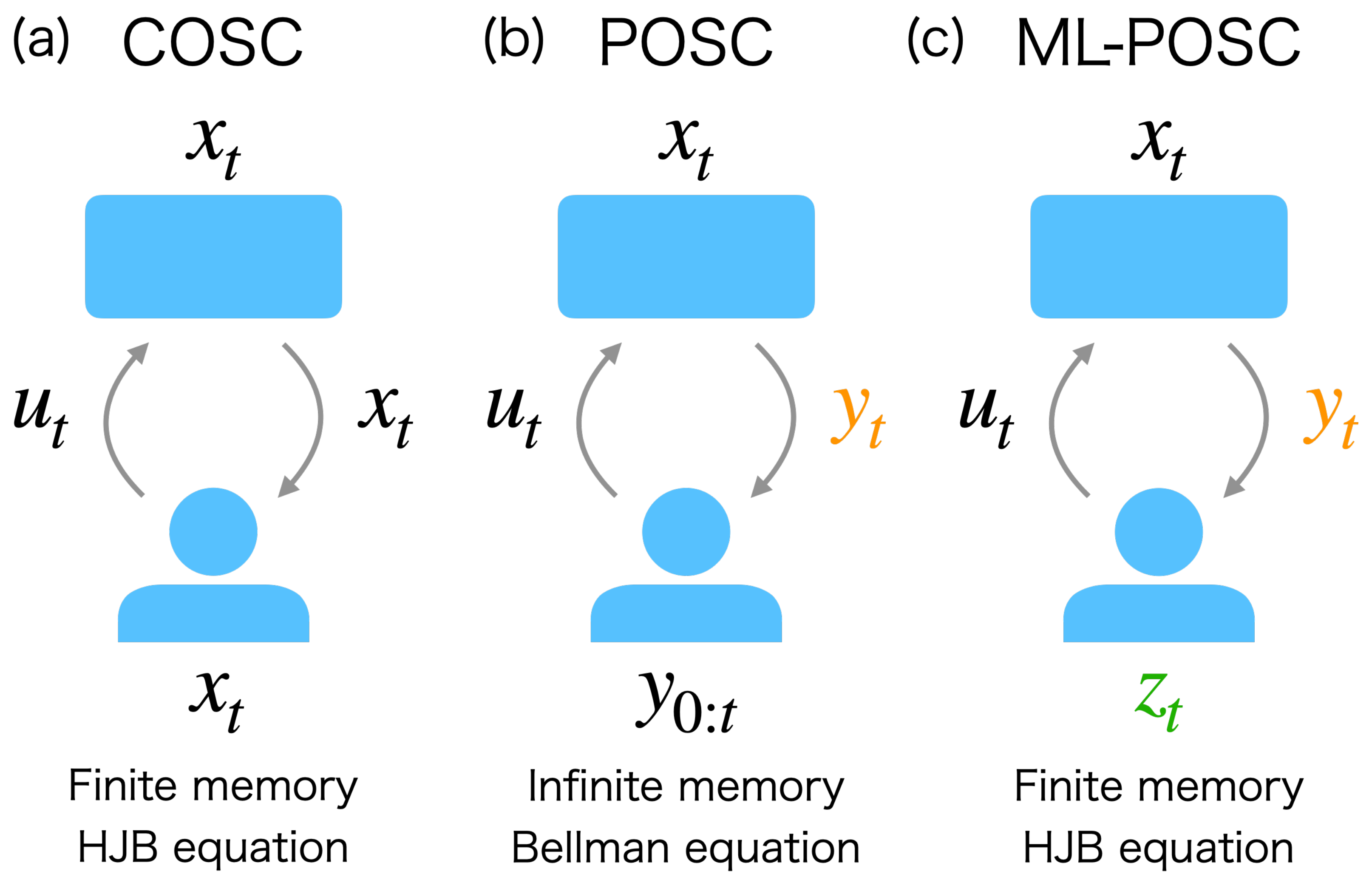

Partially observable stochastic control (POSC) is a conventional theoretical framework that considers the optimal control problem with one of these constraints, namely, the incomplete information of the system state (Figure 1b) [9]. Because the POSC controller cannot completely observe the state of the system, it determines the control based on the noisy observation history of the state. POSC can be solved in principle [10,11,12] by converting it to a completely observable stochastic control (COSC) of the posterior probability of the state, as the posterior probability represents the sufficient statistics of the observation history. The posterior probability and the optimal control are obtained by solving the Zakai equation and the Bellman equation, respectively.

However, POSC has three practical problems with respect to the implementation of the controller which originate from the ignorance of the other constraint, namely, the memory limitation of the controller [1,2]. First, a controller designed by POSC should ideally have an infinite-dimensional memory to store and compute the posterior probability from the observation history. Second, the memory of the controller cannot have intrinsic stochasticity other than the observation noise to accurately compute the posterior probability via the Zakai equation. Third, POSC does not consider the cost originating from the memory update, which can be regarded as a cost of estimation. In light of the dualistic roles played by estimation and control, considering only control cost by ignoring estimation cost is asymmetric. As a result, POSC is not practical for control problems where the memory size, noise, and cost are non-negligible. Therefore, we need an alternative theoretical framework considering memory limitation to circumvent these three problems.

Furthermore, POSC has another crucial problem in obtaining the optimal state control by solving the Bellman equation [3,4]. Because the posterior probability of the state is infinite-dimensional, POSC corresponds to an infinite-dimensional COSC. In the infinite-dimensional COSC, the Bellman equation becomes a functional differential equation, which needs to be solved in order to obtain the optimal state control. However, solving a functional differential equation is generally intractable, even numerically.

In this work, we propose an alternative theoretical framework to the conventional POSC which can address the above-mentioned two issues. We call it memory-limited POSC (ML-POSC), in which memory limitation as well as incomplete information are directly accounted for (Figure 1c). The conventional POSC derives the Zakai equation without considering memory limitations. Then, the optimal state control is supposed to be derived by solving the Bellman equation, even though we do not have any practical way to do this. In contrast, ML-POSC first postulates the finite-dimensional and stochastic memory dynamics explicitly by taking the memory limitation into account and then jointly optimizes the memory dynamics and state control by considering the memory and control costs. As a result, unlike the conventional POSC, ML-POSC finds both the optimal state control and the optimal memory dynamics with given memory limitations. Furthermore, we show that the Bellman equation of ML-POSC can be reduced to the Hamilton–Jacobi–Bellman (HJB) equation by employing a trick from the mean-field control theory [13,14,15]. While the Bellman equation is a functional differential equation, the HJB equation is a partial differential equation. As a result, ML-POSC can be solved, at least numerically.

The idea behind ML-POSC is closely related to that of the finite-state controller [16,17,18,19,20,21,22]. Finite-state controllers have been studied using the partially observable Markov decision process (POMDP), that is, the discrete time and state POSC. The finite-dimensional memory of ML-POSC can be regarded as an extension of the finite-state controller of POMDP to the continuous time and state setting. Nonetheless, the algorithms of the finite-state controller cannot be directly extended to the continuous setting, as they strongly depend on the discreteness. Although Fox and Tishby extended the finite-state controller to the continuous setting, their algorithm is restricted to a special case [1,2]. ML-POSC resolves this problem by employing the technique of the mean-field control theory.

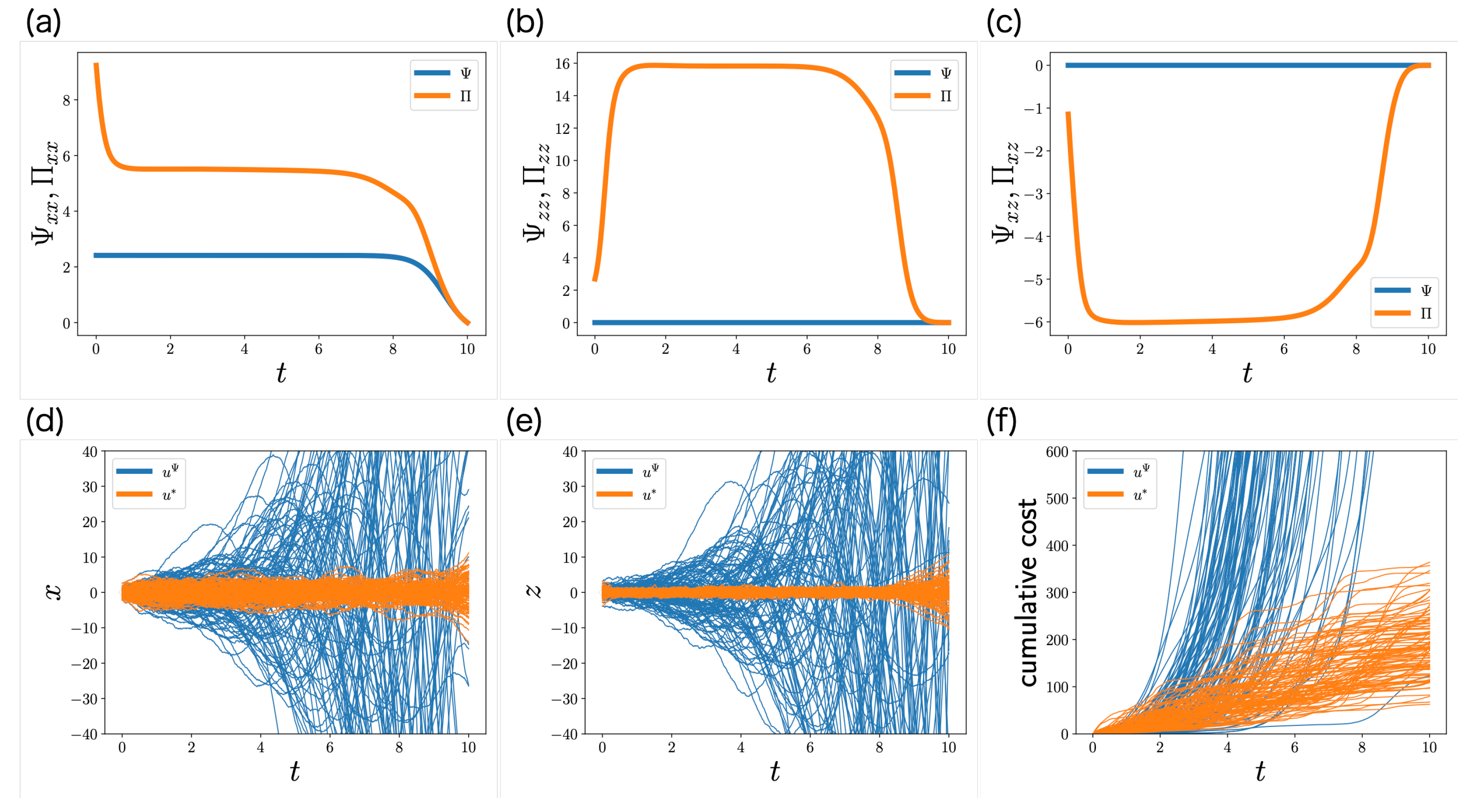

In the linear-quadratic-Gaussian (LQG) problem of the conventional POSC, the Zakai equation and the Bellman equation are reduced to the Kalman filter and the Riccati equation, respectively [9,23]. Because the infinite-dimensional Zakai equation is reduced to the finite-dimensional Kalman filter, the LQG problem of the conventional POSC can be discussed in terms of ML-POSC. We show that the Kalman filter corresponds to the optimal memory dynamics of ML-POSC. Moreover, ML-POSC can generalize the LQG problem to include memory limitations such as the memory noise and cost. Because estimation and control are not clearly separated in the LQG problem with memory limitation, the Riccati equation for control is modified to include estimation, which in this paper is called the partially observable Riccati equation. We demonstrate that the partially observable Riccati equation is superior to the conventional Riccati equation as concerns the LQG problem with memory limitation.

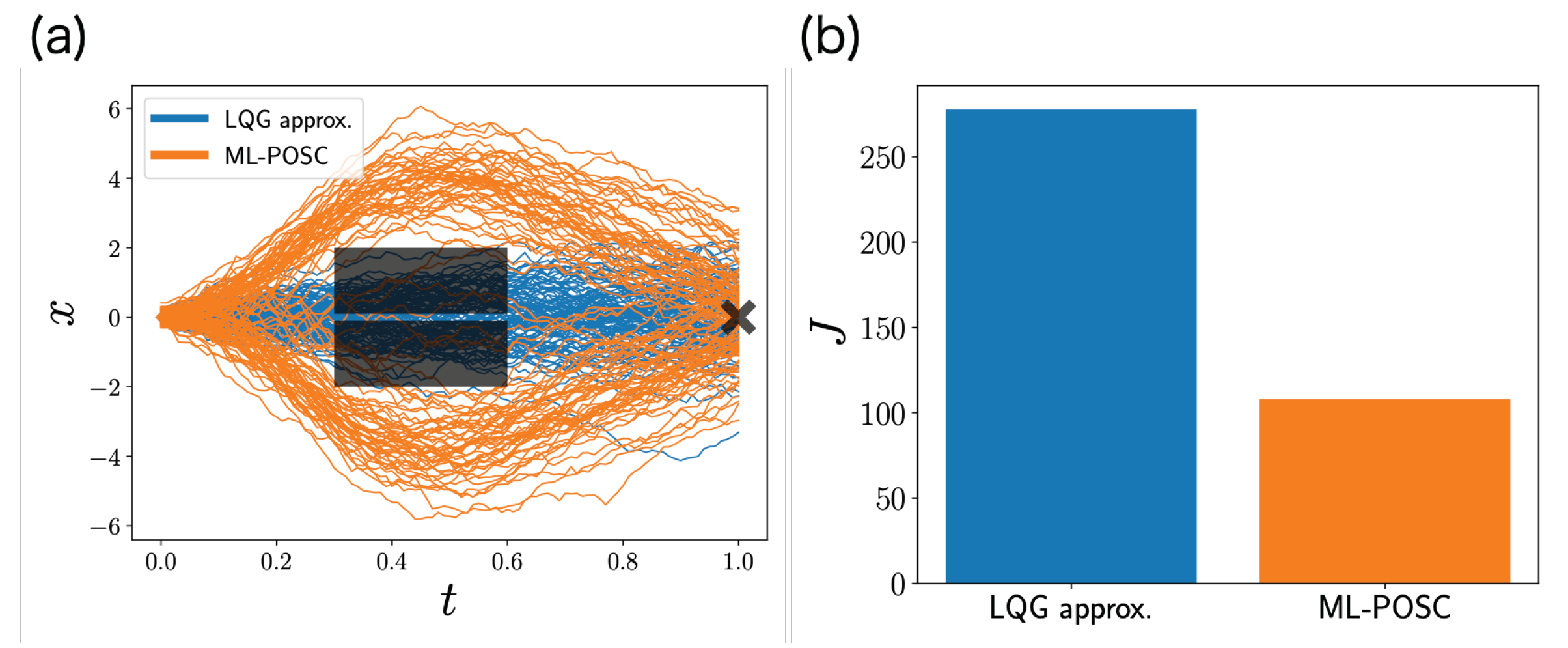

Then, we investigate the potential effectiveness of ML-POSC for a non-LQG problem by comparing it with the local LQG approximation of the conventional POSC [3,4]. In the local LQG approximation, the Zakai equation and the Bellman equation are locally approximated by the Kalman filter and the Riccati equation, respectively. Because the Bellman equation (a functional differential equation) is reduced to the Riccati equation (an ordinary differential equation), the local LQG approximation can be solved numerically. However, the performance of the local LQG approximation may be poor in a highly non-LQG problem, as the local LQG approximation ignores non-LQG information. In contrast, ML-POSC reduces the Bellman equation to the HJB equation while maintaining non-LQG information. We demonstrate that ML-POSC can provide a better result than the local LQG approximation in a non-LQG problem.

This paper is organized as follows: In Section 2, we briefly review the conventional POSC. In Section 3, we formulate ML-POSC. In Section 4, we propose the mean-field control approach to ML-POSC. In Section 5, we investigate the LQG problem of the conventional POSC based on ML-POSC. In Section 6, we generalize the LQG problem to include memory limitation. In Section 7, we show numerical experiments involving an LQG problem with memory limitation and a non-LQG problem. Finally, in Section 8, we discuss our work.

3. Memory-Limited Partially Observable Stochastic Control

In order to address the above-mentioned problems, we propose an alternative theoretical framework to the conventional POSC called ML-POSC. In this section, we formulate ML-POSC.

3.1. Problem Formulation

In this subsection, we formulate ML-POSC. ML-POSC determines the control $u_t$ based on the finite-dimensional memory $z_t \in \mathbb{R}^{d_z}$ as follows:

$$u_t = u(t, z_t). \tag{13}$$

The memory dimension $d_z$ is determined not by the optimization but by the prescribed memory limitation of the controller to be used. Comparing (3) and (13), the memory $z_t$ can be interpreted as the compression of the observation history $y_{0:t}$. While the conventional POSC compresses the observation history $y_{0:t}$ into the infinite-dimensional posterior probability $p(x_t \mid y_{0:t})$, ML-POSC compresses it into the finite-dimensional memory $z_t$.

ML-POSC formulates the memory dynamics with the following SDE:

$$dz_t = c(t, z_t, v_t)\,dt + \kappa(t, z_t, v_t)\,dy_t + \eta(t, z_t, v_t)\,d\xi_t, \tag{14}$$

where $z_0$ obeys $p_0(z_0)$, $\xi_t$ is the standard Wiener process, and $v_t = v(t, z_t)$ is the control for the memory dynamics. This memory dynamics has three important properties: (i) because it depends on the observation $dy_t$, the memory $z_t$ can be interpreted as the compression of the observation history $y_{0:t}$; (ii) because it depends on the standard Wiener process $d\xi_t$, ML-POSC can consider the memory noise explicitly; (iii) because it depends on the control $v_t$, it can be optimized through the control $v_t$.

The objective function of ML-POSC is provided by the following expected cumulative cost function:

$$J[u, v] := \mathbb{E}\left[\int_0^T f(t, x_t, u_t, v_t)\,dt + g(x_T)\right]. \tag{15}$$

Because the cost function f depends on the memory control $v_t$ as well as the state control $u_t$, ML-POSC can consider the memory control cost (state estimation cost) as well as the state control cost explicitly.

ML-POSC optimizes the state control function u and the memory control function v based on the objective function $J[u, v]$, as follows:

$$u^*, v^* := \operatorname*{argmin}_{u, v} J[u, v]. \tag{16}$$

ML-POSC first postulates the finite-dimensional and stochastic memory dynamics explicitly, then jointly optimizes the state and memory control function by considering the state and memory control cost. As a result, unlike the conventional POSC, ML-POSC can consider memory limitation as well as incomplete information.
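The structure of this formulation (a state, a noisy observation increment, and a noisy memory driven by that increment, with both controls reading only the memory) can be illustrated with a short Euler–Maruyama simulation. This is a minimal sketch under stated assumptions: the scalar linear coefficients, the quadratic running cost, and the hand-picked feedback gains `u_gain` and `v_gain` are hypothetical choices for illustration; they are neither optimized nor taken from the paper.

```python
import math
import random


def simulate_closed_loop(T=1.0, n_steps=1000, seed=0):
    """Euler-Maruyama rollout of a scalar state, observation, and memory.

    All coefficients and both linear feedback laws are illustrative
    assumptions; nothing here is optimized.
    """
    rng = random.Random(seed)
    dt = T / n_steps
    sqrt_dt = math.sqrt(dt)

    a, b, sigma = -1.0, 1.0, 0.5   # state:       dx = (a x + b u) dt + sigma dW
    h, gamma = 1.0, 0.3            # observation: dy = h x dt + gamma dV
    kappa, eta = 1.0, 0.1          # memory:      dz = v dt + kappa dy + eta dXi
    u_gain, v_gain = -0.5, -2.0    # hand-picked gains: u = u_gain z, v = v_gain z

    x = rng.gauss(0.0, 1.0)        # random initial state
    z = 0.0                        # initial memory
    cost = 0.0
    for _ in range(n_steps):
        # Both controls see only the memory z, never the state x.
        u = u_gain * z
        v = v_gain * z
        # Running cost includes a memory control cost alongside the state control cost.
        cost += (x * x + u * u + v * v) * dt
        dy = h * x * dt + gamma * sqrt_dt * rng.gauss(0.0, 1.0)
        dx = (a * x + b * u) * dt + sigma * sqrt_dt * rng.gauss(0.0, 1.0)
        dz = v * dt + kappa * dy + eta * sqrt_dt * rng.gauss(0.0, 1.0)
        x += dx
        z += dz
    cost += x * x                  # terminal cost on the state
    return x, z, cost
```

Averaging `cost` over many seeds gives a Monte Carlo estimate of the expected cumulative cost for this particular pair of gains, which is the quantity the optimization would minimize over the control functions.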

3.2. Problem Reformulation

Although the formulation of ML-POSC in the previous subsection clarifies its relationship with that of the conventional POSC, it is inconvenient for further mathematical investigations. In order to resolve this problem, we reformulate ML-POSC in this subsection. The formulation in this subsection is simpler and more general than that in the previous subsection.

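We first define the extended state $s_t$ as follows:

$$s_t := \begin{pmatrix} x_t \\ z_t \end{pmatrix} \in \mathbb{R}^{d_s}, \tag{17}$$

where $d_s = d_x + d_z$. The extended state $s_t$ evolves by the following SDE:

$$ds_t = \tilde{b}(t, s_t, \tilde{u}_t)\,dt + \tilde{\sigma}(t, s_t, \tilde{u}_t)\,d\tilde{\omega}_t, \tag{18}$$

where $s_0$ obeys $p_0(s_0)$, $\tilde{\omega}_t$ is the standard Wiener process, and $\tilde{u}_t$ is the control. ML-POSC determines the control $\tilde{u}_t$ based solely on the memory $z_t$, as follows:

$$\tilde{u}_t = \tilde{u}(t, z_t). \tag{19}$$

The extended state SDE (18) includes the previous state, observation, and memory SDEs (1), (2), and (14) as a special case; they can be represented as follows:

$$ds_t = \begin{pmatrix} b(t, x_t, u_t) \\ c(t, z_t, v_t) + \kappa(t, z_t, v_t)\,h(t, x_t) \end{pmatrix} dt + \begin{pmatrix} \sigma(t, x_t, u_t) & O & O \\ O & \kappa(t, z_t, v_t)\,\gamma(t) & \eta(t, z_t, v_t) \end{pmatrix} \begin{pmatrix} d\omega_t \\ d\nu_t \\ d\xi_t \end{pmatrix}, \tag{20}$$

where $p_0(s_0) = p_0(x_0)\,p_0(z_0)$.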

The objective function of ML-POSC is provided by the following expected cumulative cost function:

$$J[\tilde{u}] := \mathbb{E}\left[\int_0^T \tilde{f}(t, s_t, \tilde{u}_t)\,dt + \tilde{g}(s_T)\right], \tag{21}$$

where $\tilde{f}$ is the cost function and $\tilde{g}$ is the terminal cost function. It is obvious that this objective function (21) is more general than the previous one (15).

ML-POSC is the problem of finding the optimal control function $\tilde{u}^*$ that minimizes the objective function $J[\tilde{u}]$ as follows:

$$\tilde{u}^* := \operatorname*{argmin}_{\tilde{u}} J[\tilde{u}]. \tag{22}$$

In the following sections, we mainly consider the formulation in this subsection rather than that of the previous subsection, as it is simpler and more general. Moreover, we omit the tilde $\tilde{\cdot}$ for notational simplicity.

4. Mean-Field Control Approach

If the control $u_t$ is determined based on the extended state $s_t$, i.e., $u_t = u(t, s_t)$, ML-POSC is the same as COSC of the extended state $s_t$, and can be solved by the conventional COSC approach [10]. However, because ML-POSC determines the control $u_t$ based solely on the memory $z_t$, i.e., $u_t = u(t, z_t)$, ML-POSC cannot be solved in a similar way as COSC. In order to solve ML-POSC, we propose the mean-field control approach in this section. Because the mean-field control approach is more general than the COSC approach, it can solve COSC and ML-POSC in a unified way.

4.1. Derivation of Optimal Control Function

In this subsection, we propose the mean-field control approach to ML-POSC. We first show that ML-POSC can be converted into a deterministic control of the probability density function, which is similar to the conventional POSC [11,15]. This approach is used in the mean-field control as well [13,14,24,25]. The extended state SDE (18) can be converted into the following Fokker–Planck (FP) equation:

$$\frac{\partial p_t(s)}{\partial t} = \mathcal{L}_u^{\dagger} p_t(s), \tag{23}$$

where the initial condition is provided by $p_0(s)$ and the forward diffusion operator $\mathcal{L}_u^{\dagger}$ is defined by (7). The objective function of ML-POSC (21) can be calculated as follows:

$$J[u] = \int_0^T \bar{f}(t, p_t, u_t)\,dt + \bar{g}(p_T), \tag{24}$$

where $\bar{f}(t, p, u) := \mathbb{E}_{p(s)}[f(t, s, u)]$ and $\bar{g}(p) := \mathbb{E}_{p(s)}[g(s)]$. From (23) and (24), ML-POSC is converted into a deterministic control of $p_t$. As a result, ML-POSC can be approached in a similar way as the deterministic control, and the optimal control function is provided by the following lemma.

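Lemma 1. The optimal control function of ML-POSC is provided by

$$u^*(t, z) = \operatorname*{argmin}_{u} \mathbb{E}_{p_t(x \mid z)}\left[ H\left(t, s, u, \frac{\delta V(t, p_t)}{\delta p}(s)\right) \right], \tag{25}$$

where $H$ is the Hamiltonian (10), $p_t(x \mid z) = p_t(s) / \int p_t(s)\,dx$ is the conditional probability density function of a state x given memory z, $p_t(s)$ is the solution of the FP Equation (23), and $V(t, p)$ is the solution of the following Bellman equation:

$$-\frac{\partial V(t, p)}{\partial t} = \mathbb{E}_{p(s)}\left[ H\left(t, s, u^*, \frac{\delta V(t, p)}{\delta p}(s)\right) \right], \tag{26}$$

where $V(T, p) = \mathbb{E}_{p(s)}[g(s)]$.

The controller of ML-POSC determines the optimal control $u_t^* = u^*(t, z_t)$ based on the memory $z_t$, not the posterior probability $p(x_t \mid y_{0:t})$. Therefore, ML-POSC can consider memory limitation as well as incomplete information.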

However, because the Bellman Equation (26) is a functional differential equation, it cannot be solved, even numerically, which is the same problem as the conventional POSC. We resolve this problem by employing the technique of the mean-field control theory [13,14] as follows.

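Theorem 1. The optimal control function of ML-POSC is provided by

$$u^*(t, z) = \operatorname*{argmin}_{u} \mathbb{E}_{p_t(x \mid z)}\left[ H(t, s, u, w(t, s)) \right], \tag{27}$$

where $H$ is the Hamiltonian (10), $p_t(x \mid z) = p_t(s) / \int p_t(s)\,dx$ is the conditional probability density function of a state x given memory z, $p_t(s)$ is the solution of the FP Equation (23), and $w(t, s)$ is the solution of the following Hamilton–Jacobi–Bellman (HJB) equation:

$$-\frac{\partial w(t, s)}{\partial t} = H(t, s, u^*, w), \tag{28}$$

where $w(T, s) = g(s)$.

While the Bellman Equation (26) is a functional differential equation, the HJB Equation (28) is a partial differential equation. As a result, unlike the conventional POSC, ML-POSC can be solved in practice.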

We note that the mean-field control technique is applicable to the conventional POSC as well, and we obtain the HJB equation of the conventional POSC [15]. However, the HJB equation of the conventional POSC is not closed by a partial differential equation due to the last term of the Bellman Equation (12). As a result, the mean-field control technique is not effective with the conventional POSC except in a special case [15].

In the conventional POSC, the state estimation (memory control) and the state control are clearly separated. As a result, the state estimation and the state control are optimized by the Zakai Equation (6) and the Bellman Equation (12), respectively. In contrast, because ML-POSC considers memory limitation as well as incomplete information, the state estimation and the state control are not clearly separated. As a result, ML-POSC jointly optimizes the state estimation and the state control based on the FP Equation (23) and the HJB Equation (28).

4.2. Comparison with Completely Observable Stochastic Control

In this subsection, we show the similarities and differences between ML-POSC and COSC of the extended state. While ML-POSC determines the control based solely on the memory $z_t$, i.e., $u_t = u(t, z_t)$, COSC of the extended state determines the control based on the extended state $s_t$, i.e., $u_t = u(t, s_t)$. The optimal control function of COSC of the extended state is provided by the following proposition.

Proposition 2 ([10]). The optimal control function of COSC of the extended state is provided by

$$u^*(t, s) = \operatorname*{argmin}_{u} H(t, s, u, w(t, s)), \tag{29}$$

where $H$ is the Hamiltonian (10) and $w(t, s)$ is the solution of the HJB Equation (28).

Proof. The conventional proof is shown in [10]. We note that it can be proven in a similar way as ML-POSC, which is shown in Appendix C. □

Although the HJB Equation (28) is the same between ML-POSC and COSC, the optimal control function is different. While the optimal control function of COSC is provided by the minimization of the Hamiltonian (29), that of ML-POSC is provided by the minimization of the conditional expectation of the Hamiltonian (27). This is reasonable, as the controller of ML-POSC needs to estimate the state from the memory.

4.3. Numerical Algorithm

In this subsection, we briefly explain a numerical algorithm to obtain the optimal control function of ML-POSC (27). Because the optimal control function of COSC (29) depends only on the backward HJB Equation (28), it can be obtained by solving the HJB equation backwards from the terminal condition [10,26,27]. In contrast, because the optimal control function of ML-POSC (27) depends on the forward FP Equation (23) as well as the backward HJB Equation (28), it cannot be obtained in a similar way as COSC. Because the backward HJB equation depends on the forward FP equation through the optimal control function of ML-POSC, the HJB equation cannot be solved backwards from the terminal condition. As a result, ML-POSC needs to solve the system of HJB-FP equations.

The system of HJB-FP equations appears in the mean-field game and control [28,29,30], and many numerical algorithms have been developed [31,32,33]. Therefore, unlike the conventional POSC, ML-POSC can be solved in practice using these algorithms. Furthermore, unlike the mean-field game and control, the coupling of HJB-FP equations is limited to the optimal control function in ML-POSC. By exploiting this property, more efficient algorithms may be proposed for ML-POSC [34].

In this paper, we use the forward–backward sweep method (the fixed-point iteration method) to obtain the optimal control function of ML-POSC [33,34,35,36,37], which is one of the most basic algorithms for the system of HJB-FP equations. The forward–backward sweep method computes the forward FP Equation (23) and the backward HJB Equation (28) alternately. In the mean-field game and control, the convergence of the forward–backward sweep method is not guaranteed. In contrast, it is guaranteed in ML-POSC because the coupling of HJB-FP equations is limited to the optimal control function [34].
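The alternation described above has the shape of a fixed-point iteration, which can be sketched independently of any particular discretization. In the sketch below, `solve_forward` and `solve_backward` are hypothetical placeholders for an FP solver (given the current HJB solution) and an HJB solver (given the resulting density); the function name, the list-based representation, and the stopping rule are assumptions made for illustration, not the authors' implementation.

```python
def forward_backward_sweep(solve_forward, solve_backward, backward_init,
                           tol=1e-10, max_iter=100):
    """Alternate a forward solve and a backward solve until the backward
    solution stops changing (sup-norm change below `tol`).

    solve_forward(backward) -> forward   (stand-in for the FP equation)
    solve_backward(forward) -> backward  (stand-in for the HJB equation)
    Both solutions are represented as flat lists of floats.
    """
    backward = list(backward_init)
    forward = None
    for iteration in range(1, max_iter + 1):
        forward = solve_forward(backward)        # forward pass
        new_backward = solve_backward(forward)   # backward pass
        change = max(abs(n - o) for n, o in zip(new_backward, backward))
        backward = new_backward
        if change < tol:
            break
    return forward, backward, iteration


# Toy stand-ins (NOT discretized PDEs): two affine maps whose composition
# is a contraction, so the sweep settles at a unique fixed point.
toy_forward = lambda b: [0.5 * bi for bi in b]
toy_backward = lambda f: [0.5 * fi + 1.0 for fi in f]
f_star, b_star, n_iter = forward_backward_sweep(toy_forward, toy_backward, [0.0])
```

With real solvers, `backward` would hold the discretized HJB solution on a space-time grid and `forward` the discretized density; only the two callables change, not the loop.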

6. Linear-Quadratic-Gaussian Problem with Memory Limitation

The LQG problem of the conventional POSC does not consider memory limitation because it does not consider the memory noise and cost. Furthermore, because the memory dimension is restricted to the state dimension, the memory dimension cannot be determined according to a given controller. ML-POSC can generalize the LQG problem to include the memory limitation. In this section, we discuss the LQG problem with memory limitation based on ML-POSC.

6.1. Problem Formulation

In this subsection, we formulate the LQG problem with memory limitation. The state and observation SDEs are the same as in the previous section, which are provided by (30) and (31), respectively. The controller of ML-POSC determines the control $u_t$ based on the memory $z_t$, i.e., $u_t = u(t, z_t)$. Unlike the LQG problem of the conventional POSC, the memory dimension $d_z$ is not necessarily the same as the state dimension $d_x$.

The memory $z_t$ is assumed to evolve according to the following SDE:

$$dz_t = v_t\,dt + \kappa(t)\,dy_t + \eta(t)\,d\xi_t, \tag{48}$$

where $z_0$ obeys the Gaussian distribution $p_0(z_0)$, $\xi_t$ is the standard Wiener process, and $v_t = v(t, z_t)$ is the control. Because the initial condition $z_0$ is stochastic and the memory SDE (48) includes the intrinsic stochasticity $d\xi_t$, the LQG problem of ML-POSC can consider the memory noise explicitly. We note that $\kappa(t)$ is independent of the memory $z_t$. If $\kappa(t)$ depended on the memory $z_t$, the memory SDE (48) would become non-linear and non-Gaussian, and the optimal control functions could not be derived explicitly. In order to keep the memory SDE (48) linear and Gaussian so that the optimal control functions can be obtained explicitly, we restrict $\kappa(t)$ to be independent of the memory $z_t$ in the LQG problem with memory limitation. The LQG problem without memory limitation is the special case in which the optimal control in (45) does not depend on the memory $z$.

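The objective function is provided by the following expected cumulative cost function:

$$J[u, v] := \mathbb{E}\left[\int_0^T \left( x_t^\top Q x_t + u_t^\top R u_t + v_t^\top M v_t \right) dt + x_T^\top P x_T\right], \tag{49}$$

where $Q \succeq O$, $R \succ O$, $M \succ O$, and $P \succeq O$. Because the cost function includes $v_t^\top M v_t$, the LQG problem of ML-POSC can consider the memory control cost explicitly. ML-POSC optimizes the state control function u and the memory control function v based on the objective function $J[u, v]$, as follows:

$$u^*, v^* := \operatorname*{argmin}_{u, v} J[u, v]. \tag{50}$$

For the sake of simplicity, we do not optimize $\kappa(t)$, although this can be accomplished by considering unobservable stochastic control.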

6.2. Problem Reformulation

Although the formulation of the LQG problem with memory limitation in the previous subsection clarifies its relationship with that of the LQG problem without memory limitation, it is inconvenient for further mathematical investigations. In order to resolve this problem, we reformulate the LQG problem with memory limitation based on the extended state $s_t$ (17). The formulation in this subsection is simpler and more general than that in the previous subsection.

In the LQG problem with memory limitation, the extended state SDE (18) is provided as follows:

where

obeys the Gaussian distribution

,

is the standard Wiener process, and

is the control. The extended state SDE (51) includes the previous state, observation, and memory SDEs (30), (31), and (48) as a special case because they can be represented as follows:

where

.

The objective function (21) is provided by the following expected cumulative cost function:

where

,

, and

. This objective function (53) includes the previous objective function (49) as a special case because it can be represented as follows:

The objective of the LQG problem with memory limitation is to find the optimal control function

that minimizes the objective function

, as follows:

In the following subsections, we mainly consider the formulation of this subsection rather than that of the previous subsection because it is simpler and more general. Moreover, we omit the tilde $\tilde{\cdot}$ for notational simplicity.

6.3. Derivation of Optimal Control Function

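In this subsection, we derive the optimal control function of the LQG problem with memory limitation by applying Theorem 1. In the LQG problem with memory limitation, the probability of the extended state s at time t is provided by the Gaussian distribution $p_t(s) := \mathcal{N}(s \mid \mu(t), \Lambda(t))$. By defining the stochastic extended state $\hat{s} := s - \mu(t)$, $\mathbb{E}_{p_t(x \mid z)}[\hat{s}]$ is provided as follows:

$$\mathbb{E}_{p_t(x \mid z)}[\hat{s}] = K(\Lambda(t))\,\hat{s}, \tag{56}$$

where $K(\Lambda)$ is defined by

$$K(\Lambda) := \begin{pmatrix} O & \Lambda_{xz}\Lambda_{zz}^{-1} \\ O & I \end{pmatrix}. \tag{57}$$

By applying Theorem 1 to the LQG problem with memory limitation, we obtain the following theorem: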

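Theorem 3. In the LQG problem with memory limitation, the optimal control function of ML-POSC is provided by

$$u^*(t, z) = -R^{-1} B^\top \left( \Pi K(\Lambda)\,\hat{s} + \Psi \mu \right), \tag{58}$$

where $K(\Lambda)$ (57) depends on $\Lambda(t)$, and $\mu(t)$ and $\Lambda(t)$ are the solutions of the following ordinary differential equations:

$$\frac{d\mu}{dt} = \left( A - B R^{-1} B^\top \Psi \right) \mu, \tag{59}$$

$$\frac{d\Lambda}{dt} = \sigma\sigma^\top + \left( A - B R^{-1} B^\top \Pi K \right) \Lambda + \Lambda \left( A - B R^{-1} B^\top \Pi K \right)^\top, \tag{60}$$

where $\mu(0) = \mu_0$ and $\Lambda(0) = \Lambda_0$, while $\Psi(t)$ and $\Pi(t)$ are the solutions of the following ordinary differential equations:

$$-\frac{d\Psi}{dt} = Q + A^\top \Psi + \Psi A - \Psi B R^{-1} B^\top \Psi, \tag{61}$$

$$-\frac{d\Pi}{dt} = Q + A^\top \Pi + \Pi A - \Pi B R^{-1} B^\top \Pi + (I - K)^\top \Pi B R^{-1} B^\top \Pi (I - K), \tag{62}$$

where $\Psi(T) = \Pi(T) = P$.

Here, (61) is the Riccati equation [9,10,23], which appears in the LQG problem without memory limitation as well (37). In contrast, (62) is a new equation of the LQG problem with memory limitation, which in this paper we call the partially observable Riccati equation. Because estimation and control are not clearly separated in the LQG problem with memory limitation, the Riccati Equation (61) for control is modified to include estimation, which corresponds to the partially observable Riccati Equation (62). As a result, the partially observable Riccati Equation (62) is able to improve estimation as well as control.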

In order to support this interpretation, we analyze the partially observable Riccati Equation (62) by comparing it with the Riccati Equation (61). Because only the last term of (62) is different from (61), we denote it as follows:

can be calculated as follows:

where

. Because

and

,

and

may be larger than

and

, respectively. Because

and

are the negative feedback gains of the state

x and the memory

z, respectively,

may decrease

and

. Moreover, when

is positive/negative,

may be smaller/larger than

, which may increase/decrease

. A similar discussion is possible for

,

, and

, as

,

, and

are symmetric matrices. As a result,

may decrease the following conditional covariance matrix:

which corresponds to the estimation error of the state from the memory. Therefore, the partially observable Riccati Equation (62) may improve estimation as well as control, which is different from the Riccati Equation (61).

Because the problem in Section 6.1 is more specialized than that in Section 6.2, we can carry out a more specific discussion. In the problem in Section 6.1,

is the same as the solution of the Riccati equation of the conventional POSC (

37), and

,

, and

are satisfied. As a result, the memory control does not appear in the Riccati equation of ML-POSC (

61). In contrast, because of the last term of the partially observable Riccati Equation (

62),

is not the solution of the Riccati Equation (

37), and

,

, and

are satisfied. As a result, the memory control appears in the partially observable Riccati Equation (

62), which may improve the state estimation.

6.4. Comparison with Completely Observable Stochastic Control

In this subsection, we compare ML-POSC with COSC of the extended state. By applying Proposition 2 in the LQG problem, the optimal control function of COSC of the extended state can be obtained as follows:

Proposition 4 ([10,23]). In the LQG problem, the optimal control function of COSC of the extended state is provided by

$$u^*(t, s) = -R^{-1} B^\top \left( \Psi \hat{s} + \Psi \mu \right), \tag{66}$$

where $\Psi(t)$ is the solution of the Riccati Equation (61).

Proof. The proof is shown in [10,23]. □

The optimal control function of COSC of the extended state (66) can be derived intuitively from that of ML-POSC (58). In ML-POSC, $K\hat{s}$ is the estimator of the stochastic extended state. In COSC of the extended state, because the stochastic extended state is completely observable, its estimator is provided by $\hat{s}$, which corresponds to $K = I$. By changing the definition of K from (57) to $K = I$, the partially observable Riccati Equation (62) is reduced to the Riccati Equation (61), and the optimal control function of ML-POSC (58) is reduced to that of COSC (66). As a result, the optimal control function of ML-POSC (58) can be interpreted as the generalization of that of COSC (66).
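This reduction can be checked in a few lines. The sketch below assumes, as a reading of the surrounding text rather than a quotation of it, that (62) differs from (61) only by a last term of the form $(I - K)^\top \Pi B R^{-1} B^\top \Pi (I - K)$, that both equations share the terminal condition $P$, and that the control (58) has the two-term form $-R^{-1}B^\top(\Pi K \hat{s} + \Psi\mu)$ with $\hat{s} = s - \mu$.

```latex
\begin{align*}
K = I
  &\;\Longrightarrow\;
  (I - K)^\top \Pi B R^{-1} B^\top \Pi (I - K) = O, \\
  &\;\Longrightarrow\;
  -\frac{d\Pi}{dt} = Q + A^\top \Pi + \Pi A - \Pi B R^{-1} B^\top \Pi,
  \qquad \Pi(T) = P, \\
  &\;\Longrightarrow\;
  \Pi(t) = \Psi(t) \quad \text{(same ODE and terminal condition as (61))}, \\
  &\;\Longrightarrow\;
  u^* = -R^{-1} B^\top \left( \Psi \hat{s} + \Psi \mu \right)
      = -R^{-1} B^\top \Psi s .
\end{align*}
```

The last line is the familiar linear state feedback of the completely observable LQG problem, which is why the memory-limited control can be read as its generalization.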

While the second term is the same between (58) and (66), the first term is different. The second term is the control of the expected extended state $\mu$, which does not depend on the realization. In contrast, the first term is the control of the stochastic extended state $\hat{s}$, which depends on the realization. The first term differs in two respects: (i) the estimators of the stochastic extended state in COSC and ML-POSC are provided by $\hat{s}$ and $K\hat{s}$, respectively, which is reasonable because ML-POSC needs to estimate the state from the memory; and (ii) the control gains of the stochastic extended state in COSC and ML-POSC are provided by $\Psi$ and $\Pi$, respectively. While $\Psi$ improves only control, $\Pi$ improves estimation as well as control.

6.5. Numerical Algorithm

In the LQG problem, the partial differential equations are reduced to the ordinary differential equations. The FP Equation (23) is reduced to (59) and (60), and the HJB Equation (28) is reduced to (61) and (62). As a result, the optimal control function (58) can be obtained more easily in the LQG problem.

The Riccati Equation (61) can be solved backwards from the terminal condition. In contrast, the partially observable Riccati Equation (62) cannot be solved in the same way as the Riccati Equation (61), as it depends on the forward equation of $\Lambda$ (60) through K (57). Because the forward equation of $\Lambda$ (60) depends on the backward equation of $\Pi$ (62) as well, they must be solved simultaneously.

A similar problem appears in the mean-field game and control, and numerous numerical methods have been developed to deal with it [33]. In this paper, we solve the system of (60) and (62) using the forward–backward sweep method, which computes (60) and (62) alternately [33,34]. In ML-POSC, the convergence of the forward–backward sweep method is guaranteed [34].
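As an illustration of this alternation, the following sketch runs a forward–backward sweep for a scalar state and a scalar memory, so that every matrix is 2×2. It rests on several assumptions that should be checked against the paper's equations: the covariance equation is taken as $\dot\Lambda = \sigma\sigma^\top + (A - BR^{-1}B^\top\Pi K)\Lambda + \Lambda(A - BR^{-1}B^\top\Pi K)^\top$, the partially observable Riccati equation as the standard Riccati right-hand side plus $(I-K)^\top\Pi BR^{-1}B^\top\Pi(I-K)$, and $K(\Lambda)$ as the Gaussian conditional-mean map. The explicit Euler scheme, the parameter values, and all function names are hypothetical.

```python
import math

I2 = [[1.0, 0.0], [0.0, 1.0]]


def mat_mul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def transpose(X):
    return [[X[j][i] for j in range(2)] for i in range(2)]


def combine(*terms):
    """Weighted sum of 2x2 matrices given as (coefficient, matrix) pairs."""
    return [[sum(c * X[i][j] for c, X in terms) for j in range(2)]
            for i in range(2)]


def estimator_gain(Lam):
    """K(Lambda) for scalar x and z: maps (x, z) to E[(x, z) | z] at zero mean."""
    return [[0.0, Lam[0][1] / Lam[1][1]], [0.0, 1.0]]


def forward_backward_sweep(A, BRB, S, Q, P, Lam0, T=1.0, n=200,
                           max_iter=50, tol=1e-9):
    """Alternate explicit-Euler passes for Lambda (forward) and Pi (backward).

    BRB stands for B R^{-1} B^T and S for sigma sigma^T (both assumed given).
    """
    dt = T / n
    Pi = [P] * (n + 1)                       # initial guess: Pi(t) = P
    for it in range(1, max_iter + 1):
        # Forward pass: covariance under the control implied by the current Pi.
        Lam = [Lam0]
        for k in range(n):
            gain = mat_mul(BRB, mat_mul(Pi[k], estimator_gain(Lam[k])))
            A_cl = combine((1.0, A), (-1.0, gain))
            dLam = combine((1.0, S), (1.0, mat_mul(A_cl, Lam[k])),
                           (1.0, mat_mul(Lam[k], transpose(A_cl))))
            Lam.append(combine((1.0, Lam[k]), (dt, dLam)))
        # Backward pass: modified Riccati equation from the terminal condition.
        new_Pi = [P] * (n + 1)
        for k in range(n, 0, -1):
            G = mat_mul(new_Pi[k], mat_mul(BRB, new_Pi[k]))
            I_minus_K = combine((1.0, I2), (-1.0, estimator_gain(Lam[k])))
            extra = mat_mul(transpose(I_minus_K), mat_mul(G, I_minus_K))
            dPi = combine((1.0, Q), (1.0, mat_mul(transpose(A), new_Pi[k])),
                          (1.0, mat_mul(new_Pi[k], A)), (-1.0, G), (1.0, extra))
            new_Pi[k - 1] = combine((1.0, new_Pi[k]), (dt, dPi))
        gap = max(abs(new_Pi[k][i][j] - Pi[k][i][j])
                  for k in range(n + 1) for i in range(2) for j in range(2))
        Pi = new_Pi
        if gap < tol:
            break
    return Pi, Lam, it, gap


# Hypothetical scalar example: extended state s = (x, z).
A = [[-0.5, 0.0], [1.0, 0.0]]     # state drift a = -0.5; memory reads kappa * h = 1
BRB = [[1.0, 0.0], [0.0, 1.0]]    # diag(b^2 / r, 1 / m)
S = [[0.25, 0.0], [0.0, 0.1]]     # diag(sigma^2, kappa^2 gamma^2 + eta^2)
Q = [[1.0, 0.0], [0.0, 0.0]]      # running cost on the state only
P = [[1.0, 0.0], [0.0, 0.0]]      # terminal cost on the state only
Lam0 = [[1.0, 0.0], [0.0, 1.0]]   # initial covariance (memory variance must be > 0)
Pi, Lam, n_iter, gap = forward_backward_sweep(A, BRB, S, Q, P, Lam0)
```

The returned `gap` is the sup-norm change of $\Pi$ in the last sweep, so it can be inspected to judge whether the iteration has settled for a given step size and horizon.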

8. Discussion

In this work, we propose ML-POSC, which is an alternative theoretical framework to the conventional POSC. ML-POSC first formulates the finite-dimensional and stochastic memory dynamics explicitly, then optimizes the memory dynamics considering the memory cost. As a result, unlike the conventional POSC, ML-POSC can consider memory limitation as well as incomplete information. Furthermore, because the optimal control function of ML-POSC is obtained by solving the system of HJB-FP equations, ML-POSC can be solved in practice even in non-LQG problems. ML-POSC can generalize the LQG problem to include memory limitation. Because estimation and control are not clearly separated in the LQG problem with memory limitation, the Riccati equation can be modified to the partially observable Riccati equation, which improves estimation as well as control. Furthermore, ML-POSC can provide a better result than the local LQG approximation in a non-LQG problem, as ML-POSC reduces the Bellman equation while maintaining non-LQG information.

ML-POSC is effective for the state estimation problem as well, which is a part of the POSC problem. Although the state estimation problem can be solved in principle by the Zakai equation [38,39,40], it cannot be solved directly, as the Zakai equation is infinite-dimensional. In order to resolve this problem, a particle filter is often used to approximate the infinite-dimensional Zakai equation as a finite number of particles [38,39,40]. However, because the performance of the particle filter is guaranteed only in the limit of a large number of particles, a particle filter may not be practical in cases where the available memory size is severely limited. Furthermore, a particle filter cannot take the memory noise and cost into account. ML-POSC resolves these problems, as it can optimize the state estimation under memory limitation.

ML-POSC may be extended from a single-agent system to a multi-agent system. POSC of a multi-agent system is called decentralized stochastic control (DSC) [41,42,43], which consists of a system and multiple controllers. In DSC, each controller needs to estimate the controls of the other controllers as well as the state of the system, which is essentially different from the conventional POSC. Because the estimation among the controllers is generally intractable, the conventional POSC approach cannot be straightforwardly extended to DSC. In contrast, ML-POSC compresses the observation history into the finite-dimensional memory, which simplifies estimation among the controllers. Therefore, ML-POSC may provide an effective approach to DSC. Indeed, the finite-state controller, whose underlying idea is similar to that of ML-POSC, plays a key role in extending POMDP from a single-agent system to a multi-agent system [22,44,45,46,47,48]. ML-POSC may be extended to a multi-agent system in a similar way as a finite-state controller.

ML-POSC can be naturally extended to the mean-field control setting [28,29,30] because ML-POSC is solved based on the mean-field control theory. Therefore, ML-POSC can be applied to an infinite number of homogeneous agents. Furthermore, ML-POSC can be extended to a risk-sensitive setting, as this is a special case of the mean-field control setting [28,29,30]. Therefore, ML-POSC can consider the variance of the cost as well as its expectation.

Nonetheless, more efficient algorithms are needed in order to solve ML-POSC with a high-dimensional state and memory. In the mean-field game and control, neural network-based algorithms have recently been proposed which can solve high-dimensional problems efficiently [49,50]. By extending these algorithms, it might be possible to solve high-dimensional ML-POSC efficiently. Furthermore, unlike the mean-field game and control, the coupling of HJB-FP equations is limited to the optimal control function in ML-POSC. By exploiting this property, more efficient algorithms for ML-POSC may be proposed [34].