Abstract

In this paper we propose a novel transform-domain steganography technique: hiding a message in the components of a linear combination of high-order eigenface vectors. By high order we mean eigenvectors responsible for dimensions with a low share of the overall image variance, which are usually related to high-frequency parameters of the image (details). We found that when the method was trained on sufficiently large data sets, image quality was nearly unaffected by modification of some of the linear combination coefficients used as PCA-based features. The proposed method is limited to facial images, but in the era of overwhelming influence of social media, the hundreds of thousands of selfies uploaded every day to social networks do not arouse any suspicion as a potential steganography communication channel. To the best of our knowledge, there is no description of any popular steganography method that uses the eigenfaces image domain. For this reason we have performed an extensive evaluation of our method, using at least 200,000 facial images for training and robustness evaluation of the proposed approach. The obtained results are very promising. Moreover, our numerical comparison with other state-of-the-art algorithms showed that eigenfaces-based steganography is among the most robust methods against compression attack. The proposed research can be reproduced because we use a publicly accessible data set and our implementation can be downloaded.

1. Introduction

Steganography is a technique of hidden communication. The very fact that messages pass between sender and recipient is kept secret by embedding the messages in inconspicuous containers. These may be either common files, for example images and videos, or unexpected media, such as geospatial data [1], network packets [2] and others [3]. In the digital era nearly any type of file may carry additional hidden data, but images are among the most popular carriers because the human visual system is unable to perceive the subtle changes introduced by the embedding process. There are many possible approaches to image-based steganography, and we discuss selected methods in the following subsection.

1.1. State-of-the-Art in Steganography

In the spatial domain, the best-known steganography technique is least significant bit (LSB) substitution, in which some bits of pixels are replaced with bits of a message. It may use various numbers of bits and mapping strategies; for example, in [4,5] the four least significant bits are substituted. The algorithm described in [6] uses a plane bit substitution method in which message bits are embedded into the pixel values of an image. A steganography transformation machine is proposed for solving binary operations that manipulate the original image with the help of LSB-operator-based matching. Ref. [7] uses a five-pixel-pair differencing technique that combines LSB and pixel value differencing (PVD) steganography. In [8] the LSB message encoding is preceded by a CRC-32 checksum; the codeword is then compressed with gzip just before being encrypted with AES, and is finally added to encrypted header information for further processing. While the encrypted data are embedded, the Fisher–Yates shuffle algorithm is used to select the next pixel location.

Least significant bit replacement is among the most widely used spatial-domain techniques, whereas methods based on the discrete cosine transform (DCT) [9] and the discrete wavelet transform (DWT) [10] are the major choices in the frequency domain. Additionally, many steganography techniques use singular value decomposition (SVD) to embed a secret message. These techniques conceal data in the right singular vectors, the left singular vectors, the singular values or combinations of these, in the spatial or transform domain, with satisfactory resistance to various attacks [11,12,13,14]. Paper [15] describes image steganography and [16] video steganography for face recognition in trusted and secure authentication, applying principal component analysis (PCA), namely the eigenfaces method. Those approaches include signcryption as an additional security measure.

An opposite approach to image steganography is presented in paper [17], whose authors propose a new generative technique that does not require any additional image to cover the secret text. Instead, the image is created from scratch on the basis of the text to be sent securely.

Other steganographic techniques are based on a key generation process. In paper [18] the authors used a new method of data hiding based on Catalan numbers and Dyck words. The hidden message is generated from the data carrier and an adequately complex stego key. An important characteristic of that method is that the data carrier retains its original shape, without supplements or modifications. Paper [19] presents an effective method of encryption and decryption of images in multi-party communications. Encryption is based on the weighted Moore–Penrose inverse over the constant matrix.

There are many documents describing state-of-the-art steganography methods. A comprehensive discussion about combining individual’s biometric characteristics with steganography may be found in paper [20]. The more general surveys are presented in [21,22,23,24].

1.2. Motivation of This Paper

In this paper we propose a novel transform-domain technique in which the message is hidden in the components of a linear combination of high-order eigenface vectors. By high order we mean eigenvectors responsible for dimensions with a low share of the overall image variance, which are usually related to high-frequency parameters of the image (details). They seem to have a marginal influence on image quality, especially if the eigenfaces method is trained on sufficiently large data sets.

The choice of facial images as the medium is motivated by the overwhelming influence of social media, to which hundreds of thousands of photos are uploaded every day. A high percentage of them are selfies, which do not arouse any suspicion as a potential steganography communication channel.

The main difference between the proposed algorithm and existing solutions is that our method uses only certain fragments of the image, namely the faces present in the picture. Consequently, after hiding a secret, the rest of the image besides the faces remains unmodified. As a result there are no global histogram shifts in the image, which are a main indicator of potential image modification. Moreover, we wanted our method to be highly robust to various common image transformations. We wanted to obtain bit-level accuracy of the encoded data rather than pixel-level accuracy as in [14], so our priority was robustness rather than carrier capacity. The proposed method can therefore be used not only for watermarking but also for communication through a channel that does not preserve the integrity of the message, for example when an image is uploaded to social media and its quality is altered.

To the best of our knowledge, there is no description of any popular steganography method that uses the eigenfaces image domain. For this reason we have performed an extensive evaluation of our method, using at least 200,000 facial images for training and robustness evaluation of the proposed approach. The proposed research can be reproduced because we use a publicly accessible data set and our implementation can be downloaded from the GitHub repository (https://github.com/browarsoftware/EigenfacesSteganography, accessed on 30 January 2021).

The paper is composed of five sections. In Section 2 we present our steganography method, the data set we used for tests and the evaluation metrics. The results of an extensive validation of the proposed method are presented in Section 3. In Section 4 and Section 5 we discuss the obtained results, presenting the advantages and disadvantages of eigenfaces-based steganography and potential solutions that can be used to overcome its drawbacks.

2. Materials and Methods

In this section we propose our novel method, present the data set we used for training and validation and explain robustness tests we have done.

2.1. Eigenfaces and Eigenfaces-Based Steganography

An eigenface is a k-dimensional vector of real values that represents the features of a pre-aligned facial image. Eigenfaces are based on principal component analysis (PCA). Let us suppose that we have a set of l images $I_1, I_2, \ldots, I_l$ with uniform dimensionality $n \times m$. Each face image, initially a two-dimensional matrix, is “flattened” by placing its columns one below another, thereby becoming a single column vector. Then we use PCA to perform variance analysis and to recalculate the coordinates of those vectors in the PCA coordinate system, whose axes are ordered from those that represent the highest variance to those that represent the lowest variance. Let us assume that we have a matrix D with dimensionality $(n \cdot m) \times l$ in which each column is a flattened image:

$$D = [I_1, I_2, \ldots, I_l] \tag{1}$$

Next we calculate a column vector M (the so-called mean face) in which each row is the mean value of the corresponding row of matrix D:

$$M_j = \frac{1}{l} \sum_{i=1}^{l} I_{i,j}, \quad j = 1, \ldots, n \cdot m \tag{2}$$

where $I_{i,j}$ is the j-th pixel of the i-th image. This mean face is then subtracted from each column of matrix D to calculate a new matrix called $D'$:

$$D' = [I_1 - M, I_2 - M, \ldots, I_l - M] \tag{3}$$

Then a covariance matrix is created:

Because C is symmetric and positive definite, all its eigenvalues are real and positive. Eigenfaces E are calculated as:

where are eigenvectors of C ordered from the highest to the lowest eigenvalue.

In order to generate eigenfaces-based features of face image one has to perform following operation:

The inverse procedure that recalculates image vector coordinates to original coordinate system is:

We can use the first k eigenvectors, where . In this case and keeps at least the percentage of variance equal to the scaled cumulative sum of the eigenvalues corresponding to the first k eigenvectors. In other words, when , vector represents a “compressed” facial image with respect to overall variance. features are the coefficients of a linear combination of E. Coefficients with lower indices correspond to dimensions with higher variance.

Eigenface-based feature calculation is performed after designation of in (5). We have to subtract mean vector M from the face image in the same manner, as it was performed in (3). Image may be replaced by any face image, also not included in D, however it has to have the same resolution as images in D, namely . When is not from data set D, then we can safely assume that .
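The construction described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the function names are hypothetical, the toy data are random rather than faces, and the 1/l normalisation of the covariance matrix is an assumption.

```python
import numpy as np

def fit_eigenfaces(D):
    """D: (pixels, l) matrix whose columns are flattened face images."""
    M = D.mean(axis=1, keepdims=True)        # mean face as a column vector
    D_centered = D - M                       # subtract the mean face from every column
    # Pixel-by-pixel covariance; the 1/l normalisation is an assumption.
    C = (D_centered @ D_centered.T) / D.shape[1]
    eigenvalues, E = np.linalg.eigh(C)       # eigh returns ascending eigenvalues
    order = np.argsort(eigenvalues)[::-1]    # reorder from highest to lowest eigenvalue
    return M.ravel(), E[:, order], eigenvalues[order]

def to_features(image_vec, M, E, k=None):
    """Project a flattened face onto the first k eigenfaces."""
    E_k = E if k is None else E[:, :k]
    return E_k.T @ (image_vec - M)

def from_features(features, M, E):
    """Inverse transform: rebuild the flattened image from its coefficients."""
    k = features.shape[0]
    return E[:, :k] @ features + M

rng = np.random.default_rng(0)
D = rng.random((64, 200))                    # toy data: 200 "images" of 8 x 8 pixels
M, E, eigenvalues = fit_eigenfaces(D)
face = D[:, 0]
full = from_features(to_features(face, M, E), M, E)
compressed = from_features(to_features(face, M, E, k=16), M, E)
```

With all eigenvectors the round trip reproduces the input up to floating-point error, while keeping only the first k coefficients gives the "compressed" image discussed above.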

The idea of eigenface-based steganography is to replace range of coefficients with a binary encoded message s with the length o. j is an offset from the beginning of the eigenface-based features vector. The message is stored by changing original values of linear combination to binary values: where represents 0, represents 1 and is a scaling parameter. Transformation of original binary message s into values of message , which is directly inserted into eigenfaces coefficients, goes as follows:

where is i-th binary coefficient of vector s that contains a message to hide. The inverse procedure is:

where round denotes rounding to the closest integer.
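The conversion between message bits and coefficient values can be sketched as follows. The exact mapping of Equations (8) and (9) is not reproduced here: the sketch assumes that bit 0 is stored as -1/alpha and bit 1 as +1/alpha, and all helper names are hypothetical. The value 20 for the scaling parameter is the one reported in Section 3.

```python
import numpy as np

ALPHA = 20.0  # scaling (divider) parameter; 20 is the value reported in Section 3

def bytes_to_bits(message: bytes) -> np.ndarray:
    """Unpack a byte string into a vector of 0/1 values."""
    return np.unpackbits(np.frombuffer(message, dtype=np.uint8))

def bits_to_bytes(bits: np.ndarray) -> bytes:
    """Pack a vector of 0/1 values back into a byte string."""
    return np.packbits(bits.astype(np.uint8)).tobytes()

def bits_to_coefficients(bits, alpha=ALPHA):
    # Assumed mapping: bit 0 -> -1/alpha, bit 1 -> +1/alpha.
    return (2.0 * bits.astype(np.float64) - 1.0) / alpha

def coefficients_to_bits(values, alpha=ALPHA):
    # Undo the scaling, round to the closest integer and clamp to {0, 1}.
    bits = np.rint((np.asarray(values) * alpha + 1.0) / 2.0)
    return np.clip(bits, 0, 1).astype(np.uint8)

secret = b"secret"
coefficients = bits_to_coefficients(bytes_to_bits(secret))
rng = np.random.default_rng(0)
disturbed = coefficients + rng.uniform(-0.04, 0.04, size=coefficients.size)
recovered = bits_to_bytes(coefficients_to_bits(disturbed))
```

Under this assumed mapping a stored value decodes correctly as long as the disturbance of the coefficient stays below 1/alpha in magnitude, which is why the rounding step gives some tolerance to image transformations.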

The offset value j and the maximum value of secret length o need to be determined. It is a subject of discussion in following sections. The message hiding algorithm is presented in Algorithm 1. Message recovering algorithm is presented in Algorithm 2.

| Algorithm 1: Message encoding algorithm. |

| Data: J—image containing a face, |

| s—message to hide with length o bits, |

| j—offset from which the coefficients will be replaced by message, |

| —divider parameter. |

| Result: Image with hidden data. |

| 1. Extract face image from J (Figure 1A); |

| 2. Perform aligning of extracted face and store it in matrix ; |

| 3. Generate from using (6) (Figure 1B,D); |

| 4. Starting from index j replace original o values in by , which are scaled coefficients that represents binary message data (8) (Figure 1E,F); |

| 5. By application of (7) generate using modified (Figure 1G); |

| 6. Insert into J replacing original face, image is created (Figure 1H); |

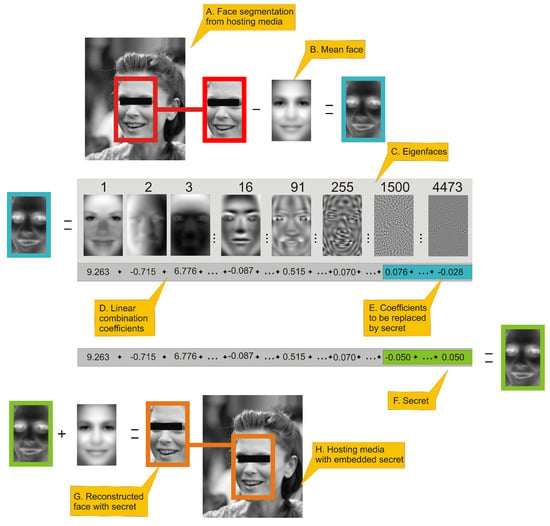

Figure 1.

This figure presents an outline of the eigenface-based steganography secret encoding framework. (A.) A face image is extracted from an image; (B.) a mean face is subtracted from the face image. (C.) presents selected eigenfaces visualized as 2D images. (D.) By applying Equation (6) we can generate the eigenfaces-based features of a face, which are the coefficients of a linear combination. The values in the sum (D.) are the actual coefficients of the face in the turquoise frame. High-order coefficients (E.) of that linear combination have only a fractional influence on the visual quality of the reconstructed image and can be used to hide the secret. Hiding can be done simply by replacing those coefficients with the rescaled secret using Equation (8). (F.) is the linear combination with its high-order coefficients replaced by the secret. That linear combination is used to reconstruct the face image using Equation (7), which is shown as the face inside the green frame. The last two steps are: (G.) adding the mean face to the face with hidden data, and (H.) inserting the face image into the original hosting media.

Figure 1 presents the procedure of encoding a secret. All numerical values presented in this figure are justified in Section 3. The person in the image has been anonymized. The number above each eigenface is its index. Eigenfaces are ordered by decreasing corresponding eigenvalues. As can be seen, the first several eigenfaces represent global properties of the image (e.g., lighting), while subsequent eigenfaces are responsible for high-frequency details.

| Algorithm 2: Message decoding algorithm. |

| Data: J—image containing a face, |

| j—offset from which the coefficients have been replaced by message, |

| —divider parameter |

| Result: s—decoded message with length o bits. |

| 1. Extract face image from J; |

| 2. Perform aligning of extracted face and store it in matrix ; |

| 3. Generate from using (6); |

| 4. Starting from index j extract o values from which are rescaled message’s values (), then restore the binary message by rescaling with parameter (9); |

The procedure of decoding a secret, which is presented in Figure 2, is very similar to process of encoding.

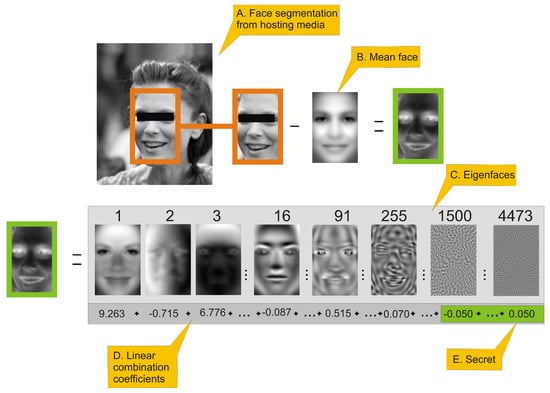

Figure 2.

This figure presents an outline of the eigenfaces-based steganography secret decoding framework. The person in the image has been anonymized. (A.) A face image is extracted from an image with an embedded secret; (B.) a mean face is subtracted from the face image. (C.) presents selected eigenfaces visualized as 2D images. The number above each eigenface is its index (these are the same eigenfaces as in Figure 1). (D.) By applying Equation (6) we can generate the eigenface-based features of a face, which are the coefficients of a linear combination. The values in the sum (D.) are the actual coefficients of the face in the orange frame. High-order coefficients (E.) of that linear combination are the secret data scaled by Equation (8). In order to recover the original data we have to apply Equation (9) to those coefficients.

2.2. The Data Set

In order to generate eigenfaces with strong descriptive power and to validate our approach, we needed a sufficiently large collection of faces. Among the largest publicly available face repositories we chose the Large-scale CelebFaces Attributes (CelebA) Dataset [25], which may be downloaded from the Internet (http://mmlab.ie.cuhk.edu.hk/projects/CelebA.html, accessed on 1 January 2021). It consists of 202,599 images of celebrities, actors, sportspersons etc. In this research we used the aligned and cropped version of those images. Each of the aligned and cropped photos has the same resolution, and the face is centered so that the eyes of each person are located in nearly the same position. There is no information on how the alignment was done; however, it can be very closely reproduced with the Histogram of Oriented Gradients (HOG) method [26]. The face position estimator can be created using the Python dlib library's implementation of the work of Kazemi et al. [27] with the face landmark data set (https://github.com/davisking/dlib-models, accessed on 1 January 2021) trained on [28]. In order to reduce the complexity of further computations, we limited the size of images to resolution , cropping all regions of the image except the face itself. For the same reason we also converted the images from RGB to grayscale. Note, however, that the proposed steganography approach may also be applied to RGB data, because eigenfaces are calculated on multiple colour channels in the same way as on a single channel. Nonetheless, this discussion is beyond the scope of this paper.

2.3. Comparison of Covariance Matrices

The very heart of eigenfaces approach is preparation of a representative data set that is used to calculate linear transform from original coordinates to space (obtained by principal component analysis). Theoretically the more face samples we take (provided that the data set covers representative sample of images for future encoding), the better variance analysis we get. However, given that we operate on personal computers with limited RAM, we have to keep in mind that solving eigenvalue problem is complex and time demanding task, especially for relatively large matrices (i.e., or larger). Due to this we wanted to estimate the size of training set above which the covariance matrix will not change much. In order to do so, we compared the geodesic distance between covariance matrix generated from whole training data set and covariance matrices generated from subsets of validation data set of different sizes where are subsets of validation data set.

In order to calculate covariance matrix we used formula:

where has the same dimension no matter how many images l were used in calculation.

Because covariance matrices are symmetric positive definite, we can measure the distance between them using the Log-Euclidean Distance (LED) [29]. Let us assume that A and B are symmetric positive definite. The geodesic distance between A and B can be expressed in the domain of matrix logarithms [30] as:

$$LED(A, B) = \| \log(A) - \log(B) \|_F \tag{11}$$

In the above equation $\| \cdot \|_F$ is the Frobenius norm, which is calculated for an n by m matrix A as [31]:

$$\| A \|_F = \sqrt{\sum_{i=1}^{n} \sum_{j=1}^{m} |a_{ij}|^2} \tag{12}$$

Let us assume that C and D are square matrices. A matrix C is a logarithm of D when:

$$e^{C} = D \tag{13}$$

where the matrix exponential is defined as:

$$e^{C} = \sum_{k=0}^{\infty} \frac{1}{k!} C^{k} \tag{14}$$

Any non-singular matrix has infinitely many logarithms. As the covariance matrix does not have negative eigenvalues, we can use method described in [32] to calculate the logarithm.
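The Log-Euclidean Distance can be sketched with NumPy alone. Note that this sketch does not use the logarithm algorithm of [32]; for a symmetric positive definite matrix it instead takes the principal logarithm through an eigendecomposition, which is a swapped-in but standard technique. The function names are hypothetical.

```python
import numpy as np

def spd_logm(A):
    """Principal logarithm of a symmetric positive definite matrix via eigendecomposition."""
    w, V = np.linalg.eigh(A)              # A = V diag(w) V^T with w > 0
    return (V * np.log(w)) @ V.T          # V diag(log w) V^T

def log_euclidean_distance(A, B):
    """Frobenius norm of the difference of the matrix logarithms."""
    return float(np.linalg.norm(spd_logm(A) - spd_logm(B), ord="fro"))

rng = np.random.default_rng(0)
# Toy covariance matrices estimated from samples of different sizes (8 variables each).
A = np.cov(rng.standard_normal((8, 500)))
B = np.cov(rng.standard_normal((8, 5000)))
distance = log_euclidean_distance(A, B)
```

Both matrices have the same dimension regardless of the number of samples, which is what makes the comparison of covariance matrices built from subsets of different sizes possible.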

Another approach to assessing the influence of the correlation matrix size on the quality of reconstruction from a limited number of PCA coefficients is based on two measures: the averaged mean square error (MSE) (15) between the actual ($I$) and reconstructed ($I'$) image, and the averaged Pearson correlation coefficient (CC) (16) between the actual and PCA-compressed image. The mean square error between the actual and reconstructed image is defined as:

$$MSE(I, I') = \frac{1}{N} \sum_{j=1}^{N} (I_j - I'_j)^2 \tag{15}$$

where N is the number of pixels in the image. The Pearson correlation coefficient (CC) between the actual and reconstructed image is defined as:

$$CC(I, I') = \frac{cov(I, I')}{\sigma_I \sigma_{I'}} \tag{16}$$

where $cov(I, I')$ is the covariance coefficient between $I$ and $I'$, and $\sigma_I$, $\sigma_{I'}$ are the standard deviations of $I$ and $I'$.
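Both measures are straightforward to compute for a pair of images; a minimal sketch with hypothetical function names follows. Averaging over a data set, as done in the paper, would simply mean taking the mean of these values over all image pairs.

```python
import numpy as np

def mse(actual, reconstructed):
    """Mean square error between two images of the same shape."""
    a = np.asarray(actual, dtype=np.float64)
    b = np.asarray(reconstructed, dtype=np.float64)
    return float(np.mean((a - b) ** 2))

def pearson_cc(actual, reconstructed):
    """Pearson correlation coefficient between the pixels of two images."""
    a = np.asarray(actual, dtype=np.float64).ravel()
    b = np.asarray(reconstructed, dtype=np.float64).ravel()
    return float(np.corrcoef(a, b)[0, 1])

x = np.array([[0.0, 1.0], [2.0, 3.0]])
error = mse(x, x + 2.0)             # every pixel differs by 2
correlation = pearson_cc(x, 2.0 * x + 1.0)
```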

To better visualize relationship of LED and size of covariance matrix, we can also use relative values of LED coefficient:

Relative MSE and Relative CC may be calculated similarly as in (17).

LED geodesic distances were compared with averaged mean square error (MSE) and averaged Pearson correlation coefficient (CC) between actual and reconstructed images from validation data set generated by PCA. The goal of this test was to check if the geodesic distance corresponds to averaged MSE and CC of PCA-compressed data.

2.4. Robustness Tests

We tested the robustness of proposed steganography method to common transformations. Each test has been applied to the image that contained hidden data.

- Rotation of image about its centre element using third-order spline interpolation.

- Salt and pepper—replacing given number of randomly chosen pixels with either 0 (black) or 255 (white) value.

- JPEG compression with various quality settings [33].

- Linear scaling with bicubic pixels interpolation.

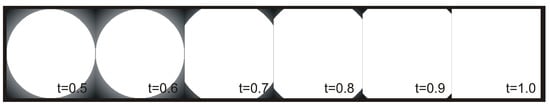

- Image cropping—for obvious reasons the eigenfaces method is very sensitive to image cropping, however it seems to be resistant to mixing encoded signal with an original image. We can do it with following steps. At first we create a matrix , which elements are defined using following formulas:We can use that matrix to mix the original image I with image with encoded data E by linearly scaling the amount of E using threshold parameter t:

Figure 3 presents how changes the shape of circular elements when the value of threshold t increases.
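Of the disturbances listed above, salt and pepper is the simplest to reproduce. The sketch below assumes an 8-bit grayscale image and replaces a given number of randomly chosen pixels with either 0 or 255; the function name and the seeded generator are illustrative choices, not taken from the paper's code.

```python
import numpy as np

def salt_and_pepper(image, n_pixels, rng=None):
    """Return a copy of `image` with `n_pixels` random pixels set to 0 or 255."""
    rng = np.random.default_rng() if rng is None else rng
    noisy = image.copy()
    flat = noisy.reshape(-1)                       # view on the copy, not on the input
    positions = rng.choice(flat.size, size=n_pixels, replace=False)
    flat[positions] = rng.choice(np.array([0, 255], dtype=image.dtype), size=n_pixels)
    return noisy

image = np.full((16, 16), 128, dtype=np.uint8)     # toy mid-gray image
noisy = salt_and_pepper(image, 40, np.random.default_rng(0))
changed = noisy != image
```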

Messages retrieved from disturbed images were compared with the original message using following similarity coefficients or measures:

- Binary coefficient (BC) that equals 0 when both messages are identical and 1 otherwise.

- Levenshtein distance (LD) [34] which is a measure of the similarity between two strings. The distance is the number of deletions, insertions, or substitutions required to transform one into another. The greater the Levenshtein distance, the more divergent those strings are [35].

- Sørensen–Dice coefficient (DSC) [36]: $DSC(X, Y) = \frac{2 |X \cap Y|}{|X| + |Y|}$, where $X$, $Y$ are binary vectors and $|v|$ is the cardinality of binary vector v.
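The three measures above can be sketched as follows. The function names are hypothetical, and the Sørensen–Dice variant shown assumes that the cardinality of a binary vector is its number of ones and that the intersection counts positions where both vectors are 1.

```python
import numpy as np

def binary_coefficient(a, b):
    """0 when both messages are identical, 1 otherwise."""
    return 0 if a == b else 1

def levenshtein(a, b):
    """Number of deletions, insertions or substitutions needed to turn a into b."""
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            current.append(min(previous[j] + 1,                # deletion
                               current[j - 1] + 1,             # insertion
                               previous[j - 1] + (ca != cb)))  # substitution
        previous = current
    return previous[-1]

def dice(x, y):
    """Sørensen–Dice coefficient of two binary vectors (assumed set interpretation)."""
    x = np.asarray(x, dtype=bool)
    y = np.asarray(y, dtype=bool)
    total = int(x.sum()) + int(y.sum())
    if total == 0:
        return 1.0                                             # two empty sets are identical
    return 2.0 * int(np.logical_and(x, y).sum()) / total

example_ld = levenshtein("kitten", "sitting")
example_dsc = dice([1, 1, 0, 0], [1, 0, 1, 0])
```

Note the different orientation of the measures: BC and LD grow with the number of errors, whereas DSC equals 1 for identical messages and decreases as they diverge.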

We have also used the following two metrics to compare the results obtained by our solution with state-of-the-art methods: Pearson correlation (CC) and peak signal-to-noise ratio (PSNR). CC is used to evaluate the similarity between the original secret and the recovered secret message, and PSNR in dB is used to evaluate the similarity of the original image and the stegoimage:

$$PSNR = 10 \log_{10} \frac{MAX^2}{MSE}$$

where $MAX$ is the maximum pixel value of the original image (in our case 255) and $MSE$ is the mean square error between the stegoimage and the original image.
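A minimal sketch of the PSNR computation, assuming 8-bit images with a maximum pixel value of 255 (the function name is hypothetical):

```python
import numpy as np

def psnr(original, stego, max_value=255.0):
    """Peak signal-to-noise ratio in dB between an original image and a stegoimage."""
    original = np.asarray(original, dtype=np.float64)
    stego = np.asarray(stego, dtype=np.float64)
    mse = np.mean((original - stego) ** 2)
    if mse == 0:
        return float("inf")                 # identical images
    return float(10.0 * np.log10(max_value ** 2 / mse))

a = np.zeros((4, 4), dtype=np.uint8)
b = np.ones((4, 4), dtype=np.uint8)         # every pixel differs by 1, so MSE = 1
value = psnr(a, b)
```

Higher values mean that the stegoimage is closer to the original.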

2.5. Hiding Data in Larger Images

When the facial image is a part of larger photo (which is true in most cases), it should first be extracted, then encoded and decoded using eigenfaces and finally inserted back into the photo. As the modified face is not identical to the original, it may be possible to spot visual artifacts in a rectangular region containing a face. In order to blur the manipulation, we can use the clipping procedure described in Equations (18)–(21). Then, step 5 of the message encoding algorithm (Algorithm 1) is extended in following manner:

The algorithm has one additional parameter t (threshold from (20)). The message decoding algorithm (Algorithm 2) remains unchanged.

3. Results

The proposed solution was implemented in Python 3.8 (although we see no obstacles to running it on earlier versions of the language). Among the most important packages we used was OpenCV-python 4.1.2.30 for general-purpose image processing. For training and evaluation of the algorithms we used a PC equipped with an Intel i7-9700F 3.00 GHz CPU, 64 GB RAM and an NVIDIA GeForce RTX 2060 GPU running Windows 10, and a second PC with a similar hardware architecture but with 128 GB RAM, running Linux.

We used data set described in Section 2.2 to evaluate this research. The faces data set was divided into halves. The first half (101,299 faces) was used as the training data set; the second half (101,300 faces) was a validation/test data set.

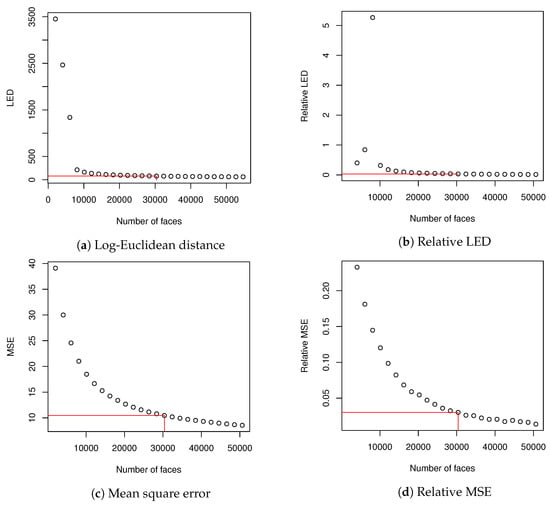

At first we estimated how many images we should use to create eigenfaces. In order to do so, we compared covariance matrices using methodology described in Section 2.3. To create (9) matrix, we used the whole validation dataset. In order to create matrices, we used training dataset that was divided into subsets. Subset with index a contained faces (2025 is about 2% of training dataset), where .

 and were compared using the Log-Euclidean Distance (11). This was possible because both matrices had identical dimensions. Then, for each , every image in training data set was encoded and then decoded using eigenfaces that explained at least of variance; as a result an image was created. MSE (15) and CC (16) were calculated for and corresponding (they were calculated for images from the test data set because were generated from validation data set). Averaged values of MSE and CC showed how well eigenfaces of various described the data set. We also calculated relative values of LED and MSE according to Equation (17). The CC coefficient had a high value from the beginning and did not change much, so we skipped the calculation of relative CC. When we used 30,375 images to create the correlation matrix for PCA, both relative LED and relative MSE dropped below . Based on this fact, we decided that this might be a sufficient size of the training data set to evaluate our methodology. Moreover, the eigendecomposition of a matrix could be calculated in a reasonable time, and a further increase of the data set did not change LED and averaged MSE much. We have marked our choice of data set size in Table 1 with two horizontal lines.

Table 1.

This table presents values of Log-Euclidean Distance (LED), mean square error (MSE) and Pearson correlation (CC) coefficients calculated from the test data set. In order to calculate MSE and CC for each each image in training data set is encoded and then decoded using eigenfaces that explain at least of variance.

Results from Table 1 (beside CC, which did not change much during the experiment) are visualized in Figure 4.

There was a limited amount of data to be hidden within eigenfaces, which were constrained the most by the face image resolution that was used to produce matrix D (1) and then (5). The second constraint was distribution of variance among eigenfaces, the influence of which on the capacity of the medium is discussed later on.

Typically, steganography algorithms are evaluated on a set of benchmark images such as Lena, Pepper, Airplane, etc. In our case, however, eigenfaces-based steganography operates only on face data, so we need a different validation data set. The robustness of the proposed steganography algorithm was evaluated with the methodology described in Section 2.4. We used a training data set containing 30,375 faces and a validation data set with 101,300 faces. The scaling parameter in binary data encoding was arbitrarily set to 20.

After applying PCA, we calculated the number of dimensions that describe the variance in the training data set (consisting of 30,375 faces): 0.75 of the variance was explained by 15 dimensions, 0.9 by 90 dimensions, 0.95 by 254 dimensions, 0.99 by 1499 dimensions and finally 0.999 by 4473 dimensions. Using a larger number of dimensions for image encoding may introduce high-frequency noise caused by statistically insignificant data fluctuations in the training data set. We can encode data in any eigenface coefficients between the first and the 4473rd value; however, changes in the linear combination coefficients that are more important for explaining variance will be clearly visible in the encoded image. Because of that we decided to modify the coefficients between indices 1499 and 4473. In this configuration, with one bit stored per coefficient, the potential maximal capacity of the image is $(4473 - 1499)/8 \approx 371$ bytes. In further tests we considered the following message lengths: 18 bytes (∼5% of capacity), 37 bytes (∼10% of capacity), 87 bytes (∼23% of capacity), 174 bytes (∼47% of capacity) and 370 bytes (∼100% of capacity). We chose those values for convenience of calculation; their distribution also makes it possible to plot the performance of the proposed steganography method clearly as a function of the robustness test parameters. Five unevenly distributed message lengths are enough to visualize the general characteristics of the proposed method; it is of course possible to evaluate the method using more sample points, but this would not add much new information. Additionally, evaluating such a large validation data set (approximately 100,000 images) took from 24 to more than 36 h for each robustness test on the hardware we used.
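The numbers in this paragraph can be checked with a short calculation. The sketch below assumes one message bit per coefficient; `components_for_variance` is a hypothetical helper that shows how the number of dimensions explaining a given share of variance can be read from eigenvalues sorted in descending order, and the eigenvalues passed to it here are toy values.

```python
import numpy as np

def components_for_variance(eigenvalues, fraction):
    """Smallest k such that the first k eigenvalues explain at least `fraction` of variance."""
    explained = np.cumsum(eigenvalues) / np.sum(eigenvalues)
    return int(np.searchsorted(explained, fraction) + 1)

# Coefficient range chosen in the text: from 0.99 to 0.999 of explained variance.
first_index, last_index = 1499, 4473
capacity_bytes = (last_index - first_index) / 8        # one bit per coefficient

# Share of the capacity used by each tested message length, in percent.
shares = {length: round(100 * length / capacity_bytes)
          for length in (18, 37, 87, 174, 370)}

toy_k = components_for_variance([5.0, 3.0, 1.0, 1.0], 0.75)
```

The resulting capacity is 371.75 bytes, and the rounded shares reproduce the percentages quoted above for the five message lengths.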

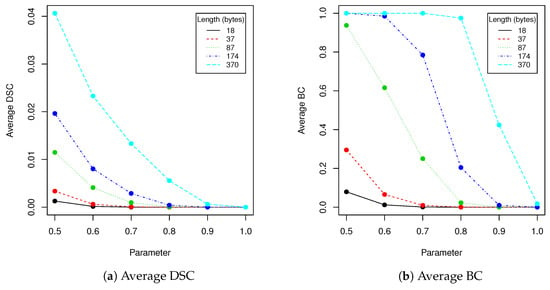

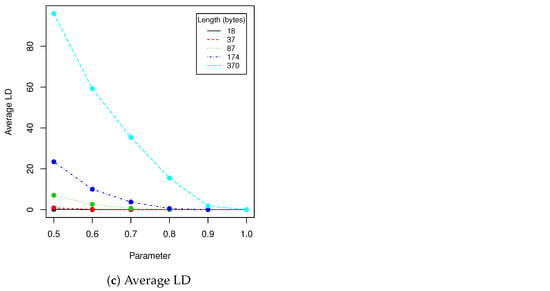

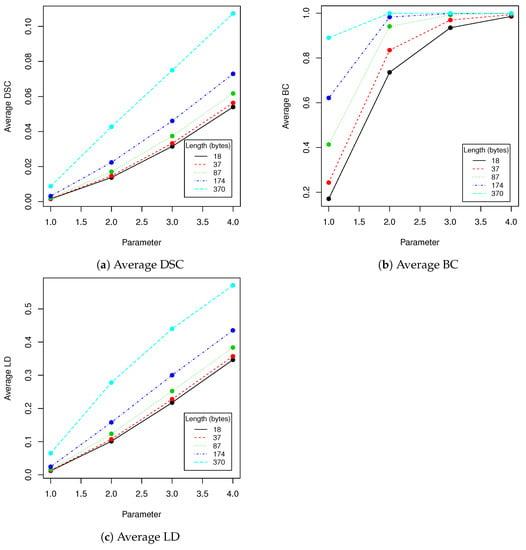

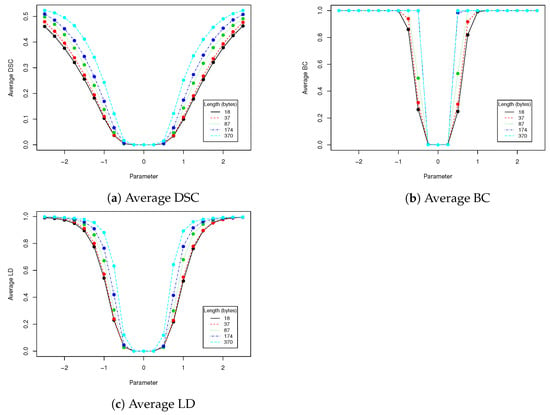

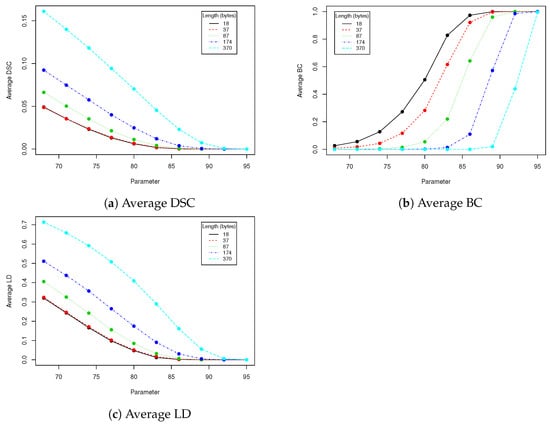

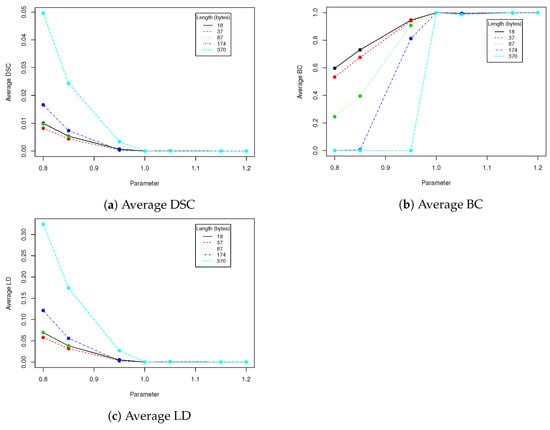

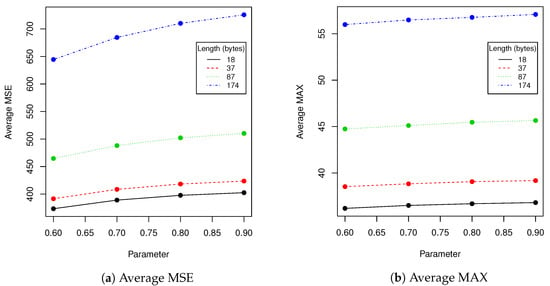

In robustness tests, besides various parameters described in Section 2.4, we used five mentioned lengths of encoded messages (18, 37, 87, 174 and 370). Obtained results are presented in Table 2, Table 3, Table 4, Table 5 and Table 6 and Figure 5, Figure 6, Figure 7, Figure 8 and Figure 9 using averaged values of Binary coefficient (BC), Levenshtein distance (LD) and Sørensen–Dice coefficient (DSC) on all images from validation data set.

Table 3.

This table presents evaluation results of robustness test of salt and pepper. Values in DSC, BC and LD are averaged on whole validation data set. We have used various values of noise level and various lengths of encoded data.

Table 4.

This table presents evaluation results of robustness test of rotation. Values in Sørensen–Dice coefficient (DSC), binary coefficient (BC) and Levenshtein distance (LD) are averaged on whole validation data set. We have used various values of rotation angle and various lengths of encoded data.

Table 5.

This table presents evaluation results of robustness test of JPEG compression. Values in DSC, BC and LD are averaged on whole validation data set. We have used various values of JPEG quality parameter and various lengths of encoded data.

Table 6.

This table presents evaluation results of robustness test of scaling. Values in DSC, BC and LD are averaged on whole validation data set. We have used various scaling factors and various lengths of encoded data.

Figure 5.

This figure presents visualization of data from Table 2 (clipping).

Figure 6.

This figure presents visualization of data from Table 3 (salt and pepper).

Figure 7.

This figure presents visualization of data from Table 4 (rotation).

Figure 8.

This figure presents visualization of data from Table 5 (JPEG compression).

Figure 9.

This figure presents visualization of data from Table 6 (scaling).

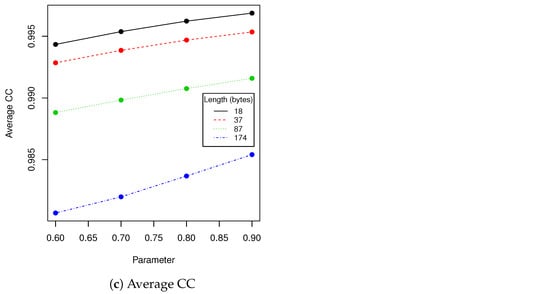

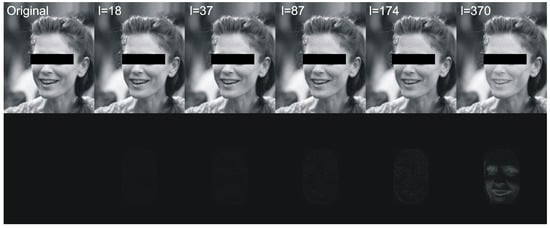

Next we have tested performance of algorithms described in Section 2.1 and Section 2.5 using various lengths of encoded messages and t parameter. We have calculated following statistics between original image and image with hidden data: averaged MSE, averaged maximal difference of pixels and averaged Pearson correlation coefficient of pixels. Results are presented in Table 7 and Figure 10. Figure 11 visualizes example differences between original image and image with hidden data. In some tables we have skipped certain zero-filled ranges in order to make results shorter and more comprehensible for reader. The larger ranges of values are presented in figures.

Table 7.

This table presents evaluation of performance of algorithms described in Section 2.1 and Section 2.5 using various length of encoded messages and values of parameter t. We have calculated following statistics between original image and image with hidden data: averaged MSE, averaged maximal difference of pixels and averaged Pearson correlation coefficient of pixels.

Figure 10.

This figure presents visualization of data from Table 7 (prototype evaluation).

Figure 11.

This figure shows an exemplary original image and images with hidden data of various lengths (first row) and differences between original image and images with hidden data of various lengths (bottom row). The threshold parameter for mixing (19) was set to .

The proposed method has been compared with following contemporary algorithms [11,12,13,14] in terms of CC and PSNR—see Table 8. We have evaluated robustness of the proposed steganography method (PM) against compression attack, which seems to be most common scenario in case of publishing stego images in social media.

Table 8.

This table presents comparison of Pearson correlation and peak-signal-to-noise-ratio (PSNR) for proposed and state-of-the-art methods. The number beside the proposed method (PM) is the length of the secret.

4. Discussion

For the reasons described in Section 3, we have selected a training data set containing 30,375 faces to generate eigenfaces. This value might have been different if the calculation had been done on images with a different resolution or on a different data set; however, the reasoning remains unchanged. In order to make eigenfaces descriptive enough for a particular resolution, we need to take a data set for which Relative LED (and/or Relative MSE) is below a certain threshold. In our case seems to be an adequate value: as can be seen in Figure 2, it introduces a plateau on the Relative MSE and Relative LED plots. Taking more faces to generate the covariance matrix would not change the obtained results much. The rest of the calculations and discussion is conducted on eigenfaces generated from the selected data set.

The proposed steganography method exhibits differing resistance across the robustness tests. Although it is quite vulnerable to salt-and-pepper noise (see Table 3), it copes very well with changes applied to the whole image domain. Neither clipping nor JPEG compression damages the message as long as the full available capacity is not exhausted. When the message length is set to 87 bytes, we can safely use a quality coefficient equal to 89, which affects only 0.041 (4.1%) of messages (see Table 5).

When image quality equals 92 or more, no changes are observed for the whole validation data set (containing more than 100,000 various face images). Results for the BC measure correspond to those for the DSC and LD coefficients. The longer the message becomes, the more errors are introduced by compression. The situation is very similar for clipping (see Table 2). When the message length is set to 37 bytes and , the BC equals 0.066 which means that over of messages have been successfully recovered. When t increases to , the successful recovery rate rises to 100%. However, the larger the value of t we take, the more visible the rectangular region in which the message is hidden becomes. For this reason, when the message length is set to 37 ( of image capacity), we suggest using t in range of in order to increase the difficulty of message detection. More details will be provided together with performance tests.
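How such recovery figures might be computed can be sketched as below. The exact definitions of BC, DSC and LD are given earlier in the paper and are not repeated in this section, so the sketch rests on assumptions: `damaged_fraction` (a hypothetical name) treats the binary measure as the fraction of messages that were not recovered exactly, which matches the reading "0.041 (4.1%) of messages", and `levenshtein` is the textbook edit distance [34].

```python
def levenshtein(a: bytes, b: bytes) -> int:
    """Textbook Levenshtein (edit) distance between two byte strings."""
    previous = list(range(len(b) + 1))
    for i, byte_a in enumerate(a, start=1):
        current = [i]
        for j, byte_b in enumerate(b, start=1):
            current.append(min(
                previous[j] + 1,                       # deletion
                current[j - 1] + 1,                    # insertion
                previous[j - 1] + (byte_a != byte_b),  # substitution
            ))
        previous = current
    return previous[-1]


def damaged_fraction(sent, recovered):
    """Fraction of messages that differ after the attack.

    Assumption: this mirrors the binary measure discussed in the text;
    the paper's own definition may differ in detail.
    """
    if len(sent) != len(recovered) or not sent:
        raise ValueError("message lists must be non-empty and of equal length")
    damaged = sum(s != r for s, r in zip(sent, recovered))
    return damaged / len(sent)
```

Under this reading, a value of 0.066 would mean that 6.6% of the tested messages came back altered in at least one position.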

The limitation of the proposed method is basically the same as in the original eigenfaces approach [37]. The descriptive power of the method is determined by the variance of the images in the dataset D that was used to generate eigenfaces. There might be a face with certain facial features that are not represented in D, and the reconstructed face might then differ from the original face. Those differences will be visible as high-frequency noise and might be easily spotted. The most straightforward solution to this issue is to use a large dataset D with diversified facial features. For the same reason, the face of a person wearing heavy, atypical makeup might be an inappropriate carrier of hidden data. Additionally, it is recommended that the person in the photo face the camera, which is a further limitation.
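This limitation can be illustrated with a toy projection. The sketch below is only an illustration under stated assumptions: faces are flattened vectors, the eigenfaces are orthonormal, and the helper names `reconstruct` and `residual_mse` are hypothetical. A face lying in the span of the eigenfaces is reconstructed exactly, while a feature outside that span is left as residual error.

```python
def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def reconstruct(face, mean_face, eigenfaces):
    """Project a flattened face onto orthonormal eigenfaces and map it back."""
    centered = [f - m for f, m in zip(face, mean_face)]
    coefficients = [dot(centered, e) for e in eigenfaces]
    approximation = list(mean_face)
    for coefficient, eigenface in zip(coefficients, eigenfaces):
        approximation = [a + coefficient * x
                         for a, x in zip(approximation, eigenface)]
    return approximation


def residual_mse(face, mean_face, eigenfaces):
    """Mean squared error between a face and its eigenface reconstruction."""
    approximation = reconstruct(face, mean_face, eigenfaces)
    return sum((f - a) ** 2 for f, a in zip(face, approximation)) / len(face)


# Toy 4-pixel "faces" with two eigenfaces (values chosen for illustration).
mean_face = [1.0, 1.0, 1.0, 1.0]
eigenfaces = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0]]
represented = [4.0, 5.0, 1.0, 1.0]    # varies only along the eigenfaces
unrepresented = [4.0, 5.0, 3.0, 1.0]  # third pixel is outside their span
```

Here `residual_mse(represented, mean_face, eigenfaces)` is zero, whereas the unrepresented face keeps a nonzero residual, which in a real image would appear as the noise described above.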

Because rotation affects face alignment, eigenfaces are not robust to rotation (or their robustness is very low; see Table 4). However, this weakness may be compensated for by face image alignment. The HOG-based detection of facial features, which is often used for this task, is a robust and repeatable technique that performs translation, rotation and scaling of the original image. As a result, those three linear transformations, which might be used to alter images with encoded data, might then be compensated for by face image alignment. This, however, depends on the face alignment procedure that is applied, and it is outside the scope of this paper.

The eigenfaces technique requires that a facial image have the same resolution as the faces in the training dataset that was used to generate eigenfaces. We therefore checked whether scaling the image with hidden data and then returning it to the original resolution affects the payload, provided that bicubic pixel interpolation is used. Results in Table 6 show that our method has limited robustness to downscaling and very good robustness to upscaling. For upscaling by 5%, more than 98% of messages were unaffected for all tested lengths; the more we upscale, the better the results we get. This means that we can use eigenfaces generated from images with a lower resolution to hide data in facial images with a higher resolution. The facial image has to be downscaled and then upscaled to the original resolution. This is very important, because it means that our method can be used over a certain range of facial image resolutions without the necessity of generating eigenfaces for each possible resolution. Of course, if we upscale the image too much, a person observing the face might spot artifacts caused by interpolation.

The next experiment was an evaluation of the performance of the proposed algorithm for various encoded message lengths and values of the t parameter (cropping size). The obtained results are presented in Table 7 and Figure 10. We compared over 100,000 original images from the validation data set with the corresponding images with encoded data using MSE, the maximal difference between corresponding pixels and averaged CC. Those results confirmed the observations we made in the robustness evaluation. Parameters in range resulted in a relatively small value of distance between the original image and the one with encoded data. As can be seen in the second row of Figure 11, when message size is set to 37 ( of source image capacity) and , the differences are virtually impossible to notice. Those parameters are our recommendation for the particular image resolution we have evaluated. After encoding the message we need to check whether it can be recovered (according to Table 2 in faces there might be a problem with it). To overcome this situation, we have to increase the t value or use another facial image. When message length is close to of image capacity, we can even visually spot that some changes have been applied to the region of the face. We do not recommend exploiting the full possible image capacity: it is better to split the message between several faces and then put it together again.
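The recommendation to split a message between several faces could be realized in many ways. The following is one minimal sketch, not part of the evaluated method: it assumes a per-face capacity of 37 bytes (the message length discussed above) and reserves the first byte of every chunk for a sequence index so that the parts can be reassembled in any order. The framing and the names `split_message` and `join_message` are hypothetical.

```python
def split_message(message: bytes, capacity: int = 37):
    """Split a message into chunks that each fit into one face.

    Hypothetical framing: byte 0 of every chunk is its sequence index,
    the remaining capacity - 1 bytes carry the payload.
    """
    payload_size = capacity - 1
    if payload_size <= 0:
        raise ValueError("capacity must be at least 2 bytes")
    parts = [message[i:i + payload_size]
             for i in range(0, len(message), payload_size)]
    if len(parts) > 256:
        raise ValueError("message needs more chunks than a 1-byte index allows")
    return [bytes([index]) + part for index, part in enumerate(parts)]


def join_message(chunks):
    """Reassemble the message from chunks received in arbitrary order."""
    ordered = sorted(chunks, key=lambda chunk: chunk[0])
    return b"".join(chunk[1:] for chunk in ordered)
```

With these defaults a 100-byte message is carried by three faces, and `join_message` restores it even if the faces are processed out of order.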

The peak signal-to-noise ratio (PSNR) is the most common metric used to evaluate stego image quality. The PSNR measures the similarity between two images, that is, how close they are to each other; a higher value means a better result [38]. As can be seen in Table 8, our algorithm obtained results very similar to those of the best state-of-the-art approaches. CC and PSNR get worse as compression quality decreases and as the stego message length increases. In terms of CC, our method outperformed [11,12] and has slightly worse results than [13,14]. In terms of PSNR, our method outperformed all but [12]. We can conclude that our method is among the most robust algorithms against the compression attack.
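For reference, the PSNR can be computed from the MSE with the standard formula 10·log10(MAX²/MSE). The sketch below assumes flattened 8-bit images, so the peak value MAX defaults to 255; the peak value used in the experiments is not stated in this section, and the function name `psnr` is hypothetical.

```python
import math


def psnr(original, stego, max_value=255):
    """Peak signal-to-noise ratio in decibels between two flattened images.

    Assumes 8-bit intensities by default (max_value=255).
    """
    if len(original) != len(stego) or not original:
        raise ValueError("images must be non-empty and of equal size")
    mse = sum((a - b) ** 2 for a, b in zip(original, stego)) / len(original)
    if mse == 0:
        return math.inf  # identical images
    return 10 * math.log10(max_value ** 2 / mse)
```

An MSE of 1 on 8-bit data corresponds to roughly 48.13 dB, and every tenfold increase in MSE lowers the PSNR by 10 dB.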

5. Conclusions

Based on the discussion presented in the previous section, we can conclude that the proposed steganography method for hiding data in face images is usable and may be an interesting alternative to other state-of-the-art approaches. The algorithm, which uses the parameters of a linear combination of eigenvectors, turned out to be resistant to JPEG compression, clipping and scaling. These features are especially important for practical use, when the facial image is only a rectangular subset of a larger photo. In such cases we first need to detect the face and extract it from the original image, which usually requires applying some transformations. Of course, we should use the same face extraction method to generate the training data set from which eigenfaces are computed. What is more, our numerical comparison with other state-of-the-art algorithms showed that eigenface-based steganography is among the most robust methods against the compression attack. Future work may include improving robustness against downscaling attacks and taking advantage of the fact that some images might contain several faces. Additional data hidden in several faces might be used as a checksum and for error correction of the original secret.

Author Contributions

T.H. was responsible for conceptualization, proposed methodology, software, implementation and writing the original draft; K.K. was responsible for software, data curation and validation; M.R.O. was responsible for software, data curation and validation. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by the Pedagogical University of Krakow.

Data Availability Statement

Not applicable.

Conflicts of Interest

The authors declare no conflict of interest. The funder had no role in the design of the study; in the collection, analyses, or interpretation of data; in the writing of the manuscript, or in the decision to publish the results.

References

- Kurtuldu, O.; Demirci, M. StegoGIS: A new steganography method using the geospatial domain. Turk. J. Electr. Eng. Comput. Sci. 2019, 27, 532–546.

- Jankowski, B.; Mazurczyk, W.; Szczypiorski, K. PadSteg: Introducing inter-protocol steganography. Telecommun. Syst. 2013, 52, 1101–1111.

- Koptyra, K.; Ogiela, M.R. Multiply information coding and hiding using fuzzy vault. Soft Comput. 2019, 23, 4357–4366.

- Zakaria, A.; Hussain, M.; Wahid, A.; Idris, M.; Abdullah, N.; Jung, K.H. High-Capacity Image Steganography with Minimum Modified Bits Based on Data Mapping and LSB Substitution. Appl. Sci. 2018, 8, 2199.

- Mohamed, M.; Mohamed, L. High Capacity Image Steganography Technique based on LSB Substitution Method. Appl. Math. Inf. Sci. 2016, 10, 259–266.

- Saghir, B.; Ahmed, E.; Zen Alabdeen Salh, G.; Mansour, A. A Spatial Domain Image Steganography Technique Based on Pseudorandom Permutation Substitution Method using Tree and Linked List. Int. J. Eng. Trends Technol. 2015, 23, 209–217.

- Gulve, A.; Joshi, M. A High Capacity Secured Image Steganography Method with Five Pixel Pair Differencing and LSB Substitution. Int. J. Image Graph. Signal Process. 2015, 7, 66–74.

- Kasapbaşı, M.C.; Elmasry, W. New LSB-based colour image steganography method to enhance the efficiency in payload capacity, security and integrity check. Sādhanā 2018, 43.

- Das, A.; Das, P.; Chakraborty, K.; Sinha, S. A New Image Steganography Method using Message Bits Shuffling. J. Mech. Contin. Math. Sci. 2018, 13, 1–15.

- Shete, K.; Patil, M.; Chitode, J. Least Significant Bit and Discrete Wavelet Transform Algorithm Realization for Image Steganography Employing FPGA. Int. J. Image Graph. Signal Process. 2016, 8, 48–56.

- Bergman, C.; Davidson, J. Unitary embedding for data hiding with the SVD. In Security, Steganography, and Watermarking of Multimedia Contents VII; International Society for Optics and Photonics: Bellingham, WA, USA, 2005; Volume 5681, pp. 619–630.

- Chung, K.L.; Yang, W.N.; Huang, Y.H.; Wu, S.T.; Hsu, Y.C. On SVD-based watermarking algorithm. Appl. Math. Comput. 2007, 188, 54–57.

- Chang, C.C.; Lin, C.C.; Hu, Y.S. An SVD oriented watermark embedding scheme with high qualities for the restored images. Int. J. Innov. Comput. Inf. Control. 2007, 3, 609–620.

- Chanu, Y.J.; Singh, K.M.; Tuithung, T. A Robust Steganographic Method based on Singular Value Decomposition. Int. J. Inf. Comput. Technol. 2014, 4, 717–726.

- Hingorani, C.; Bhatia, R.; Pathai, O.; Mirani, T. Face Detection and Steganography Algorithms for Passport Issuing System. Int. J. Eng. Res. Technol. (IJERT) 2014, 3, 1438–1441.

- Raju, K.; Srivatsa, S. Video Steganography for Face Recognition with Signcryption for Trusted and Secured Authentication by using PCASA. Int. J. Comput. Appl. 2012, 56, 1–5.

- Kadry, S.; Nasr, S. New Generating Technique for Image Steganography. Innova Cienc. 2012, 4, 46.

- Saračević, M.; Adamović, S.; Miškovic, V.; Maček, N.; Šarac, M. A novel approach to steganography based on the properties of Catalan numbers and Dyck words. Future Gener. Comput. Syst. 2019, 186–197.

- Hassan, R.; Pepíć, S.; Saračević, M.; Ahmad, K.; Tasic, M. A Novel Approach to Data Encryption Based on Matrix Computations. Comput. Mater. Contin. 2020, 66, 1139–1153.

- McAteer, I.; Ibrahim, A.; Guanglou, Z.; Yang, W.; Valli, C. Integration of Biometrics and Steganography: A Comprehensive Review. Technologies 2019, 7, 34.

- Hamid, N.; Yahya, A.; Ahmad, R.B.; Al-Qershi, O.M. Image Steganography Techniques: An Overview. Int. J. Comput. Sci. Secur. (IJCSS) 2012, 6, 168–187.

- Surana, J.; Sonsale, A.; Joshi, B.; Sharma, D.; Choudhary, N. Steganography Techniques. IJEDR 2017, 5, 989–992.

- Shelke, F.M.; Dongre, A.A.; Soni, P.D. Comparison of different techniques for Steganography in images. Int. J. Appl. Innov. Eng. Manag. (IJAIEM) 2014, 3, 171–176.

- Rejani, R.; Murugan, D.; Krishnan, D.V. Comparative Study of Spatial Domain Image Steganography Techniques. Int. J. Adv. Netw. Appl. 2015, 7, 2650–2657.

- Liu, Z.; Luo, P.; Wang, X.; Tang, X. Deep Learning Face Attributes in the Wild. In Proceedings of the International Conference on Computer Vision (ICCV), Santiago, Chile, 7–13 December 2015.

- Dalal, N.; Triggs, B. Histograms of oriented gradients for human detection. In Proceedings of the 2005 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR’05), San Diego, CA, USA, 20–26 June 2005; Volume 1, pp. 886–893.

- Kazemi, V.; Sullivan, J. One Millisecond Face Alignment with an Ensemble of Regression Trees. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 24–27 June 2014.

- Sagonas, C.; Antonakos, E.; Tzimiropoulos, G.; Zafeiriou, S.; Pantic, M. 300 Faces In-The-Wild Challenge: Database and results. Image Vis. Comput. 2016, 47, 3–18.

- Arsigny, V.; Fillard, P.; Pennec, X.; Ayache, N. Geometric Means in a Novel Vector Space Structure on Symmetric Positive-Definite Matrices. SIAM J. Matrix Anal. Appl. 2006, 29, 328–347.

- Huang, Z.; Wang, R.; Shan, S.; Chen, X. Learning Euclidean-to-Riemannian Metric for Point-to-Set Classification. In Proceedings of the 2014 IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23–28 June 2014; pp. 1677–1684.

- Golub, G.H.; Van Loan, C.F. Matrix Computations, 4th ed.; Johns Hopkins University Press: Baltimore, MD, USA, 2013.

- Al-Mohy, A.; Higham, N. Improved Inverse Scaling and Squaring Algorithms for the Matrix Logarithm. SIAM J. Sci. Comput. 2012, 34, 153–169.

- Lau, W.L.; Li, Z.L.; Lam, K. Effects of JPEG compression on image classification. Int. J. Remote. Sens. 2003, 24, 1535–1544.

- Levenshtein, V. Binary Codes Capable of Correcting Deletions, Insertions and Reversals. Sov. Phys. Dokl. 1966, 10, 707.

- Haldar, R.; Mukhopadhyay, D. Levenshtein Distance Technique in Dictionary Lookup Methods: An Improved Approach. arXiv 2011, arXiv:1101.1232.

- Sørensen, T. A method of establishing groups of equal amplitude in plant sociology based on similarity of species and its application to analyses of the vegetation on Danish commons. K. Dan. Vidensk. Selsk. 1948, 5, 1–34.

- Sirovich, L.; Kirby, M. Low-Dimensional Procedure for the Characterization of Human Faces. J. Opt. Soc. Am. A Opt. Image Sci. 1987, 4, 519–524.

- Almohammad, A.; Ghinea, G. Stego image quality and the reliability of PSNR. In Proceedings of the 2010 2nd International Conference on Image Processing Theory, Tools and Applications, Paris, France, 7–10 July 2010; pp. 215–220.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).