Abstract

As training Deep Neural Networks (DNNs) becomes more expensive, the interest in protecting the ownership of the models with watermarking techniques increases. Uchida et al. proposed a digital watermarking algorithm that embeds the secret message into the model coefficients. However, despite its appeal, in this paper, we show that its efficacy can be compromised by the optimization algorithm being used. In particular, we found through a theoretical analysis that, as opposed to Stochastic Gradient Descent (SGD), the update direction given by Adam optimization strongly depends on the sign of a combination of columns of the projection matrix used for watermarking. Consequently, as observed in the empirical results, this makes the coefficients move in unison giving rise to heavily spiked weight distributions that can be easily detected by adversaries. As a way to solve this problem, we propose a new method called Block-Orthonormal Projections (BOP) that allows one to combine watermarking with Adam optimization with a minor impact on the detectability of the watermark and an increased robustness.

1. Introduction

Deep learning has substantially impacted technology in recent years, becoming a major focus of attention for researchers all over the world. This impact stems from the versatility it offers as well as the excellent results that DNNs achieve on multiple tasks, such as image classification or speech recognition, which often reach and even surpass human-level performance [1,2].

However, designing new DNNs is far from simple and is generally expensive, not only in terms of the human effort and time needed to build effective model architectures, but mostly because of the large volume of suitable data that must be gathered and the vast amount of computational resources and power used for training. Consequently, businesses owning costly models are interested in protecting them from illicit use, and this growing need has recently drawn researchers' attention to the problem of embedding watermarks in DNNs. As a result, several frameworks for protecting the intellectual property of neural networks have been proposed in the literature, and they can be classified as black-box or white-box approaches. Black-box methods do not need access to the model parameters to detect the presence of a watermark. Instead, key inputs are introduced as special triggers to identify the original network, using either so-called adversarial examples [3] or backdoor poisoning [4].

White-box approaches, in contrast, directly affect the model parameters in order to embed watermarks. One of the most significant white-box contributions was proposed by Uchida et al. in [5,6], the algorithm under study in this paper. This watermarking framework employs a regularization term that defines a cost function for embedding the secret message. This regularizer, which transforms weights into bits by means of a projection matrix in a similar way to spread–spectrum techniques used in watermarking [7], is added to the main loss function and can be applied either at the beginning of the training phase (i.e., from scratch) or during fine-tuning steps.

On the other hand, when training a DNN, there are several optimization algorithms to choose from. Each has its pros and cons; two of the most popular are the classic Stochastic Gradient Descent (SGD) [8] and Adaptive Moment Estimation (Adam) [9]. We found that, in order to perform watermarking following the approach in [5,6], it is necessary to pay close attention to the optimization algorithm being used. In this paper, we prove that Adam, although an appealing algorithm for many applications (especially because of its efficiency and training speed), poses a serious problem when implementing this watermarking method.

As with any other watermarking algorithm, it is important to meet some minimal requirements regarding fidelity, robustness, payload, efficiency, and undetectability [5,6,10]. The latter property requires concealing any clue that would let unauthorized parties know whether a watermark was embedded, along with the consequences that such knowledge would entail. In other words, from a steganographic point of view, watermarks should be embedded into the DNN without leaving any detectable footprint.

We show that, unlike SGD, Adam significantly alters the distribution of the coefficients at the embedding layer in a very specific way and, thus, it compromises the undetectability of the watermark. In particular, when using Adam, the histogram of the weights at the embedding layer shows that these coefficients tend to group together at both sides of zero, originating two visible spikes that grow in magnitude with the number of iterations, so that the initial distribution ends up changing completely. As we will see later, the update direction given by Adam depends on the projection matrix used for watermarking; specifically, it is based on the sign function, responsible for the symmetric two-spiked shape that emerges. This behavior is somewhat surprising, as with a pseudorandom projection matrix, one might expect that the weights evolve with random speeds; in contrast, the weights tend to move in unison in a way that is reminiscent of ants after they have established a solid path from the nest to a food source. Then, the statistical dependence of the weights with the projection matrix is weak, and this is mainly due to the information collapse induced by the sign function; this loss is invested in making the weights more conspicuous and, therefore, the watermark more easily detectable. As we will confirm, the sign phenomenon does not occur in other algorithms like SGD. The appearance of the sign was already pointed out and studied—without considering watermarking applications—by the authors in [11], who explained the adverse effects of Adam on generalization [12] as a consequence of this aspect. However, instead of delving into the general performance of the optimization algorithm itself, we show that the sign function which is involved in Adam’s update direction is detrimental for watermarking purposes, so we highlight the need for being careful with the selection of the optimization algorithm in these cases.

In this paper, we carry out a theoretical and experimental analysis and compare the results using Adam and SGD optimization. The analysis can be extended to other optimization algorithms. As a way to measure the similarity between the original weight distribution and the resulting one after the watermark embedding, we will use the Kullback–Leibler divergence (KLD) [13]. We will show that, as expected, when we use Adam, the KLD between both distributions is considerably larger than when we use SGD, thus confirming that, as opposed to SGD, Adam modifies the original distribution to a great extent. Furthermore, in order to perform this kind of watermarking and, at the same time, enjoy the advantages that Adam optimization provides, we propose a new method called Block-Orthonormal Projections (BOP), which uses a secret transformation matrix in order to reduce the detectability of the watermark generated by Adam. As we will see, BOP allows us to considerably reduce the KLD to small values which are comparable to those obtained with SGD. Therefore, we show that BOP allows us to preserve the original shape of the weight distribution.

In summary, this work makes the following two-fold contribution:

- We provide mathematical and experimental evidence for SGD and Adam to show that: (1) in contrast to SGD, the changes in the distribution of weights caused by Adam can be easily detected when embedding watermarks following the approach in [5,6] and, hence, (2) the use of Adam considerably increases the detectability of the watermark. For the purpose of carrying out this analysis, we use FFDNet [14]—a DNN that performs image denoising tasks—as the host network.

- We introduce a novel method based on orthogonal projections to solve the detectability problem that arises when watermarking a DNN which is being optimized with Adam. A side effect of this novel method is an increased robustness against weight pruning.

The remainder of this paper is organized as follows: Section 1.1 introduces the notation and Section 2 explains the frameworks and algorithms used in this study—host network, optimization algorithms, and watermarking method—in more detail. Section 3 presents the mathematical core that allows us to model the observed effects on the histograms of weights once the embedding process has finished. Then, in Section 4, we introduce BOP as a solution for using Adam and watermarking simultaneously, Section 5 presents the information-theoretic measures that we will implement, and Section 6 shows the experimental results. Finally, we point out some concluding remarks in Section 7. Two appendices give additional details on mathematical derivations (Appendix A) and the validity of certain assumptions (Appendix B).

1.1. Notation

In this paper, we use the following notation. Matrices and vectors are denoted by upper-case and lower-case boldface characters, respectively, while random variables and their realizations are respectively represented by upper-case and lower-case characters.

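For matrix and vector operations, we proceed as follows. As an example, let $\mathbf{A}$ be a matrix. Then, its transpose is denoted by $\mathbf{A}^T$. Moreover, we use $\mathrm{tr}(\mathbf{A})$ to represent the trace of $\mathbf{A}$ and $A_{i,j}$ to denote the $(i,j)$th element of $\mathbf{A}$. $\mathbf{I}_N$ refers to the $N \times N$ identity matrix. We use column vectors unless otherwise stated. In addition, we use $\mathbf{0}_N$ to denote a column vector of $N$ zeros and $\mathbf{1}_N$ for a column vector of $N$ ones. Let $\mathbf{w}$ be a column vector of length N; then, $\nabla_{\mathbf{w}}$ is the gradient operator with respect to $\mathbf{w}$, that is:

$$\nabla_{\mathbf{w}} = \left[ \frac{\partial}{\partial w_1}, \frac{\partial}{\partial w_2}, \dots, \frac{\partial}{\partial w_N} \right]^T.$$

We use the operator ∘ to denote the Hadamard (i.e., sample-wise) product and ⊗ for the Kronecker product. Finally, $E[\cdot]$ and $\mathrm{Var}[\cdot]$ denote the mathematical expectation and the variance, respectively.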

2. Preliminaries

2.1. Host Network: FFDNet

The rapid development of Deep Learning over the last few years has led to new advances in the field of image restoration [15]. Several Convolutional Neural Networks (CNNs) have been designed to replace classical methods and, often, they offer new competitive advantages. This is the case of FFDNet [14], which performs image denoising tasks and is used in our work as the exemplary host network that is watermarked by means of the algorithm proposed in [5,6].

Image denoising is the task of removing noise from a given image. Let be the input noisy image, the clean image, and the noise, which is usually modeled as zero-mean Additive White Gaussian Noise (AWGN), then we have and we wish to obtain an estimate of the clean image. As opposed to other CNNs for image denoising, FFDNet works on downsampled sub-images, and it is able to adapt to several noise levels using only a single network. For that purpose, a noise level map is included also as an input, so that the function FFDNet aims to learn can be expressed as: , where represents the parameters of the network. Furthermore, FFDNet can handle spatially variant noise and offers competitive inference speed without sacrificing denoising performance [14].

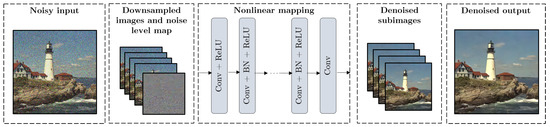

Figure 1 shows the architecture of FFDNet. As we can see, it is composed of a downscaling operation on the input images, a nonlinear mapping consisting of Convolutional Layers, Batch Normalization steps [16], and ReLU activation functions; and, finally, an upscaling process to generate denoised images with the original size. Let be L noisy-clean image pairs from the training dataset, the denoising cost function is:

Figure 1.

Architecture of the host network FFDNet.

FFDNet can be used for grayscale or color images. Table 1 shows the main differences between both configurations. As we can see, the total number of convolutional layers can be set to 15 or 12 for grayscale or RGB denoising, respectively. The number of feature maps and the size of the receptive field also differ, but the kernel size does not: it is kept at $3 \times 3$ for both grayscale and RGB. In this paper, we implement the RGB version of FFDNet and proceed as follows: (1) train FFDNet from scratch using Adam optimization without embedding any watermark and (2) fine-tune the network to embed the desired watermark, using both Adam and SGD to compare the results.

Table 1.

FFDNet configurations for grayscale and RGB image denoising.

2.2. Optimization Algorithms

The training of a DNN is an iterative process that makes it possible for the model to learn how to perform a given task. This is certainly the most challenging optimization problem when implementing deep learning models from scratch. In order to increase the efficiency of the training process, researchers have developed several optimization techniques in recent years, each with its own advantages and drawbacks [17].

An optimization algorithm determines the weight update rule that must be applied at every iteration. The goal is usually to minimize a cost function (also known as loss function), which generally compares predictions with expected values and computes an error metric that evaluates the performance of the network. The learning rate $\alpha$ (or step size) is the hyperparameter that controls how much the weights can change after each iteration of the optimization algorithm.

In the following sections, we briefly review the mechanics of two widely known optimization algorithms: SGD and Adam.

2.2.1. SGD Optimization

SGD [8] is a classic optimization algorithm based on gradient descent and one of the most widely used. However, unlike standard gradient descent techniques that use all the training samples to compute the gradient of the cost function, SGD uses a small number of samples from the dataset (a minibatch) and then takes the average over these samples to get an estimate of the gradient, $\nabla_{\mathbf{w}} f(\mathbf{w}_{k-1})$. Then, the update rule is given by:

$$\mathbf{w}_k = \mathbf{w}_{k-1} - \alpha \nabla_{\mathbf{w}} f(\mathbf{w}_{k-1}), \tag{2}$$

where $\mathbf{w}_k$ is the weight vector at iteration k, $\mathbf{w}_0$ is its initial value, $f$ is the cost function and $\alpha$ is the learning rate.
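The SGD update rule can be sketched in a few lines of NumPy. This is only an illustrative toy, not the training code used in the experiments: the least-squares cost, the helper names `sgd_step` and `lsq_grad`, the minibatch size, and the learning rate are all assumptions chosen for the example.

```python
import numpy as np

def sgd_step(w, grad_fn, batch, alpha):
    """One SGD update: move against the minibatch gradient estimate."""
    return w - alpha * grad_fn(w, batch)

def lsq_grad(w, batch):
    """Average gradient of 0.5 * (a^T w - y)^2 over a minibatch (toy cost)."""
    A, y = batch
    return A.T @ (A @ w - y) / len(y)

rng = np.random.default_rng(0)
A_full = rng.standard_normal((256, 4))      # hypothetical training inputs
w_true = np.array([1.0, -2.0, 0.5, 3.0])    # hypothetical ground-truth weights
y_full = A_full @ w_true                    # noise-free targets

w = np.zeros(4)                             # initial weight vector w_0
for k in range(2000):
    idx = rng.choice(256, size=16, replace=False)   # draw a minibatch
    w = sgd_step(w, lsq_grad, (A_full[idx], y_full[idx]), alpha=0.05)
```

Because each update is proportional to the gradient itself, coefficients with larger gradient entries move faster; this is the property that distinguishes SGD from Adam in the watermarking analysis.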

2.2.2. Adam Optimization

Adam [9] is a popular optimization algorithm that combines the ideas of AdaGrad [18] and RMSProp [19]. Among its advantages, Adam is easy to implement, computationally efficient, fast, and suitable for complex settings with noisy and sparse gradients.

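Just like SGD, Adam estimates the gradient from the samples of a minibatch, but, as opposed to SGD, it uses estimates of the first and second moments of the gradients to compute individual adaptive learning rates for each parameter in the network. In order to do that, exponential moving averages of the gradient and the squared gradient are calculated using two hyperparameters to control the decay rate, $\beta_1$ and $\beta_2$, respectively. Let $\mathbf{m}_0 = \mathbf{0}_N$ and $\mathbf{v}_0 = \mathbf{0}_N$ be the initial values for the first and second moment vectors, respectively; then, the steps of this algorithm at the kth iteration are the following [9]:

$$\mathbf{g}_k = \nabla_{\mathbf{w}} f(\mathbf{w}_{k-1}), \tag{3}$$

$$\mathbf{m}_k = \beta_1 \mathbf{m}_{k-1} + (1-\beta_1)\, \mathbf{g}_k, \tag{4}$$

$$\mathbf{v}_k = \beta_2 \mathbf{v}_{k-1} + (1-\beta_2)\, \mathbf{g}_k \circ \mathbf{g}_k, \tag{5}$$

$$\hat{\mathbf{m}}_k = \frac{\mathbf{m}_k}{1-\beta_1^k}, \qquad \hat{\mathbf{v}}_k = \frac{\mathbf{v}_k}{1-\beta_2^k},$$

$$w_k^j = w_{k-1}^j - \alpha \frac{\hat{m}_k^j}{\sqrt{\hat{v}_k^j} + \epsilon},$$

where $w_k^j$, $\hat{m}_k^j$, $\hat{v}_k^j$ are the jth element of $\mathbf{w}_k$, $\hat{\mathbf{m}}_k$, $\hat{\mathbf{v}}_k$, respectively, $f$ is the cost function and $\epsilon$ is a very small number that avoids dividing by zero. The default set-up for the hyperparameters is [9]: $\beta_1 = 0.9$, $\beta_2 = 0.999$ and $\epsilon = 10^{-8}$.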
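A minimal NumPy sketch of one Adam iteration, following the steps in [9], is given below. The function name `adam_step` and the constant toy gradient are assumptions made for illustration. The example shows that, when the gradient of a coefficient stays constant, the bias-corrected ratio reduces to the sign of the gradient, so every coefficient moves by the same amount regardless of the gradient magnitude.

```python
import numpy as np

def adam_step(w, m, v, g, k, alpha=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam iteration (k starts at 1) for weights w and gradient g."""
    m = beta1 * m + (1.0 - beta1) * g           # first-moment moving average
    v = beta2 * v + (1.0 - beta2) * g * g       # second-moment moving average
    m_hat = m / (1.0 - beta1 ** k)              # bias correction
    v_hat = v / (1.0 - beta2 ** k)
    w = w - alpha * m_hat / (np.sqrt(v_hat) + eps)
    return w, m, v

# Toy gradient whose entries differ by several orders of magnitude.
g = np.array([5.0, -0.02, 300.0])
w, m, v = np.zeros(3), np.zeros(3), np.zeros(3)
for k in range(1, 11):
    w, m, v = adam_step(w, m, v, g, k)

# After 10 steps every coefficient has moved by about 10 * alpha,
# in the direction opposite to the sign of its gradient.
expected = -10 * 1e-3 * np.sign(g)
```

This invariance to gradient rescaling is convenient in general training, but it is also what removes the magnitude information from the update direction.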

2.3. Digital Watermarking Algorithm

In this paper, we analyze the digital watermarking algorithm proposed in [5,6]. As mentioned previously, we employ the fine-tune-to-embed approach; therefore, the embedding function is applied only during some additional epochs after convergence is achieved for the original task (in this case, image denoising).

2.3.1. Embedding Elements

We wish to embed a T-bit sequence, $\mathbf{b} \in \{0,1\}^T$, into a certain layer l of the host DNN. For this specific layer, let $S \times S$, I and F represent the size of the convolution filter, the depth of the input to the convolutional layer and the number of filters in that layer, respectively. Then, the weights can be represented by a tensor with dimensions $S \times S \times I \times F$ and then rearranged to form a vector $\mathbf{w}_l$ of length $N = S^2 I F$.

One important point here is that, instead of directly using the weight vector $\mathbf{w}_l$, the authors in [5,6] suggest including an initial transformation of these coefficients. In order to reflect this, we must calculate the mean of $\mathbf{w}_l$ over the F kernels. As a result, we obtain a new flattened vector $\bar{\mathbf{w}}_l$ with M elements, where $M = S^2 I$. This transformation can also be formulated if we introduce a new matrix $\mathbf{A}$ of size $M \times N$:

$$\mathbf{A} = \mathbf{I}_M \otimes \bar{\mathbf{1}}_F^T,$$

so that $\bar{\mathbf{w}}_l = \mathbf{A} \mathbf{w}_l$. Here, $\bar{\mathbf{1}}_F$ is a column vector of length F with all of its elements set to $1/F$, i.e., $\bar{\mathbf{1}}_F = \frac{1}{F} \mathbf{1}_F$.

In order to move to the T-dimensional space, the authors in [5,6] introduce a secret projection matrix $\tilde{\mathbf{X}}$. The size of this projection matrix (also referred to as regularizer parameter in [5,6]) is $M \times T$, so that each column corresponds to a particular projection vector $\tilde{\mathbf{x}}_t$, $t = 1, \dots, T$.

For the purpose of the subsequent theoretical analysis, we pair up matrices $\mathbf{A}$ and $\tilde{\mathbf{X}}$ and, therefore, we ascribe the initial transformation to the projection matrix, preserving the notation with the original weight vector $\mathbf{w}_l$. To that end, we define a new projection matrix:

$$\mathbf{X} = \mathbf{A}^T \tilde{\mathbf{X}},$$

so that now the projection vectors can be expressed as $\mathbf{x}_t = \mathbf{A}^T \tilde{\mathbf{x}}_t$.
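The following NumPy sketch illustrates how the averaging over the F kernels can be folded into the projection matrix. It is an illustration under stated assumptions rather than the implementation used in the experiments: the layer sizes are toy values, the names `A`, `X_orig` and `X_folded` are chosen for the example, and the weight tensor is assumed to be flattened in row-major (C) order, so that the F entries sharing one spatial/input position are contiguous. A different flattening order would change the Kronecker structure of the averaging matrix.

```python
import numpy as np

S, I, F, T = 3, 4, 5, 6            # toy sizes: kernel, input depth, filters, bits
M, N = S * S * I, S * S * I * F
rng = np.random.default_rng(0)

W = rng.standard_normal((S, S, I, F))   # weight tensor of the embedding layer
w = W.reshape(N)                        # flattened weights (C order, F innermost)
w_bar = W.mean(axis=3).reshape(M)       # mean over the F kernels, then flatten

# Averaging matrix (M x N): each row holds F consecutive entries equal to 1/F.
A = np.kron(np.eye(M), np.full((1, F), 1.0 / F))

X_orig = rng.standard_normal((M, T))    # secret projection matrix (Random design)
X_folded = A.T @ X_orig                 # projection matrix acting directly on w

proj_two_step = X_orig.T @ w_bar        # average first, then project
proj_folded = X_folded.T @ w            # project the raw weights directly
```

Both routes give the same T projections, which is what allows the analysis to work with the original weight vector and a single projection matrix.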

2.3.2. Embedding Process

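Now that we have introduced the basic elements, the embedding procedure can be described as follows [5,6]. Let $\mathbf{w}$ be a vector containing all the parameters of the network; then, the watermarking regularizer, $f_R(\mathbf{w})$, is added to the global cost function $f(\mathbf{w})$:

$$f(\mathbf{w}) = f_0(\mathbf{w}) + \lambda f_R(\mathbf{w}),$$

where $f_0(\mathbf{w})$ is the original cost function and $\lambda$ is the regularization parameter. The regularizer term is a composition of two functions, the cross-entropy and the sigmoid:

$$f_R(\mathbf{w}) = -\sum_{t=1}^{T} \left[ b_t \log(y_t) + (1 - b_t) \log(1 - y_t) \right],$$

with $y_t = \sigma(\mathbf{x}_t^T \mathbf{w}_l)$ and $\sigma(z) = 1/(1 + e^{-z})$, where $\mathbf{x}_t$ is the tth projection vector and $\mathbf{w}_l$ contains the weights of the embedding layer. In order to minimize (10), $y_t$ will approach the value of $b_t$. Therefore, the sigmoid function will force each projection $\mathbf{x}_t^T \mathbf{w}_l$, $t = 1, \dots, T$, to progressively move towards $+\infty$ and $-\infty$ depending on whether $b_t = 1$ or $b_t = 0$, respectively. For successfully embedding the secret message, it is generally enough to guarantee that each projection lies on the proper side of the horizontal axis. When this happens, we reach a Bit Error Rate (BER) of 0 and all the projected weights are aligned with their corresponding bits, that is, they are positive when the bit is 1 and, conversely, negative when the bit is 0.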
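The embedding regularizer and the BER check can be sketched as follows. This is a simplified illustration that minimizes the regularizer alone with plain gradient descent; the function names, the toy sizes, the step size and the number of iterations are assumptions, and the original (denoising) cost is deliberately left out.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def wm_regularizer(w, X, b):
    """Binary cross-entropy between the bits b and sigmoid(X^T w)."""
    y = sigmoid(X.T @ w)
    return -np.sum(b * np.log(y) + (1 - b) * np.log(1 - y))

def wm_gradient(w, X, b):
    """Gradient of the regularizer with respect to w."""
    return X @ (sigmoid(X.T @ w) - b)

def bit_error_rate(w, X, b):
    """Fraction of bits whose projection lies on the wrong side of zero."""
    return np.mean((X.T @ w > 0).astype(int) != b)

rng = np.random.default_rng(1)
N, T = 200, 16                               # toy number of weights and bits
X = rng.standard_normal((N, T))              # columns are projection vectors
b = rng.integers(0, 2, size=T)               # secret message
w = 1e-2 * rng.standard_normal(N)            # small initial weights

loss_before = wm_regularizer(w, X, b)
for _ in range(200):
    w = w - 1e-2 * wm_gradient(w, X, b)      # gradient descent on the regularizer only
loss_after = wm_regularizer(w, X, b)
ber_after = bit_error_rate(w, X, b)
```

Extraction only needs the sign of each projection, which is why a BER of 0 can be reached long before the cross-entropy itself is close to zero.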

2.3.3. Detectability Issues

In this paper, we employ the Random technique proposed by the authors, in which the values of the projection matrix, before applying the transformation in (9), are independent samples from a standard normal distribution $\mathcal{N}(0, 1)$. Their results [5,6] show that this Random approach is the most appealing design method for the projection matrix because, as they indicate, it does not significantly alter the distribution of weights at the embedding layer. However, there are some detectability issues here that should be considered.

On the one hand, the authors in [20] show that the standard deviation of the distribution of weights grows with the length of the embedded message. This information can be used by adversaries for detecting the watermark and even overwriting it.

On the other hand, one of the main conclusions of our work is that the presence or absence of alterations to the shape of the weight distributions is a consequence of the optimization algorithm used during the watermark embedding. In particular, the authors in [5,6] employ SGD with momentum in their experiments and the distributions of weights remain unchanged, yet the use of Adam would significantly alter the shape of the distributions even though we apply the same Random technique. In this paper, we will show that the results in [5,6] regarding the undetectability of the watermark do not hold when we use Adam optimization.

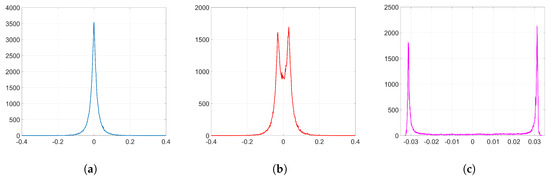

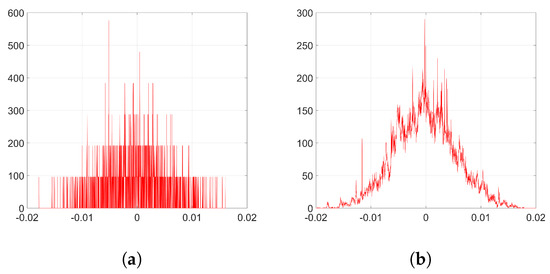

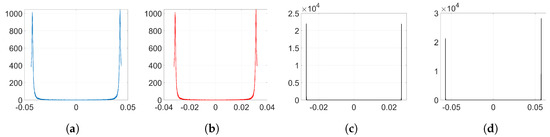

As an example to visualize this peculiar behavior shown by Adam when we employ the watermarking algorithm proposed in [5,6], we plot in Figure 2 the resulting histograms when and ; specifically, the histograms of the weights before and after the embedding (corresponding to k = 32,140) and the histogram of the weight variations, respectively. As we can see, the distribution of the original weights has significantly changed, turning into a two-spiked shape that could be easily detected by an adversary. The complete set of histograms will be later shown in Section 6.

Figure 2.

Histograms from the embedding layer (, and k = 32,140). (a) histogram of ; (b) histogram of ; (c) histogram of .

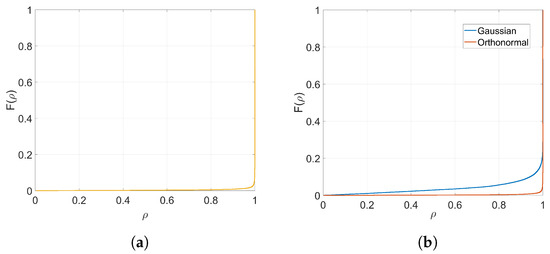

2.3.4. Gaussian and Orthogonal Projection Vectors

In addition to the Random technique suggested by the authors of this watermarking algorithm—whose projection vectors will be referred to as Gaussian projection vectors in this paper—we will also implement orthonormal projectors. In order to build these kinds of projectors, we first generate the projection matrix following the Random technique; that is, samples are drawn from a standard normal distribution . Then, from the Singular Value Decomposition of this projection matrix, we obtain an orthonormal basis for the column space so that we have . Notice that once we apply the initial transformation (i.e., ) the resulting projection vectors , , will still preserve the orthogonality between them, although they will not be normalized:

Therefore, these kinds of projectors will be referred to as orthogonal. As we will see later from KLD results and histograms, implementing orthogonal projectors may help us to better preserve both the original shape of the weight distribution and the denoising performance.
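A short NumPy sketch of this construction is shown below. It is an illustration under assumptions: the sizes are toy values, the variable names are chosen for the example, and the averaging matrix assumes a row-major flattening of the weight tensor (F entries per position stored contiguously). Under that assumption, the folded projection vectors stay mutually orthogonal, each with squared norm 1/F.

```python
import numpy as np

rng = np.random.default_rng(2)
M, F, T = 36, 5, 6                                   # toy sizes

G = rng.standard_normal((M, T))                      # Random (Gaussian) design
U, _, _ = np.linalg.svd(G, full_matrices=False)      # U: M x T, orthonormal columns
                                                     # spanning the column space of G

# Averaging over the F kernels, written as an M x (M*F) matrix.
A = np.kron(np.eye(M), np.full((1, F), 1.0 / F))
X_folded = A.T @ U                                   # projection vectors acting on w

gram_before = U.T @ U                                # identity: orthonormal
gram_after = X_folded.T @ X_folded                   # identity / F: orthogonal only
```

The Gram matrix after folding is diagonal but scaled by 1/F, which is why these projectors are called orthogonal rather than orthonormal.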

3. Theoretical Analysis

From now on, we will omit the sub-index l for the sake of clarity although we are always addressing the coefficients of the embedding layer. The experiments clearly illustrate that the use of Adam optimization together with the watermarking algorithm proposed in [5,6] originates noticeable changes in the distribution of weights, as we see in Figure 2. In the following analysis, we delve into the reasons why this happens. To that end, we aim to get a theoretical expression of . This will allow us to prove and understand the nature of the observed behavior of the weights when watermark embedding is carried out. We start off by defining vector and matrix :

Notice that . In addition, for the case of orthogonal projectors, the following properties can be straightforwardly proven:

These properties will come in handy later on in several theoretical derivations.

Firstly, in order to simplify the analysis and understand more clearly how the watermarking cost function impacts on the movement of the weights when using both SGD and Adam optimization, we will just consider the presence of the regularization term, that is, we will not include the denoising cost function for now. The influence of the denoising part will be studied in Section 3.3. Therefore, given our embedding cost function in (10) and assuming for simplicity (and without loss of generality) that all embedded symbols are , we have:

If we compute the gradient of this function, we obtain:

In order to simplify the subsequent analysis, we introduce a series of assumptions which are based on empirical observations or hypotheses that will be duly verified.

By construction, it is possible to show that the mean of is zero and its variance is for Gaussian projectors and for orthogonal projectors (see Appendix A.1). Since the variance of the weights at the initial iteration is generally very small—in our experiments, it is —it can be considered that the variance of will also be small enough so that we can assume for all . Although this assumption might not be strictly true for all k—especially once we have crossed the linear region of the sigmoid function—it is reasonably good and it allows us to use a first-order Taylor expansion for around :

We introduce now one important hypothesis in this theoretical analysis to handle the previous equation: we assume that grows approximately affinely with k:

where is a vector that contains the slopes for each weight, and it is to be determined in the following sections. We hypothesize this affine-like growth for the weights and, later, we will verify that this is consistent with the rest of the theory and the experiments (see Appendix B.1 for more details). Therefore, we can write the weight variations as:

3.1. Analysis for SGD

We first analyze the behavior of SGD optimization when we implement digital watermarking as proposed in [5,6]. Recall the SGD update rule in (2). If we use the approximation for the gradient in (17) and the affine growth hypothesis for the weights introduced in (18), we have:

To simplify the analysis, we consider from now on that . We confirm the validity of this assumption in Appendix B.2. Then, we can write:

If we consider orthogonal projectors, we can arrive at a more explicit expression for . In particular, if we multiply (21) by and use the properties (13) and (14), we obtain:

Thus, if F is large compared to —this certainly holds for our experimental set-up, cf. Section 6.1— will be approximately proportional to . Then, the coefficients will follow an affine-like growth as we hypothesized in (18) (see Appendix B.1 for the empirical confirmation of this hypothesis). Now, the weight variations can be expressed as:

As we can see, when we use SGD, will approximately follow a zero-mean Gaussian distribution, as induced by [9]. Because of this, and unlike Adam (as we will see later), the weights will evolve with random speeds when we embed watermarks using SGD optimization. Therefore, the impact on the original shape of the weight distribution will be small. However, the variance of the weight distribution may change considerably as stated in [20]. Since we have for orthogonal projectors, the variance of can be computed as:

Thus, considering that and are uncorrelated—we check this statement in Section 6.2.2—we arrive at the following expression for the variance of the weights at the kth iteration:

As we can see from (25), when implementing the digital watermarking algorithm in [5,6] with SGD optimization and orthogonal projectors, the variance of the resulting weight distribution might change considerably. In order to preserve the original weight distribution when using SGD, it is important to take care with the values of T, F and N, especially. In addition, the standard deviation of the weights will (approximately) increase linearly with the number of iterations so it may be also important to limit the value of k. This is in line with the expected behavior: the weights will move away from their original value and they will be further if we perform more iterations.

Because the analysis for Gaussian projectors is considerably more involved, in this paper we only address SGD with orthogonal projectors. A more comprehensive analysis for Gaussian projectors that can be linked to the results obtained in [20] is left for future research. In contrast, the full analysis for both kinds of projection vectors is developed in the next section for Adam optimization.

3.2. Analysis for Adam

In the next sections, we will delve into the theory behind Adam optimization for DNN watermarking. In particular, we will obtain an expression for the mean and the variance of the gradient and then, as we did with SGD, we will analyze the update term to get an expression of the weight variations.

3.2.1. Mean of the Gradient

We are interested in computing the mean of the gradient that is used in Adam. Considering as the global cost function, then, from (4), we can rewrite the mean at the kth iteration as:

We use the gradient in (17) and do some derivations to find an explicit expression for under the hypothesis in (18). Finally, we arrive at the following expression for the bias-corrected mean gradient when k is sufficiently large (see Appendix A.2 for all the mathematical details):

where and . As we see from (27), the mean of the gradient also grows affinely with k.

3.2.2. Variance of the Gradient

Let . The approximation in (17) for the jth element of this vector , denoted by , is the following:

where:

Following the hypothesis in (18), we can write:

In summary, for this affine-like growth, the square gradient vector can be written as:

for some vectors whose jth component can be defined as:

Now, from (5), we can rewrite the variance of the gradient that is used in Adam as:

The bias-corrected term is obtained after dividing by . Applied to the special case of (31), this yields (see Appendix A.3):

3.2.3. Update Term

Because is usually very small—we use in our experiments—we can assume that will be small enough to obtain an approximation of the update used in Adam. Recall that, for the jth weight, this is , implying that . Let:

Then, assuming that , we can make a zero-order approximation of the update term, i.e.,:

This approximation is accurate enough for the set of experiments we perform. In particular, for the orthogonal case, we could deal with = 625,000 and still get a correlation coefficient of between and . In our experiments, we actually reach a BER of zero for values of k quite below (cf. Section 6.2).

From (36), we observe that the updated jth coefficient approximately follows the hypothesized growth, i.e., , where . Notice that, as expected, the update does not depend on , following Adam’s property that the update is invariant to rescaling the gradients [9]. Finding a more explicit expression runs into the problem that depends on , which in turn is a function of through (32) and (33). The following subsections are devoted to solving this problem by conjecturing a form for and refining it.

To simplify the analysis, we consider from now on that since most of the values of the weights at the initial iteration are very small (see Figure 2a). We will verify the accuracy of this approximation in Appendix B.2.

3.2.4. Rationale for the Sign Function

Recall the expression (23) that we obtained for when analyzing SGD, where we found to be approximately proportional to . Now, for Adam, we take this as a starting point, so we conjecture first that , for some real positive . Here, we consider orthogonal projection vectors and use the property introduced in (13) and the following:

In this particular case, we have the following identities:

Substituting these values into (35), we find that

When we divide by , we obtain:

It is then clear that cannot be written in the form , as was conjectured at the beginning of this section.

3.2.5. A Theoretical Expression for

Although the conjectured form for in Section 3.2.4 does not hold, the appearance of the sign function in (37) gives a key clue for an alternative approach, since the sign seems to reveal the reason behind the two-spiked histograms like the one shown in Figure 2c.

Therefore, let us write to explicitly contain the sign of and allow to take different (non-negative) values with j to reflect the varying magnitude (recall that even in Section 3.2.4 the conjectured value could be written as ). Let be the column vector containing , , then . Since , we can write:

Now,

In addition, thus, in order to meet the condition , the following nonlinear equation should be solved for all , :

This equation can be solved with a fixed-point iteration method [21]. To that end, we should initialize and then iterate the following: (1) compute the right-hand side of (38), and (2) use it to update on the left-hand side. This process will converge to the solution of (38). Even though this method can be implemented to give the specific values for each , we are more interested in obtaining a statistical characterization rather than a deterministic one. As we will see, the statistical approach offers a deeper explanation for the two-spiked distribution of which we ultimately seek.

We thus aim at finding the pdf of , now considered as a random variable for which , are nothing but realizations. Once again, Equation (38) can be solved iteratively (e.g., with Markov-chain Monte Carlo methods [22]) to yield the equilibrium distribution for . Instead, we can resort to the results in Section 6 where we conclude that the pdf of is strongly concentrated around its mode. With this observation, it is possible to consider that approximately corresponds to realizations of .

In order to simplify the analysis even further, we are interested in decomposing using its statistical projection onto , i.e., . Here, is a real multiplier and is zero-mean noise uncorrelated with . More generally, if we define matrix , then we seek to write . We do the analysis for the cases of Gaussian projectors and orthogonal projectors separately (refer to Appendix A.4 for the derivations). For the Gaussian case, we get:

On the other hand, for the orthogonal projectors, we get instead:

Recall that, by construction, can be seen as a random vector. In fact, we have for Gaussian projection vectors, and approximately follows for orthogonal projectors. Let , Z be random variables with the distribution of a single element of and , respectively, then and can be seen as realizations of (approximately): and , so a stochastic version of (38) is:

Squaring both sides, we find that, for a given realization (, z) of (, Z), must take the positive value that satisfies the following fourth degree equation:

From (43), it is easy to generate samples of and, accordingly, samples of , by recalling that:

We note that, for the particular case when is very close to 1, . This simplification allows us to approximate (43) as

which leads to the following fixed-point equation:

When the noise term z is very small compared to (which occurs with a fairly large probability, especially for the case of orthogonal projectors), then the solution to (46), denoted by , will be independent of the value of . This will cause the probability of to be concentrated around , and in turn this will make the pdf have two spikes centered at . We will see these spikes appearing time and again in the experiments carried out with Adam (Section 6).
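As a rough intuition for why the weights move in unison, the following toy sketch runs Adam and SGD on gradients that are held constant over the iterations. This is a deliberate simplification and not Equation (46): the gradient values, the step count, and the function names are assumptions chosen for illustration, and the spike location it produces (the learning rate times the number of steps) is specific to this toy setting.

```python
import math

def run_adam(grads, steps, lr=1e-3, b1=0.9, b2=0.999, eps=1e-8):
    """Apply `steps` Adam updates with time-constant gradients; return weight changes."""
    n = len(grads)
    delta, m, v = [0.0] * n, [0.0] * n, [0.0] * n
    for k in range(1, steps + 1):
        for j, g in enumerate(grads):
            m[j] = b1 * m[j] + (1 - b1) * g          # first-moment estimate
            v[j] = b2 * v[j] + (1 - b2) * g * g      # second-moment estimate
            m_hat = m[j] / (1 - b1 ** k)             # bias corrections
            v_hat = v[j] / (1 - b2 ** k)
            delta[j] -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return delta

def run_sgd(grads, steps, lr=1e-3):
    """Plain SGD with the same constant gradients."""
    return [-lr * steps * g for g in grads]

# Hypothetical per-coefficient gradients with very different magnitudes.
grads = [0.5, -0.02, 3.0, -1.2, 0.07, -0.3]
adam_delta = run_adam(grads, steps=100)
sgd_delta = run_sgd(grads, steps=100)
print(adam_delta)  # magnitudes are (almost) identical; only the sign differs
print(sgd_delta)   # magnitudes scale with the gradient
```

In this toy case, Adam normalizes away the gradient magnitude, so every coefficient moves by the same amount and a histogram of the variations collapses onto two values of opposite sign, whereas the SGD variations remain spread out.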

3.3. The Denoising Term

Thus far, we have considered only that our cost function is ; however, as we know, there is an additional term, the original denoising function, so our real cost function is: .

The gradients corresponding to this function, , will try to pull the weight vector towards the original optimal in a relatively hard to model way. In order to analyze this behavior, we can approximate the gradient of the denoising function at the kth iteration with respect to the jth coefficient as a sum of a constant term, , and a noisy one, , which follows a zero-mean Gaussian distribution and is associated with the use of different training batches on each step. We will refer to this noise as batching noise. Thus, for each coefficient j, we can write:

Like we did in the previous section, we can formulate a stochastic version of (47). To that end, we notice that the constant term of this gradient, , can take different values with j, as well as the variance of the batching noise, that is, is drawn from . Therefore, in order to reflect the variability of these terms along the j-elements, we introduce two random variables with the distribution of the mean gradient and the variance of the batching noise, D and H, respectively, for which and are realizations. The pdf of these distributions will be obtained empirically in Section 6.2. Then, we can see as a realization of .

3.3.1. SGD

Similarly to Section 3.2.5, let be a random variable with the distribution of . Let (, , ) be a realization of (, D, ), respectively, then for SGD using orthogonal projectors we can compute samples of adding both functions, i.e., denoising and watermarking:

3.3.2. Adam

The variance of the batching noise computed by Adam will be approximately given by the random variable V, whose realizations can be expressed as . Notice that, for each realization of V, as the sum takes place over i, we must work with a fixed value for the variance of the batching noise. Then, with this variance, we generate k samples of to be used in the sum that produces . With this characterization, we can easily analyze how the denoising cost function shapes the distribution of the weight variations. Notice that this analysis could be adapted for any host network. Let and be realizations of D and V, respectively; then we can generate samples of without including the gradients from the watermarking function as:

Moreover, in order to get a more accurate description of the problem, we can combine both functions: denoising and watermarking. The analysis becomes somewhat complicated, but, as we will check in Section 6, the distributions resulting from this analysis do capture better the shapes observed in the empirical ones. See Appendix A.5 for the results of this analysis.

4. Block-Orthonormal Projections (BOP)

Here, we discuss BOP, the solution we propose for the detectability problem posed by Adam optimization when implementing the watermarking algorithm proposed in [5,6]. In order to hide the noticeable weight variations that appear when we use Adam (as seen in Figure 2), we introduce a prior transformation using a secret matrix (the details for its construction are given below). The procedure has three steps per iteration of Adam.

Firstly, we project the weights and gradients from the embedding layer using :

Then, we run Adam optimization on the projected weights, , using the projected gradients, , as well, i.e., steps (3)–(8) are taken using and instead of and , respectively. The key to BOP is the following: if we execute Adam on instead of , we break the natural bond created by Adam between and (as we saw in the previous sections), which is responsible for the ant-like behavior of the weights and, consequently, for the appearance of side spikes in their histograms. These undesired effects disappear when we de-project using to get back to the weight vector :

In order to reduce the computational complexity and the memory requirements of this method—recall that N is generally a very large number and we must project and de-project the weights on each iteration—we consider to be a block diagonal matrix with B identical blocks. In this way, we only have to build and work with a single block , for which we can choose the size by simply adjusting the value of B. The values of this block are drawn from a standard normal distribution. In addition, is built as an orthonormal matrix so that . Let and be the ith block of and , respectively, both of them of length ; therefore, we just compute:

After executing Adam, we can get back to :

As we will see in Section 6.2.4, BOP does not significantly alter the original distribution of weights, as opposed to standard Adam. This makes it possible to enjoy the advantages of Adam optimization when we implement the watermarking algorithm in [5,6] with a minimal increase in the detectability of the watermark. In addition, this has an advantage in terms of robustness: if the adversary is not able to infer which layer is watermarked, then the attack (e.g., noise addition, weight pruning) must be applied to every layer, thus producing a larger impact on the performance of the network as measured by the original cost function. We will discuss this fact in the experimental section.
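The three steps of BOP (project, run Adam, de-project) can be sketched as follows. This is a minimal illustration under several assumptions that are not taken from the text: the block is orthonormalized with Gram-Schmidt, projection multiplies each segment by the block and de-projection by its transpose, the block size divides the number of weights, and all function names are hypothetical.

```python
import math
import random

def orthonormal_block(n, rng):
    """Draw standard-normal rows and orthonormalize them (modified Gram-Schmidt)."""
    rows = []
    while len(rows) < n:
        v = [rng.gauss(0.0, 1.0) for _ in range(n)]
        for q in rows:                      # remove components along earlier rows
            dot = sum(a * b for a, b in zip(v, q))
            v = [a - dot * b for a, b in zip(v, q)]
        norm = math.sqrt(sum(a * a for a in v))
        if norm > 1e-8:                     # discard numerically degenerate draws
            rows.append([a / norm for a in v])
    return rows

def apply_blocks(block, vec, transpose=False):
    """Multiply every length-n segment of vec by the block (or by its transpose)."""
    n = len(block)
    out = []
    for start in range(0, len(vec), n):
        chunk = vec[start:start + n]
        for i in range(n):
            if transpose:
                out.append(sum(block[r][i] * chunk[r] for r in range(n)))
            else:
                out.append(sum(block[i][c] * chunk[c] for c in range(n)))
    return out

def adam_step(w, g, m, v, k, lr=1e-3, b1=0.9, b2=0.999, eps=1e-8):
    """One standard Adam update at iteration k (k starts at 1)."""
    m = [b1 * mi + (1 - b1) * gi for mi, gi in zip(m, g)]
    v = [b2 * vi + (1 - b2) * gi * gi for vi, gi in zip(v, g)]
    m_hat = [mi / (1 - b1 ** k) for mi in m]
    v_hat = [vi / (1 - b2 ** k) for vi in v]
    w = [wi - lr * mh / (math.sqrt(vh) + eps) for wi, mh, vh in zip(w, m_hat, v_hat)]
    return w, m, v

def bop_step(w, g, block, m, v, k, lr=1e-3):
    """Project weights and gradients, run Adam on them, then de-project."""
    w_p = apply_blocks(block, w)
    g_p = apply_blocks(block, g)
    w_p, m, v = adam_step(w_p, g_p, m, v, k, lr)   # Adam state lives in the projected domain
    return apply_blocks(block, w_p, transpose=True), m, v

rng = random.Random(0)
N, B = 12, 3                                       # toy sizes; block length is N // B
block = orthonormal_block(N // B, rng)
w = [rng.gauss(0.0, 0.05) for _ in range(N)]
g = [rng.gauss(0.0, 1.0) for _ in range(N)]
w_new, m, v = bop_step(w, g, block, [0.0] * N, [0.0] * N, k=1)
print([round(a - b, 6) for a, b in zip(w_new, w)])  # per-weight changes after de-projection
```

Because the block is orthonormal, de-projection is exact and the overall length of the update is preserved, while the individual weight changes are mixed within each block and no longer share a common magnitude.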

5. Information-Theoretic Measures

As already discussed, one of the potential weaknesses of any neural network watermarking algorithm is the detectability of the watermark. An adversary that detects the presence of a watermark on a certain subset of the weights can initiate an attack to remove or alter the watermark. For this reason, it is important that the weights statistically suffer the least modification possible while of course being able to convey the desired hidden message. To measure this statistical closeness, we propose using the KLD [13] between the distributions of weights before and after the watermark embedding. Let P and Q be two discrete probability distributions defined on the same alphabet $\mathcal{X}$; then, the KLD from Q to P is (notice that it is not symmetric):

$$D(P \,\|\, Q) = \sum_{x \in \mathcal{X}} P(x) \log \frac{P(x)}{Q(x)}$$

The KLD is always non-negative. The more similar the distributions P and Q are, the smaller the divergence. In the extreme case of two identical distributions, the divergence is zero.
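The properties just listed (non-negativity, zero for identical distributions, lack of symmetry) are easy to check numerically. The sketch below assumes natural logarithms, since the text does not state the units, and uses two small made-up distributions; the function name is illustrative.

```python
import math

def kld(p, q):
    """KLD from Q to P, D(P||Q), in nats, for two distributions given as lists."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0.0:
            continue             # terms with P(x) = 0 contribute nothing
        if qi == 0.0:
            return math.inf      # P puts mass where Q has none
        total += pi * math.log(pi / qi)
    return total

# Hypothetical distributions on a three-symbol alphabet.
p = [0.5, 0.3, 0.2]
q = [0.8, 0.1, 0.1]
print(kld(p, q), kld(q, p))      # two different positive values
print(kld(p, p))                 # identical distributions give zero
```

The early return of infinity corresponds to the practical problem that arises when an empirical Q has empty bins where P does not.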

It is interesting to note that the KLD has been proposed for similar problems in forensics, including steganographic security [23], distinguishability between forensic operators [24], or more general source identification problems [25].

In our case, the two compared distributions are those of and , for k just producing convergence with no decoding errors. Since the KLD is not symmetric, it remains to assign those distributions to P and Q so that the measure is as informative as possible. In particular, we are interested in properly accounting for the possible lateral spikes in the pdf of . As those spikes often appear where the pdf of is small if not negligible, this suggests assigning the latter pdf to Q and the former to P. However, this choice creates a problem in practice, as for some , the empirical probabilities are such that and , potentially leading to an infinite divergence. To circumvent this issue related to insufficient sampling, we use an analytical approximation to Q with infinite support, after noticing that the empirical distribution of with 1000 discrete bins (see Figure 2a) can be approximated by a zero-mean Generalized Gaussian Distribution (GGD) with shape parameter and scale parameter , where the latter controls the spread of the distribution (for notational coherence with the literature, is used in this section to denote a different quantity than in the rest of the paper). As a reference, the KLD between the empirical distribution of and its GGD best-fit is , which is smaller than any of the KLDs that we find in Table 2. In order to compute the KLD in our experiments, we use this infinite-support symmetric distribution for Q and the empirical one of for P after quantizing both to 1000 discrete bins.

Table 2.

PSNR (dB) results with noise level , number of iterations k needed to converge, KLD and SIKLD between the distributions of and .

The KLD is adequate for measuring detectability when the adversary has access to information about the 'expected' distribution of the weights. For instance, when only one layer is modified, the expected distribution can be inferred from the weights of other layers. However, this may still be too optimistic in terms of adversarial success: while the expected shape may be preserved (and thus inferred) across layers, the scale (directly affecting the variance) may not be. For instance, if the original weights were expected to be zero-mean Gaussian and they still are after watermarking, the KLD (which depends on the ratio of the respective variances) may be quite large, but the adversary will not be able to determine whether watermarking took place without knowing what the variance should be, since only the divergence with respect to a Gaussian can be measured. To reflect this uncertainty, quite realistic in practical situations, we minimize the KLD with respect to the scale parameter . This puts the adversary in a scenario where only the shape is used for detectability. Thus, let correspond to a GGD with scale parameter ; then we define the Scale Invariant KLD (SIKLD) as:
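The Gaussian example in the paragraph above can be reproduced with a small sketch. It is only an illustration under stated assumptions: the bin grid, the candidate scales, the simple grid search used for the minimization, and the function names are all hypothetical, and the "watermarked" distribution is a synthetic GGD rather than an empirical histogram.

```python
import math

def ggd_bins(centers, shape, scale):
    """Zero-mean GGD evaluated at the bin centers and normalized to sum to one."""
    dens = [math.exp(-((abs(x) / scale) ** shape)) for x in centers]
    total = sum(dens)
    return [d / total for d in dens]

def kld(p, q):
    """D(P||Q) in nats; infinite if P has mass where Q has none."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0.0:
            continue
        if qi == 0.0:
            return math.inf
        total += pi * math.log(pi / qi)
    return total

def sikld(p, centers, shape, scales):
    """Smallest D(P||Q_s) over the candidate scales s (plain grid search)."""
    return min(kld(p, ggd_bins(centers, shape, s)) for s in scales)

# Assumed discretization: 400 equal bins on [-0.5, 0.5].
centers = [-0.5 + (i + 0.5) / 400 for i in range(400)]
# Assumed search grid: 300 log-spaced scales between 0.01 and 0.2.
scales = [0.01 * 20 ** (i / 299) for i in range(300)]

p = ggd_bins(centers, shape=2.0, scale=0.05)      # same Gaussian shape, smaller scale
q_ref = ggd_bins(centers, shape=2.0, scale=0.10)  # reference distribution
plain = kld(p, q_ref)
invariant = sikld(p, centers, 2.0, scales)
print(plain, invariant)  # the plain KLD is sizeable, the scale-invariant one is near zero
```

Here the plain KLD reports a clear difference that is due to the scale alone, whereas the scale-minimized value is close to zero because the shape is unchanged, which is the situation the SIKLD is meant to capture.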

6. Experiments and Results

In this section, we show the experimental results and we compare them to the theory that we have developed. We use MATLAB R2018b to implement the expressions obtained in Section 3 and represent the theoretical histograms. As we will see, both theory and experiments match reasonably well. In particular, for Adam optimization, we are able to reproduce the same position of the side spikes seen in the empirical histograms of , as well as some effects which are attributable to the influence of the denoising cost function. We will also verify the BOP method proposed in Section 4. In addition, the KLD will be computed to give a more precise measure of the similarity between the distributions of (i.e., after the embedding) and , when using SGD, Adam and BOP.

6.1. Experimental Set-Up

We employ the fine-tune-to-embed approach described in [5,6]. This means that the training process is divided into two phases, as we explained earlier: (1) training the host network from scratch, and (2) fine-tuning steps for embedding the watermark.

6.1.1. Training the Host Network

In order to perform the initial training of the host network FFDNet, we use the open-source implementation for PyTorch provided in [26]. We employ the FFDNet architecture for color images, which has a depth of 12 convolutional layers and 96 feature maps per layer. The training details and the datasets are the same as in [26]: the Waterloo Exploration Database [27] for training and Kodak24 [28] for validation. We implement the cost function introduced in (1) and train for 80 epochs with the milestones described in [26] on an NVIDIA Titan Xp GPU. We use Adam as the optimization algorithm with its hyperparameters set to their default values. After training the network, we test it on the CBSD68 [29] and Kodak24 datasets.

6.1.2. Watermark Embedding

Once we have trained and tested our host network, we embed our T-bit watermark, , , into the convolutional layer of FFDNet. In the next section, we present the results for both SGD and Adam optimization algorithms. The size of the convolutional filter is and the depth of input is , as well as the number of filters in the layer, . Therefore, we have: , and = 82,944. In addition, the learning rate is set to during these fine-tuning steps and, also, we do not perform weight orthogonalization as we did during the initial training.

We use the following values for the regularizer parameter. When we use SGD, we set and for Gaussian and orthogonal projectors, respectively. For Adam optimization, we use different values of for each configuration to better reflect the influence of the denoising function. In particular, we set and when we use Gaussian projectors, and and when we employ orthogonal projectors. We finish the embedding process when we reach a BER of zero, that is, when all the projected weights are positive (recall that all the embedded bits are set to ), i.e., , for all . Notice that these values of were selected with the goal of reaching a BER of zero relatively quickly and, as can be seen, they are not directly comparable for Gaussian and orthogonal projectors. Finally, to check the validity of our proposed method BOP, we use the same values of as with Adam optimization, and set the number of blocks B to 12.
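The stopping criterion can be written as a short check. The sketch assumes that a bit is decoded as 1 whenever the corresponding projected weight is positive and that the embedded message is all ones; the function name and the tiny projector matrix are made up for the example.

```python
def bit_error_rate(projectors, weights, bits=None):
    """Fraction of wrongly decoded bits; bit i is read as 1 when x_i . w > 0."""
    if bits is None:
        bits = [1] * len(projectors)        # all-ones message (assumption)
    errors = 0
    for x, b in zip(projectors, bits):
        projected = sum(xi * wi for xi, wi in zip(x, weights))
        decoded = 1 if projected > 0 else 0
        errors += int(decoded != b)
    return errors / len(projectors)

# Hypothetical toy case: T = 3 projection vectors of length N = 2.
projectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
print(bit_error_rate(projectors, [0.5, -0.2]))  # one projection is negative
print(bit_error_rate(projectors, [0.5, 0.2]))   # all projections are positive
```

Embedding would stop at the first iteration for which this function returns zero.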

6.2. Experimental Results

Here, we present the experimental results. After the main training of the host network and before the watermark embedding, we obtain a PSNR of dB and dB on the CBSD68 and Kodak24 datasets, respectively, for a noise level of 25. These results are very close to those reported in [26]. Compare these values to those in Table 2, where we show the PSNR (dB) results on the CBSD68 and Kodak24 datasets for the same noise level after the watermark embedding was performed. As we see, when we embed the watermark using SGD with Gaussian projectors, the denoising performance drops by about dB, while, if we employ orthogonal projectors, the performance drops by only dB. Thus, employing orthogonal projectors with SGD optimization might be beneficial to better preserve the denoising performance. For Adam optimization and BOP, the original performance does not significantly drop and it even increases when using orthogonal projectors. Consequently, in order to keep a good performance after the watermark embedding, Adam would be preferable to SGD were it not for the conspicuousness of the weights. Our proposed method BOP brings the detectability of Adam down to levels similar to those of SGD while retaining Adam's other advantages.

Table 2 also presents the number of iterations required for obtaining a BER of zero and the KLD and Scale Invariant KLD (SIKLD) between the distributions of and for each configuration.

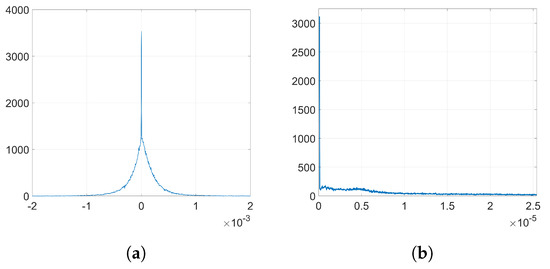

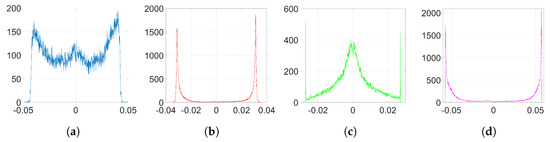

6.2.1. Empirical Denoising Gradients

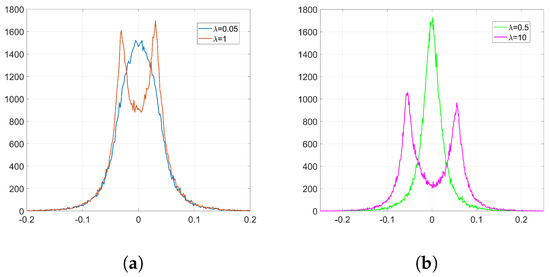

In order to analyze the influence of the denoising function, we need to get the empirical distributions of the mean denoising gradient and the variance of the batching noise. To that end, we proceed as follows: firstly, we extract the denoising gradients from the embedding layer and we average them for each coefficient over the number of iterations k to get the distribution of the mean. Then, the batching noise can be easily computed if, for each coefficient, we subtract the mean value from its corresponding denoising gradient value at each iteration. By computing the variance of this noise for each individual weight, we can estimate the overall distribution of the variance of the batching noise. Figure 3 shows the empirical distribution of the mean denoising gradient, D, and the variance of the batching noise, H.
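The procedure above amounts to a per-coefficient mean and variance over the recorded iterations. A minimal sketch follows, assuming the gradients are stored as one list per iteration and that the variance is normalized by the number of iterations (the text does not say which normalization is used); the function name and the toy data are illustrative.

```python
def gradient_statistics(grad_history):
    """grad_history[k][j] is the denoising gradient of coefficient j at iteration k."""
    k = len(grad_history)
    n = len(grad_history[0])
    # Mean denoising gradient of each coefficient over the k iterations.
    means = [sum(step[j] for step in grad_history) / k for j in range(n)]
    # Batching noise is the deviation from that mean; its variance is taken per coefficient.
    variances = [
        sum((step[j] - means[j]) ** 2 for step in grad_history) / k
        for j in range(n)
    ]
    return means, variances

# Hypothetical record: 3 iterations, 2 coefficients.
history = [[1.0, 0.0], [3.0, 0.0], [2.0, 0.0]]
means, variances = gradient_statistics(history)
print(means, variances)
```

Histograms of the two returned lists, taken across all coefficients of the embedding layer, would then play the role of the empirical distributions of D and H.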

Figure 3.

Empirical histograms from the denoising gradients (a) distribution of the mean denoising gradient, D; (b) distribution of the variance of the batching noise, H.

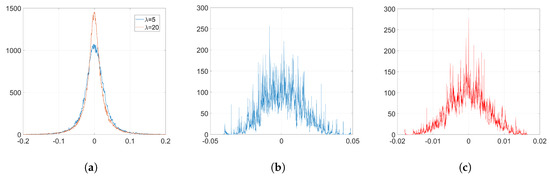

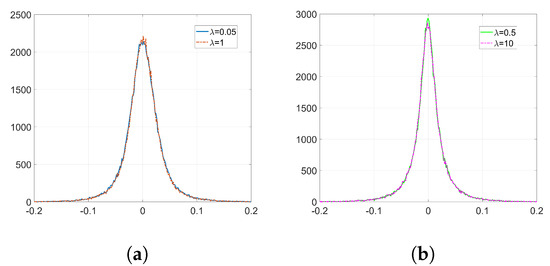

6.2.2. SGD

We embed our watermark using SGD and its corresponding set-up, as we detailed in Section 6.1.2. We show the resulting histograms of and in Figure 4. As we can see, SGD does not significantly alter the original distribution of weights shown in Figure 2a. This is also reflected in the SIKLD, which is very small, especially when we use orthogonal projectors (see Table 2).

Figure 4.

Empirical histograms after the watermark embedding using SGD. (a) histogram of , Gaussian, , and orthogonal projectors, ; (b) histogram of , Gaussian, ; (c) histogram of , orthogonal, .

Now, we check the theory we developed for SGD in Section 3.1. Recall that our theoretical analysis covers only the case of orthogonal projectors. Using (24), we can generate samples of without including the effect of the denoising cost function. The resulting histogram is shown in Figure 5a. Notice that the unusual appearance of this histogram can be attributed to the effects of applying the initial transformation explained in Section 2.3.1. In particular, each value of repeats F times to form vector ; hence, the discrete values in the y-axis of the histogram shown in Figure 5a. However, we see that the range of values of this theoretical histogram fits the empirical one (Figure 4c) quite well. In order to get a more accurate representation, we can generate samples of according to (48), so that we add the effect of noise coming from the denoising cost function. As we see in Figure 5b, the resulting histogram is now very similar to the one in Figure 4c.

In addition, we confirm that (25) can be used to compute the variance of the distribution of when we implement orthogonal projectors. Firstly, we check the hypothesis that we made regarding the uncorrelatedness between and . For our particular case of , the correlation coefficient between and is , a very small value that confirms our assumption. Using (25), we have that the variance of the empirical distribution of —red histogram in Figure 4c—is while the theoretical variance is . As we see, these values are almost identical.

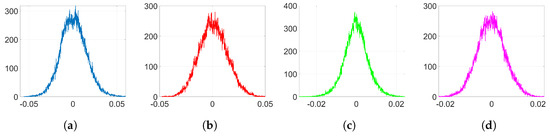

6.2.3. Adam

In the following experiments, we employ Adam optimization for the watermark embedding and use the same settings as in Section 6.1.2. The resulting histograms of and are shown in Figure 6 and Figure 7, respectively. As can be observed, the shape of the weight distribution changes to a great extent for and . For smaller values of , since the influence of the watermarking cost function is weaker, we can avoid a significant alteration of the original distribution shape. This is also reflected in their SIKLD values in Table 2: as increases, the SIKLD also increases considerably. However, notice that, whatever the value of is, the histograms of weight variations always present the characteristic side spikes. These footprints left by Adam can increase the detectability of the watermark. In addition, when is small, we can observe in these histograms the influence of the denoising cost function: it causes the appearance of a central peak with values that spread out to the location of both side spikes.

Figure 6.

Empirical histograms of after the watermark embedding using Adam. (a) Gaussian, and ; (b) orthogonal, and .

Figure 7.

Empirical histograms of after the watermark embedding using Adam. (a) Gaussian, ; (b) Gaussian, ; (c) orthogonal, ; (d) orthogonal, .

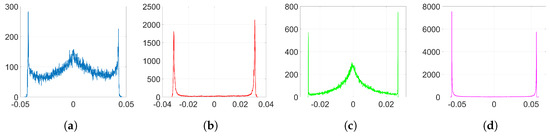

Figure 8 represents the pdf of obtained from (43). Notice that, as we stated in Section 3.2.5, the pdf is concentrated around its mode. Figure 9 shows the histograms of obtained from (44) when only the watermarking loss is optimized and the denoising component is set to zero. Compare these histograms to those in Figure 7: the theory developed in Section 3.2 is able to explain the two-spiked distributions of . Notice that these theoretical expressions provide a good enough approximation since they allow us to predict the position of the side spikes. We show in Table 3 the values of these positions obtained from both theoretical (Figure 9) and empirical (Figure 7) results. As we can see, these side spikes are placed in almost identical positions by both theory and experiments. Hence, we can confirm that the sign phenomenon in Adam is responsible for the ant-like behavior shown by the weights at the embedding layer.

Figure 8.

Pdf of , Gaussian projectors.

Table 3.

Position of both side spikes in the histograms of obtained from theoretical and empirical results.

Finally, in order to reflect the influence of the denoising function and obtain more realistic histograms, we can solve the fourth-degree Equation (A11) and then generate samples of according to (A12). The resulting histograms are shown in Figure 10 and are now quite similar to those in Figure 7. As can be seen, we can emulate the central peak and the dispersion of the values of the side spikes in the histograms and, thus, we can confirm that these effects are attributable to the influence of the denoising cost function.

Figure 10.

Theoretical histograms of for Adam with denoising and watermarking functions using Equations (A11) and (A12). (a) Gaussian, ; (b) Gaussian, ; (c) orthogonal, ; (d) orthogonal, .

6.2.4. BOP

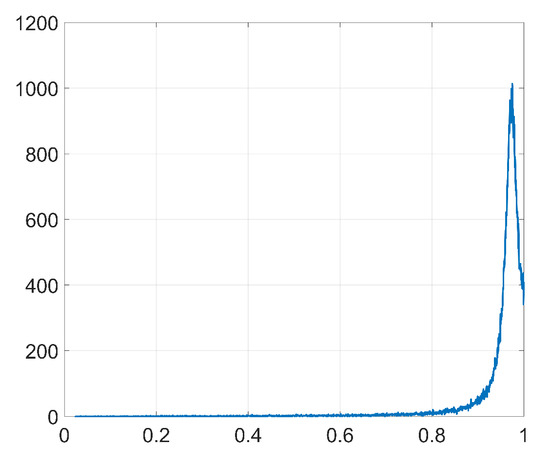

Here, we represent the empirical histograms when we implement our method BOP. Figure 11 and Figure 12 show the histograms of and , respectively. As we see from these histograms and the KLD and SIKLD values in Table 2, this method allows us to remove the side spikes of the histograms and much better preserve the original shape of the weight distribution. As a result, the detectability of the watermark due to Adam optimization is strongly reduced.

Figure 11.

Empirical histograms of after the watermark embedding using BOP. (a) Gaussian, and ; (b) orthogonal, and .

Figure 12.

Empirical histograms of after the watermark embedding using BOP. (a) Gaussian, ; (b) Gaussian, ; (c) orthogonal, ; (d) orthogonal, .

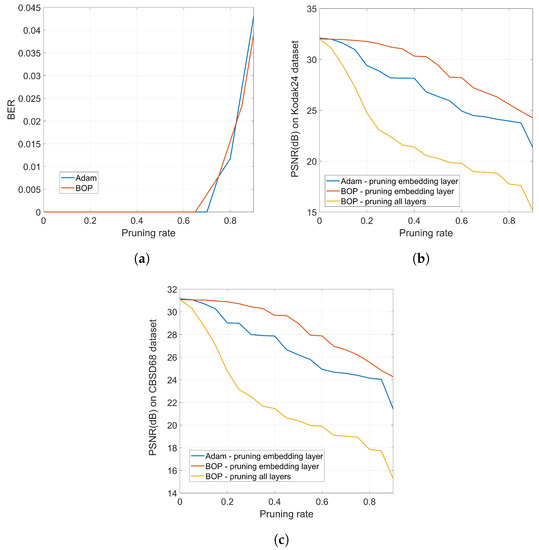

A positive side effect of the undetectability of the watermark is that the robustness is increased because an adversary will not know which layer must be modified in order to alter the embedded watermark. This is illustrated in Figure 13, where we compare the robustness of standard Adam with that of BOP against weight pruning. The network is trained long enough for both Adam and BOP to guarantee a similar BER vs. pruning rate, as shown in Figure 13a. Then, the PSNR obtained after training and pruning is shown in Figure 13b for the Kodak24 dataset, which illustrates the following facts: (1) BOP produces a network that is more robust to pruning in terms of PSNR, which can be valuable for model compression; for instance, for a pruning rate of 0.35 (which has no impact on the BER of the hidden information), BOP degrades the original PSNR by about 1 dB, whereas Adam would produce a degradation of more than 3 dB. (2) This robustness might be detrimental if it is an attacker who does the pruning in an attempt to degrade the watermark; for instance, for a pruning rate of 0.82, the BER for both Adam and BOP rises to 0.02 (see Figure 13a). For this pruning rate, the PSNR of BOP is around 25 dB while Adam gives slightly less than 24 dB. Then, in the case of BOP, the adversary would be able to produce a network that performs closer to the original in terms of PSNR (notice, however, that the degradation in both cases is quite severe, so this heavy pruning would render a denoiser with little practical use). (3) In any case, the previous comparison assumes that the adversary knows the layer that contains the watermark; as we have justified, this is reasonable for Adam but not for BOP. If the adversary does not know the layer that must be pruned, then, to achieve the same target BER, all the layers must be pruned. In this case, for the same pruning rate of 0.82 that causes the BER to increase to 0.02, the PSNR drops to less than 18 dB.

Figure 13.

(a) BER vs. pruning rate for Adam and BOP (pruning all layers or only the watermarked one does not have any impact on BER); (b) PSNR vs. pruning rate for Adam and BOP for the Kodak24 dataset; (c) PSNR vs. pruning rate for Adam and BOP for the CBSD68 dataset.

Similar conclusions can be drawn from Figure 13c, which shows the PSNR vs. pruning rate for the CBSD68 dataset. These experiments clearly show the higher robustness brought about by the undetectability of BOP, as it prevents attacks targeted at a specific layer.

7. Conclusions

Throughout this paper, we have shown the importance of being careful with the optimization algorithm when we embed watermarks following the approach in [5,6]. The choice of certain optimization algorithms whose update direction is given by the sign function can leave footprints in the distributions of weights that are easily detectable by adversaries, thus compromising the efficacy of the watermarking algorithm.

In particular, we studied the mechanisms behind SGD and Adam optimization and found that the sign phenomenon that occurs in Adam is detrimental to watermarking, since it causes the appearance of two salient side spikes in the histograms of weights. As opposed to Adam, the sign function does not appear when we use SGD. Therefore, SGD does not significantly alter the original shape of the distribution of weights although, as we showed in the theoretical analysis, it slightly increases its variance. The analysis in this paper can be extended to other optimization algorithms.

In addition, we introduced orthogonal projectors and observed that, compared to the Gaussian case, they generally preserve the original performance and weight distribution better. However, a deeper analysis of this subject is left for further research.

Finally, we presented a novel method that uses orthogonal block projections to address the use of Adam optimization together with the watermarking algorithm under study. As we checked in the empirical section, this method allows us to solve the detectability problem posed by Adam while still enjoying the other advantages of this optimization algorithm.

Author Contributions

Conceptualization, B.C.-L. and F.P.-G.; methodology, F.P.-G.; software, B.C.-L.; validation, B.C.-L. and F.P.-G.; formal analysis, B.C.-L. and F.P.-G.; investigation, B.C.-L.; resources, B.C.-L.; writing—original draft preparation, B.C.-L.; writing—review and editing, B.C.-L. and F.P.-G.; supervision, F.P.-G. All authors have read and agreed to the published version of the manuscript.

Funding

This work was partially funded by the Agencia Estatal de Investigación (Spain) and the European Regional Development Fund (ERDF) under projects RODIN (PID2019-105717RB-C21) and RED COMONSENS (RED2018-102668-T). In addition, it was funded by the Xunta de Galicia and ERDF under projects ED431C 2017/53 and ED431G 2019/08.

Conflicts of Interest

The authors declare no conflict of interest.

Appendix A. Mathematical Derivations

Appendix A.1. Projected Weights at k = 0

We want to compute the mean and the variance of . It is easy to see that the mean is zero for any , because .

Now, in order to obtain an expression for the variance, we may compute the expectation of the trace of the covariance matrix of divided by T. Therefore, if we use the properties of the trace, we have:

We can write:

It is straightforward to see that, for the off-diagonal terms, i.e., , the expectation in (A1) is zero. For the N diagonal terms, i.e., , we have instead:

where is the variance of any projection vector , . When we use Gaussian projectors, we have and when we use orthogonal projectors, we have . Therefore, the variance for Gaussian projectors is

while for orthogonal projectors it is

Appendix A.2. Adam: Mean of the Gradient

In order to find an expression for the mean of the gradient, we substitute (17) into (26) and obtain:

The bias-corrected mean gradient is:

The main difficulty in finding an explicit expression for is the last sum which requires a closed-form expression for , for all , which in turn depends on the Adam adaptation. This easily leads to a difference equation whose solution is cumbersome. Alternatively, we start by conjecturing the affine-growth in (18) and write:

Therefore, under the affine-growth hypothesis, we can write:

and arrive at the expression in (27).

Appendix A.3. Adam: Variance of the Gradient

Appendix A.4. Adam: A Projection-Based Decomposition of

We want to write . To find , we compute the cross-correlation with , i.e., . Then, is simply . In the following, we show separately the derivations for the Gaussian and orthogonal projectors.

Appendix A.4.1. Decomposition for Gaussian Projectors

Recall that the projection vectors have i.i.d. components drawn from a distribution. Since the quantity of interest is a scalar, we will use the trace to manipulate the involved matrices, as follows:

where , , , and is the ith row of matrix . In order to compute the previous expectation, we need to consider separately the diagonal terms and the off-diagonal ones.

We start with the off-diagonal elements (i.e., ). Here, we also need to distinguish the following cases: , satisfied by elements, and , which applies for the remaining off-diagonal terms. Therefore, in the first subcase, we have that , so and:

In order to compute the expectation in (A2), we write:

where can be seen as a realization of an i.i.d. Gaussian distribution with zero-mean and variance . Assume without loss of generality that . Then, the probability that is , (.) and will take the value with probability p, and with probability . Then, the distribution of will correspond to that of a random variable Y constructed as follows: letting , we define Y as . Then,

It can be shown that this integral gives [30]:

Therefore, when and , we can compute (A2) as:

When and , we have that , so . Thus:

where can be computed as the mean of defined in a similar way as above, i.e., letting , we define as . Then:

Using Mathematica, it is found that:

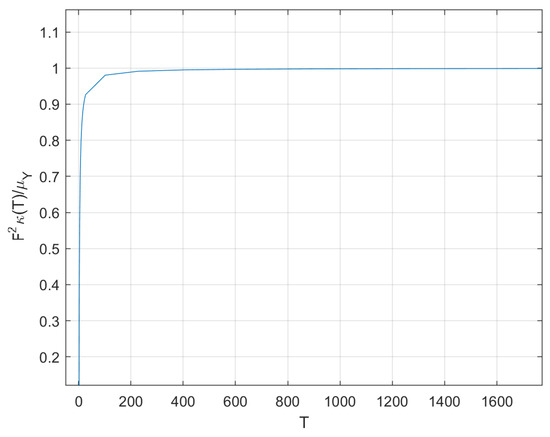

Unfortunately, the double integral required to compute the expectation in (A4) does not seem to admit a closed-form solution. On the other hand, its numerical computation through Monte Carlo integration is rather straightforward. Let and , all mutually independent, then the desired expectation is , where, with , we stress the fact that the integral depends on T alone. The result is represented in Figure A1. It is possible to show that, as , .

Figure A1.

vs. T

Then, for moderately large T, the sum in (A4) is approximately .

For the case (i.e., diagonal terms), we obtain the same result as (A4). Therefore, when computing , there are terms with value , and terms with approximate value , hence:

In addition, , so we have:

We are interested now in measuring the variance of , , which, by construction, we assume to be i.i.d. First, we note that the covariance matrix is:

Then, the variance of will be the expectation of the trace of this matrix divided by N. The second term is immediate to compute, as , so we focus next on . Again, we can write:

Note that:

Then,

We analyze first the off-diagonal terms, i.e., , when , and consider separately the cases where and . For the first case, the right-hand side of (A7) can be written as:

When and , the right-hand side of (A7) can be written as:

Now, we consider the remaining elements, which satisfy , that is, the N diagonal terms and the remaining off-diagonal ones. The right-hand side of (A7) is:

where, for the last summand, we have used the fact that, for a zero-mean Gaussian random variable X with variance , . Then, the sum in (A7) is:

and the resulting variance of is:

Appendix A.4.2. Decomposition for Orthogonal Projectors

Now, for orthogonal projectors, we take a different route. Again, we define matrix , and we note that . Recall also properties (13) and (14). We can collect all products , in a vector obtained as .

We are interested in computing the cross-product of this vector and . Then, we can write:

Assuming i.i.d. components in , this implies that, for the orthogonal projectors, the cross-correlation of and is the same as and . We can then compute . Modeling as , we find that . Furthermore, .

The existence of a positive cross-correlation suggests writing , with a suitable positive constant and a zero-mean noise vector uncorrelated with . Taking the cross-product and the expectation:

Therefore, we find that:

Now, it is easy to measure the second order moment of since:

With the above characterization, we can see that:

Therefore, we have:

Then,

When , the expectation above is zero. For the remaining terms, that is, when , we have and . Thus:

Finally, we can compute the variance of as follows:

Appendix A.5. Adam: Analysis with Denoising and Watermarking

Here, we join the denoising and watermarking cost functions and follow an approach similar to that in Section 3.2.5. Let be a random vector of length N. We must now build a random projection matrix , with Gaussian or orthogonal projectors, as a basis to generate samples of from (11) and to build following (29).

We also consider that is a random vector of length N, where and represent the denoising and watermarking update terms, respectively. The components of can be computed from realizations of D and V as in Section 3.3, that is, . On the other hand, has the same definition as in Section 3.2.5.

Let be a random variable with the same distribution as and let , P, Q and R be random variables for which , , and are realizations, respectively. Then, we have:

Additionally, let S be a random variable for which is a realization. Now, it must include the contribution due to the denoising cost function so that:

Therefore, for a given realization (, z, , ) of (, Z, D, V), we must solve the following fourth degree equation to get samples of (notice that, in order to generate samples, we must previously build the random vectors and ):

where:

with and given by:

with the following definitions:

where:

and with:

Finally, we can generate samples of as:

Appendix B. Verification of Assumptions

Appendix B.1. Affine Growth Hypothesis for the Weights

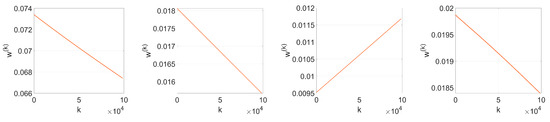

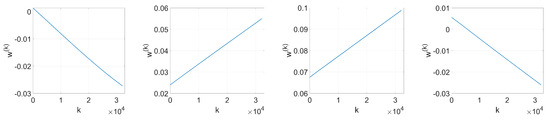

In order to check the affine growth hypothesis that we introduced in (18), we extract the weight values from the embedding layer at each iteration. Figures A2 and A3 show the evolution with k of four randomly selected weights when we use, respectively, (i) SGD with orthogonal projectors, and (ii) Adam with Gaussian projectors. As is evident, the hypothesis holds quite accurately for these particular examples.

For a more general approach encompassing all weights, we carry out linear regression on the evolution of each individual weight with k. Then, for each , we measure the correlation coefficient, , between the observed values (the experimental weight values) and the predicted values. Figure A4 represents the Empirical Cumulative Distribution Function (ECDF) of when using SGD with orthogonal projectors and Adam with both Gaussian and orthogonal projectors. We can state that, when we use Gaussian projectors with Adam optimization, for of the weights at the embedding layer and for of them . Moreover, the affine hypothesis is even stronger when using orthogonal projectors, with either SGD or Adam optimization, since for of the weights at the embedding layer .

Because these percentages indicate highly linear behavior, we can confirm the validity of the affine hypothesis for the weights, which is key to our theoretical analysis.
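The per-weight regression check described above can be sketched as follows. This is a minimal illustration and not the code used in the experiments: the function names `affine_fit_correlations` and `ecdf`, the array layout (one row per iteration k, one column per weight), and the synthetic trajectories are all assumptions introduced here.

```python
import numpy as np

def affine_fit_correlations(weights):
    """Correlation between each weight trajectory and its affine fit.

    weights: array of shape (K, N); row k holds the N weights at iteration k
    (hypothetical layout chosen for this sketch).
    """
    K = weights.shape[0]
    k = np.arange(K, dtype=float)
    # Least-squares line per weight; polyfit accepts a 2-D y of shape (K, N).
    slope, intercept = np.polyfit(k, weights, 1)
    predicted = np.outer(k, slope) + intercept
    # Pearson correlation between observed and predicted values, per column.
    pc = predicted - predicted.mean(axis=0)
    wc = weights - weights.mean(axis=0)
    return (pc * wc).sum(axis=0) / np.sqrt((pc ** 2).sum(axis=0) * (wc ** 2).sum(axis=0))

def ecdf(values):
    """Return sorted values and the empirical CDF evaluated at them."""
    x = np.sort(values)
    y = np.arange(1, x.size + 1) / x.size
    return x, y

# Synthetic, nearly affine trajectories (illustrative data only).
rng = np.random.default_rng(0)
K, N = 200, 50
slopes = rng.uniform(0.5, 1.5, N) * rng.choice([-1.0, 1.0], N)
offsets = rng.normal(size=N)
weights = offsets + np.outer(np.arange(K), slopes) + 0.1 * rng.normal(size=(K, N))

rho = affine_fit_correlations(weights)
x, y = ecdf(rho)
```

Plotting `y` against `x` gives a curve of the same kind as Figure A4; the fraction of weights whose correlation exceeds a threshold `t` is `np.mean(rho > t)`.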

Figure A2.

Evolution with k of four randomly selected weights. SGD optimization with orthogonal projectors, , .

Figure A3.

Evolution with k of four randomly selected weights. Adam optimization with Gaussian projectors, , .

Figure A4.

ECDF of the correlation coefficient between the observed values of the weights over k and their predicted affine evolution. (a) SGD with orthogonal projectors, ; (b) Adam with Gaussian and orthogonal projectors, .

Appendix B.2. Negligibility of Weights at k = 0

We first check the validity of the approximation introduced in the analysis for SGD optimization. From (23), we can get from the parameter settings detailed in Section 6.1 for the orthogonal case. We compute the gradient following (20) and also its approximation . In order to show that , we verify that the preserved term, that is, the approximation , is much larger than the discarded term , using the Normalized Mean Squared Error (NMSE). Let be an N-length vector and its approximation; then the corresponding NMSE is defined as . Using this definition on and , we get an NMSE of , a very small value that supports our assumption .
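As an illustration of how such an NMSE figure can be computed, the following sketch assumes the common definition, i.e., the squared error energy divided by the energy of the reference vector. The function name `nmse` and the toy vectors are hypothetical, and the normalization may differ from the one used in the text.

```python
import numpy as np

def nmse(x, x_hat):
    """Normalized mean squared error between x and its approximation x_hat.

    Assumed definition: ||x - x_hat||^2 / ||x||^2.
    """
    x = np.asarray(x, dtype=float)
    x_hat = np.asarray(x_hat, dtype=float)
    return np.sum((x - x_hat) ** 2) / np.sum(x ** 2)

# Toy example: a small perturbation of x yields a small NMSE.
x = np.array([1.0, 2.0, 3.0, 4.0])
x_hat = x + np.array([0.01, -0.01, 0.01, -0.01])
error = nmse(x, x_hat)  # 4e-4 / 30, roughly 1.33e-5
```

A value far below 1 indicates that the discarded term carries little energy relative to the vector being approximated.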

Now, we check the same approximation for the Adam analysis. From (43), we can generate samples of using the parameter settings in Section 6.1. Let us recall the following definitions:

for . Additionally, we define their corresponding approximations as:

For Gaussian projectors, we get NMSE values of , and , for the approximations made for , and , respectively. For orthogonal projectors, we get NMSE values of , and , for the approximations made for vectors , and , respectively. As we see, these values are small enough to consider our hypothesis reasonable for the analysis in Section 3.2.

References

1. He, K.; Zhang, X.; Ren, S.; Sun, J. Delving Deep into Rectifiers: Surpassing Human-Level Performance on ImageNet Classification. In Proceedings of the 2015 IEEE International Conference on Computer Vision (ICCV ’15), Santiago, Chile, 7–13 December 2015; IEEE Computer Society: Washington, DC, USA, 2015; pp. 1026–1034.

2. Nassif, A.B.; Shahin, I.; Attili, I.; Azzeh, M.; Shaalan, K. Speech Recognition Using Deep Neural Networks: A Systematic Review. IEEE Access 2019, 7, 19143–19165.

3. Le Merrer, E.; Pérez, P.; Trédan, G. Adversarial Frontier Stitching for Remote Neural Network Watermarking. arXiv 2017, arXiv:1711.01894.

4. Adi, Y.; Baum, C.; Cissé, M.; Pinkas, B.; Keshet, J. Turning Your Weakness Into a Strength: Watermarking Deep Neural Networks by Backdooring. arXiv 2018, arXiv:1802.04633.

5. Uchida, Y.; Nagai, Y.; Sakazawa, S.; Satoh, S. Embedding Watermarks into Deep Neural Networks. In Proceedings of the 2017 ACM on International Conference on Multimedia Retrieval (ICMR ’17), Bucharest, Romania, 6–9 June 2017; Association for Computing Machinery: New York, NY, USA, 2017; pp. 269–277.

6. Nagai, Y.; Uchida, Y.; Sakazawa, S.; Satoh, S. Digital Watermarking for Deep Neural Networks. Int. J. Multimed. Inf. Retr. 2018, 7, 3–16.

7. Cox, I.J.; Kilian, J.; Leighton, F.T.; Shamoon, T. Secure Spread Spectrum Watermarking for Multimedia. IEEE Trans. Image Process. 1997, 6, 1673–1687.

8. Bottou, L. Online Algorithms and Stochastic Approximations. In Online Learning and Neural Networks; Saad, D., Ed.; Cambridge University Press: Cambridge, UK, 1998.

9. Kingma, D.P.; Ba, J. Adam: A Method for Stochastic Optimization. In Proceedings of the 3rd International Conference on Learning Representations (ICLR ’15), San Diego, CA, USA, 7–9 May 2015.

10. Rouhani, B.D.; Chen, H.; Koushanfar, F. DeepSigns: A Generic Watermarking Framework for IP Protection of Deep Learning Models. arXiv 2018, arXiv:1804.00750.

11. Balles, L.; Hennig, P. Dissecting Adam: The Sign, Magnitude and Variance of Stochastic Gradients. In Proceedings of the 2018 International Conference on Machine Learning (ICML ’18), Stockholm, Sweden, 10–15 July 2018.

12. Wilson, A.C.; Roelofs, R.; Stern, M.; Srebro, N.; Recht, B. The Marginal Value of Adaptive Gradient Methods in Machine Learning. In Proceedings of the 31st International Conference on Neural Information Processing Systems (NIPS ’17), Long Beach, CA, USA, 4–9 December 2017; Curran Associates Inc.: Red Hook, NY, USA; pp. 4151–4161.

13. Kullback, S.; Leibler, R.A. On Information and Sufficiency. Ann. Math. Stat. 1951, 22, 79–86.

14. Zhang, K.; Zuo, W.; Zhang, L. FFDNet: Toward a Fast and Flexible Solution for CNN-Based Image Denoising. IEEE Trans. Image Process. 2018, 27, 4608–4622.

15. Fan, L.; Zhang, F.; Fan, H.; Zhang, C. Brief Review of Image Denoising Techniques. Vis. Comput. Ind. Biomed. Art 2019, 2, 7.

16. Ioffe, S.; Szegedy, C. Batch Normalization: Accelerating Deep Network Training by Reducing Internal Covariate Shift. In Proceedings of the 32nd International Conference on Machine Learning (ICML ’15), Lille, France, 6–11 July 2015; pp. 448–456.

17. Sun, S.; Cao, Z.; Zhu, H.; Zhao, J. A Survey of Optimization Methods From a Machine Learning Perspective. IEEE Trans. Cybern. 2020, 50, 3668–3681.

18. Duchi, J.; Hazan, E.; Singer, Y. Adaptive Subgradient Methods for Online Learning and Stochastic Optimization. J. Mach. Learn. Res. 2011, 12, 2121–2159.

19. Tieleman, T.; Hinton, G. Lecture 6.5—RMSProp, COURSERA: Neural Networks for Machine Learning; University of Toronto: Toronto, ON, Canada, 2012.

20. Wang, T.; Kerschbaum, F. Attacks on Digital Watermarks for Deep Neural Networks. In Proceedings of the 2019 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP ’19), Brighton, UK, 12–17 May 2019; pp. 2622–2626.

21. Burden, R.L.; Faires, J.D. Numerical Analysis, 9th ed.; Brooks/Cole: Boston, MA, USA, 2010.

22. Geyer, C.J. Practical Markov Chain Monte Carlo. Stat. Sci. 1992, 7, 493–497.

23. Cachin, C. An Information-Theoretic Model for Steganography. In Information Hiding; Lecture Notes in Computer Science; Aucsmith, D., Ed.; Springer: Berlin/Heidelberg, Germany, 1998; Volume 1525.

24. Comesaña, P. Detection and information theoretic measures for quantifying the distinguishability between multimedia operator chains. In Proceedings of the IEEE Workshop on Information Forensics and Security (WIFS12), Tenerife, Spain, 2–5 December 2012.

25. Barni, M.; Tondi, B. The Source Identification Game: An Information-Theoretic Perspective. IEEE Trans. Inf. Forensics Secur. 2013, 8, 450–463.

26. Tassano, M.; Delon, J.; Veit, T. An Analysis and Implementation of the FFDNet Image Denoising Method. Image Process. Line 2019, 9, 1–25.

27. Ma, K.; Duanmu, Z.; Wu, Q.; Wang, Z.; Yong, H.; Li, H.; Zhang, L. Waterloo Exploration Database: New Challenges for Image Quality Assessment Models. IEEE Trans. Image Process. 2017, 26, 1004–1016.

28. Franzen, R. Kodak Lossless True Color Image Suite. 1999. Available online: http://r0k.us/graphics/kodak (accessed on 22 September 2020).

29. Martin, D.; Fowlkes, C.; Tal, D.; Malik, J. A Database of Human Segmented Natural Images and its Application to Evaluating Segmentation Algorithms and Measuring Ecological Statistics. In Proceedings of the 8th Int’l Conf. Computer Vision (ICCV 2001), Vancouver, BC, Canada, 7–14 July 2001; pp. 416–423.

30. Wilson, S.G. Digital Modulation and Coding; Pearson: London, UK, 1995.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).