1. Introduction

Causality, the relation between cause and effect, is a complex topic discussed from many perspectives, ranging from everyday life through philosophy to mathematics and physics.

In the last few decades, new causal methods have been continually designed, and at the same time there has been an ongoing debate over whether the relationships found using the individual methods are actually causal. The interested reader can learn more from the book by Pearl and Mackenzie [1]. As the authors emphasize, several operational methods exist for discovering potential causal relations. However, regardless of the language used, these should be followed by reasoning through algorithms of inductive causation, which determine the connections that can be inferred from empirical observations even in the presence of latent variables. This concept reveals the limits of what causal analysis can learn from observational studies. It also represents a more general strategy that encompasses the approaches used so far. Nevertheless, developing successful techniques, such as time series causality tools, remains useful. These tools help to identify potential causal links that can later be verified by means such as Pearl’s model.

In this study, we present one such tool. It is applicable in a special case: when we ask about the causal relationship between dynamical systems.

We restrict ourselves to the study of causality in situations where processes are represented by time series. We follow the concept introduced by Clive Granger in 1969. Under this concept, we respect that an effect cannot occur before its cause, and we say that x causes y if information on the recent past of the time series x helps improve the prediction of y [2].

A comparison of several causal methods in [3] has shown that the Granger causality test works well for autoregressive models but produces false positives for time series coming from coupled dynamical systems. Some more recent information-theoretic methods, such as conditional mutual information or transfer entropy [4,5], are usually more successful with data from dynamical systems. Methods that work in reconstructed state spaces may also be used successfully. These include the method of predictability improvement. It determines whether a prediction of an observable from system Y, made in a reconstructed state space, improves when an observable from system X is included in the reconstruction. If the predictability improves, we hypothesize that X causes Y [3]. This is analogous to the idea of Granger causality in the case of autoregressive processes.
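The sketch below makes the prediction-improvement idea concrete. It compares the one-step residual variance of a linear autoregressive model of y with and without the past of x. This is a minimal illustration under our own assumptions: linear models, a fixed lag order p, ordinary least-squares fits, and hypothetical function names. It is neither the formal Granger test of [2] nor the state-space predictability method of [3].

```python
import numpy as np


def lagged_design(series_list, p):
    """Design matrix holding lags 1..p of every series plus an intercept."""
    n = len(series_list[0])
    cols = []
    for s in series_list:
        for lag in range(1, p + 1):
            cols.append(s[p - lag:n - lag])  # value s[t - lag] for t = p..n-1
    cols.append(np.ones(n - p))
    return np.column_stack(cols)


def residual_variance(target, regressors, p):
    """Variance of one-step residuals of a least-squares autoregressive fit."""
    design = lagged_design(regressors, p)
    y = target[p:]
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return np.var(y - design @ coef)


def prediction_improvement(x, y, p=2):
    """Ratio of restricted to full residual variance when predicting y.

    Values clearly above 1 mean that the past of x helps to predict y
    (x 'Granger-causes' y in this simplified, linear sense).
    """
    restricted = residual_variance(y, [y], p)
    full = residual_variance(y, [y, x], p)
    return restricted / full


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    n = 5000
    x = np.zeros(n)
    y = np.zeros(n)
    for t in range(1, n):  # x drives y, but y does not drive x
        x[t] = 0.5 * x[t - 1] + rng.standard_normal()
        y[t] = 0.5 * y[t - 1] + 0.4 * x[t - 1] + rng.standard_normal()
    print(prediction_improvement(x, y), prediction_improvement(y, x))
```

In this linear example, the first ratio is clearly above 1 and the second is close to 1. As noted above, such linear tests are known to mislead on data from coupled chaotic systems.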

However, Cummins et al. have introduced a comprehensive theory showing that our ability to uncover causal links in coupled dynamical systems is limited. The best we can hope for is to find the strongly connected components of the graph (sets of mutually reachable vertices) [6]. These components represent distinct subsystems coupled through one-way driving links. We cannot identify self-loops, and we cannot distinguish between direct driving, indirect driving, a correlate of direct driving, and a correlate of indirect driving.

In this study, we would like to draw attention to an interesting new way to reveal detectable causal relationships in dynamical systems. The method makes use of the fact that the driver has lower complexity and fewer degrees of freedom than the driven system, which contains information about the driving dynamics. To quantify the complexity, the so-called correlation dimension will be used.

The use of the correlation dimension in the context of causality detection was proposed by Janjarasjitt and Loparo in 2008 [7]. Their study built on the following fact. If the subsystems X and Y are independent, then the number of active degrees of freedom of the combined system equals the sum of the active degrees of freedom of the subsystems. On the other hand, if X and Y are coupled, the number of active degrees of freedom of the combination is expected to be reduced.

The authors introduced an index named the dynamic complexity coherence measure. It is defined as the ratio of the sum of the correlation dimensions of the individual subsystems to the correlation dimension of the combination of the subsystems. They showed that the index is useful for quantifying the degree of coupling because it increases with intensifying coupling strength. The index was designed to detect the presence of coupling but not to determine the direction of the link. Regarding the direction, the authors suggested using the variability of the correlation dimension, which is supposed to be greater for the response system than for the driving system alone.
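The index can be written out as follows. The notation is our own and not necessarily that of [7]: D_2(·) denotes the correlation dimension, and ρ is the index. The bounds assume that the joint dimension lies between the larger individual dimension and the sum of the two.

```latex
\rho = \frac{D_2(X) + D_2(Y)}{D_2(X,Y)}, \qquad
\max\{D_2(X), D_2(Y)\} \le D_2(X,Y) \le D_2(X) + D_2(Y)
\;\Longrightarrow\;
1 \le \rho \le \frac{D_2(X) + D_2(Y)}{\max\{D_2(X), D_2(Y)\}} \le 2 .
```

For independent subsystems, D_2(X,Y) = D_2(X) + D_2(Y), so ρ = 1. For identically synchronized subsystems with D_2(X) = D_2(Y) = D_2(X,Y), ρ = 2.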

However, we showed in 2013 that not just the presence but also the direction of the link is simply identifiable from correlation dimension estimates [8]. That study demonstrated that the correlation dimensions estimated for a unidirectionally driven system and for the combined state portrait are equal. Both are also significantly higher than the correlation dimension of the driving system. Bidirectional causality and synchronized dynamics, on the other hand, are characterized by the equality of all three evaluated dimensions.
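These decision rules can be collected into a small classifier, together with the independent and hidden-driver cases examined in the Results. This is a sketch under our own assumptions. The tolerance `tol` for treating two estimates as equal is a hypothetical parameter, and in practice it should reflect the uncertainty of the dimension estimates. The function name is also hypothetical.

```python
def infer_relation(d_x, d_y, d_xy, tol=0.1):
    """Map correlation dimension estimates of X, Y and the combined state
    portrait (X, Y) to one of the causal patterns described in the text.

    `tol` (hypothetical default) is the tolerance for calling two
    estimates equal.
    """
    def close(a, b):
        return abs(a - b) <= tol

    # All three equal: bidirectional coupling or synchronized dynamics.
    if close(d_x, d_y) and close(d_x, d_xy) and close(d_y, d_xy):
        return "bidirectional coupling or synchronization"
    # Joint dimension equals the sum: each system keeps its own freedom.
    if close(d_xy, d_x + d_y):
        return "independent"
    # Driven system and combined portrait equal, driver lower.
    if close(d_y, d_xy) and d_x < d_y - tol:
        return "X drives Y"
    if close(d_x, d_xy) and d_y < d_x - tol:
        return "Y drives X"
    # Joint dimension strictly between the larger dimension and the sum.
    if max(d_x, d_y) + tol < d_xy < d_x + d_y - tol:
        return "hidden common driver, no direct link"
    return "undetermined"


if __name__ == "__main__":
    print(infer_relation(1.2, 2.0, 2.0))
```

The order of the checks matters. The equality of all three dimensions is tested first, so synchronized systems are not mistaken for unidirectional driving.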

Finally, in a recent study [9], the authors pointed out that even a hidden common driver of the systems X and Y can be detected through estimates of fractal dimensions (they used the information dimension). It must always be emphasized, however, that this is only possible in cases where X and Y are not interconnected.

In this study, the topic of using the fractal dimension for causality detection is revisited. Our goal is to systematically specify the detectable types of causal relations, test the correlation-dimension-based method for different levels of coupling strength, and highlight the potential of the methodology.

In the following section, we focus on the choice of the so-called embedding parameters for the reconstruction of the examined dynamics, as the quality of the reconstruction is essential for the accuracy of dimension estimation. Then the correlation-dimension-based method of causality detection is explained. Finally, test examples of different causal relationships are given and the results are presented. The sensitivity of the method to the strength of the links is also discussed. We also briefly comment on the correlation-dimension-based approach in connection with the current topic of causal analysis of time-reversed measurements.

3. Results

The correlation-dimension-based causality detection applies to any pair of time series that originate from dynamical systems and are long and clean enough to allow reasonably accurate estimates of the correlation dimensions of the systems.
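One frequently quoted rule of thumb for what "long enough" means is due to Eckmann and Ruelle, who are not among the works cited here. It states that dimension estimates from N points become unreliable above roughly 2 log10 N. The helpers below simply evaluate this bound, and their names are hypothetical. The bound is a necessary condition only and says nothing about noise.

```python
import math


def max_reliable_dimension(n_points):
    """Rule of thumb: dimension estimates above 2*log10(N) are unreliable."""
    if n_points < 1:
        raise ValueError("need at least one point")
    return 2.0 * math.log10(n_points)


def min_points_for(dimension):
    """Smallest N for which 2*log10(N) reaches the given dimension."""
    return math.ceil(10.0 ** (dimension / 2.0))


if __name__ == "__main__":
    # The combined state portrait usually has the largest of the three
    # dimensions, so it sets the length requirement for the whole test.
    for d in (2.0, 4.0, 6.0):
        print(d, min_points_for(d))
```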

To demonstrate the ability of the correlation dimension to detect causal relations, we used selected pairs of time series produced by the well-known chaotic Hénon maps.

First of all, we reconstructed the state portraits from the time series. To do so, we evaluated a suitable criterion (the number of false nearest neighbors or the predictability) for several combinations of the embedding dimension m and the time delay. We then selected the combination that led to the best result (the minimum number of false neighbors or the lowest prediction error) [15].
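A minimal sketch of the false-nearest-neighbor criterion for choosing the embedding dimension m at a fixed time delay tau follows. Several details are our assumptions: the brute-force neighbor search, the Euclidean norm, the threshold `r_tol = 10`, and the function names. The variant used in [15] may differ.

```python
import numpy as np


def delay_embed(s, m, tau):
    """Rows are delay vectors (s[t], s[t + tau], ..., s[t + (m - 1) * tau])."""
    s = np.asarray(s, dtype=float)
    n = len(s) - (m - 1) * tau
    if n <= 0:
        raise ValueError("series too short for this (m, tau)")
    return np.column_stack([s[i * tau:i * tau + n] for i in range(m)])


def false_neighbor_fraction(s, m, tau, r_tol=10.0):
    """Fraction of nearest neighbors in dimension m that fly apart when
    the (m + 1)-th delay coordinate is added."""
    emb_next = delay_embed(s, m + 1, tau)
    emb = emb_next[:, :m]  # m-dimensional vectors at the same time indices
    diff = emb[:, None, :] - emb[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))  # O(N^2) memory: keep N modest
    np.fill_diagonal(dist, np.inf)  # a point is not its own neighbor
    nn = dist.argmin(axis=1)
    d_m = dist[np.arange(len(emb)), nn]
    extra = np.abs(emb_next[:, m] - emb_next[nn, m])
    ok = d_m > 0  # skip exact duplicates
    return float(np.mean(extra[ok] / d_m[ok] > r_tol))
```

In use, one increases m at a given delay and keeps the smallest m for which the fraction drops close to zero.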

Then we estimated the correlation dimensions of the reconstructed state portraits of X, of Y, and of the combined system. From these estimates, we derived the corresponding causal relation between X and Y.
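The estimator is not restated at this point. A standard choice is the Grassberger-Procaccia correlation sum C(r), whose log-log slope over a scaling range gives the correlation dimension. The sketch below assumes that estimator, a user-chosen scaling range [r_min, r_max], and an optional Theiler window that excludes temporally close pairs. These choices and the function names are ours.

```python
import numpy as np


def correlation_sum(points, radii, theiler=0):
    """Grassberger-Procaccia C(r): fraction of point pairs closer than r.

    Pairs whose time indices differ by <= `theiler` are excluded."""
    pts = np.asarray(points, dtype=float)
    i, j = np.triu_indices(len(pts), k=theiler + 1)
    dists = np.sort(np.linalg.norm(pts[i] - pts[j], axis=1))
    # searchsorted with side="left" counts the pairs with distance < r
    return np.searchsorted(dists, radii, side="left") / len(dists)


def estimate_d2(points, r_min, r_max, n_radii=12, theiler=0):
    """Slope of log C(r) versus log r over the assumed scaling range."""
    radii = np.logspace(np.log10(r_min), np.log10(r_max), n_radii)
    c = correlation_sum(points, radii, theiler)
    keep = c > 0  # log is undefined where no pairs are found
    slope, _intercept = np.polyfit(np.log(radii[keep]), np.log(c[keep]), 1)
    return float(slope)
```

On simple test sets, the slope should be close to 2 for points spread uniformly over a square and close to 1 for points on a line segment, up to edge effects. For attractors, the scaling range has to be chosen from the plateau of local slopes.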

This section presents the individual test examples, the outputs obtained for each test case, and the visualization of the results.

3.1. X Drives Y

Equation (1) represents our first test example: unidirectional driving of system Y by X. The first two lines correspond to the driving Hénon map X, and the following two equations describe the response Y:

C controls the strength of the coupling, with C = 0 for uncoupled systems. Plots of conditional Lyapunov exponents in [19], like the correlation dimension estimates in [18], show that synchronization takes place at about .
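The equation itself is not reproduced here. The generator below therefore uses a form of unidirectionally coupled Hénon maps that is common in the causality literature, and we assume, without being able to confirm it from the text, that it corresponds to Equation (1):

- x1' = 1.4 - x1^2 + 0.3 x2
- x2' = x1
- y1' = 1.4 - (C x1 + (1 - C) y1) y1 + 0.3 y2
- y2' = y1

The default starting points and the function name are hypothetical. The first 1000 iterations are discarded, as in the experiments described in this section.

```python
import numpy as np


def coupled_henon(c, n, x0=(0.7, 0.0), y0=(0.0, 0.9), discard=1000):
    """Iterate the ASSUMED coupled Henon system and return the x1, y1 series.

    X (x1, x2) drives Y (y1, y2) with coupling strength c; c = 0 leaves
    the two maps uncoupled.
    """
    x1, x2 = x0
    y1, y2 = y0
    xs = np.empty(n)
    ys = np.empty(n)
    for t in range(discard + n):
        x1_new = 1.4 - x1 * x1 + 0.3 * x2
        y1_new = 1.4 - (c * x1 + (1.0 - c) * y1) * y1 + 0.3 * y2
        x1, x2 = x1_new, x1
        y1, y2 = y1_new, y1
        if t >= discard:  # keep only post-transient values
            xs[t - discard] = x1
            ys[t - discard] = y1
    return xs, ys
```

With c = 0 and identical starting points, the two series coincide exactly. With different starting points, they are independent, which matches the C = 0 cases discussed in this section.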

Before we start with a causal analysis of this example, let us recall some of the limits we encounter here. The so-called interaction graph (see the left part of Figure 1) is easy to read from Equation (1). It shows how X and Y are coupled through a one-way driving relationship between a variable of X and a variable of Y. In an interaction graph, the nodes representing the variables are connected by directed edges whenever one variable directly drives another.

Now imagine that we have the time evolution of all four variables, but we do not know how they are linked. According to Cummins et al. [6], complete pairwise causal testing should reveal all five links in the left graph of Figure 1. Because we cannot distinguish between direct and indirect driving, we would also see additional links from variables of X to variables of Y that arise only through indirect driving (see the right graph of Figure 1). In summary, we would correctly find three things: the two variables of X form one subsystem, the two variables of Y form the second subsystem, and X drives Y. However, the exact position of the direct driving link cannot be determined.
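This reasoning can be checked mechanically on a small graph. For illustration only, the sketch makes three assumptions. The direct coupling runs from a variable x1 of X to a variable y1 of Y. The links within each system go both ways (x1 and x2, y1 and y2). Self-loops are dropped because they cannot be detected. These node names and edges are hypothetical stand-ins for Figure 1. The code lists the pairs that pairwise testing would flag (direct or indirect paths) and the strongly connected components.

```python
from itertools import product


def reachability(nodes, edges):
    """Map each node to the set of nodes reachable from it along >= 1 edge."""
    successors = {v: set() for v in nodes}
    for a, b in edges:
        successors[a].add(b)
    reach = {}
    for v in nodes:
        seen, stack = set(), list(successors[v])
        while stack:  # iterative depth-first search
            w = stack.pop()
            if w not in seen:
                seen.add(w)
                stack.extend(successors[w])
        reach[v] = seen
    return reach


def strongly_connected_components(nodes, edges):
    """Sets of mutually reachable vertices (each node lands in exactly one)."""
    reach = reachability(nodes, edges)
    components = []
    for v in nodes:
        comp = frozenset({v} | {w for w in reach[v] if v in reach[w]})
        if comp not in components:
            components.append(comp)
    return components


def detectable_links(nodes, edges):
    """Ordered pairs (a, b), a != b, joined by a direct or indirect path."""
    reach = reachability(nodes, edges)
    return {(a, b) for a, b in product(nodes, repeat=2)
            if a != b and b in reach[a]}


# Hypothetical labels: x1, x2 belong to X; y1, y2 to Y; self-loops omitted.
NODES = ["x1", "x2", "y1", "y2"]
EDGES = [("x1", "x2"), ("x2", "x1"), ("y1", "y2"), ("y2", "y1"), ("x1", "y1")]

if __name__ == "__main__":
    print(strongly_connected_components(NODES, EDGES))
    print(sorted(detectable_links(NODES, EDGES) - set(EDGES)))  # indirect only
```

Under these assumptions, the five direct edges produce two components, {x1, x2} and {y1, y2}. They also produce three extra indirect links, all pointing from X to Y.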

The test example given by Equation (1) has recently been analyzed for increasing coupling strengths with six different causal methods [3]. The methods included the Granger VAR test, the extended Granger test, the kernel Granger test, cross-mapping techniques, conditional mutual information, and assessment of the predictability improvement. Detailed results can be found in the extensive supplementary material of the article [3]. Among other things, the study showed that the Granger test is not applicable to data from dynamical systems. It also showed that even some popular methods that are supposedly suitable for the analysis of dynamical systems have extremely low specificity: they produce a large number of false detections of causality.

To test for the presence of unidirectional driving by the

-based method we used time series

and

generated by Equation (

1). First, we used

, which is well below the synchronization value. The starting point was

. The first 1000 data points were discarded and the next

were saved and used for

estimations. We started with the reconstruction of

and

using embedding parameters

,

,

, and

. Then we estimated

for

and got a value of about

. The estimate of the correlation dimension for

, as well as the estimate for

resulted in a value of about 2. Such an outcome correctly indicates that system

X causes system

Y. This test example is presented in the first row of

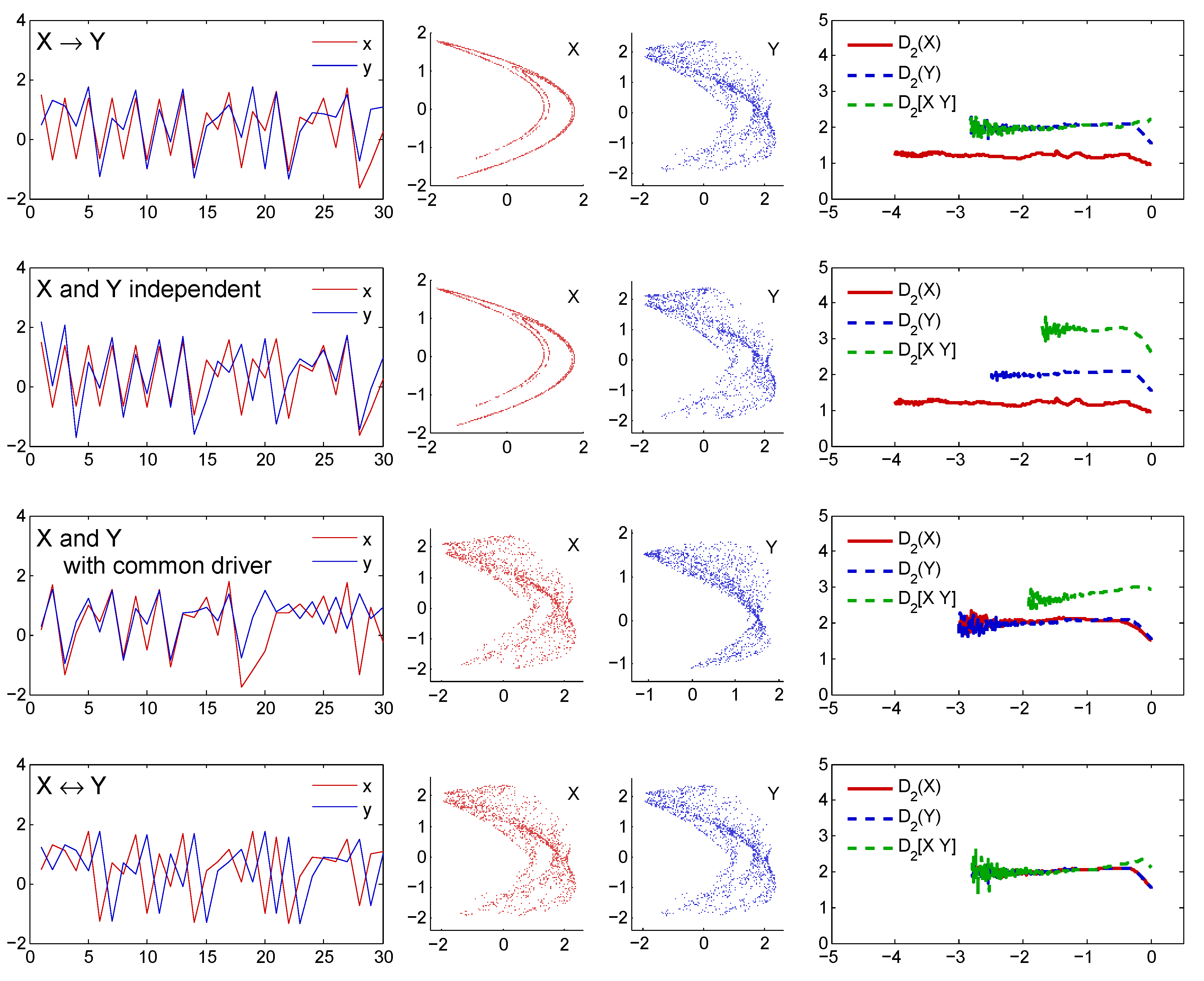

Figure 2.

Note, however, that we could identify the one-way causal link equally well for any other coupling value below the synchronization threshold. This is evident from Figure 3, which shows the correlation dimension estimates for increasing coupling strength C (see Equation (1)). A correlation dimension of about was estimated for the driving system X. Markedly higher values were obtained for the response Y and for the combined state portrait.

Figure 3 also clearly reveals the onset of synchronization. At a coupling of about , the estimated correlation dimensions drop to the level given by the driving system. For the coupling value C = 0, the result depends on the starting points of the two time series. Because we started here from two different points, we obtained two independent time series. As a result, the correlation dimension of the combined state portrait equals the sum of the dimensions of X and Y. If the maps X and Y were started from the same point, we would obtain two identical time series, and all three correlation dimensions would be equal. The same applies to couplings above the synchronization threshold, that is, after identical synchronization, when the time series are no longer distinguishable.

Note also that it does not matter whether the data come from maps or from continuous dynamical systems. We used observables from Hénon maps here, but we could equally well have demonstrated the effectiveness of the method on data from flow systems. In [18], six different examples of linked Rössler and Lorenz systems were presented, together with graphs of correlation dimension values for increasing coupling strength. These graphs suggest that testing these examples would lead to results as clear as those presented here.

3.2. X and Y Are Independent

As the second test example, we took observables of Hénon maps (Equation (1) with C = 0), generated with different starting points to obtain two independent time series. We determined , , , and as the suitable embedding parameters for reconstructing the manifolds of X and Y. The correlation dimension of the first manifold, computed for data points, was found to be about . The estimate from the other, more complex manifold was about . Then we concatenated the two sets of delay vectors to combine the state vectors of both manifolds. The two dynamics were independent, each with its own degrees of freedom. For the dimension of the combined manifold, we obtained an estimate of about , equal to the sum of the individual dimensions. This test case is shown in the second row of Figure 2.
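To concatenate the state vectors, delay vectors of x and y that start at the same time index must be paired. Below is a minimal sketch. It assumes that each series is embedded with its own (m, tau) and that the longer embedding is truncated to the common length. The function names are hypothetical.

```python
import numpy as np


def delay_embed(s, m, tau):
    """Rows are delay vectors (s[t], s[t + tau], ..., s[t + (m - 1) * tau])."""
    s = np.asarray(s, dtype=float)
    n = len(s) - (m - 1) * tau
    return np.column_stack([s[i * tau:i * tau + n] for i in range(m)])


def joint_embedding(x, y, m_x, tau_x, m_y, tau_y):
    """Concatenate delay vectors of x and y that begin at the same time t.

    The result has m_x + m_y columns, e.g. 4 + 4 = 8 for the joint
    state spaces mentioned in the text."""
    ex = delay_embed(x, m_x, tau_x)
    ey = delay_embed(y, m_y, tau_y)
    n = min(len(ex), len(ey))  # keep only time indices valid for both
    return np.hstack([ex[:n], ey[:n]])
```

The correlation dimension of this joint point set is then compared with the dimensions of the individual embeddings. For independent systems, as in this example, it should approach their sum.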

3.3. Uncoupled X and Y with a Hidden Common Driver

In the next example, we used two different Hénon maps to generate the time series of two mutually uncoupled systems that are unidirectionally driven by a hidden common driver. The systems X and Y differ in only one parameter, which is set to for the X case and to for the Y case. The first two lines correspond to the system Z, which drives both X and Y, while the systems X and Y are independent of each other (see also Figure 4 for the interaction graph):

As in the second example, the systems are uncoupled. Even so, the signals of X and Y might seem correlated or causally linked because they are controlled by a common hidden driver. We determined , , , and as the suitable embedding parameters for reconstructing the manifolds of X and Y. Correlation dimensions computed for data points were found to be about 2 and for X and Y, respectively. Then we combined the state vectors of both manifolds into an 8-dimensional state space. The two dynamics were partly generated by a common driver. Therefore, as expected, the estimated joint dimension of about was higher than the individual dimensions but less than their sum. This example, for the hidden system driving with a strength of , is presented in the third row of Figure 2.

Figure 5, on the other hand, illustrates how sensitive the causality detection is to the driving strength of the hidden common driver. For this purpose, we generated and saved time series of X and Y, driven by Z, using coupling strength values C from 0 to 1 with a step of . In each case, the first 1000 data points were discarded, and the next were saved and used for correlation dimension estimation. For zero coupling (see Equation (1)), we have two unrelated systems of Hénon type: X with an attractor complexity of about and Y with an attractor complexity of about . For increasing coupling strength, the correlation dimension estimates indicate the presence of a hidden common driver until C reaches the synchronization threshold at about . For higher couplings, X is identically synchronized with the hidden driver Z, while Y remains driven but not synchronized [18]. Because X and Z become indistinguishable, the relations between the estimated dimensions suggest a unidirectional link from X to Y. These relations are a lower dimension for X and equal dimensions for Y and the combined system.

3.4. X and Y Are Bidirectionally Coupled

As an example of bidirectional coupling, we used variables of X and Y generated by Equation (1), with and a starting point of . Let us denote and . First of all, we reconstructed the state portraits. Both were reconstructed with embedding dimension 4 and delay , so the joint state space was 8-dimensional. If X and Y interact bidirectionally, then, in theory, the cyclic flow of information ensures that any x or y variable contains information about the dynamics of both systems. Exactly in line with our expectations, all three dimension estimates reached the same value. See the shared plateau at about 2 in the last graph of the bottom row of Figure 2.

4. Discussion

In this study, we faced an interesting problem of causality detection in cases where the valid working hypothesis is that the investigated long time series x and y are manifestations of some dynamical systems X and Y, respectively.

If we analyzed autoregressive processes, we could use the Granger method as a tool for causal analysis. For dynamical systems, however, we must look for different approaches. Differences between the causal analysis of dynamical systems and that of autoregressive models have also emerged in connection with the first principle of Granger causality, namely that the cause precedes the effect. Based on this principle, one expects the detected direction of causality to reverse when causally linked time series and their time reversals are analyzed. It might even seem a good idea to use this reversal test routinely to confirm the conclusion about the direction of the causal link. Indeed, this works for autoregressive processes. However, Paluš et al. pointed out that the expected change in the direction of causality did not occur after time reversal of the tested chaotic signals [20]. The authors suggested that this paradox is probably related to the dynamic memory of the systems. Among other things, the lesson is that for data from dynamical systems, we should not try to confirm the direction of the causal link by analyzing the time reversals.

Can correlation dimensions somehow contribute to this debate? As a geometrical characteristic, the correlation dimension is the same regardless of whether the points of the attractor are taken forward or backward in time. We only know that, unless the systems are synchronized, the dimension of the driving dynamical system (cause) is lower than the dimension of the driven dynamical system (effect). Therefore, if the causal link is unidirectional, the direction of the coupling can only go from the system with the lower correlation dimension to the system with the higher one.
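The time-reversal invariance can be verified directly. Reversing a series reverses the order of the delay vectors and the order of coordinates within each vector. Neither change alters the Euclidean distances between pairs of points, so the correlation sum, and hence the dimension estimate, is unchanged. The sketch below assumes the Grassberger-Procaccia correlation sum and a rescaled form of the Hénon map as test data. The function names are hypothetical.

```python
import numpy as np


def delay_embed(s, m, tau):
    """Rows are delay vectors (s[t], s[t + tau], ..., s[t + (m - 1) * tau])."""
    s = np.asarray(s, dtype=float)
    n = len(s) - (m - 1) * tau
    return np.column_stack([s[i * tau:i * tau + n] for i in range(m)])


def correlation_sum(points, r):
    """Fraction of distinct point pairs closer than r."""
    i, j = np.triu_indices(len(points), k=1)
    d = np.linalg.norm(points[i] - points[j], axis=1)
    return float(np.mean(d < r))


def henon_series(n, a0=0.1, b0=0.0, discard=1000):
    """Observable of the Henon map written as s' = 1.4 - s^2 + 0.3 s_prev."""
    a, b = a0, b0
    out = np.empty(n)
    for t in range(discard + n):
        a, b = 1.4 - a * a + 0.3 * b, a
        if t >= discard:
            out[t - discard] = a
    return out


if __name__ == "__main__":
    s = henon_series(1000)
    for r in (0.05, 0.1, 0.2):
        fwd = correlation_sum(delay_embed(s, 2, 1), r)
        bwd = correlation_sum(delay_embed(s[::-1], 2, 1), r)
        print(r, fwd, bwd)  # forward and time-reversed sums coincide
```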

To conclude, we consider the use of the correlation dimension in causal analysis to be a very promising approach, with a wide range of potential application areas. Much real-world data can be modeled by dynamical systems, usually through differential equations or discrete-time difference equations. The best-known examples include planetary motion, climate models, electric circuits, ecosystem and population dynamics, cardio-respiratory interactions, and other biomedical applications. The investigated time series must meet two requirements only. They must be generated by dynamics that can be modeled by dynamical systems. They must also be long and clean enough to allow the correlation dimensions of the reconstructed systems to be estimated.

However, many open questions remain. For example, the impact of noise needs to be examined. It is known that for noisy data the plateau in the correlation dimension estimation is lifted and thus hardly evaluable. In causality analysis, however, the correlation dimension is used for relative comparisons. In that case, if we expect noise to affect each time series evenly, meaningful results might still be obtained. We also need to be careful when the signals being investigated belong to the so-called 1/f processes. In such cases, correlation dimension estimates can easily be misinterpreted as a sign of low-dimensional dynamics [21]. This is one of the topics we would like to focus on soon, because 1/f noise seems to be a ubiquitous feature of data from various areas. These include solids, condensed matter, electronic devices, music, the economy, and heart or brain signals.

Moving from pairwise causality detection to a multivariate approach is another issue that would be worth considering in future theoretical or computational studies.

What we know for now is that the correlation-dimension-based method offers clear advantages in the causal analysis of dynamical systems. If sufficiently long time series are available, the detection of causal relations is straightforward, fast, and reliable. It will be interesting to explore the possibilities and limitations of the method in situations where deterministic dynamics do not play a dominant role in the data.