1. Introduction

Action potentials are the key carriers of information in the brain. The arrival of an action potential at a synapse opens calcium channels in the presynaptic site, which leads to the release of vesicles filled with neurotransmitters [1]. In turn, the released neurotransmitters activate post-synaptic receptors, thereby leading to a change in the post-synaptic potential.

This process of release, however, is stochastic. The release probability is affected by the level of intracellular calcium and the size of the readily releasable pool of vesicles [2,3]. Moreover, the release of a vesicle is not necessarily synchronized with the spiking process; a synapse may release asynchronously tens of milliseconds after the arrival of an action potential [4], or sometimes even spontaneously [5].

The release properties of a synapse also change on different time scales. The successive release of vesicles can deplete the pool of vesicles, thereby depressing the synapse. On the other hand, a sequence of action potentials with short inter-spike intervals can “prime” the release mechanism and increase the release probability, inducing short-term facilitation [6].

Several studies have addressed the modulatory role of short-term depression on synaptic information transmission [7,8,9]. In contrast, the information rate of a facilitating synapse is not yet fully understood, though it has been suggested that short-term facilitation temporally filters the incoming spike train [10].

To study the impact of short-term facilitation on synaptic information efficacy, we employ a binary asymmetric channel with two states. The model synapse switches between a baseline state and facilitated state based on the history of the input. Each state has distinct release probabilities, both for synchronous and asynchronous release. We derive a lower bound and an upper bound for the mutual information rate of such a facilitating synapse and assess the functional role of short-term facilitation on the synaptic information efficacy.

Short-term facilitation increases the release probability and consequently raises the metabolic energy consumption of the synapse [11]. We calculate the rate of information transmission per unit of energy to evaluate the compromises that a facilitating synapse makes to balance energy consumption and information transmission.

2. Synapse Model and Information Bounds

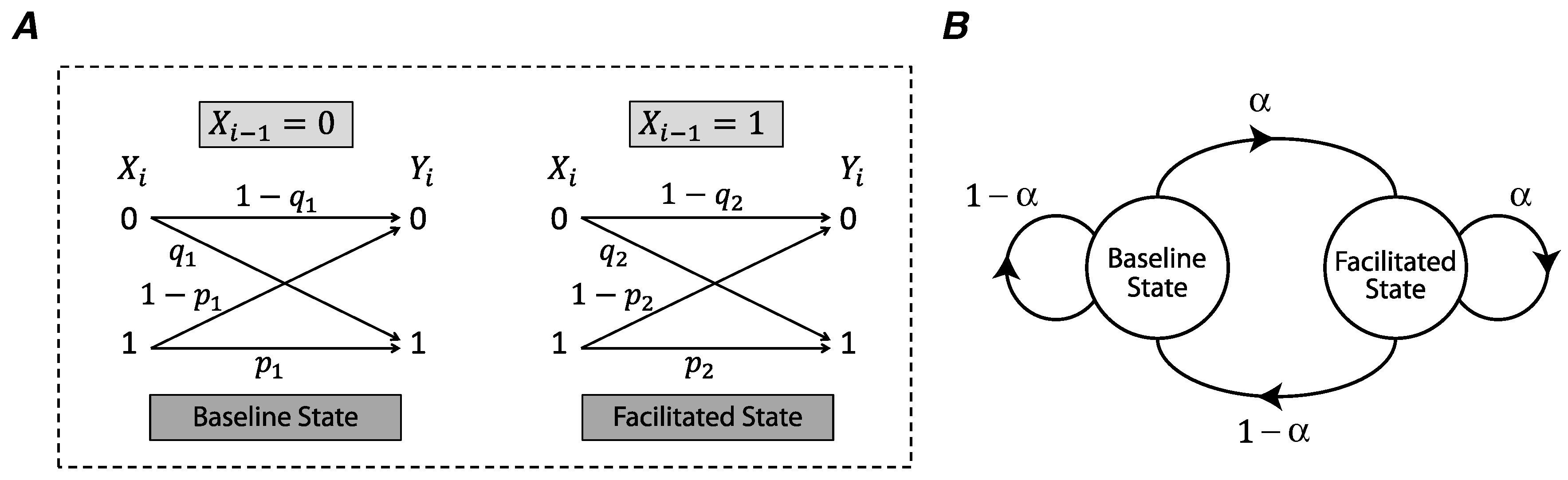

We use a binary asymmetric channel to model the stochasticity of release in a synapse (Figure 1A) [12]. The input of the model is the presynaptic spike process $X = \{X_i\}$, where $X_i$ is a binary random variable corresponding to the presence ($X_i = 1$) or absence ($X_i = 0$) of a spike at time $i$. We assume that $X$ is an i.i.d. random process, and $X_i$ is a Bernoulli random variable with a fixed spike probability $P(X_i = 1)$. The output process of the channel, $Y = \{Y_i\}$, represents a release ($Y_i = 1$) or lack of release ($Y_i = 0$) at time $i$. The synchronous spike-evoked release probability is characterized as $P(Y_i = 1 \mid X_i = 1)$, and the asynchronous release probability as $P(Y_i = 1 \mid X_i = 0)$.

In short-term synaptic facilitation, a presynaptic input spike facilitates the synaptic release for the next spike. We model this phenomenon as a binary asymmetric channel whose state is determined by the previous input of the channel (Figure 1A). In the absence of a presynaptic spike at the previous time step ($X_{i-1} = 0$), the channel is in the baseline state and the probabilities of synchronous spike-evoked and asynchronous release are

and

. If a presynaptic spike occurs at time $i-1$, i.e., $X_{i-1} = 1$, the state of the channel is switched to the facilitated state and the synchronous and asynchronous release probabilities are increased to

and

as follows,

Here,

u and

v are facilitation coefficients of synchronous and asynchronous release probabilities (

), and

and

are the maximum release probabilities of these two modes of release. A Markov chain describes the transitions between the baseline state and the facilitated state, and the transition probabilities correspond to the presence or absence of an action potential in the presynaptic neuron (Figure 1B).
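As an illustration, the two-state channel described above can be simulated directly. The following is a minimal sketch rather than the authors' implementation: the names `alpha` (spike probability per time bin), `p_base`, `q_base`, `p_max`, and `q_max` are hypothetical, and the rule that moves a baseline probability a fraction u (or v) of the way toward its maximum is an assumed form of the facilitation update.

```python
import random


def facilitated(base, coeff, maximum):
    """Assumed update rule: move `base` a fraction `coeff` of the way to `maximum`."""
    return base + coeff * (maximum - base)


def simulate_synapse(n_steps, alpha, p_base, q_base, u, v,
                     p_max=1.0, q_max=1.0, seed=0):
    """Simulate spikes X_i and releases Y_i of the two-state channel.

    The channel is in the facilitated state at time i if and only if
    a spike occurred at time i-1; otherwise it is in the baseline state.
    """
    rng = random.Random(seed)
    p_fac = facilitated(p_base, u, p_max)  # synchronous release, facilitated
    q_fac = facilitated(q_base, v, q_max)  # asynchronous release, facilitated

    spikes, releases = [], []
    prev_spike = 0  # assume the synapse starts in the baseline state
    for _ in range(n_steps):
        spike = 1 if rng.random() < alpha else 0
        # The previous input selects the state, hence the pair (p, q).
        p, q = (p_fac, q_fac) if prev_spike else (p_base, q_base)
        # The current input selects synchronous (p) or asynchronous (q) release.
        release_prob = p if spike else q
        release = 1 if rng.random() < release_prob else 0
        spikes.append(spike)
        releases.append(release)
        prev_spike = spike
    return spikes, releases
```

Calling `simulate_synapse(10000, 0.2, 0.2, 0.05, 0.5, 0.5)` would return two equal-length binary lists from which empirical release frequencies in each state can be tallied.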

If

and

represent the mutual information rates of the binary asymmetric channels corresponding to the baseline state and facilitated state, then for

,

where

and

. First, we derive a lower bound for the information rate between the input spike process X and the output process of the release site Y (the proofs of the theorems are in Appendix A).

Theorem 1 (Lower Bound)

. Let denote the mutual information rate of a synapse with short-term facilitation, modeled by the two-state binary asymmetric channel (Figure 1A). Then is a lower bound for . Since

,

is the statistical average over the mutual information rates of the two constituent states of the release site. Therefore, our theorem shows that at least in this simple model of facilitation, the mutual information rate is higher than the statistical average over the mutual information rates of the single states. This contrasts with the result for the two-state model of depression [12], for which

is an exact result for the mutual information rate.
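The statistical average referred to here can be sketched numerically. The code below uses the standard mutual information of a binary asymmetric channel with i.i.d. Bernoulli input, $I = H(\alpha p + (1-\alpha) q) - \alpha H(p) - (1-\alpha) H(q)$, where $H$ is the binary entropy. The symbols `alpha`, `p`, and `q` are hypothetical names for the spike probability and the synchronous and asynchronous release probabilities. Weighting the two states by $1-\alpha$ (baseline) and $\alpha$ (facilitated) is an assumption that follows from the state being set by the previous i.i.d. input.

```python
from math import log2


def binary_entropy(x):
    """Binary entropy in bits; defined as 0 at the endpoints."""
    if x <= 0.0 or x >= 1.0:
        return 0.0
    return -x * log2(x) - (1 - x) * log2(1 - x)


def bac_information(alpha, p, q):
    """Mutual information (bits per time bin) of a binary asymmetric channel.

    alpha: P(spike); p: P(release | spike); q: P(release | no spike).
    """
    release = alpha * p + (1 - alpha) * q
    return (binary_entropy(release)
            - alpha * binary_entropy(p)
            - (1 - alpha) * binary_entropy(q))


def state_averaged_information(alpha, p_base, q_base, p_fac, q_fac):
    """Average of the per-state rates, weighted by state occupancy.

    Assumes the facilitated state is occupied with probability alpha,
    because it is entered exactly when the previous input was a spike.
    """
    i_base = bac_information(alpha, p_base, q_base)
    i_fac = bac_information(alpha, p_fac, q_fac)
    return (1 - alpha) * i_base + alpha * i_fac
```

Comparing `state_averaged_information(...)` with `bac_information(alpha, p_base, q_base)` gives a quick check of whether the averaged rate exceeds that of the baseline state for a given parameter set.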

Theorem 2 (Upper Bound)

. The mutual information rate of the two-state model of facilitation is upper-bounded by

where

In a facilitating synapse, the release probability and, consequently, the energy consumption of the synapse increase. We define the ratio of the mutual information rate to the release probability as the energy-normalized information rate of the synapse. The energy-normalized information rate of the synapse without facilitation, denoted by

, is then

Moreover, the release probability of the synapse in the two-state model of facilitation is

which is independent of

i. Hence, the energy-normalized information rate of a facilitated synapse,

, as well as the lower and upper bounds of energy-normalized information rate,

and

, are calculated by dividing

,

, and

by

.
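The normalization described above can be sketched as follows. The overall release probability is obtained by averaging over the two states and over the presence or absence of a spike; because the input is i.i.d., the result does not depend on the time index. All parameter names (`alpha`, `p_base`, `q_base`, `p_fac`, `q_fac`) are hypothetical, and treating the release probability as the energy cost follows the definition in the text.

```python
from math import log2


def binary_entropy(x):
    """Binary entropy in bits; defined as 0 at the endpoints."""
    if x <= 0.0 or x >= 1.0:
        return 0.0
    return -x * log2(x) - (1 - x) * log2(1 - x)


def bac_information(alpha, p, q):
    """Mutual information (bits per bin) of a static binary asymmetric channel."""
    release = alpha * p + (1 - alpha) * q
    return (binary_entropy(release)
            - alpha * binary_entropy(p)
            - (1 - alpha) * binary_entropy(q))


def release_probability(alpha, p_base, q_base, p_fac, q_fac):
    """P(release) in the two-state model, averaged over state and input.

    The facilitated state is assumed to be occupied with probability alpha.
    """
    base = alpha * p_base + (1 - alpha) * q_base
    fac = alpha * p_fac + (1 - alpha) * q_fac
    return (1 - alpha) * base + alpha * fac


def energy_normalized(information, release_prob):
    """Information rate per release (bits per release)."""
    return information / release_prob


def static_energy_normalized(alpha, p, q):
    """Energy-normalized rate of a synapse without facilitation."""
    return energy_normalized(bac_information(alpha, p, q),
                             alpha * p + (1 - alpha) * q)
```

Dividing a lower or upper bound on the information rate by `release_probability(...)` gives the corresponding bound on the energy-normalized rate.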

3. Results

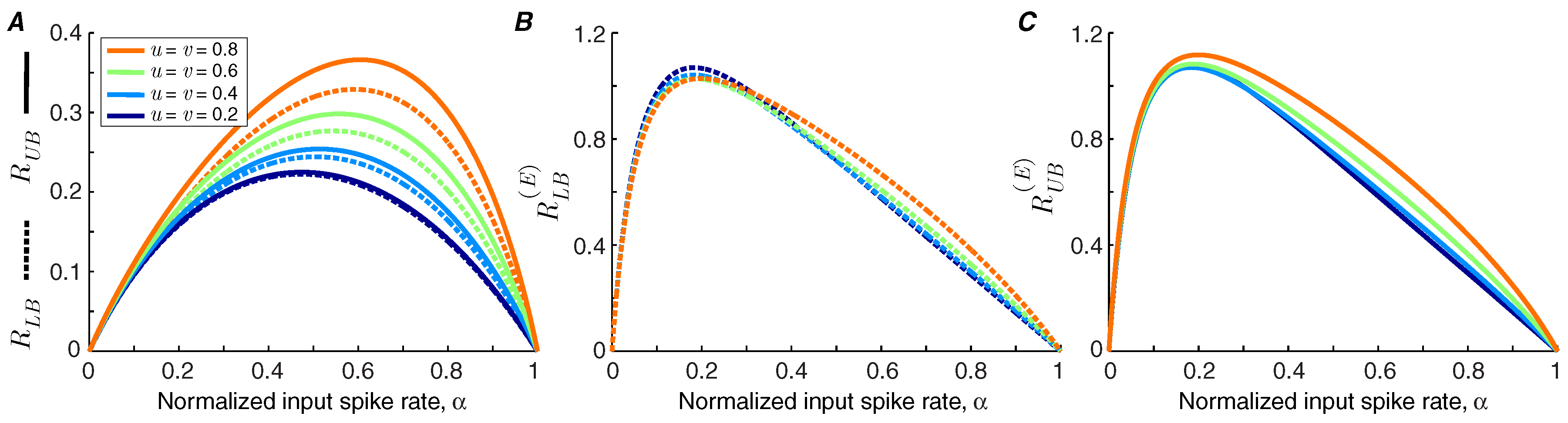

We use Theorems 1 and 2 to calculate the lower bound and upper bound of the information transmission rate of a synapse under short-term facilitation (Figure 2A). The bounds are tighter for synapses with lower facilitation levels, and the information rate increases with the level of facilitation. By contrast, the bounds on the energy-normalized information rate of the synapse are relatively invariant to the strength of facilitation (Figure 2B,C). This finding indicates that a synapse can change the balance between its energy consumption and transmission rate by altering its level of facilitation without affecting the information rate per release.

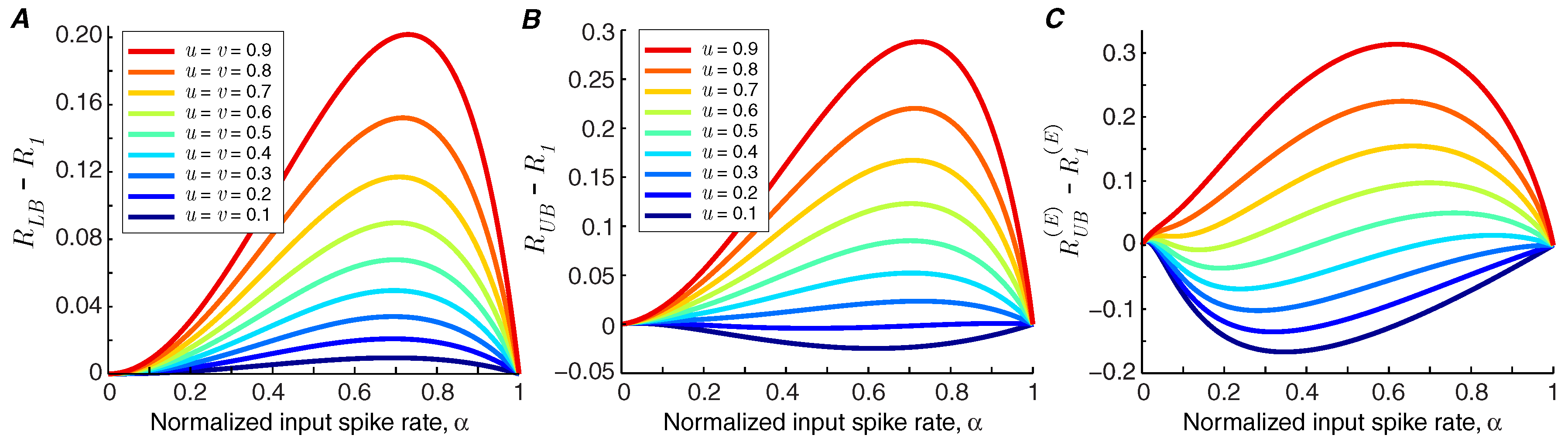

If the lower bound of information rate,

, is greater than the information rate of the synapse in the baseline state,

, we can conclude that short-term facilitation increases the mutual information rate of the synapse (i.e.,

). Figure 3A shows that for the modeled synapse (with

and

), short-term facilitation always increases the mutual information rate, provided that synchronous spike-evoked release and asynchronous release are facilitated equally, i.e., $u = v$.

Recent findings suggest that synchronous and asynchronous release may be governed by different mechanisms [4], and consequently they may show distinct levels of facilitation. We study the impact of different facilitation coefficients in the modeled synapse by fixing the facilitation coefficient of asynchronous release at

and calculate the information bounds for different values of

u. Short-term facilitation reduces the mutual information rate of the synapse if the upper bound of the rate of the synapse,

, goes below the information rate of the synapse without facilitation,

.

Figure 3B shows that short-term facilitation degrades the information rate of the synapse if the facilitation level of synchronous release is much lower than that of asynchronous release. The degrading effect of facilitation is pronounced when we compare the upper bound of the energy-normalized information rate of the synapse with facilitation,

, with the energy-normalized information rate of a static synapse,

. The values below zero in Figure 3C show the operating points of synapses in which facilitation reduces the energy-normalized information rate.

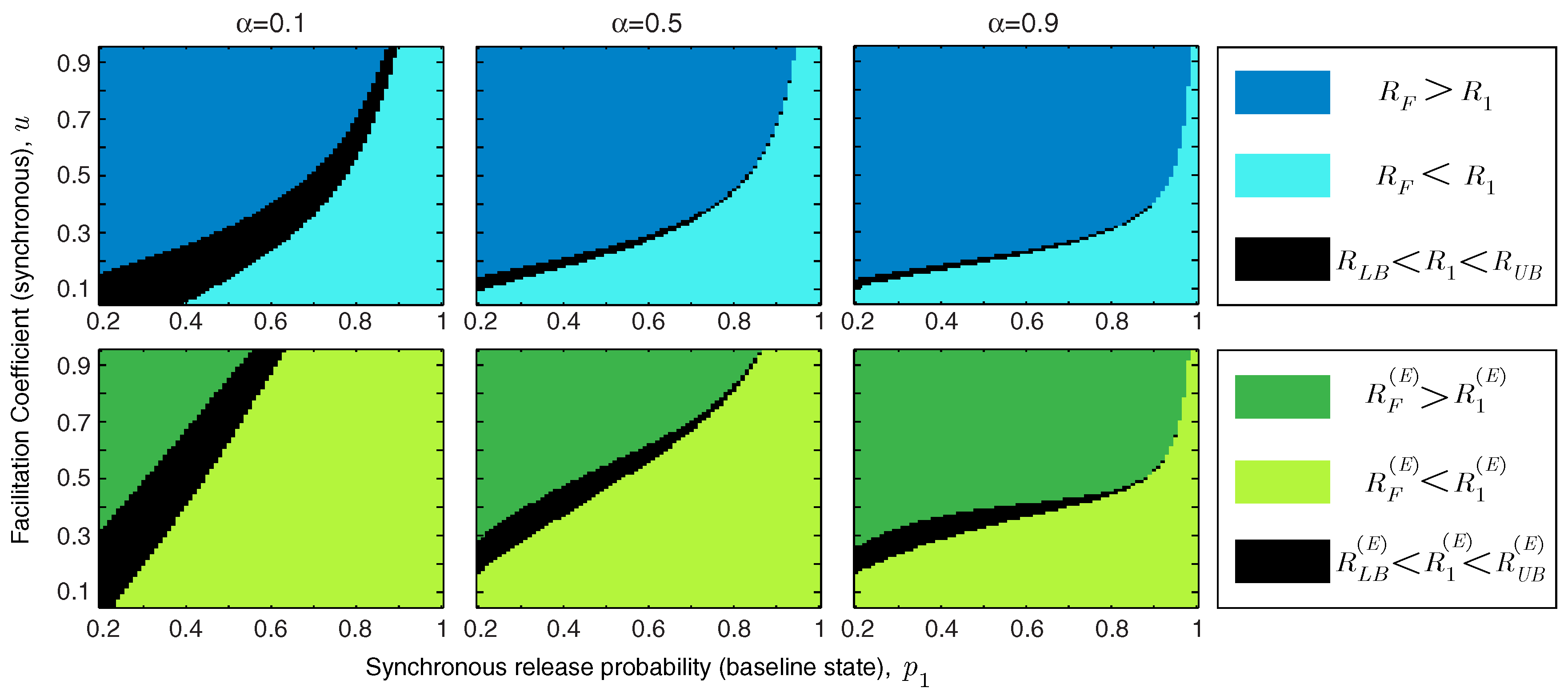

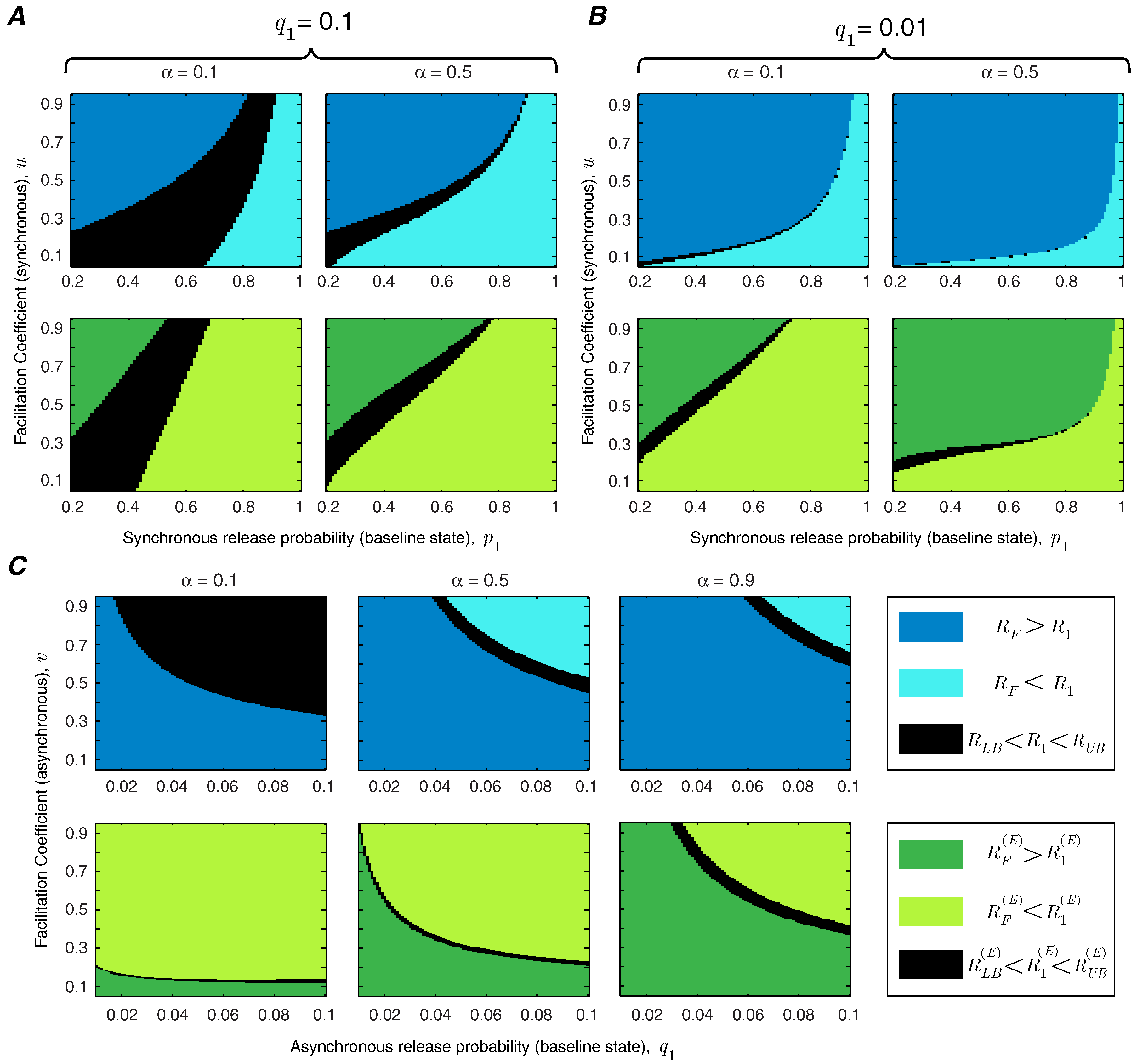

In addition to the facilitation coefficient, the release probability of the synapse in the baseline state plays a critical role in determining the functional role of short-term facilitation. We study the interaction between

u and

in Figure 4. We show the regime of parameters for which short-term facilitation increases or decreases the mutual information rate and energy-normalized information rate of the synapse. If

(or

), the bounds cannot specify whether facilitation increases or decreases the rate of information transmission (or energy-normalized information rate); these regions are marked in black in Figure 4. We show that for an unreliable synapse (with small

) and relatively large facilitation coefficient, u, short-term facilitation increases both mutual information rate and energy-normalized information rate of the synapse, since the enhancement of synchronous release dominates the rise of asynchronous release. Interestingly, it has been observed that for many facilitating synapses the baseline release probability is quite low [13,14]. For synapses that are more reliable a priori, the relative facilitation of asynchronous releases counteracts the improvement in the information rate. In reliable synapses (with higher values of

) and relatively small facilitation coefficients, short-term facilitation decreases not only the energy-normalized information rate of the synapse but also its information transmission rate. In addition, Figure 4 shows that higher input spike rates expand the range of synaptic parameters (

and

u) for which short-term facilitation enhances the rate-energy performance of the synapse.

To study the effect of asynchronous release, we repeat the analysis of Figure 4 for very high (

) and very low (

) asynchronous release probabilities. Comparing Figure 5A and Figure 5B reveals that decreasing the level of asynchronous release expands the range of synchronous release parameters,

u and

, for which short-term facilitation increases the mutual information rate and energy-normalized information rate. We also study the interaction between asynchronous release probability

and the facilitation coefficient of asynchronous release

v, keeping the parameters of synchronous release fixed at

and

(Figure 5C). To benefit from short-term facilitation, the synapse needs to decrease the release probability and/or the facilitation coefficient of the asynchronous mode of release. For synapses with very high asynchronous release probabilities, short-term facilitation can still boost the information rate and energy-normalized rate of the synapse, provided that the facilitation coefficient of the asynchronous release is small enough. Similar to the results in Figure 4, by increasing the normalized spike rate, the synapse spends more time in the facilitated state and therefore, the impact of short-term facilitation on rate-energy efficiency of the synapse is enhanced.

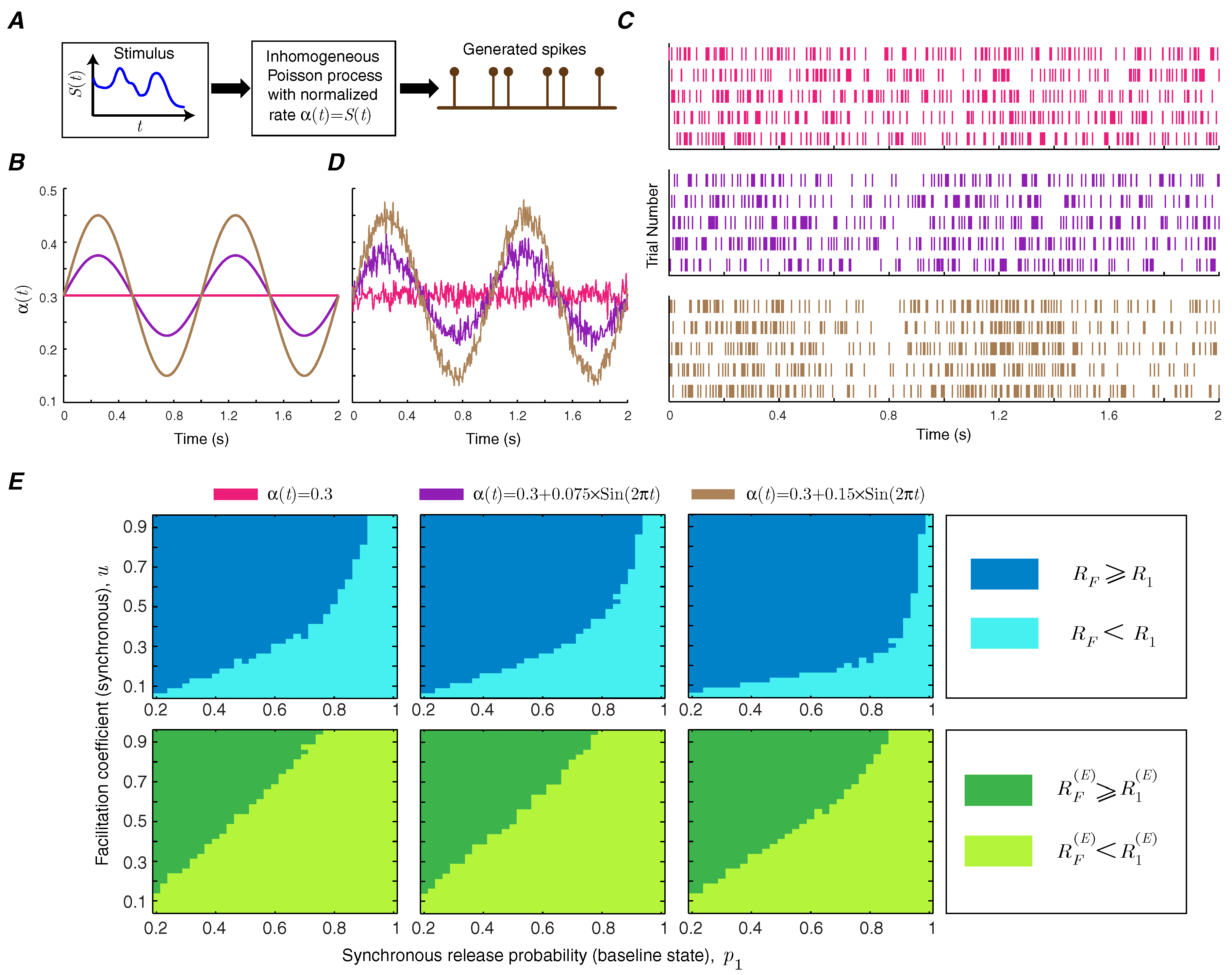

Short-term facilitation creates a memory for the synapse, since the release probability of the synapse depends on the history of spiking activity. It is therefore important to study how short-term facilitation modulates the information transmission rate in synapses with temporally correlated spike trains. We generate such spike trains by making the Poisson rate of the input spike train time-dependent [15] (Figure 6A). We use a sinusoidal rate stimulus with a frequency of 1 Hz on top of a baseline rate (Figure 6B) and use the context-tree weighting algorithm to numerically estimate the mutual information and energy-normalized information rates of the facilitating synapse [16]. The amplitude of the sinusoidal signal specifies the level of correlation. The raster plots of the neurons show the synchrony between the spiking activity of the neuron and the sinusoidal instantaneous rate (Figure 6C). The instantaneous firing rate, averaged over 1000 trials, provides a good estimate of the stimulus (Figure 6D). The functional classes of short-term facilitation are calculated as a function of baseline release probability and facilitation coefficient of synchronous release for different levels of correlation. This numerical analysis shows that correlations in the presynaptic spike train can slightly enlarge the regions in which short-term facilitation increases the mutual information rate and energy-normalized information rate (Figure 6E).
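The generation of correlated input described above can be sketched with a binned approximation of an inhomogeneous Poisson process. This is an illustrative sketch under stated assumptions, not the procedure used for Figure 6: the bin width `dt_s`, the baseline and amplitude values, and all function names are hypothetical, and a spike is drawn in each bin with probability rate × bin width, which is only a reasonable approximation when that product is much smaller than 1. The context-tree weighting estimator is not reproduced here.

```python
import math
import random


def sinusoidal_rate(t, baseline_hz, amplitude_hz, freq_hz=1.0):
    """Instantaneous rate: a sinusoid on top of a baseline rate."""
    return baseline_hz + amplitude_hz * math.sin(2 * math.pi * freq_hz * t)


def spike_train(duration_s, dt_s, baseline_hz, amplitude_hz, seed=0):
    """Binary spike train from a binned inhomogeneous Poisson process."""
    rng = random.Random(seed)
    n_bins = int(round(duration_s / dt_s))
    spikes = []
    for k in range(n_bins):
        # Clip at zero so that large amplitudes cannot give negative rates.
        rate = max(0.0, sinusoidal_rate(k * dt_s, baseline_hz, amplitude_hz))
        spikes.append(1 if rng.random() < rate * dt_s else 0)
    return spikes


def trial_averaged_rate(n_trials, duration_s, dt_s, baseline_hz, amplitude_hz):
    """Estimate the instantaneous rate (Hz) by averaging spikes over trials."""
    n_bins = int(round(duration_s / dt_s))
    counts = [0] * n_bins
    for trial in range(n_trials):
        train = spike_train(duration_s, dt_s, baseline_hz, amplitude_hz,
                            seed=trial)
        for k, spike in enumerate(train):
            counts[k] += spike
    return [c / (n_trials * dt_s) for c in counts]
```

With many trials, for example `trial_averaged_rate(1000, 2.0, 0.01, 20.0, 10.0)`, the averaged rate should follow the sinusoidal stimulus, and a larger `amplitude_hz` corresponds to stronger temporal correlation in the input.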

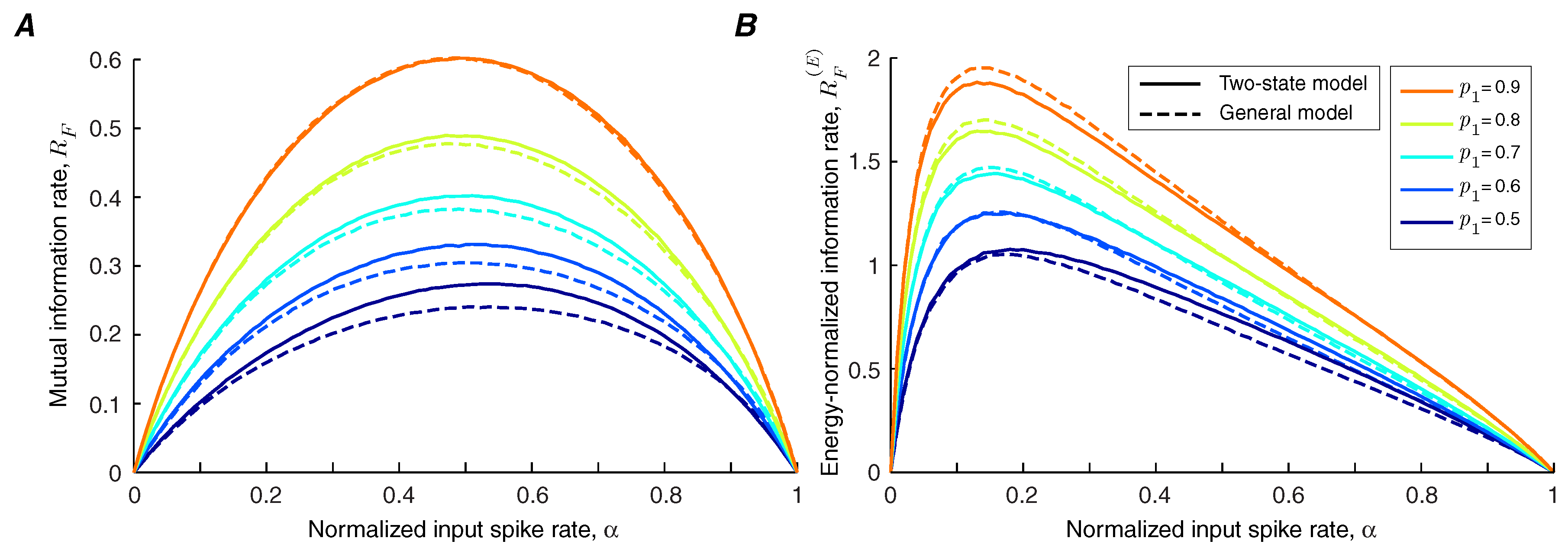

In the general model of facilitation, it is assumed that the state of the synapse at time i is affected not only by the spiking activity of the presynaptic neuron at time $i-1$, but also by the whole history of the spiking events. Synchronous and asynchronous release probabilities converge to the limit probabilities,

and

, exponentially by time constants

and

. The arrival of an action potential at time i increases the limit probabilities

and

by

and

respectively; the initial values of the limit probabilities are

and

. In the quiescent intervals, the limit probabilities decay to the baseline values,

and

, by facilitation decay time constants,

and

. We use numerical methods to compare the synaptic information efficacy of the two-state model with that of the general model of short-term facilitation. We show that the two-state model provides a good approximation for the mutual information rate (Figure 7A) and energy-normalized information rate (Figure 7B) of a facilitating synapse, provided that the facilitation decays rapidly. If the facilitation decay time constant is large, the parameters of the two-state model can be tuned to provide a better estimate, similar to the approach in [12].

4. Discussion

We studied how prior spikes, by facilitating the release of neurotransmitter at a synapse, modulate the rate of synaptic information transfer. Most components of neural hardware are noisy, hybrid analog-digital devices. In particular, the synapse maps quite naturally onto an asymmetric binary channel in communication theory. Some neurons, such as thalamic relay neurons, act as nodes in a network for long-range communication using spikes, so it is natural to quantify the performance of the synapses in bits [17,18,19,20,21,22,23]. Synaptic information efficacy quantifies the amount of information that the post-synaptic potential contains about the spiking activity of the presynaptic neuron. This analysis, however, does not guarantee that the post-synaptic neuron accesses or uses this information, which rather depends on the biophysical mechanisms of encoding and decoding.

To capture the phenomenon of facilitation, we made the binary asymmetric channel have two states. The resulting model permits the short-term dynamics of synchronous and asynchronous releases to be different, which enabled us to assess the impact of each release mode on the efficacy of synaptic information transfer. We first assumed identical facilitation coefficients for synchronous and asynchronous release (i.e., $u = v$) and demonstrated that the lower bound of information rate of a facilitating synapse is higher than the information rate of a static synapse (Figure 3A). We were, therefore, able to show that synapses quantifiably transmit more information through short-term facilitation, as long as synchronous and asynchronous release of neurotransmitter obey the same dynamics. Indeed, the increase in information can outweigh the higher energy consumption, as measured by the energy-normalized information rate, provided that synchronous release is facilitated more than asynchronous release. In contrast, when facilitation enhances the asynchronous component of release more strongly than the synchronous component, short-term facilitation would have the opposite effect, namely to decrease synaptic information efficacy.

In previous work, we studied the information transmission in a synapse during short-term depression [9,12]. There, the state of the binary asymmetric channel, which models the synapse, depended on the history of the output Y. Facilitation, in contrast, depends on the history of the input X. This simple change makes the problem much more challenging mathematically, as it is, in fact, isomorphic to an unsolved problem in information theory, namely the entropy rate of a hidden Markov process [24]. Nevertheless, the lower bound we derive for a facilitating synapse mirrors the exact result we had derived earlier for short-term depression. Moreover, in practice, this bound is fairly tight.

The bounds derived here are only a first step towards understanding information transmission in facilitating synapses. The two-state binary asymmetric channel simplifies the process of facilitation by making the release probability depend only on the presence or absence of a presynaptic spike at the previous time-point. Yet when the facilitation decays rapidly, the two-state model converges in behavior to a more general model that considers the entire history of spiking.

Our model ignores the possibility of temporal correlations in the presynaptic spike train. Instead, in line with many other studies, the time series of spikes were assumed to obey Poisson statistics [8,22,25]. This simplification made the information-theoretic analysis of the synapse tractable and helped us to derive the upper bound and lower bound of information rate. Different methods have been suggested for modeling correlated spike trains, such as inhomogeneous Poisson processes [15,26], autoregressive point processes [27], and random spike selections from a set of spike trains [15]. We used an inhomogeneous Poisson process to generate correlated spike trains and estimated the mutual information rate and energy-normalized information rate of the synapse numerically. In the future, it will be of interest to study the effect of correlated input on the information efficacy of the general model of facilitation in which the release probabilities are determined by the whole history of spiking activity.

In this study, we have assumed that in response to an incoming action potential, the release site releases at most one vesicle. To capture multiple releases, the model should be extended to a communication channel with binary input and multiple outputs. The analysis of this channel will reveal the impact of multiple releases on the mutual information rate of a static and dynamic synapse.