Attribute Selection Based on Constraint Gain and Depth Optimal for a Decision Tree

Abstract

1. Introduction

2. Related Work

3. Measure of Uncertainty

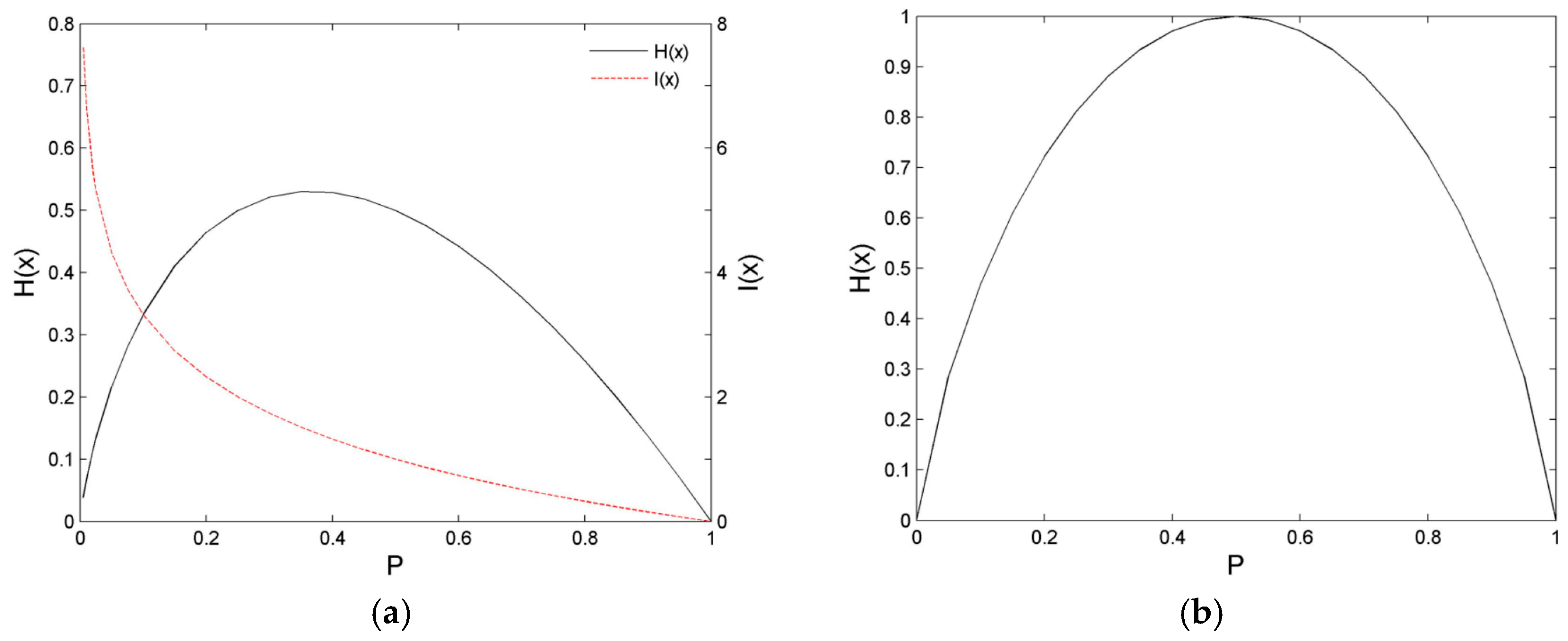

3.1. Analysis of Uncertainty Measure of Entropy

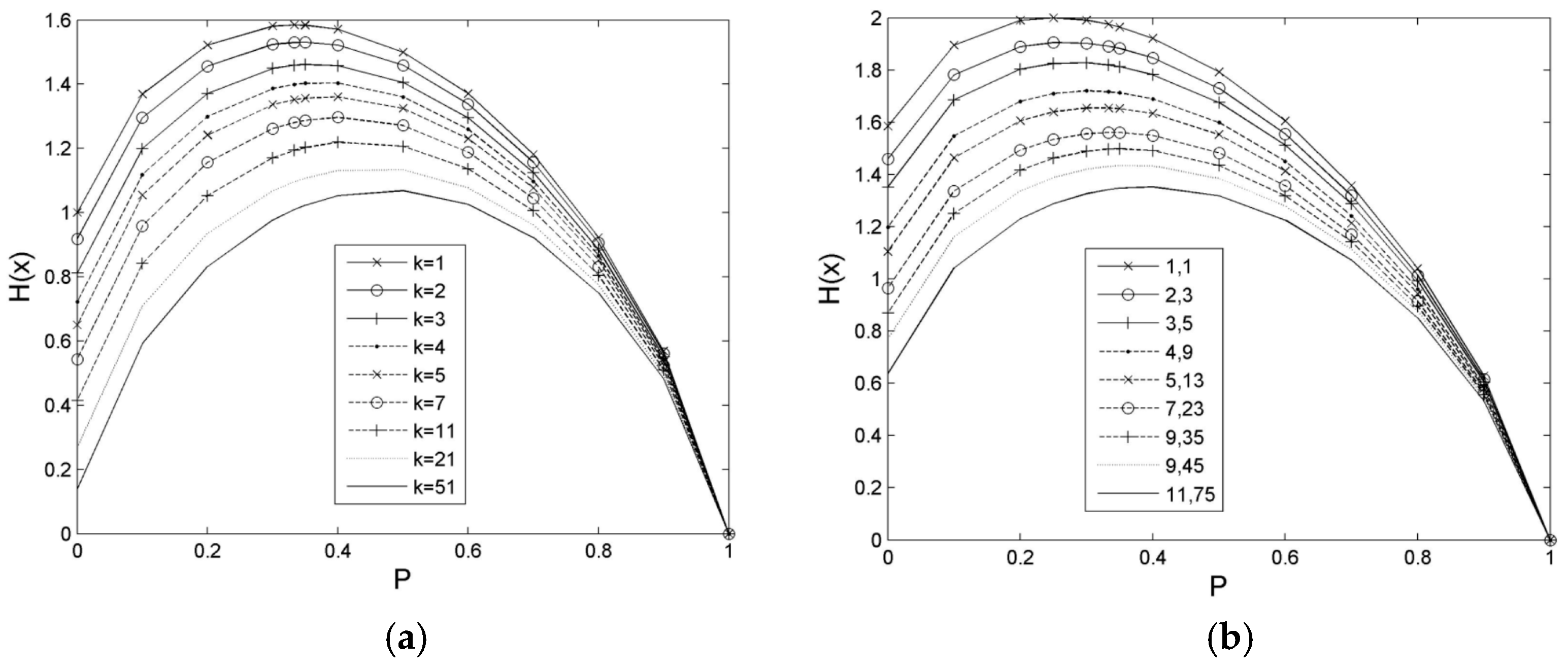

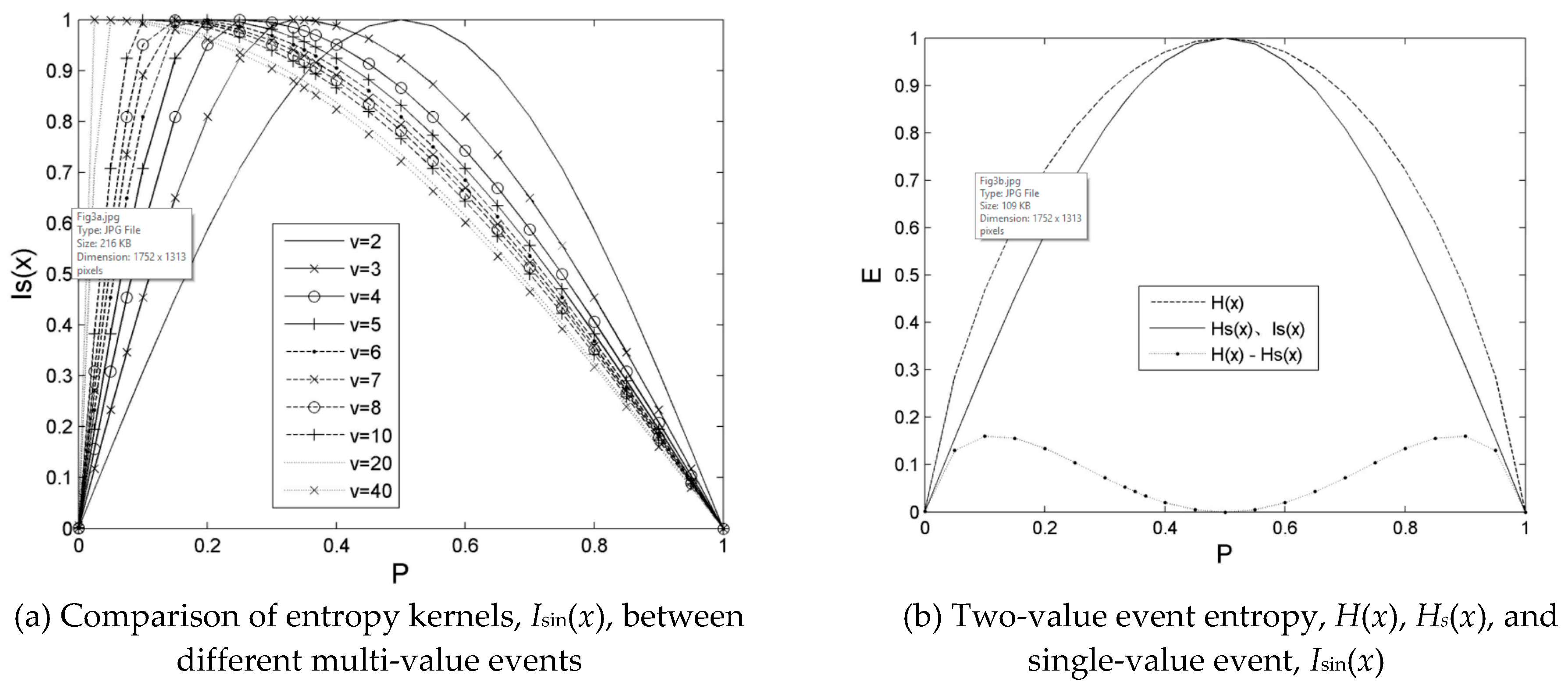

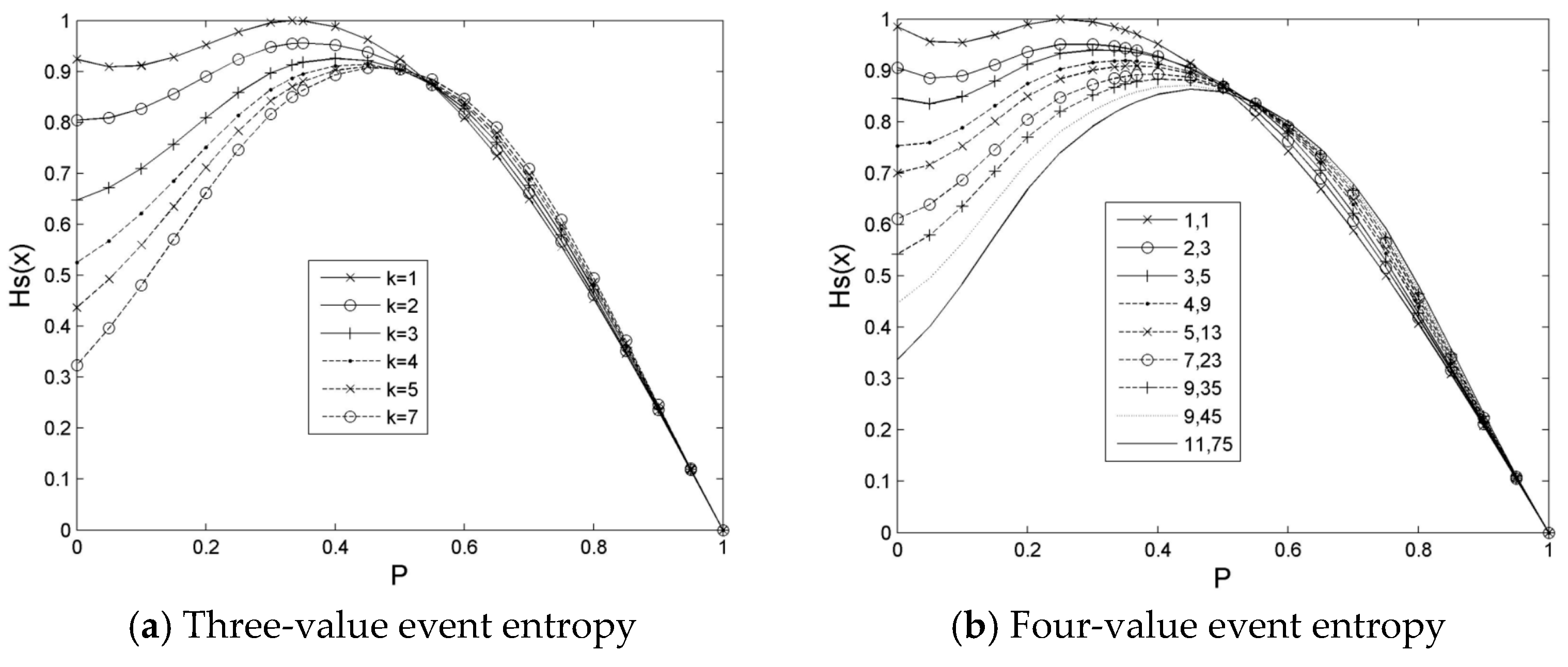

3.2. Definition of Constraint Entropy Estimation Based on Peak-Shift

4. Decision Tree Learning Algorithm Based on Constraint Gain and Depth Optimal

4.1. Evaluation of the Attribute Selection Based on Constraint Gain and Depth Induction

4.2. Learning Algorithm Based on Constraint Gain and Depth Induction for a Decision Tree

| Algorithm 1. The learning algorithm of the constraint gained decision tree, CGDT (S, R). |

| Input: Training dataset, S, which has been filtered and labelled. |

| Output: Decision tree classifier. |

| Pre-processing: For every sample in the dataset {Y1, Y2, ..., Yn}, with Yi = {X, Ti} and Ti∈C, discretize the attributes to obtain the discrete training set. |

| Initialization: The training set, S, is used as the initial dataset of the decision tree, and the root node, R, of the tree is established for it. |

| 1. If Leaftype(S) = Ck, where Ck∈C and k∈[0, |C|], then label the node, R, corresponding to the sample set, S, as a leaf of category Ck, and return. |

| 2. Obtain the valid attribute set of the node's dataset, S: Xe = Effective(S). If Xe is empty, then label the node as a leaf of the most frequent class in S, and return. If Xe contains only a single attribute, then use that attribute directly as the split attribute of the node. |

| 3. For every attribute, Xi (i∈[0, |Xe|]), in Xe, calculate GCE. Select the attribute with the minimum uncertainty as the split attribute, A*, of the current node, R. |

| 4. Divide the dataset, S, of the current node, R, into v subsets, {S1, S2, ..., Sv}, which correspond to the attribute values, {A1, A2, ..., Av}, of A*. |

| 5. For i = 1, 2, ..., v: CGDT(Si, Ri). |
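The recursion in Algorithm 1 can be sketched in a few lines of Python. This is only an illustrative sketch under stated assumptions, not the authors' implementation: the GCE formula is not reproduced here, so the `conditional_entropy` function is a stand-in score (plain conditional entropy of the class given the attribute), and `effective_attributes` assumes that Effective(S) returns the attributes that still take more than one value in S. All function names are hypothetical.

```python
from collections import Counter
from math import log2


def conditional_entropy(rows, labels, attr):
    """Stand-in score, NOT the paper's GCE: H(class | attr) on this subset."""
    n = len(rows)
    groups = {}
    for row, y in zip(rows, labels):
        groups.setdefault(row[attr], []).append(y)
    score = 0.0
    for ys in groups.values():
        counts = Counter(ys)
        h = -sum((c / len(ys)) * log2(c / len(ys)) for c in counts.values())
        score += len(ys) / n * h
    return score


def effective_attributes(rows, attrs):
    """Assumed meaning of Effective(S): attributes with more than one value."""
    return [a for a in attrs if len({row[a] for row in rows}) > 1]


def build_tree(rows, labels, attrs, score=conditional_entropy):
    # Step 1: a pure sample set becomes a leaf of that class.
    if len(set(labels)) == 1:
        return labels[0]
    # Step 2: no valid attribute left, so return the most frequent class.
    valid = effective_attributes(rows, attrs)
    if not valid:
        return Counter(labels).most_common(1)[0][0]
    # Step 3: pick the attribute with the minimum score
    # (a single valid attribute is selected trivially).
    best = min(valid, key=lambda a: score(rows, labels, a))
    # Steps 4 and 5: split on each value of the chosen attribute and recurse.
    remaining = [a for a in valid if a != best]
    children = {}
    for value in sorted({row[best] for row in rows}):
        idx = [i for i, row in enumerate(rows) if row[best] == value]
        children[value] = build_tree(
            [rows[i] for i in idx], [labels[i] for i in idx], remaining, score
        )
    return (best, children)


# Toy data: attribute "a" determines the class, attribute "b" is noise.
rows = [
    {"a": "x", "b": "p"},
    {"a": "x", "b": "q"},
    {"a": "y", "b": "p"},
    {"a": "y", "b": "q"},
]
labels = ["yes", "yes", "no", "no"]
tree = build_tree(rows, labels, ["a", "b"])
print(tree)
```

Passing the score as a parameter keeps the recursion independent of the selection measure, so a GCE implementation could be supplied in place of the stand-in without changing the tree-building logic.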

| Algorithm 2. Constraint gained and depth inductive decision tree algorithm, CGDIDT (S, R). |

| Input: Training dataset, S, which has been filtered and labelled. |

| Output: Decision tree classifier. |

| Pre-processing: As in Algorithm 1. |

| Initialization: As in Algorithm 1. |

| 1. If Leaftype(S) = Ck (Ck and S are defined as in Algorithm 1), then label the node, R, corresponding to the sample set, S, as a leaf of category Ck, and return. |

| 2. Obtain the valid attribute set of the node's dataset, S: Xe = Effective(S). If Xe is empty, then label the node as a leaf of the most frequent class in S, and return. If Xe contains only a single attribute, then use that attribute directly as the split attribute of the node, R. |

| 3. Establish an empty set, H, for the candidate split attributes. First, obtain the smallest constraint gain, f, over the attributes of the set, S, that is, f = Min{GCE(Xi), i∈[0, |Xe|]}. Second, determine the candidate attributes whose GCE is equal or close to the minimum value, that is, GCE≤(1 + r)f, where r∈[0, 0.5], and place these candidate attributes in the set, H. |

| 4. For the candidate attribute set, H, of the current node, calculate the depth branch convergence number, Bconv, and the depth branch fan-out number, Bdiver, of each attribute. If the attribute with the optimal Bconv is not the attribute with the minimum GCE in H, then select the attribute with the larger Bconv and the smaller Bdiver as the improved split attribute. If the attribute with the optimal Bconv is also the attribute with the minimum GCE in H, then select the split attribute, A*, by the minimum-GCE criterion for the current node and for the subsequent branch nodes. |

| 5. Divide the dataset, S, of the current node, R, into v subsets, {S1, S2, ..., Sv}, which correspond to the attribute values, {A1, A2, ..., Av}, of A*. |

| 6. For i = 1, 2, ..., v: CGDIDT(Si, Ri). |
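Step 3 of Algorithm 2, the construction of the candidate set H, can be illustrated with a short Python sketch. It assumes the GCE values have already been computed and are non-negative; the function name `candidate_set`, the attribute names, and the numeric scores below are hypothetical and chosen only for illustration. The depth measures Bconv and Bdiver of step 4 are not sketched, because their definitions are not reproduced here.

```python
def candidate_set(gce_scores, r=0.1):
    """Return the attributes whose GCE is within a factor (1 + r) of the minimum.

    gce_scores: dict mapping attribute name -> precomputed, non-negative GCE.
    r: tolerance, restricted to [0, 0.5] as in step 3 of Algorithm 2.
    """
    if not 0.0 <= r <= 0.5:
        raise ValueError("r must lie in [0, 0.5]")
    f = min(gce_scores.values())  # smallest constraint gain, f
    return {a for a, s in gce_scores.items() if s <= (1 + r) * f}


# Hypothetical scores: A2 is close enough to the minimum to join H, A3 is not.
scores = {"A1": 0.40, "A2": 0.42, "A3": 0.60}
H = candidate_set(scores, r=0.1)
print(sorted(H))
```

With r = 0 the set H contains only the attributes that tie for the minimum GCE, so the depth-based comparison in step 4 only matters when ties occur; larger values of r let near-minimal attributes compete on Bconv and Bdiver.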

5. Experiment Results

5.1. Experimental Setup

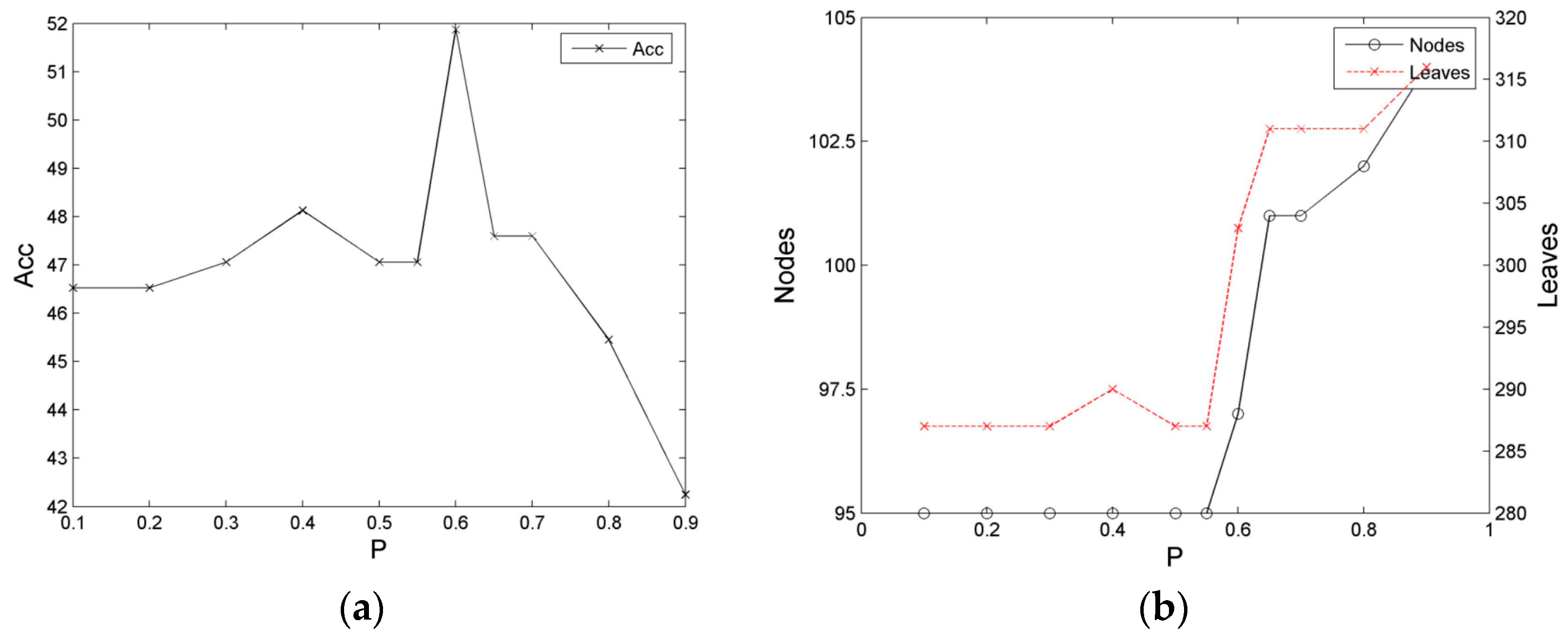

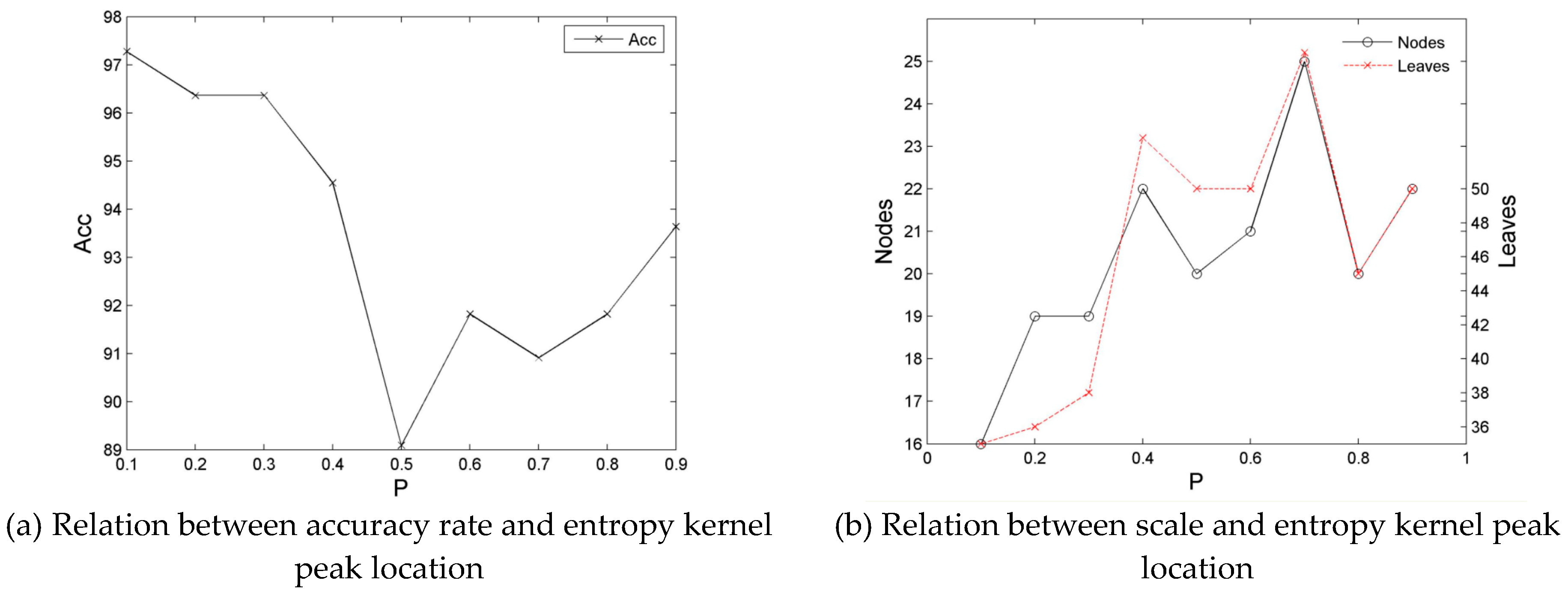

5.2. Influence of the Entropy Peak Shift to Decision Tree Learning

5.3. Effects of Decision Tree Learning Based on Constraint Gain

5.4. Effect of Optimized Learning of Combining Depth Induction

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| No. | Dataset | Instances | Attributes | Number of Attribute Values (Range) | Distribution of Attributes (n/v) | Number of Classes {Class Distribution} |
|---|---|---|---|---|---|---|
| 1 | Balance Scale | 625 | 4 | 5~5 | 4/5 | 3{288,49,288} |
| 2 | Breast | 699 | 9 | 9~11 | 1/9, 7/10, 1/11 | 2{458,261} |
| 3 | Dermatology | 366 | 33 | 2~4 | 1/(2,3), 31/4 | 6{112,61,72,49,52,20} |
| 4 | Tic-Tac-Toe | 958 | 9 | 3~3 | 9/3 | 2{626,332} |
| 5 | Voting | 232 | 16 | 2~2 | 16/2 | 2{124,108} |
| 6 | Mushroom | 8124 | 22 | 1~12 | 1/(1,7,10,12), 5/2, 4/4, 2/5, 2/6, 3/9 | 2{4208,3916} |
| 7 | Promoters | 106 | 57 | 4~4 | 57/4 | 2{53,53} |
| 8 | Zoo | 101 | 18 | 2~6 | 15/2, 1/6 | 7{41,20,5,13,4,8,10} |
| 9 | Monks1 * | 124+308 | 6 | 2~4 | 2/2, 3/3, 1/4 | 2{62,62} |
| 10 | Monks2 * | 169+263 | 6 | 2~4 | 2/2, 3/3, 1/4 | 2{105,64} |
| 11 | Monks3 * | 122+310 | 6 | 2~4 | 2/2, 3/3, 1/4 | 2{62,60} |

| Dataset | Method | Size | Ns | Ls | Acc (%) |
|---|---|---|---|---|---|
| Balance Scale | Gain | 388 | 97 | 291 | 43.8503 |
| | Gainratio | 393 | 98 | 295 | 43.8503 |
| | GCE (CGDT) | 382 | 95 | 287 | 46.5241 |
| Breast | Gain | 78 | 13 | 65 | 89.0476 |
| | Gainratio | 107 | 19 | 88 | 86.6667 |
| | GCE (CGDT) | 87 | 16 | 71 | 87.1429 |
| Dermatology | Gain | 64 | 20 | 44 | 93.6364 |
| | Gainratio | 159 | 51 | 108 | 72.7273 |
| | GCE (CGDT) | 58 | 20 | 38 | 96.3636 |
| Tic-Tac-Toe | Gain | 255 | 92 | 163 | 81.1847 |
| | Gainratio | 418 | 157 | 261 | 69.6864 |
| | GCE (CGDT) | 269 | 98 | 171 | 83.6237 |
| Voting | Gain | 27 | 13 | 14 | 94.3662 |
| | Gainratio | 29 | 14 | 15 | 97.1831 |
| | GCE (CGDT) | 27 | 13 | 14 | 95.7747 |
| Mushroom | Gain | 29 | 5 | 24 | 100 |
| | Gainratio | 45 | 9 | 36 | 100 |
| | GCE (CGDT) | 35 | 6 | 29 | 100 |
| Mushroom ** | Gain | 30 | 5 | 25 | 99.8031 |
| | Gainratio | 48 | 9 | 39 | 99.8031 |
| | GCE (CGDT) | 34 | 6 | 28 | 99.9508 |
| Promoters | Gain | 30 | 8 | 22 | 71.8750 |
| | Gainratio | 30 | 8 | 22 | 68.7500 |
| | GCE (CGDT) | 29 | 8 | 21 | 75.0000 |
| Zoo | Gain | 23 | 9 | 14 | 93.3333 |
| | Gainratio | 23 | 9 | 14 | 90.0000 |
| | GCE (CGDT) | 19 | 7 | 12 | 100 |
| Monks1 * | Gain | 58 | 21 | 37 | 77.5974 |
| | Gainratio | 52 | 19 | 33 | 83.1169 |
| | GCE (CGDT) | 58 | 21 | 37 | 78.2468 |
| Monks2 * | Gain | 109 | 43 | 66 | 53.6122 |
| | Gainratio | 116 | 48 | 68 | 55.1331 |
| | GCE (CGDT) | 108 | 42 | 66 | 55.8935 |
| Monks3 * | Gain | 27 | 11 | 19 | 90.3226 |
| | Gainratio | 25 | 11 | 21 | 94.1935 |
| | GCE (CGDT) | 35 | 11 | 19 | 90.3226 |
| Average | Gain | 98.9091 | 29.9091 | 69 | 80.8023 |
| | Gainratio | 127 | 40 | 87 | 78.3007 |
| | GCE (CGDT) | 100.6364 | 30.6364 | 70 | 82.6265 |

| Dataset | Method | Size | Acc (%) | Cov (%) | F-Measure |
|---|---|---|---|---|---|
| Balance Scale | ID3 | 486 | 43.8503 | 47.5936 | 0.5942 |
| | J48(C4.5) | 21 | 67.9144 | 100 | 0.6791 |
| | CGDIDT | 382 | 46.5241 | 49.7326 | 0.6214 |
| Breast | ID3 | 135 | 89.0476 | 94.7619 | 0.9144 |
| | J48(C4.5) | 32 | 92.8571 | 100 | 0.9286 |
| | CGDIDT | 100 | 91.4286 | 97.1429 | 0.9275 |
| Dermatology | ID3 | 81 | 93.5780 | 98.1651 | 0.9444 |
| | J48(C4.5) | 25 | 95.4128 | 100 | 0.9541 |
| | CGDIDT | 66 | 98.1818 | 100 | 0.9818 |
| Tic-Tac-Toe | ID3 | 276 | 81.1847 | 97.2125 | 0.8233 |
| | J48(C4.5) | 100 | 83.9721 | 100 | 0.8397 |
| | CGDIDT | 243 | 87.4564 | 97.9094 | 0.8838 |
| Voting | ID3 | 27 | 94.3662 | 100 | 0.9437 |
| | J48(C4.5) | 3 | 97.1831 | 100 | 0.9718 |
| | CGDIDT | 23 | 95.7747 | 100 | 0.9577 |
| Mushroom | ID3 | 38 | 100 | 100 | 1.0000 |
| | J48(C4.5) | 31 | 100 | 100 | 1.0000 |
| | CGDIDT | 28 | 100 | 100 | 1.0000 |
| Mushroom ** | ID3 | 34 | 99.8031 | 100 | 0.9980 |
| | J48(C4.5) | 29 | 100 | 100 | 1.0000 |
| | CGDIDT | 27 | 100 | 100 | 1.0000 |
| Promoters | ID3 | 33 | 71.8750 | 96.8750 | 0.7302 |
| | J48(C4.5) | 17 | 68.7500 | 100 | 0.6875 |
| | CGDIDT | 32 | 75.0000 | 96.8750 | 0.7619 |
| Zoo | ID3 | 23 | 93.3333 | 93.3333 | 0.9655 |
| | J48(C4.5) | 17 | 90.0000 | 90.0000 | 0.9474 |
| | CGDIDT | 19 | 100 | 100 | 1.0000 |
| Monks1 * | ID3 | 64 | 77.5974 | 88.6364 | 0.8227 |
| | J48(C4.5) | 10 | 72.7273 | 100 | 0.7273 |
| | CGDIDT | 39 | 92.2078 | 92.2078 | 0.9595 |
| Monks2 * | ID3 | 103 | 51.3308 | 95.4373 | 0.5253 |
| | J48(C4.5) | 22 | 57.0342 | 100 | 0.5703 |
| | CGDIDT | 108 | 56.2738 | 96.1977 | 0.5736 |
| Monks3 * | ID3 | 32 | 90.3226 | 94.1935 | 0.9302 |
| | J48(C4.5) | 12 | 96.7742 | 100 | 0.9677 |
| | CGDIDT | 32 | 95.4839 | 98.7097 | 0.9610 |
| Average | ID3 | 118 | 80.5896 | 91.4735 | 0.8358 |
| | J48(C4.5) | 26.3636 | 83.8750 | 99.0909 | 0.8430 |
| | CGDIDT | 97.4545 | 85.3028 | 93.5250 | 0.8753 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Sun, H.; Hu, X.; Zhang, Y. Attribute Selection Based on Constraint Gain and Depth Optimal for a Decision Tree. Entropy 2019, 21, 198. https://doi.org/10.3390/e21020198
