1. Introduction

In the last 30 years or so, the concept of anomalous diffusion has been widely adopted to deal with processes, ranging from biology to sociology, that depart from the conditions of thermodynamic equilibrium that the Boltzmann principle [1] establishes for physical processes. For instance, in 1992, Peng et al. [2] introduced the concept of DNA walks, which became a very popular way to study fluctuations of biological processes. They studied nucleotide sequences and assigned the symbol 1 to purines and the symbol $-1$ to pyrimidines. The position of a nucleotide is thought of as a time, and the random walker at that time makes a step forward or backward according to whether the nucleotide is a purine or a pyrimidine. Since a nucleotide sequence is unique and it is not possible to adopt the conventional Gibbs prescription of making averages over many identical copies, the authors of this important paper adopted the method of a moving window of size $l$. The window moves along the nucleotide sequence and the observer records the space traveled by the walker in the “time” interval $l$, namely the distance from the position of the walker at time $l$ to the position she had at the beginning of the window, assumed to be zero. In the case of a random walk the fluctuations from 1 to $-1$ and back are totally uncorrelated and the resulting scaling is equal to $0.5$. These authors found that the scaling is larger than $0.5$, thereby suggesting that the DNA nucleotides are correlated; or, said differently, the random walk is persistent.
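The moving-window prescription can be sketched in a few lines of Python. This is a minimal illustration and not the procedure of Reference [2]: the function names `window_displacements` and `second_moment_scaling`, the uncorrelated surrogate sequence, and the window sizes are assumptions made here. For an uncorrelated sequence the root-mean-square displacement is expected to grow roughly as the square root of the window size, so the fitted exponent should be close to 0.5.

```python
import math
import random

def window_displacements(steps, l):
    """Displacement of the walker inside every window of size l.

    The walker position is the running sum of the +1/-1 symbols; the
    displacement is the position at the end of the window minus the
    position at its beginning.
    """
    position = [0]
    for s in steps:
        position.append(position[-1] + s)
    return [position[i + l] - position[i] for i in range(len(steps) - l + 1)]

def second_moment_scaling(steps, sizes):
    """Least-squares slope of ln(rms displacement) versus ln(l)."""
    xs, ys = [], []
    for l in sizes:
        d = window_displacements(steps, l)
        rms = math.sqrt(sum(v * v for v in d) / len(d))
        xs.append(math.log(l))
        ys.append(math.log(rms))
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    num = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    den = sum((x - mx) ** 2 for x in xs)
    return num / den

# Hypothetical surrogate: uncorrelated +1 (purine) and -1 (pyrimidine) symbols.
rng = random.Random(0)
sequence = [rng.choice((1, -1)) for _ in range(20000)]
scaling = second_moment_scaling(sequence, [4, 8, 16, 32, 64])
print(f"fitted scaling: {scaling:.2f}")
```

A correlated (persistent) sequence fed to the same function would be expected to return an exponent above 0.5, which is the signature discussed in the text.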

What is the origin of this anomalous behavior? A widely shared conjecture is that the source of this correlation is properly described by means of the Fractional Brownian Motion (FBM) proposed by Mandelbrot [3]. This is a generalization of ordinary Brownian diffusion, yielding the following relation

where $H$ is the symbol adopted by Mandelbrot to denote scaling. Ordinary Brownian motion is a singularity of this formula, corresponding to $H = 0.5$. This relation implies that FBM has memory of the infinitely distant past, since no limit is set on the magnitude of $t$. However, it has been noticed [4] that if we go beyond this mathematical formalism and adopt a dynamical derivation of FBM from the traditional diffusion equation

yielding in the integral

we obtain the auto-correlation function

where

is the stationary auto-correlation function

. With a proper choice of this correlation function, setting

and sending

t,

and

to ∞ has the effect of recovering Equation (1). For this reason, we define this form of infinite memory as Stationary Fractional Brownian Motion (SFBM).

Herein we adopt the symbol $H$ to denote the SFBM scaling and the symbol $\delta$ to denote the scaling of a differently described form of anomalous diffusion process.

The technological progress allowing the observation of the diffusion of single molecules in biological cells has attracted general interest in a form of fractional diffusion that we term Aging Fractional Brownian Motion (AFBM) to stress its non-stationary nature [5]. The non-stationarity of this form of diffusion is not a consequence of the physical rules behind diffusion changing with time; rather, it depends on the occurrence of crucial events that are responsible for the breakdown of ergodicity.

A good way to introduce the readers to crucial events is by adopting the engineering language of [6], which defines the age-dependent failure rate $g(t)$ through

$$g(t) = -\frac{1}{\Psi(t)}\frac{d\Psi(t)}{dt}, \qquad (7)$$

where $\Psi(t)$ is the survival probability, namely the probability that a machine keeps working for a time interval $t$ from the time at which it was created. Let us imagine that a team of engineers acts the moment a machine fails. They instantaneously repair the machine, making it brand new. This has the effect of extending the working life of the machine to the next failure, when it will again require the instantaneous action of the team of engineers. The time distance between two consecutive failures has the waiting time distribution density $\psi(t) = -d\Psi(t)/dt$. Assuming that $g(t)$ decays in time as $1/t$, namely at the limit of integrability, and more precisely according to

$$g(t) = \frac{r_0}{1 + r_1 t},$$

inserting this rate into Equation (7) and integrating yields

$$\Psi(t) = \left(\frac{T}{t + T}\right)^{\mu - 1}, \qquad (9)$$

where $\mu = 1 + r_0/r_1$ and $T = 1/r_1$. Equation (9) readily yields the waiting time distribution density given by

$$\psi(t) = (\mu - 1)\frac{T^{\mu - 1}}{(t + T)^{\mu}}. \qquad (10)$$

We define the occurrence of these failures as crucial events when the inverse power index $\mu$ fits the condition $1 < \mu < 3$. We note that the average waiting time, defined using Equation (10), is given, when $\mu > 2$, by

$$\langle \tau \rangle = \frac{T}{\mu - 2}. \qquad (11)$$
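A sequence of waiting times with an inverse power law tail can be generated by inverse-transform sampling. The sketch below assumes that the survival probability has the hyperbolic form $(T/(t+T))^{\mu-1}$; the function names and the values of `mu` and `T` are illustrative choices made here, not values taken from the paper. The check compares the fraction of sampled waiting times exceeding a given time with the assumed survival probability, which is a more robust test than the sample mean when the variance of the waiting times diverges.

```python
import random

def survival(t, mu, T):
    """Assumed hyperbolic survival probability (T / (t + T))**(mu - 1)."""
    return (T / (t + T)) ** (mu - 1.0)

def sample_waiting_time(mu, T, rng):
    """Inverse-transform sampling: solve survival(tau) = u for tau."""
    u = 1.0 - rng.random()          # uniform in (0, 1], avoids u == 0
    return T * (u ** (-1.0 / (mu - 1.0)) - 1.0)

rng = random.Random(1)
mu, T = 2.5, 1.0                    # illustrative values chosen for this sketch
taus = [sample_waiting_time(mu, T, rng) for _ in range(100000)]

t_check = 3.0
empirical = sum(1 for tau in taus if tau > t_check) / len(taus)
print(f"empirical survival at t = {t_check}: {empirical:.3f}")
print(f"assumed survival at t = {t_check}:   {survival(t_check, mu, T):.3f}")
```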

To stress the non-stationary nature of this process in the whole interval $1 < \mu < 3$, as well as in the sub-interval $1 < \mu < 2$, where the ergodicity breaking is made evident by the divergence of $\langle \tau \rangle$, let us assume that the laminar region between consecutive crucial events is filled with either 1 or $-1$, according to a coin tossing procedure. In this case, if we observe the process with the mobile window prescribed by the authors of Reference [2], with the constraint of locating the beginning of the window where we see an abrupt transition from 1 to $-1$ or from $-1$ to 1, we find

We adopt the symbol

, rather than

, to stress the adoption of the mobile window to evaluate the brand-new survival probability. This is a consequence of the fact that when the window of length $l$ overlaps more than one laminar region, different laminar regions may have opposite signs, making the survival probability vanish.

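If we do not adopt the above constraint and we move the left end of laminar region in a continuous way along the sequence, the quantity of Equation (12) becomes the equilibrium correlation function $\Phi_\xi(t)$ defined by [7]:

$$\Phi_\xi(t) = \frac{1}{\langle \tau \rangle}\int_t^{\infty} (t' - t)\,\psi(t')\,dt'. \qquad (13)$$

Using Equation (11) and inserting Equation (10) under the integral, direct integration yields

$$\Phi_\xi(t) = \left(\frac{T}{t + T}\right)^{\mu - 2}. \qquad (14)$$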
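The direct integration can be written out step by step. The lines below are a sketch under stated assumptions: the hyperbolic density $\psi(t) = (\mu-1)T^{\mu-1}/(t+T)^{\mu}$, the mean waiting time $\langle\tau\rangle = T/(\mu-2)$ valid for $\mu > 2$, and the renewal expression for the equilibrium correlation function. The symbol $\Phi_\xi$ and the integration variable $u = t' + T$ are introduced here for convenience.

```latex
\begin{aligned}
\int_t^{\infty} (t'-t)\,\psi(t')\,dt'
  &= (\mu-1)\,T^{\mu-1}\int_{t+T}^{\infty} \frac{u-(t+T)}{u^{\mu}}\,du \\
  &= (\mu-1)\,T^{\mu-1}\,(t+T)^{2-\mu}
     \left[\frac{1}{\mu-2}-\frac{1}{\mu-1}\right] \\
  &= \frac{T^{\mu-1}}{\mu-2}\,(t+T)^{2-\mu}, \\[4pt]
\Phi_\xi(t)
  &= \frac{\mu-2}{T}\cdot\frac{T^{\mu-1}}{\mu-2}\,(t+T)^{2-\mu}
   = \left(\frac{T}{t+T}\right)^{\mu-2}.
\end{aligned}
```

The tail index is therefore lowered by one unit with respect to the survival probability, from $\mu - 1$ to $\mu - 2$, and $\Phi_\xi(0) = 1$ as required of a normalized correlation function.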

This is a result of the non-stationary nature of this process, which as an effect of aging changes the power index $\mu - 1$ of Equation (12) into $\mu - 2$. If we try to interpret this result by using SFBM we are immediately led to make the conjecture that the scaling index of Mandelbrot $H$ fluctuates. In fact, the stationary correlation function yielding the surprisingly extended memory of Equation (1) has an inverse power law (IPL) tail with an index related to $H$ by

Consequently, if we identify the exponents of Equation (12) and Equation (14) with a non-stationary index, we get the non-stationary $H$ given by

which, for instance, in the case

, would change from

(

) to

(

).

The main purpose of the present paper is to propose an entropic approach to the analysis of time series that will establish whether the anomalous diffusion emerging from the use of the mobile window of Reference [2] is due to the Mandelbrot infinite memory or to crucial events. We also offer suggestions to establish whether both sources of anomalous diffusion are jointly acting on the complex process under study. We show that this entropic approach to the analysis of physiological data, on the dynamics of the heart and the brain, settles the ambiguity about the $1/f$ noise generated by these physiological processes, leading to the conclusion that they are driven by crucial events.

The outline of the present paper is as follows. In Section 2 we show that the observation of $1/f$ noise does not afford a clear-cut criterion to establish whether SFBM or AFBM applies. In Section 3 we illustrate the entropy concepts that are used in this paper to detect crucial events, by assessing whether the experimental signal under analysis is an SFBM or an AFBM process. Section 4 illustrates the main result of this paper, namely, how to prove that an anomalous scaling is a manifestation of crucial events. Section 5 affords detail on the method of stripes. In Section 6 we discuss the consequences of the main results of this paper for the dynamics of the heart and the brain. Finally, in Section 7 we argue that the results of this paper go well beyond the limits of a single discipline and can be beneficial for psychology and sociology as well as for physiology and biology.

3. Entropy Concept

The concept of entropy has a thermodynamic origin and is closely connected to the second law of thermodynamics. The well-known expression [1]

can be fruitfully used to explain why the free expansion of a gas in a container is an irreversible process [13]. However, the attempts to describe the time evolution towards equilibrium resting on the Gibbs entropy forced investigators to adopt the concept of a Gibbs ensemble average and, consequently, to assume ergodicity [14], namely that an average over infinitely many copies of the same system is identical to averaging a single system of the ensemble over time. At the same time, these attempts led the investigators to assume that chaos is an important ingredient to generate an irreversible transition to equilibrium, even if chaos may not be completely random [15]. The main problem of reconciling the second law of thermodynamics and irreversibility with the reversible nature of both classical and quantum mechanics led the investigators to establish a connection between the second law and information theory [16], as explained in the work of Landauer [17] and in the more recent work of Reference [18]. This reconciliation attempt becomes even harder when we move from quantum physics to the second law, insofar as it raises the still unresolved problem of deriving classical from quantum physics [19]. These contributions to the field of non-equilibrium statistical physics, although generating fruitful applications, are based on the Gibbs ensemble perspective and consequently do not shed light on the dynamics of the individual systems of a statistical ensemble unless recourse is made to the ergodicity assumption.

In the last ten years, however, increasing attention has been devoted to ergodicity breaking in two different fields of research. The first field of investigation is molecular diffusion, with the tracking of single molecules in living cells [5] making it possible to take time averages over the motion of a single molecule, and the second is the field of complex networks, where the discovery of cooperation-induced criticality has proven to yield temporal complexity, namely non-ergodic fluctuations of the complex network’s mean field [20]. A reasonable conjecture currently being made is that in both cases non-ergodic behavior is a signature of the transition from a non-cooperative to a self-organized state [21]. The brain is an example of a complex system of this kind and its non-ergodic nature raises the important question of how to measure its complexity using time averages, or, equivalently, how to define the entropy of a single trajectory. We therefore explore the important issue of Kolmogorov complexity [22], which is expected to shed light on the entropy of a single time series and thus on the entropy of a single trajectory, which is called Kolmogorov-Sinai (KS) entropy. We discuss the joint use of compression and diffusion [23], two methods of analyzing time series based on the KS entropy. While the former procedure establishes the amount of order by the numerical evaluation of the algorithmic compressibility of a time series, the latter assesses the amount of order by forcing the time series to generate diffusion, the scaling of which is sensitive to the deviation from randomness.

We assume that complexity is generated by the occurrence of renewal non-Poisson processes, and on the basis of this assumption we propose an approach to calculating the entropy of a single trajectory based on the theoretical perspective of a continuous random walk [24]. Randomness is a property of events occurring in the operational time

, related to the clock time

t by the relation

. This observation leads to a generalization of Pesin’s identity [25] that we propose to adopt to define the entropic complexity of non-ergodic trajectories. We conclude this section by arguing that non-ergodic fluctuations may be incompressible despite their vanishing Lyapunov exponent, and we discuss to what extent this theoretical perspective may afford a useful technique for the analysis of non-stationary time series.

3.1. External Entropy

To shed light on the paradoxical macroscopic effects generated by chaotic trajectories exploring regions with different Lyapunov coefficients [15], the authors of [26] used the concept of external entropy, which was later adopted in Reference [23] to discuss the Kolmogorov complexity in terms of compression and diffusion. To evaluate external entropy for non-ergodic trajectories, we can benefit from the time series generated by the idealized Manneville map [23]. Imagine a particle moving in the interval $[0, 1]$ from an initial condition $y_0$, selected with uniform probability, according to the dynamical prescription

$$\frac{dy}{dt} = \alpha y^{z}, \qquad (31)$$

with $z > 1$ and $\alpha > 0$. The time $\tau$ necessary to arrive at the border $y = 1$ moving from an initial value $y_0$ is given by

$$\tau = T\left(\frac{1}{y_0^{1/(\mu - 1)}} - 1\right),$$

with

$$\mu = \frac{z}{z - 1}, \qquad T = \frac{\mu - 1}{\alpha}.$$

When the particle reaches the border $y = 1$ it is injected back to a new initial position by randomly selecting a new number $y$. It is important to notice that the waiting time probability distribution function (PDF) $\psi(\tau)$ separating consecutive activations of the external entropy has the same analytical form as the waiting time PDF $\psi(t)$ of Equation (10). Notice that when $z < 2$, namely $\mu > 2$, we recover the mean waiting time given by Equation (11), with

$$\langle \tau \rangle = \frac{1}{\alpha (2 - z)}.$$

Please note that the limiting case $z = 1$ is realized by replacing the procedure of Equation (31) with

$$\frac{dy}{dt} = \alpha y,$$

which is easily shown to generate the Poisson waiting time PDF

$$\psi(t) = \alpha e^{-\alpha t}.$$
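The time needed to reach the border can be checked numerically. The sketch below assumes the continuous-time prescription $dy/dt = \alpha y^{z}$ with $z > 1$, integrates it with a midpoint scheme, and compares the result with the closed form obtained by separation of variables. The function names, the step size and the values of `z` and `alpha` are illustrative assumptions. The third function rewrites the same closed form in terms of $\mu = z/(z-1)$ and $T = (\mu-1)/\alpha$.

```python
def exit_time_closed_form(y0, z, alpha):
    """Time for dy/dt = alpha * y**z to go from y0 to the border y = 1 (z > 1)."""
    return (y0 ** (1.0 - z) - 1.0) / (alpha * (z - 1.0))

def exit_time_mu_form(y0, z, alpha):
    """Same exit time written with mu = z / (z - 1) and T = (mu - 1) / alpha."""
    mu = z / (z - 1.0)
    T = (mu - 1.0) / alpha
    return T * (y0 ** (-1.0 / (mu - 1.0)) - 1.0)

def exit_time_numerical(y0, z, alpha, dt=1e-3):
    """Exit time from a midpoint (second-order Runge-Kutta) integration."""
    y, t = y0, 0.0
    while y < 1.0:
        k1 = alpha * y ** z
        k2 = alpha * (y + 0.5 * dt * k1) ** z
        y += dt * k2
        t += dt
    return t

z, alpha = 1.8, 0.5   # illustrative values chosen for this sketch
for y0 in (0.2, 0.5, 0.9):
    closed = exit_time_closed_form(y0, z, alpha)
    numeric = exit_time_numerical(y0, z, alpha)
    print(f"y0 = {y0}: closed form {closed:.3f}, numerical {numeric:.3f}")
```

Drawing `y0` uniformly in the unit interval and recording the exit times produces a renewal sequence whose survival probability has the hyperbolic form discussed earlier in the text.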

The main remaining question is whether DEA can properly address the condition $\mu < 2$, which is characterized by perennial ergodicity breaking.

The authors of Reference [27] proved that the Kolmogorov-Sinai entropy, $h_{KS}$, reads:

where, according to Equation (32),

The land of crucial events ranges from $z = 1.5$ to $z = \infty$, with $h_{KS}$ vanishing from $z = 2$ to $z = \infty$. We can recover the proposal of Korabel and Barkai [25] by the following conjectures on the computational cost. The computational cost, in the case $z < 2$, increases linearly in time, while in the case $z > 2$ it increases as $t^{\mu - 1}$, with $\mu = z/(z - 1)$. In fact, the number of random drawings $n$ of the initial condition $y$ is proportional to $t$ for $z < 2$. This is the condition making it possible to realize a Lévy walk [28,29]. In the case $z > 2$, as earlier mentioned, the number of random drawings is

$$n \propto t^{\mu - 1}.$$

This simple heuristic prediction has the effect of defining the incompressibility of the time series also for $z > 2$ [25]. In fact, adopting the generalized form of KS entropy proposed by Korabel and Barkai [25], based on observing that for $z > 2$ it is necessary to use a new definition of time, taking into account the transition from $n$ proportional to $t$ to $n$ proportional to $t^{\mu - 1}$, a singularity appears at $z = 2$. The generalized $h_{KS}$ of Korabel and Barkai [25] is a decreasing function of $z$ for $z < 2$ and it becomes an increasing function of $z$ for $z > 2$. These intuitive arguments can be used to attract attention to the rigorous work of References [30,31,32,33,34]. Here we limit ourselves to pointing out that informational compressibility implies the existence of a message carried by the time series, thereby implying a connection with cognition.

We emphasize that according to the statistical analysis of the brain in the awake condition [35] the brain is controlled by an AFBM process with $\mu = 2$. This means that the brain operates at the border between the perennial aging condition, $\mu < 2$, and the temporary lack of stationarity condition, $\mu > 2$. The observation of $1/f$ noise does not make it possible to perceive the singularity of this condition because the IPL index of the spectrum obeys Equation (28), making the spectrum become that of an ideal $1/f$ noise at $\mu = 2$, with the spectral index moving in a continuous way from values slightly larger than 1 to values slightly smaller. The singularity of this condition, made evident by the entropic analysis of Korabel and Barkai, should be properly taken into account by the analysis of real physiological data.

3.2. Entropic Treatment of the Scale Detection Issue

The existence of a message with a meaning motivates us to move from the Boltzmann to the Wiener/Shannon entropy. Let us imagine that we have a sequence of events and that all of them are crucial. We follow the prescription of Reference [12]. For any crucial event, the random walker makes a step ahead by the fixed quantity 1 and thereby builds up a diffusion trajectory $x(t)$. We then observe this trajectory with the moving window of size $l$ so as to generate the histogram of the PDF $p(x, l)$. According to the generalized central limit theorem [12] we obtain

$$p(x, l) = \frac{1}{l^{\delta}} F\left(\frac{x}{l^{\delta}}\right), \qquad (41)$$

where the power law index is

$$\delta = \mu - 1 \qquad (42)$$

if $1 < \mu < 2$, and becomes

$$\delta = \frac{1}{\mu - 1} \qquad (43)$$

if $2 < \mu < 3$. Finally, we have

$$\delta = 0.5 \qquad (44)$$

if $\mu > 3$.

It is important to stress that these rules in the anomalous case generate the asymmetric Lévy diffusion [12].

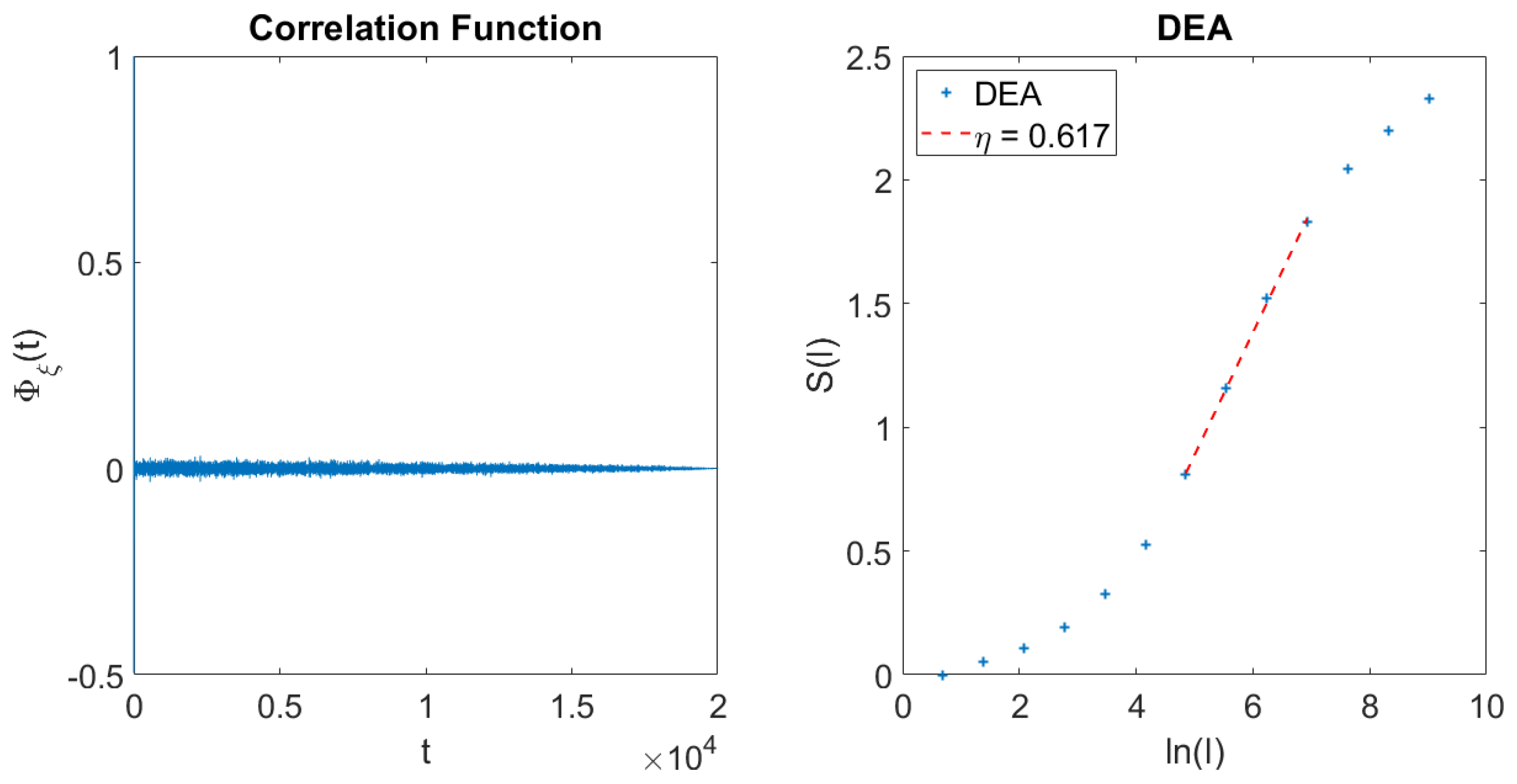

To appreciate the main result of this paper, namely, how a refined version of DEA makes it possible to distinguish AFBM from SFBM, it is convenient to discuss DEA in action on an experimental signal $\xi(t)$, when we do not know whether the single fluctuations are crucial or not. Let us consider, for instance, the case when the laminar regions between two consecutive crucial events are filled with either 1’s or $-1$’s, with a coin tossing prescription, and $2 < \mu < 3$. This is the celebrated Lévy walk [28,29]. We create the diffusion trajectory

$$x(t) = \int_0^t \xi(t')\,dt'. \qquad (45)$$

We then observe this trajectory with the mobile window of size $l$. We evaluate the difference between the value of $x(t)$ at the end of the window and the value of $x(t)$ at the beginning of the window. This allows us to create the probability density function

$$p(x, l) = \frac{1}{l^{\delta}} F\left(\frac{x}{l^{\delta}}\right). \qquad (46)$$

In this case, the scaling $\delta$ is identical to that of the asymmetric Lévy scaling of Equation (43).

Let us also discuss the case where the signal does not host any crucial event. If $\xi(t)$ is a generator of FBM, the observation of the trajectory of Equation (45) with the method of the mobile window of length $l$ yields the probability density function

$$p(x, l) = \frac{1}{l^{H}} G\left(\frac{x}{l^{H}}\right), \qquad (47)$$

where $G$ denotes a Gaussian function.

The main problem with the use of the mobile window of size $l$ [2] is that in the case of crucial events, Equation (41), the long, slow tails of the PDF make their second moment divergent, and the scaling evaluation is affected by a numerical truncation that cannot be easily controlled. The second moment technique works in the case of SFBM because in this case the PDFs are Gaussian, with rapidly decaying tails.

This difference in the second moment is the reason the adoption of DEA [11,12] turns out to be very convenient. In fact, DEA leads us to evaluate the Wiener/Shannon entropy of the diffusion process, namely

$$S(l) = -\int_{-\infty}^{\infty} p(x, l)\,\ln p(x, l)\,dx. \qquad (48)$$

Inserting Equation (41) into Equation (48) yields

$$S(l) = A + \delta \ln l, \qquad (49)$$

where $A$ is a constant. When we insert Equation (47) into Equation (48) and integrate, we obtain

$$S(l) = B + H \ln l, \qquad (50)$$

where $B$ is a different constant from $A$. The scaling parameter $\delta$ is properly defined even if the probability density function of Equation (41) has a diverging second moment. The DEA does perceive the correct scaling $\delta$ even if the long-time limit leads to numerical statistical inaccuracy, since the crucial events are rare in this limit. However, this use of DEA does not allow us to assess whether we are dealing with an SFBM or an AFBM phenomenon. In fact, if the measured scaling is larger than $0.5$ it is impossible to establish with the use of DEA alone whether we are observing super-diffusion generated by SFBM or by AFBM.
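The entropy evaluation can be sketched as follows. This is a minimal illustration and not the authors' implementation: the function names, the fixed histogram bin width, the window sizes and the Gaussian surrogate signal are assumptions made here. The trajectory is the running sum of the signal, the PDF of the window displacements is estimated with a histogram, and the scaling is read as the slope of the entropy against the logarithm of the window size. For an uncorrelated signal the slope is expected to be close to 0.5.

```python
import math
import random
from collections import Counter

def trajectory(signal):
    """Diffusion trajectory: running sum of the signal, starting from zero."""
    x = [0.0]
    for s in signal:
        x.append(x[-1] + s)
    return x

def diffusion_entropy(x, l, bin_width):
    """Histogram estimate of the Shannon entropy of the window displacements."""
    counts = Counter(
        math.floor((x[i + l] - x[i]) / bin_width) for i in range(len(x) - l)
    )
    n = sum(counts.values())
    entropy = -sum((c / n) * math.log(c / n) for c in counts.values())
    return entropy + math.log(bin_width)   # converts to a differential entropy

def dea_scaling(signal, sizes, bin_width=0.5):
    """Least-squares slope of S(l) versus ln(l)."""
    x = trajectory(signal)
    xs = [math.log(l) for l in sizes]
    ys = [diffusion_entropy(x, l, bin_width) for l in sizes]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    num = sum((a - mx) * (b - my) for a, b in zip(xs, ys))
    den = sum((a - mx) ** 2 for a in xs)
    return num / den

# Hypothetical surrogate: uncorrelated Gaussian fluctuations.
rng = random.Random(2)
signal = [rng.gauss(0.0, 1.0) for _ in range(50000)]
delta = dea_scaling(signal, [4, 8, 16, 32, 64])
print(f"estimated scaling: {delta:.2f}")
```

Because the entropy depends on the PDF only through its shape and its width, the estimate does not require a finite second moment, which is the property the text relies on.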

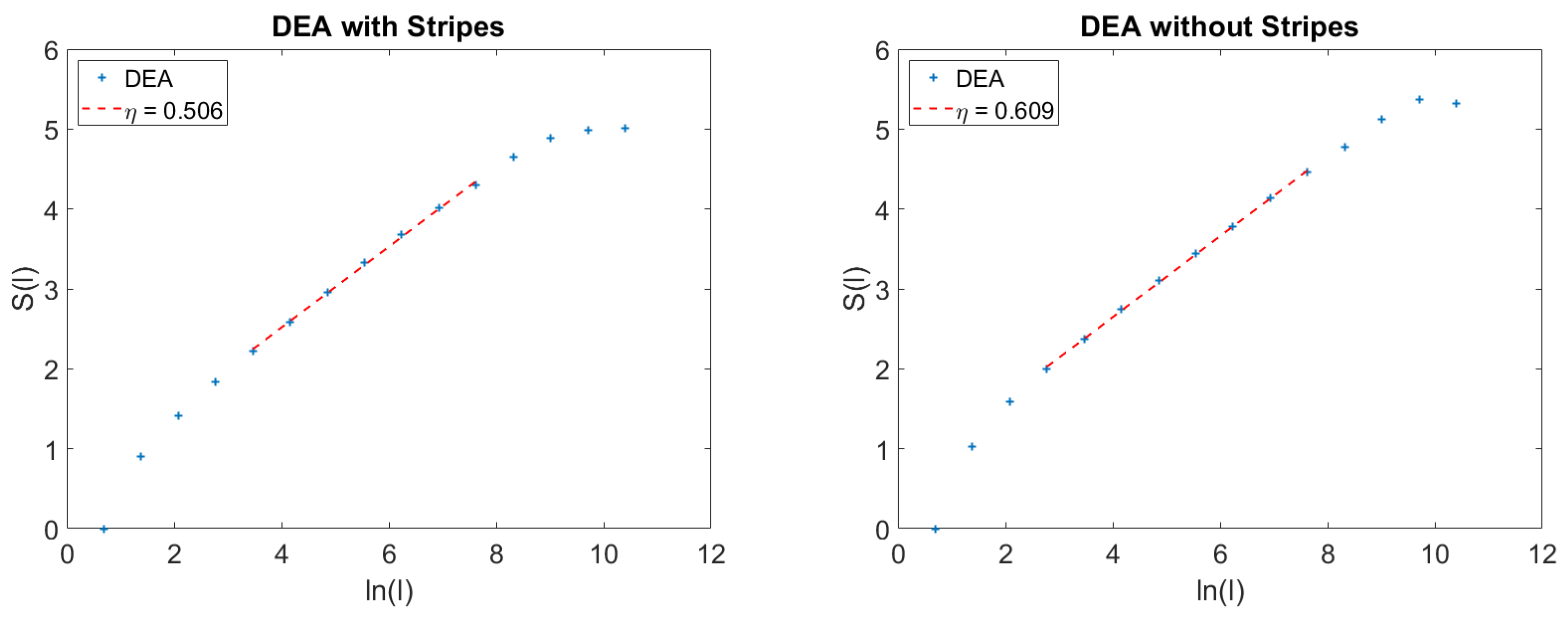

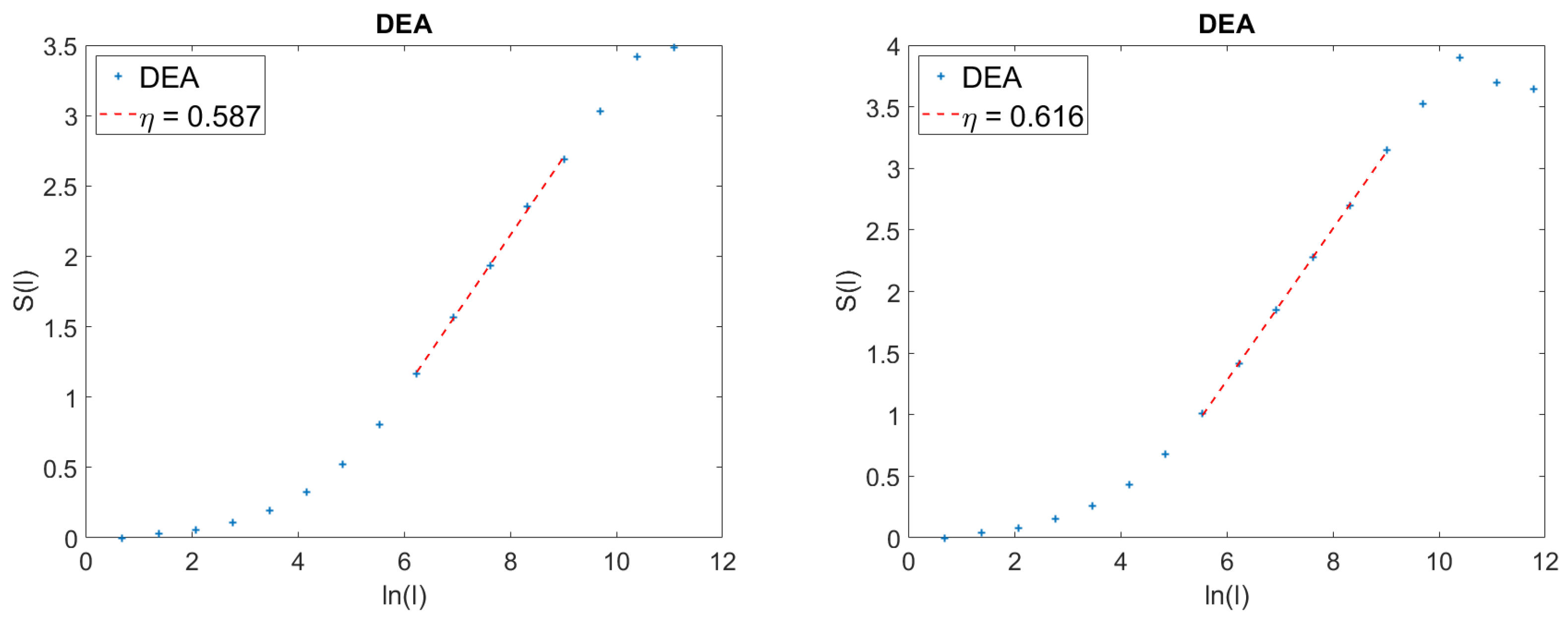

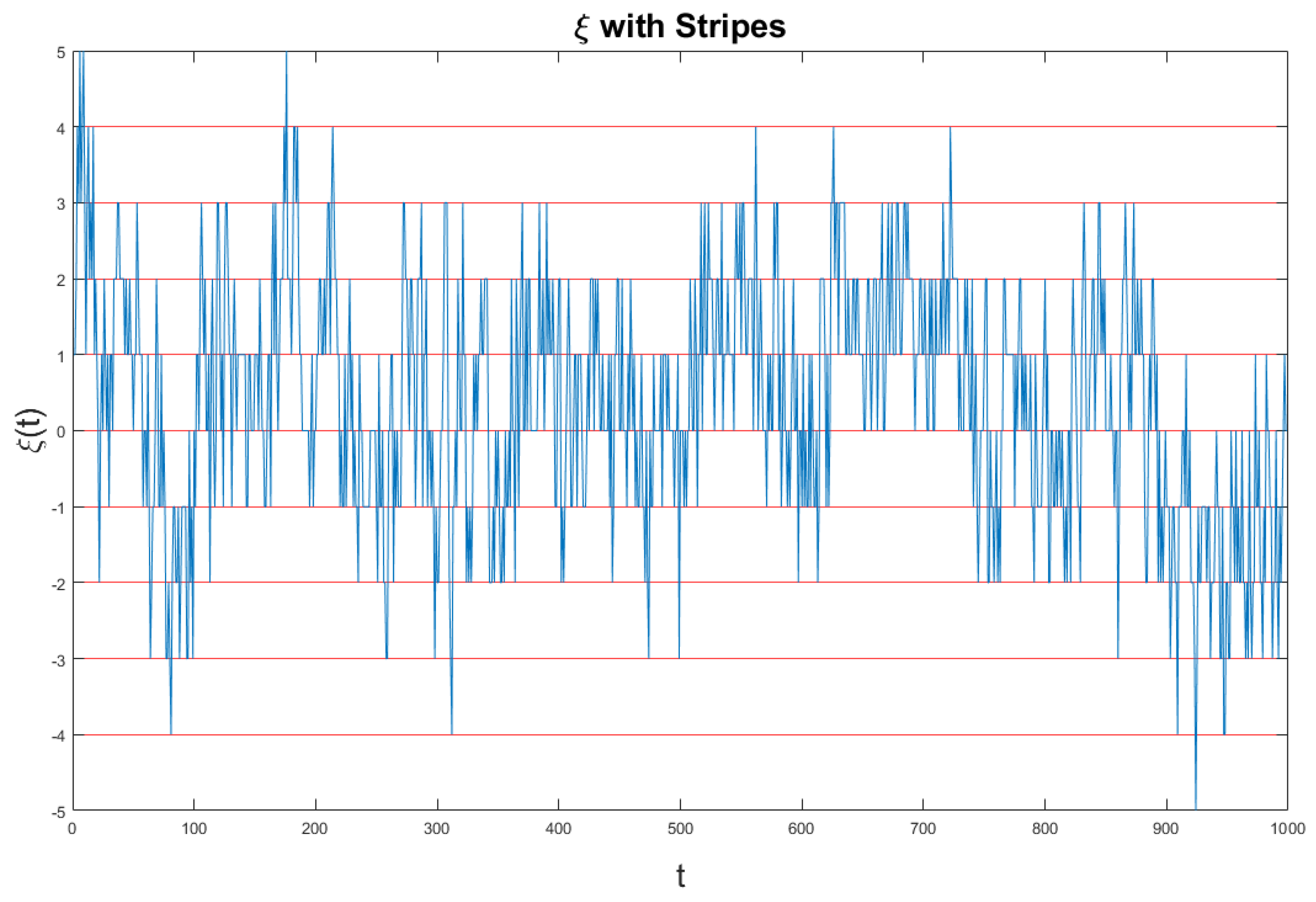

5. Details on the Action of the Stripes

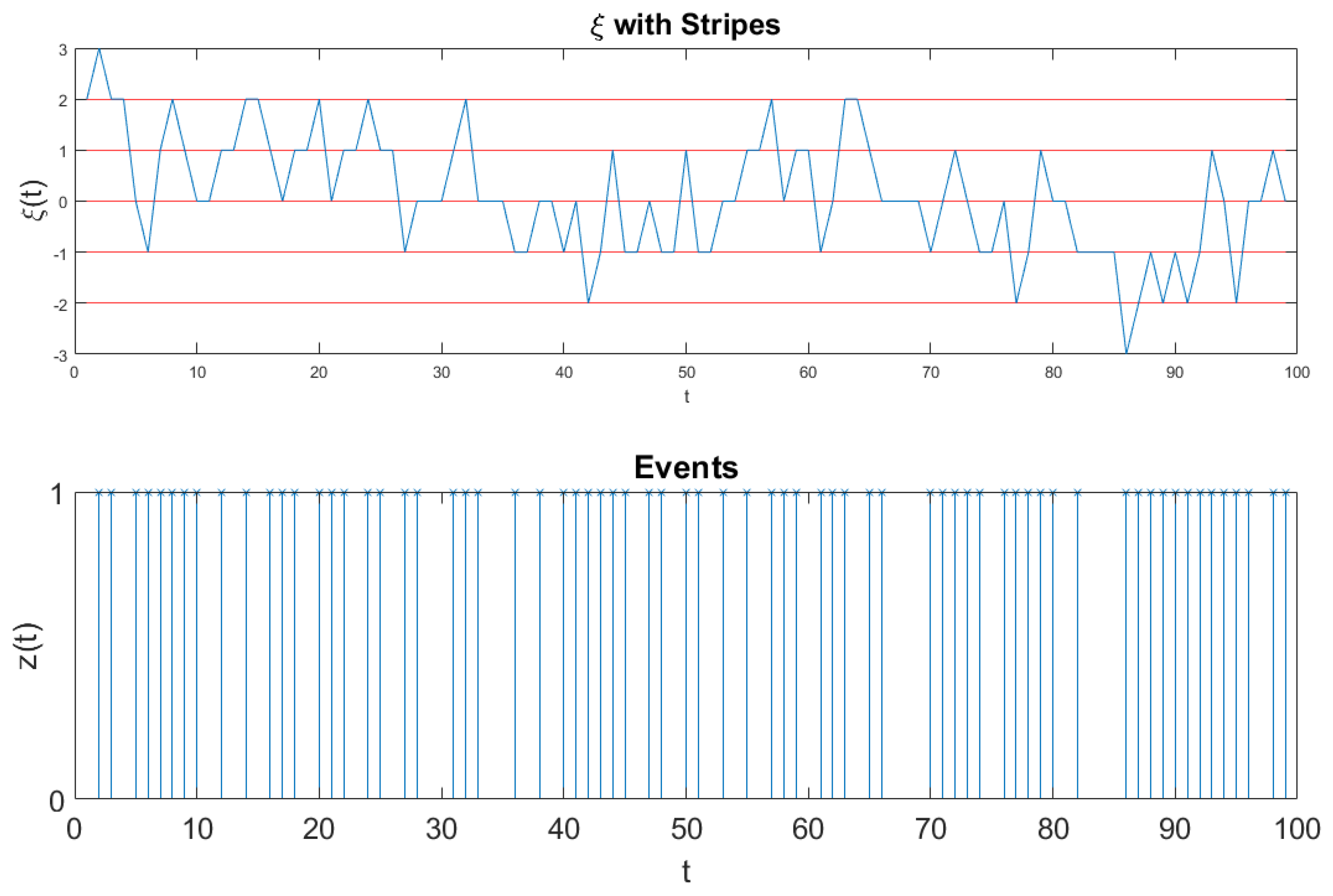

We devote this Section to more details on the method of the stripes. We provide details for the case of the results of Figure 3. This is expected to help the readers to also get a better understanding of the method used to get the results of the left panel of Figure 2. In Figure 5 we show the fluctuation generated by Equation (56), which is the combination of crucial and non-crucial events adopted by us to generate the diffusional trajectory of Equation (54).

The ordinate axis is divided into many stripes of size $s$. The time at which the signal moves from one stripe to one of the two nearest neighbor stripes is recorded as the time of occurrence of an event, which can be either crucial, if it depends on the crucial component of the signal, or not, if it depends on the non-crucial component. In the case of fluctuations of intensity much larger than the size $s$ of the stripes, many events occur at the same time and they are recorded as a single event. This explains why the large intensities of the crucial fluctuations do not influence the scaling, but only their temporal occurrence does.

The adoption of the always stepping ahead rule [12] to define the diffusional trajectory of Equation (54) is essential to making the method of stripes efficient. In fact, if all the events are crucial, this diffusion process generates the scaling $\delta = 1/(\mu - 1)$, which is larger than $0.5$ for $2 < \mu < 3$. If none of the events is crucial, $\delta = 0.5$. When the events detected using the stripes are a mixture of crucial and non-crucial events, the long-time limit of the diffusion process is dominated by the faster scaling of crucial events. This explains why the adoption of the method of stripes makes the DEA so efficient for the detection of crucial events.
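The two steps, recording stripe crossings and building the always stepping ahead trajectory, can be sketched in Python. The function names `stripe_events` and `stepping_ahead_trajectory`, the discrete time index and the toy signal are assumptions made for illustration. A jump across several stripes within one time step is recorded as a single event, in line with the description above.

```python
import math

def stripe_events(signal, s):
    """Times at which the signal is found in a different stripe of size s.

    The stripe index is the integer part of signal / s. A jump over several
    stripes between two consecutive times counts as one event.
    """
    events = []
    current = math.floor(signal[0] / s)
    for t in range(1, len(signal)):
        stripe = math.floor(signal[t] / s)
        if stripe != current:
            events.append(t)
            current = stripe
    return events

def stepping_ahead_trajectory(events, length):
    """Trajectory that makes a step ahead by 1 at every recorded event."""
    is_event = set(events)
    x, path = 0, []
    for t in range(length):
        if t in is_event:
            x += 1
        path.append(x)
    return path

# Toy signal with one large jump (0.7 -> 2.9) that crosses several stripes.
signal = [0.1, 0.3, 0.6, 0.7, 2.9, 2.8, 2.4, 0.2]
events = stripe_events(signal, 0.5)
path = stepping_ahead_trajectory(events, len(signal))
print(events)   # [2, 4, 6, 7]
print(path)     # [0, 0, 1, 1, 2, 2, 3, 4]
```

The resulting trajectory depends only on when the crossings occur and not on the size of the jumps, which is the reason the intensity of the fluctuations does not enter the scaling.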

The fluctuation of Figure 5 is too dense to see the single events. Therefore, we invite the readers to look at Figure 6. Figure 6 also refers to the analysis of the surrogate sequence of Equation (56) and to the results of Figure 3. However, the realization of Figure 6 is of size 100, much shorter than the realization of Figure 5: it is short enough to illustrate the details of this fluctuation. The times at which the signal crosses one of the border lines are recorded to signal the occurrence of an event, which can be either crucial or not, according to which of the two components of the signal of Equation (56) determines the crossing. We convert these events into the diffusional trajectory. This is shown in detail in Figure 6.

6. Criticality and Physiological Processes

Self-organized criticality (SOC) and the connection between SOC and $1/f$ noise have been the subject of sometimes heated debates since the publication of the original work of Bak and coworkers [8]. The connection between criticality and $1/f$ noise has been emphasized [43,44] and questioned [45,46], and it remains an open question, especially because whether or not $1/f$ noise itself has a unique origin is still unsettled.

On one side we have proposals that advocate to some extent the adoption of SFBM arguments [47,48] and introduce the adoption of multifractality to take into account the fluctuations of $H$. On the other side there are approaches to $1/f$ noise in systems with ergodicity breaking [49], including the extreme case of perennial aging [50] with $\mu < 2$. The analysis of the brain dynamics of Reference [35] led these authors to the conclusion that the brain in the awake state is a generator of an ideal $1/f$ noise, corresponding to $\mu = 2$.

Another important physiological process is the dynamics of the heart, and significant interest exists in the correlation between heart rate variability and brain activity [51,52,53]. However, the theoretical explanation of the correlation between these two important physiological processes remains a difficult problem due to their different frequency scales.

The main theoretical result of the present paper allows us to reach some compelling conclusions about the nature of these two physiological processes. In fact, the heartbeats have been analyzed with the method of the stripes [37,42], leading to the conclusion that the IPL index $\mu$ in the case of healthy individuals is close to the condition generating ideal $1/f$ noise, $\mu = 2$. In a more recent paper, Bohara et al. [54], using the method of the stripes, analyzed the EEGs of healthy subjects in the same awake conditions as the human subjects of Reference [35] and confirmed that they have a power law index $\mu$ very close to the ideal condition $\mu = 2$. They also established a bridge between waves and crucial events, leading to the important conjecture that for the brain-heart correlation the tuning of frequencies is not as important as the tuning of temporal complexity, namely the brain and heart dynamics sharing the same IPL index $\mu$.

On the basis of the present results, we conclude that the frequencies of the brain waves may affect the brain physiological process with SFBM contributions that are killed by the DEA analysis resting on the adoption of the method of stripes, and that the form of $1/f$ noise generated by the brain in the awake condition is due to AFBM, thereby supporting the conjecture that the brain-heart correlation is a consequence of complexity matching [55], mainly resting on tuning temporal complexity rather than frequencies.

It is important to stress that the theoretical perspective of this paper affords strong support to our conviction that crucial events play a fundamental role for cognition, without ruling out coherence. The authors of the recent work of Reference [

56] found that meditation has the remarkable role of enhancing coherence. We believe that SFBM is closely related to coherence. On the other hand, the earlier work of Reference [

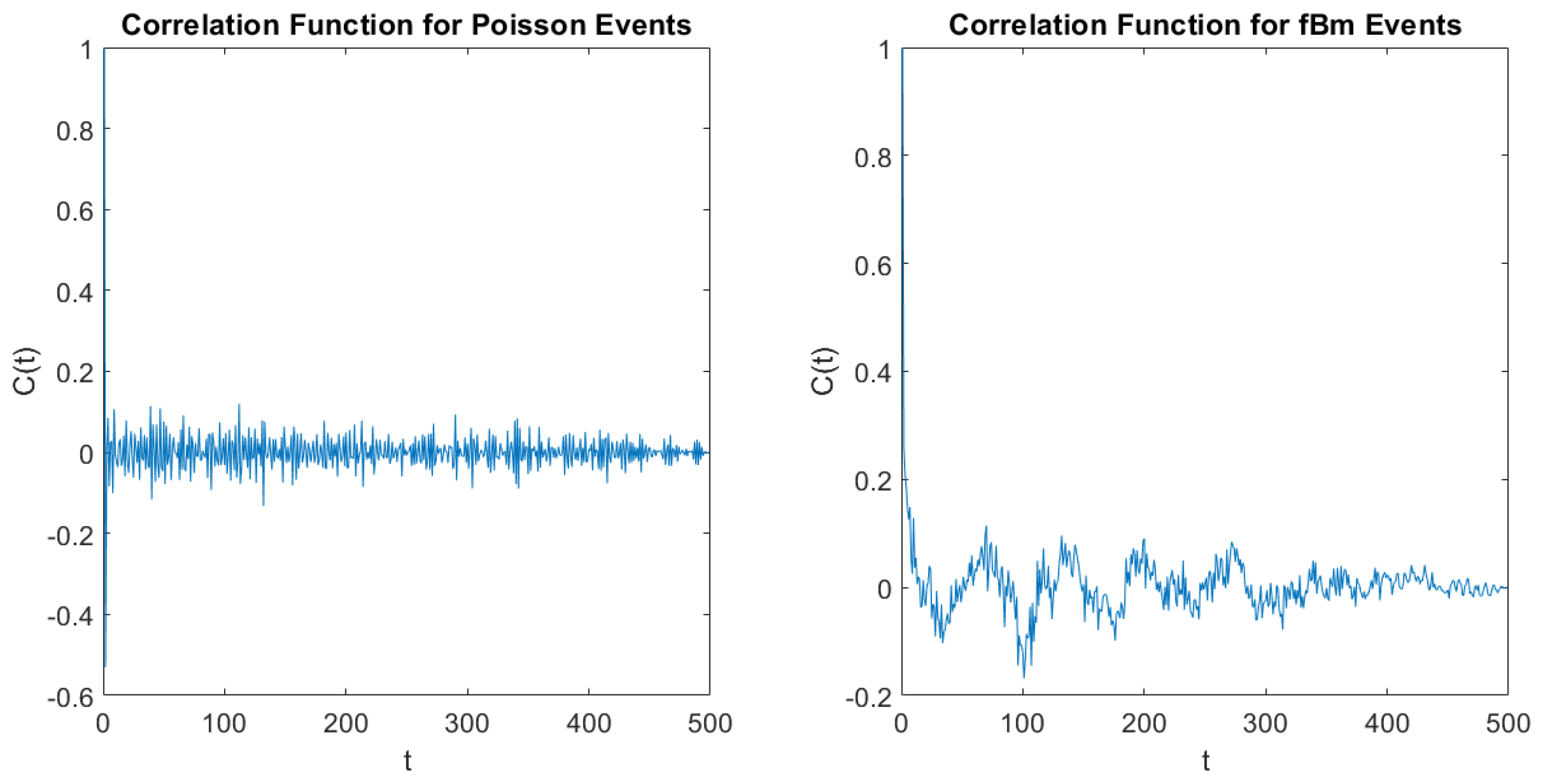

37], where the method of stripes has been used for the first time, suggests that crucial events are imbedded in a cloud of Poisson events, ruling out coherence-induced SFBM. This paper shows that the crucial events may be imbedded in a cloud of SFBM fluctuations and that also in this case, thanks to the method of stripes, the action of crucial events can be revealed. The right panel of

Figure 4 illustrates SFBM effects that the DEA with stripes does not perceive.