Mathematics and the Brain: A Category Theoretical Approach to Go Beyond the Neural Correlates of Consciousness

Abstract

1. Introduction

“There is no certainty in sciences where mathematics cannot be applied” (Leonardo da Vinci)

2. Category and Consciousness

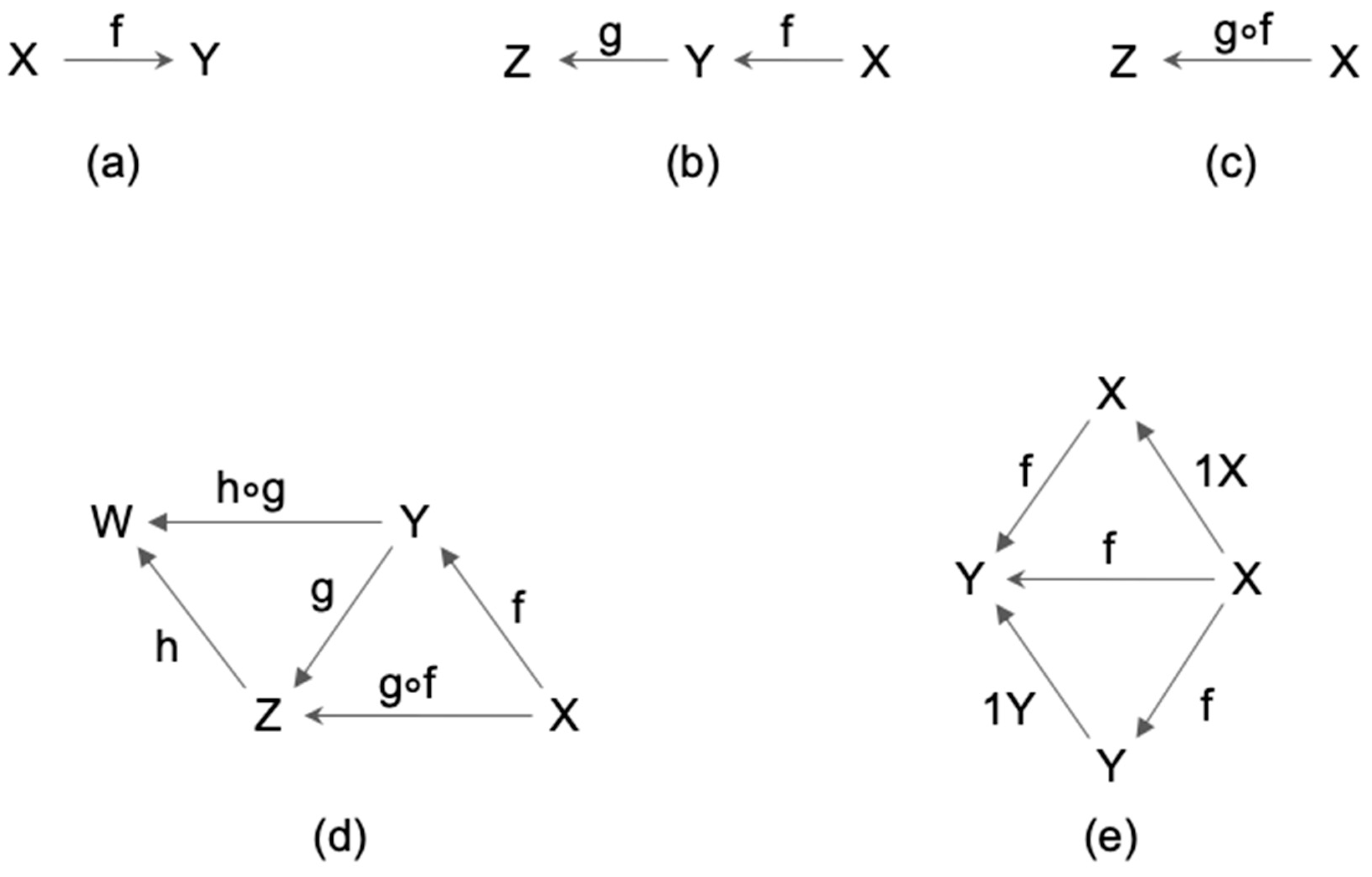

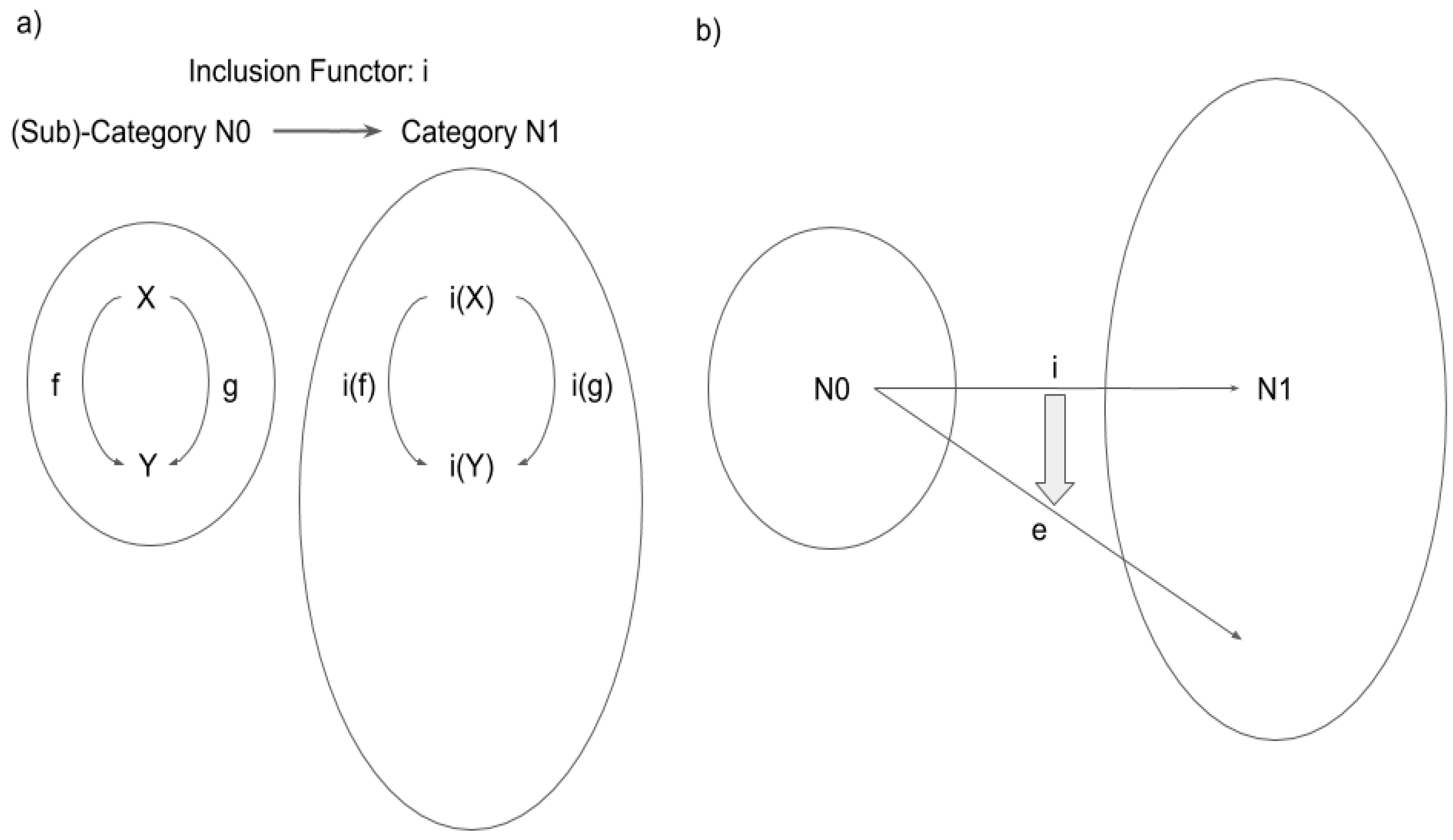

2.1. Definition of Category

2.2. Category and Consciousness

“A neural correlate of a phenomenal family S is a neural system N such that the state of N directly correlates with the subject’s phenomenal property in S.”

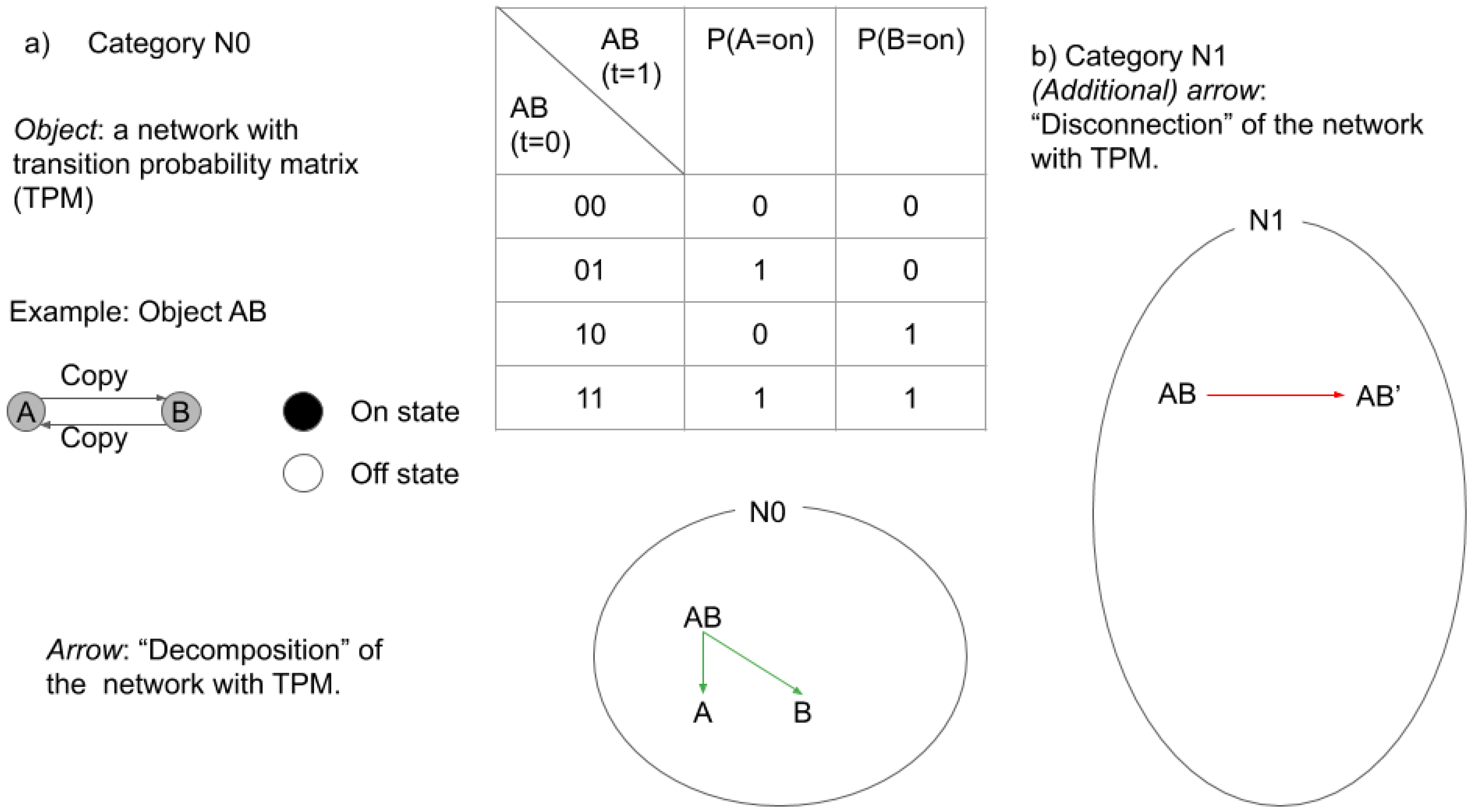

2.3. Categories in IIT and TTC

2.3.1. Categories in IIT

2.3.2. Categories in TTC

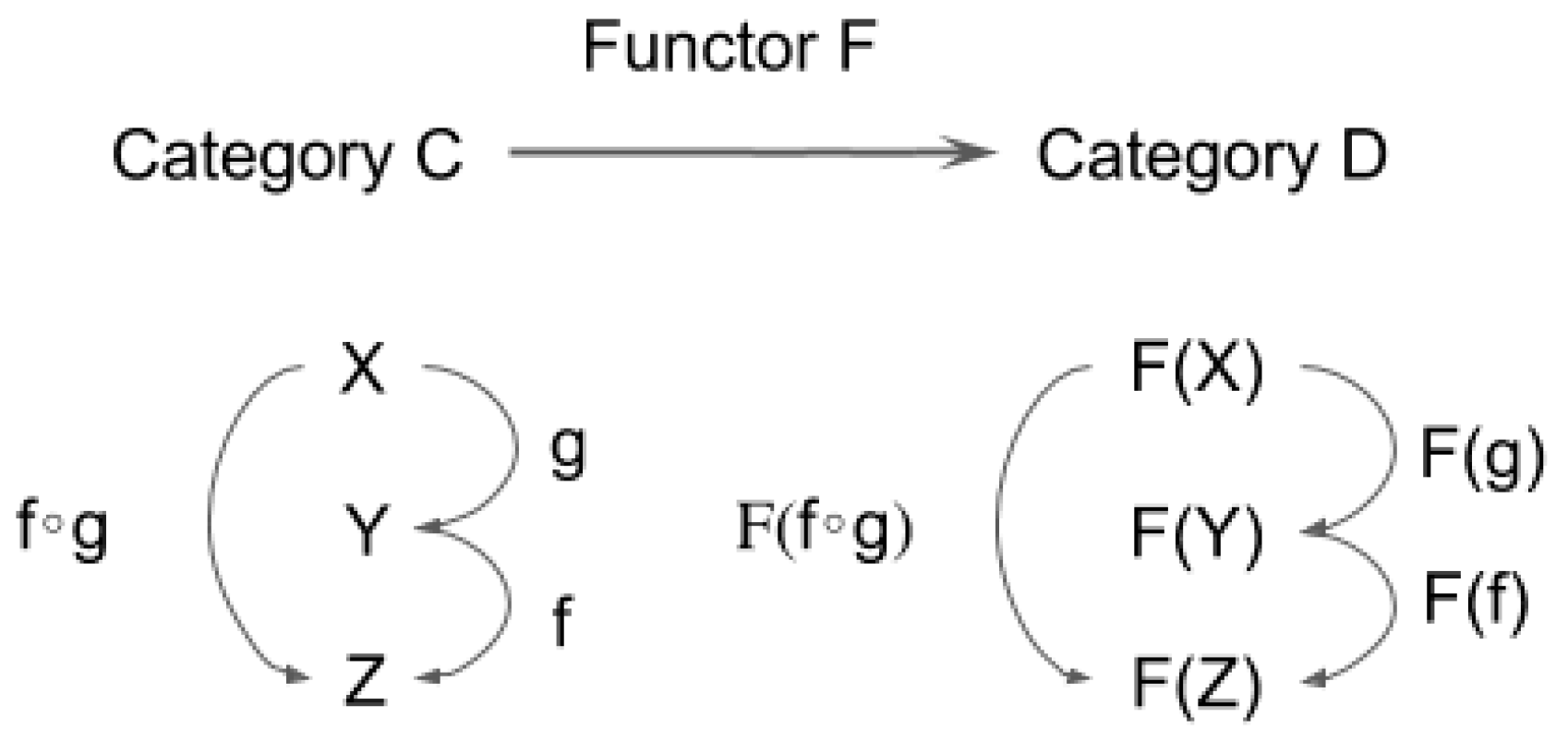

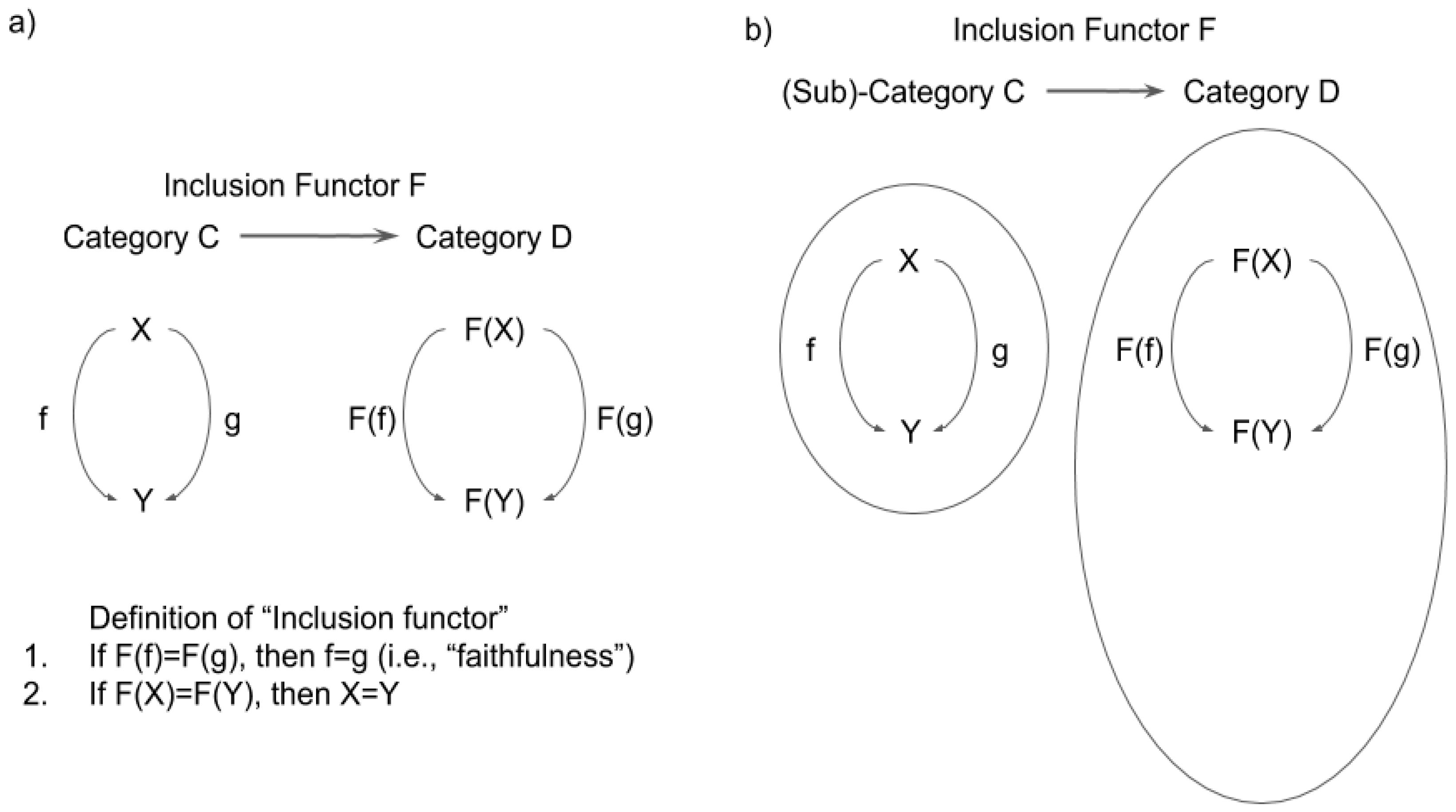

3. Functor, Natural Transformation, and Consciousness

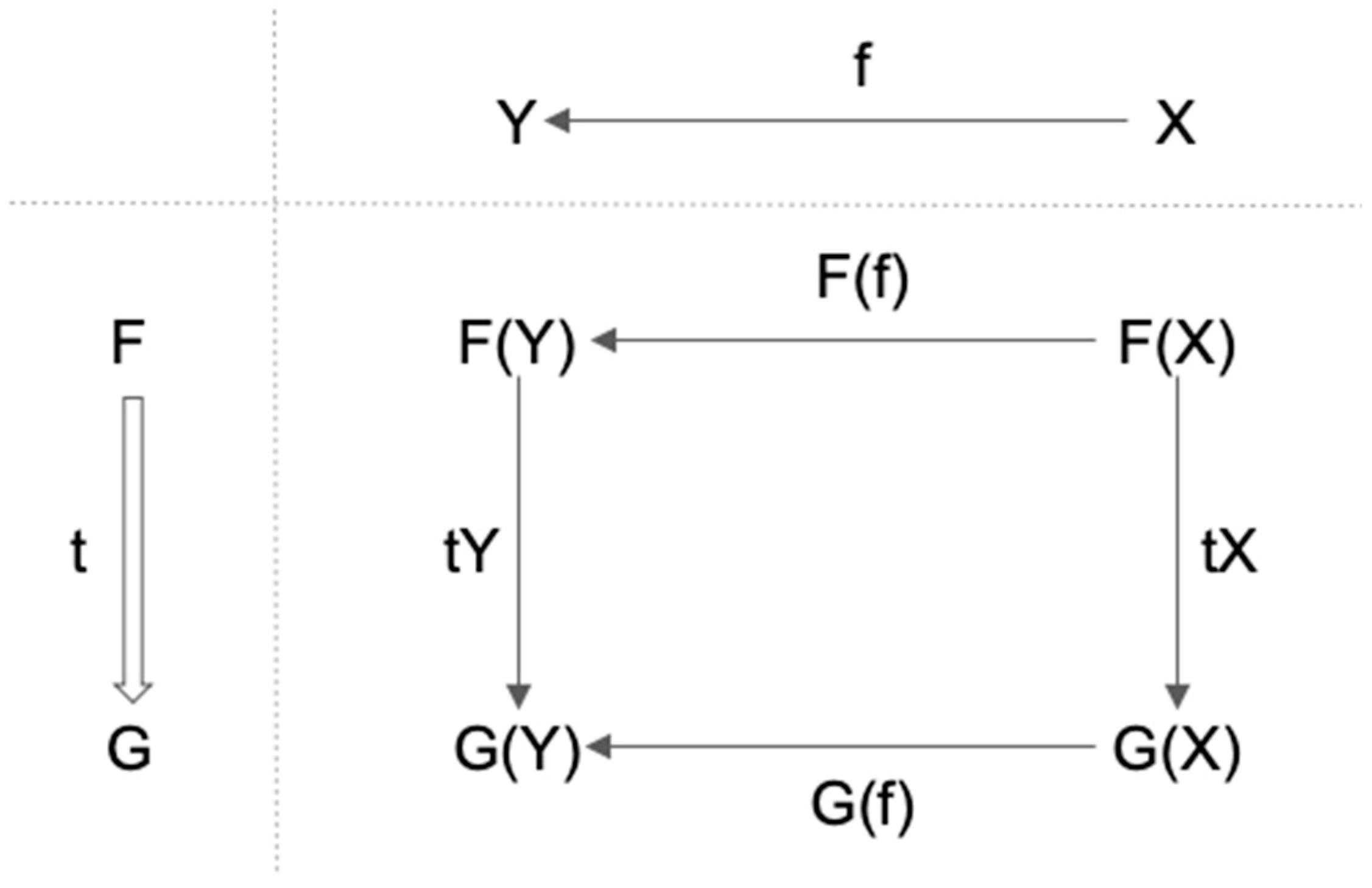

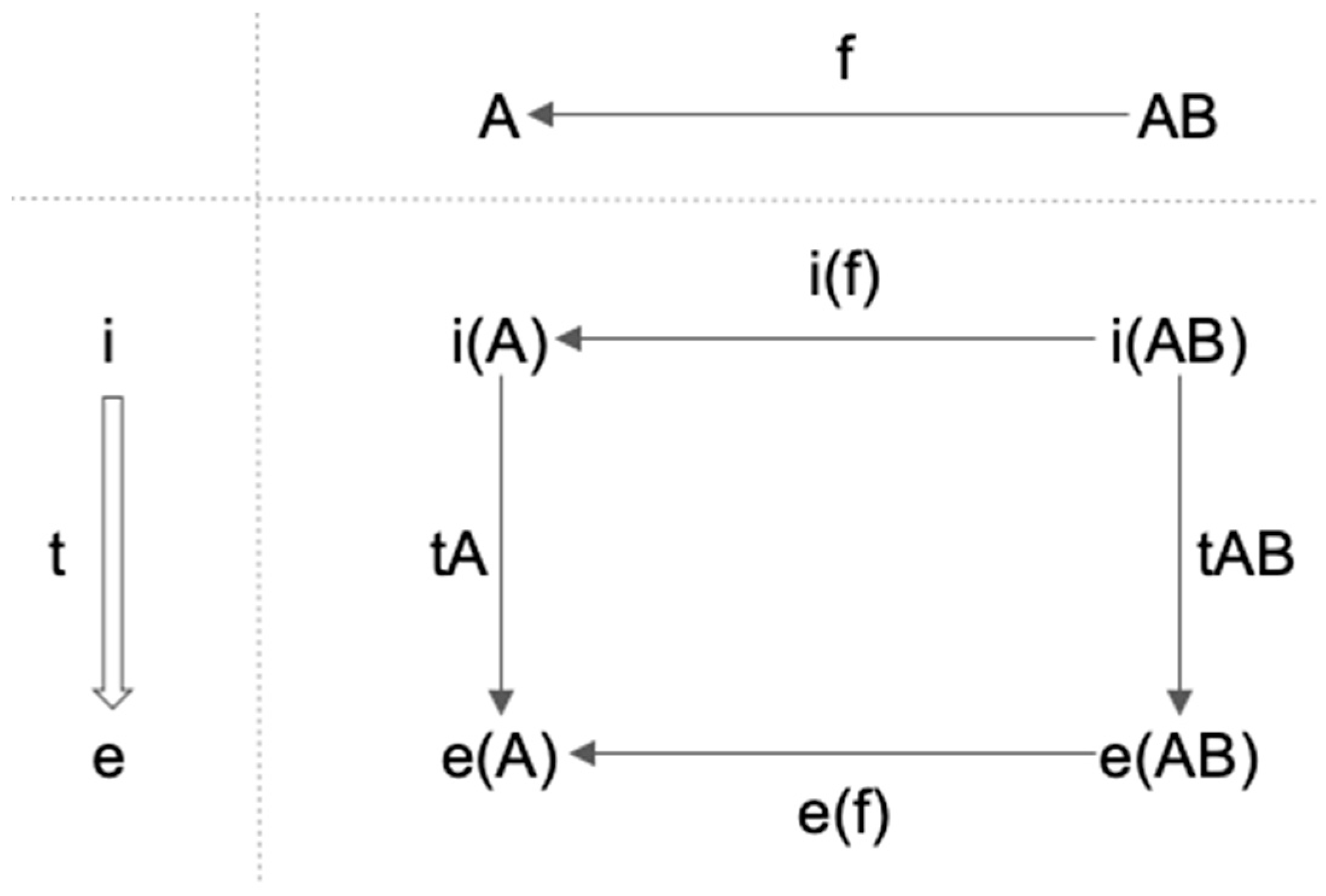

3.1. Definition of Functor and Natural Transformation

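A functor F from a category C to a category D maps each object X in C to an object F(X) in D and satisfies the following conditions:

1. It maps f: X→Y in C to F(f): F(X)→F(Y) in D;
2. F(f ∘ g) = F(f) ∘ F(g) for any (composable) pair of f and g in C;
3. For each X in C, $F(1_X) = 1_{F(X)}$.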
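The functor conditions can be checked concretely on a small example. The following is a minimal sketch, assuming Python functions stand in for arrows and the familiar "list of X" construction stands in for F; the names `fmap`, `compose`, `double`, and `show` are illustrative choices and do not come from the article. The checks run over a few sample inputs only, so they illustrate the laws rather than prove them.

```python
from typing import Callable, List, TypeVar

A = TypeVar("A")
B = TypeVar("B")


def compose(f, g):
    """Return the composite f ∘ g, i.e. x -> f(g(x))."""
    return lambda x: f(g(x))


def identity(x):
    """Identity arrow 1_X."""
    return x


def fmap(f: Callable[[A], B]) -> Callable[[List[A]], List[B]]:
    """Arrow part of the (assumed) list functor: lift f: X -> Y to F(f): list[X] -> list[Y]."""
    return lambda xs: [f(x) for x in xs]


def double(n: int) -> int:
    return 2 * n


def show(n: int) -> str:
    return str(n)


samples = [[], [1], [1, 2, 3]]

# Condition 2: F(f ∘ g) = F(f) ∘ F(g), compared pointwise on the samples.
composition_ok = all(
    fmap(compose(show, double))(xs) == compose(fmap(show), fmap(double))(xs)
    for xs in samples
)

# Condition 3: F(1_X) = 1_{F(X)}, compared pointwise on the samples.
identity_ok = all(fmap(identity)(xs) == identity(xs) for xs in samples)

print(composition_ok, identity_ok)
```

Condition 1 is reflected in the type of `fmap`: an arrow from X to Y is sent to an arrow from lists of X to lists of Y.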

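A natural transformation t from a functor F to a functor G, both from C to D, satisfies the following conditions:

1. t maps each object X in C to a corresponding arrow $t_X\colon F(X) \to G(X)$ in D;
2. For any f: X→Y in C, $t_Y \circ F(f) = G(f) \circ t_X$.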
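The naturality condition can also be illustrated with a small executable sketch. It assumes, purely for illustration, that F is the "list of X" construction, G is the "X or None" construction, and t takes the first element of a list if there is one; the names `fmap_list`, `fmap_optional`, and `safe_head` are hypothetical and not taken from the article. The sketch further assumes the lists themselves never contain `None`, and it tests only a handful of inputs.

```python
from typing import Callable, List, Optional, TypeVar

X = TypeVar("X")
Y = TypeVar("Y")


def fmap_list(f: Callable[[X], Y]) -> Callable[[List[X]], List[Y]]:
    """Arrow part of F: apply f to every element of a list."""
    return lambda xs: [f(x) for x in xs]


def fmap_optional(f: Callable[[X], Y]) -> Callable[[Optional[X]], Optional[Y]]:
    """Arrow part of G: apply f to the value unless it is None."""
    return lambda v: None if v is None else f(v)


def safe_head(xs: List[X]) -> Optional[X]:
    """Component t_X: F(X) -> G(X); first element, or None for an empty list."""
    return xs[0] if xs else None


def naturality_holds(f: Callable[[X], Y], xs: List[X]) -> bool:
    """Compare both paths around the naturality square on one input."""
    left = safe_head(fmap_list(f)(xs))        # t_Y ∘ F(f)
    right = fmap_optional(f)(safe_head(xs))   # G(f) ∘ t_X
    return left == right


samples = [[], [7], [3, 1, 2]]
naturality_ok = all(naturality_holds(str, xs) for xs in samples)
print(naturality_ok)
```

Both paths give the same result because taking the first element does not depend on what the elements are, which is the intuition behind a transformation being "natural".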

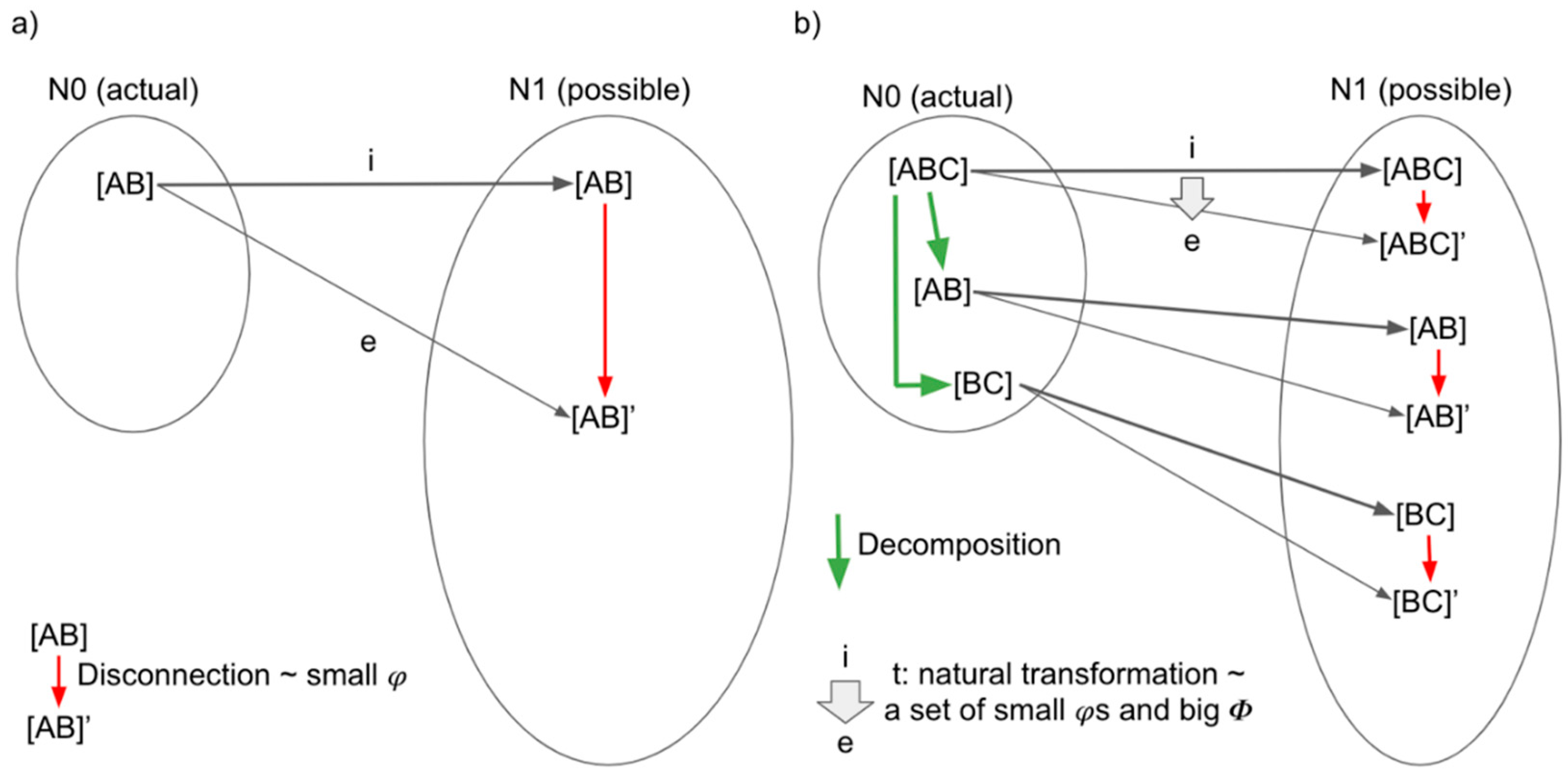

3.2. Functor, Natural Transformation, and Consciousness

3.3. Functors and Natural Transformations in IIT and TTC

3.3.1. Functors and Natural Transformations in IIT

3.3.2. Functors and Natural Transformations in TTC

4. Conclusions

Author Contributions

Funding

Conflicts of Interest

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Northoff, G.; Tsuchiya, N.; Saigo, H. Mathematics and the Brain: A Category Theoretical Approach to Go Beyond the Neural Correlates of Consciousness. Entropy 2019, 21, 1234. https://doi.org/10.3390/e21121234