Embedding Learning with Triple Trustiness on Noisy Knowledge Graph

Abstract

"I am convinced that the crux of the problem of learning is recognizing relationships and being able to use them." (Christopher Strachey, in a letter to Alan Turing, 1954)

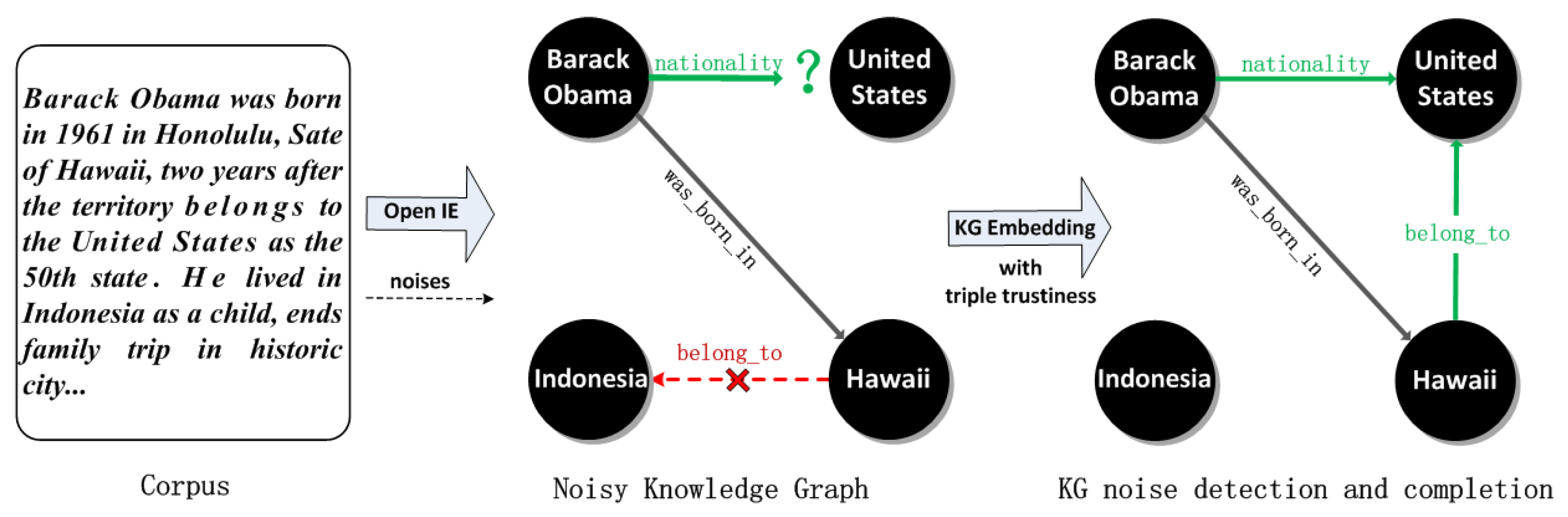

1. Introduction

- We propose TransT, a novel translating embedding model that learns with triple trustiness on noisy knowledge graphs by drawing on two kinds of external information: entity types and entity descriptions.

- Within TransT, we propose two sub-models for calculating triple trustiness: one is estimated on newly generated entity type triples, and the other is measured with synthetic entity description triples.

- We present a cross-entropy-based approach for training the model. Experimental results on three noisy datasets, FB15K-N1, FB15K-N2 and FB15K-N3, demonstrate the effectiveness of the proposed model.
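To make the ingredients named in these contributions concrete, the following is a minimal sketch, not the authors' TransT formulation. It shows the translation-based energy used by the TransE family of models (a triple fits well when head + relation lands near tail) and one hypothetical way a per-triple trustiness weight could scale a cross entropy term. The function names, the `trust` weight, and the `bias` offset are all assumptions introduced for illustration.

```python
import math


def translation_energy(h, r, t):
    """L2 distance ||h + r - t||; a lower value means the triple fits better."""
    return math.sqrt(sum((hi + ri - ti) ** 2 for hi, ri, ti in zip(h, r, t)))


def weighted_cross_entropy(energy, label, trust=1.0, bias=1.0):
    """Binary cross entropy on p = sigmoid(bias - energy), scaled by `trust`.

    `trust` (in [0, 1]) is a hypothetical per-triple trustiness weight and
    `bias` a hypothetical offset; neither is taken from the paper.
    """
    p = 1.0 / (1.0 + math.exp(-(bias - energy)))
    eps = 1e-12  # guards against log(0)
    return -trust * (label * math.log(p + eps) + (1 - label) * math.log(1 - p + eps))


# Toy 2-dimensional embeddings (illustrative values only).
h = [0.5, 0.25]
r = [0.25, -0.5]
t_good = [0.75, -0.25]  # exactly h + r, so the energy is zero
t_bad = [-1.0, 1.0]     # far from h + r

e_good = translation_energy(h, r, t_good)
e_bad = translation_energy(h, r, t_bad)
print(e_good, e_bad)
print(weighted_cross_entropy(e_bad, label=1, trust=1.0))
print(weighted_cross_entropy(e_bad, label=1, trust=0.5))
```

In this sketch a triple with low trustiness contributes proportionally less to the loss, which is one plausible way to keep suspected noise from dominating training.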

2. Related Work

2.1. KG Noise Detection

2.2. Knowledge Graph Embedding

2.3. Knowledge Graph Refinement

3. Methodology

3.1. Translating Embedding Model

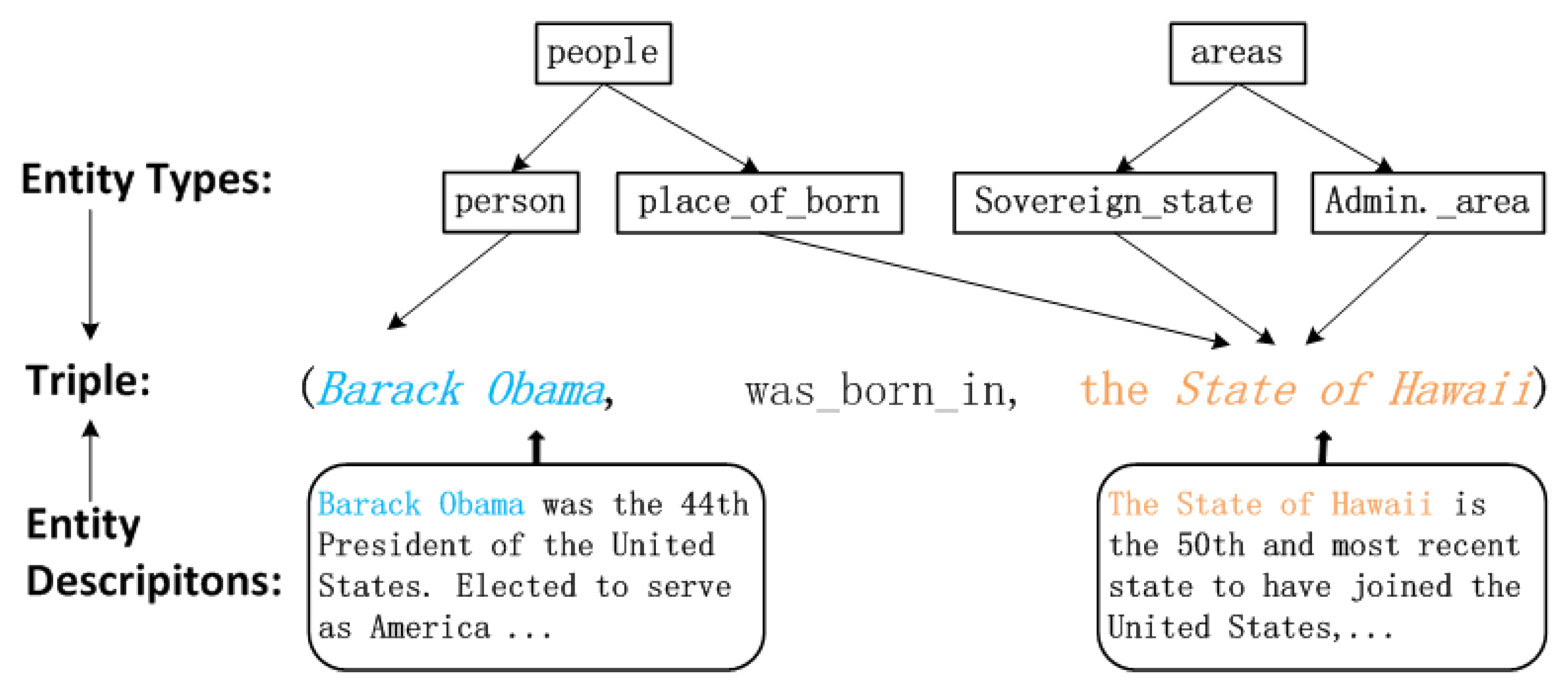

3.2. Translating Embedding with Triple Trustiness

3.3. Triple Trustiness

3.3.1. Triple Trustiness with Entity Types

3.3.2. Triple Trustiness with Entity Descriptions

3.3.3. Overall Triple Trustiness Model

4. Cross Entropy Loss Function for Optimization

Algorithm 1. Learning TransT using the cross entropy loss function.

5. Experiments

5.1. Datasets

5.2. Experimental Settings and Baselines

5.3. KG Noise Detection

5.4. KG Completion

5.5. Triple Classification

6. Conclusions and Future Work

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Dataset | #Entities | #Rel | #Train | #Valid | #Test |
|---|---|---|---|---|---|
| FB15K | 14,951 | 1345 | 483,142 | 50,000 | 59,071 |

| Dataset | #Ent | #Type | #Train | #Valid | #Test |
|---|---|---|---|---|---|
| FB15kET | 14,951 | 3851 | 136,618 | 16,000 | 16,000 |

| Datasets | FB15K-N1 | FB15K-N2 | FB15K-N3 |
|---|---|---|---|
| #Neg triples | 46,408 | 93,782 | 187,925 |
| #Train | 529,550 | 576,924 | 671,067 |
| #Valid | 50,000 | 50,000 | 50,000 |
| #Test | 59,071 | 59,071 | 59,071 |
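The counts in the table above can be cross-checked with simple arithmetic. The sketch below assumes that the first data row counts the injected noisy (negative) triples and the second row the resulting training-set size, so each training size should equal the 483,142 training triples listed in the first dataset table plus the noise count. The variable and function names are illustrative.

```python
CLEAN_TRAIN = 483_142  # #Train value from the first dataset table

# Assumed reading of the noisy-dataset table: noise count and training size.
NOISE = {"FB15K-N1": 46_408, "FB15K-N2": 93_782, "FB15K-N3": 187_925}
NOISY_TRAIN = {"FB15K-N1": 529_550, "FB15K-N2": 576_924, "FB15K-N3": 671_067}


def noise_ratio(n_noise, n_clean=CLEAN_TRAIN):
    """Noise count relative to the number of clean training triples."""
    return n_noise / n_clean


for name, n_noise in NOISE.items():
    # Each noisy training set should be the clean set plus the injected noise.
    consistent = CLEAN_TRAIN + n_noise == NOISY_TRAIN[name]
    print(f"{name}: consistent={consistent}, noise={100 * noise_ratio(n_noise):.1f}%")
```

Under this reading the three sums match the table exactly, and the noise amounts to about 9.6%, 19.4% and 38.9% of the clean training triples.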

| Dataset | FB15K-N1 | FB15K-N1 | FB15K-N1 | FB15K-N1 | FB15K-N2 | FB15K-N2 | FB15K-N2 | FB15K-N2 | FB15K-N3 | FB15K-N3 | FB15K-N3 | FB15K-N3 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Metrics | Mean Rank | Mean Rank | Hits@10 (%) | Hits@10 (%) | Mean Rank | Mean Rank | Hits@10 (%) | Hits@10 (%) | Mean Rank | Mean Rank | Hits@10 (%) | Hits@10 (%) |
| Setting | Raw | Filter | Raw | Filter | Raw | Filter | Raw | Filter | Raw | Filter | Raw | Filter |
| TransE | 240 | 144 | 44.9 | 59.8 | 250 | 155 | 42.8 | 56.3 | 265 | 171 | 40.2 | 51.8 |
| CKRL (LT) | 237 | 140 | 45.5 | 61.8 | 243 | 146 | 44.3 | 59.3 | 244 | 148 | 42.7 | 56.9 |
| CKRL (LT+PP) | 236 | 139 | 45.3 | 61.6 | 241 | 144 | 44.2 | 59.4 | 245 | 149 | 42.8 | 56.8 |
| CKRL (LT+PP+AP) | 236 | 138 | 45.3 | 61.6 | 240 | 144 | 44.2 | 59.3 | 245 | 150 | 42.8 | 56.6 |
| TransT (TT) | 233 | 137 | 45.8 | 61.2 | 239 | 143 | 44.6 | 58.1 | 249 | 153 | 42.4 | 55.2 |
| TransT (TT+DT) | 232 | 137 | 45.9 | 62.3 | 237 | 141 | 45.0 | 60.1 | 246 | 148 | 43.4 | 57.1 |
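The Mean Rank and Hits@10 columns above follow the usual link prediction protocol, and a small sketch may help in reading them. It assumes that candidates are ranked by ascending energy and that the Filter setting drops other candidates already known to be true before ranking, as is standard for translating embedding models. The helper names and the toy energies are illustrative and do not come from the paper.

```python
def rank_of(target, energies, known_true=frozenset()):
    """1-based rank of `target` among candidates sorted by ascending energy.

    Candidates listed in `known_true` are skipped, which corresponds to the
    Filter setting; leaving it empty gives the Raw setting.
    """
    target_energy = energies[target]
    better = sum(
        1
        for cand, energy in energies.items()
        if cand != target and cand not in known_true and energy < target_energy
    )
    return better + 1


def mean_rank(ranks):
    """Average rank of the correct entity (lower is better)."""
    return sum(ranks) / len(ranks)


def hits_at_k(ranks, k=10):
    """Percentage of test cases whose correct entity ranks within the top k."""
    return 100.0 * sum(1 for r in ranks if r <= k) / len(ranks)


# Toy candidate energies for one test triple (hypothetical values).
energies = {"a": 0.2, "b": 0.5, "c": 0.9, "d": 1.4}
raw_rank = rank_of("c", energies)
filtered_rank = rank_of("c", energies, known_true={"a"})
print(raw_rank, filtered_rank)

ranks = [1, 3, 12, 40]
print(mean_rank(ranks), hits_at_k(ranks, k=10))
```

Because filtering can only remove competitors, a filtered rank is never worse than the raw one, which is consistent with the Filter columns showing lower Mean Rank and higher Hits@10 than the Raw columns.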

| Dataset | FB15K-N1 | FB15K-N2 | FB15K-N3 |
|---|---|---|---|
| TransE | 81.3 | 79.4 | 76.9 |
| CKRL (LT) | 81.8 | 80.2 | 78.3 |
| CKRL (LT+PP) | 81.9 | 80.1 | 78.4 |
| CKRL (LT+PP+AP) | 81.7 | 80.2 | 78.3 |
| TransT (TT) | 82.2 | 80.8 | 79.1 |
| TransT (TT+DT) | 82.4 | 81.1 | 80.1 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Zhao, Y.; Feng, H.; Gallinari, P. Embedding Learning with Triple Trustiness on Noisy Knowledge Graph. Entropy 2019, 21, 1083. https://doi.org/10.3390/e21111083
