1. Introduction

Over the last five years, the data science community has devoted significant attention to stochastic optimisation in Riemannian manifolds. This was impulsed by Bonnabel, who proved the convergence of the Riemannian stochastic gradient method [

1]. Later on [

2], the rate of convergence of this method was studied in detail and under various convexity assumptions on the cost function. More recently, asymptotic efficiency of the averaged Riemannian stochastic gradient method was proved in [

3]. Previously, for the specific problem of computing Riemannian means, several results on the convergence and asymptotic normality of Riemannian stochastic optimisation methods had been obtained [

4,

5]. The framework of stochastic optimisation in Riemannian manifolds is far-reaching, and encompasses applications to principal component analysis, dictionary learning, and tensor decomposition, to give only a few examples [

6,

7,

8].

The present work moves in a different direction, focusing on recursive estimation in Riemannian manifolds. While recursive estimation is a special case of stochastic optimisation, it has its own geometric structure, given by the Fisher information metric. Here, several original results will be introduced, which show how this geometric structure can be exploited, to design Riemannian stochastic optimisation algorithms which compute fast, asymptotically efficient, recursive estimates, of a statistical parameter which belongs to a Riemannian manifold. For the first time in the literature, these results extend, from the Euclidean context to the Riemannian context, the classical results of [

9,

10].

The mathematical problem, considered in the present work, is formulated in

Section 2. This involves a parameterised statistical model

P of probability distributions

, where the statistical parameter

belongs to a Riemannian manifold

. Given independent observations, with distribution

for some

, the aim is to estimate the unknown parameter

. In principle, this is done by minimising a statistical divergence function

, which measures the dissimilarity between

and

. Taking advantage of the observations, there are two approaches to minimising

: stochastic minimisation, which leads to recursive estimation, and empirical minimisation, which leads to classical techniques, such as maximum-likelihood estimation [

11,

12].

The original results, obtained in the present work, are stated in

Section 3. In particular, these are Propositions 2, 4, and 5. Overall, these propositions show that recursive estimation, which requires less computational resources than maximum-likelihood estimation, can still achieve the same optimal performance, characterised by asymptotic efficiency [

13,

14].

To summarise these propositions, consider a sequence of recursive estimates , computed using a Riemannian stochastic optimisation algorithm with decreasing step sizes (n is the number of observations already processed by the algorithm). Informally, under assumptions which guarantee that is an attractive local minimum of , and that the algorithm is neither too noisy, nor too unstable, in the neighborhood of ,

Proposition 2 states that, with an adequate choice of step sizes, the

achieve a fast non-asymptotic rate of convergence to

. Precisely, the expectation of the squared Riemannian distance between

and

is

. This is called a fast rate, because it is the best achievable, for any step sizes which are proportional to

with

[

9,

15]. Here, this rate is obtained without any convexity assumptions, for twice differentiable

. It would still hold for non-differentiable, but strongly convex,

[

2].

Proposition 4 states that the distribution of the

becomes asymptotically normal, centred at

, when

n grows increasingly large, and also characterises the corresponding asymptotic covariance matrix. This proposition is proved using a novel linearisation technique, which also plays a central role in [

3].

Proposition 5 states that, if the Riemannian manifold

is equipped with the Fisher information metric of the statistical model

P, then Riemannian gradient descent with respect to this information metric, when used to minimise

, computes recursive estimates

which are asymptotically efficient, achieving the optimal asymptotic rate of convergence, given by the Cramér-Rao lower bound. This is illustrated, with a numerical application to the recursive estimation of elliptically contoured distributions, in

Section 4.

The end result of Proposition 5 is asymptotic efficiency, achieved using the Fisher information metric. In [

3], an alternative route to asymptotic efficiency is proposed, using the averaged Riemannian stochastic gradient method. This method does not require any prior knowledge of the Fisher information metric, but has an additional computational cost, which comes from computing on-line Riemannian averages.

The proofs of Propositions 2, 4, and 5, are detailed in

Section 6, and

Appendix A and

Appendix B. Necessary background, about the Fisher information metric (in short, this will be called the information metric), is recalled in

Appendix C. Before going on, the reader should note that the summation convention of differential geometry is used throughout the following, when working in local coordinates.

2. Problem Statement

Let be a statistical model, with parameter space and sample space X. To each , the model P associates a probability distribution on X. Here, is a Riemannian manifold with , and X is any measurable space. The Riemannian metric of will be denoted , with its Riemannian distance . In general, the metric is not the information metric of the model P.

Let be a complete probability space, and be i.i.d. random variables on , with values in X. While the distribution of is unknown, it is assumed to belong to the model P. That is, for some , to be called the true parameter.

Consider the following problem: how to obtain fast, asymptotically efficient, recursive estimates

of the true parameter

, based on observations of the random variables

? The present work proposes to solve this problem through a detailed study of the decreasing-step-size algorithm, which computes, similar to [

1]

starting from an initial guess

.

This algorithm has three ingredients. First,

denotes the Riemannian exponential map of the metric

of

[

16]. Second, the step sizes

are strictly positive, decreasing, and verify the usual conditions for stochastic approximation [

10,

17]

Third,

is a continuous vector field on

for each

, which generalises the classical concept of score statistic [

13,

18]. It will become clear, from the results given in

Section 3, that the solution of the above-stated problem depends on the choice of each one of these three ingredients.

A priori knowledge about the model

P is injected into Algorithm (

1a) using a divergence function

(note that

is unknown, though). As defined in [

19], this is a positive function, equal to zero if and only if

, and with positive definite Hessian at

. Since one expects that minimising

will lead to estimating

, it is natural to require that

In other words, that

is an unbiased estimator of minus the Riemannian gradient of

. With

given by (

1c), Algorithm (

1a) is a Riemannian stochastic gradient descent, of the form considered in [

1,

2,

3]. However, as explained in Remark 2, (

1c) may be replaced by the weaker condition (

9), which states that

is a Lyapunov function of Algorithm (

1a), without affecting the results in

Section 3. In this sense, Algorithm (

1a) is more general than Riemannian stochastic gradient descent.

In practice, a suitable choice of

is often the Kullback-Leibler divergence [

20],

where

is absolutely continuous with respect to

with Radon-Nikodym derivative

(the likelihood function). Indeed, if

is chosen to be the Kullback-Leibler divergence, then (

1c) is satisfied by

which, in many practical situations, can be evaluated directly, without any knowledge of

.

Before stating the main results, in the following

Section 3, it may be helpful to recall some general background on recursive estimation [

10]. For simplicity, let

be the Kullback-Leibler divergence (

2a). The problem of estimating the true parameter

is equivalent to the problem of finding a global minimum of

. Of course, this problem cannot be tackled directly, since

cannot be computed without knowledge of

. There exist two routes around this difficulty.

The first route is empirical minimisation, which replaces the expectation in (

2a) with an empirical mean over observed data. Given the first

n observations, instead of minimising

, one minimises the empirical divergence

,

where

L is the likelihood function of (

2a). Now, given the minus sign ahead of the sum in (

3), it is clear that minimising

amounts to maximising the sum of log-likelihoods. Thus, one is lead to the method of maximum-likelihood estimation.

It is well-known that maximum-likelihood estimation under general regularity conditions is asymptotically efficient [

13]. Roughly, this means the maximum-likelihood estimator has the least possible asymptotic variance, equal to the inverse of the Fisher information. On the other hand, as the number

n of observations grows, it can be especially difficult to deal with the empirical divergence

of Equation (

3). In the process of searching for the minimum of

, each evaluation of this function, or of its derivatives, will involve a massive number of operations, ultimately becoming unpractical.

Aiming to avoid this difficulty, the second route recursive estimation is based on observation-driven updates, following the general scheme of algorithm (

1a). In this scheme, each new recursive estimate

is computed using only the new observation

. Therefore, as the number of observations grows very large, the overall computational effort required by recursive estimation remains the same.

The main results in the following section show that recursive estimation can achieve the same asymptotic performance as maximum-likelihood estimation as the number n of observations grows large. As a word of caution, it should be mentioned that recursive estimation does not, in general, fare better than maximum-likelihood estimation for moderate values of the number n of observations, and models with a small number of parameters. However, it is a very desirable substitute to maximum-likelihood estimation for models with a large number of parameters, which typically require a very large number of observations in order to be estimated correctly.

3. Main Results

The motivation of the following Propositions 1 to 5 is to provide general conditions, which guarantee that Algorithm (

1a) computes fast, asymptotically efficient, recursive estimates

of the true parameter

. In the statement of these propositions, it is implicitly assumed that conditions (

1b) and (

1c) are verified. Moreover, the following assumptions are considered.

- (d1)

the divergence function has an isolated stationary point at , and Lipschitz gradient in a neighborhood of this point.

- (d2)

this stationary point is moreover attractive: is twice differentiable at , with positive definite Hessian at this point.

- (u1)

in a neighborhood of , the function is uniformly bounded.

- (u2)

in a neighborhood of , the function is uniformly bounded.

For Assumption (d1), the definition of a Lipschitz vector field on a Riemannian manifold may be found in [

21]. For Assumptions (u1) and (u2),

denotes the Riemannian norm.

Assumptions (u1) and (u2) are so-called moment control assumptions. They imply that the noise in Algorithm (

1a) does not cause the iterates

to diverge, and are also crucial to proving the asymptotic normality of these iterates.

Let

be a neighborhood of

which verifies (d1), (u1), and (u2). Without loss of generality, it is assumed that

is compact and convex (see the definition of convexity in [

16,

22]). Then,

admits a system of normal coordinates

with origin at

. With respect to these coordinates, denote the components of

by

and let

,

When (d2) is verified, denote the components of the Hessian of

at

by

,

Then, the matrix

is positive definite [

23]. Denote by

its smallest eigenvalue.

Propositions 1 to 5 require the condition that the recursive estimates are stable, which means that all the lie in , almost surely. The need for this condition is discussed in Remark 3. Note that, if lies in , then is determined by its normal coordinates .

Proposition 1 (consistency). assume (d1) and (u1) are verified, and the recursive estimates are stable. Then, almost surely.

Proposition 2 (mean-square rate).

assume (d1), (d2) and (u1) are verified, the recursive estimates are stable, and where . Then Proposition 3 (almost-sure rate).

assume the conditions of Proposition 2 are verified. Then, Proposition 4 (asymptotic normality).

assume the conditions of Proposition 2, as well as (u2), are verified. Then, the distribution of the re-scaled coordinates converges to a centred d-variate normal distribution, with covariance matrix Σ given by Lyapunov’s equationwhere with (here, δ denotes Kronecker’s delta). Proposition 5 (asymptotic efficiency).

assume the Riemannian metric of Θ coincides with the information metric of the model P, and let be the Kullback-Leibler divergence (2a). Further, assume (d1), (d2), (u1) and (u2) are verified, the recursive estimates are stable, and where . Then,(i)the rates of convergence (5) and (6) hold true. (ii)if , the distribution of the re-scaled coordinates converges to a centred d-variate normal distribution, with covariance matrix .

(iii)if , and is given by (2b), then is the identity matrix, and the recursive estimates are asymptotically efficient. (iv)the following rates of convergence also hold The following remarks are concerned with the scope of Assumptions (d1), (d2), (u1), and (u2), and with the applicability of Propositions 1 to 5.

Remark 1. (d2), (u1) and (u2) do not depend on the Riemannian metric of Θ. Precisely, if they are verified for one Riemannian metric on Θ, then they are verified for any Riemannian metric on Θ. Moreover, if the function is , then the same is true for (d1). In this case, Propositions 1 to 5 apply for any Riemannian metric on Θ, so that the choice of the metric is a purely practical matter, to be decided according to applications.

Remark 2. the conclusion of Proposition 1 continues to hold, if (1c) is replaced byThen, it is even possible to preserve Propositions 2, 3, and 4, provided (d2) is replaced by the assumption that the mean vector field, , has an attractive stationary point at . This generalisation of Propositions 1 to 4 can be achieved following essentially the same approach as laid out in Section 6. However, in the present work, it will not be carried out in detail. Remark 3. the condition that the recursive estimates are stable is standard in all prior work on stochastic optimisation in manifolds [1,2,3]. In practice, this condition can be enforced through replacing Algorithm (1a) by a so-called projected or truncated algorithm. This is identical to (1a), except that is projected back onto the neighborhood of , whenever it falls outside of this neighborhood [10,17]. On the other hand, if the are not required to be stable, but (d1) and (u1) are replaced by global assumptions, - (d1’)

has compact level sets and globally Lipschitz gradient.

- (u1’)

for some constant C and for all .

then, applying the same arguments as in the proof of Proposition 1, it follows that the converge to the set of stationary points of , almost surely.

Remark 4. from (ii) and (iii) of Proposition 5, it follows that the distribution of converges to a -distribution with d degrees of freedom. This provides a practical means of confirming the asymptotic efficiency of the recursive estimates .

4. Application: Estimation of ECD

Here, the conclusion of Proposition 5 is illustrated, by applying Algorithm (

1a) to the estimation of elliptically contoured distributions (ECD) [

24,

25]. Precisely, in the notation of

Section 2, let

the space of

positive definite matrices, and

. Moreover, let each

have probability density function

where

is fixed, has negative values, and is decreasing, and

denotes the transpose. Then,

is called an ECD with scatter matrix

. To begin, let

be i.i.d. random vectors in

, with distribution

given by (

10), and consider the problem of estimating the true scatter matrix

. The standard approach to this problem is based on maximum-likelihood estimation [

25,

26]. An original approach, based on recursive estimation, is now introduced using Algorithm (

1a).

As in Proposition 5, the parameter space

will be equipped with the information metric of the statistical model

P just described. In [

27], it is proved that this information metric is an affine-invariant metric on

. In other words, it is of the general form [

28]

parameterised by constants

and

, where

denotes the trace and

the squared trace, and where

denotes the tangent space at

to the manifold

. Precisely [

27], for the information metric of the model

P,

where

is a further constant, given by the expectation

with

the identity matrix, and

the derivative of

h. This expression of the information metric can now be used to specify Algorithm (

1a).

First, since the information metric is affine-invariant, it is enough to recall that all affine-invariant metrics on

have the same Riemannian exponential map [

25,

29],

where exp denotes the matrix exponential. Second, as in (ii) of Proposition 5, choose the sequence of step sizes

Third, as in (iii) of Proposition 5, let

be the vector field on

given by (

2b),

where

denotes the gradient with respect to the information metric, and

is the likelihood ratio, equal to

divided by

. Now, replacing (12) into (

1a) defines an original algorithm for recursive estimation of the true scatter matrix

.

To apply this algorithm in practice, one may evaluate

via the following steps. Denote

the gradient of

with respect to the affine-invariant metric of [

29], which corresponds to

and

. By direct calculation from (

10), this is given by

Moreover, introduce the constants

and

. Then,

can be evaluated,

from the orthogonal decomposition of

,

Figure 1 and

Figure 2 below display numerical results from an application to Kotz-type distributions, which correspond to

in (

10) and

in (

11c) [

24,

27]. These figures were generated from

Monte Carlo runs of the algorithm defined by (

1a) and (12), with random initialisation, for the specific values

and

. Essentially the same numerical results could be observed for any

and

.

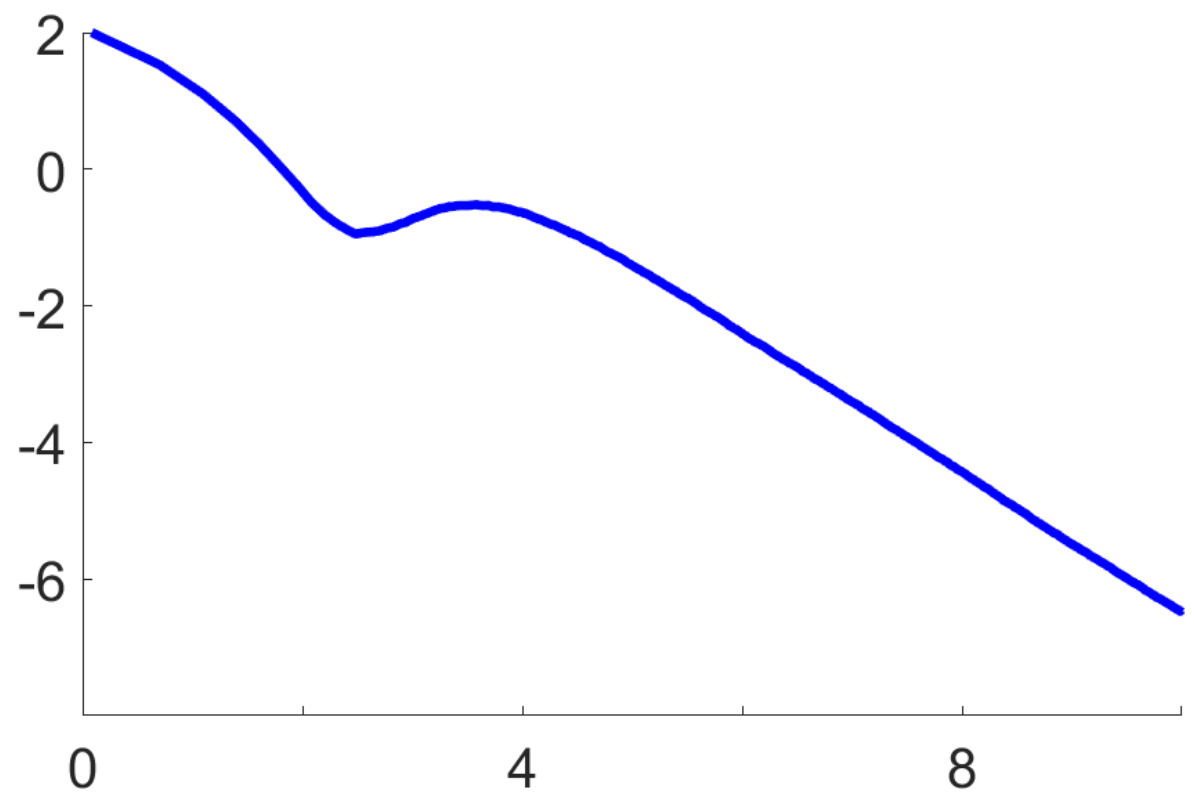

Figure 1 confirms the fast non-asymptotic rate of convergence (

5), stated in (i) of Proposition 5. On a log-log scale, it shows the empirical mean

over Monte Carlo runs, as a function of

n. This decreases with a constant negative slope equal to

, starting roughly at

. Here, the Riemannian distance

induced by the information metric (11) is given by [

28]

where log denotes the symmetric matrix logarithm [

30].

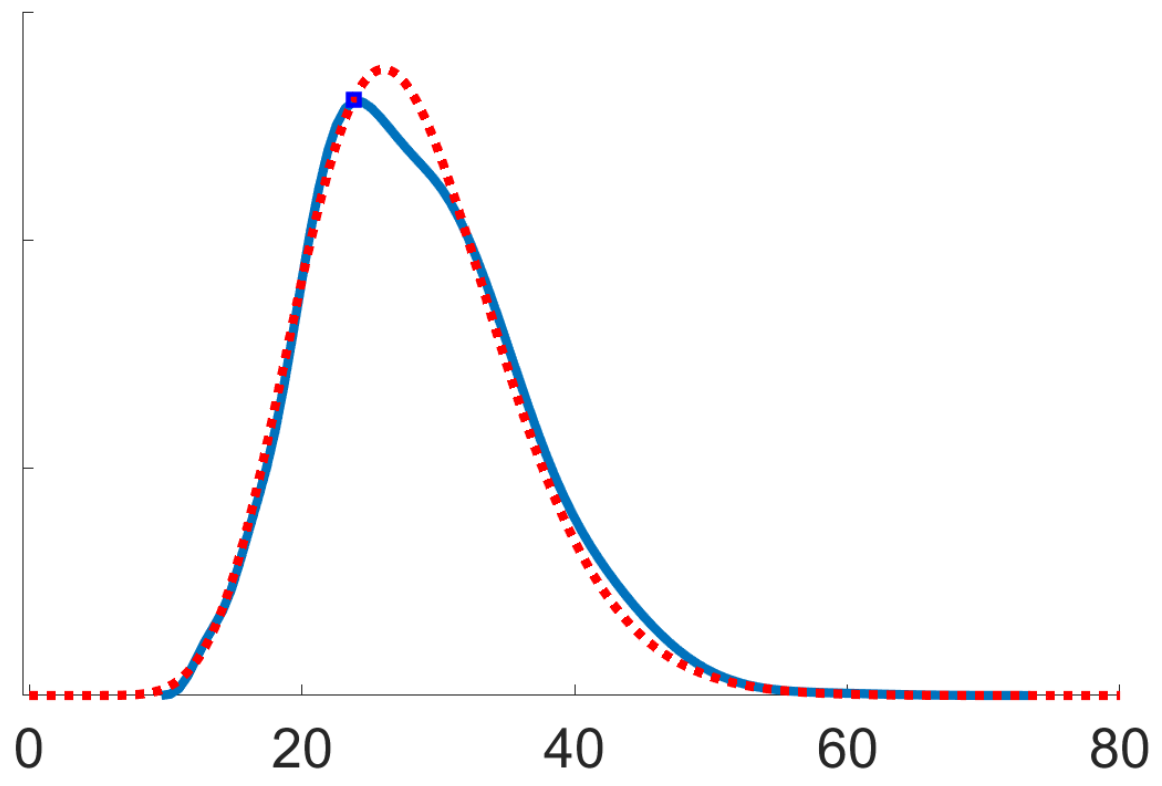

Figure 2 confirms the asymptotic efficiency of the recursive estimates

, stated in (iii) of Proposition 5, using Remark 4. It shows a kernel density estimate of

where

(solid blue curve). This agrees with a

-distribution with 28 degrees of freedom (dotted red curve), where

is indeed the dimension of the parameter space

for

.

5. Conclusions

Recursive estimation is a subject that is over fifty years old [

10], with its foundation in the general theory of stochastic optimisation [

9,

15]. Its applications are very wide-ranging, as they cover areas from control theory to machine learning [

17].

With the increasing role of Riemannian manifolds in statistical inference and machine learning, it was natural to generalise the techniques of stochastic optimisation, from Euclidean space to Riemannian manifolds. Indeed, this started with the work of Bonnabel [

1], which impulsed a series of successive works, such as [

2,

3].

These works have mostly sought to directly adapt classical results, known in Euclidean space, which concern optimal rates of convergence to a unique attractive minimum of a cost function. The present work also belongs to this line of thinking. It shows that when dealing with a recursive estimation problem, where the unknown statistical parameter belongs to a differentiable manifold, equipping this manifold with the information metric of the underlying statistical model, leads to optimal algorithm performance, which is moreover automatic (does not involve parameter tuning).

The results obtained in the present work (as well as in [

2,

3]) suffer from inherent limitations. Indeed, being only focused on convergence to a unique attractive minimum, it does not tackle the following important open problems:

stability of stochastic optimisation algorithms finding verifiable conditions which ensure a stochastic optimisation algorithm remains within a compact set. A more general form of this problem is computing the probability of a stochastic optimisation algorithm exiting a certain neighborhood of a stationary point (whether attractive or not) within a finite number of iterations.

non-asymptotic performance of stochastic optimisation algorithms: this involves computing explicitly the outcome which the algorithm is able to achieve, after a given finite number of iterations. This provides a much stronger theoretical guarantee, to the user, than standard results which compute a rate of convergence.

These problems have attracted much attention and generated well-known results when considered in the Euclidean case [

31,

32], but remain open in the context of Riemannian manifolds. They involve much richer interaction between Riemannian geometry and stochastic optimisation, due to their global nature.