Abstract

As we discuss, a stationary stochastic process is nonergodic when a random persistent topic can be detected in the infinite random text sampled from the process, whereas we call the process strongly nonergodic when an infinite sequence of independent random bits, called probabilistic facts, is needed to describe this topic completely. Replacing probabilistic facts with an algorithmically random sequence of bits, called algorithmic facts, we adapt this property back to ergodic processes. Subsequently, we call a process perigraphic if the number of algorithmic facts which can be inferred from a finite text sampled from the process grows like a power of the text length. We present a simple example of such a process. Moreover, we demonstrate an assertion which we call the theorem about facts and words. This proposition states that the number of probabilistic or algorithmic facts which can be inferred from a text drawn from a process must be roughly smaller than the number of distinct word-like strings detected in this text by means of the Prediction by Partial Matching (PPM) compression algorithm. We also observe that the number of word-like strings for a sample of plays by Shakespeare follows an empirical stepwise power law, in stark contrast to Markov processes. Hence, we suppose that natural language considered as a process is not only non-Markov but also perigraphic.

Keywords: stationary processes; PPM code; mutual information; power laws; algorithmic information theory; natural language

MSC: 94A17; 60G10; 94A29; 68Q30; 68T50

1. Introduction

One of the motivating assumptions of information theory [1,2,3] is that communication in natural language can be reasonably modeled as a discrete stationary stochastic process, namely, an infinite sequence of discrete random variables with a well defined time-invariant probability distribution. The same assumption is made in several practical applications of computational linguistics, such as speech recognition [4] or part-of-speech tagging [5]. Whereas state-of-the-art stochastic models of natural language are far from being satisfactory, we may ask a more theoretically oriented question, namely:

What can be some general mathematical properties of natural language treated as a stochastic process, in view of empirical data?

In this paper, we will investigate the question of whether it is reasonable to assume that natural language communication is a perigraphic process.

To recall, a stationary process is called ergodic if the relative frequencies of all finite substrings in the infinite text generated by the process converge in the long run with probability one to some constants, namely the probabilities of the respective strings. Now, some basic linguistic intuition suggests that natural language does not satisfy this property, cf. ([3], Section 6.4). Namely, we can probably agree that there is a variation of topics of texts in natural language, and these topics can be empirically distinguished by counting relative frequencies of certain substrings called keywords. Hence, we expect that the relative frequencies of keywords in a randomly selected text in natural language are random variables depending on the random text topic. In the limit, for an infinitely long text, we may further suppose that the limits of relative frequencies of keywords remain random, and if this is true then natural language is not ergodic, i.e., it is nonergodic.

In this paper, we will entertain first a stronger hypothesis, namely, that natural language communication is strongly nonergodic. Informally speaking, a stationary process will be called strongly nonergodic if its random persistent topic has to be described using an infinite sequence of probabilistically independent binary random variables, called probabilistic facts. Like nonergodicity, strong nonergodicity is not empirically verifiable if we only have a single infinite sequence of data. However, replacing probabilistic facts with an algorithmically random sequence of bits, called algorithmic facts, we can adapt the property of strong nonergodicity back to ergodic processes. Subsequently, we will call a process perigraphic if the number of algorithmic facts which can be inferred from a finite text sampled from the process grows like a power of the text length. It is a general observation that perigraphic processes have uncomputable distributions.

It is interesting to note that perigraphic processes can be singled out by some statistical properties of the texts they generate. We will exhibit a proposition, which we call the theorem about facts and words. Suppose that we have a finite text drawn from a stationary process. The theorem about facts and words says that the number of independent probabilistic or algorithmic facts that can be reasonably inferred from the text must be roughly smaller than the number of distinct word-like strings detected in the text by a standard data compression algorithm, the Prediction by Partial Matching (PPM) code [6,7]. It is important to stress that in this theorem we do not relate the numbers of all facts and all word-like strings, which would sound trivial, but we compare only the numbers of independent facts and distinct word-like strings.

Having the theorem about facts and words, we can also discuss some empirical data. Since the number of distinct word-like strings for texts in natural language follows an empirical stepwise power law, in stark contrast to Markov processes, we suppose that the number of inferrable random facts for natural language also follows a power law. That is, we suppose that natural language is not only non-Markov but also perigraphic.

Whereas in this paper we fill several important gaps and provide an overarching narrative, the basic ideas presented here are not so new. The starting point was a corollary of Zipf’s law and a hypothesis by Hilberg. Zipf’s law is an empirical observation that in texts in natural language, the frequencies of words obey a power-law decay when we sort the words according to their decreasing frequencies [8,9]. A corollary of this law, called Heaps’ law [10,11,12,13], states that the number of distinct words in a text in natural language grows like a power of the text length. In contrast to these simple empirical observations, Hilberg’s hypothesis is a less known conjecture about natural language that the entropy of a text chunk of an increasing length [14] or the mutual information between two adjacent text chunks [15,16,17,18] also obeys a power-law growth. In Ref. [19], it was heuristically shown that, if Hilberg’s hypothesis for mutual information is satisfied for an arbitrary stationary stochastic process, then texts drawn from this process also satisfy a kind of Heaps’ law if we detect the words using the grammar-based codes [20,21,22,23]. This result is a historical antecedent of the theorem about facts and words.

Another important step was the discovery of some simple strongly nonergodic processes satisfying the power-law growth of mutual information, called Santa Fe processes, which were found by Dębowski in August 2002 but first reported only in [24]. Subsequently, in Ref. [25], a completely formal proof of the theorem about facts and words for strictly minimal grammar-based codes [23,26] was provided. The related theory of natural language was later reviewed in [27,28] and supplemented by a discussion of Santa Fe processes in [29]. A drawback of this theory at that time was that the strictly minimal grammar-based codes used in the statement of the theorem about facts and words are not computable in polynomial time [26]. This precluded an empirical verification of the theory.

To state the relative novelty, in this paper, we announce a new stronger version of the theorem about facts and words for a somewhat more elegant definition of inferrable facts and for the PPM code, which is computable in almost linear time. For the first time, we also present two cases of the theorem: one for strongly nonergodic processes, applying Shannon information theory, and one for general stationary processes, applying algorithmic information theory. Having these results, we can finally supplement them with a rudimentary discussion of some empirical data.

The organization of this paper is as follows. In Section 2, we discuss some properties of ergodic and nonergodic processes. In Section 3, we define strongly nonergodic processes and we present some examples of them. Analogously, in Section 4, we discuss perigraphic processes. In Section 5, we discuss two versions of the theorem about facts and words. In Section 6, we discuss some empirical data and we suppose that natural language may be a perigraphic process. In Section 7, we offer concluding remarks. Moreover, three appendices follow the body of the paper. In Appendix A, we prove the first part of the theorem about facts and words. In Appendix B, we prove the second part of this theorem. In Appendix C, we show that the number of inferrable facts for the Santa Fe processes follows a power law.

2. Ergodic and Nonergodic Processes

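We assume that the reader is familiar with some probability measure theory [30]. For a real-valued random variable $Y$ on a probability space $(\Omega, \mathcal{J}, P)$, we denote its expectation

$$\mathbf{E}\,Y := \int Y \, dP. \qquad (1)$$

Consider now a discrete stochastic process $(X_i)_{i=1}^\infty$, where random variables $X_i$ take values from a set $\mathbb{X}$ of countably many distinct symbols, such as letters with which we write down texts in natural language. We denote blocks of consecutive random variables $X_j^k := (X_j, \ldots, X_k)$ and symbols $x_j^k := (x_j, \ldots, x_k)$. Let us define a binary random variable telling whether some string $x_1^n$ has occurred in sequence $(X_i)_{i=1}^\infty$ on positions from $i$ to $i+n-1$,

$$\Phi_i(x_1^n) := \mathbf{1}\{X_i^{i+n-1} = x_1^n\}, \qquad (2)$$

where

$$\mathbf{1}\{\phi\} := \begin{cases} 1 & \text{if } \phi \text{ is true}, \\ 0 & \text{if } \phi \text{ is false}. \end{cases} \qquad (3)$$

The expectation of this random variable,

$$\mathbf{E}\,\Phi_i(x_1^n) = P(X_i^{i+n-1} = x_1^n), \qquad (4)$$

is the probability of the chosen string according to the considered probability measure $P$, whereas the arithmetic average of consecutive random variables $\frac{1}{m}\sum_{i=1}^{m} \Phi_i(x_1^n)$ is the relative frequency of the same string in a finite sequence of random symbols $X_1^{m+n-1}$.

Process $(X_i)_{i=1}^\infty$ is called stationary (with respect to a probability measure $P$) if expectations $\mathbf{E}\,\Phi_i(x_1^n)$ do not depend on position $i$ for any string $x_1^n$. In this case, we have the following well known theorem, which establishes that the limiting relative frequencies of strings $x_1^n$ in infinite sequence $(X_i)_{i=1}^\infty$ exist almost surely, i.e., with probability 1:

Theorem 1

(ergodic theorem, cf. e.g., [31]). For any discrete stationary process $(X_i)_{i=1}^\infty$, there exist limits

$$\Phi(x_1^n) := \lim_{m\to\infty} \frac{1}{m}\sum_{i=1}^{m} \Phi_i(x_1^n) \text{ almost surely}, \qquad (5)$$

with expectations $\mathbf{E}\,\Phi(x_1^n) = \mathbf{E}\,\Phi_i(x_1^n)$.

In general, limits $\Phi(x_1^n)$ are random variables depending on a particular value of infinite sequence $(X_i)_{i=1}^\infty$. It is quite natural, however, to require that the relative frequencies of strings are almost surely constants, equal to the expectations $\mathbf{E}\,\Phi_i(x_1^n)$. Subsequently, process $(X_i)_{i=1}^\infty$ will be called ergodic (with respect to a probability measure $P$) if limits $\Phi(x_1^n)$ are almost surely constant for any string $x_1^n$. The standard definition of an ergodic process is more abstract but is equivalent to this statement ([31], Lemma 7.15).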
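The convergence of relative frequencies can be illustrated numerically. The following Python sketch is only an illustration under assumptions that do not come from the text: the function names `relative_frequency` and `sample_iid_bits`, the fair-coin IID source, and the seed are hypothetical choices. For such a source the frequency of a fixed string should settle near its probability as the sample grows.

```python
import random


def relative_frequency(word, text):
    """Fraction of positions in `text` at which `word` starts (overlaps allowed)."""
    n = len(word)
    positions = len(text) - n + 1
    if positions <= 0:
        return 0.0
    hits = sum(1 for i in range(positions) if text[i:i + n] == word)
    return hits / positions


def sample_iid_bits(length, rng):
    """Hypothetical source: IID fair coin flips written as a string of '0'/'1'."""
    return "".join(rng.choice("01") for _ in range(length))


rng = random.Random(0)  # fixed seed, an arbitrary choice for reproducibility
text = sample_iid_bits(100_000, rng)

# Relative frequency of the string "01" in growing prefixes of the sample.
# For a fair IID source its probability is 1/4.
freqs = [relative_frequency("01", text[:m]) for m in (100, 10_000, 100_000)]
```

For a nonergodic source the same statistic would converge to a value that depends on the realization, which is what the examples later in this section make precise.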

The following examples of ergodic processes are well known:

- Process $(X_i)_{i=1}^\infty$ is called IID (independent identically distributed) if
$$P(X_1^n = x_1^n) = \pi(x_1) \cdots \pi(x_n). \qquad (6)$$
All IID processes are ergodic.

- Process $(X_i)_{i=1}^\infty$ is called Markov (of order 1) if
$$P(X_1^n = x_1^n) = \pi(x_1)\, p(x_2|x_1) \cdots p(x_n|x_{n-1}). \qquad (7)$$
A Markov process is ergodic in particular if
$$p(x_i|x_{i-1}) > c > 0. \qquad (8)$$
For a sufficient and necessary condition, see ([32], Theorem 7.16).

- Process $(X_i)_{i=1}^\infty$ is called hidden Markov if $X_i = g(S_i)$ for a certain Markov process $(S_i)_{i=1}^\infty$ and a function $g$. A hidden Markov process is ergodic in particular if the underlying Markov process is ergodic.

Whereas IID and Markov processes are basic models in probability theory, hidden Markov processes are of practical importance in computational linguistics [4,5]. Hidden Markov processes as considered there usually satisfy condition (8) and therefore they are ergodic.

Let us call a probability measure $P$ stationary or ergodic, respectively, if the process $(X_i)_{i=1}^\infty$ is stationary or ergodic with respect to the measure $P$. Suppose that we have a stationary measure $P$ that generates some data $(X_i)_{i=1}^\infty$. We can define a new random measure $F$ equal to the relative frequencies of blocks in the data $(X_i)_{i=1}^\infty$. It turns out that the measure $F$ is almost surely ergodic. Formally, we have this proposition.

Theorem 2

(cf. ([33], Theorem 9.10)). Any process $(X_i)_{i=1}^\infty$ with a stationary measure $P$ is almost surely ergodic with respect to the random measure $F$ given by

$$F(X_1^n = x_1^n) := \Phi(x_1^n), \qquad (9)$$

where $\Phi(x_1^n)$ is the limiting relative frequency of string $x_1^n$ from Theorem 1.

Moreover, from the random measure $F$, we can obtain the stationary measure $P$ by integration, $P(X_1^n = x_1^n) = \mathbf{E}\,F(X_1^n = x_1^n)$. The following result asserts that this integral representation of measure $P$ is unique.

Theorem 3

(ergodic decomposition, cf. ([33], Theorem 9.12)). Any stationary probability measure $P$ can be represented as

$$P(X_1^n = x_1^n) = \int F(X_1^n = x_1^n) \, d\nu(F), \qquad (10)$$

where ν is a unique measure on stationary ergodic measures.

In other words, stationary ergodic measures are some building blocks from which we can construct any stationary measure. For a stationary probability measure P, the particular values of the random ergodic measure F are called the ergodic components of measure P.

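Consider, for instance, a Bernoulli($\theta$) process with measure

$$P_\theta(X_1^n = x_1^n) = \theta^{\sum_{i=1}^n x_i} (1-\theta)^{n - \sum_{i=1}^n x_i}, \qquad (11)$$

where $x_i \in \{0, 1\}$ and $\theta \in [0, 1]$. This measure will be contrasted with the measure of a mixture Bernoulli process with parameter $\theta$ uniformly distributed on interval $[0, 1]$,

$$P(X_1^n = x_1^n) = \int_0^1 P_\theta(X_1^n = x_1^n) \, d\theta = \frac{1}{n+1} \binom{n}{\sum_{i=1}^n x_i}^{-1}. \qquad (12)$$

Measure (11) is a measure of an IID process and is therefore ergodic, whereas measure (12) is a mixture of ergodic measures and hence it is nonergodic.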
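The closed form of the uniform mixture of Bernoulli measures can be checked with exact arithmetic. The sketch below is illustrative only; the function names are hypothetical, and the closed form $1/((n+1)\binom{n}{s})$ for a string with $s$ ones is the standard Beta integral, compared here against a term-by-term integration of the polynomial $\theta^s(1-\theta)^{n-s}$.

```python
from fractions import Fraction
from itertools import product
from math import comb


def mixture_probability(bits):
    """Closed form for the uniform mixture: 1 / ((n + 1) * C(n, s))."""
    n, s = len(bits), sum(bits)
    return Fraction(1, (n + 1) * comb(n, s))


def integrated_probability(bits):
    """Exact integral of theta^s (1 - theta)^(n - s) over [0, 1].

    Expands (1 - theta)^(n - s) by the binomial theorem and integrates
    each monomial theta^(s + j) to 1 / (s + j + 1).
    """
    n, s = len(bits), sum(bits)
    return sum(
        Fraction((-1) ** j * comb(n - s, j), s + j + 1)
        for j in range(n - s + 1)
    )


def next_one_probability(bits):
    """Conditional probability that the next symbol is 1 under the mixture."""
    return mixture_probability(list(bits) + [1]) / mixture_probability(bits)


# The two computations agree on every binary string of length 5.
agree = all(
    mixture_probability(b) == integrated_probability(b)
    for b in product((0, 1), repeat=5)
)
```

Under the mixture, `next_one_probability` depends on how many ones have been seen so far, so the symbols are not independent even though each ergodic component is IID.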

3. Strongly Nonergodic Processes

According to our definition, a process is ergodic when the relative frequencies of any strings in a random sample in the long run converge to some constants. Consider now the following thought experiment. Suppose that we select a random book from a library. In [34], it was observed that there is hardly any book that contains both the word lemma and the word love, namely, there are some keywords that are specific to particular topics of texts. We can pursue this idea one little step farther. Counting the relative frequencies of keywords, such as lemma for a text on mathematics and love for a romance, we can effectively recognize the topic of the book. Simply put, the relative frequencies of some keywords will be higher for books concerning some topics, whereas they will be lower for books concerning other topics. Hence, in our thought experiment, we expect that the relative frequencies of keywords are some random variables with values depending on the particular topic of the randomly selected book. Since keywords are just some particular strings, we may conclude that the stochastic process that models natural language should be nonergodic.

The above thought experiment provides another perspective on nonergodic processes. According to the following theorem, a process is nonergodic when we can effectively distinguish in the limit at least two random topics in it. In the statement, function $f$ assumes values 0 or 1 when we can identify the topic, whereas it takes value 2 when we are not certain which topic a given text is about.

Theorem 4

(cf. [24]). A stationary discrete process $(X_i)_{i=1}^\infty$ is nonergodic if and only if there exists a function $f: \mathbb{X}^* \to \{0, 1, 2\}$ and a binary random variable $Z$ such that $0 < P(Z = 0) < 1$ and

$$\lim_{n\to\infty} P(f(X_i^{i+n-1}) = Z) = 1 \qquad (13)$$

for any position $i \in \mathbb{N}$.

A binary variable Z satisfying condition (13) will be called a probabilistic fact. A probabilistic fact tells which of two topics the infinite text generated by the stationary process is about. It is a kind of a random switch which is preset before we start scanning the infinite text; compare a similar wording in [35]. To keep the proofs simple, here we only give a new elementary proof of the “⇒” statement of Theorem 4. The proof of the “⇐” part applies some measure theory and follows the idea of Theorem 9 from [24] for strongly nonergodic processes, which we will discuss in the next paragraph.

Proof.

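(only ⇒) Suppose that process $(X_i)_{i=1}^\infty$ is nonergodic. Then, there exists a string $x_1^k$ such that $\Phi \neq \mathbf{E}\,\Phi$ for $\Phi := \Phi(x_1^k)$ with some positive probability, where $\Phi(x_1^k)$ is the limiting relative frequency from Theorem 1. Hence, there exists a real number $y$ such that $P(\Phi = y) = 0$ and

$$P(\Phi > y) = 1 - P(\Phi < y) \in (0, 1). \qquad (14)$$

Define $Z := \mathbf{1}\{\Phi > y\}$ and $f(X_i^{i+n-1}) := Z_n := \mathbf{1}\{\Phi_n > y\}$, where

$$\Phi_n := \frac{1}{n-k+1} \sum_{j=i}^{i+n-k} \mathbf{1}\{X_j^{j+k-1} = x_1^k\}. \qquad (15)$$

Since $\lim_{n\to\infty} \Phi_n = \Phi$ almost surely and $\Phi$ satisfies (14), convergence $\lim_{n\to\infty} Z_n = Z$ also holds almost surely. Applying the Lebesgue dominated convergence theorem, we obtain

$$\lim_{n\to\infty} P(f(X_i^{i+n-1}) = Z) = \lim_{n\to\infty} \mathbf{E}\left[ Z_n Z + (1 - Z_n)(1 - Z) \right] = \mathbf{E}\left[ Z^2 + (1 - Z)^2 \right] = 1. \qquad (16)$$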

☐

As for books in natural language, we may have an intuition that the pool of available book topics is extremely large and contains many more topics than just two. For this reason, we may need not a single probabilistic fact $Z$ but rather a sequence of probabilistic facts $Z_1, Z_2, \ldots$ to specify the topic of a random book completely. Formally, stationary processes requiring an infinite sequence of independent uniformly distributed probabilistic facts to describe the topic of an infinitely long text will be called strongly nonergodic.

Definition 1

(cf. [24,25]). A stationary discrete process $(X_i)_{i=1}^\infty$ is called strongly nonergodic if there exist a function $g: \mathbb{N} \times \mathbb{X}^* \to \{0, 1, 2\}$ and a binary IID process $(Z_k)_{k=1}^\infty$ such that $P(Z_k = 0) = P(Z_k = 1) = 1/2$ and

$$\lim_{n\to\infty} P(g(k; X_i^{i+n-1}) = Z_k) = 1 \qquad (17)$$

for any position $i \in \mathbb{N}$ and any index $k \in \mathbb{N}$.

As we have stated above, for a strongly nonergodic process, there is an infinite number of independent probabilistic facts $(Z_k)_{k=1}^\infty$ with a uniform distribution on the set $\{0, 1\}$. Formally, these probabilistic facts can be assembled into a single real random variable $T = \sum_{k=1}^\infty 2^{-k} Z_k$, which is uniformly distributed on the unit interval $[0, 1]$. The value of variable $T$ identifies the topic of a random infinite text generated by the stationary process. Thus, for a strongly nonergodic process, we have a continuum of available topics which can be incrementally identified from any sufficiently long text. Put formally, according to Theorem 9 from [24], a stationary process is strongly nonergodic if and only if its shift-invariant $\sigma$-field contains a nonatomic sub-$\sigma$-field. We note in passing that in [24] strongly nonergodic processes were called uncountable description processes.

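In view of Theorem 9 from [24], the mixture Bernoulli process (12) is an example of a strongly nonergodic process. In this case, the parameter $\theta$ plays the role of the random variable $T$. Showing that condition (17) is satisfied for this process in an elementary fashion is a tedious exercise. Hence, let us present now a simpler guiding example of a strongly nonergodic process, which we introduced in [24,25] and called the Santa Fe process. Let $(Z_k)_{k=1}^\infty$ be a binary IID process with $P(Z_k = 0) = P(Z_k = 1) = 1/2$. Let $(K_i)_{i=1}^\infty$ be an IID process with $K_i$ assuming values in natural numbers with a power-law distribution

$$P(K_i = k) \propto \frac{1}{k^{\alpha}}, \quad \alpha > 1. \qquad (18)$$

The Santa Fe process with exponent $\alpha$ is a sequence $(X_i)_{i=1}^\infty$, where

$$X_i = (K_i, Z_{K_i}) \qquad (19)$$

are pairs of a random number $K_i$ and the corresponding probabilistic fact $Z_{K_i}$. The Santa Fe process is strongly nonergodic since condition (17) holds for example for

$$g(k; x_1^n) = \begin{cases} 0 & \text{if } x_i = (k, 0) \text{ for some } i \text{ and } x_i \neq (k, 1) \text{ for all } i, \\ 1 & \text{if } x_i = (k, 1) \text{ for some } i \text{ and } x_i \neq (k, 0) \text{ for all } i, \\ 2 & \text{else}. \end{cases} \qquad (20)$$

Simply speaking, function $g(k; \cdot)$ returns 0 or 1 when an unambiguous value of the second constituent can be read off from pairs $x_i = (k, \cdot)$ and returns 2 when there is some ambiguity. Condition (17) is satisfied since

$$P(g(k; X_i^{i+n-1}) = Z_k) = P(K_j = k \text{ for some } i \le j \le i+n-1) = 1 - (1 - P(K_i = k))^n \xrightarrow[n\to\infty]{} 1. \qquad (21)$$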

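A salient property of the Santa Fe process is the power-law growth of the expected number of probabilistic facts which can be inferred from a finite text drawn from the process. Consider a strongly nonergodic process $(X_i)_{i=1}^\infty$ with function $g$ and facts $(Z_k)_{k=1}^\infty$ as in Definition 1. The set of initial independent probabilistic facts inferrable from a finite text $X_1^n$ will be defined as

$$U(X_1^n) := \{ l \in \mathbb{N} : g(k; X_1^n) = Z_k \text{ for all } k \le l \}. \qquad (22)$$

In other words, we have $U(X_1^n) = \{1, 2, \ldots, l\}$, where $l$ is the largest number such that $g(k; X_1^n) = Z_k$ for all $k \le l$. To capture the power-law growth of an arbitrary function $S: \mathbb{N} \to \mathbb{R}$, we will use the Hilberg exponent, defined as

$$\operatorname{hilb}_{n\to\infty} S(n) := \limsup_{n\to\infty} \frac{\log^+ S(2^n)}{\log 2^n}, \qquad (23)$$

where $\log^+ x := \log(x+1)$ for $x \ge 0$ and $\log^+ x := 0$ for $x < 0$, cf. [36]. In contrast to Ref. [36], for technical reasons, we define the Hilberg exponent only for an exponentially sparse subsequence of terms $S(2^n)$ rather than all terms $S(n)$. Moreover, in [36], the Hilberg exponent was considered only for mutual information $S(n) = I(X_1^n; X_{n+1}^{2n})$, defined later in Equation (51). We observe that for the exact power-law growth $S(n) = n^{\beta}$ with $\beta \ge 0$ we have $\operatorname{hilb}_{n\to\infty} S(n) = \beta$. More generally, the Hilberg exponent captures an asymptotic power-law growth of the sequence. As shown in Appendix C, for the Santa Fe process with exponent $\alpha$, we have the asymptotic power-law growth

$$\operatorname{hilb}_{n\to\infty} \mathbf{E} \operatorname{card} U(X_1^n) = 1/\alpha. \qquad (24)$$

This property distinguishes the Santa Fe process from the mixture Bernoulli process (12), for which the respective Hilberg exponent is zero, as we discuss in Section 6.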
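The Santa Fe construction and the count of inferrable facts can be simulated on a small scale. The Python sketch below is an illustration under several assumptions that are not part of the text: the power-law distribution is truncated at a hypothetical `k_max` so that it can be sampled with the standard library, facts are drawn lazily at the first occurrence of each index, and `hilberg_estimate` evaluates the ratio inside the Hilberg exponent at a single finite $n$ rather than taking the upper limit. All function names are hypothetical.

```python
import math
import random
from itertools import accumulate


def hilberg_estimate(S, n):
    """Finite-n value of log^+ S(2^n) / log 2^n, with log^+ x = log(x + 1) for x >= 0."""
    value = S(2 ** n)
    log_plus = math.log(value + 1) if value >= 0 else 0.0
    return log_plus / (n * math.log(2))


def sample_santa_fe(length, alpha, rng, k_max=100_000):
    """Sample pairs (K_i, Z_{K_i}); ASSUMPTION: the power law is truncated at k_max."""
    cum = list(accumulate(k ** -alpha for k in range(1, k_max + 1)))
    ks = rng.choices(range(1, k_max + 1), cum_weights=cum, k=length)
    facts = {}  # Z_k, drawn as a fair bit when index k first occurs
    text = []
    for k in ks:
        if k not in facts:
            facts[k] = rng.getrandbits(1)
        text.append((k, facts[k]))
    return text, facts


def g(k, text):
    """Return the bit attached to index k if it is read off unambiguously, else 2."""
    values = {z for (j, z) in text if j == k}
    return values.pop() if len(values) == 1 else 2


def card_U(text, facts):
    """Largest l such that g(k; text) equals the k-th fact for all k <= l."""
    l = 0
    while g(l + 1, text) == facts.get(l + 1):
        l += 1
    return l


rng = random.Random(0)  # arbitrary seed for reproducibility
text, facts = sample_santa_fe(4096, 2.0, rng)
counts = [card_U(text[:2 ** j], facts) for j in range(13)]
```

A single simulated path only gives a rough impression of the growth of `counts`; the statement about the exponent $1/\alpha$ concerns the expectation and the limit, which this sketch does not attempt to estimate reliably.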

4. Perigraphic Processes

Is it possible to demonstrate by a statistical investigation of texts that natural language is really strongly nonergodic and satisfies a condition similar to (24)? In the thought experiment described in the beginning of the previous section, we have ignored the issue of constructing an infinitely long text. In reality, every book with a well defined topic is finite. If we want to obtain an unbounded collection of texts, we need to assemble a corpus of different books and it depends on our assembling criteria whether the books in the corpus will concern some persistent random topic. Moreover, if we already have a single infinite sequence of books generated by some stationary source and we estimate probabilities as relative frequencies of blocks of symbols in this sequence, then, by Theorem 2, we will obtain an ergodic probability measure almost surely.

In this situation, we may ask whether the idea of the power-law growth of the number of inferrable probabilistic facts can be translated somehow to the case of ergodic measures. A straightforward method is to replace the sequence of independent uniformly distributed probabilistic facts $(Z_k)_{k=1}^\infty$, being random variables, with an algorithmically random sequence of particular binary digits $(z_k)_{k=1}^\infty$. Such digits $z_k$ will be called algorithmic facts, in contrast to variables $Z_k$ being called probabilistic facts.

Let us recall some basic concepts. For a discrete random variable $X$, let $P(X)$ denote the random variable that takes value $P(X = x)$ when $X$ takes value $x$. We will introduce the pointwise entropy

$$H(X) := -\log P(X), \qquad (25)$$

where log stands for the natural logarithm. The prefix-free Kolmogorov complexity $K(u)$ of a string $u$ is the length of the shortest self-delimiting program written in binary digits that prints out string $u$ ([37], Chapter 3). $K(u)$ is the founding concept of the algorithmic information theory and is an analogue of the pointwise entropy. To keep our notation analogous to (25), we will write the algorithmic entropy

$$H_a(u) := K(u) \log 2. \qquad (26)$$

If the probability measure is computable, then the algorithmic entropy is close to the pointwise entropy. On the one hand, by the Shannon–Fano coding for a computable probability measure, the algorithmic entropy is less than the pointwise entropy plus a constant which depends on the probability measure and the dimensionality of the distribution ([37], Corollary 4.3.1). Formally,

$$H_a(X_1^n) \le H(X_1^n) + 2 \log n + C_P, \qquad (27)$$

where $C_P \ge 0$ is a certain constant depending on the probability measure $P$. On the other hand, since the prefix-free Kolmogorov complexity is also the length of a prefix-free code, we have

$$\mathbf{E}\,H_a(X_1^n) \ge \mathbf{E}\,H(X_1^n). \qquad (28)$$

It is also true that $H_a(X_1^n) \ge H(X_1^n)$ for sufficiently large $n$ almost surely ([38], Theorem 3.1). Thus, we have shown that the algorithmic entropy is in some sense close to the pointwise entropy, for a computable probability measure.
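Kolmogorov complexity is not computable, so the closeness of the two entropies cannot be checked directly. As a loose illustration only, the sketch below swaps in the output length of a general-purpose compressor (`zlib`) as a computable stand-in: such a length roughly upper-bounds the complexity up to additive overhead, and it is not the algorithmic entropy itself. The function names, the seed, and the choice of compressor are assumptions made for this example.

```python
import math
import random
import zlib


def fair_bits_entropy_nats(n_bits):
    """Pointwise entropy, in nats, of any string of n_bits fair IID bits."""
    return n_bits * math.log(2)


def compressed_length_nats(data):
    """Length of the zlib encoding of `data`, converted from bits to nats.

    ASSUMPTION: zlib is used as a crude computable proxy; it only bounds
    the algorithmic entropy from above, up to additive overhead.
    """
    return 8 * len(zlib.compress(data, 9)) * math.log(2)


rng = random.Random(0)  # arbitrary seed for reproducibility
random_bytes = bytes(rng.getrandbits(8) for _ in range(4096))  # typical fair-coin data
regular_bytes = b"01" * 2048  # highly regular data of the same length

random_ratio = compressed_length_nats(random_bytes) / fair_bits_entropy_nats(8 * 4096)
regular_ratio = compressed_length_nats(regular_bytes) / fair_bits_entropy_nats(8 * 4096)
```

Typical pseudo-random data does not compress below its pointwise entropy under the fair-coin measure, whereas the regular string does; the regular string is simply very atypical for that measure.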

Next, we will discuss the difference between probabilistic and algorithmic randomness. Whereas for an IID sequence of random variables $(Z_k)_{k=1}^\infty$ with $P(Z_k = 0) = P(Z_k = 1) = 1/2$ we have

$$H(Z_1^k) = k \log 2, \qquad (29)$$

similarly an infinite sequence of binary digits $(z_k)_{k=1}^\infty$ is called algorithmically random (in the Martin-Löf sense) when there exists a constant $c \ge 0$ such that

$$H_a(z_1^k) \ge k \log 2 - c \qquad (30)$$

for all $k \in \mathbb{N}$ ([37], Theorem 3.6.1). The probability that the aforementioned sequence of random variables $(Z_k)_{k=1}^\infty$ is algorithmically random equals 1, for example by ([38], Theorem 3.1), so algorithmically random sequences are typical realizations of sequence $(Z_k)_{k=1}^\infty$.

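Let $(X_i)_{i=1}^\infty$ be a stationary process. We observe that generalizing condition (17) in an algorithmic fashion does not make much sense. Namely, condition

$$\lim_{n\to\infty} P(g(k; X_i^{i+n-1}) = z_k) = 1 \qquad (31)$$

is trivially satisfied for any stationary process for a certain computable function $g: \mathbb{N} \times \mathbb{X}^* \to \{0, 1, 2\}$ and an algorithmically random sequence $(z_k)_{k=1}^\infty$. This is so since there exists a computable function $\omega: \mathbb{N} \times \mathbb{N} \to \{0, 1\}$ such that $\lim_{n\to\infty} \omega(k; n) = \Omega_k$, where $(\Omega_k)_{k=1}^\infty$ is the binary expansion of the halting probability $\Omega = \sum_{k=1}^\infty 2^{-k} \Omega_k$, which is a lower semi-computable algorithmically random sequence ([37], Section 3.6.2). It then suffices to take $g(k; x_1^n) := \omega(k; n)$, which ignores the content of the text altogether.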

In spite of this negative result, the power-law growth of the number of inferrable algorithmic facts corresponds to some nontrivial property. For a computable function $g: \mathbb{N} \times \mathbb{X}^* \to \{0, 1, 2\}$ and an algorithmically random sequence of binary digits $(z_k)_{k=1}^\infty$, which we will call algorithmic facts, the set of initial algorithmic facts inferrable from a finite text $X_1^n$ will be defined as

$$U_a(X_1^n) := \{ l \in \mathbb{N} : g(k; X_1^n) = z_k \text{ for all } k \le l \}. \qquad (32)$$

Subsequently, we will call a process perigraphic if the expected number of algorithmic facts which can be inferred from a finite text sampled from the process grows asymptotically like a power of the text length.

Definition 2.

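A stationary discrete process $(X_i)_{i=1}^\infty$ is called perigraphic if

$$\operatorname{hilb}_{n\to\infty} \mathbf{E} \operatorname{card} U_a(X_1^n) > 0 \qquad (33)$$

for some computable function $g: \mathbb{N} \times \mathbb{X}^* \to \{0, 1, 2\}$ and an algorithmically random sequence of binary digits $(z_k)_{k=1}^\infty$, where $U_a(X_1^n)$ is the set of initial algorithmic facts inferrable from text $X_1^n$.

Perigraphic processes can be ergodic. The proof of Theorem A10 from Appendix C can be easily adapted to show that an example of a perigraphic process is the Santa Fe process with sequence $(Z_k)_{k=1}^\infty$ replaced by an algorithmically random sequence of binary digits $(z_k)_{k=1}^\infty$. To be very concrete, an example of a perigraphic process is the process $(X_i)_{i=1}^\infty$ with

$$X_i = (K_i, \Omega_{K_i}), \qquad (34)$$

where $(\Omega_k)_{k=1}^\infty$ is the binary expansion of the halting probability $\Omega$ and $(K_i)_{i=1}^\infty$ is an IID process with $K_i$ assuming values in natural numbers with the power-law distribution (18). This process is not only perigraphic but also IID and hence ergodic.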

We can also easily show the following proposition.

Theorem 5.

Any perigraphic process has an uncomputable measure P.

Proof.

Assume that a perigraphic process has a computable measure P. By inequalities (A25) and (A26) from Appendix A, we have

Since we have obtained a contradiction with the assumption that the process is perigraphic, measure P cannot be computable. ☐

5. Theorem about Facts and Words

In this section, we will present a result about stationary processes, which we call the theorem about facts and words. This proposition states that the expected number of independent probabilistic or algorithmic facts inferrable from the text drawn from a stationary process must be roughly less than the expected number of distinct word-like strings detectable in the text by a simple procedure involving the PPM compression algorithm. This result states, in particular, that an asymptotic power law growth of the number of inferrable probabilistic or algorithmic facts as a function of the text length produces a statistically measurable effect, namely, an asymptotic power law growth of the number of word-like strings.

To state the theorem about facts and words formally, we need first to discuss the PPM code. The general idea of the PPM code comes from Refs. [6,7], developed independently. This compression scheme was called the PPM code in [7], which stands for “Prediction by Partial Matching” and prevails in the literature, whereas it was called measure R in [6,39]. Whereas Ref. [7] focused on practical applications to data compression and earned most of the fame, in Refs. [6,39], one can find a few results that matter for theoretical considerations. Let us denote strings of symbols $x_j^k := (x_i)_{j \le i \le k}$, adopting an important convention that $x_j^k$ is the empty string for $k < j$. In the following, we consider strings over a finite alphabet, say, $\mathbb{X} = \{1, 2, \ldots, D\}$. We define the frequency of a substring $w_1^k$ in a string $x_1^n$ as

$$N(w_1^k \mid x_1^n) := \sum_{i=0}^{n-k} \mathbf{1}\{x_{i+1}^{i+k} = w_1^k\}.$$

Now, we will define the PPM probabilities in a way that is closer to the conventions of paper [6,39] than to the conventions of Ref. [7]. In particular, in Equation (38), we consider frequencies of strings and in different strings, and , respectively, in the numerator and in the denominator to guarantee the proper normalization according to our definition of .

Definition 3

(cf. [6,7]). For and , we put

Quantity is called the conditional PPM probability of order k of symbol given string . Next, we put

Quantity is called the PPM probability of order k of string . Finally, we put

Quantity is called the (total) PPM probability of the string .

Quantity is an incremental approximation of the unknown true probability of the string , assuming that the string has been generated by a Markov process of order k. In contrast, quantity is a mixture of such Markov approximations for all finite orders. In general, the PPM probabilities are probability distributions over strings of a fixed length. That is:

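- $\mathrm{PPM}_k(x_i|x_1^{i-1}) > 0$ and $\sum_{x_i \in \mathbb{X}} \mathrm{PPM}_k(x_i|x_1^{i-1}) = 1$,

- $\mathrm{PPM}_k(x_1^n) > 0$ and $\sum_{x_1^n \in \mathbb{X}^n} \mathrm{PPM}_k(x_1^n) = 1$,

- $\mathrm{PPM}(x_1^n) > 0$ and $\sum_{x_1^n \in \mathbb{X}^n} \mathrm{PPM}(x_1^n) = 1$.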
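The normalization of an order-$k$ adaptive predictor can be checked by brute force on short strings. The following Python sketch is not a reproduction of Definition 3: it assumes a generic order-$k$ context model with add-one (Laplace) smoothing and nonnegative $k$, in which a symbol gets probability $1/D$ while fewer than $k$ symbols have been seen. The names `conditional_probability` and `string_probability` are hypothetical.

```python
from fractions import Fraction
from itertools import product


def conditional_probability(symbol, history, k, alphabet):
    """Add-one estimate of `symbol` given the last k symbols of `history`.

    ASSUMPTION: k >= 0, and the first k symbols are predicted uniformly.
    """
    D = len(alphabet)
    if len(history) < k:
        return Fraction(1, D)
    context = history[len(history) - k:]
    # Symbols that followed earlier occurrences of the context inside `history`.
    followers = [
        history[j + k]
        for j in range(len(history) - k)
        if history[j:j + k] == context
    ]
    return Fraction(followers.count(symbol) + 1, len(followers) + D)


def string_probability(text, k, alphabet):
    """Product of the conditional probabilities along `text` (a tuple of symbols)."""
    prob = Fraction(1)
    for i, symbol in enumerate(text):
        prob *= conditional_probability(symbol, text[:i], k, alphabet)
    return prob


alphabet = (0, 1)
# Total mass assigned to all strings of length 4, for orders k = 0, 1, 2.
totals = [
    sum(string_probability(t, k, alphabet) for t in product(alphabet, repeat=4))
    for k in (0, 1, 2)
]
```

Exact rational arithmetic is used so that the sums equal 1 exactly rather than up to rounding. For $k = 0$ the sketch reduces to the Laplace rule applied to symbol counts.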

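In the following, we define an analogue of the pointwise entropy

$$H_{\mathrm{PPM}}(x_1^n) := -\log \mathrm{PPM}(x_1^n). \qquad (41)$$

Quantity $H_{\mathrm{PPM}}(x_1^n)$ will be called the length of the PPM code for the string $x_1^n$. By nonnegativity of the Kullback–Leibler divergence, we have for any random block $X_1^n$ that

$$\mathbf{E}\,H_{\mathrm{PPM}}(X_1^n) \ge \mathbf{E}\,H(X_1^n). \qquad (42)$$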

The length of the PPM code or the PPM probability, respectively, have two notable properties. First, the PPM probability is a universal probability, i.e., in the limit, the length of the PPM code consistently estimates the entropy rate of a stationary source. Second, the PPM probability can be effectively computed, i.e., the summation in definition (40) can be rewritten as a finite sum. Let us state these two results formally.

Theorem 6

(cf. [39]). The PPM probability is universal in expectation, i.e., we have

$$\lim_{n\to\infty} \frac{1}{n} \mathbf{E}\,H_{\mathrm{PPM}}(X_1^n) = \lim_{n\to\infty} \frac{1}{n} \mathbf{E}\,H(X_1^n) \qquad (43)$$

for any stationary process $(X_i)_{i=1}^\infty$.

For stationary ergodic processes, the above claim follows by an iterated application of the ergodic theorem as shown, e.g., in Theorem 1.1 from [39] for the measure R, which is a slight modification of the PPM probability. To generalize the claim to nonergodic processes, one can use the ergodic decomposition theorem, but the exact proof requires too much theoretical overhead to be presented within the framework of this paper.

Theorem 7.

The PPM probability can be effectively computed, i.e., we have

where

is the maximal repetition of string .

Proof.

We have for . Hence, for and, in view of this, we obtain the claim. ☐

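Maximal repetition as a function of a string was studied, e.g., in [40,41]. Since the PPM probability is a computable probability distribution, by (27), for a certain constant $C_{\mathrm{PPM}}$, we have

$$H_a(X_1^n) \le H_{\mathrm{PPM}}(X_1^n) + 2 \log n + C_{\mathrm{PPM}}. \qquad (46)$$

Let us denote the length of the PPM code of order $k$,

$$H_{\mathrm{PPM}_k}(x_1^n) := -\log \mathrm{PPM}_k(x_1^n). \qquad (47)$$

As we can easily see, the code length $H_{\mathrm{PPM}}(x_1^n)$ is approximately equal to the minimal code length $\min_k H_{\mathrm{PPM}_k}(x_1^n)$, where the minimization goes over all orders $k$. Thus, it is meaningful to consider the following definition of the PPM order of an arbitrary string.

Definition 4.

The PPM order $G_{\mathrm{PPM}}(x_1^n)$ is the smallest $G$ such that

$$H_{\mathrm{PPM}_G}(x_1^n) \le H_{\mathrm{PPM}_k}(x_1^n) \text{ for all orders } k. \qquad (48)$$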

Theorem 8.

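We have $G_{\mathrm{PPM}}(x_1^n) \le L(x_1^n)$, where $L(x_1^n)$ is the maximal repetition of string $x_1^n$.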

Proof.

It follows by for . ☐

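Let us divert for a short while from the PPM code definition. The set of distinct substrings of length $m$ in string $x_1^n$ is

$$V(m \mid x_1^n) := \{ y_1^m \in \mathbb{X}^m : x_{i+1}^{i+m} = y_1^m \text{ for some } 0 \le i \le n - m \}. \qquad (49)$$

The cardinality of set $V(m \mid x_1^n)$ as a function of substring length $m$ is called the subword complexity of string $x_1^n$ [40]. Now let us apply the concept of the PPM order to define a special set of substrings of an arbitrary string $x_1^n$. The set of distinct PPM words detected in $x_1^n$ will be defined as the set $V(m \mid x_1^n)$ for $m = G_{\mathrm{PPM}}(x_1^n)$, i.e.,

$$V_{\mathrm{PPM}}(x_1^n) := V(G_{\mathrm{PPM}}(x_1^n) \mid x_1^n). \qquad (50)$$

Let us define the pointwise mutual information

$$I(X; Y) := H(X) + H(Y) - H(X, Y) \qquad (51)$$

and the algorithmic mutual information

$$I_a(u; v) := H_a(u) + H_a(v) - H_a(u, v). \qquad (52)$$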

Now, we may write down the theorem about facts and words. The theorem states that the Hilberg exponent for the expected number of initial independent inferrable facts is less than the Hilberg exponent for the expected mutual information and this is less than the Hilberg exponent for the expected number of distinct detected PPM words plus the PPM order. (The PPM order is usually much less than the number of distinct PPM words.)
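The string statistics used in this section (the frequency of a substring, the set of distinct substrings of a given length, and the maximal repetition) are simple to compute directly. The Python sketch below uses straightforward quadratic-time routines; the function names are hypothetical, the order `m` is supplied by the caller rather than computed as a PPM order, and the maximal repetition is taken to be the greatest length of a substring that occurs at least twice, possibly with overlap, which is an assumption about the intended definition.

```python
def frequency(word, text):
    """Number of (possibly overlapping) occurrences of `word` in `text`."""
    k = len(word)
    return sum(1 for i in range(len(text) - k + 1) if text[i:i + k] == word)


def distinct_substrings(m, text):
    """Set of distinct substrings of length m occurring in `text`."""
    return {text[i:i + m] for i in range(len(text) - m + 1)}


def subword_complexity(m, text):
    """Number of distinct substrings of length m in `text`."""
    return len(distinct_substrings(m, text))


def maximal_repetition(text):
    """Greatest k such that some substring of length k occurs at least twice.

    A repeat of length k + 1 exists exactly when there are fewer distinct
    substrings of that length than starting positions; repeats of a given
    length imply repeats of every shorter length, so a linear scan suffices.
    """
    k = 0
    while k + 1 <= len(text) and subword_complexity(k + 1, text) < len(text) - k:
        k += 1
    return k


sample = "abracadabra"
words_of_length_2 = distinct_substrings(2, sample)
```

With an order obtained from a PPM-style model, `distinct_substrings(order, text)` would play the role of the set of detected words; here the order is left as a free parameter.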

Theorem 9

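(facts and words I, cf. [25]). Let $(X_i)_{i=1}^\infty$ be a stationary strongly nonergodic process over a finite alphabet. We have inequalities

$$\operatorname{hilb}_{n\to\infty} \mathbf{E} \operatorname{card} U(X_1^n) \le \operatorname{hilb}_{n\to\infty} \mathbf{E}\, I(X_1^n; X_{n+1}^{2n}) \le \operatorname{hilb}_{n\to\infty} \mathbf{E} \left[ G_{\mathrm{PPM}}(X_1^n) + \operatorname{card} V_{\mathrm{PPM}}(X_1^n) \right]. \qquad (53)$$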

Proof.

The claim follows by conjunction of Theorem A2 from Appendix A and Theorem A8 from Appendix B. ☐

Theorem 9 also has an algorithmic version, for ergodic processes in particular.

Theorem 10

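(facts and words II). Let $(X_i)_{i=1}^\infty$ be a stationary process over a finite alphabet. We have inequalities

$$\operatorname{hilb}_{n\to\infty} \mathbf{E} \operatorname{card} U_a(X_1^n) \le \operatorname{hilb}_{n\to\infty} \mathbf{E}\, I_a(X_1^n; X_{n+1}^{2n}) \le \operatorname{hilb}_{n\to\infty} \mathbf{E} \left[ G_{\mathrm{PPM}}(X_1^n) + \operatorname{card} V_{\mathrm{PPM}}(X_1^n) \right]. \qquad (54)$$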

Proof.

The claim follows by conjunction of Theorem A3 from Appendix A and Theorem A8 from Appendix B. ☐

The theorem about facts and words previously proven in [25] differs from Theorem 9 in three aspects. First of all, the theorem in [25] did not apply the concept of the Hilberg exponent and compared with rather than with . Second, the number of inferrable facts was defined as a functional of the process distribution rather than a random variable depending on a particular text. Third, the number of words was defined using a minimal grammar-based code rather than the concept of the PPM order. Minimal grammar-based codes are not computable in a polynomial time in contrast to the PPM order. Thus, we may claim that Theorem 9 is stronger than the theorem about facts and words previously proven in [25]. Moreover, applying Kolmogorov complexity and algorithmic randomness to formulate and prove Theorem 10 is a new idea.

It is an interesting question whether we have an almost sure version of Theorems 9 and 10, namely, whether

for strongly nonergodic processes, or

for general stationary processes. We leave this question as an open problem.

6. Hilberg Exponents and Empirical Data

It is advisable to show that the Hilberg exponents considered in Theorem 9 can assume any value in range and the difference between them can be arbitrarily large. We adopt a convention that the set of inferrable probabilistic facts is empty for ergodic processes, . With this remark in mind, let us inspect some examples of processes.
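To make the notion of a Hilberg exponent more tangible, the following Python sketch evaluates finite-horizon approximations of it for a few deterministic functions. It is only an illustration under stated assumptions: we assume the form of the definition used in [36], namely the upper limit of log⁺ S(2^k) / log 2^k, with log⁺ x = log(x + 1) for x ≥ 0 and 0 otherwise. The function names and the choice of taking the maximum over the last few values of k as a proxy for the upper limit are hypothetical.

```python
import math


def log_plus(x):
    """log^+ x: log(x + 1) for x >= 0 and 0 otherwise (convention assumed from [36])."""
    return math.log(x + 1.0) if x >= 0 else 0.0


def hilberg_ratios(S, max_k):
    """Return the ratios log^+ S(2^k) / log 2^k for k = 1, ..., max_k."""
    return [log_plus(S(2 ** k)) / (k * math.log(2.0)) for k in range(1, max_k + 1)]


def hilberg_estimate(S, max_k, tail=5):
    """Finite-horizon proxy for the upper limit: maximum over the last `tail` ratios."""
    return max(hilberg_ratios(S, max_k)[-tail:])


# A power law n^0.5, a bounded function, and a logarithmically growing function.
power_law = hilberg_estimate(lambda n: n ** 0.5, max_k=40)
bounded = hilberg_estimate(lambda n: 5.0, max_k=40)
logarithmic = hilberg_estimate(lambda n: math.log(n), max_k=40)
```

For the power law the ratios approach the exponent 0.5 quickly, whereas for the bounded and the logarithmic functions they decay toward zero only slowly, which is why a finite sample can only suggest, and never establish, the value of a Hilberg exponent.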

First of all, for Markov processes of any order k over a finite alphabet, and for their strongly nonergodic mixtures, we have

This happens to be so since the sufficient statistic of text for predicting text is the maximum likelihood estimate of the transition matrix, the elements of which can assume at most distinct values. Hence, , where D is the cardinality of the alphabet and k is the Markov order of the process. Similarly, it can be shown for these processes that the PPM order satisfies . Hence, the number of PPM words, which satisfies inequality , is also bounded above. In consequence, for Markov processes and their strongly nonergodic mixtures, of any order but over a finite alphabet, we obtain

In contrast, Santa Fe processes are strongly nonergodic mixtures of some IID processes over an infinite alphabet. Being mixtures of IID processes over an infinite alphabet, they need not satisfy condition (58). In fact, as shown in [25,29] and Appendix C, for the Santa Fe process with exponent , we have the asymptotic power-law growth

The same equality for the number of inferrable probabilistic facts and the mutual information is also satisfied by a stationary coding of the Santa Fe process into a finite alphabet (see [29]).
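The growth of the number of inferrable facts for a Santa Fe process can be explored by simulation. The Python sketch below is a minimal illustration under stated assumptions, since the construction is not restated in this section: we assume the form of the process from [25,29], in which each symbol is a pair (K_i, Z_{K_i}), the indices K_i are IID with probabilities proportional to k^(-α), and the facts Z_k are independent fair coin flips. The truncation of the index range at `k_max`, the function names, and the parameter values are hypothetical. The number of initial inferrable facts is taken to be the largest L such that every index 1, ..., L has appeared in the text.

```python
import random


def sample_santa_fe(n, alpha, rng, k_max=100_000):
    """Draw n symbols (K_i, Z_{K_i}) of a Santa Fe process truncated at k_max."""
    indices = range(1, k_max + 1)
    weights = [k ** -alpha for k in indices]  # P(K_i = k) proportional to k^-alpha
    facts = {}                                # Z_k, drawn lazily as fair coin flips
    text = []
    for k in rng.choices(indices, weights=weights, k=n):
        if k not in facts:
            facts[k] = rng.randint(0, 1)
        text.append((k, facts[k]))
    return text


def count_initial_facts(text):
    """Largest L such that every index 1..L occurs in the text."""
    seen = {k for k, _ in text}
    count = 0
    while count + 1 in seen:
        count += 1
    return count


rng = random.Random(0)
text = sample_santa_fe(20_000, alpha=2.0, rng=rng)
lengths = (100, 1_000, 10_000, 20_000)
counts = [count_initial_facts(text[:n]) for n in lengths]
```

Plotting `counts` against `lengths` on a doubly logarithmic scale gives a rough visual impression of the growth discussed above, although a single simulated text of this size is far too short to estimate a Hilberg exponent reliably.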

Let us also note that, whereas the theorem about facts and words provides an inequality of Hilberg exponents, this inequality can be strict. To give a concrete example, in [29] we constructed a modification of the Santa Fe process that is ergodic and takes values in a finite alphabet. For this modification, we have only the power-law growth of mutual information

Since, in this case, , then the difference between the Hilberg exponents for the number of inferrable probabilistic facts and the number of PPM words can be an arbitrary number in range .

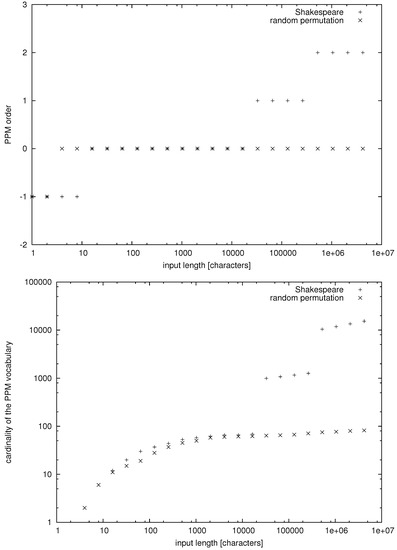

Now, we are in a position to discuss some empirical data. For empirical texts, we cannot directly measure the number of facts or the mutual information, but we can compute the PPM order and count the number of PPM words. In Figure 1, we present data for a collection of 35 plays by William Shakespeare (downloaded from Project Gutenberg, https://www.gutenberg.org/) and for a random permutation of the characters appearing in this collection of texts. The random permutation of characters can be treated as a sample from an IID process over a finite alphabet, so, in this case, we obtain

Figure 1. The PPM order and the cardinality of the PPM vocabulary versus the input length n for William Shakespeare’s First Folio/35 Plays and a random permutation of the text’s characters.

In contrast, for the plays of Shakespeare, we seem to have a stepwise power law growth of the number of distinct PPM words. Thus, we may suppose that, for natural language, we have more generally

If relationship (62) holds true, then natural language cannot be a Markov process of any order. Moreover, in view of the striking difference between observations (61) and (62), we may suppose that the number of inferrable probabilistic or algorithmic facts for texts in natural language also obeys power-law growth. Formally speaking, this condition would translate to natural language being strongly nonergodic or perigraphic. We note that this hypothesis arises only as a form of weak inductive inference, since formally we cannot deduce condition (33) from condition (62) alone, regardless of the amount of data supporting condition (62).
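The PPM order and the PPM vocabulary discussed in this section can be computed directly from a text. The following Python sketch is a minimal illustration and not the code used to produce Figure 1. It rests on assumptions that are not restated in this section: the order-k PPM probability is taken to be the adaptive estimator with add-one smoothing over an alphabet of D symbols, order −1 is taken to be the uniform distribution, and the PPM order is taken to be the smallest k ≥ −1 that maximizes the probability of the text. The function names and the `max_order` cutoff are hypothetical.

```python
import math
from collections import defaultdict


def ppm_log_prob(text, k, alphabet_size):
    """Log-probability of `text` under the assumed order-k model with add-one smoothing."""
    n = len(text)
    if k < 0:
        return -n * math.log(alphabet_size)      # order -1: uniform distribution
    ngram_counts = defaultdict(int)               # counts of (context + next symbol)
    context_counts = defaultdict(int)             # counts of contexts of length k
    log_prob = 0.0
    for i in range(n):
        if i < k:                                 # not enough history yet: uniform
            log_prob -= math.log(alphabet_size)
            continue
        context, ngram = text[i - k:i], text[i - k:i + 1]
        log_prob += math.log((ngram_counts[ngram] + 1)
                             / (context_counts[context] + alphabet_size))
        ngram_counts[ngram] += 1
        context_counts[context] += 1
    return log_prob


def ppm_order(text, alphabet_size, max_order=None):
    """Smallest k >= -1 that maximizes the order-k log-probability of `text`."""
    if max_order is None:
        max_order = len(text)
    best_k, best_lp = -1, ppm_log_prob(text, -1, alphabet_size)
    for k in range(max_order + 1):
        lp = ppm_log_prob(text, k, alphabet_size)
        if lp > best_lp + 1e-12:                  # strict improvement keeps the smallest k
            best_k, best_lp = k, lp
    return best_k


def ppm_vocabulary(text, alphabet_size, max_order=None):
    """Distinct substrings whose length equals the PPM order (empty set for order -1)."""
    k = ppm_order(text, alphabet_size, max_order)
    if k < 0:
        return set()
    return {text[i:i + k] for i in range(len(text) - k + 1)}


periodic = "ab" * 50
order = ppm_order(periodic, alphabet_size=2)
words = ppm_vocabulary(periodic, alphabet_size=2)
```

For the periodic string above, the sketch selects order 1 and the vocabulary {a, b}. Because the order is integer-valued, the vocabulary size of a growing text can change abruptly whenever the selected order changes, which is one way to read the stepwise shape of the empirical curve. For long texts, `max_order` should be set to a small value, since the loop recomputes the counts for every candidate order.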

7. Conclusions

In this article, a stationary process has been called strongly nonergodic if some persistent random topic can be detected in the process and an infinite number of independent binary random variables, called probabilistic facts, is needed to describe this topic completely. Replacing probabilistic facts with an algorithmically random sequence of bits, called algorithmic facts, we have adapted this property back to ergodic processes. Subsequently, we have called a process perigraphic if the number of algorithmic facts which can be inferred from a finite text sampled from the process grows like a power of the text length.

We have demonstrated an assertion, which we call the theorem about facts and words. This proposition states that the number of independent probabilistic or algorithmic facts which can be inferred from a text drawn from a process must be roughly smaller than the number of distinct word-like strings detected in this text by means of the PPM compression algorithm. We have exhibited two versions of this theorem: one for strongly nonergodic processes, applying the Shannon information theory, and one for ergodic processes, applying the algorithmic information theory.

Subsequently, we have exhibited an empirical observation that the number of distinct word-like strings grows like a stepwise power law for a collection of plays by William Shakespeare, in stark contrast to Markov processes. This observation does not rule out that the number of probabilistic or algorithmic facts inferrable from texts in natural language also grows like a power law. Hence, we have supposed that natural language is a perigraphic process.

We suppose that future research should proceed through further analysis of the theorem about facts and words and through a demonstration of an almost sure version of this statement. It is also an important, still unresolved question whether theoretical analysis of effective universal coding algorithms and their rates of convergence to the entropy rate can contribute to some definite statements about natural language treated as a stochastic process. We realize that, as far as linguistic theory is concerned, the results of this paper may still be inconclusive. As we see it, the main merit of this paper lies in linking some concepts in the Shannon information theory and the algorithmic information theory and providing some linguistic interpretations of them.

Acknowledgments

We wish to thank Jacek Koronacki, Jan Mielniczuk, Vladimir Vovk, and Boris Ryabko for helpful comments.

Conflicts of Interest

The author declares no conflict of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Meaning |
| --- | --- |
| IID | independent identically distributed |
| PPM | prediction by partial matching |

Appendix A. Facts and Mutual Information

In the appendices, we will make use of several kinds of information measures.

- First, there are four pointwise Shannon information measures:

- entropy,

- conditional entropy,

- mutual information,

- conditional mutual information,

where is the probability of a random variable X and is the conditional probability of a random variable X given a random variable Z. The above definitions make sense for discrete-valued random variables X and Y and an arbitrary random variable Z. If Z is a discrete-valued random variable, then also and .

- Moreover, we will use four algorithmic information measures:

- entropy,

- conditional entropy,

- mutual information,

- conditional mutual information,

where is the prefix-free Kolmogorov complexity of an object x and is the prefix-free Kolmogorov complexity of an object x given an object z. In the above definitions, x and y must be finite objects (finite texts), whereas z can also be an infinite object (an infinite sequence). If z is a finite object, then rather than being equal to , where , , and are the equality and the inequalities up to an additive constant ([37], Theorem 3.9.1). Hence,

In the following, we will prove a result for Hilberg exponents.

Theorem A1.

For a function , define . If the limit exists and is finite, then

with an equality if for all but finitely many n.

Proof.

The proof makes use of the telescope sum

Denote . Since , it is sufficient to prove inequality (A2) for . In this case, for all but finitely many n for any . Then, for , by the telescope sum (A3), we obtain for sufficiently large n that

Since can be taken arbitrarily small, we obtain (A2).

Now assume that for all but finitely many n. By the telescope sum (A3), we have for sufficiently large n. Hence,

Combining this with (A2), we obtain . ☐
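The telescope sum used in the proof can be checked numerically. The Python sketch below is only an illustration, and it relies on a reading of the formulas that is an assumption on our part: we take the auxiliary function to be J(n) = 2S(n) − S(2n) and the telescope identity to be the statement that the sum of J(2^k n) / 2^(k+1) over k ≥ 0 equals S(n) − ns, where s is the limit of S(n)/n. The test function S(n) = sn + n^β and all names are hypothetical.

```python
def J(S, n):
    """Assumed auxiliary function J(n) = 2 S(n) - S(2n)."""
    return 2.0 * S(n) - S(2 * n)


def telescope_partial_sum(S, n, terms):
    """Partial sum of J(2^k n) / 2^(k+1) for k = 0, ..., terms - 1."""
    return sum(J(S, (2 ** k) * n) / 2 ** (k + 1) for k in range(terms))


s, beta = 1.5, 0.6


def S(n):
    """Linear growth with rate s plus a sublinear power-law correction."""
    return s * n + n ** beta


n = 1000
lhs = telescope_partial_sum(S, n, terms=60)   # truncated telescope sum
rhs = S(n) - s * n                            # equals n ** beta for this S
```

Each term of the sum equals S(2^k n)/2^k − S(2^(k+1) n)/2^(k+1), so the partial sum differs from S(n) − ns only by the remainder S(2^K n)/2^K − ns, which vanishes as K grows whenever S(n)/n converges to s.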

For any stationary process over a finite alphabet, there exists a limit

called the entropy rate of process [3]. By (28), (43), and (46), we also have

Moreover, for a stationary process, the mutual information satisfies

Hence, by Theorem A1, we obtain

Subsequently, we will prove the initial parts of Theorems 9 and 10, i.e., the two versions of the theorem about facts and words. The probabilistic statement for strongly nonergodic processes goes first.

Theorem A2

(facts and mutual information I). Let be a stationary strongly nonergodic process over a finite alphabet. We have inequality

Proof.

Let us write . Observe that

where the second row of inequalities follows by the maximum entropy bound from ([3], Lemma 13.5.4). Hence, by the inequality

for a measurable function f, we obtain that

Now, we observe that

since the sequence of random variables is a measurable function of the sequence of random variables , as shown in [24,25]. Hence, we have

By inequalities (A17) and (A18) and equality (A10), we obtain inequality (A12). ☐

The algorithmic version of the theorem about facts and words follows roughly the same idea, with some necessary adjustments.

Theorem A3

(facts and mutual information II). Let be a stationary process over a finite alphabet. We have inequality

Proof.

Let us write . Observe that

where the first row of inequalities follows by the algorithmic randomness of , whereas the second and the third rows of inequalities follow by the bounds for and . Moreover, for any computable function f, there exists a constant such that

Hence, we obtain that

Since by the Jensen inequality, then

Now, we observe that

since the conditional prefix-free Kolmogorov complexity with the second argument fixed is the length of a prefix-free code. Hence, we have

By inequalities (A25) and (A27) and equality (A11), we obtain inequality (A19). ☐

Appendix B. Mutual Information and PPM Words

In this appendix, we will investigate some algebraic properties of the length of the PPM code to be used for proving the second part of the theorem about facts and words. First of all, it can be seen that

Expression (A28) can be further rewritten using notation

Then, for , we define

As a result for we obtain

In the following, we will analyze the terms on the right-hand side of (A34).

Theorem A4.

For and , we have

where .

Proof.

Observe that . Hence, the summation in can be restricted to such that . Consider such a u and write and .

Since and (the second inequality follows by subadditivity of ), we obtain first

where we use and . On the other hand, function is concave so by and the Jensen inequality for , we obtain

since

Moreover, function is growing in argument N. Hence,

Summing inequalities (A36) and (A39) over such that , we obtain the claim. ☐

The mutual information is defined as a difference of entropies. Replacing the entropy with an arbitrary function , we obtain this quantity:

Definition A1.

The Q pointwise mutual information is defined as

We will show that the pointwise mutual information cannot be positive.

Theorem A5.

For , where , we have

Proof.

Write , , , and . We observe that

which is N times the Kullback–Leibler divergence between distributions and and thus is nonnegative. ☐

Theorem A6.

For , we have

Proof.

Consider . For and , we have

Thus, using Theorem A5, we obtain

Since the second term on the right-hand side is greater than or equal to zero, we may omit it. Summing the remaining terms over all , we obtain the claim. ☐

Now, we will show that the PPM pointwise mutual information between two parts of a string is roughly bounded above by the cardinality of the PPM vocabulary of the string multiplied by the logarithm of the string length.

Theorem A7.

We have

Proof.

Consider . By Theorems A4 and A6, we obtain

In contrast, . Now, let . Since

and

for any and , we obtain

Hence, the claim follows. ☐

Consequently, we may prove the second part of Theorems 9 and 10, i.e., the theorems about facts and words.

Theorem A8

(mutual information and words). Let be a stationary process over a finite alphabet. We have inequalities

Appendix C. Hilberg Exponents for Santa Fe Processes

We begin with a general observation for Hilberg exponents. In [36], this result was discussed only for the Hilberg exponent of mutual information.

Theorem A9

(cf. [36]). For a sequence of random variables , we have

Proof.

Denote . From the Markov inequality, we have

where . Hence, by the Borel–Cantelli lemma, we have for all but finitely many n almost surely. Since we can choose arbitrarily small, in particular, we obtain inequality (A54). ☐

In [29,36], it was shown that the Santa Fe process with exponent satisfies equalities

We will now show a similar result for the number of probabilistic facts inferrable from the Santa Fe process almost surely and in expectation. Since Santa Fe processes are processes over an infinite alphabet, we cannot apply the theorem about facts and words.

Theorem A10.

For the Santa Fe process with exponent α, we have

Proof.

First, we obtain

where is the zeta function. Put now for an . It is easy to observe that . Hence, by the Borel–Cantelli lemma, we have inequality for all but finitely many n almost surely.

Second, we obtain

Recalling from Appendix B that , where is subadditive, we obtain

by . Put now for a . We obtain

where so . Hence, by the Borel–Cantelli lemma, we have inequality for all but finitely many n almost surely. Combining this result with the previous result yields equality (A58).

To obtain equality (A59), we invoke Theorem A9 for the lower bound, whereas, for the upper bound, we observe that

where the last term decays according to the stretched exponential bound (A63) for . ☐

References

1. Shannon, C. A mathematical theory of communication. Bell Syst. Tech. J. 1948, 27, 379–423, 623–656.

2. Shannon, C. Prediction and entropy of printed English. Bell Syst. Tech. J. 1951, 30, 50–64.

3. Cover, T.M.; Thomas, J.A. Elements of Information Theory, 2nd ed.; Wiley: Hoboken, NJ, USA, 2006.

4. Jelinek, F. Statistical Methods for Speech Recognition; The MIT Press: Cambridge, MA, USA, 1997.

5. Manning, C.D.; Schütze, H. Foundations of Statistical Natural Language Processing; The MIT Press: Cambridge, MA, USA, 1999.

6. Ryabko, B. Twice-universal coding. Probl. Inf. Transm. 1984, 20, 173–177.

7. Cleary, J.G.; Witten, I.H. Data compression using adaptive coding and partial string matching. IEEE Trans. Commun. 1984, 32, 396–402.

8. Zipf, G.K. The Psycho-Biology of Language: An Introduction to Dynamic Philology, 2nd ed.; The MIT Press: Cambridge, MA, USA, 1965.

9. Mandelbrot, B. Structure formelle des textes et communication. Word 1954, 10, 1–27.

10. Kuraszkiewicz, W.; Łukaszewicz, J. The number of different words as a function of text length. Pamięt. Lit. 1951, 42, 168–182. (In Polish)

11. Guiraud, P. Les Caractères Statistiques du Vocabulaire; Presses Universitaires de France: Paris, France, 1954.

12. Herdan, G. Quantitative Linguistics; Butterworths: London, UK, 1964.

13. Heaps, H.S. Information Retrieval—Computational and Theoretical Aspects; Academic Press: Cambridge, MA, USA, 1978.

14. Hilberg, W. Der bekannte Grenzwert der redundanzfreien Information in Texten—Eine Fehlinterpretation der Shannonschen Experimente? Frequenz 1990, 44, 243–248.

15. Ebeling, W.; Nicolis, G. Entropy of Symbolic Sequences: The Role of Correlations. Europhys. Lett. 1991, 14, 191–196.

16. Ebeling, W.; Pöschel, T. Entropy and long-range correlations in literary English. Europhys. Lett. 1994, 26, 241–246.

17. Bialek, W.; Nemenman, I.; Tishby, N. Complexity through nonextensivity. Physica A 2001, 302, 89–99.

18. Crutchfield, J.P.; Feldman, D.P. Regularities unseen, randomness observed: The entropy convergence hierarchy. Chaos 2003, 13, 25–54.

19. Dębowski, Ł. On Hilberg’s law and its links with Guiraud’s law. J. Quant. Linguist. 2006, 13, 81–109.

20. Wolff, J.G. Language acquisition and the discovery of phrase structure. Lang. Speech 1980, 23, 255–269.

21. De Marcken, C.G. Unsupervised Language Acquisition. Ph.D. Thesis, Massachusetts Institute of Technology, Cambridge, MA, USA, 1996.

22. Kit, C.; Wilks, Y. Unsupervised Learning of Word Boundary with Description Length Gain. In Proceedings of the Computational Natural Language Learning ACL Workshop, Bergen; Osborne, M., Sang, E.T.K., Eds.; The Association for Computational Linguistics: Stroudsburg, PA, USA, 1999; pp. 1–6.

23. Kieffer, J.C.; Yang, E. Grammar-based codes: A new class of universal lossless source codes. IEEE Trans. Inf. Theory 2000, 46, 737–754.

24. Dębowski, Ł. A general definition of conditional information and its application to ergodic decomposition. Statist. Probab. Lett. 2009, 79, 1260–1268.

25. Dębowski, Ł. On the Vocabulary of Grammar-Based Codes and the Logical Consistency of Texts. IEEE Trans. Inf. Theory 2011, 57, 4589–4599.

26. Charikar, M.; Lehman, E.; Lehman, A.; Liu, D.; Panigrahy, R.; Prabhakaran, M.; Sahai, A.; Shelat, A. The Smallest Grammar Problem. IEEE Trans. Inf. Theory 2005, 51, 2554–2576.

27. Dębowski, Ł. Excess entropy in natural language: present state and perspectives. Chaos 2011, 21, 037105.

28. Dębowski, Ł. The Relaxed Hilberg Conjecture: A Review and New Experimental Support. J. Quant. Linguist. 2015, 22, 311–337.

29. Dębowski, Ł. Mixing, Ergodic, and Nonergodic Processes with Rapidly Growing Information between Blocks. IEEE Trans. Inf. Theory 2012, 58, 3392–3401.

30. Billingsley, P. Probability and Measure; Wiley: Hoboken, NJ, USA, 1979.

31. Gray, R.M. Probability, Random Processes, and Ergodic Properties; Springer: Berlin/Heidelberg, Germany, 2009.

32. Breiman, L. Probability; SIAM: Philadelphia, PA, USA, 1992.

33. Kallenberg, O. Foundations of Modern Probability; Springer: Berlin/Heidelberg, Germany, 1997.

34. Yaglom, A.M.; Yaglom, I.M. Probability and Information. In Theory and Decision Library; Springer: Berlin/Heidelberg, Germany, 1983.

35. Gray, R.M.; Davisson, L.D. The ergodic decomposition of stationary discrete random processes. IEEE Trans. Inf. Theory 1974, 20, 625–636.

36. Dębowski, Ł. Hilberg Exponents: New Measures of Long Memory in the Process. IEEE Trans. Inf. Theory 2015, 61, 5716–5726.

37. Li, M.; Vitányi, P.M.B. An Introduction to Kolmogorov Complexity and Its Applications, 3rd ed.; Springer: Berlin/Heidelberg, Germany, 2008.

38. Barron, A.R. Logically Smooth Density Estimation. Ph.D. Thesis, Stanford University, Stanford, CA, USA, 1985.

39. Ryabko, B. Applications of Universal Source Coding to Statistical Analysis of Time Series. In Selected Topics in Information and Coding Theory; Series on Coding and Cryptology; Woungang, I., Misra, S., Misra, S.C., Eds.; World Scientific Publishing: Singapore, 2010.

40. De Luca, A. On the combinatorics of finite words. Theor. Comput. Sci. 1999, 218, 13–39.

41. Dębowski, Ł. Maximal Repetitions in Written Texts: Finite Energy Hypothesis vs. Strong Hilberg Conjecture. Entropy 2015, 17, 5903–5919.

© 2018 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).