Recursive Minimum Complex Kernel Risk-Sensitive Loss Algorithm

Abstract

1. Introduction

2. Fixed Point Algorithm under Minimizing Complex Kernel Risk-Sensitive Loss

2.1. Complex Kernel Risk-Sensitive Loss

2.2. Recursive Minimum Complex Kernel Risk-Sensitive Loss (RMCKRSL)

2.2.1. Cost Function

2.2.2. Recursive Solution

| Algorithm 1: RMCKRSL |
| --- |
| Input: […] |
| 1. Initializations: […] |
| 2. While […] available, do |
| 3. […] |
| 4. […] |
| 5. […] |
| 6. […] |
| 7. […] |
| 8. […] |
| 9. End while |
| 10. […] |
| Output: Estimated filter weight |

3. Convergence Analysis

3.1. Stability Analysis

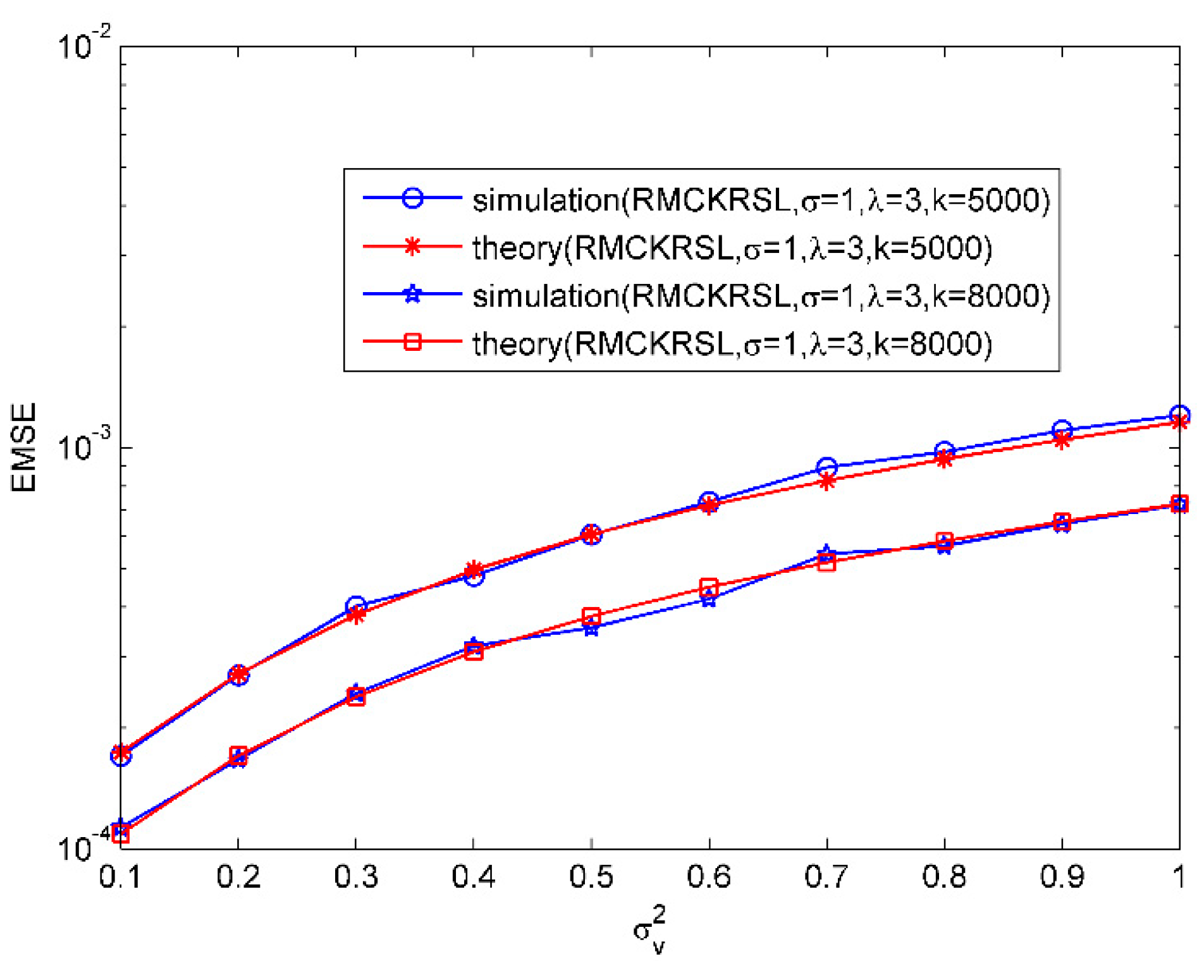

3.2. Excess Mean Square Error

4. Simulation

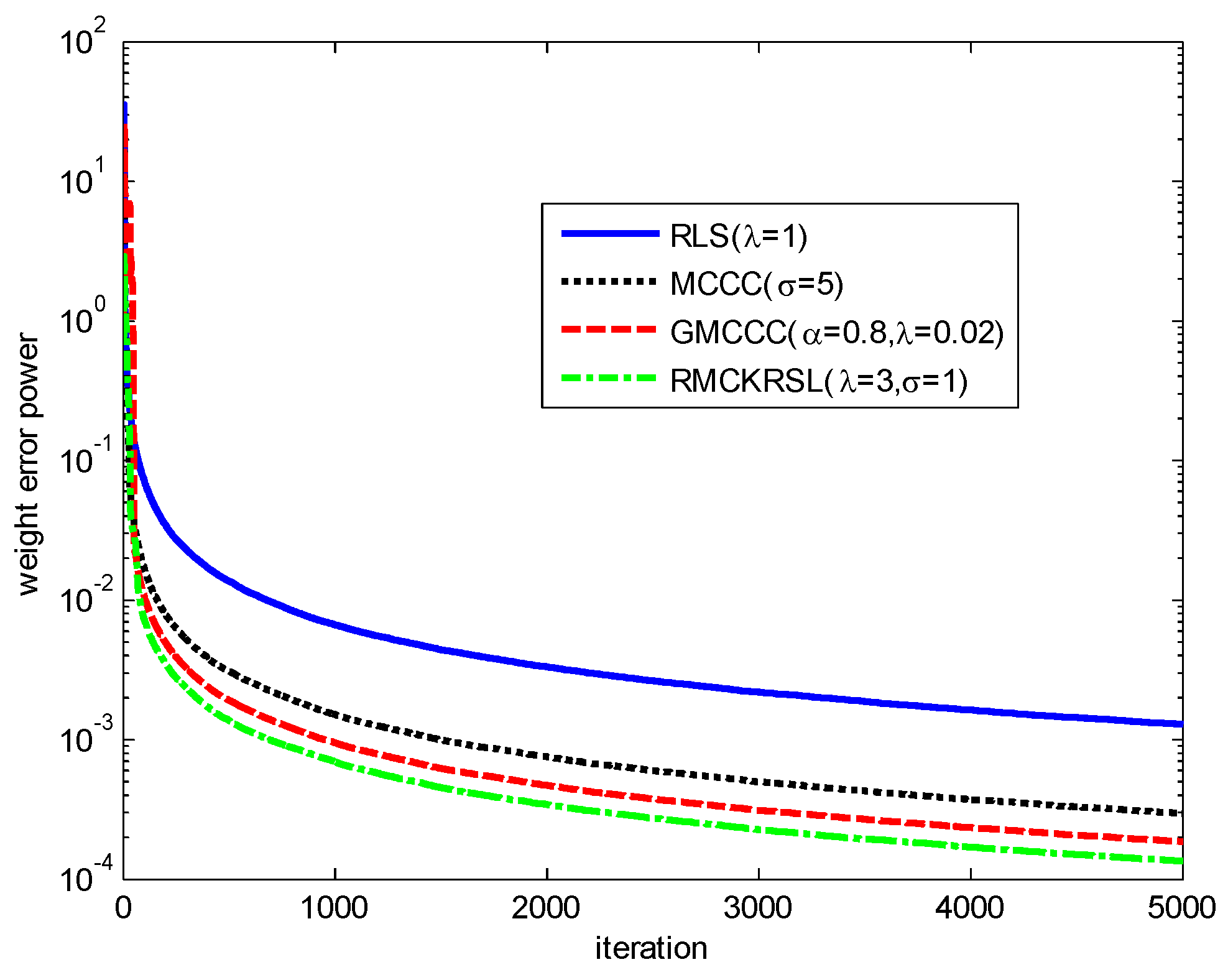

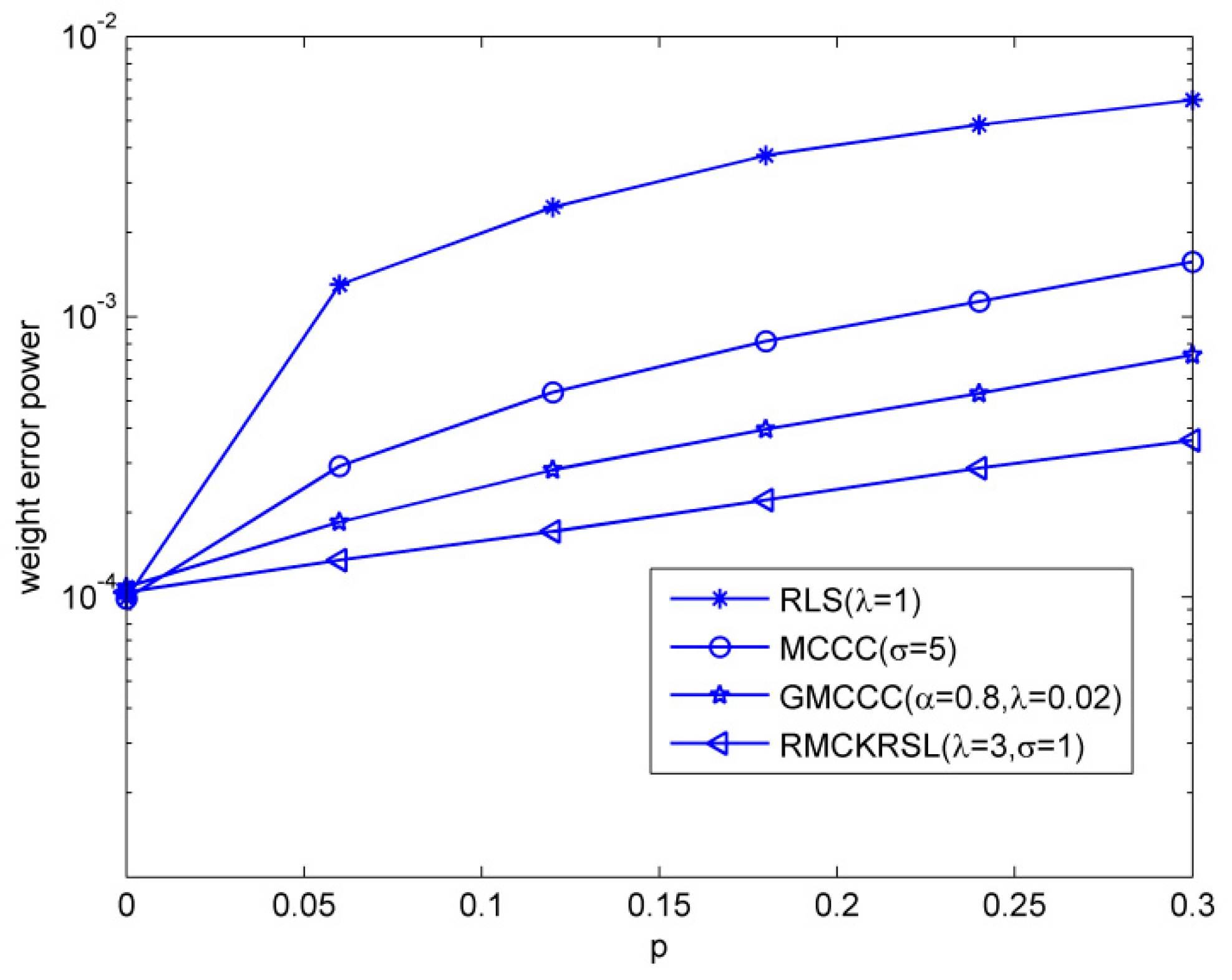

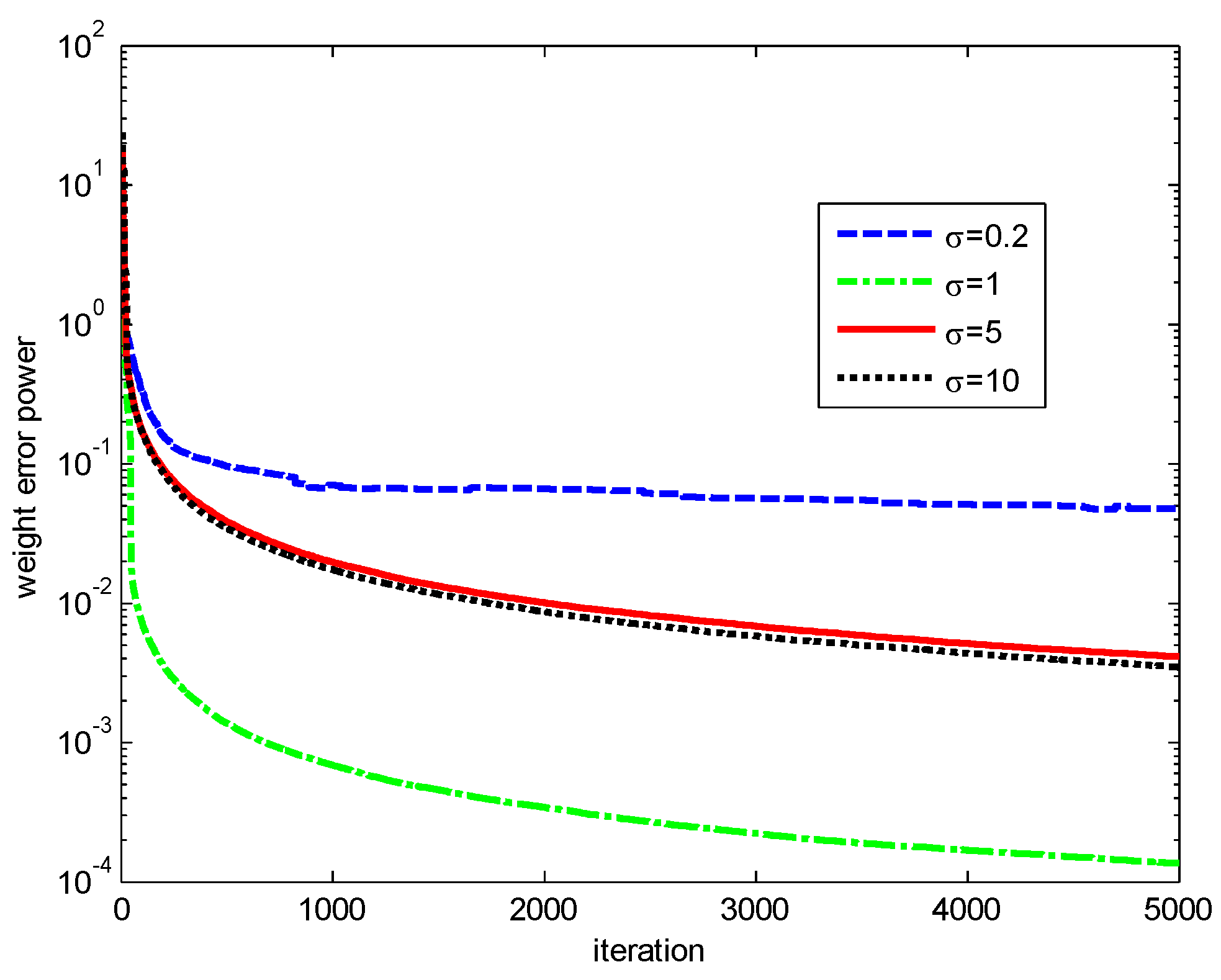

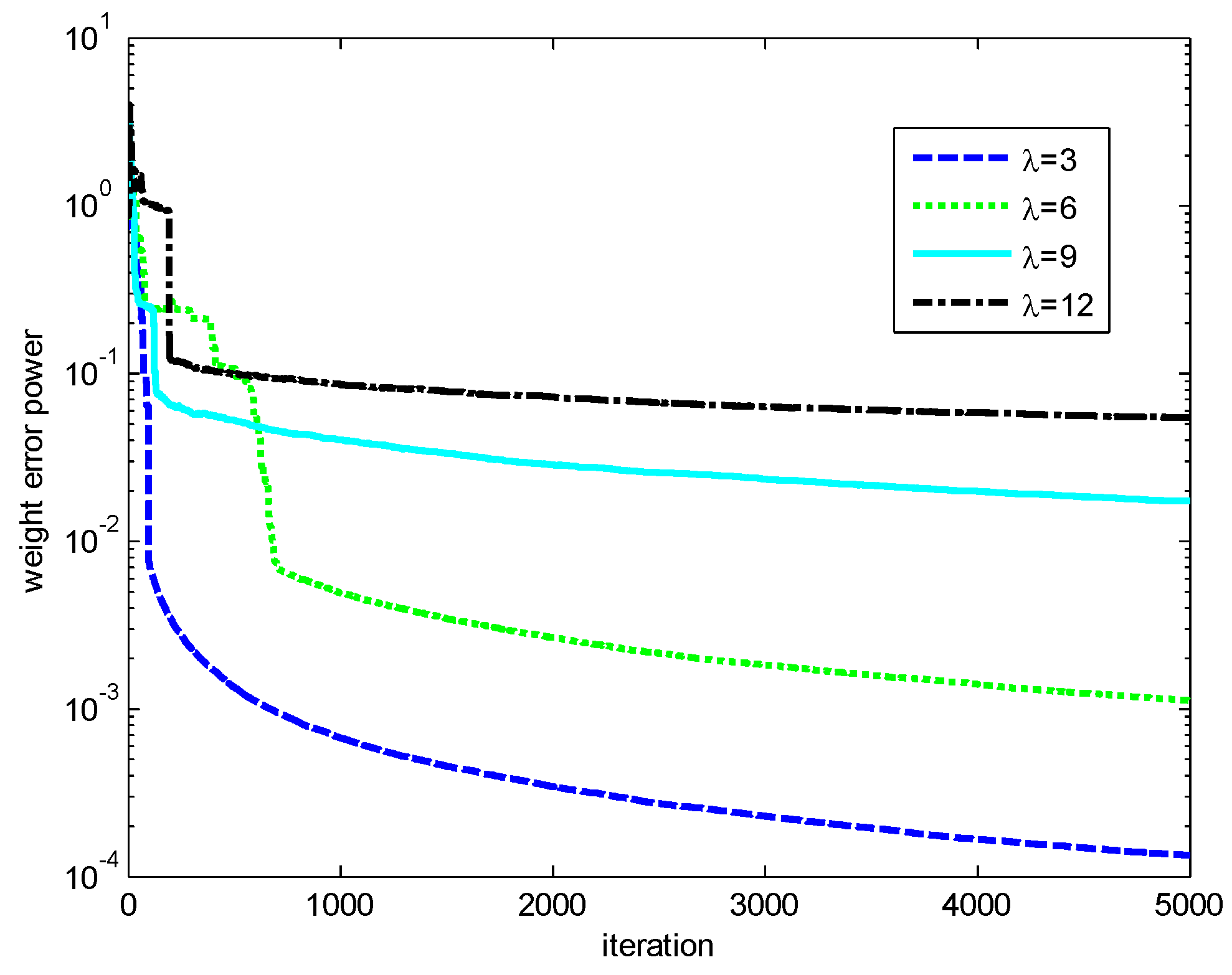

4.1. Example 1

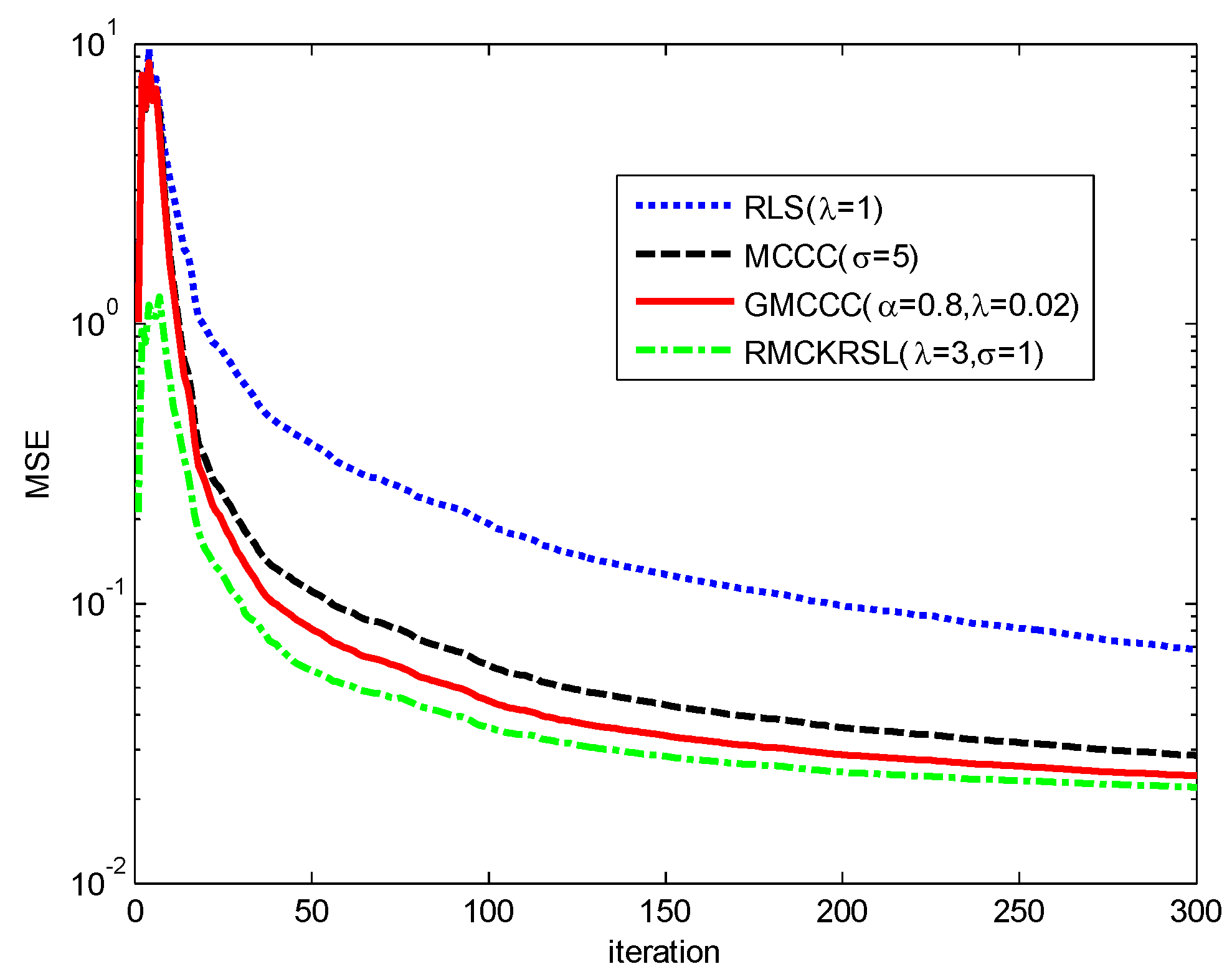

4.2. Example 2

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Qian, G.; Luo, D.; Wang, S. Recursive Minimum Complex Kernel Risk-Sensitive Loss Algorithm. Entropy 2018, 20, 902. https://doi.org/10.3390/e20120902
