Multivariate Dependence beyond Shannon Information

Abstract

1. Introduction

2. Development

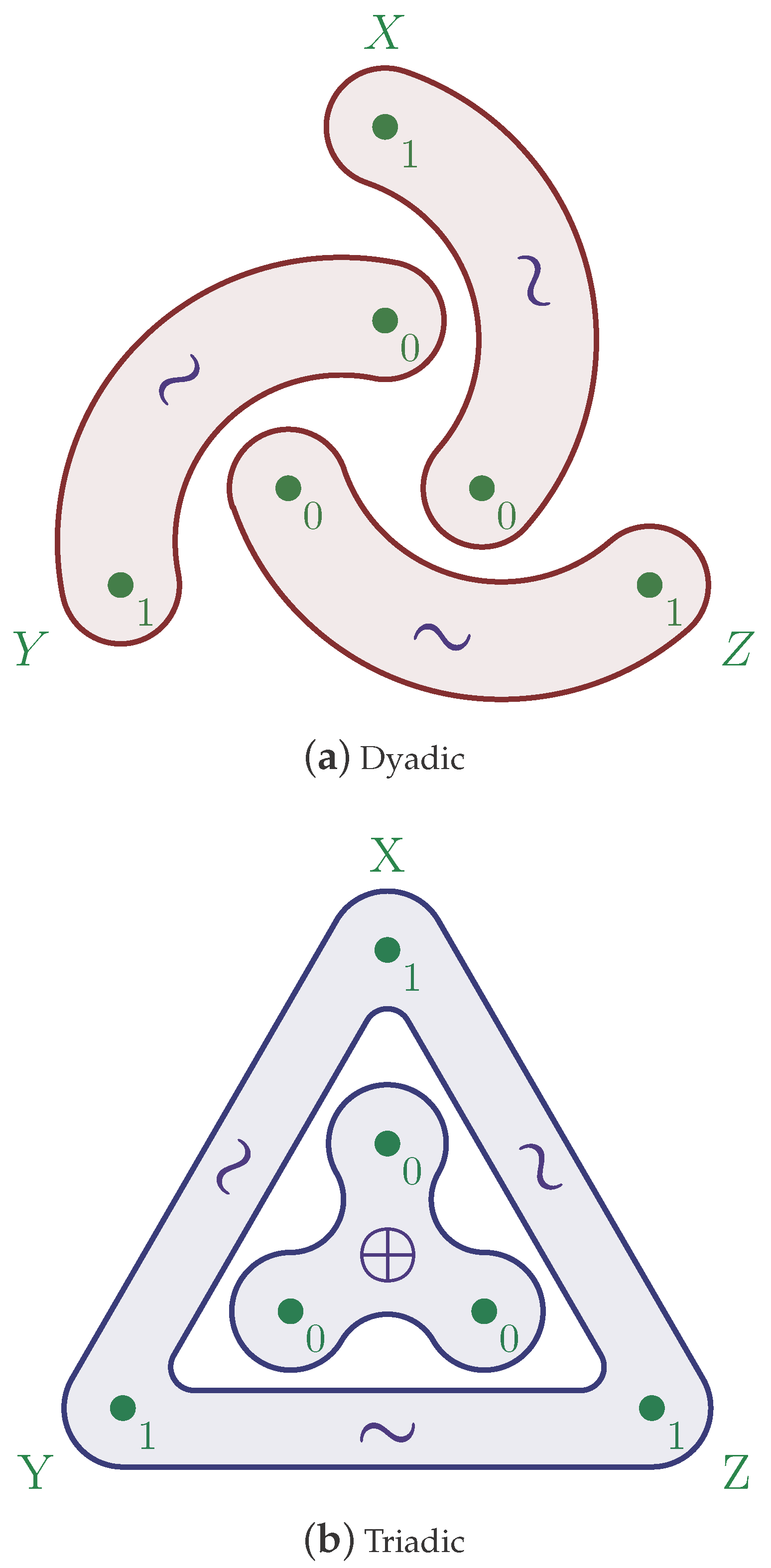

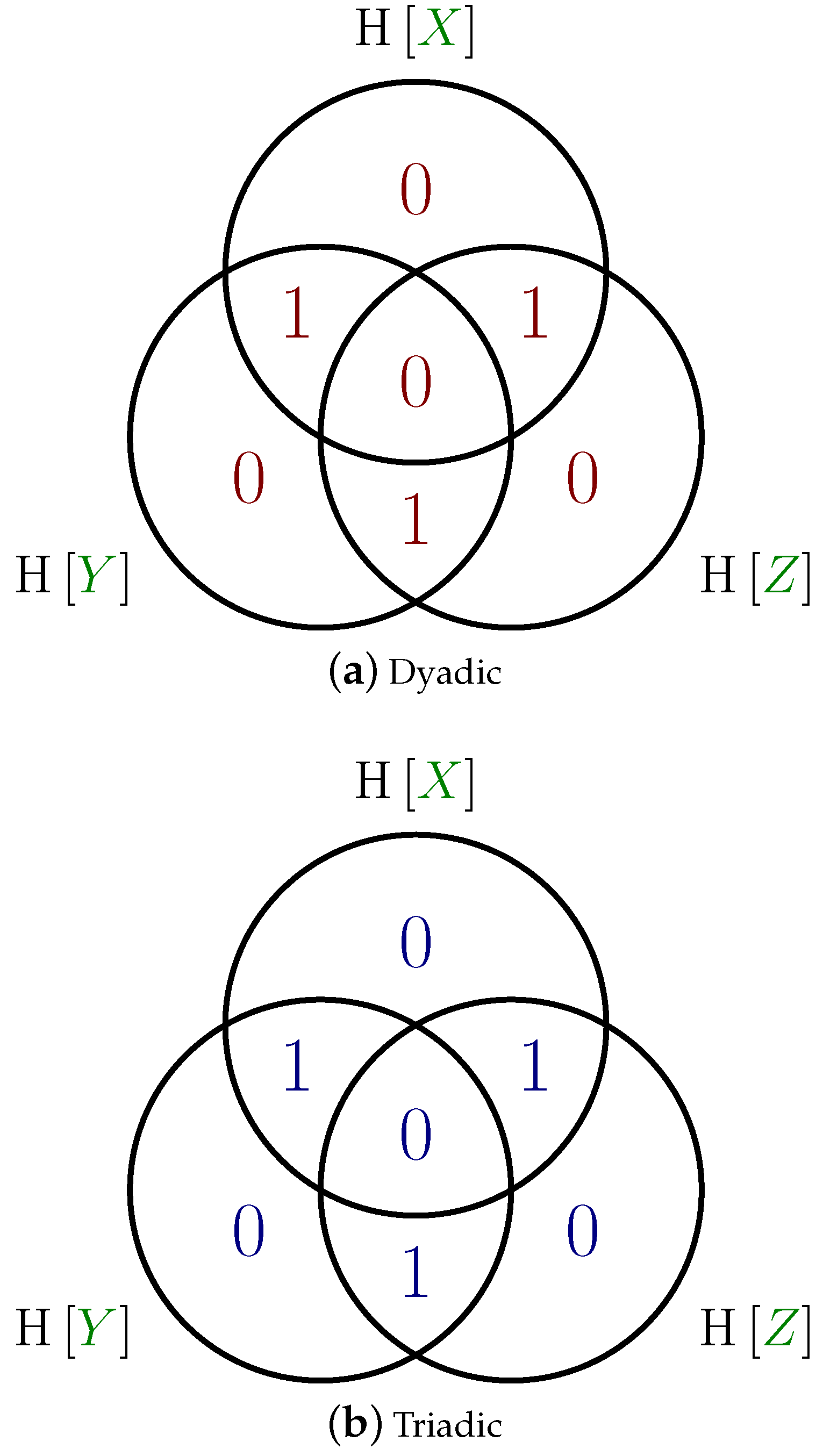

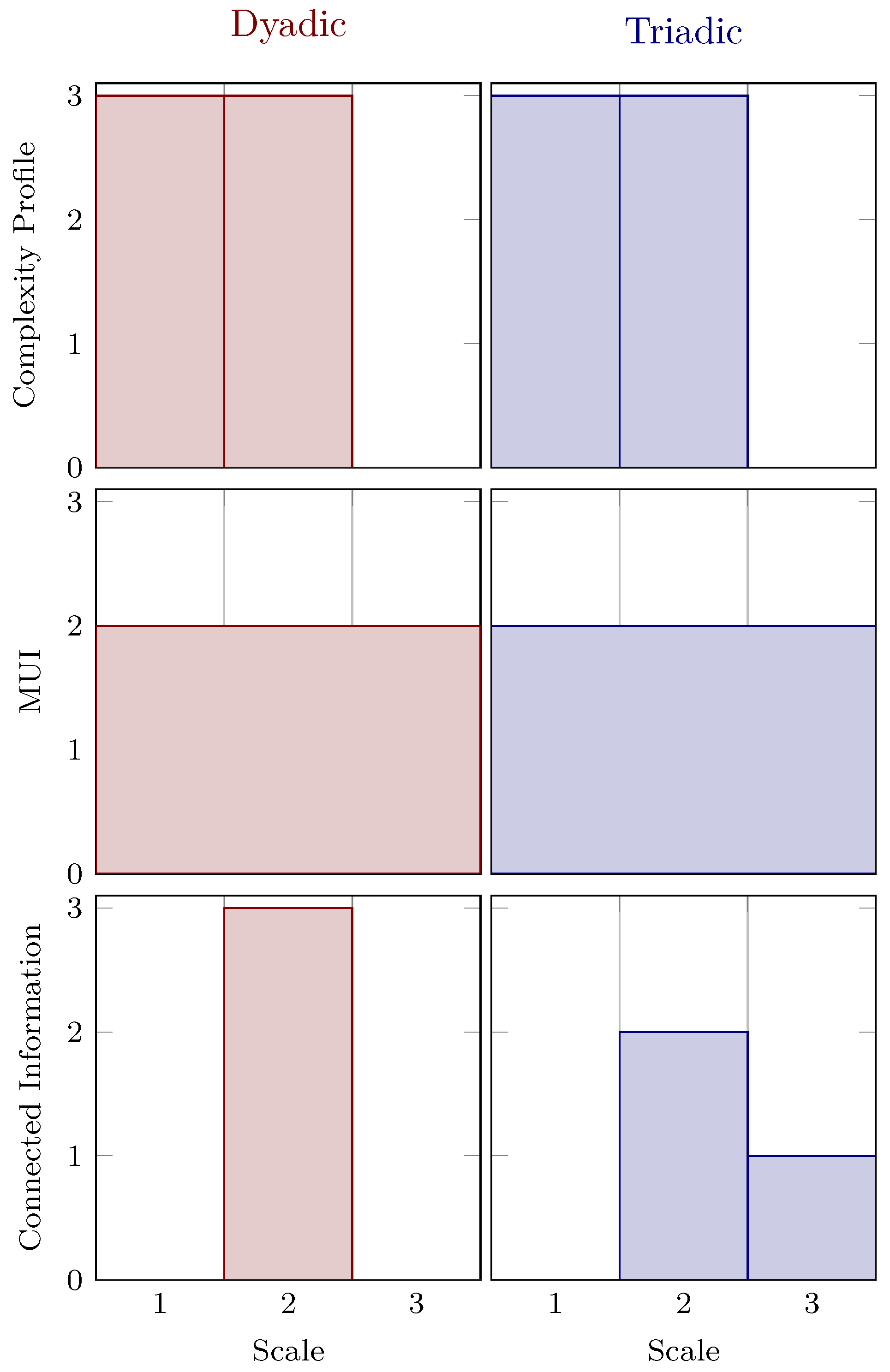

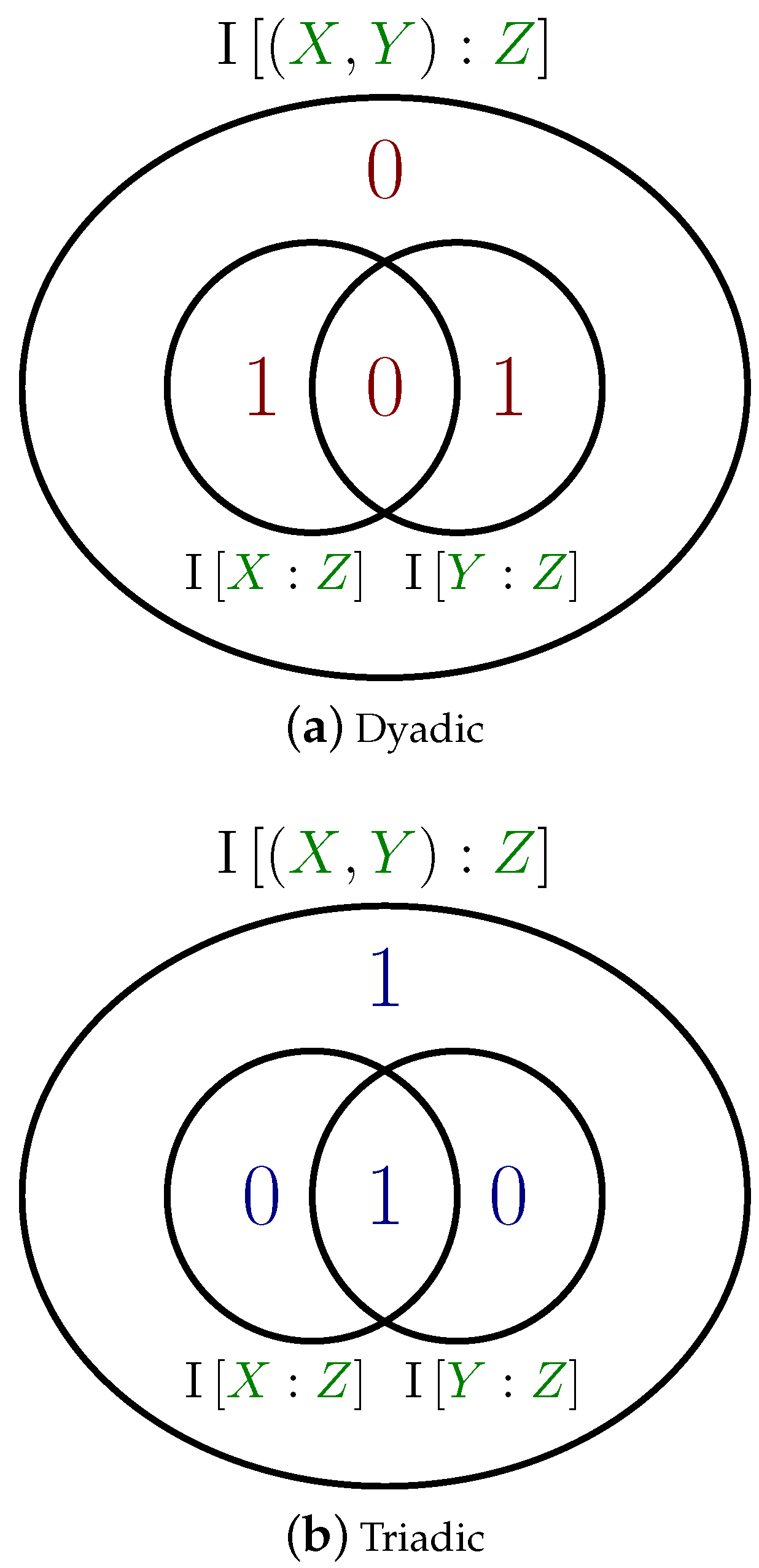

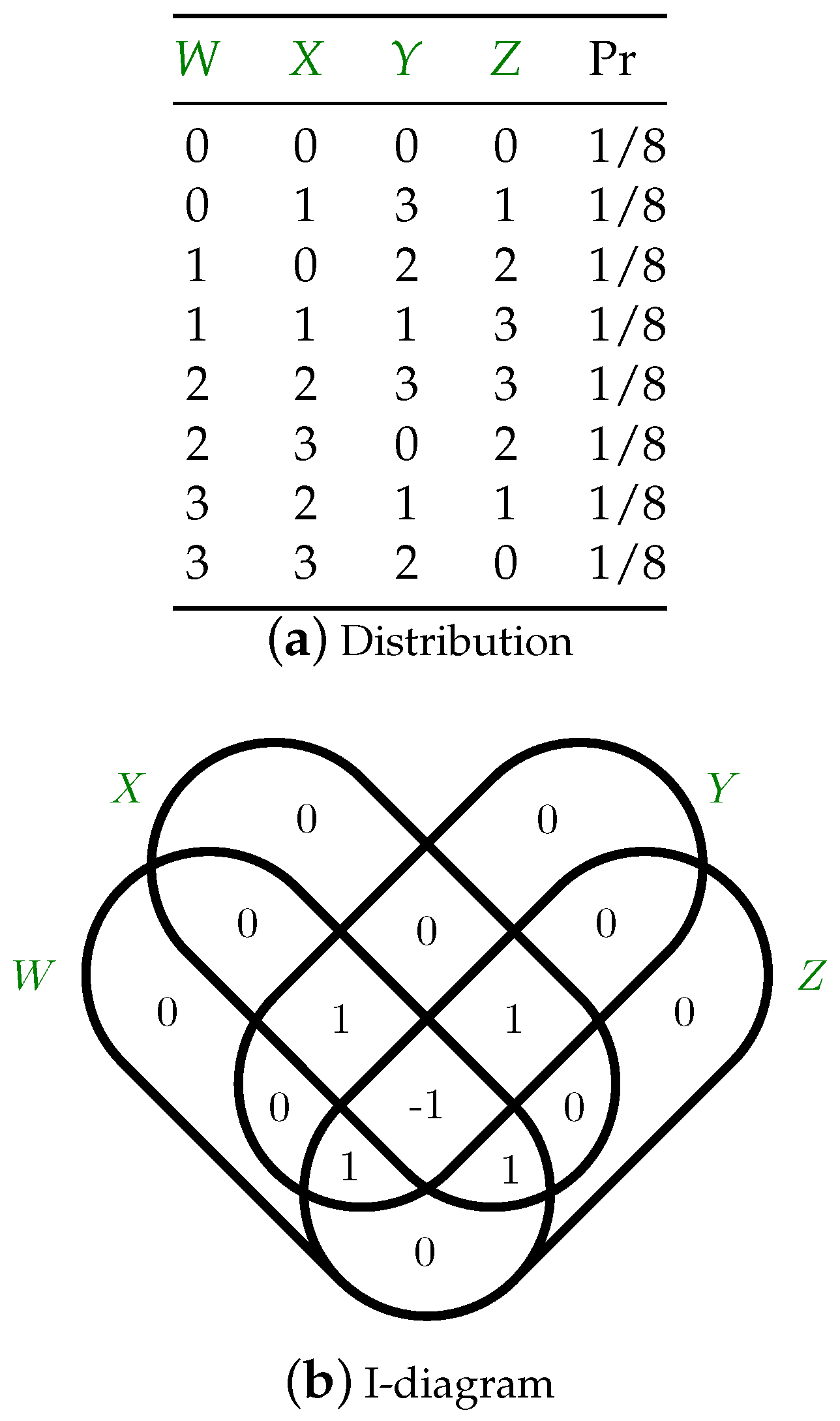

3. Discussion

4. Dyadic Camouflage and Dependency Diffusion

5. Conclusions

“The tools we use have a profound (and devious!) influence on our thinking habits, and, therefore, on our thinking abilities.” (Edsger W. Dijkstra [85])

Acknowledgments

Author Contributions

Conflicts of Interest

Appendix A. A Python Discrete Information Package

References

- Kullback, S. Information Theory and Statistics; Dover: New York, NY, USA, 1968.

- Quastler, H. Information Theory in Biology; University of Illinois Press: Urbana-Champaign, IL, USA, 1953.

- Quastler, H. The status of information theory in biology—A roundtable discussion. In Symposium on Information Theory in Biology; Yockey, H.P., Ed.; Pergamon Press: New York, NY, USA, 1958; 399p.

- Kelly, J. A new interpretation of information rate. IRE Trans. Inf. Theory 1956, 2, 185–189.

- Brillouin, L. Science and Information Theory, 2nd ed.; Academic Press: New York, NY, USA, 1962.

- Bialek, W.; Rieke, F.; De Ruyter Van Steveninck, R.R.; Warland, D. Reading a neural code. Science 1991, 252, 1854–1857.

- Strong, S.P.; Koberle, R.; de Ruyter van Steveninck, R.R.; Bialek, W. Entropy and information in neural spike trains. Phys. Rev. Lett. 1998, 80, 197.

- Ulanowicz, R.E. The central role of information theory in ecology. In Towards an Information Theory of Complex Networks; Dehmer, M., Mehler, A., Emmert-Streib, F., Eds.; Springer: Berlin, Germany, 2011; pp. 153–167.

- Grandy, W.T., Jr. Entropy and the Time Evolution of Macroscopic Systems; Oxford University Press: Oxford, UK, 2008; Volume 141.

- Harte, J. Maximum Entropy and Ecology: A Theory of Abundance, Distribution, and Energetics; Oxford University Press: Oxford, UK, 2011.

- Nalewajski, R.F. Information Theory of Molecular Systems; Elsevier: Amsterdam, The Netherlands, 2006.

- Garland, J.; James, R.G.; Bradley, E. Model-free quantification of time-series predictability. Phys. Rev. E 2014, 90, 052910.

- Kafri, O. Information theoretic approach to social networks. J. Econ. Soc. Thought 2017, 4, 77.

- Varn, D.P.; Crutchfield, J.P. Chaotic crystallography: How the physics of information reveals structural order in materials. Curr. Opin. Chem. Eng. 2015, 7, 47–56.

- Varn, D.P.; Crutchfield, J.P. What did Erwin mean? The physics of information from the materials genomics of aperiodic crystals and water to molecular information catalysts and life. Phil. Trans. R. Soc. A 2016, 374.

- Zhou, X.-Y.; Rong, C.; Lu, T.; Zhou, P.; Liu, S. Information functional theory: Electronic properties as functionals of information for atoms and molecules. J. Phys. Chem. A 2016, 120, 3634–3642.

- Kirst, C.; Timme, M.; Battaglia, D. Dynamic information routing in complex networks. Nat. Commun. 2016, 7, 11061.

- Izquierdo, E.J.; Williams, P.L.; Beer, R.D. Information flow through a model of the C. elegans klinotaxis circuit. PLoS ONE 2015, 10, e0140397.

- James, R.G.; Burke, K.; Crutchfield, J.P. Chaos forgets and remembers: Measuring information creation, destruction, and storage. Phys. Lett. A 2014, 378, 2124–2127.

- Schreiber, T. Measuring information transfer. Phys. Rev. Lett. 2000, 85, 461.

- Fiedor, P. Partial mutual information analysis of financial networks. Acta Phys. Pol. A 2015, 127, 863–867.

- Sun, J.; Bollt, E.M. Causation entropy identifies indirect influences, dominance of neighbors and anticipatory couplings. Phys. D Nonlinear Phenom. 2014, 267, 49–57.

- Lizier, J.T.; Prokopenko, M.; Zomaya, A.Y. Local information transfer as a spatiotemporal filter for complex systems. Phys. Rev. E 2008, 77.

- Walker, S.I.; Kim, H.; Davies, P.C.W. The informational architecture of the cell. Phil. Trans. R. Soc. A 2016, 374.

- Lee, U.; Blain-Moraes, S.; Mashour, G.A. Assessing levels of consciousness with symbolic analysis. Phil. Trans. R. Soc. Lond. A 2015, 373.

- Maurer, U.; Wolf, S. The intrinsic conditional mutual information and perfect secrecy. In Proceedings of the 1997 IEEE International Symposium on Information Theory, Ulm, Germany, 29 June–4 July 1997; p. 8.

- Renner, R.; Skripsky, J.; Wolf, S. A new measure for conditional mutual information and its properties. In Proceedings of the 2003 IEEE International Symposium on Information Theory, Yokohama, Japan, 29 June–4 July 2003; p. 259.

- James, R.G.; Barnett, N.; Crutchfield, J.P. Information flows? A critique of transfer entropies. Phys. Rev. Lett. 2016, 116, 238701.

- Williams, P.L.; Beer, R.D. Nonnegative decomposition of multivariate information. arXiv, 2010; arXiv:1004.2515.

- Bertschinger, N.; Rauh, J.; Olbrich, E.; Jost, J. Shared information: New insights and problems in decomposing information in complex systems. In Proceedings of the European Conference on Complex Systems 2012; Springer: Berlin, Germany, 2013; pp. 251–269.

- Lizier, J.T. The Local Information Dynamics of Distributed Computation in Complex Systems. Ph.D. Thesis, University of Sydney, Sydney, Australia, 2010.

- Ay, N.; Polani, D. Information flows in causal networks. Adv. Complex Syst. 2008, 11, 17–41.

- Chicharro, D.; Ledberg, A. When two become one: The limits of causality analysis of brain dynamics. PLoS ONE 2012, 7, e32466.

- Lizier, J.T.; Prokopenko, M. Differentiating information transfer and causal effect. Eur. Phys. J. B Condens. Matter Complex Syst. 2010, 73, 605–615.

- Cover, T.M.; Thomas, J.A. Elements of Information Theory; John Wiley & Sons: New York, NY, USA, 2012.

- Yeung, R.W. A First Course in Information Theory; Springer Science & Business Media: Berlin, Germany, 2012.

- Csiszar, I.; Körner, J. Information Theory: Coding Theorems for Discrete Memoryless Systems; Cambridge University Press: Cambridge, UK, 2011.

- MacKay, D.J.C. Information Theory, Inference and Learning Algorithms; Cambridge University Press: Cambridge, UK, 2003.

- Griffith, V.; Koch, C. Quantifying synergistic mutual information. In Guided Self-Organization: Inception; Springer: Berlin, Germany, 2014; pp. 159–190.

- Cook, M. Networks of Relations. Ph.D. Thesis, California Institute of Technology, Pasadena, CA, USA, 2005.

- Merchan, L.; Nemenman, I. On the sufficiency of pairwise interactions in maximum entropy models of networks. J. Stat. Phys. 2016, 162, 1294–1308.

- Reza, F.M. An Introduction to Information Theory; Courier Corporation: North Chelmsford, MA, USA, 1961.

- Yeung, R.W. A new outlook on Shannon’s information measures. IEEE Trans. Inf. Theory 1991, 37, 466–474.

- Bell, A.J. The co-information lattice. In Proceedings of the 4th International Workshop on Independent Component Analysis and Blind Signal Separation, Nara, Japan, 1–4 April 2003; Amari, S.M.S., Cichocki, A., Murata, N., Eds.; Springer: New York, NY, USA, 2003; Volume ICA 2003, pp. 921–926.

- Bettencourt, L.M.A.; Stephens, G.J.; Ham, M.I.; Gross, G.W. Functional structure of cortical neuronal networks grown in vitro. Phys. Rev. E 2007, 75, 021915.

- Krippendorff, K. Information of interactions in complex systems. Int. J. Gen. Syst. 2009, 38, 669–680.

- Watanabe, S. Information theoretical analysis of multivariate correlation. IBM J. Res. Dev. 1960, 4, 66–82.

- Han, T.S. Linear dependence structure of the entropy space. Inf. Control 1975, 29, 337–368.

- Chan, C.; Al-Bashabsheh, A.; Ebrahimi, J.B.; Kaced, T.; Liu, T. Multivariate mutual information inspired by secret-key agreement. Proc. IEEE 2015, 103, 1883–1913.

- James, R.G.; Ellison, C.J.; Crutchfield, J.P. Anatomy of a bit: Information in a time series observation. Chaos Interdiscip. J. Nonlinear Sci. 2011, 21, 037109.

- Lamberti, P.W.; Martin, M.T.; Plastino, A.; Rosso, O.A. Intensive entropic non-triviality measure. Physica A 2004, 334, 119–131.

- Massey, J. Causality, feedback and directed information. In Proceedings of the International Symposium on Information Theory and Its Applications, Waikiki, HI, USA, 27–30 November 1990; Volume ISITA-90, pp. 303–305.

- Marko, H. The bidirectional communication theory: A generalization of information theory. IEEE Trans. Commun. 1973, 21, 1345–1351.

- Bettencourt, L.M.A.; Gintautas, V.; Ham, M.I. Identification of functional information subgraphs in complex networks. Phys. Rev. Lett. 2008, 100, 238701.

- Bar-Yam, Y. Multiscale complexity/entropy. Adv. Complex Syst. 2004, 7, 47–63.

- Allen, B.; Stacey, B.C.; Bar-Yam, Y. Multiscale Information Theory and the Marginal Utility of Information. Entropy 2017, 19, 273.

- Gács, P.; Körner, J. Common information is far less than mutual information. Probl. Control Inf. 1973, 2, 149–162.

- Tyagi, H.; Narayan, P.; Gupta, P. When is a function securely computable? IEEE Trans. Inf. Theory 2011, 57, 6337–6350.

- Ay, N.; Olbrich, E.; Bertschinger, N.; Jost, J. A unifying framework for complexity measures of finite systems. In Proceedings of the European Conference on Complex Systems 2006 (ECCS06); European Complex Systems Society (ECSS): Paris, France, 2006.

- Verdu, S.; Weissman, T. The information lost in erasures. IEEE Trans. Inf. Theory 2008, 54, 5030–5058.

- Rényi, A. On measures of entropy and information. In Proceedings of the Fourth Berkeley Symposium on Mathematical Statistics and Probability, Oakland, CA, USA, 20 June–30 July 1960; pp. 547–561.

- Tsallis, C. Possible generalization of Boltzmann-Gibbs statistics. J. Stat. Phys. 1988, 52, 479–487.

- Abdallah, S.A.; Plumbley, M.D. A measure of statistical complexity based on predictive information with application to finite spin systems. Phys. Lett. A 2012, 376, 275–281.

- McGill, W.J. Multivariate information transmission. Psychometrika 1954, 19, 97–116.

- Wyner, A.D. The common information of two dependent random variables. IEEE Trans. Inf. Theory 1975, 21, 163–179.

- Liu, W.; Xu, G.; Chen, B. The common information of n dependent random variables. In Proceedings of the 2010 48th Annual Allerton Conference on Communication, Control, and Computing (Allerton), Monticello, IL, USA, 29 September–1 October 2010; pp. 836–843.

- Kumar, G.R.; Li, C.T.; El Gamal, A. Exact common information. In Proceedings of the 2014 IEEE International Symposium on Information Theory (ISIT), Honolulu, HI, USA, 29 June–4 July 2014; pp. 161–165.

- Lad, F.; Sanfilippo, G.; Agrò, G. Extropy: Complementary dual of entropy. Stat. Sci. 2015, 30, 40–58.

- Jelinek, F.; Mercer, R.L.; Bahl, L.R.; Baker, J.K. Perplexity—A measure of the difficulty of speech recognition tasks. J. Acoust. Soc. Am. 1977, 62, S63.

- Schneidman, E.; Still, S.; Berry, M.J.; Bialek, W. Network information and connected correlations. Phys. Rev. Lett. 2003, 91, 238701.

- Pearl, J. Causality; Cambridge University Press: Cambridge, UK, 2009.

- Williams, P.L.; Beer, R.D. Generalized measures of information transfer. arXiv, 2011; arXiv:1102.1507.

- Bertschinger, N.; Rauh, J.; Olbrich, E.; Jost, J.; Ay, N. Quantifying unique information. Entropy 2014, 16, 2161–2183.

- Harder, M.; Salge, C.; Polani, D. Bivariate measure of redundant information. Phys. Rev. E 2013, 87, 012130.

- Griffith, V.; Chong, E.K.P.; James, R.G.; Ellison, C.J.; Crutchfield, J.P. Intersection information based on common randomness. Entropy 2014, 16, 1985–2000.

- Ince, R.A.A. Measuring multivariate redundant information with pointwise common change in surprisal. arXiv, 2016; arXiv:1602.05063.

- Albantakis, L.; Oizumi, M.; Tononi, G. From the phenomenology to the mechanisms of consciousness: Integrated information theory 3.0. PLoS Comput. Biol. 2014, 10, e1003588.

- Takemura, S.; Bharioke, A.; Lu, Z.; Nern, A.; Vitaladevuni, S.; Rivlin, P.K.; Katz, W.T.; Olbris, D.J.; Plaza, S.M.; Winston, P.; et al. A visual motion detection circuit suggested by Drosophila connectomics. Nature 2013, 500, 175–181.

- Rosas, F.; Ntranos, V.; Ellison, C.J.; Pollin, S.; Verhelst, M. Understanding interdependency through complex information sharing. Entropy 2016, 18, 38.

- Ince, R.A. The Partial Entropy Decomposition: Decomposing multivariate entropy and mutual information via pointwise common surprisal. Entropy 2017, 19, 318.

- Pica, G.; Piasini, E.; Chicharro, D.; Panzeri, S. Invariant components of synergy, redundancy, and unique information among three variables. Entropy 2017, 19, 451.

- Garey, M.R.; Johnson, D.S. Computers and Intractability: A Guide to the Theory of NP-Completeness; W. H. Freeman: New York, NY, USA, 1979.

- Chen, Q.; Cheng, F.; Lie, T.; Yeung, R.W. A marginal characterization of entropy functions for conditional mutually independent random variables (with application to Wyner’s common information). In Proceedings of the 2015 IEEE International Symposium on Information Theory (ISIT), Hong Kong, China, 14–19 June 2015; pp. 974–978.

- Shannon, C.E. The bandwagon. IEEE Trans. Inf. Theory 1956, 2, 3.

- Dijkstra, E.W. How do we tell truths that might hurt? In Selected Writings on Computing: A Personal Perspective; Springer: Berlin, Germany, 1982; pp. 129–131.

- Jupyter. Available online: https://github.com/jupyter/notebook (accessed on 7 October 2017).

- James, R.G.; Ellison, C.J.; Crutchfield, J.P. Dit: Discrete Information Theory in Python. Available online: https://github.com/dit/dit (accessed on 7 October 2017).

| (a) Dyadic | | | | (b) Triadic | | | |
|---|---|---|---|---|---|---|---|
| X | Y | Z | Pr | X | Y | Z | Pr |
| 0 | 0 | 0 | 1/8 | 0 | 0 | 0 | 1/8 |
| 0 | 2 | 1 | 1/8 | 1 | 1 | 1 | 1/8 |
| 1 | 0 | 2 | 1/8 | 0 | 2 | 2 | 1/8 |
| 1 | 2 | 3 | 1/8 | 1 | 3 | 3 | 1/8 |
| 2 | 1 | 0 | 1/8 | 2 | 0 | 2 | 1/8 |
| 2 | 3 | 1 | 1/8 | 3 | 1 | 3 | 1/8 |
| 3 | 1 | 2 | 1/8 | 2 | 2 | 0 | 1/8 |
| 3 | 3 | 3 | 1/8 | 3 | 3 | 1 | 1/8 |
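
The two distributions in the table above can be checked directly. The following is a minimal sketch in plain Python, not the paper's own code: the names `DYADIC`, `TRIADIC`, `entropy`, and `entropy_profile` are illustrative assumptions, and the only input is the list of eight equiprobable outcomes copied from the table. It computes the Shannon entropy of every non-empty subset of (X, Y, Z) for both distributions.

```python
from collections import Counter
from itertools import combinations
from math import log2

# Outcomes (x, y, z) copied from the table; each has probability 1/8.
DYADIC = [(0, 0, 0), (0, 2, 1), (1, 0, 2), (1, 2, 3),
          (2, 1, 0), (2, 3, 1), (3, 1, 2), (3, 3, 3)]
TRIADIC = [(0, 0, 0), (1, 1, 1), (0, 2, 2), (1, 3, 3),
           (2, 0, 2), (3, 1, 3), (2, 2, 0), (3, 3, 1)]


def entropy(outcomes, indices):
    """Shannon entropy (bits) of the marginal over `indices`,
    assuming the listed outcomes are equally likely."""
    counts = Counter(tuple(o[i] for i in indices) for o in outcomes)
    total = len(outcomes)
    return -sum((c / total) * log2(c / total) for c in counts.values())


def entropy_profile(outcomes):
    """Entropy of every non-empty subset of the variables X, Y, Z."""
    names = "XYZ"
    return {
        "".join(names[i] for i in subset): entropy(outcomes, subset)
        for size in (1, 2, 3)
        for subset in combinations(range(3), size)
    }


dyadic_profile = entropy_profile(DYADIC)
triadic_profile = entropy_profile(TRIADIC)
for name in dyadic_profile:
    print(name, dyadic_profile[name], triadic_profile[name])
```

Under these assumptions every single variable carries 2 bits and every pair and the full triple carry 3 bits, in both distributions. Any quantity built only from these subset entropies therefore cannot tell the two apart.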

| (a) Dyadic | | | | | | | (b) Triadic | | | | | | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| X | | Y | | Z | | | X | | Y | | Z | | |
| X0 | X1 | Y0 | Y1 | Z0 | Z1 | Pr | X0 | X1 | Y0 | Y1 | Z0 | Z1 | Pr |
| 0 | 0 | 0 | 0 | 0 | 0 | 1/8 | 0 | 0 | 0 | 0 | 0 | 0 | 1/8 |
| 0 | 0 | 1 | 0 | 0 | 1 | 1/8 | 0 | 1 | 0 | 1 | 0 | 1 | 1/8 |
| 0 | 1 | 0 | 0 | 1 | 0 | 1/8 | 0 | 0 | 1 | 0 | 1 | 0 | 1/8 |
| 0 | 1 | 1 | 0 | 1 | 1 | 1/8 | 0 | 1 | 1 | 1 | 1 | 1 | 1/8 |
| 1 | 0 | 0 | 1 | 0 | 0 | 1/8 | 1 | 0 | 0 | 0 | 1 | 0 | 1/8 |
| 1 | 0 | 1 | 1 | 0 | 1 | 1/8 | 1 | 1 | 0 | 1 | 1 | 1 | 1/8 |
| 1 | 1 | 0 | 1 | 1 | 0 | 1/8 | 1 | 0 | 1 | 0 | 0 | 0 | 1/8 |
| 1 | 1 | 1 | 1 | 1 | 1 | 1/8 | 1 | 1 | 1 | 1 | 0 | 1 | 1/8 |
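
The binary expansion tabulated above makes the two dependency structures easy to read off row by row. The sketch below is illustrative only: `bits`, `expand`, `is_dyadic`, and `is_triadic` are hypothetical helper names, and the convention that X0 is the high bit and X1 the low bit of X is an assumption inferred from comparing the two tables. It checks that every dyadic row satisfies X0 = Y1, Y0 = Z1, Z0 = X1 (three pairwise-shared bits), while every triadic row satisfies X0 xor Y0 xor Z0 = 0 together with X1 = Y1 = Z1 (one three-way parity constraint plus one bit shared by all three).

```python
# Outcomes (x, y, z) with values in {0, 1, 2, 3}; each has probability 1/8.
DYADIC = [(0, 0, 0), (0, 2, 1), (1, 0, 2), (1, 2, 3),
          (2, 1, 0), (2, 3, 1), (3, 1, 2), (3, 3, 3)]
TRIADIC = [(0, 0, 0), (1, 1, 1), (0, 2, 2), (1, 3, 3),
           (2, 0, 2), (3, 1, 3), (2, 2, 0), (3, 3, 1)]


def bits(value):
    """Split a value in {0, 1, 2, 3} into (high bit, low bit)."""
    return (value >> 1) & 1, value & 1


def expand(outcome):
    """Map (x, y, z) to the bit tuple (x0, x1, y0, y1, z0, z1)."""
    return tuple(b for value in outcome for b in bits(value))


def is_dyadic(row):
    """Three pairwise-shared bits: x0 = y1, y0 = z1, z0 = x1."""
    x0, x1, y0, y1, z0, z1 = row
    return x0 == y1 and y0 == z1 and z0 == x1


def is_triadic(row):
    """Parity constraint on the high bits plus one bit common to all."""
    x0, x1, y0, y1, z0, z1 = row
    return (x0 ^ y0 ^ z0) == 0 and x1 == y1 == z1


dyadic_rows = [expand(o) for o in DYADIC]
triadic_rows = [expand(o) for o in TRIADIC]

print(all(is_dyadic(r) for r in dyadic_rows))    # every dyadic row passes
print(all(is_triadic(r) for r in triadic_rows))  # every triadic row passes
```

Applying `expand` to the outcomes in their tabulated order reproduces the rows of the binary table, which is a quick way to confirm the bit-ordering assumption.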

| Measures | Dyadic | Triadic |
|---|---|---|
| H [X,Y,Z] | 3 bit | 3 bit |
| H2 [X,Y,Z] | 3 bit | 3 bit |
| S2 [X,Y,Z] | 0.875 bit | 0.875 bit |
| I [X:Y:Z] | 0 bit | 0 bit |
| T [X:Y:Z] | 3 bit | 3 bit |
| B [X:Y:Z] | 3 bit | 3 bit |
| J [X:Y:Z] | 1.5 bit | 1.5 bit |
| II [X:Y:Z] | 0 bit | 0 bit |
| K [X:Y:Z] | 0 bit | 1 bit |
| C [X:Y:Z] | 3 bit | 3 bit |
| G [X:Y:Z] | 3 bit | 3 bit |
| F [X:Y:Z] | 3 bit | 3 bit |
| M [X:Y:Z] | 3 bit | 3 bit |
| I [X:Y↓Z] | 1 bit | 0 bit |
| I [X:Y⇓Z] | 1 bit | 0 bit |
| X [X,Y,Z] | 1.349 bit | 1.349 bit |
| R [X:Y:Z] | 0 bit | 0 bit |
| P [X,Y,Z] | 8 | 8 |
| D [X,Y,Z] | 0.761 bit | 0.761 bit |
| CLMRP [X,Y,Z] | 0.381 bit | 0.381 bit |
| TSE [X:Y:Z] | 2 bit | 2 bit |
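
Several rows of the table above follow from the joint distribution by elementary formulas, so they can be reproduced without any specialized library. The sketch below is a hedged illustration, not the paper's `dit`-based computation: the helper names `H` and `measures` are assumptions, and the row labels are read with their standard definitions (H as Shannon entropy, H2 as order-2 Rényi entropy, S2 as order-2 Tsallis entropy, I as co-information, T as total correlation, B as dual total correlation, X as extropy, P as perplexity). Measures that need optimization or more machinery, such as K and the two intrinsic mutual informations, are not attempted here.

```python
from collections import Counter
from math import log2

# Outcomes (x, y, z); each has probability 1/8.
DYADIC = [(0, 0, 0), (0, 2, 1), (1, 0, 2), (1, 2, 3),
          (2, 1, 0), (2, 3, 1), (3, 1, 2), (3, 3, 3)]
TRIADIC = [(0, 0, 0), (1, 1, 1), (0, 2, 2), (1, 3, 3),
           (2, 0, 2), (3, 1, 3), (2, 2, 0), (3, 3, 1)]


def H(outcomes, indices=(0, 1, 2)):
    """Shannon entropy (bits) of the marginal over `indices`,
    assuming the listed outcomes are equally likely."""
    counts = Counter(tuple(o[i] for i in indices) for o in outcomes)
    total = len(outcomes)
    return -sum((c / total) * log2(c / total) for c in counts.values())


def measures(outcomes):
    """A few entropy-based measures of a three-variable distribution."""
    probs = [1 / len(outcomes)] * len(outcomes)  # uniform joint pmf
    joint = H(outcomes)
    singles = sum(H(outcomes, (i,)) for i in range(3))
    pairs = sum(H(outcomes, p) for p in [(0, 1), (0, 2), (1, 2)])
    return {
        "H": joint,
        "H2": -log2(sum(p ** 2 for p in probs)),   # Renyi entropy, order 2
        "S2": 1 - sum(p ** 2 for p in probs),      # Tsallis entropy, order 2
        "I": singles - pairs + joint,              # co-information
        "T": singles - joint,                      # total correlation
        "B": pairs - 2 * joint,                    # dual total correlation
        "X": -sum((1 - p) * log2(1 - p) for p in probs),  # extropy
        "P": 2 ** joint,                           # perplexity
    }


dyadic = measures(DYADIC)
triadic = measures(TRIADIC)
for name in dyadic:
    print(f"{name:>2}  {dyadic[name]:.3f}  {triadic[name]:.3f}")
```

Under these definitions the computed values agree with the corresponding table rows and are the same for both distributions. The rows that differ in the table (K, I [X:Y↓Z], and I [X:Y⇓Z]) are exactly the ones this sketch leaves out.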

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


James, R.G.; Crutchfield, J.P. Multivariate Dependence beyond Shannon Information. Entropy 2017, 19, 531. https://doi.org/10.3390/e19100531
