Abstract

In this paper, we study Amari’s natural gradient flows of real functions defined on the densities belonging to an exponential family on a finite sample space. Our main example is the minimization of the expected value of a real function defined on the sample space. In such a case, the natural gradient flow converges to densities with reduced support that belong to the border of the exponential family. We have suggested in previous works to use the natural gradient evaluated in the mixture geometry. Here, we show that in some cases, the differential equation can be extended to a bigger domain in such a way that the densities at the border of the exponential family are actually internal points in the extended problem. The extension is based on the algebraic concept of an exponential variety. We study in full detail a toy example and obtain positive partial results in the important case of a binary sample space.

1. Introduction

For the purpose of obtaining a clear presentation of our approach to the geometry of statistical models, we start with a recap of nonparametric statistical manifold; see, e.g., the review paper [1]. However, we will shortly move to the actual setup of the present paper, i.e., the finite state space case.

Let

be a measured space of sample points x ∈ Ω. We denote by

the simplex of (probability) densities and by

the convex set of strictly positive densities. If Ω is finite, then

is the topological interior of

. We denote by

the affine space generated by

.

The set

holds the exponential geometry, which is an affine geometry, whose geodesics are curves of the form

. The set

holds the mixture geometry, whose geodesics are of the form t ↦ pt = (1 − t)p0 + tp1. A proper definition of the exponential and mixture geometry, where probability densities are considered points, requires the definition of the proper tangent space to hold the vectors representing the velocity of a curve. In both cases, the tangent space Tp at a point p is a space of random variables V with zero expected value, Ep [V] = 0. On the tangent space Tp, a natural scalar product is defined, ⟨U, V⟩p = Ep [UV], so that a pseudo-Riemannian structure is available. Note that the Riemannian structure is a third geometry, different from both the exponential and the mixture geometries. Note also that both the expected value and the covariance can be naturally extended to be defined on

.

For each lower bounded objective function

and each statistical model

, the (stochastic) relaxation of f to

is the function

,

; cf. [2]. The minimization of the stochastic relaxation as a tool to minimize the objective function has been studied by many authors [3–7].

If we have a parameterization ξ ↦ pξ of

, the parametric expression of the relaxed function is

. Under integrability and differentiability conditions on both ξ ↦ pξ and x ↦ f(x),

is differentiable, with

and

; see [1,8]. In order to properly describe the gradient flow of a relaxed random variable, these classical computations are better cast into the formal language of information geometry (see [9]) and, even better, in the language of non-parametric differential geometry [10] that was used in [11]. The previous computations suggest to take the Fisher score

as the definition of a tangent vector at the j-th coordinate curve. While the development of this analogy in the finite state space case does not require a special setup, in the non-finite state space, some care has to be taken.

In this paper, we follow the non-parametric setup discussed in [1] and, in particular, the notion of an exponential family ℇ and the identification of the tangent space at each

with a space of p-centered random variables.

The paper is organized as follows. We discuss in Section 2 the generalities of the finite state space case; in particular, we carefully define the various notions of the Fisher information matrix and natural gradient that arise from a given parameterization. In Section 3, we discuss a toy example in order to introduce the construction of an algebraic variety extending the exponential family from positive probabilities

to signed probabilities

; this construction is applied to the natural gradient flow in the expectation parameters; moreover, it is shown that this model has a variety that is ruled. The last Section 4 is devoted to the treatment of the special important case when the sample space is binary.

The present paper is a development of the paper [12], which was presented as a poster at the MaxEnt Conference 2014. While the topic is the same, the actual overlapping between the two papers is minimal and concerns mainly the generalities that are repeated for the convenience of the reader.

2. Gradient Flow of Relaxed Optimization

Let Ω be a finite set of points x = (x1, …, xn) and µ the counting measure of Ω. In this case, a density

is a probability function, i.e.,

, such that

.

Let

be a set of random variables, such that, if

is constant, then c1 = ⋯ = cd = 0; for instance consider

such that

, j = 0,…,d, and

is a linear basis. We say that

is a set of affinely independent random variables. If

is a linear basis it is affinely independent if and only if {1, T1, …, Td} is a linear basis.

We consider the statistical model ℇ whose elements are uniquely identified by the natural parameters θ in the exponential family with sufficient statistics

namely:

see [13].

The proper convex function

,

is the cumulant generating function of the sufficient statistics T, in particular,

Moreover, the entropy of pθ is:

The mapping ∇ψ is one-to-one onto the interior M° of the marginal polytope, that is the convex span of the values of the sufficient statistics M = {T (x)|x ∈ Ω}. Note that no extra condition is required, because on a finite state space, all random variables are bounded. Nonetheless, even in this case, the proof is not trivial; see [13].

Convex conjugation applies [14] (Section 25) with the definition:

The concave function θ ↦ η · θ − ψ(θ) has divergence mapping θ ↦ η − ∇ψ(θ), and the equation η = ∇ψ(θ) has a solution if and only if η belongs to the interior M° of the marginal polytope. The restriction

is the Legendre conjugate of ψ, and it is computed by:

The Legendre conjugate ϕ is such that ∇ϕ = (∇ψ)−1, and it provides an alternative parameterization of ℇ with the so-called expectation or mixture parameter η = ∇ψ(θ),

While in the θ parameters, the entropy is H(pθ) = ψ(θ) − θ · ∇ψ(θ), in the η parameters, the ϕ function gives the negative entropy:

.

Proposition 1.

- Hess ϕ (η) = (Hess ψ(θ))−1 when η = ∇ψ(θ).

- The Fisher information matrix of the statistical model given by the exponential family in the θ parameters is Ie(θ) = Covpθ (∇ log pθ, ∇ log pθ) = Hess ψ(θ).

- The Fisher information matrix of the statistical model given by the exponential family in the η parameters is Im(θ) = Covpη (∇ log pη, ∇ log pη) = Hess ϕ (η).

Proof. Derivation of the equality ∇ ϕ = (∇ψ)−1 gives the first item. The second item is a property of the cumulant generating function ψ. The third item follows from Equation (1). □

2.1. Statistical Manifold

The exponential family ℇ is an elementary manifold in either the θ or the η parameterization, named respectively exponential or mixture parameterization. We discuss now the proper definition of the tangent bundle T ℇ.

Definition 1 (Velocity). If I ∋ t ↦ pt, I open interval, is a differentiable curve in ℇ, then its velocity vector is identified with its Fisher score:

The capital D notation is taken from differential geometry; see the classical monograph [15].

Definition 2 (Tangent space). In the expression of the curve by the exponential parameters, the velocity is:

that is it equals the statistics whose coordinates are in the basis of the sufficient statistics centered at pt. As a consequence, we identify the tangent space at each p ∈ ℇ with the vector space of centered sufficient statistics, that is:

In the mixture parameterization of Equation (1), the computation of the velocity is:

The last equality provides the interpretation of

as the coordinate of the velocity in the conjugate vector basis Hess ϕ (η(t)) (T − η(t)), that is the basis of velocities along the η coordinates.

In conclusion, the first order geometry is characterized as follows.

Definition 3 (Tangent bundle T ℇ). The tangent space at each p ∈ ℇ is a vector space of random variables Tpℇ = Span (Tj − Ep [Tj]|j = 1, …, d), and the tangent bundle T ℇ = {(p, V)|p ∈ ℇ, V ∈ Tp ℇ}, as a manifold, is defined by the chart:

Proposition 2.

- If V = v · (T − η) ∈ Tpηℇ, then V is represented in the conjugate basis as:

- The mapping (Hess ϕ (η))−1 maps the coordinates v of a tangent vector V ∈ Tpη ℇ with respect to the basis of centered sufficient statistics to the coordinates v* with respect to the conjugate basis.

- In the θ parameters, the transformation is v ↦ v* = Hess ψ(θ)v.

Remark 1. In the finite state space case, it is not necessary to go on to the formal construction of a dual tangent bundle, because all finite dimensional vector spaces are isomorphic. However, this step is compulsory in the infinite state space case, as was done in [1]. Moreover, the explicit construction of natural connections and natural parallel transports of the tangent and dual tangent bundle is unavoidable when considering the second-order calculus, as was done in [1,8], in order to compute Hessians and implement Newton methods of optimization. However, the scope of the present paper is restricted to a basic study of gradient flows; hence, from now on, we focus on the Riemannian structure and disregard all second-order topics.

Proposition 3 (Riemannian metric). The tangent bundle has a Riemannian structure with the natural scalar product of each Tpℇ, ⟨V, W⟩p = Ep [VW]. In the basis of sufficient statistics, the metric is expressed by the Fisher information matrix I(p) = Covp (T, T), while in the conjugate basis, it is expressed by the inverse Fisher matrix I−1(p).

Proof. In the basis of the sufficient statistics, V = v · (T − Ep [T]), W = w · (T − Ep [T]), so that:

where I(p) = Covp (T, T) is the Fisher information matrix.

If p = pθ = pη, the conjugate basis at p is:

so that for elements of the tangent space expressed in the conjugate basis, we have V = v* · I−1(p) (T − Ep [T]), W = w* · I−1(p) (T − Ep [T]); thus:

2.2. Gradient

For each C1 real function

, its gradient is defined by taking the derivative along a C1 curve I ↦ p(t), p = p(0), and writing it with the Riemannian metrics,

If

is the expression of F in the parameter θ and t ↦ θ (t) is the expression of the curve, then

, so that at p = pθ (0), with velocity

, so that we obtain the celebrated Amari’s natural gradient of [16]:

If

is the expression of F in the parameter η and t ↦ η (t) is the expression of the curve, then

so that at p = pη(0), with velocity

,

We summarize all notions of gradient in the following definition.

Definition 4 (Gradients).

- The random variable ∇F (p) uniquely defined by Equation (9) is called the (geometric) gradient of F at p. The mapping ∇F : ℇ ∋ p ↦ ∇F (p) is a vector field of T ℇ.

- The vector of Equation (10) is the expression of the geometric gradient in the θ in the basis of sufficient statistics, and it is called the natural gradient, while, which is the expression in the conjugate basis of the sufficient statistics, is called the vanilla gradient.

- The vector of Equation (10) is the expression of the geometric gradient in the η parameter and in the conjugate basis of sufficient statistics, and it is called the natural gradient, while, which is the expression in the basis of sufficient statistics, is called the vanilla gradient.

Given a vector field of ℇ, i.e., a mapping G defined on ℇ, such that G(p) ∈ Tp ℇ, which is called a section of the tangent bundle in the standard differential geometric language, an integral curve from p is a curve I ∋ t ↦ p(t), such that p(0) = p and

. In the θ parameters, G(pθ) = Ĝ(θ) · (T − ∇ψ(θ)), so that the differential equation is expressed by

. In the η parameters,

, and the differential equation is

.

Definition 5 (Gradient flow). The gradient flow of the real function F : ℇ is the flow of the differential equation, i.e.,

. The expression in the θ parameters is, and the expression in the η parameters is.

The cases of gradient computation we have discussed above are just a special case of a generic argument. Let us briefly study the gradient flow in a general chart f : ζ ↦ pζ. Consider the change of parametrization from ζ to θ,

and denote the Jacobian matrix of the parameters’ change by J(ζ). We have:

and the ζ coordinate basis of the tangent space

consists of the components of the gradient with respect to ζ,

It should be noted that in this case, the expression of the Fisher information matrix does not have the form of a Hessian of a potential function. In fact, the case of the exponential and the mixture parameters point to a special structure, which is called the Hessian manifold; see [17].

2.3. Gradient Flow in the Mixture Geometry

From now on, we are going to focus on the expression of the gradient flow in the η parameters. From Definition 4, we have:

where I(p) = Covp (T, T). As p ↦ Covp (T, T) is the restriction to the simplex of a quadratic function, while p ↦ η is the restriction to the exponential family ℇ of a linear function, in some cases, we can naturally consider the extension of the gradient flow equation outside M°. One notable case is when the function F is a relaxation of a non-constant state space function f : Ω → ℝ, as it is defined in, e.g., [3].

Proposition 4. Let f : Ω → ℝ, and let F (p) = Ep [f] be its relaxation on p ∈ ℇ. It follows:

- ∇F (p) is the least square projection of f onto Tpℇ, that is:

- The expressions in the exponential parameters θ are, respectively.

- The expressions in the mixture parameters η are and , respectively.

Proof. On a generic curve through p with velocity V, we have

. If V ∈ Tpℇ, we can orthogonally project f to get

.

Remark 2. Let us briefly recall the behavior of the gradient flow in the relaxation case. Let θn, n = 1, 2, …, be a minimizing sequence for, and let be a limit point of the sequence. It follows that has a defective support, in particular; see [18,19]. For a proof along lines coherent with the present paper, see [20] (Theorem 1). It is found that the support is exposed, that is is a face of the marginal polytope M = con {T (x)|x ∈ Ω}. In particular,

belongs to a face of the marginal polytope M. If a is the (interior) orthogonal of the face, that is a · T (x) + b ≥ 0 for all x ∈ Ω and a · T (x) + b = 0 on the exposed set, then on the face, so that. If we extend the mapping η ↦ Covη (f, T) on the closed marginal polytope M to be the limit of the vector field of the gradient on the faces of the marginal polytope, we expect to see that such a vector field is tangent to the faces. This remark is further elaborated below in the binary case.

2.4. The Saturated Model

A case of special tutorial interest is obtained when the exponential family contains all probability densities, that is when

. This case has been treated by many authors; here, we use the presentation of [21].

It is convenient to recode the sample space as Ω = {0, …, d}, where x = 0 is a distinguished point. If X is the identity on Ω, we define the sufficient statistics to be the indicator functions of points Tj = (X = j), j = 1, …, d. The saturated exponential family consists of all of the positive densities written as:

where:

Note that, in this case, the expectation parameter ηj = E ((X = j)) is the probability of case x = j and the marginal polytope is the probability simplex Δd.

The gradient mapping is:

the inverse gradient mapping is defined for η ∈]0, 1[d by:

the negative entropy (Legendre conjugate) is:

the η parameterization (1) of the probability is:

Remark 3. The previous equation prompts three crucial remarks:

- The expression of the probability in the η parameters is a normalized monomial in the parameters.

- The expression continuously extends the exponential family to the probabilities in.

- The expression actually is a polynomial parameterization of the signed densities.

We proceed to approach the three issues above. The Hessian functions are:

The matrix Hess ψ(θ) is the Fisher information matrix I(p) of the exponential family at p = pθ, and the matrix Hess ϕ (η) is the inverse Fisher information matrix I−1(p) at p = pη. It follows that the natural gradient of a function η ↦ h(η) will be:

whose behavior depends on the following theorem; see [21] (Proposition 3).

Proposition 5.

- The inverse Fisher information matrix I(p)−1 is zero on the vertexes of the simplex, only.

- The determinant of the inverse Fisher information matrix I(p)−1 is:

- The determinant of the inverse Fisher information matrix I(p)−1 is zero on the borders of the simplex, only.

- On the interior of each facet, the rank of the inverse Fisher information matrix I(p)−1 is (n − 1), and the (n − 1) linear independent column vectors generate the subspace parallel to the facet itself.

A generic statistical model can be seen as a submanifold of the saturated model, so that the form of the gradient in the submanifold is derived according to the general results in differential geometry. We do not do that here, and we switch to some very specific examples.

3. Toric Models: A Tutorial Example

Exponential families whose sample space is an integer lattice, such as finite subsets of ℤ2 or {+1, −1}d, have special algebro-combinatorial features that fall under the name of algebraic statistics. Seminal papers have been [22,23]. Monographs on the topic are [24–26]. The book [27] covers both information geometry and algebraic statistics.

We do not assume the reader has detailed information about algebraic statistics. In this section, we work on a toy example intended to show both the basic mechanism of algebraic statistics and how the algebraic concepts are applied to the gradient flow problem as it was described in the previous section.

First, we give a general definition of the object on which we focus. A toric model is an exponential family, such that the orthogonal space of the space generated by the sufficient statistics and the constant has a vector basis of integer-valued random variables. We consider this example:

which corresponds to a variation of the classical independence model, where the design corresponds to the vertices of a square. It this example we moved the point {4} from (1, 1) to (2, 1).

T3 is an integer-valued vector basis of the orthogonal space Span (1, T1, T2)⊥.

For the purpose of the generalization to less trivial examples, it should be noted that

, that is (−2, 1, 2,−1) = (0, 1, 2, 0) − (2, 0, 0, 1). The couple

connects the lattice defined by:

Such a set of generators is called a Markov basis of the lattice; see [22]. Algorithms are available to compute such a set of generators and are implemented, for instance, in the software suite 4ti2; see [28].

The sample space can be identified with the value of the sufficient statistics, hence with a finite subset of ℚ2 ⊃ Ω, Ω = {(0, 0), (0, 1), (1, 0), (2, 1)}; see Figure 1. Given a finite subset of ℝd, it is a general algebraic fact that there exists a filtering set of monomial functions that is a vector basis of all real functions on the subset itself; see an exposition and the applications to statistics in [24] or [27]. In our case, the monomial basis is 1, T1, T2, T1T2, and we define the matrix of the saturated model to be:

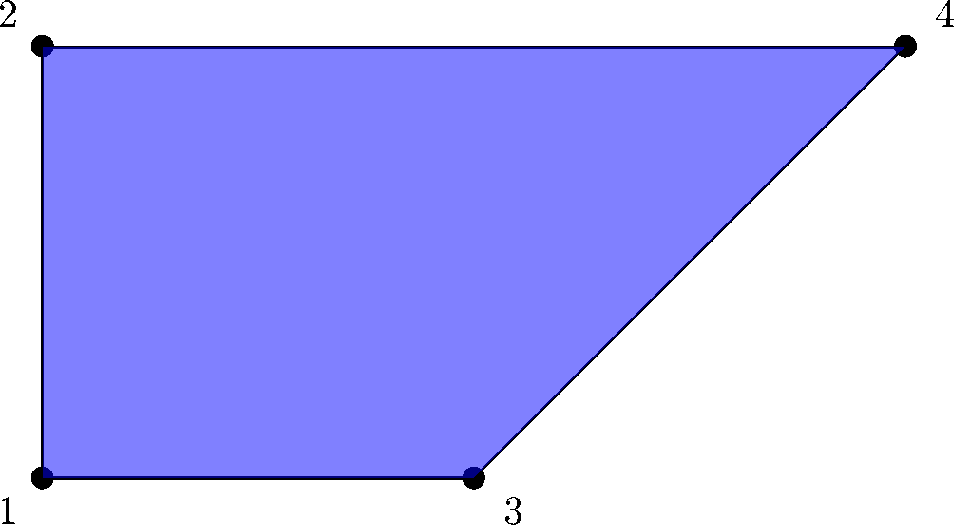

Figure 1.

Marginal polytope of the exponential family in Equations (12) and (13). The coordinates of the vertices are given by (T1, T2).

The matrix A one-to-one maps probabilities into expected values,

and vice versa,

On Model (13), the (positive) probabilities are constrained by the model:

If we introduce the parameters ζ1 = exp (θ1), ζ2 = exp (θ2), the model is shown to be a (piece of an) algebraic variety, that is a set described by the rational parametric equations:

The peculiar structure of the toric model is best seen by considering the unnormalized probabilities:

In algebraic terms, the homogeneous coordinates [q1 : q2 : q3 : q4] belong to the projective space P3. Precisely, the (real) projective space P3 is the set of all non-zero points of ℝ4 together with the equivalence relation

if, and only if,

, k ≠ 0. The domain of unnormalized signed probabilities as projective points is the open subset

of ℙ3 where q1 + q2 + q3 + q4 ≠ 0. On this set, we can compute the normalization:

where *ℇ is the affine space generated by the simplex Δ3. Notice that this embedding produces a number of natural geometrical structures on *ℇ.

Because of the form of (13), a positive density p belongs to that family if, and only if, log p ∈ Span (1, T1, T2), which, in turn, is equivalent to log p ⊥ T3. We can rewrite the orthogonality as:

Dropping the log function in the last expression, we observe that the positive probabilities described by either Equation (17) with θ1, θ2 ∈ ℝ or Equation (18) with ζ1, ζ2 ∈ ℝ> are equivalently described by the equations:

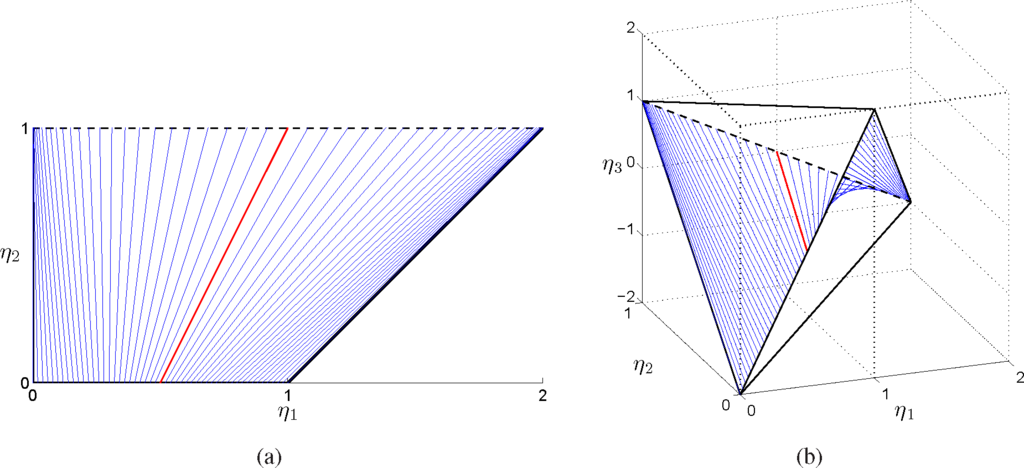

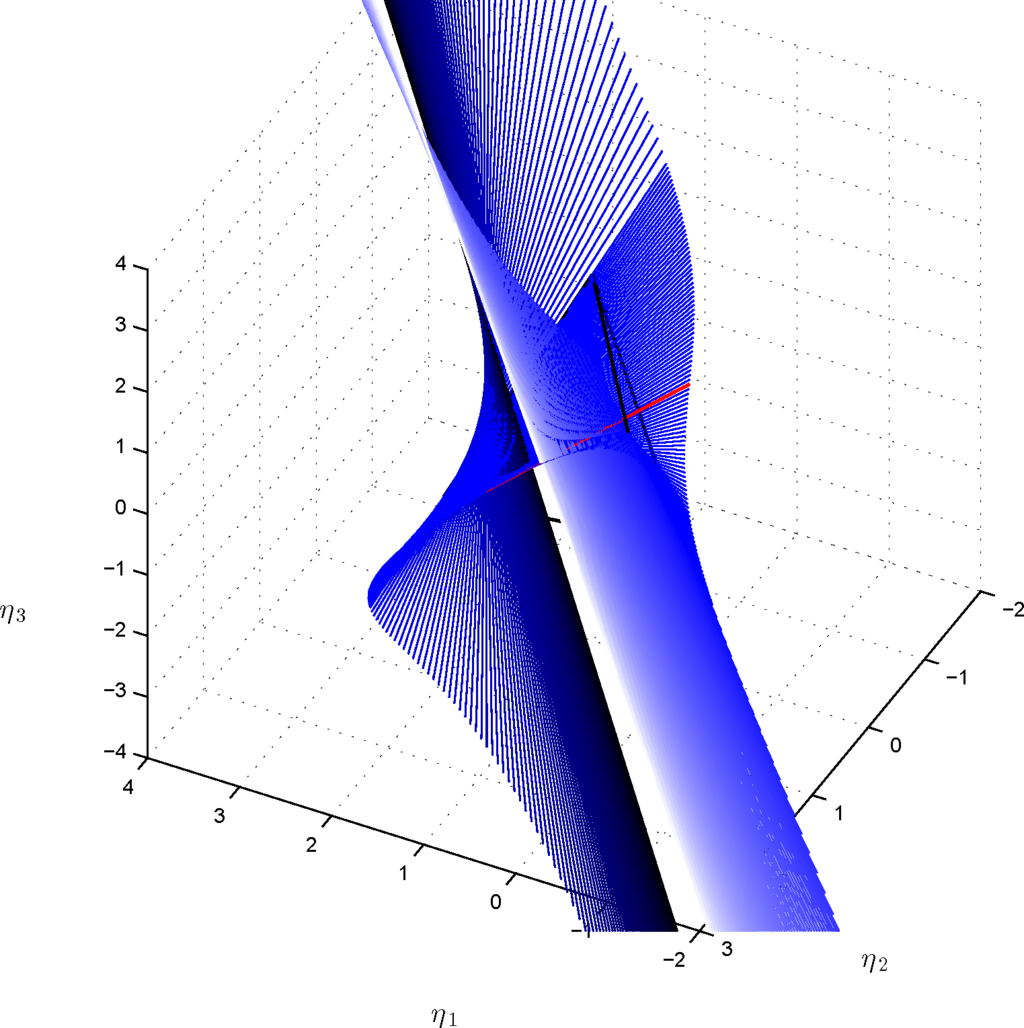

Equation (21) identifies a surface within the probability simplex Δ3, which is represented in Figure 2 by the triangularization of a grid of points that satisfy the invariant.

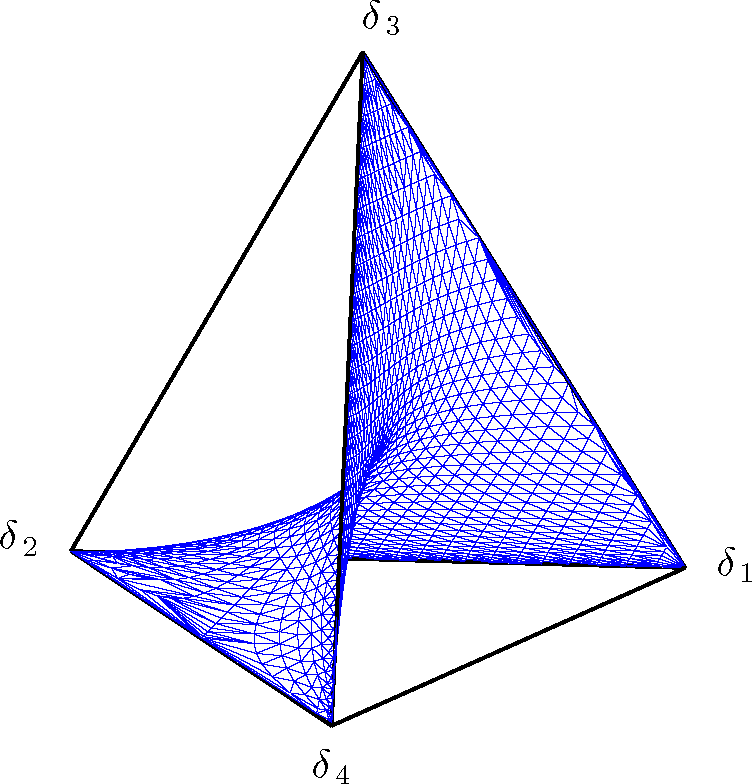

Figure 2.

Representation of the exponential family in Equations (12) and (13) as a surface that intersects the probability simplex ∆3. The surface is obtained by the triangularization of a grid of points that satisfy the invariant in Equation (21).

By choosing a basis for the space orthogonal to Span (1, T1, T2)⊥, we can embed the marginal polytope of Figure 1 into the associated full marginal polytope. By expressing probabilities as a function of the expectation parameters, Equation (21) identifies a relationship between η1, η2 and the expected values of the chosen basis for the orthogonal space. This corresponds to an equivalent invariant in the expectation parameters, which, in turn, identifies a surface in the full marginal polytope.

For instance, consider the full marginal polytope parametrized by η = (η1, η2, η3), with

, which corresponds to the choice of T3 as a basis for the space orthogonal to the span of the sufficient statistics of the model, together with the constant 1, as in Equation (12). We introduce the following matrix:

and similarly to Equation (15), we use the B matrix to one-to-one map probabilities into expected values, that is:

and:

Then, by expressing probabilities as a function of the expectation parameters in Equation (21), we obtain the following invariant in η associated with the model:

From the linear relationship between probabilities and expectation probabilities, we know that on the interior of the full marginal polytope, there exists a unique η3 which can be computed as a function of the other expectation parameters. Solving Equation (25) for η3 allows one to express explicitly the value of η3 given (η1, η2) and represent the surface associated with the invariant in the full marginal polytope. However, the cubic polynomial in Equation (25) in general admits three roots. The unique value of η3 can be obtained from the roots of the cubic polynomial, by imposing that η3 must be real and belong to the full marginal polytope given by Conv {(T1(x), T2(x), T3(x))|x ∈ Ω}.

We remind that the determinant Δ associated with the cubic function in Equation (25) in the η3 variable:

with:

is given by:

For Δ = 0, the polynomial has a real root with multiplicity equal to three; for Δ < 0, we have one real root and two complex conjugates roots, while for Δ > 0, there exist three real roots. The three roots of the polynomial as a function of the coefficients are given by:

for k ∈ {1, 2, 3}, with:

and:

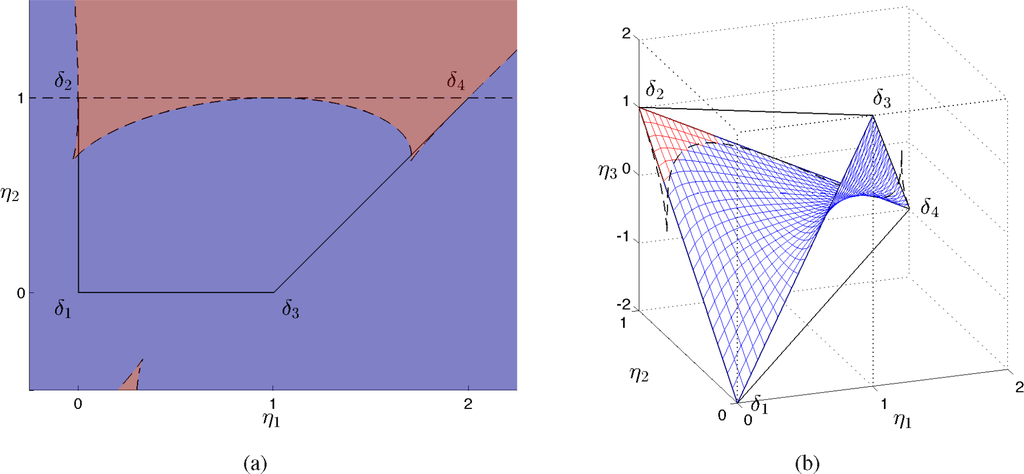

In Figure 3(a), we represent in blue the region of the space (η1, η2) where Δ < 0, in red where Δ > 0, and the points where Δ = 0 with a dashed line. For Δ < 0, the only real root is η3,1, which identifies the blue surface in the full marginal polytope in Figure 3(b). For Δ > 0, it is easy to verify that only η3,2 belongs to the interior of the full marginal polytope parametrized by (η1, η2, η3), since it satisfies the inequalities given by the facets of the marginal polytope, and is represented in Figure 3(b) by the red surface. Finally, the three real roots coincide for Δ = 0, that is, for η2 = 1, and where:

Figure 3.

Marginal polytope of the exponential family in Equations (12) and (13) (a). The dashed lines correspond to the points where ∆ = 0, where ∆ is the discriminant in Equation (31); over the red regions ∆ > 0 and over the blue regions ∆ < 0. Representation of the exponential family as a surface in the full marginal polytope parametrized by (η1, η2, η3) (b). The blue surface is given by the unique real root η3,1 in Equation (32); the red surface corresponds to the unique real root η3,2, which belongs to the full marginal polytope; over the dashed lines, which have been computed solving Equation (40) numerically, Equation (26) admits a real root with multiplicity equal to three.

In the polynomial ring ℚ [p1, p2, p3, p4], the model ideal:

consists of all the polynomials of the form:

The algebraic variety of

uniquely extends the exponential family outside the positive octant. In the language of commutative algebra, it is the real Zariski closure of the exponential family model, cf. [29]. It is a notable example of toric variety. The general theory is in the monograph [30], and the applications to statistical models were first discussed in [31,32].

Let us discuss in some detail the parameterization of the toric variety as the submanifold of ℝ4 defined by Equations (20) and (21). The Jacobian matrix is:

It has rank one, that is, there is a singularity, if, and only if,

This is equivalent to

, which is a subspace of dimension two, whose intersection with Equation (20), is a line

in the affine space *ℇ = {p ∈ ℝ4|p1 + p2 + p3 + p4 = 1}. This (double) critical line intersects the simplex along the edge δ2 ↔ δ4. Outside

, that is in the open complement set, the equations of the toric variety are locally solvable in two among the pi’s under the condition that the corresponding minor is not zero. To have a picture of what this critical set looks like, let us intersect our surface with the plane p3 = 0. On the affine space p1 + p2 + p4 = 1 we have

, that is the union of the double line

with the line p4 = 0.

In the following, we derive a parameterization based on an algebraic argument, the Bézout theorem. In fact, it is remarkable that the cubic surface defined by Equations (20) and (21) is a well known example of ruled surface, see Exercise 5.8.15 in [33]. In fact, the singular line is a double line, so that the intersection of the cubic surface with any plane through the singular line is of degree 1 = 3 − 2, by the Bézout theorem, and thus, it is a line.

The line

is said to be double because the polynomial

belongs to the ideal generated by

and

. Let us consider the sheaf of planes through the singular line defined for each [α : β] ∈ P1 by the equations:

Let us intersect each plane

of the sheaf with the model variety

by solving the system of equations:

On the critical line

, a generic point is parameterized as p(τ, 0) = (0, τ, 0, 1 − τ), which satisfies Equation (42) for τ ∈ ℝ. If 0 ≤ τ ≤ 1, then p(τ, 0) belongs to the edge δ2 ↔ δ4.

As the critical line is double and the intersection of the model variety with the plane of the sheaf is a cubic curve, we expect the remaining part to be of degree 3 − 2 = 1, that is to be a line. Assume first α, β ≠ 0. Outside the critical line, as p1, p3 are not both zero and αp1 + βp3 = 0, then αp1 = − βp3 ≠ 0. It follows (αp1)2 = (βp3)2≠ 0; hence:

We have found that for α, β ≠ 0, the intersection between the plane

and the model variety

is the union of the critical line

and the line of equations:

This line intersects the critical line where:

that is in the point:

The same equations hold in the previously excluded case αβ = 0.

Positive values of components 1 and 3 of the probability are obtained in Equation (44) for αβ < 0 and βt > 0, say α < 0, β > 0, t > 0. In this case, we have for component 2:

which is positive if t < (β − α)−1. The same condition applies to component 4. As

, we can always assume β > 0 and β − α = 1 that is, α = β − 1; hence β < 1. The parameterization of the positive probabilities in the model becomes:

For example, with

, we have:

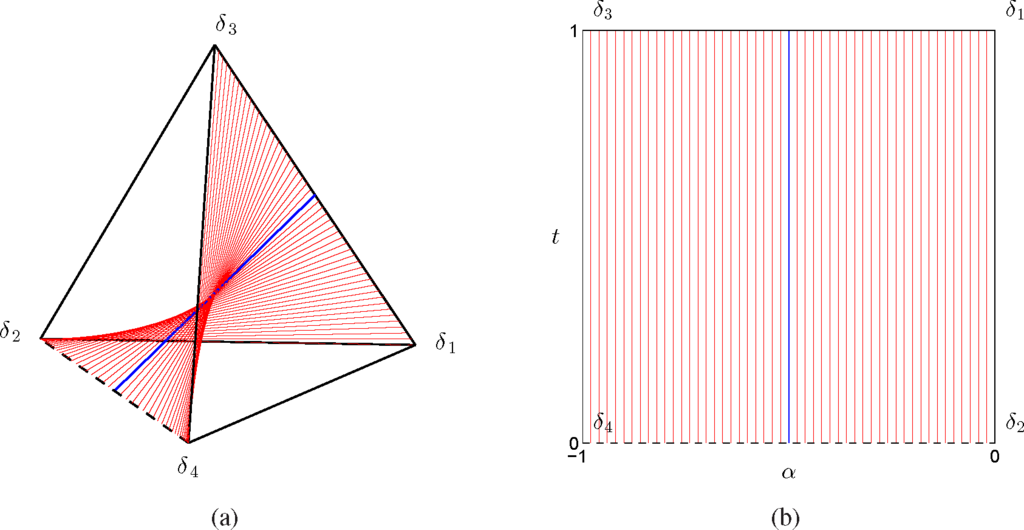

In Figure 4(a), we represented the surface associated with the invariant of Equation (21) as a ruled surface in the probability simplex, according to Equations (45), where the blue line corresponds to the case

. The ruled surface corresponds to the surface in Figure 2 that was approximated by the triangularization of a grid of points satisfying the invariant. In Figure 4(b), we represent the same lines of Figure 4(a) in the chart (α, t).

Figure 4.

Representation of the exponential family in Equations (12) and (13) as a ruled surface in the probability simplex (a) and in the parameter space (α, t) (b). The dashed line corresponds to the critical edge δ2 ↔ δ4 and the blue line to the case

.

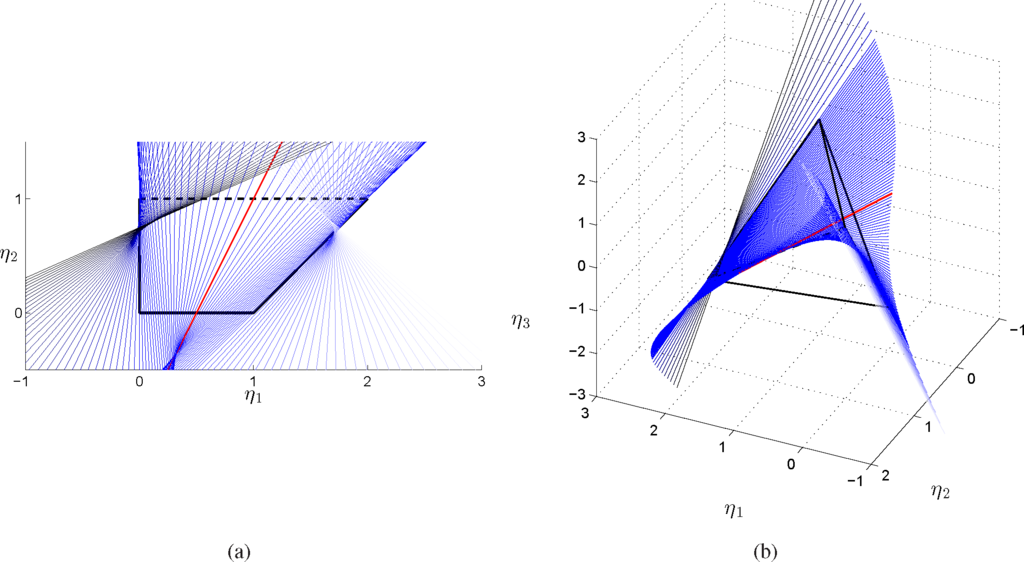

Notice that the dependence on (α, t) is rational. In Figure 5(a), the ruled surface has been represented in the full marginal polytope, while in Figure 5(a), the lines have been projected over the marginal polytope.

Figure 5.

Representation of the exponential family in Equations (12) and (13) as a ruled surface in the marginal polytope (η1, η2) (a) and in the full marginal polytope parametrized by (η1, η2 η3) (b) The dashed line corresponds to the critical line δ2 ↔ δ4

Let us invert Equation (45) to obtain the corresponding chart p ↦ (β, t). From p1 and p3, we obtain β = p1/(p1 + p3). As p2 + p4 = 1 − t, we have the chart:

It is remarkable that the model depends on the probability restricted to {1, 3}; similarly, the expectation parameters depend on p1 and p3 only.

From the theory of exponential families, we know that the gradient mapping:

is one-to-one from ℝ2 onto the interior of the marginal polytope M; see Figure 3(b). The equations:

are uniquely solvable for (η1, η2) ∈ M°. We study the local solvability in ζ1, ζ2 of:

that is,

The Jacobian is:

Instead, if we use the variable η3, from Equations (16) and (41), it is possible to derive the equation of the model variety in the η1, η2, η3 parameters. From Equation (18), we have:

Let us solve for the ζ, that is:

There is another way to derive the model constraint in the η. In the example, the sample space has four points; the monomials 1, T1, T2, T1T2 are a vector basis of the linear space of the columns of the matrix A, in particular T3 is a linear combination:

It follows that:

3.1. Border

Let us consider the points in the model variety that are probabilities, that is,

From the equation above, we see that single zeros are not allowed, that is to say there are no intersections between the model in Equation (49) and the open facets of the probability simplex. We now consider the full marginal polytope obtained by adding the sufficient statistics T1T2, and parametrized by (η1, η2, η12). By Equation (16), the marginal polytope is represented by the inequalities:

which is a convex set with vertexes (0, 0, 0), (0, 1, 0), (1, 0, 0), (2, 1, 2), which corresponds to the full marginal polytope associated to the sufficient statistics {T1, T2, T1T2}. As the critical set is the edge δ2 ↔ δ4 in the p space, it is the edge (0, 1, 0) ↔ (2, 1, 2) in the η space.

We have the following possible models on the border of the probability simplex and on the border of the full marginal polytope, where the values for η1 and η2 are obtained from Equation (15).

That is, the domains that can be support of probabilities in the algebraic model are the faces of the marginal polytope. This is general; see [20,34].

3.2. Fisher Information

Let us consider the covariance matrix of the sufficient statistics. Let us denote by A|12 the block of the two central columns in A in Equation (14) and by p the row vector of probabilities. Then, the variance matrix is:

On each of the cases of probabilities supported by a single point, the matrix p − pT p is zero; hence, the covariance matrix is zero. In each of the cases where the probability is supported by a facet, say {1, 2}, the matrix p − pT p reduces to the corresponding block, and the covariance matrix is:

The space generated by the covariance matrix is ℚ (0, 1), that is the affine space that contains the facets itself. Analogous results hold for each facet, and this result is general.

We note that the determinant of the covariance matrix is a polynomial of degree six in the indeterminates p1, p2, p3. This polynomial is zero on each facet.

The η parameters can be given as a function of either θ or ζ. We have:

We know from the theory of exponential families that the mapping:

is one-to-one. We look for an algebraic inversion of the equations:

Gauss elimination produces a linear system in ζ1, ζ2 with coefficients that are polynomials in η1, η2, η3 to be considered with the implicit equation derived from

. The system is:

3.3. Extension of the Model

In this subsection, we study an extension to signed probabilities of the exponential family in Equations (12) and (13) based on the representation of the statistical model as a ruled surface in the probability simplex. Our motivation for such an analysis is the study of the stability of the critical points of a gradient field in the η parameters, in particular when the critical points belong to the boundary of the model. Indeed, by extending the gradient field outside the marginal polytope, we can identify open neighborhoods for critical points on the boundary of the polytope, which allow one to study the convergence of the differential equations associated with the gradient flows, for instance by means of Lyapunov stability.

In the following, we describe more in detail how the extension can be obtained. Let a be a point along the edge δ2 ↔ δ4 of the full marginal polytope parametrized by (η1, η2, η3) and b the coordinates of the corresponding point over δ1 ↔ δ3 obtained by intersecting the line of the ruled surface through a with the edge δ1 ↔ δ3. The values of the η2 coordinate for a and b are one and zero, respectively. The other coordinates of b depend on those of a though α. First, we obtain the values of the η3 coordinates as a function of the η1 coordinate. For a, we find the equation of the line to which δ2 ↔ δ4 belongs, given by:

from which we obtain η3 = 1 − η1. Similarly, for the η3 coordinate of b, we consider the line through δ1 ↔ δ3, that is:

which gives us η3 = 4η1 − 2. Finally, for the η1 coordinate, we use Equations (44). In a, since t = 0 and p1 = p3 = 0, then

and

. From Equation (24), it follows that:

Similarly, for b, we have p2 = p4 = 0 and t = 1, so that p1 = α + 1 and p3 = −α. From Equation (24), it follows that:

As a result, the coordinates of a and b both depend on α as follows,

The ruled surface in the full marginal polytope is given by the lines through a and b described by the following implicit representation, for −1 < α < 1 and 0 < t < 1,

The ruled surface can be extended outside the marginal polytope by taking values of α, t ∈ ℝ and considering the set of lines through a and b for different values of α. For α → ±∞, the η1 coordinate of b tends to ∓∞, while the η1 of a tends to one. For α → ±∞, the ruled surface admits the same limit given by the line parallel to δ1 ↔ δ3 passing through (1, 1, 0). The surface intersects the interior of the marginal polytope for t ∈ (0, 1) and α ∈ (−1, 0). Moreover, the surface intersects the critical line twice, for t = 0, α ∈ [−1, 0] and for t = 0, α ∉ [−1, 0].

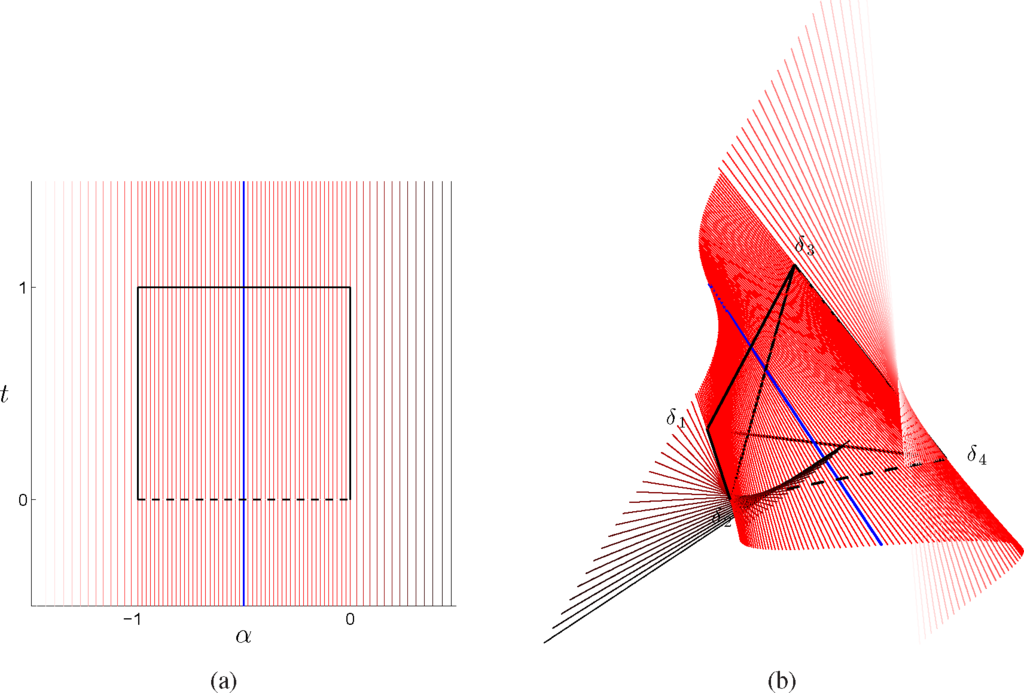

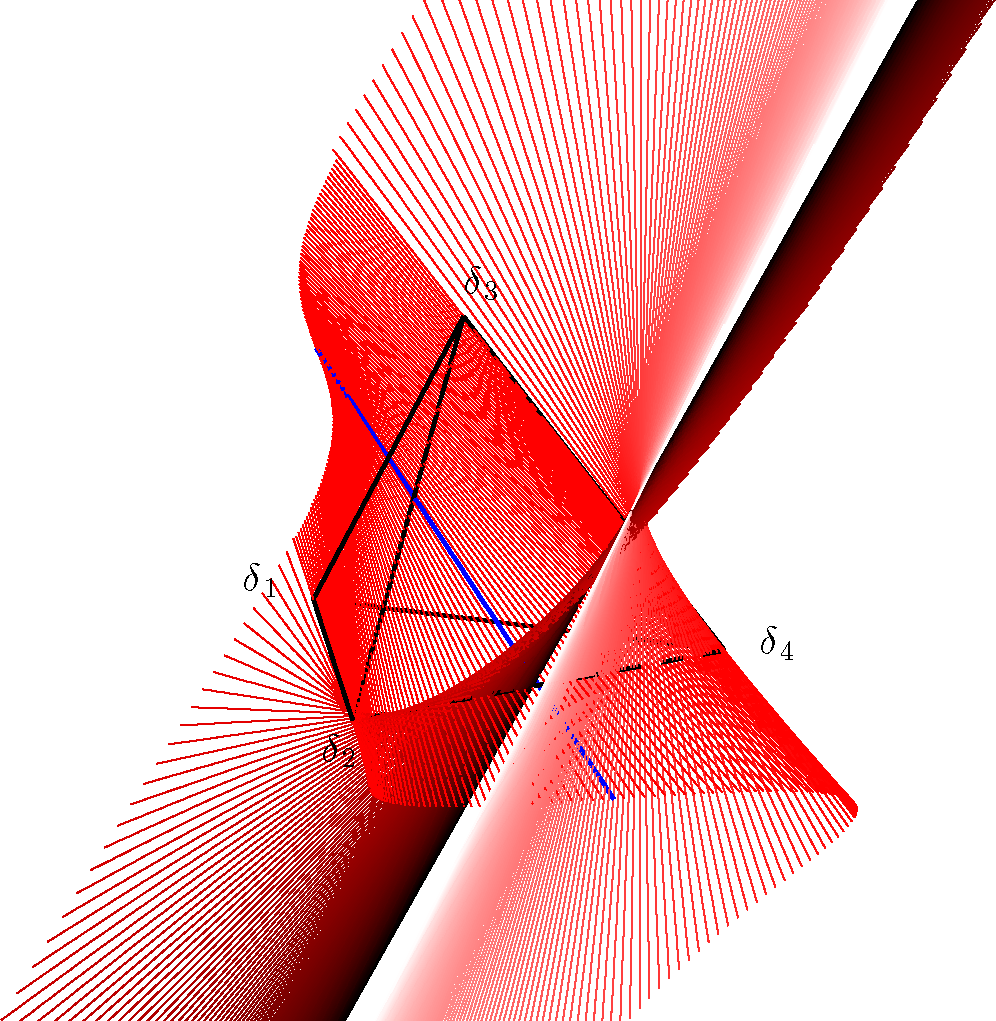

In Figures 6 and 7, we represent the extension of the ruled surface outside the probability simplex and in the (α, t) chart, while in Figures 8 and 9, the extended surface has been represented in the full marginal polytope parametrized by (η1, η2, η3) and in the marginal polytope parametrized by (η1, η2).

Figure 6.

The segments that form the ruled surface in Figure 4 have been extended, for −0.5 < t < 1.5. New lines described by Equations (60) have been represented for 0 < α < exp(0.7) (shading from red to black for increasing values of α) and for exp(0.7) − 1 < α < −1 (shading from red to white for decreasing values of α). The simplex in (b) has been rotated with respect to Figure 4(a) to better visualize the intersection of the lines with the critical edge δ2 ↔ δ4.

Figure 7.

Extension of the ruled surface associated with the exponential family in Equations (12) and (13) as in Figure 6(b), for exp(3.5) − 1 < α < exp(3.5) and −0.5 < t < 1.5; for α → ±∞, the lines of the extended surface admit the same limit.

Figure 8.

The segments that form the ruled surface in Figure 5 have been extended, for −0.5 < t < 1.5. New lines described by Equations (60) have been represented for 0 < α < exp(1) (shading from blue to black for increasing values of α) and exp(1) − 1 < α < −1 (shading from blue to white for decreasing values of α). The full marginal polytope in (b) has been rotated with respect to Figure 5(b) to better visualize the intersection of the lines with the critical edge δ2 ↔ δ4.

Figure 9.

Extension of the ruled surface associated with the exponential family in Equations (12) and (13) as in Figure 8(b), for exp(3)−1 < α < exp(3) and −0.5 < t < 1.5; notice that for α → ±∞, the lines of the extended surface admit the same limit.

3.4. Optimization and Natural Gradient Flows

We are interested in the study of natural gradient flows of functions defined over statistical models. Our motivation is the study of the optimization of the stochastic relaxation of a function, i.e., the optimization of the expected value of the function itself with respect to a distribution p in a statistical model. Natural gradient flows associated with the stochastic relaxation converge to the boundary of the model, where the probability mass is concentrated on some instances of the search space. To study the convergence over the boundary, we proposed to extend the natural gradient field outside the marginal polytope and the probability simplex, by employing a parameterization that describes the model as a ruled surface, as we described in the tutorial example of this section.

In the following, we focus on the optimization of a function f : Ω → ℝ, and we consider its stochastic relaxation with respect to a probability distribution in the exponential family in Equations (12) and (13). First, we compute a basis for all real-valued functions defined over Ω using algebraic arguments. Consider the zero-dimensional ideal I associated with the set of points in Ω, and let R be the polynomial ring with the field of real coefficients; a vector space basis for the quotient ring R/I defines a basis for all functions defined over Ω. In CoCoA [36], this can be computed with the command QuotientBasis.

Coming back to our example, with Ω = {1, 2, 3, 4}, by fixing the graded reverse lexicographical monomial order, which is the default one in CoCoA [36], we obtain a basis given by {1, x1, x2, x1 x2}, so that any f : Ω → ℝ can be written as:

We are interested in the study of the natural gradient field of

. Recall that T3 = 4x1 + 3x2 − 5x1x2 − 2 and

, so that:

which implies:

In order to study the gradient field of Fη(η) over the marginal polytope parameterized by (η1, η2), we need to express η3 as a function of η1 and η2. In order to do that, we parametrize the exponential family as a ruled surface by means of the (α, t) parameters. Moreover, this parametrization has a natural extension outside the marginal polytope, which allows one to study the stability of the critical points on the boundary of the marginal polytope. We start by evaluating the gradient field of Fα,t(α, t) in the (α, t) parametrization, then we map it to the marginal polytope in the η parameterization.

By expressing (η1, η2) as a function of (α, t), we obtain:

In the (α, t) parameterization, the Fisher information matrix reads:

Finally, the natural gradient becomes:

We obtained a rational formula for the natural gradient in the (α, t) parameterization, which can be easily extended outside the marginal polytope. However, notice that the inverse Fisher information matrix and the natural gradient are not defined for:

We also remark that over the boundary of the model, for t ∈ {0, 1} and α ∈ {−1, 0}, the determinant of the inverse Fisher information vanishes, so that the matrix is not full rank. It follows that the trajectories associated with natural gradient flows with initial conditions in the interior of the marginal polytope remain in the marginal polytope.

In order to study the natural gradient field over the marginal polytope, we apply a reparameterization of a tangent vector from the (α, t) parameterization to the (η1, η2) parameterization. Indeed, by the chain rule and the inverse function theorem, we have:

The Jacobian of the map (α, t) 7↦ (η1, η2) is:

with inverse:

It follows that:

Notice that, as for the inverse Fisher information matrix, the inverse Jacobian J(α, t)−1 is not defined for t which satisfies Equation (69).

We compute the inverse Fisher information matrix by evaluating the covariance between the sufficient statistics of the exponential family. Since over Ω, we have

and

, it follows that:

By parameterizing

with (α, t), we have:

Finally, we derive the following rational formula for the natural gradient over the marginal polytope parametrized as a ruled surface by (α, t):

3.5. Examples with Global and Local Optima

We conclude this section with two examples of natural gradient flows associated with two different f functions. First, consider the case where c0 = 0, c1 = 1, c2 = 2, c3 = 3, so that:

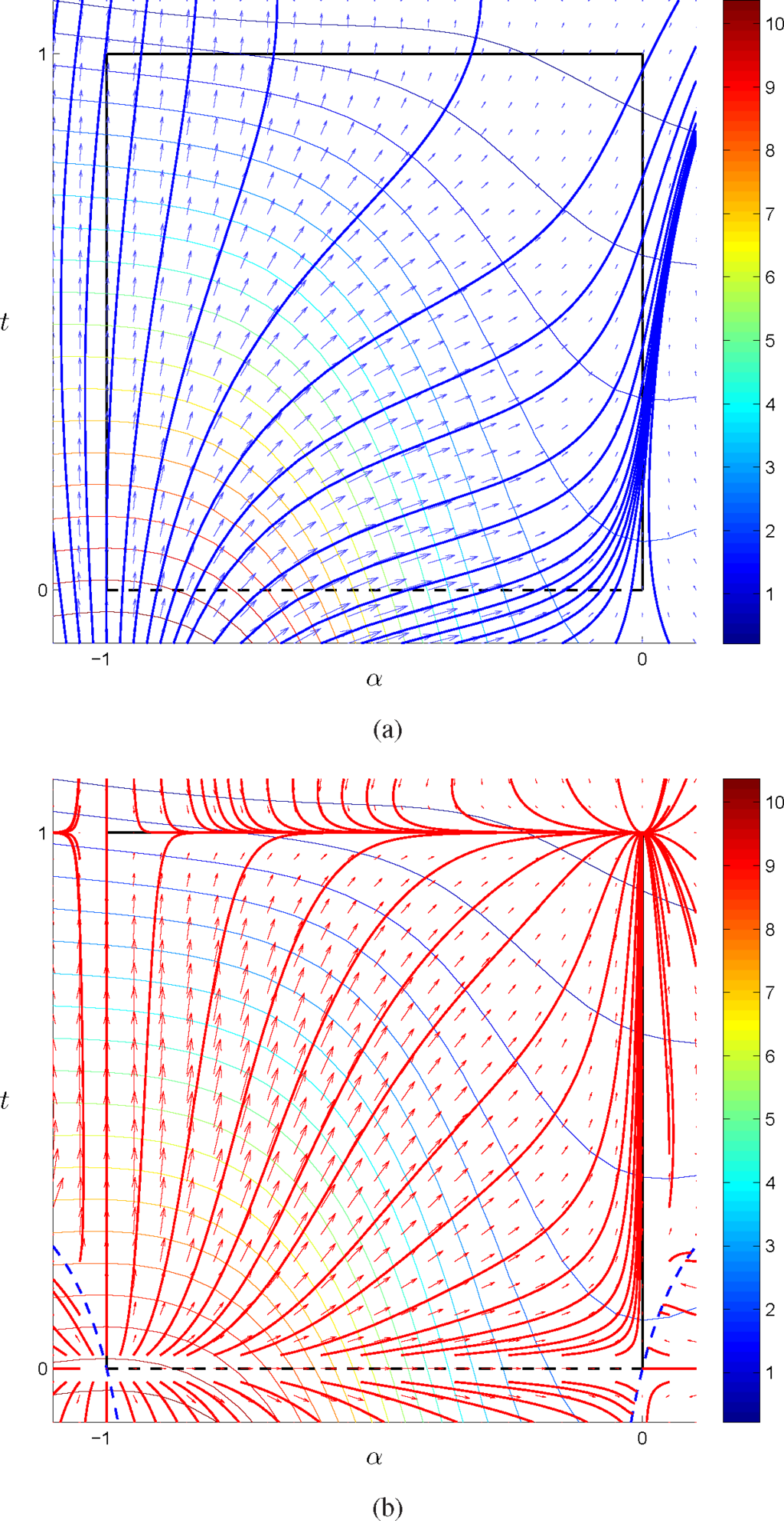

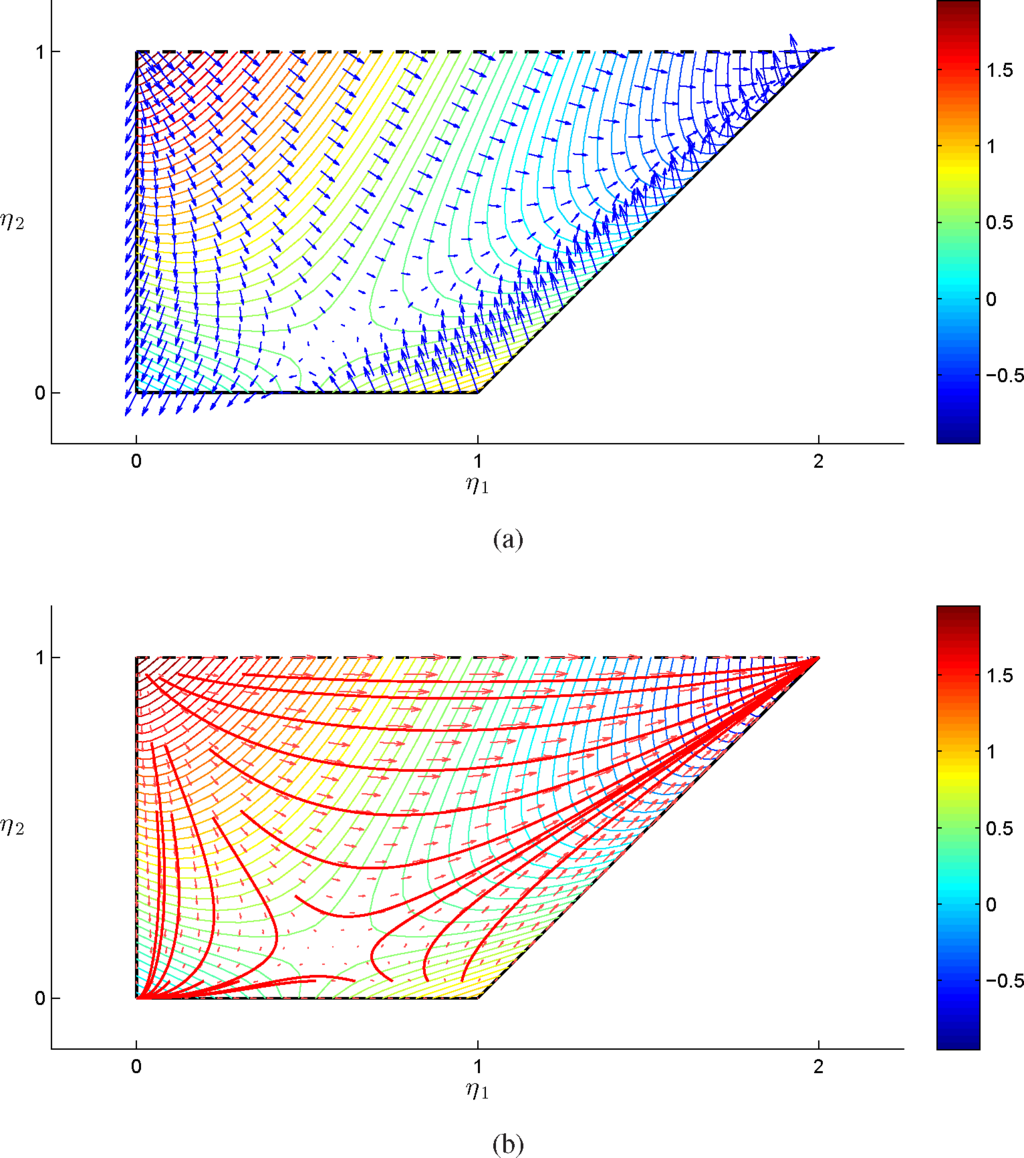

The function admits a minimum on {1}. In Figure 10, we plotted the vector fields associated with the vanilla and natural gradient, together with some gradient flows for different initial conditions, in the (α, t) parameterization. In Figure 11, we represent the vanilla and natural gradient field over the marginal polytope in the (η1, η2) parameterization. Notice that, as expected, differently from the vanilla gradient, the natural gradient flows converge to the unique global optima, which corresponds to the vertex where all of the probability is concentrated over {1}. In the (α, t) parameterization, the flows have been extended outside the statistical model by prolonging the lines of the ruled surface, and as we can see, they remain compatible with the flows on the interior of the model, in the sense that the nature of the critical point is the same for trajectories with initial conditions on the interior and on the exterior of the model. In other words, the global optima is an attractor from both the interior and the exterior of the model and similarly for the other critical points on the vertices, both for saddle points and the unstable points, where the natural gradient vanishes.

Figure 10.

Vanilla gradient field and flows in blue (a) and natural gradient field and flows in red (b), together with level lines associated with Fα,t(α, t) in the (α, t) parameterization, for c0 = 0, c1 = 1, c2 = 2 and c3 = 3; the dashed blue lines in (b) represent the points where

is not defined; see Equation (68).

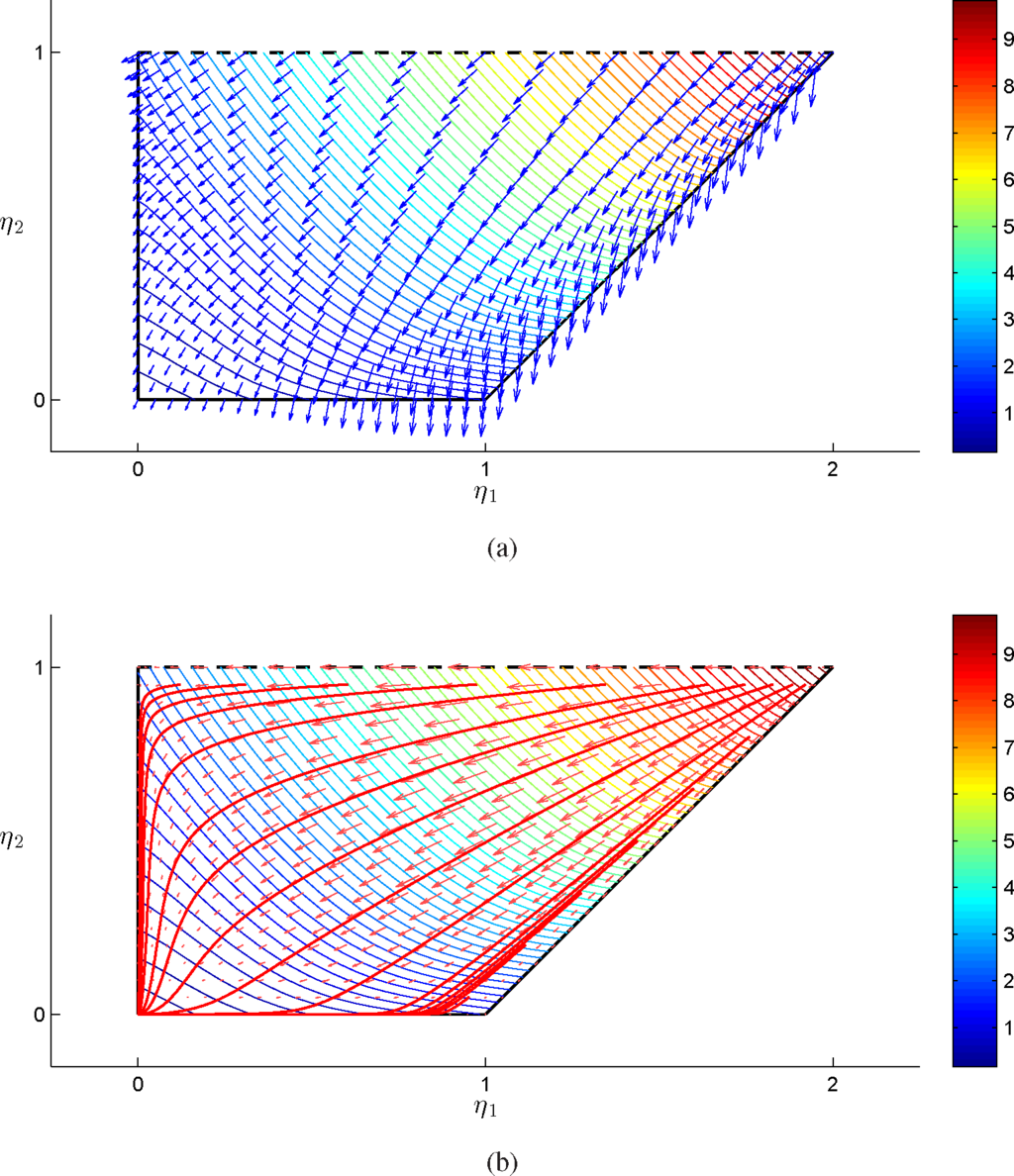

Figure 11.

Vanilla gradient field in blue (a) and natural gradient field and flows in red (b), together with level lines associated with Fη(α, t) over the marginal polytope, for c0 = 0, c1 = 1, c2 = 2 and c3 = 3.

In the second example, we set c0 = 0, c1 = 1, c2 = 2, c3 = −5/2, and we have:

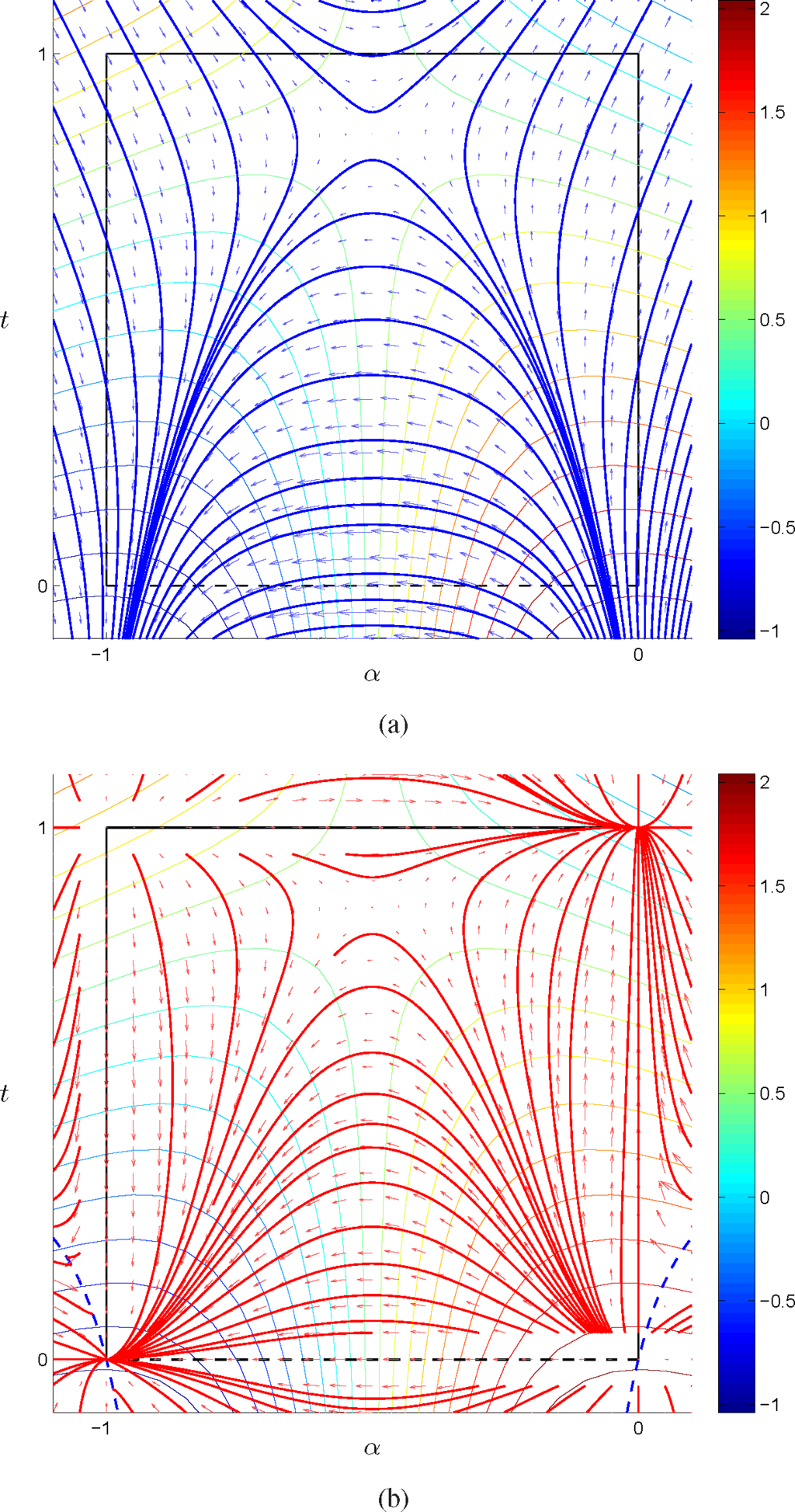

so that f2 admits a minimum on {4}. In Figures 12 and 13, we plotted the vector fields associated with the vanilla and natural gradient, together with some gradient flows for different initial conditions, in the (α, t) and (η1, η2) parameterization, respectively. As in the previous example, natural gradient flows converge to the vertices of the model; however, in this case, we have one local optima in {1} and one global optima in {4}, together with a saddle point in the interior of the model. Similarly to the previous example, in the (α, t) parameterization, the flows have been extended outside the statistical model, and the nature of the critical points is the same for trajectories with initial conditions in the statistical model and in the extension of the statistical model.

Figure 12.

Vanilla gradient field and flows in blue (a) and natural gradient field and flows in red (b) as in Figure 10, for c0 = 0, c1 = 1, c2 = 2 and

.

Figure 13.

Vanilla gradient field in blue (a) and natural gradient field and flows in red (b) as in Figure 11, for c0 = 0, c1 = 1, c2 = 2 and

.

We conclude the section by noticing that in both examples, for certain values of t in Equation (69), the natural gradient flows are not defined on the extension of the statistical model. As represented in the figures, once a trajectory encounters the dashed blue line in the (α, t) parameterization, the flow stops at that point.

4. Pseudo-Boolean Functions

We turn to discuss a case of considerable practical interest to see which of the results obtained in the example of the previous section we are able to extend.

For binary variables, we use the coding ±1, that is x = (x1,…,xn) ∈ {+1, −1}n = Ω. For any function f : Ω ↦ ℝ, with multi-index notation, f(x) = ∑α∈L aαxα, with L = {0, 1}n and

, 00=1. If M ⊂ L* = L\{0}, the model where p ∈ ε if:

has been considered in a number of papers on combinatorial optimization; see [3–5]. The following statements are results in algebraic statistics; cf. [20,35]. Let

.

Proposition 6 (Implicitization of the exponential family). Given a function p: Ω → ℝ, then and p ∈ ε if, and only if, the following conditions all hold:

- p(x) > 0, x ∈ Ω;

- ∑x∈Ωp(x)=1;

- for all β ∈ L*\M.

Proof. (⇒) If p ∈ ε, then p(x) > 0, x ∈ Ω (Item 1) and ∑x∈Ω p(x) = 1 (Item 2). Moreover, log

. The function log p is orthogonal to each Xβ, β ∈ L* \ M. Hence:

which is equivalent to Item 3.

(⇐) Oppositely, the computation in Equation (79) implies that log p is orthogonal to each Xβ; hence, there exists θ, such that log

. Now, Item 2 implies C = −ψ(θ).

Let ℝ [Ω] denote the ring of polynomials in the indeterminates {p(x)|x ∈ Ω}. Given a binary model M, the set of polynomials:

generates an ideal

, which is called the toric ideal of the model M. Its variety

is called the exponential variety of M.

Proposition 7.

- The exponential variety of M is the Zariski closure of the exponential model ε.

- The closure of ε in is characterized by p(x) ≥ 0, x ∈ Ω, together with Items 2 and 3 of Proposition 6.

- The algebraic variety of the ring ℝ[p(x): x ∈ Ω], which is generated by the polynomials Σx∈Ωp(x)−1, , β ∈ L* \ M, is an extension ε1 of ε to .

- Define the moments, α ∈ L, i.e., the discrete Fourier transform of p, with inverse. There exists an algebraic extension of the moment function ε ∋ p ↔ η(p) ∈ M° to a mapping defined on ε1.

- Proof. 1. According to the implicitization Proposition 6, the exponential family is characterized by the positivity condition together with the algebraic binomial conditions.

- This follows from the implicit form, and it is proven, for example, in [20].

- By definition.

- As the mapping from the probabilities to the moments is affine and one-to-one, such a transformation extends to a one-to-one mapping from the extended model to the affine space of the marginal polytope.

We conclude this section by introducing the so-called no three-way interaction example. On Ω = {0, 1}3, the full model in the statistics 0 ↦ 1, 1 ↦ −1, that is t = (−1)x = 1 − 2x, is described by the matrix:

Note the lexicographic order of both the sample points and the statistics’ exponents.

The exponential family without the interaction term T1T2T3 is the same model as the toric model without the three-way interaction, which is based on the matrix:

that is the probabilities as a function of the ζ’s are:

The toric ideal of the toric model in Equation (82) is generated by the polynomial:

this means that the closure of the exponential family is given by the solution of the equations:

The η parameters are the expected values of the sufficient statistics of the full model,

In the ring:

we can consider the ideal

generated by the Equations (84) together with Equations (85). The elimination ideal:

will express the model as a dependence between the η’s.

Computation with CoCoA [36] gives the following polynomial:

The equation:

is an expression of the model in the expectation parameters, and this expression is a polynomial equation. We know unique solvability in η7 if (η1, η2, η3, η4, η5, η6) is in the interior of the marginal polytope. As in the example of the previous section, it is possible to intersect the polynomial invariant in Equation (83) with one or more sheaves of hyperplanes around some faces of the simplex, in order to lower the degree of the invariant and thus decompose the model as the convex hull of probabilities on the boundary of the model. We do not describe the details here, and we postpone the discussion of this example to a paper which is in preparation.

5. Conclusions

Geometry and algebra play a fundamental role in the study of statistical models, and in particular in the exponential family. In the fist part of the paper, starting from the definition of the natural gradient over an exponential family, we described the relationship between its expression in the basis of the sufficient statistics and in the conjugate basis. From this perspective, the terms natural gradient and vanilla gradient, to denote gradients evaluated with respect to the Fisher and the Euclidean geometry, together with their duality in the natural and expectation parameters, assume a new meaning, since these definitions depend on the choice of the basis for the tangent space.

In order to study natural gradient flows for a generic discrete exponential model and, in particular, their convergence, it is convenient to move to the mixture geometry of the expectation parameters and to study trajectories over the marginal polytope. However, in order to obtain explicit equations for the flows, it is necessary to determine the dependence between the moments associated with the sufficient statistics of the model, which are constrained to belong to the marginal polytope, and the remaining moments, which on the other side are not free. Such a relationship, which for finite search spaces is given by a system of polynomial invariants, cannot be easily solved explicitly in general. In the second part of the paper, by using algebraic tools, we proposed a novel parameterization based on ruled surfaces for an exponential family, which does not require to solve the polynomial invariant explicitly. We applied our approach to a simple example, and we showed that the surface associated with the model in the full marginal polytope is a ruled surface. We claim that these results are not peculiar to the example we described, and we are working towards an extension of this approach in a more general case.

Acknowledgments

The authors would like to thank Gianfranco Casnati from Politecnico di Torino for the useful discussions on the geometry of ruled surfaces. Giovanni Pistone is supported by de Castro Statistics of Collegio Carlo Alberto at Moncalieri and is a member of INdAM/GNAMPA.

Author Contributions

Both authors contributed to the design of the research. The research was carried out by all of the authors. The manuscript was written by Luigi Malagò and Giovanni Pistone. Both authors read and approved the final manuscript.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Pistone, G. Nonparametric information geometry. In Geometric Science of Information, Proceedings of the First International Conference (GSI 2013), Paris, France, 28–30 August 2013; Nielsen, F., Barbaresco, F., Eds.; Springer: Heidelberg, Germany, 2013; 8085, pp. 5–36. [Google Scholar]

- Malagò, L.; Matteucci, M.; Pistone, G. Stochastic Relaxation as a Unifying Approach in 0/1 Programming, 2009, Proceedings of the NIPS 2009 Workshop on Discrete Optimization in Machine Learning: Submodularity, Sparsity & Polyhedra (DISCML), Whistler Resort & Spa, BC, Canada, 11–12 December 2009.

- Malagò, L.; Matteucci, M.; Pistone, G. Towards the geometry of estimation of distribution algorithms based on the exponential family. Proceedings of the 11th Workshop on Foundations of Genetic Algorithms (FOGA ’11), Schwarzenberg, Austria, 5–8 January 2011; ACM: New York, NY, USA, 2011; pp. 230–242. [Google Scholar]

- Malagò, L.; Matteucci, M.; Pistone, G. Stochastic Natural Gradient Descent by estimation of empirical covariances. Proceedings of the 2011 IEEE Congress on Evolutionary Computation (CEC), New Orleans, LA, USA, 5–8 June 2011; pp. 949–956.

- Malagò, L.; Matteucci, M.; Pistone, G. Natural gradient, fitness modelling and model selection: A unifying perspective, Proceedings of the 2013 IEEE Congress on Evolutionary Computation (CEC), Cancun, Mexico, 20–23 June 2013; pp. 486–493.

- Wierstra, D.; Schaul, T.; Peters, J.; Schmidhuber, J. Natural evolution strategies. Proceedings of the 2008 IEEE Congress on Evolutionary Computation, Hong Kong, China, 1–6 June 2008; pp. 3381–3387.

- Ollivier, Y.; Arnold, L.; Auger, A.; Hansen, N. Information-Geometric Optimization Algorithms: A Unifying Picture via Invariance Principles 2011. arXiv: 1106.3708.

- Malagò, L.; Pistone, G. Combinatorial Optimization with Information Geometry: Newton method. Entropy 2014, 16, 4260–4289. [Google Scholar]

- Amari, S.; Nagaoka, H. Methods of Information Geometry; American Mathematical Society: Providence, RI, USA, 2000; Translated from the 1993 Japanese original by Daishi Harada. [Google Scholar]

- Bourbaki, N. Variétés differentielles et analytiques. Fascicule de résultats / Paragraphes 1 à 7; Number XXXIII in Éléments de mathématiques; Hermann: Paris, France, 1971. [Google Scholar]

- Pistone, G.; Sempi, C. An infinite-dimensional geometric structure on the space of all the probability measures equivalent to a given one. Ann. Stat. 1995, 23, 1543–1561. [Google Scholar]

- Malagò, L.; Pistone, G. Gradient Flow of the Stochastic Relaxation on a Generic Exponential Family. Proceedings of Conference of Bayesian Inference and Maximum Entropy Methods in Science and Engineering (MaxEnt 2014), Clos Lucé, Amboise, France, 21–26 September 2014; Mohammad-Djafari, A., Barbaresco, F., Eds.; pp. 353–360.

- Brown, L.D. Fundamentals of Statistical Exponential Families With Applications in Statistical Decision Theory; Number 9 in IMS Lecture Notes, Monograph Series; Institute of Mathematical Statistics: Hayward, CA, USA, 1986. [Google Scholar]

- Rockafellar, R.T. Convex Analysis; Princeton Mathematical Series No. 28; Princeton University Press: Princeton, NJ, USA, 1970. [Google Scholar]

- Do Carmo, M.P. Riemannian Geometry; Mathematics: Theory & Applications; Birkhäuser Boston Inc.: Boston, MA, USA, 1992; Translated from the second Portuguese edition by Francis Flaherty. [Google Scholar]

- Amari, S.I. Natural gradient works efficiently in learning. Neur. Comput. 1998, 10, 251–276. [Google Scholar]

- Shima, H. The Geometry of Hessian Structures; World Scientific Publishing Co. Pte. Ltd.: Hackensack, NJ, USA, 2007. [Google Scholar]

- Rinaldo, A.; Fienberg, S.E.; Zhou, Y. On the geometry of discrete exponential families with application to exponential random graph models. Electron. J. Stat. 2009, 3, 446–484. [Google Scholar]

- Rauh, J.; Kahle, T.; Ay, N. Support Sets in Exponential Families and Oriented Matroid Theory. Int. J. Approx. Reas. 2011, 52, 613–626. [Google Scholar]

- Malagò, L.; Pistone, G. A note on the border of an exponential family 2010. arXiv:1012.0637v1.

- Pistone, G.; Rogantin, M. The gradient flow of the polarization measure. With an appendix 2015. arXiv:1502.06718. [Google Scholar]

- Diaconis, P.; Sturmfels, B. Algebraic algorithms for sampling from conditional distributions. Ann. Stat. 1998, 26, 363–397. [Google Scholar]

- Pistone, G.; Wynn, H.P. Generalised confounding with Gröbner bases. Biometrika 1996, 83, 653–666. [Google Scholar]

- Pistone, G.; Riccomagno, E.; Wynn, H.P. Algebraic Statistics: Computational Commutative Algebra in Statistics; Volume 89, Monographs on Statistics and Applied Probability; Chapman & Hall/CRC: Boca Raton, FL, USA, 2001. [Google Scholar]

- Drton, M.; Sturmfels, B.; Sullivant, S. Lectures on Algebraic Statistics; Volume 39, Oberwolfach Seminars; Birkhäuser Verlag: Basel, Germany, 2009. [Google Scholar]

- Pachter, L.; Sturmfels, B. (Eds.) Algebraic Statistics for Computational Biology; Cambridge University Press: Cambridge, UK, 2005.

- Gibilisco, P.; Riccomagno, E.; Rogantin, M.P.; Wynn, H.P. Algebraic and Geometric Methods in Statistics; Cambridge University Press: Cambridge, UK, 2010. [Google Scholar]

- 4ti2 team. 4ti2—A software package for algebraic, geometric and combinatorial problems on linear spaces. Available online: http://www.4ti2.de accessed on 2 June 2015.

- Michałek, M.; Sturmfels, B.; Uhler, C.; Zwiernik, P. Exponential Varieties 2014. arXiv:1412.6185.

- Sturmfels, B. Gröbner Bases and Convex Polytopes; American Mathematical Society: Providence, RI, USA, 1996. [Google Scholar]

- Geiger, D.; Meek, C.; Sturmfels, B. On the toric algebra of graphical models. Ann. Stat. 2006, 34, 1463–1492. [Google Scholar]

- Rapallo, F. Toric statistical models: Parametric and binomial representations. Ann. Inst. Stat. Math. 2007, 59, 727–740. [Google Scholar]

- Beltrametti, M.; Carletti, E.; Gallarati, D.; Monti Bragadin, G. Lectures on Curves, Surfaces and Projective Varieties: A Classical View of Algebraic Geometry; EMS textbooks in mathematics; European Mathematical Society: Zürich, Switzerland, 2009. [Google Scholar]

- Rinaldo, A.; Fienberg, S.E.; Zhou, Y. On the geometry of discrete exponential families with application to exponential random graph models. Electron. J. Stat. 2009, 3, 446–484. [Google Scholar]

- Pistone, G. Algebraic varieties vs. differentiable manifolds in statistical models. In Algebraic and Geometric Methods in Statistics; Gibilisco, P., Riccomagno, E., Rogantin, M., Wynn, H.P., Eds.; Cambridge University Press: Cambridge, UK, 2009; Chapter 21; pp. 339–363. [Google Scholar]

- Abbott, J.; Bigatti, A.; Lagorio, G. CoCoA-5: A system for doing Computations in Commutative Algebra. Available online: http://cocoa.dima.unige.it accessed on 2 June 2015.

© 2015 by the authors; licensee MDPI, Basel, Switzerland This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/4.0/).