Entropy, Function and Evolution: Naturalizing Peircian Semiosis

Abstract

Even at the purely phenomenological level, entropy is an anthropomorphic concept. (E.T. Jaynes)

1. Introduction

1.1. Signs are physical!

1.2. Summary of the argument

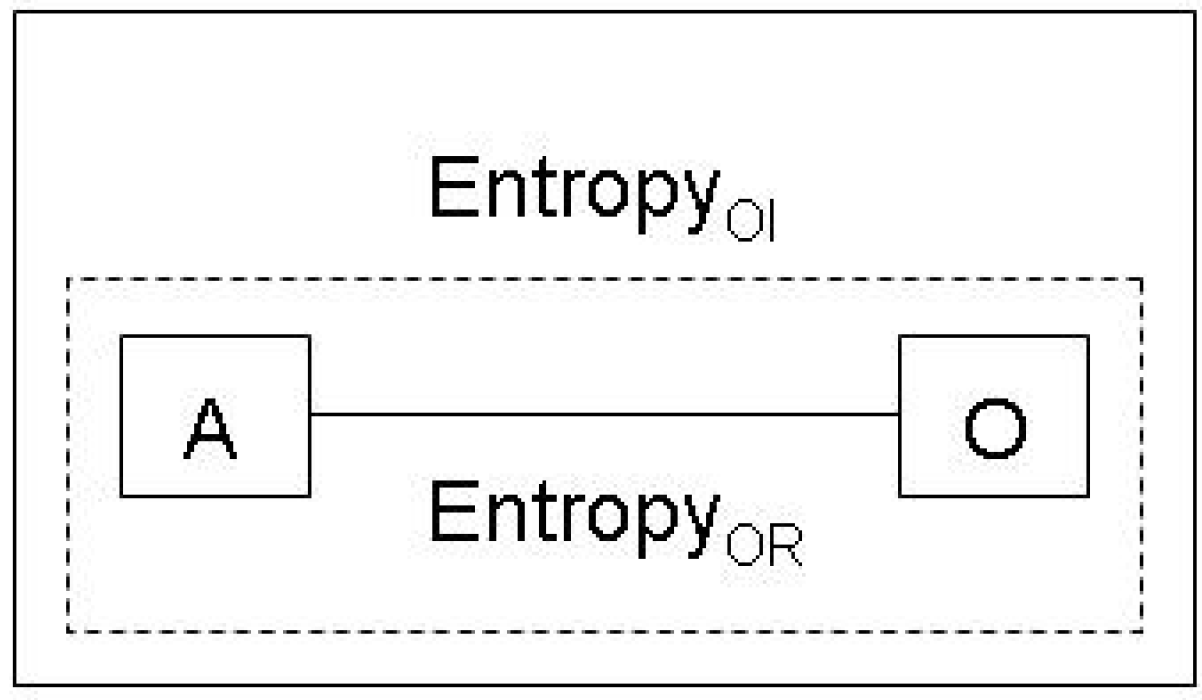

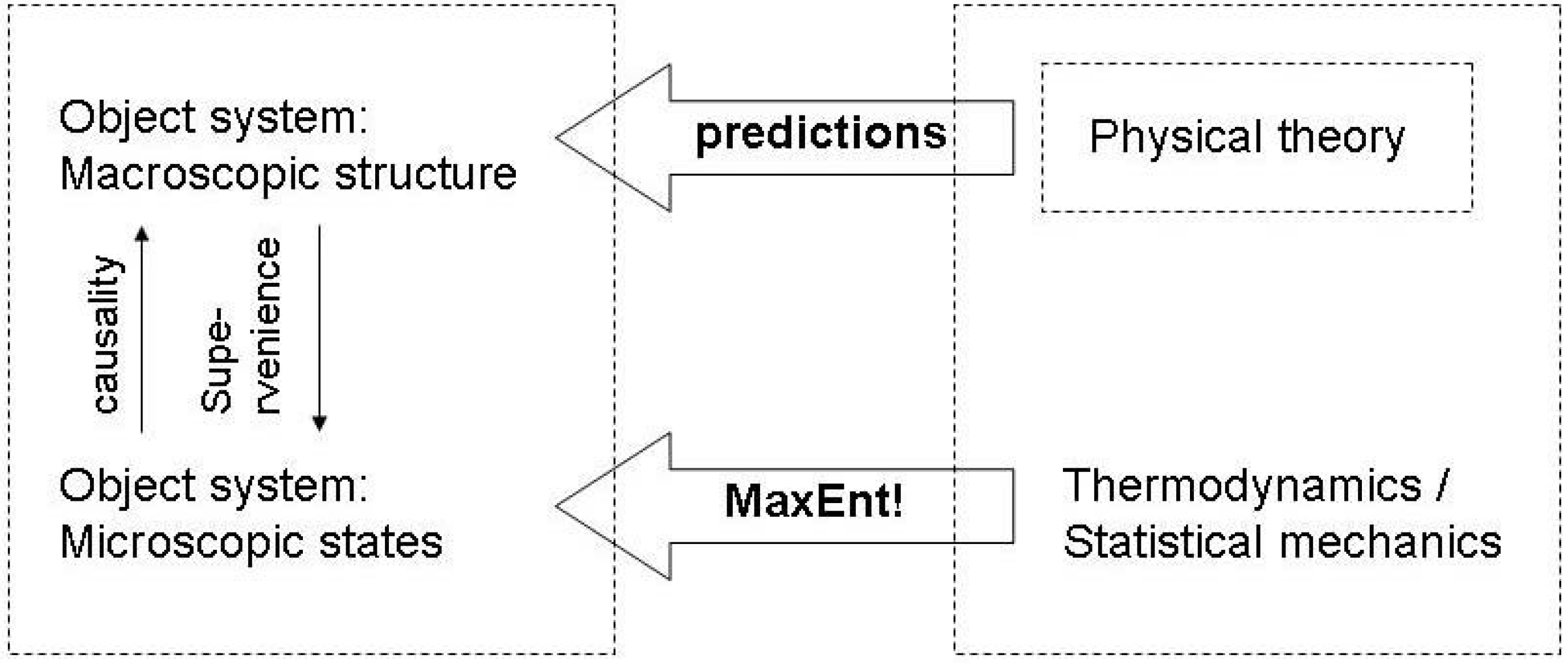

2. Causality, Information and Entropy

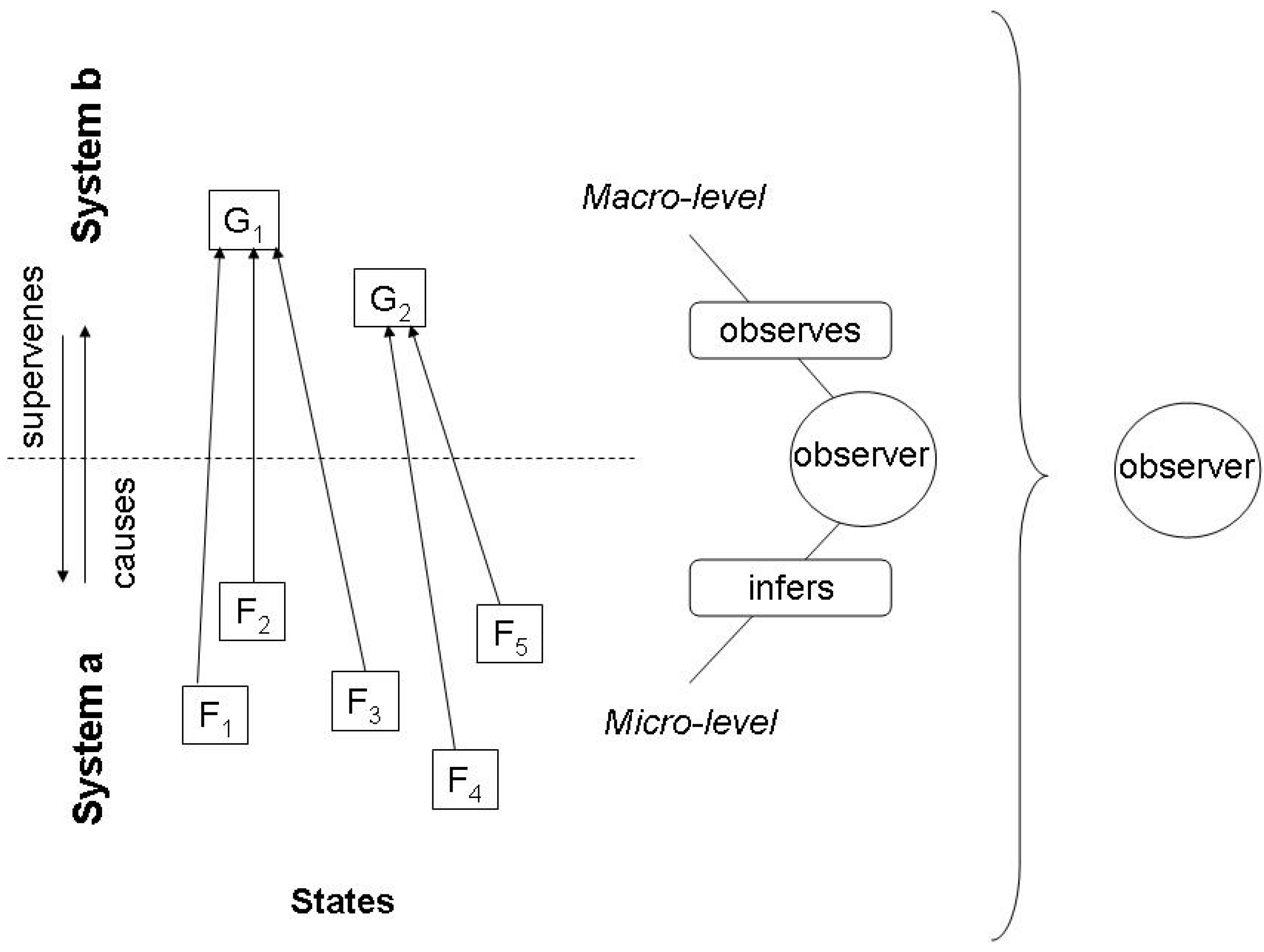

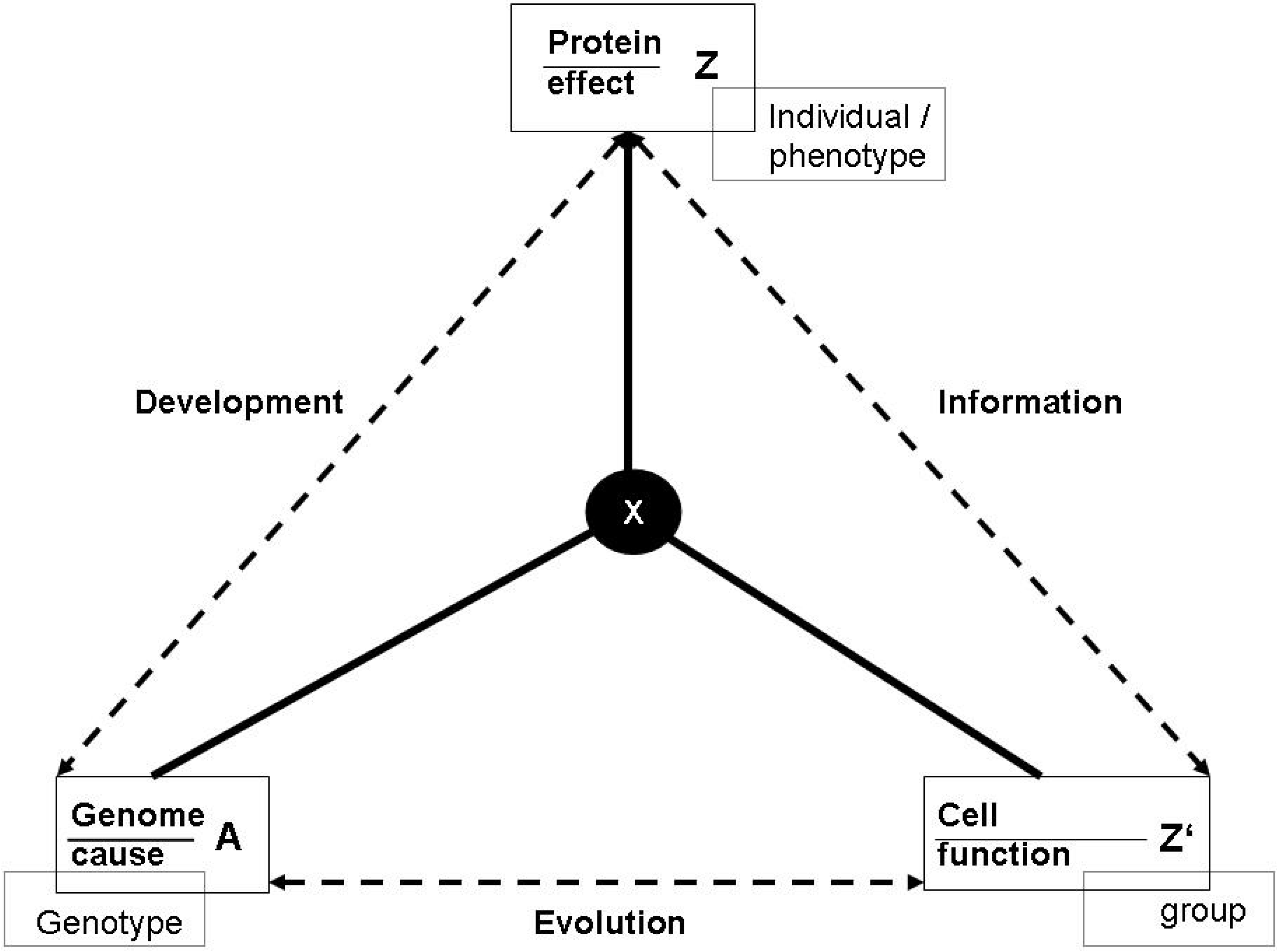

2.1. The missing link between causality and information

2.2. Inference and the Jaynes notion of entropy

3. Functions, Evolution and Entropy

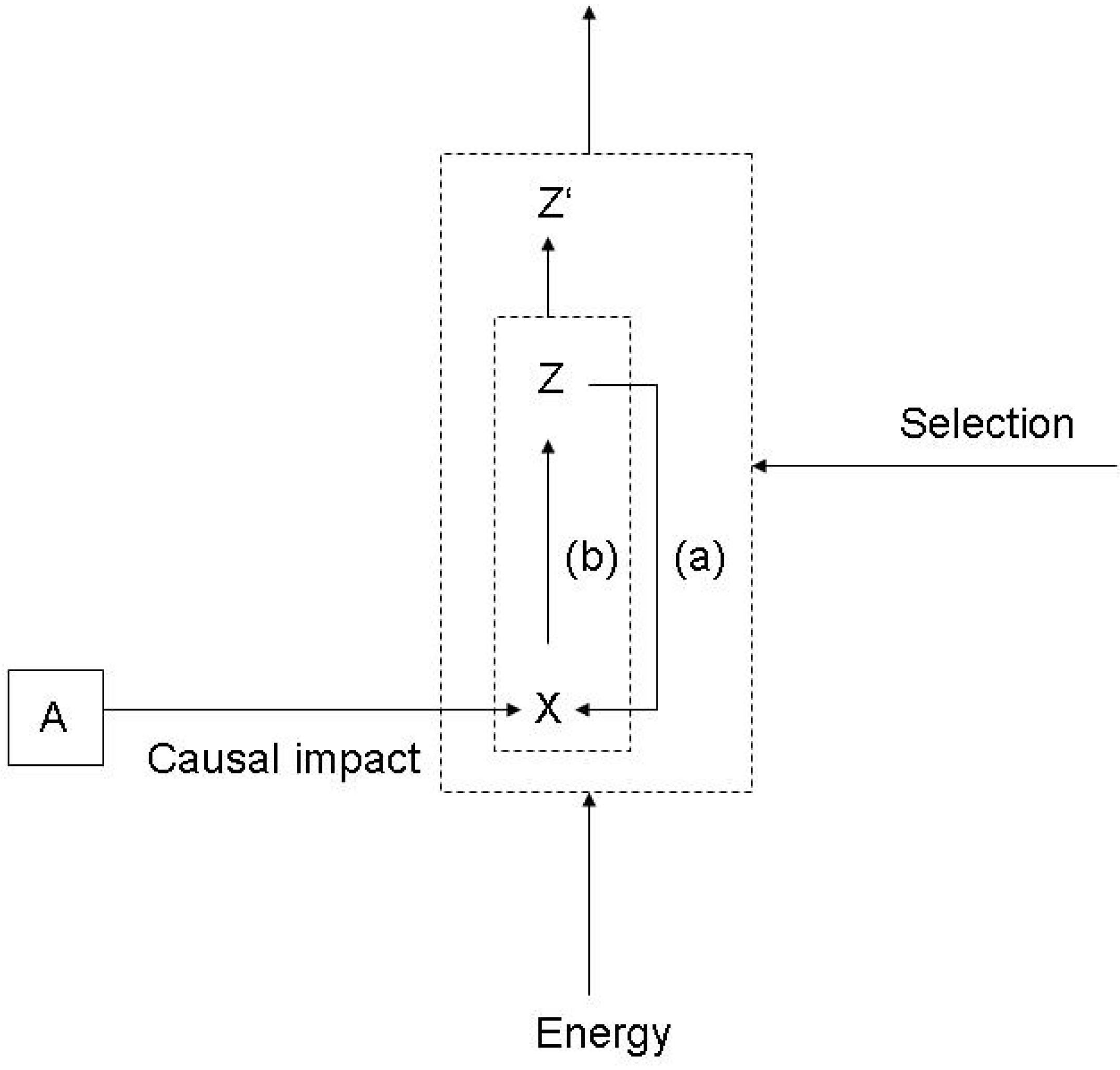

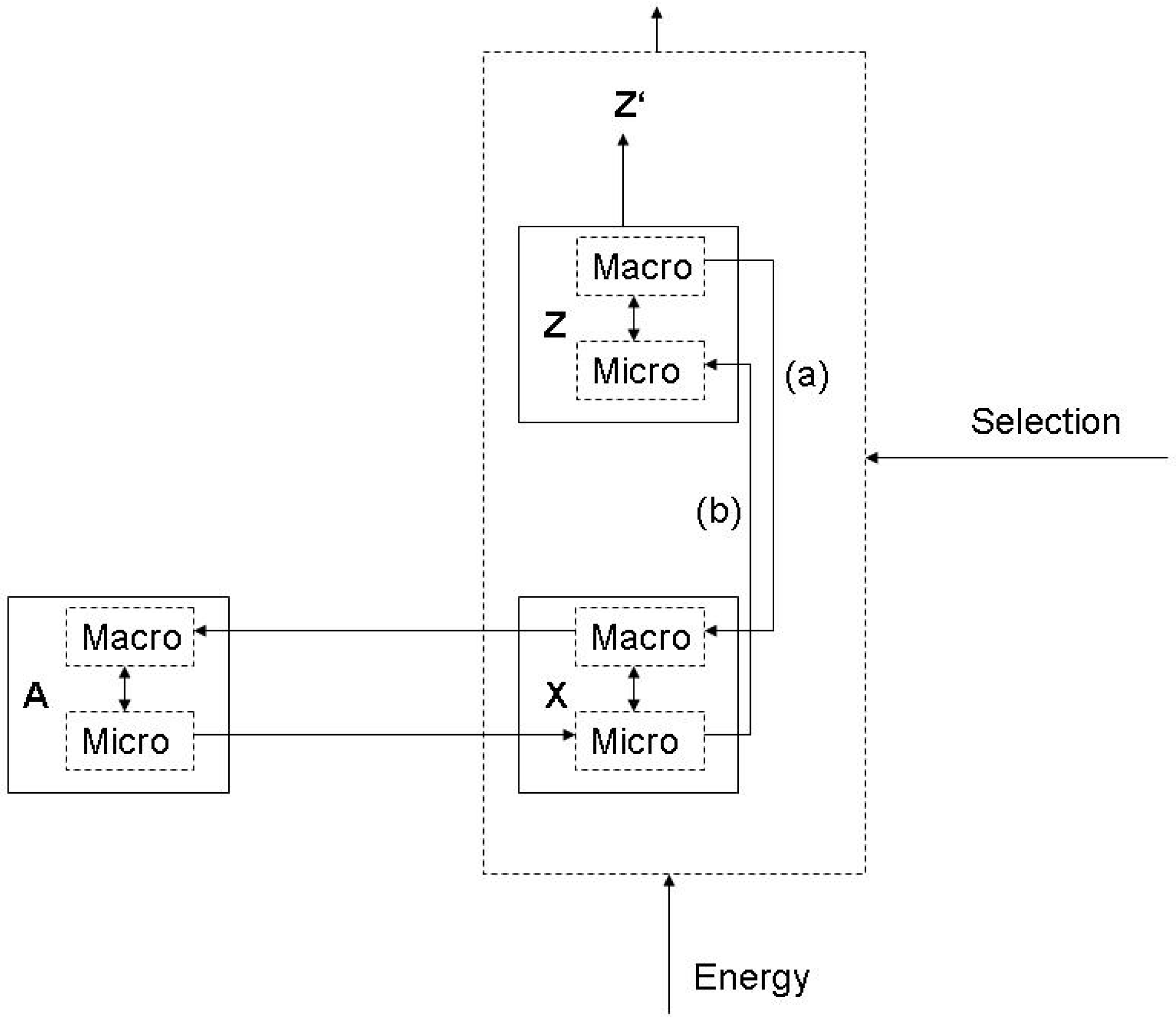

3.1. Functions and the subjective/objective distinction

- (a) X is there because it does Z, and

- (b) Z is a consequence (or result) of X’s being there.

- Technological functions are epistemologically objective because statements about them relate to physical laws, but they are ontologically subjective because they relate to human design;

- Biological functions are epistemologically objective because statements about them are science-based, and they are ontologically objective because they are the result of natural selection;

- Mental functions are ontologically objective because they relate to neuronal states, which are observer independent, but they are epistemologically subjective because we can only refer to them from the viewpoint of the person who experiences them (such as pain);

- Semantic functions are ontologically subjective because they relate to individual mental states, and they are epistemologically subjective, because they relate to individual intentionality.

| Entity (rows) / Judgement (columns) | Epistemically subjective | Epistemically objective |
|---|---|---|
| Ontologically subjective | Semantic function | Technological function |
| Ontologically objective | Mental function | Biological function |

- Either as an epistemologically objective, but ontologically subjective notion, as long as we consider the standard use in physics, which relates object systems with mental states of observers who conduct experiments,

- Or as an equally epistemologically objective and ontologically objective notion, if we consider the integrated system of observer and object system, and conceive of the former as a physical system, which implies that not only the experiment as such, but also the design of the experiment is a physical process.

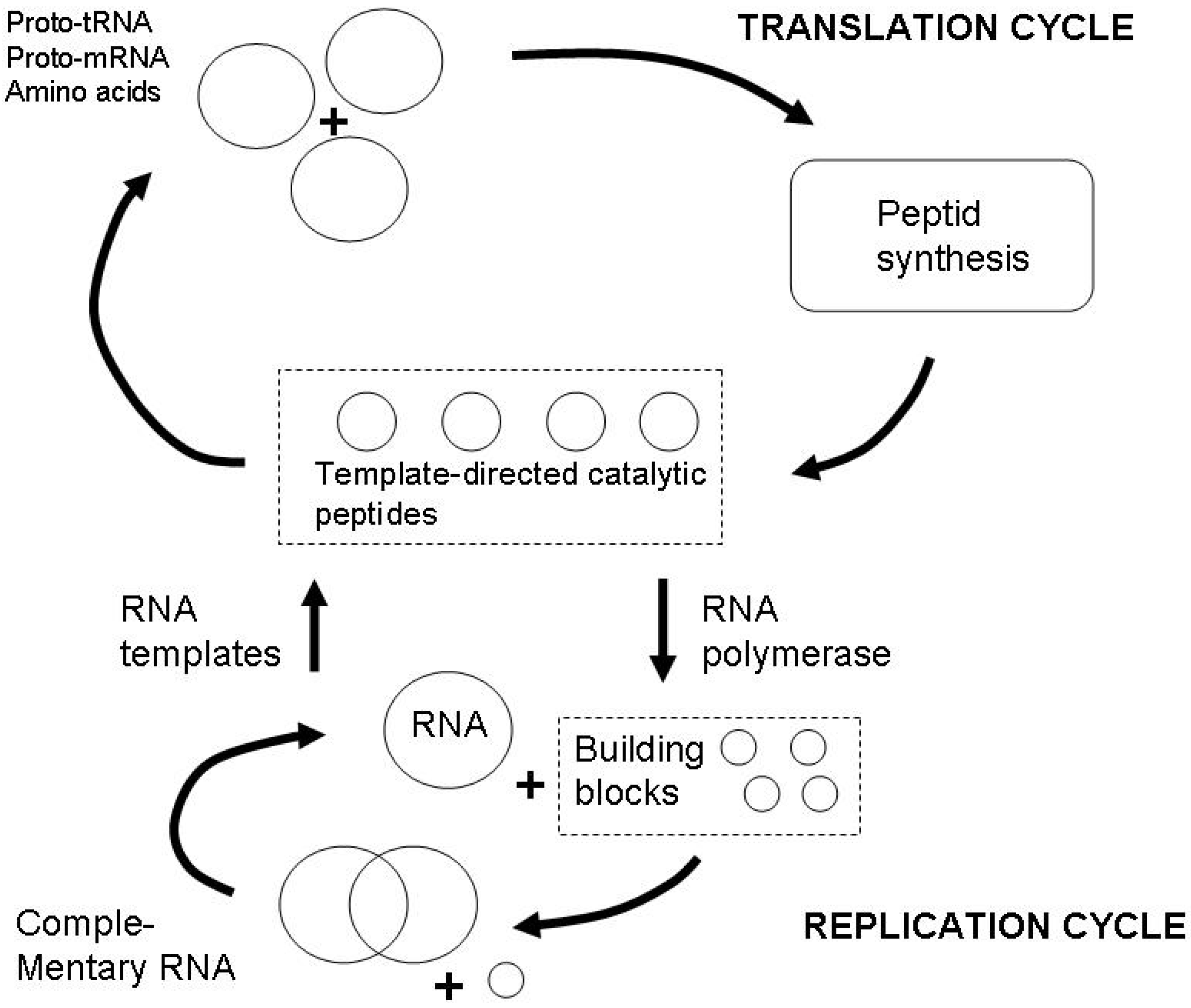

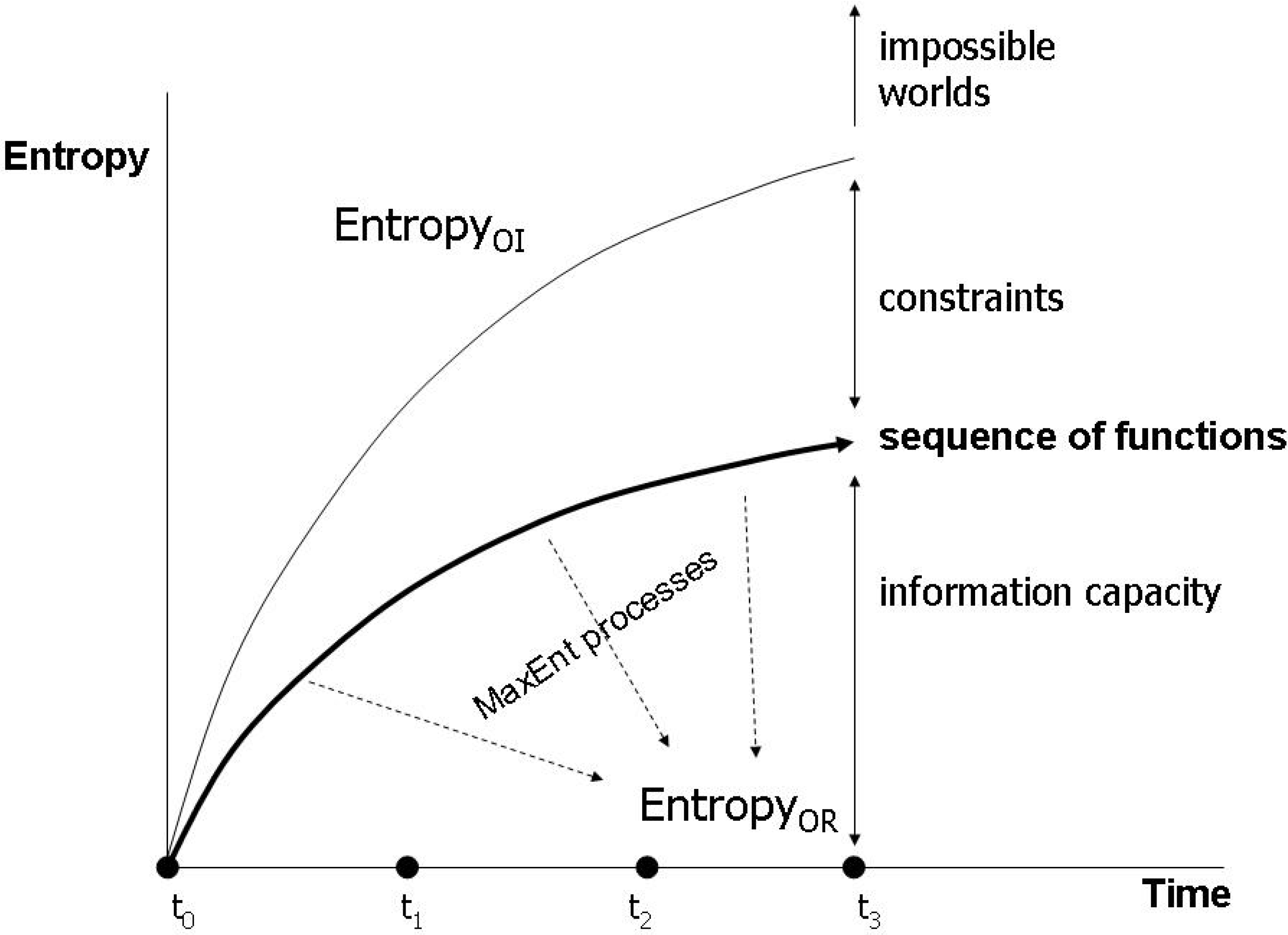

3.2. Evolving functions and maximum entropy production

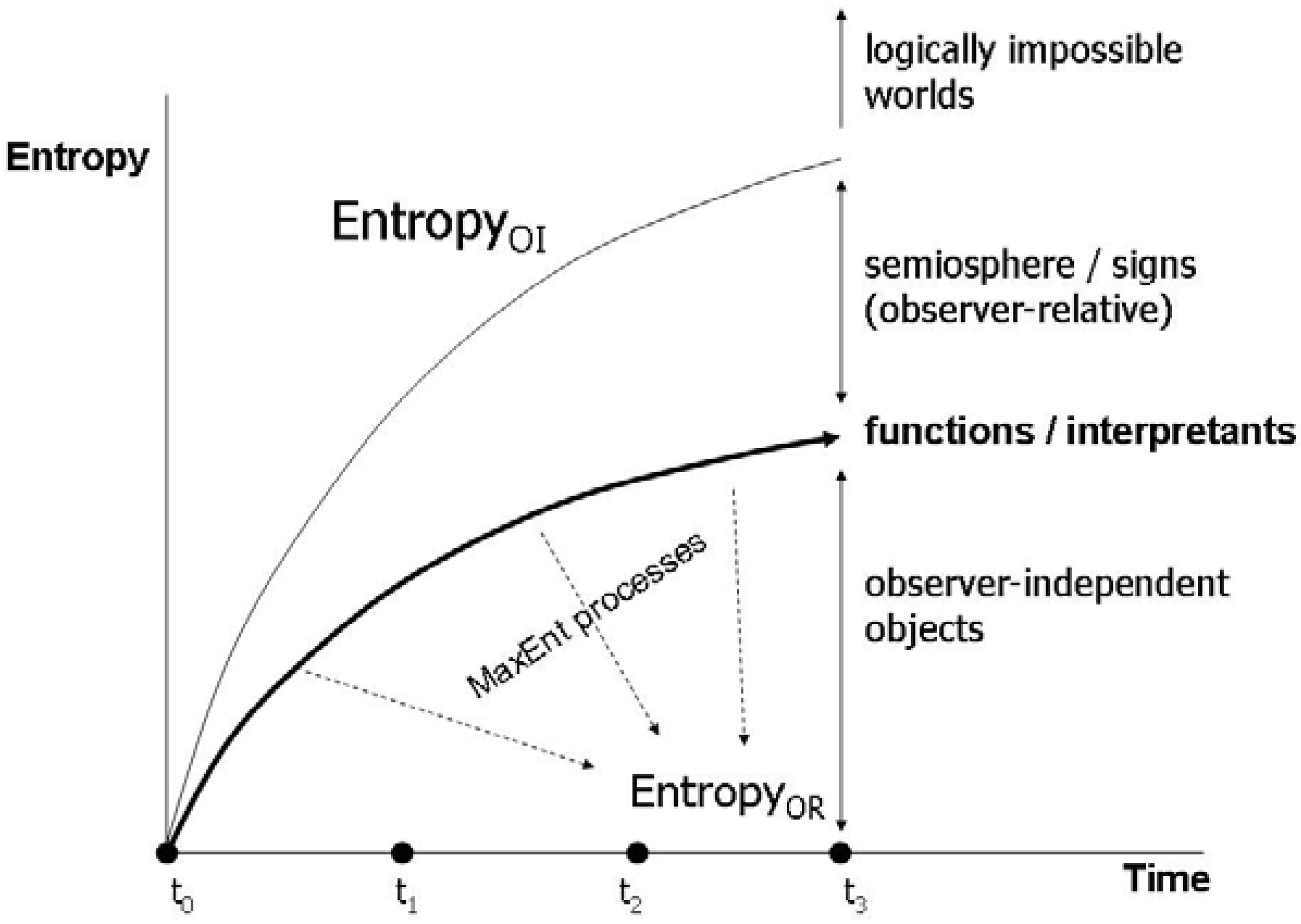

3.3. Endogenous entropy and evolution

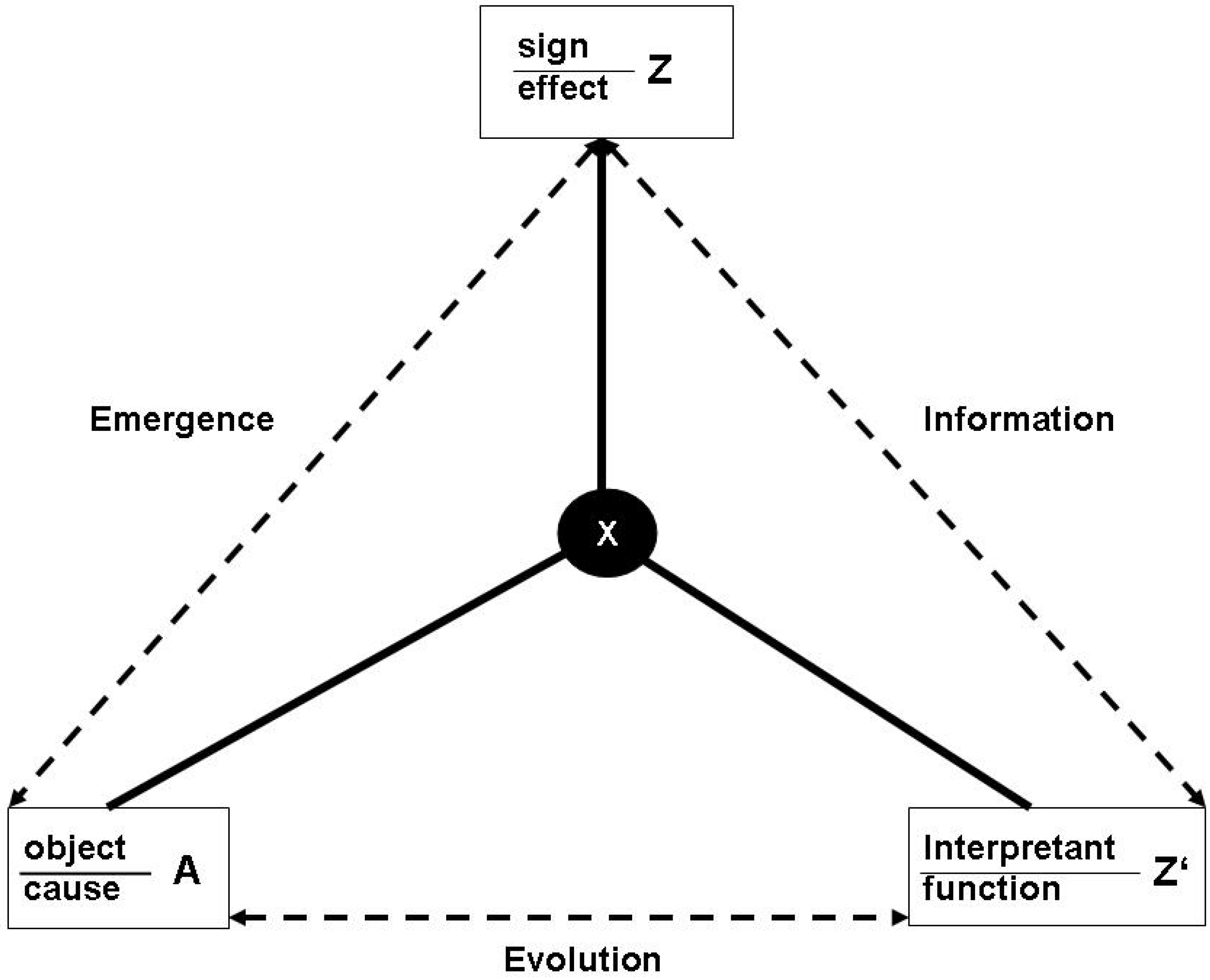

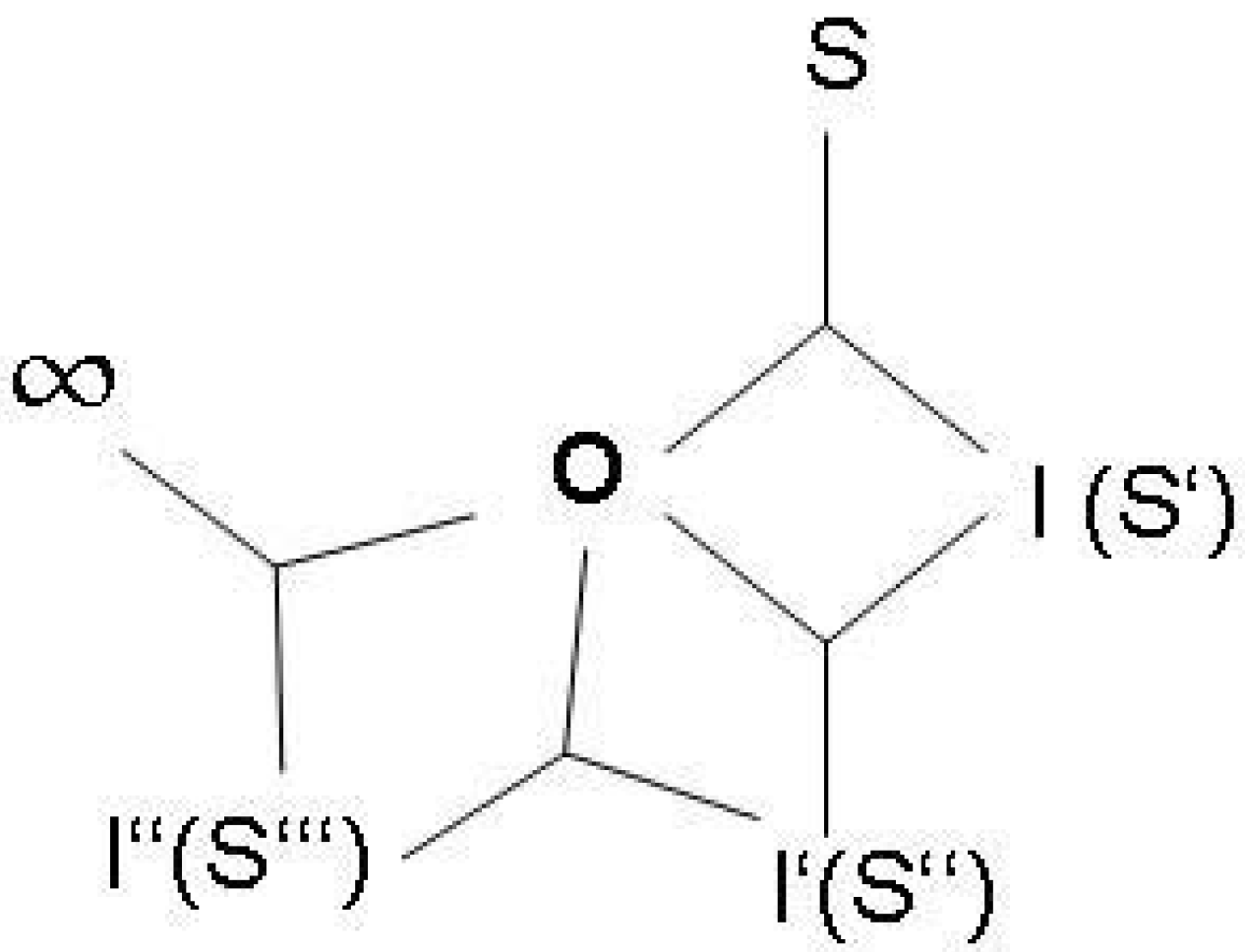

4. Entropy and Semiosis: Naturalizing Peirce

4.1. Reconstructing semiosis as proper functioning

4.2. Energetics of the semiosphere

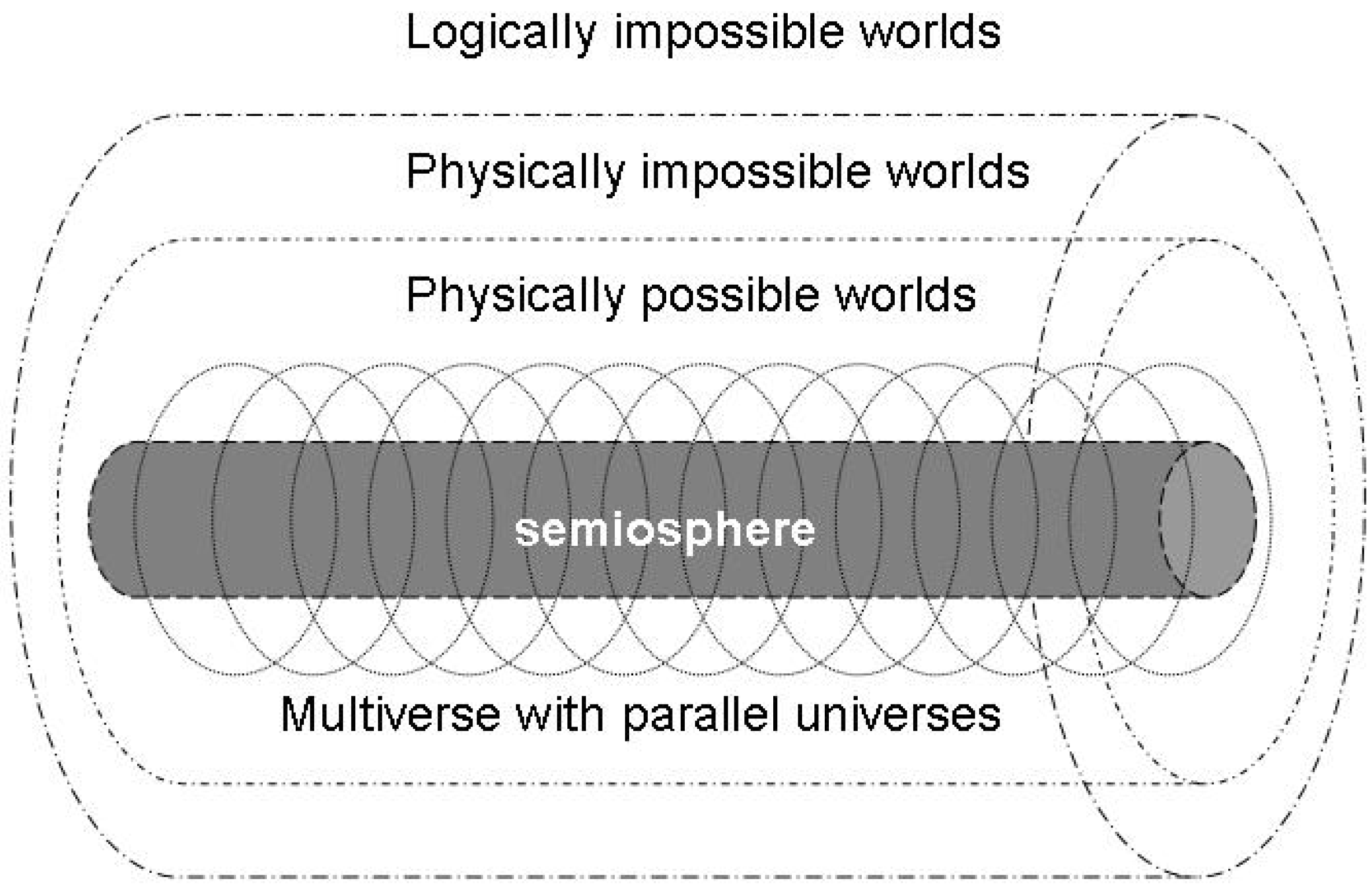

4.3. A semiotic reformulation of the anthropic principle

5. The Final Brick: Meaning and Paradox

6. Conclusions

Acknowledgements


© 2010 by the author; licensee Molecular Diversity Preservation International, Basel, Switzerland. This article is an open-access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/3.0/).


Herrmann-Pillath, C. Entropy, Function and Evolution: Naturalizing Peircian Semiosis. Entropy 2010, 12, 197-242. https://doi.org/10.3390/e12020197
