What Crowdsourcing Platforms Do for Solvers in Problem-Solving Contests: A Content Analysis of Their Websites

Abstract

1. Introduction

2. Related Work

2.1. Problem-Solving Contests in Crowdsourcing

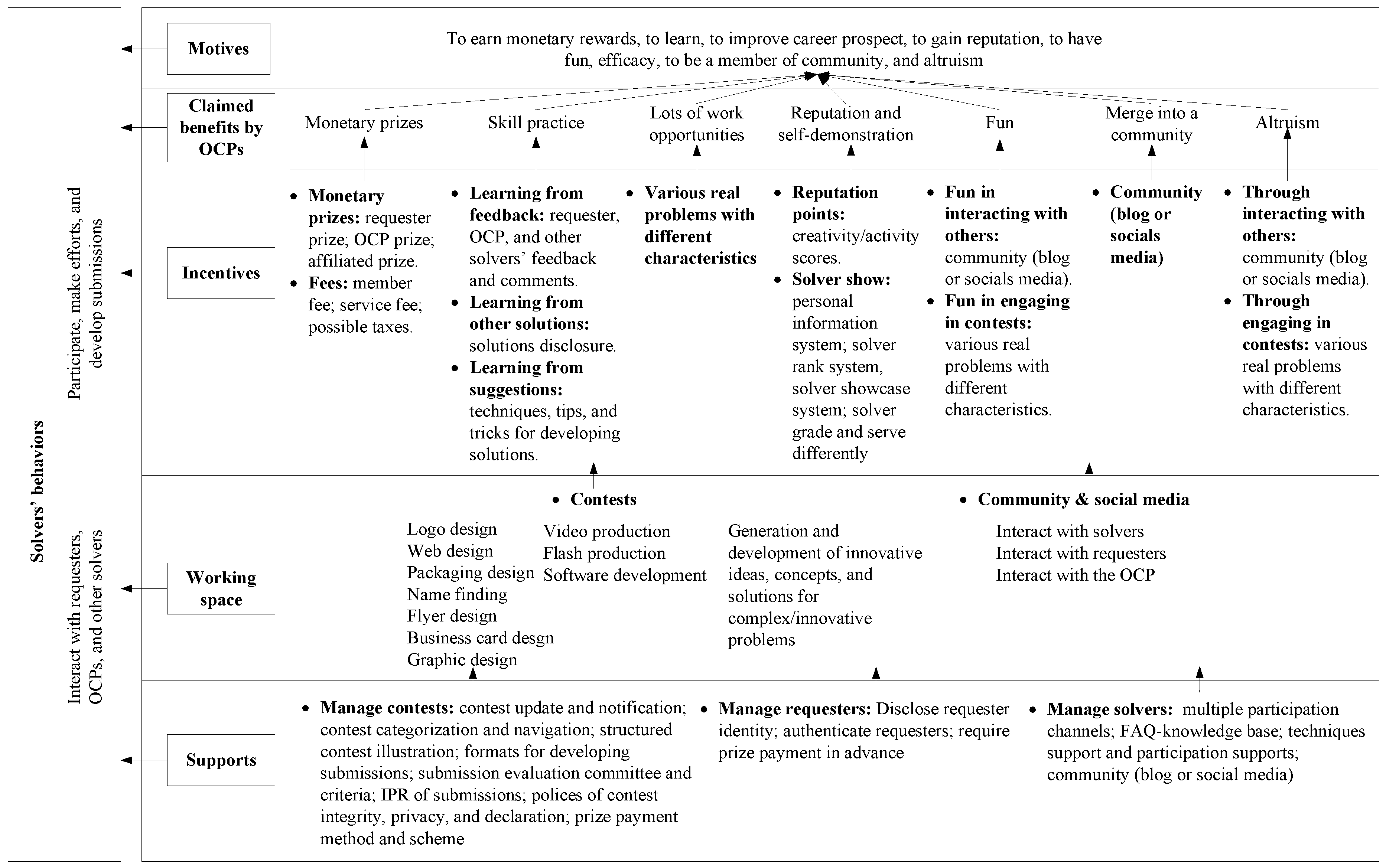

2.2. Factors Influencing Solvers’ Participation in Crowdsourcing

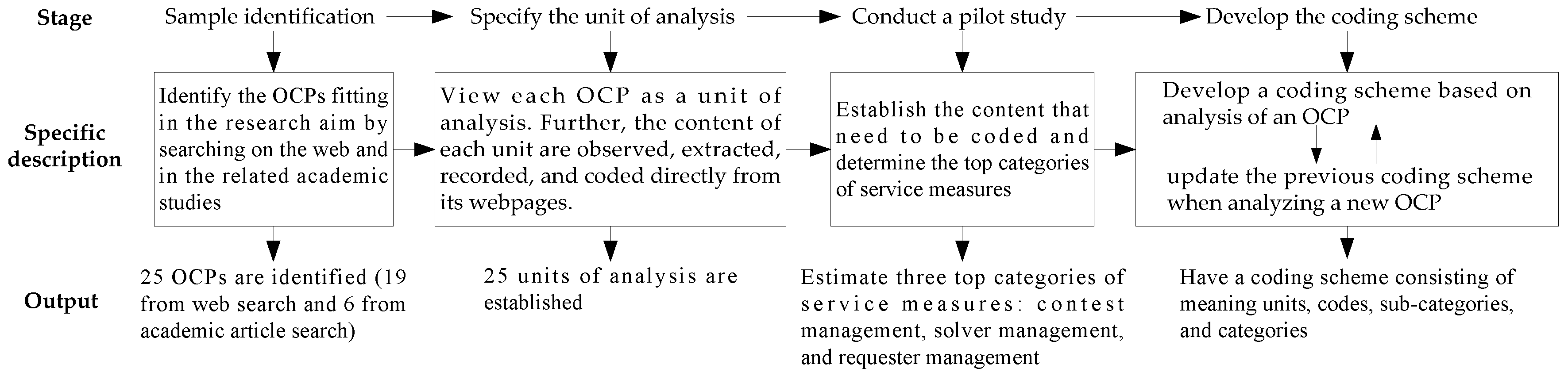

3. Methods

3.1. Sample Identification

3.2. Unit of Analysis and Data Preservation

3.3. The Pilot Study

3.4. Coding Guide Development and Coding Procedures

4. Results Analysis

4.1. Service Measures Related to Contest Management

4.2. Service Measures Related to Solver Management

4.3. Service Measures Related to Requester Management

5. Discussion and Implications

5.1. Discussion of the Results

5.2. Implications for Practice

5.2.1. For OCPs

5.2.2. For Solvers

5.3. Limitations and Future Research

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| OCPs | Popular Contests | No. of Engaged Solvers | URL |
|---|---|---|---|
| Freelancer | Web and graphic design, mobile app development | 46,117,079 (employers and solvers) | www.freelancer.com |
| EPWK | Logo, web, and packaging design, app development | 23,547,269 (employers and solvers) | www.epwk.com |
| Designcrowd | Logo, web, graphic, T-shirt, and flyer design | 846,093 | www.designcrowd.com |
| Crowdspring | Logo, identity, product, packaging design, web and mobile design, naming and branding | 220,000 | www.crowdspring.com |
| Designhill | Logo design, business card design, brand identity, social media pack | 152,334 | www.designhill.com |
| Crowdsite | Logo and flyer design, name and slogan finding | 90,237 | www.crowdsite.com |
| Logomyway | Logo design | 30,000 | www.logomyway.com |
| 99designs | Logo and brand identity pack | / | www.99designs.com |
| Guerra creativa | Logo design | / | www.guerra-creativa.com |
| 48hourslogo | Logo design | / | www.48hourslogo.com |
| ZBJ.COM | Logo, web, and packaging design, app development | / | www.zbj.com |
| eÿeka | Innovative ideas, concepts, and solutions development | 439,693 | www.eyeka.com |
| Innocentive | Innovative ideas, concepts, and solutions development | 400,000 | www.innocentive.com |
| Open innovability | Innovative ideas, concepts, and solutions development for seeking sustainable development | 400,000 | www.openinnovability.enel.com |
| Herox | Innovative ideas, concepts, and solutions development | 158,593 | www.herox.com |
| HYVE | Innovative ideas, concepts, and solutions development | 98,000 | www.hyvecrowd.com |
| Ideaconnection | Innovative ideas, concepts, and solutions development | 20,000 | www.ideaconnection.com |
| Challenge.gov | Innovative ideas, concepts, and solutions development for the US government | / | www.challenge.gov |
| Tongal | Videos, graphics, photos, and concepts production | 200,000 | www.tongal.com |
| Userfarm | Videos, graphics, photos, and concepts production | 120,000 | www.userfarm.com |
| Zooppa | Videos, graphics, photos, and concepts production | / | www.zooppa.com |
| GoPillar | Architecture and interior design | 40,000 | www.gopillar.com |
| Cad crowd | CAD design and modeling | 31,217 | www.cadcrowd.com |
| Arcbazar | Architecture and interior design | / | www.arcbazar.com |
| Topcoder | Software design and build | 1,500,000 | www.topcoder.com |

| Category | Feature | Related OCPs |
|---|---|---|
| Submitting | Provide a submission guideline | All |
| Evaluation | Publish an evaluation jury | eÿeka, Open innovability, HYVE, Challenge.gov |
| | OCP’s managers/experts help evaluate | eÿeka, Open innovability, Zooppa, Topcoder |
| | Publish evaluation criteria | eÿeka, Innocentive, HYVE, Challenge.gov, GoPillar, Arcbazar, Topcoder |
| Disclosure | Disclose the winning submissions of a contest to all solvers | All |
| | Disclose a solver’s submissions in a contest to other solvers | All OCPs, subject to some preconditions, except Open innovability, Herox, Challenge.gov, and Topcoder |
| Ownership transfer and use | Requesters can own and freely use submissions that have been paid for | All |

| Category | Feature | Related OCPs |
|---|---|---|
| Monetary prizes | Monetary/physical prize | All |
| | Multiple winners in a contest decided by requesters | 48hourslogo, Designcrowd, eÿeka, Innocentive, Open innovability, Herox, Ideaconnection, HYVE, Challenge.gov, Tongal, Userfarm, Zooppa, GoPillar, Cad crowd, Arcbazar |
| | Multiple awarded non-winners in a contest decided by OCPs | Designcrowd, eÿeka, HYVE |
| | Affiliate prize | 99designs, Designcrowd, Crowdspring, Crowdsite, Logomyway, Tongal |
| Non-monetary prizes | Creativity points and/or activity points | Guerra creativa, Crowdspring, Designhill, Crowdsite, Freelancer, eÿeka, HYVE, Tongal, GoPillar, Cad crowd, Arcbazar, ZBJ.COM, EPWK |
| Fees | Service fee | Designcrowd, Logomyway, Freelancer, Arcbazar, ZBJ.COM, EPWK |
| | Membership fee | Freelancer, GoPillar |
| | Possible taxes | All |

| Incentives | Specific Service Measures | Decisions OCPs Are Advised to Make |
|---|---|---|
| Monetary rewards | Requester prize for winners | Must be provided. |
| | OCP prize for non-winners with good performance in a contest | (1) Whether to set it, (2) how many solvers should be selected for an award, (3) what criteria to use to choose these solvers, and (4) how much should be paid. |
| | Multiple winners in a contest | (1) Whether to suggest that requesters set them, (2) for which contests they should be suggested, (3) how to talk to requesters, and (4) how to allocate prizes. |
| | Affiliate prize | (1) Whether to set it, (2) how much should be paid, and (3) how to establish the success of a recommendation by a solver. |
| Fees | Service fee | (1) Whether to charge it, (2) who should be charged, and (3) how much should be charged. |
| | Membership fee | (1) Whether to charge it, (2) how much should be charged, and (3) what priorities solvers with different membership levels should have. |
| Learning | Disclosure of submissions | (1) Whether to disclose, (2) whether to disclose after contest completion or in-process, (3) whether to disclose all submissions or just the winning submissions, and (4) how to protect the IPR. |
| | Technique tips, tricks, tools for developing solutions | (1) Whether to offer them, (2) which techniques should be offered, (3) how to recommend them to solvers, and (4) how often to update them. |
| | Feedback | (1) How to encourage requesters and solvers to give feedback, (2) feedback types, timing, and content, and (3) how to calculate and display the performance of solvers or requesters who gave the feedback. |
| Reputation | Reputation points (creativity and/or activity points) | (1) Whether to set them, (2) how to compute them, and (3) what they can be used for. |
| | Solver show system | (1) What information should be presented on the solver’s personal homepage, (2) whether to develop a solver rank and showcase system, (3) what criteria are used to rank solvers, and (4) how to use them to stimulate solvers. |
| Merge into a community | Community | (1) What information should be presented in the community, and (2) how to make the community a place where solvers, requesters, and OCPs like to share, discuss, and connect with each other. |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Zhang, X.; Du, L. What Crowdsourcing Platforms Do for Solvers in Problem-Solving Contests: A Content Analysis of Their Websites. J. Theor. Appl. Electron. Commer. Res. 2021, 16, 1311-1331. https://doi.org/10.3390/jtaer16050074
