Health Monitoring of Civil Structures: A MCMC Approach Based on a Multi-Fidelity Deep Neural Network Surrogate †

Abstract

1. Introduction

2. SHM Methodology

2.1. Datasets Definition

2.2. Datasets Population

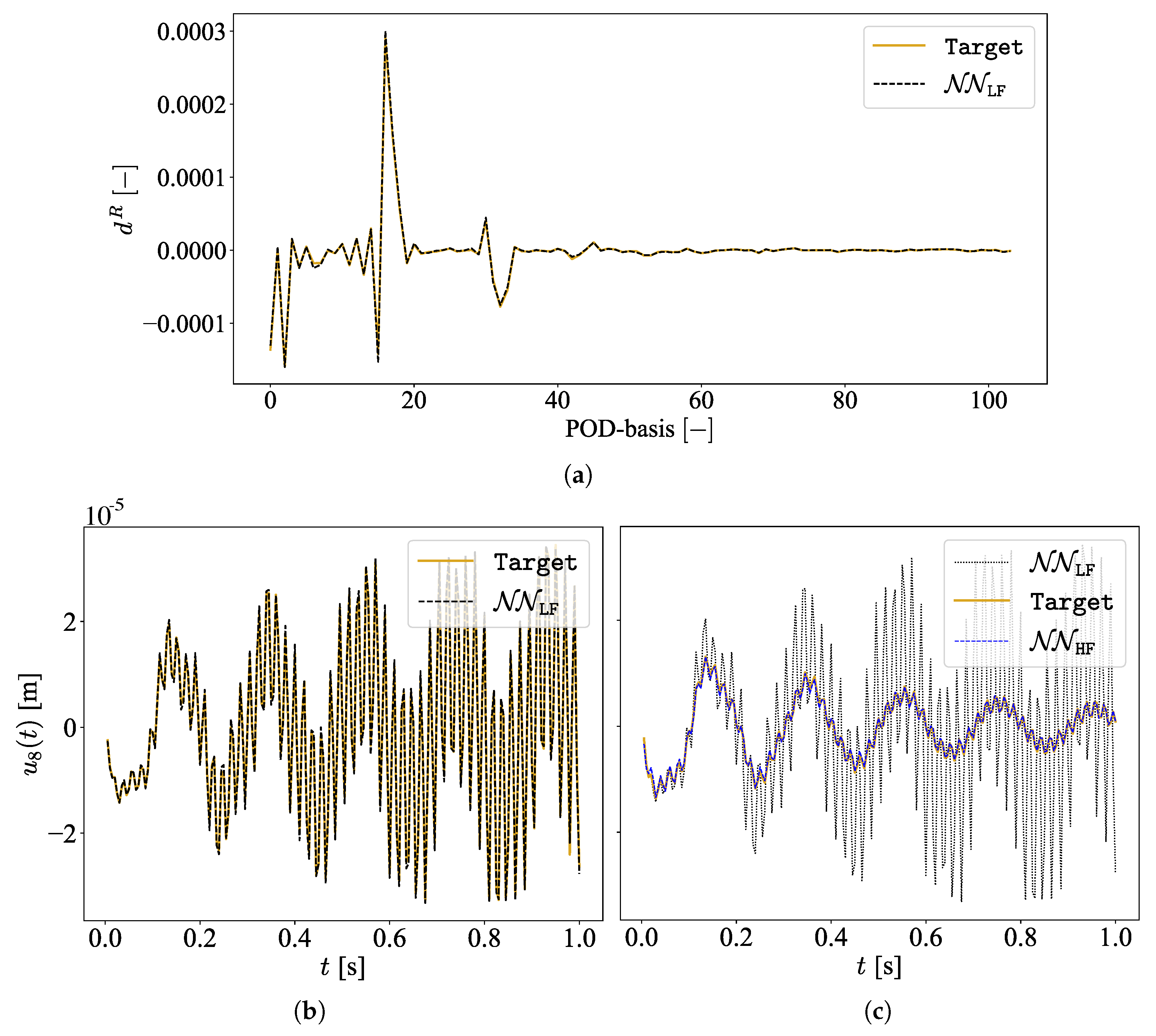

2.3. MF-DNN Surrogate Model

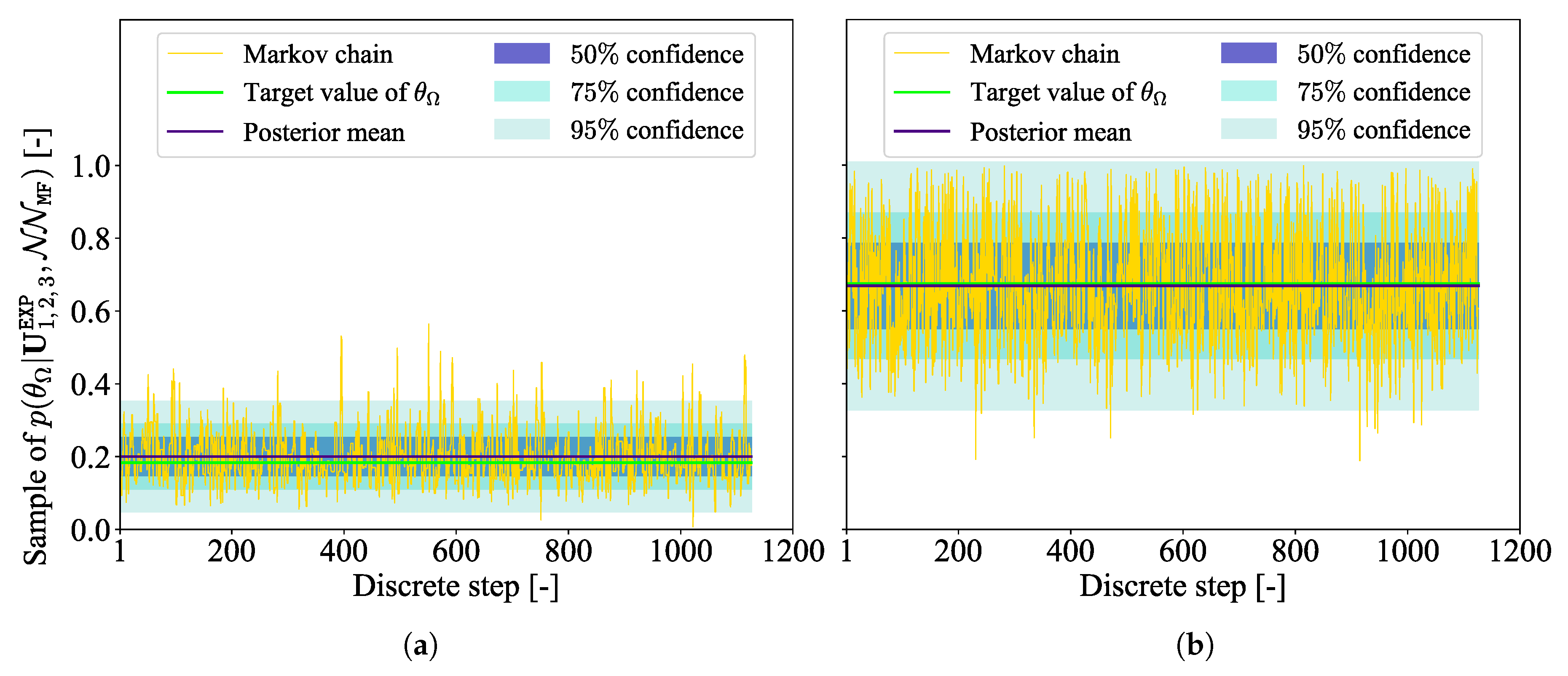

2.4. Damage Localization via MCMC

3. Virtual Experiment

4. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Torzoni, M.; Manzoni, A.; Mariani, S. Health Monitoring of Civil Structures: A MCMC Approach Based on a Multi-Fidelity Deep Neural Network Surrogate. Comput. Sci. Math. Forum 2022, 2, 16. https://doi.org/10.3390/IOCA2021-10889
