Abstract

The expansion of 5G networks has led to larger attack surfaces due to more applications and use cases, more IoT connections, and the distributed 5G system architecture. Existing security frameworks often lack the ability to perform real-time, context-aware risk assessments that are specifically adapted to dynamic 5G environments. In this paper, we present an integrated framework that combines Snort intrusion detection with a risk and impact assessment model to evaluate threats in real time. By correlating intrusion alerts with contextual risk metrics tied to 5G core functions, the framework prioritizes incidents and supports timely mitigation. Evaluation in a controlled testbed shows the framework’s stability, scalability, and effective risk classification, thereby strengthening cybersecurity for next-generation networks.

1. Introduction

5G networks are a fundamental part of the European Union’s digital transformation, providing high-speed connectivity and ultra-low latency and enabling large-scale Internet of Things (IoT) deployments. Nevertheless, their virtualized, software-defined, and disaggregated architecture brings substantial security challenges. As cyber threats continue to evolve in sophistication, ensuring the robustness of 5G infrastructure demands advanced security mechanisms, including real-time intrusion detection and risk assessment.

Current security frameworks for 5G mainly focus on individual security mechanisms outlined in various standards, such as authentication [1], encryption [1], network slicing security [2], and anomaly detection [3]. While these mechanisms strengthen specific aspects of security, they do not provide a comprehensive, real-time risk and impact assessment framework that dynamically incorporates intrusion detection data. Furthermore, outputs from Intrusion Detection Systems (IDSs), like Snort alerts, are often underutilized in evaluating evolving threats within 5G infrastructures.

While our prior work [4] introduced the concept of correlating intrusion alerts with contextual risk metrics, the present study expands on that foundation by developing a complete risk and impact assessment model and evaluating it in a more extensive testbed.

To bridge these gaps, this paper introduces a risk and impact assessment methodology based on Snort-generated data, developed within the European Security Assessments for Networks and Services in 5G (SAND5G) project [5]. SAND5G is a 36-month European initiative involving academic partners, SMEs, National Authorities, and 5G security experts, aiming to deliver a risk and impact assessment platform aligned with EU 5G cybersecurity policies and the 5G security toolbox. The platform integrates several security tools, including IDSs, honeypots, and real-time risk evaluation models, to detect, analyze, and mitigate cyber threats in 5G networks. Unlike traditional security frameworks that focus only on generating alerts, it offers a structured mechanism to assess and prioritize security incidents using contextual information, thereby enhancing the overall resilience of 5G environments. Our approach uses Snort alerts as inputs for an automated risk assessment framework, enabling real-time evaluation of cyber threats and their potential impact on 5G infrastructure.

The contributions of this paper are threefold: (i) the integration of Snort into the 5G Risk and Impact Assessment Platform security framework, addressing the lack of mechanisms that dynamically incorporate real-time IDS data into 5G risk assessments, (ii) the development of a contextualized risk and impact assessment model that systematically prioritizes security events based on both technical severity and operational criticality, overcoming the limitations of existing models that treat alerts in isolation, and (iii) the experimental evaluation of the proposed methodology within a virtualized 5G testbed, demonstrating its effectiveness in detecting, classifying, and mitigating cyber threats while assessing system scalability and resilience, which has not been shown in prior works. Together, these contributions advance the state of the art in 5G security by providing a practical, modular, and adaptive framework that leverages IDS outputs for real-time, context-aware risk assessment.

Our methodology facilitates continuous security monitoring by utilizing Snort intrusion detection data for proactive risk assessment. This ensures early detection of cyber threats, reduces attack surfaces, and enables quicker mitigation. By correlating Snort alerts with broader security metrics, the framework provides a comprehensive view of network vulnerabilities, enhancing cybersecurity resilience in 5G environments. Moreover, real-time risk assessment through Snort alert analysis is essential for adapting to rapidly emerging threats. By incorporating automation and adaptive risk evaluation techniques, the framework enhances security decision-making, delivering actionable insights to effectively counter cyber threats. Instead of introducing new detection algorithms, our contribution lies in integrating existing IDS outputs with a context-aware risk evaluation framework designed specifically for 5G architectures. The approach is practical and modular, facilitating adaptive threat responses.

The remainder of the paper is organized as follows: Section 2 reviews related work in 5G security and risk assessment. Section 3 describes the proposed methodology, including the integration of Snort with the risk assessment framework. Section 4 details the testbed used to evaluate our solution and presents the results obtained from it. Finally, Section 5 concludes the paper and outlines directions for future research.

2. Related Work

The following section surveys major research directions that shape modern security in telecommunication systems. We organize the discussion into four subsections corresponding to the dominant research themes found in the literature: (i) intrusion detection systems adapted to 5G architectures, (ii) risk and impact assessment approaches used in telecom security, (iii) NFV-based monitoring and orchestration for secure virtualized networks, and (iv) methods that transform IDS alerts into real-time, actionable risk indicators. This structure allows us to highlight how existing solutions address detection, monitoring, and risk evaluation individually, while revealing gaps in unified, cross-layer security integration.

2.1. IDS for 5G

Recent studies emphasize the need for detection mechanisms capable of handling the scale, diversity, and strict latency requirements of 5G networks. Traditional intrusion detection systems often struggle with dynamic network slices and the high-speed, low-latency traffic of modern 5G environments.

One approach proposes a two-stage anomaly detection system that first reduces the size of network data and then uses a neural network to identify abnormal traffic patterns [6]. Experiments using a realistic 5G network platform deployed in the cloud show that this system can detect anomalies in real time with very high accuracy while processing fewer features, reducing computational effort.

Another study examines placing software-based network security functions, such as traffic monitoring and protective mechanisms, on standard hardware within a 5G network [7]. Tests with synthetic traffic show that these functions can meet quality-of-service requirements for different applications, demonstrating flexibility and scalability compared to traditional dedicated hardware solutions.

In addition, transfer learning models have been proposed to detect anomalies in 5G-connected Internet of Things devices [8]. These models combine knowledge from multiple datasets to improve detection across different scenarios. Both neural networks and convolutional networks achieved nearly 99 percent accuracy, showing that these models are robust and adaptable across various 5G IoT deployments.

Slice-specific models have also been developed to detect intrusions in different 5G network slices [9]. For example, one model performs best in high-throughput slices, while another is more efficient for slices requiring very low latency. Evaluation metrics included accuracy, detection quality, and execution time, illustrating trade-offs between detection performance and speed.

Finally, distributed frameworks have been proposed to detect complex attacks that exploit interactions between different network slices [10]. One solution monitors performance indicators across slices to detect coordinated attacks and achieves a very high detection score, demonstrating reliability in large, dynamic 5G networks.

These studies differ in how they handle real-time detection, adaptability to different slices, model complexity, computational cost, and the types of attacks they cover. These dimensions will guide the structured comparison table in Section 2.5.

Despite these advances, most frameworks focus on individual slices or specific attack types and do not provide a unified process that transforms alerts into actionable risk assessments. Our work fills this gap by integrating alerts from multiple slices into a single system that evaluates and mitigates risks in real time.

2.2. Risk and Impact Assessment in Telecom Security

Effective risk and impact assessment is essential for maintaining resilient telecommunication operations. Traditional approaches quantify risks by evaluating the economic impact of events and their likelihood, enabling companies to estimate potential losses and categorize risk levels [11]. For example, one study introduces a structured methodology for operational risk assessment in telecommunications companies, combining self-assessment procedures with quantitative analysis to calculate economic losses. Results show that this method provides reliable insights for management decision-making while highlighting operational vulnerabilities.

In addition, frameworks that integrate artificial intelligence can proactively detect anomalies and anticipate potential vulnerabilities in digital infrastructures [12]. Such approaches combine technical and organizational factors, including human processes and regulatory requirements, to provide a holistic view of cyber risks. By using real-time data analysis and automated decision-making, these AI-enabled systems enhance proactive threat detection and adaptive risk management strategies.

Risk assessment frameworks based on established standards, such as ISO 27001 [13], also provide structured procedures for evaluating information security risks [14]. These frameworks guide organizations through the systematic identification, evaluation, and prioritization of threats to ensure that protective measures align with regulatory and operational objectives. Case studies demonstrate that the consistent application of such frameworks improves the reliability and trustworthiness of IT systems in dynamic operational environments.

Beyond these foundational approaches, more recent studies have proposed holistic models that combine technical, economic, operational, and organizational factors to provide comprehensive risk evaluation [15]. These models emphasize multi-layered assessment, considering internal and external threats, regulatory compliance, third-party dependencies, and emerging technologies. By integrating threat identification, vulnerability analysis, and mitigation planning, these frameworks aim to maintain continuous protection and align with industry best practices.

Hybrid approaches that combine artificial intelligence with policy-based evaluation have also emerged, particularly in critical infrastructure sectors [16]. These approaches leverage AI to process large volumes of security data in real time, detect anomalies, and recommend or automate mitigation actions. Evaluations using real-world datasets and case studies demonstrate that AI-enhanced systems improve threat detection accuracy and response speed, while highlighting the importance of balancing performance with regulatory compliance and data privacy concerns.

These frameworks differ in their focus on economic versus operational impact, integration of automated versus human-driven processes, adaptability to dynamic networks, and use of AI for proactive threat management. These dimensions will guide the structured comparison table in Section 2.5.

Despite these advances, most frameworks focus on either operational risk, security compliance, or AI-enhanced detection, without providing a unified, real-time method that transforms alerts into actionable risk mitigation strategies. Our work addresses this gap by combining risk scoring, policy evaluation, and real-time alert analysis into a single integrated system.

2.3. NFV-Based Security Monitoring

Network function virtualization has transformed network management by enabling scalable, flexible, and cost-effective deployment of network services. Virtualized Network Functions and Service Function Chains are core components of this architecture, and their security is critical for maintaining overall network integrity. Embedding anomaly detection mechanisms into NFV architectures allows continuous monitoring of these components, ensuring that unusual behaviors are promptly identified [17]. Experimental results demonstrate that entropy-based detection techniques can achieve up to 98 percent accuracy while preserving the performance of the virtualized environment.

Further work has explored the integration of machine learning algorithms within NFV Management and Orchestration systems. These algorithms monitor threats across network services and virtualized functions, enabling adaptive response to detected anomalies [18]. Using supervised learning models such as Random Forest, Support Vector Machines, and Gradient Boosting, experiments conducted on Kubernetes-based virtual networks achieved over 99 percent accuracy in detecting various network attacks, including denial-of-service, probing, and remote exploits. These approaches highlight the effectiveness of automated monitoring and management in reducing human intervention and improving service quality.

In addition, threat taxonomies for NFV-based 5G networks illustrate the need for continuous improvement in security monitoring to address complex attack surfaces arising from multi-domain collaboration [19]. By understanding potential vulnerabilities introduced by shared infrastructure and multiple business collaborations, these frameworks provide a basis for enhancing proactive defenses and supporting real-time risk assessment.

More recent research proposes intelligent self-healing frameworks that integrate anomaly detection with automated orchestration [20]. These solutions use hybrid AI models, including deep autoencoders and isolation forests, to detect both known and unknown anomalies. Coupled with self-healing modules, the frameworks automatically scale, migrate, or reroute virtual network functions in response to detected faults. Experiments demonstrate a 55 percent reduction in mean time to recovery, fewer false alarms, and detection accuracy above 95 percent, illustrating the potential for resilient and self-managed virtual networks.

Another line of research focuses on security service chaining, which combines virtual network function deployment with optimized scheduling and resource allocation [21]. These approaches consider both execution cost and security requirements, using multi-level security models and optimization techniques to ensure that service chains meet quality-of-service and deadline constraints. Heuristic scheduling methods, such as particle swarm optimization, have been shown to reduce security violation ratios, service-level agreement violations, and average delays compared to conventional models.

These studies differ in their focus on proactive versus reactive monitoring, automated self-healing capabilities, integration of machine learning for anomaly detection, security-aware scheduling, and resilience under dynamic network conditions. These dimensions will guide the structured comparison table in Section 2.5.

Despite these advances, most NFV-based monitoring frameworks focus on either anomaly detection, orchestration, or security-aware scheduling, without combining these elements into a unified, real-time risk assessment system. Our work addresses this gap by integrating automated monitoring, intelligent anomaly detection, and security service chaining into a single framework for comprehensive network resilience.

2.4. IDS Alerts for Risk Assessment

Turning intrusion detection alerts into actionable risk indicators has become central to modern cybersecurity architectures. Recent frameworks emphasize collaborative intelligence environments that unify data from different security tools and operational domains to support real-time risk evaluation [22]. By combining machine learning techniques with large-scale data orchestration, these systems correlate alerts, identify behavioral patterns, and flag anomalies that traditional rule-based mechanisms tend to overlook. This line of work highlights the value of rapid information exchange and coordinated workflows, especially in environments where threats emerge across interconnected digital and physical systems.

Building on this direction, risk assessment approaches in artificial intelligence and Internet-of-Things ecosystems focus on adaptive learning and predictive modeling [23]. These methods integrate anomaly detection with behavioral analytics to capture unusual device activity, adversarial manipulations, and early indicators of coordinated attacks. Experimental results demonstrate that adaptive models can detect risks in fast-changing environments where static signature-based systems fall short. Such approaches also underline the need for continuous model refinement, as new attack vectors appear at the intersection of artificial intelligence, automation, and pervasive connectivity.

A related stream of research explores artificial intelligence-driven alert correlation for risk assessment, emphasizing predictive analysis and robustness against hostile manipulation [24]. These studies investigate how attackers use deceptive inputs to bypass machine learning models and compromise automated decision-making processes. By modeling these techniques and integrating adversarial resilience into cybersecurity workflows, the proposed frameworks improve the interpretability, transparency, and reliability of alert handling. The findings emphasize that trusted artificial intelligence models can significantly enhance cyber resilience when alert correlation is performed in real time.

Our previous work [4] examines intrusion detection for fifth-generation mobile networks, where virtualized and software-defined architectures expose extensive attack surfaces. It proposes real-time threat and impact assessment using intrusion detection alerts correlated with dynamic risk metrics. The solution processes alerts at runtime, evaluates their severity, and supports network operators in selecting mitigation strategies. Preliminary results indicate enhanced accuracy in identifying harmful traffic patterns and improved responsiveness compared to traditional reactive mechanisms.

Complementary research describes risk assessment challenges and testing methodologies tailored to fifth-generation mobile infrastructures [25]. These studies highlight the limitations of relying solely on static security design, stressing the importance of continuous testing across the service lifecycle. Proposed frameworks include specialized test suites, cryptographic function validation, and ontology-based models for organizing the complexity of fifth-generation threats. This work underlines the need for continuous monitoring and detailed scenario analysis to ensure that intrusion alerts reflect operational conditions in cloud-native and slice-based environments.

These contributions differ in several dimensions relevant to the structured comparison in Section 2.5, including real-time versus offline alert processing, predictive versus reactive correlation, resilience against adversarial manipulation, adaptation to fifth-generation architectures, and the ability to translate alerts into risk scores that guide mitigation.

Despite significant progress, current systems often address only part of the problem (predictive alert correlation, artificial intelligence-based anomaly detection, or fifth-generation-specific risk modeling) without providing a unified mechanism that processes intrusion alerts, quantifies their impact, and updates risk levels across all network layers. Our work addresses this gap by integrating real-time intrusion detection alerts into a dynamic risk and impact assessment model tailored to next-generation network environments.

2.5. Comparative Analysis of Existing Approaches

To contextualize the position of SAND5G within current research, we compare the surveyed frameworks across the key criteria identified in this section: (i) real-time detection capability, (ii) adaptability to 5G network slicing, (iii) integration of risk and impact assessment, (iv) computational and operational efficiency, and (v) empirical validation in realistic environments. Table 1 summarizes the main characteristics of representative solutions.

Table 1.

Comparison of related 5G security, monitoring, and risk assessment approaches.

The analysis highlights that existing solutions typically optimize for a single dimension: anomaly detection, NFV orchestration, slice-specific monitoring, or standalone risk modeling. Very few integrate these components, and none combine multi-slice IDS alerts with contextual 5G risk scoring and automated mitigation workflows. In contrast, SAND5G provides a unified, cross-layer mechanism that correlates real-time intrusion alerts with adaptive risk models tailored to 5G architectures. Its multi-dimensional evaluation enables operators to move beyond detection toward actionable, continuously updated security intelligence.

In summary, although significant advances have been made across IDS design, risk assessment methodologies, and NFV-based monitoring, current solutions generally address these components in isolation. Most approaches deliver strong detection, proactive monitoring, or structured risk modeling, but few provide a coordinated mechanism that correlates distributed IDS alerts with comprehensive, real-time risk evaluation. Our work addresses this gap by introducing an integrated framework that (i) performs accurate IDS alert triaging, (ii) evaluates alerts through context-aware risk metrics, and (iii) orchestrates rapid, policy-driven mitigation actions. This unified perspective strengthens the resilience of modern telecommunication networks by bridging detection, assessment, and response.

SAND5G distinguishes itself from existing 5G security frameworks by combining intrusion detection alerts with a dynamic risk and impact assessment model tailored specifically to 5G architectures. As introduced earlier, it is a modular research platform developed within an ongoing European research project and is not released as a standalone software package with versioned public releases. Standard IDS solutions focus primarily on traffic signatures or anomaly patterns and often overlook the operational complexity of network slicing, signaling procedures, and cloud-native service chains. By correlating Snort3 (version snort3-3.10.0.0) alerts with contextual 5G risk metrics (derived from slice characteristics, service functions, and signaling behaviors), SAND5G provides a multi-layered, adaptive evaluation of ongoing threats. This holistic approach captures the evolving nature of 5G environments and establishes a foundation for resilient network operations by translating raw alerts into actionable risk intelligence.

3. Proposed Methodology

Ensuring robust security in 5G networks requires a holistic approach that goes beyond standalone intrusion detection or static risk assessment. The rapid growth of 5G infrastructure introduces new cybersecurity challenges due to the increased complexity of network functions, extensive virtualization, and highly dynamic network traffic. Traditional IDS solutions typically focus on signature-based or anomaly-based detection but do not provide contextualized risk assessment. As a result, they generate large volumes of alerts without offering sufficient insight into the potential impact of each detected threat. This often leads to alert fatigue, where security analysts must manually examine numerous alerts, many of which are false positives or low-priority events, delaying timely responses to critical threats.

To overcome these limitations, we propose a unified security framework that integrates a Snort-based IDS into our security platform, combining continuous threat detection with a risk and impact assessment module. This integration allows for real-time processing of IDS alerts, transforming raw security events into actionable intelligence that reflects both threat severity and potential consequences for network operations. By assessing each alert against a set of predefined security metrics, our framework automatically assigns risk scores, enabling security teams to prioritize high-impact threats and filter out less significant anomalies.
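As a minimal sketch of how an alert might be scored against predefined metrics, the following Python snippet multiplies a technical severity by the operational criticality of the affected 5G function and maps the result to a priority label. The 0 to 1 scales, the criticality weights, the thresholds, and all names (`Alert`, `CRITICALITY`, `score_alert`, `classify`) are illustrative assumptions and do not reproduce the scoring model of the SAND5G platform.

```python
from dataclasses import dataclass

# Hypothetical criticality weights per 5G core function (0..1); illustrative only.
CRITICALITY = {"AMF": 0.9, "SMF": 0.8, "UPF": 0.7}


@dataclass(frozen=True)
class Alert:
    message: str
    severity: float       # technical severity, normalised to 0..1 (assumption)
    target_function: str  # affected 5G core function, e.g. "AMF"


def score_alert(alert: Alert, default_criticality: float = 0.5) -> float:
    """Return a risk score in 0..1 as severity times operational criticality."""
    criticality = CRITICALITY.get(alert.target_function, default_criticality)
    return round(alert.severity * criticality, 3)


def classify(score: float) -> str:
    """Map a score to a priority label using illustrative thresholds."""
    if score >= 0.6:
        return "high"
    if score >= 0.3:
        return "medium"
    return "low"


alerts = [
    Alert("Port scan", 0.3, "UPF"),
    Alert("Malformed signaling burst", 0.8, "AMF"),
]

# Highest-risk alerts first, so analysts see high-impact events before noise.
ranked = sorted(alerts, key=score_alert, reverse=True)
for a in ranked:
    print(a.message, score_alert(a), classify(score_alert(a)))
```

A multiplicative form is only one possible choice: it keeps a severe alert against a low-criticality asset from dominating the queue, whereas a weighted sum would be an equally plausible alternative.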

Unlike traditional IDS deployments that rely exclusively on static, rule-based evaluations, our framework incorporates an adaptive risk assessment mechanism that continuously updates threat classifications as new risks emerge. This approach ensures that 5G networks remain resilient against evolving cyber threats, reduces false positives, and enhances detection accuracy. Moreover, by automating the prioritization and mitigation process, our framework significantly shortens response times, facilitating proactive security management rather than reactive measures.

This study advances 5G security through the integration of Snort3 within our proposed cybersecurity risk and impact assessment platform. The solution provides a multi-layered security evaluation framework that correlates real-time IDS alerts with contextual risk metrics. Integrating Snort3 into this system establishes a direct connection between intrusion detection and structured risk assessment, enabling automated prioritization of threats based on severity and impact on 5G network functions. While Snort was chosen as the primary IDS due to its widespread adoption and adaptability, alternative open-source solutions such as Suricata (version 8.0.2) [26] or Zeek (version 7.0.11) [27] also provide similar capabilities, albeit with different focuses on network traffic analysis. Snort’s flexible rule sets make it particularly suitable for 5G monitoring, allowing adaptation to the unique traffic patterns and protocols inherent in these networks.

The SAND5G project is an active and ongoing European research initiative focused on strengthening the security of emerging 5G infrastructures. The platform integrates modern security tools, including IDSs, honeypots, and dynamic risk assessment engines, and provides capabilities beyond traditional IDS-only approaches. Although it builds upon foundational cybersecurity concepts, SAND5G is a current and actively maintained solution developed within a consortium of academic, industrial, and national authority partners. Its role is to deliver a real-time risk and impact assessment platform aligned with European 5G cybersecurity policies, making it suitable for contemporary 5G security research and operational evaluation.

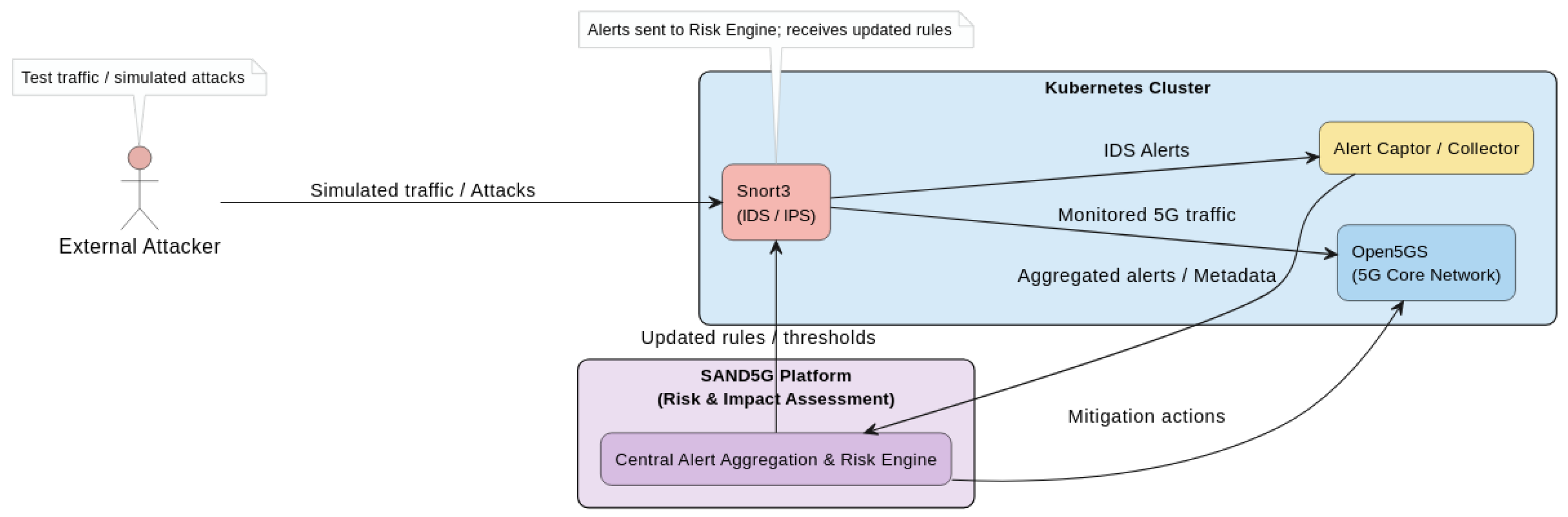

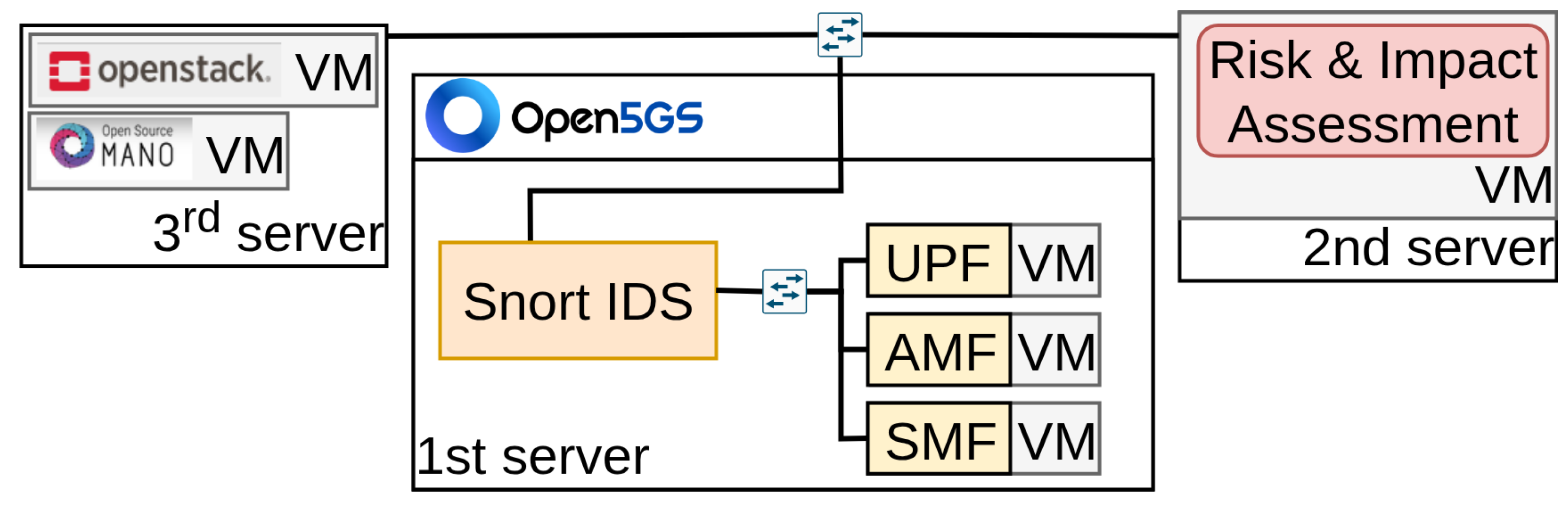

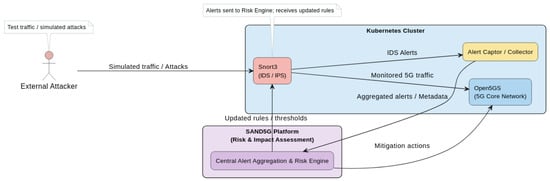

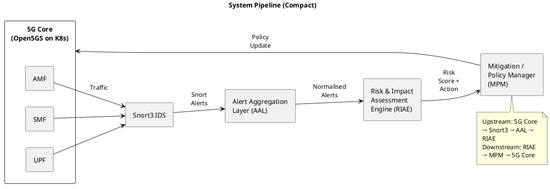

To better illustrate the proposed framework, Figure 1 presents a schematic overview of our architecture. The diagram highlights the key components of the experimental setup: (i) the 5G core network (Open5GS) running within a Kubernetes cluster, (ii) the Snort3 IDS for real-time traffic monitoring, and (iii) the central alert aggregation and risk assessment engine within the SAND5G platform. It also shows the data flow from detected network events to the risk evaluation module and back to the 5G infrastructure for mitigation or rule updates. This figure serves as a reference for understanding the subsequent experimental evaluation, as it shows how the framework collects, processes, and prioritizes alerts during testing.

Figure 1.

Overview of our proposed architecture integrating Snort3 with the 5G core network and the central risk assessment engine.

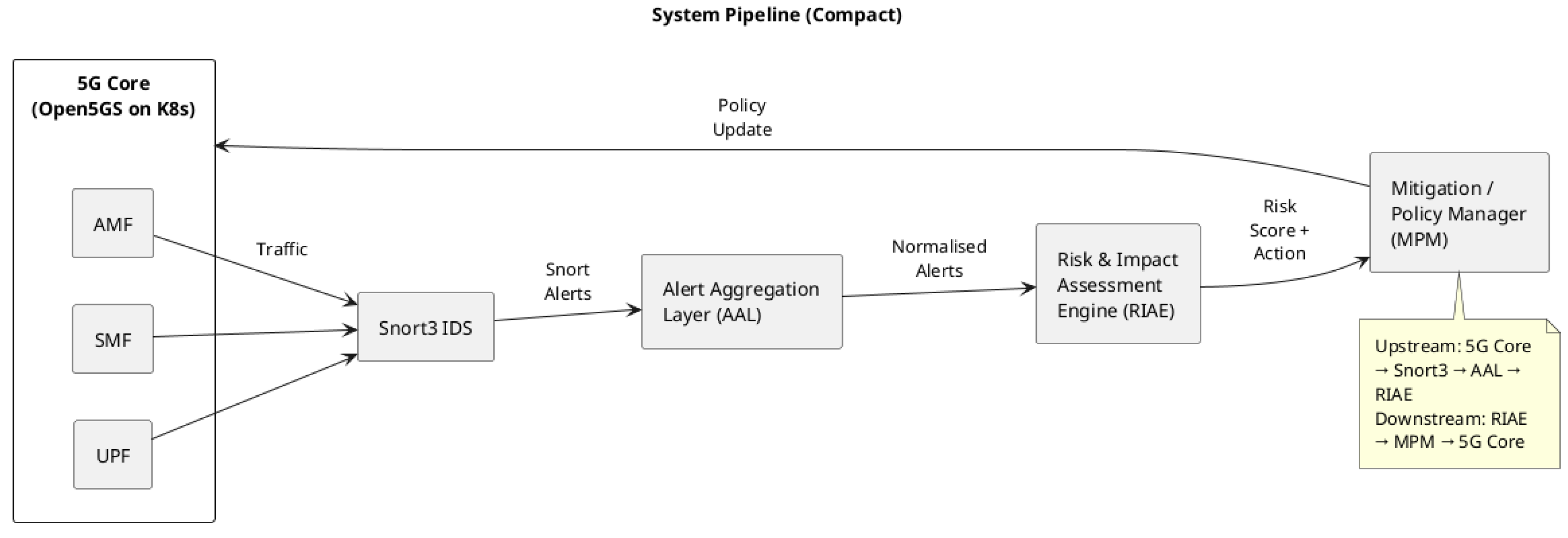

Figure 2 presents the end-to-end pipeline that links traffic monitoring in the 5G Core with the platform’s risk assessment engine. The upstream path starts with packets flowing through the AMF, SMF, and UPF, which are continuously inspected by Snort3. Alerts generated by Snort are forwarded to the alert aggregation layer, where they are normalised and enriched before entering the risk evaluation engine. The downstream path begins once the engine computes a contextualised risk score and determines the appropriate mitigation or policy action. This decision is then pushed back to the 5G Core through the mitigation manager, completing a full detection-to-response loop.
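The upstream and downstream paths described above can be sketched as a chain of small functions, one per stage. This is a simplified illustration under stated assumptions: the dictionary schema, the asset map, the toy scoring rule, the threshold, and all function names (`normalise`, `enrich`, `evaluate`, `decide`, `run_pipeline`) are hypothetical and are not taken from the SAND5G implementation.

```python
from typing import Optional

Alert = dict  # a normalised alert is modelled as a plain dictionary (assumption)


def normalise(raw: dict) -> Alert:
    """Aggregation layer: map raw IDS fields onto a common schema."""
    return {
        "msg": raw.get("msg", ""),
        "dst": raw.get("dst_addr", ""),
        "priority": int(raw.get("priority", 3)),
    }


def enrich(alert: Alert, asset_map: dict) -> Alert:
    """Aggregation layer: attach the 5G function behind the destination address."""
    return {**alert, "function": asset_map.get(alert["dst"], "unknown")}


def evaluate(alert: Alert) -> float:
    """Risk engine: toy contextual score; Snort priority 1 is the most severe."""
    severity = {1: 1.0, 2: 0.6, 3: 0.3}.get(alert["priority"], 0.1)
    weight = 1.0 if alert["function"] != "unknown" else 0.5
    return severity * weight


def decide(alert: Alert, score: float, threshold: float = 0.5) -> Optional[str]:
    """Mitigation manager: return an action description, or None if no action."""
    if score >= threshold:
        return f"block traffic towards {alert['function']} ({alert['dst']})"
    return None


def run_pipeline(raw: dict, asset_map: dict) -> Optional[str]:
    """Upstream path (normalise, enrich, evaluate) followed by the downstream decision."""
    alert = enrich(normalise(raw), asset_map)
    return decide(alert, evaluate(alert))


# Hypothetical address-to-function map for the testbed.
assets = {"10.0.0.5": "AMF"}
print(run_pipeline({"msg": "UDP flood", "dst_addr": "10.0.0.5", "priority": 1}, assets))
print(run_pipeline({"msg": "Ping", "dst_addr": "10.0.0.9", "priority": 3}, assets))
```

Keeping each stage a pure function makes it straightforward to test stages in isolation and to swap the scoring rule without touching aggregation or mitigation.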

Figure 2.

System pipeline (Upstream/Downstream).

Additionally, this integration contributes to the broader objective of strengthening automated cybersecurity monitoring in 5G networks, ensuring that real-time attack detection is not only driven by raw alerts but also contextualized within a structured risk assessment framework. This approach establishes a foundation for future extensions, such as incorporating additional IDS solutions or leveraging AI-based analytics for more adaptive and intelligent threat classification. Although the current implementation focuses on rule-based detection and contextual risk scoring, the architecture has been intentionally designed to support the integration of AI-driven analytics in future iterations. Such extensions may include machine learning models for anomaly detection, adaptive thresholding, or predictive risk scoring, enabling more proactive and intelligent security responses. These capabilities are part of the planned roadmap of the SAND5G platform and will be explored as future work.

Building on this foundation, the methodological contributions of this work are twofold. (i) We integrate Snort into the SAND5G security framework, enabling real-time correlation of IDS alerts with risk assessment models. By embedding Snort directly into the platform, intrusion detection is no longer an isolated process; instead, it feeds into a structured risk evaluation mechanism that supports more informed, context-aware security responses. (ii) We develop a risk and impact assessment model that introduces an adaptive mechanism for assigning priority levels to detected threats. This ensures mitigation strategies align dynamically with real-time risk evaluations, optimizing resource allocation and enhancing the resilience of 5G networks against evolving cyber threats.

3.1. Integration of IDS Within SAND5G

A central contribution of this work is the seamless integration of Snort into our architecture. Snort is configured to continuously monitor network traffic, identifying anomalous behavior and generating security alerts. Unlike conventional IDS deployments that operate independently, our approach links Snort directly with the risk assessment module, ensuring that alerts are automatically and immediately processed upon detection. This real-time connection improves overall security monitoring by correlating intrusion alerts with network context, reducing false positives and enhancing threat classification accuracy.

Within the 5G Core, Snort targets multiple components for attack detection: (i) the User Plane Function (UPF), responsible for packet routing, forwarding, and Quality of Service (QoS) enforcement, which is susceptible to Internet Protocol (IP) attacks; (ii) the Access and Mobility Management Function (AMF), which handles user authentication, mobility management, and session control, exposing it to User Datagram Protocol (UDP) signaling attacks; and (iii) the Session Management Function (SMF), which manages session establishment and maintenance, making it vulnerable to Transmission Control Protocol (TCP) threats, such as session hijacking or malicious payload injections. These components are essential for 5G operations and represent high-value targets for attackers.

Snort inspects both incoming and outgoing 5G Core traffic, applying both rule-based and anomaly-based detection techniques to identify potential threats. When suspicious activity is detected, it generates alerts containing key information, including attack type, source and destination addresses, and severity. These alerts are automatically forwarded to the risk assessment module through a dedicated processing pipeline. Unlike traditional security solutions that require manual intervention for alert analysis, our framework evaluates each alert in real time, accelerating decision-making and enabling rapid differentiation between minor anomalies and critical security incidents, which demand immediate attention.

By embedding Snort within the platform and coupling it with an automated risk assessment system, our approach ensures that threat detection is not merely reactive but also intelligently prioritized, reinforcing the overall security posture of 5G networks.

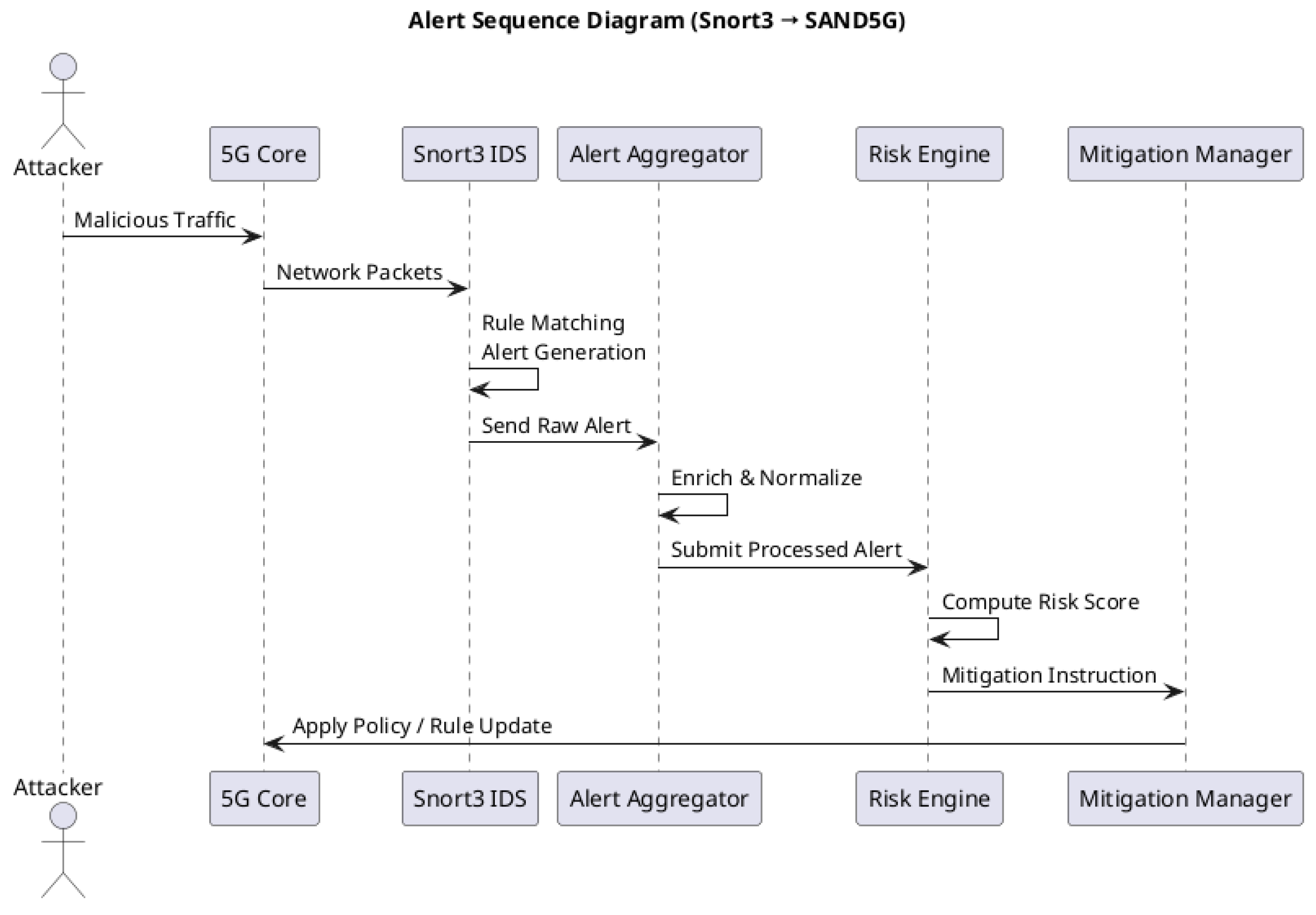

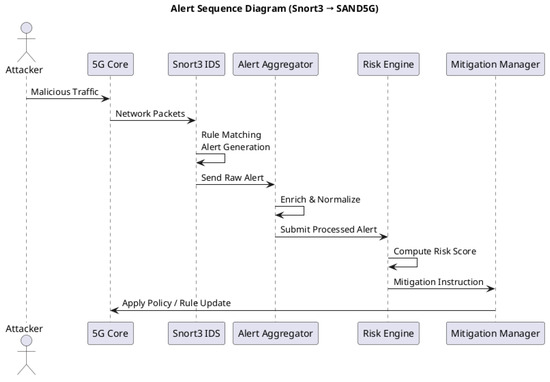

In Figure 3, the sequence diagram shows how a detected event travels through the system from the moment malicious traffic enters the 5G Core until the corresponding mitigation is applied. Snort captures packet flows, checks them against its rule set, and generates an alert that is immediately forwarded to the aggregation layer. After enrichment, the alert is passed to the risk engine, which computes its contextual risk score. If required, a mitigation instruction is issued and enforced through the 5G Core, completing an automated detection-classification-response cycle.

Figure 3.

Alert sequence diagram.
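The detection-classification-response cycle in Figure 3 can be sketched in a few lines of Python. This is a minimal illustration, not the SAND5G implementation: the `Alert` fields, the `nf_by_ip` lookup used for enrichment, the injected `score_fn` callable, and the `mitigate_labels` set are all hypothetical names introduced here.

```python
from dataclasses import dataclass, replace
from typing import Callable, Dict, Optional

@dataclass(frozen=True)
class Alert:
    # Hypothetical field names mirroring the alert metadata described in the text.
    classtype: str
    src_ip: str
    dst_ip: str
    priority: int                           # Snort priority: 1 (highest) to 4 (lowest)
    network_function: Optional[str] = None  # filled in by the enrichment step

def enrich(alert: Alert, nf_by_ip: Dict[str, str]) -> Alert:
    """Aggregation layer: attach the targeted 5G network function, if known."""
    return replace(alert, network_function=nf_by_ip.get(alert.dst_ip, "UNKNOWN"))

def handle(alert: Alert,
           nf_by_ip: Dict[str, str],
           score_fn: Callable[[Alert], str],
           mitigate_labels=frozenset({"Critical"})):
    """Run one alert through enrichment, risk labelling, and the response decision."""
    enriched = enrich(alert, nf_by_ip)
    label = score_fn(enriched)              # risk engine, injected by the caller
    action = "mitigate" if label in mitigate_labels else "monitor"
    return enriched, label, action
```

Passing the risk engine in as `score_fn` keeps the sketch independent of any particular scoring formula; which labels trigger mitigation is likewise a policy choice assumed here.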

3.2. Model of Risk and Impact Assessment

Our second primary contribution is the development of a risk and impact assessment model that evaluates IDS alerts in real time. In contrast to static risk assessment approaches that rely only on predefined rules, our model dynamically adapts to new threat intelligence and evolving attack patterns, ensuring that high-risk incidents are given prompt attention.

The assessment process is structured around two main components: (i) quantitative metrics, including estimated economic impact and attack probability, and (ii) qualitative factors, such as the network function affected and potential operational disruption. Upon receiving an alert from Snort, the system evaluates it against these parameters and assigns it to a specific risk tier. This ensures that critical threats trigger immediate mitigation actions, while lower-priority alerts are monitored over time, optimizing the allocation of security resources and reinforcing network resilience.

Table A1 in Appendix A provides a mapping of Snort-detected issues to their corresponding contextualized risk scores. Each row in Table A1 corresponds to a security alert detected by Snort3 and contains various metadata fields alongside a contextualized risk evaluation. The columns represent, respectively: the targeted 5G network function (such as AMF, SMF, or UPF); the attack type and classtype [28], which provide basic classifications derived from the Snort rule headers and taxonomy; a brief textual description summarizing the nature of the attack; and the priority level assigned by Snort, where a value of 1 denotes very high priority and 4 indicates low priority. Additionally, the MITRE ATT&CK [29] Technique column maps each alert to known adversarial tactics from the widely used MITRE ATT&CK framework, while the detected issue column specifies the particular system component or data object affected by the alert. The mitigation column lists recommended countermeasures or policies designed to address the threat. Finally, the risk score column presents the risk label computed by our proposed model, reflecting a combined assessment of the threat’s severity and impact.

To keep the main body of the paper concise while still capturing the diversity of detected threats, Table A1 presents a carefully selected subset of Snort3 alerts. This subset was extracted from a larger dataset and chosen to provide a balanced representation across key dimensions: it includes alerts covering all Snort priority levels (1–4), spans all three core 5G network functions (UPF, SMF, AMF), and maps to a variety of MITRE ATT&CK techniques (e.g., reconnaissance, privilege escalation, remote execution, and denial-of-service). Furthermore, it features a mix of resulting risk classifications, from low to critical, illustrating how the proposed model handles a spectrum of threat scenarios.

To better illustrate the structure and semantics of Table A1, consider the following two examples. In the first case, a “Successful User Privilege Gain” alert is detected in the SMF component, associated with the Snort “successful-user” classtype and MITRE ATT&CK technique “Valid Accounts”. This alert indicates unauthorized access to an account, which could compromise session management functions in the control plane. The assigned priority level is 1, corresponding to the highest urgency. Given the high severity of this attack technique, the critical role of SMF, and the potential for stealthy credential misuse, the model computes a high risk score of 18, which places this incident in the “Critical” risk tier. The proposed mitigation, “Privileged Account Management”, reflects best practices for restricting access and ensuring the integrity of sensitive functions.

In contrast, consider an alert classified as “Generic ICMP Event”, detected in the UPF component and tagged with the MITRE technique “Ingress Tool Transfer”. This type of alert typically reflects the use of common network utilities (e.g., ping) for reconnaissance or transfer of payloads. It carries a priority level of 3 (low urgency) and maps to a low-severity technique. Since the UPF represents the user plane, it is assigned a relatively low impact weight, and the visibility of ICMP traffic makes the alert easily detectable. The resulting risk score is 3, placing the issue in the “Low” risk category. The recommended mitigation is general “Network Intrusion Prevention”, emphasizing early-stage containment and monitoring.

The risk score in the final column is calculated using a multiplicative formula that combines four distinct weighted factors as follows:

$$\text{Risk Score} = W_P \times W_S \times W_I \times W_D$$

Each weight captures a specific dimension of risk: (i) priority weight, (ii) severity weight, (iii) impact weight, and (iv) detectability weight. The Priority weight ($W_P$) is derived by inverting the Snort priority scale to reflect greater urgency with higher weight (see Table 2):

Table 2.

Priority weight.

The Severity weight ($W_S$) is assigned based on the MITRE ATT&CK technique impact and attacker goals (see Table 3):

Table 3.

Severity weight.

The Impact weight ($W_I$) reflects the criticality of the targeted 5G network function (see Table 4):

Table 4.

Impact weight.

The Detectability weight ($W_D$) captures the ease or difficulty of detecting the threat (see Table 5):

Table 5.

Detectability weight.

After computing the raw risk score by multiplying these weights, it is mapped to qualitative risk labels as follows (see Table 6):

Table 6.

Risk score.

This method allows the model to contextually prioritize security alerts by combining technical severity, asset criticality, and attack characteristics into a comprehensive risk score.

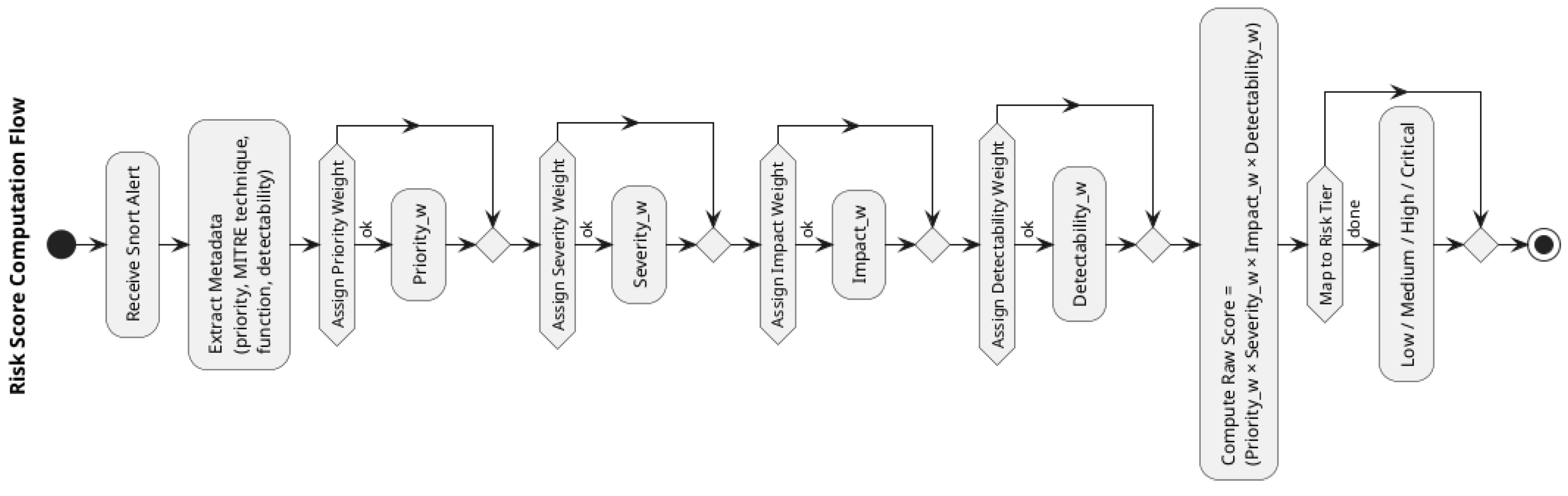

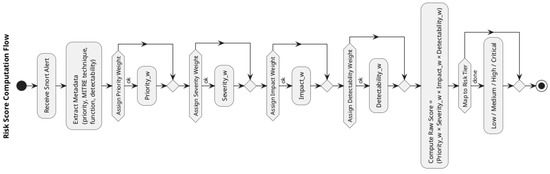

Figure 4 breaks down the computation steps of the proposed risk model. Once a Snort alert arrives, the system extracts key metadata, including priority, MITRE classification, targeted network function, and detectability. Each element is mapped to its corresponding weight based on predefined tables. The raw risk score is then computed as the product of the four weights. Finally, the numerical value is mapped to a qualitative label (Low, Medium, High, or Critical), which determines how the system prioritizes the security incident.

Figure 4.

Risk score computation flow diagram.
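The computation flow in Figure 4 can be expressed as a short Python sketch. The product of four weights follows the description above; everything else is an assumption made for illustration. In particular, `EXAMPLE_THRESHOLDS` is a placeholder for the score ranges of Table 6, chosen only so that the two worked examples in the text (a score of 3 labelled Low and a score of 18 labelled Critical) come out as stated, and the function and parameter names are hypothetical.

```python
from math import prod

# Placeholder label thresholds as (inclusive upper bound, label) pairs.
# These are NOT the paper's Table 6 ranges; they are assumed for illustration.
EXAMPLE_THRESHOLDS = [(4, "Low"), (8, "Medium"), (12, "High")]

def raw_risk_score(w_priority, w_severity, w_impact, w_detectability):
    """Raw risk score: the product of the four weights."""
    return prod((w_priority, w_severity, w_impact, w_detectability))

def risk_label(score, thresholds=EXAMPLE_THRESHOLDS, top_label="Critical"):
    """Return the first label whose upper bound is not exceeded by the score."""
    for upper, label in thresholds:
        if score <= upper:
            return label
    return top_label  # anything above the last bound falls in the top tier
```

Keeping the thresholds as data rather than hard-coded branches means the real ranges can be substituted without touching the scoring logic.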

To illustrate the calculation of the risk score, consider the following entry from Table A1 as an example:

SMF, TCP, suspicious-filename-detect, A suspicious filename was detected, 2, User Execution: Malicious File, File, Execution Prevention, Critical (18)

This alert targets the SMF (Session Management Function), is classified with a Snort priority of 2, maps to the MITRE ATT&CK technique “User Execution: Malicious File” (a high-severity technique), and is characterized as moderately stealthy regarding detectability. Each of these four attributes is mapped to its weight using Tables 2–5, and multiplying the four weights together yields the raw score of 18 shown in the entry.

According to the risk label mapping (see Table 7), a raw score of 18 corresponds to the Critical tier. This example demonstrates how the model contextualizes Snort alerts and maps them into actionable risk tiers by combining both technical and operational factors.

Table 7.

Critical tier.

More specifically, to evaluate the impact of detected intrusions in a contextualized manner, our proposed model incorporates a risk assessment mechanism that correlates real-time alerts from the IDS with contextual asset importance and threat severity. The resulting risk level reflects both the technical severity of the alert and the functional criticality of the affected asset within the 5G architecture.

We define five distinct risk levels to capture the progression from minor issues to high-impact security breaches. Very Low Risk (Level 1) represents minor events with negligible impact on system integrity, typically resulting from benign or misconfigured traffic. Low Risk (Level 2) corresponds to alerts indicating limited exploitation potential or affecting non-critical components with minimal disruption. Medium Risk (Level 3) includes events that may disrupt localized functions or expose vulnerabilities requiring attention, yet do not compromise core services. High Risk (Level 4) denotes alerts suggesting successful exploitation or persistent attacks on essential functions, potentially impacting operational continuity. Finally, Very High Risk (Level 5) refers to critical threats with severe consequences, such as denial-of-service attacks on vital services, unauthorized access to sensitive data, or cascading effects across multiple network slices.

These risk levels are computed dynamically by combining IDS-generated severity scores with contextual asset weights, derived from domain-specific importance metrics and the 5G system topology. Aggregating these factors allows for the prioritization of mitigation actions and effective allocation of security resources, supporting a resilient and adaptive incident response strategy.

In our model, impact assessment evaluates the potential consequences of a security event on the system’s operational state, considering both technical and functional importance of the targeted components. Specifically, the assessment focuses on the criticality of assets within the 5G architecture, for instance, whether a compromised element supports core control functions, handles sensitive user data, or facilitates inter-slice communication. This contextual approach enables estimation of each incident’s disruption potential, accounting not only for immediate service degradation, but also for longer-term effects such as loss of availability, integrity violations, or cascading disruptions across dependent services. By correlating these impact metrics with real-time IDS alert data, the model provides a nuanced understanding of how different threats influence system resilience, informing risk prioritization and response strategies.

While Table A1 summarizes the mapping between alert severity, asset criticality, and corresponding risk levels for selected cases, this description clarifies the rationale behind each level and highlights the model’s contribution to real-time risk awareness in 5G networks.

The proposed model integrates both qualitative and quantitative measures to enable comprehensive risk and impact evaluation. These include, among others, the IDS alert frequency and severity, the classification of attack types, and the criticality of affected assets. Although these metrics form the core of our current implementation, they are not intended to be exhaustive or final.

Rather, this framework represents a preliminary, extensible approach designed to demonstrate the feasibility of correlating real-time IDS outputs with contextual asset information in 5G environments. The architecture is modular, allowing future integration of additional standardized metrics, such as those from NIST [30], ISO/IEC [31], or ENISA [32], as well as customized indicators tailored to specific deployment scenarios.

This approach balances practical implementation constraints with the need for meaningful evaluation, ensuring that risk and impact assessment remains both actionable and adaptable in real-time threat environments.

A notable feature of our model is its ability to continuously update risk assessments as new alerts and contextual information become available. This ensures adaptability to evolving threats, reduces false positives, and enhances situational awareness. By combining real-time IDS alerts with broader risk evaluation metrics, our model provides a structured, automated method for prioritizing security incidents, improving both efficiency and threat mitigation in complex 5G networks.

Our proposed architecture supports context-aware cybersecurity assessment within 5G infrastructures, emphasizing real-time intrusion detection, risk evaluation, and responsive mitigation. It is designed as a modular, extensible framework that ingests live alerts from a deployed IDS, such as Snort3, and correlates them with asset-specific contextual data, including functional criticality and network topology. Central to the system is a Risk and Impact Assessment Engine, which calculates contextualized risk scores by combining IDS alert severity with asset criticality. This evaluation is continuously updated as new alerts arrive and asset states evolve. The output feeds directly into a Decision-Making and Response Module, triggering automated mitigation actions according to predefined escalation policies and threat levels.

The architecture also incorporates a Continuous Monitoring Loop, ensuring persistent observation of both mitigation effectiveness and overall network posture. This loop enables adaptive refinement of risk scores, supports identification of emerging threats or cascading effects, and informs operators through an alert prioritization interface when human-in-the-loop decisions are needed. This integrated approach promotes resilient 5G operations via a feedback-driven, automated security mechanism that adapts to both cyber threats and system-level dynamics. The design also allows for future expansion with: (i) additional detection engines, (ii) analytical modules, or (iii) policy refinement tools, all while maintaining scalability and adaptability across heterogeneous deployments.

In summary, our integrated framework represents a significant advancement in 5G security by bridging real-time intrusion detection with adaptive risk assessment. Snort’s integration within the SAND5G platform enables continuous monitoring, while our risk model transforms IDS alerts into structured risk evaluations. The inclusion of a dedicated risk evaluation table and comprehensive schematic figure highlights the framework’s practicality and effectiveness. This contribution lays a strong foundation for enhancing cybersecurity in next-generation networks by supporting faster, more informed security responses. Specifically, our approach improves incident response times by integrating real-time IDS alerts with our risk assessment model, enabling automated threat prioritization. Consequently, critical threats can be mitigated much more rapidly than with conventional manual processes. Having established the design and functionality of our proposed framework, the next step involves evaluating its effectiveness in a controlled testbed environment.

4. Results Analysis

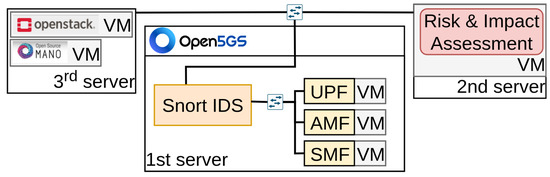

To assess the effectiveness of our proposed security framework, we deployed a dedicated testbed within our platform, simulating realistic 5G Core operations. The testbed comprises three Virtual Machines (VMs), each assigned a specific 5G Core function and subjected to a corresponding type of attack. These VMs are hosted on a server equipped with an Intel Core i7 processor (4.6 GHz, 16 threads), 16 GB RAM, and 15.6 GB swap memory. The host runs Linux (Ubuntu 22.04) to ensure system stability. Each VM is allocated 6 GB of RAM and 3 virtual Central Processing Units (vCPUs) to maintain optimal computational efficiency. The virtualization layer is managed by OpenStack, which handles VM instantiation and resource orchestration. The 5G Core functions are deployed using free5GC, a widely adopted open-source implementation. Table 8 lists the attack types simulated within each VM. Although this setup currently operates within a controlled virtualized environment, it was intentionally designed to validate the feasibility and responsiveness of the IDS risk assessment integration, providing a baseline proof-of-concept that can be extended to real-world 5G deployments with more complex topologies and operational variability in future work.

Table 8.

Mapping of 5G Core Components to Simulated Cyberattack Protocols.

Each VM runs an independent Snort IDS instance in Network Intrusion Detection System (NIDS) mode to inspect live network traffic. Snort is configured with customized rule sets for both signature- and anomaly-based threat detection. Upon identifying an anomaly, Snort generates an alert in Unified2 format, which is transmitted via a lightweight message queue to the Risk and Impact Assessment Module, deployed as a separate virtualized function.

Snort3 produces alerts corresponding to detected intrusions, which are logged and structured for further analysis. These logs capture timestamps, detection events, and rule-specific metadata, enabling the computation of detection latency by comparing attack and alert timestamps. System resource utilization is also monitored during detection events to evaluate performance impact. Using OpenStack (version 2025.1 Epoxy) for virtualization provides a scalable, modular testbed closely resembling real-world 5G deployments. The VM-based setup simulates 5G network functions, ensuring that Snort3’s monitoring operates in an environment representative of cloud-based 5G infrastructures.
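As an illustration of the latency computation described above, the following sketch subtracts the attack timestamp from the alert timestamp. It assumes both timestamps have already been normalized to ISO 8601 strings taken from synchronized clocks; the actual log format and the function name `detection_latency_s` are assumptions, not details taken from the testbed.

```python
from datetime import datetime

def detection_latency_s(attack_ts: str, alert_ts: str) -> float:
    """Detection latency in seconds between attack launch and Snort alert.

    Both arguments are ISO 8601 strings (an assumed, pre-normalized format).
    """
    attack = datetime.fromisoformat(attack_ts)
    alert = datetime.fromisoformat(alert_ts)
    latency = (alert - attack).total_seconds()
    if latency < 0:
        # A negative value usually points to unsynchronized clocks.
        raise ValueError("alert precedes attack; check clock synchronization")
    return latency
```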

The risk assessment module follows a client-server architecture, where Snort acts as a client sending alerts to the module (server) for classification. Upon receiving an alert, the module extracts metadata such as attack type, source/destination IP, timestamp, and severity. Each alert is assigned a risk score based on predefined security metrics, determining whether immediate mitigation is necessary or further monitoring is sufficient. These metrics include alert severity, frequency of similar alerts within a given timeframe, criticality of the targeted network function, and historical patterns of the specific attack type. Together, these parameters enable context-aware threat prioritization. High-risk alerts trigger automated mitigation actions, such as traffic filtering, IP blacklisting, or rate-limiting, while lower-priority alerts are logged for continuous monitoring to detect coordinated attacks.
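One of the metrics listed above, the frequency of similar alerts within a given timeframe, can be tracked with a sliding window. The sketch below is one possible approach under stated assumptions: the class name `AlertFrequency`, the 60 s default window, and the choice of key (for example, a pair of network function and classtype) are all hypothetical, and timestamps are assumed to arrive in non-decreasing order.

```python
from collections import defaultdict, deque

class AlertFrequency:
    """Counts alerts sharing a key that fall within the last `window_s` seconds."""

    def __init__(self, window_s: float = 60.0):
        self.window_s = window_s
        self._seen = defaultdict(deque)  # key -> timestamps, oldest first

    def record(self, key, ts: float) -> int:
        """Register an alert at time `ts` and return the in-window count for `key`."""
        q = self._seen[key]
        q.append(ts)
        # Evict timestamps that have fallen out of the window.
        while q and ts - q[0] > self.window_s:
            q.popleft()
        return len(q)
```

A rising count for the same key could then be used to escalate otherwise low-priority alerts, which is one way to surface the coordinated attacks mentioned above.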

For structured communication between Snort and the risk module, a RESTful Application Programming Interface (API) was implemented, allowing real-time data exchange. Alerts are formatted in JSON (following the ECMA-404 standard) and transmitted via HTTP POST requests (compliant with HTTP/1.1) for efficient processing. The decision-making engine within the risk module cross-references incoming alerts with historical data, dynamically refining risk classifications. This approach ensures adaptability to emerging threats while minimizing false positives.
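The alert exchange described above can be illustrated with the Python standard library. This sketch only constructs the HTTP POST request carrying a JSON-encoded alert; the endpoint URL, the JSON field names, and the helper name `build_alert_request` are hypothetical, since the API schema is not specified here.

```python
import json
import urllib.request

def build_alert_request(alert: dict, url: str) -> urllib.request.Request:
    """Build (but do not send) an HTTP POST request with a JSON alert body."""
    body = json.dumps(alert).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )

# Hypothetical alert payload and endpoint, for illustration only.
example_alert = {
    "network_function": "SMF",
    "classtype": "suspicious-filename-detect",
    "priority": 2,
    "src_ip": "192.0.2.10",
    "dst_ip": "198.51.100.20",
    "timestamp": "2025-01-01T12:00:00.150000",
}
request = build_alert_request(example_alert, "http://risk-module.example/alerts")
```

The request could then be sent with `urllib.request.urlopen(request)`; separating construction from transmission keeps the payload format testable without a running server.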

The overall testbed architecture is illustrated in Figure 5, showing the three VMs executing 5G Core functions, Snort IDS instances monitoring network traffic, and the data flow between Snort and the Risk and Impact Assessment Module. The figure also highlights the feedback loop connecting the risk module with the security management dashboard, supporting continuous monitoring and automated security responses.

Figure 5.

Testbed schematic: Snort IDS instances on each VM.

Anomaly detection follows a structured workflow. Snort continuously inspects network packets in each VM, identifying suspicious patterns based on configured rules. Upon detecting an attack, Snort generates an alert containing metadata such as attack vector, timestamp, and IP addresses. Alerts are forwarded to the risk module via the REST API for classification. Critical events trigger automated mitigation, while lower-risk alerts are logged and monitored to enable correlation with future network activity.

The testbed is designed to scale, allowing additional VMs and expanded attack scenarios. Future enhancements will include refinement of Snort’s detection rules, integration of machine learning models for adaptive anomaly detection, and optimization of risk thresholds to reduce false positives. While the current evaluation focuses on IP, TCP, and UDP-based attacks, these do not fully capture 5G-specific challenges. Future evaluations will incorporate attacks such as signaling storms, slice misconfigurations, and service-based architecture exploits. Addressing these 5G-specific threats will provide a more realistic assessment of Snort’s capabilities in protecting next-generation networks.

Our evaluation shows a significant reduction in response times for high-priority threats. Automating threat prioritization through real-time IDS alerts and the risk assessment model enables faster mitigation, demonstrating improved incident resolution. Although comprehensive metrics will be explored in future work, initial results indicate notable gains in response speed compared to traditional manual methods.

With the testbed fully deployed and integrated, we conducted controlled experiments to evaluate Snort’s detection capabilities across multiple attack types, assess the accuracy of the risk assessment module, and measure system responsiveness under real-time attack conditions. The following section presents the findings, detailing the framework’s effectiveness in detecting, classifying, and mitigating security threats within a 5G environment.

To evaluate the performance of our Snort-based security framework within our platform, we conducted two main tests: (i) a stability test, designed to measure detection time consistency across repeated executions, and (ii) a scalability test, aimed at assessing how the system handles increasing alert volumes. Both tests considered three attack types: IP-based, UDP-based, and TCP-based.

Our assessment of Snort3’s intrusion detection capabilities provides insights into both its strengths and potential limitations. Detection times varied across protocols, reflecting the way Snort3 processes different attack types. On average, TCP-based attacks were detected faster than UDP and IP-based threats. This aligns with expectations because TCP’s connection-oriented behavior facilitates more reliable rule-based signature matching. In contrast, UDP attacks exhibited higher detection latency, likely due to the protocol’s stateless nature, which makes tracking attack patterns more challenging for Snort.

Detected alerts were categorized into risk levels using our risk and impact assessment model. As shown in Table A1, various attack types—including suspicious traffic, UDP flood attempts, and malicious TCP payloads—were mapped to specific priority levels. This ensures that high-risk alerts trigger immediate mitigation, while lower-priority incidents are continuously monitored for potential escalation. This structured mapping reinforces the correspondence between detection outputs and our risk-based prioritization framework.

A key feature of our system is real-time risk updating. As new alerts are generated by Snort, the risk assessment module continuously re-evaluates and prioritizes them, allowing network operators to respond dynamically to unfolding security events. This capability provides real-time insights into evolving threats, enhancing the overall resilience of the 5G infrastructure.

The risk levels shown in Table A1 correlate closely with the detection performance observed during the scalability tests. For instance, attacks that exhibit longer detection times, such as UDP flood attempts, are assigned higher risk levels to ensure immediate mitigation.

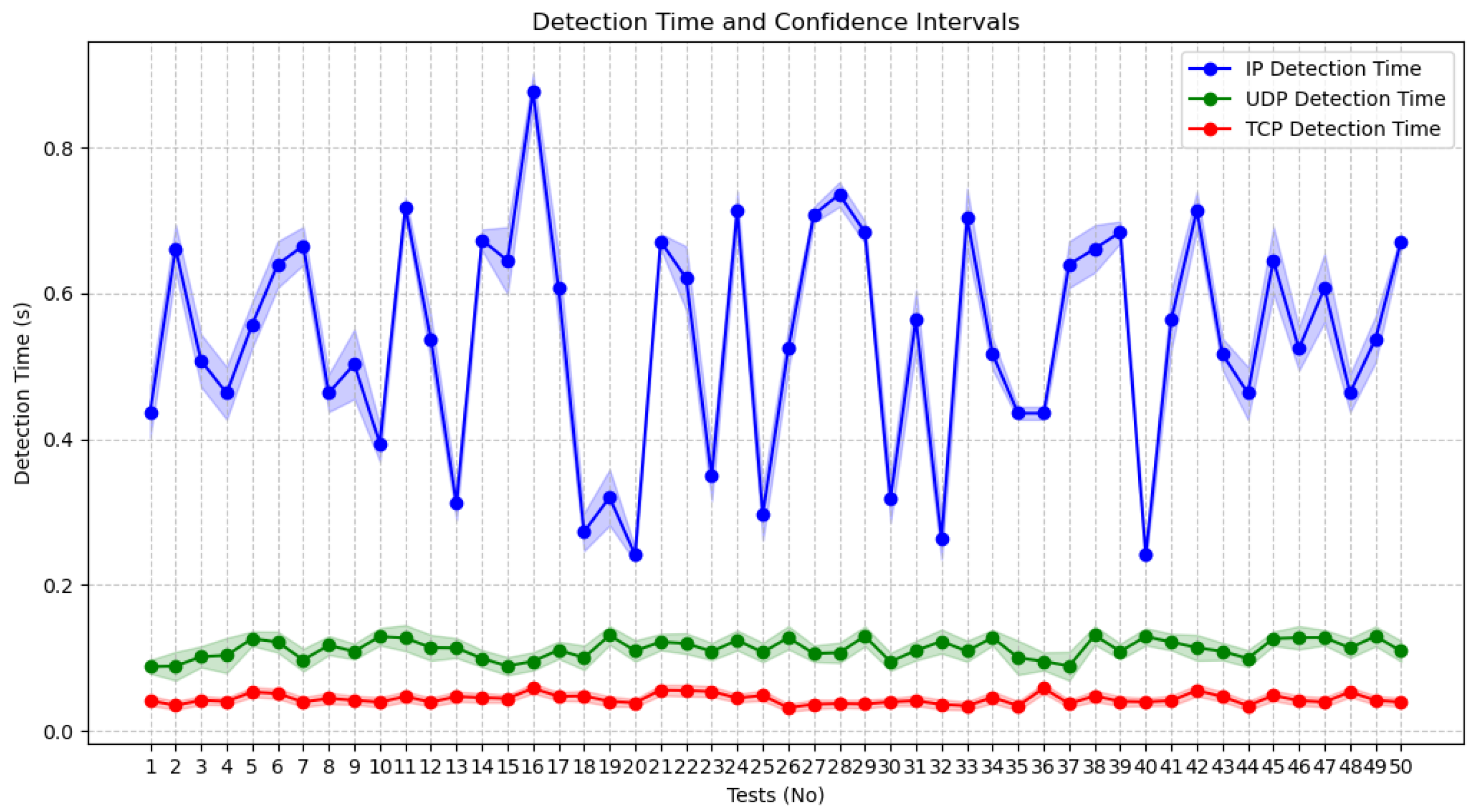

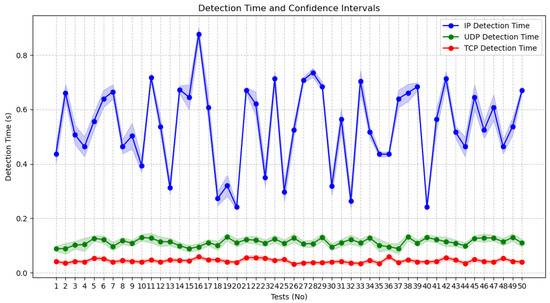

The stability evaluation consisted of 50 repeated detection experiments, each lasting one minute. This aimed to verify whether Snort’s detection times remained consistent across multiple runs. Figure 6 shows that TCP and UDP detection times remained consistently low, below 0.2 s, with minimal fluctuations. These results indicate reliable threat detection and classification for these protocols.

Figure 6.

Detection times measured over 50 repeated runs for evaluating stability.

IP-based detection, however, displayed greater variability, ranging from 0.2 to 0.9 s. This is primarily due to the methodology used to measure detection times. For IP-based attacks, timestamps were derived from the Internet Control Message Protocol (ICMP) data fields, directly reflecting detection delays. For TCP and UDP, detection times were estimated from Wireshark analyses of .pcap files, using the time delta between captured frames, since these protocols lack dedicated timestamp fields. Despite the observed fluctuations, IP detection remained within acceptable bounds, demonstrating overall system stability across attack types.
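The consistency of detection times across repeated runs can be summarized with a few descriptive statistics. The helper below is a minimal sketch: the function name `stability_summary` and the choice of statistics (mean, population standard deviation, and min-max spread) are assumptions about how such a stability check might be quantified, not the analysis procedure used for Figure 6.

```python
from statistics import mean, pstdev

def stability_summary(times_s):
    """Summarize repeated detection times (in seconds) for one protocol."""
    if not times_s:
        raise ValueError("no measurements")
    lo, hi = min(times_s), max(times_s)
    return {
        "runs": len(times_s),
        "mean": mean(times_s),
        "stdev": pstdev(times_s),   # population standard deviation
        "min": lo,
        "max": hi,
        "spread": hi - lo,          # range between slowest and fastest run
    }
```

Applied per protocol, a small `stdev` and `spread` would correspond to the flat TCP and UDP curves, while the IP measurements would show a larger spread.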

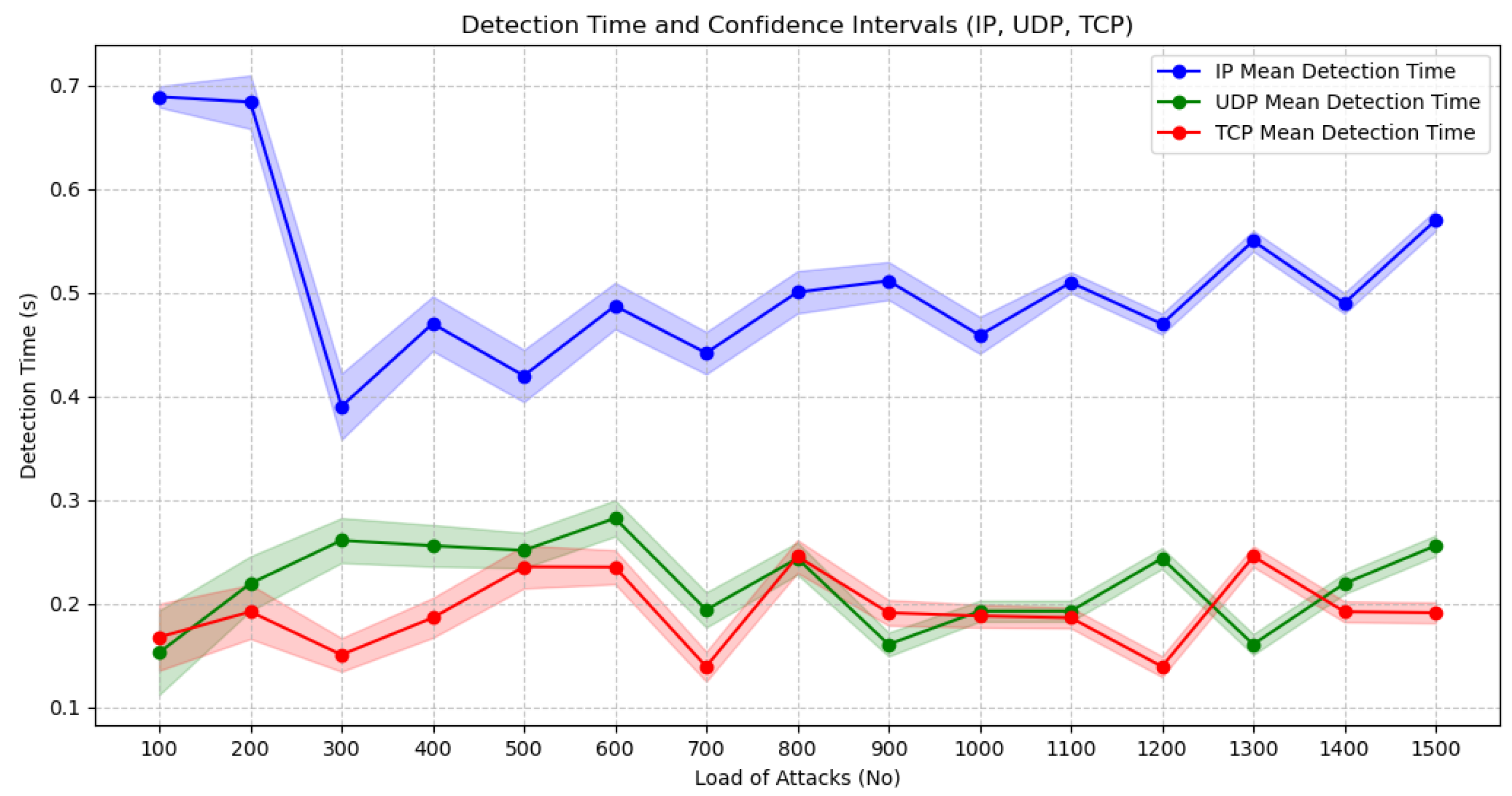

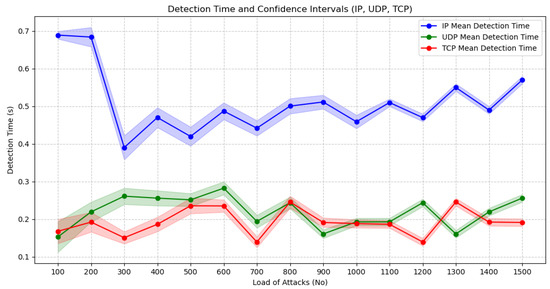

To assess scalability, we measured the system’s performance under increasing alert loads. Alerts were generated incrementally, starting at 100 and increasing by 100 per iteration up to 1500 alerts. Figure 7 shows that TCP and UDP detection times remained below 0.3 s, even as alert volume grew, confirming that the system scales effectively and maintains consistent performance.

Figure 7.

Detection times observed as the number of alerts increases from 100 to 1500 for evaluating scalability.

Results indicate that Snort3 performs well under normal load conditions but experiences slight performance degradation at higher attack intensities. As the number of attacks per second increased, detection delays grew modestly, with CPU utilization peaking at 85% in some scenarios. This suggests that while Snort3 can manage real-time detection under typical conditions, high-traffic 5G environments may require optimized rule sets or parallelized deployments. The evaluation emphasizes response times and computational overhead as primary performance metrics, while future work will extend this to include false positive/negative rates, mitigation latency, and impact on service continuity across network slices.

For IP-based detection, response times ranged from 0.3 to 0.7 s but did not increase noticeably with higher alert volumes, indicating that the framework can efficiently handle large alert loads without degrading detection performance, making it suitable for real-time 5G security monitoring.
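The scalability procedure can be sketched as follows. `alert_schedule` reproduces the load steps stated above (100 to 1500 alerts in increments of 100), while `latency_slope` is an assumed way of quantifying whether detection time grows with load, using an ordinary least-squares slope; both function names are hypothetical and this trend check is not taken from the paper.

```python
def alert_schedule(start=100, step=100, stop=1500):
    """Alert volumes for each scalability iteration, inclusive of `stop`."""
    return list(range(start, stop + 1, step))

def latency_slope(loads, latencies):
    """Least-squares slope of detection latency versus alert load (s per alert)."""
    n = len(loads)
    if n != len(latencies) or n < 2:
        raise ValueError("need two or more paired measurements")
    mean_x = sum(loads) / n
    mean_y = sum(latencies) / n
    sxx = sum((x - mean_x) ** 2 for x in loads)
    if sxx == 0:
        raise ValueError("loads must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(loads, latencies))
    return sxy / sxx
```

A slope close to zero over the schedule would indicate that detection time does not grow noticeably with alert volume.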

The analysis highlights two main observations: (i) stability is maintained across repeated tests, with minimal deviations in TCP and UDP detection times, and (ii) scalability is achieved, as detection times remain consistent even as alert volume grows. These results confirm that the framework is robust and efficient, capable of sustaining real-time intrusion detection in dynamic 5G environments. While our evaluation currently focuses on detection times, future work will examine false positives, missed detections, and system overhead under more realistic, large-scale conditions to fully assess performance in real-world 5G scenarios.

The stability and scalability results align closely with the risk assessment outcomes in Table A1. Detection times for different attack types directly influence the prioritization of alerts, ensuring that high-risk incidents, such as UDP floods or malicious TCP payloads, trigger immediate mitigation, while lower-priority events are continuously monitored.

Risk Prioritization Based on the Impact of Performance Metrics

To better understand operational performance, we examined how real-time detection latency and system resource utilization influence alert prioritization and the corresponding mapping to risk levels shown in Table A1.

Detection latency affects how quickly an alert can be processed and assigned a risk level. Although latency is not currently a direct input to our risk scoring formula, shorter detection times accelerate the response cycle for high-priority incidents.

By cross-referencing selected Snort3 alerts from Table A1 with their average detection latencies observed during stability and scalability tests, we derived a representative mapping of detection times to risk scores (Table 9). The highest-risk alerts generally corresponded to lower-latency detections, such as malicious file access or privilege escalation events, enabling prompt mitigation. However, not all low-latency events are high-risk (e.g., routine TCP connection detection), nor are all high-latency events low-risk. For example, UDP flood attacks—though slightly slower to detect—still receive high-risk scores due to their potential impact.

Table 9.

Representative Alerts: Risk Score vs. Detection Latency.

These observations suggest that, while detection latency does not directly define risk, there is a meaningful correlation between faster detection and higher-priority incidents in our testbed conditions. Additionally, attack characteristics, such as stateless UDP or ICMP protocols, inherently contribute to longer detection times, a factor that must be considered when designing mitigation and response policies.

5. Conclusions

This paper presents a comprehensive risk and impact assessment framework for 5G Core security, leveraging Snort intrusion detection integrated within the SAND5G platform. Our framework effectively detects, classifies, and contextualizes security alerts in real time, transforming raw IDS data into structured risk scores that enable prioritized incident response.

Evaluation results confirm that the risk classification model accurately maps detected alerts to meaningful risk tiers, reflecting both technical severity and asset criticality. The observed stability and scalability of detection latency across different protocols and alert volumes underscore the framework’s capability to maintain reliable and timely risk assessment even under heavy load conditions.

By correlating detection latency with assigned risk scores, we demonstrate that higher-risk alerts are typically processed faster, facilitating prompt mitigation. Furthermore, the contextualization of alerts based on 5G network functions ensures that security incidents impacting critical assets receive appropriate attention. This integration of real-time IDS outputs with a risk model provides a modular and extensible foundation for resilient 5G security monitoring and incident management.

Despite the demonstrated effectiveness of our framework, several limitations should be acknowledged. The current evaluation is conducted within a controlled virtualized environment, which, while representative, does not fully capture the complexity and variability of real-world 5G deployments. The implemented testbed simulates only three core network functions and a limited set of attack types, leaving other 5G-specific threats, such as signaling storms or slice misconfigurations, untested. Additionally, the risk model currently relies on predefined weights and thresholds, which may not fully account for dynamic or evolving attack patterns, and detection latency is not directly incorporated into the risk scoring formula. Performance assessments primarily focus on detection times and system stability, without considering metrics such as false positives, false negatives, or the impact of mitigation actions on service continuity across multiple slices. These factors collectively highlight areas for further refinement to ensure broader applicability and robustness in operational 5G networks.

Future work will extend this framework to address more complex and realistic 5G deployments. Planned enhancements include the integration of additional IDS solutions and AI-based analytics for adaptive threat classification, refinement of detection rules to reduce false positives, and incorporation of standardized or customized risk metrics. The framework’s modular architecture allows expansion with additional detection engines, analytical modules, or policy refinement tools while maintaining scalability across heterogeneous environments. Furthermore, future evaluations will consider 5G-specific threats, such as signaling storms, slice misconfigurations, and service-based architecture exploits, alongside performance metrics including false positives/negatives, mitigation latency, and overall impact on service continuity. These extensions aim to ensure resilient, automated, and context-aware security monitoring for next-generation networks.

Author Contributions

Conceptualization, D.V., K.L., S.D. and P.K.; methodology, D.V., K.L., S.D. and P.K.; software, D.V.; validation, S.D.; formal analysis, D.V.; investigation, D.V. and P.K.; resources, P.K.; data curation, D.V.; writing (original draft preparation), D.V.; writing (review and editing), D.V., K.L., S.D. and P.K.; visualization, D.V.; supervision, S.D. and P.K.; project administration, P.K. All authors have read and agreed to the published version of the manuscript.

Funding

This article describes work undertaken in the context of the SAND5G project, “Security Assessments for Networks anD services in 5G”, which has received funding from the European Union’s Digital Europe programme under grant agreement No 101127979 and is supported by the European Cybersecurity Competence Centre. Views and opinions expressed are, however, those of the author(s) only and do not necessarily reflect those of the European Union. Neither the European Union nor the granting authority can be held responsible for them.

Data Availability Statement

The data supporting the findings of this study are available from the corresponding author upon reasonable request.

Conflicts of Interest

The authors declare no conflicts of interest.

Appendix A

Table A1.

Detected Issues mapped to Risk Levels.

| Function | Attack Type | Classtype | Description | Priority | MITRE ATT&CK Technique | Detected Issue | Mitigation | Risk Score |
|---|---|---|---|---|---|---|---|---|
| UPF | IP | not-suspicious | Not Suspicious Traffic | 3 | Not applicable | Normal IP-based service | Baseline Monitoring | Low (1) |
| UPF | IP | unknown | Unknown Traffic | 3 | Active Scanning | Network Traffic | Pre-compromise | Low (2) |
| SMF | TCP | bad-unknown | Potentially Bad Traffic | 2 | Exploitation for Client Execution | Network Traffic | Exploit Protection | Critical (18) |
| AMF | UDP | attempted-recon | Attempted Information Leak | 2 | Active Scanning | Network Traffic | Pre-compromise | Medium (12) |
| AMF | UDP | successful-recon-limited | Information Leak | 2 | Gather Victim Network Info | Network Traffic | Pre-compromise | Medium (12) |
| AMF | UDP | successful-recon-largescale | Large Scale Information Leak | 2 | Gather Victim Identity Information | Network Traffic | Pre-compromise | Medium (12) |
| AMF | UDP | attempted-dos | Attempted Denial of Service | 2 | Endpoint Denial of Service | Network Traffic | Filter Network Traffic | Critical (27) |
| AMF | UDP | successful-dos | Denial of Service | 2 | Endpoint Denial of Service | Network Traffic | Filter Network Traffic | Critical (27) |
| SMF | TCP | attempted-user | Attempted User Privilege Gain | 1 | Valid Accounts | User Account | Privileged Account Management | Critical (18) |
| SMF | TCP | unsuccessful-user | Unsuccessful User Privilege Gain | 1 | Valid Accounts | User Account | Privileged Account Management | Critical (18) |
| SMF | TCP | successful-user | Successful User Privilege Gain | 1 | Valid Accounts | User Account | Privileged Account Management | Critical (18) |
| SMF | TCP | attempted-admin | Attempted Administrator Privilege Gain | 1 | Exploitation for Privilege Escalation | Process | Exploit Protection | Critical (27) |
| SMF | TCP | successful-admin | Successful Administrator Privilege Gain | 1 | Exploitation for Privilege Escalation | Process | Exploit Protection | Critical (27) |
| AMF | UDP | rpc-portmap-decode | Decode of an RPC Query | 2 | Remote Services | Network Traffic | Limit Access to Resource Over Network | Medium (12) |
| SMF | TCP | shellcode-detect | Executable code was detected | 1 | Exploitation for Client Execution | Process | Application Isolation and Sandboxing | Critical (27) |
| SMF | TCP | string-detect | A suspicious string was detected | 3 | Command and Scripting Interpreter | Command | Execution Prevention | Medium (9) |
| SMF | TCP | suspicious-filename-detect | A suspicious filename was detected | 2 | User Execution: Malicious File | File | Execution Prevention | Critical (18) |
| SMF | TCP | suspicious-login | An attempted login using a suspicious username was detected | 2 | Valid Accounts | Logon Session | Multi-factor Authentication | Critical (18) |
| SMF | TCP | system-call-detect | A system call was detected | 2 | Process Injection | Process | Behavior Prevention on Endpoint | Medium (12) |
| UPF | IP | tcp-connection | A TCP connection was detected | 4 | Application Layer Protocol: Web Protocols | Network Traffic | Network Intrusion Prevention | Low (0) |
| SMF | TCP | trojan-activity | A Network Trojan was detected | 1 | Ingress Tool Transfer | Network Traffic | Network Intrusion Prevention | Critical (27) |
| UPF | IP | unusual-client-port-connection | A client was using an unusual port | 2 | Application Layer Protocol: Web Protocols | Network Traffic | Network Intrusion Prevention | Low (6) |
| AMF | UDP | network-scan | Detection of a Network Scan | 3 | Network Service Discovery | Network Traffic | Network Segmentation | Low (4.5) |
| AMF | UDP | denial-of-service | Detection of a Denial of Service Attack | 2 | Endpoint Denial of Service | Network Traffic | Filter Network Traffic | Critical (27) |
| UPF | IP | non-standard-protocol | Detection of a non-standard protocol or event | 2 | Ingress Tool Transfer | Network Traffic | Network Intrusion Prevention | Medium (9) |
| SMF | TCP | protocol-command-decode | Generic Protocol Command Decode | 3 | Command and Scripting Interpreter | Command | Execution Prevention | Medium (9) |
| SMF | TCP | web-application-activity | Access to a potentially vulnerable web application | 2 | Exploit Public-Facing Application | Network Traffic | Update Software | Medium (12) |
| SMF | TCP | web-application-attack | Web Application Attack | 1 | Exploit Public-Facing Application | Network Traffic | Exploit Protection | Critical (18) |
| SMF | TCP | misc-activity | Misc Activity | 3 | Obfuscated Files or Information | Process | Behavior Prevention on Endpoint | Low (6) |
| SMF | TCP | misc-attack | Misc Attack | 2 | Exploitation for Client Execution | Application Log | Update Software | Critical (18) |
| UPF | IP | icmp-event | Generic ICMP event | 3 | Ingress Tool Transfer | Network Traffic | Network Intrusion Prevention | Low (3) |
| UPF | IP | inappropriate-content | Inappropriate Content was Detected | 1 | Exfiltration Over Web Service | Network Traffic | Restrict Web-Based Content | Critical (18) |
| UPF | IP | policy-violation | Potential Corporate Privacy Violation | 1 | Data Encrypted for Impact | Network Share | Data Backup | High (13.5) |
| AMF | UDP | default-login-attempt | Attempt to login by a default username and password | 2 | Valid Accounts | Logon Session | Password Policies | Critical (18) |
| SMF | TCP | sdf | Sensitive Data | 2 | Data from Information Repositories | Application Log | Encrypt Sensitive Information | Medium (12) |
| SMF | TCP | file-format | Known malicious file or file based exploit | 1 | User Execution: Malicious File | File | Execution Prevention | Critical (27) |
| SMF | TCP | malware-cnc | Known malware command and control traffic | 1 | Application Layer Protocol: Web Protocols | Network Traffic | Network Intrusion Prevention | Critical (36) |
| SMF | TCP | client-side-exploit | Known client side exploit attempt | 1 | Exploitation for Client Execution | File | Exploit Protection | Critical (36) |
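
The tier labels in Table A1 can be reproduced from the numeric scores with simple thresholds. The sketch below is illustrative only: the cut-offs in `risk_tier` are inferred from the rows of the table (Low up to 6, Medium up to 12, High below 18, Critical from 18) and may not match the framework's actual boundaries for scores that do not appear in the table. The names `Alert`, `risk_tier`, and `prioritize` are hypothetical and not part of the SAND5G platform.

```python
from typing import NamedTuple


class Alert(NamedTuple):
    classtype: str      # Snort classtype from Table A1
    function: str       # affected 5G core function
    risk_score: float   # numeric score from Table A1
    listed_tier: str    # tier label printed in Table A1


def risk_tier(score: float) -> str:
    """Map a numeric risk score to a tier (thresholds inferred from Table A1)."""
    if score < 0:
        raise ValueError("risk score must be non-negative")
    if score >= 18:
        return "Critical"
    if score > 12:
        return "High"
    if score > 6:
        return "Medium"
    return "Low"


def prioritize(alerts):
    """Order alerts from highest to lowest risk score for incident response."""
    return sorted(alerts, key=lambda a: a.risk_score, reverse=True)


# A subset of rows copied from Table A1.
SAMPLE_ALERTS = [
    Alert("tcp-connection", "UPF", 0, "Low"),
    Alert("unusual-client-port-connection", "UPF", 6, "Low"),
    Alert("string-detect", "SMF", 9, "Medium"),
    Alert("attempted-recon", "AMF", 12, "Medium"),
    Alert("policy-violation", "UPF", 13.5, "High"),
    Alert("attempted-dos", "AMF", 27, "Critical"),
    Alert("malware-cnc", "SMF", 36, "Critical"),
]

if __name__ == "__main__":
    for alert in prioritize(SAMPLE_ALERTS):
        tier = risk_tier(alert.risk_score)
        # Each inferred tier should agree with the label listed in the table.
        assert tier == alert.listed_tier
        print(f"{alert.function:>3}  {alert.classtype:<32} {alert.risk_score:>5}  {tier}")
```

Sorting by numeric score rather than by tier label keeps the ordering meaningful within the Critical tier, where scores in the table range from 18 to 36.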

References

1. ETSI. Security Architecture and Procedures for 5G System; 3GPP TS 33.501, Release 16; ETSI: Sophia Antipolis, France, 2020.
2. ETSI. Multi-Access Edge Computing (MEC); Threat Analysis; GR MEC 024 V2.1.1; ETSI: Sophia Antipolis, France, 2019.
3. ETSI. Network Functions Virtualisation (NFV) Release 3; Security; Security Management and Monitoring Specification; GR NFV-SEC 013; ETSI: Sophia Antipolis, France, 2017.
4. Varvarigou, D.; Lampropoulos, K.; Koufopavlou, O.; Denazis, S.; Kitsos, P. An Efficient Methodology for Real-Time Risk and Impact Assessment in 5G Networks. In Proceedings of the 2025 IEEE International Conference on Cyber Security and Resilience (CSR), Chania, Greece, 4–6 August 2025; pp. 240–247.
5. Sand5G. Security Assessments for Networks and Services in 5G. Available online: https://sand5g-project.eu/ (accessed on 17 December 2025).
6. Sood, K.; Nosouhi, M.R.; Nguyen, D.D.N.; Jiang, F.; Chowdhury, M.; Doss, R. Intrusion detection scheme with dimensionality reduction in next generation networks. IEEE Trans. Inf. Forensics Secur. 2023, 18, 965–979.
7. Malik, S.; Bera, S. Security-as-a-Function in 5G Network: Implementation and Performance Evaluation. In Proceedings of the 2024 International Conference on Signal Processing and Communications (SPCOM), Bangalore, India, 1–4 July 2024.
8. Dwedar, M.; Bayram, F.; Eberhard, J.; Jesser, A. Dynamic Anomaly Detection in 5G-Connected IoT Devices using Transfer Learning. In Proceedings of the 2024 IEEE 3rd International Conference on Computing and Machine Intelligence (ICMI), Mount Pleasant, MI, USA, 13–14 April 2024; pp. 1–6.
9. Akpan, V.A.; Njoku, E.C.; Obi, E.I. Slice-Specific Machine Learning Models for Intrusion Detection in 5G Telecommunication Networks. Int. J. 2025, 12, 93–118.
10. Molina, R.M.A.; Wehbe, N.; Alameddine, H.A.; Pourzandi, M.; Assi, C. Inter-Slice Defender: An Anomaly Detection Solution for Distributed Slice Mobility Attacks. In Proceedings of the 2024 IFIP Networking Conference (IFIP Networking), Thessaloniki, Greece, 3–6 June 2024; pp. 432–440.
11. Ruiz-Canela López, J. How can enterprise risk management help in evaluating the operational risks for a telecommunications company? J. Risk Financ. Manag. 2021, 14, 139.
12. Shoetan, P.O.; Amoo, O.O.; Okafor, E.S.; Olorunfemi, O.L. Synthesizing AI’s impact on cybersecurity in telecommunications: A conceptual framework. Comput. Sci. Res. J. 2024, 5, 594–605.
13. ISO/IEC 27001; Information Security, Cybersecurity and Privacy Protection-Information Security Management Systems-Requirements. International Organization for Standardization: Geneva, Switzerland, 2022.
14. Kitsios, F.; Chatzidimitriou, E.; Kamariotou, M. Developing a risk analysis strategy framework for impact assessment in information security management systems: A case study in IT consulting industry. Sustainability 2022, 14, 1269.
15. Babatunde, G.O.; Mustapha, S.D.; Ike, C.C.; Alabi, A.A. A holistic cyber risk assessment model to identify and mitigate threats in US and Canadian enterprises. Int. J. Multidiscip. Res. Growth Eval. 2025, 6, 773–787.
16. Goffer, M.A.; Uddin, M.S.; Kaur, J.; Hasan, S.N.; Barikdar, C.R.; Hassan, J.; Das, N.; Chakraborty, P.; Hasan, R. AI-Enhanced Cyber Threat Detection and Response Advancing National Security in Critical Infrastructure. J. Posthumanism 2025, 5, 1667–1689.
17. Bondan, L.; Wauter, T.; Volckaert, B.; De Turck, F.; Granville, L.Z. NFV anomaly detection: Case study through a security module. IEEE Commun. Mag. 2022, 60, 18–24.
18. Alshammari, N.; Shahzadi, S.; Alanazi, S.A.; Naseem, S.; Anwar, M.; Alruwaili, M.; Abid, M.R.; Alruwaili, O.; Alsayat, A. Security monitoring and management for the network services in the orchestration of SDN-NFV environment using machine learning techniques. Comput. Syst. Sci. Eng. 2024, 48, 363–394.
19. Madi, T.; Alameddine, H.A.; Pourzandi, M.; Boukhtouta, A. NFV security survey in 5G networks: A three-dimensional threat taxonomy. Comput. Netw. 2021, 197, 108288.
20. Kamran, S.A. Machine Learning and Java-Based Orchestration for Intelligent Anomaly Detection and Self-Healing in Virtual Networks. Int. J. Sci. Eng. Appl. 2025, 14, 49–57.
21. Dubba, S.; Killi, B.R. Security-Aware Cost Optimized Dynamic Service Function Chain Scheduling. J. Netw. Syst. Manag. 2025, 33, 4.
22. Hernández, C.; López, M.; García, J.; Vander Peterson, C. Optimizing collaborative intelligence systems for end-to-end cybersecurity monitoring in global supply chain networks. Int. J. Responsible Artif. Intell. 2024, 28–36.
23. Simanjuntak, T. Emerging Cybersecurity Threats in the Era of AI and IoT: A Risk Assessment Framework Using Machine Learning for Proactive Threat Mitigation. Int. J. Inf. Syst. Innov. Technol. 2024, 3, 15–22.