A Model of Gamification by Combining and Motivating E-Learners and Filtering Jobs for Candidates

Abstract

1. Introduction

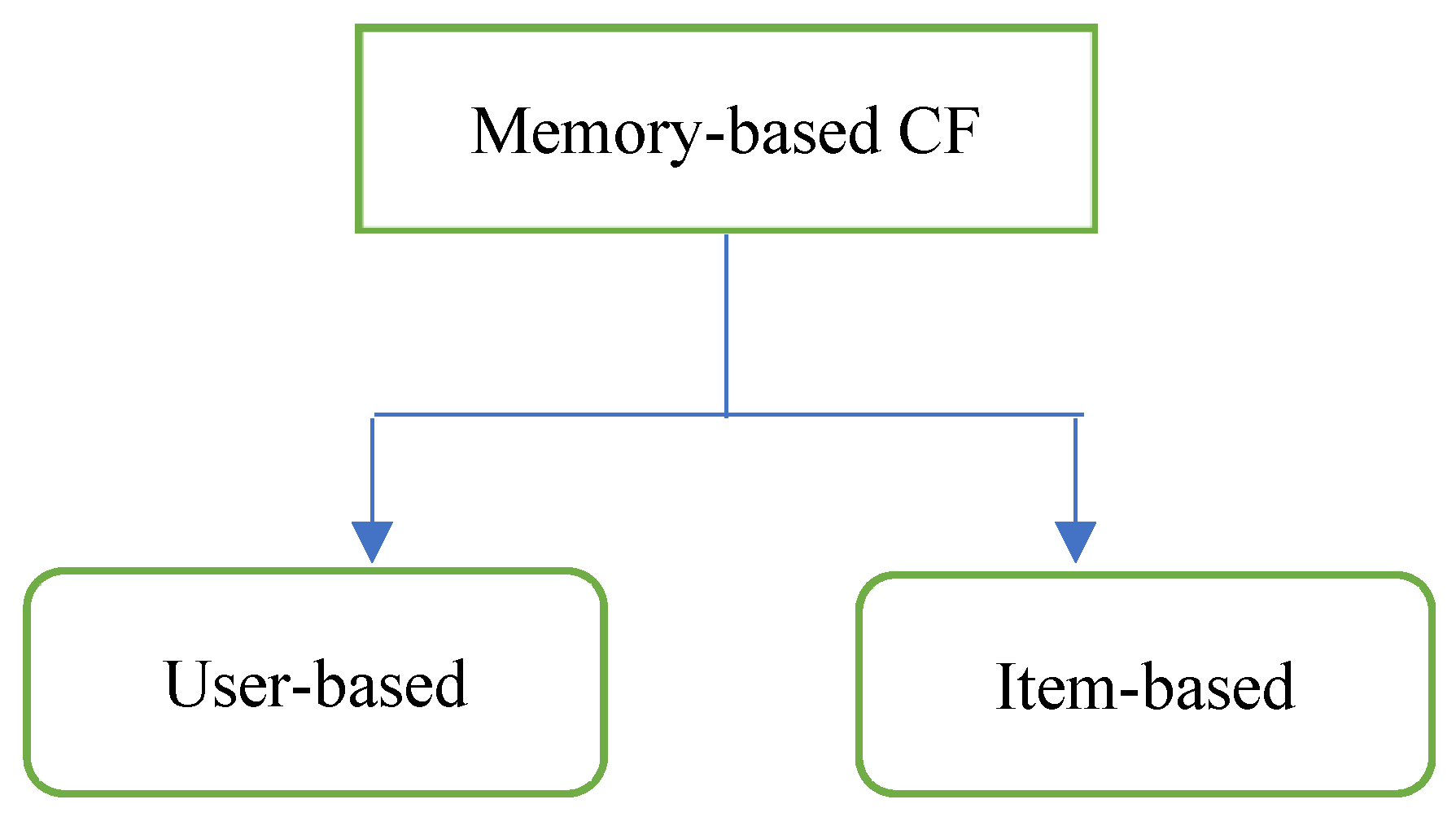

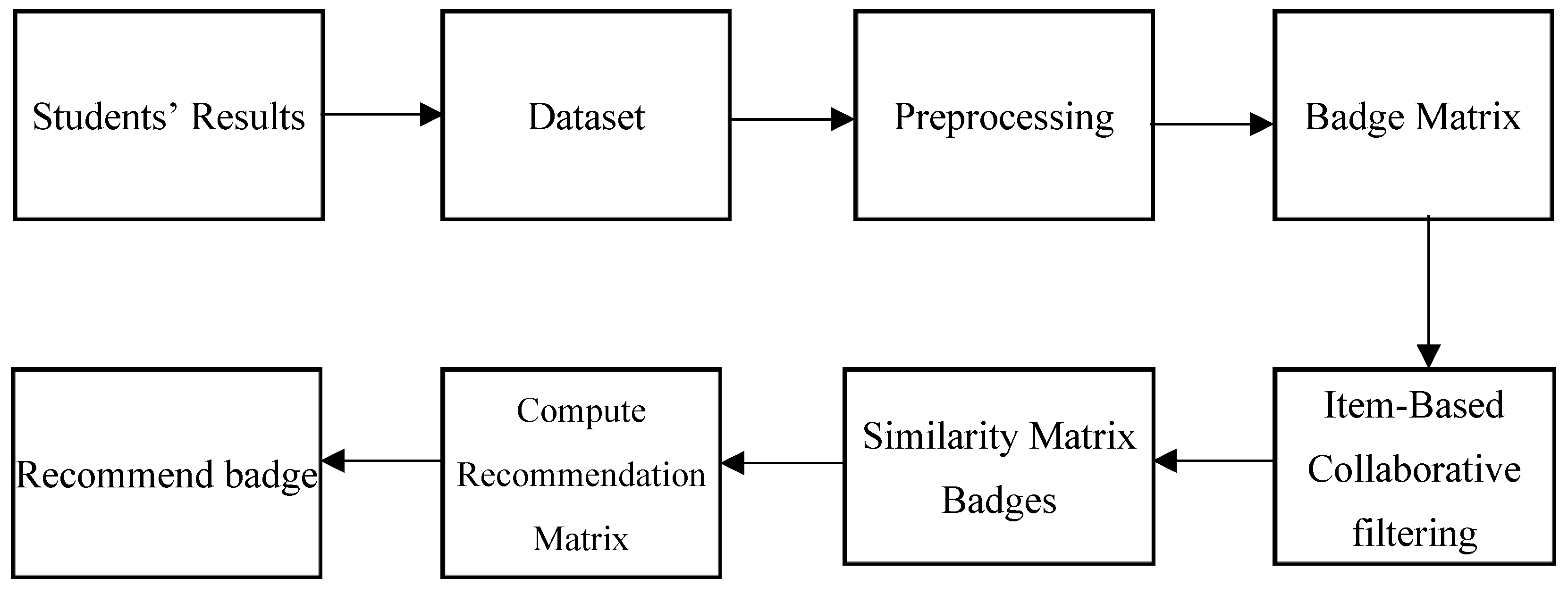

1.1. Item-Based Approach

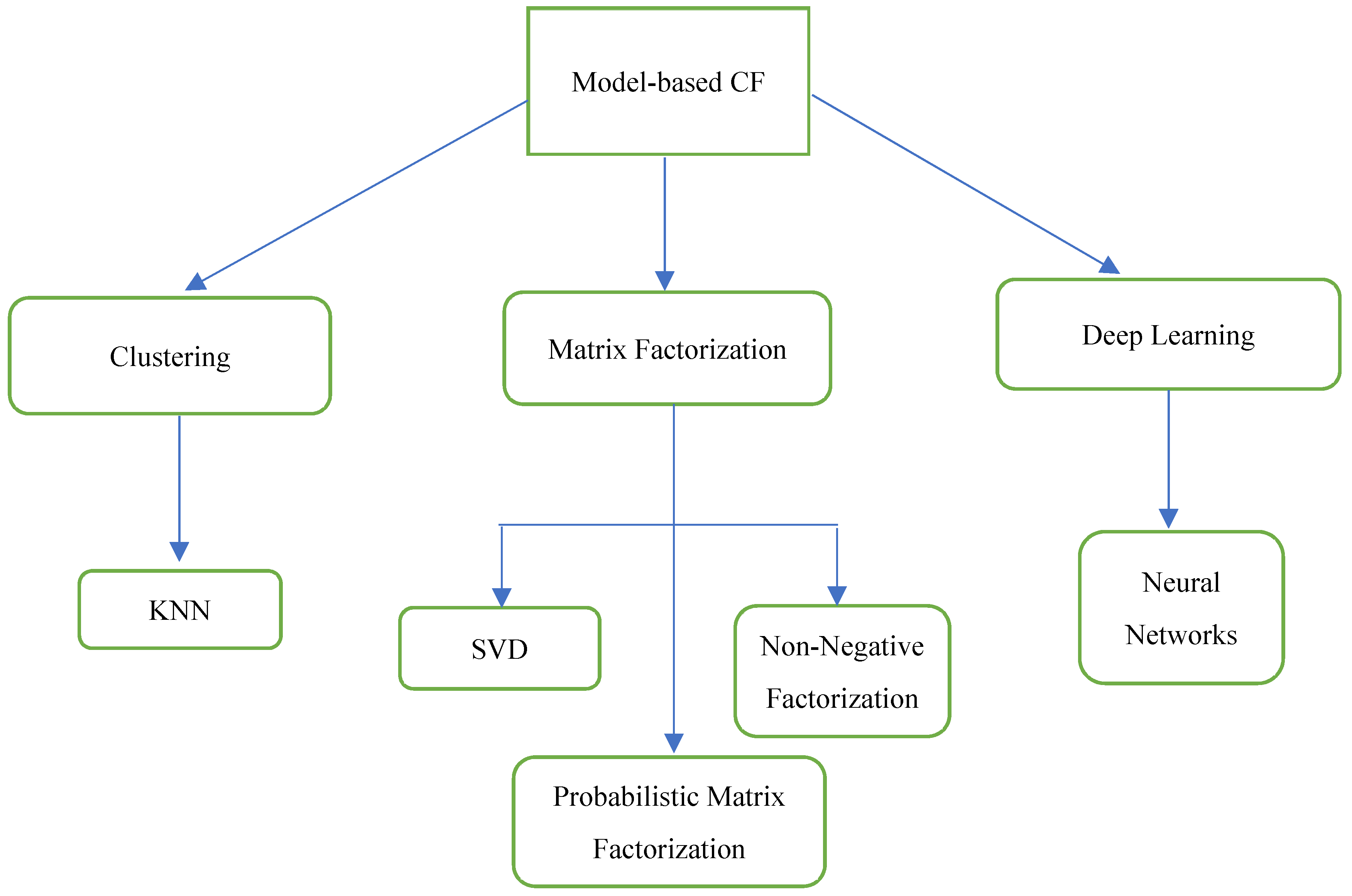

1.2. Model-Based Approach

2. Related Works

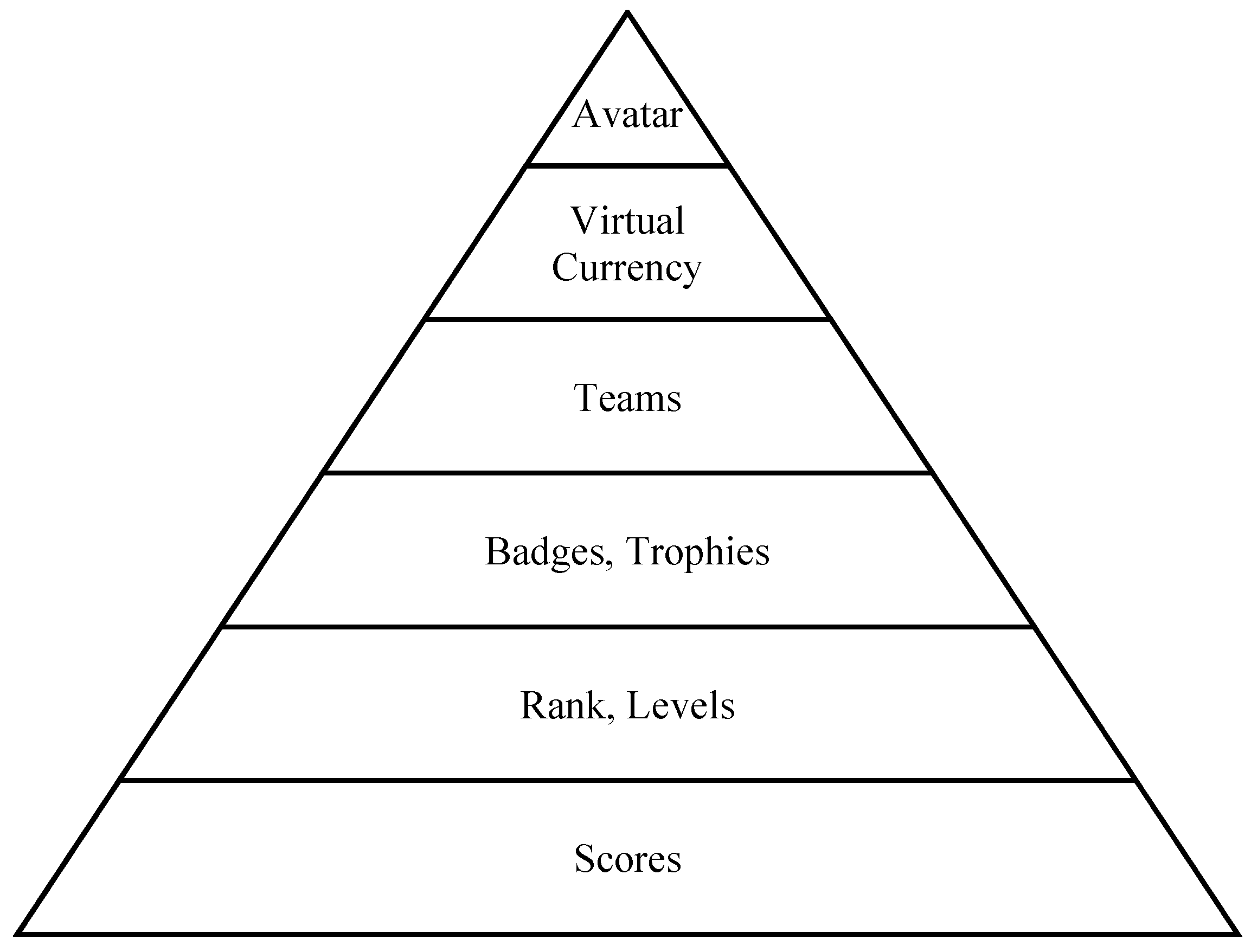

2.1. Gamification

2.2. Gamification in E-Learning

2.3. Collaborative Filtering in Recommender Systems

2.4. Problem Definition

3. Recommendation Model

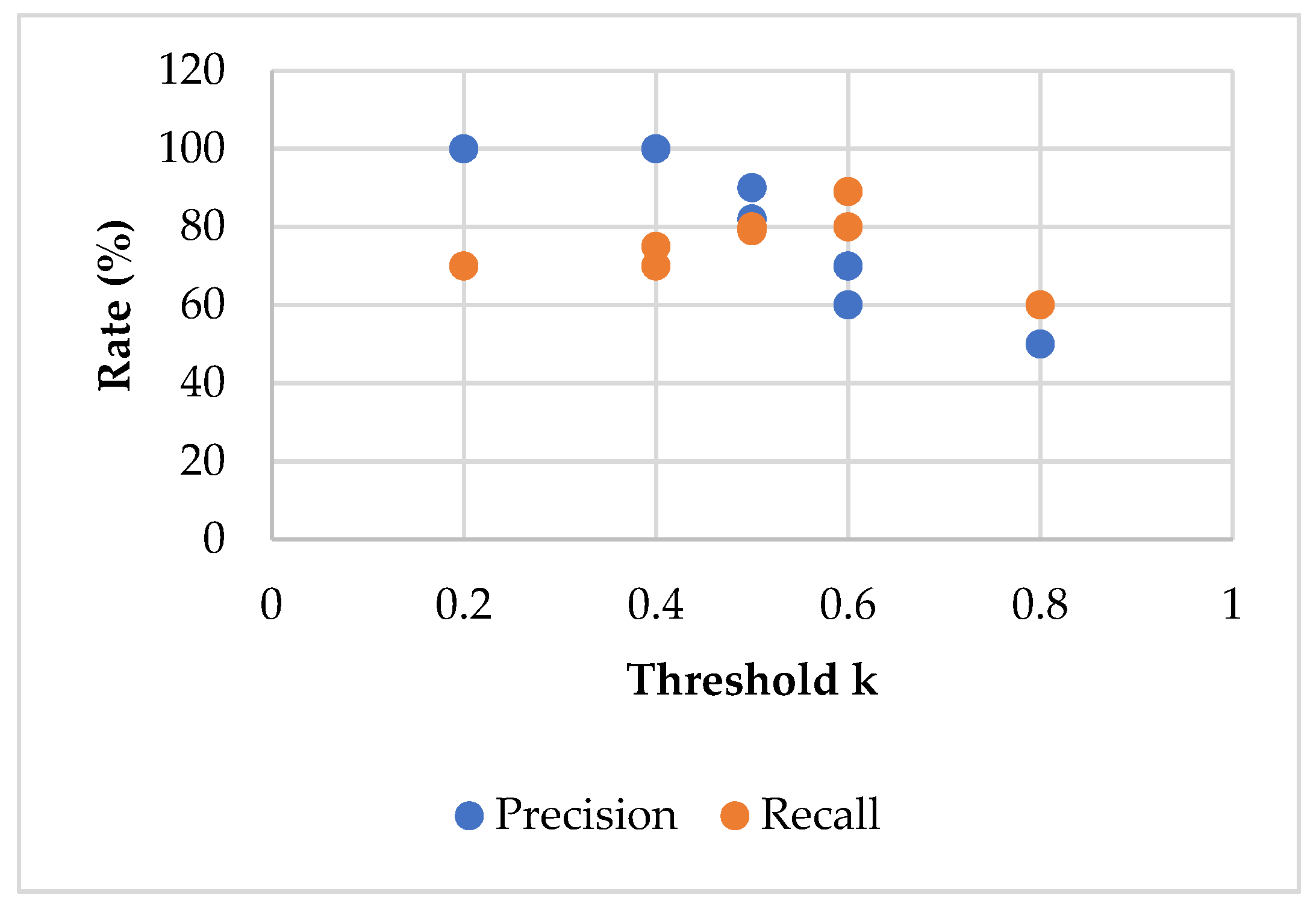

Experimental Evaluation

4. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Eliyas, S.; P, R. A Model of Gamification by Combining and Motivating E-Learners and Filtering Jobs for Candidates. Eng. Proc. 2023, 59, 7. https://doi.org/10.3390/engproc2023059007
