1. Introduction

With the rise of modern optimization techniques in combination with continuously increasing computational power, it is becoming more and more likely to find outstanding solutions to certain design tasks, even if such optima are hidden in the narrowest design-space gaps [

1,

2]. In the field of electrical machine design, usual optimization targets are performance measures, like efficiency, cost, or torque ripple [

3]. A common problem of highly optimized designs is that a small deviation of the design parameters from the ideal values may lead to a significant deterioration of the performance—the design is thus sensitive to parameter tolerances. To obtain designs which are not prone to a significant performance degradation in the presence of inevitable tolerances, some measure describing this resistance has to be incorporated into the (multi-objective) optimization process. A design which has a certain resistance against tolerances is usually called a robust design, and an optimization which takes into account the effect of tolerances is called a robust optimization. In this paper, a method is presented on how to evaluate the robustness of a design that has a large number of tolerance-affected parameters. The application of the method within the scope of an optimization will be dealt with in future work.

In the field of electrical machine design, intensive research is being carried out on the topic of robust design. A huge number of publications deal with the effect of tolerances, especially considering the cogging torque change caused by permanent magnet uncertainties, such as in [

4,

5,

6,

7]. The different influences of size, positioning, or magnetization strength and direction are often investigated by applying two or three discrete tolerance levels and searching for worst-case combinations. To reduce the inherent computational burden associated with finite element analyses, symmetry conditions with semi-analytical methods [

5] or surrogate models [

7,

8] are often utilized. Besides searching for worst-case configurations based on discrete tolerance levels, stochastic approaches, like the six sigma quality method [

9,

10,

11], are also investigated to predict the behavior of systems subject to tolerances. Using stochastic measures like the standard deviation

might be preferable compared to the worst-case method [

12]. For example, when designing an airplane propulsion, the worst-case measure might definitely be the proper method, since each single failure can have tremendous consequences; whereas for a standard industrial electric machine, this leads to an over-designed, and thus over-priced system which is not competitive.

An overview of different robustness criteria applied in the field of electrical machine design was recently presented in [

13], where a quantile measure based on the evaluation of cumulative distribution functions (CDFs) was also introduced for a problem with four tolerance-affected design parameters. The capability of CDFs for large-scale tolerance analysis of problems showing a huge number of parameters and the feasibility of predicting their confidence bounds is presented in this paper by focusing on electric machine applications. The chosen test problem will be presented in

Section 2, followed by an introduction to the cumulative distribution function and a theoretical method for confidence estimation in

Section 3. In

Section 4, the calculation of the sample data is described and the evolution of the CDF with increasing number of samples is illustrated. For a data-based estimation of the CDFs‘ confidence, the bootstrap method [

14,

15,

16] was utilized (

Section 5). Finally, we discuss benefits and limitations of the presented method and give a short outlook and conclusion.

2. Test Case Definition

To show the capabilities of using CDFs as a robustness measure for large-scale tolerance analysis, an outer rotor Vernier motor is used as a striking example. Such motors modulate the flux created by a low pole count winding system to an air gap field with major higher harmonic components. As a consequence, an appropriate high number of magnets has to be arranged on the outer rotor to interact with the corresponding air gap field. Such motors can be designed to have outstanding performance in low speed and high torque applications, but also suffer from a significantly nonlinear relationship between the dimensions and the magnetic flux distribution [

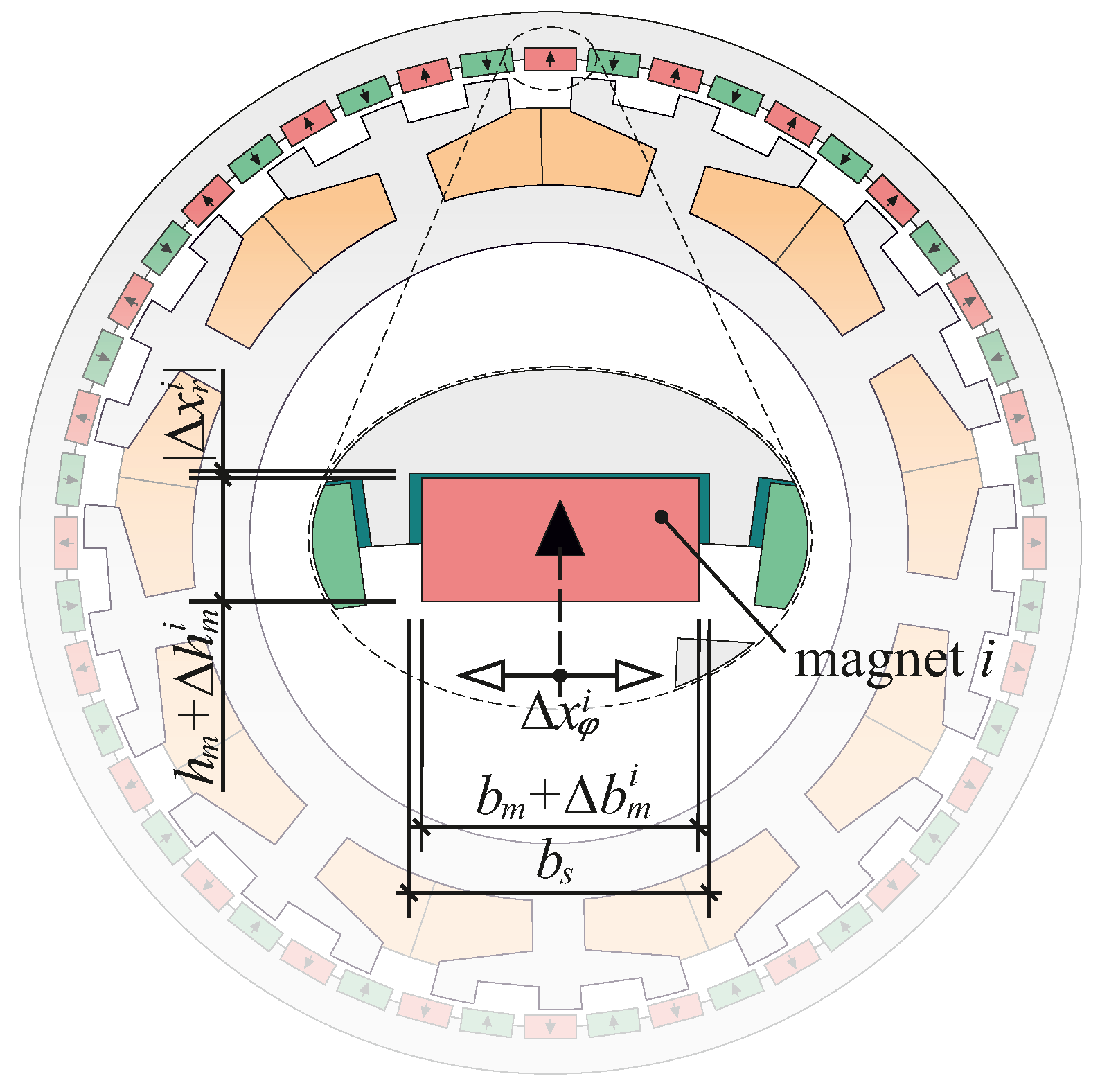

17]. Thus, reliable surrogate models with sufficient accuracy are hard to obtain. The Vernier motor used for this investigation is shown in

Figure 1, and some basic parameters are given in

Table 1.

It might be mentioned that this Vernier motor has not a claim to be optimal in any sense—that is, it was not optimized for any special purpose, but should only serve as an illustrative and demanding real-world test case. The goal of this investigation was to predict the impact of permanent magnet uncertainties on the cogging torque. The uncertainties concern the

The tolerance-affected parameters are also shown in

Figure 1. The width and height vary due to the manufacturing process of the permanent magnets, and their tolerances have maximum values of

and

, respectively. Radial and circumferential positions can vary due to the assembling process of the permanent magnets. If, for example, the magnets are glued, the adhesive gap thickness can vary, leading to a radial position which deviates from the expected value. For this investigation, a radial maximum displacement tolerance of

is assumed. Note that for the radial displacement, only a negative tolerance limit is given, what corresponds to a movement towards the center. The fourth parameter is the circumferential position of the magnets. They are buried into slots with a width of

, as illustrated in the highlighted area of

Figure 1. The maximum circumferential displacement of a certain magnet

i is a function of its actual tolerance-affected width, that is,

, where

.

In the test machine, the slots have a width of

. Thus, a magnet

i prone to the maximum tolerance level, that is,

, cannot move any more (

). A magnet with a minimum width of

can move up to

. All parameters subject to tolerances are assumed to have uniform distribution and are summarized in

Table 2.

It might be worth emphasizing that due to the stochastic nature of the tolerances, all 48 magnets are likely to have individual values for a given design. Therefore, their specific values will be modeled independently. Assuming a discretization of three steps for each of the four parameters, this leads to possible combinations for a single magnet. Taking into account that every magnet features its independent tolerance values, a total of different variations are possible. If the simulation of one design would need one microsecond, the evaluation of all variants would need more than years. Even if a proper analytical (surrogate) model would be available to calculate cogging torque, evaluating all combinations would never be an option. Hence, alternative approaches need to be developed.

3. Introduction to Cumulative Distribution Functions

From a set of

n tolerance-affected sample values

, that is,

n samples of a certain target evaluated under the influence of tolerances, the empirical cumulative distribution function (ECDF)

is defined as [

18]

In words,

is the weighted count of samples

for which

is below or equal to the threshold,

x. A set of exemplary random samples

, and the respective ECDF

are plotted in

Figure 2.

From the cumulative distribution function, it is easy to determine how many of the samples have a value below or equal to a certain limit, or what the parameter limit

is, where a certain percentage

p of the samples has a smaller or equal value. This threshold

is called the

p-quantile. In

Figure 2, the 90% quantile is shown, meaning that 90% of the samples have a value smaller or equal to

.

Given the

n samples, a continuous probability density function

can also be fitted, using kernel density estimation (KDE). KDE is a popular approach to estimate the probability density function of random datasets, and due to its non-parametric nature, it is also capable of fitting complicated distributions [

18,

19,

20]. In

Figure 2, this KDE estimate

is shown as a blue line.

From

, the CDF

can be determined as

The 90%-quantile in

Figure 2 is shown based on this continuous CDF. As an absolute measure for the robustness of a design, any quantile value can be used. Another indicator is the steepness of

—that is, the steeper the CDF is, the more robust the system is.

It is clear that the ECDF

, and consequently, also the fitted CDF

, can only be an estimate of the real CDF with

. Thus, the remaining but essential question is: How good is this estimate for a given number of

n? From the Dvoretzky–Kiefer–Wolfowitz inequality, a

-confidence band for

can be constructed as follows [

18]:

where

and

Equation (

3) implies that the probability

of the real CDF

lying in between some lower

and upper

boundary is greater than or equal to

, where the definitions of the lower and upper bound are given by (

4) and (

5). For example, if we want 95% confidence (

) of the estimation

shown in

Figure 2 with

samples, from (

5) an uncertainty of

, which is equivalent to

, can be calculated. Thus, the real CDF has a probability of 95% laying in the confidence slot of

. This slot, bounded by the light-grey dashed lines

and

, is also displayed in

Figure 2. The method based on (

3) to (

5) will be referred to as the DKW method throughout the paper.

Let us assume that a 95% confidence interval with a slot of

is desired. An approximation of the required sample size can be calculated by rearranging (

5), leading to

n = 18,445 samples for this example. Note that no information about the actual problem is necessary using the DKW method. However, from (

5), it can also be observed that the confidence slot and thus

converges with

.

If we are interested in the confidence range in parameter space x rather than in the probability space , the (E)CDF can be inverted to get . Since is basically the definition of the p-quantile, the inverse is also called a quantile function.

4. Creation of Sample Data and Evolution of the CDF

To create the test data for this investigation, a parametric finite element model was set up using the SyMSpace framework [

21]. For the finite element calculations, FEMM software was used [

22], to which SyMSpace features an interface. Since the circumferential positioning

of each magnet

i is dependent on its actual width, which is also subject to tolerances, for this parameter the uniform distribution is mapped to a normalized range of

, where

Considering (

6) and

Figure 1, if

, the affected magnet is moved in clockwise direction, such that it is touching the edge of the slot. For

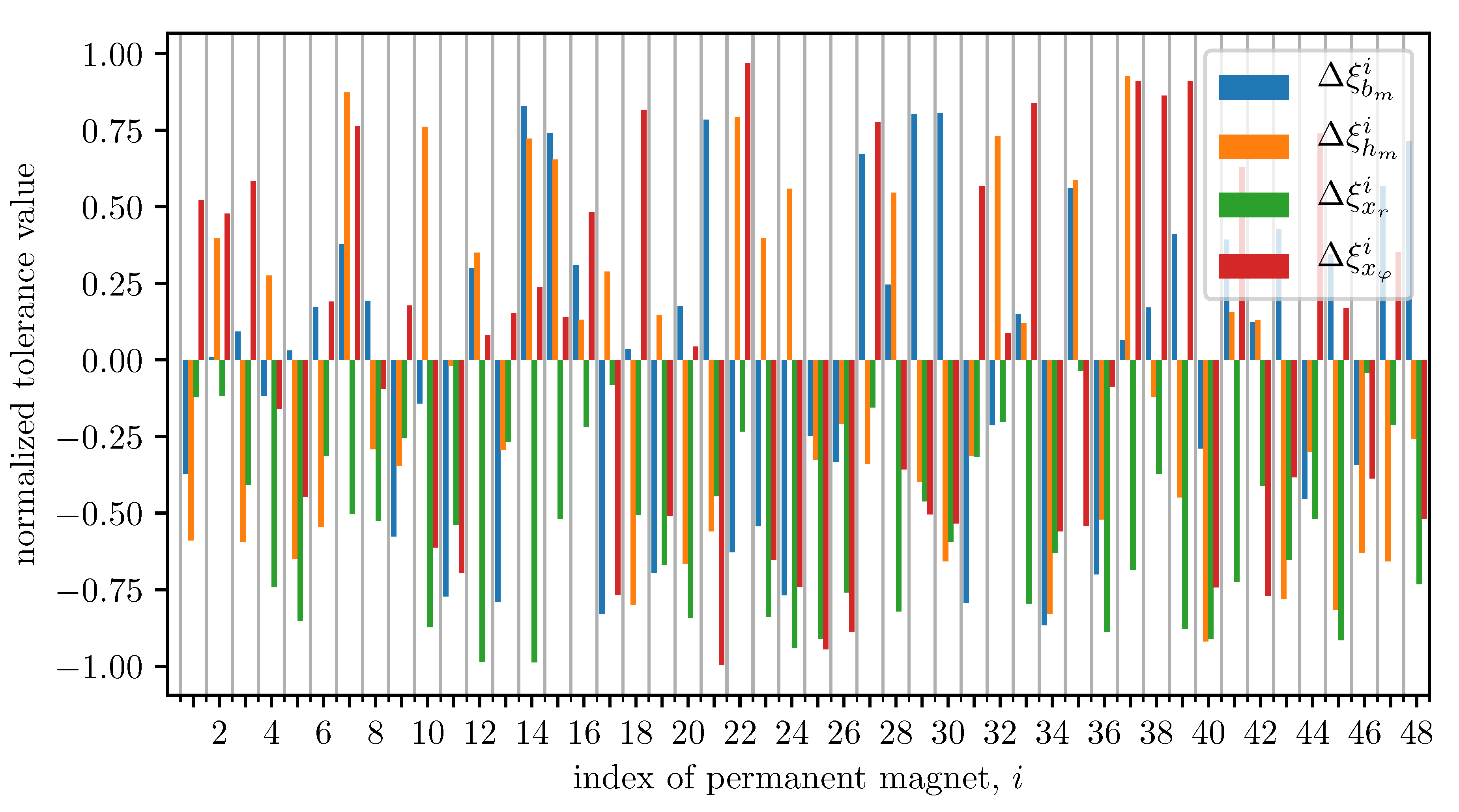

, the magnet is against the slot edge in counterclockwise direction. In

Figure 3 the normalized tolerance values of all magnets for an exemplary sample are visualized, where

To speed up calculations done by the finite element program, usually sectors with some periodic boundary conditions are defined and the rotor is rotated by one electrical period. Since these investigations consider tolerances leading to asymmetric designs, that is, every magnet is exposed to different tolerance values, the full cross-section has to be modeled in the finite element program and the rotor has to be rotated by the angular width of one main stator tooth ( in this case) to allow for analysis of all possible harmonics of the cogging torque. In our case, such a calculation takes approximately 15 min. From the obtained cogging torque as a function of the rotor angle , the peak-to-peak value is evaluated, which also serves as a target variable for the following investigations.

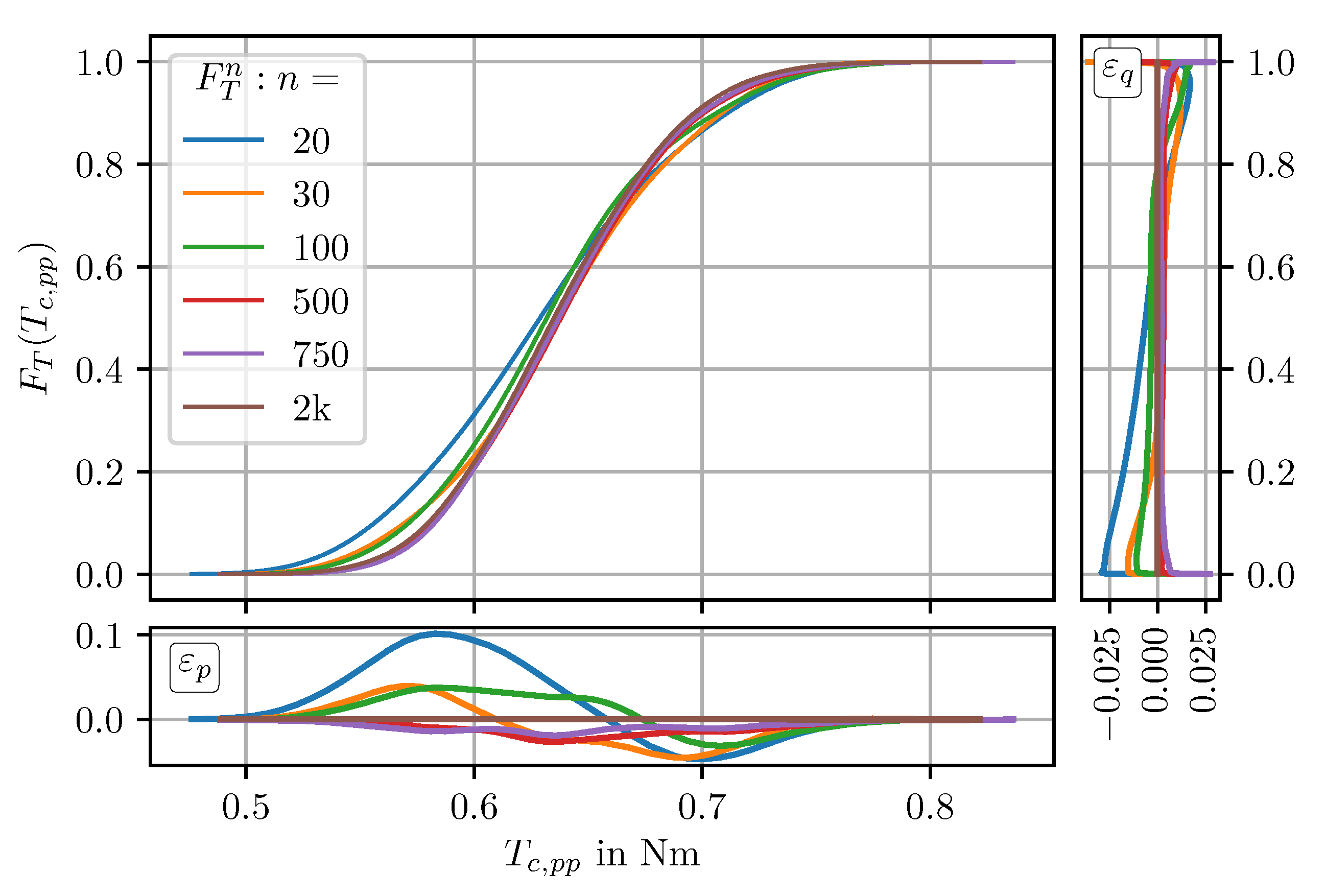

After evaluating

randomly defined motor cross-sections (a motor subject to a random set of tolerances will also be referred to as a sample throughout the paper), the CDF

is fitted from the

values, as displayed in

Figure 4. The right superscript denotes the number of

n samples upon which the CDF is based, that is,

.

The continuous CDF

, which is fitted by kernel density estimation using a Gauss kernel, as well as its deviation to the empiric CDF

with respect to probability

(lower axis), and its deviation to

with respect to the quantile value (cogging torque)

(right axis) are also shown in

Figure 4. From the appended axes, it can be observed that the deviation from the fitted to the empiric CDF is below 10% and

.

To have a single measure for the deviation between two (E)CDFs,

and

, the absolute area between them,

, can be evaluated:

Since the (E)CDF is a normalized quantity, has the unit of the parameter which the distribution describes ( in this case). , generally defined as the deviation between two arbitrary CDF functions, will serve as a parameter predicting the convergence of the approach presented in the following.

Now, from

Figure 4 and also from

Figure 2, it can be seen that the ECDF can be reasonably fitted by kernel density estimation, but it is not clear if a CDF, based on a low number of samples, reflects the statistical behavior to be expected when manufacturing this motor in a mass production process. Thus, the idea is to observe the evolution of the CDF when more and more samples are calculated. Starting from the CDF based on 20 samples, a selection of CDFs based on an increased number of samples is shown in

Figure 5.

In total, 2000 samples were evaluated. The CDF fitted from this full dataset will serve as a reference CDF

. In

Figure 5, and also in all the following investigations, only the KDE-fitted CDFs are considered.

Now, from

Figure 5, it can be observed that

converges very fast. The appended axes below and on the right side show the deviation of

to

. From this Figure, it seems that

already converged quite close to a “final” CDF. Thus, it looks like a statistical statement on the robustness of cogging torque against magnet tolerances can be derived within 500 simulations for a problem with an incredible number of possible combinations.

An explanation for this desirable behavior is the insensitivity of the KDE-fitted CDF to fluctuations of the underlying data. On the one hand, the CDF is based on a set of sample values, where each single sample contributes with a value of to the functional, and on the other hand, the fitting process has a smoothing effect.

However, thinking on the iterative procedure of creating new samples and updating

, the question of how to define convergence remains, since in

Figure 5 the convergence was identified by referring to a CDF based on much more samples (

) than actually available. We cannot rely on a comparison to this “best available” CDF, but have to compare to the CDFs from previous update steps and track the evolution of the CDFs.

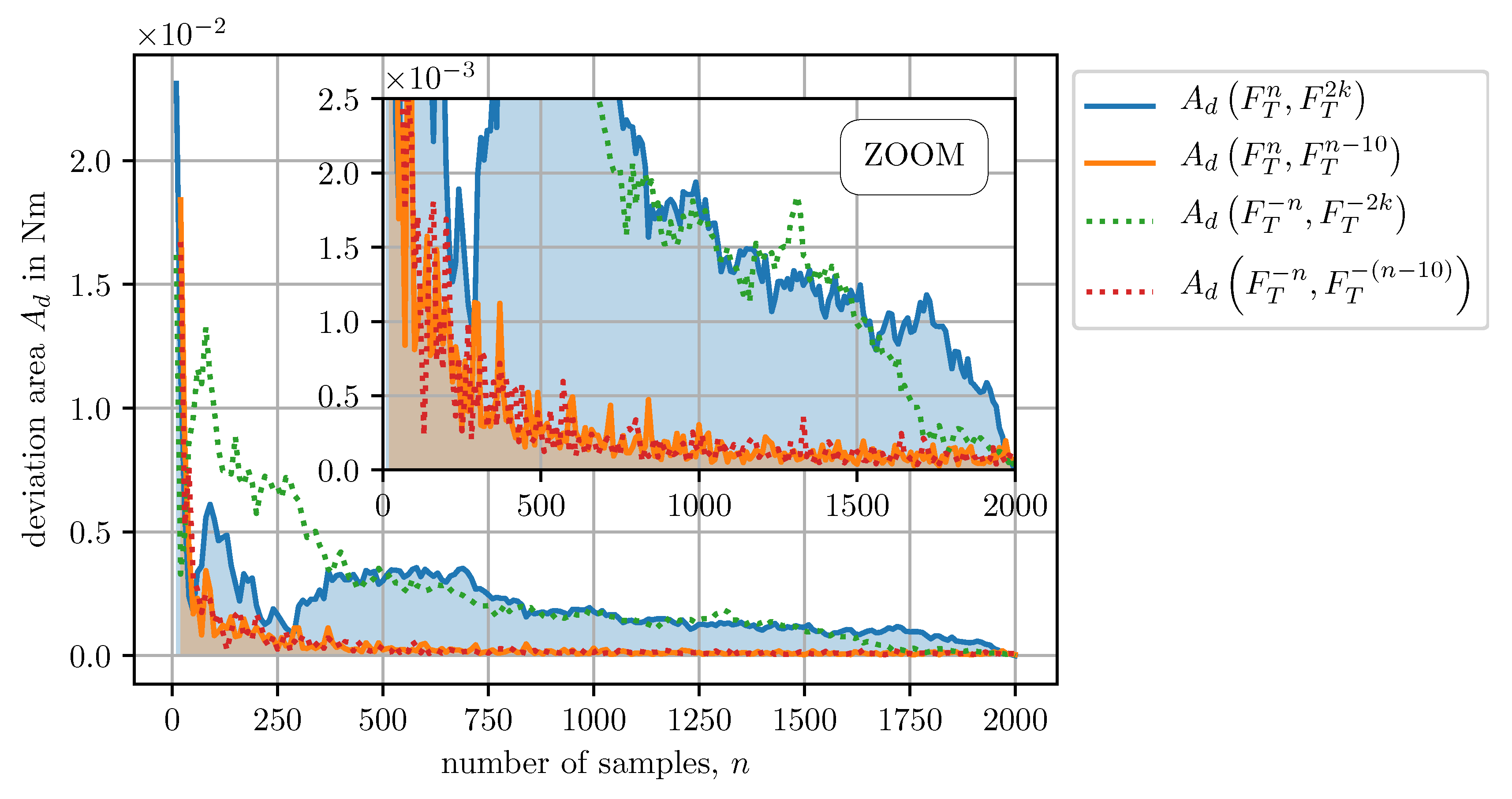

To evaluate this behavior, every 10 samples a CDF was calculated, that is,

, and the deviation area between successive CDFs,

, was evaluated. This course, and also the deviation with respect to

,

, is shown in

Figure 6.

Looking at the deviation to

, which gives an absolute measure of the convergence, it turns out that a significant drop appears within the first 100 samples, then there is some fluctuation and from about 500 samples,

is stable and shows a steady convergence towards zero. The relative deviation,

, converges even faster to very low values, as can be seen from the lower axis of

Figure 6. Thus, from

, one could interpret a faster convergence of

, but since it is a relative measure, it could have an integrating character. This means that even if

is low,

could slowly but steadily move in one direction, and no information is available about the “real” limit,

.

Another point which was not discussed so far is also shown in

Figure 6—the effect of random sampling. Since the samples are defined randomly, or more precisely, the tolerance values of each parameter for every magnet are selected randomly considering its distribution, it is obvious that quite different CDFs can emerge, especially at the beginning. To verify this, the evolution of

and

was evaluated with the available samples in reversed order—that is, the sample number 2000 was used as the first one, sample number 1999 was used as the second one, and so on. The reversed order was designated with a negative sign of the sample index

n in the corresponding deviation function, as drawn in

Figure 6 with dotted lines. When comparing the deviations based on the standard ordered samples with the ones based on the reversed ordered samples, it is worth emphasizing that, up to sample index 1000, they relied on completely different samples. Having this in mind, the comparison shows interesting insights—the first one comes by observing

, which has an (expected) deviation to

in the lower samples range, but shows good agreement with

already from

onwards. The second one is that the relative evolution shows pretty much the same behavior, regardless of whether the samples are in standard or in reversed order.

To summarize this section:

5. Evaluation of Data-Based CDF Confidence Values

For an iterative approach, with cannot be evaluated, since no “best” reference ( in the previous section) is available. From the relative evolution of , or more precisely, of , no statement can further be drawn about the absolute deviation, due to the (possible) integrating character.

From the presented theory of

Section 3, especially (

5), one could determine which sample count

n is required for guaranteeing a specified confidence interval. However, the underlying problem to be investigated is not taken into account, as (

5) was developed for deriving confidence intervals for any empiric cumulative distribution function without loss of generality. Such general (not problem-specific) derivations usually implicate very conservative measures, such as confidence interval bounds. Besides, they often imply assumptions, like unbiased data sets, which can hardly be ensured for practical applications. While it can be more easily guaranteed for the tolerance-affected input variables, ensuring an unbiased performance measure is practically impossible, as the input–output relation is not known in general.

To overcome the lack of problem-specific information, the bootstrap approach is used to estimating the confidence interval [

14,

15,

16,

20]. This common method in statistical inference utilizes the sample data itself and not only the number of samples for confidence boundary estimation.

The confidence value is created using the following approach:

Create batches (bootstrap samples), where every batch contains n samples which are drawn from the original dataset with replacement. The original dataset also features n samples.

Create a CDF for every bootstrapped batch of samples and calculate the deviation to the CDF based on the original dataset, .

Investigate the distribution of the deviations by creating a CDF based on the deviation values .

Evaluate a confidence value based on .

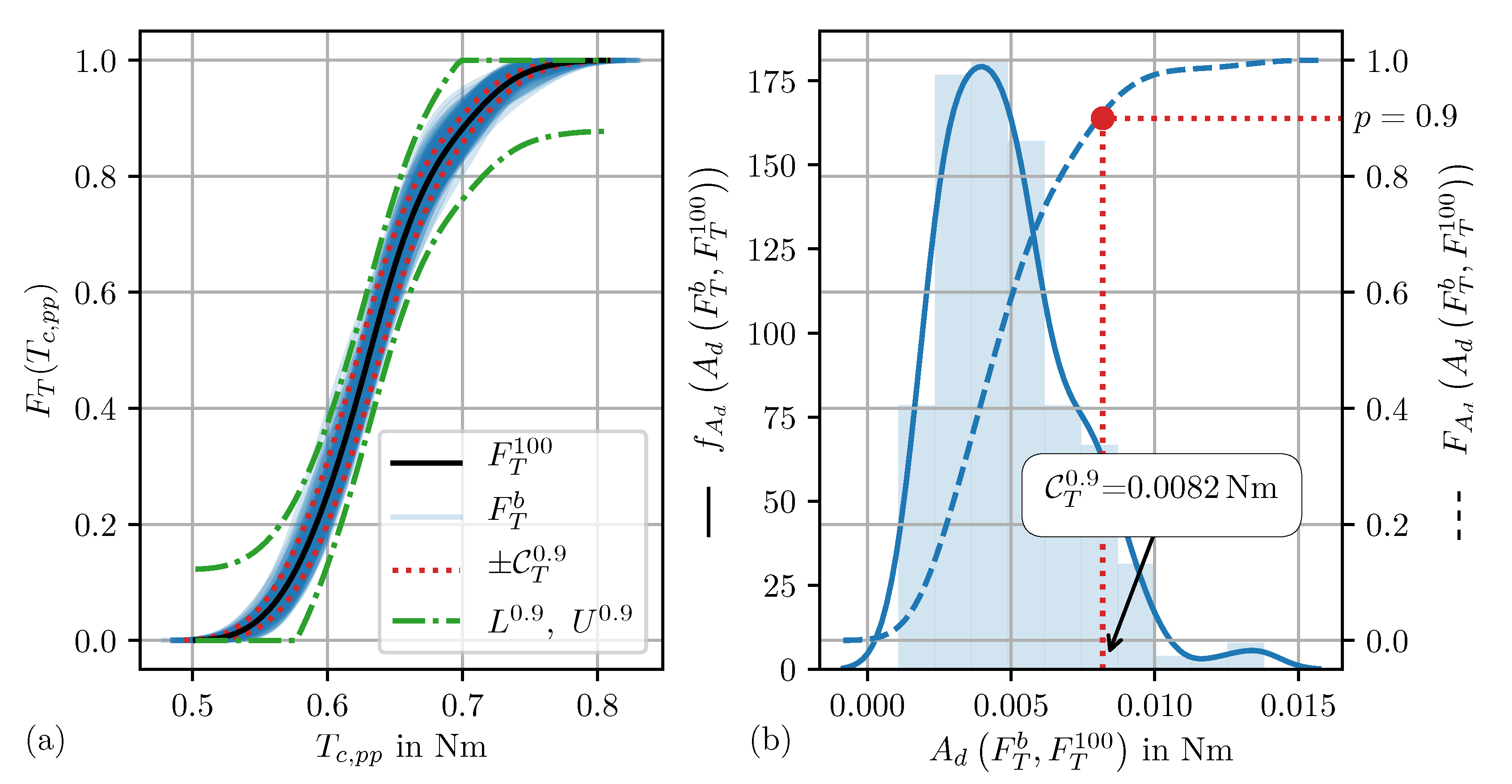

Let us go through the steps using an example: Assume a dataset with

samples. From these 100 samples,

batches of samples are created, where each batch also contains

samples. The samples of one batch are randomly selected out of the full dataset with replacement. From this, it follows that a specific sample from the original dataset can occur in a batch multiple times. The CDF based on all available samples,

, is plotted in

Figure 7a together with the

different CDFs calculated from the 200 batches,

.

Since every batch consists of a different set of samples, the corresponding CDFs look different. They form a tube around

, which defines some kind of confidence for the case where 100 samples are available. In the next step, we want to have a look at the deviation of every batch CDF to the original data CDF,

. The probability density function

of these deviations, as well as the corresponding cumulative distribution function

are shown in

Figure 7b.

From

, we can now easily read which share of the batches leads to a deviation area below a certain limit, or the other way round: what is the maximum deviation area that a certain amount of batches does not exceed? In our example, 90% of the batches lead to a deviation area of

, as also shown in

Figure 7b. This parameter will be used to rate the confidence of the batches and is designated by

. Although

gives the 90%-quantile of

, the index

T is used. On the one hand, this was chosen because it should serve as a confidence value for pretending the distribution of the cogging torque

. On the other hand, this choice can be argued by the following interpretation of

: If any CDF

is horizontally shifted by any value

, the area

between the original and the shifted CDF just corresponds to the shift value, since

is always normalized between zero and one—thus,

. So, if in our example

is shifted by

on the parameter axis, as depicted in

Figure 7a, it can be interpreted as a worst-case slot, where 90% of all batch CDFs are within.

An aspect to consider when using this confidence interval is related to the worst-case scenario—especially for large-scale problems, it is very unlikely that the worst-case performance can be identified through the relatively small share of initially evaluated samples. Additionally, the CDF turns very flat when approaching a quantile level close to 1, following significant errors for the corresponding quantile values (here, cogging torque levels), even if the CDF exhibits only small uncertainties on the quantile level (that is, probability value). To be on the safe side, it is recommended to use this method only up to a quantile level of approximately 0.95.

In

Figure 7a, the 90% limit slots calculated with the DKW method are additionally shown. Not to be confused with the

limit, the

slot defines the slot, wherein the real CDF (

) is with a probability of 90%. Similar to the evolution of

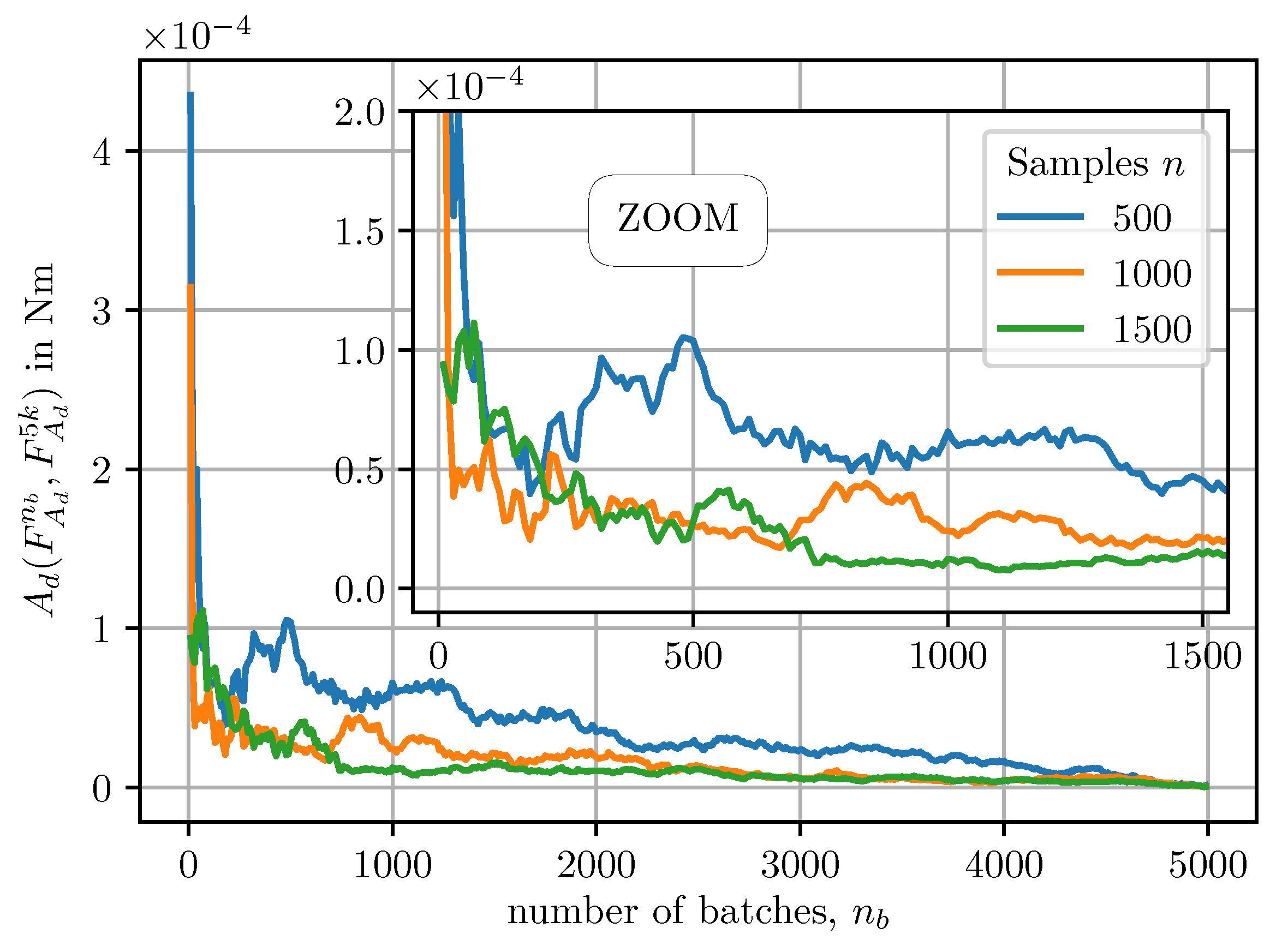

, now the question arises about how many batches are necessary until

converges. Since it is again about convergence of a cumulative distribution function, Equations (

3)–(

5) can be applied, which basically means the same convergence behavior for

as for

. For 5000 batches,

can be evaluated from (

5). To show convergence, the deviation of

to

,

was evaluated as a function of the number of batches

and presented in

Figure 8.

It can be seen that convergence is fast, and that the prediction continuously gets better with an increasing number of samples. It is further important to emphasize that the creation of

is very fast, because no finite element calculations are needed. One additional aspect concerning convergence is that taking the shape of the whole CDF into account is probably too strict as a criterion. Actually, we are interested in the convergence of particular quantiles

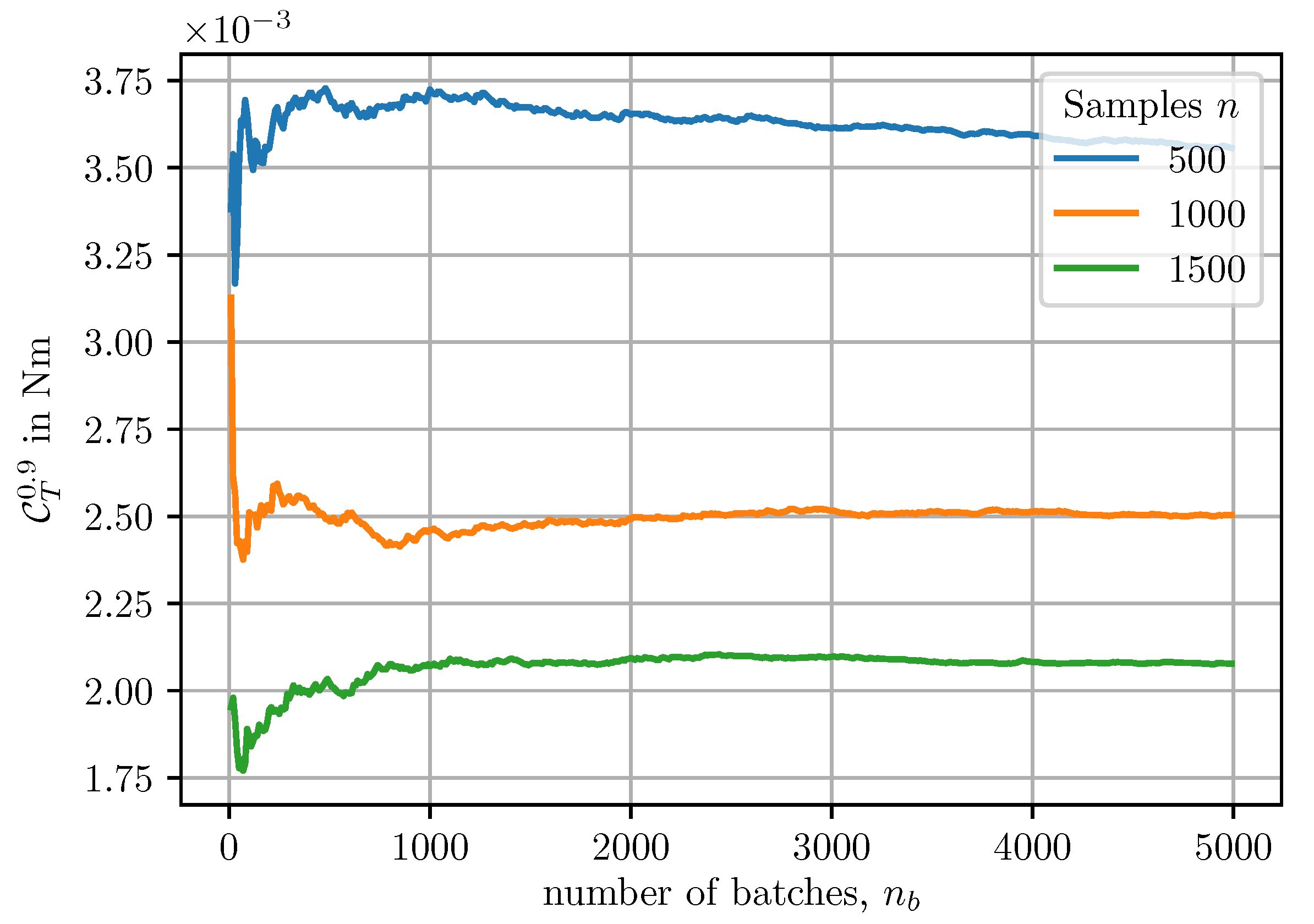

, rather than the convergence of the whole distribution function. In

Figure 9,

is shown over the number of batches and for different values of total samples

n.

It can be observed that

is fluctuating within the first 500 batches and has almost reached convergence after 1000 batches. What can further be seen even better than in

Figure 8 is that

drops significantly with an increasing number of total samples.

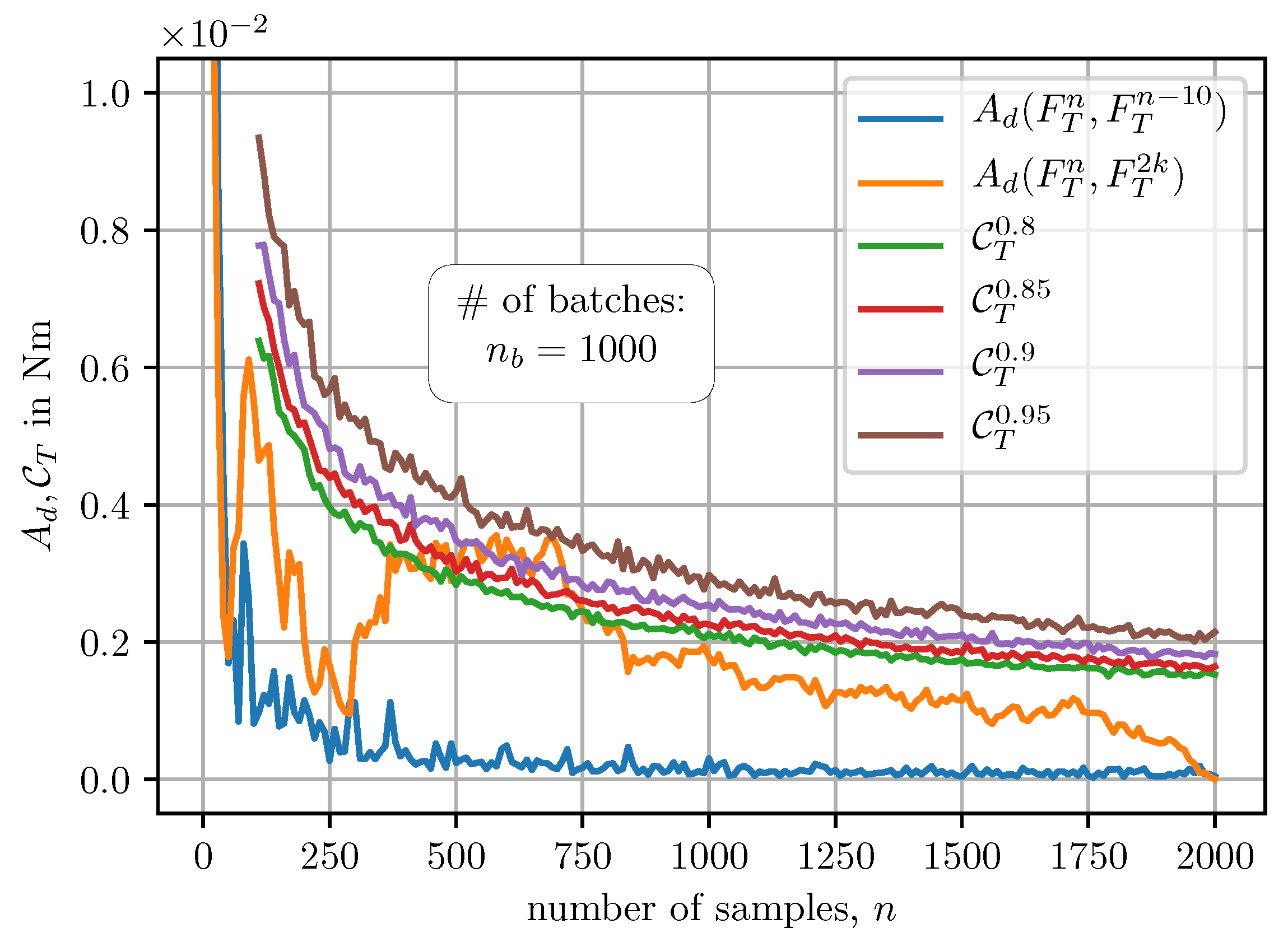

The evolution of different confidence values over the number of available samples is shown in

Figure 10, together with the absolute and relative deviation area (cf.

Figure 6).

What can be observed from

Figure 10 is that

seems to be a good measure for the absolute deviation, although it is only based on data available at every sample step.

In

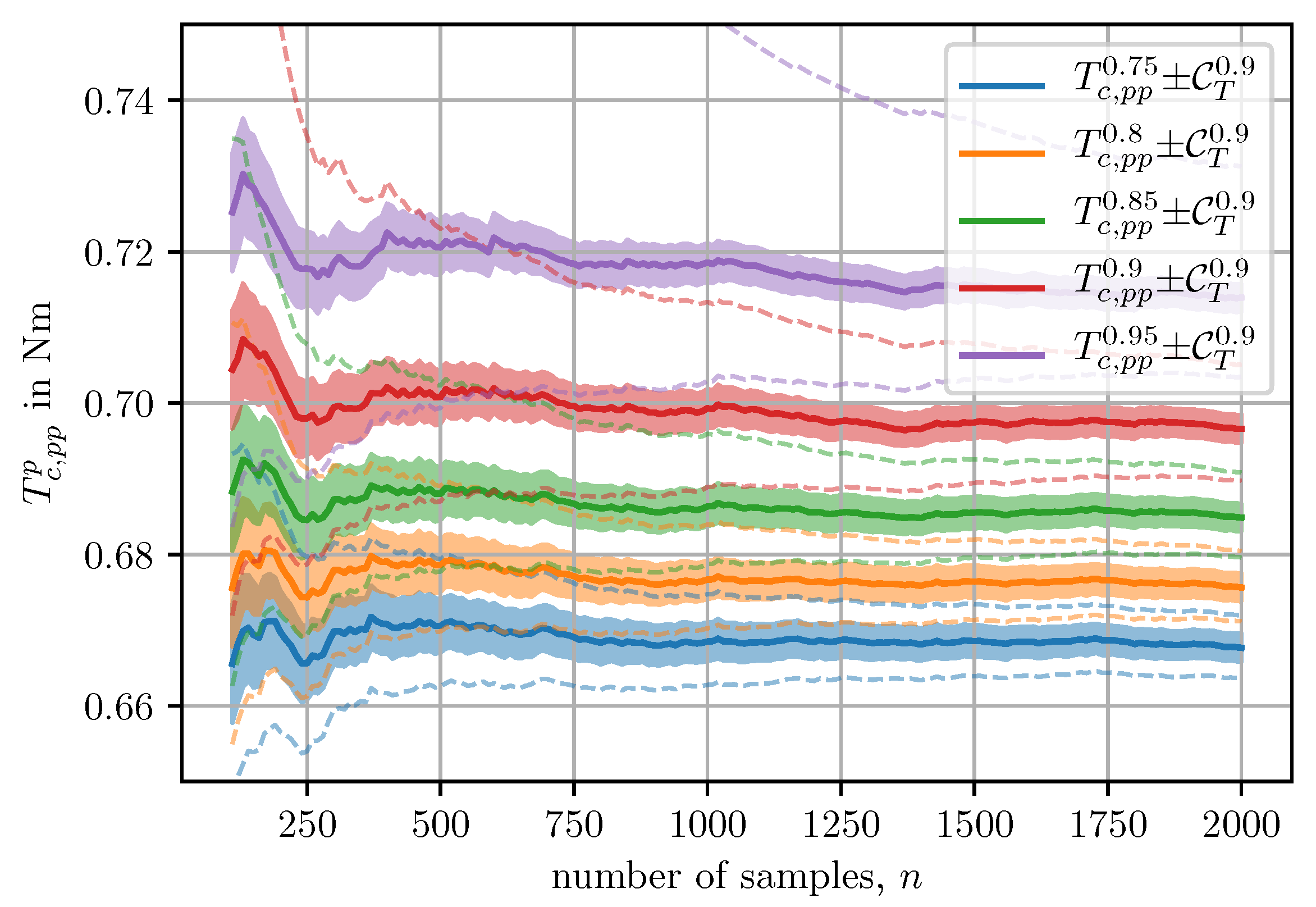

Figure 11 the evolution of different quantile values of the cogging torque

are plotted together with the worst-case 90% confidence band calculated with the batch method presented within this section.

Additionally, the confidence bounds based on the quantile functions of L and U of the DKW method are illustrated by dashed lines. Especially for high quantile levels, p, the DKW method leads to much more conservative predictions of the confidence slot.

6. Discussion

What we have shown so far is a method to statistically predict the effect of manufacturing tolerances apparent in electric machine mass production, where a large number of tolerance-affected parameters are taken into account. If the performance variation of such an investigation is not satisfying, it might be interesting to investigate which of the tolerance-affected parameters has the highest impact. Unfortunately, this information cannot be deduced from the resulting CDF,

. Luckily, the method gives reasonable results with low computational effort, measured against the complexity of the problem. For example, the 2000 samples of the test case were simulated within approximately 8 h on an available HTCondor [

23] cluster with around 150 workstation cores (where, of course, not all of them were used simultaneously). So, to identify which tolerance parameter has a high influence, and also which one can maybe neglected, the presented analysis can be performed for every parameter in a one-factor-at-a-time manner. For the test case, four different runs (every parameter of

Table 2) would be necessary.

It should also be mentioned that for problems with a low number of tolerance-affected parameters, this method might not be the first choice. Sampling of tolerance-affected space using design of experiments, creating surrogate models upon these samples, and evaluating the influence of tolerances using the surrogate models will be more effective. The blurry and problem-dependent decision boundary for using state-of-the-art methods or the one presented here will be a topic of further investigation.

So far, most of the presented work on tolerance analysis has been about symmetric changes, such as the same change of the height of all utilized permanent magnets. This is done because the more realistic individual changes require much more computational effort and the modeling and evaluation complexity are significantly increased. The approach presented here allows for analyzing realistic scenarios that are much more likely to be present in the mass manufacturing of electric machines, while still maintaining reasonable computational cost.

By contrast, available counterparts from the literature featuring a considerable number of individual parameter changes are, for example, either based on (i) analytical models [

24] or (ii) on theoretical investigations about worst-case scenarios [

12]. Such approaches constitute solutions with much less required computational effort. However, they are generally not flexible, considering their application on many different machine types and corresponding designs. Besides, they are often limited to, for example, a particular performance measure, such as the worst-case one [

12].

With the approach derived here, those limitations do not apply. In order to further improve the approach presented here, the authors are considering the development of a hybrid version, that is, an analysis facilitated through making use of the cumulative distribution function, which is both based on finite element-based analysis of selected samples, and consequent surrogate modeling and evaluation of further configurations. This would allow for further minimization of the computational burden, while utmost flexibility for analyzing diverse scenarios is sustained. Consequently, such further improved approaches are more likely to be applied for online robustness evaluation of designs to be evaluated within electric machine design optimization problems.