1. Introduction

A one-parameter distribution is presented, hereafter referred to as the 1D-Weibull, which has asymptotic behaviour similar to that of the conventional Weibull. Unlike the two-parameter formulation of the latter, the 1D-Weibull has only one parameter, $w$, which is restricted to a bounded interval. The estimator of this parameter depends only on the sample mean. Distributions like the Weibull generally have two or more parameters in order to characterise the statistical behaviour of physical phenomena. This presents a number of issues. For instance, estimating two or more parameters introduces greater loss when the sample used for estimation is small or poor. The question arises as to whether such losses can be reduced by using ‘simple’ distributions with fewer parameters but equally good performance. Thus, aside from introducing the 1D-Weibull as a stand-alone distribution, the paper also examines whether the 1D-Weibull can be used as a one-parameter alternative to the Weibull. Answering this question requires a metric that determines the degree of separation, or relative entropy (divergence), between the two. When the relative entropy is small, the two-parameter Weibull can be replaced by the simpler one-parameter 1D-Weibull distribution. Under this condition, the 1D-Weibull is expected to perform at least as well as the conventional two-parameter Weibull distribution.

One reason for the interest in distributions with fewer parameters is that they are less prone to complexity and over-fitting. A simpler distribution with good performance is therefore preferable for modelling purposes. This can be deduced from the Akaike information criterion (AIC) [1,2] and its variants, such as the Bayesian information criterion, when the performance of two distributions is compared against data. Akaike obtained the AIC while seeking a connection between the relative entropy of information theory and the maximum likelihood method. When a number of models are compared against the same data, the model with the minimum AIC is preferred. The AIC includes a penalty for models with more parameters in order to discourage over-fitting. Consequently, of two distributions that perform equally well against the same data, the one with fewer parameters is the better model. The AIC gives an asymptotic estimate of the relative performance of distributions, and only on the same data. It does not assume knowledge of the distribution that generated the data. The AIC values of the 1D-Weibull and Weibull are compared later in the paper. However, a more powerful analytic approach for comparing two distributions whose mathematical forms are known, regardless of whether the data are the same, is to calculate the relative entropy between them.

The relative entropy between the 1D-Weibull and Weibull distributions will be derived on the basis of the Kullback-Leibler (K-L) divergence formulation [3,4,5,6,7,8,9,10]. The K-L approach has been used previously to examine the separation between probability densities [11,12,13,14]. The aim was to replace one standard density with another whenever the relative entropy between them is small or zero. The conventional relative entropy between two standard distributions, while of academic interest, serves no practical purpose in the analysis of real physical processes. This is because the solutions that achieve small relative entropy between them are trivial or unique, arising for example from intersections. Failure to obtain small or zero relative entropy between two distributions is mainly due to their different mathematical forms, which are tailored to modelling specific problems. The requirement is therefore to find distributions that have zero or near-zero relative entropy with respect to more complicated distributions while also having fewer parameters. In addition, the small relative entropy must hold over a larger solution set beyond the unique or trivial cases. Only then is it useful to replace a complicated distribution with a simpler one.

The K-L relative entropy yields expressions that contain the parameters of both densities. This allows different estimators for all the parameters to be tested, since the goal is to find estimators that minimise the relative entropy or set it to zero. This is seen later in the paper, where it is shown that the estimator of the 1D-Weibull parameter is equivalent to the expression that minimises the divergence between the 1D-Weibull and Weibull densities, derived from the relative entropy. In other words, the estimator obtained from the maximum likelihood method (MLM) is equivalent to the ‘theoretical’ expression that minimises the relative entropy between the 1D-Weibull and standard Weibull densities. Thus, minimising the relative entropy can be explored as an alternative to the MLM for obtaining parameter estimators. In addition, the relative entropy allows easy determination of upper and lower bounds for such estimators. Under certain conditions, the relative entropy can also be connected directly to the Fisher information matrix.
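A standard textbook analogue shows why a parameter that minimises the relative entropy can depend on the data only through the mean. This is not the paper's 1D-Weibull derivation. Approximate a density $p(x)$ on $x>0$ with finite mean by an exponential density $q_\theta(x)=\theta e^{-\theta x}$, a stand-in family chosen here purely for illustration. This gives

$$
\begin{aligned}
D(p\,\|\,q_\theta) &= \int_0^\infty p(x)\ln p(x)\,dx-\ln\theta+\theta\,\mathrm{E}_p[X],\\
\frac{\partial D}{\partial\theta} &= -\frac{1}{\theta}+\mathrm{E}_p[X]=0
\quad\Longrightarrow\quad \theta^{*}=\frac{1}{\mathrm{E}_p[X]} .
\end{aligned}
$$

Since $\partial^{2}D/\partial\theta^{2}=1/\theta^{2}>0$, this stationary point is the minimum. Replacing $\mathrm{E}_p[X]$ by the sample mean $\bar{x}$ gives $\hat{\theta}=1/\bar{x}$. This is also the maximum likelihood estimator of the exponential rate, so in this simple case the two approaches coincide.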

It is worth noting two ‘issues’ with the K-L formulation. The K-L divergence is a pseudo-metric: it is not symmetric for large separations between the two densities, and it does not obey the triangle inequality. It is, however, rather simple to make it symmetric for large separations. If two probability densities $p(x)$ and $q(x)$ have relative entropy $D(p\,\|\,q)$, their relative entropy can be made symmetric using the following expression:

$$D_{S}(p,q)=D(p\,\|\,q)+D(q\,\|\,p).$$

The relative entropy has a mathematical duality with the Fisher-Rao geodesic distance, which is symmetric for all separations and obeys the triangle inequality [15]. This correspondence is closest in the limit of small separation between the two densities. For almost all cases of interest, however, the issues of symmetry and large separation of densities are of no consequence.
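The asymmetry is easy to see numerically. The sketch below is plain Python, and the helper names `kl_divergence`, `symmetric_divergence` and `exponential_logpdf` are hypothetical rather than taken from the paper. It evaluates $D(p\,\|\,q)=\int p\ln(p/q)\,dx$ on $(0,\infty)$ with the midpoint rule, working with log-densities. It then applies this to two exponential densities with rates $a=1$ and $b=2$, for which the closed form $D=\ln(a/b)+b/a-1$ is known.

```python
import math


def kl_divergence(logp, logq, upper, n=20000):
    """Relative entropy D(p||q) = integral of p*ln(p/q) over (0, upper).

    Composite midpoint rule on log-densities: x = 0 is never evaluated,
    and p underflowing to zero far in the tail simply contributes nothing.
    """
    h = upper / n
    total = 0.0
    for i in range(n):
        x = (i + 0.5) * h
        lp = logp(x)
        total += math.exp(lp) * (lp - logq(x))
    return total * h


def symmetric_divergence(logp, logq, upper, n=20000):
    """Symmetrised relative entropy D(p||q) + D(q||p)."""
    return kl_divergence(logp, logq, upper, n) + kl_divergence(logq, logp, upper, n)


def exponential_logpdf(rate):
    """Log-density of an exponential distribution with the given rate."""
    return lambda x: math.log(rate) - rate * x


p, q = exponential_logpdf(1.0), exponential_logpdf(2.0)
d_pq = kl_divergence(p, q, upper=60.0)   # closed form ln(1/2) + 2 - 1, about 0.3069
d_qp = kl_divergence(q, p, upper=60.0)   # closed form ln(2) + 1/2 - 1, about 0.1931
d_sym = symmetric_divergence(p, q, upper=60.0)  # the two directions sum to 0.5 here
print(round(d_pq, 4), round(d_qp, 4), round(d_sym, 4))
```

The two directions differ (about 0.307 versus 0.193), while their sum does not depend on the order of the arguments.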

In addition to deriving distributions with fewer parameters, such as the 1D-Weibull, it is also possible to increase the number of parameters. For instance, a standard distribution such as the Exponential, which has decaying characteristics, can be modified to include an extra parameter. A (fractional) two-parameter Exponential distribution is also presented, which contains the standard Exponential decay as a special limit of the second parameter. Its performance is also analogous to that of the standard Weibull and extreme-value type distributions. It will be shown that fractional mathematics provides a simple transformation that makes this possible. The transformation takes a random variable $x$ and maps it to a form that is a function of the second parameter, $\alpha$, which is the fractional order appearing in the transformation. The fractional order is inherent in the operators used in fractional differentiation and integration. Fractional mathematics has the potential to change the way statistical analysis of problems is carried out. The reader is referred to [16,17,18] and the references therein for details. It is worth noting that the word ‘fractional’ is a historical misnomer: ‘fractional’ mathematics should in fact be understood to mean ‘generalised’ mathematics. For example, integer-order differentiation and integration are special limits of their fractional (general) versions.

Firstly, however, the 1D-Weibull distribution is considered. Its performance against the conventional two-parameter Weibull is investigated theoretically here and, later in Section 5, on real data.

2. The 1D-Weibull Distribution and Its Statistical Properties

The conventional Weibull distribution is parametrised by a shape parameter $k$ and a scale parameter $\lambda$, such that the CDF is given by

$$F_W(x)=1-\exp\!\left[-\left(\frac{x}{\lambda}\right)^{k}\right],\qquad x\ge 0,$$

and the PDF, given by the derivative of the CDF, becomes

$$f_W(x)=\frac{k}{\lambda}\left(\frac{x}{\lambda}\right)^{k-1}\exp\!\left[-\left(\frac{x}{\lambda}\right)^{k}\right].$$

It is worth pointing out that when the Weibull shape parameter takes the value $k=1$, the Weibull distribution reduces to the exponential distribution. The case $k=2$ is a very special limit in which the Weibull reduces to the Rayleigh distribution. Finally, for larger values of $k$ (around $k\approx 3.6$, where its skewness vanishes), the Weibull distribution resembles a Gaussian or Normal distribution. Generally, in order to model a physical system, both parameters $k$ and $\lambda$ are required. These parameters can be estimated by sampling observed data sets. Using the maximum likelihood approach, an estimator $\hat{k}$ can be obtained as:

$$\frac{1}{\hat{k}}=\frac{\sum_{i=1}^{n}x_i^{\hat{k}}\ln x_i}{\sum_{i=1}^{n}x_i^{\hat{k}}}-\frac{1}{n}\sum_{i=1}^{n}\ln x_i. \tag{4}$$

Given the implicit form of this estimator, (4) must be solved numerically. The estimator $\hat{\lambda}$ is obtained via a simpler expression,

$$\hat{\lambda}=\left(\frac{1}{n}\sum_{i=1}^{n}x_i^{\hat{k}}\right)^{1/\hat{k}}, \tag{5}$$

where $n$ is the total number of variables sampled. In order to obtain $\hat{\lambda}$ from (5), the shape parameter has to be estimated first using (4).
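Because (4) is implicit in $\hat{k}$, it has to be solved numerically. The function $g(k)=\sum x_i^{k}\ln x_i/\sum x_i^{k}-\frac{1}{n}\sum\ln x_i-1/k$ increases monotonically in $k$, so plain bisection is sufficient. A minimal Python sketch under that approach follows; `weibull_mle` is a hypothetical helper name, and the bracket $[10^{-3},100]$ is an assumption. Once $\hat{k}$ is found, it evaluates (5) for $\hat{\lambda}$.

```python
import math
import random


def weibull_mle(data, k_lo=1e-3, k_hi=100.0, tol=1e-10):
    """Maximum likelihood estimates (k, lambda) of a two-parameter Weibull.

    The shape equation 1/k = sum(x^k ln x)/sum(x^k) - mean(ln x) is solved by
    bisection; the scale then follows from lambda = (mean(x^k))^(1/k).
    """
    logs = [math.log(x) for x in data]
    mean_log = sum(logs) / len(logs)
    log_max = max(logs)

    def g(k):
        # Increasing in k; its root is the MLE of k. The weights x^k are
        # rescaled by the largest one so that large k does not overflow.
        w = [math.exp(k * (l - log_max)) for l in logs]
        return sum(wi * li for wi, li in zip(w, logs)) / sum(w) - mean_log - 1.0 / k

    lo, hi = k_lo, k_hi
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if g(mid) > 0.0:
            hi = mid  # root lies below mid
        else:
            lo = mid  # root lies above mid
    k = 0.5 * (lo + hi)
    lam = (sum(x ** k for x in data) / len(data)) ** (1.0 / k)
    return k, lam


# Usage: recover known parameters from simulated data.
# random.Random.weibullvariate(alpha, beta) takes the scale alpha first, then the shape beta.
rng = random.Random(12345)
sample = [rng.weibullvariate(2.0, 1.5) for _ in range(5000)]
k_hat, lam_hat = weibull_mle(sample)
print(k_hat, lam_hat)  # close to the true values k = 1.5, lambda = 2.0
```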

A one-parameter Weibull-type distribution, the 1D-Weibull, is now considered. Its asymptotic behaviour is similar to that of the two-parameter Weibull distribution. The 1D-Weibull has a single parameter, $w$, whose estimator is obtained from the sample mean. The CDF is given as

The density (PDF) is given by the derivative of (6), so that:

Both the CDF (6) and the PDF (7) depend only on the one parameter $w$, whose values are restricted to a bounded interval. The following limits apply to the PDF:

and

for the CDF.

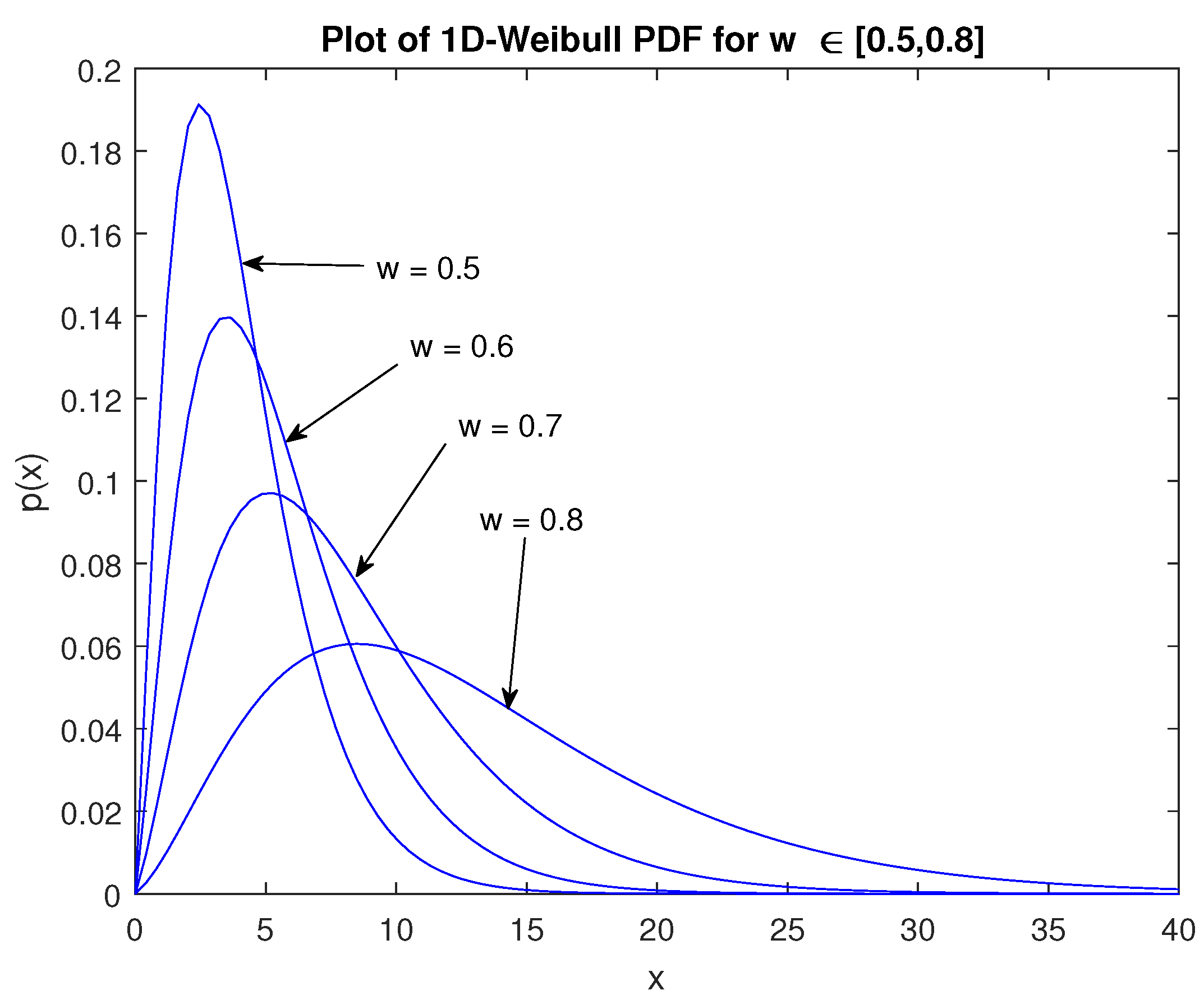

Figure 1 shows plots of the density for varying $w$ values. The behaviour of the 1D-Weibull distribution is analogous to that of the two-parameter Weibull. Quantifying how closely it matches requires a separation metric, or divergence, that determines the degree of separation (or similarity) between them. This is done in the context of the relative entropy in the next Section. Before that, some important statistical properties of the 1D-Weibull distribution are derived. An estimator $\hat{w}$ can be obtained from the maximum likelihood method. Let the likelihood function be

The log-likelihood of (10) is expanded as follows, where the left-hand side denotes the log-likelihood:

The estimator $\hat{w}$ can be obtained by taking the derivative of (11) with respect to $w$ and setting it to zero,

By simplifying (12), it can be shown that the estimator is easily obtained from the quadratic equation

where $\bar{x}$ is the mean. The solution is readily obtained via a change of variable, so that

As expected from a quadratic equation, there are two solutions:

The negative root ensures that the estimator lies within the admissible bounds of $w$. Thus, using the definition of the substituted variable, the following form is obtained for the estimator $\hat{w}$:

where $\bar{x}$ can be either the population mean or the sample mean, the latter being

$$\bar{x}=\frac{1}{n}\sum_{i=1}^{n}x_i,$$

and $n$ is the total number of random variables sampled in the data. Monte Carlo simulations were performed using a seed value of $w$ in order to test the convergence of the estimator (16). The convergence of the estimator to the seed was checked, and a typical result is shown in Figure 2.

In this example, random samples were generated from the 1D-Weibull PDF for each of a series of Monte Carlo simulations. Figure 2 shows that the estimator for the 1D-Weibull distribution possesses the correct convergence. This is to be expected, as the estimator $\hat{w}$ depends on the sample mean. Its convergence therefore depends on the correct estimation of the mean of the randomly generated data samples.

Next, the moments of the 1D-Weibull are presented for integer orders $m\ge 0$. The moments are obtained from

where the 1D-Weibull density is given by (7) and the integration is taken over the support of the distribution. Specifically, the moments are calculated as:

Expanding the integrand and using integration by parts yields the following result for the 1D-Weibull moments:

Observe that the zeroth order ($m=0$) gives unity, which is also readily seen from (18). That is, the second axiom of probability is recovered, which states that the integral of the density over the entire domain is unity. The expectation, or mean, is given by the first order ($m=1$), and from (20) it becomes

The variance of the 1D-Weibull is obtained from the first and second orders ($m=1,2$), so that,

Higher-order moments can be computed using (20).

In order to generate random variables that are 1D-Weibull distributed, the CDF (6) has to be inverted. It must be solved for the variate as a function of a variable $q$ whose value is drawn from a uniform distribution. To do this, consider the fundamental transformation law of probabilities for two densities $f(y)$ and $u(q)$:

$$|f(y)\,dy| = |u(q)\,dq|. \tag{23}$$

Since $f(y)\ge 0$ and $u(q)\ge 0$, and the CDF is monotonically increasing,

$$f(y)\,dy = u(q)\,dq. \tag{24}$$

Let $f(y)$ be the 1D-Weibull density and $u(q)$ the uniform density on a finite interval, which is zero outside it. Then, from (24),

where the variables involved are positive. The integrals on both sides of (26) are nothing more than the CDF of the 1D-Weibull on the left and the CDF of the uniform distribution on the right. Let $q$ be a random number drawn from the uniform distribution on the interval $[0,1]$. Then (26) becomes

$$F(y;w)=q, \tag{27}$$

where $F$ denotes the 1D-Weibull CDF (6). Inverting (27) gives the 1D-Weibull random number $y$ for a given uniform random number $q$ and a particular value of the parameter $w$. However, given the form of the 1D-Weibull CDF, solving (27) for $y$ requires numerical computation. A typical numerical solution for randomly generated variables from the 1D-Weibull distribution is shown in Figure 3. The theoretical 1D-Weibull and standard Weibull densities are plotted for comparison. As expected, the theoretical 1D-Weibull PDF matches the generated data extremely well.

Finally, it is worth highlighting how the PDF and CDF of the 1D-Weibull distribution were derived. The PDF was obtained by minimising the relative entropy between the standard Weibull and a density whose form includes a polynomial term, such that:

where $A$ is a normalisation constant. The normalisation is performed using the expression:

From (28), it can be seen that the 1D-Weibull is a special case in which $A$ is the coefficient appearing in (7) and the remaining constants take particular values. Once the PDF (7) is obtained, the CDF (6) is easily determined by integrating the PDF. In the next Section, the 1D-Weibull and Weibull distributions are compared on the basis of the relative entropy, or Kullback-Leibler divergence, between them.
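Equation (27) generally has no closed-form inverse. A minimal sketch of the numerical inversion is a bracketing bisection, which works for any continuous, non-decreasing CDF supported on $[0,\infty)$; that support is an assumption here. The 1D-Weibull CDF (6) would be passed in as `cdf`. Since its formula is not reproduced here, the example uses the two-parameter Weibull CDF as a stand-in, because its inverse is known in closed form and the result can be checked. The names `invert_cdf` and `draw` are hypothetical.

```python
import math
import random


def invert_cdf(cdf, q, lo=0.0, hi=1.0, tol=1e-12, max_iter=200):
    """Solve cdf(y) = q for y by bisection.

    Assumes cdf is continuous and non-decreasing on [0, inf) with cdf(0) <= q < 1.
    """
    if not 0.0 <= q < 1.0:
        raise ValueError("q must lie in [0, 1)")
    while cdf(hi) < q:  # double the bracket until it encloses the root
        lo, hi = hi, 2.0 * hi
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if cdf(mid) < q:
            lo = mid
        else:
            hi = mid
        if hi - lo <= tol * max(1.0, hi):
            break
    return 0.5 * (lo + hi)


def draw(cdf, n, rng):
    """Generate n variates from a CDF by numerical inverse-transform sampling."""
    return [invert_cdf(cdf, rng.random()) for _ in range(n)]


# Stand-in CDF for illustration only: the two-parameter Weibull (k = 1.5,
# lambda = 2), whose inverse lambda*(-ln(1 - q))^(1/k) is known exactly.
def weibull_cdf(x, k=1.5, lam=2.0):
    return 1.0 - math.exp(-(x / lam) ** k)


ys = draw(weibull_cdf, 1000, random.Random(3))
```

A histogram of `ys` plotted against the stand-in density should look like the comparison in Figure 3.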

3. The Relative Entropy between the 1D-Weibull and Weibull Densities

The relative entropy between two probability densities is obtained using the Kullback-Leibler formulation:

$$D(p\,\|\,q)=\int_{\Omega}p(x)\ln\frac{p(x)}{q(x)}\,dx, \tag{30}$$

where $\Omega$ is the domain of integration. Here $p(x)$ is the probability density that another probability density, $q(x)$, asymptotically approaches. In other words, $p(x)$ is the theoretical or accepted model that more ‘accurately’ describes a physical process or system. The density $q(x)$ is a model (approximation), and the question is how close it is to $p(x)$. Whenever the relative entropy or divergence between the two densities is small, it is possible to replace $p(x)$ with the approximation $q(x)$. In the context of this paper, $p(x)$ is the two-parameter Weibull density and the requirement is to replace it with the one-parameter 1D-Weibull. Thus let $p(x)$ be:

$$p(x)=\frac{k}{\lambda}\left(\frac{x}{\lambda}\right)^{k-1}\exp\!\left[-\left(\frac{x}{\lambda}\right)^{k}\right], \tag{31}$$

and let $q(x)$ be the 1D-Weibull density:

Substituting (31) and (32) into (30) gives the following expression for the relative entropy:

It is now a matter of performing the integrations in (33), which require transformations and integration by parts. The first term is straightforward to evaluate, since its logarithmic factor is independent of the variable $x$. By the second axiom of probability, $\int_{\Omega}p(x)\,dx=1$, so that:

In a similar way, the next term can be computed to obtain the following result:

where $\gamma$ is the Euler-Mascheroni constant. After evaluating the next integral, the result turns out to have the following form:

Performing the next integration results in the solution:

Unfortunately, unlike the other integral terms in (33), the final integral does not have a closed form and would ordinarily require a numerical solution. However, it can be split into two separate integrals with different limits, which can then be solved analytically as follows:

In view of (38), the final integral can now be evaluated using transformations and integration by parts. The solution then becomes:

This solution involves the incomplete Gamma function and the exponential-integral function. Equation (39) is exact for large (realistic) values of the relevant Weibull parameter and an excellent approximation for small values. After substituting (34)–(37) and (39) into (33), the relative entropy between the 1D-Weibull and Weibull densities is given by the following expression:

The relative entropy or divergence (40) gives the separation between the 1D-Weibull and Weibull distributions as a function of their parameters, namely $w$, $k$ and $\lambda$. From (40), it is possible to find an expression for $w$ that minimises the separation between the 1D-Weibull and Weibull distributions. Taking the derivative with respect to $w$ gives:

Setting (41) equal to zero and simplifying terms gives the following quadratic equation for $w$:

Solving (42) and retaining only the negative root, the value of $w$ that minimises the relative entropy, or divergence, is given by:

Equation (

43) can be plotted as a surface in terms of the Weibull parameters

. This means that for any

, the corresponding point on the surface is

that minimizes the relative entropy between the 1D-Weibull and Weibull distributions. Using a fixed value for

as determined from (

43), plots are shown for the relative entropy as a function of the Weibull parameters

in

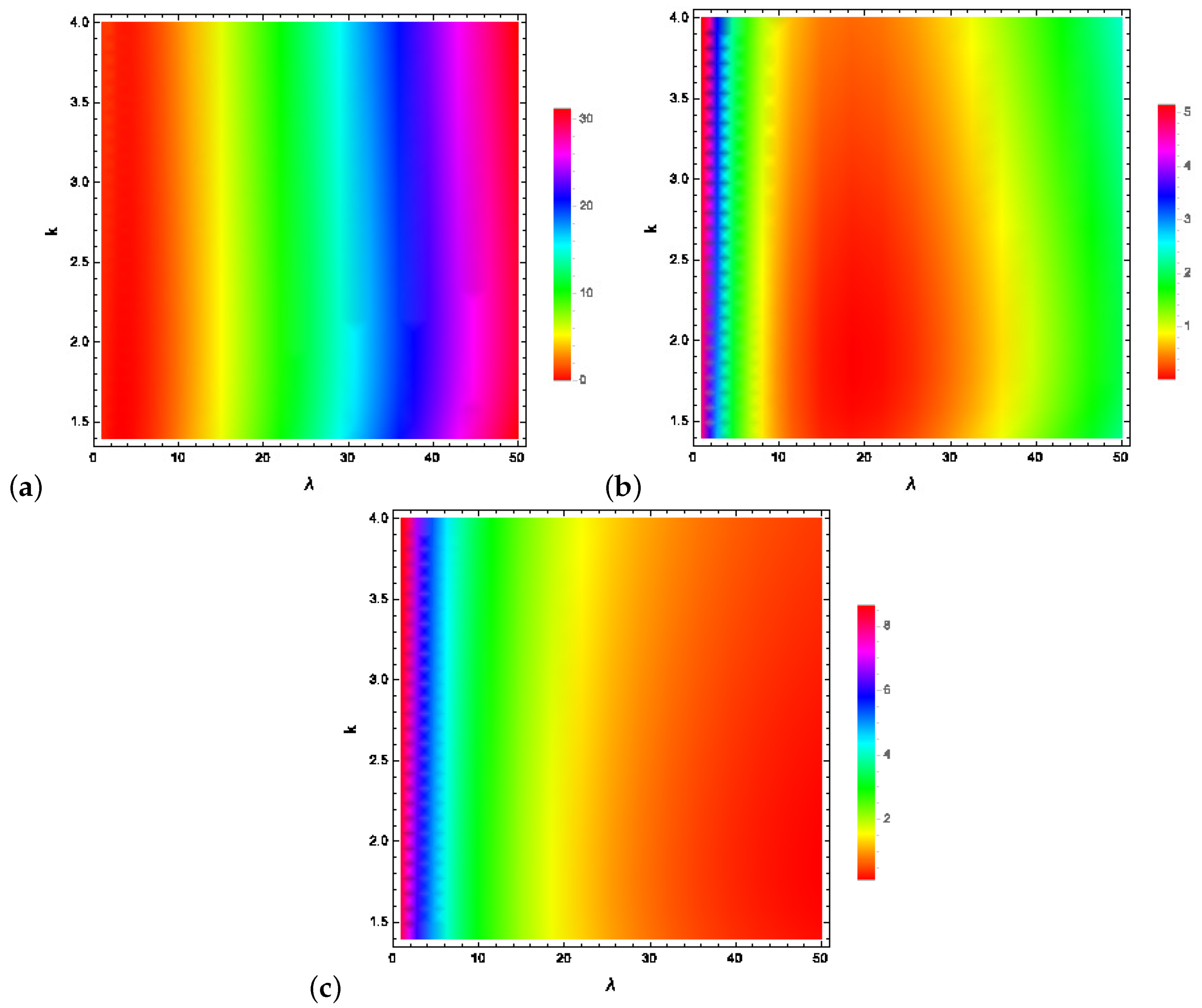

Figure 4.

Figure 4a shows the relative entropy over the lower range of Weibull parameter values, starting from approximately zero. In this range the divergence between the 1D-Weibull and Weibull distributions is zero or very close to zero. Here, and for the Weibull parameter values shown in Figure 4a, the Weibull distribution can be replaced by the 1D-Weibull. A relative entropy that is zero or close to zero at larger parameter values requires using (43) to obtain a suitable value of $w$. The results are shown in Figure 4b, where the relative entropy is zero or close to zero everywhere over the corresponding range. Finally, small to zero divergence for higher values of the Weibull scale parameter $\lambda$ can be achieved with a further choice of $w$, as the results of Figure 4c show. Note that in all cases, the values of the Weibull shape parameter $k$ that are of interest are covered.
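When a closed form such as (43) is not available, the same minimisation can be carried out numerically. The sketch below is illustrative only. The 1D-Weibull density (7) is not reproduced here, so it uses a stand-in one-parameter family, the exponential density $q_\theta(x)=\theta e^{-\theta x}$. That family is fitted to a Weibull $p(x)$ by minimising (30) with a golden-section search over $\theta$. For this stand-in family the minimiser is known analytically to be $\theta^{*}=1/\mathrm{E}_p[X]=1/\left(\lambda\,\Gamma(1+1/k)\right)$, which provides a check on the numerics. The function names are hypothetical.

```python
import math


def weibull_logpdf(x, k, lam):
    z = x / lam
    return math.log(k / lam) + (k - 1.0) * math.log(z) - z ** k


def kl_weibull_vs_exponential(theta, k, lam, n=5000):
    """D(p||q_theta) for a Weibull p and q_theta(x) = theta*exp(-theta*x), midpoint rule."""
    upper = lam * 50.0 ** (1.0 / k)  # beyond this point p(x) is negligible
    h = upper / n
    total = 0.0
    for i in range(n):
        x = (i + 0.5) * h
        lp = weibull_logpdf(x, k, lam)
        lq = math.log(theta) - theta * x
        total += math.exp(lp) * (lp - lq)
    return total * h


def golden_section_min(f, a, b, tol=1e-7):
    """Minimise a unimodal function f on [a, b] by golden-section search."""
    invphi = (math.sqrt(5.0) - 1.0) / 2.0
    c, d = b - invphi * (b - a), a + invphi * (b - a)
    fc, fd = f(c), f(d)
    while b - a > tol:
        if fc < fd:      # minimum lies in [a, d]
            b, d, fd = d, c, fc
            c = b - invphi * (b - a)
            fc = f(c)
        else:            # minimum lies in [c, b]
            a, c, fc = c, d, fd
            d = a + invphi * (b - a)
            fd = f(d)
    return 0.5 * (a + b)


k, lam = 1.5, 2.0
theta_star = golden_section_min(lambda t: kl_weibull_vs_exponential(t, k, lam), 0.05, 5.0)
theta_mean = 1.0 / (lam * math.gamma(1.0 + 1.0 / k))  # reciprocal of the Weibull mean
print(theta_star, theta_mean)  # the two agree closely
```

As with the 1D-Weibull, the divergence-minimising parameter of this stand-in depends only on the mean of $p(x)$.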

As a further examination of the performance of the 1D-Weibull density, the Akaike information criterion, $A$, is considered. The Akaike information criterion (AIC) has the following definition:

$$A=2\left(p-\ln\hat{\mathcal{L}}\right)+\frac{2p(p+1)}{n-p-1}, \tag{44}$$

where the parameters are set to the estimators that maximise the log-likelihood function $\ln\mathcal{L}$, and $\hat{\mathcal{L}}$ is the maximised likelihood. Here $p$ represents the total number of parameters of each model being compared on the same data. The AIC penalises a model that has too many parameters because of over-fitting. This is especially true for small sample sizes $n$, for which (44) is valid. As the sample size increases ($n\to\infty$), the second term goes to zero and the AIC takes the form:

$$A=2\left(p-\ln\hat{\mathcal{L}}\right). \tag{45}$$

Another formulation of the AIC involves the residual sum of squares $R$, assuming that the residuals are independent and identically normally distributed with zero mean. Up to an additive constant, it reads

$$A=2p+n\ln\frac{R}{n}. \tag{46}$$

For the AIC involving the residual sum of squares, the idea is for the models being compared to have comparable residual sums of squares. When this happens, the models fit the data equally well, and according to the AIC any remaining discrepancy depends on the number of parameters $p$ each has. The same condition holds for the AIC formulated on the basis of the log-likelihood function, (45). However, the log-likelihood function must be as large as possible before the first term dominates. Preference is for simpler models with fewer parameters, which avoid the issues of over-fitting and complexity. On that basis, the 1D-Weibull, with one parameter fewer than the Weibull, appears to have the advantage. The model with the smallest AIC value generally indicates the model that best fits the data. Care must be taken with the data being modelled when comparing the 1D-Weibull and Weibull using the AIC approach. If the data are 1D-Weibull distributed, the 1D-Weibull will fit better than the Weibull; see, for example, Figure 3. Its AIC values will also be smaller because it has one parameter fewer. Now suppose the data are Weibull distributed and the 1D-Weibull has small or zero relative entropy with respect to the Weibull. Then both will fit the data equally well, but the 1D-Weibull is the better model, since its single parameter yields smaller AIC values.
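A minimal sketch of the three AIC forms discussed above, assuming their standard definitions. These are the large-sample form $2(p-\ln\hat{\mathcal{L}})$, its small-sample correction $2p(p+1)/(n-p-1)$, and the least-squares form $2p+n\ln(R/n)$, which is defined only up to a constant shared by all models fitted to the same data. The function names are hypothetical, and the log-likelihood values in the usage lines are made up purely to show the comparison.

```python
import math


def aic(log_likelihood, p):
    """Large-sample AIC: A = 2(p - ln L_hat)."""
    return 2.0 * (p - log_likelihood)


def aicc(log_likelihood, p, n):
    """Small-sample AIC: adds 2p(p+1)/(n - p - 1), which vanishes as n grows."""
    if n - p - 1 <= 0:
        raise ValueError("the correction requires n > p + 1")
    return aic(log_likelihood, p) + 2.0 * p * (p + 1) / (n - p - 1)


def aic_rss(rss, n, p):
    """Least-squares AIC: A = 2p + n ln(R/n), up to a constant shared by all models."""
    return 2.0 * p + n * math.log(rss / n)


# Two hypothetical models reaching the same maximised log-likelihood on n = 50 points:
loglik, n = -120.0, 50
one_param = aicc(loglik, 1, n)   # 242 + 4/48
two_param = aicc(loglik, 2, n)   # 244 + 12/47
print(one_param < two_param)     # True: the model with fewer parameters is preferred
```

With equal fits, the one-parameter model wins purely through the penalty terms, which is the situation described above for a 1D-Weibull with near-zero divergence from the Weibull.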

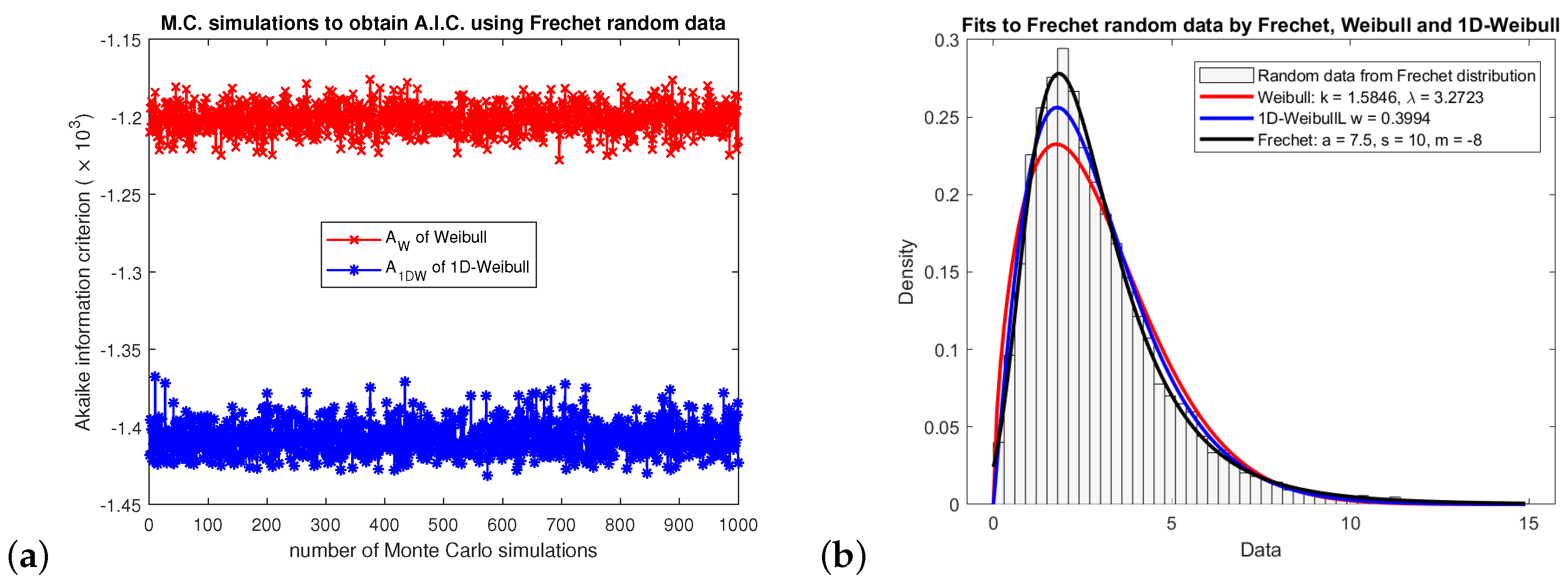

To examine further whether the 1D-Weibull performs well relative to the Weibull, random data were generated using the Frechet distribution as an alternative:

$$F(x)=\exp\!\left[-\left(\frac{x-m}{s}\right)^{-a}\right],\qquad x>m, \tag{47}$$

where the Frechet distribution has three parameters: $a$ (shape), $s$ (scale) and $m$ (location). Frechet random variables were generated using seed values for the Frechet parameters. A series of Monte Carlo simulations was then run, with different random numbers generated each time. In each simulation, the 1D-Weibull and Weibull parameters were estimated and fitted to the Frechet data, and the corresponding AIC values were calculated.

Figure 5a shows the Monte Carlo simulations and the corresponding AIC values. In all cases the 1D-Weibull has the smaller AIC value, and hence it is the better model, compared with the Weibull, for fitting the Frechet data.

Figure 5b shows a typical fit to random Frechet data plotted as a density histogram together with fits from the 1D-Weibull, Weibull and the theoretical Frechet density.

So far, consideration has been given to finding a distribution, namely the 1D-Weibull, that has only one parameter and can fit data as well as the two-parameter Weibull when the relative entropy between them is small. It will be shown to fit data from different areas of research in Section 5. What is considered next is a way of adding an extra parameter to standard one-parameter distributions. This modification widens the range of behaviour of the modified distribution. It also retains the original one-parameter characteristics as a special limit of the second parameter. This is done on the basis of transforms obtained from fractional mathematics, which have been used to derive fractional distributions such as the Pareto [16,17]. The latter has been shown to model radar clutter extremely well. This idea is demonstrated next by deriving a (fractional) two-parameter version of the Exponential distribution.

4. A (Fractional) Two Parameter Exponential Distribution

The standard Exponential distribution has one parameter, $\lambda$, whose estimator is $\hat{\lambda}=1/\bar{x}$, where $\bar{x}$ is the sample mean. The aim is to introduce another parameter that acts like the shape parameter of many two-parameter distributions. To do so, it is necessary to manipulate the variable $x$ in the Exponential distribution,

$$f(x)=\lambda e^{-\lambda x},\qquad x\ge 0. \tag{48}$$

The variable can be either continuous, $x$, or discrete, $x_i$. The requirement is to find a simple transformation that relates $x$ or $x_i$ to a modified version of itself as a function of the second parameter, $\alpha$. Once this new variable is obtained, it is substituted into the CDF corresponding to (48) to derive a two-parameter form of the Exponential distribution. This is done using an operator that maps a function to a fractional, or generalised, version whose extra parameter $\alpha$ acts as the shape parameter. Let $x$ be an i.i.d. random variable that is transformed to the variable $x_\alpha$ as follows:

where the operator has the following form [18]:

The argument appearing in the operator means that the variable $x$ maps onto the variable $y$, as shown below. Consider the variable that is to be modified by the operator into its fractional form. The integrand of (49) then becomes:

The integral can be carried out using a linear transformation of the integration variable. Substituting the transformation into the integrand gives

where a standard identity has been used. The final step is to map the variable $x$ to $y$, that is,

Substituting into (49), and noting that the function (variable) on the left is mapped as follows,

The integral is easy to calculate. Using a further standard identity, the final transformation of a variable $x$ to its fractional analogue is

for the continuous case and

for the discrete case. It is now possible to use the continuous transformation to construct a fractional, or two-parameter, Exponential distribution. Replacing $x$ in the standard CDF gives the following form

The PDF is easily obtained as the derivative of the CDF:

Notice that in the non-fractional limit of $\alpha$, both the CDF and PDF collapse to their standard versions. Hence the fractional two-parameter Exponential retains the performance characteristics of the standard Exponential. In addition, the second parameter $\alpha$ gives it wider applicability. The modulus is required because the parameter $\alpha$ can take any real or complex value, and taking the modulus avoids complex values in the distribution. An important property that (58) must have if it is indeed a density is that its integral must be unity; otherwise it is not a probability density. This is easily established, since

and so the fractional, or two-parameter, Exponential distribution is a density. This means that the following properties hold:

and

The moments can be obtained via the usual process,

Using linear transformations and integration by parts, it can be shown that the moments have the following closed form,

Setting the order of the moment to zero in (63) recovers the second axiom of probability: the zeroth moment over the entire integration domain is unity. The expectation, or mean, is given by the first-order moment:

When the parameter $\alpha$ takes its non-fractional value, the (fractional) two-parameter Exponential distribution collapses to the standard Exponential. Thus, substituting this value into (64) gives the standard expectation:

$$\mathrm{E}[x]=\frac{1}{\lambda}. \tag{65}$$

Using the first- and second-order moments, the variance $\sigma^{2}=\mathrm{E}[x^{2}]-\left(\mathrm{E}[x]\right)^{2}$ becomes,

The standard Exponential variance is obtained in the same limit, so that

$$\sigma^{2}=\frac{1}{\lambda^{2}}, \tag{67}$$

as expected. Finally, the estimators for the two parameters $(\lambda,\alpha)$ are obtained using the maximum likelihood method, where the likelihood function is:

From this, the log-likelihood function is derived in the following form,

Before the estimator for $\lambda$ is derived from (69), consider an alternative approach. From (56) for a discrete variable, the fractional transform implies that the standard estimator $\hat{\lambda}=1/\bar{x}$ can be replaced as follows:

In the non-fractional limit of $\alpha$, this reduces to $\hat{\lambda}=1/\bar{x}$. Returning to (69), the maximum likelihood estimator for $\lambda$ is given by

This means that the fractional-transform method for obtaining the estimator agrees with the maximum likelihood approach. Since $\alpha$ may be chosen to be any real or complex value, the modulus is taken. Written for $1/\hat{\lambda}$, the right-hand side of these expressions is, of course, a sample (or population) mean. Unfortunately, the estimator for the second (fractional) parameter $\alpha$ does not have a closed form. It must be obtained numerically, in the same way as the shape parameter $k$ of the standard Weibull. From (69), the requirement is to solve

The estimator $\hat{\alpha}$ is then obtained numerically from:

where $\psi^{(0)}$ and $\psi^{(1)}$ are the polygamma functions of order zero and one, respectively. Using (71), $\hat{\lambda}$ can be eliminated from (73).

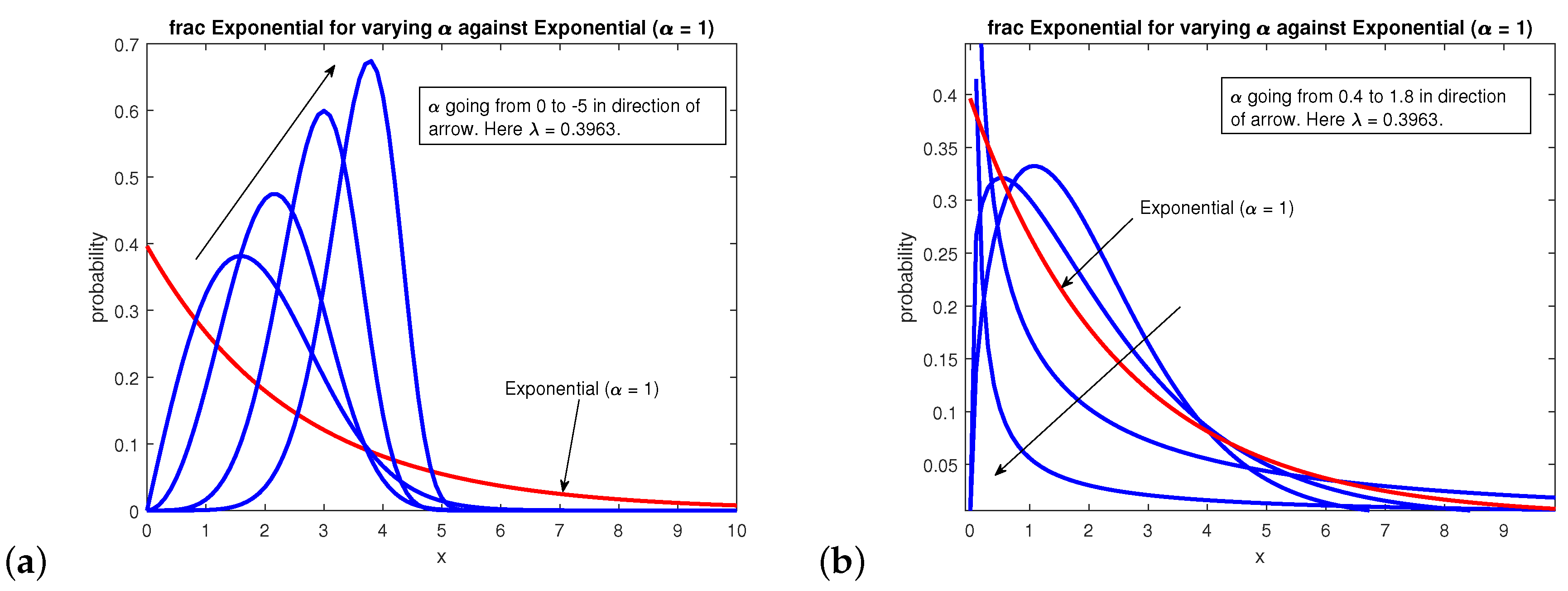

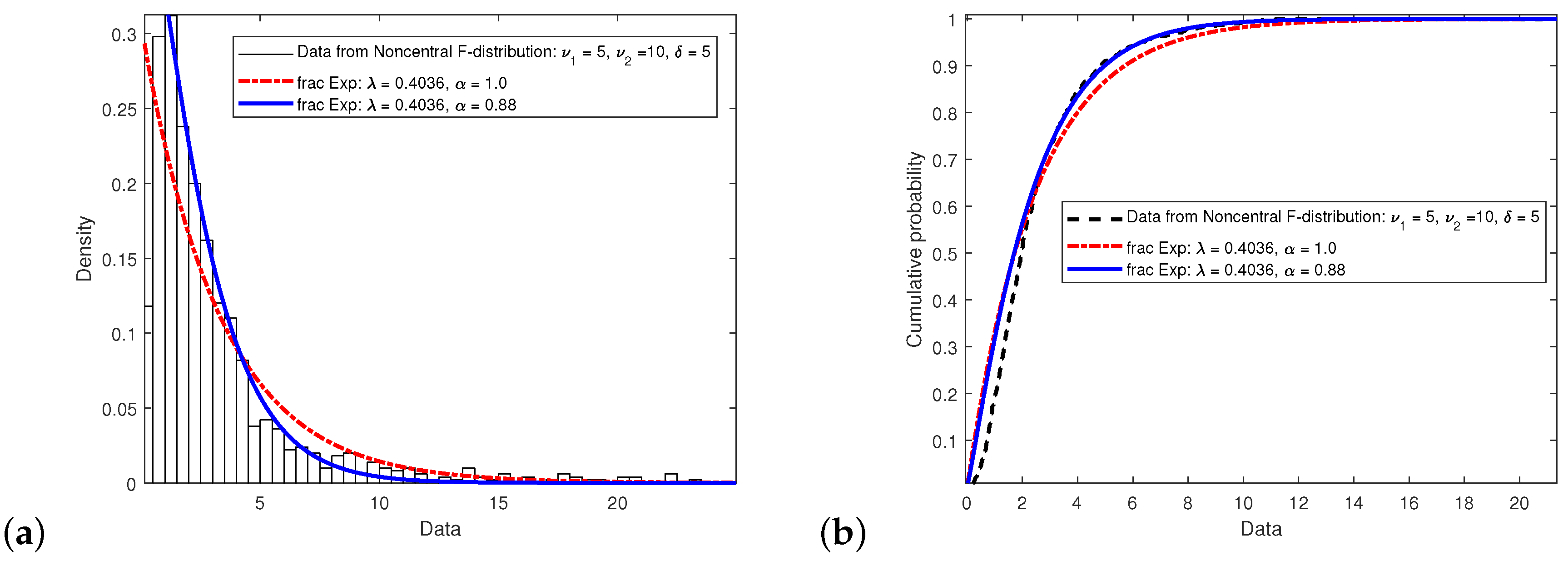

Figure 6 shows plots for different values of

including negative ones. Again this is possible since

and

. The conventional Exponential density is a special case when

, see (

58) in the limit

. Furthermore, the fractional exponential displays mathematical characteristics that are Weibull in nature as well as those of extreme value-type distributions. The standard Exponential and the two-parameter Exponential distributions were tested against random data generated by the non-local F-distribution which has three parameters: two that are the degree of freedom

and

respectively and the locality parameter

. When

, the non-local F-distribution reduces to the F-distribution. The reason why data was chosen from this distribution is because the Exponential with only one parameter

is not expected to fit the three parameter F-data well as

Figure 7 shows. Nevertheless by modifying the Exponential distribution to a two parameter form, it is possible to achieve better fits to the data by varying

while keeping

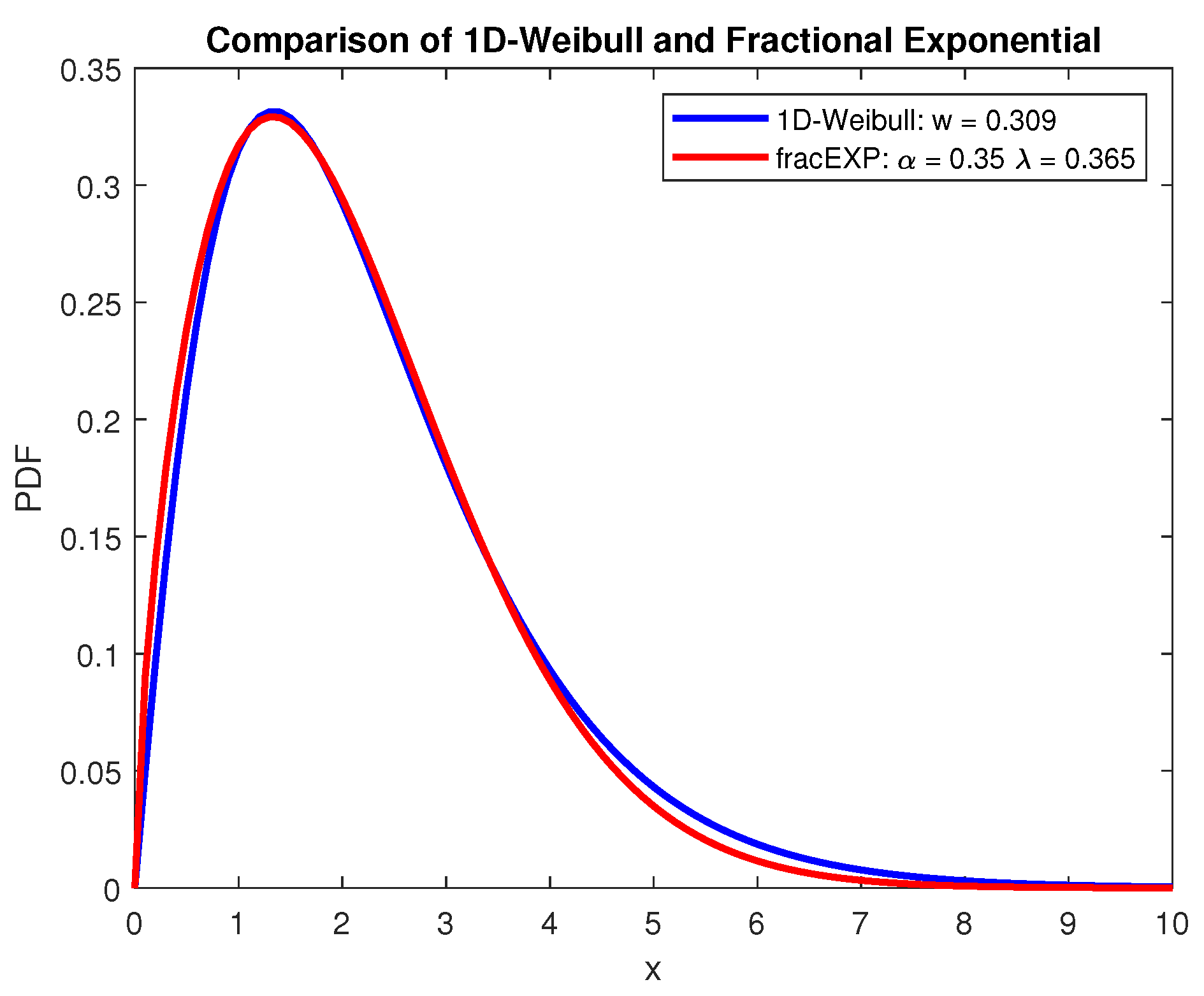

fixed. As a further comparison,

Figure 8 shows the 1D-Weibull and (fractional) two-parameter Exponential densities using non-optimised parameters. What is interesting is that in essence both of these distributions should not have Weibull-type characteristics. This is because the 1D-Weibull has only one parameter while the Exponential has a decaying trend. By modifying the Exponential to two parameters, it not only has the standard Exponential decay characteristics but it also exhibits Weibull and extreme value behaviour too.

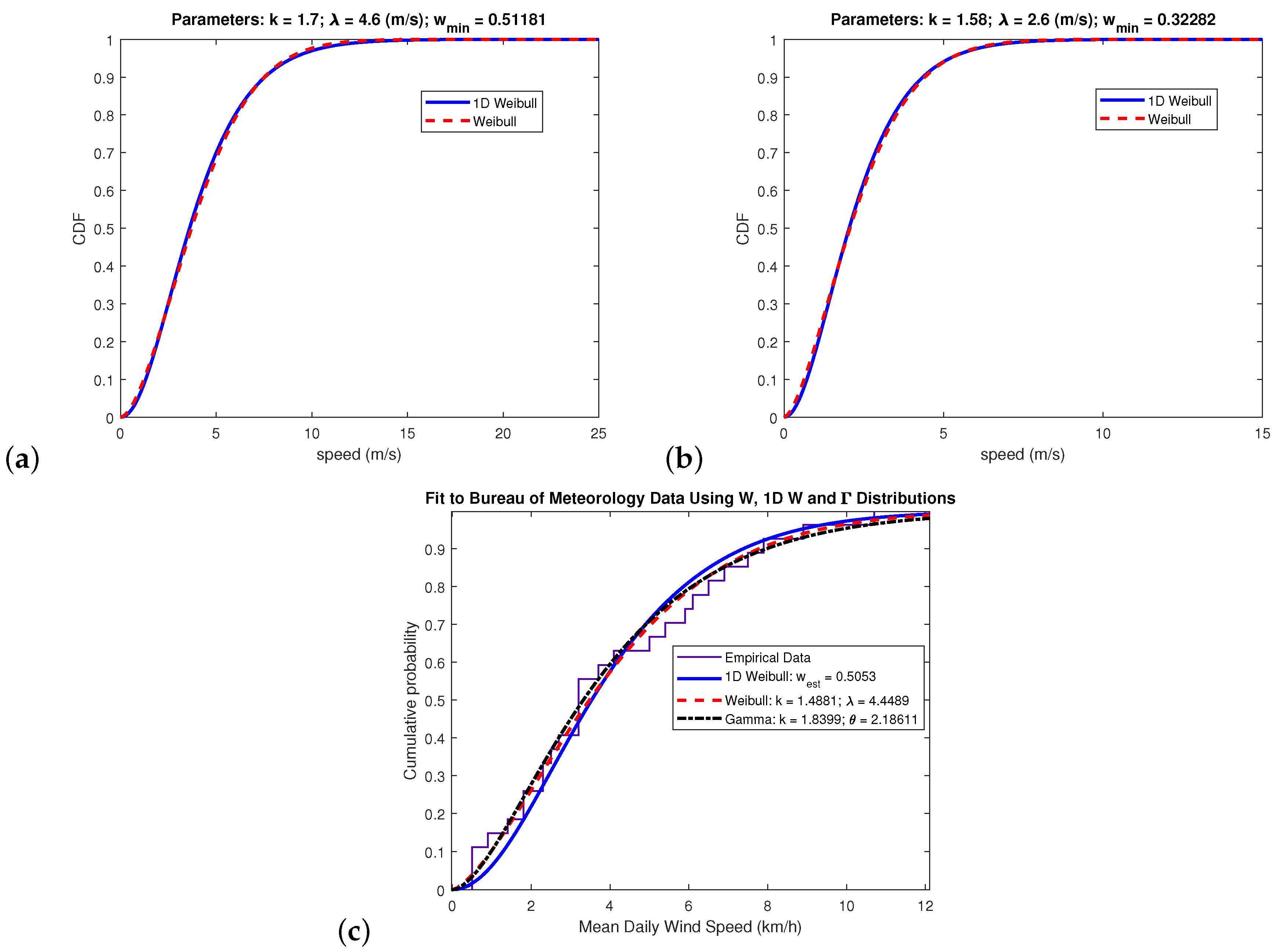

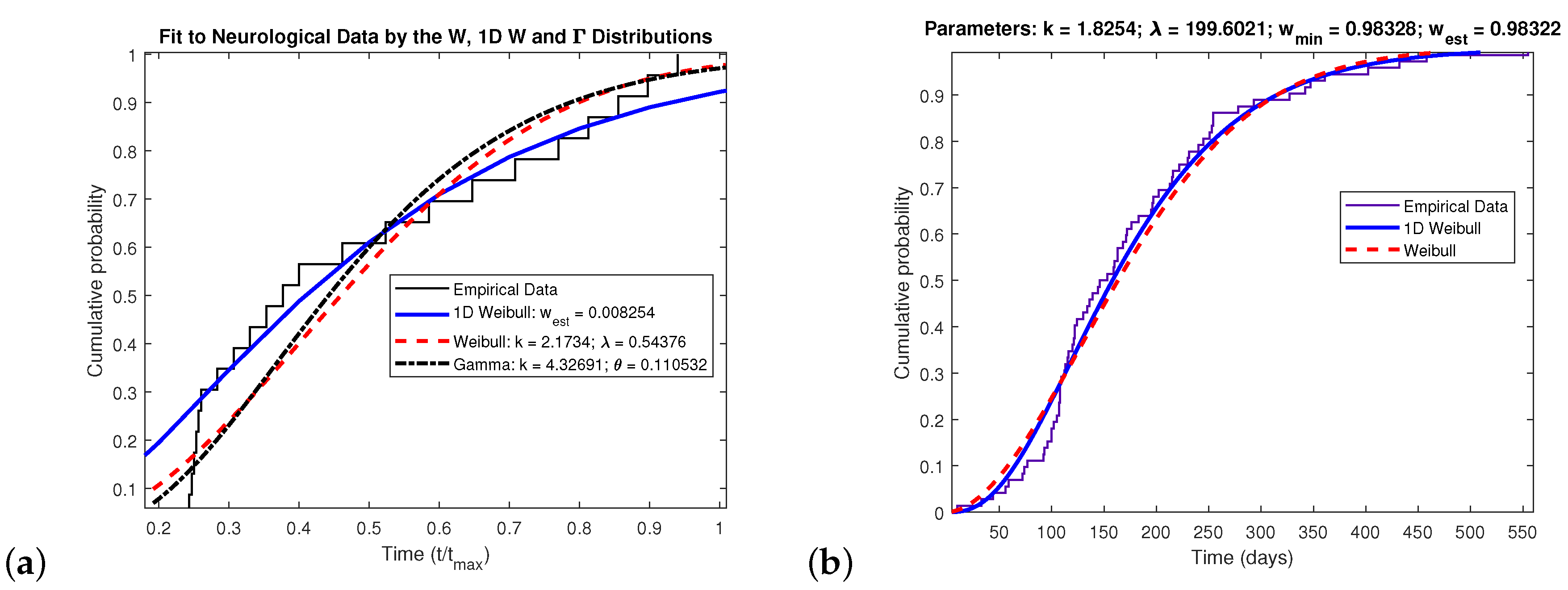

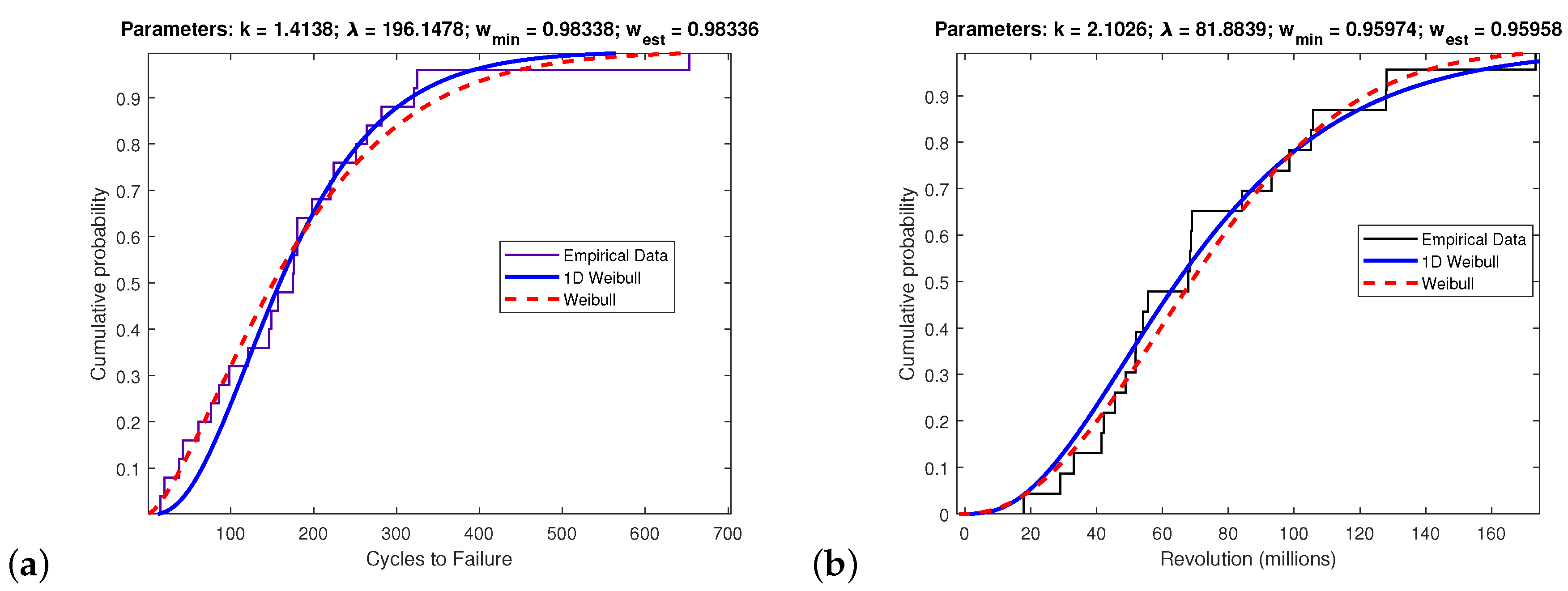

It should be clear from the preceding analysis that the divergence between the 1D-Weibull and conventional Weibull indicates the Weibull parameters for which the 1D-Weibull matches the performance of the Weibull. In other words, regardless of the data being investigated, if the Weibull fits such data, it is also possible to ascertain whether the 1D-Weibull does too. Whenever the divergence is zero or sufficiently small, the two-parameter Weibull can be replaced by the one-parameter 1D-Weibull, which only requires the sample mean to fit such data. In order to examine the performance of the 1D-Weibull, real data taken from different fields of research are tested in this Section.