Detection and Identification of Expansion Joint Gap of Road Bridges by Machine Learning Using Line-Scan Camera Images

Abstract

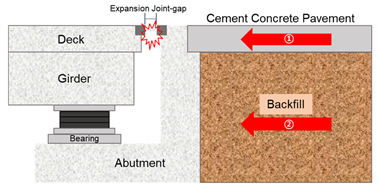

1. Introduction

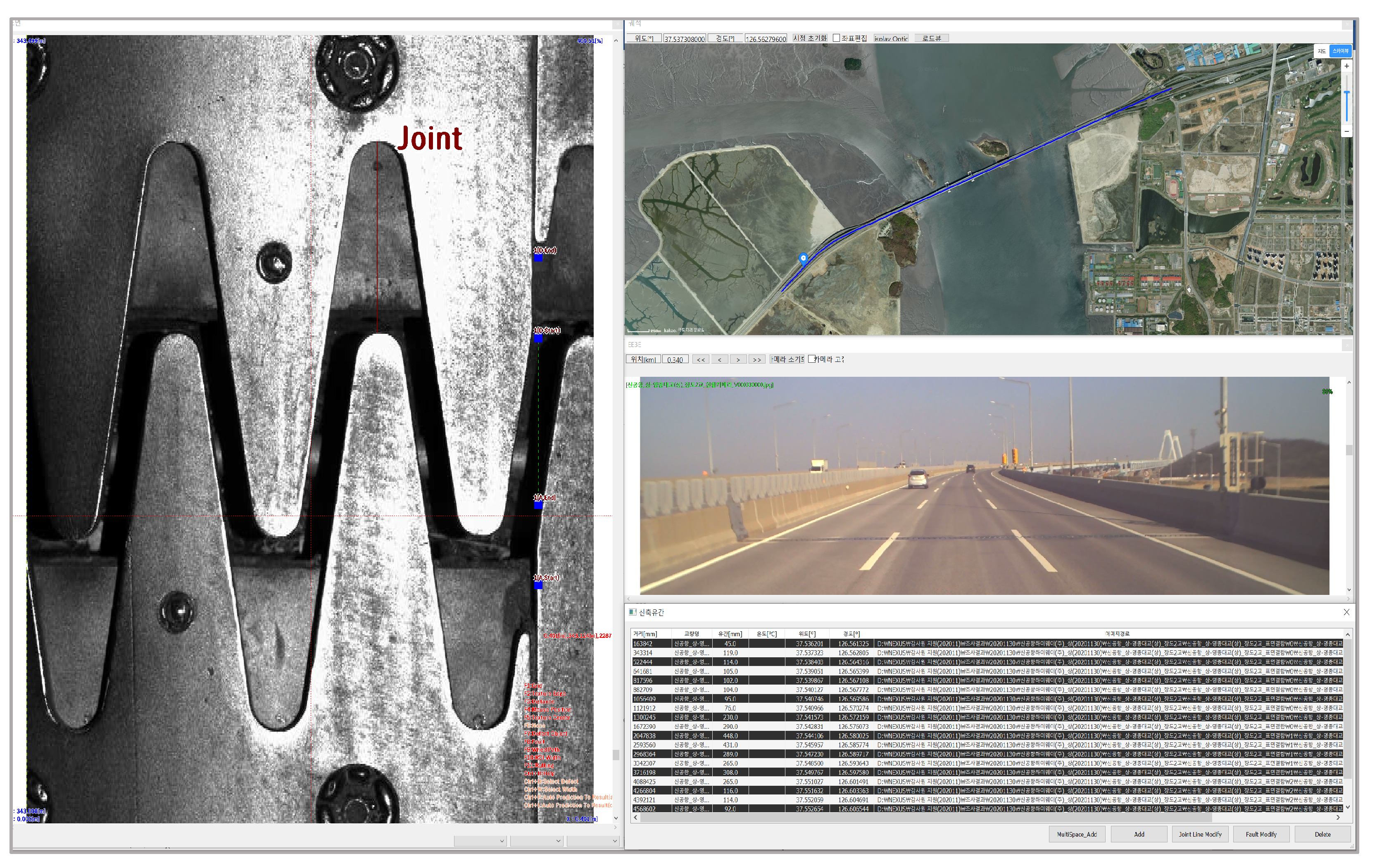

- Section 2 introduces a system that surveys the road surface at high speed (100 km/h) using a line-scan camera and a machine vision (M/V) imaging device, and describes its operating equipment, main functions, and test results.

- Section 3 reviews the adequacy of the initial gap setting (pre-setting) by surveying the expansion joints of newly constructed bridges with the standard computer vision methods used in the initial system.

- Section 4 describes another detection mechanism that uses machine learning.

- Section 5 concludes the paper and proposes future work.

2. Development of Monitoring Technology for Bridge Expansion Joint Using Line-Scan Cameras

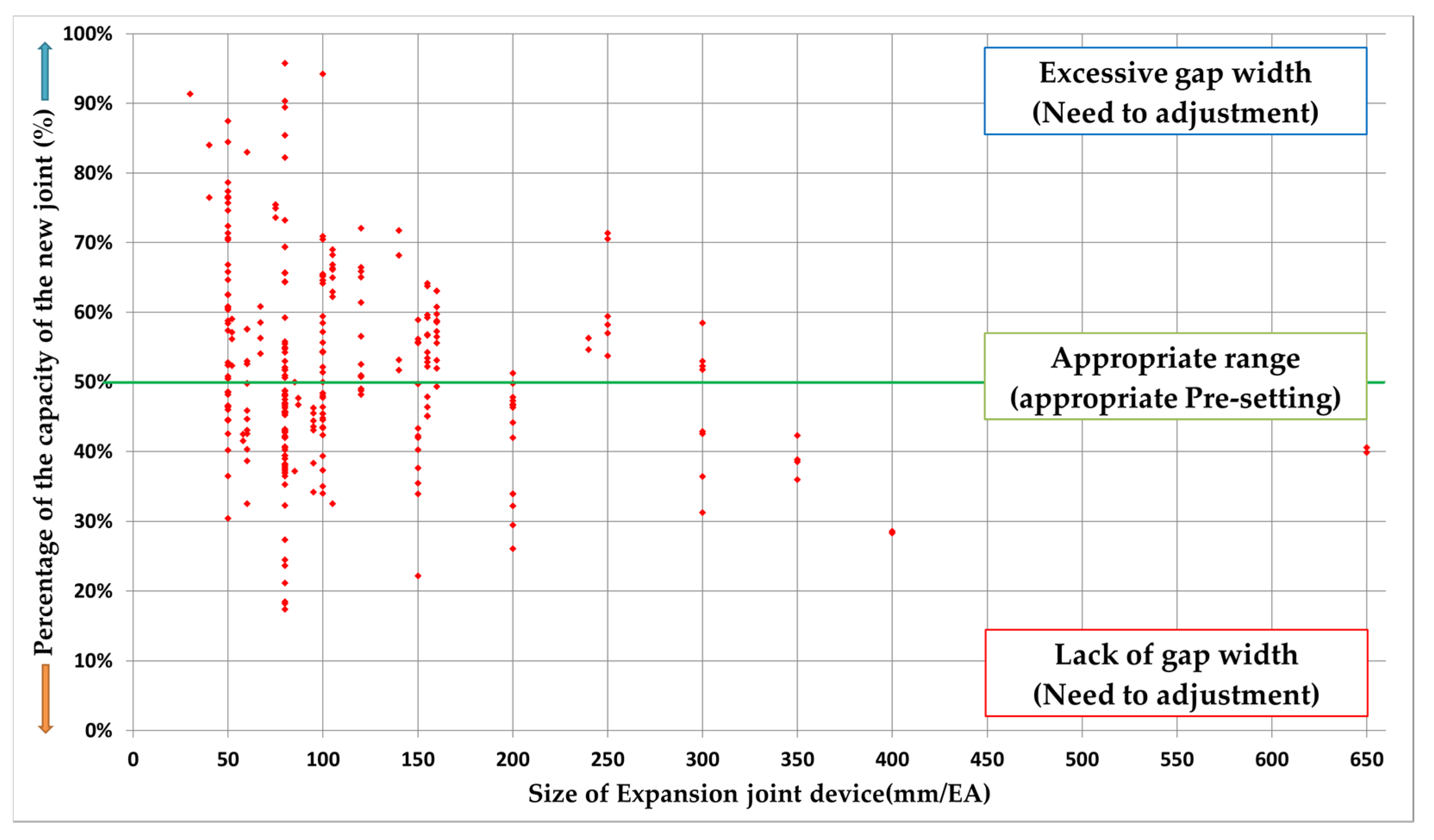

3. Initial Gap Measurement and Evaluation of New Bridge Expansion Joint Device

- (1) After examining the joint gap and the average daily temperature on any day,

- (2) The joint gap, converted to the reference temperature of 15 °C, is expressed as a percentage of the capacity of the new joint.

- (a) If the value is close to 50%, the joint is installed near the middle of its gap range. (For an expansion joint with a capacity of 100 mm, the joint gap is 50 mm at the reference temperature of 15 °C.)

- (b) If the value is near 10%, the joint is installed with a small absolute joint gap. (For an expansion joint with a capacity of 100 mm, the joint gap is 10 mm at the reference temperature of 15 °C, so the joint gap is insufficient in summer.)

- (c) If the value is near 90%, the joint is installed with a large absolute joint gap. (For an expansion joint with a capacity of 100 mm, the joint gap is 90 mm at the reference temperature of 15 °C, so the gap exceeds the capacity in winter.)
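The evaluation described in the list above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' procedure: the temperature conversion uses a plain linear thermal expansion model, and the names `gap_at_reference`, `gap_ratio_percent`, `classify_installation`, the coefficient `alpha_per_c`, the parameter `expansion_length_m`, and the 25%/75% cut-offs are all hypothetical. Only the 15 °C reference temperature and the 10%, 50%, and 90% examples come from the text.

```python
REFERENCE_TEMP_C = 15.0  # reference temperature given in the text


def gap_at_reference(gap_mm, temp_c, expansion_length_m, alpha_per_c=1.0e-5):
    """Convert a measured joint gap to the reference temperature.

    ASSUMPTION: free linear thermal expansion of the deck. The coefficient
    alpha_per_c and the expansion length are illustrative inputs, not
    values taken from the paper.
    """
    # A deck warmer than the reference is longer, so its gap is narrower;
    # cooling it back to the reference widens the gap by alpha * L * dT.
    widening_mm = alpha_per_c * (expansion_length_m * 1000.0) * (temp_c - REFERENCE_TEMP_C)
    return gap_mm + widening_mm


def gap_ratio_percent(gap_ref_mm, capacity_mm):
    """Express the gap at the reference temperature as a percentage of capacity."""
    if capacity_mm <= 0:
        raise ValueError("capacity_mm must be positive")
    return 100.0 * gap_ref_mm / capacity_mm


def classify_installation(ratio_percent, low=25.0, high=75.0):
    """Label the installation; the low/high cut-offs are hypothetical."""
    if ratio_percent < low:
        return "narrow"    # like case (b): gap may be insufficient in summer
    if ratio_percent > high:
        return "wide"      # like case (c): gap may be exceeded in winter
    return "centered"      # like case (a): installed near the middle


if __name__ == "__main__":
    # Hypothetical survey: 40 mm gap measured at 25 °C, 100 m expansion length.
    gap_15 = gap_at_reference(gap_mm=40.0, temp_c=25.0, expansion_length_m=100.0)
    ratio = gap_ratio_percent(gap_15, capacity_mm=100.0)
    print(f"{gap_15:.1f} mm at 15 °C, {ratio:.0f}% of capacity: {classify_installation(ratio)}")
```

With the hypothetical inputs shown, the measured 40 mm gap corresponds to roughly 50 mm at 15 °C, which falls in the "centered" band for a 100 mm joint.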

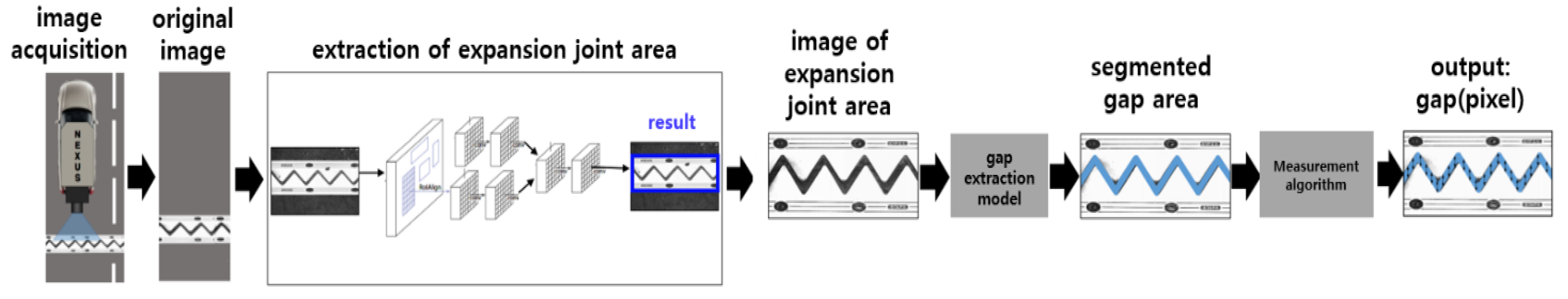

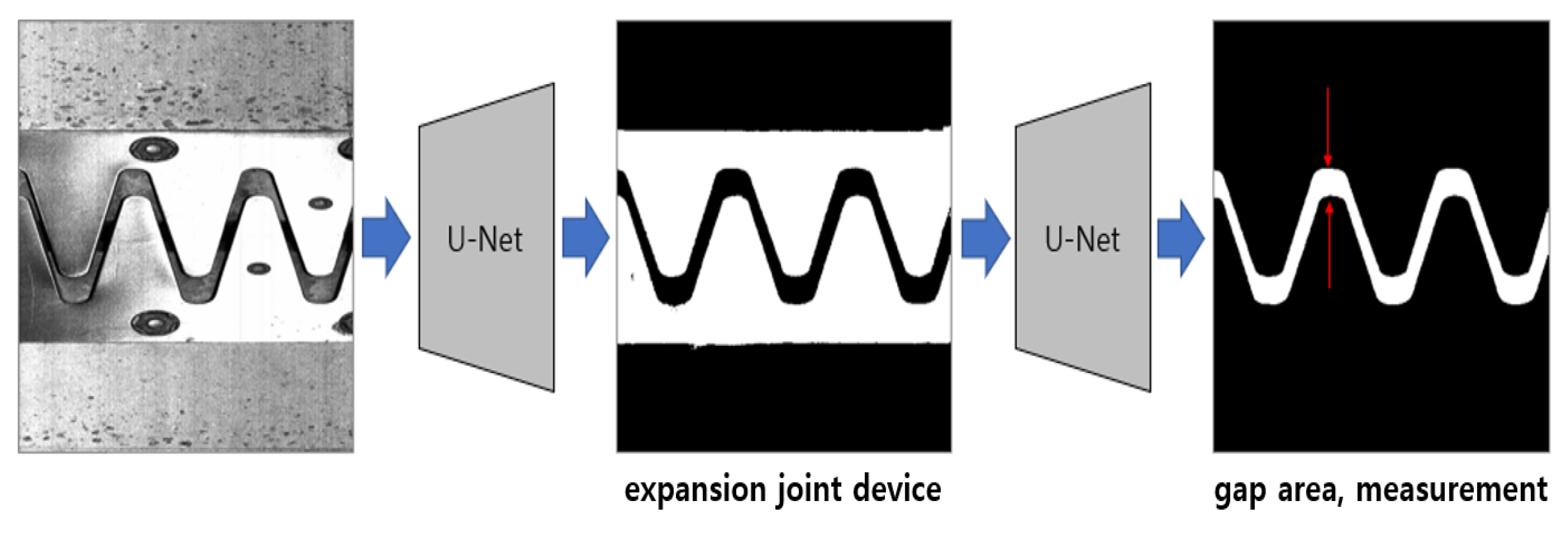

4. Advanced Identification of Expansion Gap Using Machine Learning

4.1. AI-Based Image Analysis

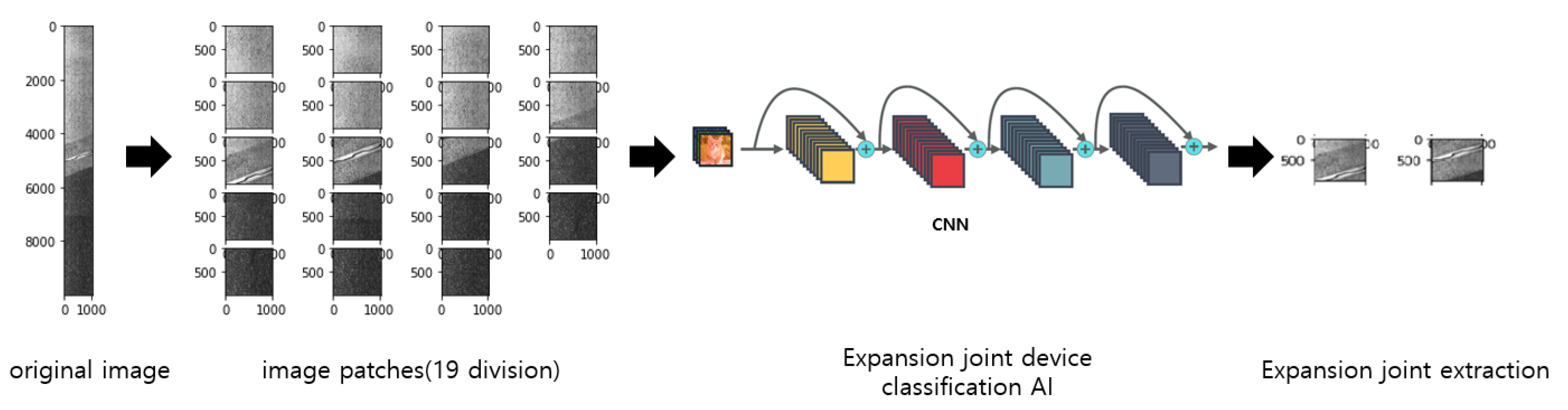

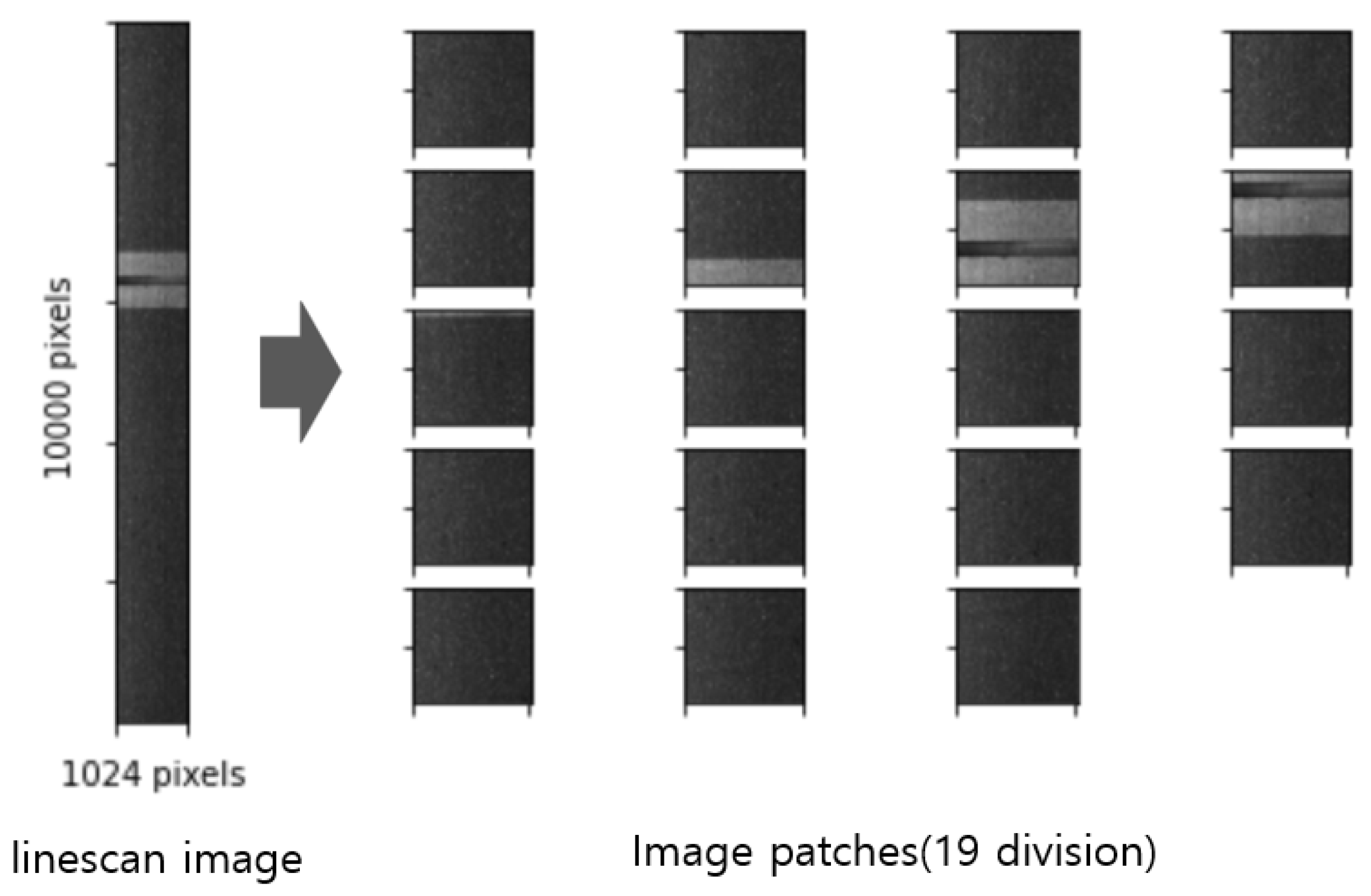

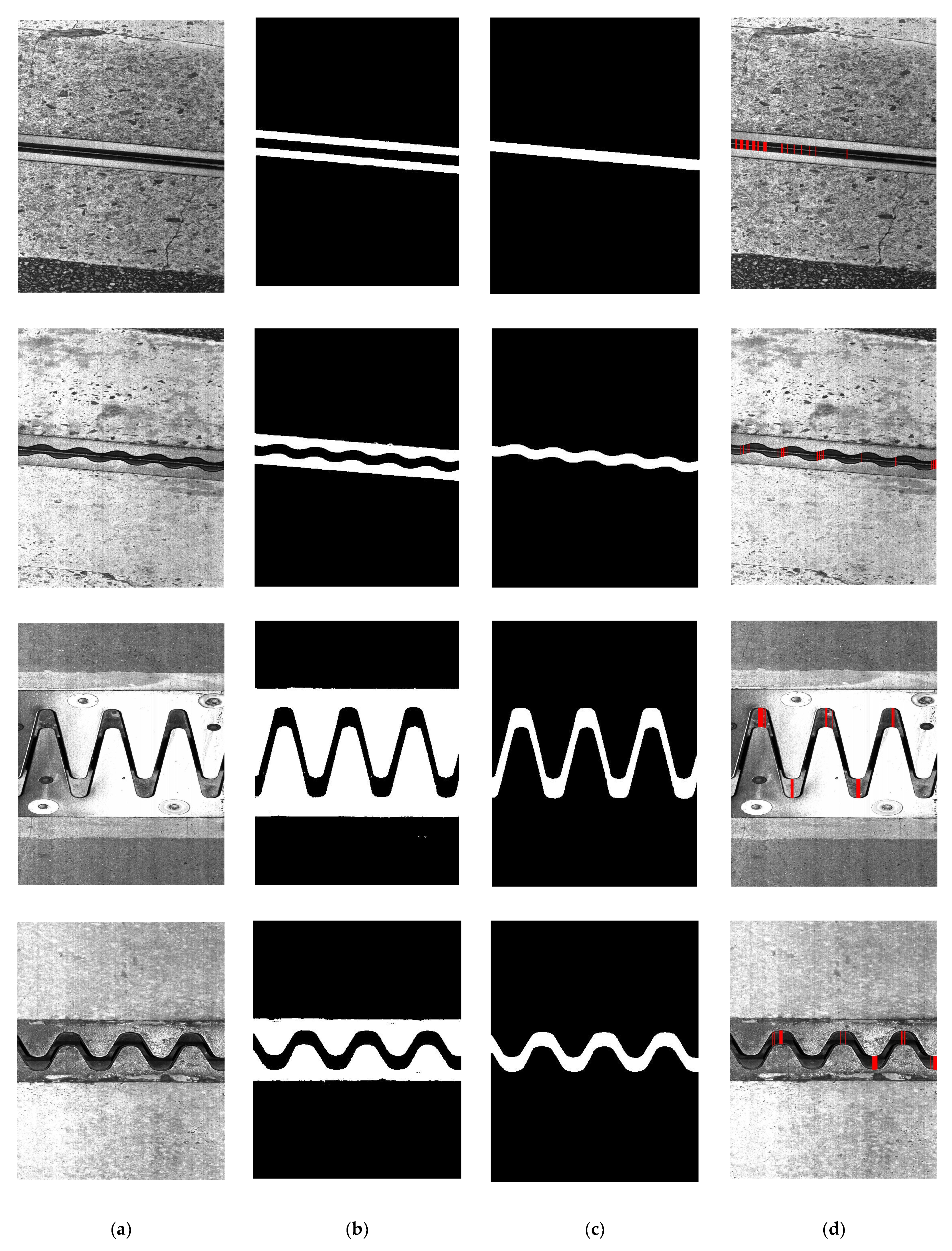

4.2. Expansion Joint Device Recognition

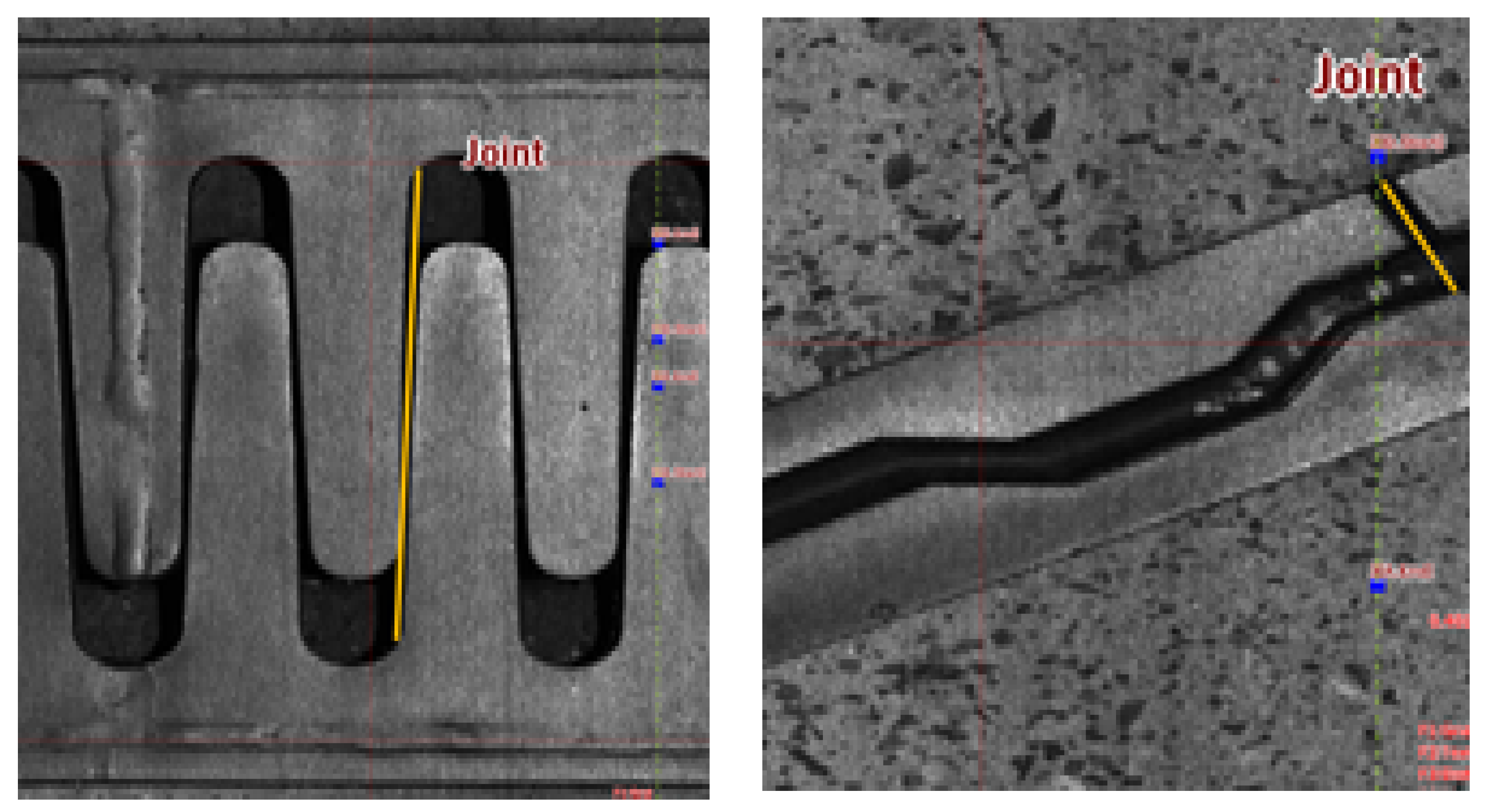

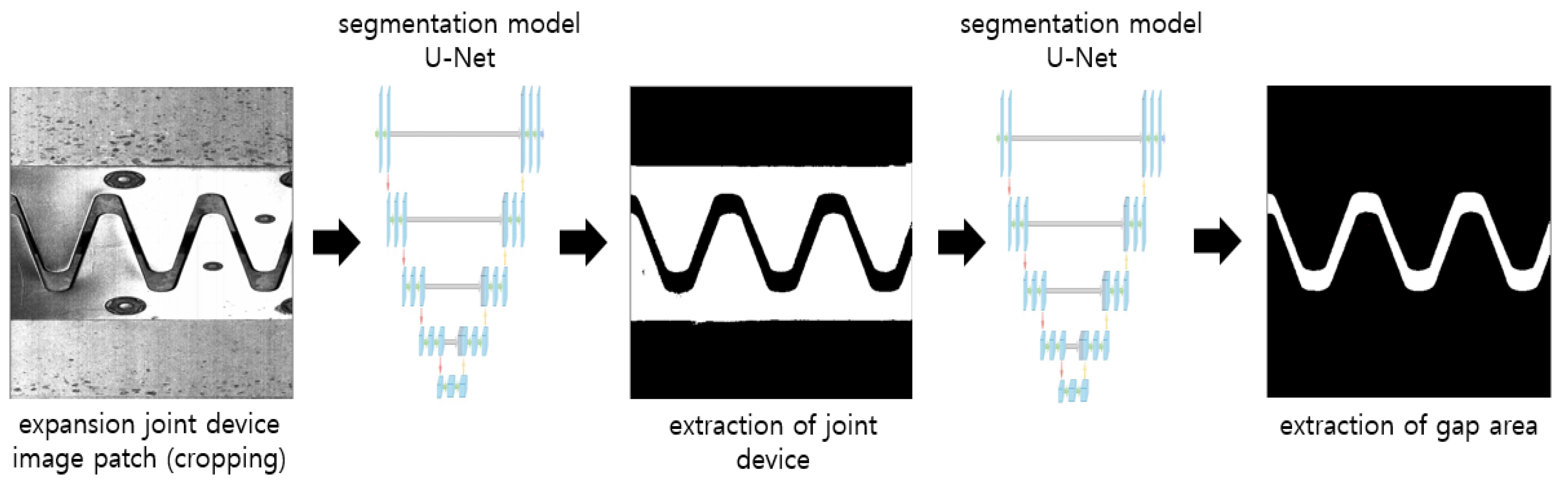

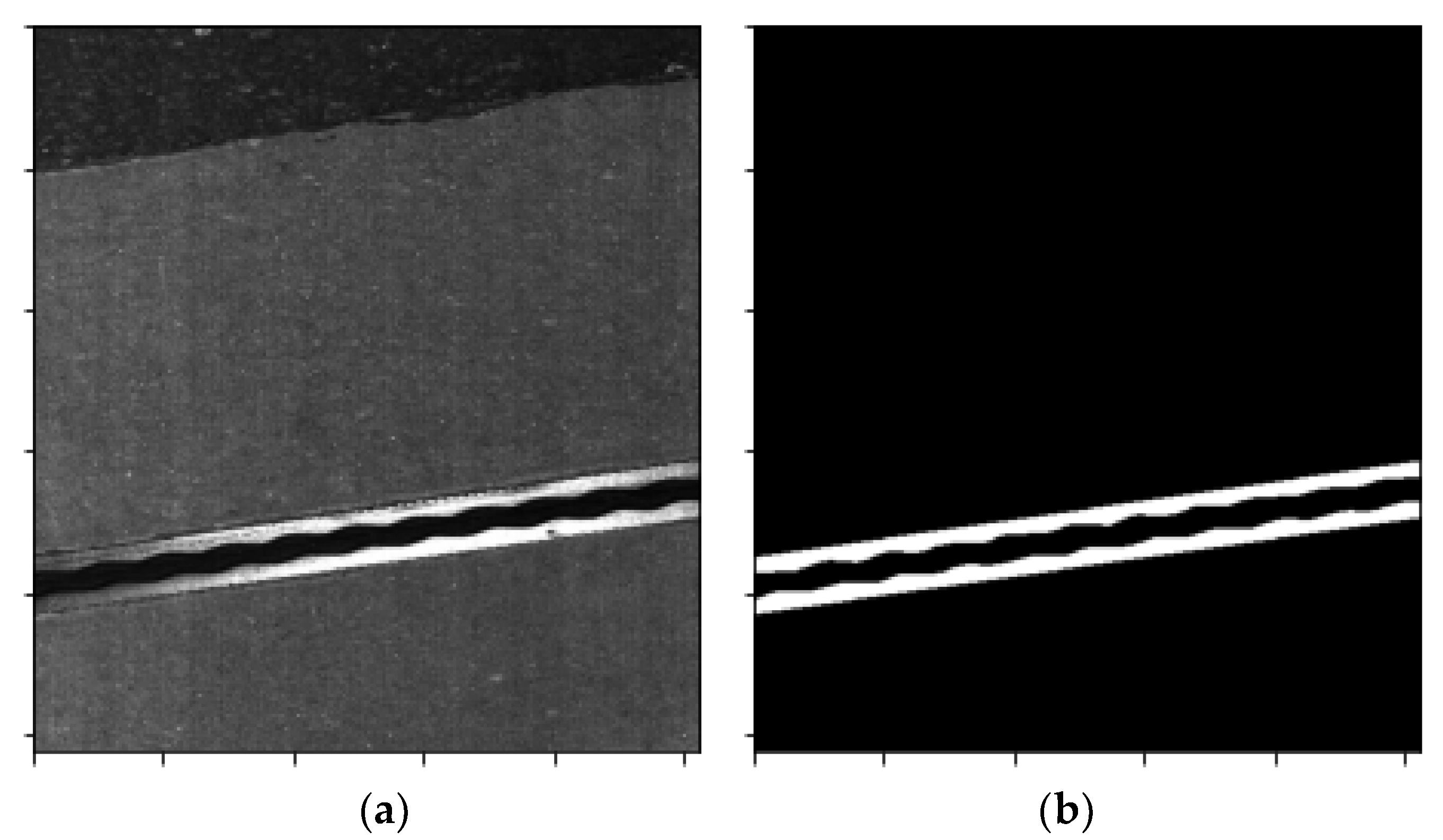

4.3. Expansion Joint Gap Segmentation

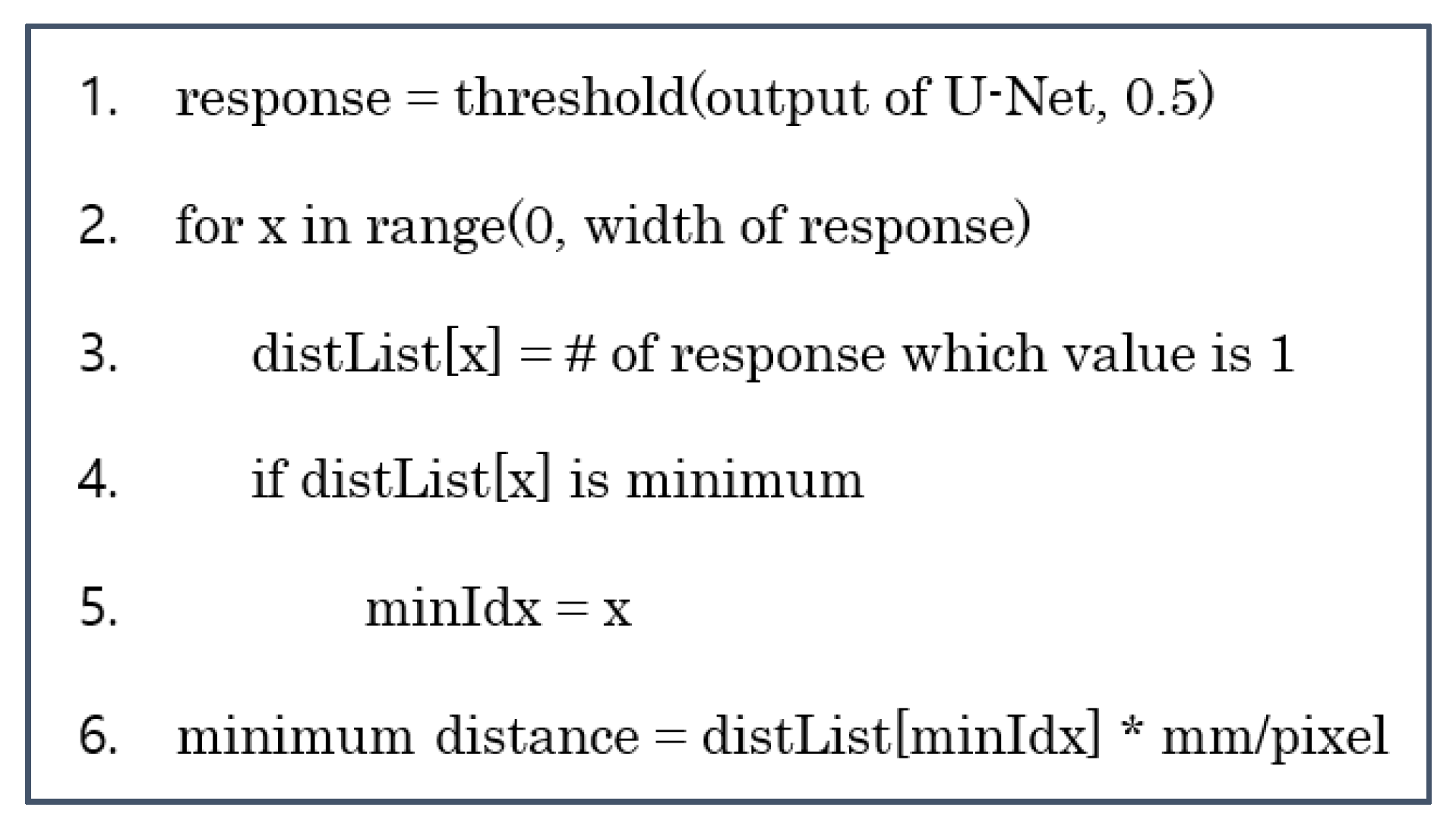

4.4. Gap Distance Analysis Algorithm

4.5. Gap Identification Verification

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Conflicts of Interest

| Year | 2011 | 2012 | 2013 | 2014 | 2015 | 2016 | 2017 | 2018 | Recent Average (2011–2018) | Normal-Year Average (1981–2010) | Difference | Ratio |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Average Temperature (°C) | 24.0 | 24.7 | 25.4 | 23.6 | 23.7 | 24.8 | 24.5 | 25.4 | 24.5 | 23.6 | +0.8 | 3.8% |
| Maximum Temperature (°C) | 36.7 | 38.7 | 39.2 | 37.9 | 38.7 | 39.6 | 39.7 | 41.0 | 38.9 | 37.5 | +1.4 | 3.7% |
| Number of Heatwave Days (days) | 14 | 15 | 18 | 6 | 10 | 22 | 14 | 32 | 14.2 | 9.8 | +4.4 | 45% |

| Sum (Bridges) | Major cause ①: Expansion of cement concrete pavement | Major cause ②: Deformation of backfill |
|---|---|---|
| 276 | 166 (60%) | 110 (40%) |

| Safety Management | Sum | Bridges | Tunnels | Box Culverts |
|---|---|---|---|---|
| Sum (EA) | 27,682 | 15,636 | 2118 | 9928 |
| Regular safety inspection 1 | 25,219 | 13,648 | 1643 | 9928 |
| Precision safety inspection 2 | 2205 | 1783 | 422 | - |
| Precision safety diagnosis | 258 | 205 | 53 | - |

| Division | Total | Rail Type | Steel Finger Type | Mono Cell Type |
|---|---|---|---|---|
| Total | 302 places (100%) | 169 places (56%) | 128 places (42%) | 5 places (2%) |
| Lane A | 171 places (100%) | 98 places (57%) | 71 places (42%) | 2 places (1%) |
| Lane B | 131 places (100%) | 71 places (54%) | 57 places (44%) | 3 places (2%) |

| Installation | Sum | Mono Cell Type | Finger Type | Rail Type | Others |
|---|---|---|---|---|---|
| EA | 14,784 | 7793 | 1786 | 4228 | 977 |
| Proportion (%) | 100 | 53 | 12 | 29 | 7 |

| Discrimination (%) | Average (%) | Mono Cell Type | Finger Type | Rail Type | Others |
|---|---|---|---|---|---|
| Accuracy | 67.5 | 71 | 51 | 87 | 61 |
| Loss | 32.5 | 29 | 49 | 13 | 39 |

| Accuracy by Type of Expansion Joint (%) | Precision | Recall | F1-Score |
|---|---|---|---|
| Positive (pixels of expansion joints) | 96.61 | 94.38 | 95.49 |
| Negative (other pixels) | 99.23 | 99.55 | 99.39 |
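As a consistency check on the segmentation table above, the F1-score can be recomputed from the reported precision and recall using the standard definition (the harmonic mean of the two). The sketch below is illustrative only; the function name `f1_score` and the dictionary layout are assumptions, and the inputs are the rounded percentages from the table.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (same units as the inputs)."""
    if precision + recall == 0:
        return 0.0
    return 2.0 * precision * recall / (precision + recall)


# Precision and recall (%) as reported in the table above.
reported = {
    "positive (expansion joint pixels)": (96.61, 94.38),
    "negative (other pixels)": (99.23, 99.55),
}

for label, (precision, recall) in reported.items():
    print(f"{label}: F1 = {f1_score(precision, recall):.2f}%")
```

Because the inputs are already rounded to two decimals, the recomputed values agree with the tabulated F1-scores only to within about 0.01 percentage points.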

| Accuracy (%) | Average | Mono Cell Type | Finger Type | Rail Type | Others |
|---|---|---|---|---|---|
| Conventional algorithm | 67.5 | 71 | 51 | 87 | 61 |
| AI algorithm (machine learning) | 95 | 98 | 92 | 99 | 91 |
| Improvement (percentage points) | ↑27.5 | ↑27 | ↑41 | ↑12 | ↑30 |
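The improvement row of the comparison table above is the plain difference between the two accuracy rows, in percentage points, and the "Average" column is the unweighted mean of the four joint types. A short sketch that reproduces this arithmetic follows; the variable and function names are illustrative, and the numbers are copied from the table.

```python
# Accuracy (%) per joint type, copied from the comparison table above.
conventional = {"mono_cell": 71, "finger": 51, "rail": 87, "others": 61}
machine_learning = {"mono_cell": 98, "finger": 92, "rail": 99, "others": 91}


def mean(values):
    """Unweighted arithmetic mean."""
    values = list(values)
    return sum(values) / len(values)


# Improvement in percentage points for each joint type.
improvement = {k: machine_learning[k] - conventional[k] for k in conventional}

avg_conventional = mean(conventional.values())
avg_ml = mean(machine_learning.values())

print("per-type improvement (points):", improvement)
print("average accuracy:", avg_conventional, "->", avg_ml)
print("average improvement (points):", avg_ml - avg_conventional)
```

Note that the averages are unweighted; weighting by the number of installed joints of each type would give a different overall figure.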

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Kim, I.B.; Cho, J.S.; Zi, G.S.; Cho, B.S.; Lee, S.M.; Kim, H.U. Detection and Identification of Expansion Joint Gap of Road Bridges by Machine Learning Using Line-Scan Camera Images. Appl. Syst. Innov. 2021, 4, 94. https://doi.org/10.3390/asi4040094
