Evidence-Based Regularization for Neural Networks

Abstract

1. Introduction

- Regularization of the training data: This form of regularization involves augmenting the data fed to the layers of a neural network or introducing transformations that expand the observed set of training samples. The most popular methods in this category are batch normalization [3] and layer normalization [4]. A related form of regularization is the addition of randomness to the data or the labels. Popular methods here include adding Gaussian noise to the input data [5,6], label smoothing [7], and dropout [8,9];

- Regularization via the network architecture: The network topology is chosen to match certain assumptions about the data-generating process. Such regularization restricts the search space of models or introduces certain invariances. Popular methods in this category include limiting the size (breadth or depth) of the network and choosing the number of convolutional or pooling layers [10,11] or of skip connections [12,13];

- Regularization term added to the loss function: This is a term, independent of the targets, that modifies the loss, and thereby the gradient step, in a prescribed way. Common approaches are weight decay, which adds a norm of the weights to the loss (typically the L1 norm, the squared L2 norm, or the sum of the two) [14], and weight smoothing, which adds the norm of the gradients [14];

- Regularization via the optimization procedure: The final form of regularization comes from the optimization procedure itself or, more precisely, from the interplay between the loss function, the regularization terms, and the optimization iterations. The most common forms of regularization in this category are mini-batch optimization [18], early stopping [19], the choice of weight initialization [20,21], learning-rate scheduling [22], and specific update rules such as Momentum, RMSProp, or Adam [23].
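To make the third category concrete, here is a minimal sketch in plain Python of how a target-independent penalty changes the gradient step. The helper names `l1_penalty`, `l2_penalty`, and `sgd_step` are hypothetical and the code is an illustration of weight decay in general, not code from this paper. For the L2 case the penalty λ‖w‖² contributes 2λw to the gradient, so a step with learning rate η becomes w ← (1 - 2ηλ)·w - η·∇L_data(w): the weights are shrunk toward zero before the usual data-driven update.

```python
def l1_penalty(weights, lam):
    """L1 term lam * ||w||_1; pushes small weights towards exactly zero."""
    return lam * sum(abs(w) for w in weights)


def l2_penalty(weights, lam):
    """Squared-L2 weight-decay term lam * ||w||^2, added to the data loss."""
    return lam * sum(w * w for w in weights)


def sgd_step(weights, data_grad, lr, lam):
    """One gradient step on data_loss + l2_penalty.

    The penalty adds 2 * lam * w to each gradient component, which is
    equivalent to scaling w by (1 - 2 * lr * lam) before the data update.
    """
    return [w - lr * (g + 2.0 * lam * w) for w, g in zip(weights, data_grad)]


weights = [1.0, -2.0, 0.5]
no_data_grad = [0.0, 0.0, 0.0]  # isolates the effect of the penalty
# With lr=0.5 and lam=0.1 every weight is scaled by (1 - 2 * 0.5 * 0.1) = 0.9.
print(sgd_step(weights, no_data_grad, lr=0.5, lam=0.1))
```

Because the penalty depends only on the weights, it acts identically on every mini-batch, which is what distinguishes this category from noise-based data regularization.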

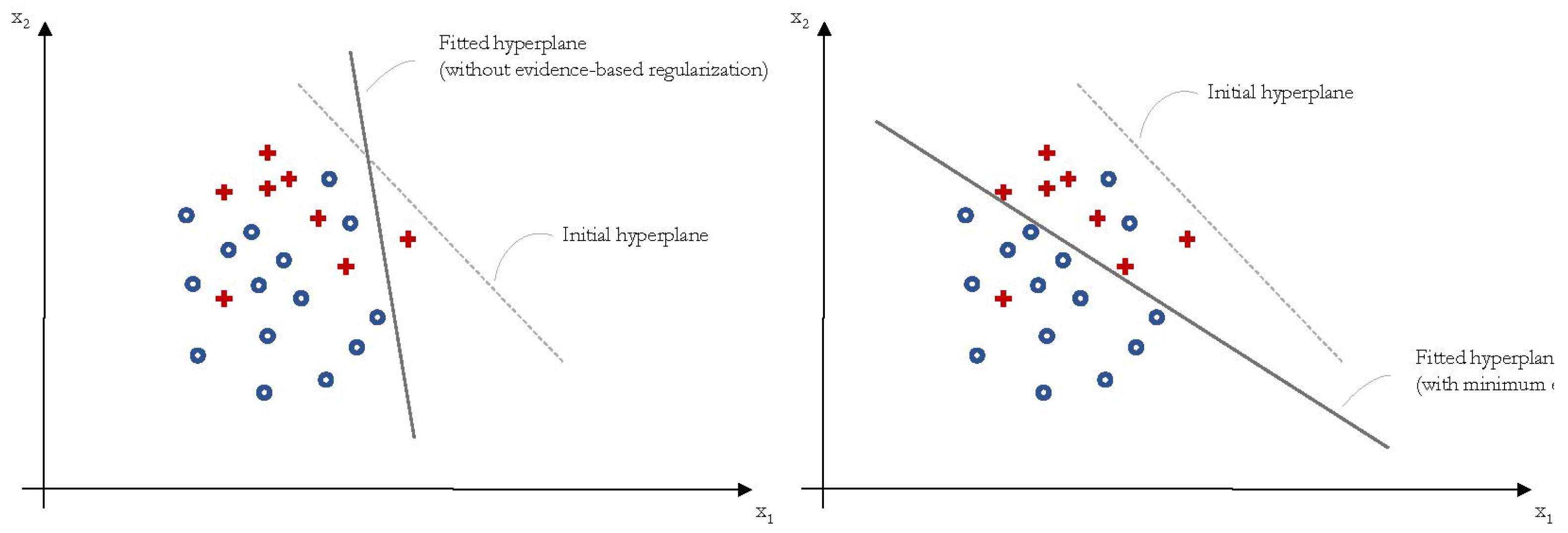

2. Evidence-Based Regularization

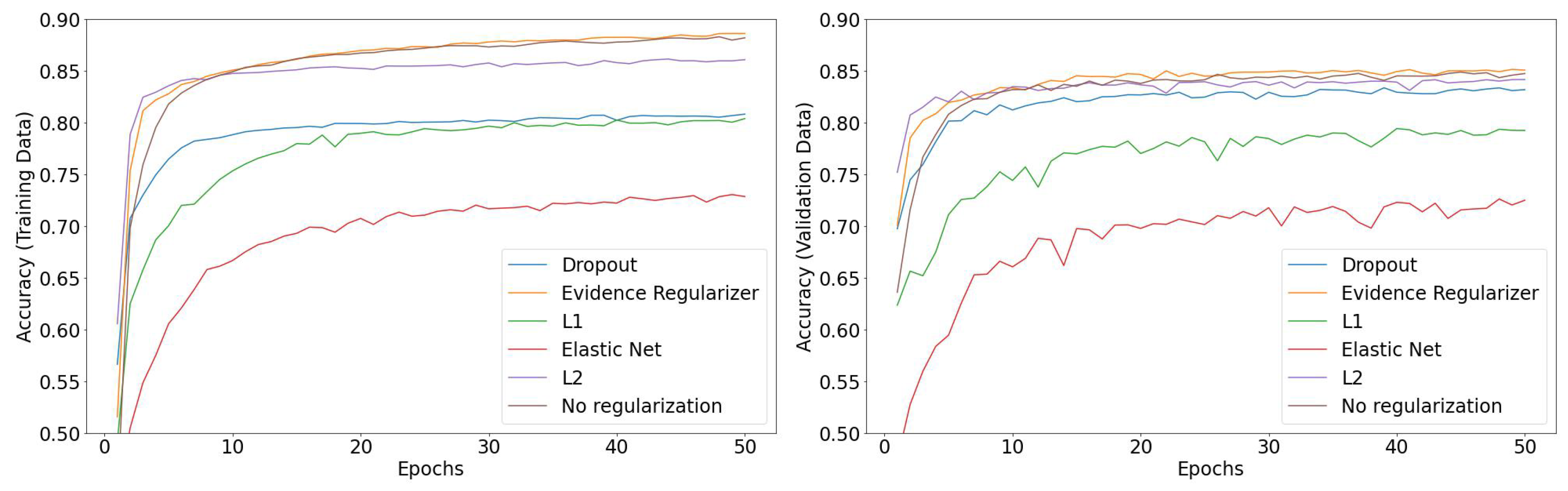

3. Empirical Results

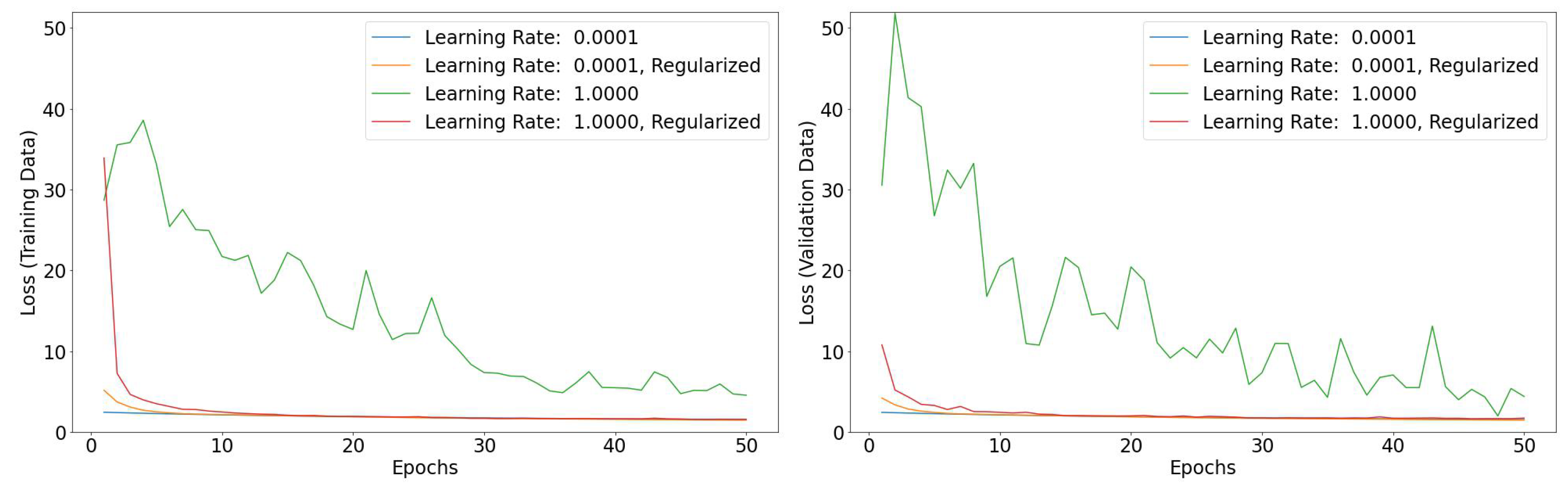

3.1. Large Learning Rates

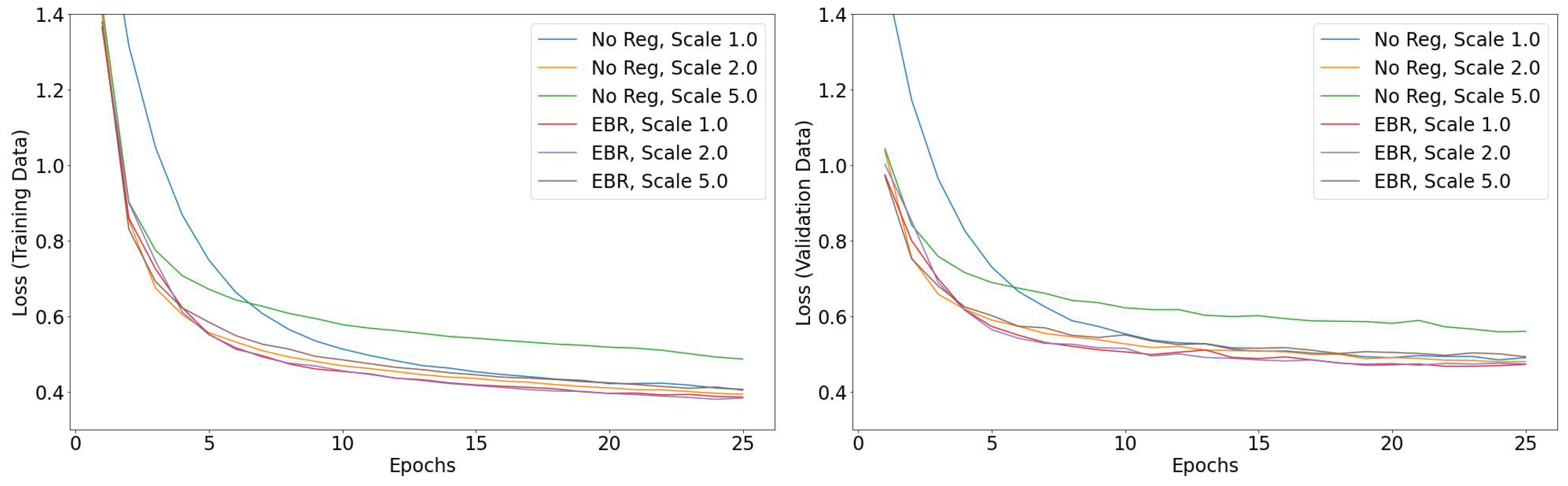

3.2. Preventing Vanishing and Exploding Gradients

3.3. Training Deeper Networks

3.4. CIFAR10 Results

4. Concluding Remarks

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| EBR | Evidence-Based Regularization |
| MNIST | Modified National Institute of Standards and Technology |
| ReLU | Rectified Linear Unit |

Appendix A. TensorFlow Implementation

```python
import tensorflow as tf


class EvidenceRegularizerLayer(tf.keras.layers.Layer):
    """Identity layer that adds the evidence-based regularization loss for its inputs.

    Written against the tf.keras 2.x Layer API (add_loss, add_metric).
    """

    def __init__(self, threshold: float, cutoff: float, **kwargs):
        super().__init__(**kwargs)
        self.threshold = threshold
        self.cutoff = cutoff

    def get_config(self):
        config = super().get_config()
        config.update(threshold=self.threshold, cutoff=self.cutoff)
        return config

    def call(self, inputs):
        # Per-node evidence summed over the batch (axis 0) on either side of the cutoff.
        positive_activation = tf.reduce_sum(tf.maximum(inputs, self.cutoff), axis=0)
        negative_activation = tf.reduce_sum(tf.maximum(-inputs, self.cutoff), axis=0)
        # A node's activation is the weaker of its positive and negative evidence.
        node_activation = tf.minimum(positive_activation, negative_activation)
        # Non-positive shortfall below the threshold (zero for nodes above it).
        regularization = tf.minimum(node_activation - self.threshold, 0.0)
        batch_size = tf.cast(tf.shape(inputs)[0], dtype=tf.float32)
        self.add_loss(-tf.reduce_sum(regularization) / batch_size)

        # Diagnostics, suffixed with the trailing part of the layer name (e.g. its index).
        suffix = self.name.split("_")[-1]
        self.add_metric(
            tf.reduce_min(positive_activation),
            name=f"minimal_positive_activation_{suffix}",
            aggregation="mean",
        )
        self.add_metric(
            tf.reduce_min(negative_activation),
            name=f"minimal_negative_activation_{suffix}",
            aggregation="mean",
        )
        self.add_metric(
            tf.math.count_nonzero(
                tf.math.less(node_activation, self.threshold), dtype=tf.float32
            ),
            name=f"number_of_nodes_below_threshold_{suffix}",
            aggregation="mean",
        )
        # The inputs pass through unchanged; the layer only contributes loss and metrics.
        return inputs


class EvidenceRegularizer(tf.keras.regularizers.Regularizer):
    """Regularizer form of the same penalty, without the division by the batch size."""

    def __init__(self, threshold: float, cutoff: float):
        self.threshold = threshold
        self.cutoff = cutoff

    def get_config(self):
        return dict(threshold=self.threshold, cutoff=self.cutoff)

    def __call__(self, x):
        positive_activation = tf.reduce_sum(tf.maximum(x, self.cutoff), axis=0)
        negative_activation = tf.reduce_sum(tf.maximum(-x, self.cutoff), axis=0)
        node_activation = tf.minimum(positive_activation, negative_activation)
        regularization = tf.minimum(node_activation - self.threshold, 0.0)
        return -tf.reduce_sum(regularization)
```
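Because the penalty is built from a handful of tensor reductions, it can be checked by hand. The following sketch, in plain Python with a hypothetical helper `evidence_penalty` that is not part of the paper's code, mirrors the `call` method of `EvidenceRegularizerLayer` for a batch given as a list of rows (one row per sample, one entry per node): for each node it sums max(x, cutoff) and max(-x, cutoff) over the batch, keeps the smaller sum as the node's activation, adds max(threshold - activation, 0) to the penalty, and divides the total by the batch size. `EvidenceRegularizer.__call__` computes the same sum without that final division.

```python
def evidence_penalty(batch, threshold, cutoff):
    """Plain-Python mirror of EvidenceRegularizerLayer's loss (illustrative only).

    batch: non-empty list of rows, one per sample; each row holds one value per node.
    Returns (penalty, number_of_nodes_below_threshold).
    """
    batch_size = len(batch)
    num_nodes = len(batch[0])
    penalty = 0.0
    below = 0
    for j in range(num_nodes):
        column = [row[j] for row in batch]
        # Evidence on each side of the cutoff, summed over the batch (axis 0 in the TF code).
        positive = sum(max(x, cutoff) for x in column)
        negative = sum(max(-x, cutoff) for x in column)
        node_activation = min(positive, negative)
        # -min(a - t, 0) == max(t - a, 0): only the shortfall below the threshold counts.
        penalty += max(threshold - node_activation, 0.0)
        if node_activation < threshold:
            below += 1
    return penalty / batch_size, below


# Node 0 sees 1 and -1 (evidence 1 on each side); node 1 sees 2 and 3 (no negative evidence).
penalty, below = evidence_penalty([[1.0, 2.0], [-1.0, 3.0]], threshold=0.5, cutoff=0.0)
print(penalty, below)  # prints 0.25 1
```

In this example, the node that only ever receives positive inputs has no negative evidence, so it alone contributes 0.5 / 2 = 0.25 to the loss and is counted as below the threshold.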

Appendix B. Seeds for Reproducibility

9218, 2059, 6670, 3606, 2077, 7205, 7736, 1593, 9788, 2621, 5193, 4173,

6735, 3156, 7767, 1635, 8803, 9828, 619, 2155, 7277, 5234, 5240, 6778,

651, 4750, 8847, 5263, 4248, 8345, 6813, 7844, 9382, 3260, 5822, 4306,

5845, 4311, 4825, 9433, 7392, 7403, 8433, 8951, 5880, 3321, 9983, 3845,

1294, 2832, 7448, 8984, 5406, 8991, 801, 4402, 5431, 3387, 1345, 9544,

5450, 4938, 1868, 3409, 4948, 5467, 349, 9060, 2917, 2923, 8562, 6520,

1408, 4481, 9093, 392, 7048, 1419, 8076, 3982, 8594, 5524, 2969, 410,

8602, 2971, 945, 8116, 8628, 9659, 6078, 1472, 961, 9160, 5068, 7632,

2007, 6634, 2546, 1019

Appendix C. Digit MNIST Experiments for Two Narrow Neural Networks (Widths 10 and 20) Up to Depth 35

Appendix D. Detailed Specifications

References

- Goodfellow, I.J.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016; Available online: http://www.deeplearningbook.org (accessed on 25 February 2022).

- Kukačka, J.; Golkov, V.; Cremers, D. Regularization for Deep Learning: A Taxonomy. arXiv 2017, arXiv:1710.10686.

- Ioffe, S.; Szegedy, C. Batch normalization: Accelerating deep network training by reducing internal covariate shift. In Proceedings of the 32nd International Conference on Machine Learning, Volume 37, July 2015; PMLR: Lille, France, 2015; pp. 448–456.

- Ba, J.L.; Kiros, J.R.; Hinton, G.E. Layer normalization. arXiv 2016, arXiv:1607.06450.

- Bishop, C.M. Neural Networks for Pattern Recognition; Oxford University Press: Oxford, UK, 1995.

- DeVries, T.; Taylor, G.W. Dataset augmentation in feature space. arXiv 2017, arXiv:1702.05538.

- Szegedy, C.; Vanhoucke, V.; Ioffe, S.; Shlens, J.; Wojna, Z. Rethinking the inception architecture for computer vision. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 2818–2826.

- Hinton, G.E.; Srivastava, N.; Krizhevsky, A.; Sutskever, I.; Salakhutdinov, R. Improving neural networks by preventing co-adaptation of feature detectors. arXiv 2012, arXiv:1207.0580.

- Srivastava, N.; Hinton, G.; Krizhevsky, A.; Sutskever, I.; Salakhutdinov, R. Dropout: A Simple Way to Prevent Neural Networks from Overfitting. J. Mach. Learn. Res. 2014, 15, 1929–1958.

- Fukushima, K.; Miyake, S. Neocognitron: A self-organizing neural network model for a mechanism of visual pattern recognition. In Competition and Cooperation in Neural Nets; Springer: Berlin/Heidelberg, Germany, 1982; pp. 267–285.

- Rumelhart, D.E.; McClelland, J.L.; PDP Research Group. Parallel distributed processing: Explorations in the microstructure of cognition. Volume 1: Foundations. In Parallel Distributed Processing; MIT Press: Cambridge, MA, USA, 1988; Volume 1.

- Long, J.; Shelhamer, E.; Darrell, T. Fully convolutional networks for semantic segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 3431–3440.

- Huang, G.; Liu, Z.; Van Der Maaten, L.; Weinberger, K.Q. Densely connected convolutional networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 4700–4708.

- Lang, K.; Hinton, G.E. Dimensionality reduction and prior knowledge in e-set recognition. In Advances in Neural Information Processing Systems; MIT Press: Cambridge, MA, USA, 1989; Volume 2.

- Lin, T.Y.; Goyal, P.; Girshick, R.; He, K.; Dollár, P. Focal loss for dense object detection. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 2980–2988.

- Caruana, R. A dozen tricks with multitask learning. In Neural Networks: Tricks of the Trade; Springer: Berlin/Heidelberg, Germany, 1998; pp. 165–191.

- Ruder, S. An overview of multi-task learning in deep neural networks. arXiv 2017, arXiv:1706.05098.

- Hardt, M.; Recht, B.; Singer, Y. Train faster, generalize better: Stability of stochastic gradient descent. In Proceedings of the 33rd International Conference on Machine Learning, Volume 48; PMLR: New York, NY, USA, 2016; pp. 1225–1234.

- Prechelt, L. Automatic early stopping using cross validation: Quantifying the criteria. Neural Netw. 1998, 11, 761–767.

- Glorot, X.; Bengio, Y. Understanding the difficulty of training deep feedforward neural networks. In Proceedings of the Thirteenth International Conference on Artificial Intelligence and Statistics, Sardinia, Italy, 13–15 May 2010; JMLR Workshop and Conference Proceedings; pp. 249–256.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Delving deep into rectifiers: Surpassing human-level performance on ImageNet classification. In Proceedings of the IEEE International Conference on Computer Vision, Santiago, Chile, 7–13 December 2015; pp. 1026–1034.

- Smith, L.N. A disciplined approach to neural network hyper-parameters: Part 1 -- learning rate, batch size, momentum, and weight decay. arXiv 2018, arXiv:1803.09820.

- Wilson, A.C.; Roelofs, R.; Stern, M.; Srebro, N.; Recht, B. The marginal value of adaptive gradient methods in machine learning. In Proceedings of the 31st International Conference on Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; Volume 30.

- Geifman, Y.; El-Yaniv, R. Selective Classification for Deep Neural Networks. In Proceedings of the 31st International Conference on Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; Curran Associates Inc.: Red Hook, NY, USA, 2017; pp. 4885–4894.

- Xiao, H.; Rasul, K.; Vollgraf, R. Fashion-MNIST: A Novel Image Dataset for Benchmarking Machine Learning Algorithms. arXiv 2017, arXiv:1708.07747.

- Menon, A.K.; van Rooyen, B.; Natarajan, N. Learning from Binary Labels with Instance-Dependent Corruption. arXiv 2016, arXiv:1605.00751.

- Patrini, G.; Rozza, A.; Menon, A.; Nock, R.; Qu, L. Making Deep Neural Networks Robust to Label Noise: A Loss Correction Approach. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 1944–1952.

- Smith, L.N. Cyclical Learning Rates for Training Neural Networks. In Proceedings of the 2017 IEEE Winter Conference on Applications of Computer Vision (WACV), Santa Rosa, CA, USA, 24–31 March 2017.

- Lu, Z.; Pu, H.; Wang, F.; Hu, Z.; Wang, L. The Expressive Power of Neural Networks: A View from the Width. In Proceedings of the 31st International Conference on Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; Curran Associates Inc.: Red Hook, NY, USA, 2017; pp. 6232–6240.

- Wei, J.; Zhu, Z.; Cheng, H.; Liu, T.; Niu, G.; Liu, Y. Learning with Noisy Labels Revisited: A Study Using Real-World Human Annotations. In Proceedings of the International Conference on Learning Representations, Vancouver, BC, Canada, 3–7 May 2021.

- Abadi, M.; Agarwal, A.; Barham, P.; Brevdo, E.; Chen, Z.; Citro, C.; Corrado, G.S.; Davis, A.; Dean, J.; Devin, M.; et al. TensorFlow: Large-Scale Machine Learning on Heterogeneous Systems, 2015. Software. Available online: tensorflow.org (accessed on 25 January 2022).

- Chollet, F. Keras. 2015. Available online: https://keras.io (accessed on 25 January 2022).

| Std. Dev. | 0 | 0.1 | 0.25 | 0.5 | 0.75 | 1 | 2 | 3 | 4 |
|---|---|---|---|---|---|---|---|---|---|
| Training Set | | | | | | | | | |
| L1 | 0.80 | 0.80 | 0.79 | 0.76 | 0.69 | 0.66 | 0.32 | 0.27 | 0.10 |
| L2 | 0.86 | 0.86 | 0.85 | 0.82 | 0.80 | 0.78 | 0.68 | 0.58 | 0.46 |
| Dropout | 0.80 | 0.80 | 0.79 | 0.77 | 0.75 | 0.71 | 0.60 | 0.48 | 0.39 |
| EBR | 0.89 | 0.88 | 0.86 | 0.84 | 0.81 | 0.78 | 0.68 | 0.59 | 0.51 |
| Validation Set | | | | | | | | | |
| L1 | 0.80 | 0.79 | 0.78 | 0.75 | 0.64 | 0.58 | 0.24 | 0.21 | 0.10 |
| L2 | 0.84 | 0.84 | 0.84 | 0.83 | 0.82 | 0.80 | 0.70 | 0.60 | 0.49 |
| Dropout | 0.82 | 0.82 | 0.83 | 0.81 | 0.80 | 0.77 | 0.68 | 0.58 | 0.54 |
| EBR | 0.85 | 0.85 | 0.85 | 0.85 | 0.83 | 0.82 | 0.74 | 0.65 | 0.55 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Nuti, G.; Cross, A.-I.; Rindler, P. Evidence-Based Regularization for Neural Networks. Mach. Learn. Knowl. Extr. 2022, 4, 1011-1023. https://doi.org/10.3390/make4040051