An Agent-Based System for Automated Configuration and Coordination of Robotic Operations in Real Time—A Case Study on a Car Floor Welding Process

Abstract

1. Introduction

- Intelligent components and architectures that support the vertical integration of the component-level agents with higher-level systems.

- Reconfiguration services that will enable more autonomy in the (re)configuration process.

- Fault diagnosis and recovery.

- Safety.

- The combination of an ontology for representing knowledge in manufacturing automation systems with the multi-agent approach, to enable the automated configuration/reconfiguration, control and coordination of manufacturing operations in real time.

- The reduction of the programming complexity required to coordinate heterogeneous systems and the lower expertise required by the technical personnel.

- The ability of production systems to reconfigure their operation with minimal intervention, reducing downtime and associated costs significantly.

1.1. Literature Review

1.2. Case Study Overview and Technology Selection

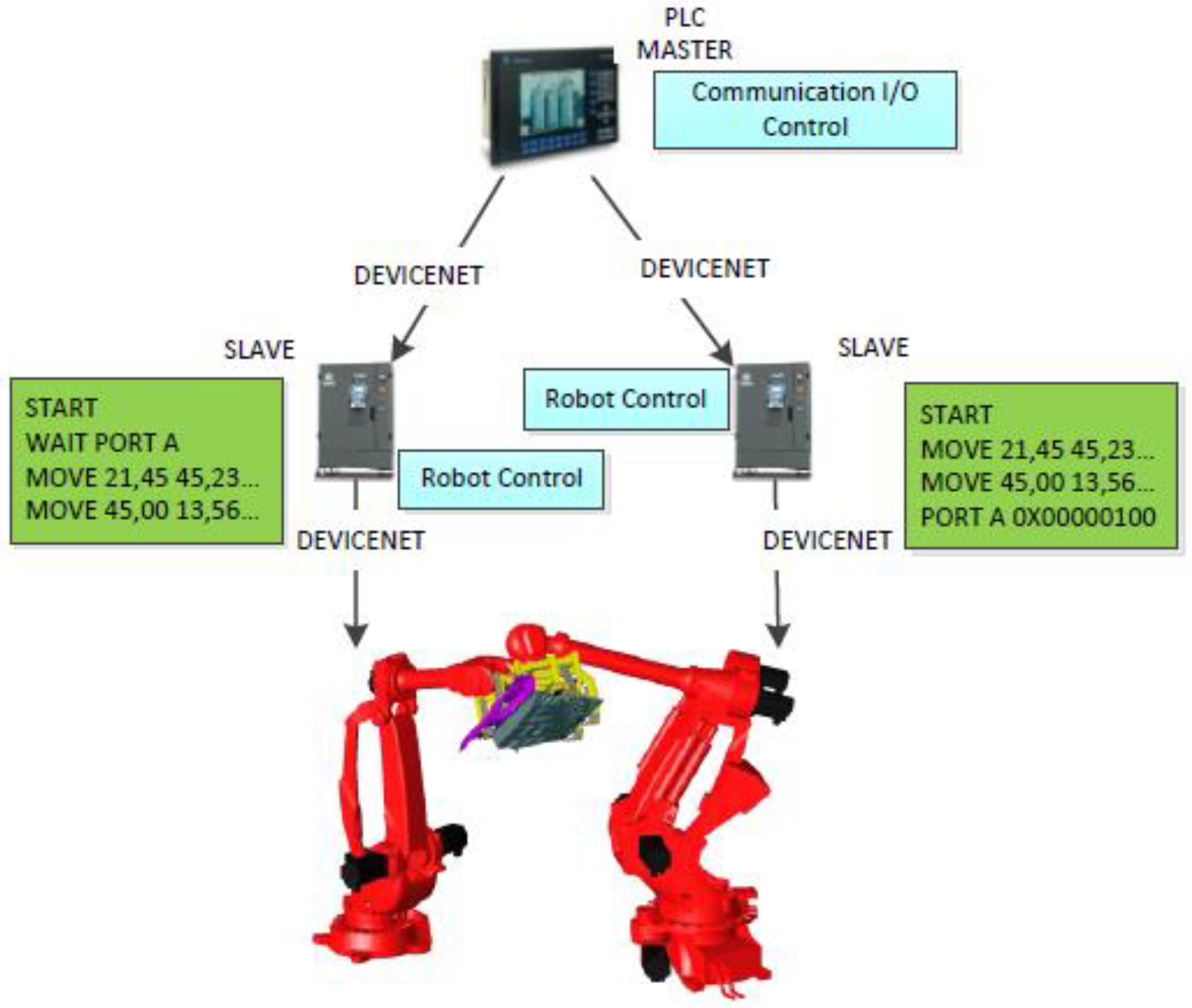

- A Comau Smart NJ 130, which is equipped with a spot-welding gun (robot depicted on the left-hand side of Figure 1b).

- A Comau Smart NJ 370, which uses a semi-flexible gripper that can hold both parts simultaneously (robot depicted on the right-hand side of Figure 1b). The gripper is able to accommodate two variants of the vehicle floor, which differ in geometry but have common grasping points. The use of sensors to identify the grasped parts allows the gripper to adjust its behavior by actuating specific clamping units each time.

- The operator loads the parts of the floor on a loading table, which is located in the working area of the robots. The table is designed to provide adequate tolerance with respect to the positioning of the accommodated parts.

- The handling robot simultaneously picks up all the parts from the loading table by using a geo-gripper that can guarantee relative positioning accuracy and adequate grasping forces through simple pneumatic clamps that are controlled through an Input/Output (I/O) module. This allows the final product’s correct geometry to be achieved.

- Inductive sensors mounted onto the gripper perform presence detection for the part throughout the execution of the welding operation.

- The operation of the robots is cooperative in the sense that:

- The handling robot manipulates the part in midair in order to achieve orientations that maximize the accessibility of the welding gun to all spot-weld locations.

- The proposed framework is used to orchestrate the communication between the two robots, which are able to exchange content-rich messages to declare the start/end/progress of each task that they are executing.

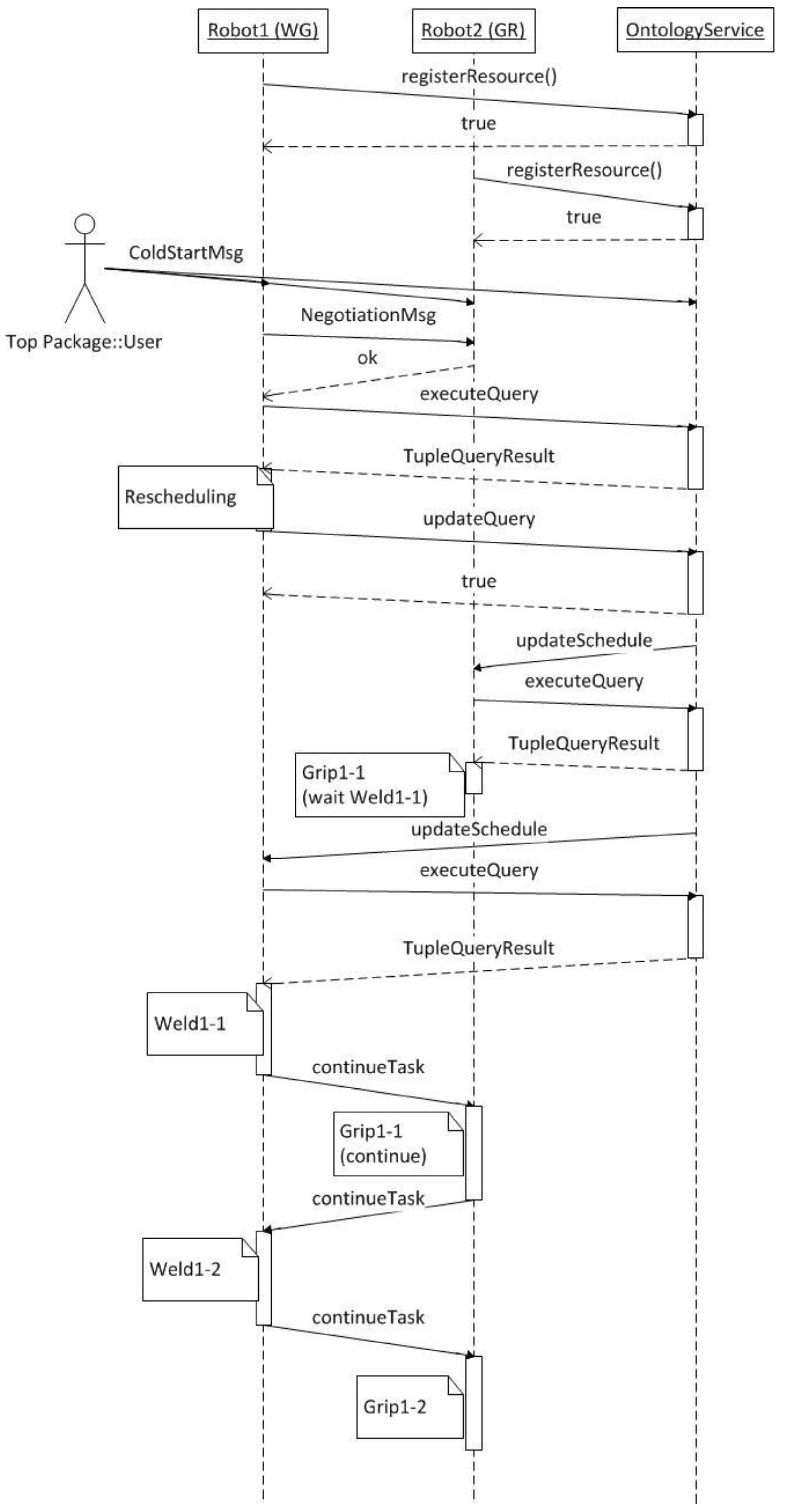

- This scenario considers four tasks, namely, two welding and two handling operations. More specifically, tasks “Weld1-1” and “Weld1-2” represent the Geo and spot-welding tasks on the part. Similarly, tasks “Grip1-1” and “Grip1-2” represent the parts’ movement in space (for accessibility purposes) and the placing of the part on the table. The sequence constraints that have been introduced in the system involve:

- Task “Weld1-1” having to be carried out before “Weld1-2”.

- Task “Grip1-1” being a prerequisite for the task “Weld1-2”.

- Task “Weld1-2” needing to precede the task “Grip1-2”.
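The three sequence constraints above form a small precedence graph. As a minimal sketch, assuming only those three constraints apply, the tasks can be grouped into "waves" that may run in parallel. The helper name `execution_waves` and the dictionary layout are illustrative and are not part of the described system.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Precedence constraints from the scenario: task -> set of prerequisite tasks.
PREREQUISITES = {
    "Weld1-1": set(),
    "Grip1-1": set(),
    "Weld1-2": {"Weld1-1", "Grip1-1"},
    "Grip1-2": {"Weld1-2"},
}


def execution_waves(prerequisites):
    """Group tasks into waves; tasks in the same wave do not constrain each other."""
    sorter = TopologicalSorter(prerequisites)
    sorter.prepare()  # raises graphlib.CycleError if the constraints are cyclic
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only to make the output stable
        waves.append(ready)
        sorter.done(*ready)
    return waves


if __name__ == "__main__":
    for number, wave in enumerate(execution_waves(PREREQUISITES), start=1):
        print(number, wave)
```

Under these constraints, "Weld1-1" and "Grip1-1" have no mutual dependency, so the welding and handling robots could start them at the same time, while "Weld1-2" and "Grip1-2" follow in strict order.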

- It is oriented towards real-time or soft real-time applications.

- It provides mechanisms for the implementation of services and a service-oriented architecture.

- It facilitates the requirements of the case study since it provides the necessary libraries and drivers for the selected hardware.

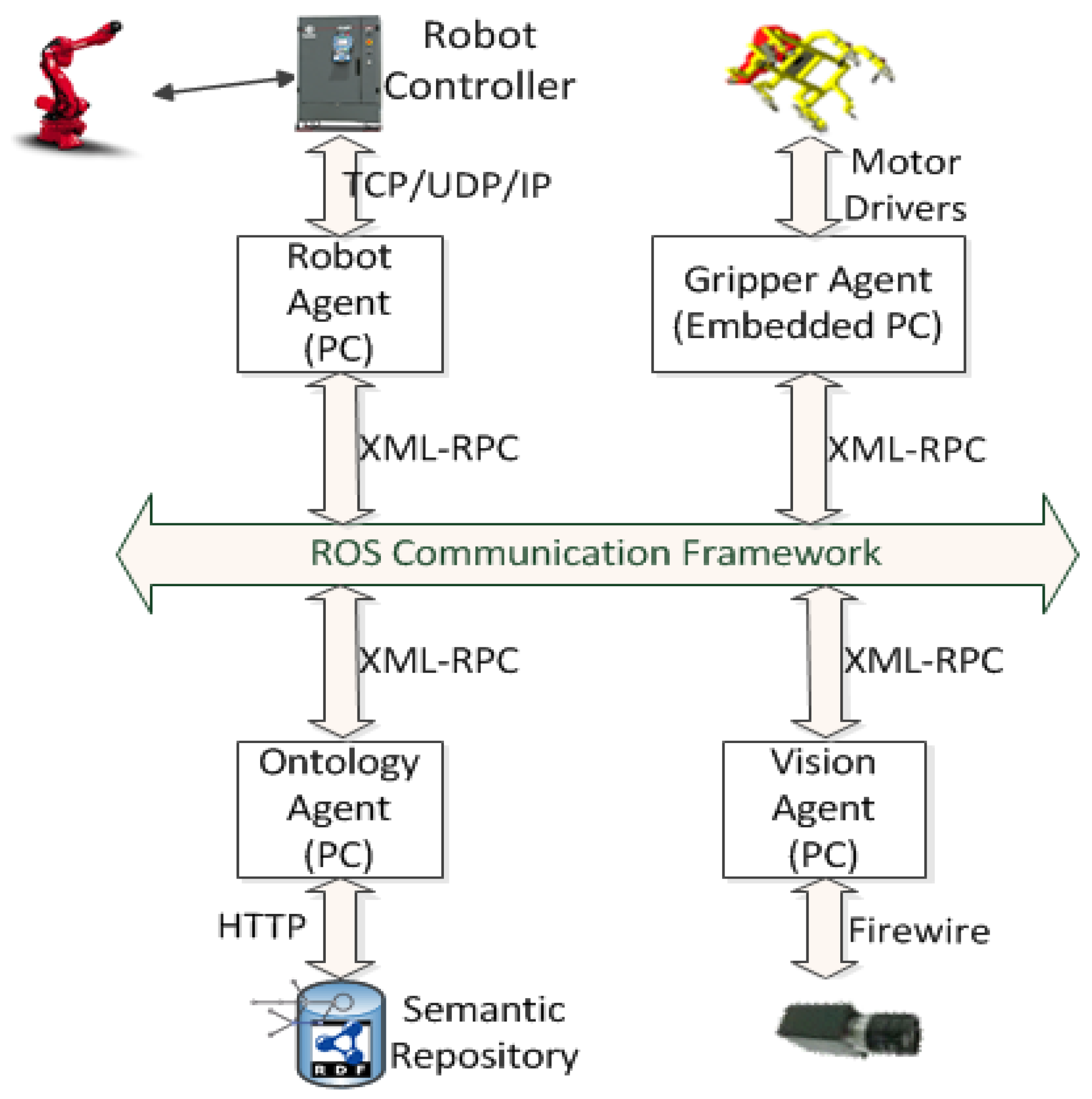

2. Approach for Multi-Agent Service-Oriented Integration

- Robot.

- Gripper.

- Ontology.

- Vision.

- Asynchronously, by broadcasting XML-RPC messages to a topic. All agents that have subscribed to the topic receive the published data.

- Synchronously, via the ROS Services mechanism. Each agent exposes its own services under specific names. Therefore, when another agent needs to communicate, it calls the right service and provides the appropriate input message.
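The difference between the two communication modes can be illustrated with a toy, in-process message bus. This is a sketch of the pattern only: it does not use the ROS client libraries, and the class `MessageBus`, its method names, and the topic and service names in the usage are all hypothetical.

```python
from collections import defaultdict


class MessageBus:
    """Toy in-process stand-in for the two communication modes (not the ROS API)."""

    def __init__(self):
        self._subscribers = defaultdict(list)  # topic name -> list of callbacks
        self._services = {}                    # service name -> handler function

    # Asynchronous mode: publish/subscribe on a named topic.
    def subscribe(self, topic, callback):
        self._subscribers[topic].append(callback)

    def publish(self, topic, message):
        # Every subscriber of the topic receives the same message.
        for callback in self._subscribers[topic]:
            callback(message)

    # Synchronous mode: request/response through a named service.
    def advertise_service(self, name, handler):
        self._services[name] = handler

    def call_service(self, name, request):
        if name not in self._services:
            raise LookupError(f"service not advertised: {name}")
        return self._services[name](request)


if __name__ == "__main__":
    bus = MessageBus()
    inbox = []
    bus.subscribe("task_status", inbox.append)
    bus.publish("task_status", {"task": "Weld1-1", "state": "end"})
    bus.advertise_service("gripper/openClamps", lambda request: {"ok": True})
    print(inbox, bus.call_service("gripper/openClamps", {}))
```

In this toy version the subscriber callbacks run inside `publish`; in a real middleware the publisher does not wait for its subscribers, which is what makes the topic mode asynchronous.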

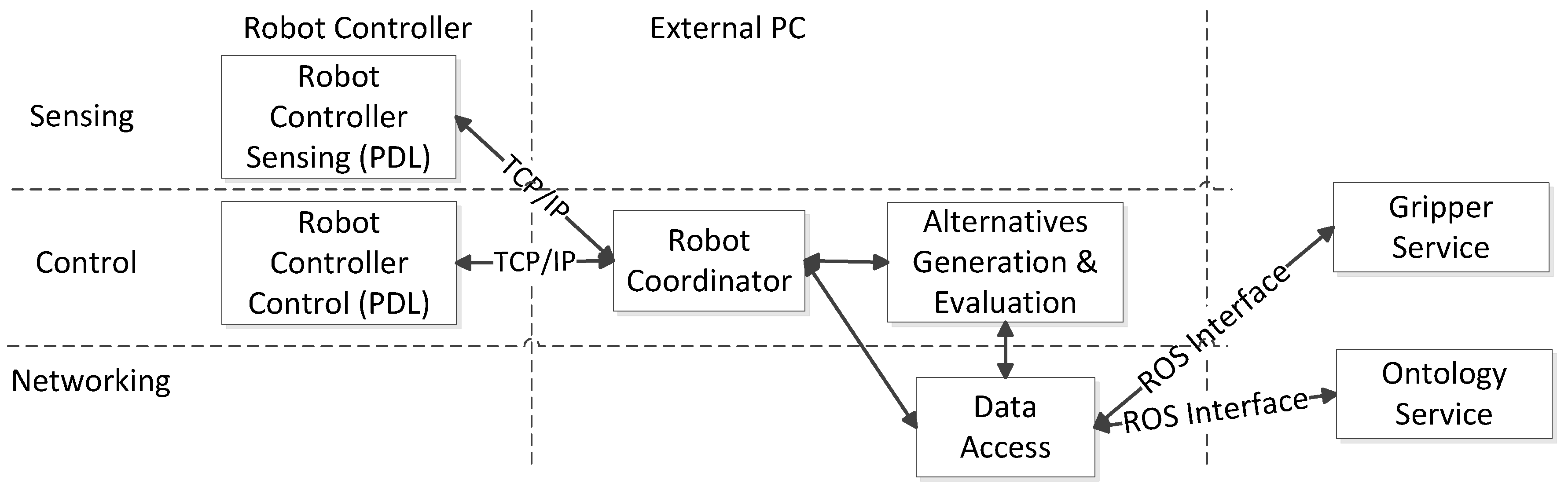

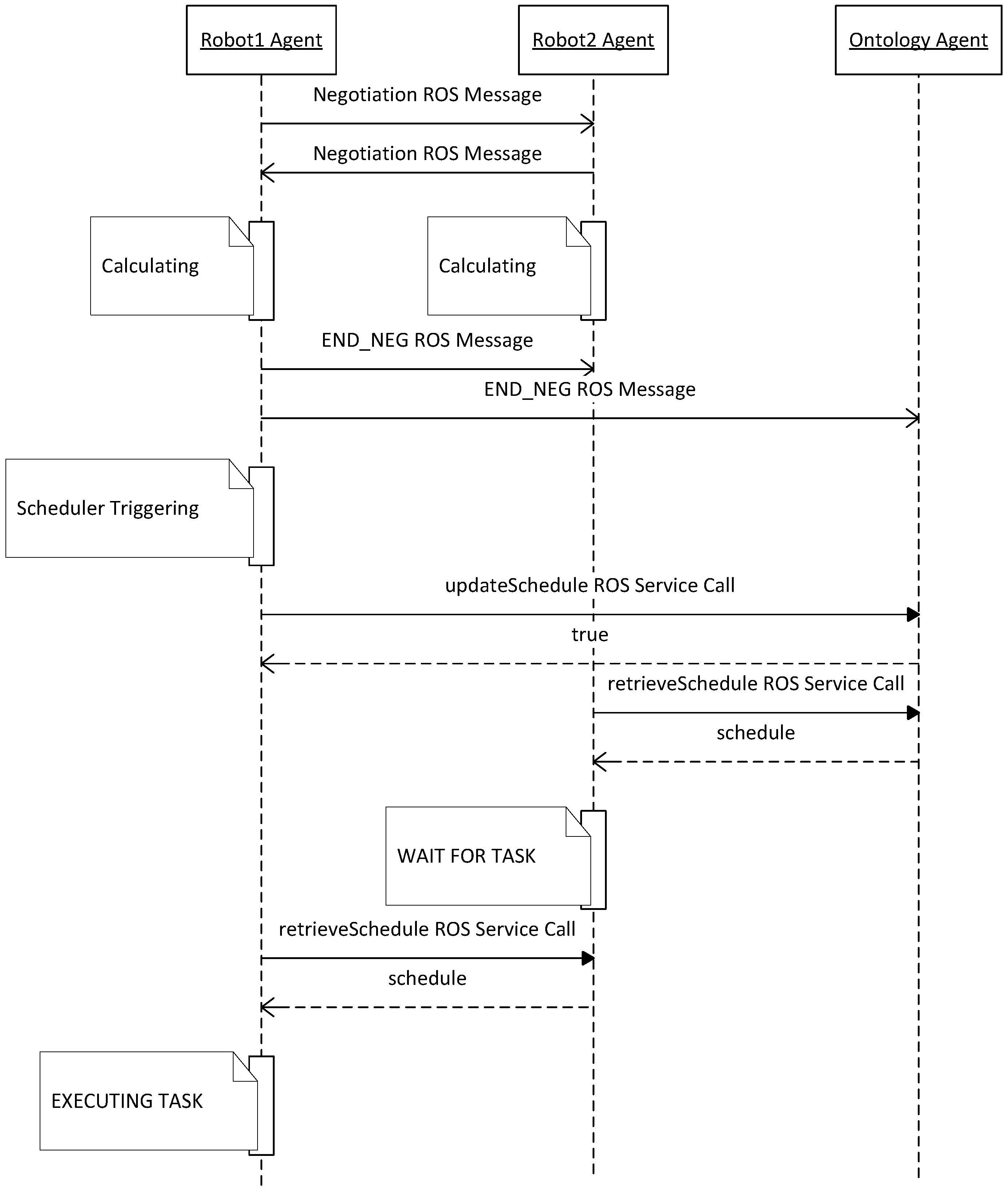

2.1. Robot

- Low-level: written in the robot programming language. This layer undertakes the real-time control functionalities to ensure adequate response times for the control system. It can also generate events for the higher-level layer of the agent, to make it aware of a new condition or state that needs to be acknowledged. The low-level part of the agent is hosted by the robot’s controller, which runs firmware that is able to execute low-level programs. This firmware communicates with the “Robot Coordinator” module through a typical TCP/IP interface. Sockets are defined over the TCP channel and are used to pass parameters to the low-level program that ultimately controls the robot’s movements.

- High-level: written in a programming language such as C++ or Java. The high-level part controls the agent’s global behavior towards achieving its individual goal, and coordinates its actions with those of other agents acting in the same system. It is the core of the agent, is able to generate and implement alternative operation sequences, and is hosted by a typical PC. The “Robot Coordinator” is a software module that coordinates the tasks executed by the robotic unit and is connected to the low-level robot controller. It monitors the robotic unit and feeds it with information received from the rest of the platform (e.g., information about the welding spots of a subassembly). The “Data Access” module implements the communication mechanism. It composes valid messages from the “Robot Coordinator” data and feeds them to the platform upon request; in the other direction, it extracts the data contained in incoming messages and feeds them to the “Robot Coordinator”. Following the service-oriented paradigm, the “Data Access” module implements a set of services and advertises them to the platform. This set of services forms the “Robot Services”: any other resource or service that consumes them can retrieve information about the robotic unit from the “Robot Coordinator” or feed information to it. These services were implemented with the ROS framework. Moreover, the robot agent contains the “Alternatives Generation and Evaluation” module, which can perform the scheduling and rescheduling of the shop floor’s pending tasks whenever requested.

- getPosition: When called, the robot service demands the robot arm coordinates from the Robot Controller by sending a message. Afterwards, the service returns these coordinates as a response to the client that asked for them.

- continueTask: It is used to inform the robot resource that it should continue executing its next pending task. This method is useful when a manufacturing job comprises more than one task and the tasks have pre- and post-conditions. If, for example, a manufacturing job is composed of two tasks, Task A and Task B, with Task A as a pre-condition of Task B, the following sequence of actions takes place. The robot assigned to perform Task B suspends the execution of this task until Task A has been completed. When the resource assigned to Task A finishes it, it calls the proper “Ontology Service” to find the resource assigned to Task B, and then calls that resource’s continueTask method. The call informs the resource that the pre-condition task is complete, and it resumes the execution of its assigned task.
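The continueTask hand-off between two resources can be sketched with a thread that blocks on an event. This is an illustration under stated assumptions: the class `RobotAgent`, its method names, and the `assignments` dictionary (which stands in for the Ontology Service lookup) are hypothetical and do not reproduce the actual service interface.

```python
import threading


class RobotAgent:
    """Hypothetical agent that holds a task until its pre-condition is reported done."""

    def __init__(self, name):
        self.name = name
        self.log = []
        self._may_continue = threading.Event()

    def continue_task(self):
        """Service handler: the pre-condition task has finished."""
        self._may_continue.set()

    def run_task(self, task, wait_for_precondition=False, timeout=5.0):
        if wait_for_precondition:
            self.log.append(f"{task}: suspended")
            if not self._may_continue.wait(timeout):
                raise TimeoutError(f"{task}: pre-condition never reported")
            self._may_continue.clear()
        self.log.append(f"{task}: executed")


def run_job():
    robot_a, robot_b = RobotAgent("A"), RobotAgent("B")
    assignments = {"Task B": robot_b}  # stand-in for the Ontology Service lookup

    # The robot assigned to Task B starts and suspends itself.
    worker = threading.Thread(target=robot_b.run_task, args=("Task B", True))
    worker.start()

    # The robot assigned to Task A finishes, looks up who owns Task B, and notifies it.
    robot_a.run_task("Task A")
    assignments["Task B"].continue_task()
    worker.join()
    return robot_a.log, robot_b.log


if __name__ == "__main__":
    print(run_job())
```

The event also covers the case in which Task A finishes before the Task B robot starts waiting: the flag is already set, so the wait returns immediately.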

2.2. Gripper

- reconfigure: The Data Access module of the Gripper agent takes as input the ID of the target work piece. It then calls the appropriate function of the Gripper Firmware Interface, and the gripper moves from its current state to the arm configuration assigned to that work piece. All arms and sliders are operated.

- closeClamps: When this service is called, the gripper is ready to grab the target work piece. The Data Access module calls the closeClamps() function of the Gripper Firmware Interface, and the gripper moves from its current state to the state in which all the relevant clamps are closed.

- openClamps: When this service is called, the gripper is ready to release the grabbed part. The openClamps() function of the Firmware Interface is responsible for opening the Gripper’s clamps.
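The three gripper services can be read as transitions of a small state model. The sketch below is a hedged illustration: the class `GripperAgent`, the work-piece IDs, and the clamp names are invented, and the firmware calls are replaced by plain attribute updates.

```python
class GripperAgent:
    """Hypothetical model of the three gripper services as state transitions."""

    # Assumed mapping from work-piece ID to the clamping units it needs.
    CLAMPS_BY_PART = {
        "floor_variant_1": {"clamp_1", "clamp_2"},
        "floor_variant_2": {"clamp_1", "clamp_3"},
    }

    def __init__(self):
        self.target_part = None
        self.closed_clamps = set()

    def reconfigure(self, part_id):
        """Select the configuration for the target work piece, from any state."""
        if part_id not in self.CLAMPS_BY_PART:
            raise ValueError(f"unknown work piece: {part_id}")
        self.target_part = part_id

    def close_clamps(self):
        """Close only the clamps relevant to the configured work piece."""
        if self.target_part is None:
            raise RuntimeError("gripper has not been configured for a work piece")
        self.closed_clamps = set(self.CLAMPS_BY_PART[self.target_part])

    def open_clamps(self):
        """Release the grabbed part."""
        self.closed_clamps = set()


if __name__ == "__main__":
    gripper = GripperAgent()
    gripper.reconfigure("floor_variant_2")
    gripper.close_clamps()
    print(sorted(gripper.closed_clamps))
    gripper.open_clamps()
```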

2.3. Ontology

- registerResource and registerService: These methods are called to inform the Ontology that a new resource or service has just been connected to the platform and should be registered. Every resource or service participating in the framework calls the appropriate method to register itself with the platform. Once it is registered, the Ontology knows that this resource or service is online and ready to serve shop-floor tasks. Only registered services are taken into consideration when rescheduling is performed for the pending shop-floor tasks. Furthermore, by analyzing the broadcast messages, the Ontology Service remains aware of unexpected platform events and of resource or service breakdowns, and updates the Ontology information accordingly.

- executeQuery (string SPARQLQuery): It allows the platform resources to query the Ontology and retrieve information from it. For instance, the resource that performs rescheduling queries the Ontology about the existing shop-floor pending tasks, the online resources and their availabilities, and the suitability of “match” among the resources and the pending tasks.

- updateQuery (string SPARQLQuery): It allows the platform resources and services to update the existing information in the Ontology or to feed it with new information. For example, when the robot agent finishes the rescheduling task, it calls this method to supply the Ontology with the new assignments and update the existing ones.

- retrieveSchedule: This service is called by every Robot agent after a rescheduling task has taken place. When it is called, the Data Access module of the Ontology queries the Ontology Repository about the Robot’s tasks and operations. A list of the assigned tasks is returned as a response.
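The interplay between registration, assignment updates, and schedule retrieval can be sketched with an in-memory stand-in. This is an assumption-laden illustration: the class `OntologyService` and its methods are hypothetical, plain Python collections replace the SPARQL-backed repository, and the resource names are invented.

```python
class OntologyService:
    """Hypothetical in-memory stand-in for the Ontology services."""

    def __init__(self):
        self.online_resources = set()
        self.assignments = []  # (task, resource) pairs in schedule order

    def register_resource(self, resource):
        """Mark a resource as online and available for shop-floor tasks."""
        self.online_resources.add(resource)

    def update_assignments(self, assignments):
        """Replace the schedule; only registered resources may receive tasks."""
        unknown = {resource for _, resource in assignments} - self.online_resources
        if unknown:
            raise ValueError(f"unregistered resources: {sorted(unknown)}")
        self.assignments = list(assignments)

    def retrieve_schedule(self, resource):
        """Return the tasks assigned to one resource, in schedule order."""
        return [task for task, owner in self.assignments if owner == resource]


if __name__ == "__main__":
    ontology = OntologyService()
    ontology.register_resource("welding_robot")
    ontology.register_resource("handling_robot")
    ontology.update_assignments([
        ("Weld1-1", "welding_robot"),
        ("Grip1-1", "handling_robot"),
        ("Weld1-2", "welding_robot"),
        ("Grip1-2", "handling_robot"),
    ])
    print(ontology.retrieve_schedule("welding_robot"))
```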

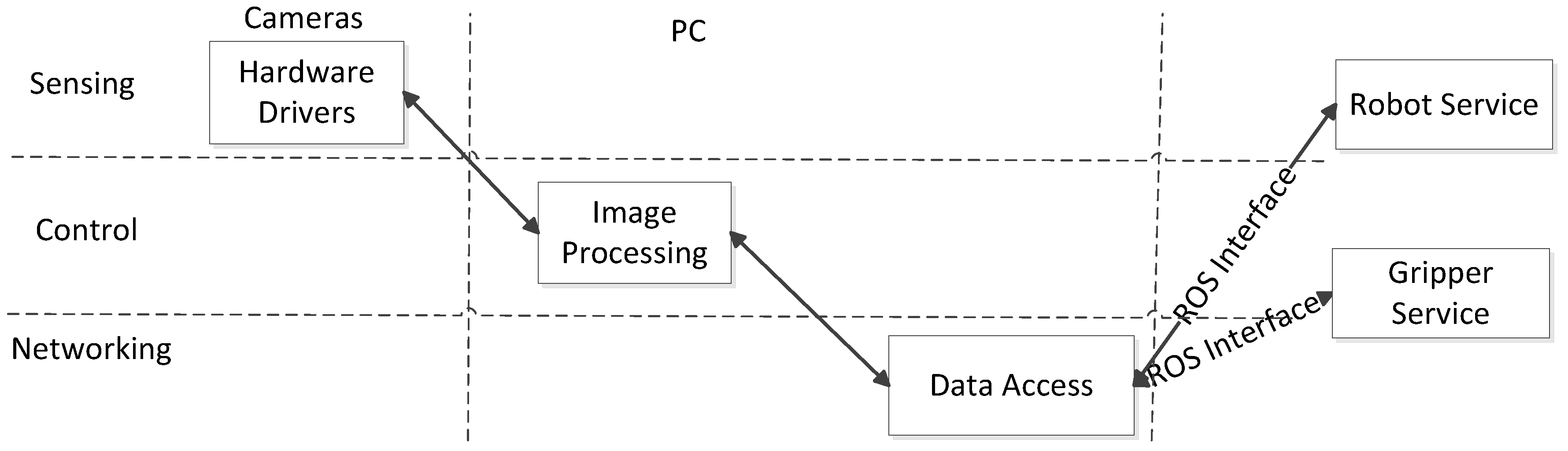

2.4. Vision

- performCalibration: The method performs the calculations for the identification of the relative position of the mobile unit base in relation to the docking station base. The method is required for the calculation of the mobile unit’s position with high precision.

- identifyPart: The method identifies the automotive part types at each rack.

- identifyPartLocation: The method identifies the parts’ positions and orientations inside the racks.

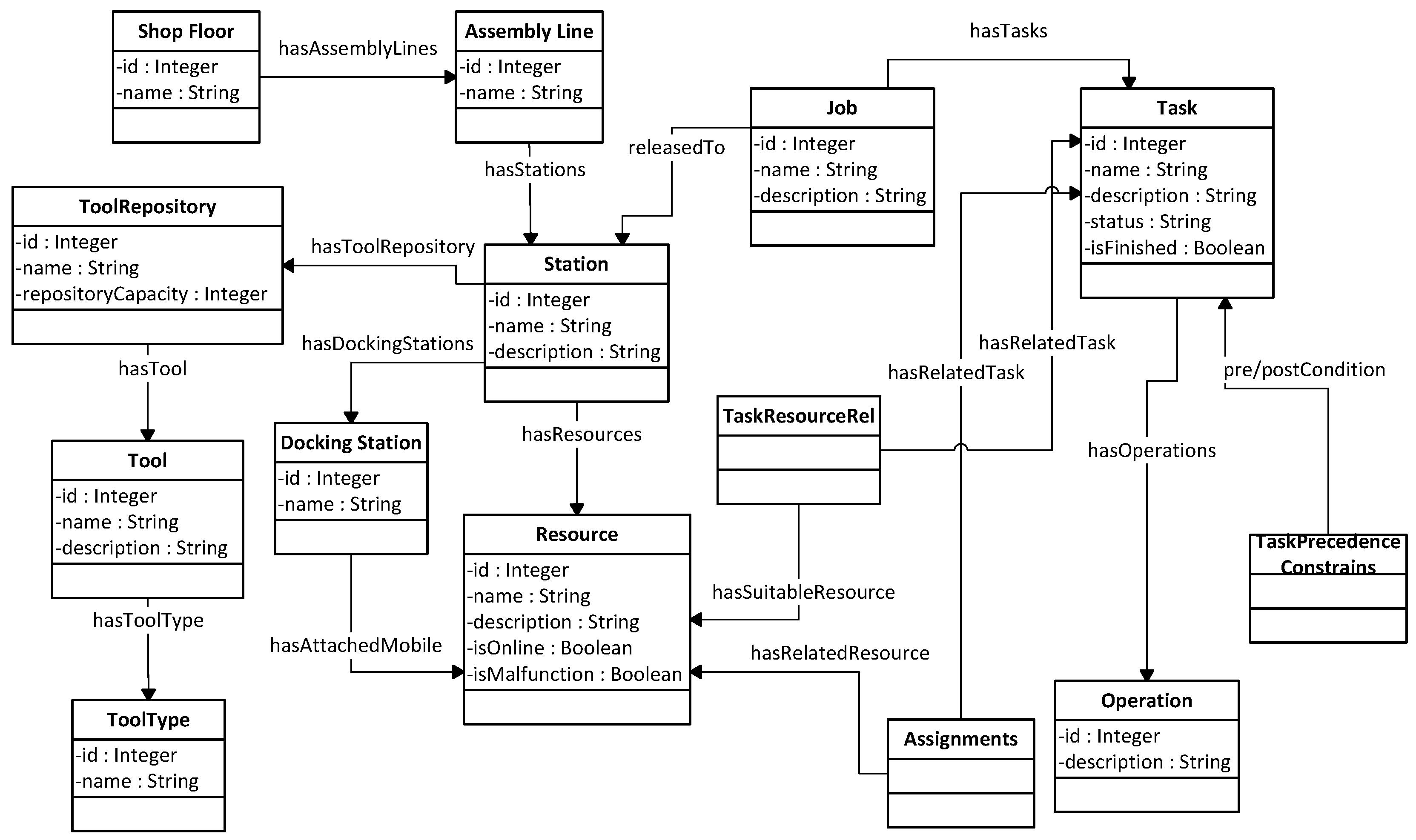

2.5. Ontology-Based Approach for Coordinating the Execution of Robotic Operations

- An ontology knowledge base.

- A decentralized negotiation and coordination mechanism.

- “hasAssemblyLine” declares that an assembly line belongs to a certain shop floor.

- “hasStation” connects an assembly line with all the working stations located in it.

- “hasResource” connects a working station with all the robots located in it.

- “hasToolRepository” is used to associate each work station with its tool repository.

- “hasDockingStation” associates each work station with its docking station.

- “hasAttachedMobileResource” connects the docking station with the mobile resource docked on it.

- “hasTool” connects the resources with their attached tools.

- “hasToolType” declares the type of each tool by connecting it with the specified tool type.

- “releasedToStation” declares that a job is released and will be executed within a certain station.

- “hasTasks” connects the job with its tasks.

- “hasOperations” connects a task with its operations.

- “hasPreCondition” declares that a task must be completed before a certain task begins.

- “hasPostCondition” declares that a task must start after a certain task is completed.

- “hasRelatedTask” is a property of the TaskResourceRel class. It is used together with the “hasSuitableResource” property to denote that a certain task can be executed by a certain resource.

- “hasRelatedResource” is a property of the Assignment class. The Assignment class is connected with a resource using this property and with a task using the “hasRelatedTask” property.
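The properties listed above can be pictured as subject-property-object triples. The following sketch stores a few such triples in a Python set and answers the question "which resources in a station are suitable for a task?". All instance names (ShopFloor1, Rel1, WeldingRobot and so on) are invented for illustration, and the helper functions are a simplified substitute for a real ontology query.

```python
# Each fact is a (subject, property, object) triple; all instance names are invented.
TRIPLES = {
    ("ShopFloor1", "hasAssemblyLine", "Line1"),
    ("Line1", "hasStation", "Station1"),
    ("Station1", "hasResource", "WeldingRobot"),
    ("Station1", "hasResource", "HandlingRobot"),
    ("Job1", "releasedToStation", "Station1"),
    ("Job1", "hasTasks", "Weld1-1"),
    ("Job1", "hasTasks", "Grip1-1"),
    ("Rel1", "hasRelatedTask", "Weld1-1"),
    ("Rel1", "hasSuitableResource", "WeldingRobot"),
    ("Rel2", "hasRelatedTask", "Grip1-1"),
    ("Rel2", "hasSuitableResource", "HandlingRobot"),
}


def objects(subject, prop):
    """All objects linked to a subject through the given property."""
    return {o for s, p, o in TRIPLES if s == subject and p == prop}


def subjects(prop, obj):
    """All subjects linked to an object through the given property."""
    return {s for s, p, o in TRIPLES if p == prop and o == obj}


def suitable_resources(task):
    """Resources linked to the task through a TaskResourceRel individual."""
    found = set()
    for relation in subjects("hasRelatedTask", task):
        found |= objects(relation, "hasSuitableResource")
    return found


def candidates_in_station(task, station):
    """Suitable resources that are also located in the given station."""
    return suitable_resources(task) & objects(station, "hasResource")


if __name__ == "__main__":
    print(candidates_in_station("Weld1-1", "Station1"))
```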

3. Case Study Implementation and Discussion of Results

3.1. Implementation Details

3.2. Discussion and Comparison with Other Approaches

- Network configuration: With the proposed approach, the network configuration time is significantly reduced. Using the PLC approach, approximately 8 h is needed to configure the fieldbus network, including the gripper I/O configuration and the mapping of the robot I/Os to those configured at the gripper side. In contrast, with resources that support the TCP/IP protocol, the only requirement is to connect all the resources to the same network (0.5–1 h).

- Robot program: No effect is foreseen on the time needed for robot programming. Writing the robot program takes approximately the same time in both approaches.

- Task sequencing configuration: If no standard is used for PLC programming, as is the case in several industrial sites, the development of interlocking PLC commands for task sequencing takes 5–8 h. Using the proposed approach, it is only necessary to configure the precedence constraints of each pair of tasks in the ontology, which may take approximately 1–2 h if a general-purpose Ontology editing tool (e.g., Protégé) is used, or even less (approximately 10–20 min) if a specific end-user application is available.

- Integration of new resources: Using the traditional hierarchical approach, if the new resource has the same configuration (same vendor, same gripper and same I/O configuration) as the already installed resource, it is only necessary to copy the robot program to the controller (0.5 h). However, if the new robot has a different configuration (e.g., a different vendor), the PLC signaling must also be programmed (approximately 3 h). In contrast, when using the agent-based system developed in this paper, it is only necessary to add the new robot to the ontology and configure the task-resource suitability (10–20 min using the Protégé Ontology editor).
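As a quick worked sum of the setup figures quoted above, the snippet below adds the network configuration and task sequencing ranges for each approach. Robot programming is left out because it is reported as equal in both cases, and the agent-based figures assume the general-purpose editor. The totals are simple arithmetic on the quoted ranges, not additional measurements, and the names `PLC`, `AGENT` and `total` are illustrative.

```python
# (low, high) effort in hours, copied from the comparison above.
PLC = {"network": (8.0, 8.0), "task_sequencing": (5.0, 8.0)}
AGENT = {"network": (0.5, 1.0), "task_sequencing": (1.0, 2.0)}


def total(ranges):
    """Sum the lower and upper bounds of every activity separately."""
    low = sum(lower for lower, _ in ranges.values())
    high = sum(upper for _, upper in ranges.values())
    return low, high


if __name__ == "__main__":
    print("PLC approach (h):", total(PLC))      # (13.0, 16.0)
    print("Agent approach (h):", total(AGENT))  # (1.5, 3.0)
```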

4. Conclusions and Future Work

Author Contributions

Funding

Conflicts of Interest

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Makris, S.; Alexopoulos, K.; Michalos, G.; Sardelis, A. An Agent-Based System for Automated Configuration and Coordination of Robotic Operations in Real Time—A Case Study on a Car Floor Welding Process. J. Manuf. Mater. Process. 2020, 4, 95. https://doi.org/10.3390/jmmp4030095
