Early Estimation of Olive Production from Light Drone Orthophoto, through Canopy Radius

Abstract

1. Introduction

2. Materials and Methods

2.1. Olive Trees Phenology

- Vegetative stasis: suspension or slowing of the growth of vegetative organs (winter period);

- Sprouting: apical and lateral buds enlarge and elongate, and the emission of new vegetation begins (late winter and early spring);

- Budding: growth of the vegetative apex with the appearance of new leaves, nodes, and internodes (early spring);

- Inflorescence development: inflorescences form and develop from the flower buds and, where present, from the mixed buds (between March and April);

- Flowering: from the opening of the flower buds to the fall of stamens and petals (between May and June);

- Fruit set: enlargement of the ovary within the still-persistent calyx, with a browned stigma (June);

- Fruit growth: increase in drupe size until the final size is reached (between June and September);

- Veraison: gradual color change from green to straw yellow and then to red-purple over at least 50% of the drupe surface, with decreasing pulp firmness (from September to November);

- Maturation: complete acquisition of the color typical of the cultivar, or of the color corresponding to the commercial use of the product; onset of senescence symptoms (between November and December);

- Leaf fall: gradual yellowing until the leaf is completely yellow, followed by phylloptosis (during winter).

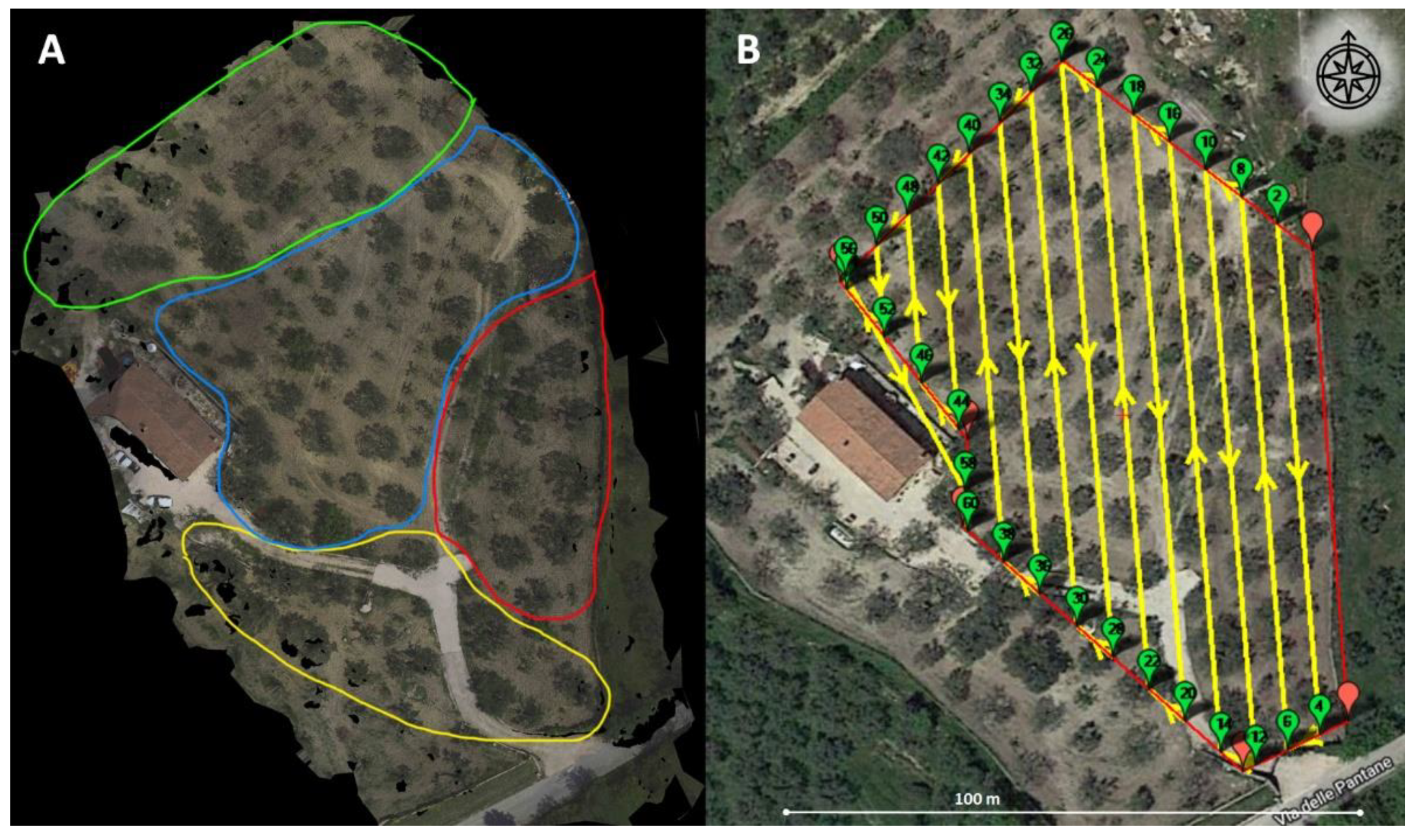

2.2. Experimental Field and Setup

2.3. UAV Images Acquisition and Orthoimage Reconstruction

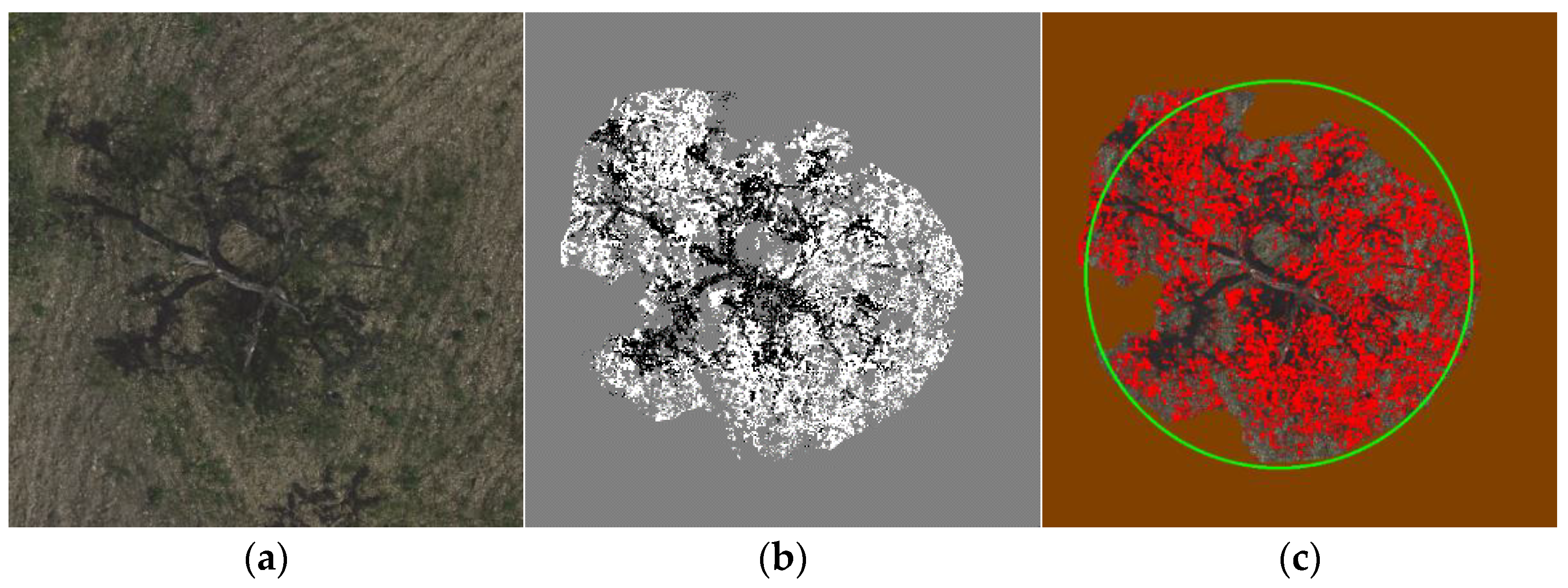

2.4. Leaf Area Estimation

2.5. Canopy Radius Estimation
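A canopy radius can be derived from a segmented crown in several ways. One simple option, assumed here and not necessarily the authors' procedure, is to convert the crown's pixel count to ground area using the ground sampling distance (GSD) and then take the radius of the circle with the same area. The equivalent-circle definition and the function names below are hypothetical.

```python
import math


def canopy_area_m2(canopy_pixels, gsd_m):
    """Ground area (m^2) of the canopy, each pixel covering gsd_m x gsd_m on the ground."""
    return canopy_pixels * gsd_m ** 2


def equivalent_radius_m(area_m2):
    """Radius (m) of the circle whose area equals the projected canopy area (assumed definition)."""
    return math.sqrt(area_m2 / math.pi)


# Hypothetical crown: 25,000 canopy pixels at a GSD of 1 cm/px.
area = canopy_area_m2(25_000, 0.01)
radius = equivalent_radius_m(area)
print(f"area = {area:.2f} m^2, radius = {radius:.2f} m")  # area = 2.50 m^2, radius = 0.89 m
```

The equivalent-circle radius is insensitive to irregular crown outlines; a crown that is far from circular would need a different descriptor.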

2.6. Olive and EVOO Production Estimation

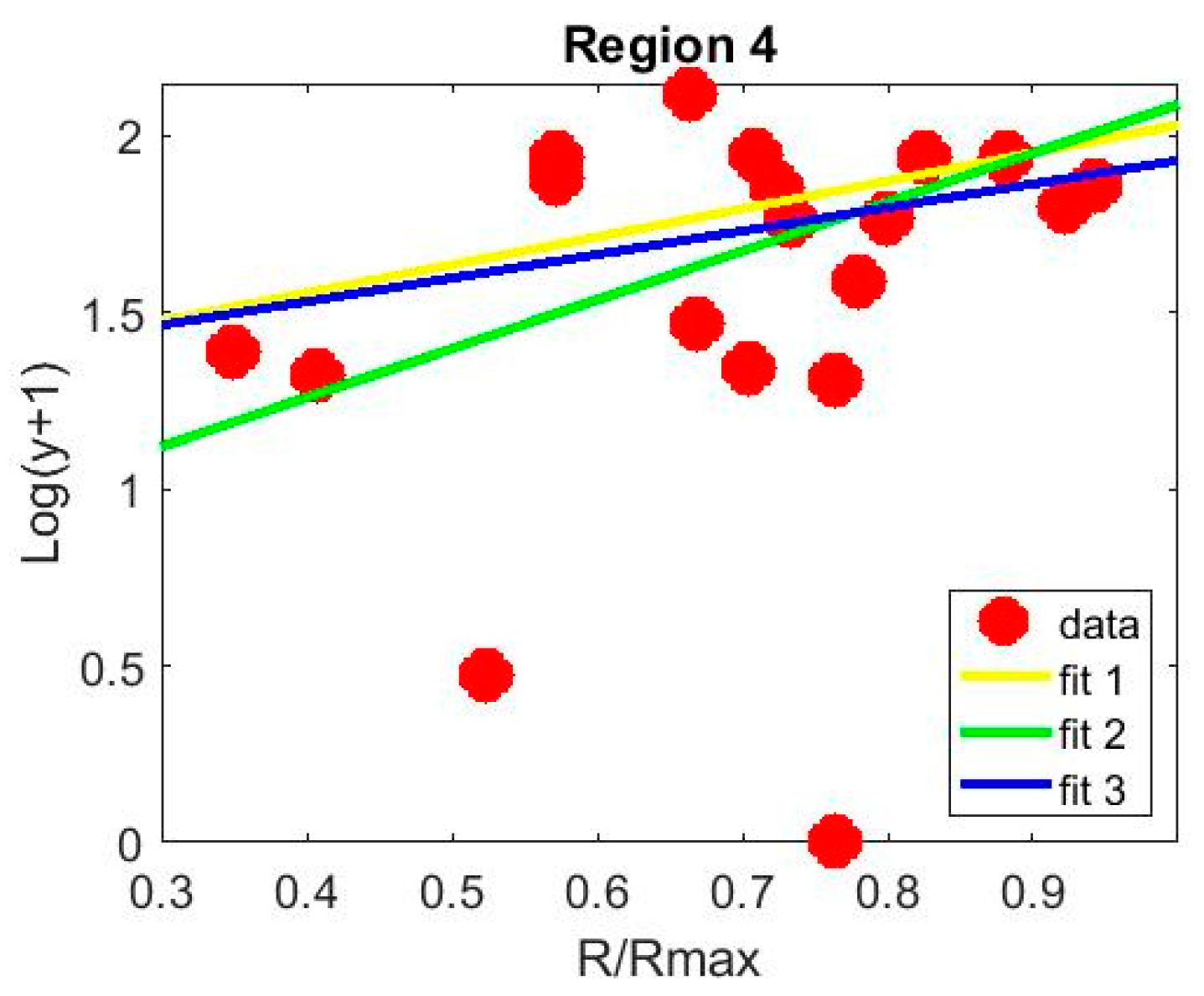

3. Results

3.1. Loading and Unloading Subsets

3.2. Leaf Area and Canopy Radius Estimate from kNN Image Segmentation
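The k-nearest-neighbours (kNN) segmentation named in this heading can be illustrated with a minimal pixel classifier: each RGB pixel receives the majority label of its k closest labelled training pixels in color space. The training pixels, the class names, k = 3, and the helper names below are hypothetical; the sketch shows the technique, not the authors' implementation or training set.

```python
import math
from collections import Counter

# Hypothetical labelled training pixels: (R, G, B) -> class.
TRAINING = [
    ((40, 90, 35), "canopy"),
    ((55, 110, 45), "canopy"),
    ((30, 70, 30), "canopy"),
    ((160, 140, 110), "soil"),
    ((180, 160, 120), "soil"),
    ((140, 120, 95), "soil"),
]


def knn_label(pixel, training=TRAINING, k=3):
    """Majority class among the k training pixels closest to `pixel` (Euclidean RGB distance)."""
    nearest = sorted(training, key=lambda item: math.dist(pixel, item[0]))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


def segment(image, k=3):
    """Label every pixel of an image given as a list of rows of RGB tuples."""
    return [[knn_label(px, k=k) for px in row] for row in image]


def canopy_fraction(labels):
    """Share of pixels classified as canopy."""
    flat = [lab for row in labels for lab in row]
    return flat.count("canopy") / len(flat)


image = [[(45, 100, 40), (170, 150, 115)],
         [(35, 80, 32), (150, 130, 100)]]
labels = segment(image)
print(labels)                   # [['canopy', 'soil'], ['canopy', 'soil']]
print(canopy_fraction(labels))  # 0.5
```

Multiplying the canopy pixel count by the squared ground sampling distance turns such a mask into a canopy area, from which a radius can be derived.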

4. Discussion and Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A


| Details | Items | Specifications |
|---|---|---|
| UAV | Weight | 297 g |
| | Dimensions | 143 mm × 143 mm × 55 mm |
| | Max speed | 50 km/h |
| | Satellite positioning systems | GPS/GLONASS |
| Digital camera | Focal length | 4.5 mm |
| | Sensor dimensions (W × H) | 6.17 mm × 4.56 mm |
| | Sensor resolution | 12 megapixels |
| | Image sensor type | CMOS |
| | Video format | MP4 (MPEG-4 AVC/H.264) |
| | Still image format | JPEG |
| | Video resolution | 1920 × 1080 (1080p) |
| | Frame rate | 30 frames per second |
| | Still image resolution | 3968 × 2976 |
| Gimbal | Tilt control range | from −85° to 0° |
| | Stabilization | Mechanical, 2 axes (tilt, roll) |
| | Obstacle detection distance | 0.2–5 m |
| | Operating environment | Surfaces with diffuse reflectivity (>20%) and dimensions greater than 20 × 20 cm (walls, trees, people, etc.) |
| Remote control | Operating frequency | 5.8 GHz |
| | Max operating distance | 1.6 km |
| Battery | Supported battery configurations | 3S |
| | Rechargeable battery | Yes |
| | Technology | Lithium polymer |
| | Voltage provided | 11.4 V |
| | Capacity | 1480 mAh |
| | Run time (up to) | 16 min |
| | Recharge time | 52 min |
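The camera parameters listed above fix the ground sampling distance (GSD), i.e. the ground size of one image pixel, through the standard pinhole relation GSD = sensor width × flight altitude / (focal length × image width in pixels). Flight altitude is not part of this table, so the 30 m used below is a hypothetical value, and the sketch assumes a nadir-pointing camera over flat ground.

```python
def ground_sampling_distance(sensor_width_mm, focal_length_mm, image_width_px, altitude_m):
    """Ground size of one pixel (m/px) for a nadir-pointing pinhole camera."""
    return (sensor_width_mm * altitude_m) / (focal_length_mm * image_width_px)


def footprint(sensor_mm, focal_length_mm, altitude_m):
    """Ground extent (m) covered by one sensor dimension at the given altitude."""
    return sensor_mm * altitude_m / focal_length_mm


# Camera values from the specification table; the altitude is hypothetical.
SENSOR_W_MM, SENSOR_H_MM = 6.17, 4.56
FOCAL_MM = 4.5
IMAGE_W_PX = 3968
ALTITUDE_M = 30.0

gsd = ground_sampling_distance(SENSOR_W_MM, FOCAL_MM, IMAGE_W_PX, ALTITUDE_M)
print(f"GSD: {gsd * 100:.2f} cm/px")  # GSD: 1.04 cm/px
print(f"Footprint: {footprint(SENSOR_W_MM, FOCAL_MM, ALTITUDE_M):.1f} m x "
      f"{footprint(SENSOR_H_MM, FOCAL_MM, ALTITUDE_M):.1f} m")  # Footprint: 41.1 m x 30.4 m
```

GSD grows linearly with altitude, so halving the flight height halves the ground size of each pixel and the footprint of each image.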

| Cultivar | Region 1 (kg) | Region 2 (kg) | Region 3 (kg) | Region 4 (kg) | Average oil yield (L/100 kg) |
|---|---|---|---|---|---|
| Carboncella | 691.5 | 627.0 | 1021.5 | 827.5 | 17.2 |
| Leccino | 284.5 | 258.5 | 29.0 | 132.5 | 20.0 |
| Frantoio | 0.0 | 11.5 | 0.0 | 62.5 | 17.8 |
| Total | 976.0 | 897.0 | 1050.5 | 1022.5 | |
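Reading the average yield column (lt/hw, i.e. litres of EVOO per hundredweight of 100 kg of olives) this way can be checked against the data: multiplying each cultivar's harvest by its yield and summing per region reproduces the measured EVOO volumes reported for Regions 1 to 3 (175.8, 161.6 and 181.5 L). The dictionary and function names below are illustrative.

```python
# Harvested olive mass (kg) per cultivar for Regions 1-4, from the table above.
HARVEST_KG = {
    "Carboncella": [691.5, 627.0, 1021.5, 827.5],
    "Leccino": [284.5, 258.5, 29.0, 132.5],
    "Frantoio": [0.0, 11.5, 0.0, 62.5],
}
# Average oil yield per cultivar, read as litres of EVOO per 100 kg of olives.
YIELD_L_PER_100KG = {"Carboncella": 17.2, "Leccino": 20.0, "Frantoio": 17.8}


def region_totals(harvest):
    """Total olive mass (kg) per region, summed over cultivars."""
    n_regions = len(next(iter(harvest.values())))
    return [sum(masses[i] for masses in harvest.values()) for i in range(n_regions)]


def region_evoo(harvest, yields):
    """EVOO volume (L) per region: sum over cultivars of mass x yield / 100."""
    n_regions = len(next(iter(harvest.values())))
    return [sum(harvest[c][i] * yields[c] / 100 for c in harvest) for i in range(n_regions)]


for region, (kg, litres) in enumerate(
        zip(region_totals(HARVEST_KG), region_evoo(HARVEST_KG, YIELD_L_PER_100KG)), start=1):
    print(f"Region {region}: {kg:.1f} kg -> {litres:.1f} L EVOO")
```

For Region 4 the same computation gives about 180.0 L.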

| Parameter | Region 1 | Region 2 | Region 3 | Region 4 |
|---|---|---|---|---|
| m | 0.42 | 0.45 | 0.36 | 0.45 |
| q | 0.01 | −0.04 | −0.01 | −0.03 |
| Coefficient of determination R² | 0.87 | 0.80 | 0.93 | 0.78 |
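Assuming that m, q and R² in the table above are the slope, intercept and coefficient of determination of an ordinary least-squares line y = m·x + q (the two variables are not identified in this excerpt), they can be computed as in the sketch below. The input data are hypothetical and the function name is illustrative.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares slope m, intercept q and R^2 for y = m*x + q."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    m = sxy / sxx
    q = mean_y - m * mean_x
    # R^2 = 1 - residual sum of squares / total sum of squares.
    ss_res = sum((y - (m * x + q)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return m, q, 1 - ss_res / ss_tot


# Hypothetical data.
m, q, r2 = linear_fit([1, 2, 3], [1, 2, 4])
print(f"m = {m:.2f}, q = {q:.2f}, R2 = {r2:.2f}")  # m = 1.50, q = -0.67, R2 = 0.96
```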

| Parameter | Region 1 | Region 2 | Region 3 |
|---|---|---|---|
| a (see Equation (4)) | 0.7931 | 1.3836 | 0.6662 |
| b (see Equation (4)) | 1.2388 | 0.7065 | 1.2651 |
| Coefficient of determination R² | 0.6220 | 0.9787 | 0.8007 |
| Predicted weight (kg) | 976.7 | 922.4 | 936.1 |
| Measured weight (kg) | 976.0 | 897.0 | 1050.5 |
| Error on weight (%) | 1.0 | 2.8 | −11.5 |
| Predicted EVOO (L) | 174.2 | 165.4 | 161.8 |
| Measured EVOO (L) | 175.8 | 161.6 | 181.5 |

| | Predicted weight (kg) | Error on weight (%) | Predicted EVOO (L) | Error on EVOO (%) |
|---|---|---|---|---|
| Region 1 | 1208.9 | 16.7 | 214.7 | 17.6 |
| Region 2 | 984.7 | −3.8 | 174.7 | −3.0 |
| Region 3 | 1032.7 | 0.99 | 180.0 | 1.9 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Ortenzi, L.; Violino, S.; Pallottino, F.; Figorilli, S.; Vasta, S.; Tocci, F.; Antonucci, F.; Imperi, G.; Costa, C. Early Estimation of Olive Production from Light Drone Orthophoto, through Canopy Radius. Drones 2021, 5, 118. https://doi.org/10.3390/drones5040118