1. Introduction

Collaborative multi-robot SLAM technology has advanced significantly in recent years. For example, prior work [

1] developed a collaborative SLAM framework for air–ground scenarios involving multiple unmanned platforms. This framework supports global map initialization by matching visual feature points without the need for GNSS information and generates loop closures during the subsequent motion of the platforms. However, in practical applications, unmanned platforms often follow non-overlapping or diverging paths to conserve energy and maximize operational efficiency. This divergence complicates the generation of positional loop closures between platforms after initialization. In large-scale outdoor tasks, if global localization information such as GNSS is lacking, the localization error of each unmanned platform will continuously diverge, rendering the localization results unusable after a period of time due to excessive drift error. The work described in this paper aims to further improve the positioning accuracy of multi-agent collaborative localization without relying on GNSS in order to promote the widespread application of this technology in outdoor rescue, collaborative logistics transportation, indoor or cave exploration, and other application scenarios where GNSS positioning is denied. To constrain the drift of odometry, the fusion of single unmanned platform odometry with UWB positioning information has become a mature technology. For example, Yang et al.’s previous research [

2] adopted an improved extended Kalman filter-based scheme to fuse UWB positioning information with IMU. Hashim et al. achieved better results using a nonlinear stochastic complementary filter [

3]. Nguyen et al.’s work [

4] employed a factor graph optimization-based approach to tightly couple camera, IMU, and UWB positioning information. Shin et al. [

5] designed a robust odometry that fuses VIO with UWB information using a cost function based on mutual information. Xu developed a dual free-size least squares support vector machines assisted maximum correntropy Kalman filter for fusing INS and UWB data [

6]. Cao et al. combined multiple methods to improve the accuracy of positioning using UWB ranging alone in complex interference conditions [

7]. In the multi-agent domain, Zhou et al. integrated UWB positioning information with laser odometry in multi-robot systems [

8]. This method fuses three-dimensional position information calculated from known UWB anchor positions and UWB ranging information. Although the performance of the aforementioned prior research has been validated in small-scale scenarios, the positioning accuracy is significantly affected when the UWB tag moves outside the area surrounded by the anchors during the UWB positioning process. Therefore, the above methods that fuse UWB positioning information are not applicable when the agent’s motion range is extremely large or when it is impossible to deploy or calibrate anchors in advance. Unlike the aforementioned studies, the method used in this paper does not require fixed anchors and relies solely on the UWB ranging information between two agents. This is similar to the following works. Nguyen et al. [

9] proposed a method that utilizes UWB ranging information and odometry measurements from neighboring robots to obtain more accurate global position estimates. Liu et al. proposed a distributed SLAM framework [

10] that uses Pairwise Consistency Maximization (PCM) to examine the quality of loop closures and perform outlier rejections. Cai et al.’s method [

11] divides UWB measurements into trusted or untrusted states using an adaptive threshold and finally establishes a factor graph model to optimize poses. Nguyen et al.’s latest research [

12] addresses the problem of estimating the four-degree-of-freedom (three-dimensional position and orientation) robot-to-robot relative coordinate transformation using onboard odometry and inter-robot distance measurements. However, in the experiments described in the above works, the motion range of all agents is within a few tens of meters or even a few meters. Considering this, the drift of VIO and the ranging error of UWB are both at a very low order of magnitude. If the UWB measurement is affected by Non-Line-of-Sight (NLOS) interference, the localization accuracy can be improved by directly removing the outliers in the UWB ranging values. However, when the length of the agent’s motion trajectory reaches several kilometers or more, and the distance between two agents reaches several hundred meters, the localization results of VIO will gradually become unreliable due to drift error. In this case, even if the UWB ranging is affected by factors such as NLOS or multipath effects, it can still provide effective constraints for the global position. Especially in complex outdoor environments, it is impossible to guarantee that there will always be Line-of-Sight (LOS) UWB measurements between agents during the motion process.

In addition to the cooperative and UWB-based methods mentioned above, the work also relates to recent research exploring object-based, multi-sensor, and robust SLAM frameworks in changed or large-scale environments. For instance, ObVi-SLAM [

13] tackles long-term consistency by building an object-centric map with uncertainty updates, enabling robust outdoor localization under seasonal/weather changes. LiDAR-UWB-INS integrated positioning approaches [

14] further show benefits in sparse-feature environments by leveraging LiDAR super-resolution and multi-sensor fusion to mitigate drifting. Some systems focus on characterizing or mitigating non-line-of-sight conditions for UWB measurements, such as a reliable UWB/INS approach [

15] and magnetic-UWB fusion [

16], both demonstrating improved accuracy under challenging signal occlusions. Moreover, classification-based UWB methods [

17] employ learning models (e.g., LSTM) to adaptively identify distance errors under heavy multipath or outliers, making UWB more robust in large-scale tasks. Meanwhile, PIPO-SLAM [

18] explores a lightweight visual–inertial backend with “pose-only” multiple-view geometry factorization, reducing the complexity and memory usage compared to classic BA. Recent range-aided visual–inertial estimation has also progressed rapidly. For example, Goudar et al. proposed a range–visual–inertial fusion framework for micro aerial vehicles [

19]. Ma et al. introduced VIRAA-SLAM, which tightly couples visual–inertial measurements with UWB range and angle-of-arrival constraints for robust localization without pre-calibrated anchor positions [

20]. For swarm-scale relative localization, Zhao et al. presented a robust and scalable multi-robot localization method based on stereo UWB arrays [

21]. Beyond model design, Stirling et al. studied Gaussian variational inference with non-Gaussian factors for state estimation and demonstrated improved robustness for UWB localization under heavy-tailed NLOS/multipath errors [

22]. Public resources such as the MILUV dataset further facilitate benchmarking of multi-UAV UWB–vision fusion algorithms [

23]. Compared with these methods, the proposed framework integrates inter-platform UWB distance constraints into a factor-graph-based collaborative SLAM backend and self-tunes the confidence of UWB factors using VIO state covariance and measurement–state residuals. The proposed method does not require scene object detection [

13], does not rely on pre-surveyed static anchors or a single sensor being fully robust [

14,

15,

16,

17], and does not eliminate 3D point parameterization altogether [

18]. Instead, it targets a balanced design for GNSS-denied, large-scale multi-robot deployments where UWB measurements are intermittently NLOS. The key distinguishing features are (i) anchor-free fusion using only inter-robot ranges; (ii) covariance-aware outlier rejection to avoid early-stage bias when VIO is still accurate; and (iii) adaptive Fisher-information weighting for time-varying UWB uncertainty, thereby sustaining accurate global localization at large scales.

In the proposed method, UWB ranging information is fused into a factor graph optimization-based collaborative SLAM algorithm framework. The pairwise ranging information between unmanned platforms provides distance constraints on the global map, thereby suppressing VIO drift. During the modeling process, UWB ranging is considered as a Dual One-way Ranging (DOWR) measurement, which is a non-coherent ranging based on signal time-of-flight. Since it is subject to interference from unknown external environments, the entire optimization problem can be regarded as the fusion of measurement information with unknown noise distribution on the basis of traditional visual–inertial bundle adjustment (BA) optimization. The processing of UWB measurement information can be divided into two steps: first, the drift error of VIO is estimated through the covariance of the states in the single-platform VIO factor graph optimization process. If the residual between the UWB measured distance and the distance obtained by the VIO of the two agents is greater than the estimated VIO drift error at this time, the UWB ranging is directly determined as an outlier. Finally, in the global optimization process, the Fisher information of UWB ranging is estimated using the residuals between the state variables and the UWB ranging. The main contributions of this paper are as follows:

- (1)

For the UWB ranging information in the collaborative localization algorithm framework, a method for outlier rejection of UWB point-to-point ranging under NLOS conditions based on state covariance estimation is proposed.

- (2)

In the factor graph optimization process that fuses visual–inertial and UWB information, the covariance of the UWB ranging factor is estimated using the residuals between the states and measurements, improving the accuracy of the final position estimation while fully utilizing the measurement information.

- (3)

Finally, the effectiveness of the method is demonstrated through real-world experiments.

2. Materials and Methods

2.1. Collaborative Localization Algorithm Overview

In this paper, the main agent refers to the unmanned ground vehicle (UGV) due to its superior on-board computational power. Other unmanned platforms, such as unmanned aerial vehicles (UAVs), are considered secondary agents. The system is divided into two parts: each agent’s independent program and the server program. These programs run separately on each agent platform and the server. The server program is deployed on the main agent, which means the UGV needs to run both the independent program and the server program simultaneously. The global coordinate system {W} is aligned with the local coordinate system of the main agent. The coordinate system {B} is defined with the first frame of the agent’s camera as the origin. The camera optical axis is set as the

x-axis, the positive direction of pixel rows as the

y-axis, and the negative direction of pixel columns as the

z-axis. Each agent runs a modified version of ORB-SLAM3 (open-source C++ library; UZ-SLAMLab, University of Zaragoza, Zaragoza, Spain) [

24] with visual–inertial odometry (VIO). Due to the proximity of the initial positions, each agent can achieve loop closure through visual alignment at the time of departure. By relaxing the initial loop-closure constraints, each agent estimates an initial rigid-body transform TBnW between its local coordinate system {Bn} and the global coordinate system {W}. The loop closure detection and pose recognition methods in the global map follow previous work [

1]. In this paper, the adjustment of each agent’s position in the global map is constrained by both visual loop closure and UWB range measurements. The overall collaborative localization pipeline is shown in

Figure 1.

At time step

ti, during the motion of a given agent

n, its locally running VIO continuously updates the pose

pBn,i ∈ SE(3) in the local coordinate system {

Bn}, as well as the related map points. Meanwhile, agent

n retrieves the poses of other agents in the global coordinate system {

W} from the server. Using the method described in

Section 2.3, the raw UWB readings are converted into distances between keyframes. By comparing the variance of the VIO states with the residuals between the state variables and the measurements, outliers are identified and removed.

The keyframe poses pWn,i and pWm,j (poses in {W}) corresponding to the UWB measurements, as well as the signals of newly observed map points for the keyframe, are transmitted to the server.

The data packet transmitted from the secondary agents to the main agent contains newly added keyframe information from the last transmission to the current moment. This includes the pose of the keyframe in the local map of the current agent, as well as UWB measurements related to other agents (if available, see

Section 2.3 of this chapter for details). Additionally, the data packet includes information about newly added map points, such as the descriptors of the map points and their 3D positions in the local map of the current agent. Due to the centralized communication structure, simultaneous data transmissions from multiple secondary agents can impose significant bandwidth pressure on the main agent. To address this issue, the communication interface of the secondary agent is designed to send a transmission request to the main agent only when at least 5 s have elapsed since the last data transmission and a minimum of 30 keyframes have been accumulated. Data transmission only begins when the main agent’s available bandwidth meets the required conditions. Once the server receives the augmented keyframe information from all agents, a global optimization is performed based on the method described in

Section 2.2. The optimized global poses are then updated, and the corrected poses are sent back to all agents. Each agent adjusts the positions of its local map points and keyframes accordingly. It is worth noting that during the global optimization process at the server level, the Fisher information of each UWB measurement is replaced with the estimated value obtained by the method described in

Section 2.3.

2.2. Collaborative Position Estimation Integrating VIO and Ranging Information

The proposed algorithm is deployed on a central server, integrating the keyframes, map points, and UWB information collected from various unmanned platforms. Once the server receives the measurement data of each agent through the communication module, the optimization problem is formulated using a factor graph model. Similar factor-graph-based range-aided visual–inertial fusion frameworks have been adopted in recent work [

19,

20]. Let

X represent the set of state variables and

Z represent the set of measurement variables. The state estimation problem is formulated as the maximum a posteriori estimation of the state given the measurements:

The function

h(

x) represents the measurement model for the state variables. The residual between the state

xi at time

i and the measurement

zi is defined as

The cost function

c(

x) represents the total measurement cost of the state estimation, which is defined as

In the above equation,

denotes the Mahalanobis distance. The cost functions

cVISION(

X),

cIMU (

X), and

cUWB (

X) correspond to the visual, IMU-predicted, and UWB measurement costs, respectively. When

l 3D points are observed by visual tracking, the set of keyframes observing the

j-th 3Dpoint is denoted as

Kj. The specific forms of the cost functions are as follows:

The specific forms of the visual residual

rVISION(X) and the IMU-predicted residual

rIMU(X) follow ORB-SLAM3. At time

ti, the specific form of the UWB residual

rUWB(X) is as follows:

In the visual cost function

cVISION(

X), Hub(∙) represents the Huber robust kernel function. The information matrix of the visual reprojection residual is the inverse of the covariance matrix ∑

i,j of the visual reprojection error, which is associated with the pyramid level and scale of the FAST feature points extracted. For the IMU cost function

cIMU(

X), the covariance matrix of the IMU prediction is derived through noise back-propagation using Allan variance due to the interference of external measurement error functions, which are expressed as

The pose optimization problem described in this paper can be represented by the factor graph model shown in

Figure 2.

Assuming that the state variables of the system follow a Gaussian distribution, Bayesian inference indicates that the posterior probability of the state variables is proportional to the product of the error functions. Taking the negative logarithm of the product of the error functions yields the posterior probability density function of the state variables

X given the measurements

Z, which is proportional to the sum of the residuals at each time step for each sensor:

Thus, the maximum a posteriori estimation problem is transformed into a nonlinear least squares optimization problem:

2.3. Processing of UWB Ranging

Since the sampling frequency of the UWB receiver/transmitter ranges from 100 to 400 Hz, during the global optimization process, we only consider the distance relationships between keyframes. If each measurement is fused directly, it would significantly increase the computational load. To make full use of as much UWB measurement information as possible and save computational resources, the original multiple UWB data are transformed into a single distance measurement between keyframes. The algorithm introduced in this section runs locally on each agent, used to fuse multiple measurement data and associate them with a specific keyframe, while excluding measurements that may negatively impact subsequent global optimization.

When an agent obtains a raw UWB measurement Rhn→m at time th, this measurement needs to be associated with the closest keyframe ki of the agent on the time axis at time ti. All measurements associated with ki from tx to ty are denoted as {Rx, Ri, …, Ry}.

Based on the VIO localization results of two neighboring frames at time

th, the positions of agent

n and agent

m at time

th are calculated using a constant velocity motion model as

Phn and

Phm, respectively. The residual between the distance calculated by the VIO localization result and the UWB measurement at time

th is defined as

rhn→m:

Similarly, all measurements correspond to a residual set, denoted as {rx, ri, …, ry}. Since the time interval between keyframes is short (commonly within 0.3–1 s in ORB-SLAM), it is assumed that the external environment of this group of UWB measurements does not change significantly. The errors of this group of UWB measurements over a short time are assumed to follow a normal distribution. The proposed method removes outliers through the following steps:

- (1)

Perform Anderson-Darling test to determine whether {rx, ri, …, ry} follows a normal distribution. The significance level α is set to α = 0.1. If the test is not passed, the entire group of measurements is classified as outliers.

- (2)

For the remaining residuals, compute the median ; and the median absolute deviation (MAD). For each residual ri, define the corrected standardized distance zi = (ri − )/(c·MAD), where c ≈ 1.483 for Gaussian data. Residuals with |zi| > kappa are treated as outliers. In this work, kappa = 3 is used as a conservative default; it can be tuned according to the chip specification and field conditions.

- (3)

Let N be the total number of residuals in the current keyframe interval, and let n be the number of remaining inliers. If n < N/3, the whole interval is considered unreliable (e.g., strong interference) and is discarded; otherwise, the inlier sequence is fused to a single keyframe-to-keyframe range using a Kalman filter at time ti.

If a valid measurement sequence is obtained through the above steps, all valid measurements are used to estimate the distance Rin→m from agent n to agent m at time ti using a Kalman filter. Here, it is assumed that the unmanned platforms follow a constant velocity motion model, and the state transition matrix in the Kalman filter is set to 1. The corresponding frame of agent m at time ti is also treated as a keyframe and sent to the server.

The predicted distance

||pin −

pim|| is compared with the UWB-measured distance

Rin→m and combined with the uncertainty in position estimation (i.e., the covariance matrix of state estimation) to evaluate whether the current

Rin→m is accurate, thereby determining whether it can provide valid information for state estimation. Assume that the covariance matrices of the positions of the two agents in the previous optimization step are

ΣnVIO and

ΣmVIO, respectively. The variance of the predicted distance is calculated based on the law of error propagation as

Since the noise variance of UWB ranging is unknown and variable, we can use the uncertainty

σ2VIO of the predicted distance to evaluate whether the measurement residual

rin→m is within a reasonable range. The standardized residual is defined as

Assuming that the measurement errors follow a Gaussian distribution, the square of the Mahalanobis distance follows a chi-squared distribution with 1 degree of freedom. The threshold is set to γ < 0.71, corresponding to a 30% confidence level under the Gaussian assumption. If this threshold is exceeded, the UWB ranging measurement corresponding to this keyframe is considered invalid and removed.

2.4. Adaptive Fisher Information Estimation of UWB Ranging Factor

Before each global pose optimization begins, it is necessary to estimate the Fisher information of the newly added keyframes’ UWB range measurements, thus highlighting the importance of UWB in the overall optimization. Suppose that at time

ti, agents

n and

m occupy positions

pin and

pim, respectively, and there is a valid UWB measurement

Rin→m. For simplicity, this section only considers the state variables of two platforms and their direct range measurement; for multiple platforms, an extension with multiple pairwise measurements can be applied. Let the state estimate from the previous global optimization be

with the corresponding covariance

Assuming that at time

ti, the prior state distribution of agents

n and

m follows a Gaussian distribution, we can write

When a new range measurement is introduced into the global optimization, we aim to obtain a posterior distribution

Qi(

x) to characterize the new state uncertainty after considering the UWB range measurement. Since the noise distribution of UWB is unknown (often non-Gaussian under NLOS/multipath [

22]), we only know that

Rin→m is related to the true distance in some way. To avoid overly strong assumptions about the distribution, we can adopt the method of “expectation equals the measured value” to represent this measurement constraint:

Among all feasible posterior distributions

Qi(

x) satisfying the above measurement constraint, we choose the one that is “closest” to the prior distribution

Pi(

x). If we use the KL divergence to measure the information gain between

Q*(

x) and

P(

x), we have

To minimize the KL divergence, we can formulate the problem as

subject to

This is a typical minimum KL-divergence problem with a linear constraint, which can be solved using the method of Lagrange multipliers. Let λ be the Lagrange multiplier corresponding to the distance expectation constraint, ensuring that the expected distance equals the UWB measurement:

Let α be the Lagrange multiplier corresponding to the normalization constraint. The Lagrangian functional is then constructed as

By taking the functional derivative of

Qi(

x) and setting it to zero, we obtain the optimal solution:

where

Z(

λ) is the normalization constant defined by

Taking the negative logarithm with respect to

x, we obtain

We can view ln

Qi*(

x) as the “additional cost function” introduced by this range measurement. We ignore the part involving

Pi(

x) because it is already included in the prior or in other factors; the truly new penalty term from the measurement is

In standard nonlinear least-squares factor-graph optimization, when performing Nonlinear Least Squares type factor-graph optimization, we typically expect each measurement factor to contribute a quadratic term involving the measurement residual and the information matrix. However, it is clearly not a quadratic form but rather a weighted distance. Therefore, we need to perform a second-order expansion with respect to the state x* i.

For convenience, let

be denoted by

f (

x), where

x =

μi, and take the first-and second-order derivatives of

f at

x =

μi:

Then, performing a second-order expansion of

f(

x) around

x* yields

Substituting the above notation, this can be expressed as

If we consider

f(

x) to be the cost function described in

Section 2.2, the current UWB cost function for the state

X can be written as

We can interpret the “second-order” term as a form of

residual

T information residual, leading us to approximate the information matrix by

The multiplier

λi is obtained by enforcing the constraint that the expected distance under the posterior equals the UWB measurement, i.e.,

E (under

Qi*) [

d(

x)] =

Ri. Because the corresponding integral has no closed-form solution,

λi is solved numerically. In practice, the prior distribution is approximated by samples (or sigma points), and

λi is found with a one-dimensional search (e.g., bisection or golden-section search).

Given samples

x(s) from the prior

N(

μi, Σ

i), the expectation

E (under

Qi*) [

d(

x)] can be evaluated with normalized weights proportional to exp(−

λ·

d(

x(s))). After

λi is obtained, the resulting posterior can be interpreted as a prior updated by a range-consistency factor. During successive global optimizations, the weights of the ranging factors converge to reasonable values: when the discrepancy between the measurement and the prior is large (typical under NLOS),

λi becomes smaller; when the measurement is consistent with the prior,

λi becomes larger. The procedure is summarized in Algorithm 1.

| Algorithm 1. Adaptive Fisher-information estimation for a UWB range factor |

Input: prior mean/covariance (μi, Σi) from the last global optimization; UWB range measurement Ri for agents n and m at keyframe time ti.

Sample: draw S samples x(s) ~ N(μi, Σi) (or use sigma points) and compute distances d(s) = ||pn(s) − pm(s)||.

Search: find λi ≥ 0 such that the weighted expectation ∑s ws(λi)·d(s) matches Ri, where ws(λ) ∝ exp(−λ·d(s)).

Update: use the local second-order approximation (around μi) to convert the tilted posterior into an equivalent quadratic factor and compute the information matrix (31).

Output: adaptive information (weight) for the UWB factor to be used in the next global optimization. |

3. Experimental Evaluation

3.1. Experiment Setup

To validate the effectiveness of the proposed method, we conducted experiments on a system comprising three UAVs and two UGVs. Each platform was equipped with identical sensors, with image and IMU data provided by the RealSense D455 (Intel Corporation, Santa Clara, CA, USA), and the UWB module based on the DW1000 chip (Decawave Ltd., Dublin, Ireland). The UAVs were equipped with vertically polarized UWB antennas with a gain of 6.3 dBi, while the UGVs used UWB antennas with a gain of 14.2 dBi. Supported by high-gain antennas, the selected UWB module achieved a maximum ranging distance of up to 1.5 km under LOS conditions, with an average ranging error of +0.6 m. Under NLOS conditions, depending on the type of obstacle, the maximum ranging distance still exceeded 500 m, though the maximum ranging error could exceed 20 m.

The ground truth of the platform trajectories was obtained by fusing data from a high-performance MTI300 IMU (Xsens Technologies B.V., Enschede, The Netherlands) and GPS corrected with RTK. Both the UAVs and UGVs utilized the NVIDIA AGX platform (NVIDIA Corporation, Santa Clara, CA, USA) as their main control unit. The server used in this experiment was equipped with an AMD 5995WX processor (Advanced Micro Devices, Inc., Santa Clara, CA, USA) and 128 GB of memory. The UAV and UGV platforms and sensor/antenna placement are shown in

Figure 3.

All sensor extrinsics (GNSS, UWB, IMU, and camera) were calibrated prior to the experiments.

The experiments were conducted at two locations: the multi-level rooftop of the School of Automation building at Nanjing University of Science and Technology and a forested area with open spaces to the north of the building, as shown in

Figure 4. The total area spanned approximately 1.6 square kilometers and included various obstacles that could affect UWB ranging, such as walls, wire fences, small structures, reinforced concrete buildings, and large trees.

Eight experimental groups were conducted: four on the multi-level rooftop of the School of Automation building and four in the nearby forest/open area (

Figure 4). The full platform set consists of three UAVs and two UGVs; depending on site layout and safety constraints, a subset of agents may be used in a given group (

Table 1). Each dataset includes stereo images and IMU measurements from the RealSense sensors, navigation data from the MTI300 unit, and RTK-corrected GNSS for ground truth.

During the physical experiments, the main agent received data packets from each secondary agent at an average interval of 9.7 s, with an average packet size of 1.7 MB. Since the system adopts a centralized architecture, the bandwidth pressure increases linearly with the number of secondary agents.

3.2. Experimental Results

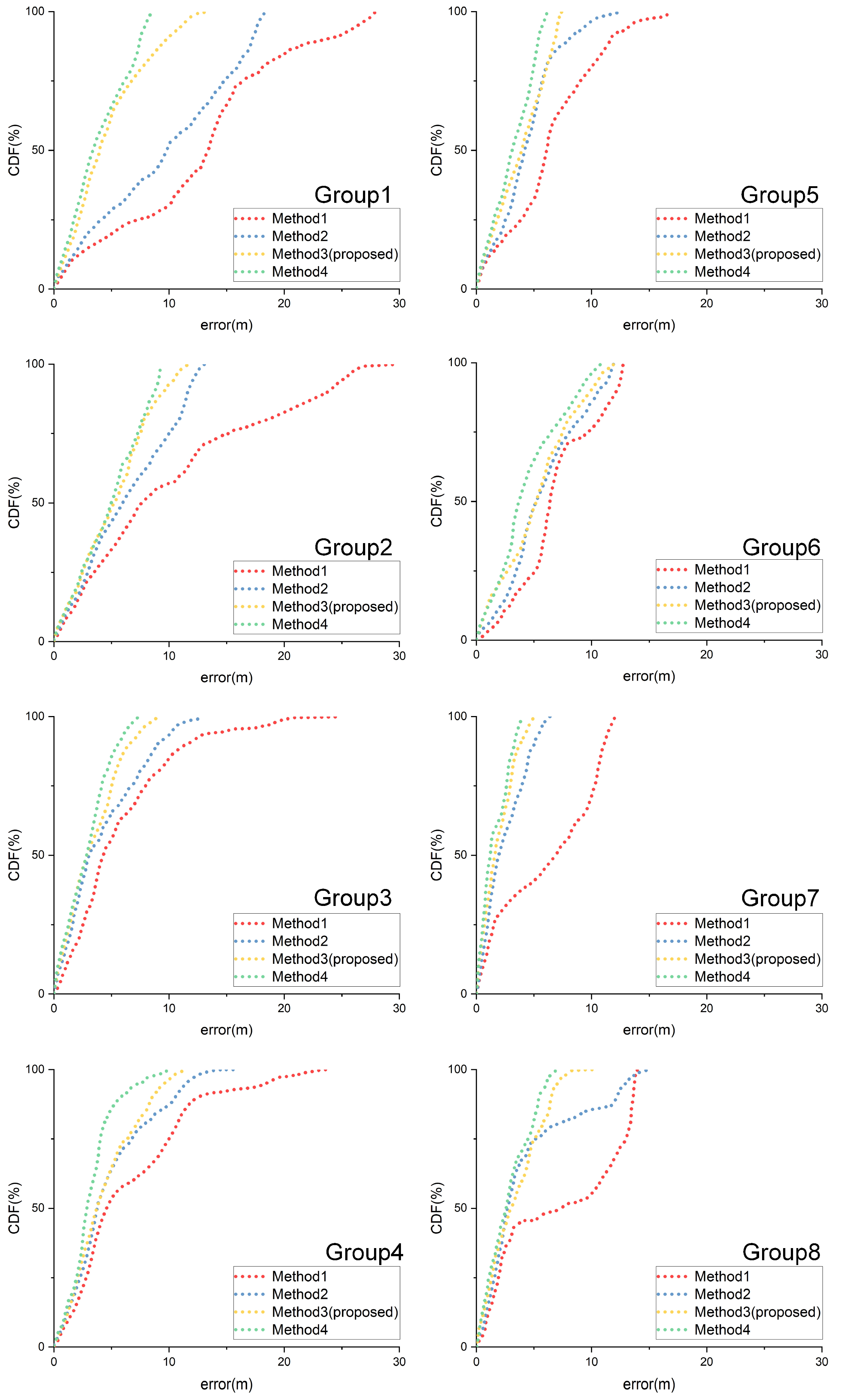

To evaluate the contributions of the proposed components (covariance-based UWB outlier rejection and adaptive Fisher-information weighting), we conduct an ablation study with four methods:

Method 1 (Baseline): Non-cooperative single-platform localization using monocular-inertial ORB-SLAM3 (VIO only).

Method 2: Covariance-based UWB outlier rejection (

Section 2.3) + fixed UWB weight (information set to 1) in the global factor graph optimization.

Method 3 (Proposed): Method 2 + adaptive Fisher-information estimation for UWB factors (

Section 2.4).

Method 4 (Idealized reference): Oracle UWB outlier removal using ground truth and weights set to the inverse of the true UWB error variance (upper-bound reference).

To independently evaluate the impact of UWB ranging constraints on localization accuracy, visual loop closure detection was disabled in all methods during the experimental process.

This progressive ablation clearly reveals the individual and combined effectiveness of the proposed components. Due to space limitations,

Figure 5 only shows the true value of the trajectory of one experiment (experimental group 1) and the trajectory obtained by the proposed method.

Figure 6 shows the error curves of the positioning of each unmanned platform in Experimental Group 1 using four methods.

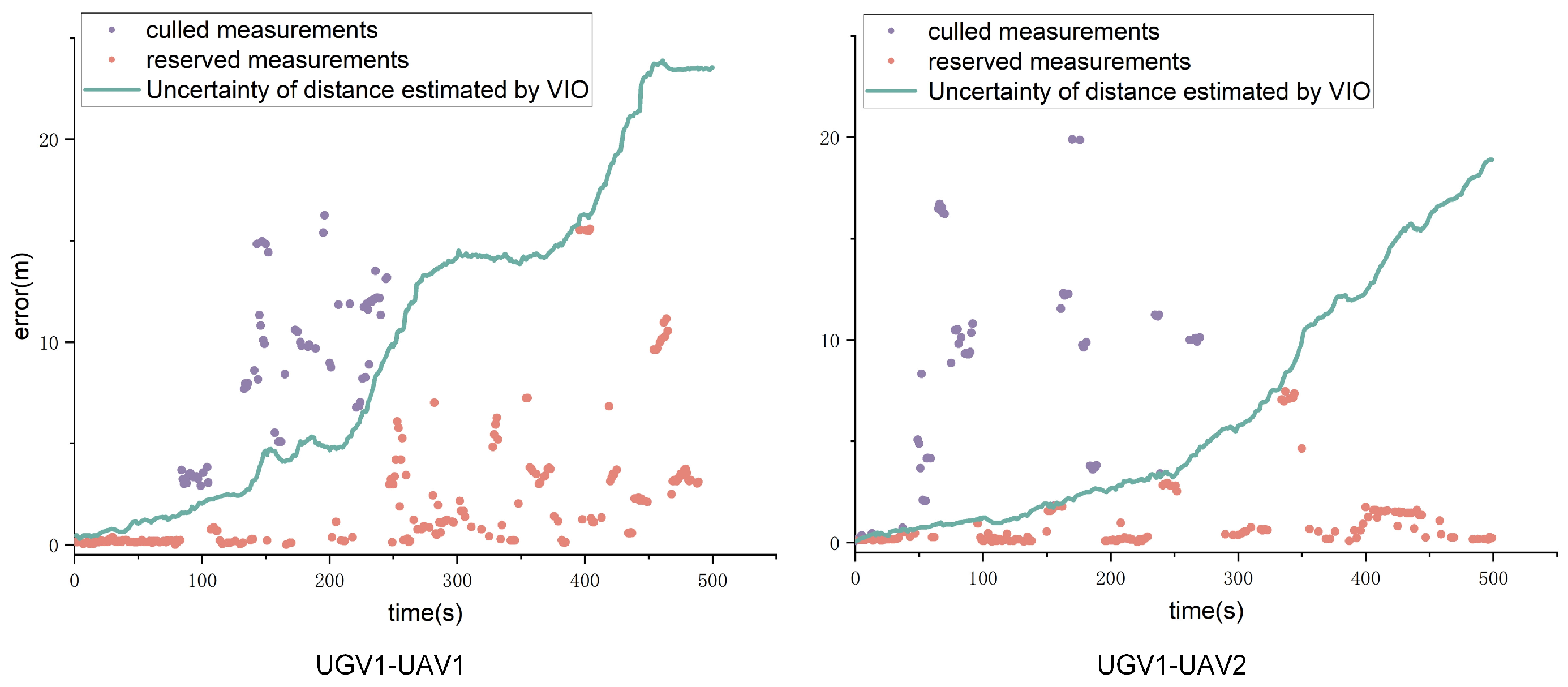

Figure 7 shows the UWB measurements of UGV1 relative to UAV1 and UAV2 in Experimental Group 1 and describes how to eliminate measurements that may provide effective information for subsequent optimization through the positioning confidence of UGV1′s VIO. UGV1 is selected here because the UWB measurements of the unmanned vehicle are subject to more NLOS interference.

Figure 8 shows the cumulative distribution functions (CDFs) curves of the error of the eight groups of experiments.

Figure 9 describes the error of the UWB measurement of each of the eight groups of experiments, which reflects the degree of interference to the UWB ranging of the unmanned platform during movement.

Localization accuracy is evaluated using the position root mean square error (RMSE) and the maximum position error (MPE) [

25]. RMSE summarizes the average error over the trajectory, while MPE captures the worst-case deviation, which is important for safety-critical navigation. The results for all eight experimental groups are summarized in

Table 2.

5. Conclusions

This study proposes a multi-robot collaborative localization method based on the fusion of UWB and VIO, aiming to address the challenges of collaborative localization for unmanned platforms in large-scale and complex environments. By dynamically estimating the Fisher information of UWB measurements and leveraging state covariance for outlier rejection, the proposed method effectively suppresses VIO drift errors and improves global localization accuracy and robustness without relying on fixed anchors.

The method was validated through multiple experiments conducted in multi-level rooftop areas, forested regions, and open spaces. Experimental results demonstrate that the proposed method significantly outperforms traditional single-platform localization and unoptimized collaborative localization approaches, particularly in scenarios where UWB signals are heavily disturbed. In GNSS-denied environments, the proposed method exhibits high localization accuracy and adaptability in long-distance movements and complex environments.

In the future, the proposed method has the potential to be applied in various practical scenarios, such as disaster rescue, collaborative logistics, and underground exploration. Additionally, integrating data from complementary sensors (e.g, LiDAR or millimeter-wave radar) and exploring advanced distributed optimization algorithms represent promising directions for further improving system performance.

Beyond the current evaluation, the framework can be strengthened by explicitly studying scalability (agent count, communication delay, and packet loss) and by benchmarking against representative distributed backends under matched sensing and communication assumptions. In addition, reporting percentile-based error statistics (e.g., 95th percentile) alongside RMSE and MPE would further characterize tail behavior in safety-critical deployments.

Finally, combining the proposed model-based weighting with learned NLOS classification or signal-quality features is a promising hybrid direction: learning can provide fast, environment-adaptive priors, while the factor-graph backend preserves global consistency and uncertainty propagation.