Information Geometry Conflicts With Independence

Abstract

1. Introduction

2. Counter-Example

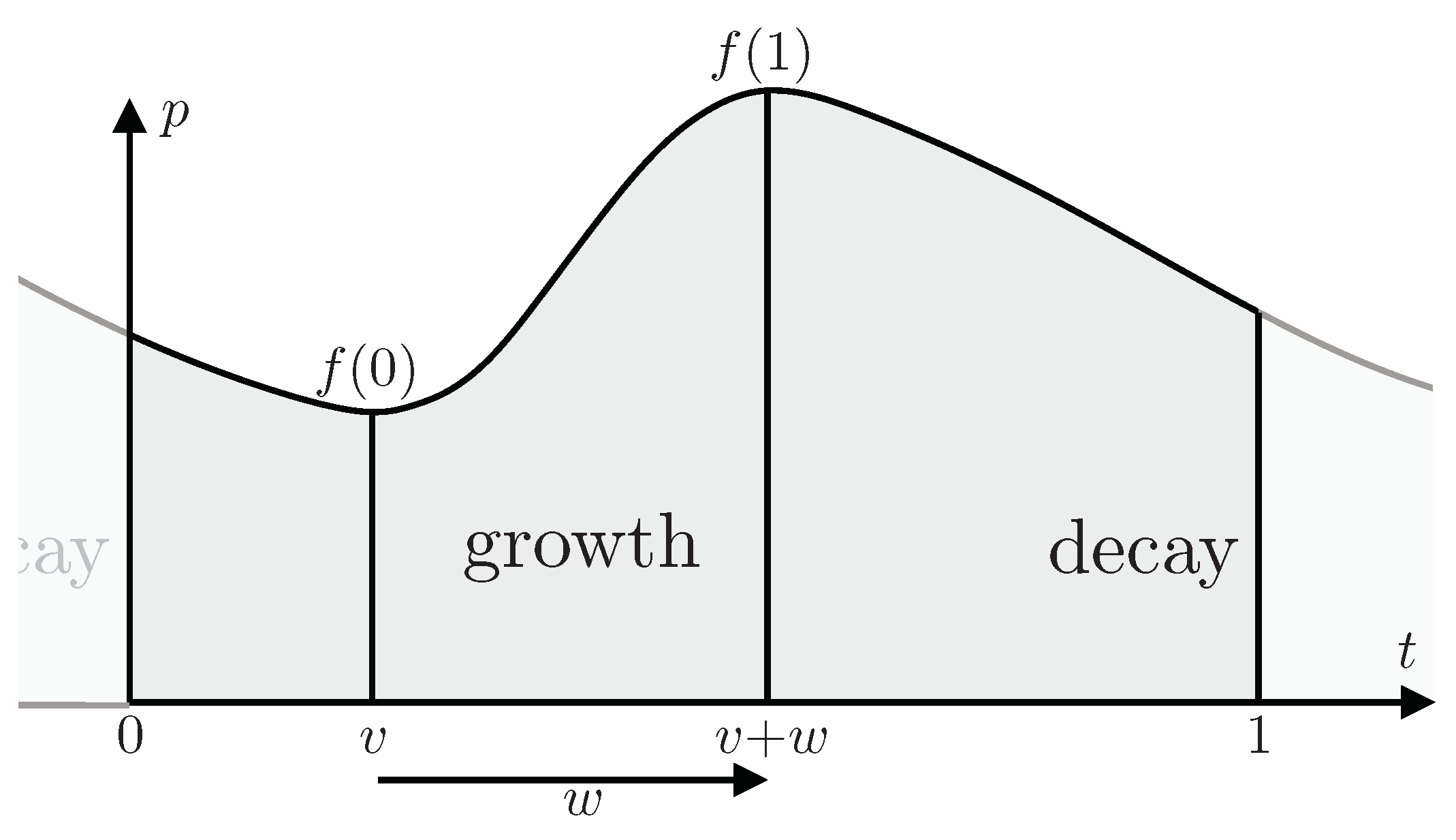

2.1. Two Parameters v and w

2.2. One Parameter w

2.3. Comparison of One and Two Parameters

2.4. Science

Treatment of v should not influence inference about w. [Science]
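The following is a minimal Python sketch of the principle that treatment of an independent parameter v should not influence inference about w. It is not the counter-example of Sections 2.1 to 2.3, whose details are not reproduced here. As a representative information-geometric construction, it assumes the Fisher-Rao geodesic distance between discrete distributions, 2 arccos Σ√(p_i q_i) (one common normalization), and contrasts it with the Kullback-Leibler divergence. When v and w are independent, the joint distribution factorizes as p(v)p(w). The distributions and the helper `w_contribution` are hypothetical and were chosen only for illustration.

```python
import math


def kl(p, q):
    """Kullback-Leibler divergence sum_i p_i log(p_i / q_i), in nats."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def fisher_rao(p, q):
    """Fisher-Rao geodesic distance between discrete distributions,
    2 * arccos(sum_i sqrt(p_i q_i)) in one common normalization."""
    bc = sum(math.sqrt(pi * qi) for pi, qi in zip(p, q))
    return 2.0 * math.acos(min(1.0, bc))  # clamp rounding error above 1


def product(a, b):
    """Joint distribution of independent v and w: p(v, w) = a(v) * b(w)."""
    return [ai * bj for ai in a for bj in b]


# Hypothetical two-outcome distributions, chosen only for illustration.
w_true, w_model = [0.5, 0.5], [0.8, 0.2]
v_match = [0.3, 0.7]                      # v treated the same on both sides
v_true, v_model = [0.5, 0.5], [0.1, 0.9]  # v treated differently


def w_contribution(measure, v_p, v_q):
    """Hypothetical helper: extra divergence attributable to the w-mismatch,
    for a given treatment (v_p, v_q) of the independent parameter v."""
    with_w = measure(product(v_p, w_true), product(v_q, w_model))
    without_w = measure(product(v_p, w_true), product(v_q, w_true))
    return with_w - without_w


for label, vp, vq in [("v matched", v_match, v_match),
                      ("v mismatched", v_true, v_model)]:
    print(label,
          "KL:", round(w_contribution(kl, vp, vq), 4),
          "Fisher-Rao:", round(w_contribution(fisher_rao, vp, vq), 4))
```

Under these assumptions, the KL column is the same in both rows because KL(p_v p_w ‖ q_v q_w) = KL(p_v ‖ q_v) + KL(p_w ‖ q_w). The Fisher-Rao column changes between rows. The Bhattacharyya coefficients multiply, so cos(d/2) = cos(d_v/2) cos(d_w/2), and the distance attributed to w therefore depends on how v was treated.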

3. Conclusions

Funding

Acknowledgments

Conflicts of Interest

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Skilling, J. Information Geometry Conflicts With Independence. Proceedings 2019, 33, 20. https://doi.org/10.3390/proceedings2019033020