Abstract

Multiple choice questions play an important role in self-evaluation. This work presents the design of a computer tool, an Excel® worksheet, that calculates the parameters usually employed to evaluate tests: difficulty, discrimination index, consistency, etc. The tool is used to evaluate the suitability of multiple choice tests in a practical case: competency-based self-learning in the academic field of Pharmaceutical Technology of the Pharmacy Degree at Complutense University of Madrid. This easily accessible tool makes it possible to evaluate tests from empirical evidence against the desired psychometric requirements, with the aim of supporting student self-learning.

1. Introduction

Multiple choice exams now play an important role in the evaluation process [1].

The involvement of students in creating tests, the difficulty of devising them, and the importance of a correct design if they are to serve as a useful resource in the teaching-learning process all call for an individual evaluation of the items, so that their selection can be rationalized [1].

It is therefore logical to rely on a tool that evaluates the quality of each question in a multiple choice exam individually. Such a tool allows an appropriate selection of suitable questions before they become part of the question bank used to create future exams [2,3,4,5].

In this context, the present work undertakes the design and application of a computer tool that verifies the reliability and quality of multiple choice exams. Although computer tools for this purpose already exist, such as those developed by Assessment System Corporation and Brooks [6,7], a simpler tool is proposed here: an Excel® sheet designed to calculate the different parameters used in the evaluation of the items.

2. Materials and Methods

The methodology involves selecting contents from the subject Pharmaceutical Technology and then designing and verifying a multiple choice exam. The quality, reliability and usefulness of the exams for student self-learning are analyzed using the computer tool designed. The evaluated multiple choice exams are then distributed to groups of students studying Pharmaceutical Technology (Pharmacy degree) at the Complutense University of Madrid [5].

2.1. Multiple Choice Exams Design

2.1.1. Defining the Content to Be Studied

The more detailed the contents are, the easier it is to draft the items and the better the content validity of the exam.

2.1.2. Table of Specifications of Educational Objectives (TSEO) according to Bloom’s Taxonomy with the Following Three Levels of Knowledge

- Basic: on facts and concepts (knowledge and comprehension).

- Medium: on procedures (application and analysis).

- Higher or metacognitive (synthesis and evaluation).

The TSEO is then completed by specifying the number of items needed to adequately cover each of the aspects to be evaluated.

2.1.3. Item Creation

Multiple choice questions (PEM, from the Spanish "preguntas de elección múltiple") are written paying special attention to the incorrect options, or distractors. These must be attractive to people who do not know the correct answer or have only a superficial knowledge of the subject raised in the item, and in turn be unattractive to those who have a good knowledge of the subject evaluated.

2.2. Questions’ Psychometric Properties

The questions are analyzed once the students have answered the multiple choice exams. Using the computer tool, the following aspects are determined:

- Basic descriptive statistics.

- Item's difficulty rate (ID) and marking difficulty rate (IDc).

- Item's discrimination rate.

- Relation between discrimination and difficulty.

- Test verification: standard error of measurement (SEM).

- Test verification: reliability, through an internal consistency analysis (Cronbach's alpha coefficient).
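The item-level parameters named above can be illustrated with a minimal Python sketch. It is not the set of formulas used in the Excel sheet, which this text does not give. It assumes a 0/1 score matrix with one row per student, reads ID as the proportion of correct answers, reads IDc as the difficulty corrected for random guessing, and computes discrimination by comparing upper and lower groups of 27% of the students. The function names, the guessing correction and the 27% fraction are all assumptions made for illustration.

```python
from typing import List

Matrix = List[List[int]]  # rows = students, columns = items, cells = 0 or 1


def item_difficulty(scores: Matrix) -> List[float]:
    """Proportion of students who answered each item correctly (assumed ID)."""
    n_students = len(scores)
    n_items = len(scores[0])
    return [sum(row[j] for row in scores) / n_students for j in range(n_items)]


def corrected_difficulty(p: float, n_options: int) -> float:
    """Difficulty corrected for random guessing (assumed reading of IDc)."""
    return p - (1.0 - p) / (n_options - 1)


def discrimination_index(scores: Matrix, fraction: float = 0.27) -> List[float]:
    """Upper-lower discrimination D = p_upper - p_lower for each item."""
    n_items = len(scores[0])
    group = max(1, round(len(scores) * fraction))
    # Rank students by total score, best first.
    ranked = sorted(scores, key=sum, reverse=True)
    upper, lower = ranked[:group], ranked[-group:]
    return [
        sum(row[j] for row in upper) / group - sum(row[j] for row in lower) / group
        for j in range(n_items)
    ]


# Hypothetical data: 6 students, 4 items with 4 answer options each.
scores = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 1, 0],
    [1, 0, 0, 0],
    [1, 0, 0, 0],
]
difficulty = item_difficulty(scores)
corrected = [corrected_difficulty(p, n_options=4) for p in difficulty]
discrimination = discrimination_index(scores)
```

In this hypothetical data set the first item is answered correctly by every student, so its discrimination is zero, which anticipates the relation between difficulty and discrimination discussed in Section 2.3.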

2.3. Analysis Decisions

Decisions concern the removal of items or answer options, possible changes to the wording of the questions, etc. To establish a common evaluation criterion for these decisions, the quality indicators were related to the measures to be taken. The discrimination rate is an extremely useful parameter for selecting questions rationally. Table 1 shows the criteria suggested by Ebel in 1965 [5].

Table 1.

Criteria for the discrimination rate’s values [5].
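A criterion of this kind can be applied mechanically. The Python sketch below is only an illustration: the contents of Table 1 are not reproduced in this text, so the cut-off values and labels are assumptions, taken from the thresholds commonly attributed to Ebel in the item-analysis literature. The function name is hypothetical.

```python
def classify_discrimination(d: float) -> str:
    """Label a discrimination rate using cut-offs commonly attributed to Ebel.

    The thresholds are an assumption; they are not taken from Table 1.
    """
    if d >= 0.40:
        return "very good"
    if d >= 0.30:
        return "reasonably good"
    if d >= 0.20:
        return "marginal"
    return "poor"


# Hypothetical discrimination rates for four items.
labels = [classify_discrimination(d) for d in (0.45, 0.35, 0.25, 0.10)]
```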

Difficulty and discrimination rates are related: if an item is answered correctly by everyone (p = 1), it cannot discriminate (D = 0). The same happens with an item that nobody answers correctly (p = 0). Table 2 shows the relation between both rates.

Table 2.

Relation between the discriminating capacity of an item and its difficulty.
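The dependence of discrimination on difficulty can be made explicit with a short derivation. It is a sketch under one simplifying assumption that is not stated in the source: the upper and lower groups are the two halves of the class, with proportions of correct answers $p_U$ and $p_L$.

```latex
\begin{align*}
p &= \frac{p_U + p_L}{2}, \qquad D = p_U - p_L, \qquad 0 \le p_L,\, p_U \le 1 \\
p_U &= p + \frac{D}{2} \le 1 \;\Rightarrow\; D \le 2(1 - p) \\
p_L &= p - \frac{D}{2} \ge 0 \;\Rightarrow\; D \le 2p \\
D &\le 2\min(p,\, 1 - p)
\end{align*}
```

Under this assumption the bound is 0 for p = 1 and for p = 0, and it reaches its maximum of 1 at p = 0.5, so only items of intermediate difficulty can discriminate fully.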

3. Results and Discussion

3.1. Multiple Choice Exam Design

The TSEO is shown in Table 3: each item is related to a competence.

Table 3.

TSEO for the domain “Medicament and Pharmaceutical Technology: Introduction”.

3.2. Psychometric Properties

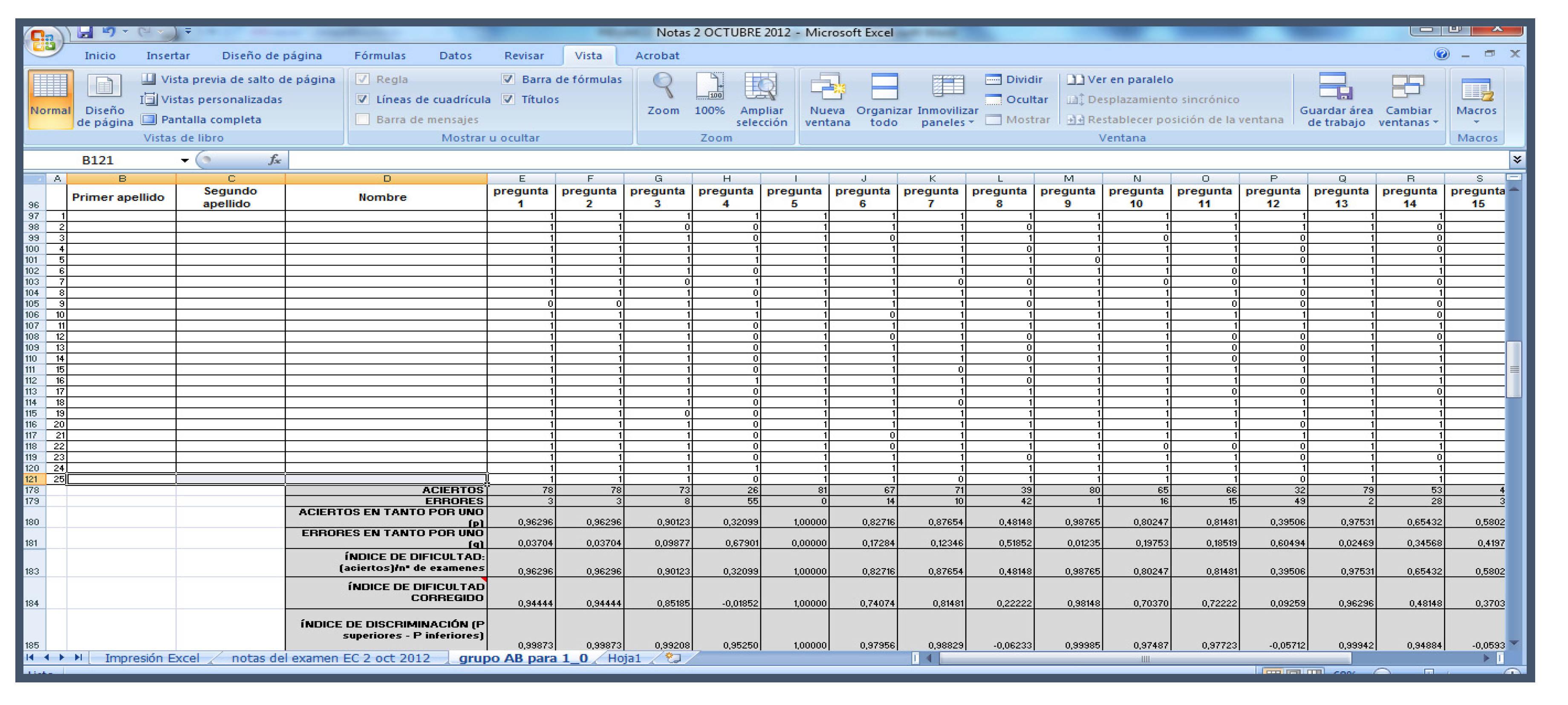

An Excel sheet is designed with the items and the students involved (Figure 1). Once the exam has been taken, the cells are filled in: “1” for correct answers and “0” for wrong answers. The formulas for calculating the parameters used to verify the exam (ID, IDc, …) are placed at the end.

Figure 1.

Excel sheet showing the values of the evaluating parameters for an exam.
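The filling of the sheet with "1" and "0" can be sketched in Python as the scoring of raw answers against an answer key. The function name, the data layout and the treatment of blank answers as wrong are assumptions made for this illustration; the source only states that correct answers are coded 1 and wrong answers 0.

```python
from typing import Dict, List


def score_answers(answers: Dict[str, List[str]], key: List[str]) -> Dict[str, List[int]]:
    """Turn each student's chosen options into 1 (correct) or 0 (wrong)."""
    scored = {}
    for student, given in answers.items():
        if len(given) != len(key):
            raise ValueError(f"{student}: expected {len(key)} answers, got {len(given)}")
        # A blank answer ("") never matches the key, so it is scored 0 (assumption).
        scored[student] = [1 if g == k else 0 for g, k in zip(given, key)]
    return scored


# Hypothetical exam with three items and two students.
key = ["a", "c", "d"]
answers = {
    "student_1": ["a", "c", "b"],
    "student_2": ["a", "b", ""],
}
matrix = score_answers(answers, key)
```

Each list in `matrix` corresponds to one row of the sheet, ready for the calculation of the item parameters.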

The item analysis was then carried out from the students’ answers and the corrected multiple choice exams. Table 4 shows the results of the item analysis obtained for the conceptual domain “Medicament and Pharmaceutical Technology: Introduction” (n = number of students). To verify the exam’s reliability, an analysis of its internal consistency was carried out (Table 5). In all cases significant values were obtained, indicating the reliability of the exams.

Table 4.

Psychometric properties of the items of the multiple choice exam.

Table 5.

Multiple choice exam’s quality.
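The test-level quantities behind this kind of analysis, Cronbach's alpha and the standard error of measurement (SEM), can be sketched in Python as follows. This is a minimal illustration and not the formulas of the Excel sheet: it assumes a 0/1 score matrix with one row per student, uses population variances throughout, and takes SEM as the standard deviation of the total scores multiplied by the square root of (1 - alpha). The function names and the example data are hypothetical.

```python
import math
from statistics import pstdev, pvariance
from typing import List

Matrix = List[List[int]]  # rows = students, columns = items, cells = 0 or 1


def cronbach_alpha(scores: Matrix) -> float:
    """Cronbach's alpha from item variances and the variance of total scores."""
    n_items = len(scores[0])
    if n_items < 2:
        raise ValueError("alpha needs at least two items")
    totals = [sum(row) for row in scores]
    total_var = pvariance(totals)
    if total_var == 0:
        raise ValueError("all students have the same total score")
    item_vars = [pvariance([row[j] for row in scores]) for j in range(n_items)]
    return n_items / (n_items - 1) * (1 - sum(item_vars) / total_var)


def standard_error_of_measurement(scores: Matrix) -> float:
    """SEM = SD of total scores * sqrt(1 - alpha), using the population SD."""
    totals = [sum(row) for row in scores]
    return pstdev(totals) * math.sqrt(1 - cronbach_alpha(scores))


# Hypothetical data: 5 students, 4 items.
scores = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
]
alpha = cronbach_alpha(scores)
sem = standard_error_of_measurement(scores)
```

For this hypothetical matrix the item variances sum to 0.8 and the variance of the total scores is 2, which gives an alpha of 0.8. With dichotomous items and population variances, this calculation coincides with the Kuder-Richardson 20 formula.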

3.3. Analysis Decisions

Applying the defined criteria leads to the actions shown in Table 6.

Table 6.

Decisions from the analysis of the items.

Acknowledgments

The current work belongs to the “Innovation and improvement of teaching quality projects” of the Complutense University of Madrid (PIMCD-UCM).

Conflicts of Interest

The authors declare no conflict of interest. The funding sponsor, PIMCD-UCM, had no role in the design of the study; in the collection, analyses, or interpretation of data; in the writing of the manuscript; or in the decision to publish the results.

References

- Sanchez-Elez, M.; Pardines, I.; Garcia, P.; Miñana, G.; Roman, S.; Sanchez, M.; Risco, J.L. Enhancing Students’ Learning Process through Self-Generated Tests. J. Sci. Educ. Technol. 2014, 23, 15–25.

- Galofré, T.A.; Wright, A.C.N. Índice de calidad para evaluar preguntas de opción múltiple. Revista de Educación en Ciencias de la Salud 2010, 7, 141–145.

- Gali, A.; Roiter, H.; De Mollein, D.; Swieszkowski, S.; Atamañuk, N.; Ahuad Guerrero, A.; Grancelli, H.; Barero, C. Evaluación de la calidad de las preguntas de selección múltiple utilizadas en los exámenes de Certificación y Recertificación en Cardiología. Revista Argentina de Cardiología 2011, 79, 15–20.

- Gómez de Terreros, I. Análisis evaluativo de calidad de la prueba objetiva tipo test (preguntas de elección múltiple). Revista de Enseñanza Universitaria 1998, 13, 105–111.

- Doval, E.; Renom, J. Desarrollo y verificación de la calidad de pruebas tipo test. Curso organizado por UB, IL3 e ICE-UB, 2010.

- Assessment System Corporation. Available online: http://www.assess.com (accessed on 30 May 2018).

- Brooks, G.P. “TAP (Test Analysis Program)”, Ohio University. Available online: https://www.ohio.edu/education/faculty-and-staff/profiles.cfm?profile=brooksg (accessed on 30 May 2018).

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).